Mining Association Rules between Sets of Items in

Mining Association Rules between Sets of Items in Large Databases presented by Zhuang Wang

Outline • • • Introduction Formal Model Apriori Algorithm Experiments Summary

Introduction Association rule: - Association rules are used to discover elements that co -occur frequently within a dataset consisting of multiple independent selections of elements (such as purchasing transactions), and to discover rules. • Applications: • - Questions such as "if a customer purchases product A, how likely is he to purchase product B? " and "What products will a customer buy if he buys products C and D? " are answered by association-finding algorithms. (market basket analysis)

Formal Model • Let I = I_1, I_2, . . . , I_n be a set of items. Let T be a database of transactions. Each transaction t in T is represented as a subset of I. Let X be a subset of I. • Support and Confidence: By an association rule, we mean an implication of the form X I_k, where X is a set of some items in I, and I_k is a single item in I that is not present in X. support: probability that a transaction contains X and I_k. P(X , I_k) confidence: conditional probability that a transaction having X also contains I_k. P(l_k | X)

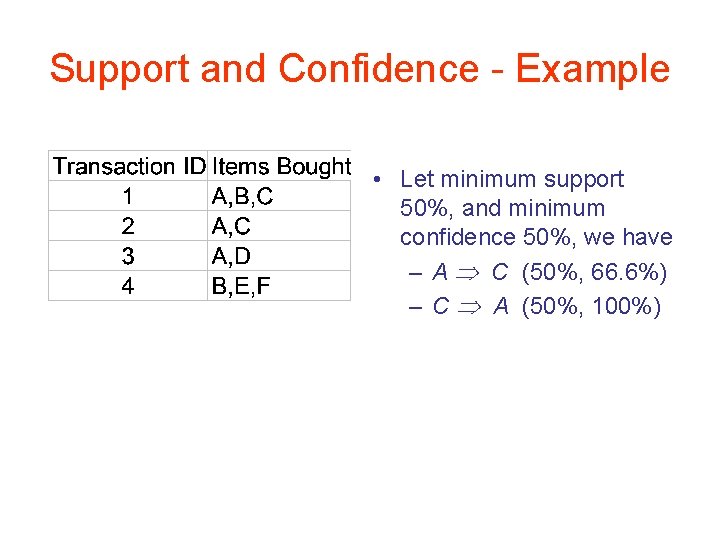

Support and Confidence - Example • Let minimum support 50%, and minimum confidence 50%, we have – A C (50%, 66. 6%) – C A (50%, 100%)

Apriori Algorithm • To find subsets which are common to at least a minimum confidence of the itemsets. • Using a "bottom up" approach, where frequent itemsets (the sets of items that follows minimum support) are extended one item at a time (a step known as candidate generation), and groups of candidates are tested against the data. • The algorithm terminates when no further successful extensions are found. • Generating from each large itemset, rules that use items from the large itemset

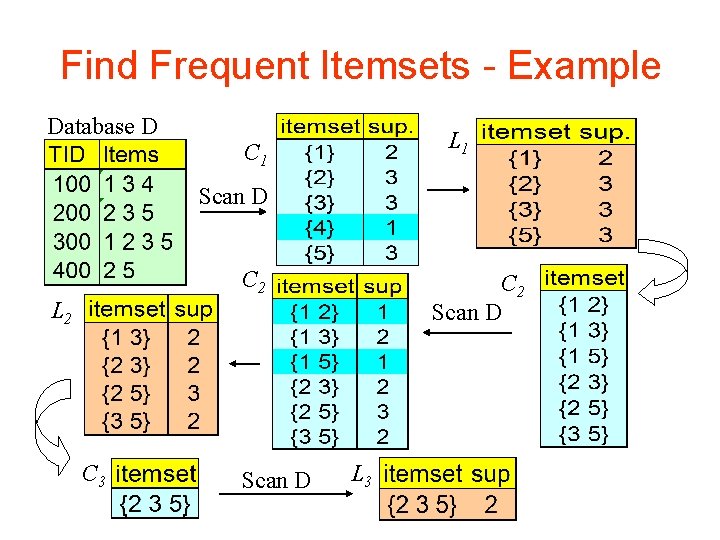

Find Frequent Itemsets - Example Database D L 1 C 1 Scan D C 2 Scan D L 2 C 3 Scan D L 3

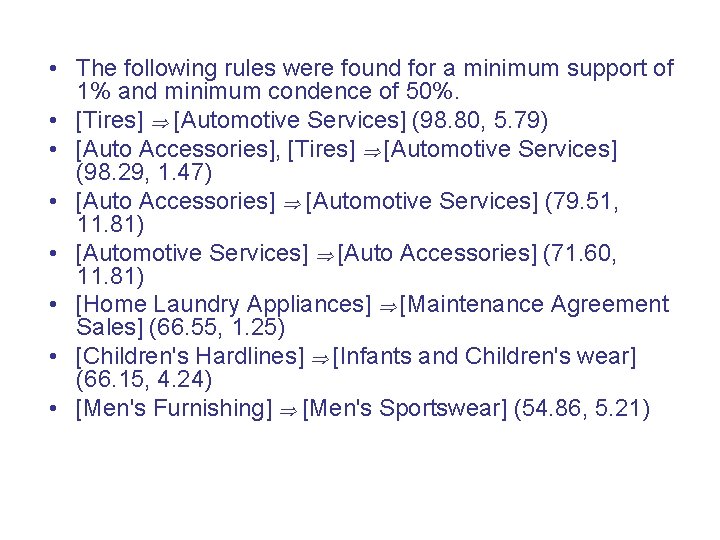

Experiments • We experimented with the rule mining algorithm using the sales data obtained from a large retailing company. • There a total of 46, 873 customer transactions in this data. Each transaction contains the department numbers from which a customer bought an item in a visit. • There a total of 63 departments. The algorithm finds if there is an association between departments in the customer purchasing behavior.

• The following rules were found for a minimum support of 1% and minimum condence of 50%. • [Tires] [Automotive Services] (98. 80, 5. 79) • [Auto Accessories], [Tires] [Automotive Services] (98. 29, 1. 47) • [Auto Accessories] [Automotive Services] (79. 51, 11. 81) • [Automotive Services] [Auto Accessories] (71. 60, 11. 81) • [Home Laundry Appliances] [Maintenance Agreement Sales] (66. 55, 1. 25) • [Children's Hardlines] [Infants and Children's wear] (66. 15, 4. 24) • [Men's Furnishing] [Men's Sportswear] (54. 86, 5. 21)

Summary • Apriori, while historically significant, suffers from a number of inefficiencies or trade-offs, which have spawned other algorithms. • Hash tables: uses a hash tree to store candidate itemsets. This hash tree has item sets at the leaves and at internal nodes • Partitioning: Any itemset that is potentially frequent in DB must be frequent in at least one of the partitions of DB • Sampling: mining on a subset of given data, need a lower support threshold + a method to determine the completeness.

Reference • R. Agrawal, T. Imielinski, A. Swami: “Mining Associations between Sets of Items in Massive Databases”, Proc. of the ACM SIGMOD Int'l Conference on Management of Data, Washington D. C. , May 1993, 207 -216. • http: //knight. cis. temple. edu/~vasilis/Courses/CIS 664/ • http: //en. wikipedia. org/wiki/Apriori_algorithm

- Slides: 11