Minimum Phoneme Error Based Heteroscedastic Linear Discriminant Analysis

Minimum Phoneme Error Based Heteroscedastic Linear Discriminant Analysis for Speech Recognition
Bing Zhang and Spyros Matsoukas, BBN Technologies
Presented by Shih-Hung Liu, 2006/05/16

Outline
• Review: PCA, LDA, HLDA
• Introduction
• MPE-HLDA
• Experimental results
• Conclusions

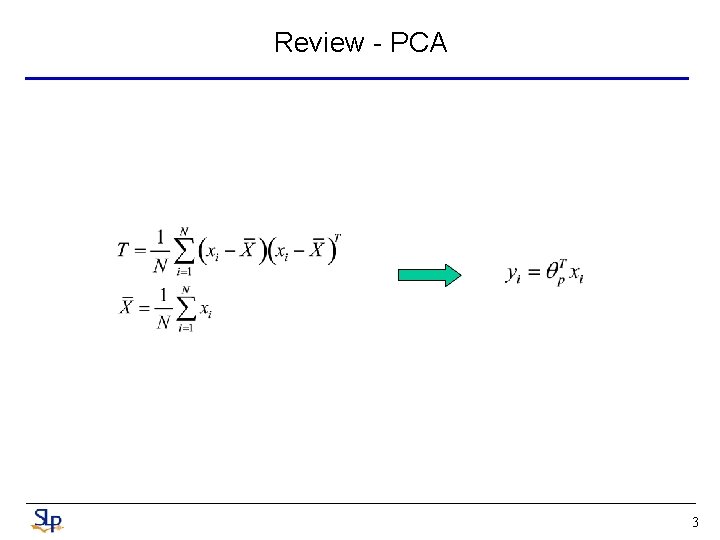

Review - PCA

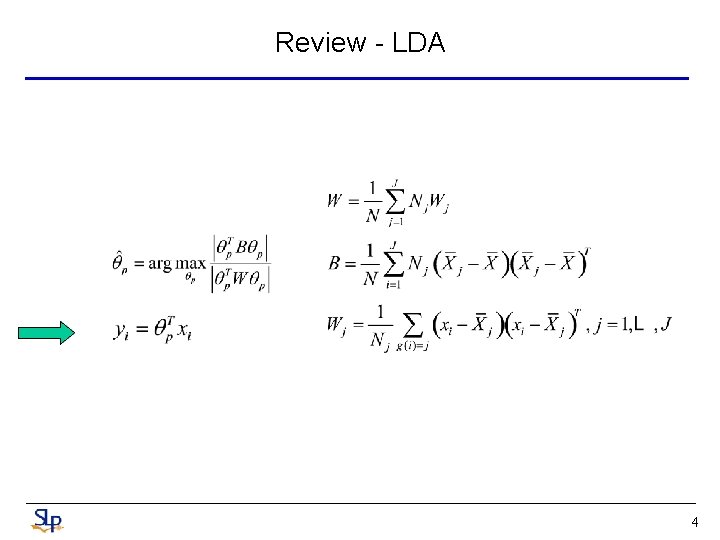

Review - LDA

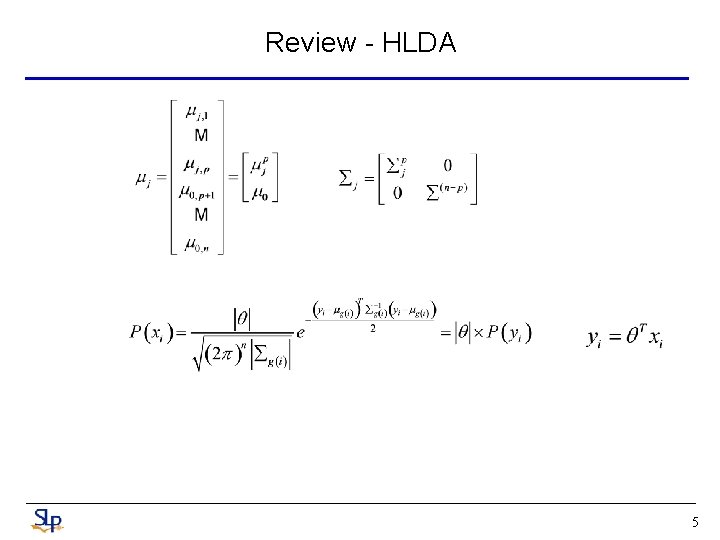

Review - HLDA

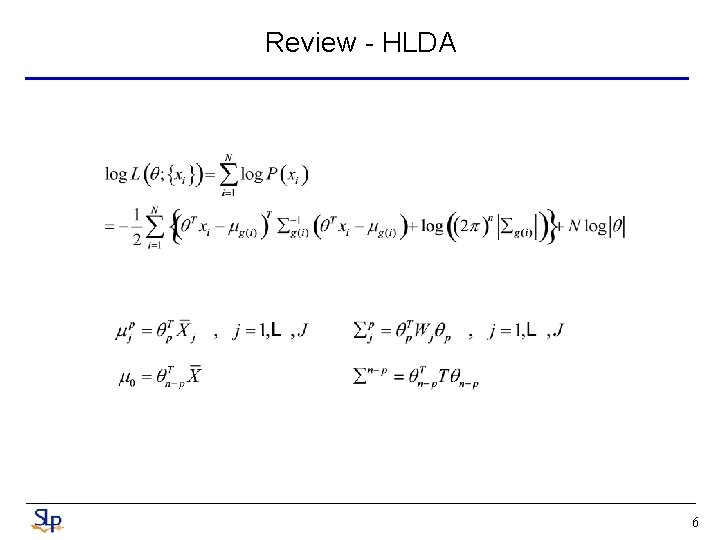

Review - HLDA

Introduction for MPE-HLDA
• In speech recognition systems, feature analysis is usually employed for better classification accuracy and complexity control.
• In recent years, extensions to classical LDA have been widely adopted.
• Among them, HDA seeks to remove the equal-variance constraint imposed by LDA.
• ML-HLDA takes the HMM structure (e.g., diagonal-covariance Gaussian mixture state distributions) into consideration.

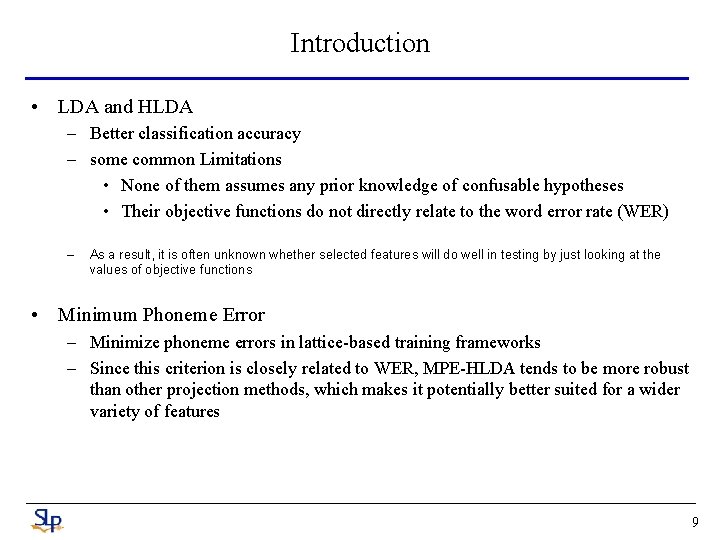

Introduction for MPE-HLDA
• Despite the differences between the above techniques, they share some common limitations.
• First, none of them assumes any prior knowledge of confusable hypotheses, so their feature choices are bound to be suboptimal for recognition.
• Second, their objective functions do not directly relate to the word error rate (WER).
• For example, we found that HLDA could select totally non-discriminant features while improving its objective function, by mapping all training samples to a single point in space along some dimensions.

Introduction
• LDA and HLDA
  – Better classification accuracy
  – Some common limitations
    • None of them assumes any prior knowledge of confusable hypotheses
    • Their objective functions do not directly relate to the word error rate (WER)
  – As a result, it is often unknown whether the selected features will do well in testing just by looking at the values of the objective functions
• Minimum Phoneme Error
  – Minimizes phoneme errors in lattice-based training frameworks
  – Since this criterion is closely related to WER, MPE-HLDA tends to be more robust than other projection methods, which makes it potentially better suited for a wider variety of features

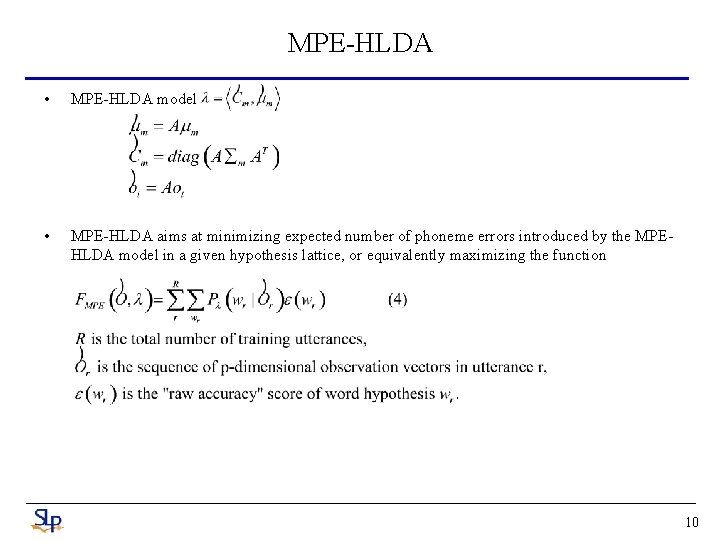

MPE-HLDA
• MPE-HLDA model
• MPE-HLDA aims at minimizing the expected number of phoneme errors introduced by the MPE-HLDA model in a given hypothesis lattice, or equivalently at maximizing the function

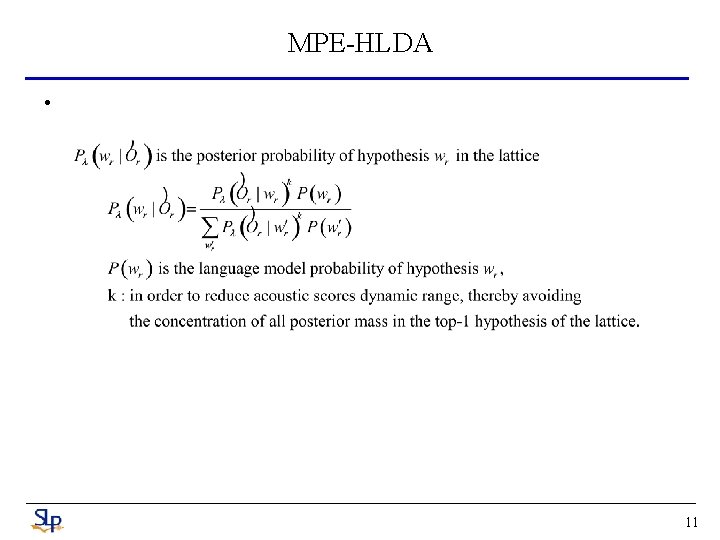
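For readers without access to the slide images, the MPE criterion is commonly written in the following form in the discriminative-training literature. This is a sketch in assumed notation, and the symbols on the slide may differ: $R$ is the number of training utterances, $O_r$ the observations of utterance $r$, $w$ ranges over the hypotheses in the lattice for utterance $r$, $\epsilon_r(w)$ is the raw phoneme accuracy of $w$ against the reference transcript, and $\kappa$ is an acoustic scaling factor.

```latex
F_{\mathrm{MPE}}(\lambda) = \sum_{r=1}^{R} \sum_{w} P_{\lambda}(w \mid O_r)\, \epsilon_r(w),
\qquad
P_{\lambda}(w \mid O_r) =
  \frac{p_{\lambda}(O_r \mid w)^{\kappa}\, P(w)}
       {\sum_{w'} p_{\lambda}(O_r \mid w')^{\kappa}\, P(w')}
```

Maximizing this expected phoneme accuracy is equivalent to minimizing the expected number of phoneme errors over the lattice.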

MPE-HLDA

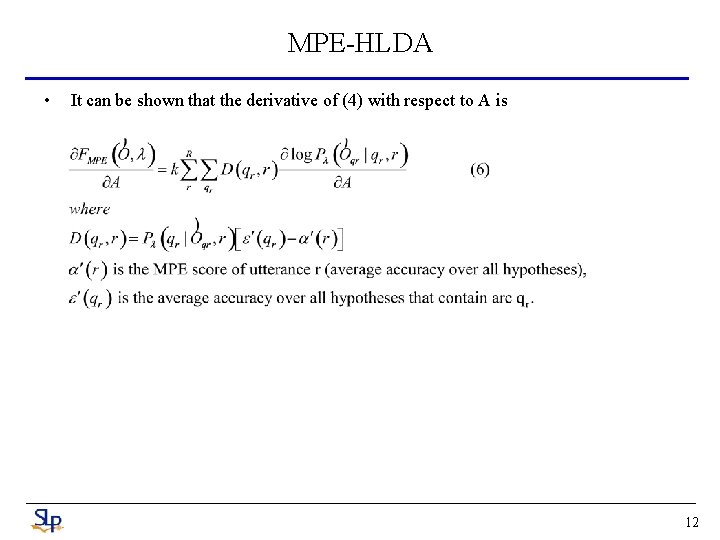

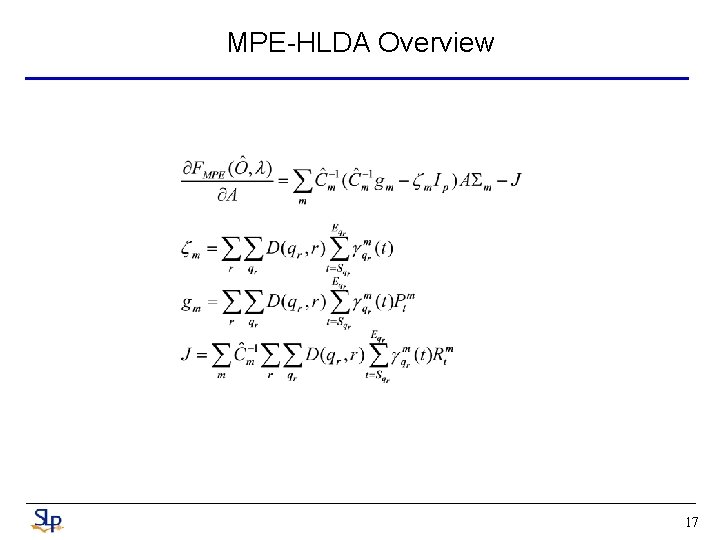

MPE-HLDA
• It can be shown that the derivative of (4) with respect to A is

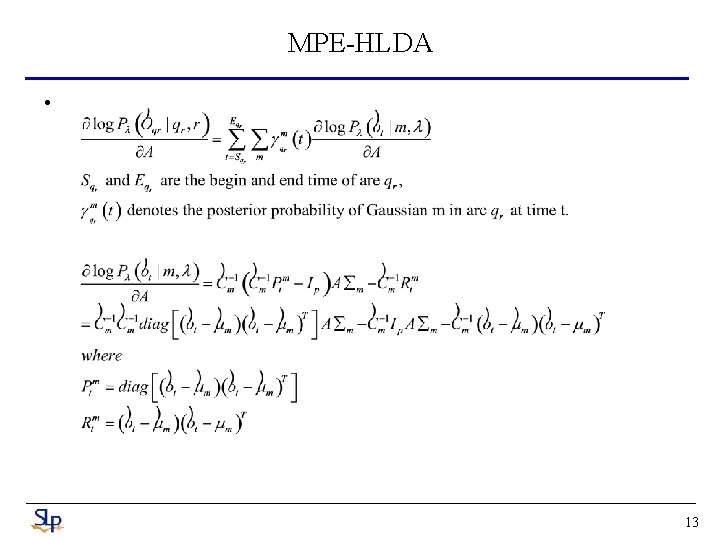

MPE-HLDA

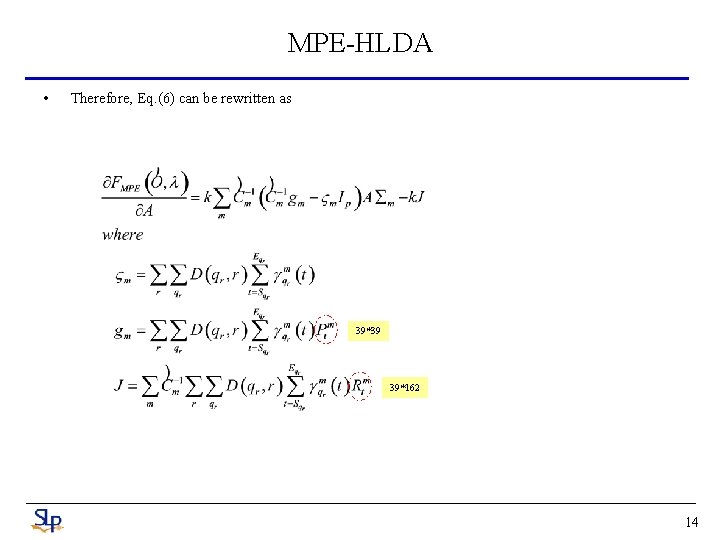

MPE-HLDA
• Therefore, Eq. (6) can be rewritten as follows (matrix dimension annotations on the slide: 39×39 and 39×162)

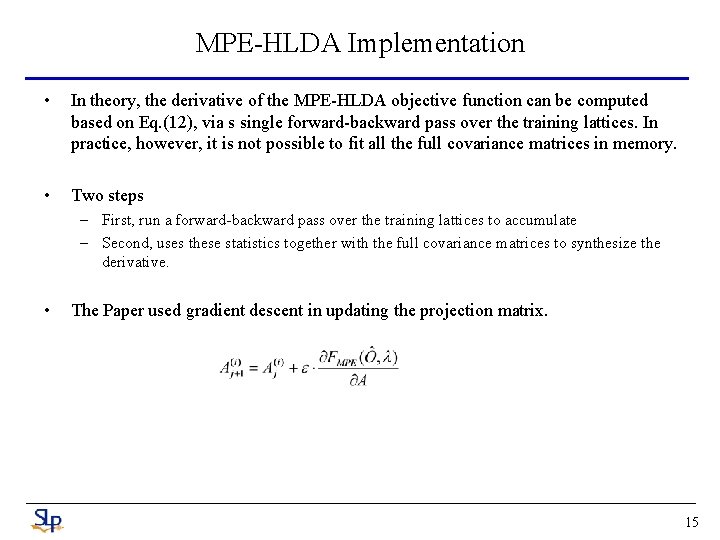

MPE-HLDA Implementation
• In theory, the derivative of the MPE-HLDA objective function can be computed from Eq. (12) via a single forward-backward pass over the training lattices. In practice, however, it is not possible to fit all the full covariance matrices in memory.
• The computation is therefore split into two steps:
  – First, run a forward-backward pass over the training lattices to accumulate statistics
  – Second, use these statistics together with the full covariance matrices to synthesize the derivative
• The paper uses gradient descent to update the projection matrix.

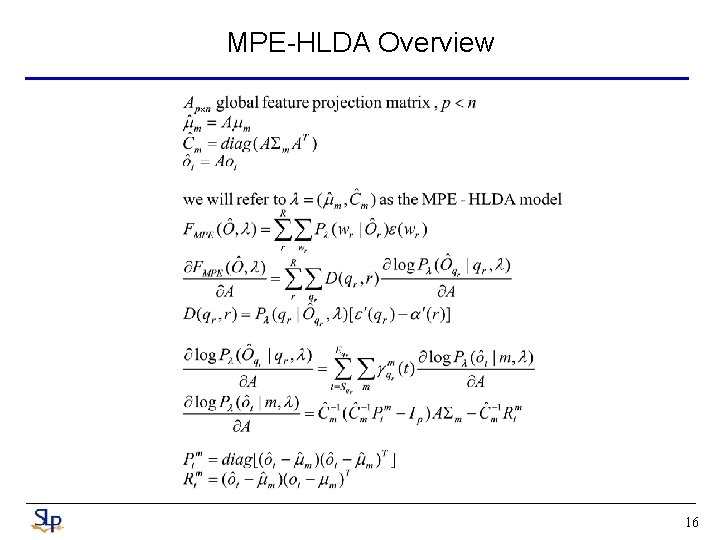
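The gradient-based update of the projection matrix can be illustrated with a minimal sketch. It does not implement the MPE derivative from the paper: `grad_fn` is a hypothetical callback standing in for the derivative synthesized from the lattice statistics, and the toy objective, step size, and stopping rule are assumptions chosen only to make the example runnable. Because the MPE function is maximized, the step moves along the gradient, which is the same as gradient descent on the negated objective.

```python
import numpy as np

def gradient_ascent(A0, grad_fn, step_size=0.1, max_iters=200, tol=1e-8):
    """Repeatedly move a projection matrix along the gradient of an objective.

    grad_fn is a hypothetical stand-in for the derivative of the objective
    with respect to A; here it is supplied by the caller.
    """
    A = np.array(A0, dtype=float)
    for _ in range(max_iters):
        A_next = A + step_size * grad_fn(A)  # ascent step: objective is maximized
        if np.linalg.norm(A_next - A) < tol:  # stop when the update is negligible
            return A_next
        A = A_next
    return A

# Toy stand-in objective f(A) = -||A - T||_F^2 with gradient -2 (A - T).
# T is an arbitrary 2x3 target, not anything taken from the paper.
T = np.array([[1.0, 0.0, 0.5],
              [0.0, 1.0, -0.5]])
A_hat = gradient_ascent(np.zeros((2, 3)), lambda A: -2.0 * (A - T))
```

On this toy problem the iterate approaches `T`; with the real MPE derivative, each call to `grad_fn` would correspond to a forward-backward pass over the lattices followed by the derivative synthesis step.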

MPE-HLDA Overview

MPE-HLDA Overview

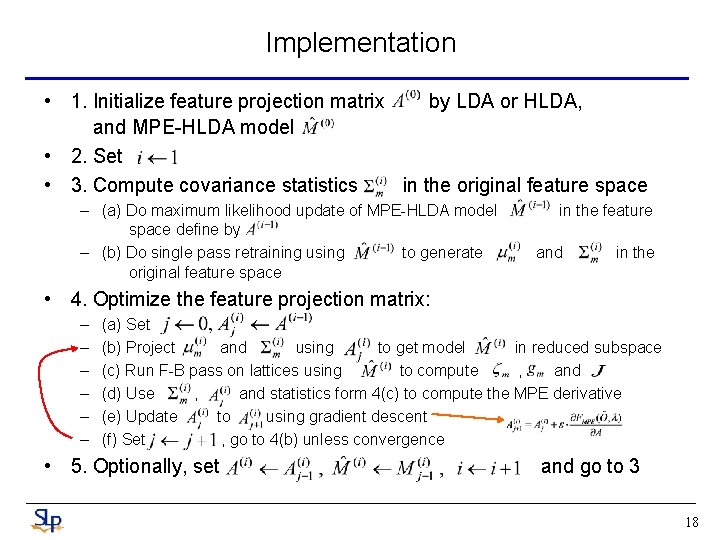

Implementation
• 1. Initialize the feature projection matrix by LDA or HLDA, and the MPE-HLDA model
• 2. Set […]
• 3. Compute covariance statistics in the original feature space
  – (a) Do a maximum likelihood update of the MPE-HLDA model in the feature space defined by […]
  – (b) Do single-pass retraining using […] to generate […] and […] in the original feature space
• 4. Optimize the feature projection matrix:
  – (a) Set […]
  – (b) Project […] and […] using […] to get the model […] in the reduced subspace
  – (c) Run an F-B pass on the lattices using […] to compute […], and […]
  – (d) Use […], […] and the statistics from 4(c) to compute the MPE derivative
  – (e) Update […] to […] using gradient descent
  – (f) Set […], go to 4(b) unless converged
• 5. Optionally, set […] and go to 3

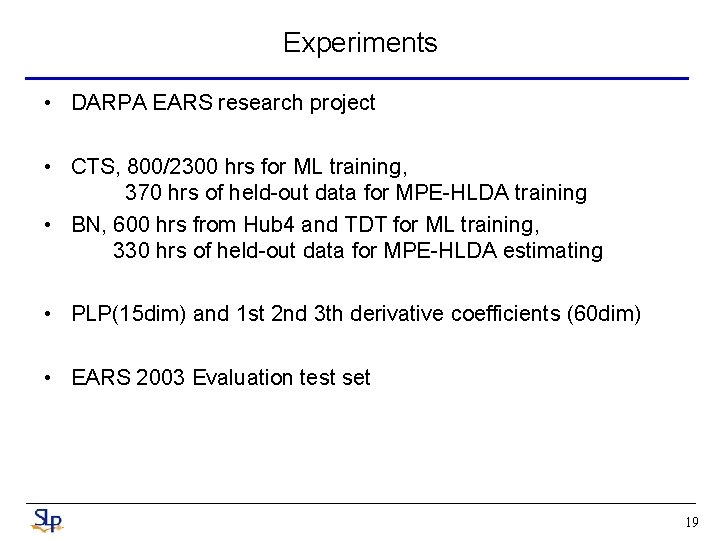

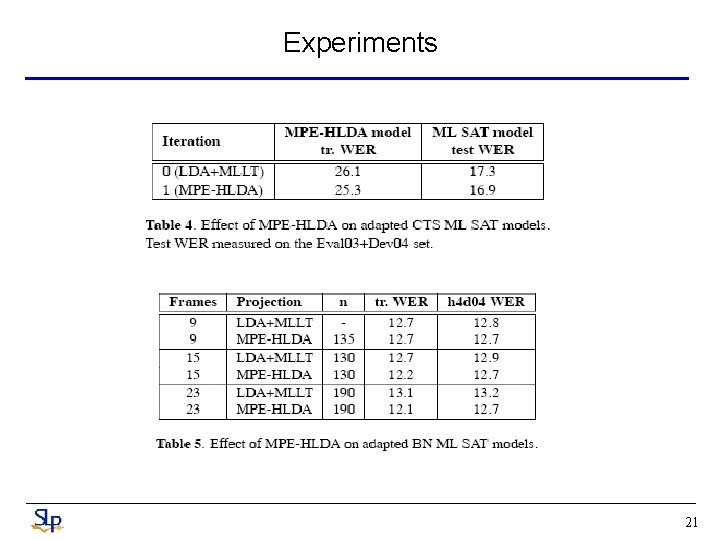
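Step 4(b) of the procedure projects the original-space model into the reduced subspace. A minimal sketch of that projection for a single Gaussian follows, assuming the usual HLDA-style convention that the projected mean is `A @ mean` and the projected covariance keeps only the diagonal of `A @ cov @ A.T` (consistent with the diagonal-covariance Gaussians mentioned in the introduction). The function name `project_gaussian` and the example values are hypothetical, not taken from the paper.

```python
import numpy as np

def project_gaussian(A, mean, cov):
    """Project a full-covariance Gaussian with a p x n matrix A.

    Returns the p-dim projected mean and the p-dim vector of projected
    variances, i.e. the diagonal of A @ cov @ A.T (assumed convention).
    """
    proj_mean = A @ mean
    # einsum computes diag(A cov A^T) without forming the full p x p product
    proj_var = np.einsum("ij,jk,ik->i", A, cov, A)
    return proj_mean, proj_var

# Illustrative values only: project a 3-dim Gaussian to 2 dims.
A = np.array([[1.0, 0.0, 0.0],
              [0.0, 1.0, 1.0]])
mean = np.array([1.0, 2.0, 3.0])
cov = np.array([[2.0, 0.5, 0.0],
                [0.5, 1.0, 0.2],
                [0.0, 0.2, 3.0]])
proj_mean, proj_var = project_gaussian(A, mean, cov)
```

Keeping the full covariances in the original space and re-projecting them for each new `A` is what makes it possible to re-evaluate the reduced-space model at every iteration of step 4 without retraining.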

Experiments
• DARPA EARS research project
• CTS: 800/2300 hrs for ML training, 370 hrs of held-out data for MPE-HLDA training
• BN: 600 hrs from Hub 4 and TDT for ML training, 330 hrs of held-out data for MPE-HLDA estimation
• Features: PLP (15 dim) and 1st, 2nd, and 3rd derivative coefficients (60 dim)
• EARS 2003 Evaluation test set

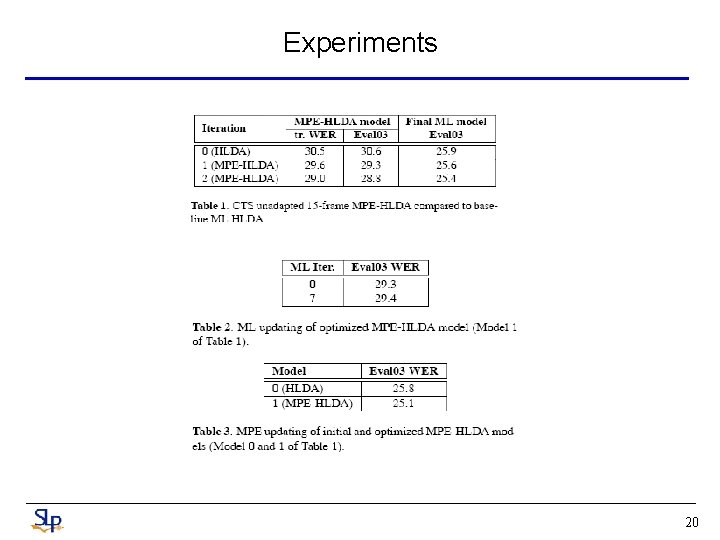

Experiments

Experiments

Conclusions
• We have taken a first look at a new feature analysis method, MPE-HLDA.
• The results show that it is effective in reducing recognition error, and that it is more robust than other commonly used analysis methods such as LDA and HLDA.
