Minimum Mean Squared Error Time Series Classification Using an Echo State Network Prediction Model

- Slides: 20

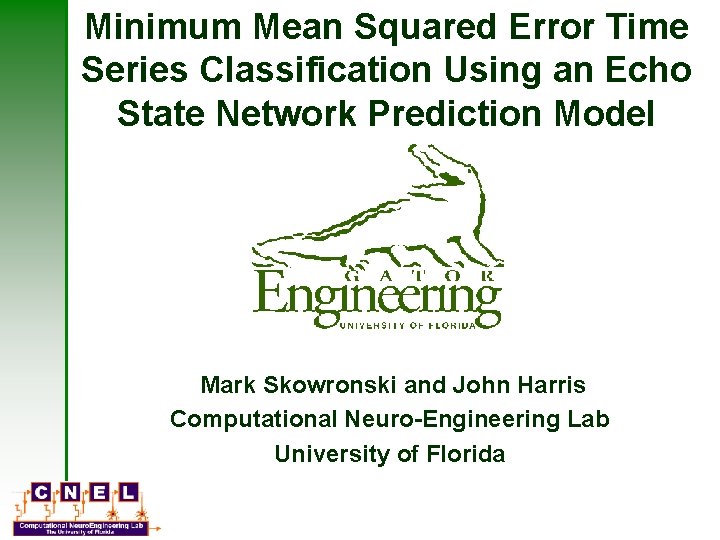

Minimum Mean Squared Error Time Series Classification Using an Echo State Network Prediction Model
Mark Skowronski and John Harris
Computational Neuro-Engineering Lab, University of Florida

Automatic Speech Recognition Using an Echo State Network
Mark Skowronski and John Harris
Computational Neuro-Engineering Lab, University of Florida

Transformation of a graduate student: 2000 vs. 2006

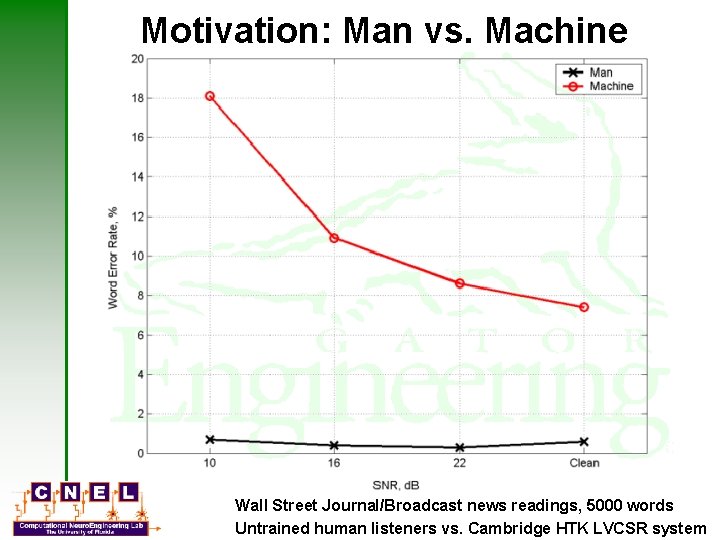

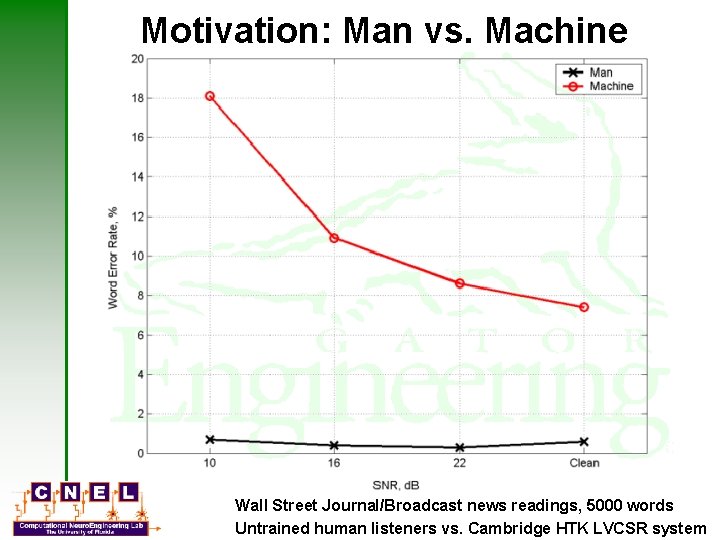

Motivation: Man vs. Machine
• Wall Street Journal/Broadcast News readings, 5000 words
• Untrained human listeners vs. the Cambridge HTK large-vocabulary continuous speech recognition (LVCSR) system

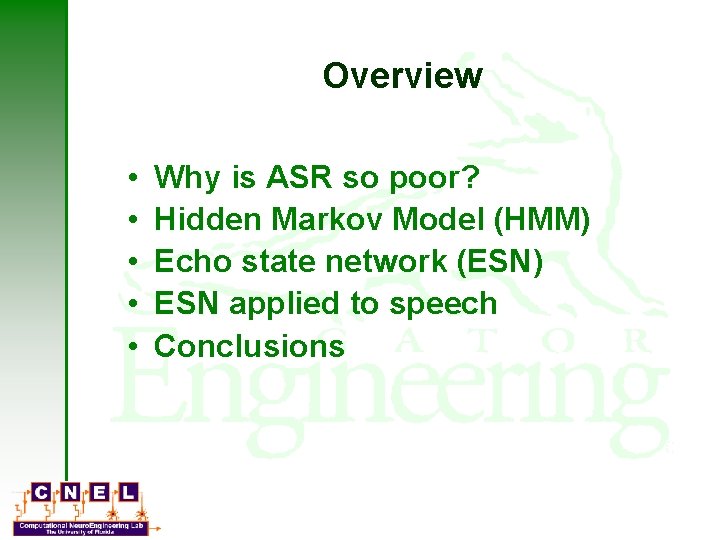

Overview
• Why is ASR so poor?
• Hidden Markov Model (HMM)
• Echo state network (ESN)
• ESN applied to speech
• Conclusions

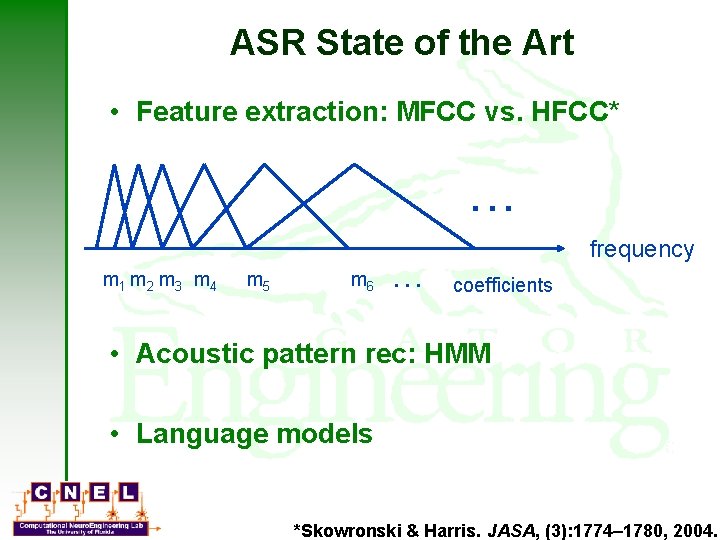

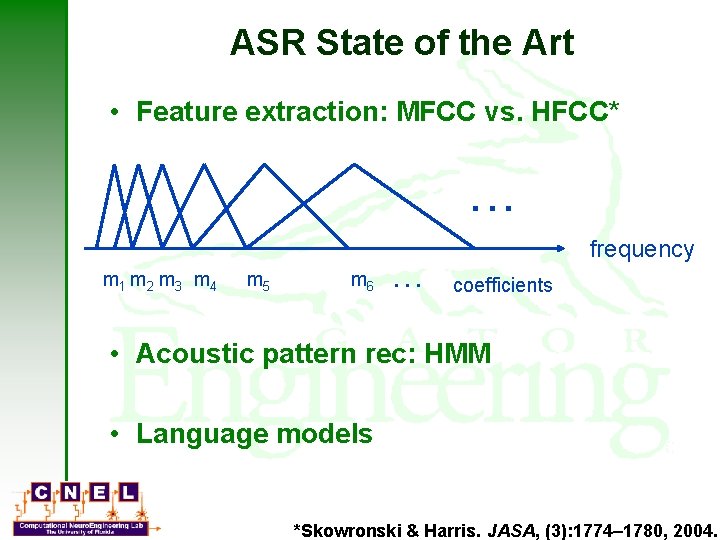

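ASR State of the Art
• Feature extraction: MFCC (mel-frequency cepstral coefficients) vs. HFCC* (human factor cepstral coefficients); figure: filter-bank outputs m1, m2, m3, … across frequency are converted to coefficients
• Acoustic pattern recognition: HMM
• Language models
*Skowronski & Harris, JASA, (3): 1774–1780, 2004.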
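MFCC and HFCC share the same final step: log filter-bank energies (the m1, m2, … outputs in the slide figure) are decorrelated with a discrete cosine transform to give cepstral coefficients. The sketch below assumes the common mel formula 2595·log10(1 + f/700) and an unnormalized DCT-II; HFCC differs from MFCC in how filter bandwidths are chosen (see the cited JASA paper), which is not modeled here. All function names are hypothetical.

```python
import math


def hz_to_mel(f_hz):
    """Common mel-scale approximation (an assumption; other variants exist)."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def center_frequencies(n_filters, f_low, f_high):
    """Filter center frequencies spaced uniformly on the mel scale."""
    lo, hi = hz_to_mel(f_low), hz_to_mel(f_high)
    step = (hi - lo) / (n_filters + 1)
    return [mel_to_hz(lo + step * (i + 1)) for i in range(n_filters)]


def cepstrum(log_energies, n_coeffs):
    """Unnormalized DCT-II of the log filter-bank energies m_1 ... m_J."""
    J = len(log_energies)
    return [
        sum(e * math.cos(math.pi * k * (j + 0.5) / J) for j, e in enumerate(log_energies))
        for k in range(n_coeffs)
    ]


# Example: 20 filters (an arbitrary choice) up to 6.25 kHz, half of fs = 12.5 kHz,
# and 13 coefficients from a synthetic log-energy vector.
centers = center_frequencies(20, 0.0, 6250.0)
coeffs = cepstrum([math.log(1.0 + i) for i in range(20)], 13)
```

A flat log spectrum maps entirely into the zeroth coefficient, which is why the DCT is a reasonable decorrelating step.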

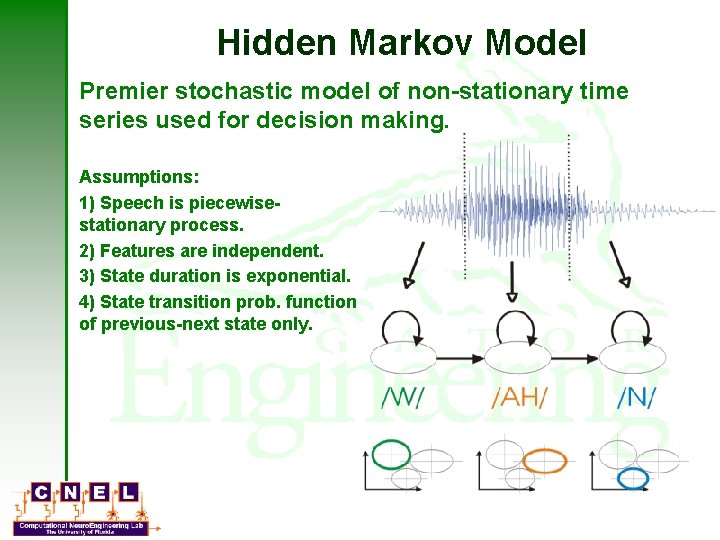

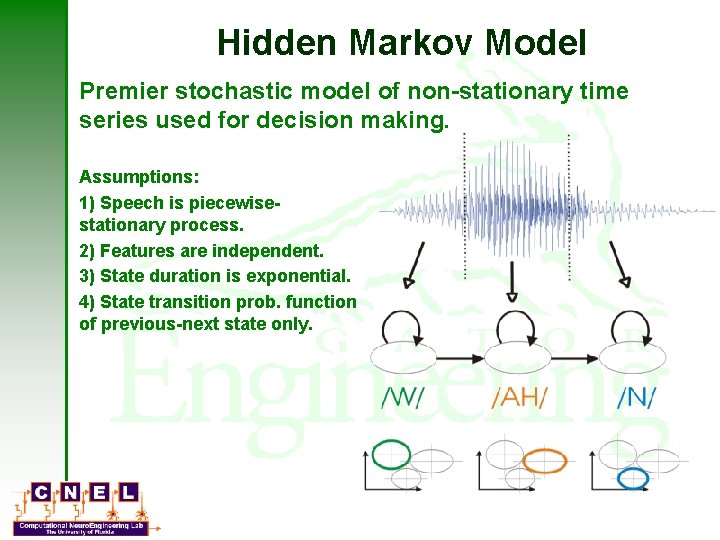

Hidden Markov Model
Premier stochastic model of non-stationary time series used for decision making.
Assumptions:
1) Speech is a piecewise-stationary process.
2) Successive feature vectors are independent (given the state).
3) State duration is exponentially distributed (geometric, in discrete time).
4) State transition probability depends only on the previous and next states.
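Assumption 3 follows from the self-transition probability: if a state loops back to itself with probability a, the number of frames d spent in it is geometrically distributed, P(d) = a^(d-1)(1 - a), the discrete-time counterpart of an exponential, with mean 1/(1 - a). The short sketch below (function names are illustrative) checks this numerically. Note that the most likely duration is always a single frame, one reason this assumption is often criticized.

```python
def duration_pmf(a, d):
    """P(stay exactly d frames) for a state with self-loop probability a."""
    if d < 1:
        return 0.0
    return a ** (d - 1) * (1.0 - a)


def mean_duration(a, max_frames=10_000):
    """Numerical mean of the geometric duration (analytically 1 / (1 - a))."""
    return sum(d * duration_pmf(a, d) for d in range(1, max_frames + 1))


a = 0.9  # example self-transition probability
# The pmf decreases monotonically: one frame is always the most likely duration.
probs = [duration_pmf(a, d) for d in range(1, 6)]
```

With a = 0.9 the mean stay is about 10 frames (100 ms at 100 frames/s), yet a one-frame stay remains the single most probable outcome.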

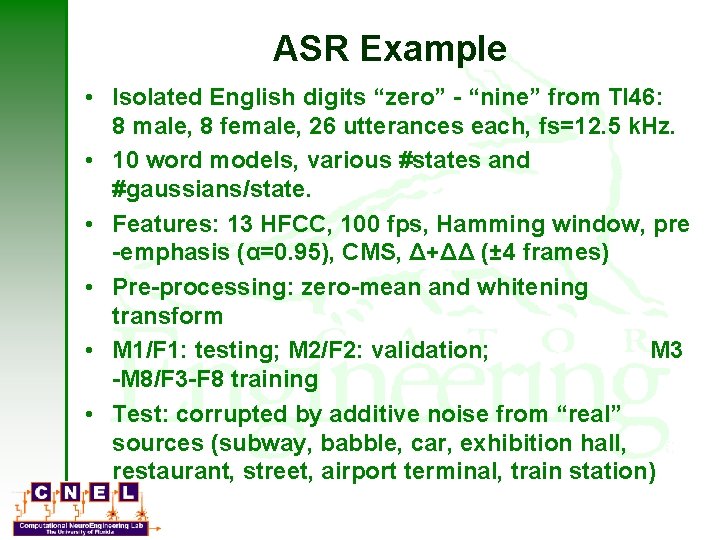

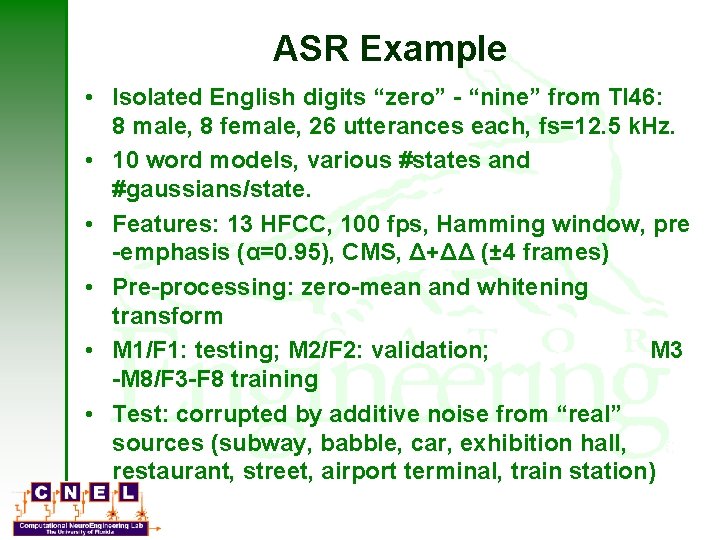

ASR Example
• Isolated English digits “zero” to “nine” from TI-46: 8 male and 8 female speakers, 26 utterances each, fs = 12.5 kHz
• 10 word models, with various numbers of states and Gaussians per state
• Features: 13 HFCC, 100 frames/s, Hamming window, pre-emphasis (α = 0.95), cepstral mean subtraction (CMS), Δ + ΔΔ (±4 frames)
• Pre-processing: zero-mean and whitening transform
• Speakers M1/F1: testing; M2/F2: validation; M3-M8/F3-F8: training
• Test: corrupted by additive noise from “real” sources (subway, babble, car, exhibition hall, restaurant, street, airport terminal, train station)
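Three of the front-end steps listed above are simple enough to sketch: first-order pre-emphasis with α = 0.95, cepstral mean subtraction, and delta features over ±4 frames. The slide does not give the delta formula, so the sketch assumes the common regression form d_t = Σ_{k=1..K} k (c_{t+k} − c_{t−k}) / (2 Σ k²) with K = 4, edge frames repeated, and the same window for ΔΔ. The Hamming window, HFCC filter bank, and whitening transform are omitted; function names are hypothetical.

```python
def pre_emphasis(signal, alpha=0.95):
    """y[n] = x[n] - alpha * x[n-1]; the first sample is passed through."""
    return [signal[0]] + [signal[n] - alpha * signal[n - 1] for n in range(1, len(signal))]


def cepstral_mean_subtraction(frames):
    """Subtract the per-utterance mean of each coefficient (CMS)."""
    n, dim = len(frames), len(frames[0])
    means = [sum(f[i] for f in frames) / n for i in range(dim)]
    return [[f[i] - means[i] for i in range(dim)] for f in frames]


def deltas(frames, K=4):
    """Regression deltas over +/-K frames, repeating the edge frames (assumed form)."""
    T, dim = len(frames), len(frames[0])
    denom = 2.0 * sum(k * k for k in range(1, K + 1))

    def frame(t):
        return frames[min(max(t, 0), T - 1)]  # clamp the index at the utterance edges

    return [
        [sum(k * (frame(t + k)[i] - frame(t - k)[i]) for k in range(1, K + 1)) / denom
         for i in range(dim)]
        for t in range(T)
    ]


def add_dynamic_features(static, K=4):
    """Append delta and delta-delta: 13 static coefficients become 39."""
    d1 = deltas(static, K)
    d2 = deltas(d1, K)
    return [s + a + b for s, a, b in zip(static, d1, d2)]
```

On a linear ramp the interior deltas equal the slope exactly, which is a quick sanity check for the normalization.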

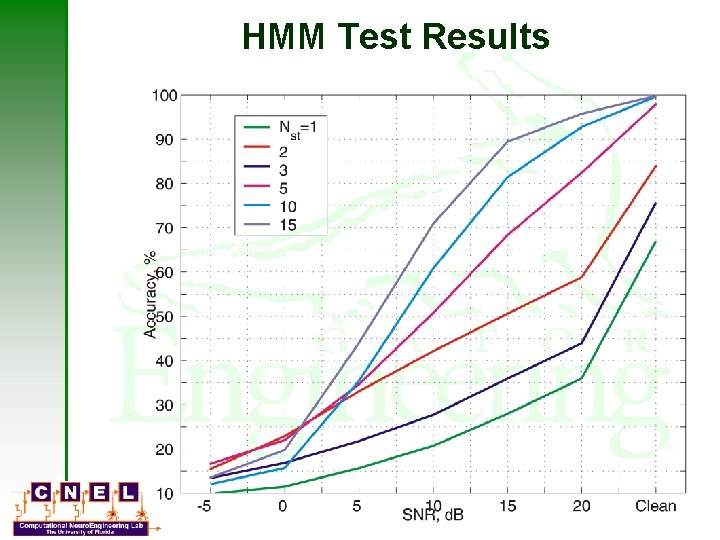

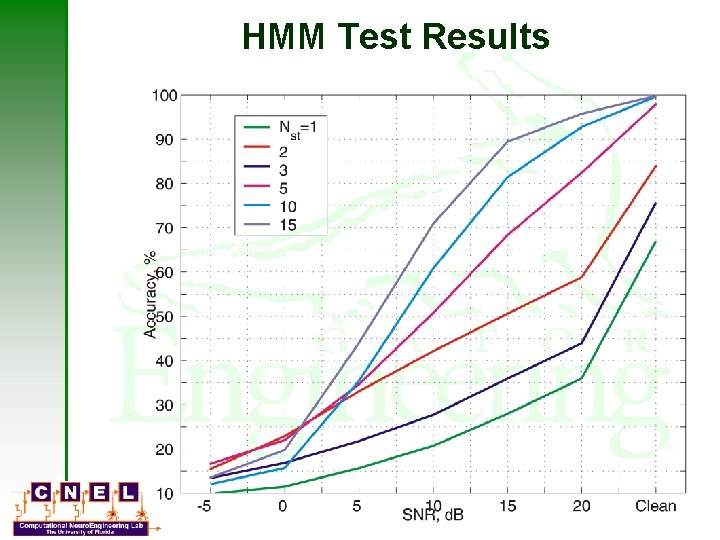

HMM Test Results

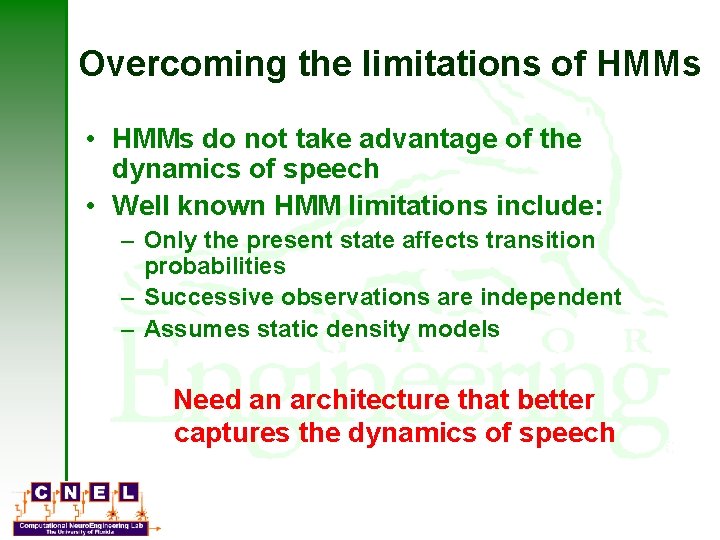

Overcoming the limitations of HMMs
• HMMs do not take advantage of the dynamics of speech
• Well-known HMM limitations include:
– Only the present state affects transition probabilities
– Successive observations are independent
– Static density models are assumed
Need an architecture that better captures the dynamics of speech

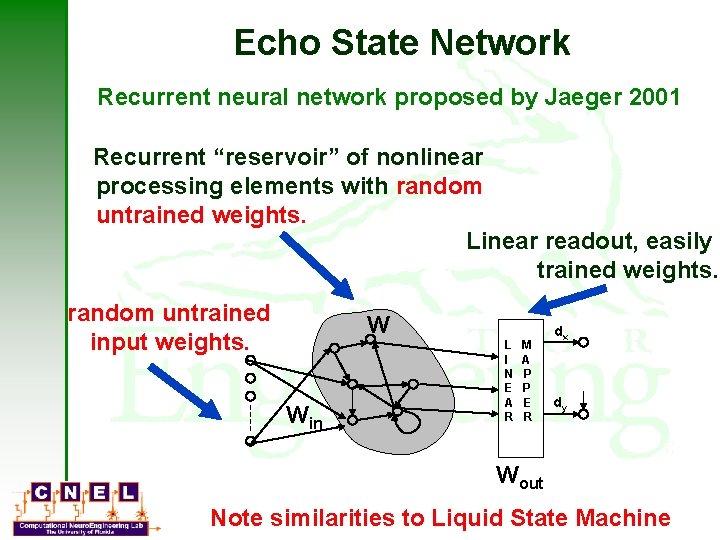

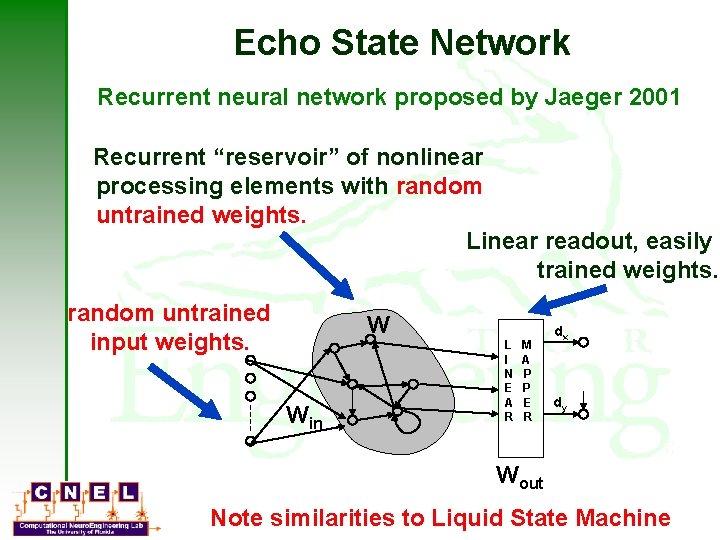

Echo State Network
• Recurrent neural network proposed by Jaeger (2001)
• Recurrent “reservoir” of nonlinear processing elements (PEs) with random, untrained weights W
• Random, untrained input weights Win
• Linear readout with easily trained weights Wout
• Figure: input (dimension dx) → Win → reservoir W → linear mapper Wout → output (dimension dy)
• Note the similarity to the Liquid State Machine
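A minimal pure-Python sketch of the architecture described above. It assumes (the slide does not say) that r is the spectral radius the random reservoir matrix W is scaled to, that rin is the half-width of the uniform distribution of the input weights Win, that the PEs use tanh, and that there is no output feedback. The spectral radius is estimated from the growth rate of ‖Wᵏv‖ rather than computed from eigenvalues, so it is approximate. All names are hypothetical.

```python
import math
import random


def spectral_radius_estimate(W, iters=300):
    """Approximate max |eigenvalue| of W from the growth rate of ||W^k v||."""
    n = len(W)
    v = [1.0 / math.sqrt(n)] * n  # unit-norm starting vector
    log_growth = 0.0
    for _ in range(iters):
        v = [sum(W[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in v))
        if norm == 0.0:
            return 0.0  # W annihilates this starting vector
        log_growth += math.log(norm)
        v = [x / norm for x in v]  # renormalize to avoid overflow
    return math.exp(log_growth / iters)


def make_reservoir(M, dx, r=2.0, rin=0.1, seed=0):
    """Random untrained reservoir W (M x M) and input weights Win (M x dx)."""
    rng = random.Random(seed)
    W = [[rng.gauss(0.0, 1.0) for _ in range(M)] for _ in range(M)]
    scale = r / spectral_radius_estimate(W)  # assumed meaning of r
    W = [[w * scale for w in row] for row in W]
    Win = [[rng.uniform(-rin, rin) for _ in range(dx)] for _ in range(M)]  # assumed meaning of rin
    return W, Win


def run_reservoir(W, Win, inputs):
    """x(n+1) = tanh(Win u(n+1) + W x(n)), starting from x(0) = 0."""
    M = len(W)
    x = [0.0] * M
    states = []
    for u in inputs:
        x = [
            math.tanh(sum(Win[i][j] * u[j] for j in range(len(u)))
                      + sum(W[i][j] * x[j] for j in range(M)))
            for i in range(M)
        ]
        states.append(x)
    return states


# Usage: M = 60 PEs, r = 2.0, rin = 0.1 (the values quoted later in the talk),
# driven by five random 39-dimensional feature frames.
W, Win = make_reservoir(M=60, dx=39)
rng = random.Random(1)
frames = [[rng.gauss(0.0, 1.0) for _ in range(39)] for _ in range(5)]
states = run_reservoir(W, Win, frames)
```

Only the readout is trained; W and Win stay fixed after construction, which is what makes ESN training cheap.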

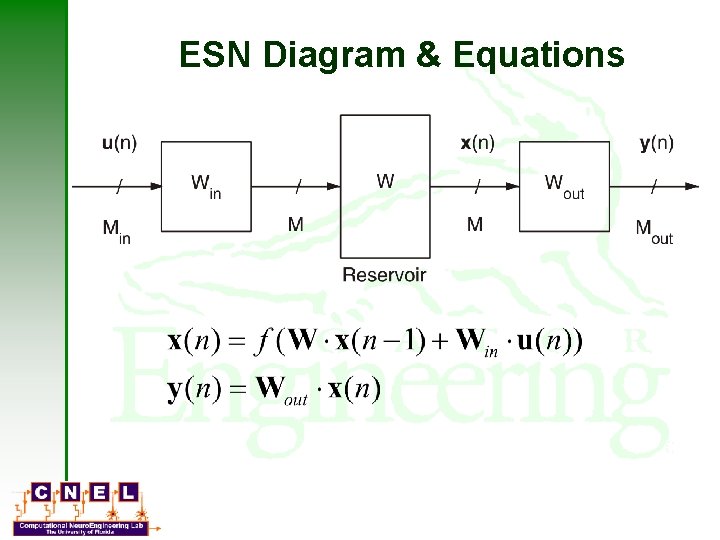

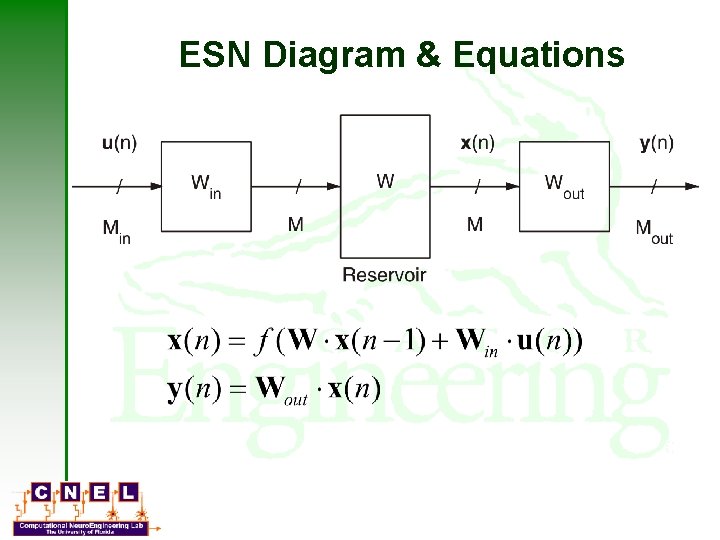

ESN Diagram & Equations

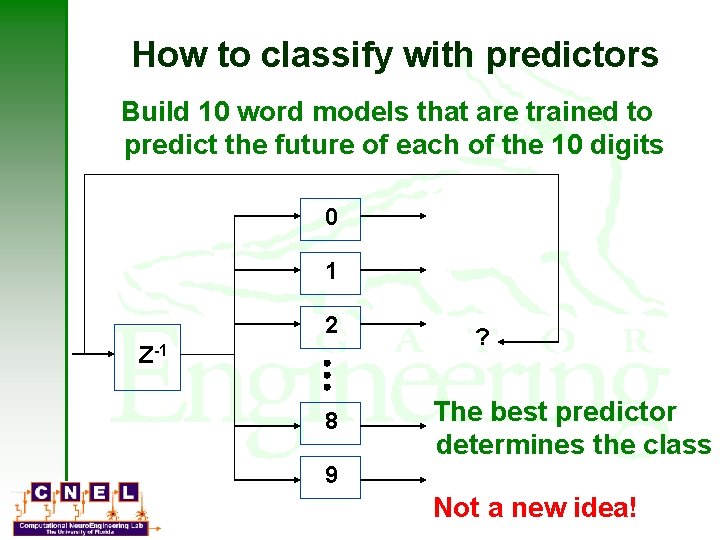

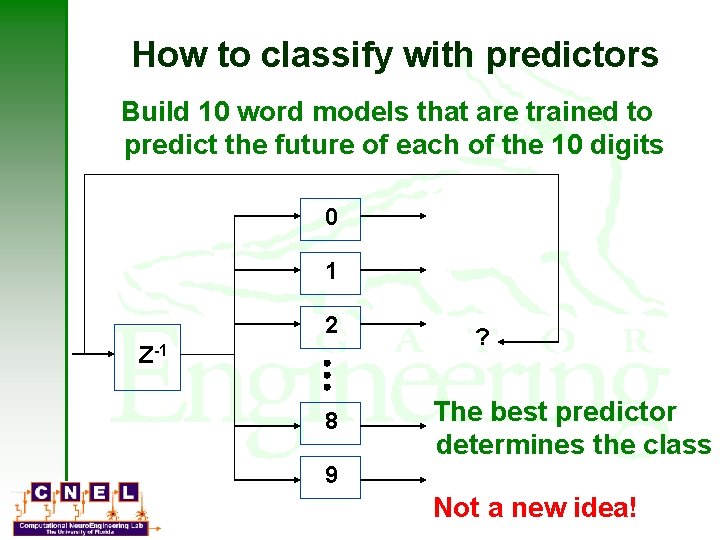
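The diagram and equations on this slide are images. For reference, the standard ESN state update and linear readout, written here without output feedback (an assumption consistent with the block diagram on the previous slide), are:

```latex
\mathbf{x}(n+1) = f\big(\mathbf{W}_{\mathrm{in}}\,\mathbf{u}(n+1) + \mathbf{W}\,\mathbf{x}(n)\big),
\qquad
\mathbf{y}(n+1) = \mathbf{W}_{\mathrm{out}}\,\mathbf{x}(n+1)
```

Here u ∈ ℝ^dx is the input feature vector, x ∈ ℝ^M the reservoir state, y ∈ ℝ^dy the output, and f an elementwise nonlinearity such as tanh. Jaeger's original formulation also allows feedback from y into the reservoir, which the slide's own equations may include.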

How to classify with predictors
• Build 10 word models, each trained to predict the future of one of the 10 digits (figure: one predictor per digit, 0 through 9, with a unit delay z⁻¹)
• The best predictor (lowest prediction error) determines the class
• Not a new idea!
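Classification by prediction reduces to an argmin: run every word model over the utterance, score each by its mean squared error in predicting the next frame, and pick the smallest. In the sketch below each model is any callable mapping the frames seen so far to a predicted next frame; the toy models are hypothetical stand-ins for trained ESN word models.

```python
def prediction_mse(model, frames):
    """Mean squared error of one-step-ahead prediction over an utterance."""
    total, count = 0.0, 0
    for n in range(len(frames) - 1):
        predicted = model(frames[: n + 1])  # the model sees frames 0..n
        target = frames[n + 1]              # desired signal: the next frame
        total += sum((p - t) ** 2 for p, t in zip(predicted, target))
        count += len(target)
    return total / count


def classify(models, frames):
    """Return (best_label, scores): the label whose model predicts best."""
    scores = {label: prediction_mse(model, frames) for label, model in models.items()}
    best = min(scores, key=scores.get)
    return best, scores


# Hypothetical toy "word models": one expects a rising ramp, one expects no change.
models = {
    "ramp": lambda history: [v + 1.0 for v in history[-1]],
    "flat": lambda history: list(history[-1]),
}
label, scores = classify(models, [[0.0], [1.0], [2.0], [3.0]])
```

Passing the whole history keeps the interface general; an ESN model would instead carry its reservoir state forward frame by frame.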

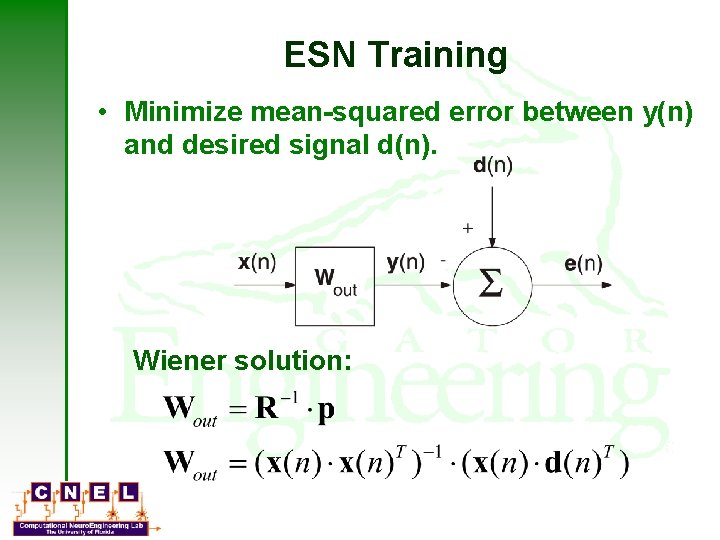

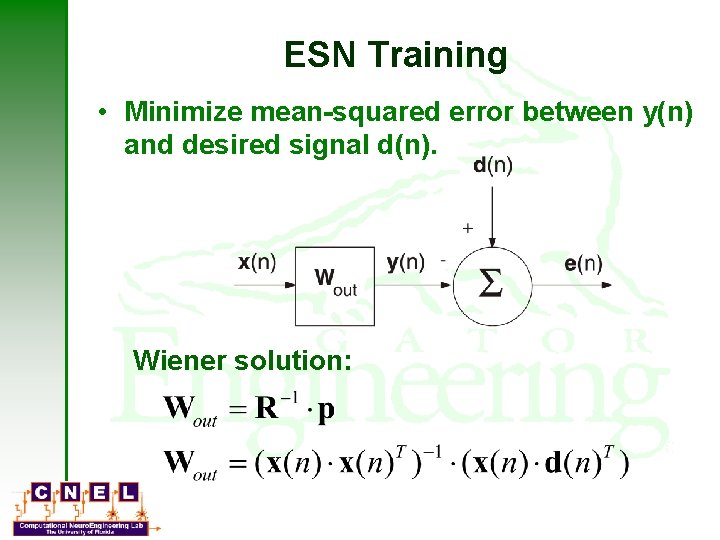

ESN Training
• Minimize the mean squared error between the output y(n) and the desired signal d(n). Wiener solution:

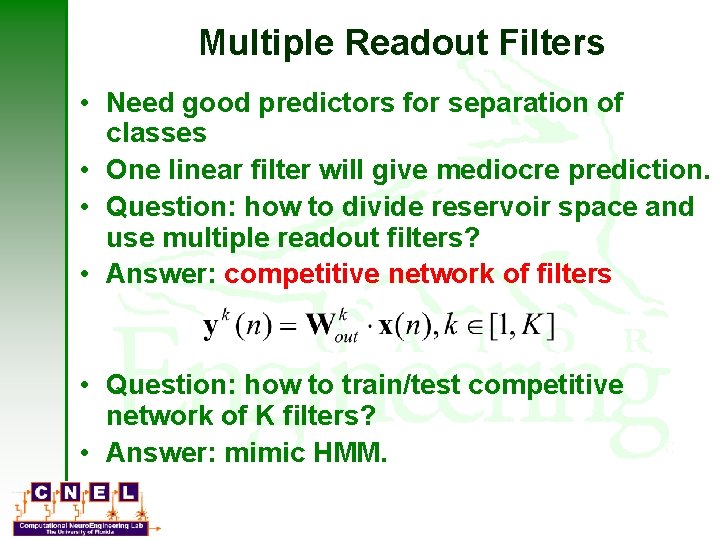
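The Wiener solution for a linear readout y(n) = Wout x(n) is the standard least-squares result Wout = (R⁻¹P)ᵀ, where R = (1/N) Σ x(n)x(n)ᵀ is the autocorrelation of the reservoir states and P = (1/N) Σ x(n)d(n)ᵀ their cross-correlation with the desired signal. Below is a pure-Python sketch that solves the normal equations by Gaussian elimination. The optional ridge term is an assumption added for numerical safety and is off by default; function names are hypothetical.

```python
def solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("R is (numerically) singular; try ridge > 0")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):
        z[i] = (M[i][n] - sum(M[i][j] * z[j] for j in range(i + 1, n))) / M[i][i]
    return z


def wiener_readout(states, desired, ridge=0.0):
    """Wout (dy x M) minimizing the MSE between Wout x(n) and d(n)."""
    N, m_dim, dy = len(states), len(states[0]), len(desired[0])
    R = [[sum(x[i] * x[j] for x in states) / N + (ridge if i == j else 0.0)
          for j in range(m_dim)] for i in range(m_dim)]
    P = [[sum(x[i] * d[k] for x, d in zip(states, desired)) / N
          for k in range(dy)] for i in range(m_dim)]
    # Row k of Wout solves R w = (column k of P).
    return [solve(R, [P[i][k] for i in range(m_dim)]) for k in range(dy)]


# Usage: two-PE "states" and a 1-D desired signal d = 2*x1 + 3*x2.
states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]]
desired = [[2.0 * a + 3.0 * b] for a, b in states]
Wout = wiener_readout(states, desired)
```

Because the solution is closed-form, training each readout is a single linear solve rather than an iterative optimization.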

Multiple Readout Filters
• Need good predictors for separation of classes
• One linear filter will give mediocre prediction
• Question: how to divide reservoir space and use multiple readout filters?
• Answer: competitive network of filters
• Question: how to train/test a competitive network of K filters?
• Answer: mimic the HMM
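One way to read “mimic the HMM” is segmental K-means applied to filters instead of Gaussians: split the frames into K contiguous groups, fit one least-squares readout per group, reassign each frame to the filter with the smallest squared error (winner-take-all), and repeat. The sketch below is an illustrative assumption of that loop, not necessarily the authors' exact procedure. For brevity it uses scalar desired outputs (the talk's desired signal is a 39-dimensional frame) and a tiny normal-equation solver; all names are hypothetical.

```python
def _solve(A, b):
    """Gaussian elimination with partial pivoting for a small square system."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        p = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[p] = M[p], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):
        z[i] = (M[i][n] - sum(M[i][j] * z[j] for j in range(i + 1, n))) / M[i][i]
    return z


def fit_filter(xs, ds, ridge=1e-9):
    """Least-squares weights w with d ~= w . x (a tiny ridge keeps R invertible)."""
    dim = len(xs[0])
    R = [[sum(x[i] * x[j] for x in xs) + (ridge if i == j else 0.0) for j in range(dim)]
         for i in range(dim)]
    p = [sum(x[i] * d for x, d in zip(xs, ds)) for i in range(dim)]
    return _solve(R, p)


def sq_err(w, x, d):
    """Squared prediction error of filter w on one frame."""
    return (d - sum(wi * xi for wi, xi in zip(w, x))) ** 2


def train_competitive(xs, ds, K, iters=10):
    """Alternate winner-take-all assignment and per-filter refitting."""
    N = len(xs)
    assign = [min(n * K // N, K - 1) for n in range(N)]  # contiguous initial split
    filters = [None] * K
    for _ in range(iters):
        for k in range(K):
            members = [n for n in range(N) if assign[n] == k]
            if members:  # keep the previous filter if a group empties
                filters[k] = fit_filter([xs[n] for n in members], [ds[n] for n in members])
        assign = [min((sq_err(filters[k], xs[n], ds[n]), k) for k in range(K)
                      if filters[k] is not None)[1] for n in range(N)]
    mse = sum(sq_err(filters[assign[n]], xs[n], ds[n]) for n in range(N)) / N
    return filters, assign, mse


# Usage: frames from two linear regimes (x = [feature, bias]); K = 2 should separate them.
xs = [[0.5 * i, 1.0] for i in range(1, 6)] + [[-0.5 * i, 1.0] for i in range(1, 6)]
ds = [2.0 * x[0] if x[0] > 0 else -3.0 * x[0] + 1.0 for x in xs]
filters, assign, mse = train_competitive(xs, ds, K=2)
```

Each refit can only lower the error on its group and each reassignment can only lower a frame's error, so the total error never increases from one iteration to the next.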

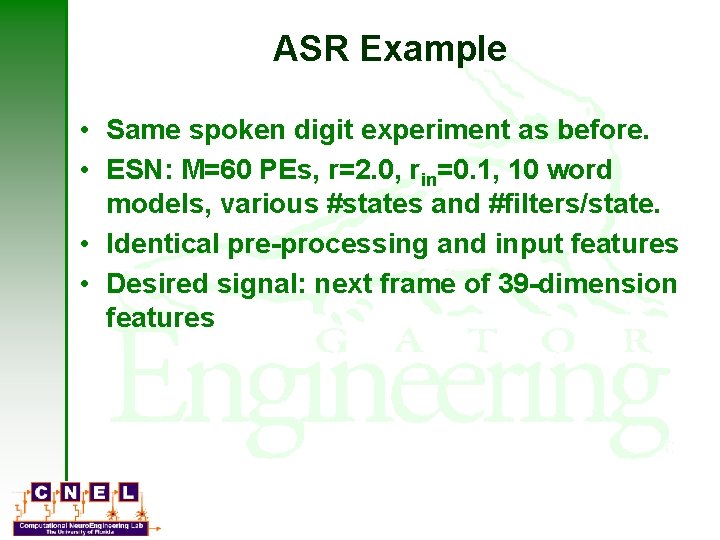

ASR Example
• Same spoken-digit experiment as before
• ESN: M = 60 PEs, r = 2.0, rin = 0.1, 10 word models, with various numbers of states and filters per state
• Identical pre-processing and input features
• Desired signal: next frame of the 39-dimensional features
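With the desired signal defined as the next feature frame, each word model's training pairs are simply the utterance shifted by one frame: the reservoir is driven by u(n) and the readout is trained toward d(n) = u(n+1). A minimal sketch (the function name is hypothetical), using 39-dimensional frames (13 HFCC + 13 Δ + 13 ΔΔ):

```python
def prediction_pairs(frames):
    """Pair each input frame u(n) with the desired signal d(n) = u(n + 1)."""
    inputs = frames[:-1]   # the last frame has no successor to predict
    desired = frames[1:]
    return inputs, desired


# Toy utterance: 4 frames of 39 features.
utterance = [[float(t)] * 39 for t in range(4)]
u, d = prediction_pairs(utterance)
```

Because the target is the input itself one step ahead, no labels beyond the word identity are needed to train each model.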

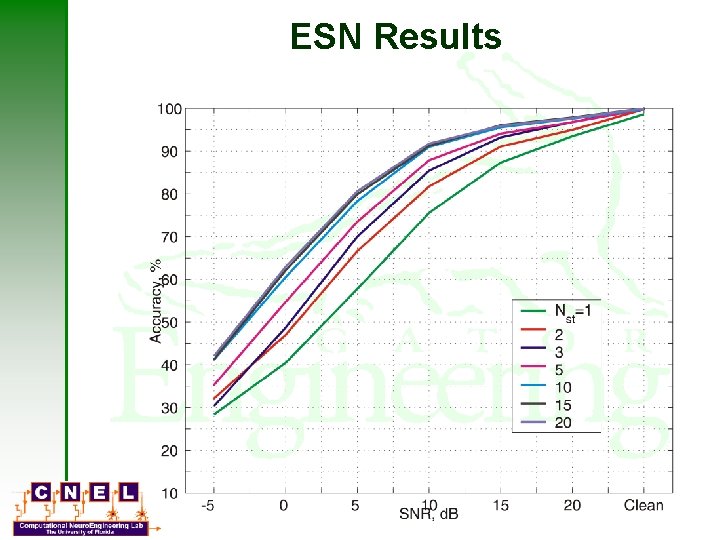

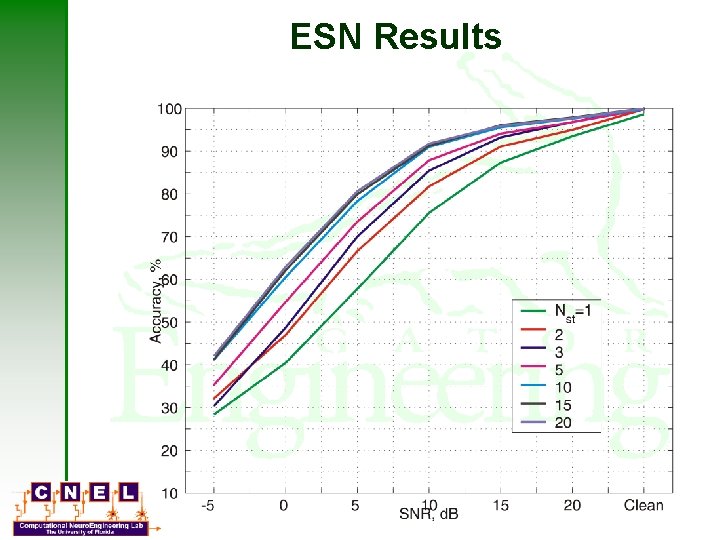

ESN Results

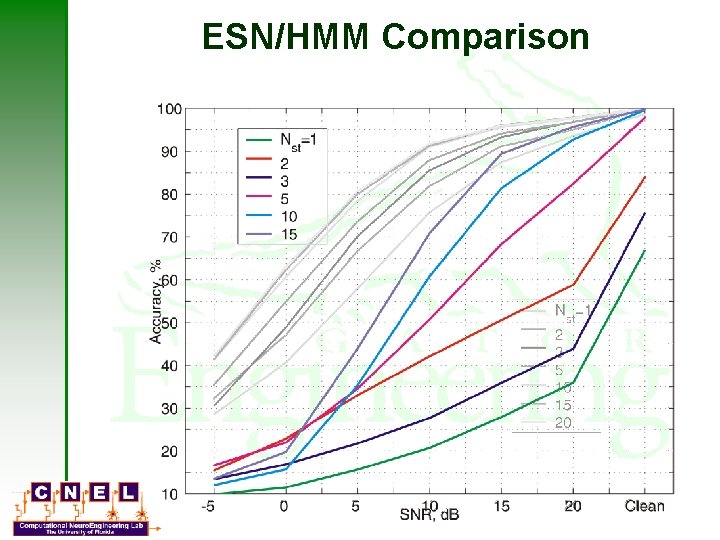

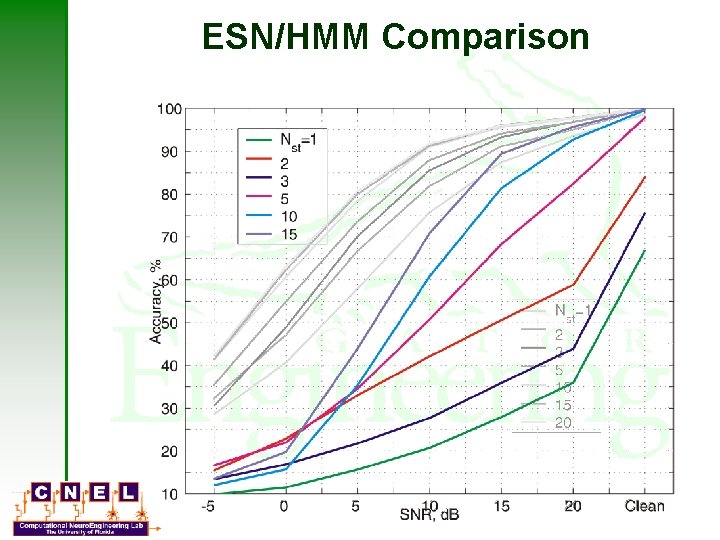

ESN/HMM Comparison

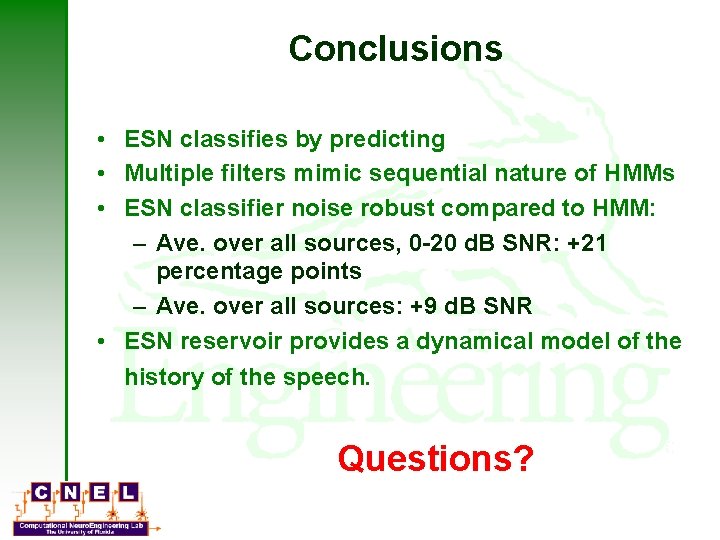

Conclusions
• ESN classifies by predicting
• Multiple filters mimic the sequential nature of HMMs
• ESN classifier is noise robust compared to the HMM:
– Average over all sources, 0-20 dB SNR: +21 percentage points
– Average over all sources: +9 dB SNR
• The ESN reservoir provides a dynamical model of the history of the speech
Questions?

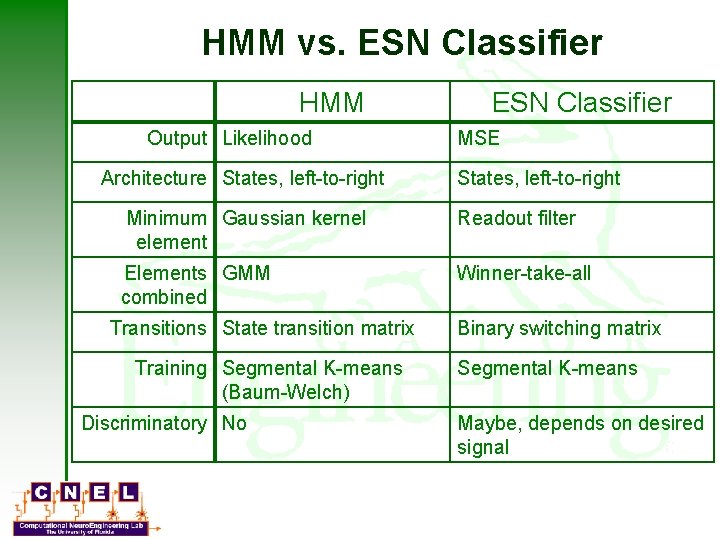

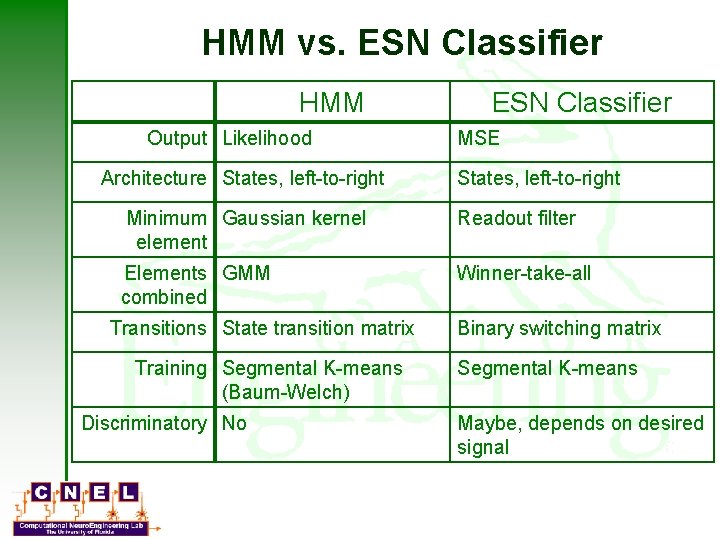

HMM vs. ESN Classifier

| | HMM | ESN Classifier |
|---|---|---|
| Output | Likelihood | MSE |
| Architecture | States, left-to-right | States, left-to-right |
| Minimum element | Gaussian kernel | Readout filter |
| Elements combined | GMM | Winner-take-all |
| Transitions | State transition matrix | Binary switching matrix |
| Training | Segmental K-means (Baum-Welch) | Segmental K-means |
| Discriminatory | No | Maybe, depends on desired signal |
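Both classifiers use left-to-right states trained by segmental K-means, whose core step is a Viterbi-style alignment of frames to states. For the ESN classifier, the per-frame cost of a state would plausibly be its winner-take-all prediction error (the minimum over that state's readout filters). The sketch below assumes such a frame-by-state cost table is given and finds the cheapest monotone segmentation in which every state gets at least one frame. It is an illustrative dynamic program; the function name is hypothetical.

```python
import math


def left_to_right_align(cost):
    """Min-cost left-to-right alignment of T frames to S states.

    cost[t][s] is the error of state s on frame t. The path starts in state 0,
    ends in state S-1, and each frame either stays or advances by one state.
    Returns (total_cost, state_sequence).
    """
    T, S = len(cost), len(cost[0])
    if T < S:
        raise ValueError("need at least one frame per state")
    best = [[math.inf] * S for _ in range(T)]
    back = [[0] * S for _ in range(T)]
    best[0][0] = cost[0][0]
    for t in range(1, T):
        for s in range(S):
            stay = best[t - 1][s]
            advance = best[t - 1][s - 1] if s > 0 else math.inf
            back[t][s] = s if stay <= advance else s - 1
            best[t][s] = min(stay, advance) + cost[t][s]
    path = [S - 1]
    for t in range(T - 1, 0, -1):
        path.append(back[t][path[-1]])  # follow back-pointers to frame 0
    path.reverse()
    return best[T - 1][S - 1], path


# Usage: 5 frames, 2 states; state 0 fits the first 2 frames, state 1 the rest.
cost = [[0.1, 2.0], [0.2, 1.5], [3.0, 0.1], [2.5, 0.2], [4.0, 0.3]]
total, states = left_to_right_align(cost)
```

In a full training loop, the resulting segmentation would decide which frames each state's filters are refit on, and the loop repeats until the alignment stops changing.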