Minimum Effort Design Space Subsetting for Configurable Caches

Hammam Alsafrjalani, Ann Gordon-Ross+, and Pablo Viana
Department of Electrical and Computer Engineering, University of Florida, Gainesville, Florida, USA
Also affiliated with NSF Center for High-Performance Reconfigurable Computing
+ This work was supported by National Science Foundation (NSF) grant CNS-0953447

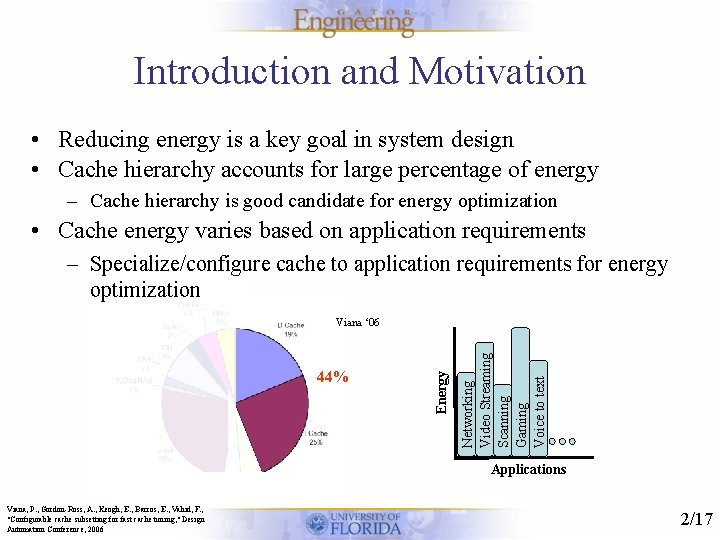

Introduction and Motivation
• Reducing energy is a key goal in system design
• The cache hierarchy accounts for a large percentage of energy
  – The cache hierarchy is a good candidate for energy optimization
• Cache energy varies based on application requirements
  – Specialize/configure the cache to application requirements for energy optimization
[Figure: example applications (networking, video streaming, scanning, gaming, voice to text); labeled "44% energy" (Viana '06)]
Reference: Viana, P., Gordon-Ross, A., Keogh, E., Barros, E., Vahid, F., "Configurable cache subsetting for fast cache tuning," Design Automation Conference, 2006.

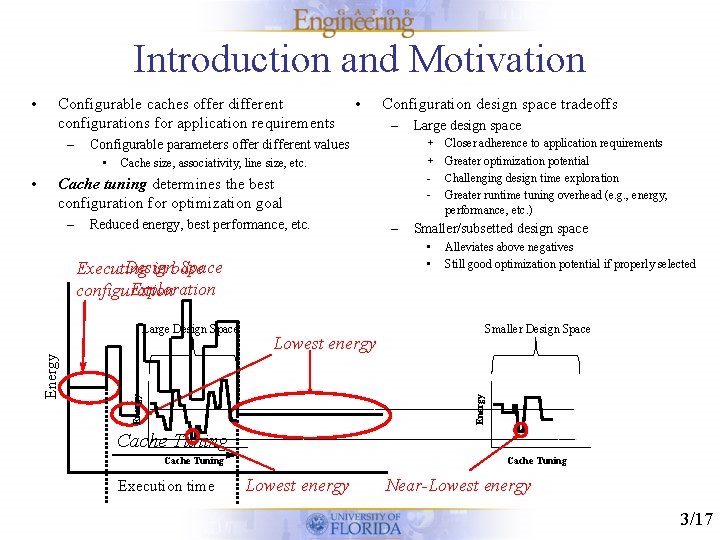

Introduction and Motivation
• Configurable caches offer different configurations for application requirements
  – Configurable parameters offer different values
  – Cache size, associativity, line size, etc.
• Cache tuning determines the best configuration for an optimization goal
  – Reduced energy, best performance, etc.
• Configuration design space tradeoffs
  – Large design space
    + Closer adherence to application requirements
    + Greater optimization potential
    - Challenging design-time exploration
    - Greater runtime tuning overhead (e.g., energy, performance, etc.)
  – Smaller/subsetted design space
    + Alleviates the above negatives
    + Still good optimization potential if properly selected
[Figure: energy over execution time while executing in the base configuration and during cache tuning (design space exploration); the large design space reaches the lowest energy, the smaller design space reaches near-lowest energy]

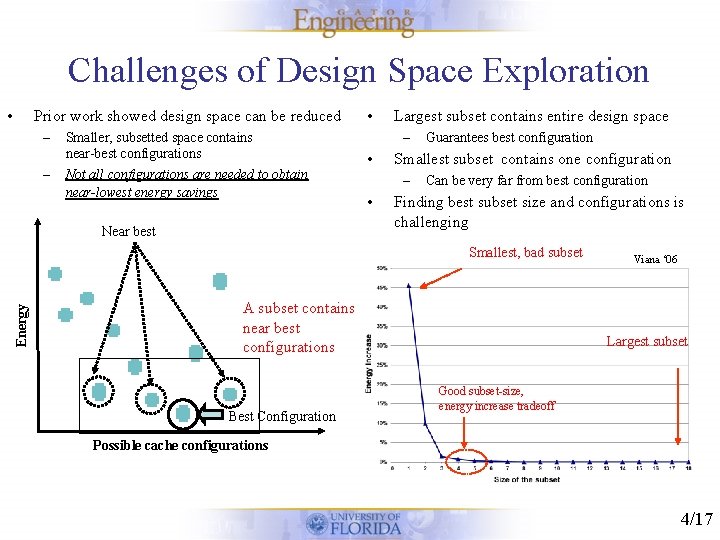

Challenges of Design Space Exploration
• Prior work showed the design space can be reduced
  – A smaller, subsetted space contains near-best configurations
  – Not all configurations are needed to obtain near-lowest energy
• Largest subset contains the entire design space
  – Guarantees the best configuration
• Smallest subset contains one configuration
  – Can be very far from the best configuration
• Finding the best subset size and configurations is challenging
[Figure: energy versus possible cache configurations (Viana '06), marking the best configuration, a subset containing near-best configurations, the smallest (bad) subset, the largest subset, and a good tradeoff between subset size and energy increase]

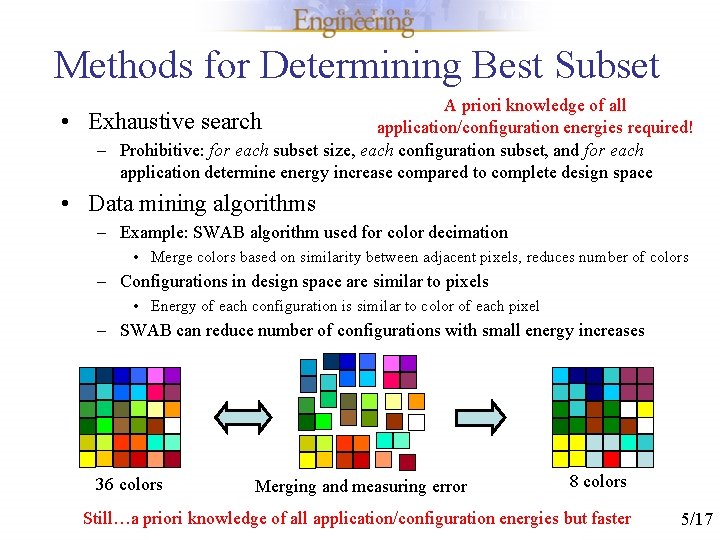

Methods for Determining Best Subset
• Exhaustive search
  – A priori knowledge of all application/configuration energies required!
  – Prohibitive: for each subset size, each configuration subset, and each application, determine the energy increase compared to the complete design space
• Data mining algorithms
  – Example: SWAB algorithm used for color decimation
    • Merges colors based on similarity between adjacent pixels, reducing the number of colors
  – Configurations in the design space are similar to pixels
    • The energy of each configuration is similar to the color of each pixel
  – SWAB can reduce the number of configurations with small energy increases
  – Still requires a priori knowledge of all application/configuration energies, but is faster
[Figure: color decimation from 36 colors to 8 colors by merging and measuring error]
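
To give a rough sense of why exhaustive search is prohibitive, the sketch below counts the (subset, application) pairs it would have to score. The design-space size of 18 configurations is an assumption made only for illustration; the 36 applications match the benchmark set size given on the experimental setup slide. The function name is hypothetical.

```python
from math import comb


def exhaustive_evaluations(num_configs: int, num_apps: int) -> int:
    """Count (subset, application) pairs an exhaustive search must score.

    Every non-empty subset of the design space is a candidate, and each
    candidate is scored against every application.
    """
    num_subsets = sum(comb(num_configs, k) for k in range(1, num_configs + 1))
    return num_subsets * num_apps


# Assumed design space of 18 configurations, 36 applications.
print(exhaustive_evaluations(18, 36))
```

The count grows as 2^n in the number of configurations, which is why a merging heuristic such as SWAB is attractive even though it needs the same energy table.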

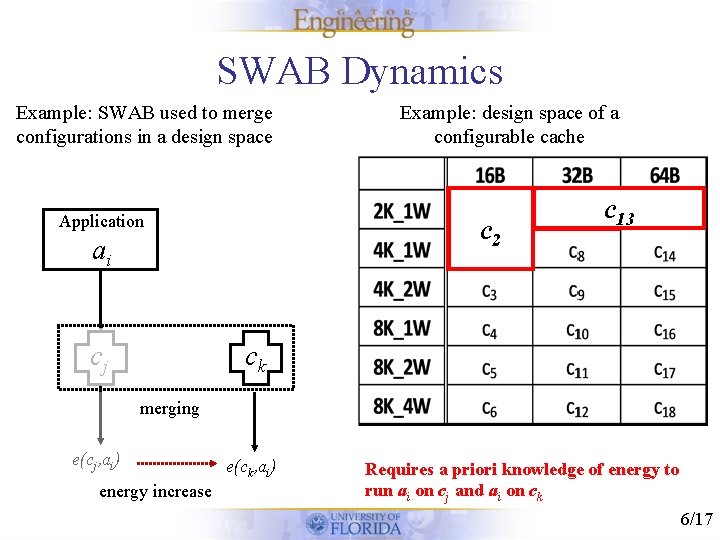

SWAB Dynamics
• Example: SWAB used to merge configurations in the design space of a configurable cache
• Merging configurations cj and ck for application ai is scored by the energy increase, using e(cj, ai) and e(ck, ai)
• Requires a priori knowledge of the energy to run ai on cj and ai on ck
[Figure: application ai and a design space of configurations (c7, c12, c13, ...), with cj and ck being merged]
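
The merging idea can be sketched with a simplified greedy bottom-up reduction. This is not the SWAB algorithm itself, only a stand-in that repeatedly drops the configuration whose removal raises total energy the least. The energy table, application names, and configuration names are hypothetical.

```python
def subset_energy(energy, subset):
    """Total energy when each application runs on its best configuration in `subset`."""
    return sum(min(app_energy[c] for c in subset) for app_energy in energy.values())


def greedy_subset(energy, target_size):
    """Shrink the design space one configuration at a time.

    At each step, drop the configuration whose removal increases total
    energy the least (a simplified bottom-up merge, not SWAB itself).
    """
    subset = set(next(iter(energy.values())))
    while len(subset) > target_size:
        victim = min(sorted(subset),
                     key=lambda c: subset_energy(energy, subset - {c}))
        subset.remove(victim)
    return subset


# Hypothetical energies e(c, a): application -> configuration -> energy.
energy = {
    "a1": {"c1": 5.0, "c2": 4.0, "c3": 9.0, "c4": 8.0},
    "a2": {"c1": 7.0, "c2": 6.5, "c3": 3.0, "c4": 3.5},
    "a3": {"c1": 2.0, "c2": 2.5, "c3": 6.0, "c4": 5.0},
}
print(sorted(greedy_subset(energy, 2)))
```

Note that every step reads e(c, a) for all applications, which is the a priori knowledge requirement the slide points out.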

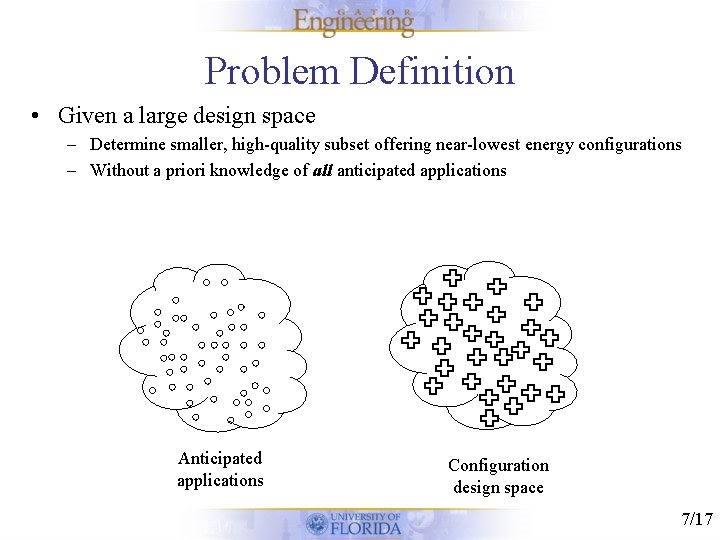

Problem Definition
• Given a large design space
  – Determine a smaller, high-quality subset offering near-lowest energy configurations
  – Without a priori knowledge of all anticipated applications
[Figure: anticipated applications and the configuration design space]

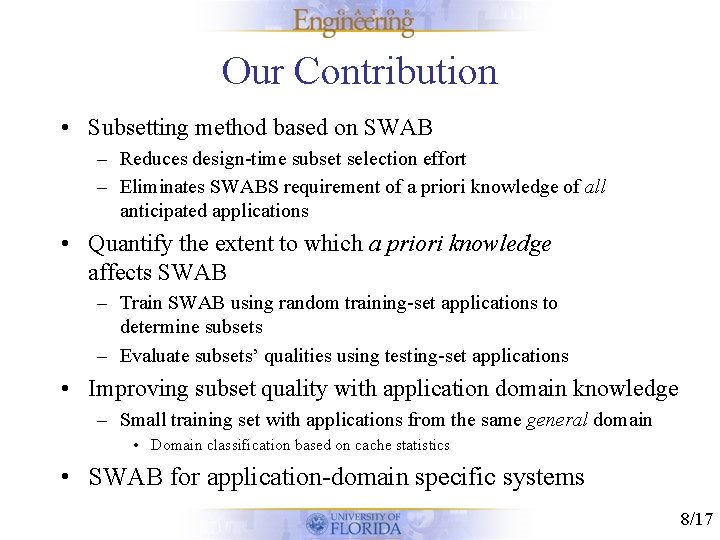

Our Contribution
• Subsetting method based on SWAB
  – Reduces design-time subset selection effort
  – Eliminates SWAB's requirement of a priori knowledge of all anticipated applications
• Quantify the extent to which a priori knowledge affects SWAB
  – Train SWAB using random training-set applications to determine subsets
  – Evaluate the subsets' quality using testing-set applications
• Improving subset quality with application domain knowledge
  – Small training set with applications from the same general domain
    • Domain classification based on cache statistics
    • SWAB for application-domain specific systems

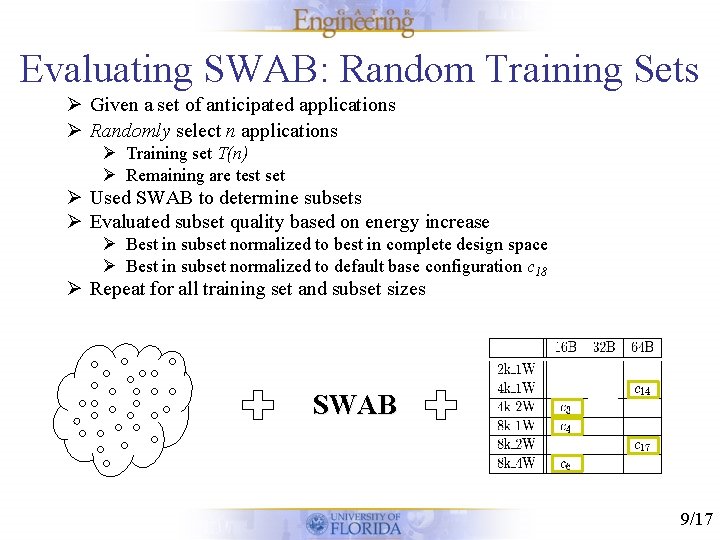

Evaluating SWAB: Random Training Sets
• Given a set of anticipated applications
  – Randomly select n applications to form the training set T(n)
  – The remaining applications are the test set
• Used SWAB to determine subsets
• Evaluated subset quality based on energy increase
  – Best in subset normalized to best in complete design space
  – Best in subset normalized to default base configuration c18
• Repeat for all training set and subset sizes
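
The evaluation procedure above can be sketched as follows. This is a minimal illustration, assuming energies are available as a nested dictionary; the function names, the energy values, and the hard-coded subset (standing in for the output of a subsetting step) are all hypothetical.

```python
import random


def best_energy(app_energy, configs):
    """Lowest energy an application achieves over the given configurations."""
    return min(app_energy[c] for c in configs)


def split_apps(apps, n, seed=0):
    """Randomly pick n training applications T(n); the rest form the test set."""
    rng = random.Random(seed)
    training = rng.sample(sorted(apps), n)
    testing = [a for a in sorted(apps) if a not in training]
    return training, testing


def subset_quality(energy, test_apps, subset, base_config):
    """Average normalized energy of `subset` over the test applications.

    Returns (vs_best, vs_base): best-in-subset energy divided by the best
    in the complete design space, and by the base configuration's energy.
    Lower values mean a higher quality subset.
    """
    vs_best, vs_base = [], []
    for app in test_apps:
        e = energy[app]
        in_subset = best_energy(e, subset)
        vs_best.append(in_subset / best_energy(e, e.keys()))
        vs_base.append(in_subset / e[base_config])
    n = len(test_apps)
    return sum(vs_best) / n, sum(vs_base) / n


# Hypothetical energies: application -> configuration -> energy.
energy = {
    "a1": {"c1": 5.0, "c2": 4.0, "c3": 9.0, "c4": 8.0},
    "a2": {"c1": 7.0, "c2": 6.5, "c3": 3.0, "c4": 3.5},
    "a3": {"c1": 2.0, "c2": 2.5, "c3": 6.0, "c4": 5.0},
}
training, testing = split_apps(energy, n=1)
print(training, testing)
# Score a hand-picked subset on two applications, with c4 as the base.
print(subset_quality(energy, ["a1", "a3"], {"c2", "c3"}, base_config="c4"))
```

In a full experiment the subset would be derived from the training applications only and scored on the disjoint test applications, repeated for each training-set and subset size.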

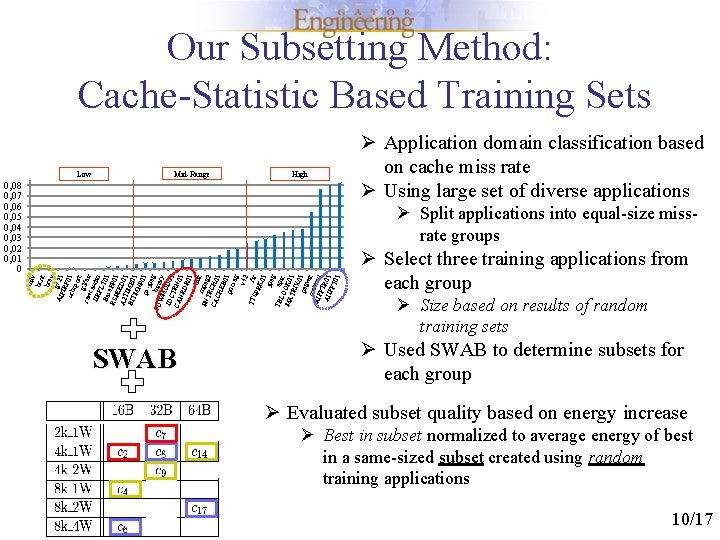

Our Subsetting Method: Cache-Statistic Based Training Sets
• Application domain classification based on cache miss rate
  – Using a large set of diverse applications
• Split applications into equal-size miss-rate groups (low, mid-range, high)
• Select three training applications from each group
  – Size based on results of random training sets
• Used SWAB to determine subsets for each group
• Evaluated subset quality based on energy increase
  – Best in subset normalized to average energy of best in a same-sized subset created using random training applications
[Figure: benchmark applications sorted by cache miss rate (axis from 0 to 0.08) and split into low, mid-range, and high groups]
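
The grouping step can be sketched as below. It assumes miss rates are given as a dictionary; the application names and miss-rate values are hypothetical placeholders, not the measured benchmark data, and the handling of leftover applications is a design choice of this sketch.

```python
import random


def miss_rate_groups(miss_rates, num_groups=3):
    """Sort applications by miss rate and split them into equal-size groups."""
    ordered = sorted(miss_rates, key=miss_rates.get)
    size = len(ordered) // num_groups
    groups = [ordered[i * size:(i + 1) * size] for i in range(num_groups)]
    # Any leftover applications (when the count is not divisible) join the last group.
    groups[-1].extend(ordered[num_groups * size:])
    return groups


def pick_training_sets(groups, per_group=3, seed=0):
    """Randomly select `per_group` training applications from each group."""
    rng = random.Random(seed)
    return [rng.sample(group, per_group) for group in groups]


# Hypothetical miss rates for nine placeholder applications.
miss_rates = {
    "app0": 0.050, "app1": 0.002, "app2": 0.071,
    "app3": 0.010, "app4": 0.033, "app5": 0.001,
    "app6": 0.064, "app7": 0.027, "app8": 0.005,
}
low, mid, high = miss_rate_groups(miss_rates)
print(low, mid, high)
print(pick_training_sets([low, mid, high], per_group=2))
```

Each group's training set would then be passed to the subsetting step separately, giving one subset per miss-rate domain.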

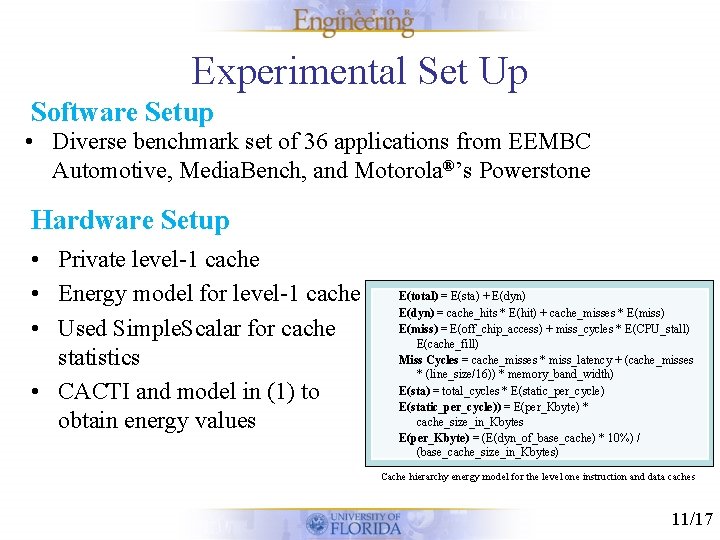

Experimental Setup
Software setup
• Diverse benchmark set of 36 applications from EEMBC Automotive, MediaBench, and Motorola®'s Powerstone
Hardware setup
• Private level-1 cache
• Energy model for level-1 cache
  – Used SimpleScalar for cache statistics
  – CACTI and the model in (1) to obtain energy values
(1) Cache hierarchy energy model for the level-one instruction and data caches:
  E(total) = E(sta) + E(dyn)
  E(dyn) = cache_hits * E(hit) + cache_misses * E(miss)
  E(miss) = E(off_chip_access) + miss_cycles * E(CPU_stall) + E(cache_fill)
  miss_cycles = cache_misses * miss_latency + (cache_misses * (line_size/16)) * memory_band_width
  E(sta) = total_cycles * E(static_per_cycle)
  E(static_per_cycle) = E(per_Kbyte) * cache_size_in_Kbytes
  E(per_Kbyte) = (E(dyn_of_base_cache) * 10%) / base_cache_size_in_Kbytes
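
As a worked illustration, the energy model can be transcribed term by term into a short function. This is a sketch that follows the slide's equations literally; the class and field names are hypothetical, and the parameter values are made-up numbers in arbitrary units, not CACTI or SimpleScalar output.

```python
from dataclasses import dataclass


@dataclass
class CacheParams:
    e_hit: float                   # E(hit)
    e_off_chip_access: float       # E(off_chip_access)
    e_cpu_stall: float             # E(CPU_stall)
    e_cache_fill: float            # E(cache_fill)
    miss_latency: float
    memory_band_width: float
    line_size: int                 # bytes
    cache_size_kbytes: float
    e_dyn_base: float              # E(dyn_of_base_cache)
    base_cache_size_kbytes: float


def total_energy(cache_hits, cache_misses, total_cycles, p):
    """E(total) = E(sta) + E(dyn), following the slide's model term by term."""
    miss_cycles = (cache_misses * p.miss_latency
                   + (cache_misses * (p.line_size / 16)) * p.memory_band_width)
    e_miss = p.e_off_chip_access + miss_cycles * p.e_cpu_stall + p.e_cache_fill
    e_dyn = cache_hits * p.e_hit + cache_misses * e_miss
    e_per_kbyte = (p.e_dyn_base * 0.10) / p.base_cache_size_kbytes
    e_static_per_cycle = e_per_kbyte * p.cache_size_kbytes
    e_sta = total_cycles * e_static_per_cycle
    return e_sta + e_dyn


# Made-up parameter values in arbitrary units, for illustration only.
params = CacheParams(
    e_hit=1.0, e_off_chip_access=10.0, e_cpu_stall=0.5, e_cache_fill=2.0,
    miss_latency=20, memory_band_width=4, line_size=32,
    cache_size_kbytes=4, e_dyn_base=2.0, base_cache_size_kbytes=8,
)
print(total_energy(cache_hits=100, cache_misses=2, total_cycles=1000, p=params))
```

With these numbers, miss_cycles is 56, E(miss) is 40, E(dyn) is 180, and E(sta) is about 100, so the total is about 280.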

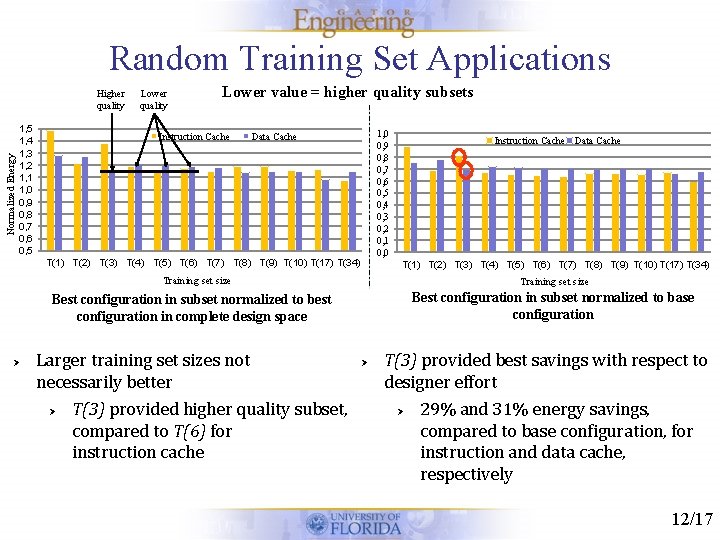

Random Training Set Applications
[Figure: two plots of normalized energy versus training set size, T(1) through T(10), T(17), and T(34), for the instruction cache and data cache: best configuration in subset normalized to base configuration, and best configuration in subset normalized to best configuration in complete design space. Lower value = higher quality subsets.]
• Larger training set sizes are not necessarily better
  – T(3) provided a higher quality subset compared to T(6) for the instruction cache
• T(3) provided the best savings with respect to designer effort
  – 29% and 31% energy savings, compared to the base configuration, for the instruction and data cache, respectively

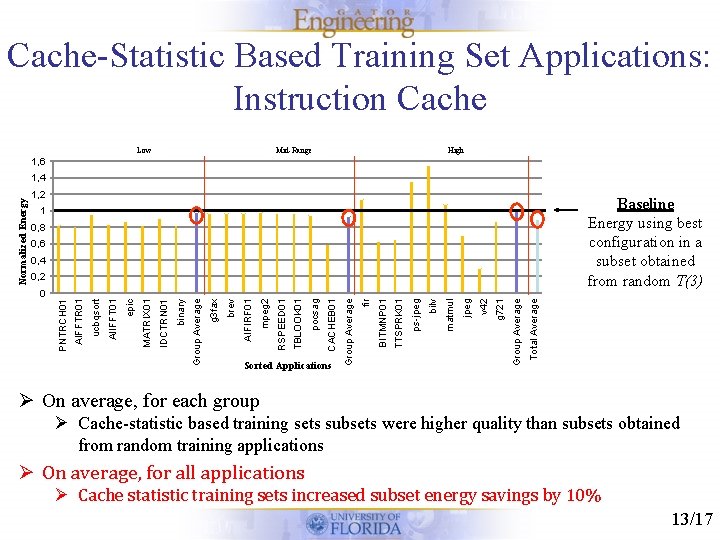

Cache-Statistic Based Training Set Applications: Instruction Cache
[Figure: normalized energy per application, sorted into low, mid-range, and high miss-rate groups, with group averages and the total average. Baseline: energy using the best configuration in a subset obtained from random T(3).]
• On average, for each group
  – Subsets from cache-statistic based training sets were higher quality than subsets obtained from random training applications
• On average, for all applications
  – Cache-statistic training sets increased subset energy savings by 10%

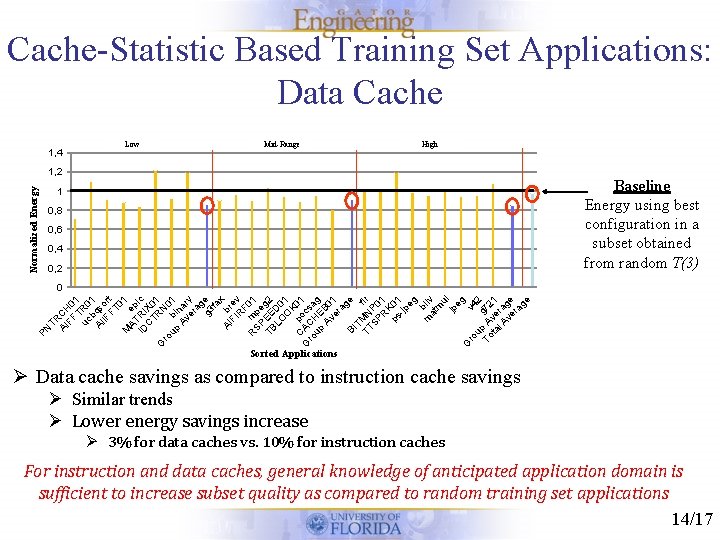

Cache-Statistic Based Training Set Applications: Data Cache
[Figure: normalized energy per application, sorted into low, mid-range, and high miss-rate groups, with group averages and the total average. Baseline: energy using the best configuration in a subset obtained from random T(3).]
• Data cache savings as compared to instruction cache savings
  – Similar trends
  – Lower energy savings increase
    • 3% for data caches vs. 10% for instruction caches
• For instruction and data caches, general knowledge of the anticipated application domain is sufficient to increase subset quality as compared to random training set applications

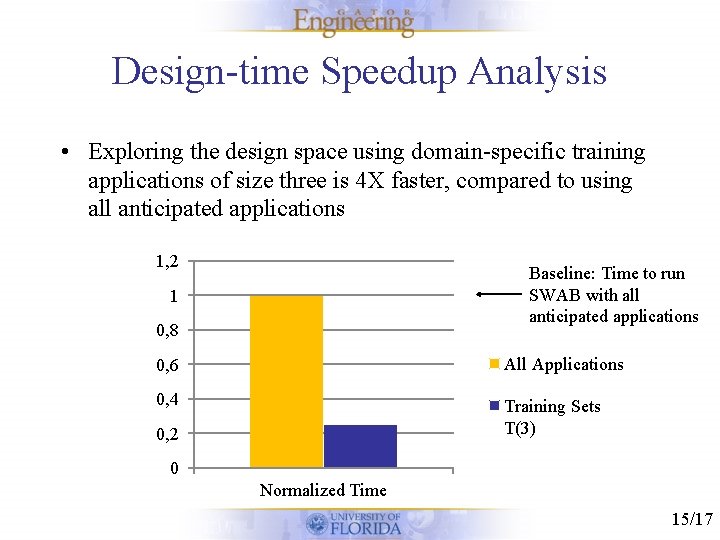

Design-time Speedup Analysis
• Exploring the design space using domain-specific training sets of size three is 4X faster than using all anticipated applications
[Figure: normalized time for all applications versus training sets T(3). Baseline: time to run SWAB with all anticipated applications.]

Conclusion
• Reducing design space exploration effort
  – Used training set applications to determine design space subsets, and evaluated the subsets' energy savings using disjoint testing applications
• Subset quality
  – Random training set applications provided quality configuration subsets, and domain-specific training applications increased subset quality
• 4X reduction in design space exploration time using domain-specific training applications as compared to using all anticipated applications
• Our training set methods enable designers to leverage configurable cache energy savings with less design effort

Questions
