Migratable Objects and Task-Based Parallel Programming with Charm++

Challenges in Parallel Programming
• Applications are getting more sophisticated
  – Adaptive refinement
  – Multi-scale, multi-module, multi-physics
  – E.g., load imbalance emerges as a huge problem for some apps
  – Exacerbated by strong scaling needs from apps
  – Strong scaling: run an application with the same input data on more processors and get better speedups
  – Weak scaling: run larger datasets on more processors in the same time
• Hardware variability
  – Static/dynamic
  – Heterogeneity: processor types, process variation, etc.
  – Power/temperature/energy
  – Component failure

Our View
• To deal with these challenges, we must seek:
  – Not full automation
  – Not full burden on app developers
  – But: a good division of labor between the system and app developers
  – Programmer: what to do in parallel; system: where and when
• Develop the language driven by the needs of real applications
  – Avoid “platonic” pursuit of “beautiful” ideas
  – Co-developed with NAMD, ChaNGa, OpenAtom, …
• Pragmatic focus
  – Ground-up development, portability
  – Accessibility for a broad user base

What Is Charm++?
• Charm++ is a generalized approach to writing parallel programs
  – An alternative to the likes of MPI, UPC, GA, etc.
  – But not to sequential languages such as C, C++, and Fortran
• Represents:
  – The style of writing parallel programs
  – The runtime system
  – And the entire ecosystem that surrounds it
• Three design principles:
  – Over-decomposition, migratability, asynchrony

Over-Decomposition
• Decompose the work units and data units into many more pieces than execution units
  – Cores/nodes/…
• Not so hard: we do decomposition anyway

Migratability
• Allow these work and data units to be migratable at runtime
  – I.e., the programmer or runtime can move them
• Consequences for the application developer
  – Communication must now be addressed to logical units with global names, not to physical processors
  – But this is a good thing
• Consequences for the RTS
  – Must keep track of where each unit is
  – Naming and location management

Asynchrony: Message-Driven Execution
• With over-decomposition and migratability:
  – You have multiple units on each processor
  – They address each other via logical names
• Need for scheduling:
  – What sequence should the work units execute in?
  – One answer: let the programmer sequence them
  – Seen in current codes (e.g., some AMR frameworks)
• Message-driven execution:
  – Let the work unit that happens to have data (a “message”) available for it execute next
  – Let the RTS select among ready work units
  – The programmer should not specify what executes next, but can influence it via priorities
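To make the scheduling idea concrete, here is a minimal single-processor sketch in plain C++. It is not the Charm++ runtime: the names `Scheduler`, `Message`, `send`, and `demoOrder` are hypothetical, and the rule that a lower priority value runs first is an assumption made for the illustration. The point is only that senders enqueue work and the scheduler, not the sender, picks what runs next.

```cpp
#include <cstddef>
#include <functional>
#include <queue>
#include <string>
#include <utility>
#include <vector>

// A pending invocation. Lower `priority` runs first (an assumption of this
// sketch); `seq` keeps equal-priority messages in arrival order.
struct Message {
    int priority;
    std::size_t seq;
    std::function<void()> work;
};

// Comparator for std::priority_queue: returns true when `a` should run later than `b`.
struct RunsLater {
    bool operator()(const Message& a, const Message& b) const {
        if (a.priority != b.priority) return a.priority > b.priority;
        return a.seq > b.seq;
    }
};

// Hypothetical per-processor scheduler with a message queue.
class Scheduler {
public:
    // Enqueue work; the caller does not wait for it to run.
    void send(int priority, std::function<void()> work) {
        queue_.push(Message{priority, nextSeq_++, std::move(work)});
    }

    // Run until the queue is empty. Executing a message may enqueue more messages.
    void run() {
        while (!queue_.empty()) {
            Message m = queue_.top();  // copy out before popping
            queue_.pop();
            m.work();
        }
    }

private:
    std::priority_queue<Message, std::vector<Message>, RunsLater> queue_;
    std::size_t nextSeq_ = 0;
};

// Three work units are enqueued as B, A, C; the scheduler decides the order.
std::vector<std::string> demoOrder() {
    Scheduler s;
    std::vector<std::string> log;
    s.send(5, [&] { log.push_back("B"); });
    s.send(1, [&] {
        log.push_back("A");
        s.send(0, [&] { log.push_back("A-followup"); });  // triggered by A
    });
    s.send(5, [&] { log.push_back("C"); });
    s.run();
    return log;
}
```

Calling `demoOrder()` yields the sequence A, A-followup, B, C: execution order follows message availability and priority rather than the order in which the sender issued the calls.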

Realization of This Model in Charm++
• Over-decomposed entities: chares
  – Chares are C++ objects
  – With methods designated as “entry” methods
  – Which can be invoked asynchronously by remote chares
• Chares are organized into indexed collections
  – Each collection may have its own indexing scheme
  – 1D, ..., 6D
  – Sparse
  – Bitvector or string as an index
• Chares communicate via asynchronous method invocations
  – A[i].foo(…);
  – A is the name of a collection, i is the index of the particular chare

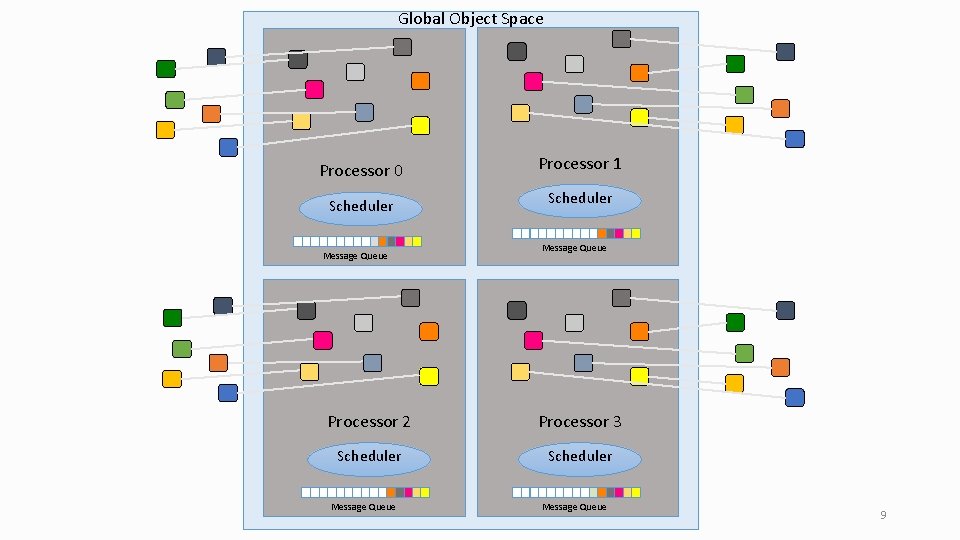

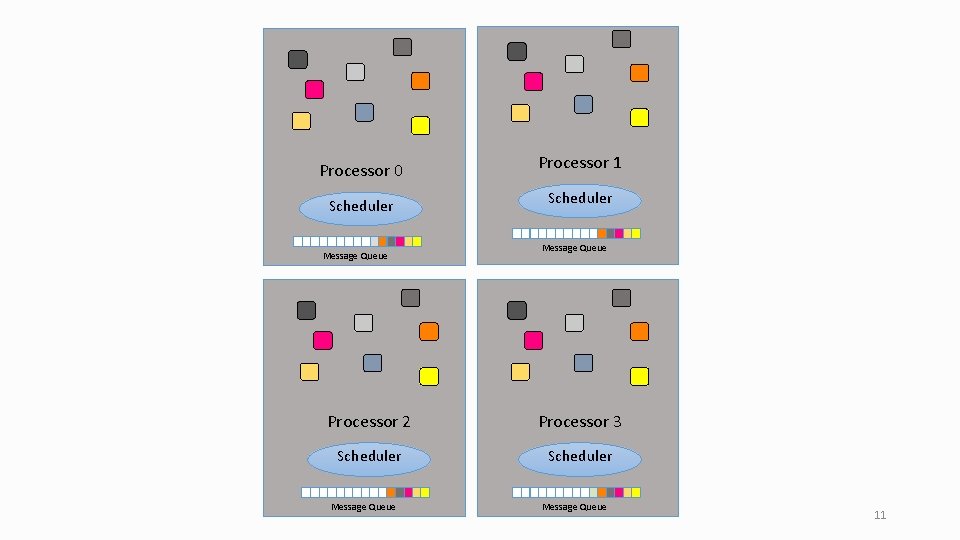

[Figure: global object space mapped onto Processors 0 to 3, with schedulers and message queues]


Message-Driven Execution
[Figure: an invocation A[23].foo(…) shown with Processor 0 and Processor 1, each with a scheduler and message queue]

Processor 0 Processor 1 Scheduler Message Queue Processor 2 Processor 3 Scheduler Message Queue 11

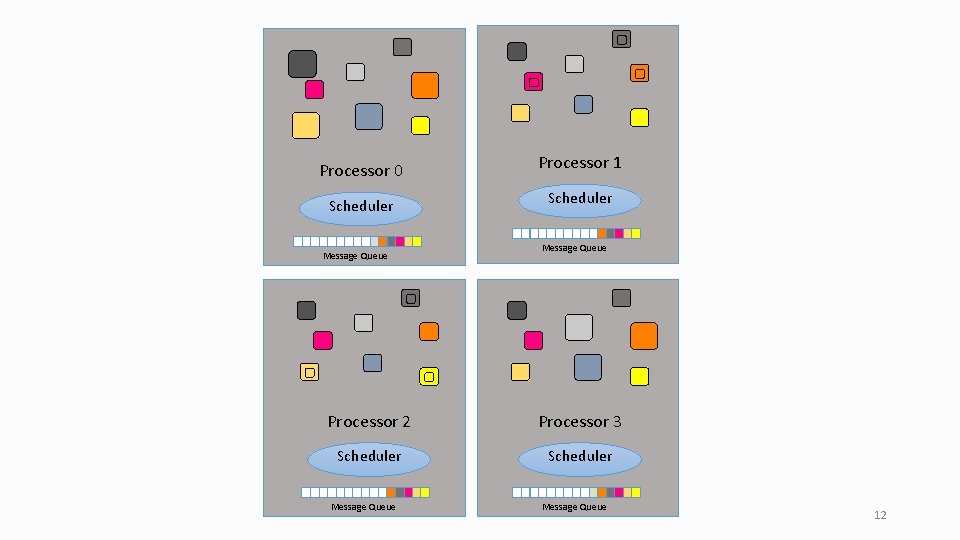

Processor 0 Processor 1 Scheduler Message Queue Processor 2 Processor 3 Scheduler Message Queue 12

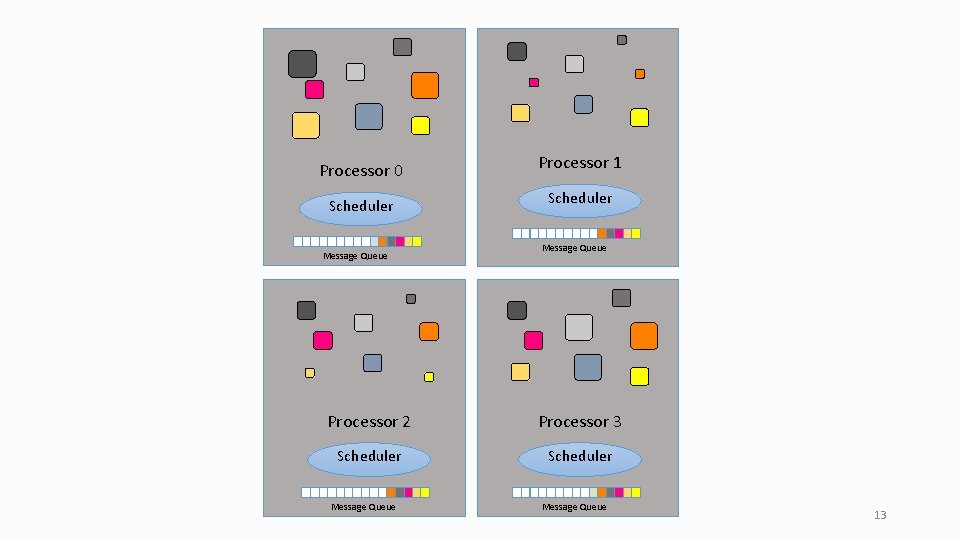

Processor 0 Processor 1 Scheduler Message Queue Processor 2 Processor 3 Scheduler Message Queue 13

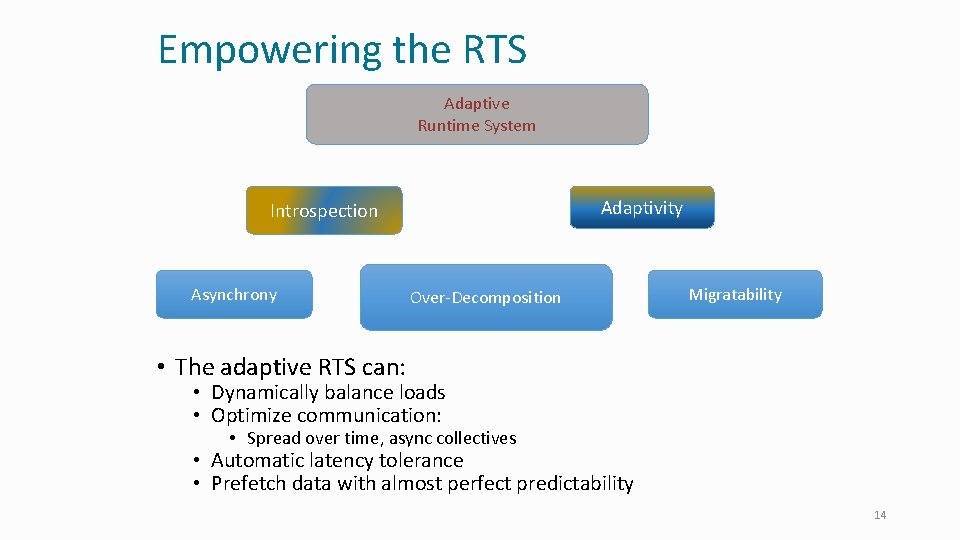

Empowering the RTS
[Figure labels: Adaptive Runtime System, Adaptivity, Introspection, Asynchrony, Over-Decomposition, Migratability]
• The adaptive RTS can:
  – Dynamically balance loads
  – Optimize communication: spread over time, async collectives
  – Automatic latency tolerance
  – Prefetch data with almost perfect predictability

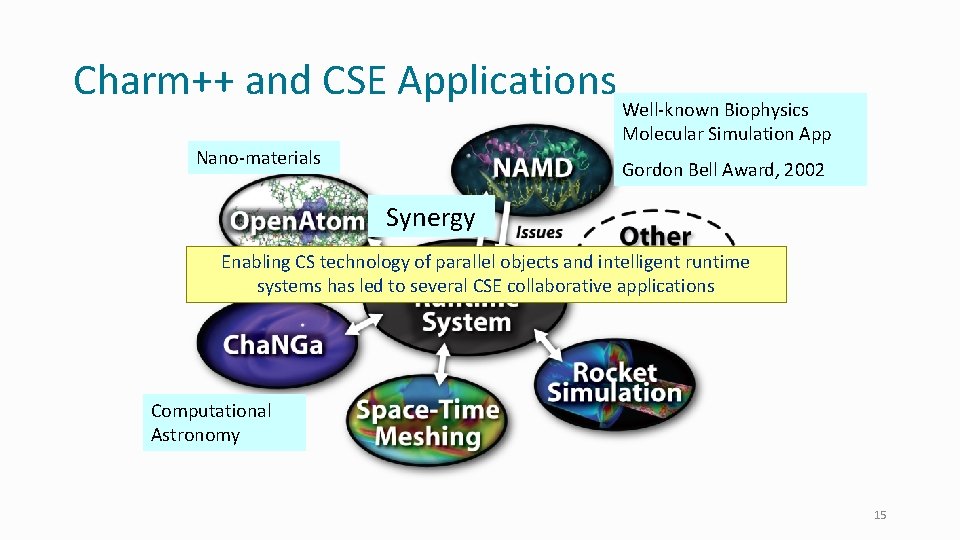

Charm++ and CSE Applications
[Figure labels: Nano-materials; Well-known Biophysics Molecular Simulation App (Gordon Bell Award, 2002); Computational Astronomy]
Synergy: enabling CS technology of parallel objects and intelligent runtime systems has led to several CSE collaborative applications

Summary: What Is Charm++?
• Charm++ is a way of parallel programming
• It is based on:
  – Objects
  – Over-decomposition
  – Asynchrony: asynchronous method invocations
  – Migratability
  – Adaptive runtime system
• It has been co-developed synergistically with multiple CSE applications

Parallel Programming with Charm++: Grainsize

Grainsize
• Charm++ philosophy:
  – Let the programmer decompose their work and data into coarse-grained entities
• It is important to understand what I mean by coarse-grained entities
  – You don’t write sequential programs that some system will auto-decompose
  – You don’t write programs where there is one object for each float
  – You consciously choose a grainsize, but choose it independently of the number of processors
  – Or parameterize it, so you can tune it later

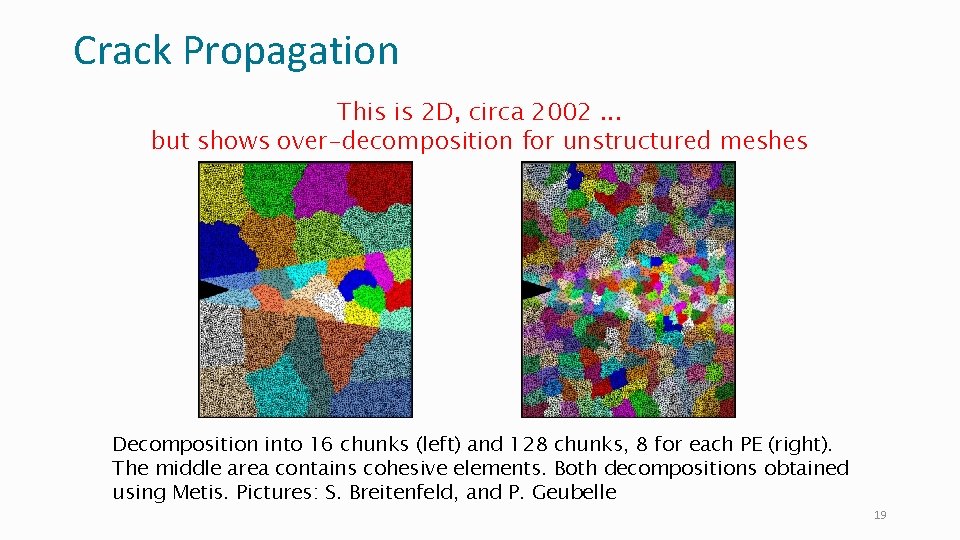

Crack Propagation
• This is 2D, circa 2002, but shows over-decomposition for unstructured meshes
• Decomposition into 16 chunks (left) and 128 chunks, 8 for each PE (right). The middle area contains cohesive elements. Both decompositions were obtained using Metis.
• Pictures: S. Breitenfeld and P. Geubelle

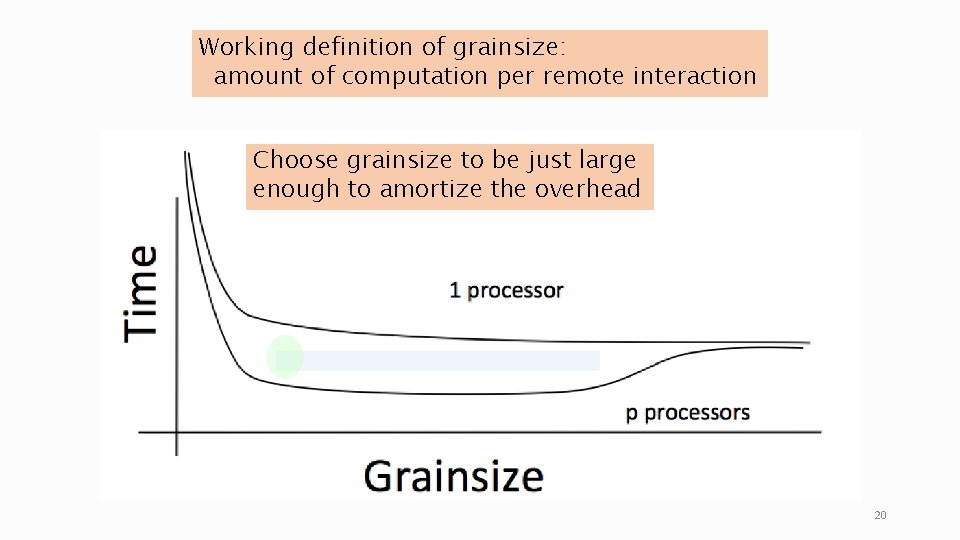

Working definition of grainsize: the amount of computation per remote interaction.
Choose grainsize to be just large enough to amortize the overhead.
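One rough way to quantify "just large enough", offered as a simple model rather than anything stated on the slide: let $g$ be the computation time per remote interaction and $o$ the fixed overhead paid per interaction. Both symbols, and the tolerated overhead fraction $\varepsilon$, are assumptions introduced here.

```latex
\[
  \text{useful fraction} = \frac{g}{g + o},
  \qquad
  \frac{o}{g + o} \le \varepsilon
  \;\iff\;
  g \ge \frac{1 - \varepsilon}{\varepsilon}\, o .
\]
```

Under this model, keeping overhead below 5% ($\varepsilon = 0.05$) requires $g \ge 19\,o$. Making $g$ much larger than that buys little extra efficiency while reducing the number of pieces available for overlap and load balancing.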

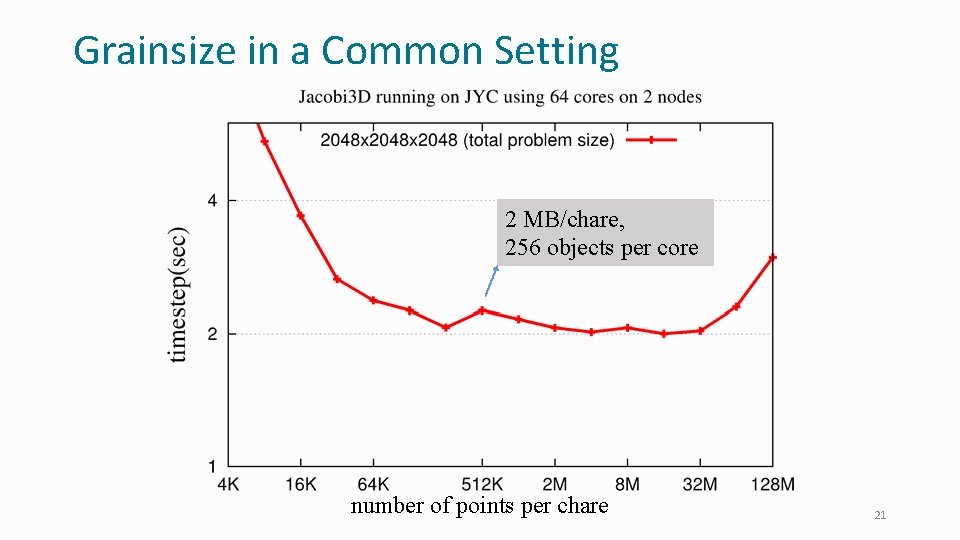

Grainsize in a Common Setting
[Figure: plot against number of points per chare; annotation: 2 MB/chare, 256 objects per core]

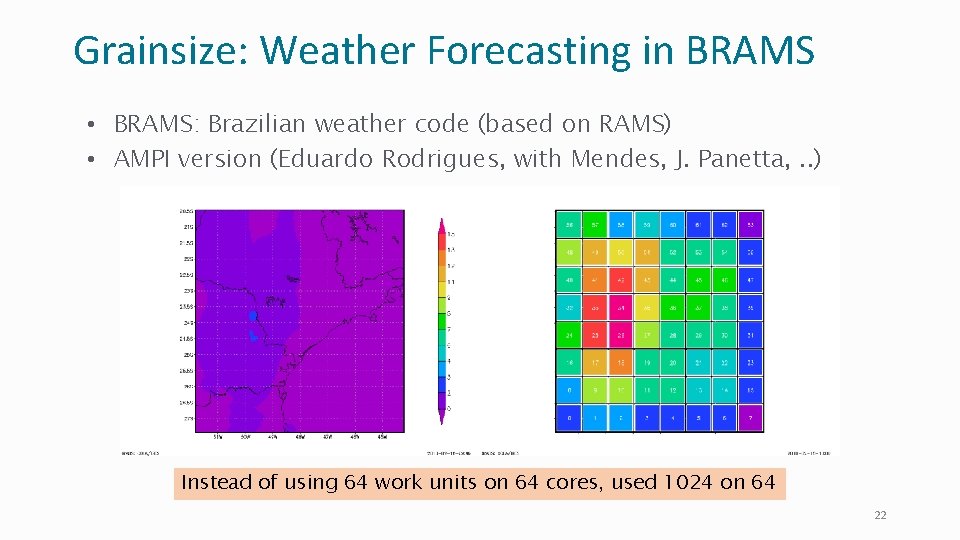

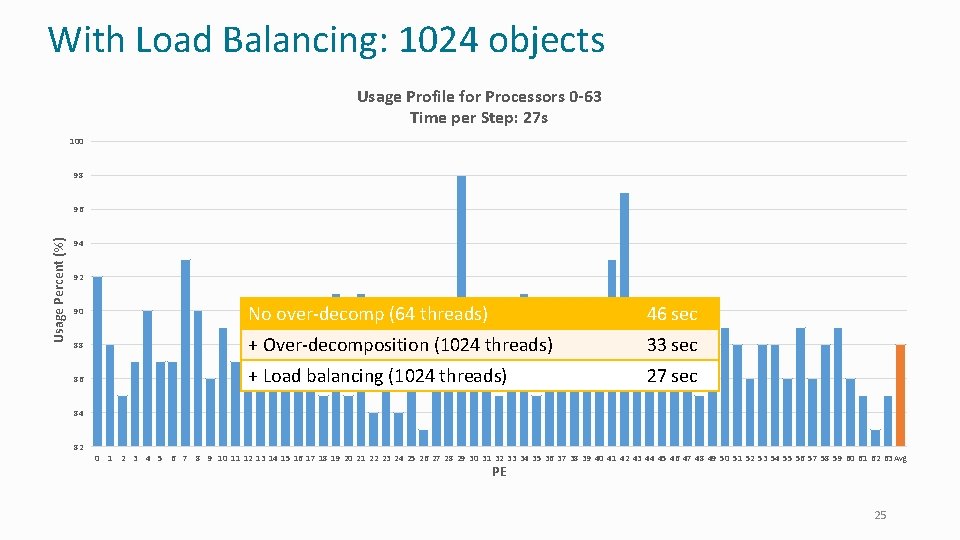

Grainsize: Weather Forecasting in BRAMS
• BRAMS: Brazilian weather code (based on RAMS)
• AMPI version (Eduardo Rodrigues, with Mendes, J. Panetta, ...)
• Instead of using 64 work units on 64 cores, used 1024 on 64

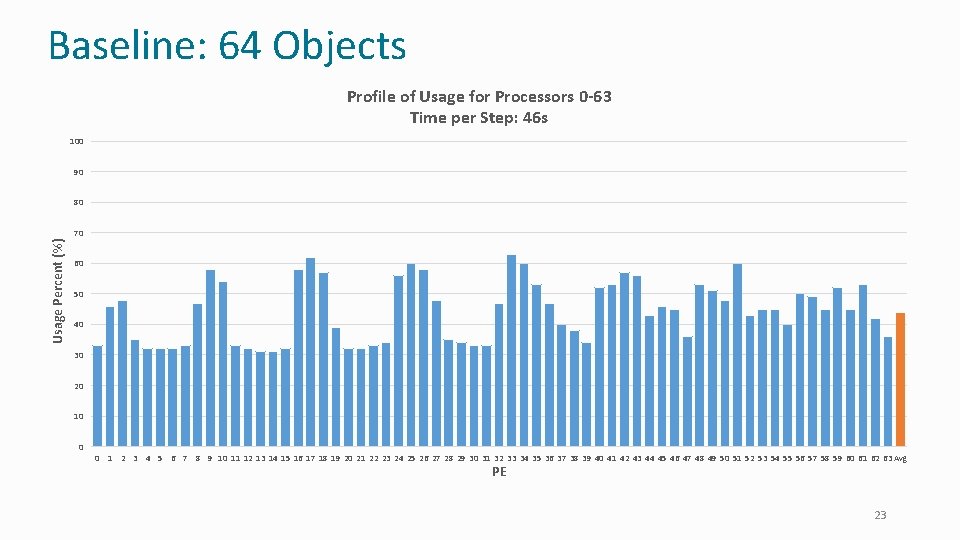

Baseline: 64 Objects
[Figure: profile of usage for processors 0-63; x-axis: PE (0-63 and average), y-axis: usage percent (%)]
Time per step: 46 s

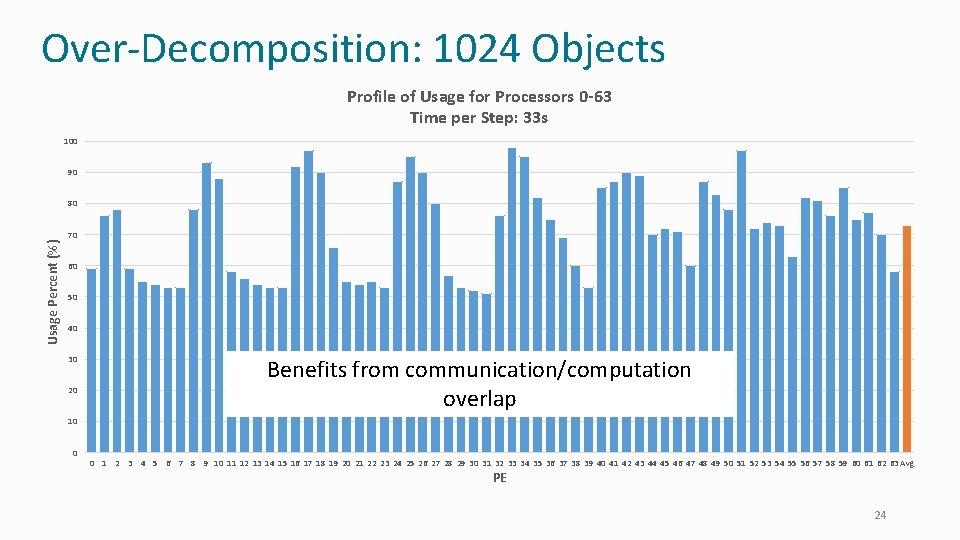

Over-Decomposition: 1024 Objects
[Figure: profile of usage for processors 0-63; x-axis: PE (0-63 and average), y-axis: usage percent (%)]
Time per step: 33 s
Benefits from communication/computation overlap

With Load Balancing: 1024 Objects
[Figure: usage profile for processors 0-63; x-axis: PE (0-63 and average), y-axis: usage percent (%)]
Time per step: 27 s
• No over-decomposition (64 threads): 46 s
• + Over-decomposition (1024 threads): 33 s
• + Load balancing (1024 threads): 27 s
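The three reported times per step can be turned into relative speedups with simple arithmetic. The ratios below are derived only from the 46 s, 33 s, and 27 s figures quoted above.

```latex
\[
  \frac{46}{33} \approx 1.39
  \quad\text{(over-decomposition alone)},
  \qquad
  \frac{33}{27} \approx 1.22
  \quad\text{(load balancing on top)},
  \qquad
  \frac{46}{27} \approx 1.70
  \quad\text{(combined)} .
\]
```

So roughly two thirds of the total time saved (13 s of 19 s) comes from over-decomposition alone, before any load balancing is applied.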

Parallel Programming with Charm++: Benefits

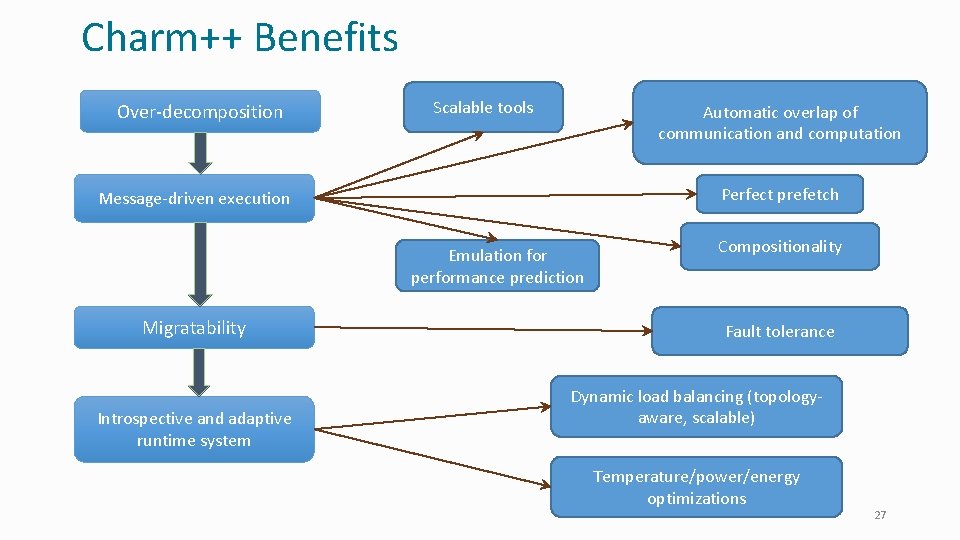

Charm++ Benefits
[Figure labels]
• Over-decomposition
• Scalable tools
• Automatic overlap of communication and computation
• Perfect prefetch
• Message-driven execution
• Emulation for performance prediction
• Migratability
• Introspective and adaptive runtime system
• Compositionality
• Fault tolerance
• Dynamic load balancing (topology-aware, scalable)
• Temperature/power/energy optimizations

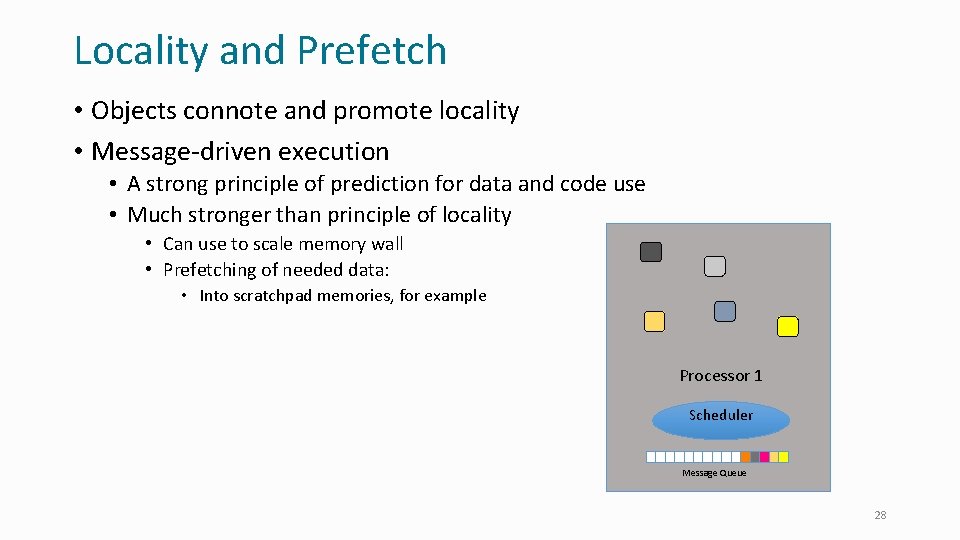

Locality and Prefetch
• Objects connote and promote locality
• Message-driven execution
  – A strong principle of prediction for data and code use
  – Much stronger than the principle of locality
• Can be used to scale the memory wall
  – Prefetching of needed data
  – Into scratchpad memories, for example
[Figure: Processor 1 with its scheduler and message queue]

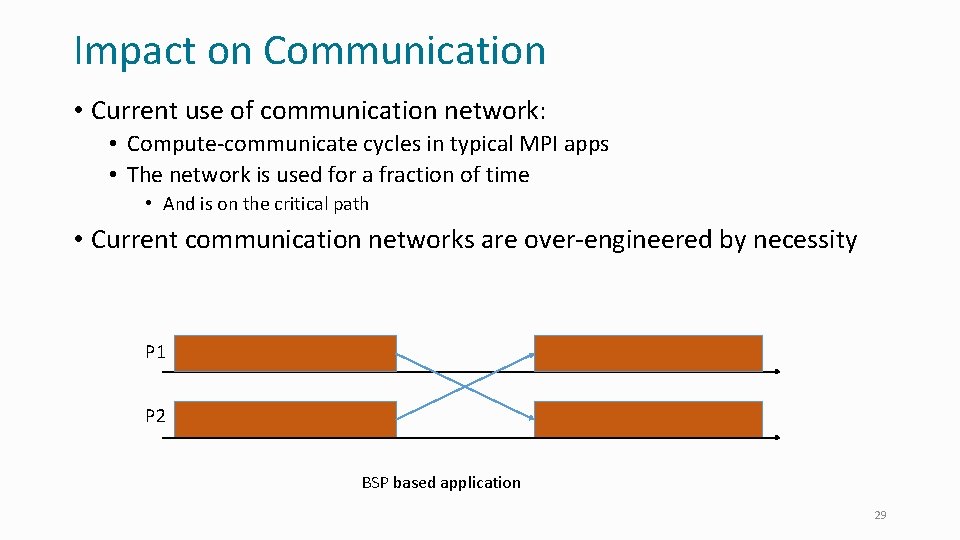

Impact on Communication
• Current use of the communication network:
  – Compute-communicate cycles in typical MPI apps
  – The network is used for a fraction of the time
  – And is on the critical path
• Current communication networks are over-engineered by necessity
[Figure: P1 and P2 in a BSP-based application]

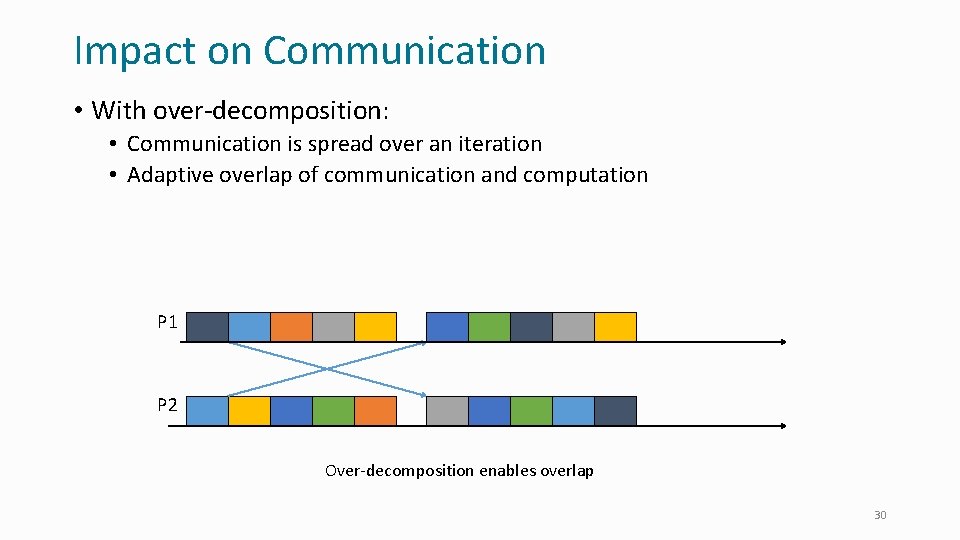

Impact on Communication
• With over-decomposition:
  – Communication is spread over an iteration
  – Adaptive overlap of communication and computation
[Figure: P1 and P2; over-decomposition enables overlap]

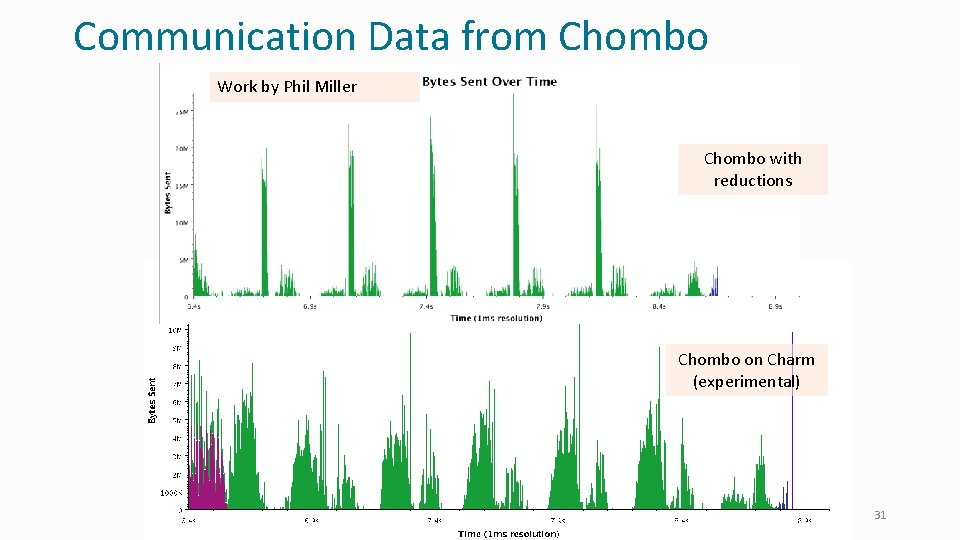

Communication Data from Chombo
Work by Phil Miller
[Figure panels: Chombo with reductions; Chombo on Charm (experimental)]

Decomposition Challenges
• Current method is to decompose to processors
  – This has many problems
  – Deciding which processor does what work in detail is difficult at large scale
• Decomposition should be independent of the number of processors, which is enabled by object-based decomposition
• Let the runtime system (RTS) assign objects to available resources adaptively

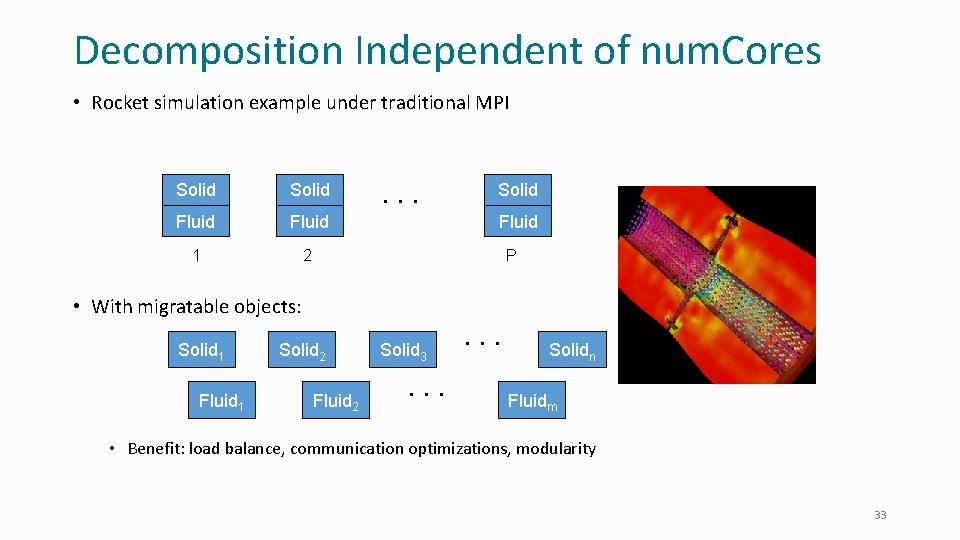

Decomposition Independent of numCores
• Rocket simulation example under traditional MPI
  [Figure: each of processors 1, 2, ..., P holds one Solid and one Fluid partition]
• With migratable objects:
  [Figure: Solid1, Fluid1, Solid2, Fluid2, Solid3, ..., Solidn, Fluidm]
• Benefit: load balance, communication optimizations, modularity

Compositionality
• It is important to support parallel composition
  – For multi-module, multi-physics, multi-paradigm applications …
• What I mean by parallel composition
  – B || C where B, C are independently developed modules
  – B is a parallel module by itself, and so is C
  – Programmers who wrote B were unaware of C
  – No dependency between B and C
• This is not supported well by MPI
  – Developers support it by breaking abstraction boundaries
  – E.g., wildcard recvs in module A to process messages for module B
• Nor by OpenMP implementations

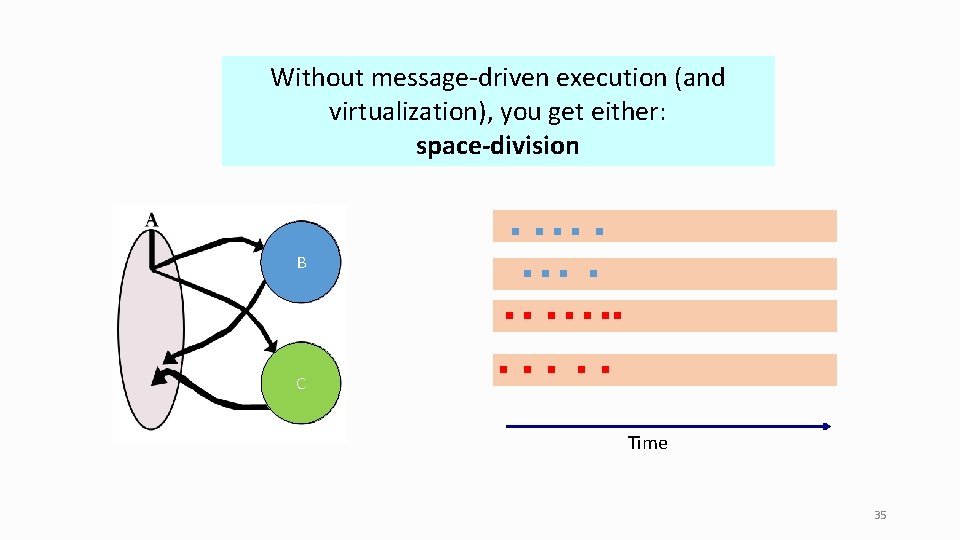

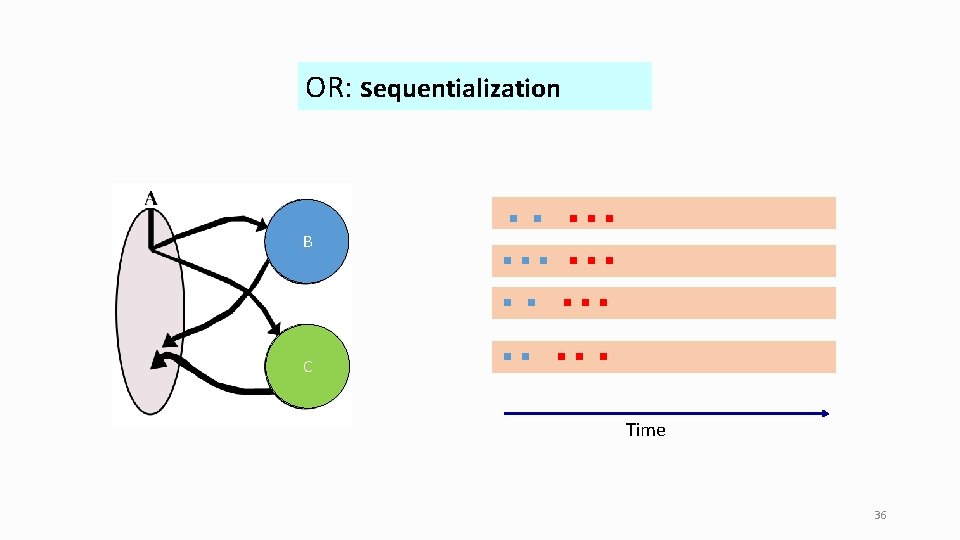

Without message-driven execution (and virtualization), you get either space-division:
[Figure: modules B and C over time]

Or sequentialization:
[Figure: modules B and C over time]

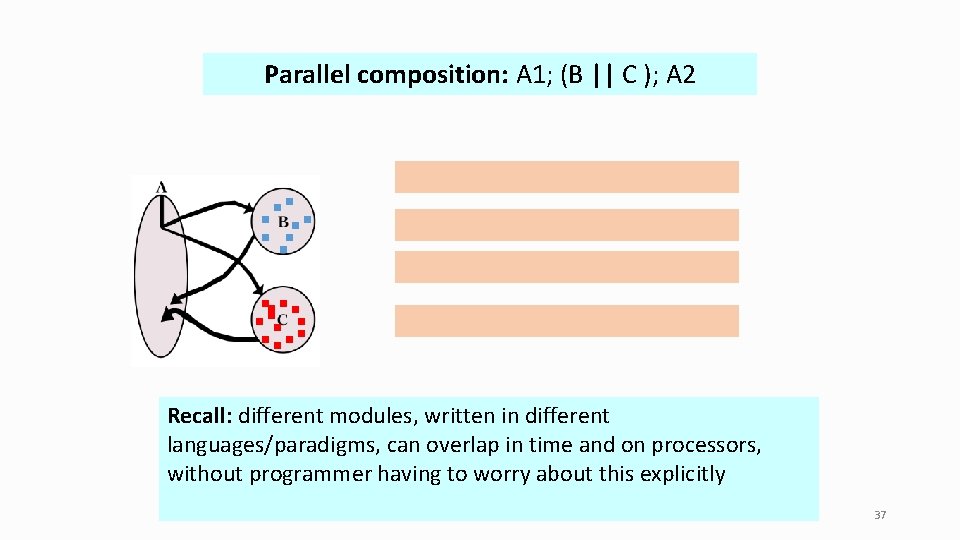

Parallel composition: A1; (B || C); A2
Recall: different modules, written in different languages/paradigms, can overlap in time and on processors, without the programmer having to worry about this explicitly

Chares: Message-Driven Objects
Defining Chares and Understanding Asynchrony

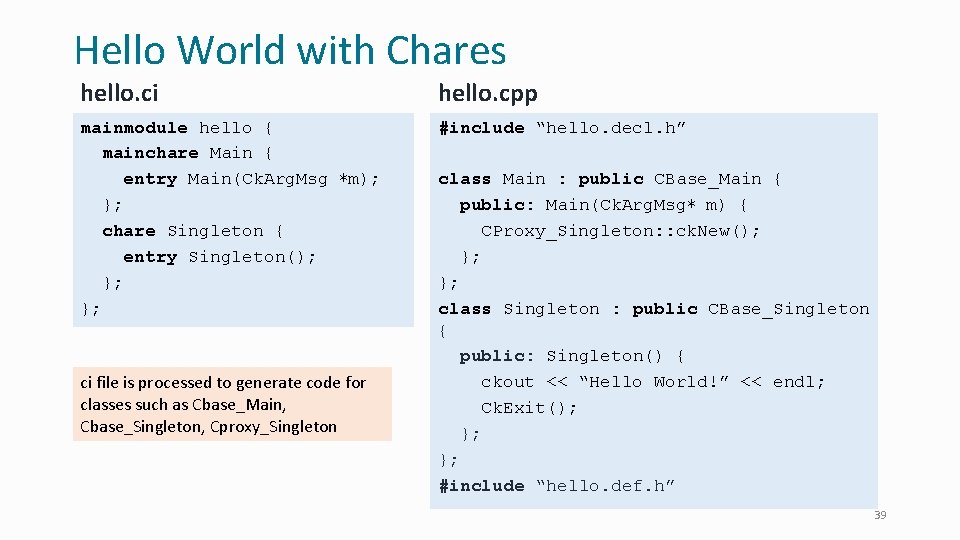

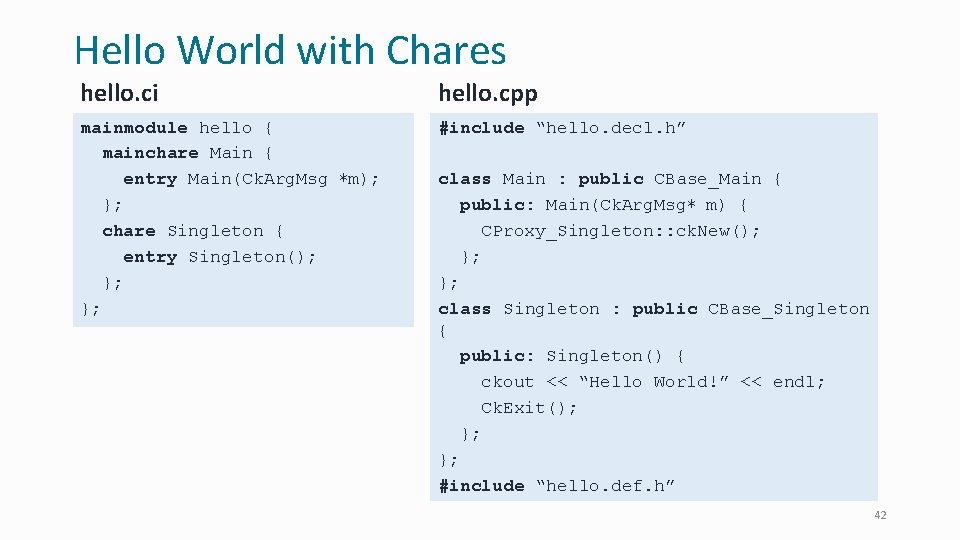

Hello World with Chares

hello.ci:

    mainmodule hello {
      mainchare Main {
        entry Main(CkArgMsg* m);
      };
      chare Singleton {
        entry Singleton();
      };
    };

hello.cpp:

    #include "hello.decl.h"

    class Main : public CBase_Main {
    public:
      // Create one Singleton chare; the call returns immediately.
      Main(CkArgMsg* m) { CProxy_Singleton::ckNew(); }
    };

    class Singleton : public CBase_Singleton {
    public:
      Singleton() {
        ckout << "Hello World!" << endl;
        CkExit();
      }
    };

    #include "hello.def.h"

The .ci file is processed to generate code for classes such as CBase_Main, CBase_Singleton, and CProxy_Singleton.

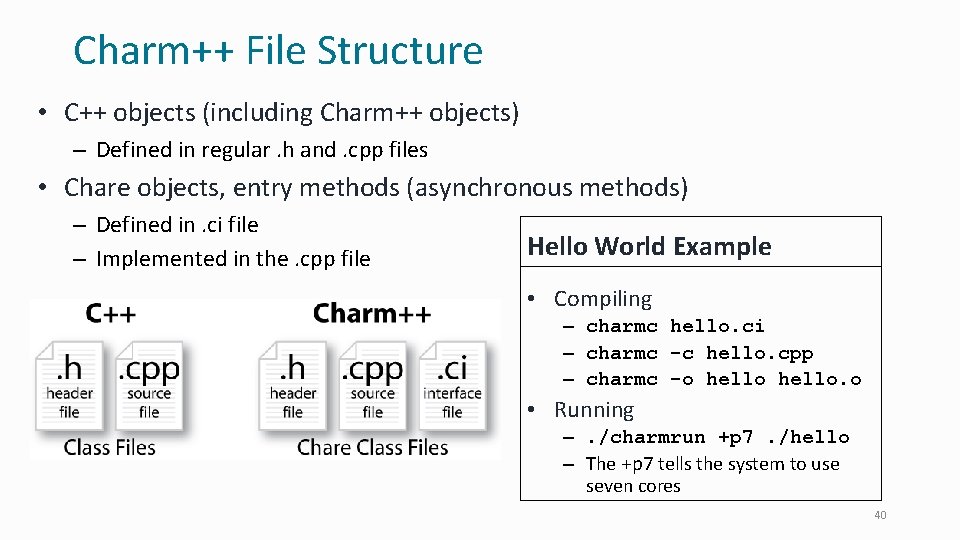

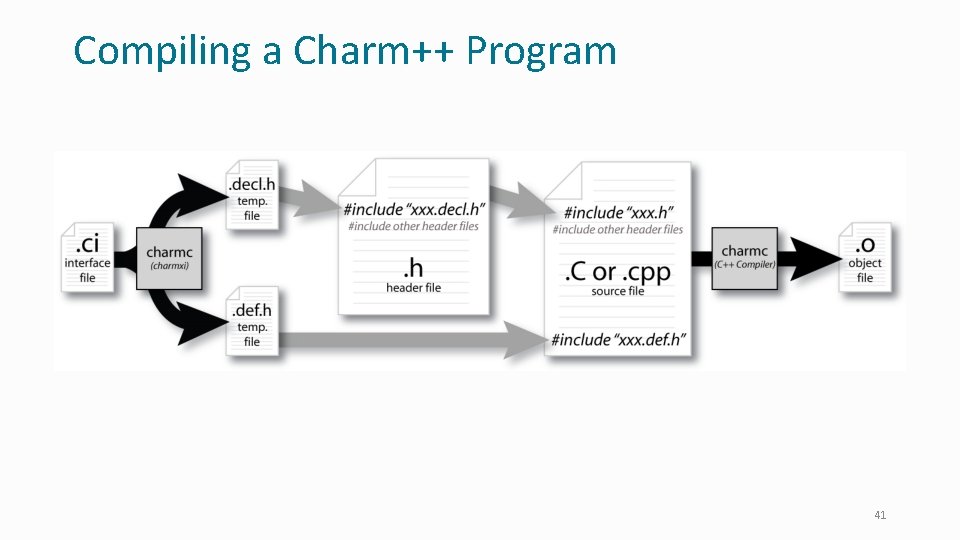

Charm++ File Structure
• C++ objects (including Charm++ objects)
  – Defined in regular .h and .cpp files
• Chare objects, entry methods (asynchronous methods)
  – Defined in the .ci file
  – Implemented in the .cpp file

Hello World Example
• Compiling
  – charmc hello.ci
  – charmc -c hello.cpp
  – charmc -o hello hello.o
• Running
  – ./charmrun +p7 ./hello
  – The +p7 tells the system to use seven cores

Compiling a Charm++ Program


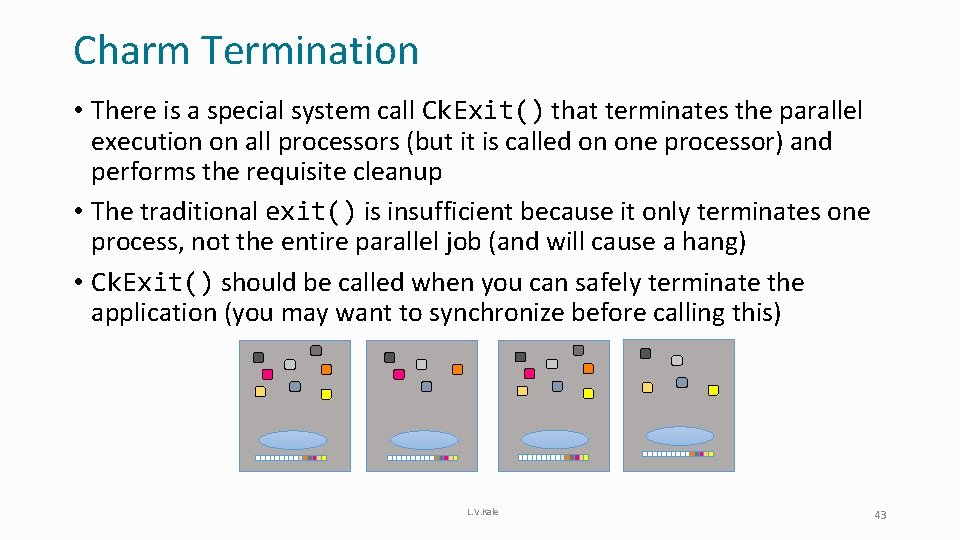

Charm Termination
• There is a special system call CkExit() that terminates the parallel execution on all processors (but it is called on one processor) and performs the requisite cleanup
• The traditional exit() is insufficient because it only terminates one process, not the entire parallel job (and will cause a hang)
• CkExit() should be called when you can safely terminate the application (you may want to synchronize before calling this)

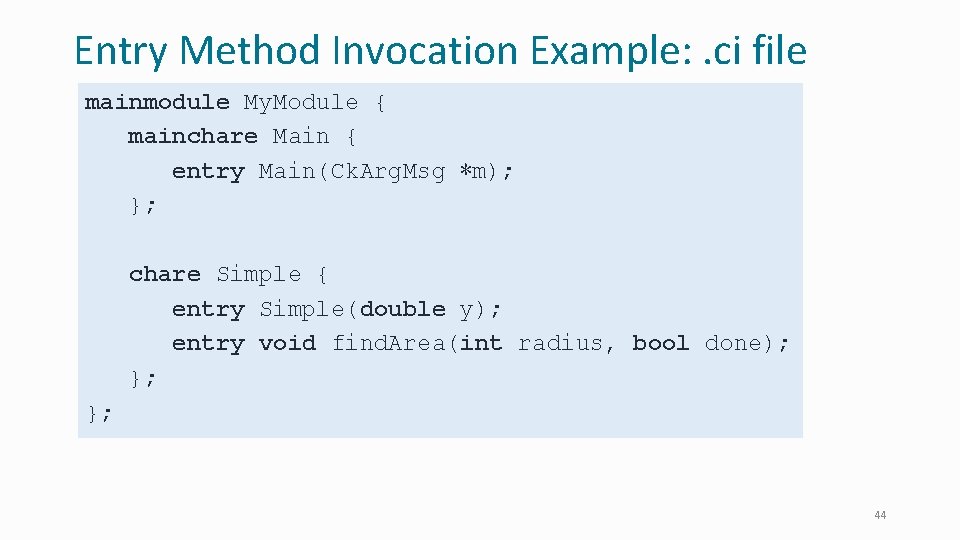

Entry Method Invocation Example: .ci file

    mainmodule MyModule {
      mainchare Main {
        entry Main(CkArgMsg* m);
      };
      chare Simple {
        entry Simple(double y);
        entry void findArea(int radius, bool done);
      };
    };

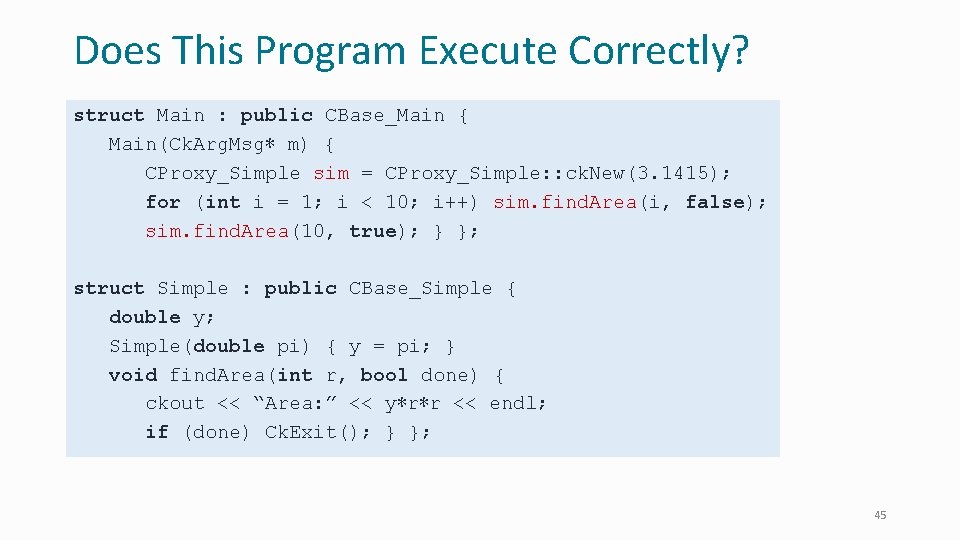

Does This Program Execute Correctly?

    struct Main : public CBase_Main {
      Main(CkArgMsg* m) {
        CProxy_Simple sim = CProxy_Simple::ckNew(3.1415);
        for (int i = 1; i < 10; i++) sim.findArea(i, false);
        sim.findArea(10, true);
      }
    };

    struct Simple : public CBase_Simple {
      double y;
      Simple(double pi) { y = pi; }
      void findArea(int r, bool done) {
        ckout << "Area: " << y*r*r << endl;
        if (done) CkExit();
      }
    };

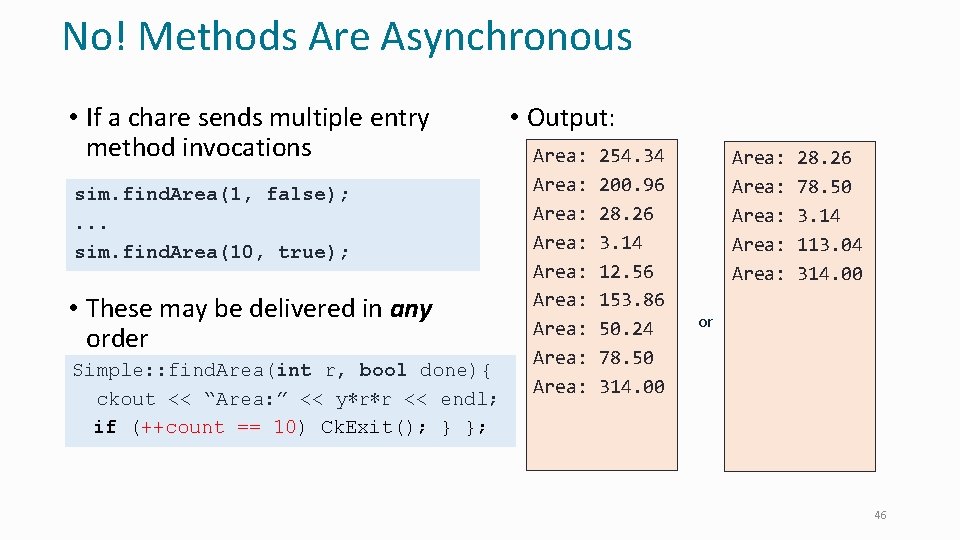

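No! Methods Are Asynchronous
• If a chare sends multiple entry method invocations:

    sim.findArea(1, false);
    ...
    sim.findArea(10, true);

• These may be delivered in any order, so exiting on the done flag can end the run before every invocation has executed:

    void Simple::findArea(int r, bool done) {
      ckout << "Area: " << y*r*r << endl;
      if (done) CkExit();
    }

• Counting the invocations instead makes termination independent of delivery order:

    void Simple::findArea(int r, bool done) {
      ckout << "Area: " << y*r*r << endl;
      if (++count == 10) CkExit();  // count: a member initialized to 0
    }

• Output (each value appears on its own "Area:" line):

    254.34 200.96 28.26 3.14 12.56 153.86 50.24 78.50 314.00

  or

    28.26 78.50 3.14 113.04 314.00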
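The difference between the two termination tests can be checked without a parallel machine. The sketch below is plain C++, not Charm++: it models arbitrary delivery order by shuffling ten invocations and then counts how many are processed before the program would exit. The names `Invocation`, `shuffledInvocations`, `processedWithDoneFlag`, and `processedWithCounter` are hypothetical, and `break` merely stands in for `CkExit()`.

```cpp
#include <algorithm>
#include <cstddef>
#include <random>
#include <vector>

// One asynchronous invocation of findArea(radius, done).
struct Invocation {
    int radius;
    bool done;
};

// The ten invocations from the slide, delivered in a seed-dependent order.
std::vector<Invocation> shuffledInvocations(unsigned seed) {
    std::vector<Invocation> msgs;
    for (int r = 1; r <= 10; ++r) msgs.push_back({r, r == 10});
    std::mt19937 rng(seed);
    std::shuffle(msgs.begin(), msgs.end(), rng);
    return msgs;
}

// Exit as soon as the invocation carrying done == true is processed.
// Returns how many invocations ran before the exit.
int processedWithDoneFlag(const std::vector<Invocation>& delivered) {
    int processed = 0;
    for (const Invocation& inv : delivered) {
        ++processed;
        if (inv.done) break;  // stands in for CkExit()
    }
    return processed;
}

// Exit only after `expected` invocations have been processed, whatever their order.
int processedWithCounter(const std::vector<Invocation>& delivered, int expected) {
    int count = 0;
    for (std::size_t k = 0; k < delivered.size(); ++k) {
        if (++count == expected) break;  // stands in for CkExit()
    }
    return count;
}
```

With the flag-based test, the number of areas printed depends on where the `done` message lands in the delivery order; with the counter, all ten are always processed.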

Chare Arrays: Collection of Chares

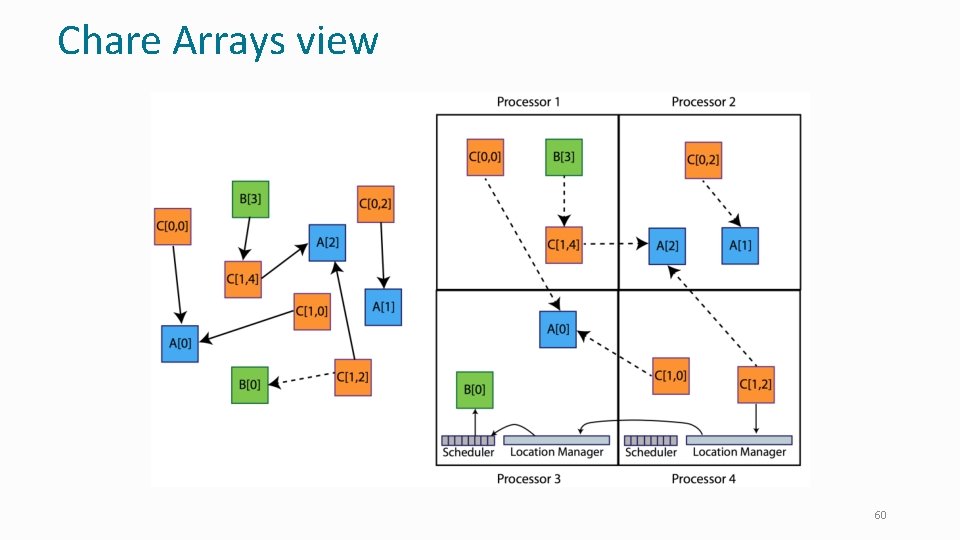

Chare Arrays
• Indexed collections of chares
  – Every item in the collection has a unique index and proxy
  – Can be indexed like an array or by an arbitrary object
  – Can be sparse or dense
  – Elements may be dynamically inserted and deleted
• Elements are distributed across the available processors
  – May be migrated to other nodes by the user or the runtime
• For many scientific applications, collections of chares are a convenient abstraction


Declaring a Chare Array
.ci file:

    array [1D] foo {
      entry foo();  // constructor
      // … entry methods …
    }

    array [2D] bar {
      entry bar();  // constructor
      // … entry methods …
    }

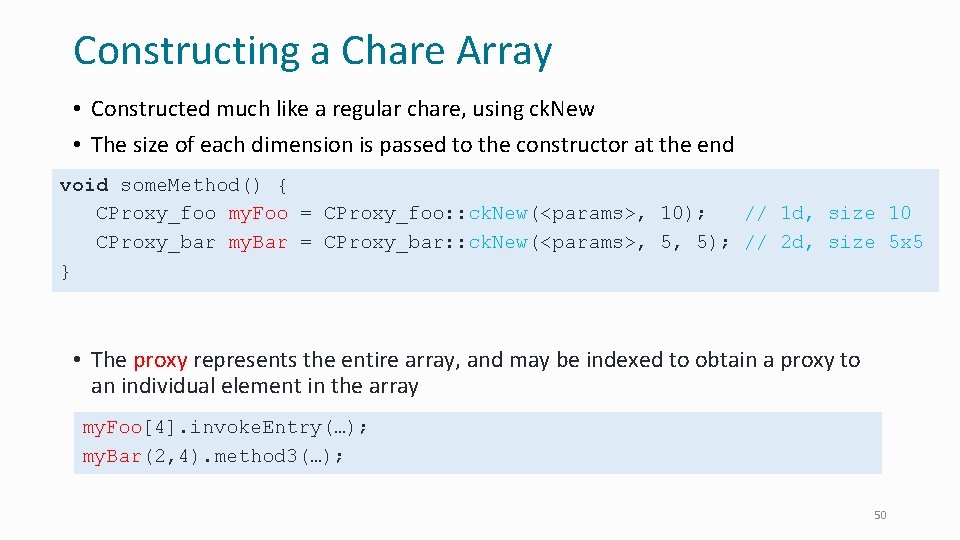

Constructing a Chare Array
• Constructed much like a regular chare, using ckNew
• The size of each dimension is passed to the constructor at the end

    void someMethod() {
      CProxy_foo myFoo = CProxy_foo::ckNew(<params>, 10);    // 1D, size 10
      CProxy_bar myBar = CProxy_bar::ckNew(<params>, 5, 5);  // 2D, size 5x5
    }

• The proxy represents the entire array, and may be indexed to obtain a proxy to an individual element in the array

    myFoo[4].invokeEntry(…);
    myBar(2, 4).method3(…);

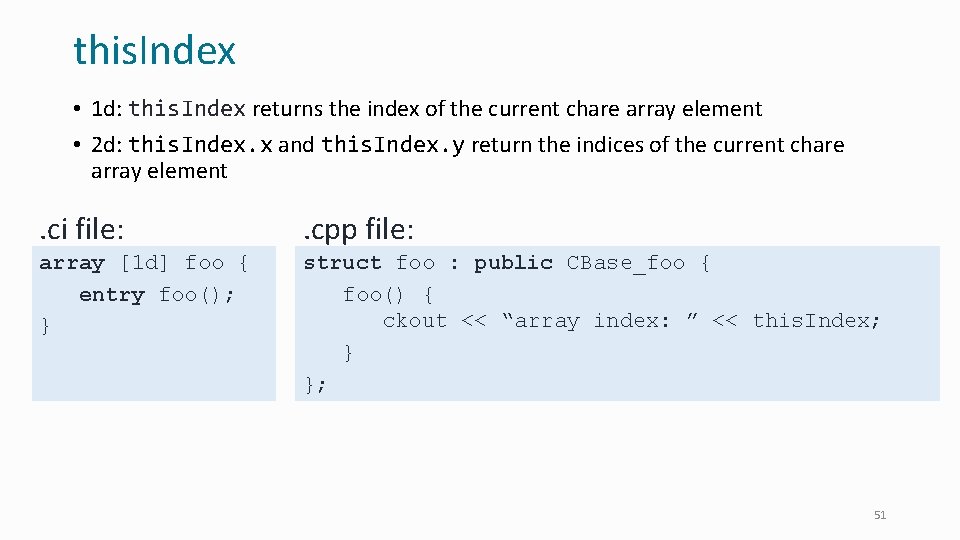

thisIndex
• 1D: thisIndex returns the index of the current chare array element
• 2D: thisIndex.x and thisIndex.y return the indices of the current chare array element

.ci file:

    array [1D] foo {
      entry foo();
    }

.cpp file:

    struct foo : public CBase_foo {
      foo() { ckout << "array index: " << thisIndex; }
    };

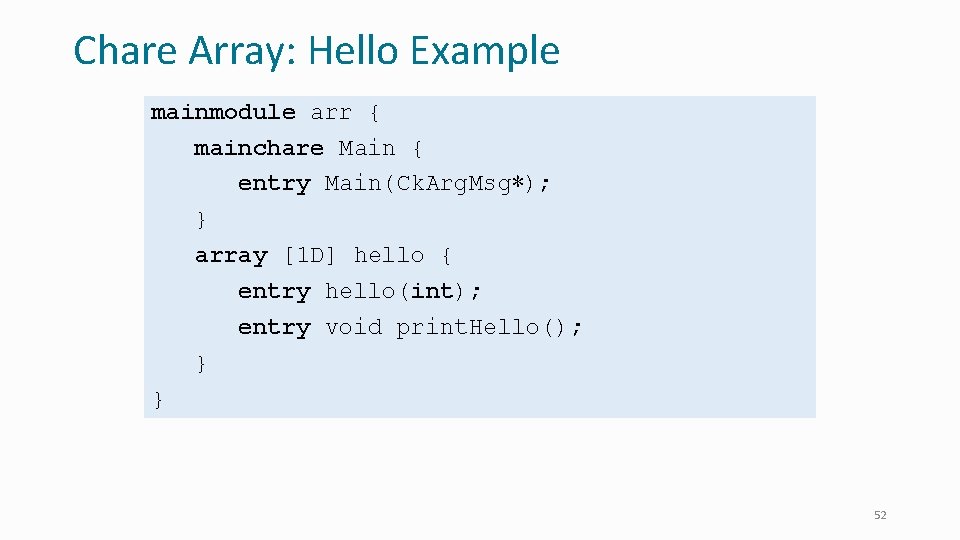

Chare Array: Hello Example (.ci file)

    mainmodule arr {
      mainchare Main {
        entry Main(CkArgMsg*);
      }
      array [1D] hello {
        entry hello(int);
        entry void printHello();
      }
    }

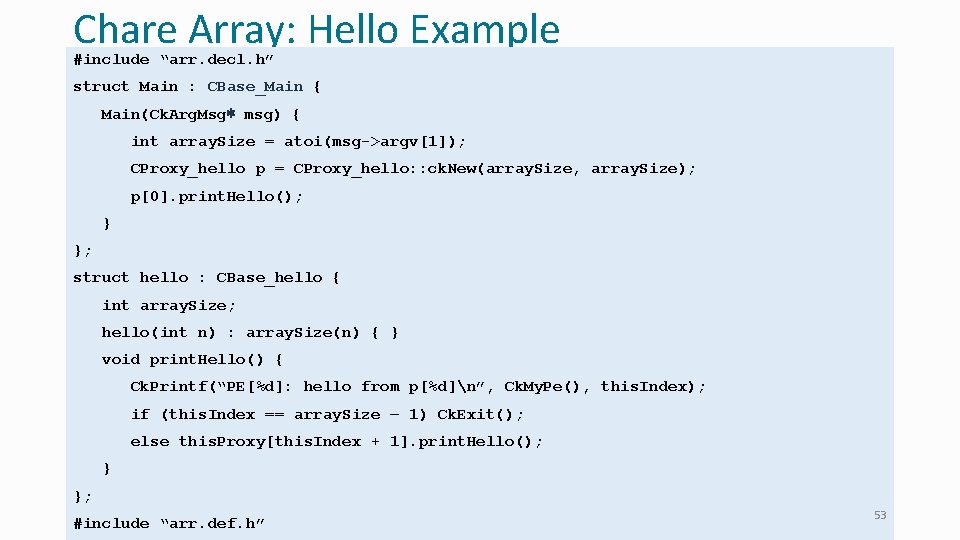

Chare Array: Hello Example (.cpp file)

    #include "arr.decl.h"

    struct Main : CBase_Main {
      Main(CkArgMsg* msg) {
        int arraySize = atoi(msg->argv[1]);
        // First argument goes to each element's constructor; the last is the array size.
        CProxy_hello p = CProxy_hello::ckNew(arraySize, arraySize);
        p[0].printHello();  // start the chain at element 0
      }
    };

    struct hello : CBase_hello {
      int arraySize;
      hello(int n) : arraySize(n) { }
      void printHello() {
        CkPrintf("PE[%d]: hello from p[%d]\n", CkMyPe(), thisIndex);
        if (thisIndex == arraySize - 1) CkExit();      // last element ends the run
        else thisProxy[thisIndex + 1].printHello();    // pass control to the next element
      }
    };

    #include "arr.def.h"

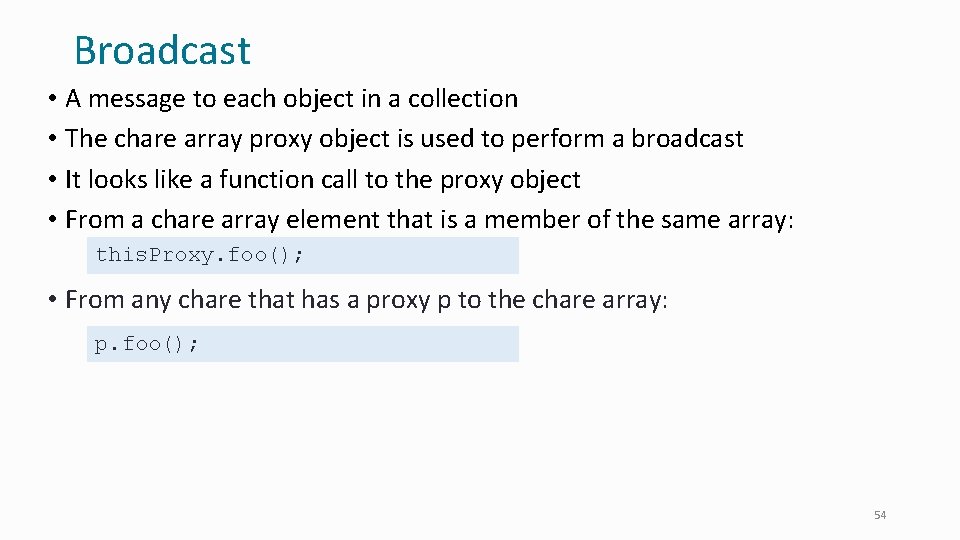

Broadcast
• A message to each object in a collection
• The chare array proxy object is used to perform a broadcast
• It looks like a function call to the proxy object
• From a chare array element that is a member of the same array: thisProxy.foo();
• From any chare that has a proxy p to the chare array: p.foo();

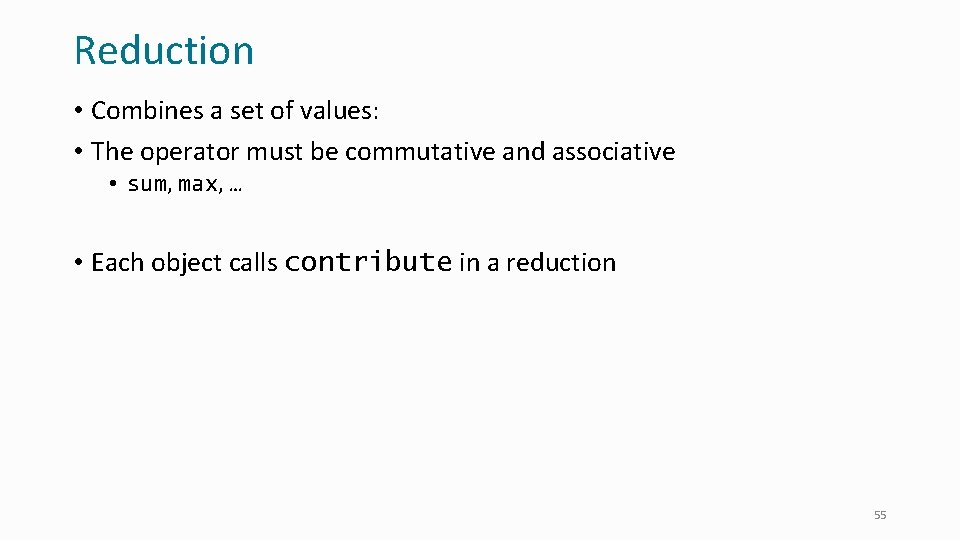

Reduction
• Combines a set of values:
  – The operator must be commutative and associative
  – sum, max, …
• Each object calls contribute in a reduction

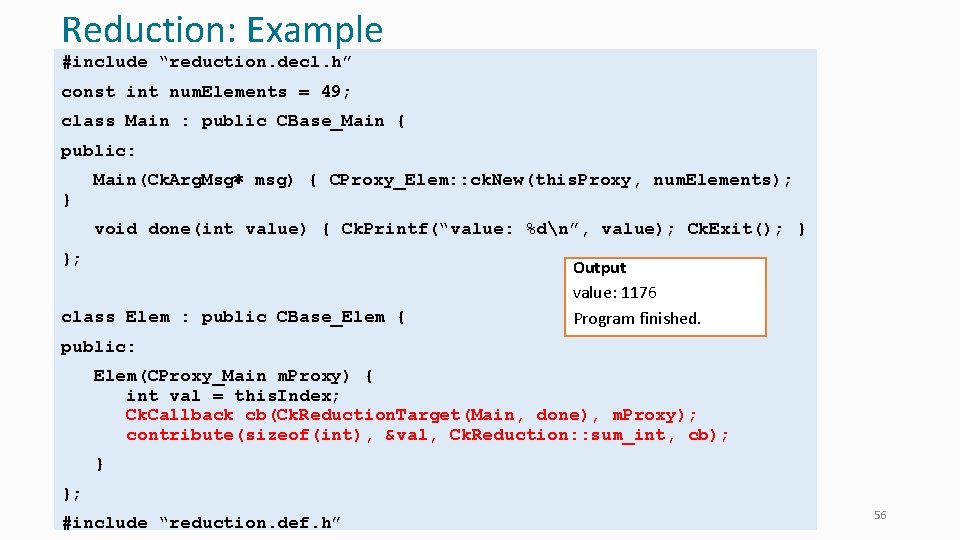

Reduction: Example

    #include "reduction.decl.h"

    const int numElements = 49;

    class Main : public CBase_Main {
    public:
      Main(CkArgMsg* msg) { CProxy_Elem::ckNew(thisProxy, numElements); }
      // Reduction target: receives the combined value once.
      void done(int value) {
        CkPrintf("value: %d\n", value);
        CkExit();
      }
    };

    class Elem : public CBase_Elem {
    public:
      Elem(CProxy_Main mProxy) {
        int val = thisIndex;  // each element contributes its own index
        CkCallback cb(CkReductionTarget(Main, done), mProxy);
        contribute(sizeof(int), &val, CkReduction::sum_int, cb);
      }
    };

    #include "reduction.def.h"

Output:

    value: 1176
    Program finished.
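The reported value can be checked by hand: the 49 elements contribute their indices 0 through 48, whose sum is 48 × 49 / 2 = 1176. The plain C++ sketch below (not Charm++; `contributions` and `treeSum` are hypothetical names) also combines the values pairwise in a tree. That a tree-shaped combination gives the same answer as a left-to-right sum is what the commutative and associative requirement on the operator buys.

```cpp
#include <cstddef>
#include <numeric>
#include <vector>

// Each element contributes its own index: 0, 1, ..., numElements - 1.
std::vector<int> contributions(int numElements) {
    std::vector<int> v(numElements);
    std::iota(v.begin(), v.end(), 0);
    return v;
}

// Combine v[lo, hi) pairwise in a tree instead of left to right.
// Any grouping yields the same result because + is associative.
int treeSum(const std::vector<int>& v, std::size_t lo, std::size_t hi) {
    if (hi == lo) return 0;
    if (hi - lo == 1) return v[lo];
    const std::size_t mid = lo + (hi - lo) / 2;
    return treeSum(v, lo, mid) + treeSum(v, mid, hi);
}
```

For example, `treeSum(contributions(49), 0, 49)` and `std::accumulate` over the same vector both give 1176.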

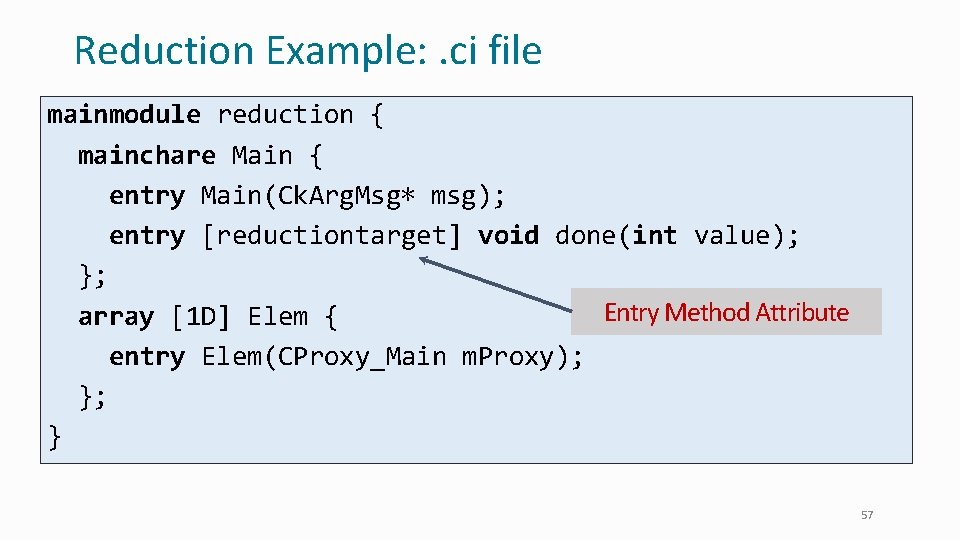

Reduction Example: .ci file

    mainmodule reduction {
      mainchare Main {
        entry Main(CkArgMsg* msg);
        entry [reductiontarget] void done(int value);  // entry method attribute
      };
      array [1D] Elem {
        entry Elem(CProxy_Main mProxy);
      };
    }

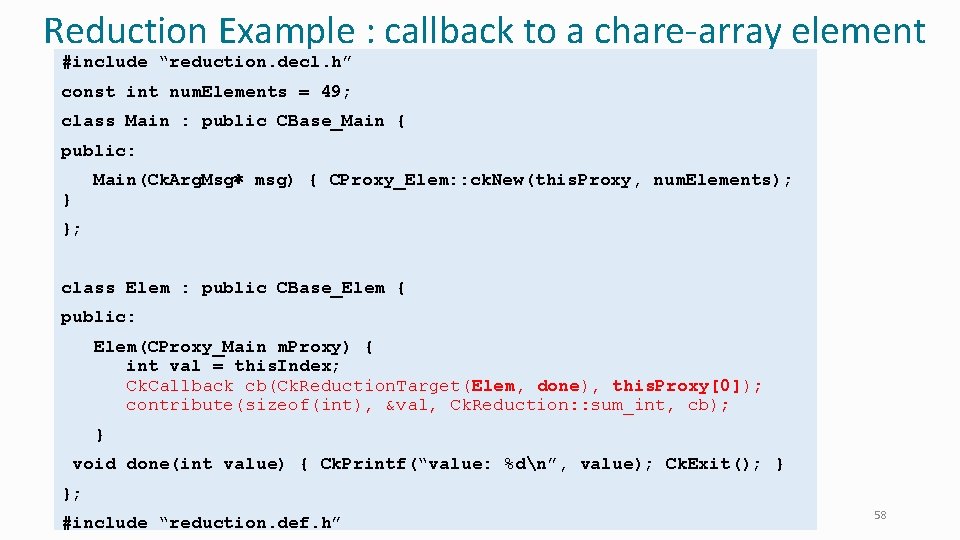

Reduction Example: callback to a chare-array element

    #include "reduction.decl.h"

    const int numElements = 49;

    class Main : public CBase_Main {
    public:
      Main(CkArgMsg* msg) { CProxy_Elem::ckNew(thisProxy, numElements); }
    };

    class Elem : public CBase_Elem {
    public:
      Elem(CProxy_Main mProxy) {
        int val = thisIndex;
        // Deliver the result to element 0 of this array.
        CkCallback cb(CkReductionTarget(Elem, done), thisProxy[0]);
        contribute(sizeof(int), &val, CkReduction::sum_int, cb);
      }
      void done(int value) {
        CkPrintf("value: %d\n", value);
        CkExit();
      }
    };

    #include "reduction.def.h"

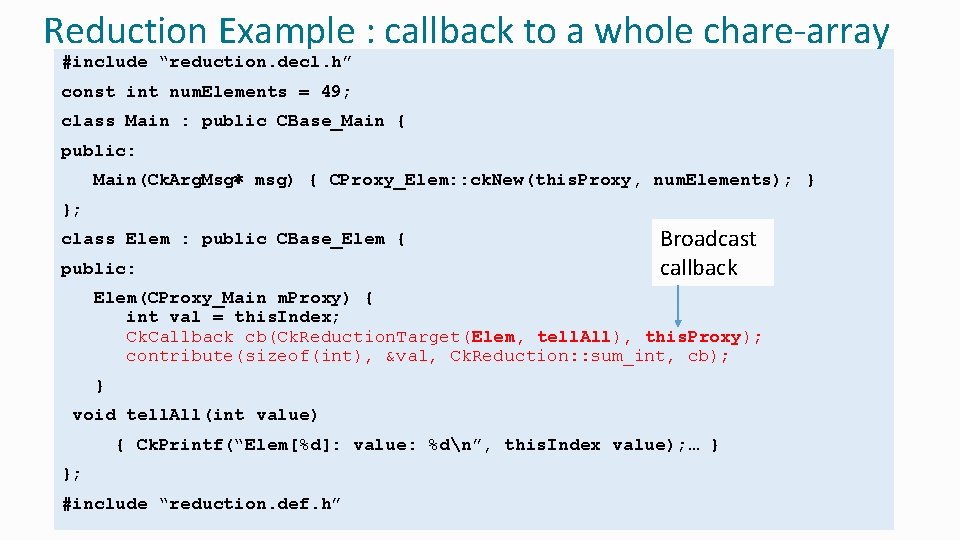

Reduction Example: callback to a whole chare-array

    #include "reduction.decl.h"

    const int numElements = 49;

    class Main : public CBase_Main {
    public:
      Main(CkArgMsg* msg) { CProxy_Elem::ckNew(thisProxy, numElements); }
    };

    class Elem : public CBase_Elem {
    public:
      Elem(CProxy_Main mProxy) {
        int val = thisIndex;
        // Broadcast callback: the result is delivered to every element.
        CkCallback cb(CkReductionTarget(Elem, tellAll), thisProxy);
        contribute(sizeof(int), &val, CkReduction::sum_int, cb);
      }
      void tellAll(int value) {
        CkPrintf("Elem[%d]: value: %d\n", thisIndex, value);
        …
      }
    };

    #include "reduction.def.h"

Chare Arrays view

Advanced Features and Application Case Studies
Brief peek at other features

Control Flow within Chare
• Structured dagger notation
  – Provides a script-like language for expressing a DAG of dependencies between method invocations and computations
• Threaded entry methods
  – Allow entry methods to block without blocking the PE
  – Support futures and the ability to suspend/resume threads

Advanced Concepts
• Priorities
• Entry method tags
• Quiescence detection
• LiveViz: visualization from a parallel program
• CharmDebug: a powerful debugging tool
• Projections: performance analysis and visualization, a workhorse tool for Charm++ developers
• Messages (instead of marshalled parameters)
• Processor-aware constructs:
  – Groups: like a non-migratable chare array with one element on each “core”
  – Nodegroups: one element on each process

Saving Cooling Energy
• Easy: increase the A/C setting
  – But: some cores may get too hot
• So, reduce frequency if temperature is high (DVFS)
  – Independently for each chip
• But this creates a load imbalance!
• No problem, we can handle that:
  – Migrate objects away from the slowed-down processors
  – Balance load using an existing strategy
  – Strategies take speed of processors into account
• Implemented in experimental version
  – SC 2011 paper, IEEE TC paper
• Several new power/energy-related strategies
  – PASA ’12: exploiting differential sensitivities of code segments to frequency change

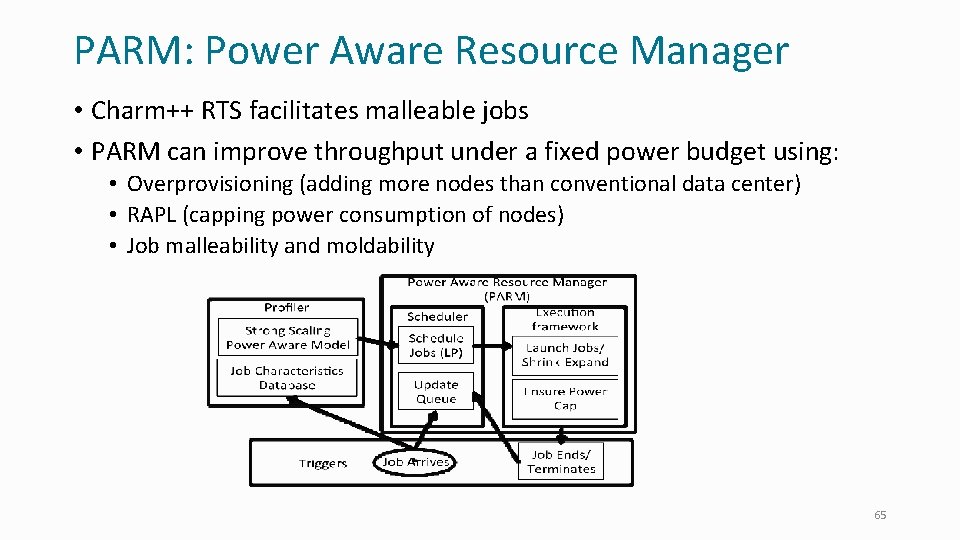

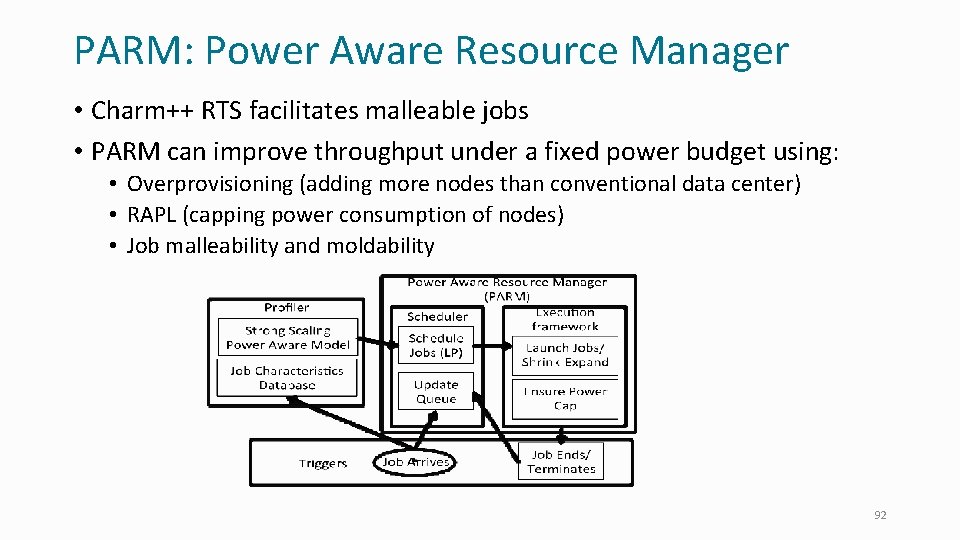

PARM: Power Aware Resource Manager
• Charm++ RTS facilitates malleable jobs
• PARM can improve throughput under a fixed power budget using:
  – Overprovisioning (adding more nodes than a conventional data center)
  – RAPL (capping power consumption of nodes)
  – Job malleability and moldability

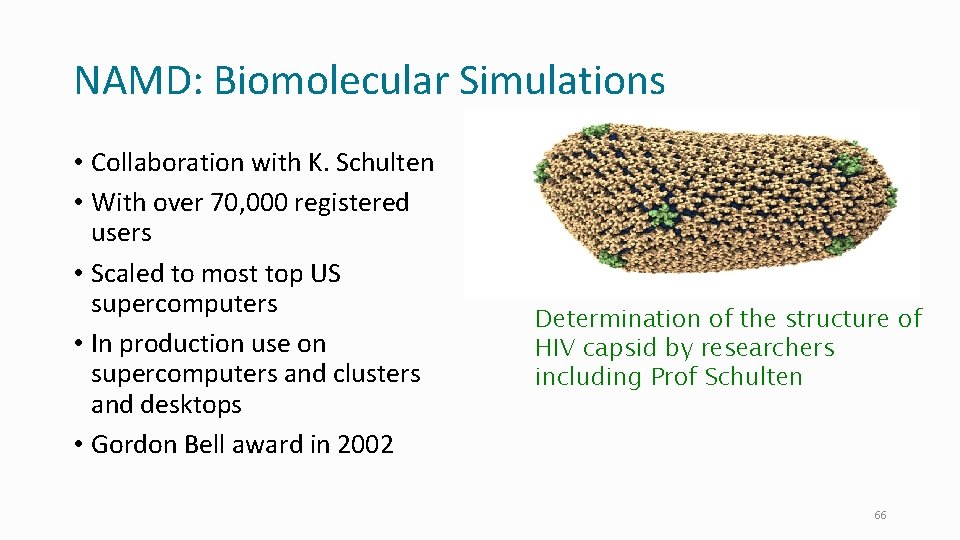

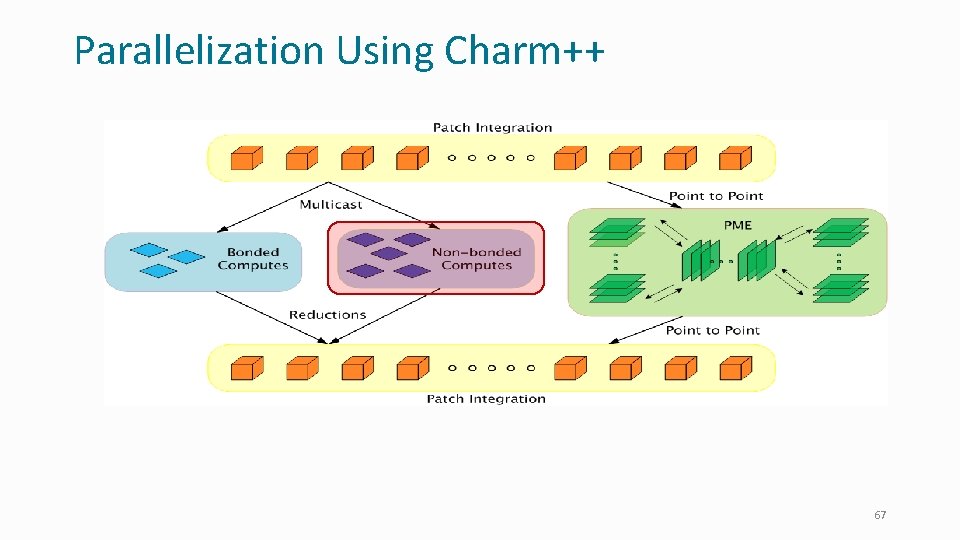

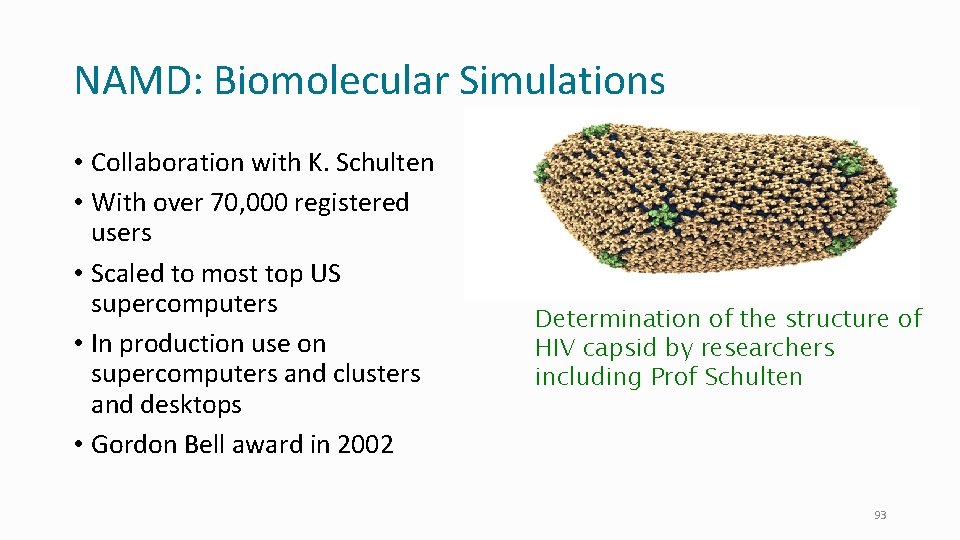

NAMD: Biomolecular Simulations
• Collaboration with K. Schulten
• With over 70,000 registered users
• Scaled to most top US supercomputers
• In production use on supercomputers, clusters, and desktops
• Gordon Bell award in 2002
[Figure: determination of the structure of the HIV capsid by researchers including Prof. Schulten]

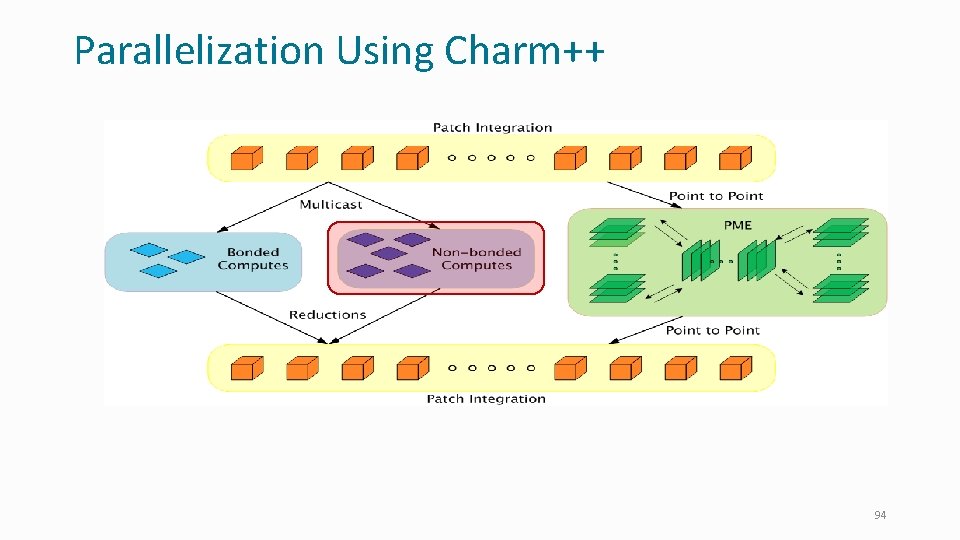

Parallelization Using Charm++

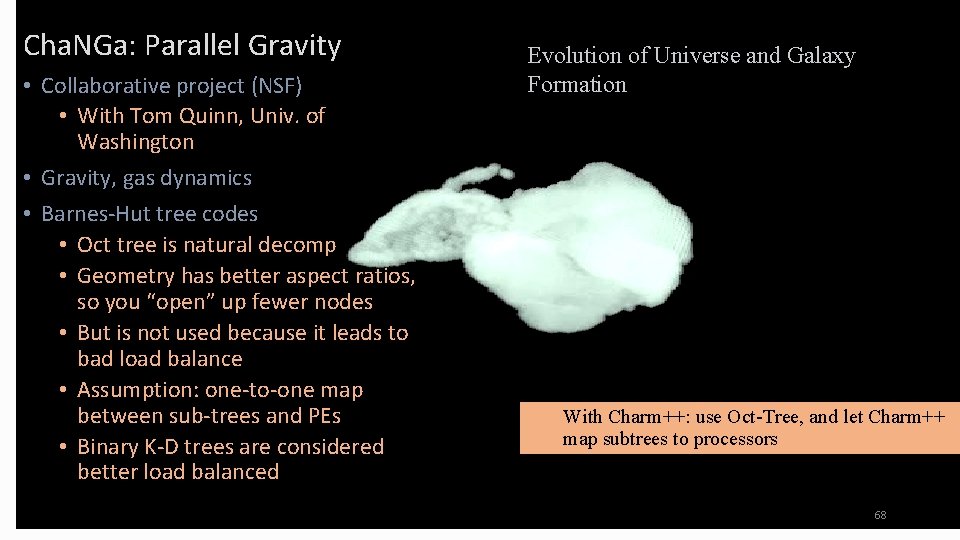

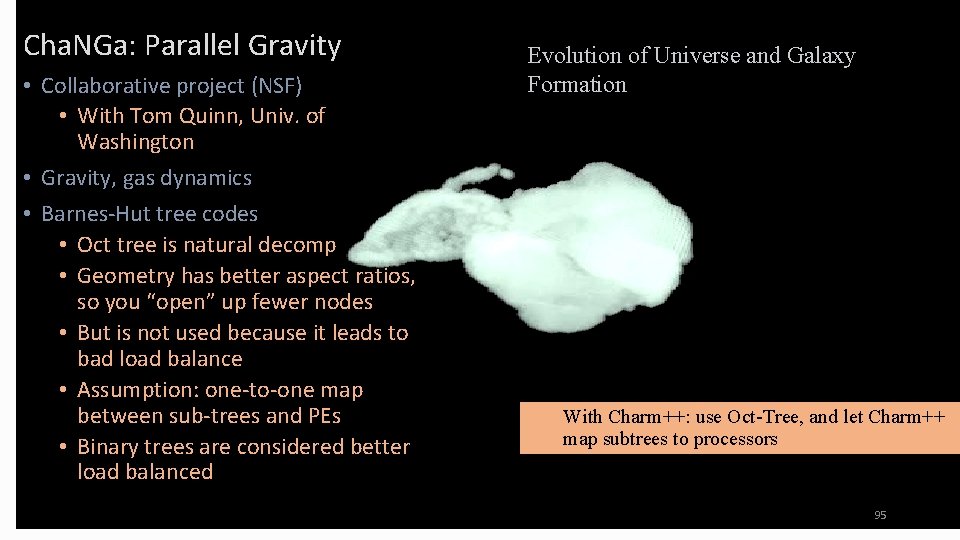

ChaNGa: Parallel Gravity
• Collaborative project (NSF)
  – With Tom Quinn, Univ. of Washington
• Gravity, gas dynamics
• Barnes-Hut tree codes
  – Oct tree is the natural decomposition
  – Its geometry has better aspect ratios, so you “open” up fewer nodes
  – But it is not used because it leads to bad load balance
  – Assumption: one-to-one map between sub-trees and PEs
  – Binary K-D trees are considered better load balanced
• With Charm++: use the oct tree, and let Charm++ map subtrees to processors
[Figure: evolution of the universe and galaxy formation]

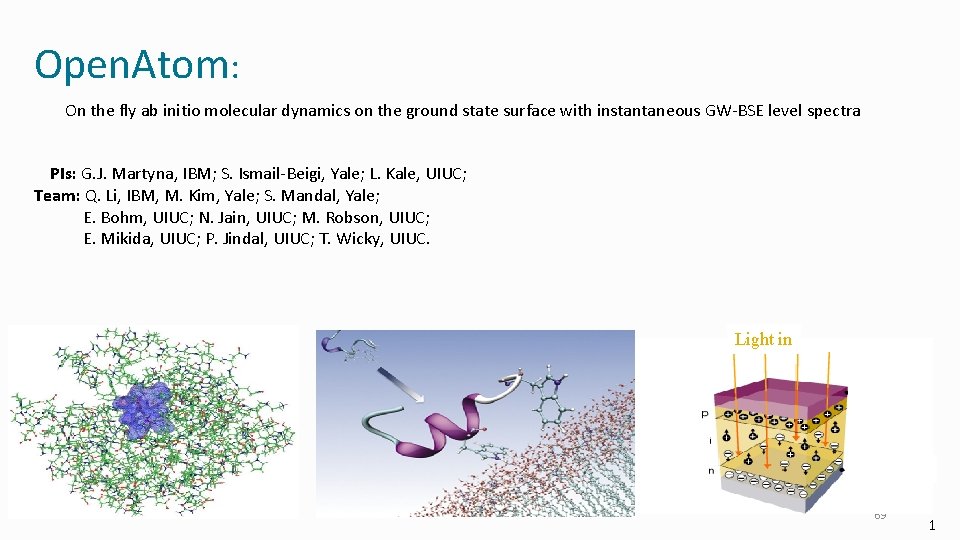

OpenAtom: On-the-fly ab initio molecular dynamics on the ground state surface with instantaneous GW-BSE level spectra
• PIs: G. J. Martyna, IBM; S. Ismail-Beigi, Yale; L. Kale, UIUC
• Team: Q. Li, IBM; M. Kim, Yale; S. Mandal, Yale; E. Bohm, UIUC; N. Jain, UIUC; M. Robson, UIUC; E. Mikida, UIUC; P. Jindal, UIUC; T. Wicky, UIUC

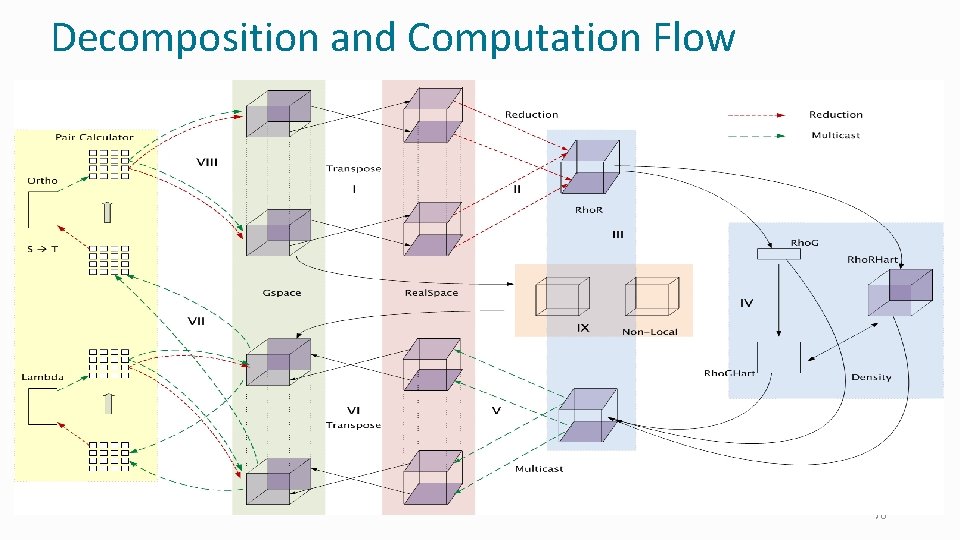

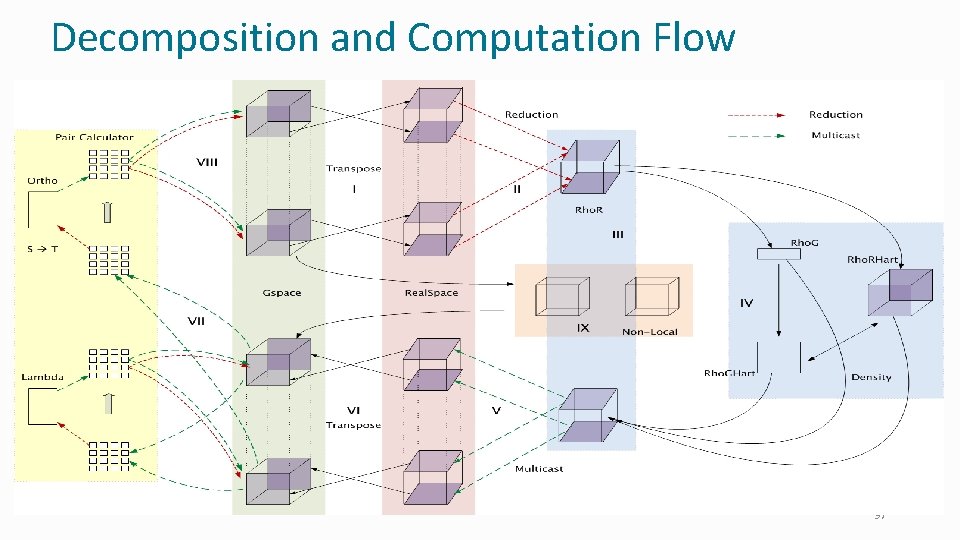

Decomposition and Computation Flow

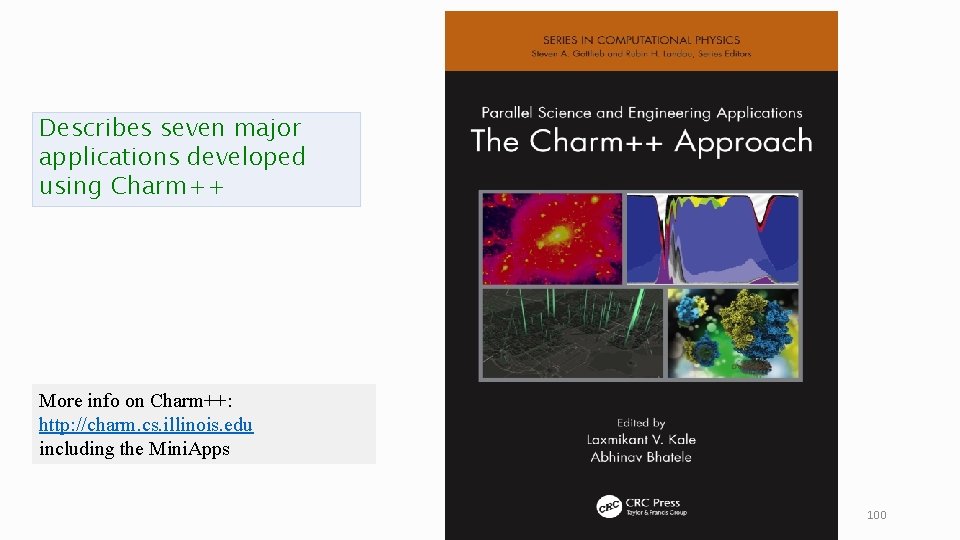

Describes seven major applications developed using Charm++
More info on Charm++, including the MiniApps: http://charm.cs.illinois.edu

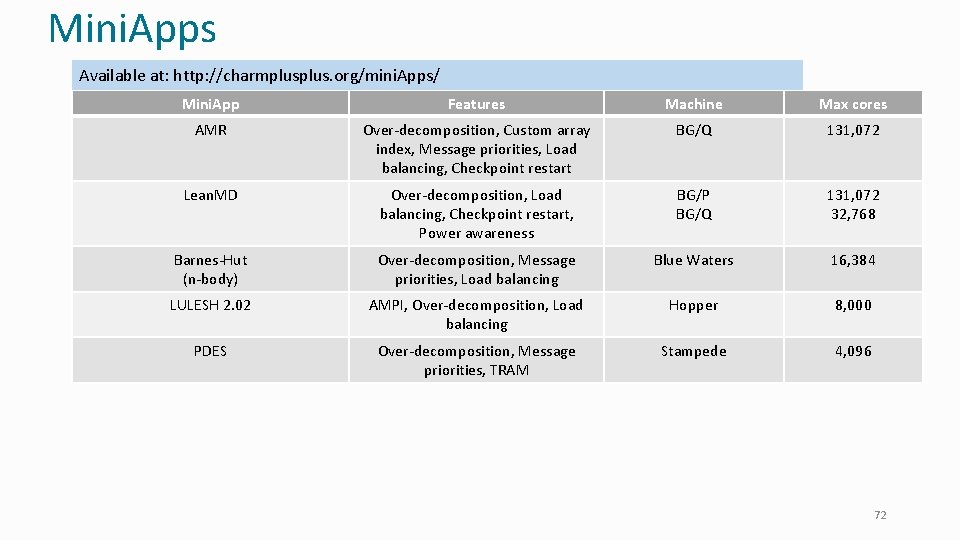

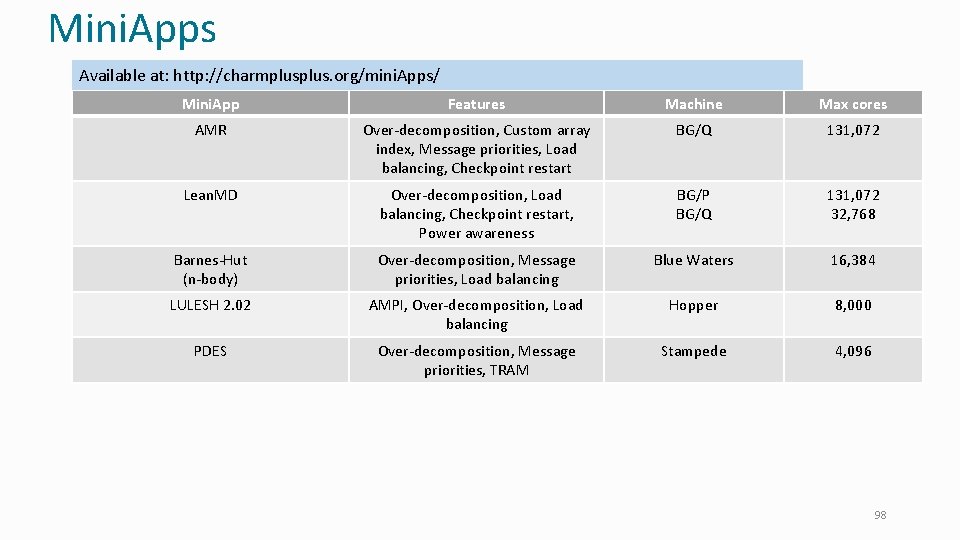

MiniApps
Available at: http://charmplus.org/miniApps/

| MiniApp | Features | Machine | Max cores |
| --- | --- | --- | --- |
| AMR | Over-decomposition, custom array index, message priorities, load balancing, checkpoint restart | BG/Q | 131,072 |
| LeanMD | Over-decomposition, load balancing, checkpoint restart, power awareness | BG/P; BG/Q | 131,072; 32,768 |
| Barnes-Hut (n-body) | Over-decomposition, message priorities, load balancing | Blue Waters | 16,384 |
| LULESH 2.02 | AMPI, over-decomposition, load balancing | Hopper | 8,000 |
| PDES | Over-decomposition, message priorities, TRAM | Stampede | 4,096 |

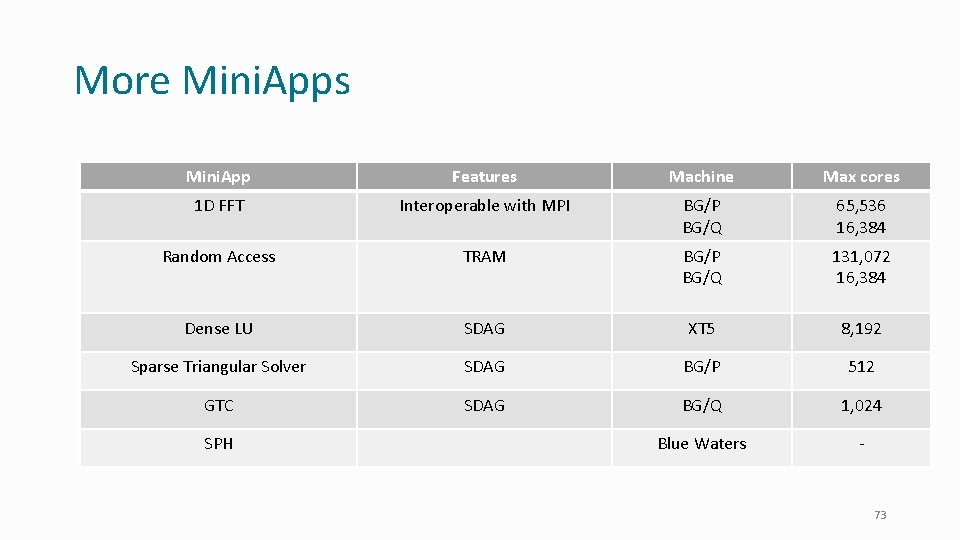

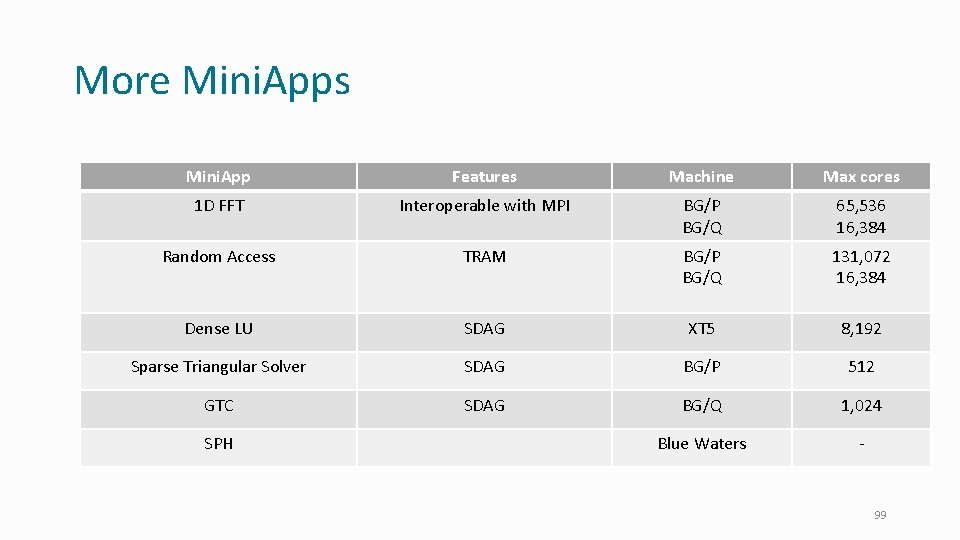

More MiniApps

| MiniApp | Features | Machine | Max cores |
| --- | --- | --- | --- |
| 1D FFT | Interoperable with MPI | BG/P; BG/Q | 65,536; 16,384 |
| Random Access | TRAM | BG/P; BG/Q | 131,072; 16,384 |
| Dense LU | SDAG | XT5 | 8,192 |
| Sparse Triangular Solver | SDAG | BG/P | 512 |
| GTC | SDAG | BG/Q | 1,024 |
| SPH | | Blue Waters | - |

More Information
• A lecture series with instructional material coming soon
  – http://charmplus.org/ (under “Learn”)
  – See also “Exercises” at the same link
• MiniApps (source code):
  – http://charmplus.org/miniApps/
• Research projects, papers, etc.
  – http://charm.cs.illinois.edu/
• Commercial support:
  – https://www.hpccharm.com/

Dynamic Load Balancing in Charm++
Invoking Load Balancers, and Serialization via PUPs

Dynamic Load Balancing
• Object-based decomposition (i.e., virtualized decomposition) helps
  – The Charm++ RTS reassigns objects to PEs to balance load
  – But how does the RTS decide?
• Multiple strategy options
  – E.g., just move objects away from overloaded processors to underloaded processors
• How is load determined?

Measurement-Based Load Balancing
• Principle of persistence
  – Object communication patterns and computational loads tend to persist over time
  – In spite of dynamic behavior: abrupt but infrequent changes, or slow and small changes
  – The recent past is a good predictor of the near future
• Runtime instrumentation
  – Measures communication volume and computation time
• Measurement-based load balancers
  – Measure load information for chares
  – Periodically use the instrumented database to make new decisions and migrate objects
  – Many alternative strategies can use the database
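As an illustration of how a strategy might consume measured loads, here is a small greedy sketch in plain C++: take objects from heaviest to lightest and give each to the currently least-loaded PE. This is a generic textbook heuristic written for this note, not the implementation of any Charm++ balancer; `greedyAssign` and `maxPeLoad` are hypothetical names, and the per-object loads are assumed to come from the runtime's instrumentation. Communication volume is ignored here.

```cpp
#include <algorithm>
#include <cstddef>
#include <functional>
#include <queue>
#include <utility>
#include <vector>

// Returns assignment[i] = index of the PE chosen for object i.
// Assumes numPes >= 1 and that objLoad holds measured per-object loads.
std::vector<int> greedyAssign(const std::vector<double>& objLoad, int numPes) {
    // Visit objects from heaviest to lightest (stable, so ties keep index order).
    std::vector<std::size_t> order(objLoad.size());
    for (std::size_t i = 0; i < order.size(); ++i) order[i] = i;
    std::stable_sort(order.begin(), order.end(),
                     [&](std::size_t a, std::size_t b) { return objLoad[a] > objLoad[b]; });

    // Min-heap of (accumulated load, PE index): top() is the least-loaded PE.
    using PeLoad = std::pair<double, int>;
    std::priority_queue<PeLoad, std::vector<PeLoad>, std::greater<PeLoad>> pes;
    for (int p = 0; p < numPes; ++p) pes.push({0.0, p});

    std::vector<int> assignment(objLoad.size(), -1);
    for (std::size_t obj : order) {
        PeLoad least = pes.top();
        pes.pop();
        assignment[obj] = least.second;
        least.first += objLoad[obj];
        pes.push(least);
    }
    return assignment;
}

// Load of the most heavily loaded PE under a given assignment.
double maxPeLoad(const std::vector<double>& objLoad,
                 const std::vector<int>& assignment, int numPes) {
    std::vector<double> total(numPes, 0.0);
    for (std::size_t i = 0; i < objLoad.size(); ++i) total[assignment[i]] += objLoad[i];
    return *std::max_element(total.begin(), total.end());
}
```

For loads {5, 4, 3, 3, 2, 1} on two PEs, this heuristic places 9 units on each PE. Note that it only works well when there are many more objects than PEs, which is exactly what over-decomposition provides.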

Using the Load Balancer
• Link a LB module
  – -module <strategy>
  – RefineLB, NeighborLB, GreedyCommLB, others
  – EveryLB will include all load balancing strategies
• Compile-time option (specify default balancer)
  – -balancer RefineLB
• Runtime option (override default)
  – +balancer RefineLB

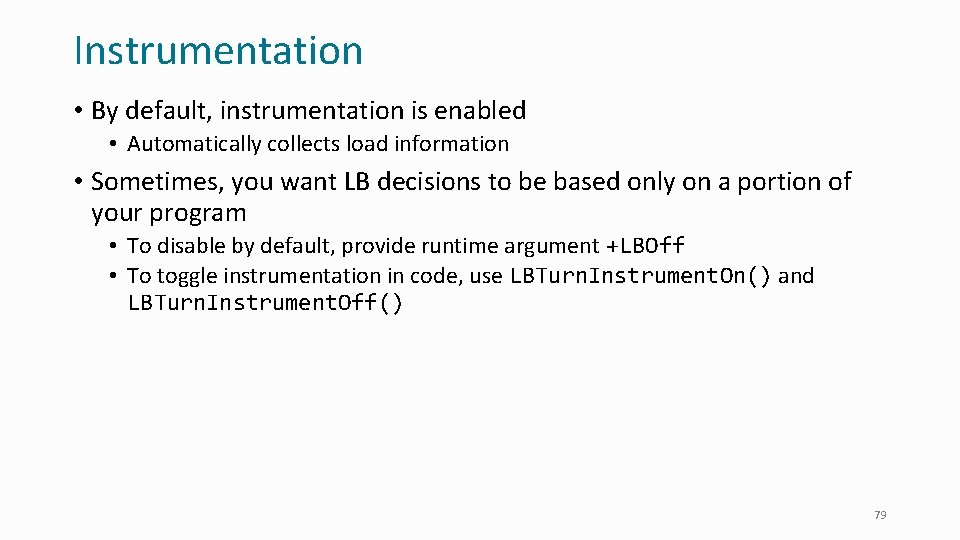

Instrumentation
• By default, instrumentation is enabled
  – Automatically collects load information
• Sometimes, you want LB decisions to be based only on a portion of your program
  – To disable by default, provide the runtime argument +LBOff
  – To toggle instrumentation in code, use LBTurnInstrumentOn() and LBTurnInstrumentOff()

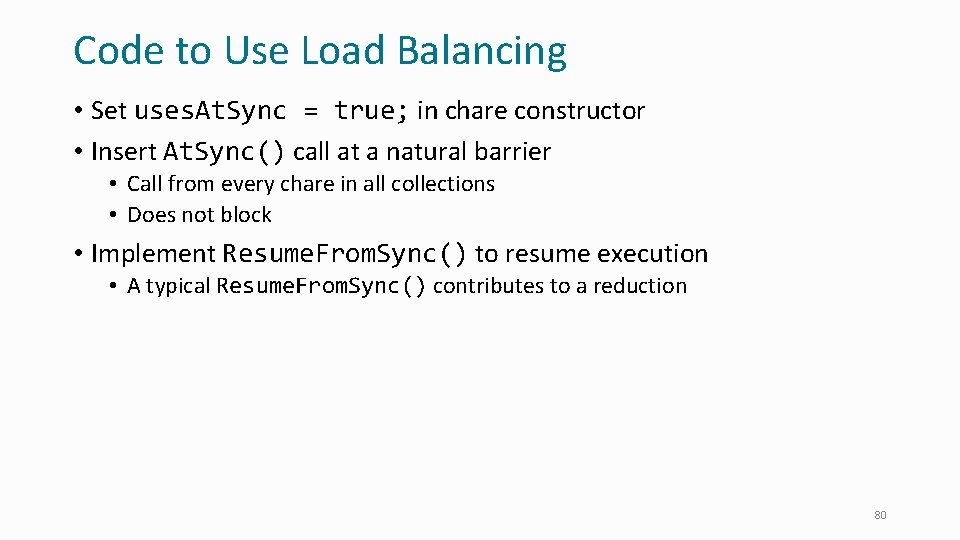

Code to Use Load Balancing
• Set usesAtSync = true; in the chare constructor
• Insert an AtSync() call at a natural barrier
  – Call from every chare in all collections
  – Does not block
• Implement ResumeFromSync() to resume execution
  – A typical ResumeFromSync() contributes to a reduction

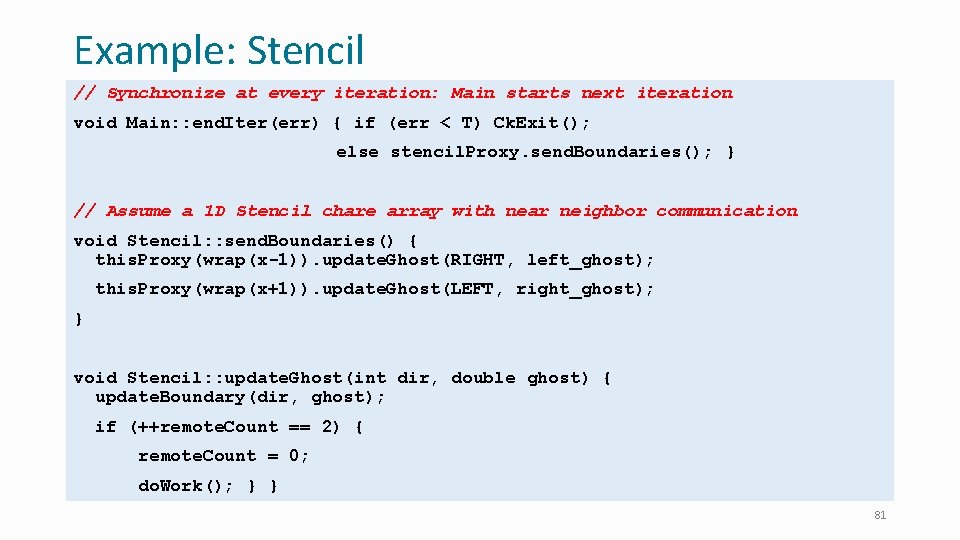

Example: Stencil

    // Synchronize at every iteration: Main starts the next iteration
    void Main::endIter(double err) {
      if (err < T) CkExit();
      else stencilProxy.sendBoundaries();
    }

    // Assume a 1D Stencil chare array with near-neighbor communication
    void Stencil::sendBoundaries() {
      thisProxy(wrap(x-1)).updateGhost(RIGHT, left_ghost);
      thisProxy(wrap(x+1)).updateGhost(LEFT, right_ghost);
    }

    void Stencil::updateGhost(int dir, double ghost) {
      updateBoundary(dir, ghost);
      // Compute once both neighbors' ghost values have arrived
      if (++remoteCount == 2) {
        remoteCount = 0;
        doWork();
      }
    }

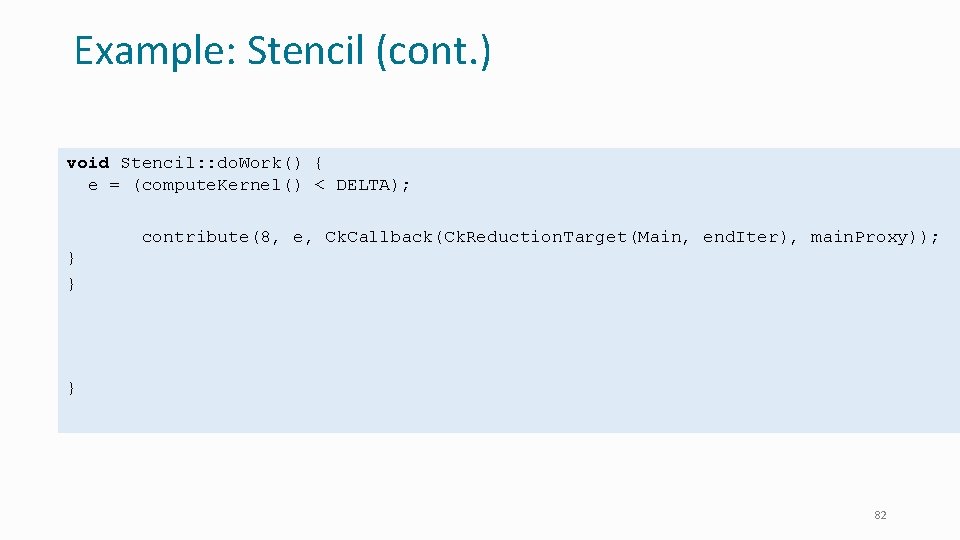

Example: Stencil (cont.)

    void Stencil::doWork() {
      e = (computeKernel() < DELTA);
      if (++i % 10 == 0) {
        AtSync();  // Allow load balancing every 10 iterations
      } else {
        contribute(8, e, CkCallback(CkReductionTarget(Main, endIter), mainProxy));
      }
    }

    void Stencil::ResumeFromSync() {
      contribute(CkCallback(CkReductionTarget(Main, endIter), mainProxy));
    }
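The stencil code calls a `wrap` helper that the slides never define. A plausible reading is a periodic-boundary index wrap, sketched below in plain C++. The two-argument signature is an assumption made so the function is self-contained; on the slide it takes one argument, presumably with the array size available as a member.

```cpp
// Hypothetical helper: map any integer index onto [0, n) with periodic
// boundaries, so that index -1 refers to the last element and n to the first.
// Assumes n > 0.
int wrap(int i, int n) {
    // The first % may yield a negative remainder for negative i;
    // adding n and reducing again brings it into range.
    return ((i % n) + n) % n;
}
```

With 8 elements, `wrap(-1, 8)` is 7 and `wrap(8, 8)` is 0, so elements 0 and 7 become neighbors.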

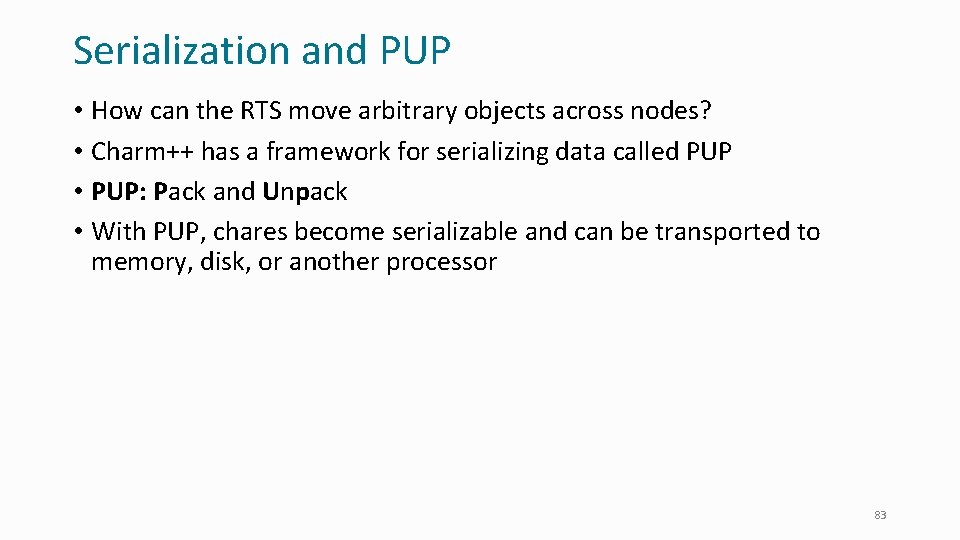

Serialization and PUP
• How can the RTS move arbitrary objects across nodes?
• Charm++ has a framework for serializing data called PUP
• PUP: Pack and Unpack
• With PUP, chares become serializable and can be transported to memory, disk, or another processor

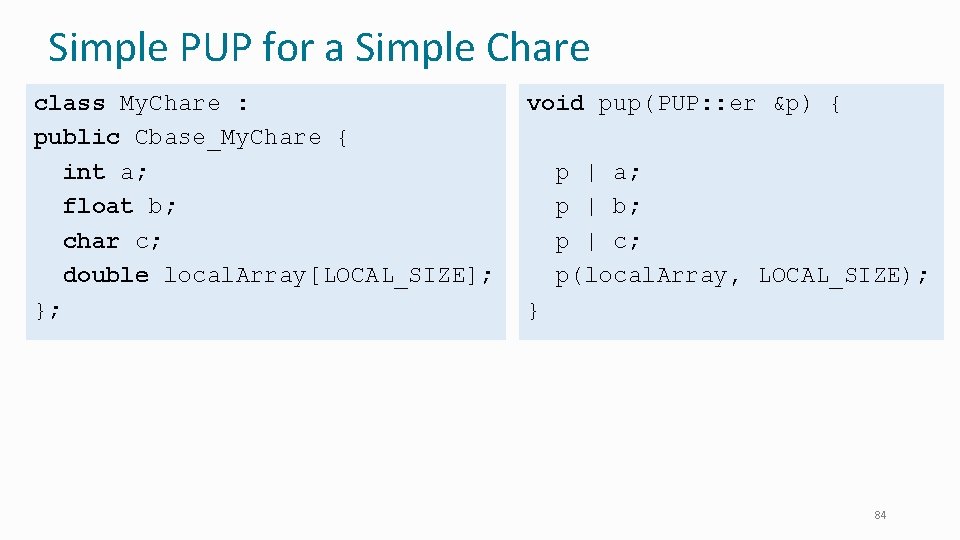

Simple PUP for a Simple Chare

    class MyChare : public CBase_MyChare {
      int a;
      float b;
      char c;
      double localArray[LOCAL_SIZE];
    public:
      // The same routine is used for both packing and unpacking
      void pup(PUP::er &p) {
        p | a;
        p | b;
        p | c;
        p(localArray, LOCAL_SIZE);
      }
    };

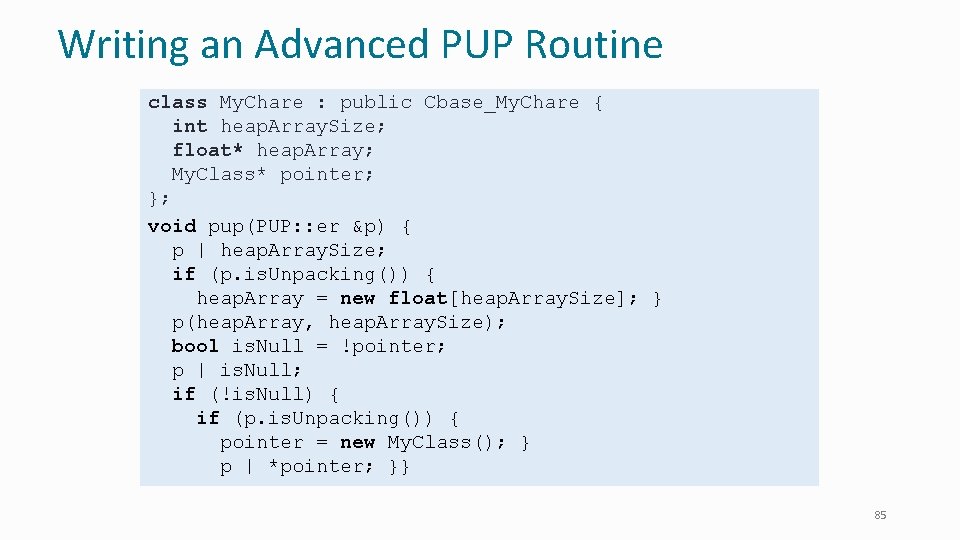

Writing an Advanced PUP Routine

    class MyChare : public CBase_MyChare {
      int heapArraySize;
      float* heapArray;
      MyClass* pointer;
    public:
      void pup(PUP::er &p) {
        p | heapArraySize;
        // On the receiving side, allocate before reading the array contents
        if (p.isUnpacking()) {
          heapArray = new float[heapArraySize];
        }
        p(heapArray, heapArraySize);
        // Record whether the pointer was null so the receiver knows what to expect
        bool isNull = !pointer;
        p | isNull;
        if (!isNull) {
          if (p.isUnpacking()) {
            pointer = new MyClass();
          }
          p | *pointer;
        }
      }
    };
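The key idea in both routines is that one `pup` method serves packing and unpacking, with allocation happening only on the unpacking side. The sketch below reproduces that pattern in plain C++ so it can be run without Charm++. `Pupper` is a hypothetical stand-in written for this note and is not the real `PUP::er` interface; `Particles` and its members are likewise illustrative, and only trivially copyable data is handled.

```cpp
#include <cstddef>
#include <cstring>
#include <stdexcept>
#include <utility>
#include <vector>

// Hypothetical stand-in for a PUP::er: either appends bytes to a buffer
// (packing) or reads them back in the same order (unpacking).
class Pupper {
public:
    Pupper() : unpacking_(false), pos_(0) {}                  // packing mode
    explicit Pupper(std::vector<char> data)                   // unpacking mode
        : unpacking_(true), buf_(std::move(data)), pos_(0) {}

    bool isUnpacking() const { return unpacking_; }
    const std::vector<char>& buffer() const { return buf_; }

    // Pack or unpack n trivially copyable values starting at p.
    template <typename T>
    void bytes(T* p, std::size_t n) {
        const std::size_t len = n * sizeof(T);
        if (len == 0) return;
        if (unpacking_) {
            if (pos_ + len > buf_.size()) throw std::out_of_range("buffer too short");
            std::memcpy(p, buf_.data() + pos_, len);
            pos_ += len;
        } else {
            const char* src = reinterpret_cast<const char*>(p);
            buf_.insert(buf_.end(), src, src + len);
        }
    }

private:
    bool unpacking_;
    std::vector<char> buf_;
    std::size_t pos_;
};

// An object that owns a heap array, like heapArray in the slide.
struct Particles {
    int count = 0;
    float* mass = nullptr;  // heap array of `count` floats

    Particles() = default;
    Particles(const Particles&) = delete;
    Particles& operator=(const Particles&) = delete;
    ~Particles() { delete[] mass; }

    // One routine for both directions: the size travels first, and the
    // receiving side allocates before the array contents are read.
    void pup(Pupper& p) {
        p.bytes(&count, 1);
        if (p.isUnpacking()) {
            delete[] mass;
            mass = new float[count];
        }
        p.bytes(mass, static_cast<std::size_t>(count));
    }
};
```

A round trip looks like this: fill a `Particles` object, call `pup` with a packing `Pupper`, construct an unpacking `Pupper` from `buffer()`, and call `pup` on a fresh object. Whether the bytes then go to another processor or to disk is independent of the object's `pup` routine, which is why the same mechanism serves both migration and checkpointing.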

PUP Uses
• Moving objects for load balancing
• Marshalling user-defined data types
  – When using a type you define as a parameter for an entry method
  – The type has to be serialized to go over the network, and uses PUP for this
  – Can add PUP to any class; it doesn’t have to be a chare
• Serializing for storage

Split Execution: Checkpoint Restart
• Can be used to stop execution and resume later
  – E.g., the job runs for 5 hours, then continues in a new allocation another day
• We can use PUP for this!
  – Instead of migrating to another PE, just “migrate” to disk
• Bonus: in a well-written program, you can resume on a different number of nodes
  – Example: an original run on 100 nodes, once checkpointed, can be resumed on 80 nodes (say, because that is what was available)

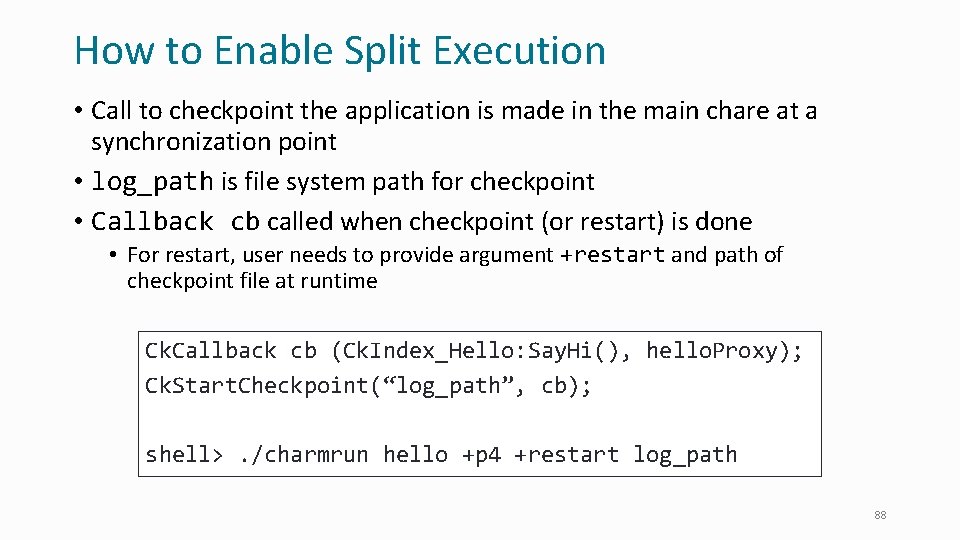

How to Enable Split Execution
• The call to checkpoint the application is made in the main chare at a synchronization point
• log_path is the file system path for the checkpoint
• Callback cb is called when the checkpoint (or restart) is done
• For restart, the user needs to provide the argument +restart and the path of the checkpoint file at runtime

    CkCallback cb(CkIndex_Hello::SayHi(), helloProxy);
    CkStartCheckpoint("log_path", cb);

    shell> ./charmrun hello +p4 +restart log_path

Control Flow within Chare • Structured dagger notation • Provides a script-like language for expressing dag of dependencies between method invocations and computations • Threaded entry methods • Allows entry methods to block without blocking the PE • Supports futures and • Ability to suspend/resume threads 89

Advanced Concepts • Priorities • Entry method tags • Quiescence detection • Live. Viz: visualization from a parallel program • Charm. Debug: a powerful debugging tool • Projections: Performance Analysis and Visualization, really nice, and a workhorse tool for Charm++ developers • Messages (instead of marshalled parameters) • Processor-aware constructs: • Groups: like a non-migratable chare array with one element on each “core” • Nodegroups: one element on each process 90

Saving Cooling Energy • Easy: increase A/C setting • But: some cores may get too hot • So, reduce frequency if temperature is high (DVFS) • Independently for each chip • But, this creates a load imbalance! • No problem, we can handle that: • Migrate objects away from the slowed-down processors • Balance load using an existing strategy • Strategies take speed of processors into account • Implemented in experimental version • SC 2011 paper, IEEE TC paper • Several new power/energy-related strategies • PASA ‘ 12: exploiting differential sensitivities of code segments to frequency change 91

PARM: Power Aware Resource Manager • Charm++ RTS facilitates malleable jobs • PARM can improve throughput under a fixed power budget using: • Overprovisioning (adding more nodes than conventional data center) • RAPL (capping power consumption of nodes) • Job malleability and moldability 92

NAMD: Biomolecular Simulations • Collaboration with K. Schulten • With over 70, 000 registered users • Scaled to most top US supercomputers • In production use on supercomputers and clusters and desktops • Gordon Bell award in 2002 Determination of the structure of HIV capsid by researchers including Prof Schulten 93

Parallelization Using Charm++ 94

Cha. NGa: Parallel Gravity • Collaborative project (NSF) • With Tom Quinn, Univ. of Washington • Gravity, gas dynamics • Barnes-Hut tree codes • Oct tree is natural decomp • Geometry has better aspect ratios, so you “open” up fewer nodes • But is not used because it leads to bad load balance • Assumption: one-to-one map between sub-trees and PEs • Binary trees are considered better load balanced Evolution of Universe and Galaxy Formation With Charm++: use Oct-Tree, and let Charm++ map subtrees to processors 95

Open. Atom: On the fly ab initio molecular dynamics on the ground state surface with instantaneous GW-BSE level spectra PIs: G. J. Martyna, IBM; S. Ismail-Beigi, Yale; L. Kale, UIUC; Team: Q. Li, IBM, M. Kim, Yale; S. Mandal, Yale; E. Bohm, UIUC; N. Jain, UIUC; M. Robson, UIUC; E. Mikida, UIUC; P. Jindal, UIUC; T. Wicky, UIUC. Light in 96 1

Decomposition and Computation Flow

MiniApps
Available at: http://charmplus.org/miniApps/

| MiniApp | Features | Machine | Max cores |
| --- | --- | --- | --- |
| AMR | Over-decomposition, Custom array index, Message priorities, Load balancing, Checkpoint restart | BG/Q | 131,072 |
| LeanMD | Over-decomposition, Load balancing, Checkpoint restart, Power awareness | BG/P / BG/Q | 131,072 / 32,768 |
| Barnes-Hut (n-body) | Over-decomposition, Message priorities, Load balancing | Blue Waters | 16,384 |
| LULESH 2.02 | AMPI, Over-decomposition, Load balancing | Hopper | 8,000 |
| PDES | Over-decomposition, Message priorities, TRAM | Stampede | 4,096 |

More MiniApps

| MiniApp | Features | Machine | Max cores |
| --- | --- | --- | --- |
| 1D FFT | Interoperable with MPI | BG/P / BG/Q | 65,536 / 16,384 |
| Random Access | TRAM | BG/P / BG/Q | 131,072 / 16,384 |
| Dense LU | SDAG | XT5 | 8,192 |
| Sparse Triangular Solver | SDAG | BG/P | 512 |
| GTC | SDAG | BG/Q | 1,024 |
| SPH | | Blue Waters | - |

Describes seven major applications developed using Charm++
More info on Charm++, including the MiniApps: http://charm.cs.illinois.edu

More information
• A lecture series with instructional material coming soon
  • http://charmplus.org/ (under “Learn”)
  • See also “Exercises” at the same link
• MiniApps (source code):
  • http://charmplus.org/miniApps/
• Research projects, papers, etc.
  • http://charm.cs.illinois.edu/
• Commercial support:
  • https://www.hpccharm.com/

Adaptive MPI

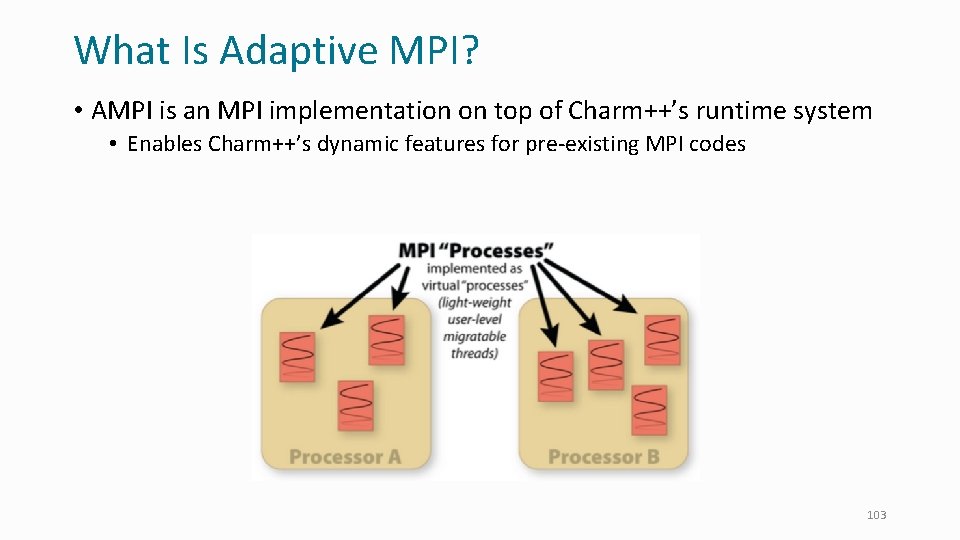

What Is Adaptive MPI?
• AMPI is an MPI implementation on top of Charm++’s runtime system
• Enables Charm++’s dynamic features for pre-existing MPI codes

Process Virtualization
• AMPI virtualizes MPI “ranks,” implementing them as migratable user-level threads rather than OS processes
• Benefits:
  • Communication/computation overlap
  • Cache benefits from smaller working sets
  • Dynamic load balancing
  • Lower latency messaging within a process
• Disadvantages:
  • Global/static variables are shared by all threads within an OS process
    • Not an issue for new applications
    • AMPI provides support for automating the privatization of such variables at compile time or runtime
    • Ongoing work to fully automate this
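The global-variable problem listed under the disadvantages can be shown with an analogy. The sketch below uses ordinary Python threads, not AMPI's user-level threads, and every name in it (`shared_rank`, `private`, `run_ranks`) is hypothetical. Each thread plays the role of one virtualized rank: a plain global has a single copy for the whole process, so ranks overwrite each other, while a per-thread (privatized) copy keeps each rank's value intact.

```python
import threading

shared_rank = -1               # plain global: one copy per OS process
private = threading.local()    # privatized storage: one copy per thread


def run_ranks(num_ranks):
    """Run num_ranks threads, each storing its rank in both variables.
    Returns, per rank, the (global value, private value) it read back."""
    barrier = threading.Barrier(num_ranks)
    seen = [None] * num_ranks

    def rank_body(rank):
        global shared_rank
        shared_rank = rank     # every rank writes the same global
        private.rank = rank    # each rank writes its own private copy
        barrier.wait()         # all writes finish before any read
        seen[rank] = (shared_rank, private.rank)

    threads = [threading.Thread(target=rank_body, args=(r,))
               for r in range(num_ranks)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return seen


for rank, (from_global, from_private) in enumerate(run_ranks(4)):
    print(rank, from_global, from_private)
```

After the barrier every rank reads the same value from the global (whichever rank wrote last), whereas the private copy always matches the rank that wrote it. This is the reason mutable globals in an MPI code need privatizing before ranks can share a process.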

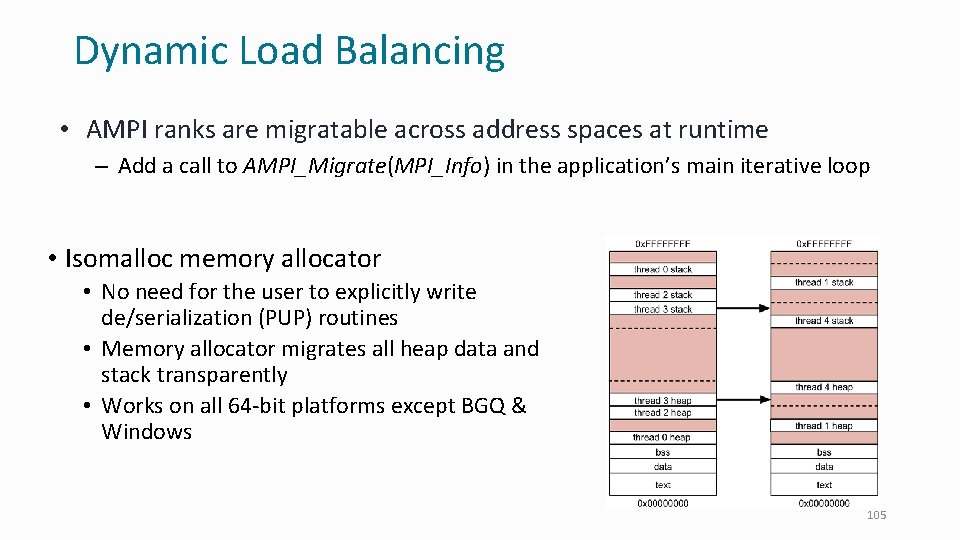

Dynamic Load Balancing
• AMPI ranks are migratable across address spaces at runtime
  • Add a call to AMPI_Migrate(MPI_Info) in the application’s main iterative loop
• Isomalloc memory allocator
  • No need for the user to explicitly write de/serialization (PUP) routines
  • Memory allocator migrates all heap data and stack transparently
  • Works on all 64-bit platforms except BGQ & Windows

Fault Tolerance
• AMPI ranks can be migrated to persistent storage or to remote memory for fault tolerance
  • Storage can be disk, SSD, NVRAM, etc.
• The runtime uses a scalable fault detection algorithm and restarts automatically on a failure
  • Restart is online, within the same job
• Checkpointing strategy is specified by passing a different MPI_Info to AMPI_Migrate()

Compiling & Running AMPI Programs
• To compile an AMPI program:
  • charm/bin/ampicc -o pgm.o
  • For migratability, link with: -memory isomalloc
  • For LB strategies, link with: -module CommonLBs
• To run an AMPI job, specify the # of virtual processes (+vp)
  • ./charmrun +p 1024 ./pgm +vp 16384 +balancer RefineLB

Case Study
• LULESH proxy-application (LLNL)
  • Shock hydrodynamics on an unstructured mesh
  • With artificial load imbalance included to test runtimes
• No mutable global/static variables: can run on AMPI as is
1. Replace mpicc with ampicc
2. Link with “-module CommonLBs -memory isomalloc”
3. Run with # of virtual processes and a load balancing strategy:
  • ./charmrun +p 2048 ./lulesh2.0 +vp 16384 +balancer GreedyLB
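The run step in this case study can be wrapped in a small launcher script. The Python below is a hypothetical helper, not part of Charm++ or AMPI: it only assembles the `+p`, `+vp`, and `+balancer` options shown on the slide and reports the virtualization ratio (virtual ranks per core). The program path `./lulesh2.0` is assumed from the slide's command line.

```python
def charmrun_command(num_pes, num_vps, program, balancer):
    """Assemble a charmrun argument list for an AMPI job.
    Uses only the options shown on the slide: +p, +vp, +balancer."""
    return ["./charmrun", "+p", str(num_pes), program,
            "+vp", str(num_vps), "+balancer", balancer]


def virtualization_ratio(num_pes, num_vps):
    """Number of virtual ranks (AMPI threads) per physical core."""
    return num_vps / num_pes


cmd = charmrun_command(2048, 16384, "./lulesh2.0", "GreedyLB")
print(" ".join(cmd))
print(virtualization_ratio(2048, 16384))
```

For the numbers on the slide this gives 8 virtual ranks per core, which is what gives the load balancer several objects per core to move around.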

AMPI Summary
• AMPI provides the dynamic RTS support of Charm++ with the familiar API of MPI
  • Communication optimizations
  • Dynamic load balancing
  • Automatic fault tolerance
  • Checkpoint/restart
  • OpenMP runtime integration
• See the AMPI Manual for more info

- Slides: 109