MemoryBased Models of inflectional morphology acquisition and processing

Memory-Based Models of inflectional morphology acquisition and processing Walter Daelemans (with Emmanuel Keuleers, CPL) CNTS, Dept. of Linguistics University of Antwerp walter. daelemans@ua. ac. be

2 Contents • Old and recent results using Memory-Based Learning in inflectional morphology (Pinker: fruit fly of psycholinguistics) • Memory-based learning with Ti. MBL - Learning is storage • Cases - (English and Dutch past tense) - More interesting • German plural • Dutch plural • Computational psycholinguistics methodology

3 Nature of the human language processing architecture • How is linguistic knowledge represented? - Words and Rules; mental lexicon and grammar - But: • Fuzziness, leakage • Semi-regularity, irregularity - Similarity-Based Reasoning • How is linguistic knowledge acquired? - Innate rules / constraints - Storage of (patterns of) observable linguistic items • Words, syllables, segments, …

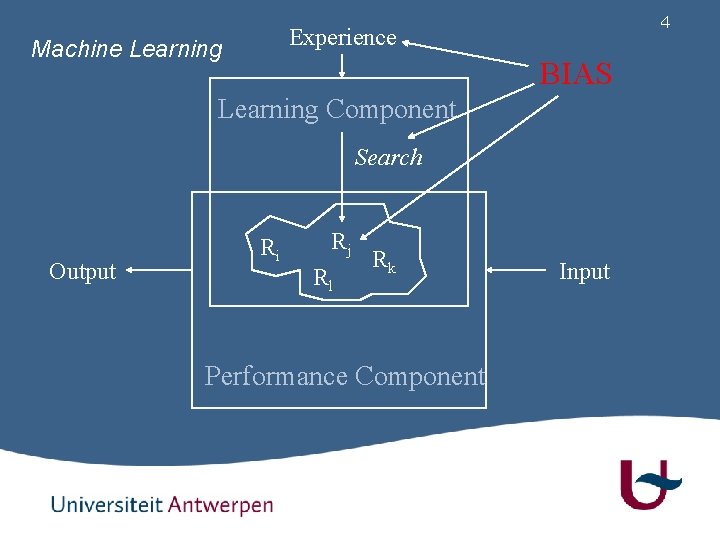

4 Experience Machine Learning BIAS Learning Component Search Output Ri Rj Rl Rk Performance Component Input

5 Supervised Learners • Learning is approximating an underlying function f such that • - y = f (x) or finding - MAXi P(yi|x) = MAXi P(y) * P(x|yi) Discriminative: - Directly estimate parameters of P(y|x) - BUT: No estimation of underlying distributions • Memory-Based Learning: no assumptions about underlying P(y|x) distributions; non-parametric, local • Generative: - Estimate parameters of P(y) and P(x|y) - Probability distribution functions - BUT: Conditional independence assumptions

6 Eager vs Lazy Learning • Eager: learning is compression - Minimal Description Length principle - Ockham’s razor - Minimize size of abstracted model (core) plus length of list of exceptions not covered by model (periphery) • Lazy: learning is storage of exemplars + analogy - In language, what is core and what is periphery? • Small disjuncts, pockets of exceptions, polymorphism, … • Zipfian distributions - “Forgetting exceptions is harmful in language learning”

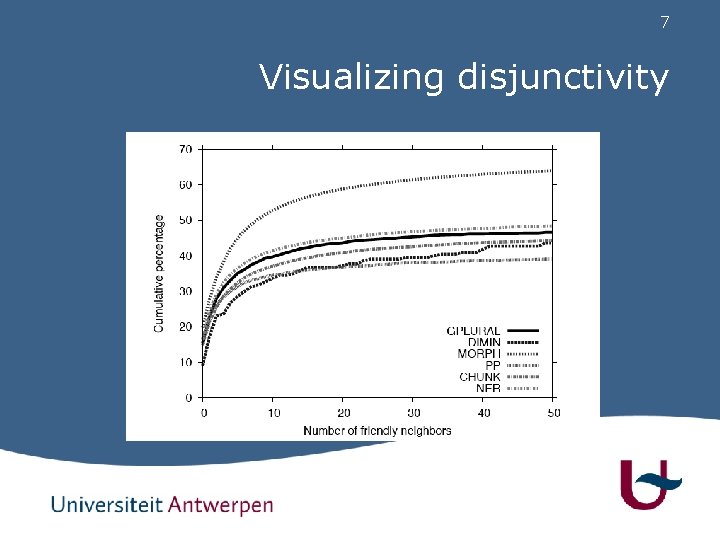

7 Visualizing disjunctivity

8 English Past Tense: Words + Rules • Mental Rule(s) + Memory • The past tense of to plit is plitted - VERB + ed (Default Rule), independent from noncategorical features - Explains generalization and default behavior • Is the past tense of to spling, splinged, splang, or splung (Bybee & Moder, 1983; Prasada & Pinker, 1993) - Race between Rule and Words (Associative Memory)

9 Prasada & Pinker Data • Rate on a scale from 1 to 7 - Today, I spling, yesterday I splinged - Today, I spling, yesterday I splung - Today, I plip, yesterday I plipped - Today, I plip, yesterday I plup

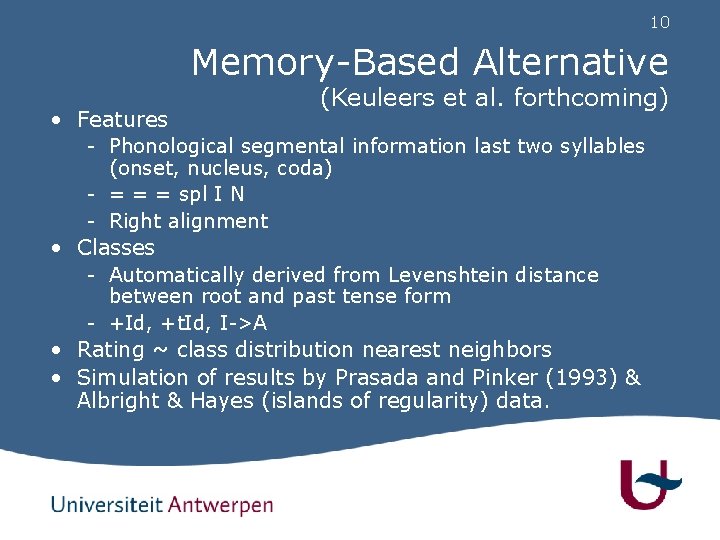

10 Memory-Based Alternative (Keuleers et al. forthcoming) • Features - Phonological segmental information last two syllables (onset, nucleus, coda) - = = = spl I N - Right alignment • Classes - Automatically derived from Levenshtein distance between root and past tense form - +Id, +t. Id, I->A • Rating ~ class distribution nearest neighbors • Simulation of results by Prasada and Pinker (1993) & Albright & Hayes (islands of regularity) data.

11 Prasada and Pinker Simulation

12 Replication Study in Dutch

13 Memory-based learning and classification • Learning: - Store instances in memory • Classification: - Given new test instance X, - Compare it to all memory instances • Compute similarity between X and memory instance Y • Update the top k of closest instances (nearest neighbors) - When done, extrapolate from the k nearest neighbors to the class of X

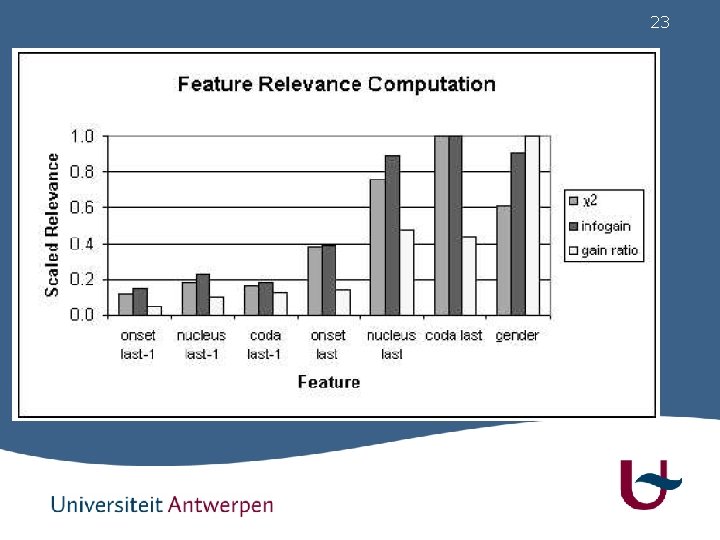

14 Similarity / distance • Similarity determined by - Feature relevance (Gain Ratio, Quinlan) Value similarity (mvdm, Stanfill & Waltz) Exemplar relevance Number of and distance to the nearest neighbors • Heterogeneity and density of the NN

15 The MVDM distance function • Estimate a numeric “distance” between pairs of values - “e” is more like “i” than like “p” in a phonetic task - “book” is more like “document” than like “the” in a parsing task - “NNP” is more like “NN” than like VBD in a part of speech tagging task • An instance of unsupervised learning in the context of a supervised learning task

16 Distance weighted class voting • Increasing the value of k is similar to smoothing • Subtler measurement of local neighborhood: making more distant neighbors count less in the class vote - Linear inverse of distance (with respect to max) - Inverse of distance - Exponential decay

17 Exemplar weighting • Scale the distance of a memory instance by some externally computed factor - Class prediction strength - Frequency - Typicality • Smaller distance for “good” instances • Bigger distance for “bad” instances

18 Ti. MBL http: //ilk. uvt. nl/timbl • Tilburg Memory-Based Learner 5. 2 - Version 6. 0 (soon) will be open source • Available for research and education • Lazy learning, extending k-NN and IB 1 • Optimized search for NN - Internal structure: tree, not flat instance base - Tree ordered by chosen feature weight - Many built-in optional metrics: feature weights, distance function, distance weights, exemplar weights, … - Server-client architecture

19 Back to Inflectional Morphology: German Plural • Notoriously complex but routinely acquired (at age 5) • Evidence for Words + Rules (Dual Mechanism)? -s suffix is default/regular (novel words, surnames, acronyms, …) -s suffix is infrequent (least frequent of the five most important suffixes) Vast majority of plurals should be handled in the words route according to W+R!

20

21 The default status of -s • • • Similar item missing Fnöhk-s Surname, product name Mann-s Borrowings Kiosk-s Acronyms BMW-s Lexicalized phrases Vergissmeinnicht-s Onomatopoeia, truncated roots, derived nouns, . . .

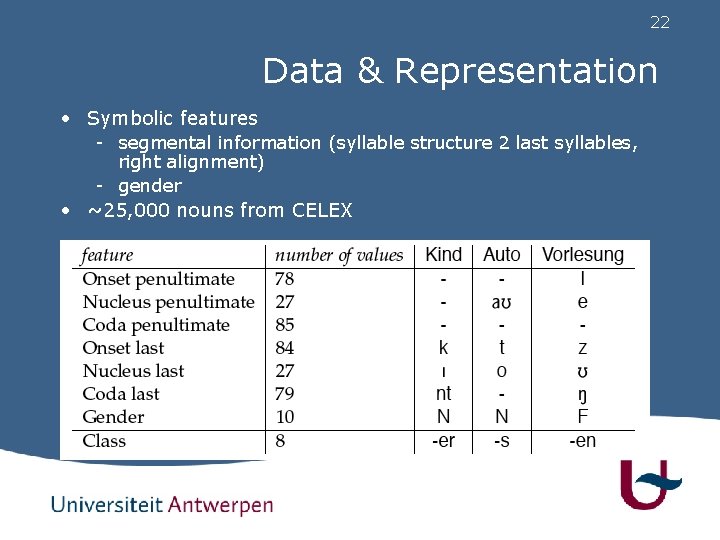

22 Data & Representation • Symbolic features - segmental information (syllable structure 2 last syllables, right alignment) - gender • ~25, 000 nouns from CELEX

23

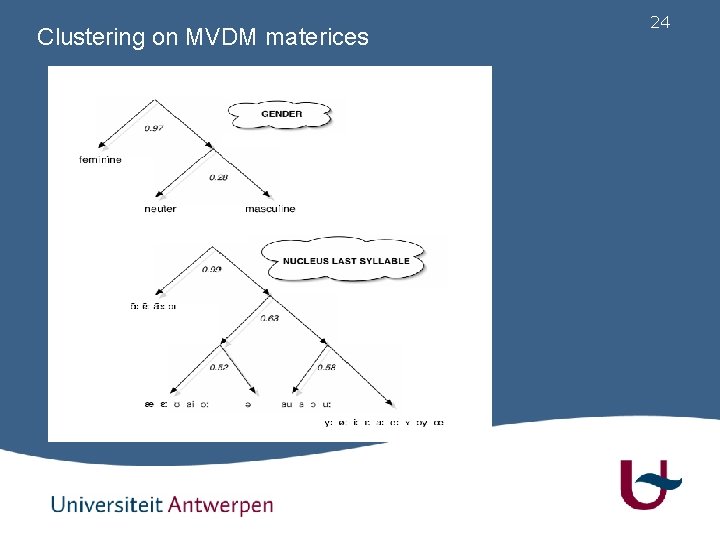

Clustering on MVDM materices 24

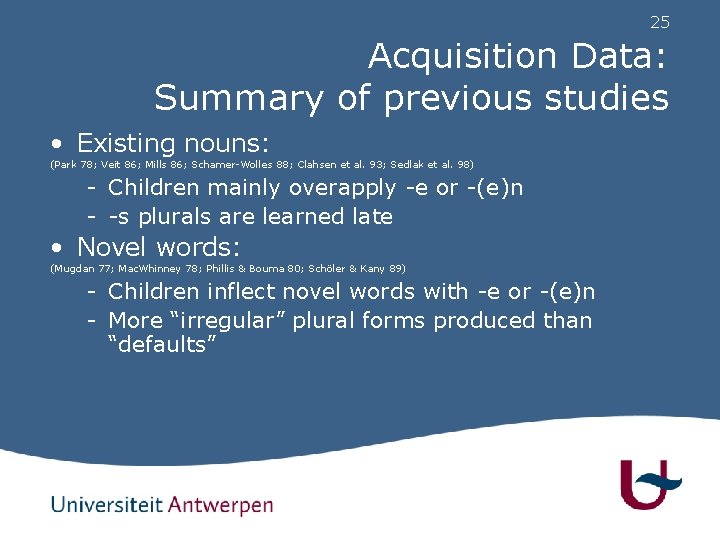

25 Acquisition Data: Summary of previous studies • Existing nouns: (Park 78; Veit 86; Mills 86; Schamer-Wolles 88; Clahsen et al. 93; Sedlak et al. 98) - Children mainly overapply -e or -(e)n - -s plurals are learned late • Novel words: (Mugdan 77; Mac. Whinney 78; Phillis & Bouma 80; Schöler & Kany 89) - Children inflect novel words with -e or -(e)n - More “irregular” plural forms produced than “defaults”

26

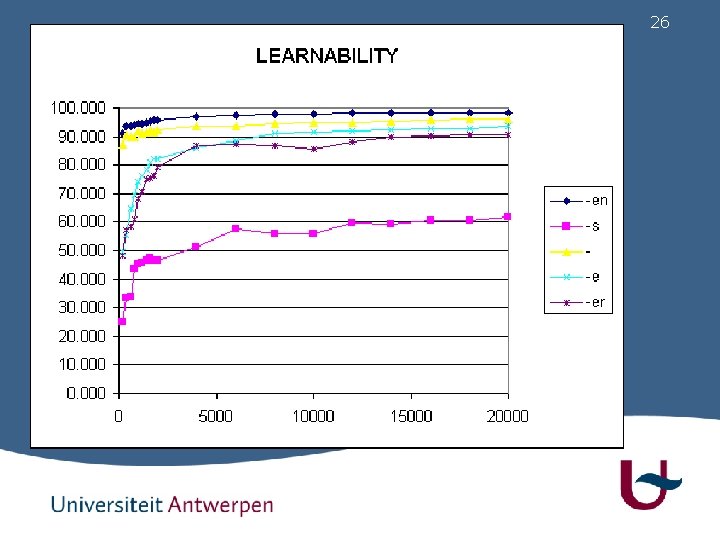

27 MBL simulation • model overapplies mainly -en and -e • -e and -en acquired fastest • -s is learned late and imperfectly • Mainly but not completely parallel to input frequency (more -s overgeneralization than -er generalization)

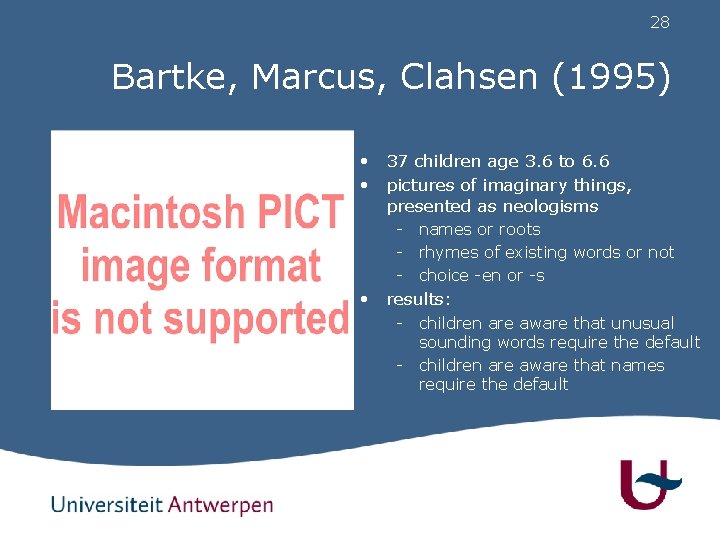

28 Bartke, Marcus, Clahsen (1995) • 37 children age 3. 6 to 6. 6 • pictures of imaginary things, presented as neologisms • - names or roots - rhymes of existing words or not - choice -en or -s results: - children are aware that unusual sounding words require the default - children are aware that names require the default

29 MBL simulation • sort CELEX data according to rhyme • compare overgeneralization • - to -en versus to -s - percentage of total number of errors results: - when new words don’t rhyme more errors are made - overgeneralization to -en drops below the level of overgeneralization to -s

30 Discussion • Three “classes” of plurals: ((-en -)(-e -er))(s) the former 4 suffixes seem “regular”, can be accurately learned using information from phonology and gender -s is learned reasonably well but information is lacking • Hypothesis: more “features” are needed (syntactic, semantic, metalinguistic, …) to enrich the “lexical similarity space” • No difference in accuracy and speed of learning with and without Umlaut • Overall generalization accuracy very high: 95% • Implicitly implements schema-based learning (Köpcke). *, *, i, r, M e

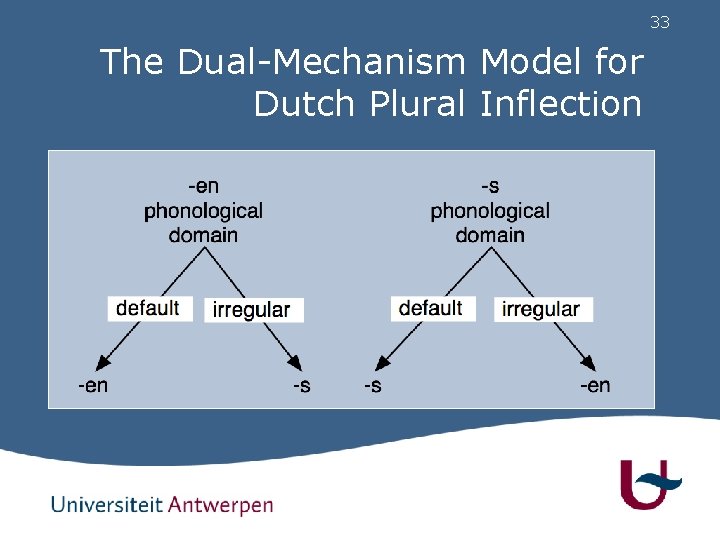

31 The Dutch plural • Two regular suffixes: -en and -s, in complementary distribution. • Some other infrequent inflectional processes - -eren (kind-eren, rund-eren) - latin (museum - musea), greek (corpus - corpora) - suppletion (brandweerman - brandweerlieden)

32 What is the default process in Dutch plural inflection? • • • The Dutch plural is problematic for the dual mechanism model Looking at criteria for determining which inflectional process is the default, no single default can be determined No overregularization in acquisition

33 The Dual-Mechanism Model for Dutch Plural Inflection

34 Inflecting non-canonical roots • Keuleers, Sandra, Daelemans, Gillis, Durieux, Martens (2007) Cognitive Psychology 878, 283 -318. • The inflection of atypical words is only problematic for unassimilated borrowings in the Dutch plural • Adding orthographical information to similarity space - Allows MBL single mechanism model to learn these cases - Has influence on participant’s behavior on non-word inflection • Which is again modeled by the MBL single mechanism model

35 Methodology: what is a good model? • Can we say anything useful about the nature of psycholinguistic processes if we find that a model simulation with a particular combination of parameter values fits our data well? • We need robustness - For different parameter settings - On different tasks / empirical datasets

36 MBL model of Dutch plural inflection • 23040 simulations on each of three tasks • 1 Lexical reconstruction task: cross-validation on CELEX (919/18384). - Compare to attested forms • 2 Pseudoword tasks: - Produce plural for 80 pseudowords (Baayen et al. , 2002) - Produce plural for 180 pseudowords (Keuleers et al. , 2007) - Compare predictions to majority of participants.

37 MBL model of Dutch plural inflection • Each simulation has a unique combination of information sources - Phonological information: 1, 2, 3, or 4 syllables - Prosodic information: with or without stress information - Orthographic information: with or without final grapheme - Alignment: onset-nucleus-coda, start-peak-end, or peak-valley - Padding: empty or delta padding

38 MBL model of Dutch plural inflection • Each simulation has a unique combination of task and algorithm parameter values - Classification task: categorical or transformation labels - Number of distances (k) and distance metric: • k =1, 3, 5, or 7 with the overlap metric • k = 1, 3, 5… or 51 with the MVDM metric - Distance weighting method: zero or inverse distance decay - Type merging: neighbors with equal information merged or not merged.

39 Results • Best models - Holdout task: 97. 8 % accuracy - Pseudoword task 1: 100 % accuracy - Pseudoword task 2: 89 % accuracy • Worst models - Holdout task: 48. 8 % accuracy - Pseudoword task 1: 47. 5 % accuracy - Pseudoword task 2: 51. 1 % accuracy

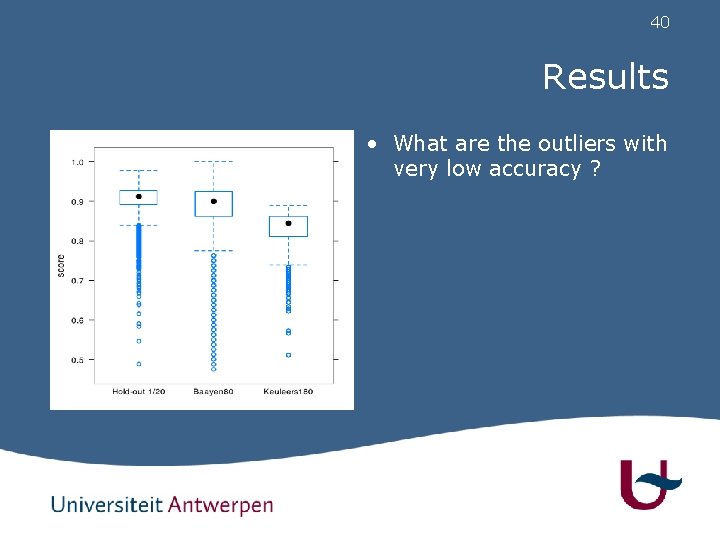

40 Results • What are the outliers with very low accuracy ?

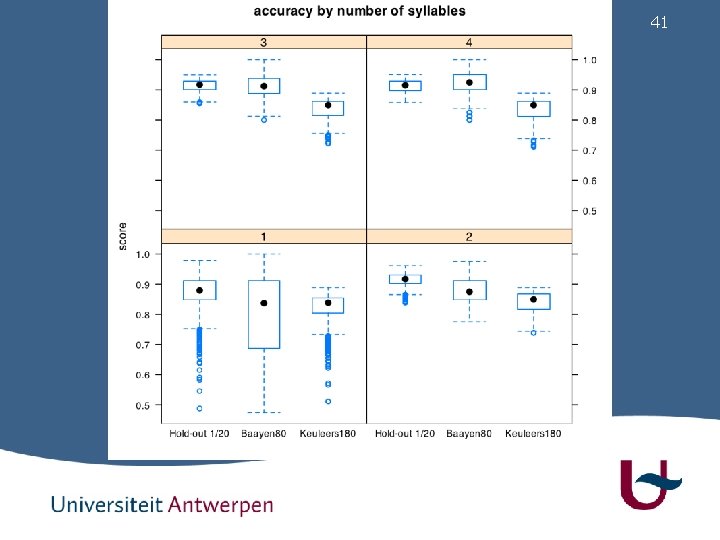

41

42 Results • Apart from one-syllable models, results from MBL appear to be quite robust. • Some small differences - Including stress information does not significantly improve mean accuracy. - Using more than 2 syllables does not improve mean accuracy, except on Baayen’s pseudowords.

43 Results • Some small differences - Including the final grapheme in the model improves mean accuracy by about 1 %. • hond (/hont/) - honden (/hond@/) • wagen (/wag@/) - wagens (/wag@s/) • Since we know (e. g. Baayen & Ernestus, 2001) that there is a difference in the final sounds of hond (/hont/) vs lont (/lont/) , this may even be justifiable. • Alignment and padding have almost no or very small effect on mean accuracy

44 Results • Some small differences - Transformation classes work better than categorical labels for pseudoword tasks - For the lexical reconstruction task, it’s the other way around.

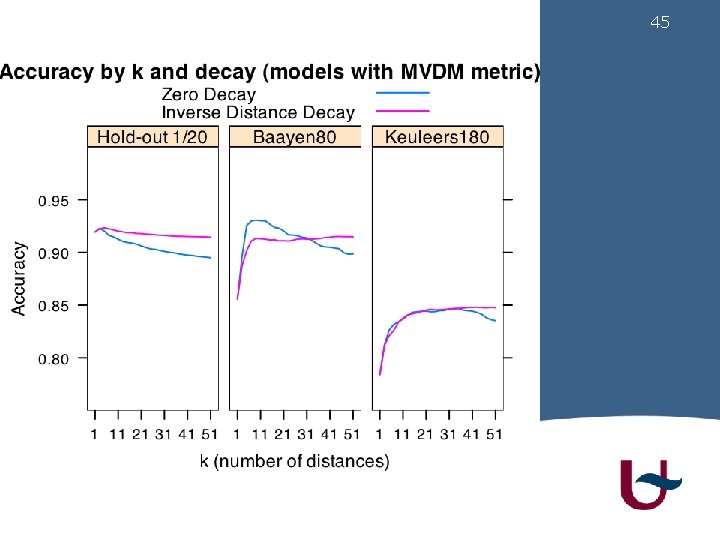

45

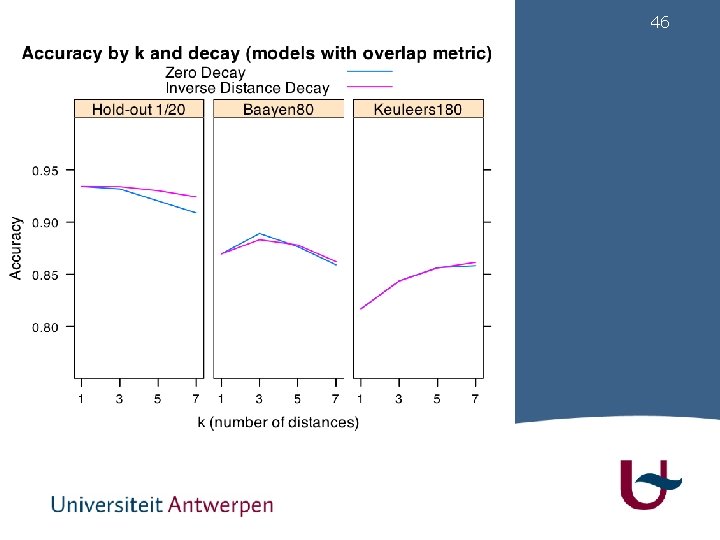

46

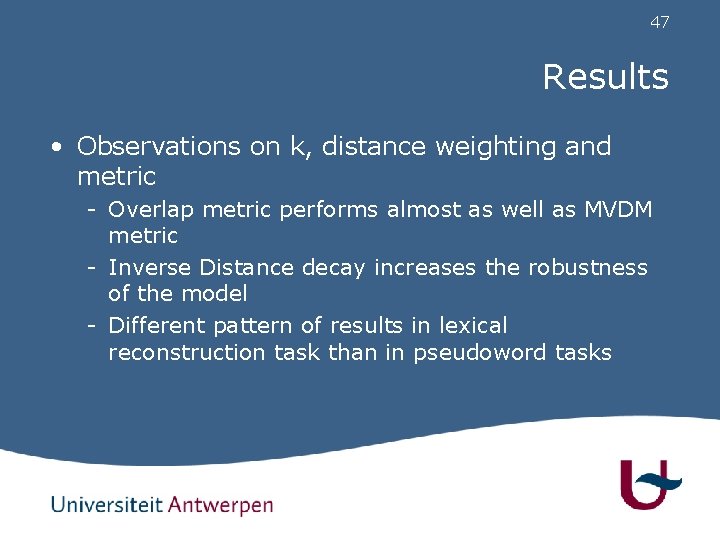

47 Results • Observations on k, distance weighting and metric - Overlap metric performs almost as well as MVDM metric - Inverse Distance decay increases the robustness of the model - Different pattern of results in lexical reconstruction task than in pseudoword tasks

48 Results • Models with low k (1, 3) perform well on the corpus prediction task, but very badly on the pseudoword tasks. • K=1 describes a system that generalizes on the basis of very few exemplars • K=5, 7, … means that irregular classes with few types cannot influence generalization • Apparently this is what happens in the experimental tasks

49 Conclusions • MBL is a robust model for inflectional morphology learning if we use sufficient information to represent exemplars • Large memory size (+18000 items) may have rendered the parameters less important • K is an important parameter with potential psycholinguistic relevance • Simulations should report ranges rather than best simulation • Simulations should address more than 1 task

50 Additional work with Ti. MBL • CNTS - CPL - Dutch gender, word stress, plural - English past tense - German plural • David Eddington - Spanish stress and gender - Italian conjugation • Andrea Krott, Baayen & Schreuder - Dutch and German compound linking element • Ingo Plag et al. - English compound stress

- Slides: 50