Medical Student Evaluations: How Much Is Too Much?

- Slides: 29

Medical Student Evaluations: How Much Is Too Much?

L. M. Mellor, MD, Assistant Professor, Rutgers, RWJMS Centrastate Family Medicine Residency Program

Medical Student Evaluation Throughout History

- Apprenticeships: direct observation and feedback, then out and independent
- Schools developed: written exams, some observation and feedback
- Then schools relied more on written exams, with little feedback
- Now schools are trying many different methods of evaluation in an attempt to produce better-educated physicians with more standardized evaluations

Current Status of Evaluation of Clinical Competence

- Use as many evaluation methods as possible to try to get a complete picture of the student’s abilities.
- But how much is too much?
- Is this really the best method?

Studies show clerkship grading varies dramatically! (1, 2) For example, the proportion of HONORS grades awarded ranges from 2% to 93%!

Fewer than 1% fail a required clerkship! (3)

- Over 40% of clerkship directors admitted they had passed a student who should have failed (3)
- Giving a student a “Pass” is the kiss of death in a clerkship, so…
- Grade inflation is a common issue

Why Don’t They Fail, or Even Get a “Pass”?

- Docs don’t want to deal with angry, upset, or litigious students (3)
- Often there is no set method of dealing with struggling students (4)
- Remediation is TOUGH

To Make Matters Even Worse: Non-cognitive predictors of grades exist

- “One study showed that lower clerkship grades were associated with nonwhite race, male gender, older age, lower quality of clerkship experience, and being less assertive and more reticent” (5)
- Most students have a vague sense that the whole grading process is pretty subjective.

Many believe there is a solution: USE AS MANY DIFFERENT “OBJECTIVE” METHODS AS POSSIBLE TO ASSESS STUDENTS ON CLINICAL ROTATIONS!

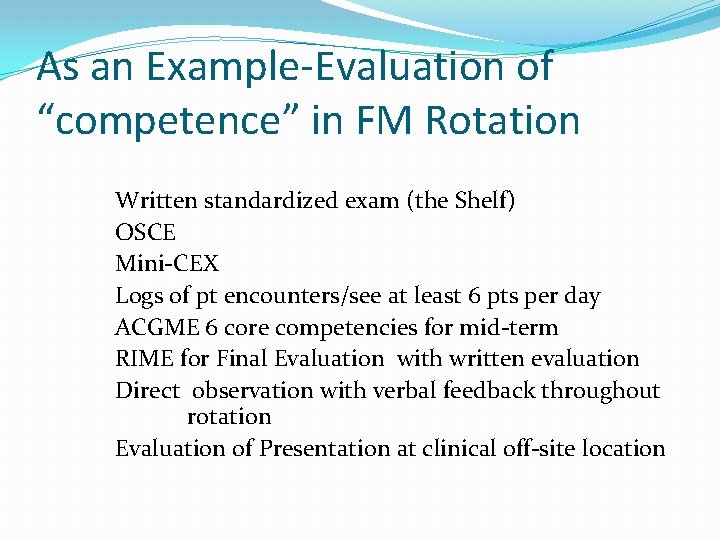

As an Example: Evaluation of “competence” in FM Rotation

- Written standardized exam (the Shelf)
- OSCE
- Mini-CEX
- Logs of patient encounters (see at least 6 patients per day)
- ACGME 6 core competencies for mid-term
- RIME for Final Evaluation with written evaluation
- Direct observation with verbal feedback throughout rotation
- Evaluation of Presentation at clinical off-site location

Standardized exams: “SHELF”

- Value of these exams to assess knowledge base has been established over years of study
- Some students do not perform as well on these exams as they do in clinical situations
- These exams are limited in their ability to assess critical thinking skills
- These exams cannot accurately assess interpersonal skills

Direct observation with patient and written/verbal feedback

- Pro: personalized, and more reliable than would be expected (a study compared student results to residency directors) (6)
- Con: subjective; interrater variability and interpersonal relationships can play a role
- Checklists are being developed to assess history taking, physical exam performance, and information sharing (6, 7) in an effort to standardize encounters
- Some researchers feel that since this is happening less and less, it should be weighted less for grading (8)
- Verbal feedback is difficult and requires training to be done effectively (9)
- STRONG PUSH TO SYSTEMATIZE (10)

6 core competencies

- Developed by ACGME committees after the federal government said it wanted residencies to graduate better doctors.
- Now these competencies are applied to medical students

6 core competencies: pro/con

- Standardized form developed by committee to describe what a good doc should be able to do
- Thought to be a complete evaluation
- Widely used
- Not well understood outside of academic circles (and sometimes even in academic circles!) (11)

MINI-CEX

- Direct observation of a specific part of a clinical interaction, e.g., the knee exam

MINI-CEX: pro/con

- Many studies show it is effective, and students find it valuable (11)
- Studies show good interrater reliability (11)
- Requires TIME, energy, and cooperative patients
- Can cause severe performance anxiety in some students, affecting performance

OSCE exams

- Use of standardized patients is well documented for reliability (13, 14)
- Now used as part of USMLE exams

OSCE: pro/con

- Pros: standardized, objective (15)
- It may also be useful for evaluating the 6 core competencies, though this is still an open question (16)
- Cons: expensive, time-intensive

RIME

- Newer evaluation format:
  - Reporter
  - Interpreter
  - Manager
  - Educator

RIME: pro/con

- Pro: it is new, and is supposed to be easier and more reliable to use
- Con: it is new, and has similar handicaps to the 6 core competencies (wording, too easy to give everyone high marks)
- “It is not focused on technical skills…the RIME scheme is used for students to show they were able to demonstrate skills, be consistent and progressive, and to show improvements” (17)

Clinical Logs

- Used to track the number of patients seen and their diagnoses
- Require good administrative skills on the part of the student
- “If you talk to or touch the pt you should log the encounter” (18)
- Many schools have a list of diagnoses that students must see in order to graduate; for example, UNC: 96 (19)

Presentation at Community Site

In Conclusion…

- Clerkship students are being evaluated in many dimensions
- Is it any better?
- What are other programs doing?

How Do They Evaluate Us?

- “Involving students in a humanistic but rigorous approach to medicine and being a physician students wanted to emulate seem particularly important. These aspects appear potentially amenable to faculty development” (20)

THANKS!

References

1. Study: Clerkship grading varies “Dramatically” at U.S. Medical Schools. US News and World Report, July 12, 2012.
2. Variation and imprecision of clerkship grading in U.S. medical schools. Acad Med, 2012 Aug; 87(8): 1070-6.
3. Why Failing Med Students Don’t Get Failing Grades. The New York Times, Feb 28, 2013.
4. Medical school policies regarding struggling students during the internal medicine clerkships: results of a national survey. Acad Med, Sept 2008; 83(9): 876-881.

Continued

5. “Making the Grade”: Noncognitive predictors of medical students’ clinical clerkship grades. J Natl Med Assoc, 2007 Oct; 99(10): 1138-1150.
6. Direct Observation by Faculty. Practical Guide to the Evaluation of Clinical Competence, Chap 9, pp 119-128.
7. Direct Observation in Medical Education: A Review of the Literature and Evidence for Validity. Mount Sinai School of Medicine, 29 July 2009; 76(4): 365-371.

More references

8. Direct Observation of Students during Clerkship Rotations: A Multiyear Descriptive Study. Acad Med, Mar 2004; 79(2): 276-280.
9. Assessment in Medical Education. Clinical Collections, NEJM, Jan 25, 2007, pp 386-397.
10. Systematic Direct Observation of Clinical Skills in the Clinical Year. Mededportal.org, Feb 13, 2014, pub ID 9712.
11. Competency and the six core competencies. JSLS, 2002 Apr-Jun; 6(2): 95-97.

More references

12. Examiner Differences in the Mini-CEX. Advances in Medical Education, pp 170-172.
13. An Overview of the Uses of Standardized Patients for Teaching and Evaluating Clinical Skills. Acad Med, June 1993.
14. Use of an Objective Structured Clinical Examination in Evaluating Student Performance. Family Medicine, May 1998, pp 338-344.
15. OSCE: The Assessment of Choice. Oman Med J, 2011 Jul; 26(4): 219-222.

And more…

16. Is OSCE valid for evaluation of the six ACGME general competencies? Science Direct; 74 (2001): 193-194.
17. (RIME) for the Evaluation of First Professional Degree Students. Mededportal, ID 834, Sept 12, 2013.
18. Clinical Log: UNC.EDU/medclerk.
19. Clinical Log: UNC.EDU/medclerk.
20. Third-year medical students’ perceptions of effective teaching behaviors in a multidisciplinary ambulatory clerkship. Acad Med, Aug 2008; 78(8): 815-819.