Measurement: Reliability and Validity

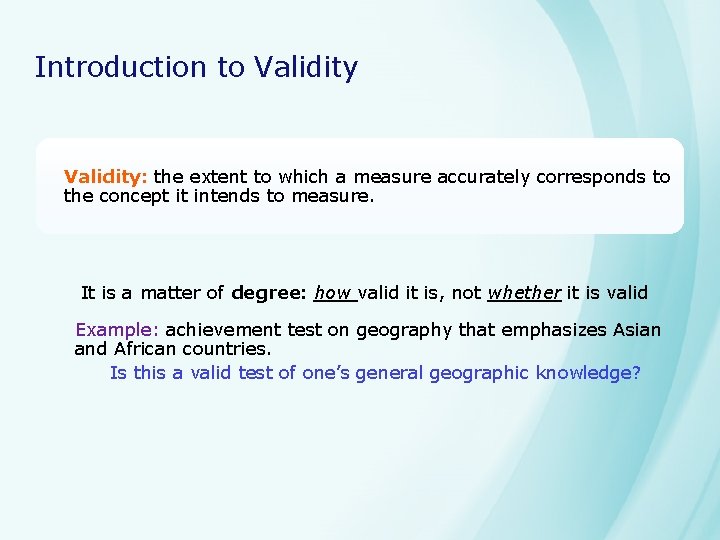

Introduction to Validity

Validity: the extent to which a measure accurately corresponds to the concept it intends to measure.

It is a matter of degree: how valid a measure is, not whether it is valid.

Example: an achievement test on geography that emphasizes Asian and African countries. Is this a valid test of one's general geographic knowledge?

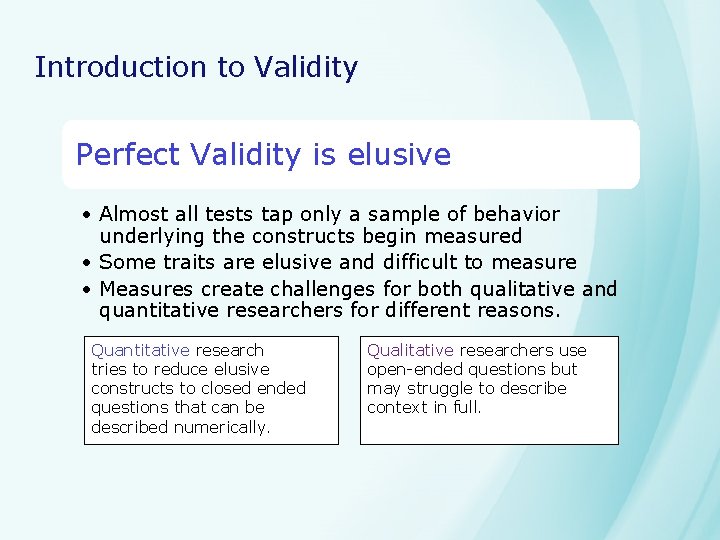

Introduction to Validity

Perfect validity is elusive.

• Almost all tests tap only a sample of the behavior underlying the constructs being measured.
• Some traits are elusive and difficult to measure.
• Measurement creates challenges for both qualitative and quantitative researchers, for different reasons. Quantitative researchers try to reduce elusive constructs to closed-ended questions whose answers can be described numerically. Qualitative researchers use open-ended questions but may struggle to describe context in full.

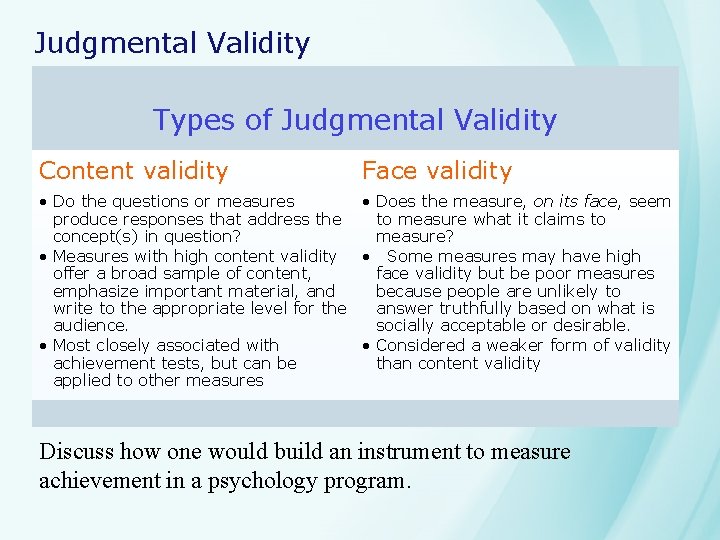

Judgmental Validity

Types of Judgmental Validity

Content validity
• Do the questions or measures produce responses that address the concept(s) in question?
• Measures with high content validity offer a broad sample of content, emphasize important material, and are written at the appropriate level for the audience.
• Most closely associated with achievement tests, but can be applied to other measures.

Face validity
• Does the measure, on its face, seem to measure what it claims to measure?
• Some measures may have high face validity but be poor measures, because people are unlikely to answer truthfully when their answers are shaped by what is socially acceptable or desirable.
• Considered a weaker form of validity than content validity.

Discuss how one would build an instrument to measure achievement in a psychology program.

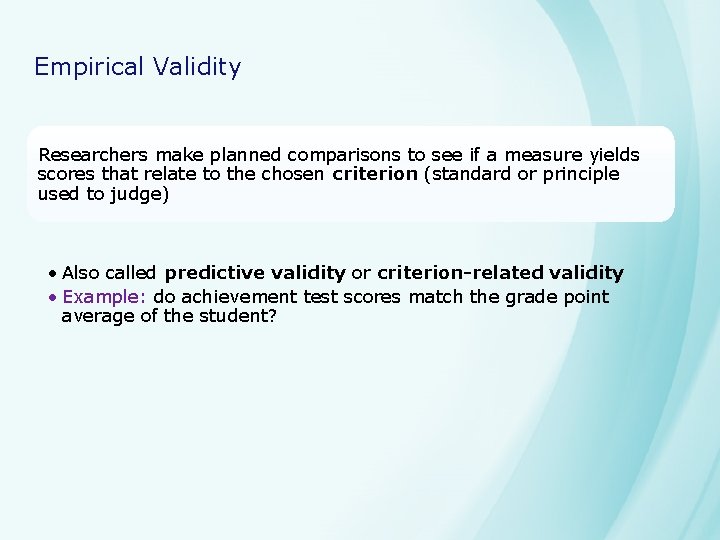

Empirical Validity

Researchers make planned comparisons to see whether a measure yields scores that relate to the chosen criterion (the standard or principle used to judge).

• Also called predictive validity or criterion-related validity.
• Example: do achievement test scores match the student's grade point average?

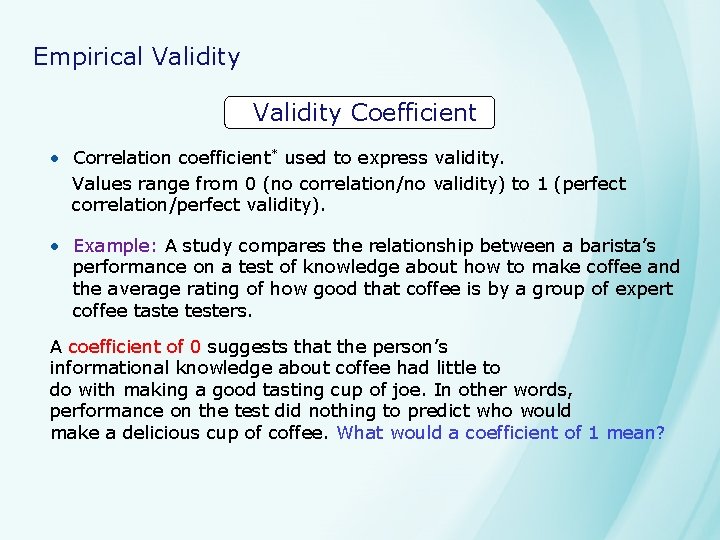

Empirical Validity

Validity Coefficient
• A correlation coefficient* is used to express validity. Values range from 0 (no correlation, no validity) to 1 (perfect correlation, perfect validity).
• Example: a study compares a barista's performance on a test of knowledge about how to make coffee with the average rating that a group of expert coffee tasters gives that barista's coffee. A coefficient of 0 suggests that informational knowledge about coffee had nothing to do with making a good-tasting cup of joe. In other words, performance on the test did nothing to predict who would make a delicious cup of coffee. What would a coefficient of 1 mean?
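
The barista example can be sketched in a few lines of Python. This is only an illustration: the scores and ratings below are invented, and `pearson_r` is a hypothetical helper that computes the ordinary Pearson correlation, which is one common choice for the coefficient described on the slide.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products and sums of squared deviations from each mean.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical data (not from the slides): six baristas' knowledge-test
# scores and the mean rating expert tasters gave each barista's coffee.
test_scores = [55, 62, 70, 78, 85, 91]
taste_ratings = [2.9, 3.4, 3.1, 4.0, 4.2, 4.6]

validity_coefficient = pearson_r(test_scores, taste_ratings)
print(f"validity coefficient r = {validity_coefficient:.2f}")
```

A value near 1 would mean the knowledge test ranks baristas almost exactly as the tasters do; a value near 0 would mean the test tells us nothing about coffee quality.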

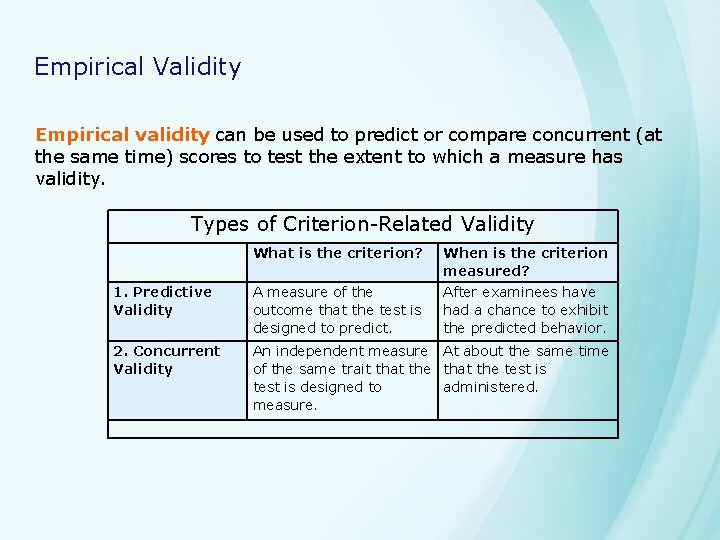

Empirical Validity

Empirical validity can be assessed by predicting later scores or by comparing concurrent (same-time) scores, to test the extent to which a measure is valid.

Types of Criterion-Related Validity

| Type | What is the criterion? | When is the criterion measured? |
| --- | --- | --- |
| 1. Predictive validity | A measure of the outcome that the test is designed to predict. | After examinees have had a chance to exhibit the predicted behavior. |
| 2. Concurrent validity | An independent measure of the same trait that the test is designed to measure. | At about the same time that the test is administered. |

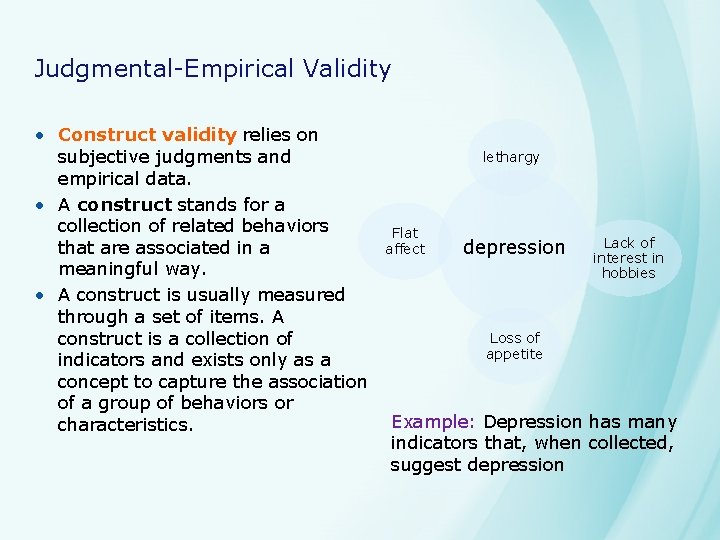

Judgmental-Empirical Validity

• Construct validity relies on subjective judgments and empirical data.
• A construct stands for a collection of related behaviors that are associated in a meaningful way.
• A construct is usually measured through a set of items.

A construct is a collection of indicators and exists only as a concept to capture the association of a group of behaviors or characteristics.

Example: depression has many indicators (lethargy, flat affect, lack of interest in hobbies, loss of appetite) that, taken together, suggest depression.

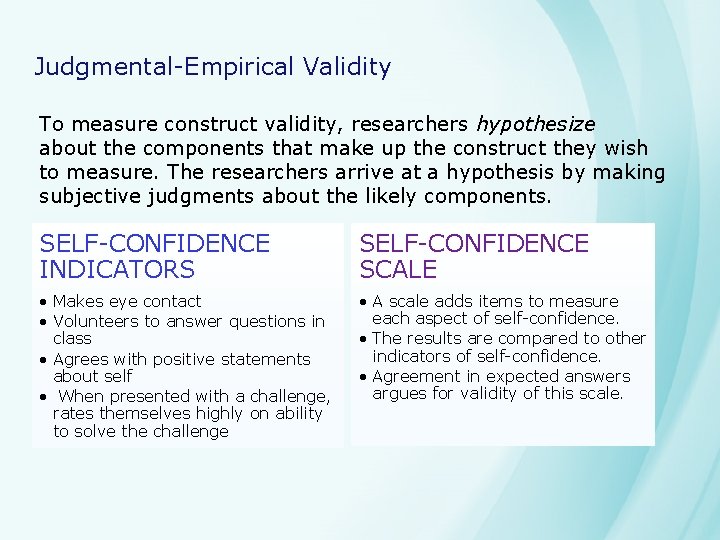

Judgmental-Empirical Validity

To measure construct validity, researchers hypothesize about the components that make up the construct they wish to measure. The researchers arrive at a hypothesis by making subjective judgments about the likely components.

SELF-CONFIDENCE INDICATORS
• Makes eye contact
• Volunteers to answer questions in class
• Agrees with positive statements about self
• When presented with a challenge, rates themselves highly on ability to solve the challenge

SELF-CONFIDENCE SCALE
• A scale adds items to measure each aspect of self-confidence.
• The results are compared to other indicators of self-confidence.
• Agreement in expected answers argues for the validity of this scale.

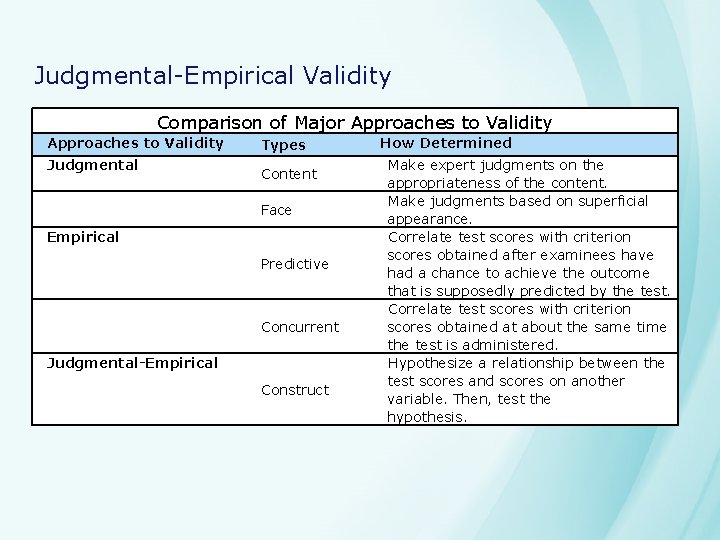

Judgmental-Empirical Validity

Comparison of Major Approaches to Validity

| Approach | Type | How determined |
| --- | --- | --- |
| Judgmental | Content | Make expert judgments on the appropriateness of the content. |
| Judgmental | Face | Make judgments based on superficial appearance. |
| Empirical | Predictive | Correlate test scores with criterion scores obtained after examinees have had a chance to achieve the outcome that is supposedly predicted by the test. |
| Empirical | Concurrent | Correlate test scores with criterion scores obtained at about the same time the test is administered. |
| Judgmental-Empirical | Construct | Hypothesize a relationship between the test scores and scores on another variable. Then, test the hypothesis. |

Reliability and its Relationship to Validity

• Reliability is consistency in results.
• Example: a person hits the bullseye three times in a row.
• Someone might get lucky and hit the bullseye once, but consistent hits are more likely to reveal skill.

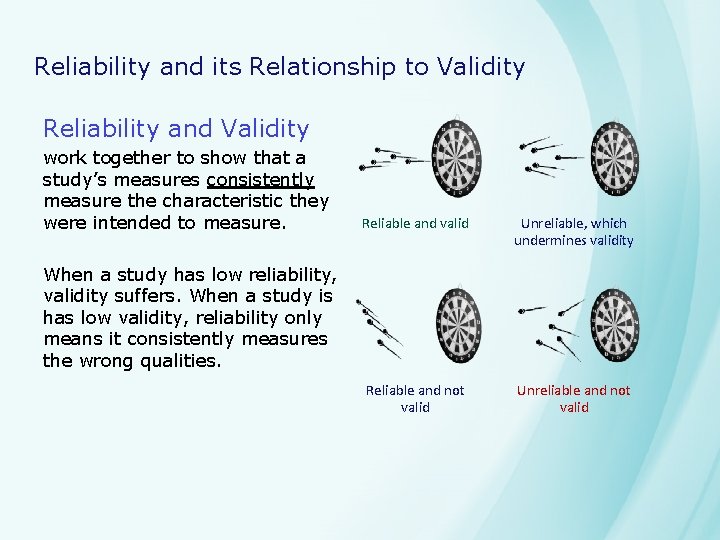

Reliability and its Relationship to Validity

Reliability and validity work together to show that a study's measures consistently measure the characteristic they were intended to measure.

Figure panels:
• Reliable and valid
• Unreliable, which undermines validity
• Reliable and not valid
• Unreliable and not valid

When a study has low reliability, validity suffers. When a study has low validity, reliability only means that it consistently measures the wrong qualities.

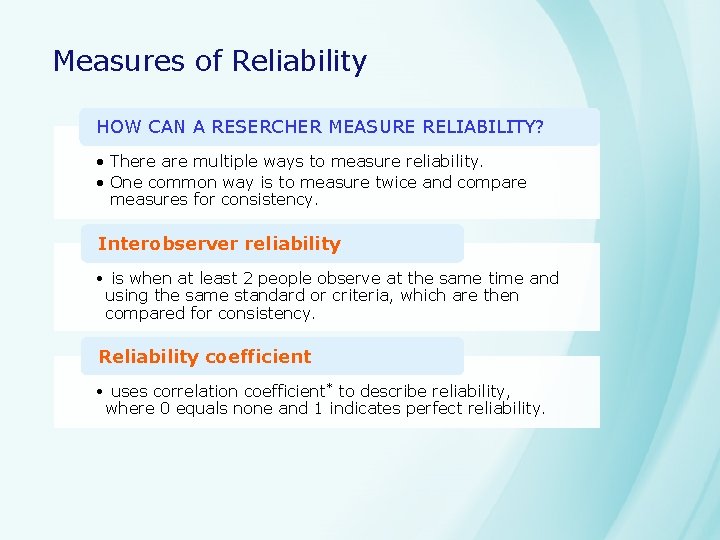

Measures of Reliability

HOW CAN A RESEARCHER MEASURE RELIABILITY?
• There are multiple ways to measure reliability.
• One common way is to measure twice and compare the measures for consistency.

Interobserver reliability
• At least two people observe at the same time, using the same standard or criteria, and their observations are then compared for consistency.

Reliability coefficient
• Uses a correlation coefficient* to describe reliability, where 0 indicates no reliability and 1 indicates perfect reliability.

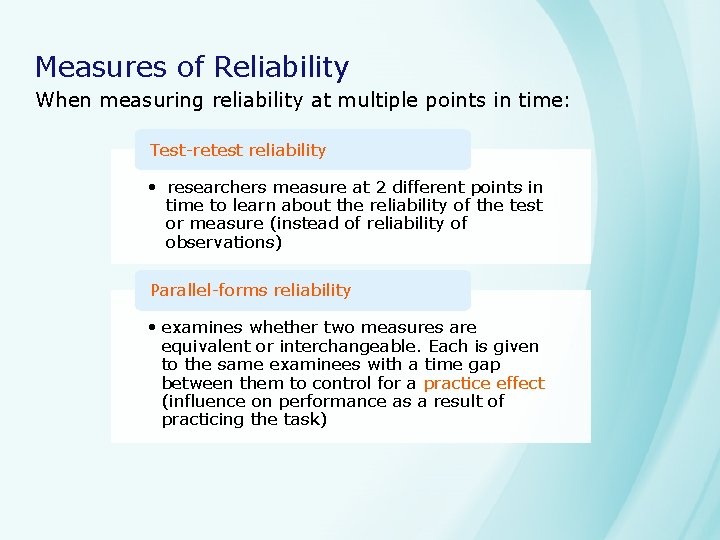

Measures of Reliability

When measuring reliability at multiple points in time:

Test-retest reliability
• Researchers administer the same measure at two different points in time to learn about the reliability of the test or measure itself (rather than the reliability of the observations).

Parallel-forms reliability
• Examines whether two forms of a measure are equivalent or interchangeable. Each form is given to the same examinees, with a time gap between them to control for a practice effect (an influence on performance that results from practicing the task).

Measures of Reliability

How high should a reliability coefficient be?
• Most published tests have reliability coefficients of .80 or higher.
• Researchers should strive to select or build measures with coefficients of .80 or higher when interpreting individual scores.

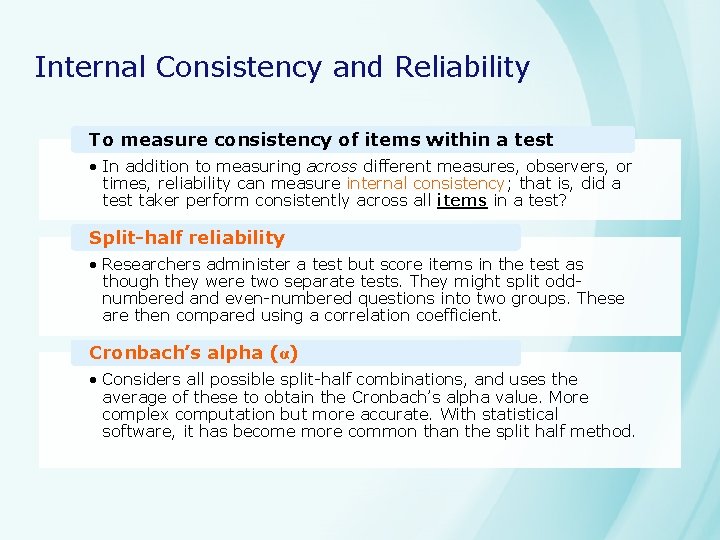

Internal Consistency and Reliability

To measure the consistency of items within a test
• In addition to measuring across different measures, observers, or times, reliability can measure internal consistency; that is, did a test taker perform consistently across all items in a test?

Split-half reliability
• Researchers administer a test but score its items as though they were two separate tests. They might split odd-numbered and even-numbered questions into two groups. The two sets of scores are then compared using a correlation coefficient.

Cronbach's alpha (α)
• Considers all possible split-half combinations and uses the average of these to obtain the Cronbach's alpha value. The computation is more complex but more accurate. With statistical software, it has become more common than the split-half method.
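
Both internal-consistency measures can be sketched in Python. Everything below is illustrative: the response matrix is invented, the function names are hypothetical, and alpha is computed with the standard variance-based formula rather than by enumerating every split. The Spearman-Brown step-up, which adjusts a half-test correlation to full test length, is a common companion to split-half reliability but is an addition not mentioned on the slide.

```python
import math
from statistics import variance

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ss_x = sum((a - mx) ** 2 for a in x)
    ss_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)

def split_half(scores):
    """Correlate each person's odd-item total with their even-item total."""
    odd = [sum(row[0::2]) for row in scores]   # items 1, 3, 5, ...
    even = [sum(row[1::2]) for row in scores]  # items 2, 4, 6, ...
    return pearson_r(odd, even)

def spearman_brown(r_half):
    """Step a half-test correlation up to full test length (assumed extra step)."""
    return 2 * r_half / (1 + r_half)

def cronbach_alpha(scores):
    """alpha = k/(k-1) * (1 - sum of item variances / variance of total scores)."""
    k = len(scores[0])
    item_vars = [variance([row[i] for row in scores]) for i in range(k)]
    total_var = variance([sum(row) for row in scores])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical responses: 5 test takers (rows) x 6 items (columns), scored 1-5.
scores = [
    [4, 5, 4, 4, 5, 4],
    [2, 2, 3, 2, 2, 3],
    [5, 4, 5, 5, 4, 5],
    [3, 3, 2, 3, 3, 3],
    [1, 2, 1, 2, 1, 2],
]

split_half_r = split_half(scores)
alpha = cronbach_alpha(scores)
print(f"split-half r = {split_half_r:.2f}")
print(f"Spearman-Brown corrected = {spearman_brown(split_half_r):.2f}")
print(f"Cronbach's alpha = {alpha:.2f}")
```

With real data, either coefficient would be compared against the .80 guideline for interpreting individual scores.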

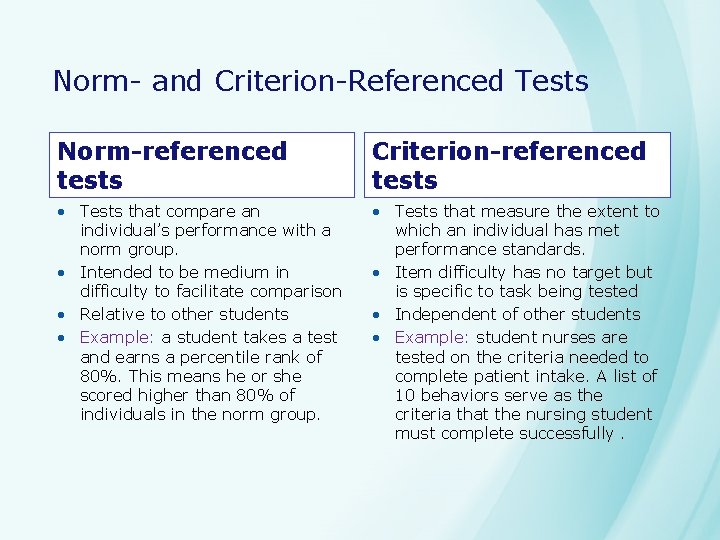

Norm- and Criterion-Referenced Tests

Norm-referenced tests
• Tests that compare an individual's performance with that of a norm group.
• Intended to be medium in difficulty to facilitate comparison.
• Scores are relative to other students.
• Example: a student takes a test and earns a percentile rank of 80. This means he or she scored higher than 80% of individuals in the norm group.

Criterion-referenced tests
• Tests that measure the extent to which an individual has met performance standards.
• Item difficulty has no target but is specific to the task being tested.
• Scores are independent of other students.
• Example: student nurses are tested on the criteria needed to complete patient intake. A list of 10 behaviors serves as the criteria that the nursing student must complete successfully.
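
The difference between the two kinds of scoring can be sketched as follows. The norm-group scores, the student's score, and the checklist entries are all invented for illustration, and `percentile_rank` uses the "percentage scoring below" definition that matches the slide's example; other definitions of percentile rank exist.

```python
def percentile_rank(score, norm_group):
    """Percentage of the norm group that scored below `score`."""
    below = sum(1 for s in norm_group if s < score)
    return 100 * below / len(norm_group)

def meets_criteria(checklist):
    """Criterion-referenced: pass only if every required behavior was completed."""
    return all(checklist.values())

# Norm-referenced: the result depends on how other people scored.
norm_group = [52, 58, 61, 64, 67, 70, 73, 77, 82, 90]  # hypothetical scores
student_rank = percentile_rank(80, norm_group)
print(f"percentile rank: {student_rank:.0f}")

# Criterion-referenced: the result depends only on the fixed standard.
# Hypothetical checklist of 10 patient-intake behaviors; one was missed.
intake_checklist = {f"behavior_{i}": True for i in range(1, 11)}
intake_checklist["behavior_7"] = False
passed = meets_criteria(intake_checklist)
print(f"met all intake criteria: {passed}")
```

Changing the norm group changes the first result but never the second, which is the sense in which criterion-referenced scores are independent of other students.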

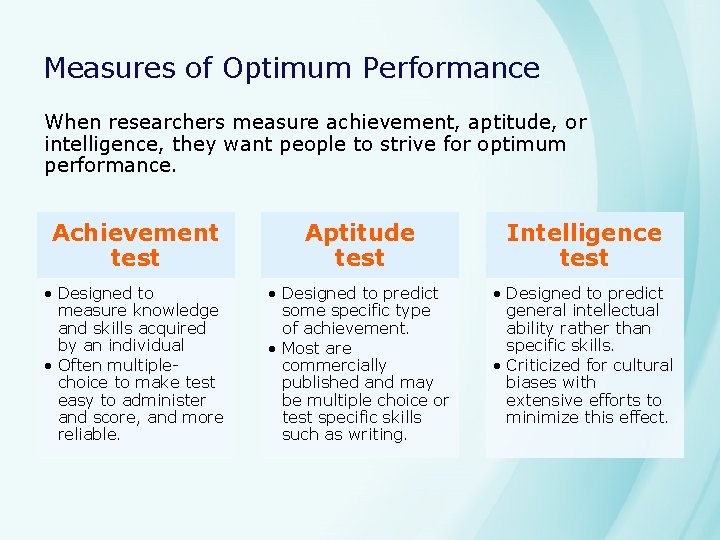

Measures of Optimum Performance

When researchers measure achievement, aptitude, or intelligence, they want people to strive for optimum performance.

Achievement test
• Designed to measure knowledge and skills acquired by an individual.
• Often multiple-choice, which makes the test easy to administer and score, and more reliable.

Aptitude test
• Designed to predict some specific type of achievement.
• Most are commercially published and may be multiple-choice or may test specific skills such as writing.

Intelligence test
• Designed to predict general intellectual ability rather than specific skills.
• Criticized for cultural biases; extensive efforts have been made to minimize this effect.

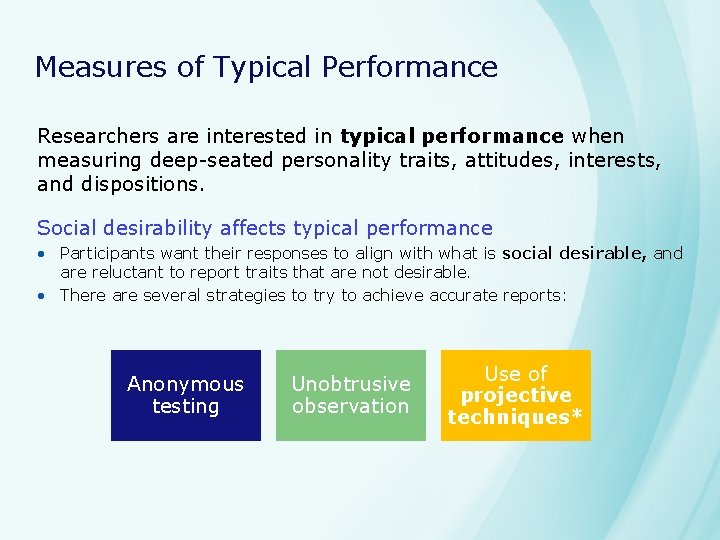

Measures of Typical Performance

Researchers are interested in typical performance when measuring deep-seated personality traits, attitudes, interests, and dispositions.

Social desirability affects typical performance
• Participants want their responses to align with what is socially desirable and are reluctant to report traits that are not desirable.
• There are several strategies for obtaining accurate reports:
  • Anonymous testing
  • Unobtrusive observation
  • Use of projective techniques*

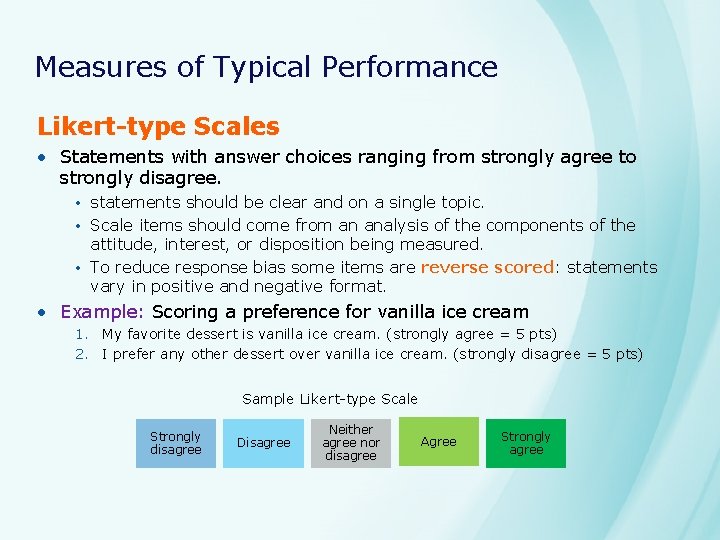

Measures of Typical Performance

Likert-type Scales
• Statements with answer choices ranging from strongly agree to strongly disagree.
• Statements should be clear and on a single topic.
• Scale items should come from an analysis of the components of the attitude, interest, or disposition being measured.
• To reduce response bias, some items are reverse scored: statements alternate between positive and negative wording.
• Example: scoring a preference for vanilla ice cream
  1. My favorite dessert is vanilla ice cream. (strongly agree = 5 pts)
  2. I prefer any other dessert over vanilla ice cream. (strongly disagree = 5 pts)

Sample Likert-type Scale: Strongly disagree | Disagree | Neither agree nor disagree | Agree | Strongly agree
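
Reverse scoring can be sketched in Python using the two vanilla ice cream items. The 1-to-5 point mapping follows the slide's example; the function name, the `reverse` flag, and the sample respondent's answers are assumptions made for illustration.

```python
# Points for a positively worded item on the five-point scale.
SCALE = {
    "strongly disagree": 1,
    "disagree": 2,
    "neither agree nor disagree": 3,
    "agree": 4,
    "strongly agree": 5,
}

def score_item(response, reverse=False):
    """Return the points for one response; reverse-scored items flip 1..5 to 5..1."""
    points = SCALE[response.lower()]
    return 6 - points if reverse else points

# (statement, hypothetical respondent's answer, is the item reverse scored?)
responses = [
    ("My favorite dessert is vanilla ice cream.", "agree", False),
    ("I prefer any other dessert over vanilla ice cream.", "strongly disagree", True),
]

total = sum(score_item(answer, rev) for _, answer, rev in responses)
print(f"vanilla preference score: {total} out of {5 * len(responses)}")
```

After reverse scoring, a high total always means a strong preference for vanilla, regardless of how each statement was worded.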

Measurement in Qualitative Research

While validity and reliability relate directly to statistical techniques, qualitative researchers still consider the dependability and trustworthiness of their data.

Triangulation uses multiple sources or methods to obtain data. When data collected in different ways point to the same conclusion, this strengthens the researcher's argument and mitigates the weaknesses of any one method.

Measurement in Qualitative Research

Data triangulation uses multiple types of data.
• May use the same method to collect data but ask different participants.
• Example: a researcher studying religious participation might interview individual members of a church and also interview the minister or others in the church organization.

Methods triangulation uses multiple methods to obtain data.
• Uses at least two methods but may not collect from different groups.
• Example: a researcher studying religious participation might observe at a particular church and also interview individual members of the church.

When qualitative and quantitative methods are combined, the approach is called mixed methods.

Measurement in Qualitative Research

If a research team is involved, another possible form of triangulation is researcher triangulation. Each member of the research team participates in collecting and analyzing the data, to reduce the chance that the results reflect one researcher's idiosyncratic view. Forming a team of researchers with diverse backgrounds or from multiple disciplines can be helpful.

Measurement in Qualitative Research

Additional methods for ensuring the dependability and trustworthiness of qualitative data and analysis:
• Check the accuracy of the transcription.
• Use interobserver agreement.
• Use peer review or auditing of the research data collection process.
• Use a process called member checking, in which the study participants check specific aspects of the research results and analysis.

- Slides: 24