
MDPs and the RL Problem
CMSC 471 – Spring 2014
Class #25 – Thursday, May 1
Russell & Norvig Chapter 21.1-21.3
Thanks to Rich Sutton and Andy Barto for the use of their slides (modified with additional slides and in-class exercise)
R. S. Sutton and A. G. Barto: Reinforcement Learning: An Introduction

Learning Without a Model

- Last time, we saw how to learn a value function and/or a policy from a transition model
- What if we don't have a transition model?
- Idea #1:
  - Explore the environment for a long time
  - Record all transitions
  - Learn the transition model
  - Apply value iteration/policy iteration
  - Slow and requires a lot of exploration! No intermediate learning!
- Idea #2: Learn a value function (or policy) directly from interactions with the environment, while exploring
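The "record all transitions, learn the transition model" steps of Idea #1 can be sketched as a frequency count. This is only an illustration: the function name `estimate_transition_model`, the `(state, action, next_state)` log format, and the sample log are assumptions, not part of the slides.

```python
from collections import Counter, defaultdict


def estimate_transition_model(transitions):
    """Estimate P(s' | s, a) from recorded (s, a, s') triples by relative frequency."""
    counts = defaultdict(Counter)
    for s, a, s_next in transitions:
        counts[(s, a)][s_next] += 1

    model = {}
    for state_action, next_counts in counts.items():
        total = sum(next_counts.values())
        model[state_action] = {
            s_next: n / total for s_next, n in next_counts.items()
        }
    return model


# Hypothetical exploration log: (state, action, next_state).
log = [
    ("A", "right", "B"),
    ("A", "right", "B"),
    ("A", "right", "A"),
    ("B", "right", "G"),
]
model = estimate_transition_model(log)
print(model[("A", "right")])
```

The estimate only becomes usable for value iteration or policy iteration once every state-action pair has been visited often enough, which is the cost the slide points out.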

Simple Monte Carlo (backup diagram)

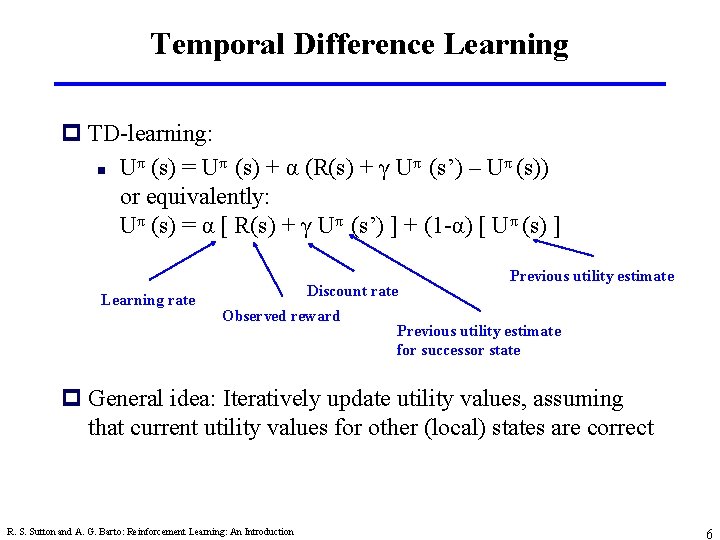

TD Prediction

Policy evaluation (the prediction problem): for a given policy π, compute the state-value function.

Recall:

- Simple Monte Carlo: the target is the actual return after time t
- Simplest TD method: the target is an estimate of the return
- γ: a discount factor in [0, 1] (relative value of future rewards)
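The two targets can be written out as update rules. The forms below are the standard ones from Sutton and Barto, given here as a sketch: the symbols $G_t$ (the return after time $t$), $\alpha$ (a step size), and $V$ (the current value estimate) are assumptions, since the slide's own equations did not survive.

```latex
% Monte Carlo: move V(S_t) toward the actual return G_t
V(S_t) \leftarrow V(S_t) + \alpha \left[ G_t - V(S_t) \right]

% Simplest TD method: move V(S_t) toward a one-step estimate of the return
V(S_t) \leftarrow V(S_t) + \alpha \left[ R_{t+1} + \gamma V(S_{t+1}) - V(S_t) \right]
```

The only difference is the target: Monte Carlo must wait for the episode to end to know $G_t$, while the TD target is available after a single step.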

Simplest TD Method (backup diagram)

Temporal Difference Learning

- TD-learning:

  $$U^\pi(s) \leftarrow U^\pi(s) + \alpha \left( R(s) + \gamma U^\pi(s') - U^\pi(s) \right)$$

  or equivalently:

  $$U^\pi(s) \leftarrow \alpha \left[ R(s) + \gamma U^\pi(s') \right] + (1 - \alpha) \, U^\pi(s)$$

  where α is the learning rate, γ is the discount rate, R(s) is the observed reward, and $U^\pi(s')$ is the previous utility estimate for the successor state.
- General idea: Iteratively update utility values, assuming that current utility values for other (local) states are correct
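A minimal sketch of one TD update, assuming utilities are kept in a dictionary and unseen states default to zero. The name `td_update`, the default parameter values, and the sample numbers are illustrative assumptions.

```python
def td_update(U, s, reward, s_next, alpha=0.1, gamma=0.9):
    """Apply one TD update to U[s] in place and return the new estimate."""
    old = U.get(s, 0.0)
    # Target: observed reward plus discounted estimate for the successor state.
    td_target = reward + gamma * U.get(s_next, 0.0)
    U[s] = old + alpha * (td_target - old)
    return U[s]


# Hypothetical utilities; one observed step from A to B with reward -0.1.
U = {"A": 0.0, "B": 1.0}
td_update(U, "A", reward=-0.1, s_next="B", alpha=0.5, gamma=0.9)
print(U["A"])  # roughly 0.4
```

The update only touches the state just left, which is what lets learning proceed during exploration rather than after it.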

Exploration vs. Exploitation

- Problem with naive reinforcement learning:
  - What action to take?
  - Best apparent action, based on learning to date
    - Greedy strategy
    - Often prematurely converges to a suboptimal policy!
  - Random action
    - Will cover entire state space
    - Very expensive and slow to learn!
    - When to stop being random?
  - Balance exploration (try random actions) with exploitation (use best action so far)
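One common way to strike this balance is an ε-greedy rule. The slides do not name it, so treat the sketch below as a swapped-in illustration; `epsilon_greedy`, its parameters, and the sample values are assumptions.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick a random action with probability epsilon, else a best-valued one.

    q_values maps each available action to its current value estimate.
    """
    actions = list(q_values)
    if rng.random() < epsilon:
        return rng.choice(actions)  # explore
    best = max(q_values.values())
    # Exploit, breaking ties between equally good actions at random.
    return rng.choice([a for a in actions if q_values[a] == best])


# Hypothetical estimates for one state.
estimates = {"left": -1.0, "right": 0.5}
print(epsilon_greedy(estimates, epsilon=0.1))
```

Shrinking `epsilon` over time is one possible answer to "when to stop being random?", though the schedule is a design choice rather than something the slides specify.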

Q-Learning

Q-value: the value of taking action A in state S (as opposed to V, the value of state S)

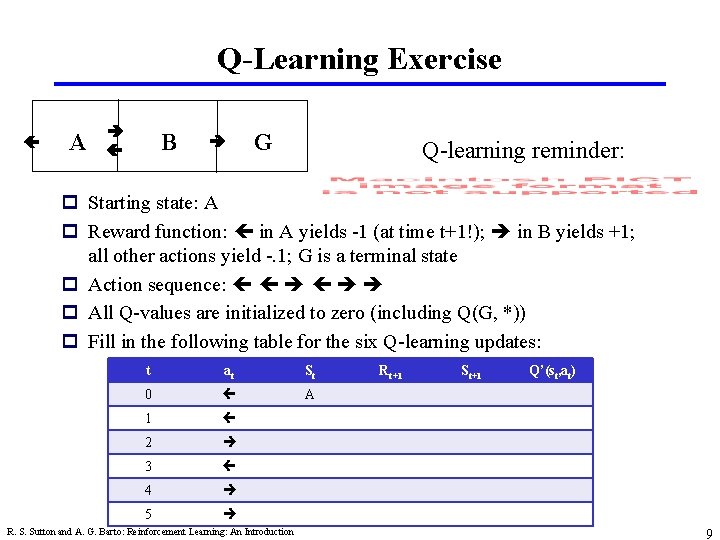
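To make the Q versus V distinction concrete, the sketch below stores Q-values in a table keyed by (state, action) and reads off a greedy action and the corresponding state value as the maximum over actions. The table contents, the action names, and the helper names are all hypothetical.

```python
ACTIONS = ("left", "right")  # assumed action set, for illustration only

# Hypothetical Q-table: one entry per (state, action) pair.
Q = {
    ("A", "left"): -1.0,
    ("A", "right"): -0.1,
    ("B", "left"): -0.2,
    ("B", "right"): 0.9,
}


def greedy_action(Q, state):
    """Return the action with the highest Q-value in the given state."""
    return max(ACTIONS, key=lambda a: Q.get((state, a), 0.0))


def state_value(Q, state):
    """Value of a state under the greedy policy: the best Q-value available."""
    return max(Q.get((state, a), 0.0) for a in ACTIONS)


print(greedy_action(Q, "A"), state_value(Q, "A"))
```

Because the action is part of the key, a greedy policy can be read directly from the table without a transition model.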

Q-Learning Exercise

States: A, B, G

Q-learning reminder:

$$Q'(s_t, a_t) = Q(s_t, a_t) + \alpha \left[ R_{t+1} + \gamma \max_{a} Q(s_{t+1}, a) - Q(s_t, a_t) \right]$$

- Starting state: A
- Reward function: in A yields -1 (at time t+1!); in B yields +1; all other actions yield -0.1; G is a terminal state
- Action sequence:
- All Q-values are initialized to zero (including Q(G, *))
- Fill in the following table for the six Q-learning updates:

| t | a_t | S_t | R_{t+1} | S_{t+1} | Q'(s_t, a_t) |
|---|-----|-----|---------|---------|--------------|
| 0 |     | A   |         |         |              |
| 1 |     |     |         |         |              |
| 2 |     |     |         |         |              |
| 3 |     |     |         |         |              |
| 4 |     |     |         |         |              |
| 5 |     |     |         |         |              |

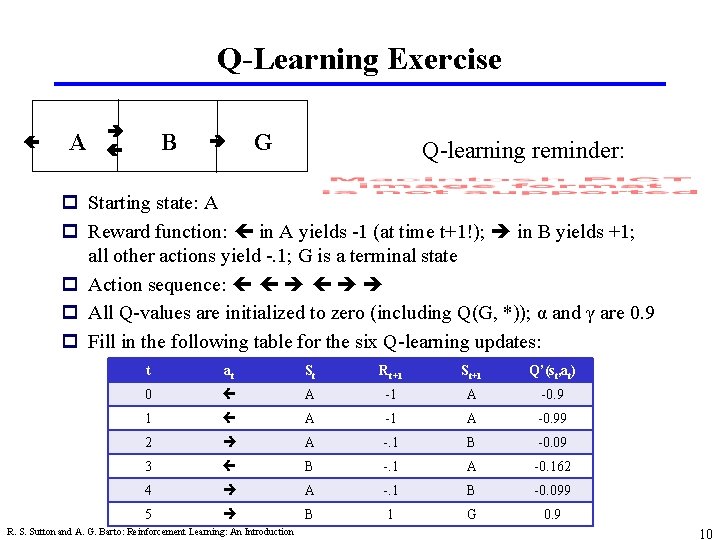

Q-Learning Exercise

States: A, B, G

Q-learning reminder:

$$Q'(s_t, a_t) = Q(s_t, a_t) + \alpha \left[ R_{t+1} + \gamma \max_{a} Q(s_{t+1}, a) - Q(s_t, a_t) \right]$$

- Starting state: A
- Reward function: in A yields -1 (at time t+1!); in B yields +1; all other actions yield -0.1; G is a terminal state
- Action sequence:
- All Q-values are initialized to zero (including Q(G, *)); α and γ are 0.9
- Fill in the following table for the six Q-learning updates:

| t | a_t | S_t | R_{t+1} | S_{t+1} | Q'(s_t, a_t) |
|---|-----|-----|---------|---------|--------------|
| 0 |     | A   | -1      | A       | -0.9         |
| 1 |     | A   | -1      | A       | -0.99        |
| 2 |     | A   | -0.1    | B       | -0.09        |
| 3 |     | B   | -0.1    | A       | -0.162       |
| 4 |     | A   | -0.1    | B       | -0.099       |
| 5 |     | B   | 1       | G       | 0.9          |
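The table values can be checked by replaying the episode with the standard Q-learning update. The action symbols did not survive in the text above, so the sketch assumes two actions named `left` and `right` and the sequence left, left, right, left, right, right, inferred from the rewards and successor states in the table (including two opening updates in A that each earn -1 and stay in A). Those names and that sequence are assumptions.

```python
ALPHA = 0.9
GAMMA = 0.9
ACTIONS = ("left", "right")  # assumed names for the two actions


def q_update(Q, s, a, r, s_next):
    """One Q-learning update; unseen entries (and terminal G) default to 0."""
    best_next = max(Q.get((s_next, b), 0.0) for b in ACTIONS)
    old = Q.get((s, a), 0.0)
    Q[(s, a)] = old + ALPHA * (r + GAMMA * best_next - old)
    return Q[(s, a)]


# (S_t, a_t, R_{t+1}, S_{t+1}) for t = 0..5, inferred from the table.
episode = [
    ("A", "left", -1.0, "A"),
    ("A", "left", -1.0, "A"),
    ("A", "right", -0.1, "B"),
    ("B", "left", -0.1, "A"),
    ("A", "right", -0.1, "B"),
    ("B", "right", 1.0, "G"),
]

Q = {}
history = [q_update(Q, *step) for step in episode]
for t, value in enumerate(history):
    print(t, round(value, 4))
```

Under these assumptions the six updates come out to about -0.9, -0.99, -0.09, -0.1629, -0.099, and 0.9. The fourth value is -0.1629 before rounding, which the table appears to show truncated as -0.162.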
