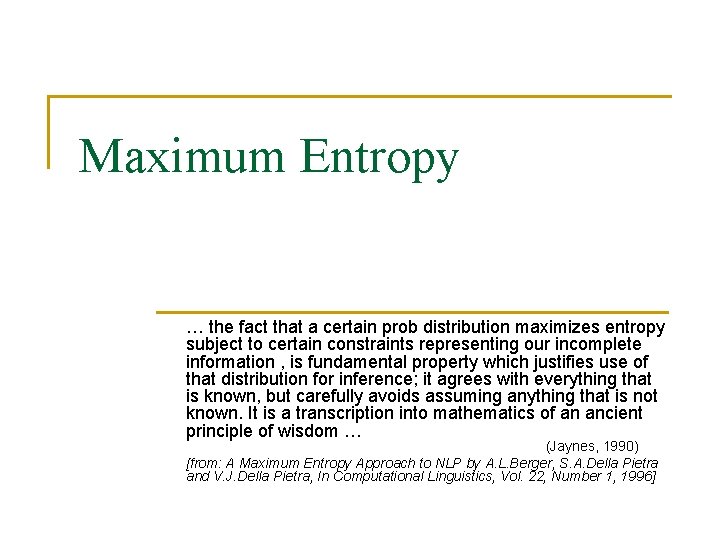

Maximum Entropy: “… the fact that a certain probability distribution maximizes entropy subject to certain constraints representing our incomplete information, is the fundamental property which justifies use of that distribution for inference; it agrees with everything that is known, but carefully avoids assuming anything that is not known. It is a transcription into mathematics of an ancient principle of wisdom …” (Jaynes, 1990) [from: A Maximum Entropy Approach to Natural Language Processing by A. L. Berger, S. A. Della Pietra and V. J. Della Pietra, Computational Linguistics, Vol. 22, No. 1, 1996]

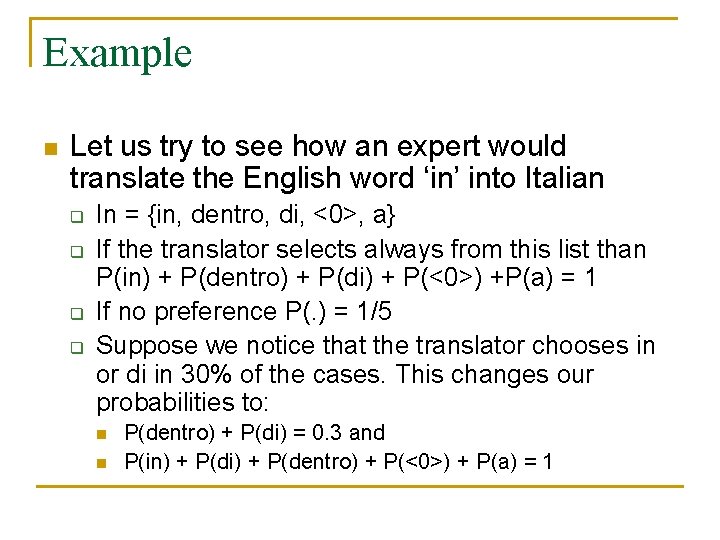

Example

- Let us try to see how an expert would translate the English word ‘in’ into Italian:
  - In = {in, dentro, di, <0>, a}
- If the translator always selects from this list, then P(in) + P(dentro) + P(di) + P(<0>) + P(a) = 1.
- If there is no preference, P(·) = 1/5.
- Suppose we notice that the translator chooses dentro or di in 30% of the cases. This changes our constraints to:
  - P(dentro) + P(di) = 0.3
  - P(in) + P(di) + P(dentro) + P(<0>) + P(a) = 1

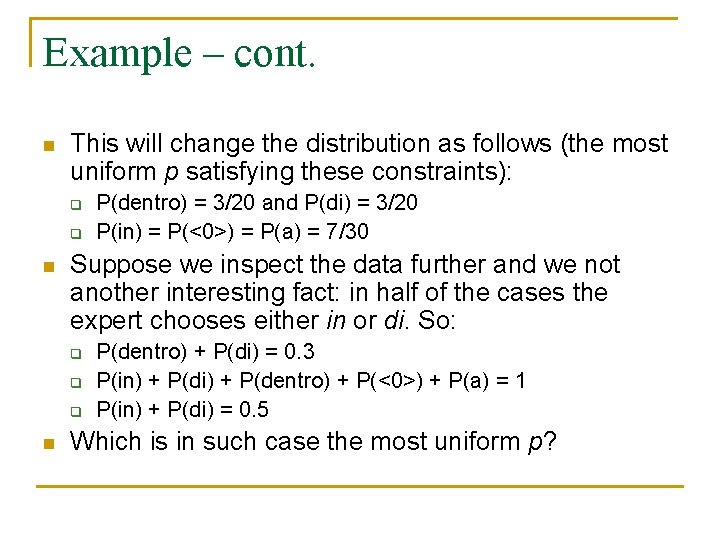

Example (cont.)

- This will change the distribution as follows (the most uniform p satisfying these constraints):
  - P(dentro) = 3/20 and P(di) = 3/20
  - P(in) = P(<0>) = P(a) = 7/30
- Suppose we inspect the data further and we note another interesting fact: in half of the cases the expert chooses either in or di. So:
  - P(dentro) + P(di) = 0.3
  - P(in) + P(di) + P(dentro) + P(<0>) + P(a) = 1
  - P(in) + P(di) = 0.5
- Which p is the most uniform in this case?
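The closing question can be explored numerically. What follows is a minimal sketch, not a method from the slides: it assumes natural-log entropy and writes every probability in terms of t = P(di), which the three constraints allow. The leftover mass is split evenly between `<0>` and `a`, since no constraint distinguishes them. Entropy is concave in t, so a ternary search finds its maximum. The function names are illustrative.

```python
import math

def distribution(t):
    """All five probabilities as a function of t = P(di)."""
    return {
        "in": 0.5 - t,           # from P(in) + P(di) = 0.5
        "dentro": 0.3 - t,       # from P(dentro) + P(di) = 0.3
        "di": t,
        "<0>": (0.2 + t) / 2,    # leftover mass 0.2 + t, split evenly
        "a": (0.2 + t) / 2,
    }

def entropy(p):
    """Shannon entropy in nats; zero-probability outcomes contribute nothing."""
    return -sum(q * math.log(q) for q in p.values() if q > 0)

def most_uniform(lo=0.0, hi=0.3, iters=200):
    """Ternary search for the t in [lo, hi] that maximizes entropy."""
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if entropy(distribution(m1)) < entropy(distribution(m2)):
            lo = m1
        else:
            hi = m2
    return distribution((lo + hi) / 2)

p = most_uniform()
for word, prob in p.items():
    print(f"P({word}) = {prob:.4f}")
```

Setting the derivative of the entropy to zero gives t² − 1.8t + 0.3 = 0, so P(di) = 0.9 − √0.51 ≈ 0.186. Unlike the previous step, the answer is no longer a simple fraction, which is why a general procedure is needed.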

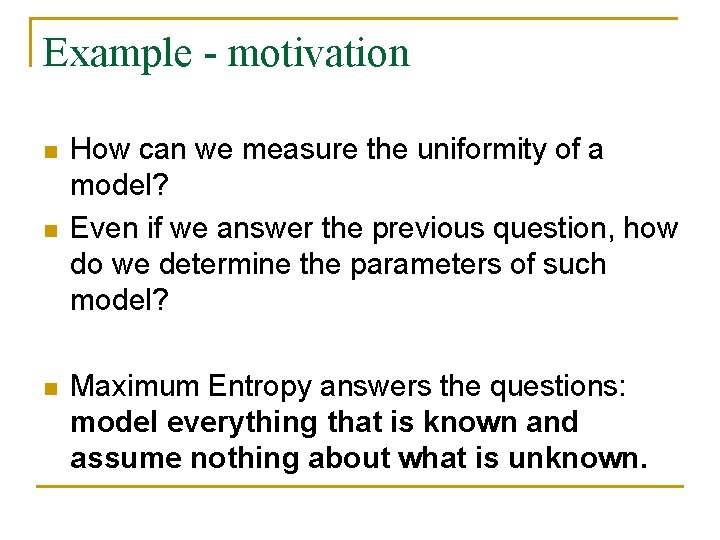

Example: motivation

- How can we measure the uniformity of a model?
- Even if we answer the previous question, how do we determine the parameters of such a model?
- Maximum Entropy answers both questions: model everything that is known and assume nothing about what is unknown.

Aim

- Construct a statistical model of the process that generated the training sample P(x, y).
- P(y|x): given a context x, the probability that the system will output y.

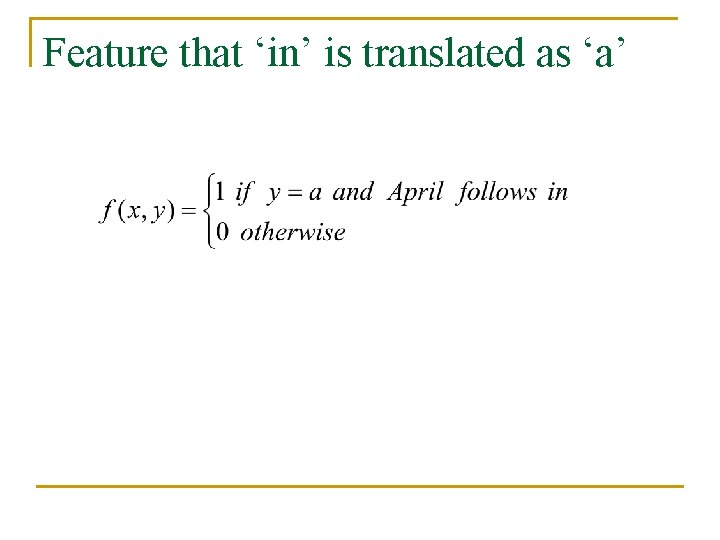

Feature that ‘in’ is translated as ‘a’

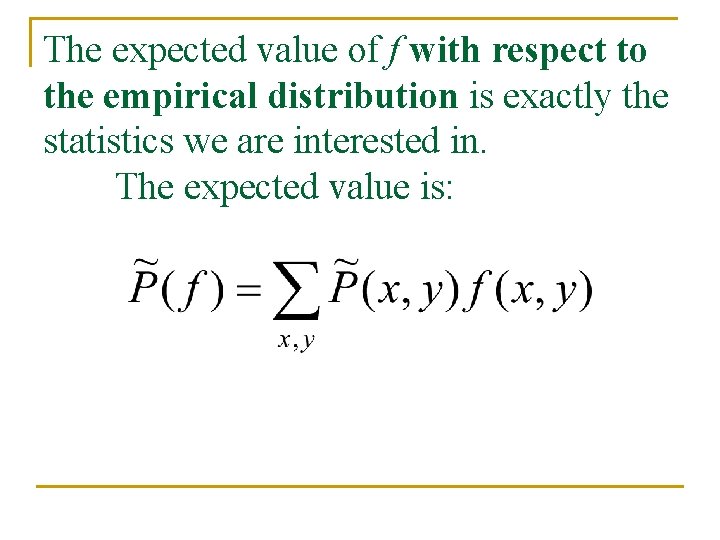

The expected value of f with respect to the empirical distribution is exactly the statistic we are interested in. The expected value is:

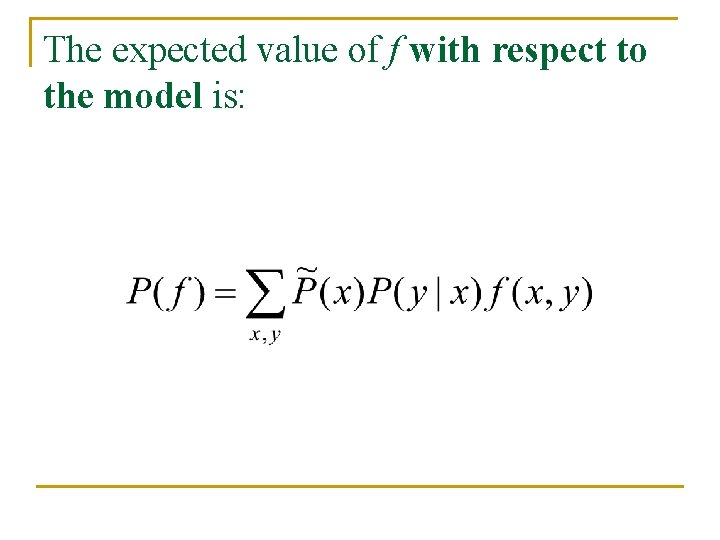

The expected value of f with respect to the model is:

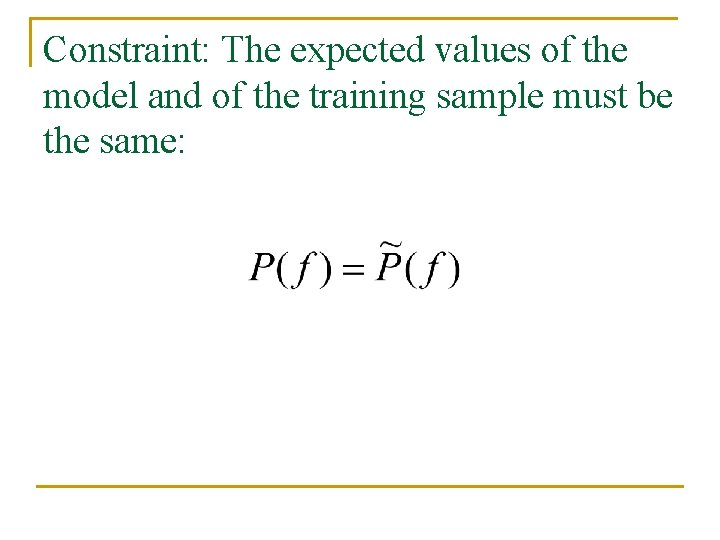

Constraint: The expected values of the model and of the training sample must be the same:

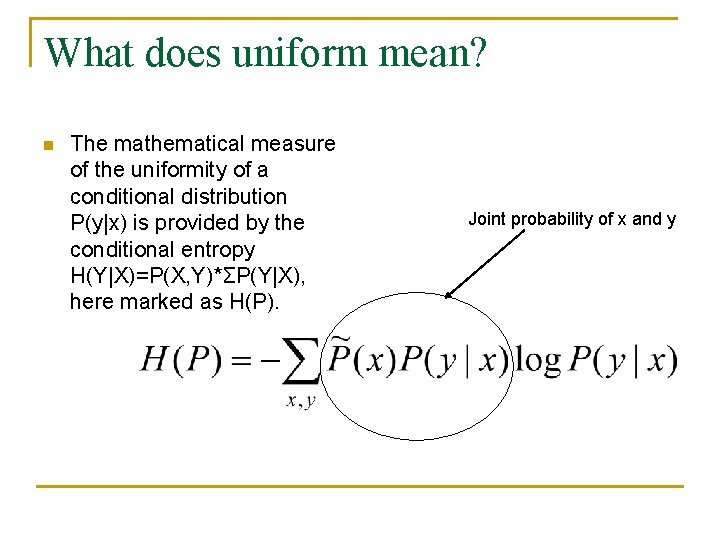
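The two expectations and the constraint can be sketched in code. This is an illustration under stated assumptions, not the slides' implementation: the training sample, the context labels `x1` and `x2`, and the uniform model are all hypothetical. The feature fires whenever ‘in’ is translated as ‘a’, regardless of context.

```python
from collections import Counter

OUTCOMES = ["in", "dentro", "di", "<0>", "a"]

# Hypothetical training sample of (context x, translation y) pairs.
sample = [("x1", "a"), ("x1", "in"), ("x2", "dentro"), ("x2", "in"), ("x2", "a")]

def f(x, y):
    """Binary feature: 1 when 'in' is translated as 'a'."""
    return 1 if y == "a" else 0

def empirical_expectation(sample, feature):
    """Sum over (x, y) of p~(x, y) * f(x, y), i.e. the sample average of f."""
    return sum(feature(x, y) for x, y in sample) / len(sample)

def model_expectation(sample, model, feature):
    """Sum over x, y of p~(x) * p(y|x) * f(x, y), with p~(x) taken from the sample."""
    n = len(sample)
    p_x = {x: c / n for x, c in Counter(x for x, _ in sample).items()}
    return sum(p_x[x] * model(y, x) * feature(x, y)
               for x in p_x for y in OUTCOMES)

def uniform_model(y, x):
    """A model with no knowledge: every translation equally likely."""
    return 1 / len(OUTCOMES)

observed = empirical_expectation(sample, f)
expected = model_expectation(sample, uniform_model, f)
print(observed, expected)
```

On this toy sample the uniform model violates the constraint, because it assigns ‘a’ a lower expected frequency than the sample shows. Training adjusts the model until the two values agree.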

What does uniform mean?

- The mathematical measure of the uniformity of a conditional distribution P(y|x) is provided by the conditional entropy $H(Y|X) = -\sum_{x,y} P(x, y) \log P(y|x)$, here marked as H(P), where P(x, y) is the joint probability of x and y.
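As a small illustration of entropy as a uniformity measure, here is a sketch that assumes the joint probability factors as P(x)·P(y|x) and uses natural logarithms. The two contexts and the peaked distribution are made up for the comparison.

```python
import math

def conditional_entropy(p_x, p_y_given_x):
    """H(Y|X) = -sum over x, y of P(x) * P(y|x) * log P(y|x), in nats."""
    return -sum(p_x[x] * q * math.log(q)
                for x in p_x
                for q in p_y_given_x[x].values() if q > 0)

words = ["in", "dentro", "di", "<0>", "a"]
p_x = {"x1": 0.5, "x2": 0.5}  # hypothetical context probabilities

# Same conditional distribution in both contexts, for simplicity.
uniform = {x: {w: 0.2 for w in words} for x in p_x}
peaked = {x: {"in": 0.6, "dentro": 0.1, "di": 0.1, "<0>": 0.1, "a": 0.1}
          for x in p_x}

h_uniform = conditional_entropy(p_x, uniform)
h_peaked = conditional_entropy(p_x, peaked)
print(h_uniform, h_peaked)
```

The uniform conditional distribution reaches the upper bound log 5, and any preference among the translations lowers the entropy.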

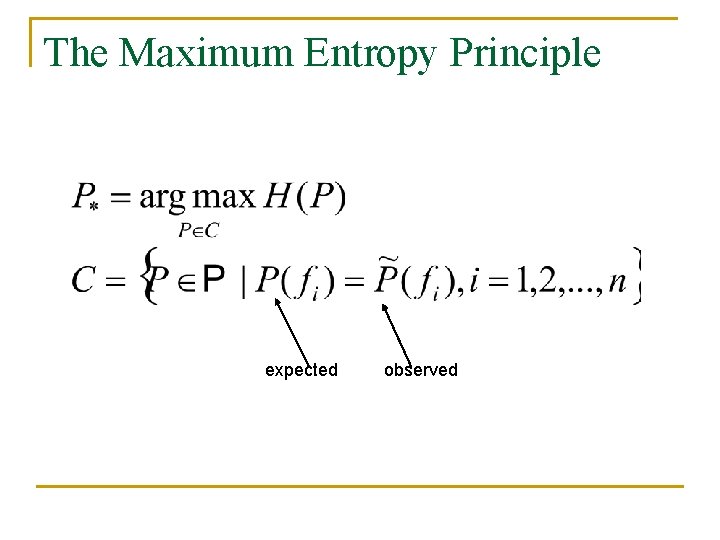

The Maximum Entropy Principle (labels on the equation: expected, observed)

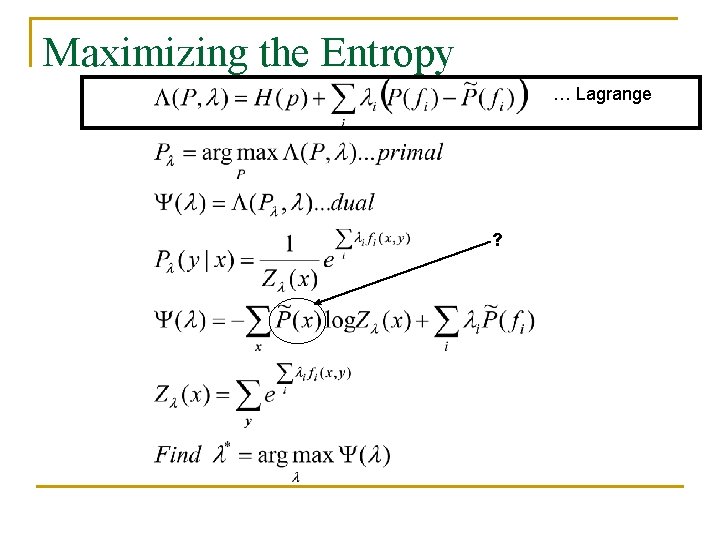

Maximizing the Entropy … Lagrange ?

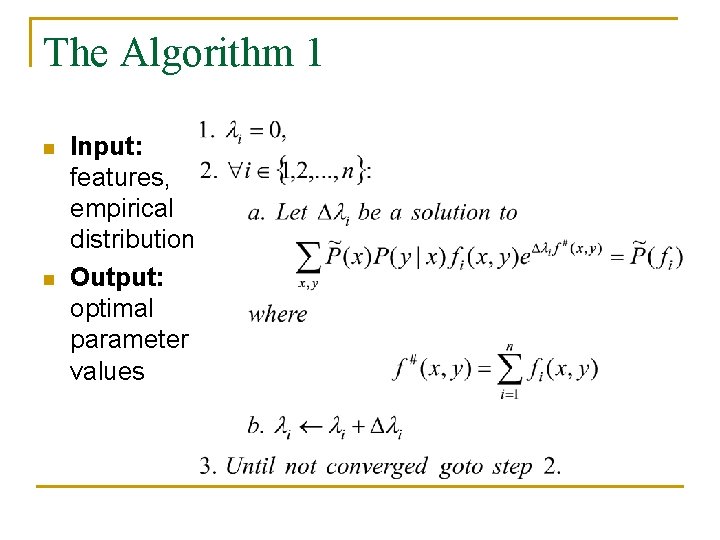
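For readers without the image, the standard Lagrangian argument can be sketched as follows. The notation follows Berger et al. (1996), with $p(f_i)$ the model expectation and $\tilde{p}(f_i)$ the empirical expectation of feature $f_i$; the slide's own notation may differ.

```latex
% Lagrangian: entropy plus one multiplier per feature constraint
\Lambda(p, \lambda) = H(p) + \sum_i \lambda_i \bigl( p(f_i) - \tilde{p}(f_i) \bigr)

% Maximizing over p for fixed \lambda gives an exponential (log-linear) model
p_\lambda(y \mid x) = \frac{1}{Z_\lambda(x)} \exp\Bigl( \sum_i \lambda_i f_i(x, y) \Bigr),
\qquad
Z_\lambda(x) = \sum_y \exp\Bigl( \sum_i \lambda_i f_i(x, y) \Bigr)

% Dual function, to be maximized over \lambda
\Psi(\lambda) = - \sum_x \tilde{p}(x) \log Z_\lambda(x) + \sum_i \lambda_i \tilde{p}(f_i)
```

The constrained problem over distributions thus becomes an unconstrained problem over the parameters $\lambda_i$, one per feature.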

The Algorithm 1

- Input: features, empirical distribution
- Output: optimal parameter values

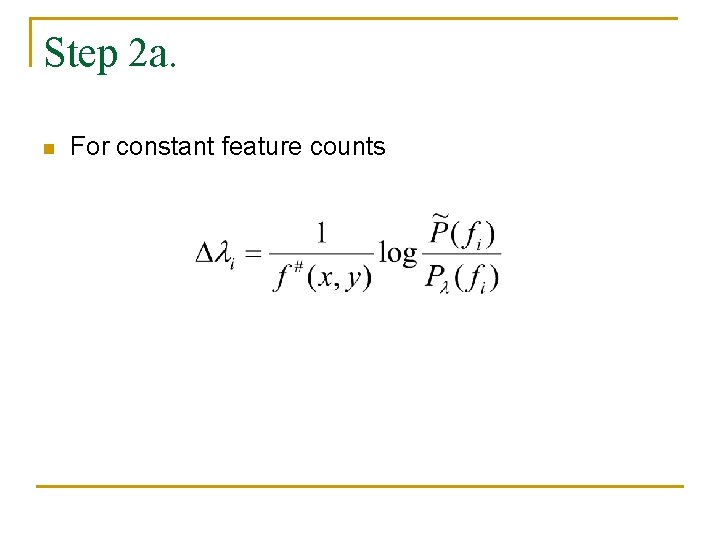

Step 2a

- For constant feature counts

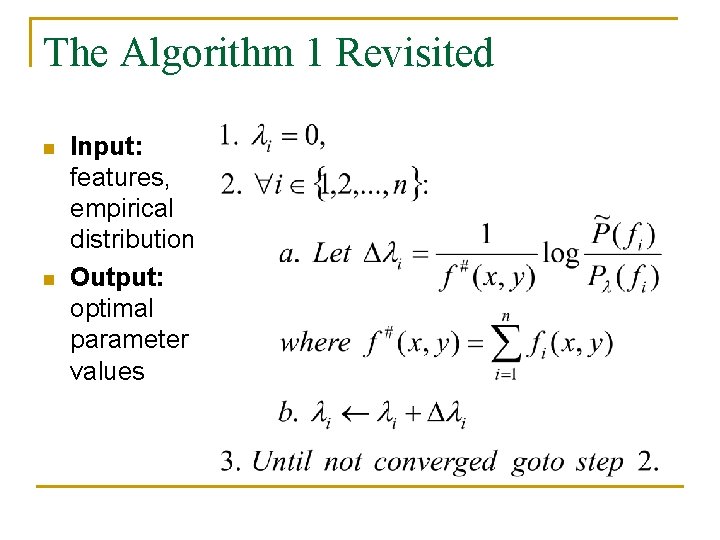
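When every outcome has the same total feature count M, the update in step 2a has a closed form: each parameter changes by (1/M)·log(observed expectation / model expectation). Below is a minimal sketch of that special case on the translation example. It assumes an unconditional model (a single context) and adds a slack feature so that the counts are constant; these are simplifications for illustration, not necessarily what the slide shows.

```python
import math

OUTCOMES = ["in", "dentro", "di", "<0>", "a"]
M = 2  # constant total feature count per outcome

def f1(y):
    return 1 if y in ("dentro", "di") else 0

def f2(y):
    return 1 if y in ("in", "di") else 0

def slack(y):
    """Pads each outcome's feature count up to M."""
    return M - f1(y) - f2(y)

FEATURES = [f1, f2, slack]
TARGETS = [0.3, 0.5, M - 0.3 - 0.5]  # observed expectations of each feature

def model(lam):
    """Exponential model p(y) proportional to exp(sum_i lam_i * f_i(y))."""
    weights = {y: math.exp(sum(l * f(y) for l, f in zip(lam, FEATURES)))
               for y in OUTCOMES}
    z = sum(weights.values())
    return {y: w / z for y, w in weights.items()}

def iterative_scaling(iters=2000):
    lam = [0.0] * len(FEATURES)  # step 1: start from the uniform model
    for _ in range(iters):
        p = model(lam)
        expected = [sum(p[y] * f(y) for y in OUTCOMES) for f in FEATURES]
        # step 2: closed-form update, valid because feature counts are constant
        lam = [l + math.log(t / e) / M
               for l, t, e in zip(lam, TARGETS, expected)]
    return lam, model(lam)

lam, p = iterative_scaling()
for word, prob in p.items():
    print(f"P({word}) = {prob:.4f}")
```

A fixed iteration count stands in for a proper convergence check. When feature counts are not constant, the update has no closed form and each step needs a one-dimensional numerical solve instead.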

The Algorithm 1 Revisited

- Input: features, empirical distribution
- Output: optimal parameter values

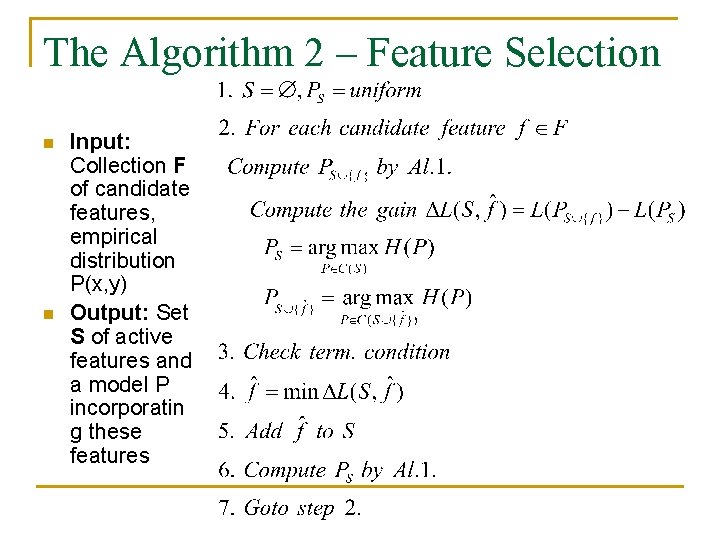

The Algorithm 2 – Feature Selection

- Input: Collection F of candidate features, empirical distribution P(x, y)
- Output: Set S of active features and a model P incorporating these features
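A greedy version of this procedure can be sketched as follows, under several assumptions that are not from the slides: an unconditional model, a hypothetical empirical distribution `P_EMP`, three made-up candidate features, exact refitting for every candidate (practical systems usually approximate the gain), and a hypothetical stopping threshold `min_gain`. Each round adds the candidate that most increases the log-likelihood of the sample.

```python
import math

OUTCOMES = ["in", "dentro", "di", "<0>", "a"]
# Hypothetical empirical distribution over translations of 'in'.
P_EMP = {"in": 0.3, "dentro": 0.1, "di": 0.2, "<0>": 0.2, "a": 0.2}

# Hypothetical candidate features (the collection F).
CANDIDATES = {
    "dentro_or_di": lambda y: 1 if y in ("dentro", "di") else 0,
    "in_or_di": lambda y: 1 if y in ("in", "di") else 0,
    "is_a": lambda y: 1 if y == "a" else 0,
}

def model(lam, feats):
    w = {y: math.exp(sum(l * f(y) for l, f in zip(lam, feats)))
         for y in OUTCOMES}
    z = sum(w.values())
    return {y: v / z for y, v in w.items()}

def fit(names, iters=2000):
    """Fit a maxent model on the named features by iterative scaling."""
    if not names:
        return {y: 1 / len(OUTCOMES) for y in OUTCOMES}  # empty S: uniform
    base = [CANDIDATES[n] for n in names]
    m = max(sum(f(y) for f in base) for y in OUTCOMES)
    # Slack feature makes the total feature count constant (equal to m).
    feats = base + [lambda y: m - sum(f(y) for f in base)]
    targets = [sum(P_EMP[y] * f(y) for y in OUTCOMES) for f in feats]
    lam = [0.0] * len(feats)
    for _ in range(iters):
        p = model(lam, feats)
        expected = [sum(p[y] * f(y) for y in OUTCOMES) for f in feats]
        # Skip features with zero expectation (assumed absent in this data).
        lam = [l + math.log(t / e) / m if t > 0 and e > 0 else l
               for l, t, e in zip(lam, targets, expected)]
    return model(lam, feats)

def log_likelihood(p):
    return sum(P_EMP[y] * math.log(p[y]) for y in OUTCOMES)

def select_features(min_gain=1e-3):
    active = []
    current = log_likelihood(fit(active))
    while len(active) < len(CANDIDATES):
        gains = {n: log_likelihood(fit(active + [n])) - current
                 for n in CANDIDATES if n not in active}
        best = max(gains, key=gains.get)
        if gains[best] < min_gain:  # stop when no candidate helps enough
            break
        active.append(best)
        current += gains[best]
    return active, fit(active)

active, p = select_features()
print(active)
```

On this toy distribution the `is_a` candidate is left out: the model already predicts ‘a’ nearly correctly, so adding it gains less than the threshold.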

- Slides: 16