
MATH 685/CSI 700 Lecture Notes Lecture 1. Intro to Scientific Computing

Useful info
- Course website: http://math.gmu.edu/~memelian/teaching/Spring10
- MATLAB instructions: http://math.gmu.edu/introtomatlab.htm
- MathWorks, the creator of MATLAB: http://www.mathworks.com
- OCTAVE, a free MATLAB clone, is available for download at http://octave.sourceforge.net/

Scientific computing
- Design and analysis of algorithms for numerically solving mathematical problems in science and engineering
- Deals with continuous quantities, as opposed to the discrete quantities of, say, computer science
- Considers the effect of approximations and performs error analysis
- Is ubiquitous in modern simulations, in algorithms modeling natural phenomena, and in engineering applications
- Closely related to numerical analysis

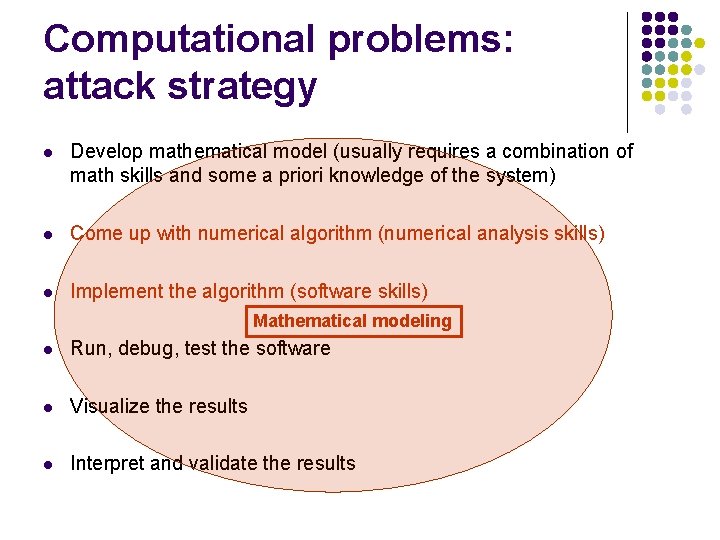

Computational problems: attack strategy
- Develop a mathematical model (usually requires a combination of math skills and some a priori knowledge of the system)
- Come up with a numerical algorithm (numerical analysis skills)
- Implement the algorithm (software skills)
- Run, debug, and test the software
- Visualize the results
- Interpret and validate the results

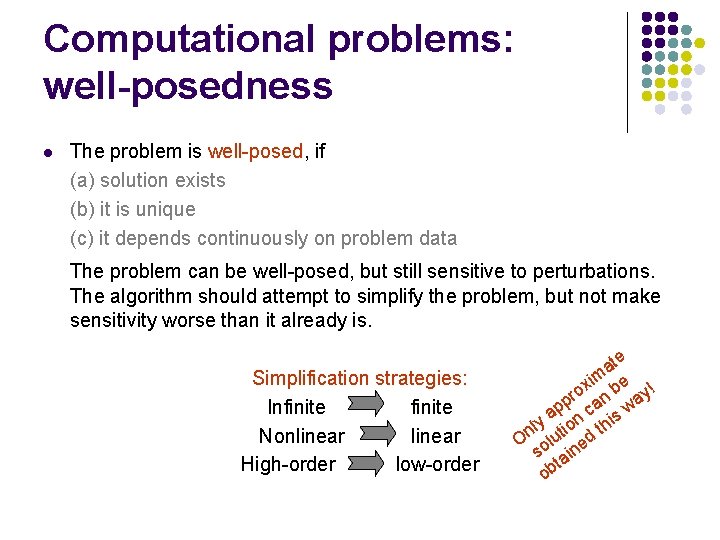

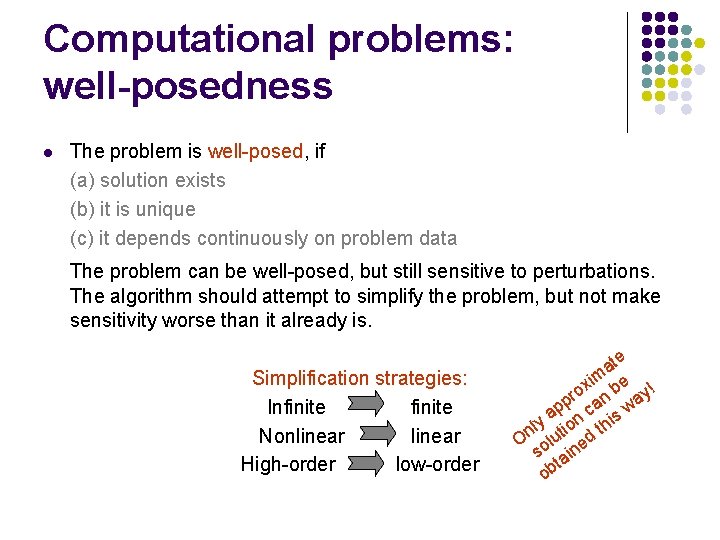

Computational problems: well-posedness
- The problem is well-posed if (a) a solution exists, (b) it is unique, and (c) it depends continuously on the problem data.
- A problem can be well-posed but still sensitive to perturbations. The algorithm should attempt to simplify the problem without making the sensitivity worse than it already is.
- Simplification strategies: replace infinite with finite, nonlinear with linear, high-order with low-order. A solution obtained this way can only be approximate!

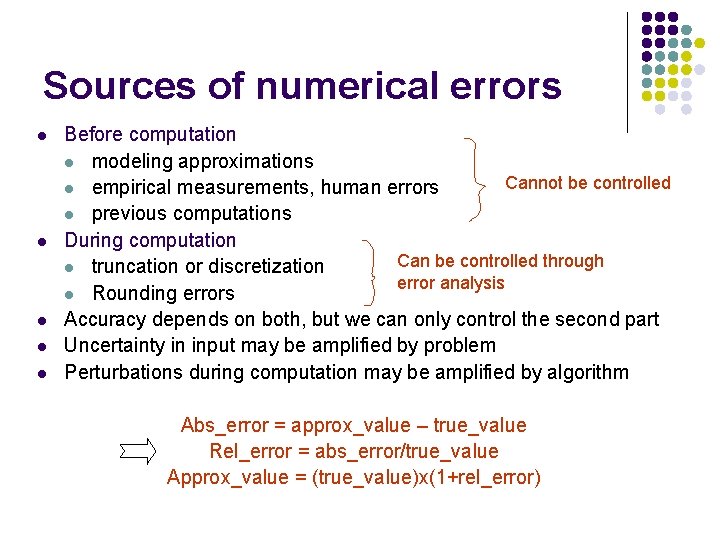

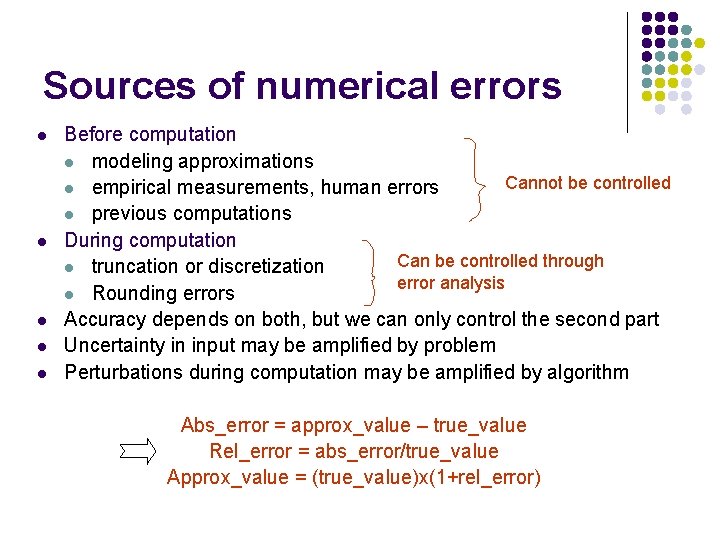

Sources of numerical errors
- Before computation (cannot be controlled):
  - modeling approximations
  - empirical measurements, human errors
  - previous computations
- During computation (can be controlled through error analysis):
  - truncation or discretization
  - rounding errors
- Accuracy depends on both, but we can only control the second part.
- Uncertainty in the input may be amplified by the problem.
- Perturbations during computation may be amplified by the algorithm.

Error measures:
- Absolute error = approximate value − true value
- Relative error = absolute error / true value
- Approximate value = (true value) × (1 + relative error)
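The error measures above can be checked numerically. The following is a minimal Python sketch. It uses 22/7 as an approximation of π, which is an illustrative choice and not taken from the notes, and it keeps the notes' sign convention (approximate minus true).

```python
import math

def abs_error(approx_value: float, true_value: float) -> float:
    """Absolute error, with the sign convention approx_value - true_value."""
    return approx_value - true_value

def rel_error(approx_value: float, true_value: float) -> float:
    """Relative error: absolute error divided by the (nonzero) true value."""
    return abs_error(approx_value, true_value) / true_value

true_value = math.pi
approx_value = 22 / 7  # illustrative choice: a classical rational approximation of pi

abs_err = abs_error(approx_value, true_value)
rel_err = rel_error(approx_value, true_value)
print(f"absolute error: {abs_err:.3e}")
print(f"relative error: {rel_err:.3e}")

# Check the identity approx_value = true_value * (1 + rel_error), up to rounding.
print(math.isclose(approx_value, true_value * (1 + rel_err)))
```

The relative error comes out at about 4 × 10⁻⁴. This is consistent with 22/7 = 3.142857… agreeing with π only in its first three significant digits (3.14).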

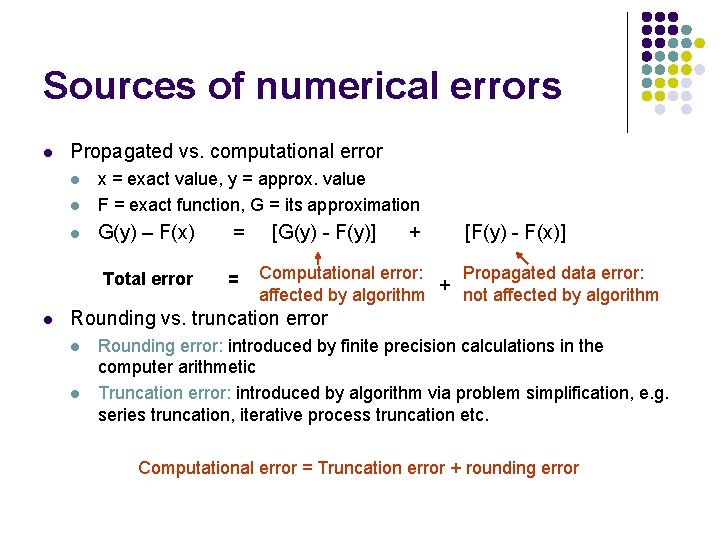

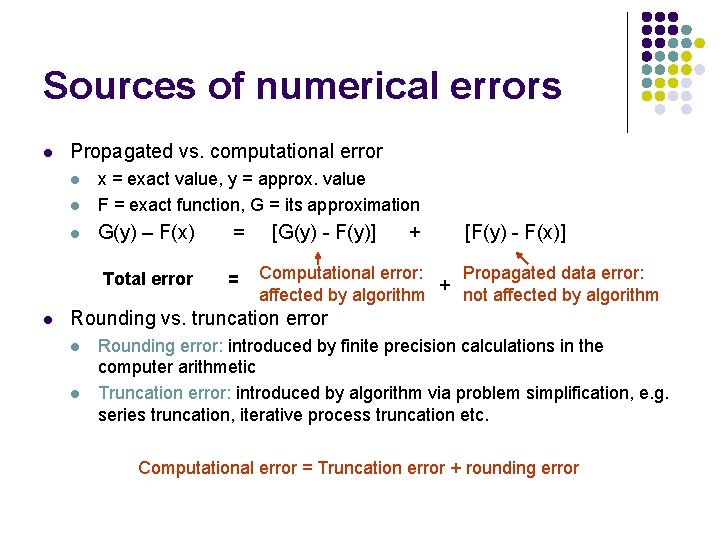

Sources of numerical errors

Propagated vs. computational error
- x = exact value, y = approximate value
- F = exact function, G = its approximation

$$\underbrace{G(y) - F(x)}_{\text{total error}} = \underbrace{[G(y) - F(y)]}_{\text{computational error}} + \underbrace{[F(y) - F(x)]}_{\text{propagated data error}}$$

The computational error is affected by the algorithm; the propagated data error is not.

Rounding vs. truncation error
- Rounding error: introduced by finite-precision calculations in computer arithmetic
- Truncation error: introduced by the algorithm via problem simplification, e.g., series truncation, truncation of an iterative process, etc.
- Computational error = truncation error + rounding error
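The split "computational error = truncation error + rounding error" shows up clearly when a derivative is approximated by a finite difference. The test function (sin at x = 1) and the forward-difference formula below are illustrative assumptions, not taken from the notes.

For the forward difference, the truncation error shrinks roughly like h. The rounding error in f(x + h) − f(x) is divided by h, so it grows roughly like ε/h. The total error is therefore typically smallest near h ≈ √ε ≈ 10⁻⁸ in double precision.

```python
import math

def forward_diff(f, x, h):
    """Forward-difference approximation (f(x + h) - f(x)) / h of f'(x)."""
    return (f(x + h) - f(x)) / h

x = 1.0               # illustrative evaluation point
exact = math.cos(x)   # exact derivative of the illustrative test function sin

errors = {}
for k in range(1, 17):
    h = 10.0 ** (-k)
    errors[k] = abs(forward_diff(math.sin, x, h) - exact)
    print(f"h = 1e-{k:02d}   error = {errors[k]:.2e}")

best_k = min(errors, key=errors.get)
print(f"smallest error at h = 1e-{best_k:02d}")
```

For large h the truncation error dominates. For tiny h the rounding error dominates. At h = 10⁻¹⁶, x + h rounds back to x, so the difference quotient collapses to 0.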

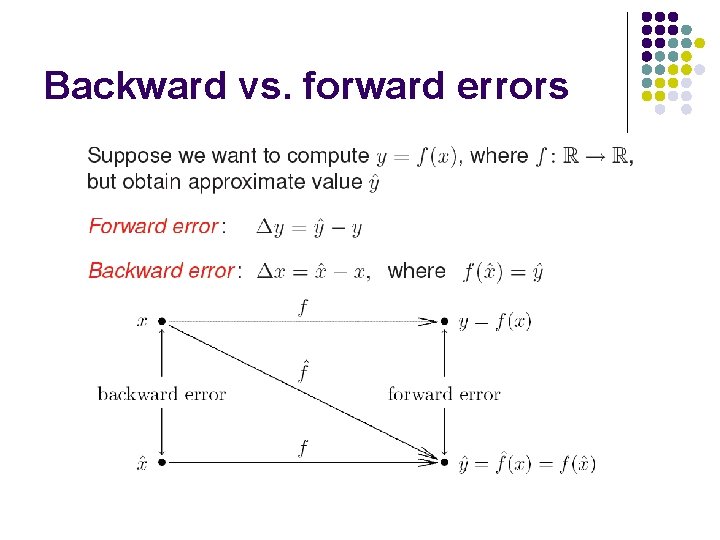

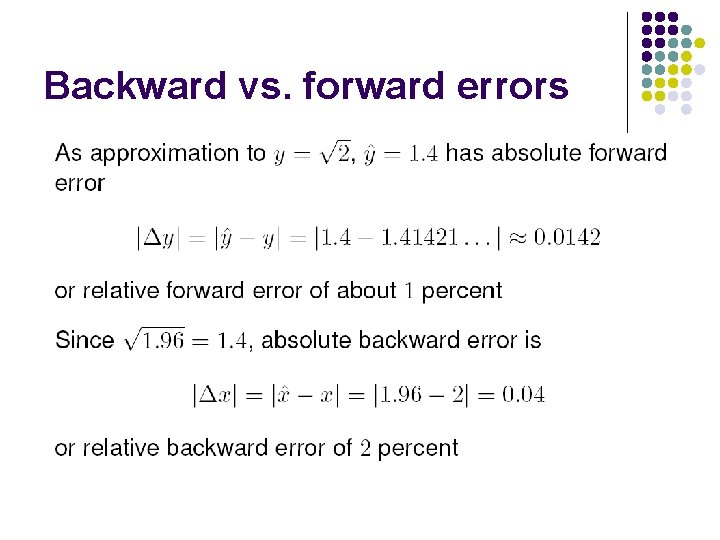

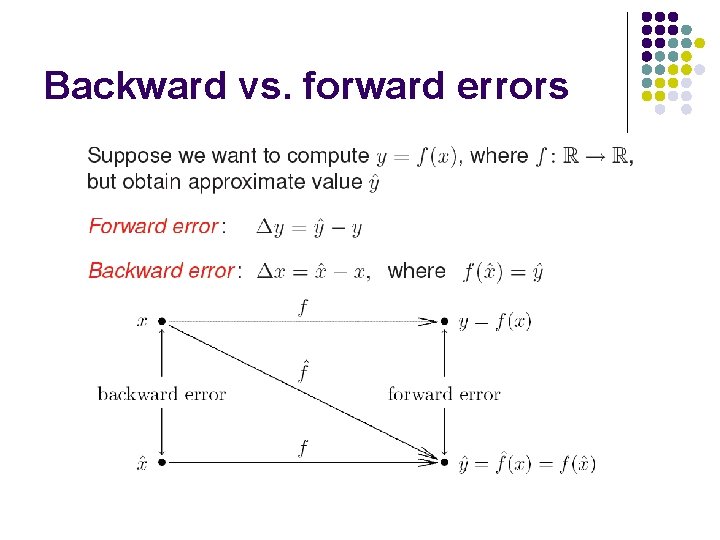

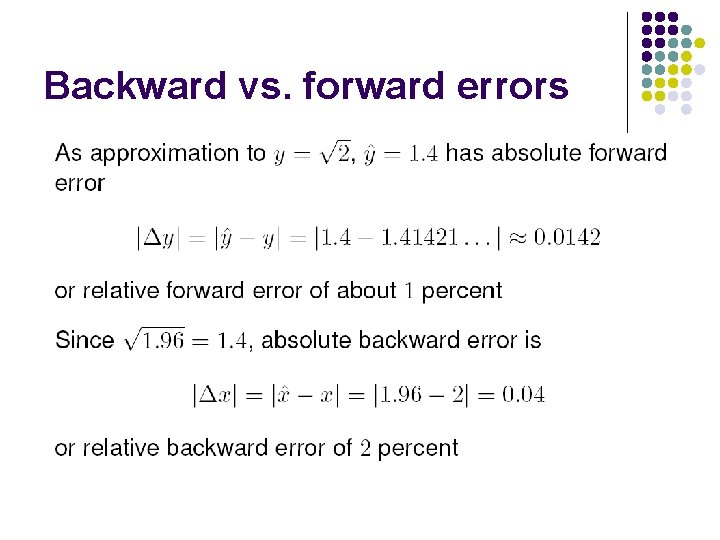

Backward vs. forward errors

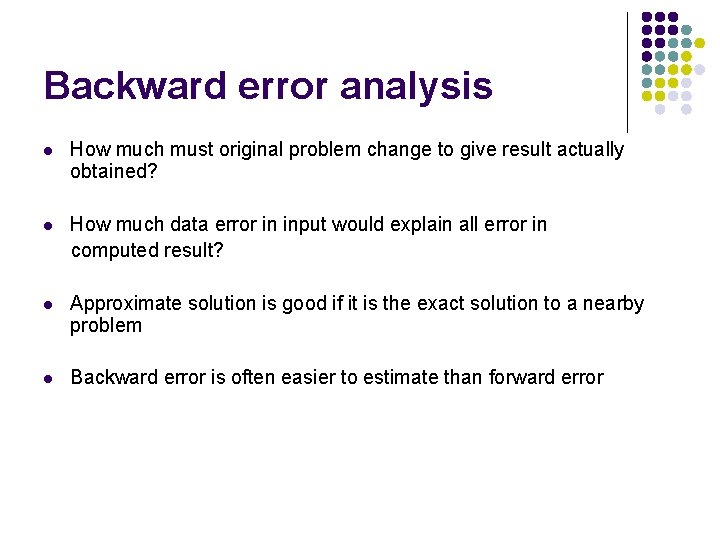

Backward error analysis
- How much must the original problem change to give the result actually obtained?
- How much data error in the input would explain all the error in the computed result?
- An approximate solution is good if it is the exact solution to a nearby problem.
- Backward error is often easier to estimate than forward error.
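The two questions above can be answered concretely for a simple case. This minimal sketch assumes the problem is computing √2 and that the approximate answer 1.4 is given; both are illustrative choices. Since 1.4 is the exact square root of 1.96, the backward error needs only one multiplication, while the forward error requires knowing the true answer.

```python
import math

x = 2.0                  # illustrative input
y_true = math.sqrt(x)    # true answer (to double precision)
y_hat = 1.4              # illustrative approximate answer to be assessed

# Forward error: distance between the computed and the true output.
forward_error = abs(y_hat - y_true)

# Backward error: y_hat is the *exact* square root of x_hat = y_hat**2,
# so the input would have to change by |x_hat - x| to explain y_hat fully.
x_hat = y_hat ** 2
backward_error = abs(x_hat - x)

print(f"forward error  = {forward_error:.4f}")
print(f"backward error = {backward_error:.4f}")
```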

Backward vs. forward errors

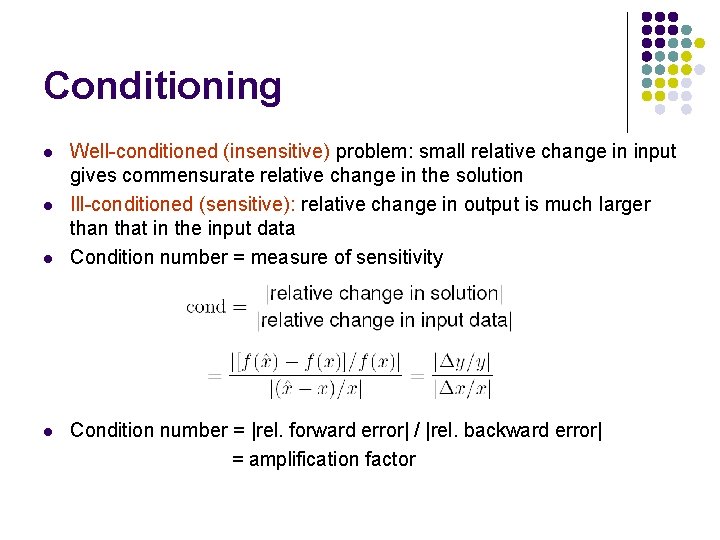

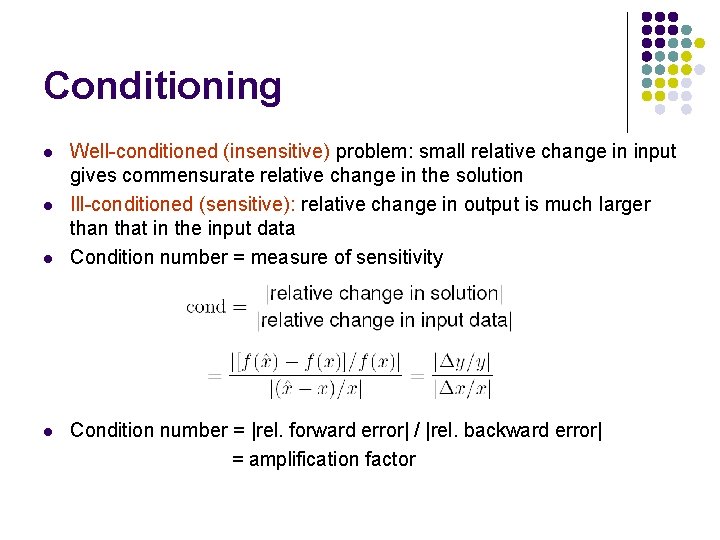

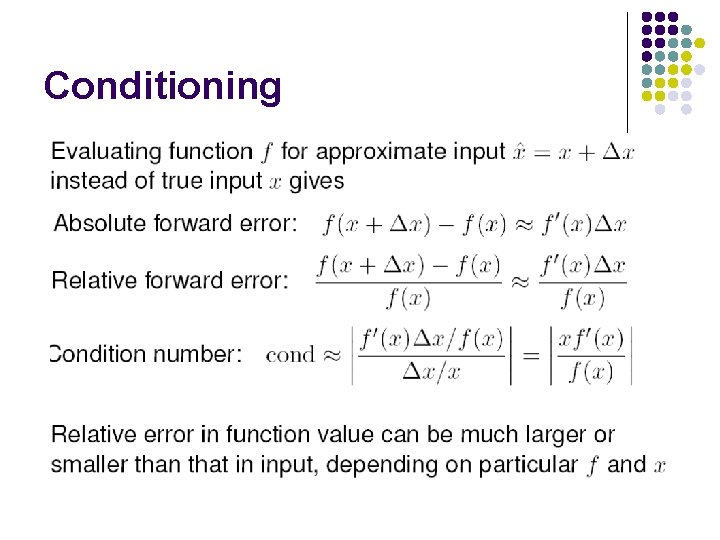

Conditioning
- Well-conditioned (insensitive) problem: a small relative change in the input gives a commensurate relative change in the solution.
- Ill-conditioned (sensitive) problem: the relative change in the output is much larger than that in the input data.
- The condition number is a measure of sensitivity:

Condition number = |relative forward error| / |relative backward error| = amplification factor
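For a differentiable function f, the ratio above is approximately |x f′(x) / f(x)|. This is a standard first-order estimate, not spelled out in the notes, and the sketch below assumes it. The test functions and points (sqrt at 2, tan at 1.57079, which is close to π/2) are illustrative choices. The sketch also measures the amplification directly for one small but finite input perturbation.

```python
import math

def rel_condition_number(f, fprime, x):
    """First-order relative condition number |x * f'(x) / f(x)| of evaluating f at x."""
    return abs(x * fprime(x) / f(x))

# sqrt is well-conditioned for x > 0: the estimate equals 1/2 everywhere.
cond_sqrt = rel_condition_number(math.sqrt, lambda t: 0.5 / math.sqrt(t), 2.0)

# tan is ill-conditioned near pi/2, where f'(x) = 1/cos(x)**2 blows up.
cond_tan = rel_condition_number(math.tan, lambda t: 1.0 / math.cos(t) ** 2, 1.57079)

# Observed amplification for an illustrative finite input perturbation.
x, x_pert = 1.57079, 1.57078
rel_in = abs(x_pert - x) / abs(x)
rel_out = abs(math.tan(x_pert) - math.tan(x)) / abs(math.tan(x))
amplification = rel_out / rel_in

print(f"cond(sqrt, 2)          = {cond_sqrt:.3f}")
print(f"cond(tan, 1.57079)     = {cond_tan:.3e}")
print(f"observed amplification = {amplification:.3e}")
```

The observed ratio is smaller than the first-order estimate because the perturbation is not infinitesimal. Both still show relative input changes being magnified by a factor on the order of 10⁵.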

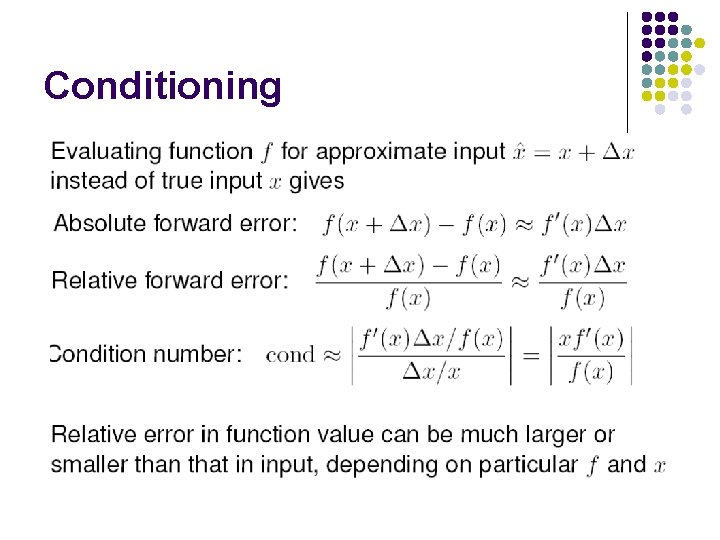

Conditioning

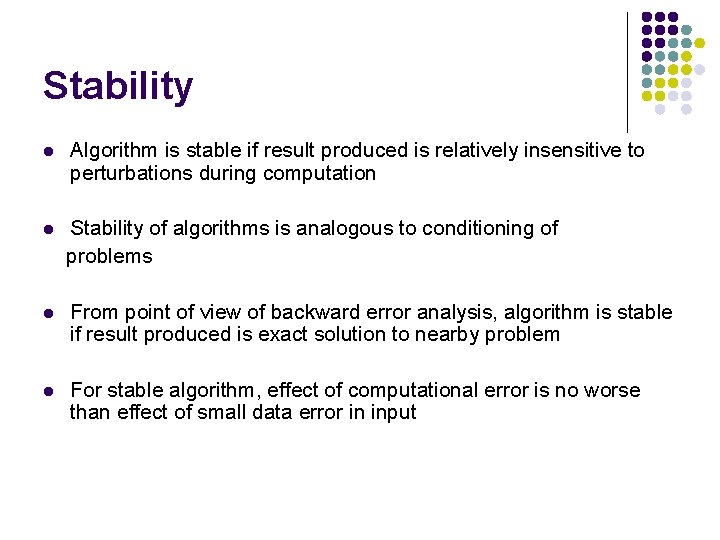

Stability
- An algorithm is stable if the result it produces is relatively insensitive to perturbations during computation.
- Stability of algorithms is analogous to conditioning of problems.
- From the point of view of backward error analysis, an algorithm is stable if the result it produces is the exact solution to a nearby problem.
- For a stable algorithm, the effect of computational error is no worse than the effect of a small data error in the input.
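A standard textbook illustration of an unstable versus a stable algorithm, chosen here as an example rather than taken from the slides, uses the integrals

$$I_n = \int_0^1 x^n e^{x-1}\,dx, \qquad I_0 = 1 - e^{-1}, \qquad 0 < I_n < \frac{1}{n+1}.$$

Integration by parts gives I_n = 1 − n I_{n−1}. Running this recurrence forward multiplies any error in I_{n−1} by n, so the rounding error in I_0 grows like n!. Running it backward, I_{n−1} = (1 − I_n)/n, divides errors by n instead. The sketch assumes a crude starting guess I_40 = 0 for the backward direction; that starting error (at most 1/41) is damped by 40·39·…·21 before n = 20 is reached.

```python
import math

def forward_recurrence(n_max):
    """Unstable: I_n = 1 - n * I_{n-1}, starting from I_0 = 1 - 1/e."""
    vals = [1.0 - math.exp(-1.0)]
    for n in range(1, n_max + 1):
        vals.append(1.0 - n * vals[-1])
    return vals

def backward_recurrence(n_max, n_start=40):
    """Stable: I_{n-1} = (1 - I_n) / n, starting from the assumed guess I_{n_start} = 0."""
    vals = [0.0] * (n_start + 1)
    for n in range(n_start, 0, -1):
        vals[n - 1] = (1.0 - vals[n]) / n
    return vals[: n_max + 1]

fwd = forward_recurrence(20)
bwd = backward_recurrence(20)
for n in (5, 10, 15, 20):
    print(f"n = {n:2d}   forward = {fwd[n]: .6e}   backward = {bwd[n]: .6e}")
```

Computing I_20 is a well-conditioned problem. Only the algorithm differs: the forward values leave the interval (0, 1/(n+1)) well before n = 20, while the backward values stay accurate.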

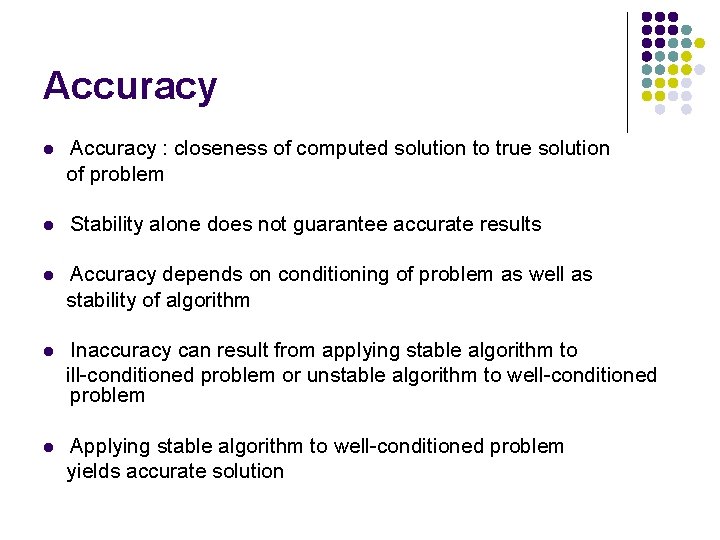

Accuracy
- Accuracy: closeness of the computed solution to the true solution of the problem
- Stability alone does not guarantee accurate results.
- Accuracy depends on the conditioning of the problem as well as the stability of the algorithm.
- Inaccuracy can result from applying a stable algorithm to an ill-conditioned problem, or an unstable algorithm to a well-conditioned problem.
- Applying a stable algorithm to a well-conditioned problem yields an accurate solution.

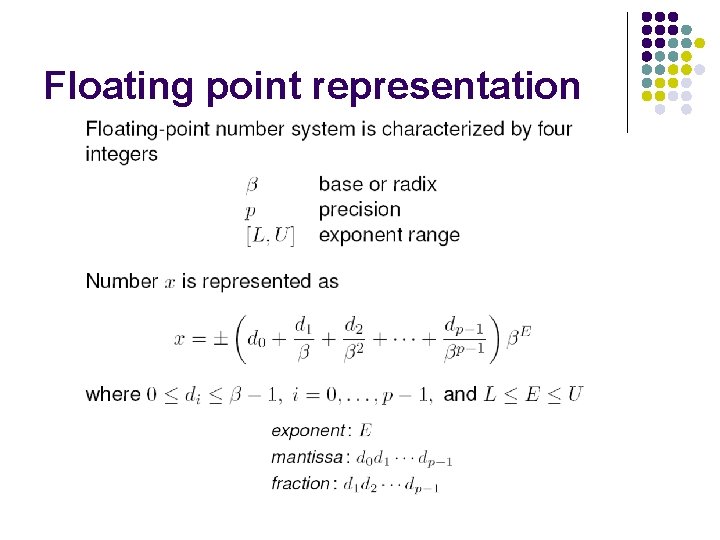

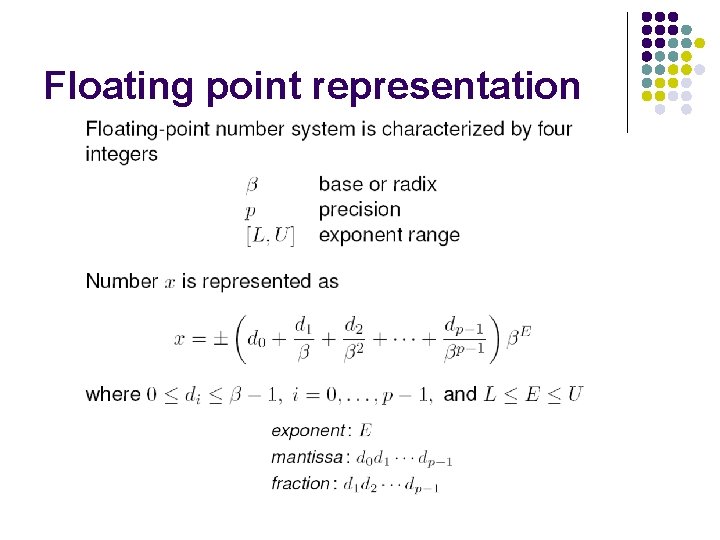

Floating point representation

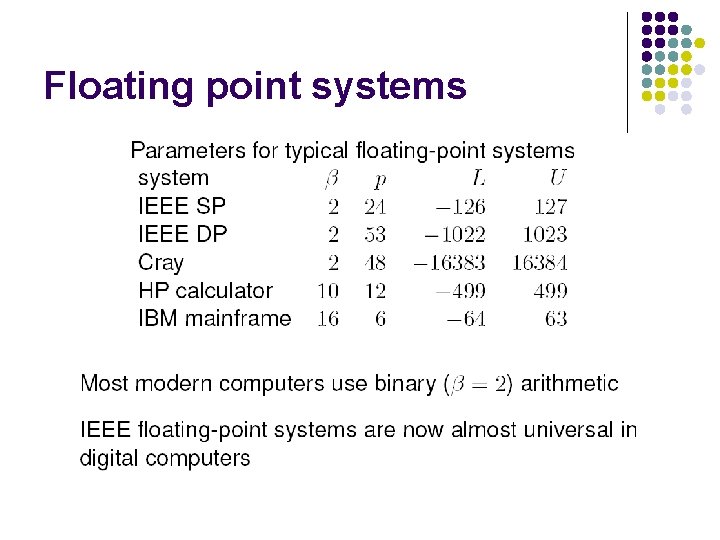

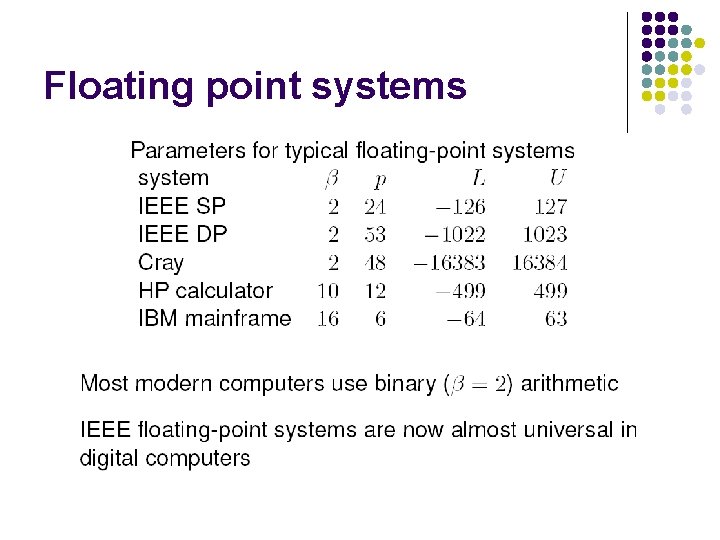

Floating point systems

Normalized representation: not all real numbers can be represented this way; those that can are called machine numbers.

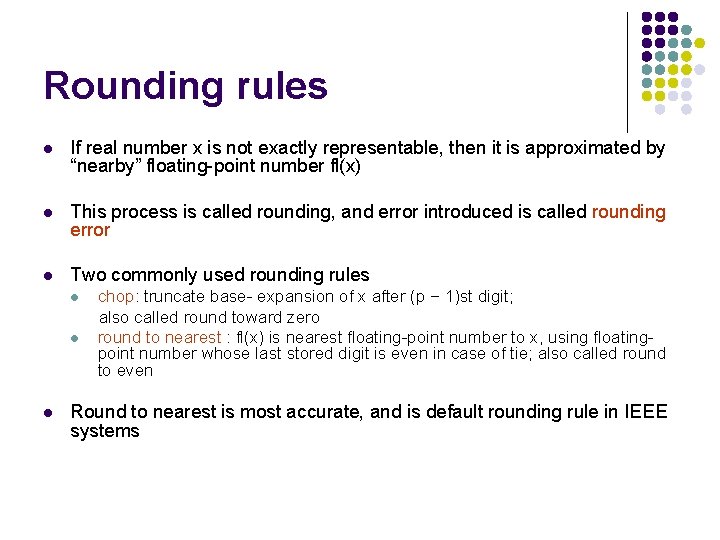
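The set of machine numbers can be listed explicitly for a tiny system. This sketch assumes the usual normalized form ±(d₀.d₁…d_{p−1})_β × β^E with d₀ ≠ 0 and L ≤ E ≤ U, since the slide's formula survives only as an image. It also assumes a toy system with β = 2, p = 3, L = −1, U = 1. Exact rational arithmetic (`fractions.Fraction`) avoids any rounding in the enumeration itself.

```python
from fractions import Fraction
from itertools import product

def machine_numbers(beta: int, p: int, L: int, U: int):
    """All normalized numbers ±(d0.d1...d_{p-1})_beta * beta**E with L <= E <= U, plus zero."""
    nums = {Fraction(0)}
    for d0 in range(1, beta):  # normalized: the leading digit is nonzero
        for tail in product(range(beta), repeat=p - 1):
            mantissa = Fraction(d0) + sum(Fraction(d, beta ** (i + 1)) for i, d in enumerate(tail))
            for E in range(L, U + 1):
                x = mantissa * Fraction(beta) ** E
                nums.update({x, -x})
    return sorted(nums)

# Toy system (assumed for illustration): beta = 2, p = 3, exponents -1..1.
nums = machine_numbers(beta=2, p=3, L=-1, U=1)
print(len(nums))
print([str(x) for x in nums if x > 0])
```

The enumeration gives 25 numbers, matching the count 2(β − 1)β^{p−1}(U − L + 1) + 1. The smallest positive number is β^L = 1/2 and the largest is 7/2. Every real number in between that is not in this list, such as 0.6, is not a machine number.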

Rounding rules
- If a real number x is not exactly representable, it is approximated by a "nearby" floating-point number fl(x).
- This process is called rounding, and the error introduced is called rounding error.
- Two commonly used rounding rules:
  - chop: truncate the base-β expansion of x after the (p − 1)st digit; also called round toward zero
  - round to nearest: fl(x) is the floating-point number nearest to x, using the one whose last stored digit is even in case of a tie; also called round to even
- Round to nearest is the most accurate, and is the default rounding rule in IEEE systems.
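Both rounding rules can be tried in a small base-10 system using Python's `decimal` module. In this sketch, `ROUND_DOWN` plays the role of chop (round toward zero) and `ROUND_HALF_EVEN` the role of round to nearest with ties to even. The precision p = 3 and the sample inputs are illustrative assumptions. Inputs are given as strings so that no binary rounding happens before the decimal rounding.

```python
from decimal import Context, Decimal, ROUND_DOWN, ROUND_HALF_EVEN

def fl(x: str, p: int, rounding: str) -> Decimal:
    """Round the decimal string x to p significant digits using the given rule."""
    return Context(prec=p, rounding=rounding).create_decimal(x)

# Illustrative inputs: two exact ties, a negative number, and a generic value.
for x in ["2.675", "2.665", "-2.679", "0.1234567"]:
    chop = fl(x, 3, ROUND_DOWN)          # chop: drop digits beyond p, i.e. round toward zero
    nearest = fl(x, 3, ROUND_HALF_EVEN)  # round to nearest, ties to even
    print(f"x = {x:>10}   chop: {chop}   round to nearest: {nearest}")
```

The two ties show the "even" rule at work: 2.675 rounds up to 2.68 and 2.665 rounds down to 2.66. For −2.679, chopping moves toward zero (−2.67) while round to nearest gives −2.68.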

Floating point arithmetic

Machine precision
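Machine precision can be found experimentally by halving a candidate until adding half of it to 1 no longer changes 1. This sketch assumes IEEE double precision with round to nearest, which is what Python floats use on common platforms.

Texts differ on what "machine precision" means. Some use the gap β^{1−p} between 1 and the next floating-point number. Others use half of that gap, which bounds the relative rounding error under round to nearest. The loop computes the gap, which is the quantity Python reports as `sys.float_info.epsilon`.

```python
import sys

# Halve eps until 1 + eps/2 rounds back to 1 (assumes round-to-nearest doubles).
eps = 1.0
while 1.0 + eps / 2 > 1.0:
    eps /= 2

print(eps)                            # gap between 1.0 and the next larger double
print(eps == sys.float_info.epsilon)  # matches Python's reported value
print(eps / 2)                        # bound on relative rounding error, round to nearest
```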

Floating point operations
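Because every floating-point operation rounds its own result, familiar algebraic laws can fail. This short sketch shows two such effects in IEEE double precision (assumed, as in standard Python floats). First, addition is not associative. Second, a small addend is absorbed completely by a large one. The specific numbers are illustrative.

```python
# Addition is not associative: the grouping changes which intermediate results get rounded.
left = (0.1 + 0.2) + 0.3
right = 0.1 + (0.2 + 0.3)
print(left, right, left == right)

# Absorption: near 1e16 the spacing between doubles is 2, so adding 1.0 is a tie
# that rounds (to even) back to 1e16, and the 1.0 is lost.
big = 1.0e16
print((big + 1.0) - big)
```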

Summing series in floating-point arithmetic
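A divergent series can have a finite floating-point sum. Once each new term is smaller than half a unit in the last place of the partial sum, adding it changes nothing. In double precision this takes far too many terms of the harmonic series to run quickly. This sketch therefore assumes a toy 4-digit decimal arithmetic, simulated with Python's `decimal` module, where the same effect appears after about 2000 terms.

```python
from decimal import Context, Decimal, ROUND_HALF_EVEN

def harmonic_until_stall(prec: int):
    """Sum 1 + 1/2 + 1/3 + ... in prec-digit decimal arithmetic until the sum stops changing."""
    ctx = Context(prec=prec, rounding=ROUND_HALF_EVEN)
    s = Decimal(0)
    n = 0
    while True:
        n += 1
        term = ctx.divide(Decimal(1), Decimal(n))  # 1/n rounded to prec digits
        new_s = ctx.add(s, term)                   # partial sum rounded to prec digits
        if new_s == s:  # the term fell below half an ulp of s and was lost entirely
            return n, s
        s = new_s

# Assumed toy precision: 4 significant decimal digits.
n_stall, total = harmonic_until_stall(4)
print(f"sum stops changing at n = {n_stall}, with value {total}")
```

With 4 digits and a partial sum between 1 and 10, an ulp is 0.001. Terms smaller than 0.0005 are therefore lost, which first happens at n ≈ 2000. The exact stopping point (2000 or 2001) depends on how the tie at 1/2000 = 0.0005 is rounded.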

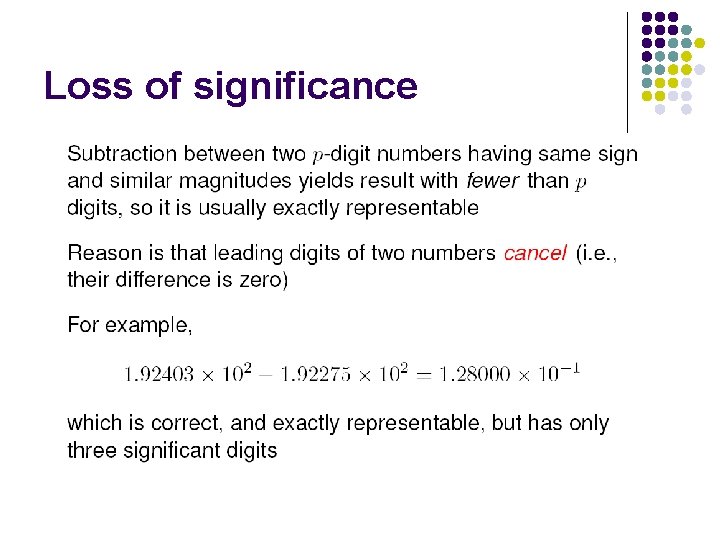

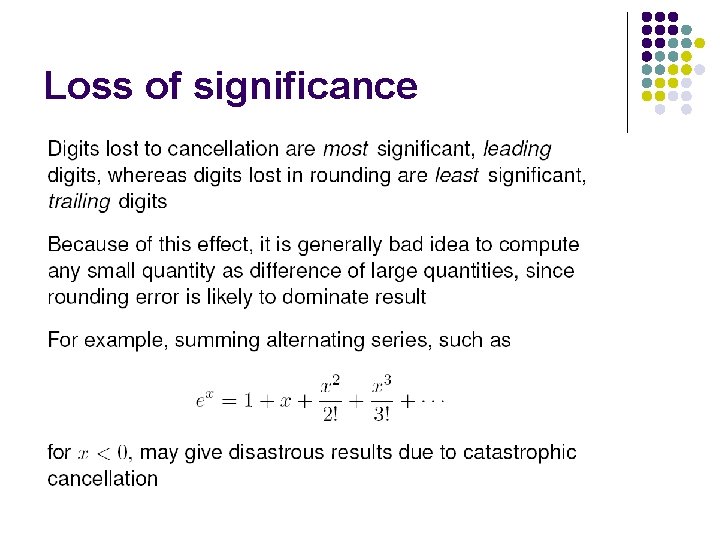

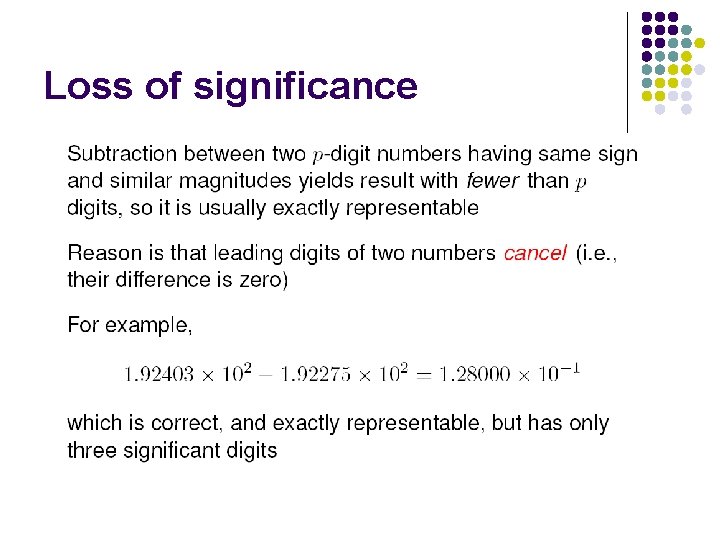

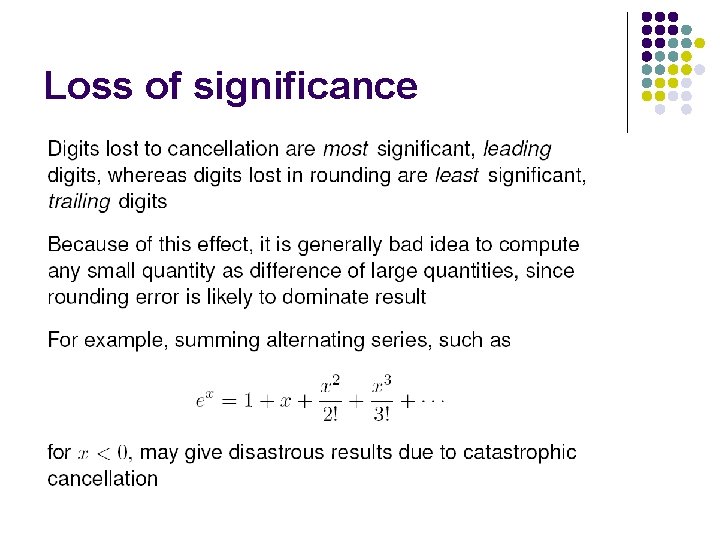

Loss of significance
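Loss of significance (catastrophic cancellation) occurs when two nearly equal floating-point numbers are subtracted. The leading digits cancel, and what remains is dominated by earlier rounding errors. The slide content is not present in the text, so this sketch uses a common illustration chosen as an assumption: evaluating (1 − cos x)/x², whose limit as x → 0 is 1/2. The rewrite via the identity 1 − cos x = 2 sin²(x/2) avoids the subtraction.

```python
import math

def naive(x):
    """(1 - cos x) / x**2: subtracts two nearly equal numbers when x is small."""
    return (1.0 - math.cos(x)) / x**2

def rewritten(x):
    """Same function via 1 - cos x = 2 sin^2(x/2): no cancellation."""
    return 2.0 * math.sin(x / 2) ** 2 / x**2

for k in range(1, 10, 2):
    x = 10.0 ** (-k)
    print(f"x = 1e-{k}   naive = {naive(x):.15f}   rewritten = {rewritten(x):.15f}")
```

For x = 10⁻⁹, cos x rounds to exactly 1.0 in double precision, so the naive formula returns 0 while the rewritten one still returns 0.5. The problem itself is not ill-conditioned here; the loss comes entirely from the choice of formula.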