Mastergoal Machine Learning Environment Phase III Presentation Alejandro

- Slides: 32

Mastergoal Machine Learning Environment Phase III Presentation Alejandro Alliana CIS 895 MSE Project – KSU

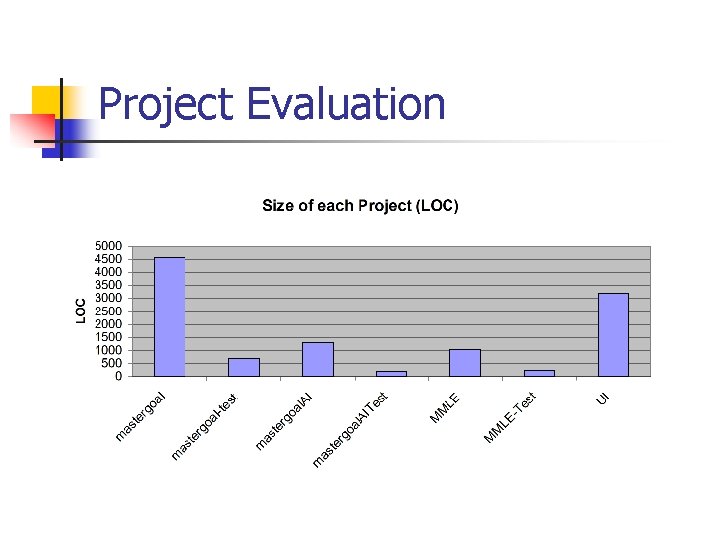

MMLE Project Overview
- Provide an environment to create, repeat, and save experiments that build strategies for playing Mastergoal using machine learning (ML) techniques.
- Divided into 4 sub-projects:
  - Mastergoal (MG)
  - Mastergoal AI (MGAI)
  - Mastergoal Machine Learning (MMLE)
  - User Interface (UI)

Phase III Artifacts
- User Documentation
- Component Design
- Source Code (and executable)
- Assessment Evaluation
- Project Evaluation
- References

User Documentation
- MMLE UI user manual provided, covering:
  - Installation
  - Common use cases
  - Data field types and formats
  - Examples
- MG, MGAI, and MMLE libraries:
  - API documentation generated by Doxygen.

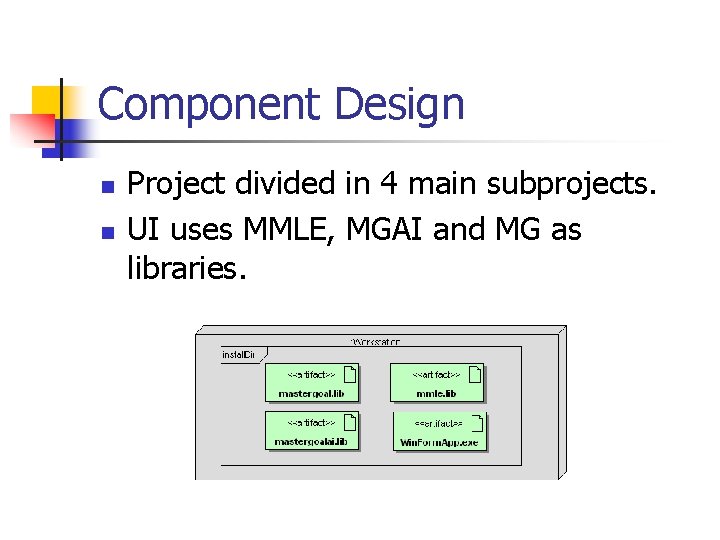

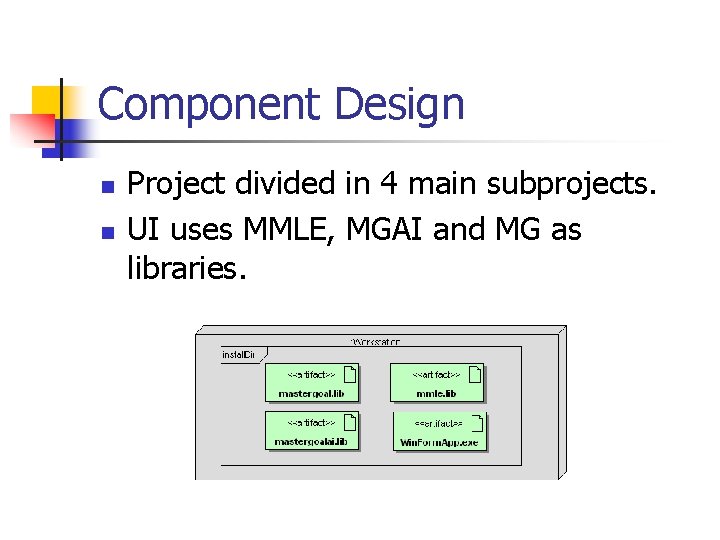

Component Design
- Project divided into 4 main sub-projects.
- The UI uses MMLE, MGAI, and MG as libraries.

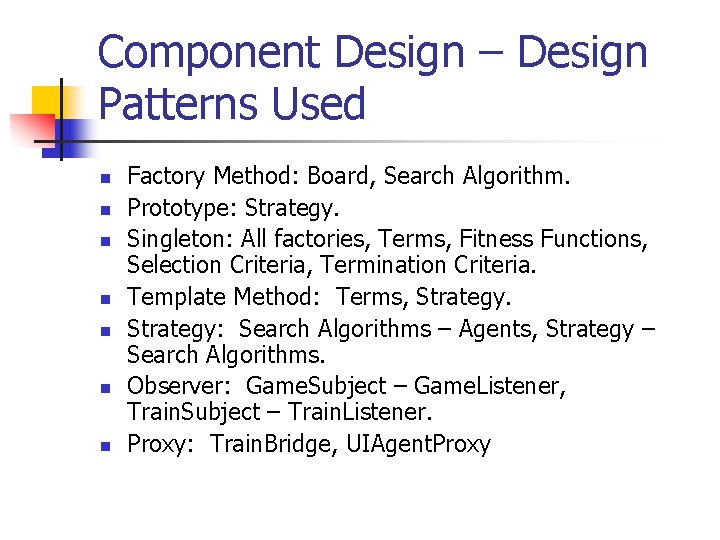

Component Design – Design Patterns Used
- Factory Method: Board, Search Algorithm.
- Prototype: Strategy.
- Singleton: all factories, Terms, Fitness Functions, Selection Criteria, Termination Criteria.
- Template Method: Terms.
- Strategy: Search Algorithms – Agents, Strategy – Search Algorithms.
- Observer: Game.Subject – Game.Listener, Train.Subject – Train.Listener.
- Proxy: Train.Bridge, UIAgent.Proxy.
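The Observer entries pair a subject with its listeners. The sketch below shows that shape in C++ under assumed names: `GameSubject`, `GameListener`, `onMove`, `Game`, and `MoveLogger` are hypothetical stand-ins rather than the MMLE classes, and a move is reduced to a plain string.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

// Listener side of the Observer pattern (compare the Game.Listener role).
// The callback name onMove is hypothetical.
class GameListener {
public:
    virtual ~GameListener() = default;
    virtual void onMove(const std::string& move) = 0;
};

// Subject side of the Observer pattern (compare the Game.Subject role).
class GameSubject {
public:
    void attach(GameListener* listener) {
        assert(listener != nullptr);
        listeners_.push_back(listener);
    }
    void detach(GameListener* listener) {
        listeners_.erase(std::remove(listeners_.begin(), listeners_.end(), listener),
                         listeners_.end());
    }

protected:
    // Called by the concrete subject whenever its state changes.
    void notifyMove(const std::string& move) {
        for (GameListener* listener : listeners_) listener->onMove(move);
    }

private:
    std::vector<GameListener*> listeners_;  // non-owning pointers
};

// Toy game: records each move, then notifies every attached listener.
class Game : public GameSubject {
public:
    void play(const std::string& move) {
        history_.push_back(move);
        notifyMove(move);
    }

private:
    std::vector<std::string> history_;
};

// Toy listener, standing in for something like a UI view or a training log.
class MoveLogger : public GameListener {
public:
    void onMove(const std::string& move) override { moves.push_back(move); }
    std::vector<std::string> moves;
};
```

Because the subject holds non-owning pointers, a listener must be detached before it is destroyed. The same shape would presumably apply to the Train.Subject / Train.Listener pair.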

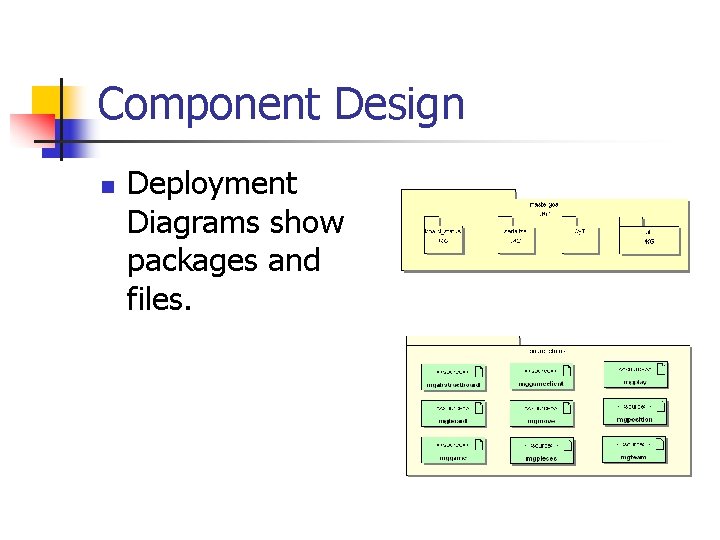

Component Design
- Deployment diagrams show packages and files.

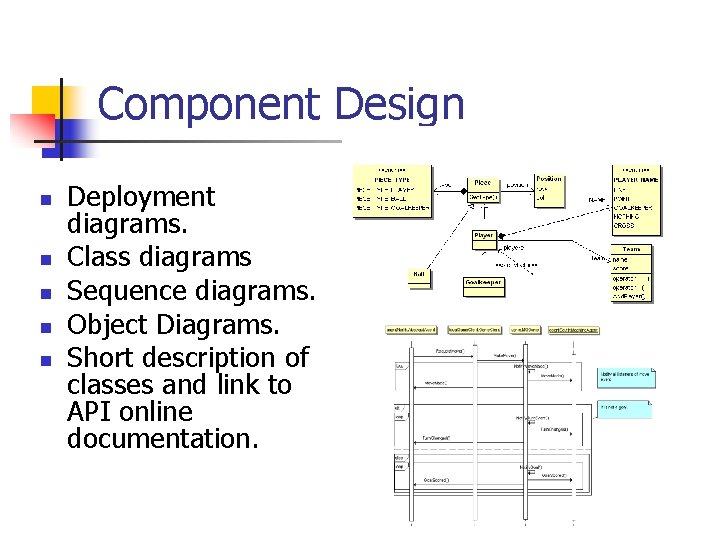

Component Design
- Deployment diagrams.
- Class diagrams.
- Sequence diagrams.
- Object diagrams.
- Short description of each class and a link to the online API documentation.

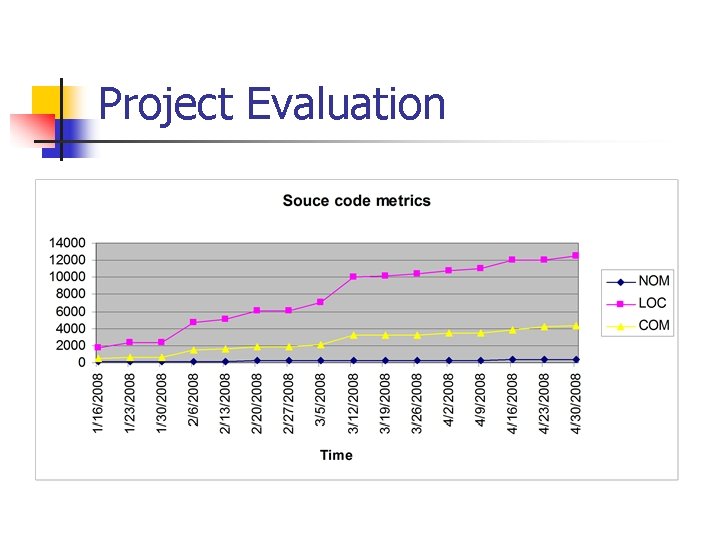

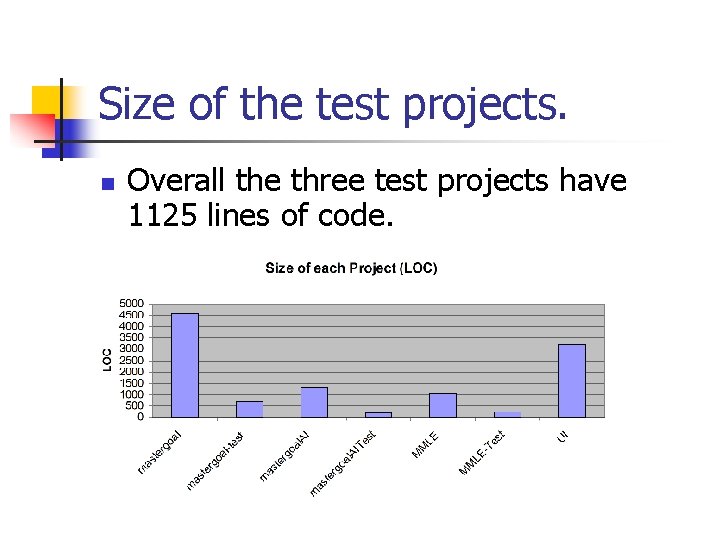

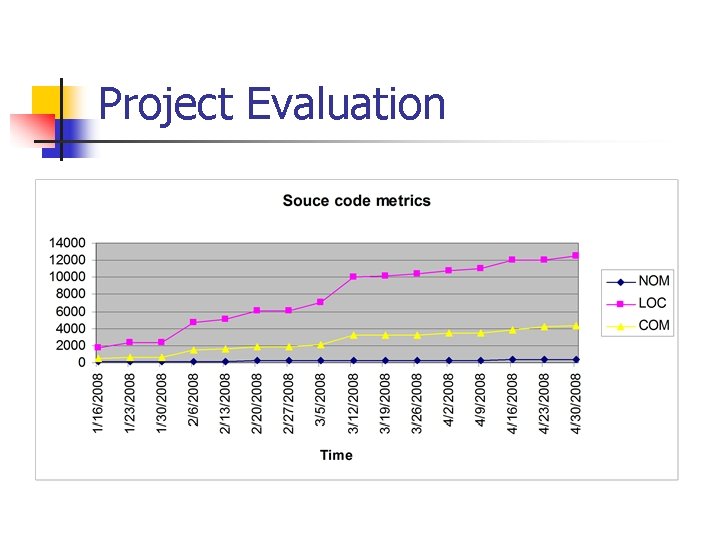

Source Code
- Kept in an SVN repository (7 projects):
  - 4 sub-projects.
  - 3 test sub-projects.
- Metrics were taken weekly and are discussed later in the presentation.

Installer and Executable
- Installer created with NSIS (Nullsoft Scriptable Install System).
- UI created with the Windows Forms GUI API available in the .NET Framework.
- All other sub-projects are written in unmanaged C++ and are available as libraries.

MMLE Demonstration.

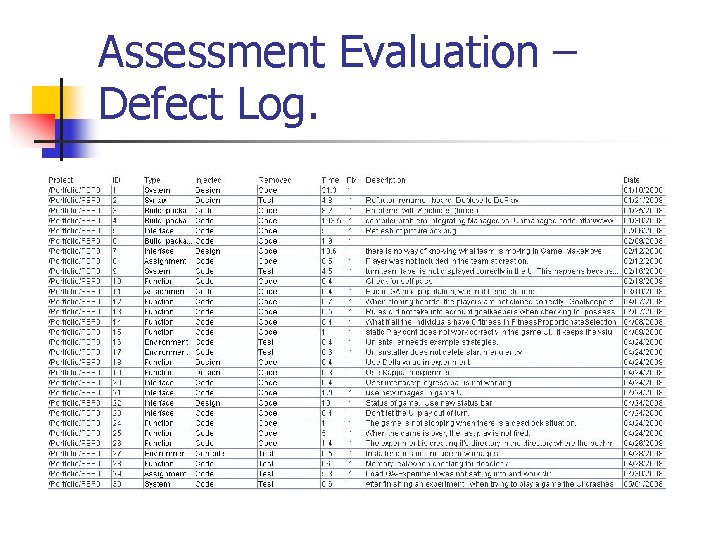

Assessment Evaluation
- I used the CPPUnit framework to unit test the projects:
  - Mastergoal.Test
  - Mastergoal.Ai.Test
  - Mmle-test
- Assertions were used to check preconditions and postconditions.
- I used Visual Leak Detector (VLD) to detect memory leaks.
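A minimal sketch of assertion-based pre- and postcondition checks, using the standard `assert` macro (active only when `NDEBUG` is not defined). `normalizeWeights` is a hypothetical helper invented for illustration; it makes no claim about how MMLE actually weights the terms of a strategy.

```cpp
#include <cassert>
#include <cmath>
#include <numeric>
#include <vector>

// Hypothetical helper: scales a list of non-negative weights so they sum to 1.
// The asserts document the contract and check it in debug builds.
void normalizeWeights(std::vector<double>& weights) {
    // Preconditions: at least one weight, no negative weights, positive total.
    assert(!weights.empty());
    for (double w : weights) assert(w >= 0.0);
    const double total = std::accumulate(weights.begin(), weights.end(), 0.0);
    assert(total > 0.0);

    for (double& w : weights) w /= total;

    // Postcondition: the weights now sum to 1 (within rounding error).
    const double newTotal = std::accumulate(weights.begin(), weights.end(), 0.0);
    assert(std::fabs(newTotal - 1.0) < 1e-9);
}
```

A unit test (for example a CPPUnit test case) then only has to exercise the function; any contract violation aborts a debug build at the exact point where it occurs.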

Assessment Evaluation
- Test Plan
  - All tests passed*
  - CPPUnit:
    - Regression bugs.
    - Coding of test cases.
    - Documenting and debugging test cases.
    - Memory leak bugs.

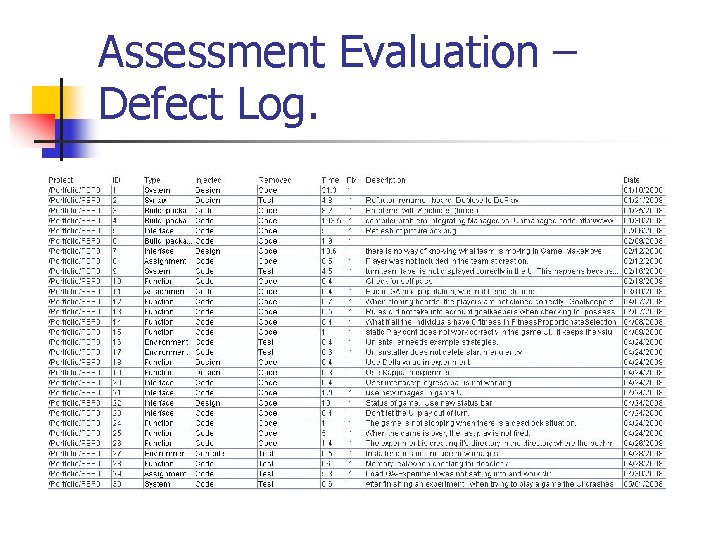

Assessment Evaluation – Defect Log.

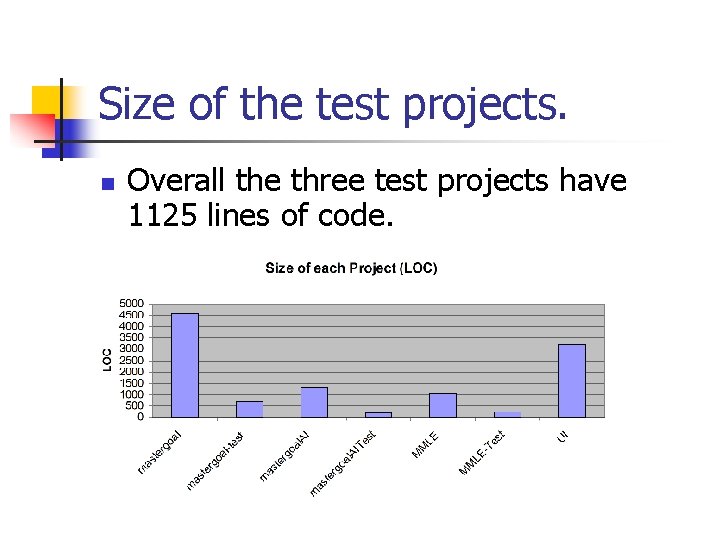

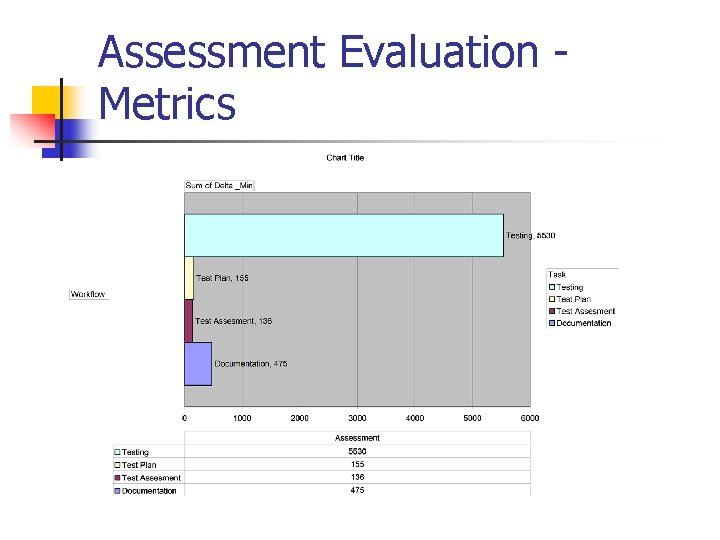

Size of the Test Projects
- Overall, the three test projects have 1,125 lines of code.

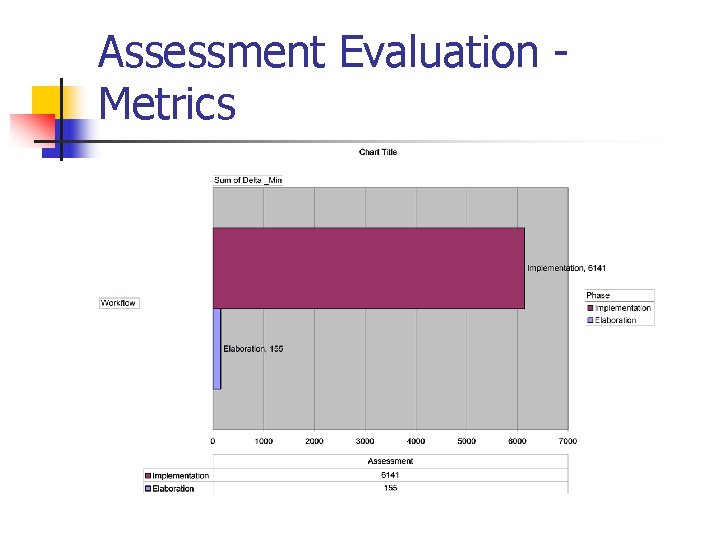

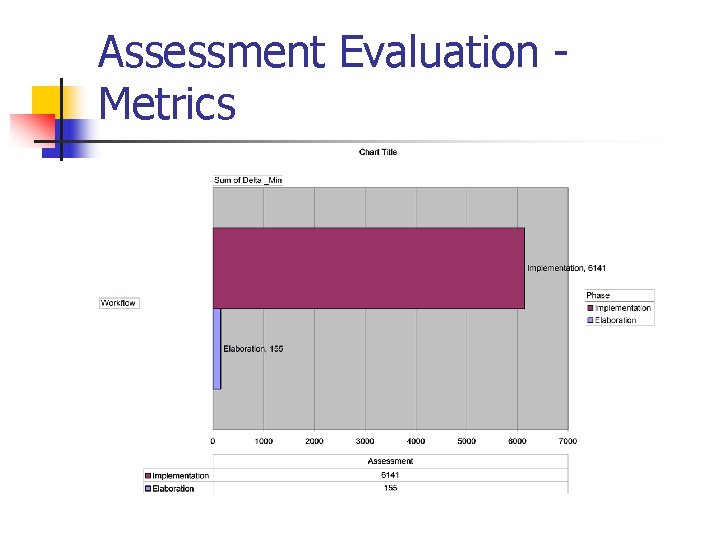

Assessment Evaluation Metrics

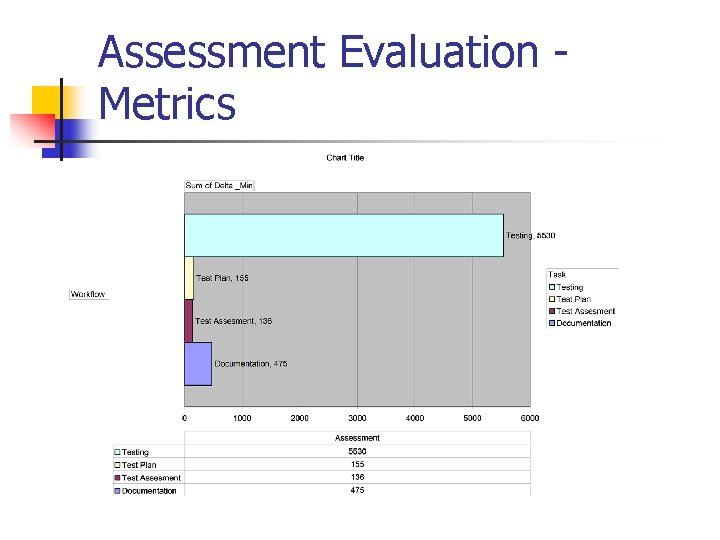

Assessment Evaluation Metrics

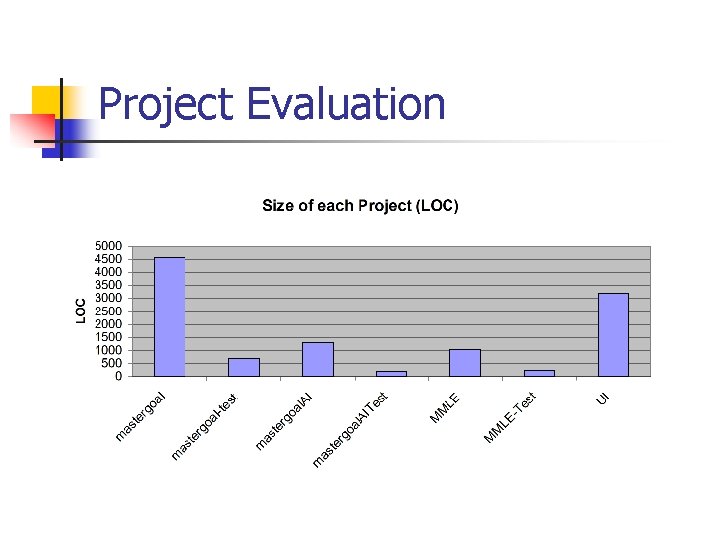

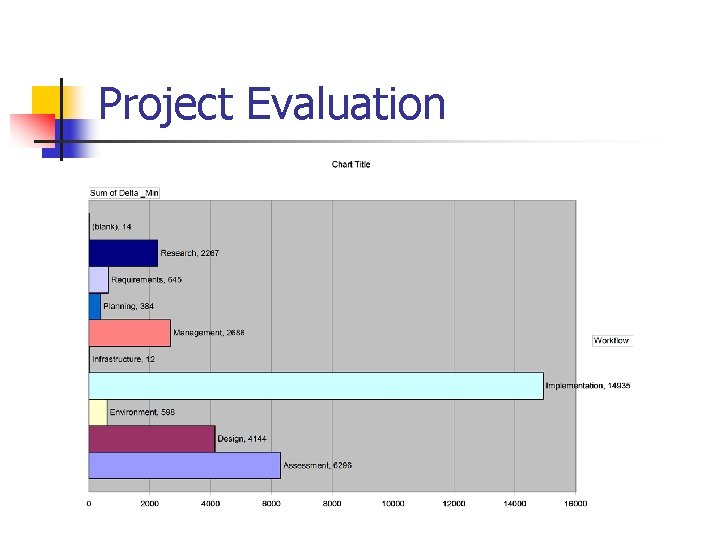

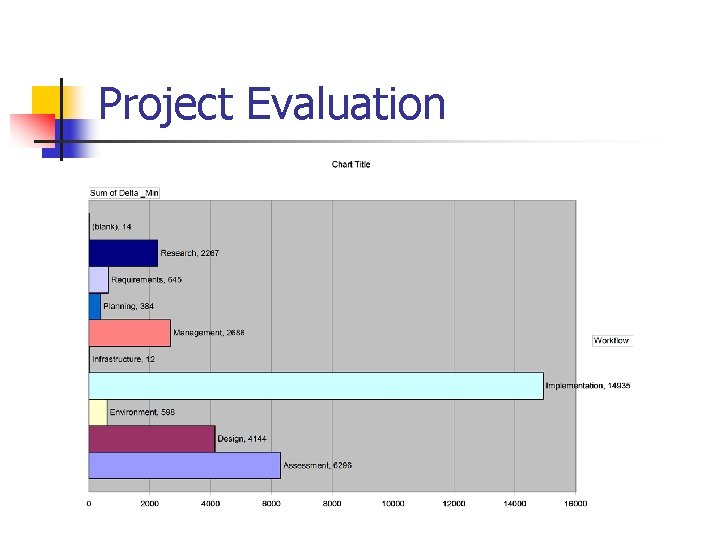

Project Evaluation

Project Evaluation

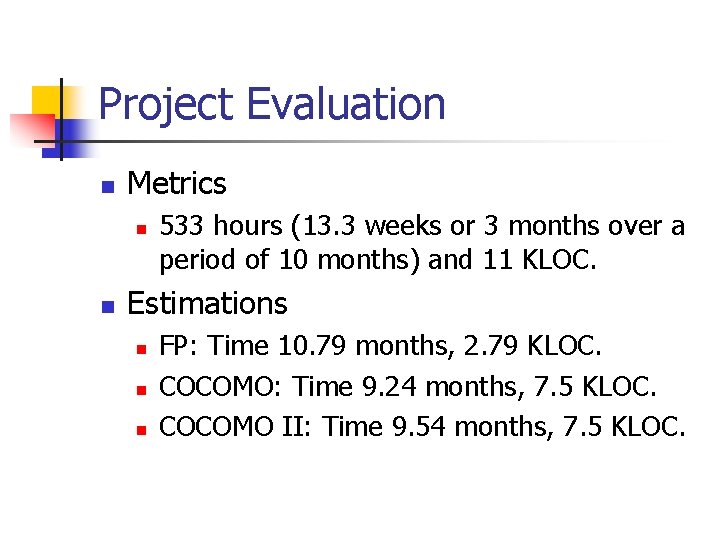

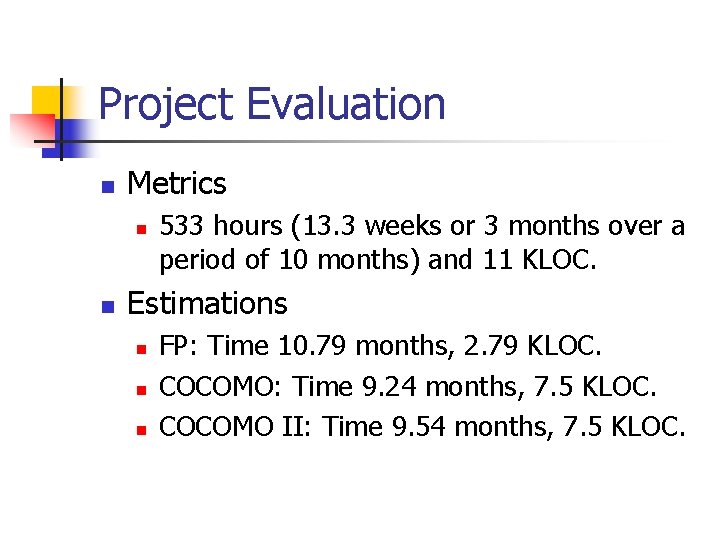

Project Evaluation
- Metrics
  - 533 hours (13.3 weeks, or about 3 months, of effort spread over a period of 10 months).
  - 11 KLOC.
- Estimations
  - FP: time 10.79 months, size 2.79 KLOC.
  - COCOMO: time 9.24 months, size 7.5 KLOC.
  - COCOMO II: time 9.54 months, size 7.5 KLOC.
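The reported effort figures can be related with a little arithmetic. This assumes a 40-hour week (not stated on the slide, but it is what turns 533 hours into 13.3 weeks) and about 4.33 weeks per month; the last term is the resulting average productivity.

$$
\frac{533\ \text{h}}{40\ \text{h/week}} \approx 13.3\ \text{weeks} \approx \frac{13.3}{4.33}\ \text{months} \approx 3.1\ \text{months},
\qquad
\frac{11\,000\ \text{LOC}}{533\ \text{h}} \approx 20.6\ \text{LOC/h}
$$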

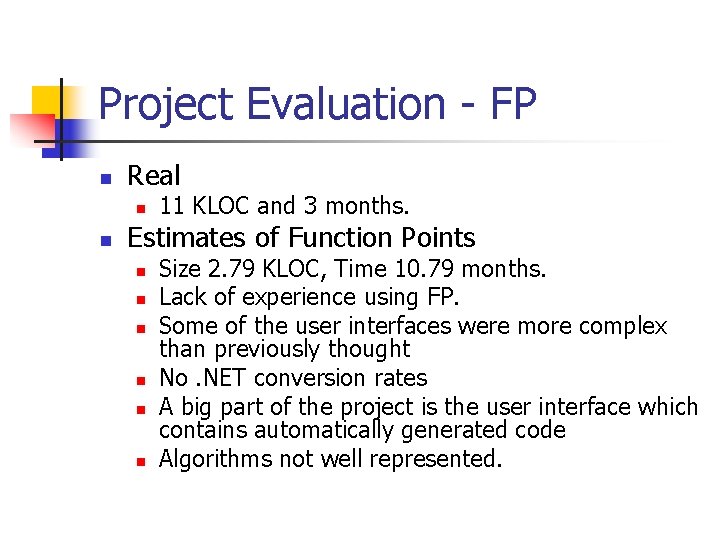

Project Evaluation - FP
- Real
  - 11 KLOC and 3 months.
- Estimates of Function Points
  - Size 2.79 KLOC, time 10.79 months.
  - Lack of experience using FP.
  - Some of the user interfaces were more complex than previously thought.
  - No .NET conversion rates.
  - A big part of the project is the user interface, which contains automatically generated code.
  - Algorithms are not well represented.
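For reference, a sketch of the textbook IFPUG-style counting procedure that an FP estimate rests on: weighted function counts give unadjusted FP, 14 general system characteristics give the value adjustment factor, and a language-specific LOC-per-FP rate converts the result to size. This is not the author's worksheet. Because the slides note that no .NET conversion rate was available, the rate here is only a parameter, and the function names are hypothetical.

```cpp
#include <array>
#include <cassert>

// Complexity levels and function types used in IFPUG-style FP counting.
enum Complexity { Low = 0, Average = 1, High = 2 };
enum FunctionType { ExternalInput, ExternalOutput, ExternalInquiry,
                    InternalLogicalFile, ExternalInterfaceFile };

// Standard IFPUG weights, indexed [function type][complexity].
constexpr int kWeights[5][3] = {
    {3, 4, 6},    // external inputs
    {4, 5, 7},    // external outputs
    {3, 4, 6},    // external inquiries
    {7, 10, 15},  // internal logical files
    {5, 7, 10},   // external interface files
};

// counts[type][complexity] = number of functions of that type and complexity.
using Counts = std::array<std::array<int, 3>, 5>;

// Unadjusted function points: weighted sum of all counted functions.
int unadjustedFP(const Counts& counts) {
    int ufp = 0;
    for (int t = 0; t < 5; ++t) {
        for (int c = 0; c < 3; ++c) {
            assert(counts[t][c] >= 0);
            ufp += counts[t][c] * kWeights[t][c];
        }
    }
    return ufp;
}

// Value adjustment factor from the 14 general system characteristics,
// each rated 0 (no influence) to 5 (strong influence).
double valueAdjustmentFactor(const std::array<int, 14>& gsc) {
    int total = 0;
    for (int g : gsc) {
        assert(g >= 0 && g <= 5);
        total += g;
    }
    return 0.65 + 0.01 * total;
}

// Size in KLOC from adjusted FP and a language-specific LOC-per-FP rate.
double sizeKloc(double adjustedFP, double locPerFP) {
    assert(locPerFP > 0.0);
    return adjustedFP * locPerFP / 1000.0;
}
```

Adjusted FP is `unadjustedFP(counts) * valueAdjustmentFactor(gsc)`. The size estimate is only as good as the LOC-per-FP rate, which is why a missing rate for the UI language, or a UI full of generated code, can push the estimate far from the measured KLOC.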

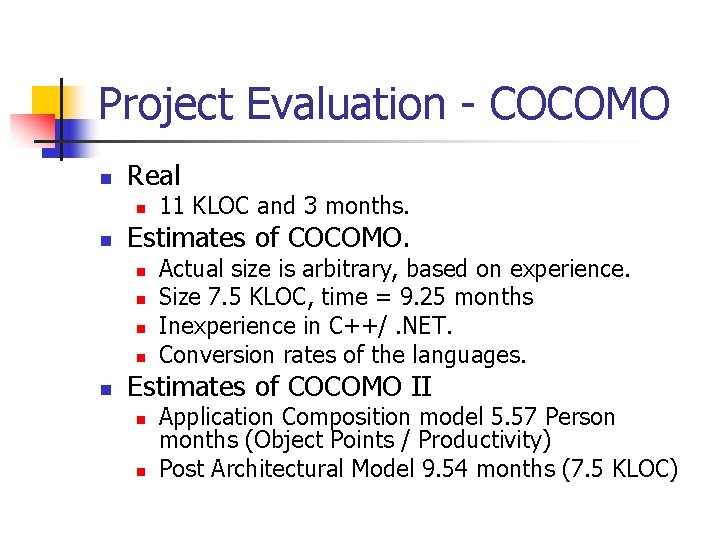

Project Evaluation - COCOMO
- Real
  - 11 KLOC and 3 months.
- Estimates of COCOMO
  - The size input is arbitrary, based on experience.
  - Size 7.5 KLOC, time = 9.25 months.
  - Inexperience in C++/.NET.
  - Conversion rates of the languages.
- Estimates of COCOMO II
  - Application Composition model: 5.57 person-months (Object Points / Productivity).
  - Post-Architecture model: 9.54 months (7.5 KLOC).
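A sketch of the published equations behind these estimates, under stated assumptions: Basic COCOMO with Boehm's standard mode coefficients, and the COCOMO II Application Composition model (effort = new object points / productivity). The slides do not say which COCOMO mode or cost drivers were used. Plugging 7.5 KLOC into the organic-mode equations gives roughly 19.9 person-months and 7.8 months rather than 9.24–9.25, so the figure on the slide presumably reflects a different mode or cost-driver adjustments not listed here. All function names are hypothetical.

```cpp
#include <cassert>
#include <cmath>

// Basic COCOMO coefficients for one development mode.
struct CocomoMode {
    double a, b;  // effort   = a * KLOC^b    (person-months)
    double c, d;  // schedule = c * effort^d  (months)
};

constexpr CocomoMode kOrganic{2.4, 1.05, 2.5, 0.38};
constexpr CocomoMode kSemiDetached{3.0, 1.12, 2.5, 0.35};
constexpr CocomoMode kEmbedded{3.6, 1.20, 2.5, 0.32};

double cocomoEffort(double kloc, const CocomoMode& m) {
    assert(kloc > 0.0);
    return m.a * std::pow(kloc, m.b);
}

double cocomoSchedule(double kloc, const CocomoMode& m) {
    return m.c * std::pow(cocomoEffort(kloc, m), m.d);
}

// COCOMO II Application Composition model:
//   NOP = object points * (100 - %reuse) / 100
//   effort (person-months) = NOP / productivity
// The COCOMO II tables rate productivity from 4 (very low) to 50 (very high)
// NOP per person-month.
double applicationCompositionEffort(double objectPoints, double reusePercent,
                                    double productivity) {
    assert(objectPoints >= 0.0);
    assert(reusePercent >= 0.0 && reusePercent <= 100.0);
    assert(productivity > 0.0);
    const double nop = objectPoints * (100.0 - reusePercent) / 100.0;
    return nop / productivity;
}
```

The schedule equations are sub-linear in effort, which is one reason a model calibrated for team projects predicts many calendar months even when the total effort is small.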

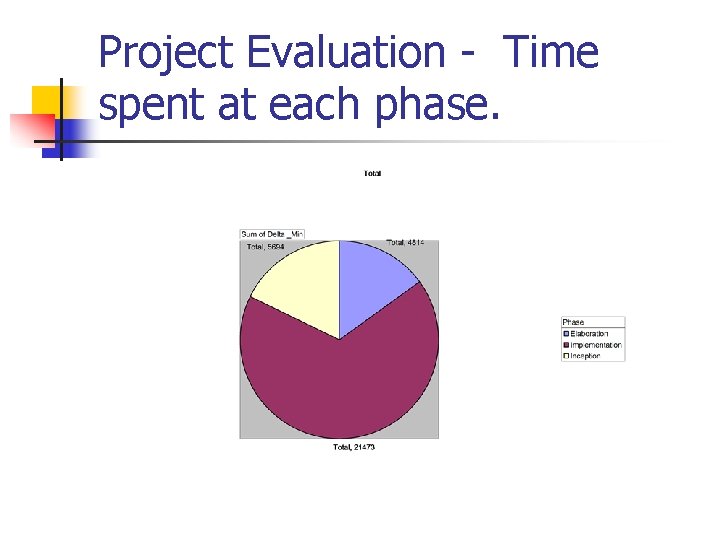

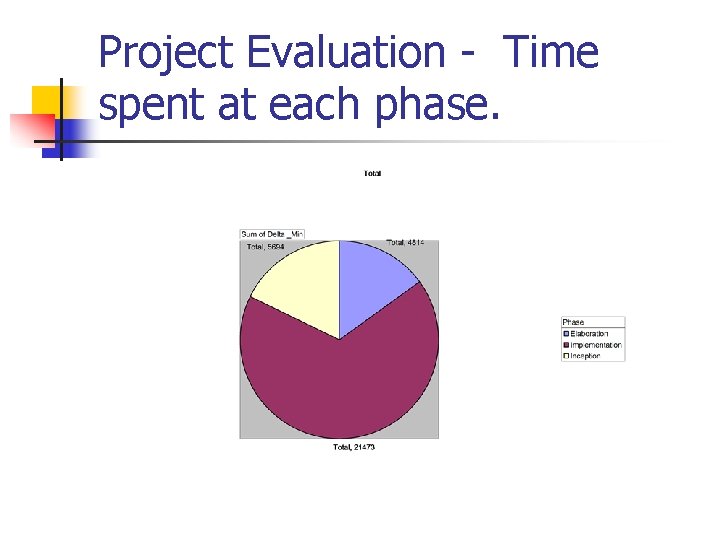

Project Evaluation - Time spent at each phase.

Project Evaluation

Project Evaluation - Lessons Learned
- Implementation:
  - C++ language, memory management, implementation of design patterns.
  - Tools and libraries (NSIS, CPPUnit, VLD, Doxygen).
- Design:
  - Design patterns.

Project Evaluation - Lessons Learned
- Experience with various estimation models.
- Measurement:
  - Tools (CCCC, Process Dashboard).
- Testing:
  - CPPUnit framework.
  - VLD.
- Process:
  - Iterative process.
  - Artifacts.

Project Evaluation - Future Work
- Improve the performance of the search algorithm and add new algorithms.
- Add more functionality to the game-playing library and the UI.
- Add more selection mechanisms to the GA experiments.
- Add more learning algorithms.
- Use distributed computation to speed up training.
- Refactor some classes.
- Add test classes for each feature.
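As one example of a selection mechanism that could be added to the GA experiments, the sketch below implements tournament selection, a common GA technique. The slides do not describe which selection criteria MMLE already provides, so `tournamentSelect` and its parameters are hypothetical, and fitness values are assumed to be precomputed with higher meaning better.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Tournament selection: draw k individuals uniformly at random (with
// replacement) and return the index of the fittest one.
std::size_t tournamentSelect(const std::vector<double>& fitness, std::size_t k,
                             std::mt19937& rng) {
    assert(!fitness.empty());
    assert(k >= 1);
    std::uniform_int_distribution<std::size_t> pick(0, fitness.size() - 1);
    std::size_t best = pick(rng);
    for (std::size_t i = 1; i < k; ++i) {
        const std::size_t candidate = pick(rng);
        if (fitness[candidate] > fitness[best]) best = candidate;
    }
    return best;
}
```

A larger tournament size `k` raises selection pressure, while `k = 1` reduces to uniform random selection, so a single parameter lets an experiment trade exploration against exploitation.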

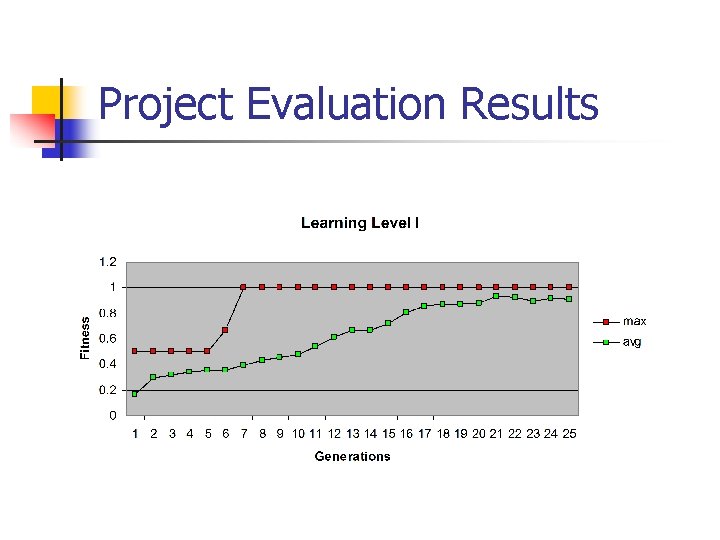

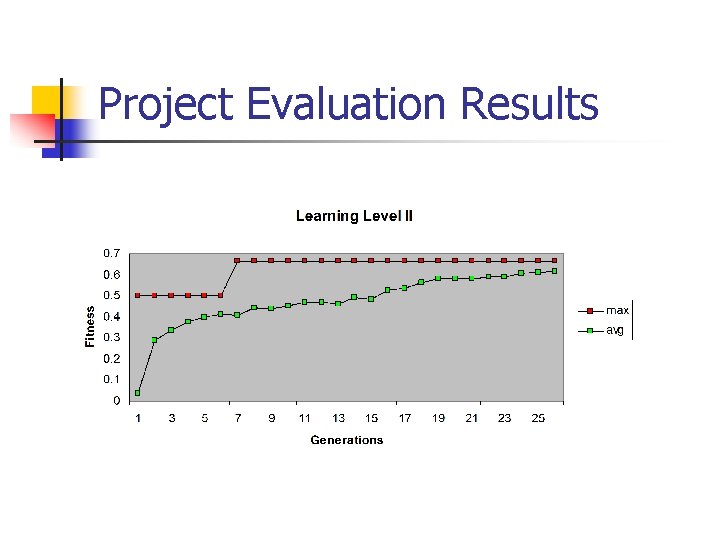

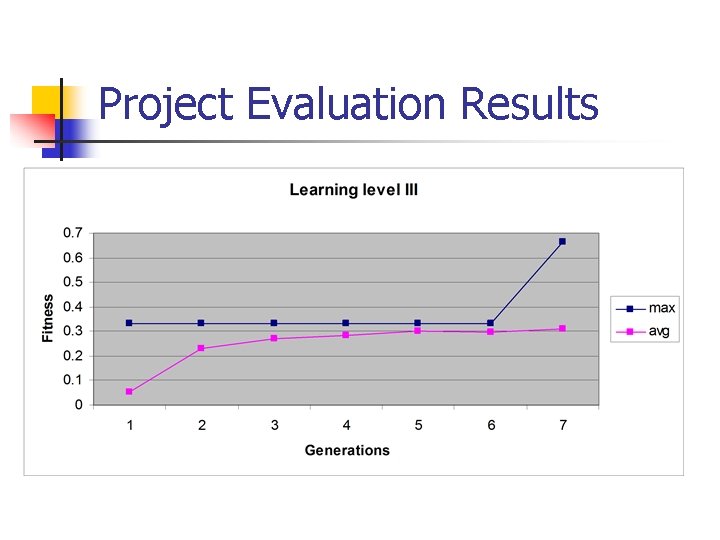

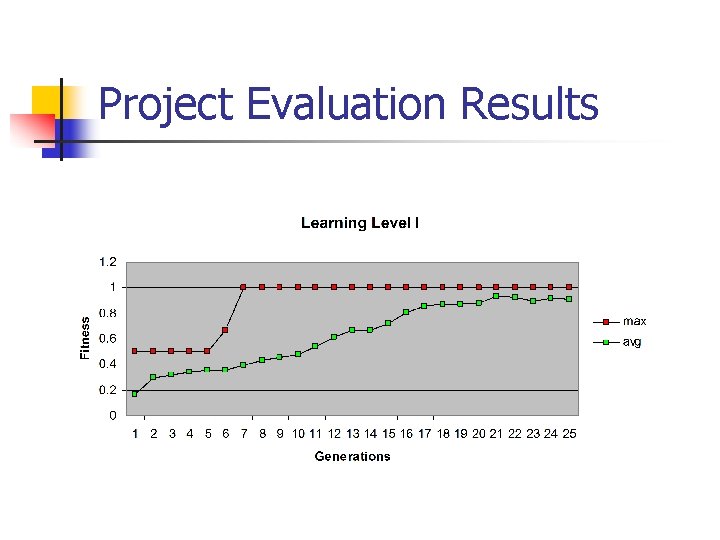

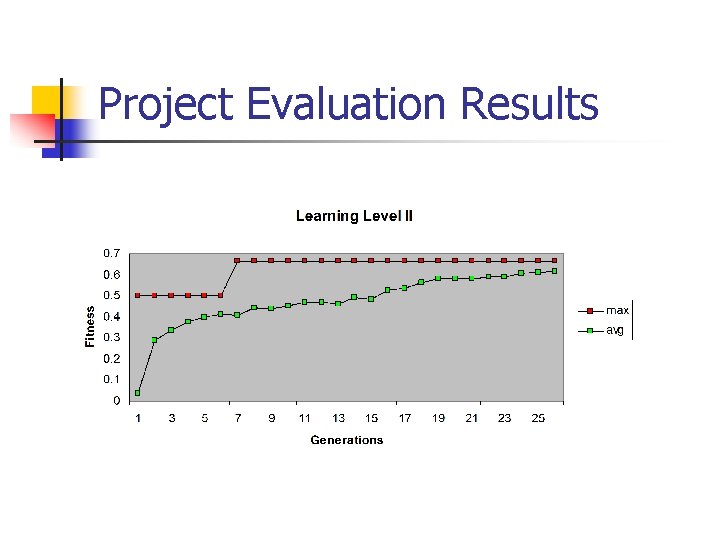

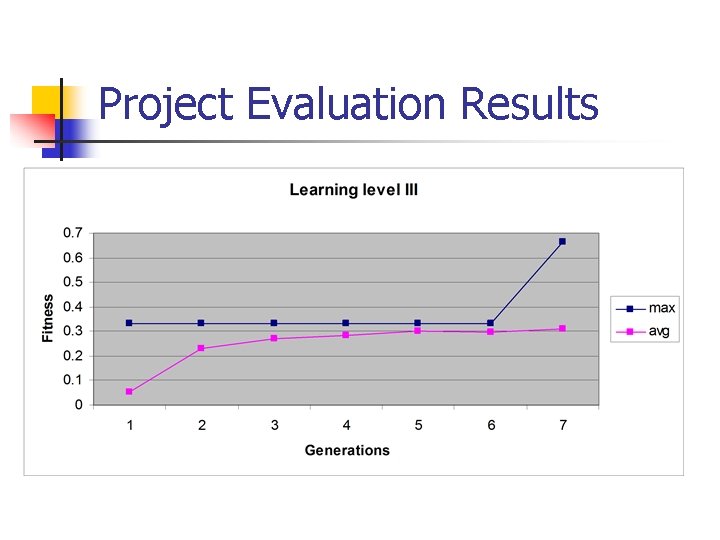

Project Evaluation Results

Project Evaluation Results

Project Evaluation Results

Tools Used
- MS Visual Studio 2005
- BoUML
- Rational Software Architect
- NSIS (Nullsoft Scriptable Install System)
- CCCC (C and C++ Code Counter)
- TinyXML
- Visual Leak Detector
- Doxygen
- Process Dashboard
- TortoiseSVN

References
- Gamma, Erich; Richard Helm, Ralph Johnson, and John Vlissides. Design Patterns: Elements of Reusable Object-Oriented Software. Addison-Wesley, 1995. ISBN 0-201-63361-2.
- Mitchell, Tom. Machine Learning. McGraw-Hill, 1997. ISBN 0-07-042807-7.
- BoUML: http://bouml.free.fr/
- Rational Software Architect: http://www-306.ibm.com/software/awdtools/architect/swarchitect/
- NSIS (Nullsoft Scriptable Install System): http://nsis.sourceforge.net/Main_Page
- CCCC (C and C++ Code Counter): http://sourceforge.net/projects/cccc
- CPPUnit: http://cppunit.sourceforge.net/doc/lastest/cppunit_cookbook.html
- TinyXML: http://www.grinninglizard.com/tinyxml/ and http://sourceforge.net/projects/tinyxml/
- Visual Leak Detector: http://dmoulding.googlepages.com/vld
- Doxygen: http://www.stack.nl/~dimitri/doxygen/
- Process Dashboard: http://processdash.sourceforge.net/
- TortoiseSVN: http://tortoisesvn.tigris.org/