Master Seminar Deep Learning for Medical Applications: MixMatch


Master Seminar: Deep Learning for Medical Applications

MixMatch: A Holistic Approach to Semi-Supervised Learning

Authors: David Berthelot, Avital Oliver, Nicholas Carlini, Nicolas Papernot, Ian Goodfellow, Colin Raffel

Presented by: Festina Ismali. Tutor: Tariq Mousa Bdair

Semi-Supervised Learning (SSL)
- Unsupervised learning: train a model with no labeled data available.
- Semi-supervised learning (SSL): train a model with a small, fully labeled dataset and a large unlabeled dataset.
- Supervised learning: train a model with a fully labeled dataset.
- SSL objective: improve the learner's performance by utilizing the unlabeled data, alleviating the need for labels.

Computer Aided Medical Procedures, December 26, 2021, Slide 2


When Can Semi-Supervised Learning Work?
- Certain assumptions need to hold; entropy minimization, for example, builds on one of them.

[11] Olivier Chapelle, Bernhard Schölkopf, and Alexander Zien. Semi-Supervised Learning. MIT Press, 2006.

Problem Statement
- By leveraging large collections of labeled data, deep neural networks can achieve human-level performance.
- In practice, however, creating such large, fully labeled datasets is:
  - tedious and error-prone
  - time-consuming
  - difficult and costly, especially in medical domains, where expert knowledge is required

Motivation
- Many recent semi-supervised algorithms use one of the currently dominant approaches:
  - consistency regularization
  - entropy minimization
  - traditional regularization
- MixMatch instead combines these approaches in a unified manner, which yields:
  - state-of-the-art results on all standard image benchmarks
  - state-of-the-art results in the PATE (Private Aggregation of Teacher Ensembles) framework

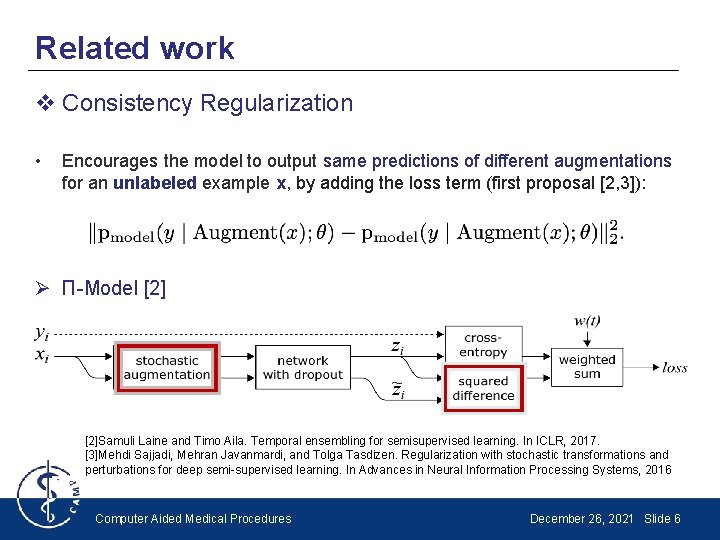

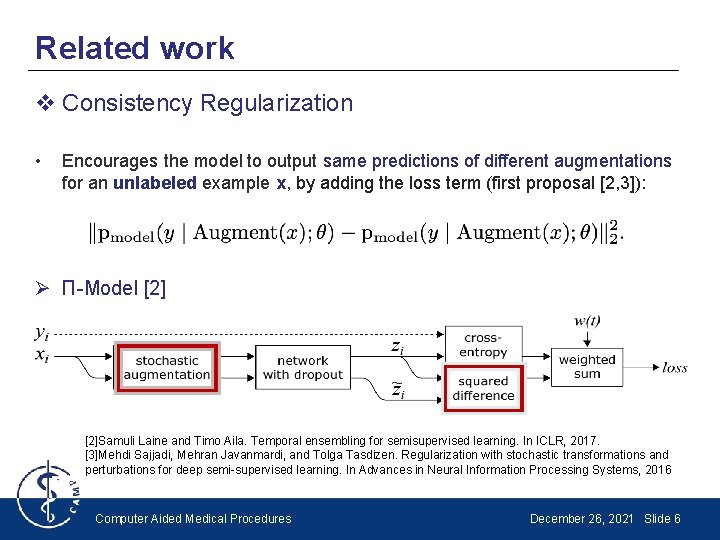

Related work: Consistency Regularization
- Encourages the model to output the same prediction for different augmentations of an unlabeled example x, by adding a consistency loss term (first proposed in [2, 3]).
- Π-Model [2]

[2] Samuli Laine and Timo Aila. Temporal ensembling for semi-supervised learning. In ICLR, 2017.

[3] Mehdi Sajjadi, Mehran Javanmardi, and Tolga Tasdizen. Regularization with stochastic transformations and perturbations for deep semi-supervised learning. In Advances in Neural Information Processing Systems, 2016.
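In the MixMatch paper this consistency term is the squared L2 distance ‖p_model(y | Augment(x); θ) − p_model(y | Augment(x); θ)‖², where the two Augment calls are independent stochastic augmentations of the same x. The sketch below illustrates it under loud assumptions: `toy_model` is a hypothetical linear-softmax classifier and `augment` is a hypothetical additive-noise augmentation standing in for real image augmentations and a neural network.

```python
import math
import random

def softmax(logits):
    # Numerically stable softmax over a list of logits.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def toy_model(x, weights):
    # Hypothetical linear classifier: one weight vector per class.
    logits = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in weights]
    return softmax(logits)

def augment(x, rng, sigma=0.1):
    # Hypothetical stochastic augmentation: additive Gaussian noise.
    return [x_i + rng.gauss(0.0, sigma) for x_i in x]

def consistency_loss(x, weights, rng):
    # Squared L2 distance between predictions for two independent augmentations of x.
    p1 = toy_model(augment(x, rng), weights)
    p2 = toy_model(augment(x, rng), weights)
    return sum((a - b) ** 2 for a, b in zip(p1, p2))

rng = random.Random(0)
weights = [[1.0, -0.5], [-0.3, 0.8], [0.2, 0.1]]
print(consistency_loss([0.5, 1.0], weights, rng))
```

Because both terms are probability vectors, the loss lies between 0 and 2; it is 0 only when the two augmented views receive identical predictions.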


Related work: Consistency Regularization (continued)
- Mean Teacher [4]
  - Averages model weights instead of predictions.
- Virtual Adversarial Training (VAT) [5]
  - The perturbation applied to the input is chosen carefully (not stochastically) so that it maximally changes the output class distribution.

[4] Antti Tarvainen and Harri Valpola. Mean teachers are better role models: Weight-averaged consistency targets improve semi-supervised deep learning results. In Advances in Neural Information Processing Systems, 2017.

[5] Takeru Miyato, Shin-ichi Maeda, Shin Ishii, and Masanori Koyama. Virtual adversarial training: A regularization method for supervised and semi-supervised learning. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2018.
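Averaging weights, as in Mean Teacher, is usually done with an exponential moving average (EMA): after each training step the teacher weights are updated as θ′ ← α θ′ + (1 − α) θ. A minimal sketch over flat lists of parameters; the default α = 0.999 is an assumed typical value, and the usage below uses α = 0.5 only so the numbers are easy to follow.

```python
def ema_update(teacher, student, alpha=0.999):
    # Exponential moving average of weights: teacher <- alpha * teacher + (1 - alpha) * student.
    return [alpha * t + (1.0 - alpha) * s for t, s in zip(teacher, student)]

teacher = [0.0, 0.0]
student = [1.0, -2.0]
for _ in range(3):
    # In real training, the student would be updated by gradient descent between these calls.
    teacher = ema_update(teacher, student, alpha=0.5)
print(teacher)  # [0.875, -1.75]
```

The teacher drifts smoothly towards the student, which makes its predictions a more stable consistency target than those of the student itself.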

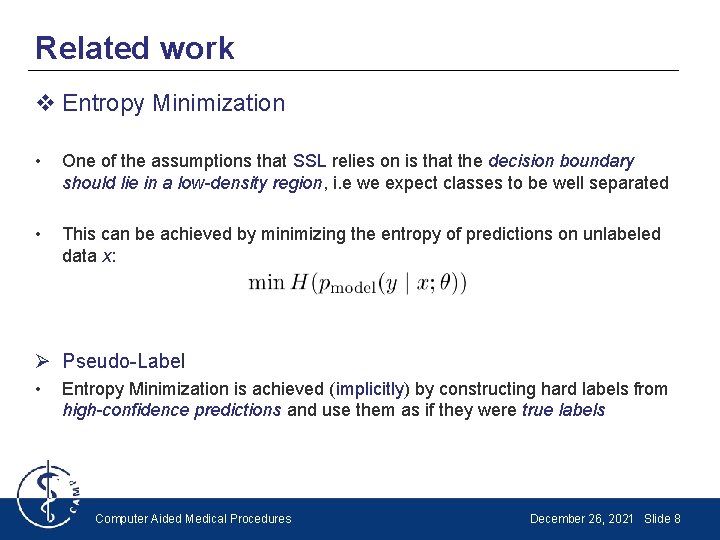

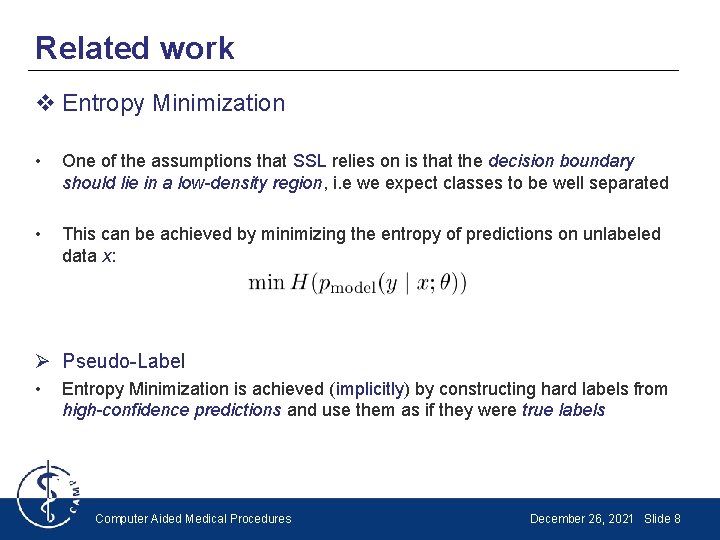

Related work: Entropy Minimization
- One of the assumptions SSL relies on is that the decision boundary should lie in a low-density region, i.e. the classes are expected to be well separated.
- This can be achieved by minimizing the entropy of the model's predictions on unlabeled data x.
- Pseudo-Label [6]
  - Entropy minimization is achieved implicitly by constructing hard labels from high-confidence predictions and using them as if they were true labels.
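The entropy of a predicted class distribution p is H(p) = −Σ_c p_c log p_c; low entropy means a confident prediction. The sketch computes this quantity and, separately, Pseudo-Label-style hard labels. The 0.95 confidence threshold and the prediction values are assumptions chosen for illustration.

```python
import math

def entropy(p):
    # Shannon entropy (in nats) of a probability vector; 0 * log 0 is treated as 0.
    return -sum(p_c * math.log(p_c) for p_c in p if p_c > 0)

def pseudo_labels(predictions, threshold=0.95):
    # Return (index, hard_label) pairs for predictions whose top probability reaches the threshold.
    labels = []
    for i, p in enumerate(predictions):
        confidence = max(p)
        if confidence >= threshold:
            labels.append((i, p.index(confidence)))
    return labels

preds = [[0.97, 0.02, 0.01], [0.4, 0.35, 0.25], [0.01, 0.01, 0.98]]
print([round(entropy(p), 3) for p in preds])
print(pseudo_labels(preds))  # [(0, 0), (2, 2)]
```

The uncertain second prediction has the highest entropy and receives no pseudo-label; training on the hard labels of the other two pushes their entropy further down.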

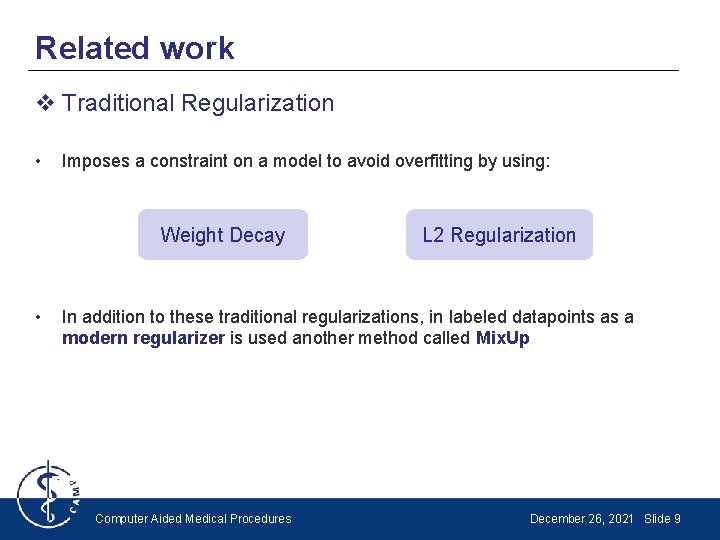

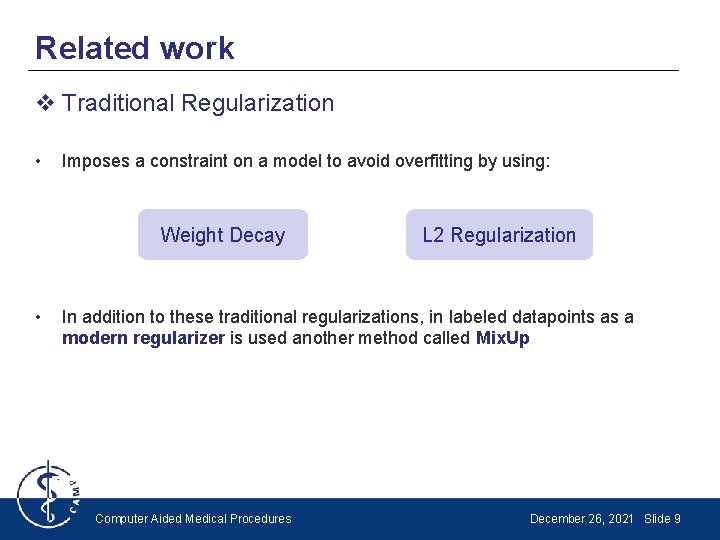

Related work: Traditional Regularization
- Imposes a constraint on a model to avoid overfitting, for example:
  - weight decay
  - L2 regularization
- In addition to these traditional regularizers, a more modern regularizer called MixUp is applied to labeled data points.
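L2 regularization adds λ‖θ‖² to the loss. With plain (non-adaptive) gradient descent, the penalty's gradient 2λθ shrinks every weight towards zero at each step, which is why the two names are often used interchangeably. A minimal sketch of one gradient-descent step on flat parameter lists; the learning rate and λ values are illustrative assumptions.

```python
def l2_penalty(params, lam):
    # Regularization term lam * ||theta||^2 added to the data loss.
    return lam * sum(p * p for p in params)

def sgd_step_with_l2(params, data_grads, lr, lam):
    # Gradient of the penalty is 2 * lam * theta, so each weight is also pulled towards zero.
    return [p - lr * (g + 2.0 * lam * p) for p, g in zip(params, data_grads)]

params = [1.0, -2.0]
print(l2_penalty(params, lam=0.01))
print(sgd_step_with_l2(params, data_grads=[0.0, 0.0], lr=0.1, lam=0.5))  # approximately [0.9, -1.8]
```

With a zero data gradient, the step only applies the decay, shrinking each weight by 10% here.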

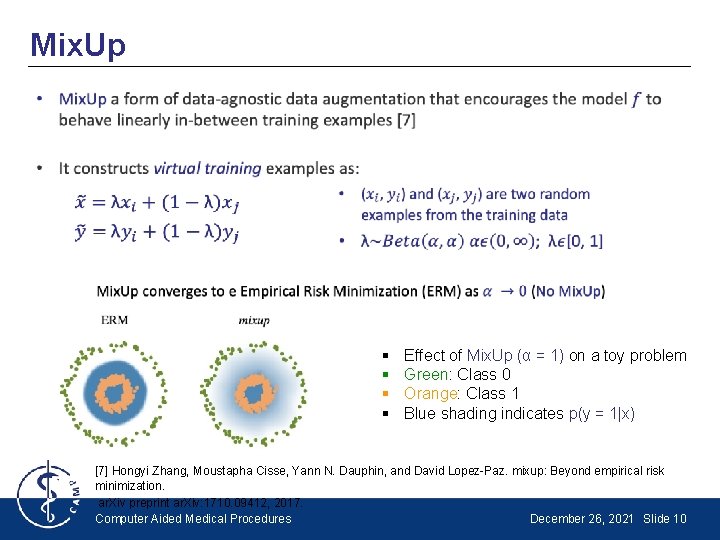

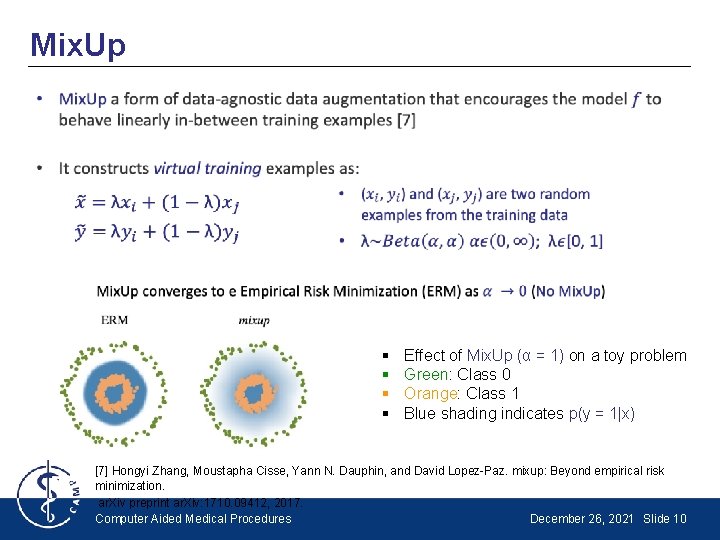

MixUp [7]
- Effect of MixUp (α = 1) on a toy problem:
  - green: class 0
  - orange: class 1
  - blue shading indicates p(y = 1 | x)

[7] Hongyi Zhang, Moustapha Cisse, Yann N. Dauphin, and David Lopez-Paz. mixup: Beyond empirical risk minimization. arXiv preprint arXiv:1710.09412, 2017.
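MixUp samples λ ~ Beta(α, α) and trains on convex combinations x̃ = λ x₁ + (1 − λ) x₂ and ỹ = λ y₁ + (1 − λ) y₂ of two examples and their one-hot labels; with α = 1, as in the toy problem above, λ is uniform on [0, 1]. A minimal sketch on plain Python lists, using `random.betavariate` from the standard library; the two input points are made up.

```python
import random

def mixup(x1, y1, x2, y2, alpha, rng):
    # Sample the mixing coefficient from a symmetric Beta(alpha, alpha) distribution.
    lam = rng.betavariate(alpha, alpha)
    x = [lam * a + (1.0 - lam) * b for a, b in zip(x1, x2)]
    y = [lam * a + (1.0 - lam) * b for a, b in zip(y1, y2)]
    return x, y, lam

rng = random.Random(42)
x, y, lam = mixup([0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [0.0, 1.0], alpha=1.0, rng=rng)
print(lam, x, y)
```

The mixed label is a soft label that is still a valid probability distribution; training on such points is what encourages predictions to change linearly between examples.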


MixUp

[12] Shahine Bouabid and Vincent Delaitre. Mixup regularization for region proposal based object detectors. arXiv:2003.02065v1 [cs.CV], 4 Mar 2020.

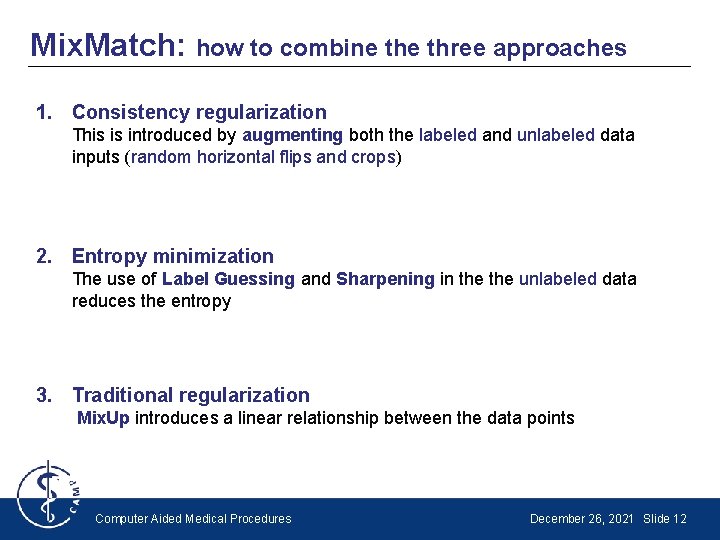

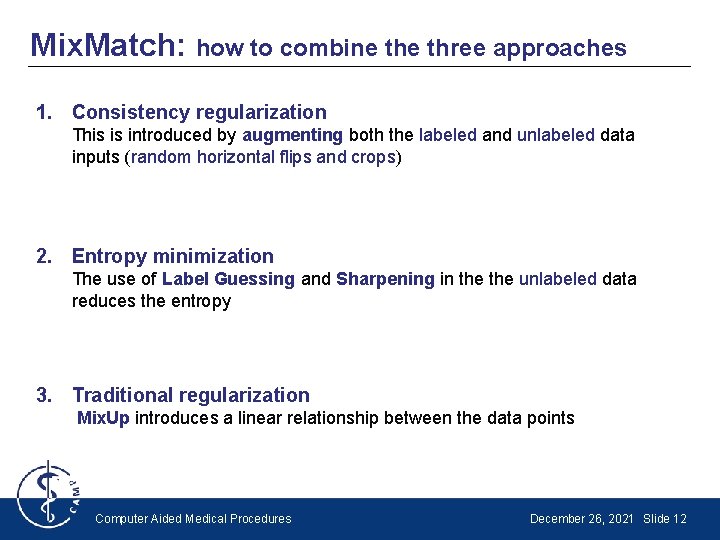

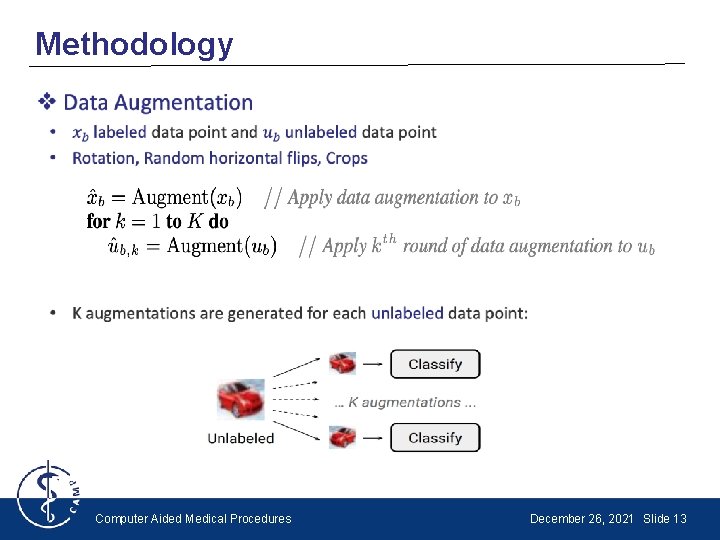

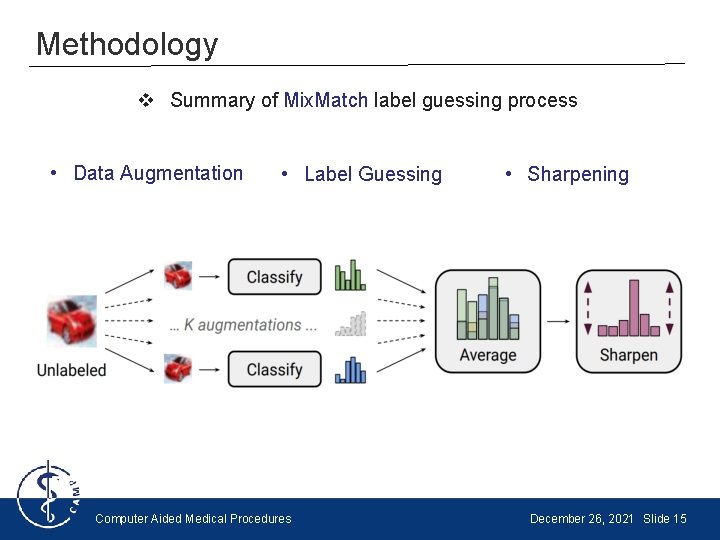

MixMatch: How the Three Approaches Are Combined
1. Consistency regularization: introduced by augmenting both the labeled and the unlabeled inputs (random horizontal flips and crops).
2. Entropy minimization: label guessing and sharpening on the unlabeled data reduce the entropy of the predictions.
3. Traditional regularization: MixUp encourages the model to behave linearly between data points.

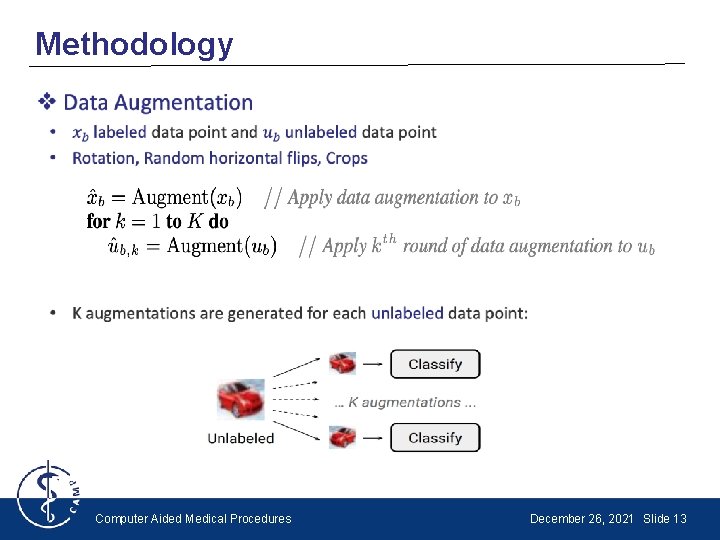

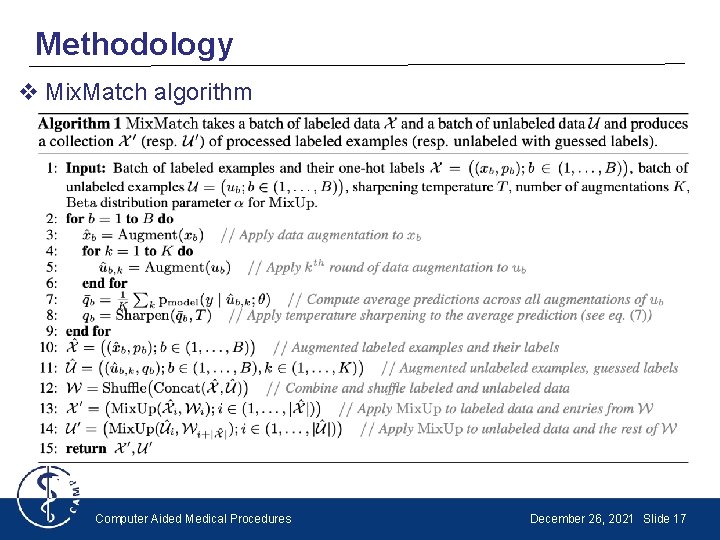

Methodology


Methodology: Label Guessing and Sharpening

[13] Noah Rubinstein. A fastai/PyTorch implementation of MixMatch. Towards Data Science, 17 Jun 2017.
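In MixMatch, the guessed label for an unlabeled example is the average of the model's predictions over K augmentations, q̄ = (1/K) Σ_k p_model(y | û_k; θ). It is then sharpened with a temperature T: Sharpen(p, T)_i = p_i^(1/T) / Σ_j p_j^(1/T). A minimal sketch; the predictions are made-up numbers, and K = 2, T = 0.5 are used here for illustration.

```python
def guess_label(predictions):
    # Average the class distributions predicted for K augmentations of the same example.
    k = len(predictions)
    return [sum(p[c] for p in predictions) / k for c in range(len(predictions[0]))]

def sharpen(p, temperature):
    # Raise each probability to 1/T and renormalize; T < 1 lowers the entropy.
    powered = [p_c ** (1.0 / temperature) for p_c in p]
    total = sum(powered)
    return [v / total for v in powered]

preds = [[0.6, 0.3, 0.1], [0.4, 0.5, 0.1]]  # hypothetical predictions for K = 2 augmentations
q_bar = guess_label(preds)                  # approximately [0.5, 0.4, 0.1]
print(sharpen(q_bar, temperature=0.5))
```

As T approaches 0, the sharpened distribution approaches a one-hot vector, so sharpening is the step that implements entropy minimization on the unlabeled data.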

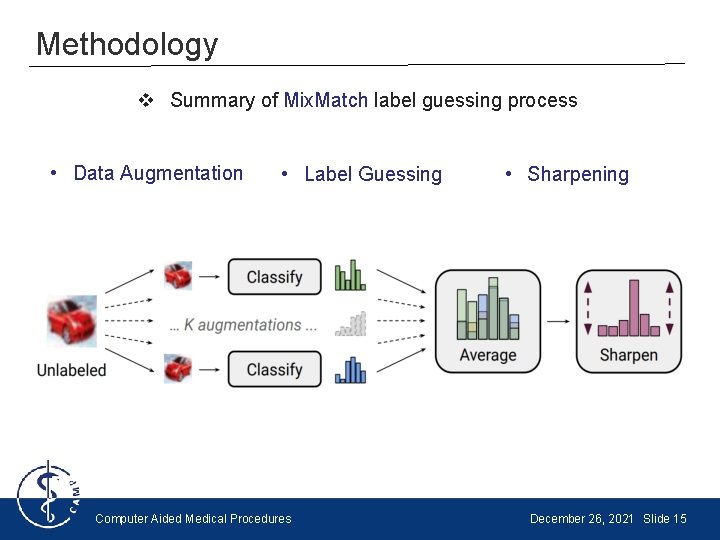

Methodology: Summary of the MixMatch label-guessing process
- Data augmentation
- Label guessing
- Sharpening

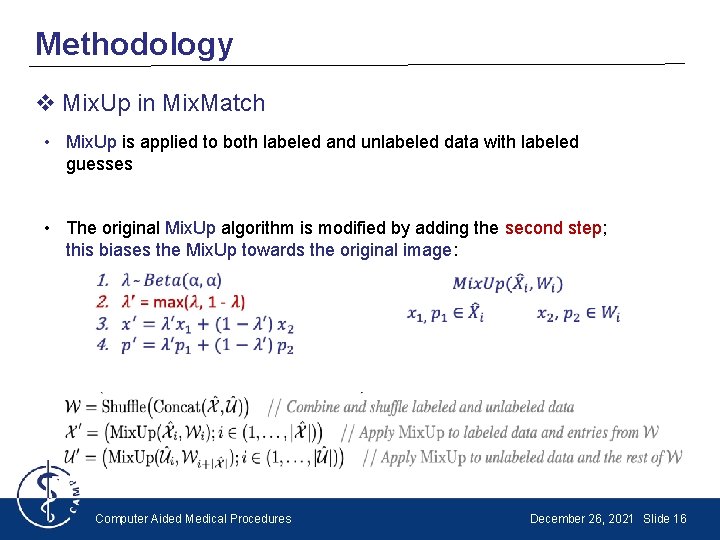

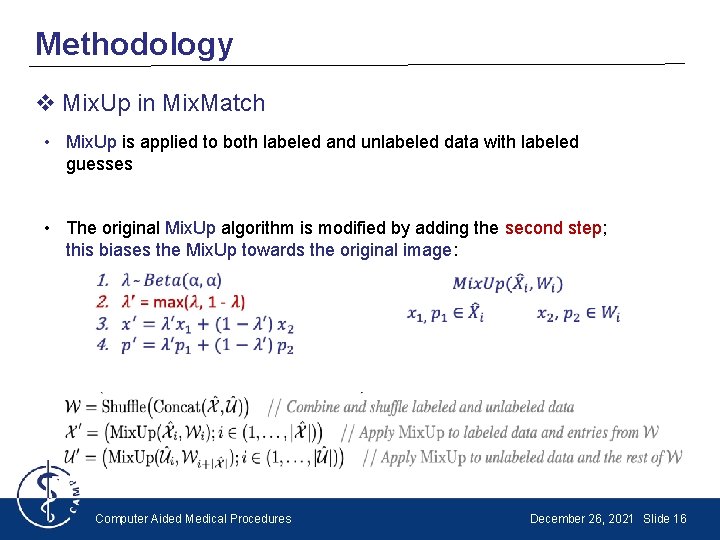

Methodology: MixUp in MixMatch
- MixUp is applied both to the labeled data and to the unlabeled data with guessed labels.
- The original MixUp algorithm is modified by adding a second step, which biases the mixed example towards the original image:
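In the MixMatch paper this second step is λ′ = max(λ, 1 − λ), applied after sampling λ ~ Beta(α, α), so the mixed example x′ = λ′ x₁ + (1 − λ′) x₂ always stays closer to its first input. This matters because MixMatch computes separate loss terms for the mixed labeled and mixed unlabeled batches. A minimal sketch; α = 0.75 and the one-dimensional inputs are assumptions for illustration.

```python
import random

def mixmatch_mixup(x1, p1, x2, p2, alpha, rng):
    lam = rng.betavariate(alpha, alpha)
    # MixMatch's extra step: keep the coefficient of the first input at least 0.5.
    lam = max(lam, 1.0 - lam)
    x = [lam * a + (1.0 - lam) * b for a, b in zip(x1, x2)]
    p = [lam * a + (1.0 - lam) * b for a, b in zip(p1, p2)]
    return x, p, lam

rng = random.Random(0)
lams = [mixmatch_mixup([0.0], [1.0, 0.0], [1.0], [0.0, 1.0], 0.75, rng)[2] for _ in range(1000)]
print(min(lams) >= 0.5)  # True
```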

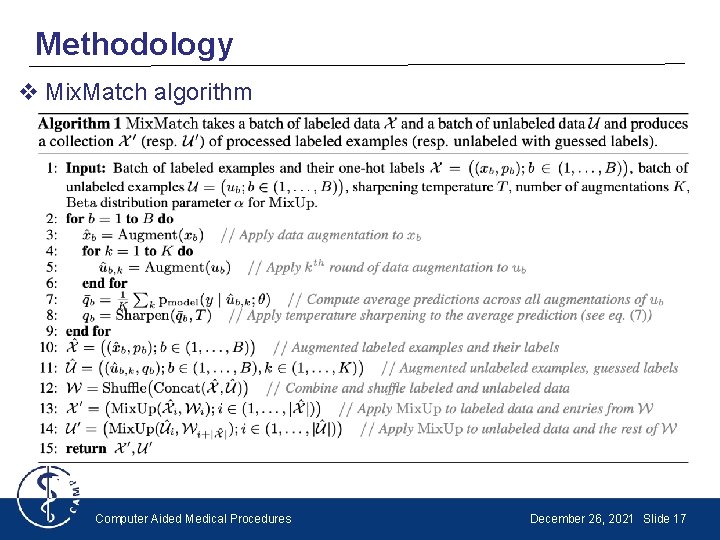

Methodology: The MixMatch algorithm
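The pieces can be put together as a sketch of one MixMatch batch: augment each labeled example once and each unlabeled example K times, guess and sharpen a label per unlabeled example, concatenate and shuffle everything into W, then mix the labeled batch with the first |X̂| entries of W and the unlabeled batch with the rest. `toy_model`, `toy_augment`, and the data are hypothetical stand-ins on plain lists, and the defaults K = 2, T = 0.5, α = 0.75 are assumptions; this illustrates the data flow, not the authors' implementation.

```python
import math
import random

def sharpen(p, temperature):
    # Temperature sharpening: p_i^(1/T) / sum_j p_j^(1/T).
    powered = [v ** (1.0 / temperature) for v in p]
    total = sum(powered)
    return [v / total for v in powered]

def mix(x1, p1, x2, p2, alpha, rng):
    # MixUp with MixMatch's modification lam' = max(lam, 1 - lam).
    lam = rng.betavariate(alpha, alpha)
    lam = max(lam, 1.0 - lam)
    x = [lam * a + (1.0 - lam) * b for a, b in zip(x1, x2)]
    p = [lam * a + (1.0 - lam) * b for a, b in zip(p1, p2)]
    return x, p

def mixmatch(labeled, unlabeled, model, augment, rng, k=2, temperature=0.5, alpha=0.75):
    # labeled: list of (x, one_hot_label); unlabeled: list of inputs x.
    x_hat = [(augment(x, rng), y) for x, y in labeled]  # one augmentation per labeled example
    u_hat = []
    for u in unlabeled:
        augs = [augment(u, rng) for _ in range(k)]  # K augmentations per unlabeled example
        preds = [model(a) for a in augs]
        q_bar = [sum(p[c] for p in preds) / k for c in range(len(preds[0]))]  # label guessing
        q = sharpen(q_bar, temperature)  # entropy reduction
        u_hat.extend((a, q) for a in augs)  # all K augmentations share the sharpened guess
    w = x_hat + u_hat
    rng.shuffle(w)  # W = Shuffle(Concat(X_hat, U_hat))
    n = len(x_hat)
    x_prime = [mix(x, p, wx, wp, alpha, rng) for (x, p), (wx, wp) in zip(x_hat, w[:n])]
    u_prime = [mix(u, q, wx, wp, alpha, rng) for (u, q), (wx, wp) in zip(u_hat, w[n:])]
    return x_prime, u_prime

def toy_model(x):
    # Hypothetical 2-class model: logistic function of x[0] - x[1].
    s = 1.0 / (1.0 + math.exp(-(x[0] - x[1])))
    return [s, 1.0 - s]

def toy_augment(x, rng, sigma=0.05):
    # Hypothetical augmentation: small additive Gaussian noise.
    return [v + rng.gauss(0.0, sigma) for v in x]

rng = random.Random(0)
labeled = [([1.0, 0.0], [1.0, 0.0]), ([0.0, 1.0], [0.0, 1.0])]
unlabeled = [[0.9, 0.1], [0.2, 0.8]]
x_prime, u_prime = mixmatch(labeled, unlabeled, toy_model, toy_augment, rng)
print(len(x_prime), len(u_prime))  # 2 4
```

The outputs X′ and U′ would then feed the supervised and unsupervised loss terms; the loss itself is left out of this sketch.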

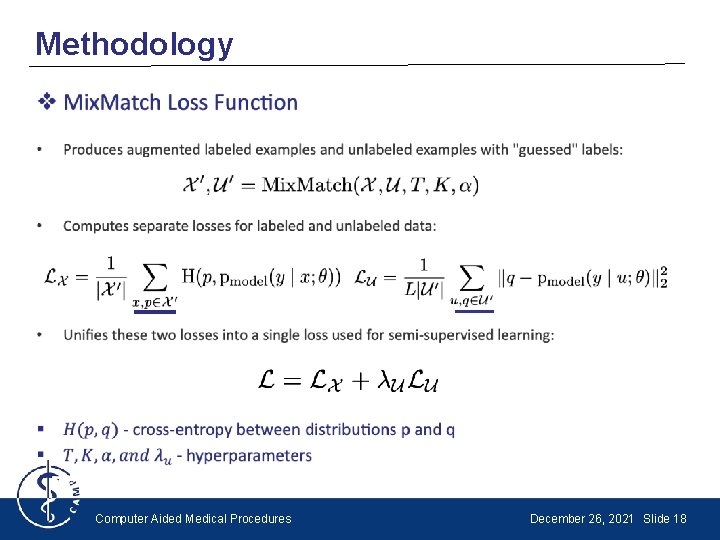

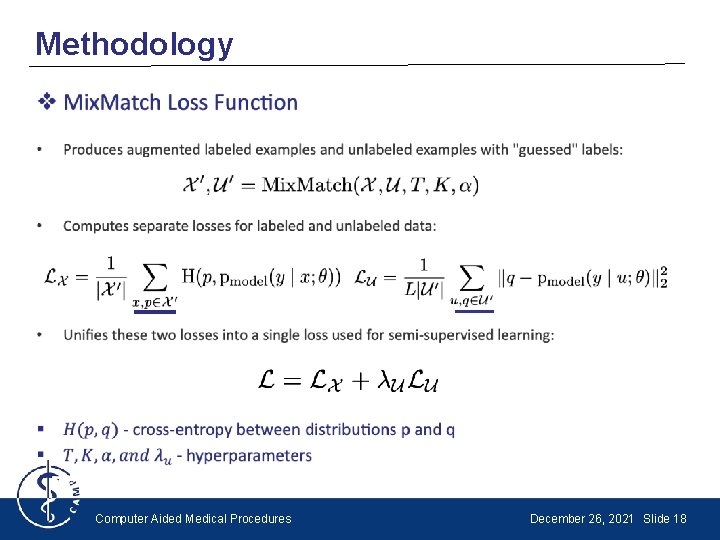

Methodology

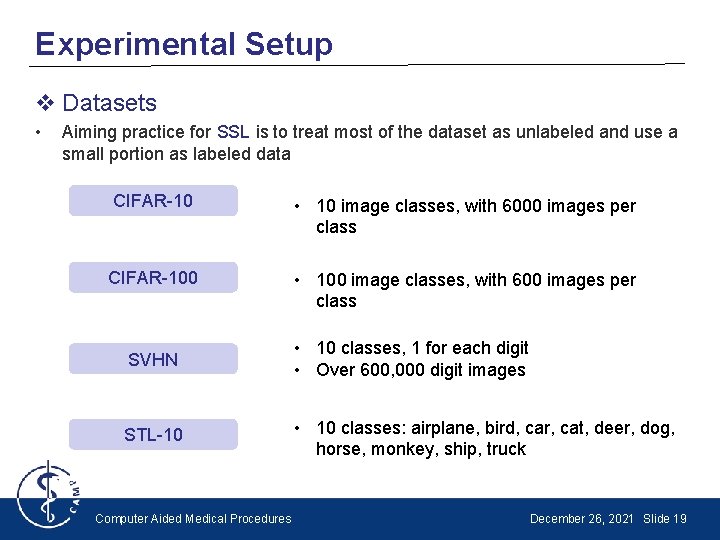

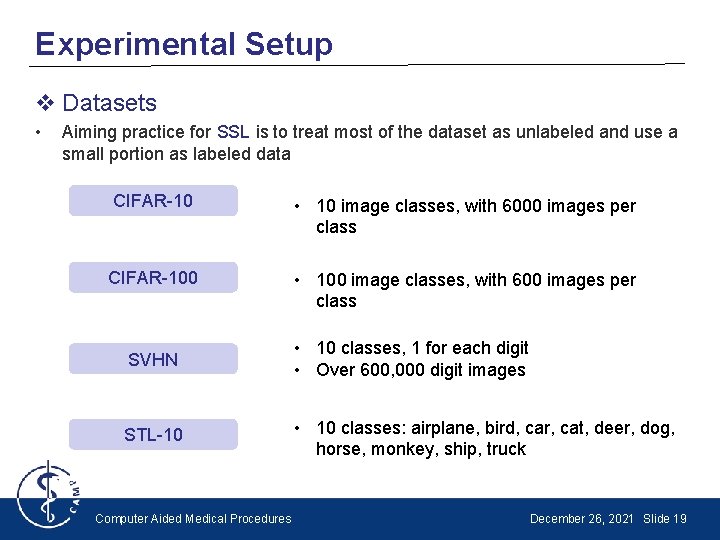

Experimental Setup: Datasets
- Standard practice for evaluating SSL is to treat most of the dataset as unlabeled and use a small portion as labeled data.
- CIFAR-10: 10 image classes, with 6,000 images per class
- CIFAR-100: 100 image classes, with 600 images per class
- SVHN: 10 classes, one for each digit; over 600,000 digit images
- STL-10: 10 classes (airplane, bird, car, cat, deer, dog, horse, monkey, ship, truck)
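The labeled/unlabeled protocol can be sketched as a random split of example indices. This sketch draws an equal number of labeled examples per class, which is an assumption made for illustration (for example, 250 labels on a CIFAR-10-sized set would be 25 per class). `split_labeled_unlabeled` is a hypothetical helper, and the label list is a synthetic stand-in for real dataset labels.

```python
import random
from collections import defaultdict

def split_labeled_unlabeled(labels, n_labeled, seed=0):
    # Group example indices by class so the labeled subset can be class-balanced.
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    per_class = n_labeled // len(by_class)
    rng = random.Random(seed)
    labeled = []
    for idxs in by_class.values():
        labeled.extend(rng.sample(idxs, per_class))
    labeled_set = set(labeled)
    # Everything not selected is used without its label.
    unlabeled = [i for i in range(len(labels)) if i not in labeled_set]
    return sorted(labeled), unlabeled

labels = [i % 10 for i in range(50_000)]  # hypothetical stand-in for CIFAR-10 training labels
labeled, unlabeled = split_labeled_unlabeled(labels, n_labeled=250)
print(len(labeled), len(unlabeled))  # 250 49750
```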

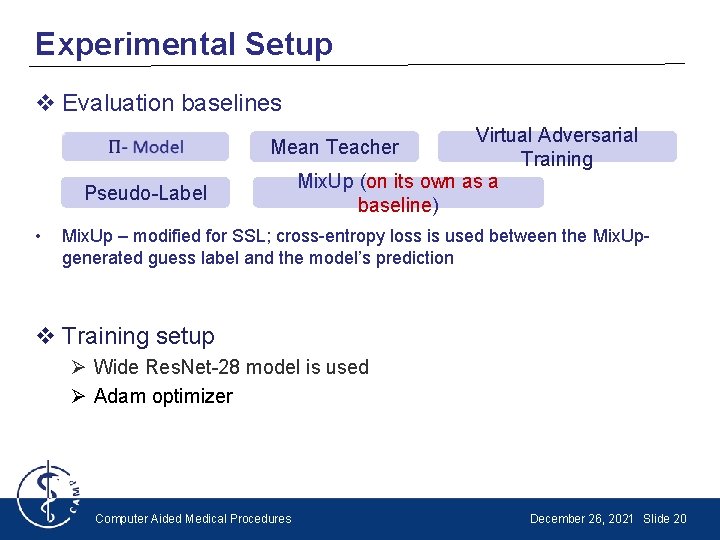

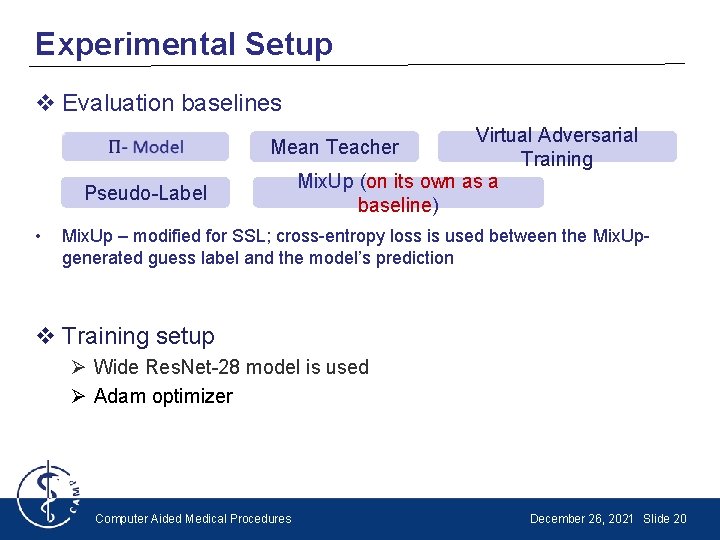

Experimental Setup: Evaluation Baselines
- Virtual Adversarial Training
- Mean Teacher
- Pseudo-Label
- MixUp (on its own as a baseline), modified for SSL: a cross-entropy loss is used between the MixUp-generated guessed label and the model's prediction

Training setup:
- Wide ResNet-28 model
- Adam optimizer

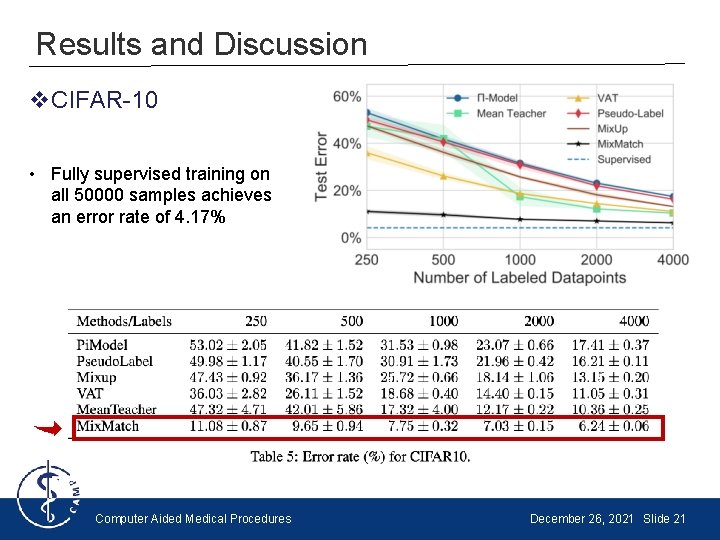

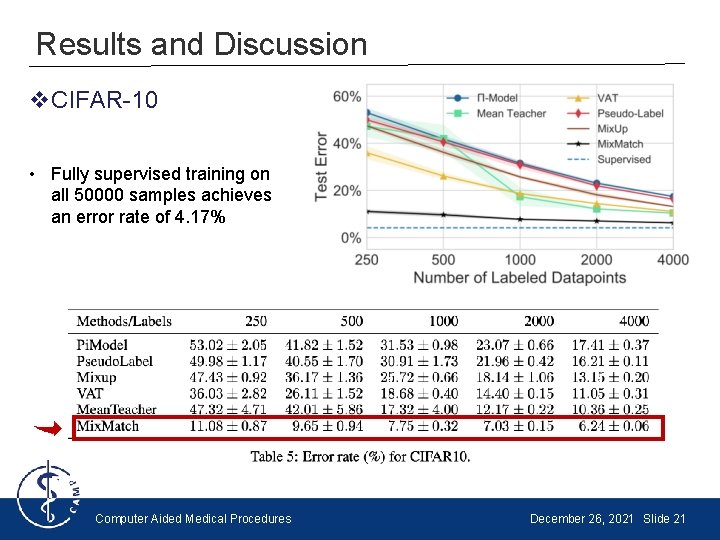

Results and Discussion: CIFAR-10
- Fully supervised training on all 50,000 samples achieves an error rate of 4.17%.

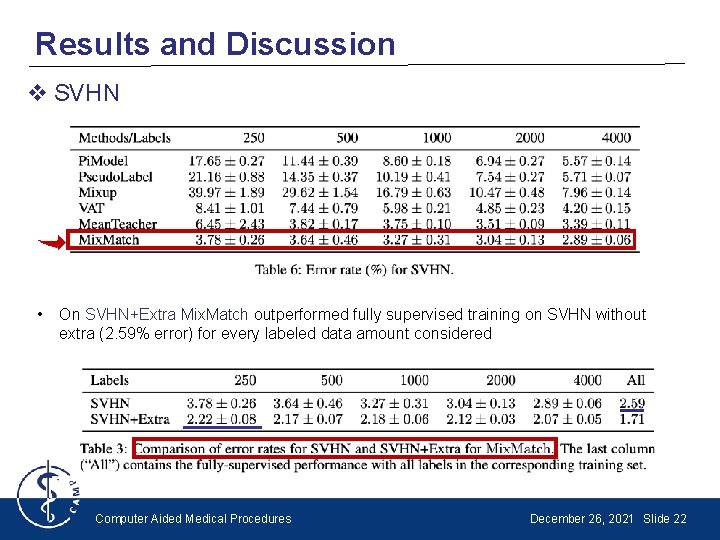

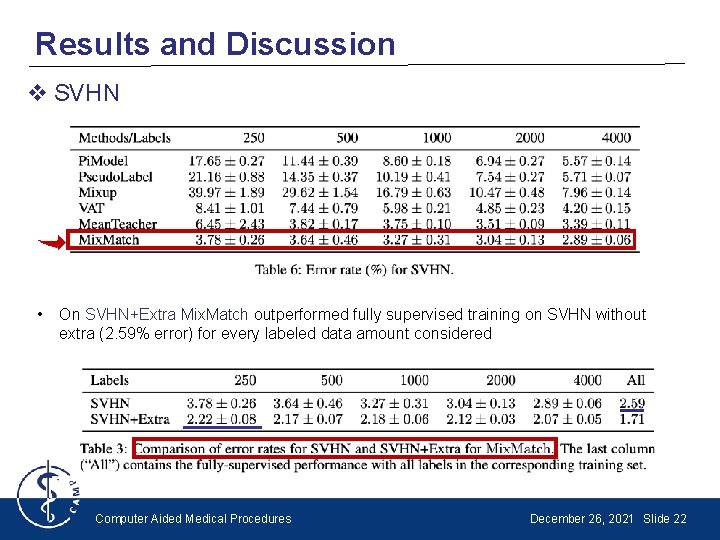

Results and Discussion: SVHN
- On SVHN+Extra, MixMatch outperformed fully supervised training on SVHN without the extra data (2.59% error) for every amount of labeled data considered.

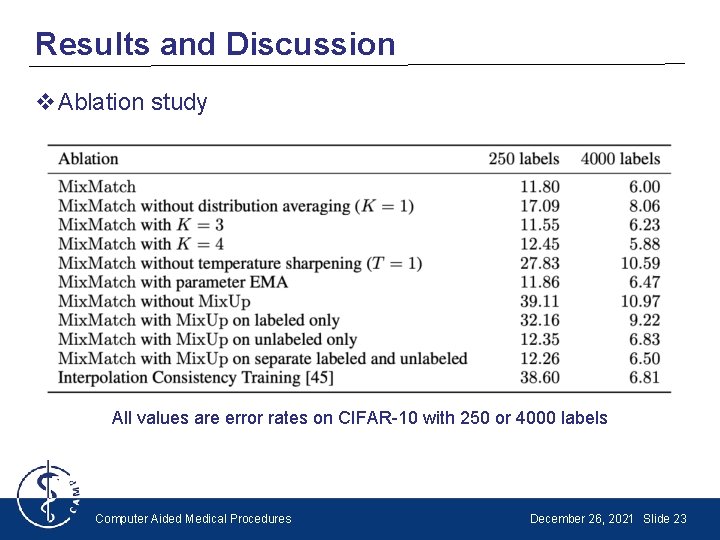

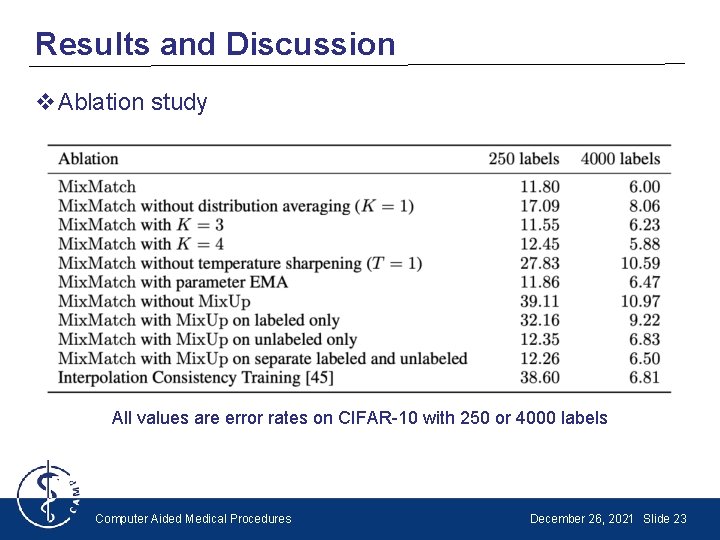

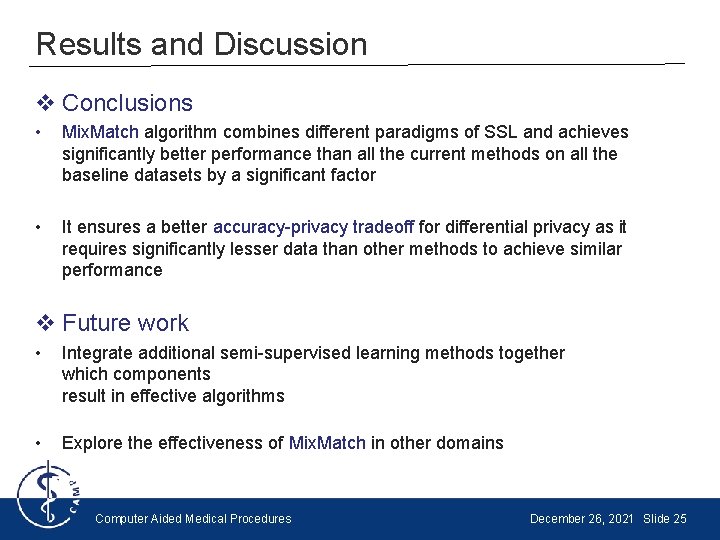

Results and Discussion: Ablation Study
- All values are error rates on CIFAR-10 with 250 or 4,000 labels.

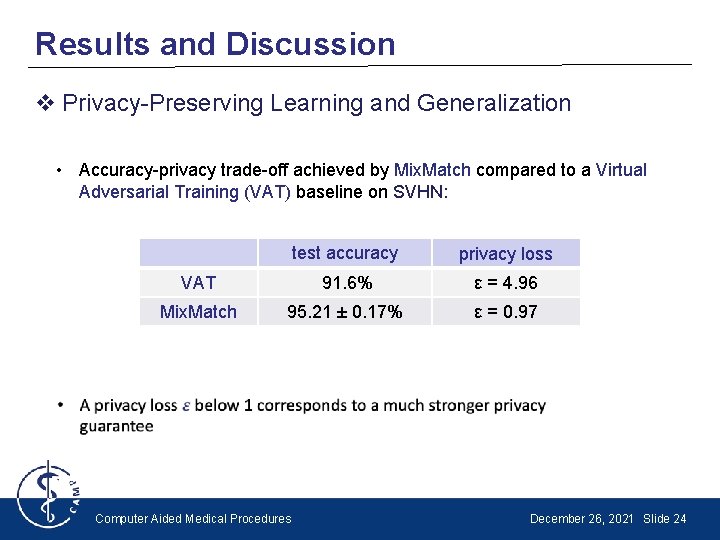

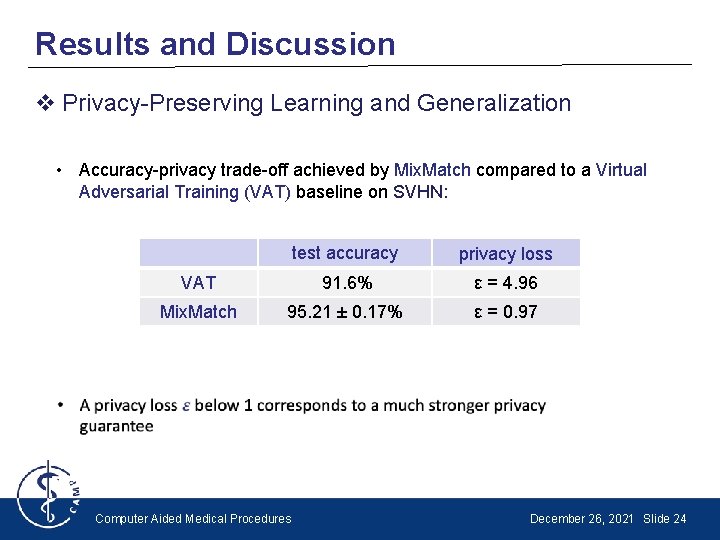

Results and Discussion: Privacy-Preserving Learning and Generalization
- Accuracy-privacy trade-off achieved by MixMatch compared to a Virtual Adversarial Training (VAT) baseline on SVHN:

| Method | Test accuracy | Privacy loss |
|---|---|---|
| VAT | 91.6% | ε = 4.96 |
| MixMatch | 95.21 ± 0.17% | ε = 0.97 |

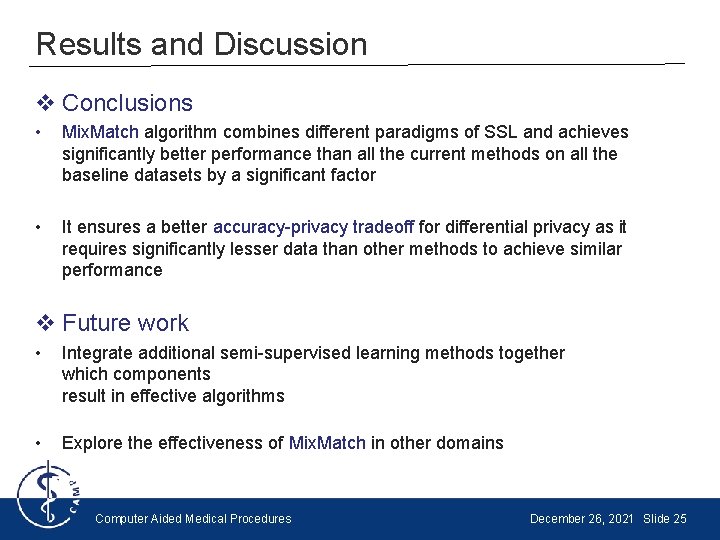

Results and Discussion: Conclusions
- The MixMatch algorithm combines different SSL paradigms and achieves significantly better performance than existing methods on all of the benchmark datasets.
- It achieves a better accuracy-privacy trade-off for differential privacy, since it requires significantly less data than other methods to reach similar performance.

Future work:
- Integrate additional semi-supervised learning methods and study which combinations of components result in effective algorithms.
- Explore the effectiveness of MixMatch in other domains.

Own review and discussion

Strengths:
- Well structured and organized
- Code implementation is accessible
- Fair comparison with other methods

Open questions:
- How effective is this approach on medical data?
- Why does MixUp work so well compared to other types of regularization, i.e. why should enforcing linearity in predictions between images help the model?

THANK YOU FOR YOUR ATTENTION! Any Questions/Comments?


References
- [1] J. E. van Engelen and H. H. Hoos. A survey on semi-supervised learning. Machine Learning, 109:373–440, 2020.
- [2] Samuli Laine and Timo Aila. Temporal ensembling for semi-supervised learning. In ICLR, 2017.
- [3] Mehdi Sajjadi, Mehran Javanmardi, and Tolga Tasdizen. Regularization with stochastic transformations and perturbations for deep semi-supervised learning. In Advances in Neural Information Processing Systems, 2016.
- [4] Antti Tarvainen and Harri Valpola. Mean teachers are better role models: Weight-averaged consistency targets improve semi-supervised deep learning results. In Advances in Neural Information Processing Systems, 2017.
- [5] Takeru Miyato, Shin-ichi Maeda, Shin Ishii, and Masanori Koyama. Virtual adversarial training: A regularization method for supervised and semi-supervised learning. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2018.
- [6] Dong-Hyun Lee. Pseudo-label: The simple and efficient semi-supervised learning method for deep neural networks. In ICML Workshop on Challenges in Representation Learning, 2013.
- [7] Hongyi Zhang, Moustapha Cisse, Yann N. Dauphin, and David Lopez-Paz. mixup: Beyond empirical risk minimization. arXiv preprint arXiv:1710.09412, 2017.


- [8] Sergey Zagoruyko and Nikos Komodakis. Wide residual networks. In Proceedings of the British Machine Vision Conference (BMVC), 2016.
- [9] Avital Oliver, Augustus Odena, Colin Raffel, Ekin Dogus Cubuk, and Ian Goodfellow. Realistic evaluation of deep semi-supervised learning algorithms. In Advances in Neural Information Processing Systems, pages 3235–3246, 2018.
- [10] Nicolas Papernot, Martín Abadi, Úlfar Erlingsson, Ian Goodfellow, and Kunal Talwar. Semi-supervised knowledge transfer for deep learning from private training data. arXiv preprint arXiv:1610.05755, 2016.
- [11] Olivier Chapelle, Bernhard Schölkopf, and Alexander Zien. Semi-Supervised Learning. MIT Press, 2006.
- [12] Shahine Bouabid and Vincent Delaitre. Mixup regularization for region proposal based object detectors. arXiv:2003.02065v1 [cs.CV], 4 Mar 2020.
- [13] Noah Rubinstein. A fastai/PyTorch implementation of MixMatch. Towards Data Science, 17 Jun 2017.
- [14] Vikas Verma, Alex Lamb, Juho Kannala, Yoshua Bengio, and David Lopez-Paz. Interpolation consistency training for semi-supervised learning. arXiv preprint arXiv:1903.03825, 2019.

EXTRA SLIDES

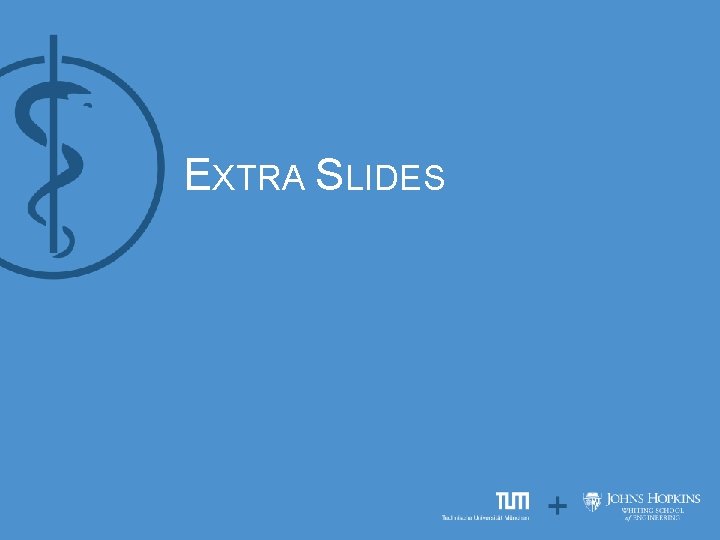

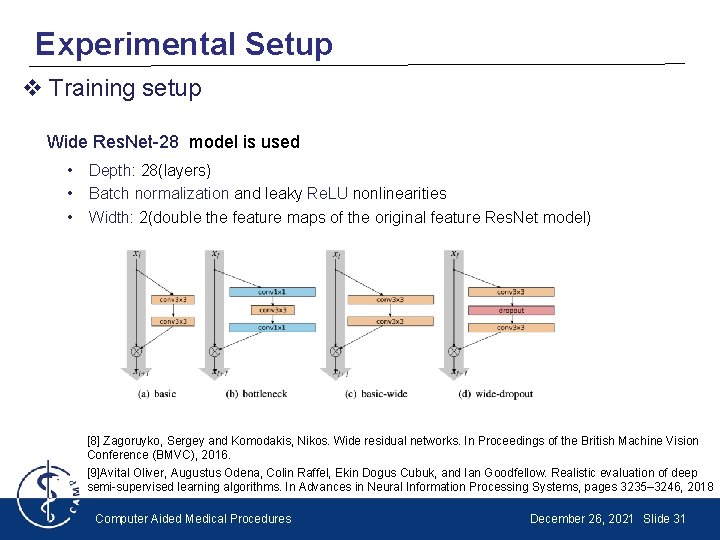

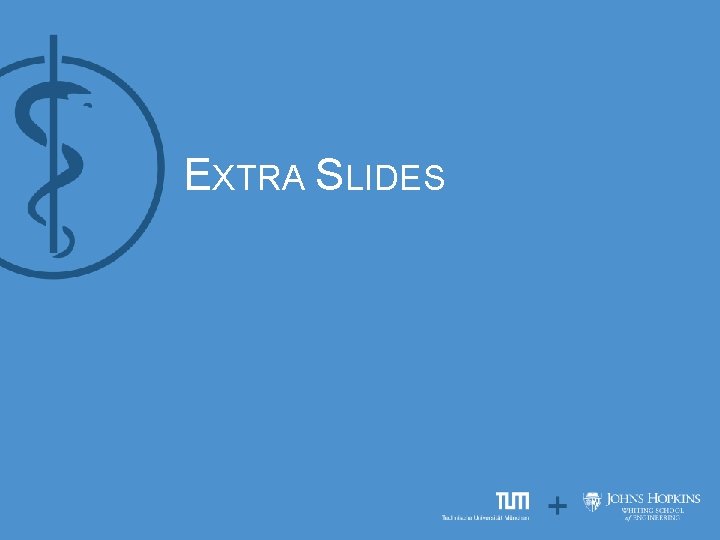

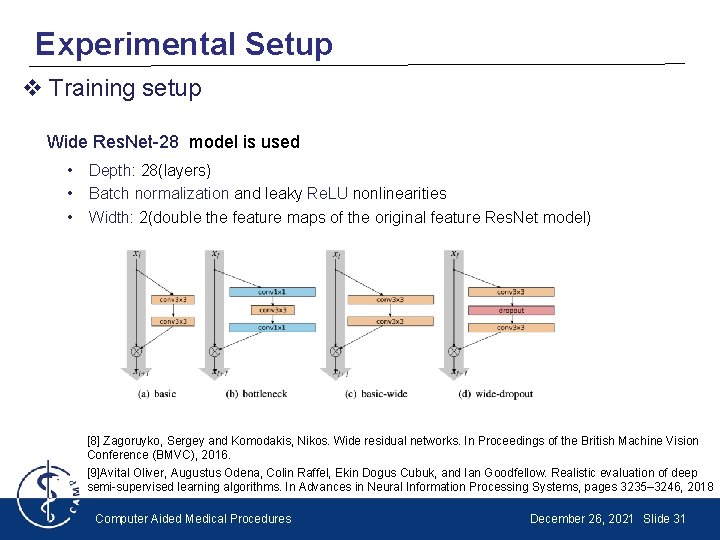

Experimental Setup: Training Setup

Wide ResNet-28 model [8, 9]:
- Depth: 28 layers
- Batch normalization and leaky ReLU nonlinearities
- Width: 2 (twice the number of feature maps of the original ResNet)

[8] Sergey Zagoruyko and Nikos Komodakis. Wide residual networks. In Proceedings of the British Machine Vision Conference (BMVC), 2016.

[9] Avital Oliver, Augustus Odena, Colin Raffel, Ekin Dogus Cubuk, and Ian Goodfellow. Realistic evaluation of deep semi-supervised learning algorithms. In Advances in Neural Information Processing Systems, pages 3235–3246, 2018.
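For a WRN-d-k, the Wide ResNet convention [8] ties the depth to the number of residual blocks per group as d = 6N + 4, and scales the three groups' widths (16, 32, 64 in the original ResNet) by k. A small arithmetic sketch; `wrn_layout` is a hypothetical helper.

```python
def wrn_layout(depth, k):
    # Wide ResNets use depth = 6 * N + 4, with N residual blocks in each of three groups.
    if (depth - 4) % 6 != 0:
        raise ValueError("depth must satisfy depth = 6N + 4")
    n = (depth - 4) // 6
    # Each group's channel count is the original ResNet width (16, 32, 64) scaled by k.
    widths = [16 * k, 32 * k, 64 * k]
    return n, widths

print(wrn_layout(28, 2))  # (4, [32, 64, 128])
```

So the WRN-28-2 used here has four blocks per group, with 32, 64, and 128 feature maps in the three groups.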

Experimental Setup: Training Setup (continued)

Structure of wide residual networks:
- k is the widening factor (it scales the width of the residual blocks).
- N is the number of blocks in a group.
- The filter size is 3 × 3; B(3, 3) denotes a residual block with two 3 × 3 convolutional layers.

Other settings:
- Adam optimizer for training
- Weight decay of 0.0004 at each update for the Wide ResNet-28 model
- A checkpoint every 2^16 training samples; the reported result is the median error rate of the last 20 checkpoints
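The reporting protocol can be sketched as follows: count checkpoints in units of 2^16 samples and report the median error over the last 20. The error values are made up for illustration, and `checkpoints_seen` and `reported_error` are hypothetical helpers built on the standard-library `statistics.median`.

```python
import statistics

SAMPLES_PER_CHECKPOINT = 2 ** 16  # 65,536 training samples between checkpoints

def checkpoints_seen(total_samples):
    # Number of checkpoints written after processing total_samples training samples.
    return total_samples // SAMPLES_PER_CHECKPOINT

def reported_error(checkpoint_errors, last=20):
    # Median over the most recent `last` checkpoints, robust to a single noisy checkpoint.
    return statistics.median(checkpoint_errors[-last:])

errors = [10.0 - 0.1 * i for i in range(30)]  # hypothetical error rates (%) for 30 checkpoints
print(checkpoints_seen(2 ** 20))  # 16
print(reported_error(errors))
```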


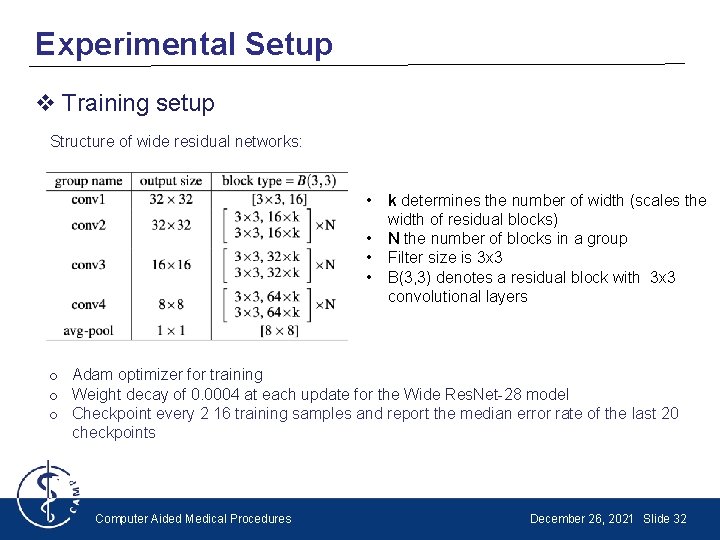

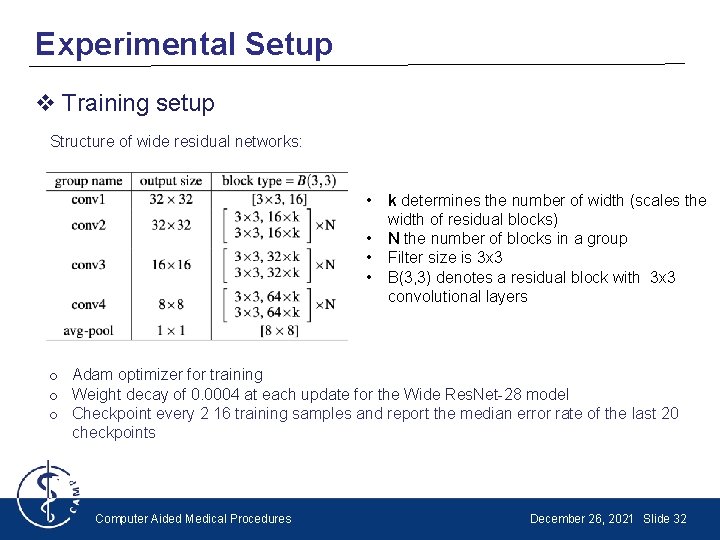

Interpolation Consistency Training (ICT) [14]
- A special case in the ablation study where only unlabeled MixUp is used, no sharpening is applied, and EMA parameters are used for label guessing.

[14] Vikas Verma, Alex Lamb, Juho Kannala, Yoshua Bengio, and David Lopez-Paz. Interpolation consistency training for semi-supervised learning. arXiv preprint arXiv:1903.03825, 2019.