Markov Decision Process (MDP)
Ruti Glick, Bar-Ilan University

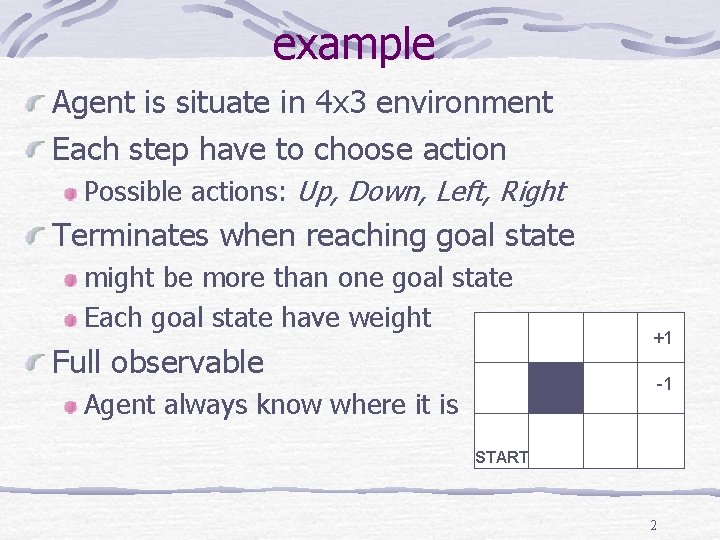

Example

- The agent is situated in a 4 x 3 grid environment and begins in the cell marked START.
- At each step it has to choose an action. Possible actions: Up, Down, Left, Right.
- The episode terminates when the agent reaches a goal state.
  - There might be more than one goal state.
  - Each goal state has a weight (here +1 and -1).
- The environment is fully observable: the agent always knows where it is.

![Example (cont.): solution in a deterministic environment](http://slidetodoc.com/presentation_image_h/ed7f1b2a0bb7f5ed9b9a52df3cb07cf3/image-3.jpg)

Example (cont.)

- In a deterministic environment the solution is easy: a fixed sequence of actions that leads from START to +1, for example [Up, Up, Right, Right, Right] or [Right, Right, Up, Up, Right].

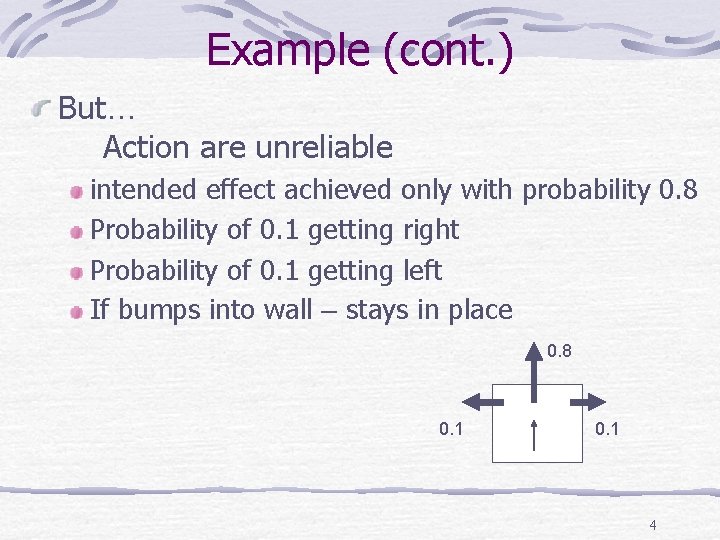

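Example (cont.)

- But actions are unreliable:
  - The intended effect is achieved only with probability 0.8.
  - With probability 0.1 the agent instead moves at a right angle to the intended direction, to the right.
  - With probability 0.1 it instead moves at a right angle to the intended direction, to the left.
- If the agent bumps into a wall, it stays in place.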

![Example (cont.): chance of reaching the goal with a fixed action sequence](http://slidetodoc.com/presentation_image_h/ed7f1b2a0bb7f5ed9b9a52df3cb07cf3/image-5.jpg)

Example (cont.)

- Executing the five-move sequence [Up, Up, Right, Right, Right] from START:
  - Chance of following the desired path (all five moves have their intended effect): $0.8^5 = 0.32768$
  - Chance of accidentally reaching the goal by the other path (four sideways slips, then one intended move): $0.1^4 \times 0.8^1 = 0.00008$
- In total, the chance of reaching the desired goal is only 0.32776.
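As a quick check of the arithmetic, the following Python sketch recomputes the two path probabilities. It assumes, as the exponents imply, that five moves are needed to get from START to +1; the variable names are illustrative only.

```python
# Action model from the example: the intended move succeeds with
# probability 0.8, and each sideways slip happens with probability 0.1.
P_INTENDED = 0.8
P_SLIP = 0.1

# Desired path: all five moves have their intended effect.
p_desired_path = P_INTENDED ** 5

# Other path: four sideways slips followed by one intended move.
p_other_path = P_SLIP ** 4 * P_INTENDED

p_total = p_desired_path + p_other_path
print(f"{p_desired_path:.5f} + {p_other_path:.5f} = {p_total:.5f}")
```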

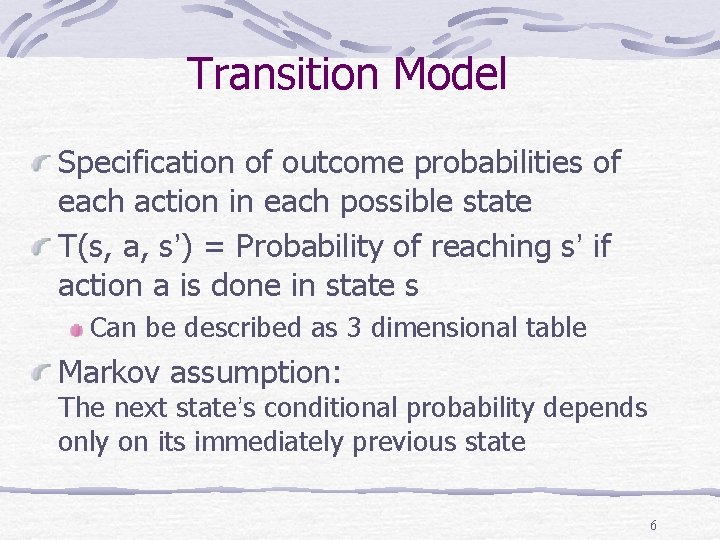

Transition Model

- A specification of the outcome probabilities of each action in each possible state.
- T(s, a, s') = probability of reaching state s' if action a is done in state s.
- Can be described as a three-dimensional table.
- Markov assumption: the conditional probability of the next state depends only on the immediately preceding state (and the action taken there), not on earlier states.

Reward

- The positive or negative reward that the agent receives in state s.
- Sometimes the reward is associated only with the state: R(S).
- Sometimes it is associated with the state and the action: R(S, A).
- Sometimes it is associated with the state, the action and the destination state: R(S, A, J).
- In our example:
  - R([4, 3]) = +1
  - R([4, 2]) = -1
  - R(s) = -0.04 for s ≠ [4, 3] and s ≠ [4, 2]
- The reward of the non-goal states can be seen as how desirable it is for the agent to stay in the game.

Environment History

- The decision problem is sequential.
- The utility function depends on a sequence of states.
- The utility function is the sum of the rewards received.
- In our example: if the agent reaches (4, 3) after 10 steps, then the total utility is 1 + 10 * (-0.04) = 0.6.
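The additive utility of a history can be sketched in a few lines of Python. The state sequence below is a made-up history (ten non-goal visits followed by the +1 goal), and the function names are illustrative, not part of the slides.

```python
def reward(state):
    """Reward R(s) from the example: +1 and -1 at the goals, -0.04 elsewhere."""
    if state == (4, 3):
        return 1.0
    if state == (4, 2):
        return -1.0
    return -0.04


def additive_utility(history):
    """Utility of an environment history = sum of the rewards received."""
    return sum(reward(state) for state in history)


# Hypothetical history: ten visits to non-goal cells, then the +1 goal.
history = [(1, 1), (1, 1), (1, 2), (1, 2), (1, 3), (1, 3),
           (2, 3), (2, 3), (3, 3), (3, 3), (4, 3)]
print(round(additive_utility(history), 2))
```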

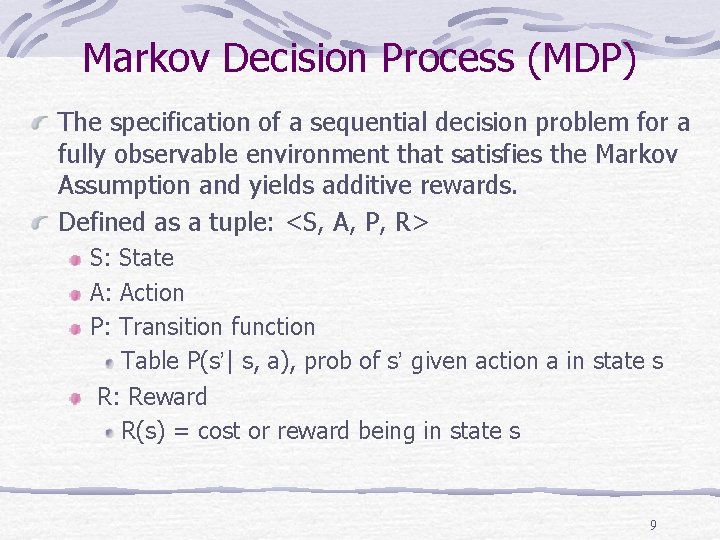

Markov Decision Process (MDP)

- The specification of a sequential decision problem for a fully observable environment that satisfies the Markov assumption and yields additive rewards.
- Defined as a tuple <S, A, P, R>:
  - S: states
  - A: actions
  - P: transition function, a table P(s' | s, a) giving the probability of s' given action a in state s (written T(s, a, s') in the transition model)
  - R: reward, R(s) = cost or reward of being in state s

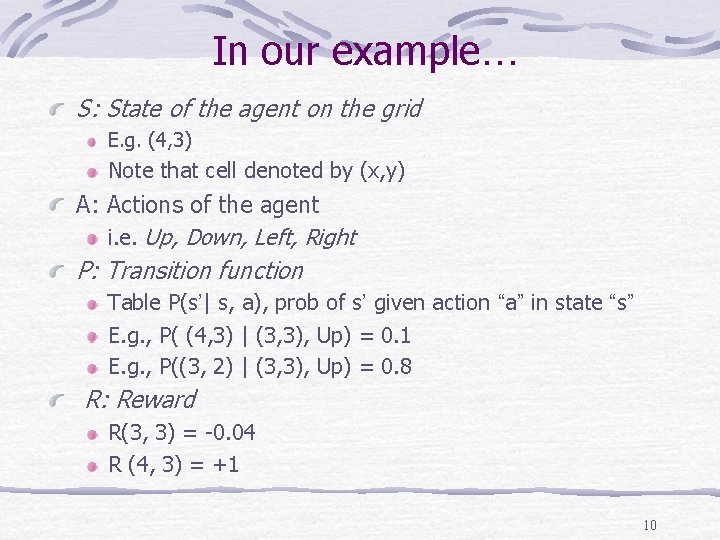

In our example…

- S: the position of the agent on the grid, e.g. (4, 3). Note that a cell is denoted by (x, y).
- A: the actions of the agent, i.e. Up, Down, Left, Right.
- P: transition function, a table P(s' | s, a) giving the probability of s' given action a in state s.
  - E.g., P((4, 3) | (3, 3), Up) = 0.1
  - E.g., P((3, 3) | (3, 2), Up) = 0.8
- R: reward
  - R(3, 3) = -0.04
  - R(4, 3) = +1
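The transition table for the grid could be generated rather than written out by hand. The sketch below is one possible way to do so, under assumptions that are not stated in the slide text: y increases upward (row 1 is the bottom row) and cell (2, 2) is a blocked obstacle, as in the common textbook version of this grid world. All names are hypothetical.

```python
WIDTH, HEIGHT = 4, 3
WALLS = {(2, 2)}  # assumption: blocked cell, not given in the slide text

MOVES = {"Up": (0, 1), "Down": (0, -1), "Left": (-1, 0), "Right": (1, 0)}
# For each intended action: the two directions at right angles to it.
SLIPS = {"Up": ("Left", "Right"), "Down": ("Right", "Left"),
         "Left": ("Down", "Up"), "Right": ("Up", "Down")}


def move(state, direction):
    """Deterministic move; bumping into a wall or the border means staying put."""
    dx, dy = MOVES[direction]
    x, y = state[0] + dx, state[1] + dy
    if not (1 <= x <= WIDTH and 1 <= y <= HEIGHT) or (x, y) in WALLS:
        return state
    return (x, y)


def transition(state, action):
    """Return {s': P(s' | state, action)} for the 0.8 / 0.1 / 0.1 action model."""
    probs = {}
    outcomes = [(action, 0.8)] + [(slip, 0.1) for slip in SLIPS[action]]
    for direction, p in outcomes:
        nxt = move(state, direction)
        probs[nxt] = probs.get(nxt, 0.0) + p
    return probs


print(transition((3, 3), "Up"))
print(transition((3, 2), "Up"))
```

Under these assumptions, going Up from (3, 3) bumps into the top border, so most of the probability mass stays on (3, 3) and only the sideways slip reaches (4, 3).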

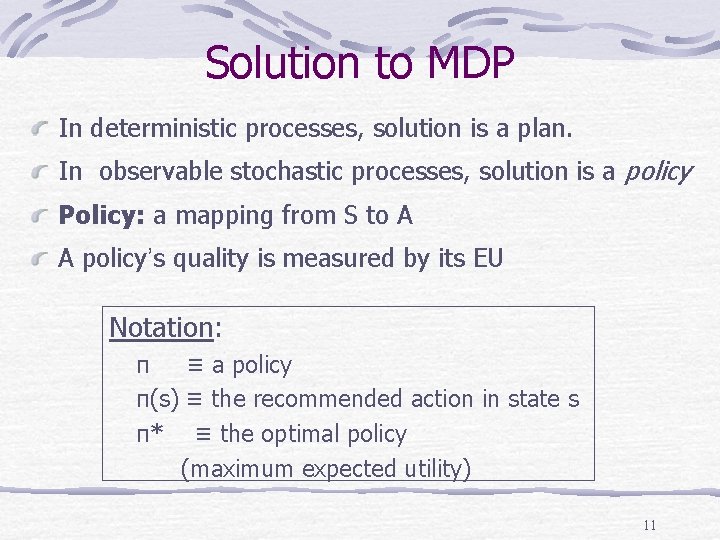

Solution to MDP

- In deterministic processes, the solution is a plan.
- In observable stochastic processes, the solution is a policy.
- Policy: a mapping from S to A.
- A policy's quality is measured by its expected utility (EU).
- Notation:
  - π ≡ a policy
  - π(s) ≡ the recommended action in state s
  - π* ≡ the optimal policy (maximum expected utility)

Following a Policy

Procedure:
1. Determine the current state s.
2. Execute action π(s).
3. Repeat steps 1-2.
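The procedure can be sketched as a small simulation loop. This is only an illustration under stated assumptions: START is taken to be (1, 1), y increases upward, cell (2, 2) is blocked, and `POLICY` is a hypothetical policy table that goes the long way around. None of these names come from the slides.

```python
import random

WIDTH, HEIGHT = 4, 3
WALLS = {(2, 2)}              # assumption: blocked cell
TERMINALS = {(4, 3), (4, 2)}  # the +1 and -1 goal states
MOVES = {"Up": (0, 1), "Down": (0, -1), "Left": (-1, 0), "Right": (1, 0)}
SLIPS = {"Up": ("Left", "Right"), "Down": ("Right", "Left"),
         "Left": ("Down", "Up"), "Right": ("Up", "Down")}

# Hypothetical policy: a mapping from each non-goal state to an action.
POLICY = {
    (1, 1): "Up", (1, 2): "Up", (1, 3): "Right", (2, 3): "Right",
    (3, 3): "Right", (2, 1): "Left", (3, 1): "Left", (4, 1): "Left",
    (3, 2): "Up",
}


def move(state, direction):
    """Deterministic move; bumping into a wall or the border means staying put."""
    dx, dy = MOVES[direction]
    x, y = state[0] + dx, state[1] + dy
    if not (1 <= x <= WIDTH and 1 <= y <= HEIGHT) or (x, y) in WALLS:
        return state
    return (x, y)


def step(state, action, rng):
    """Sample the next state: 0.8 intended direction, 0.1 for each sideways slip."""
    roll = rng.random()
    if roll < 0.8:
        direction = action
    elif roll < 0.9:
        direction = SLIPS[action][0]
    else:
        direction = SLIPS[action][1]
    return move(state, direction)


def follow_policy(policy, start, rng, max_steps=1000):
    """Repeat: observe the current state s, execute policy[s], until a goal."""
    state = start
    trajectory = [state]
    for _ in range(max_steps):
        if state in TERMINALS:
            break
        state = step(state, policy[state], rng)
        trajectory.append(state)
    return trajectory


trajectory = follow_policy(POLICY, (1, 1), random.Random(0))
print(trajectory)
```

Unlike a fixed plan, the loop looks up the action again after every move, so a slip simply leads to a different table entry on the next step.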

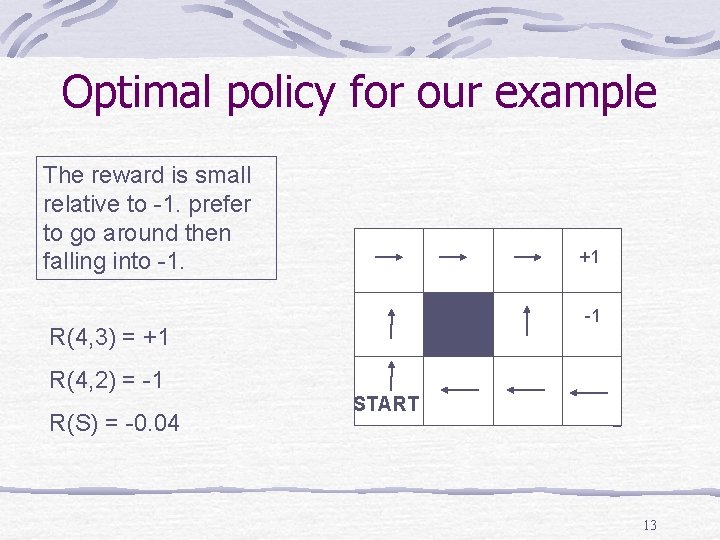

Optimal policy for our example

- R(4, 3) = +1, R(4, 2) = -1, and R(s) = -0.04 for every other state.
- The cost of a step (0.04) is small relative to the -1 penalty, so the agent prefers to go the long way around rather than risk falling into -1.

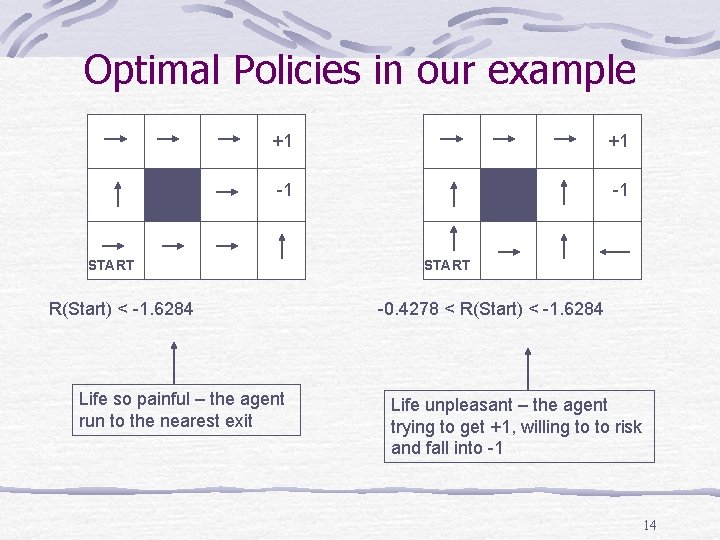

Optimal Policies in our example

Here R(s) is the reward of the non-goal states.

- R(s) < -1.6284: life is so painful that the agent runs to the nearest exit.
- -0.4278 < R(s) < -0.0850: life is unpleasant; the agent tries to get to +1 and is willing to risk falling into -1.

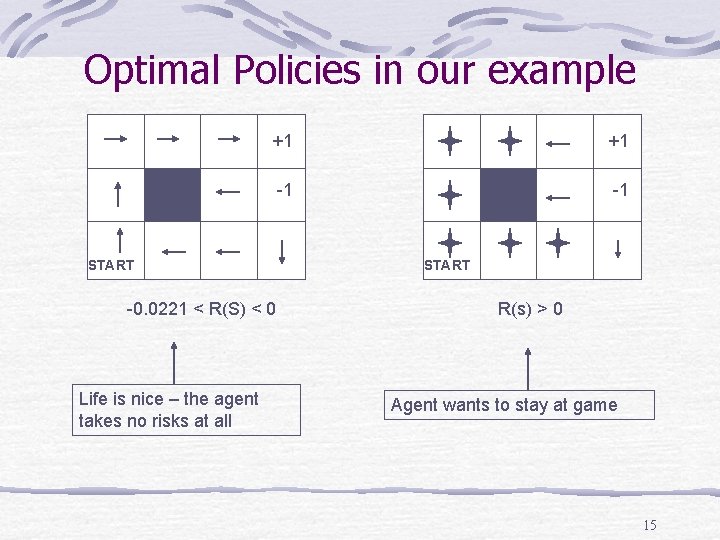

Optimal Policies in our example

- -0.0221 < R(s) < 0: life is nice; the agent takes no risks at all.
- R(s) > 0: the agent wants to stay in the game.

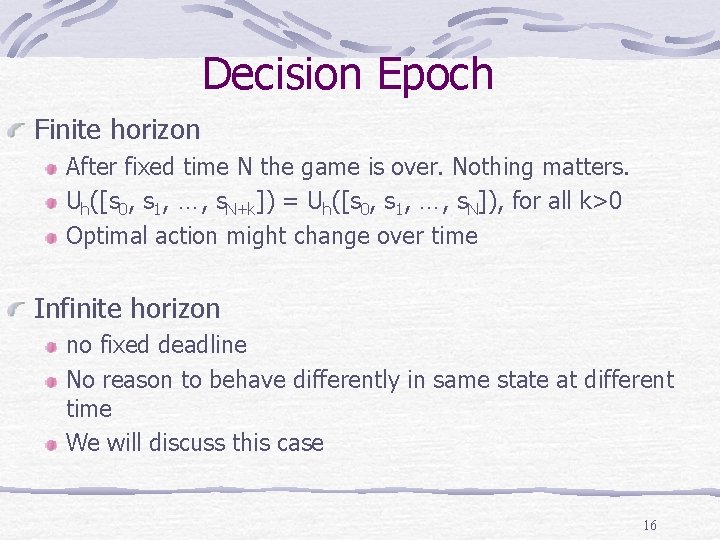

Decision Epoch

- Finite horizon
  - After a fixed time N the game is over; nothing after that matters.
  - $U_h([s_0, s_1, \ldots, s_{N+k}]) = U_h([s_0, s_1, \ldots, s_N])$ for all $k > 0$
  - The optimal action in a given state might change over time.
- Infinite horizon
  - No fixed deadline.
  - No reason to behave differently in the same state at different times.
  - We will discuss this case.

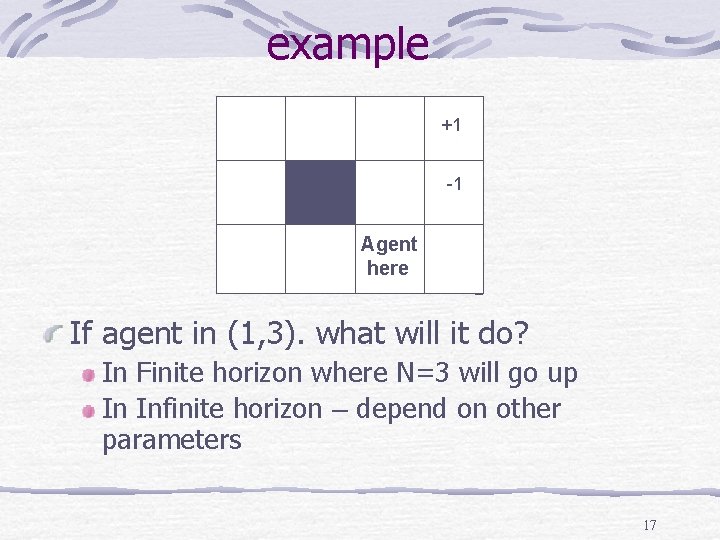

Example

- If the agent is in (1, 3), what will it do?
  - With a finite horizon where N = 3, it will go Up.
  - With an infinite horizon, it depends on the other parameters.

![Assigning Utility to Sequences](http://slidetodoc.com/presentation_image_h/ed7f1b2a0bb7f5ed9b9a52df3cb07cf3/image-18.jpg)

Assigning Utility to Sequences

- Additive rewards
  - $U_h([s_0, s_1, s_2, \ldots]) = R(s_0) + R(s_1) + R(s_2) + \ldots$
  - In our last example we used this method.
- Discounted rewards
  - $U_h([s_0, s_1, s_2, \ldots]) = R(s_0) + \gamma R(s_1) + \gamma^2 R(s_2) + \ldots$
  - $0 < \gamma < 1$, where γ is the discount factor.
  - γ can be interpreted as the chance that the world will continue to exist.
- We will assume discounted rewards.
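Both definitions can be expressed with one small function, since additive rewards are the special case γ = 1. The following is a minimal sketch; the function name and the sample reward lists are made up for illustration.

```python
def discounted_utility(rewards, gamma):
    """U_h = R(s_0) + gamma * R(s_1) + gamma^2 * R(s_2) + ..."""
    return sum((gamma ** t) * r for t, r in enumerate(rewards))


# Rewards along a hypothetical history: ten non-goal states, then the +1 goal.
rewards = [-0.04] * 10 + [1.0]

# gamma = 1 gives the plain additive utility of the earlier example.
print(discounted_utility(rewards, 1.0))

# With gamma < 1 the late +1 reward counts for less.
print(discounted_utility(rewards, 0.9))
```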

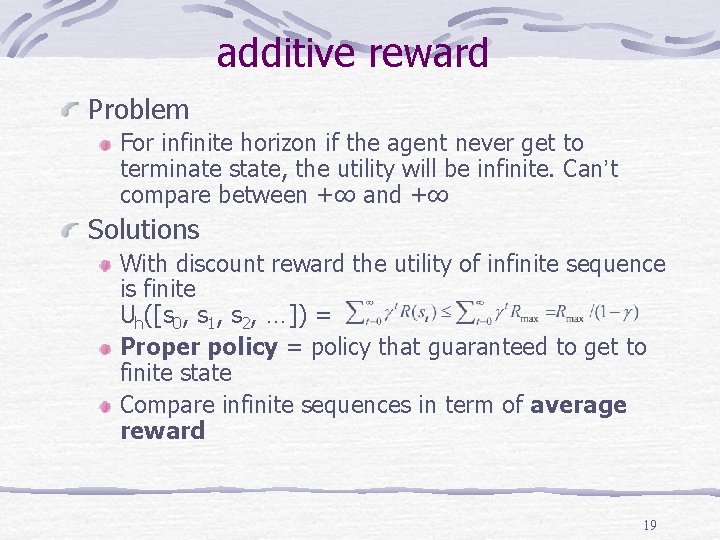

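Additive Rewards

- Problem
  - With an infinite horizon, if the agent never reaches a terminal state, the utility will be infinite.
  - We can't compare +∞ with +∞.
- Solutions
  - With discounted rewards, the utility of an infinite sequence is finite. If every reward is at most $R_{\max}$, then $U_h([s_0, s_1, s_2, \ldots]) = \sum_{t=0}^{\infty} \gamma^t R(s_t) \le \sum_{t=0}^{\infty} \gamma^t R_{\max} = R_{\max} / (1 - \gamma)$.
  - Proper policy: a policy that is guaranteed to reach a terminal state.
  - Compare infinite sequences in terms of average reward.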
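The finiteness claim rests on the geometric series: with a constant reward R_max at every step and 0 < γ < 1, the discounted sum approaches R_max / (1 - γ). The sketch below checks this numerically; the values R_max = 1 and γ = 0.9 are arbitrary choices for illustration.

```python
def discounted_partial_sum(reward, gamma, n_terms):
    """Sum of reward * gamma^t for t = 0 .. n_terms - 1."""
    return sum(reward * gamma ** t for t in range(n_terms))


R_MAX = 1.0   # illustrative upper bound on the per-step reward
GAMMA = 0.9   # illustrative discount factor

bound = R_MAX / (1 - GAMMA)
for n_terms in (10, 100, 1000):
    partial = discounted_partial_sum(R_MAX, GAMMA, n_terms)
    print(n_terms, partial, bound)
```

The partial sums grow with the number of terms but never exceed the bound, whereas with γ = 1 the same sum would grow without limit.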

Conclusion

- Discounted rewards are the best solution for an infinite horizon.
- A policy π represents a whole set of possible state sequences, each occurring with a specific probability.
- The value of a policy is the expected value, over all possible state sequences, of the sum of (discounted) rewards.
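One way to compute this expected value without enumerating every sequence is to apply V(s) = R(s) + γ Σ P(s' | s, π(s)) V(s') repeatedly until the values stop changing. This is usually called iterative policy evaluation, a standard technique that the slides do not name. The sketch below assumes y increases upward, a blocked cell at (2, 2), and a hypothetical go-around policy; γ defaults to 1 so the numbers are comparable with the additive example.

```python
WIDTH, HEIGHT = 4, 3
WALLS = {(2, 2)}              # assumption: blocked cell
TERMINALS = {(4, 3), (4, 2)}  # the +1 and -1 goal states
MOVES = {"Up": (0, 1), "Down": (0, -1), "Left": (-1, 0), "Right": (1, 0)}
SLIPS = {"Up": ("Left", "Right"), "Down": ("Right", "Left"),
         "Left": ("Down", "Up"), "Right": ("Up", "Down")}

# Hypothetical policy: a mapping from each non-goal state to an action.
POLICY = {
    (1, 1): "Up", (1, 2): "Up", (1, 3): "Right", (2, 3): "Right",
    (3, 3): "Right", (2, 1): "Left", (3, 1): "Left", (4, 1): "Left",
    (3, 2): "Up",
}


def reward(state):
    """R(s) from the example: +1 and -1 at the goals, -0.04 elsewhere."""
    return {(4, 3): 1.0, (4, 2): -1.0}.get(state, -0.04)


def move(state, direction):
    """Deterministic move; bumping into a wall or the border means staying put."""
    dx, dy = MOVES[direction]
    x, y = state[0] + dx, state[1] + dy
    if not (1 <= x <= WIDTH and 1 <= y <= HEIGHT) or (x, y) in WALLS:
        return state
    return (x, y)


def transition(state, action):
    """Return {s': P(s' | state, action)} for the 0.8 / 0.1 / 0.1 action model."""
    probs = {}
    for direction, p in [(action, 0.8)] + [(d, 0.1) for d in SLIPS[action]]:
        nxt = move(state, direction)
        probs[nxt] = probs.get(nxt, 0.0) + p
    return probs


def evaluate_policy(policy, gamma=1.0, tol=1e-10, max_sweeps=10_000):
    """Expected utility of following `policy` from each state."""
    values = {s: 0.0 for s in policy}
    values.update({s: reward(s) for s in TERMINALS})
    for _ in range(max_sweeps):
        biggest_change = 0.0
        for s, a in policy.items():
            expected_next = sum(p * values[s2]
                                for s2, p in transition(s, a).items())
            new_value = reward(s) + gamma * expected_next
            biggest_change = max(biggest_change, abs(new_value - values[s]))
            values[s] = new_value
        if biggest_change < tol:
            break
    return values


values = evaluate_policy(POLICY)
for state in sorted(values):
    print(state, round(values[state], 3))
```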
