Markov Chains • Source: 王晉元 (instructor, 交大 / Chiao Tung University) • Presenters: 陳晨, 士庭

Introduction(1/2) • 2021/10/26 2

Introduction(2/2)

Examples: Weather

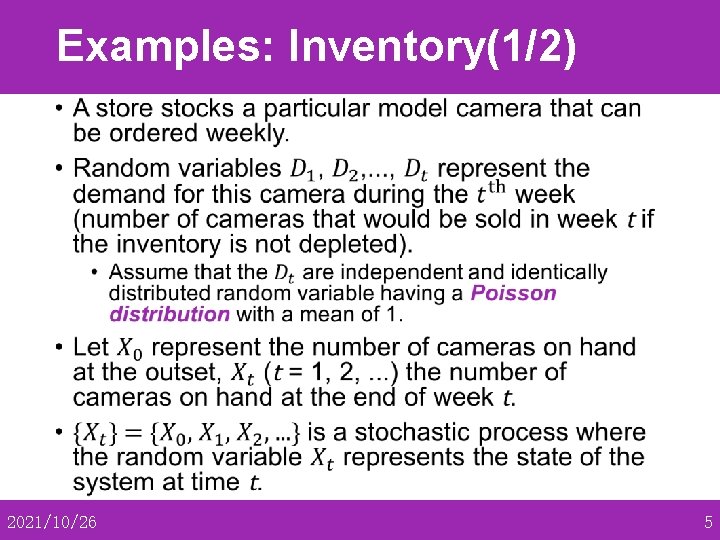

Examples: Inventory(1/2)

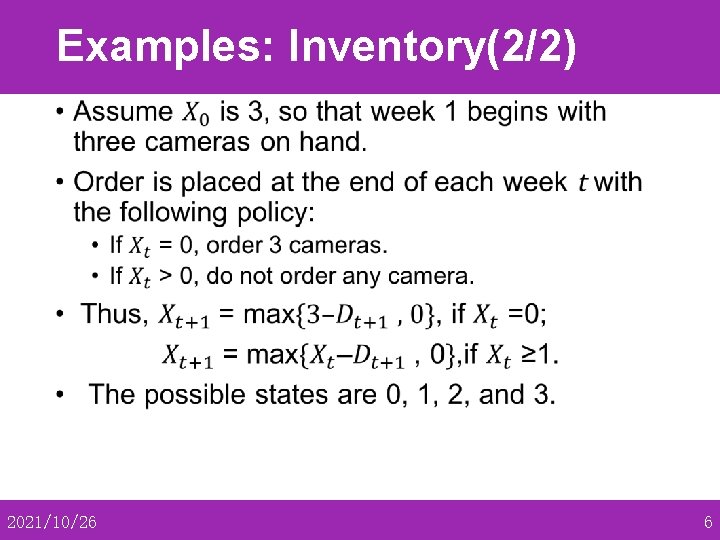

Examples: Inventory(2/2)

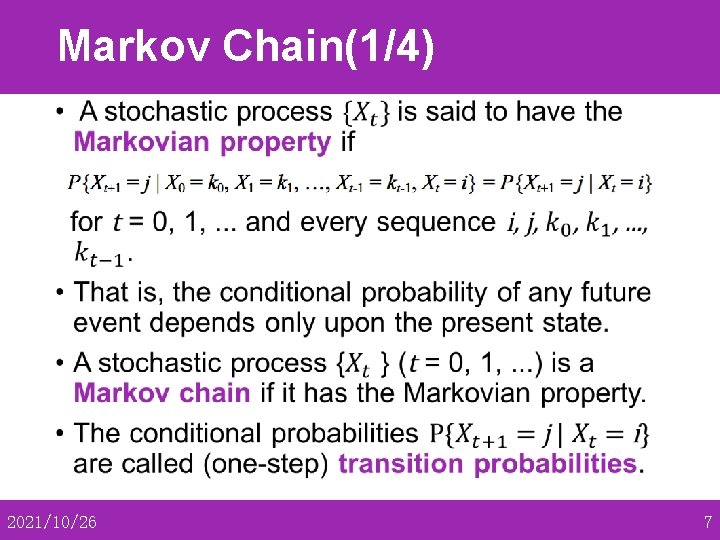

Markov Chain(1/4)

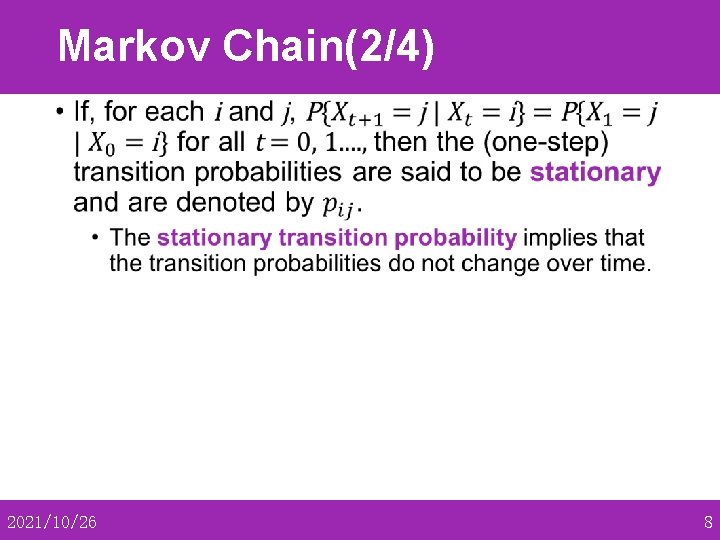

Markov Chain(2/4)

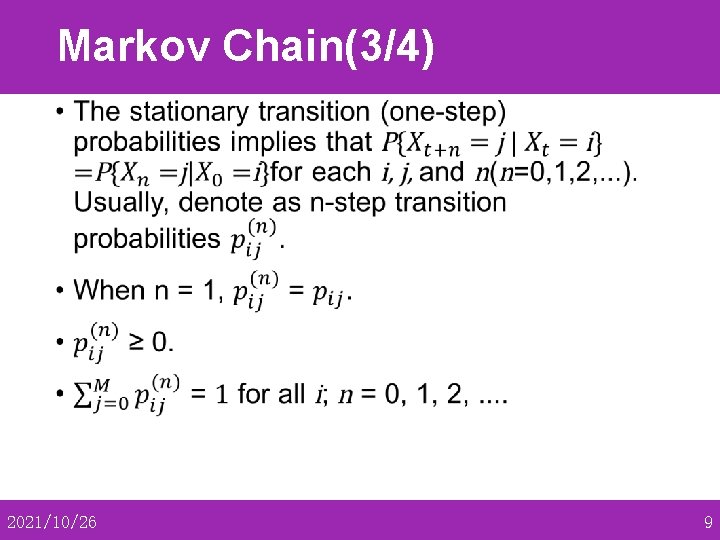

Markov Chain(3/4)

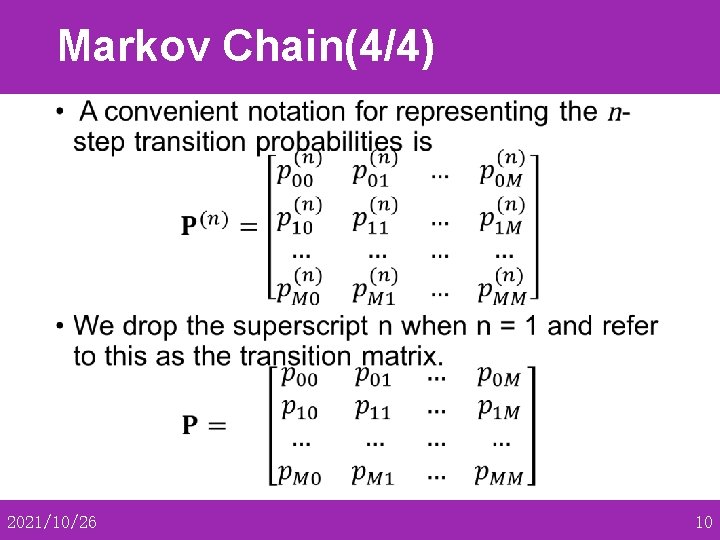

Markov Chain(4/4)

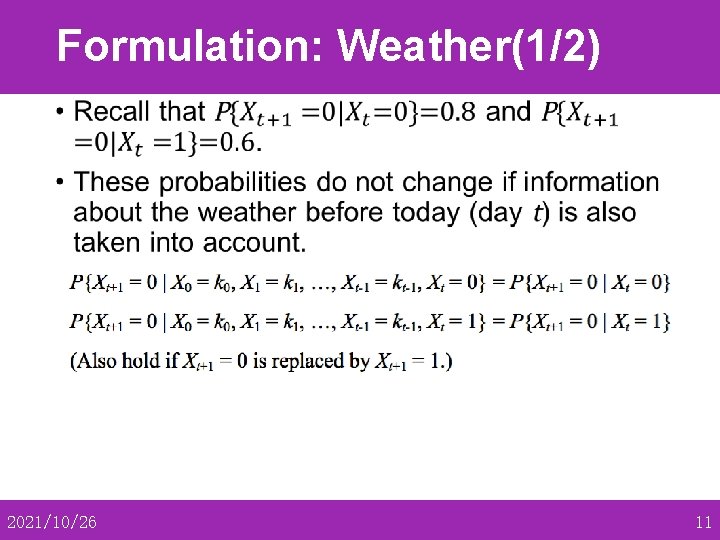

Formulation: Weather(1/2)

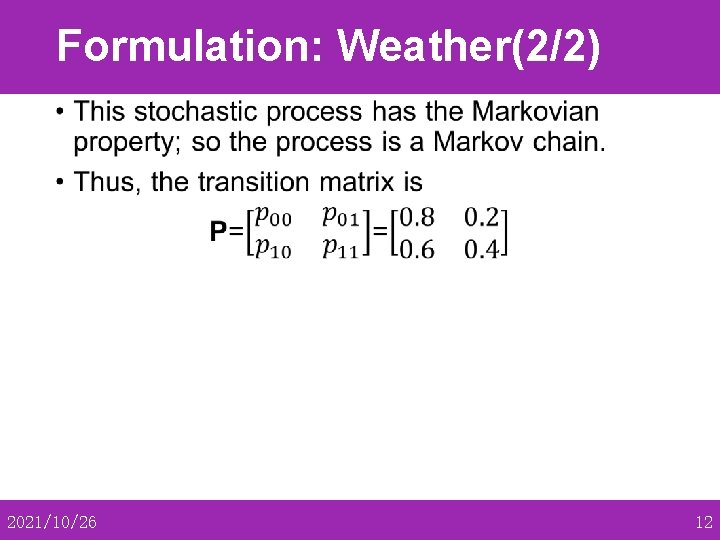

Formulation: Weather(2/2)

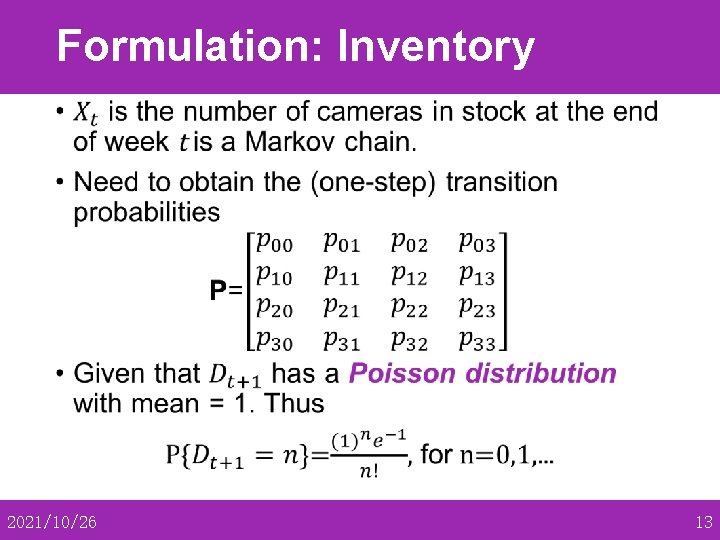

Formulation: Inventory

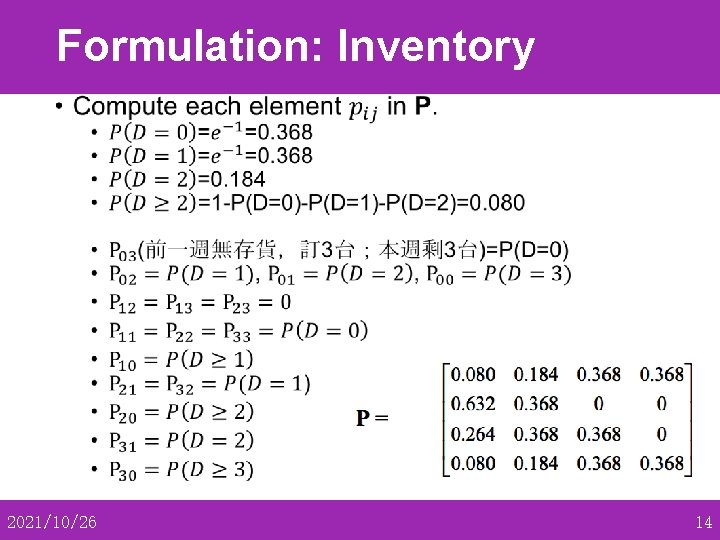

Formulation: Inventory

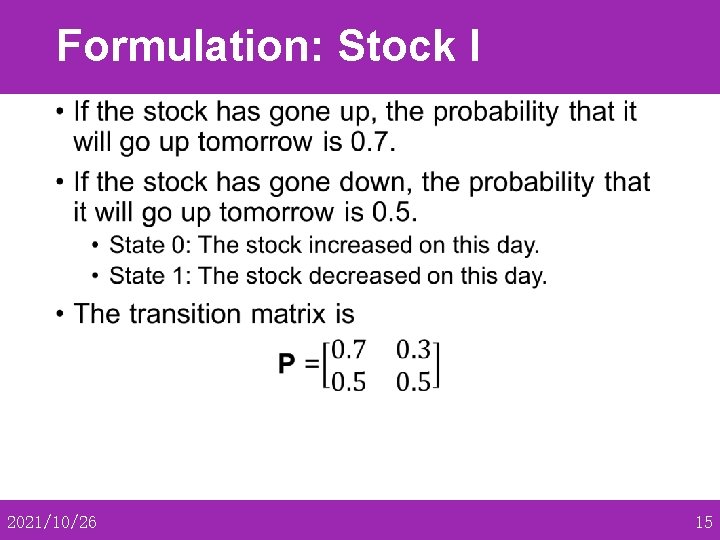

Formulation: Stock I

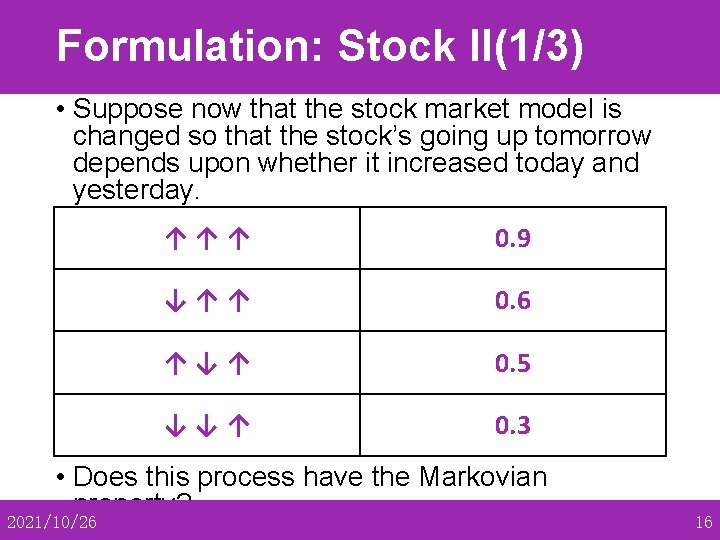

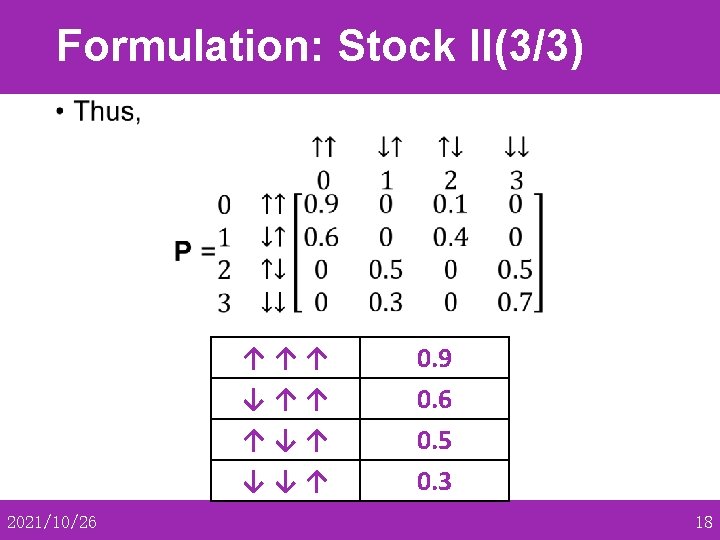

Formulation: Stock II(1/3)

- Suppose now that the stock market model is changed so that whether the stock goes up tomorrow depends on whether it increased today and yesterday.
- If the stock has increased for the past two days, it will increase tomorrow with probability 0.9 (↑↑↑).
- If the stock increased today but decreased yesterday, it will increase tomorrow with probability 0.6 (↓↑↑).
- If the stock decreased today but increased yesterday, it will increase tomorrow with probability 0.5 (↑↓↑).
- If the stock decreased for the past two days, it will increase tomorrow with probability 0.3 (↓↓↑).
- Does this process have the Markovian property?

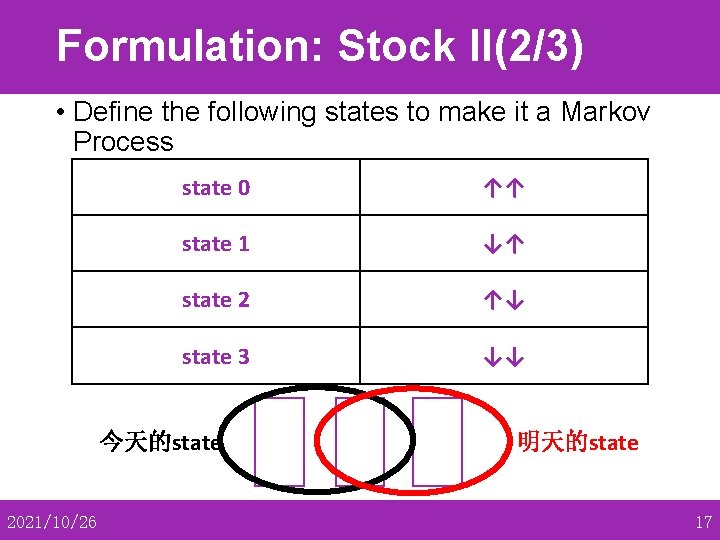
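One way to check the question, assuming the state is only today's direction $X_t \in \{\uparrow, \downarrow\}$ (this notation is introduced here for illustration and is not on the slide): the Markovian property would require tomorrow's probability to depend on $X_t$ alone, yet the stated probabilities change with yesterday's direction.

$$
P(X_{t+1} = \uparrow \mid X_t = \uparrow,\ X_{t-1} = \uparrow) = 0.9
\;\neq\;
0.6 = P(X_{t+1} = \uparrow \mid X_t = \uparrow,\ X_{t-1} = \downarrow)
$$

With a one-day state the process is therefore not Markovian; the state would have to carry two days of history.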

Formulation: Stock II(2/3)

- Define the following states to make it a Markov process:
- State 0: The stock increased both today and yesterday (↑↑).
- State 1: The stock increased today and decreased yesterday (↓↑).
- State 2: The stock decreased today and increased yesterday (↑↓).
- State 3: The stock decreased both today and yesterday (↓↓).

Figure labels: today's state, tomorrow's state.

Formulation: Stock II(3/3)

Probability that the stock increases tomorrow, by pattern (yesterday, today, tomorrow):

- ↑↑↑: 0.9
- ↓↑↑: 0.6
- ↑↓↑: 0.5
- ↓↓↑: 0.3

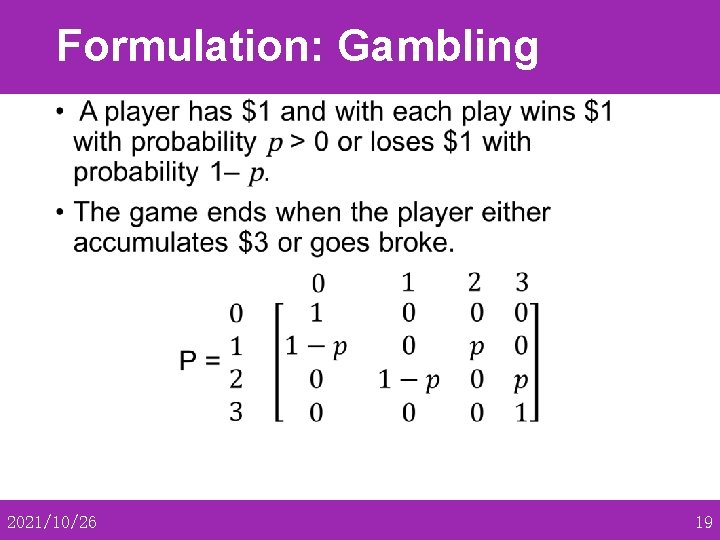
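The Stock II formulation can be sketched in code as a one-step transition matrix over states 0 to 3. This is a minimal illustration, not the presenters' own material: the names `STATES`, `P_UP`, and `build_transition_matrix` are hypothetical, and each state is encoded as a (yesterday, today) pair, which is an assumption made here for convenience. The only inputs taken from the slides are the four states and the probabilities 0.9, 0.6, 0.5, and 0.3.

```python
# Each state is (yesterday, today); True means the stock increased that day.
# The ordering follows the slide's state numbering (assumed encoding).
STATES = [
    (True, True),    # state 0: increased both today and yesterday
    (False, True),   # state 1: increased today, decreased yesterday
    (True, False),   # state 2: decreased today, increased yesterday
    (False, False),  # state 3: decreased both today and yesterday
]

# Probability that the stock increases tomorrow, given the current state.
P_UP = {0: 0.9, 1: 0.6, 2: 0.5, 3: 0.3}


def build_transition_matrix():
    """Return the 4x4 matrix whose entry [i][j] is P(next state j | state i)."""
    n = len(STATES)
    matrix = [[0.0] * n for _ in range(n)]
    for i, (_, today) in enumerate(STATES):
        # Tomorrow, "today" becomes "yesterday" and the new move becomes "today".
        up_state = STATES.index((today, True))
        down_state = STATES.index((today, False))
        matrix[i][up_state] += P_UP[i]
        matrix[i][down_state] += 1.0 - P_UP[i]
    return matrix


P = build_transition_matrix()
for row in P:
    print([round(x, 2) for x in row])
```

Each row has exactly two nonzero entries because only tomorrow's direction is random; today's direction is carried over deterministically into the next state. That carry-over is what lets the two-day history fit into a single Markov state.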

Formulation: Gambling

- Slides: 19