Markov Chains

Markov chains describe the changes in a system over the course of time.

Key Idea: The state at one point in time depends on the previous state. This might remind you of recursions.

We need two components to describe a Markov chain:
• Transition matrix, $T$
• Initial distribution, $D_0$

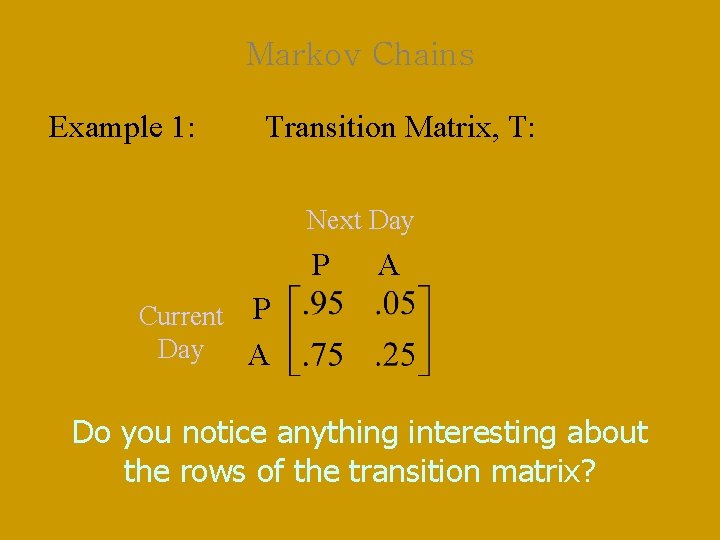

Example 1: A study of the attendance records at a certain school has revealed an interesting trend. Of the students that are present in school one day, 95% will also be present the following school day. Of those that are absent one day, 75% will return the following school day. Create a transition matrix for attendance at this school.

Example 1: Start by considering those who are present: 95% will be present the next day, so 5% will be absent the next day. Next consider those who are absent: 75% will be present the next day, so 25% will be absent again.

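Example 1: Transition matrix, $T$, with rows giving the current day's state and columns giving the next day's state, each in the order Present (P), Absent (A):

$$T = \begin{bmatrix} 0.95 & 0.05 \\ 0.75 & 0.25 \end{bmatrix}$$

Do you notice anything interesting about the rows of the transition matrix?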
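The matrix from Example 1 can be written down and its rows inspected in a few lines of Python. This is a minimal sketch, assuming the states are ordered (present, absent); the names `T` and `row_sums` are illustrative and not part of the original slides. Each row should add to 1, because every student is either present or absent the next day.

```python
# Transition matrix for Example 1.
# Assumption: state order is (present, absent).
# Row = current day's state, column = next day's state.
T = [
    [0.95, 0.05],  # present today -> present / absent tomorrow
    [0.75, 0.25],  # absent today  -> present / absent tomorrow
]

# Sum the entries of each row.
row_sums = [sum(row) for row in T]
print(row_sums)
```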

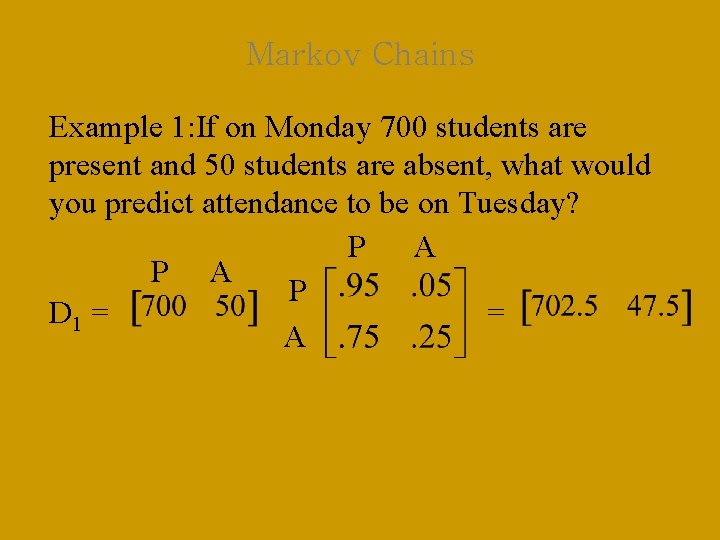

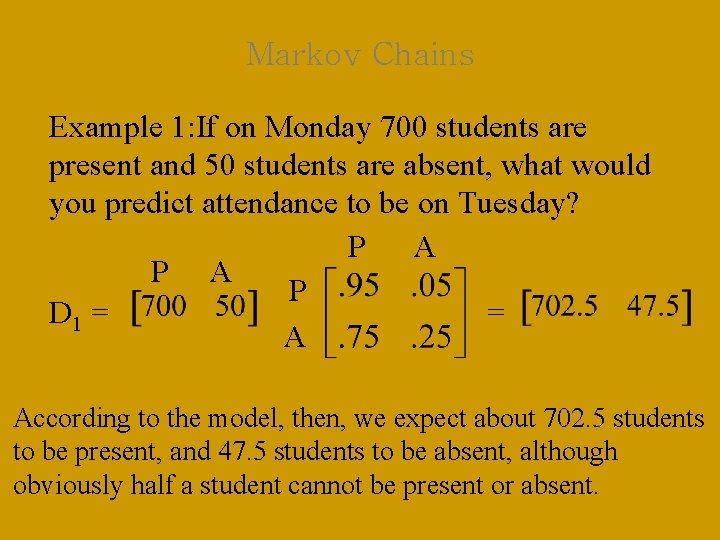

Example 1: If on Monday 700 students are present and 50 students are absent, what would you predict attendance to be on Tuesday? We'll consider Monday the initial distribution, $D_0$. Then Tuesday's attendance will be represented by $D_1$.

$$D_1 = D_0 T$$

$$D_1 = D_0 T = \begin{bmatrix} 700 & 50 \end{bmatrix} \begin{bmatrix} 0.95 & 0.05 \\ 0.75 & 0.25 \end{bmatrix} = \begin{bmatrix} 702.5 & 47.5 \end{bmatrix}$$

According to the model, then, we expect about 702.5 students to be present and 47.5 students to be absent, although obviously half a student cannot be present or absent.
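The Tuesday prediction is a row vector times a matrix, which can be checked by hand or with a short script. The sketch below assumes the state order (present, absent) and uses a hypothetical helper named `step`; it is one plain-Python way to carry out the product, not the only one.

```python
def step(dist, T):
    """Return the row-vector product dist * T (one day forward)."""
    n_states = len(dist)
    return [
        sum(dist[i] * T[i][j] for i in range(n_states))
        for j in range(len(T[0]))
    ]

# Assumption: state order is (present, absent).
T = [[0.95, 0.05],
     [0.75, 0.25]]
D0 = [700, 50]      # Monday's attendance
D1 = step(D0, T)    # Tuesday's predicted attendance
print(D1)
```

The first entry is 700(0.95) + 50(0.75) and the second is 700(0.05) + 50(0.25).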

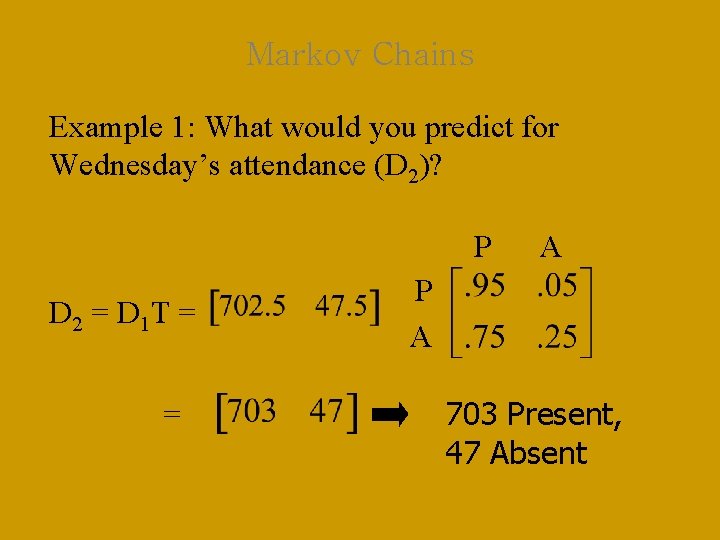

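Example 1: What would you predict for Wednesday's attendance ($D_2$)?

$$D_2 = D_1 T = \begin{bmatrix} 702.5 & 47.5 \end{bmatrix} \begin{bmatrix} 0.95 & 0.05 \\ 0.75 & 0.25 \end{bmatrix} = \begin{bmatrix} 703 & 47 \end{bmatrix}$$

703 present, 47 absent.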

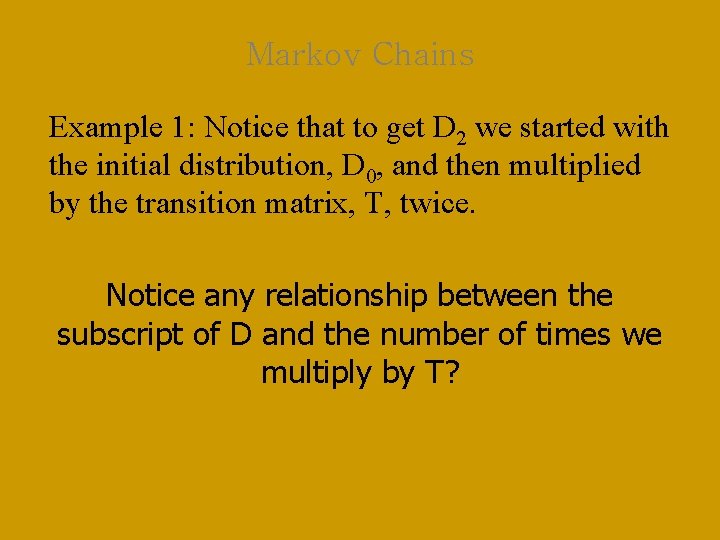

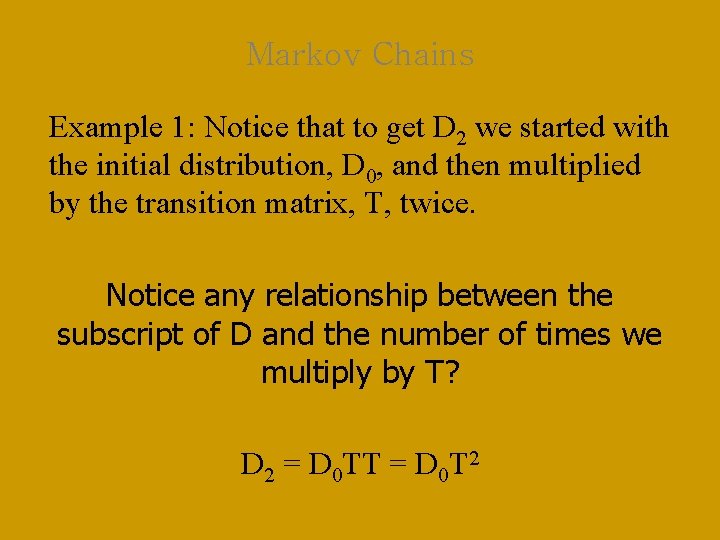

Example 1: Notice that to get $D_2$ we started with the initial distribution, $D_0$, and then multiplied by the transition matrix, $T$, twice. Notice any relationship between the subscript of $D$ and the number of times we multiply by $T$?

$$D_2 = D_0 T T = D_0 T^2$$

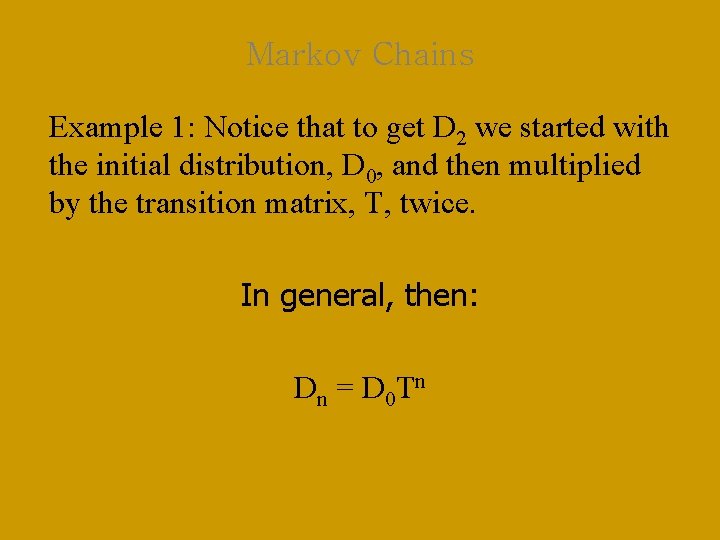

Likewise, if we wanted to predict the third state after the original, $D_3$ (Thursday's attendance):

$$D_3 = D_0 T T T = D_0 T^3$$

In general, then:

$$D_n = D_0 T^n$$
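The general rule says that the distribution after n days comes from multiplying the initial distribution by the transition matrix n times. A minimal sketch of that idea follows, assuming the state order (present, absent); `step` and `distribution_after` are hypothetical helper names introduced here for illustration.

```python
def step(dist, T):
    """Return the row-vector product dist * T (one day forward)."""
    n_states = len(dist)
    return [
        sum(dist[i] * T[i][j] for i in range(n_states))
        for j in range(len(T[0]))
    ]

def distribution_after(d0, T, n):
    """Apply the transition matrix n times, starting from d0."""
    dist = list(d0)
    for _ in range(n):
        dist = step(dist, T)
    return dist

# Assumption: state order is (present, absent).
T = [[0.95, 0.05],
     [0.75, 0.25]]
D0 = [700, 50]

# Print the predicted attendance for several values of n.
for n in (1, 2, 3, 5, 10, 20):
    print(n, [round(x, 3) for x in distribution_after(D0, T, n)])
```

Multiplying the vector by the matrix n times gives the same result as forming the n-th power of the matrix first, and it avoids a matrix-by-matrix product.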

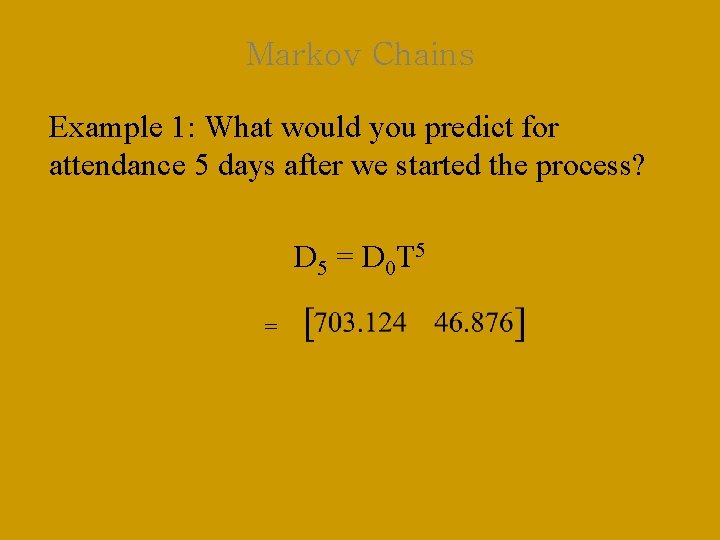

Example 1: What would you predict for attendance 5 days after we started the process?

$$D_5 = D_0 T^5 = \begin{bmatrix} 703.124 & 46.876 \end{bmatrix}$$

Again, bear in mind that we cannot have fractional parts of students absent, but we'll keep the fractions in our model.

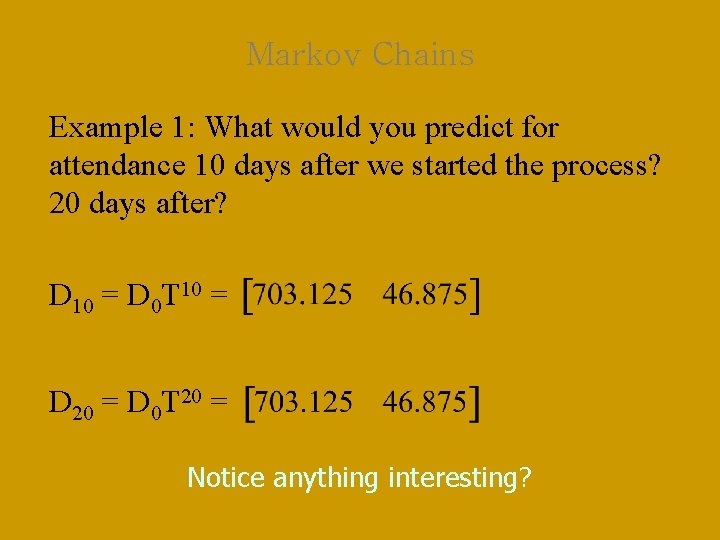

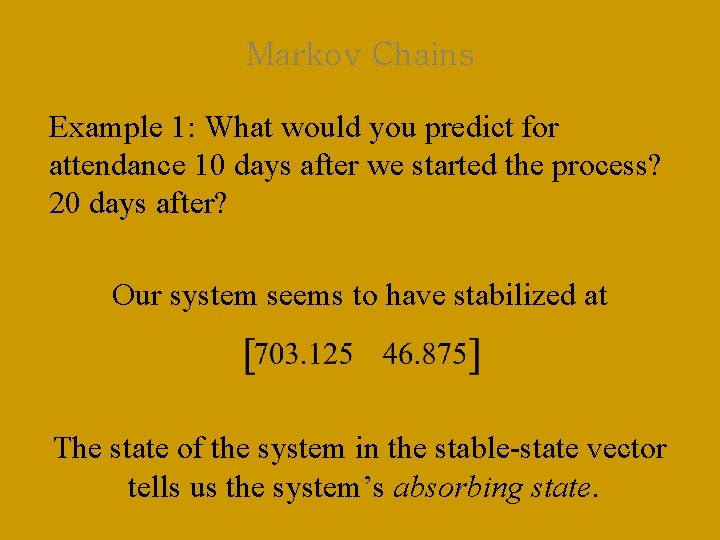

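Example 1: What would you predict for attendance 10 days after we started the process? 20 days after?

$$D_{10} = D_0 T^{10} \approx \begin{bmatrix} 703.125 & 46.875 \end{bmatrix}$$

$$D_{20} = D_0 T^{20} \approx \begin{bmatrix} 703.125 & 46.875 \end{bmatrix}$$

Notice anything interesting?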

Our system seems to have stabilized at $\begin{bmatrix} 703.125 & 46.875 \end{bmatrix}$. This is called the stable-state vector.
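The stable-state vector can also be found algebraically, since it is the distribution that multiplying by the transition matrix leaves unchanged. The worked sketch below assumes the stable vector is written $s = \begin{bmatrix} p & a \end{bmatrix}$, symbols introduced here for illustration, with the 750 students from Example 1 in total.

```latex
\begin{aligned}
sT &= s, \qquad p + a = 750 \\
0.95\,p + 0.75\,a &= p \\
0.75\,a &= 0.05\,p \quad\Longrightarrow\quad p = 15a \\
15a + a &= 750 \quad\Longrightarrow\quad a = 46.875,\quad p = 703.125
\end{aligned}
```

This agrees with the values the repeated multiplications settle toward.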

The stable-state vector tells us the long-run state of the system: once the distribution reaches it, multiplying by $T$ leaves it unchanged.
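One way to find a stable-state vector numerically is to keep multiplying by the transition matrix until the distribution stops changing. The sketch below assumes the state order (present, absent); the helper names `step` and `stable_state`, the tolerance, and the step limit are all illustrative choices rather than anything given in the slides.

```python
def step(dist, T):
    """Return the row-vector product dist * T (one day forward)."""
    n_states = len(dist)
    return [
        sum(dist[i] * T[i][j] for i in range(n_states))
        for j in range(len(T[0]))
    ]

def stable_state(d0, T, tol=1e-9, max_steps=1000):
    """Iterate dist -> dist * T until no entry changes by more than tol."""
    dist = list(d0)
    for _ in range(max_steps):
        nxt = step(dist, T)
        if max(abs(a - b) for a, b in zip(nxt, dist)) < tol:
            return nxt
        dist = nxt
    raise RuntimeError("distribution did not settle within max_steps")

# Assumption: state order is (present, absent).
T = [[0.95, 0.05],
     [0.75, 0.25]]

stable = stable_state([700, 50], T)
print([round(x, 3) for x in stable])

# A different starting split of the same 750 students, for comparison.
other = stable_state([0, 750], T)
print([round(x, 3) for x in other])
```

For this matrix, both starting distributions settle at the same vector, which suggests that the long-run attendance depends on the transition matrix and the total number of students rather than on Monday's particular split.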

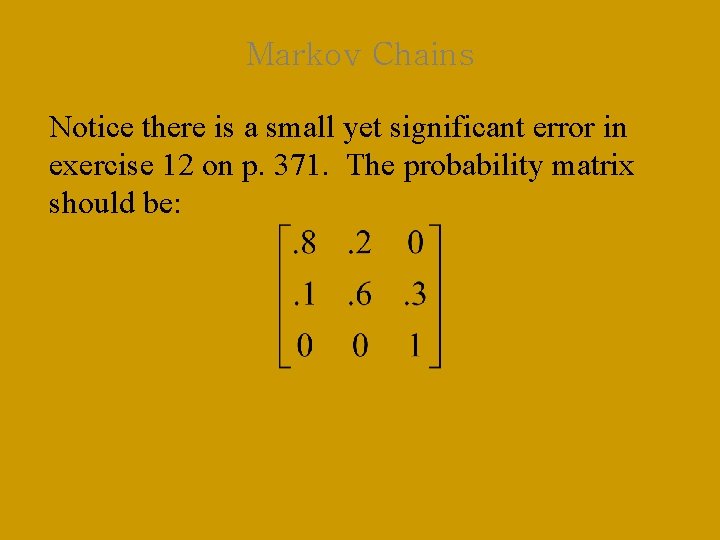

Notice there is a small yet significant error in exercise 12 on p. 371. The probability matrix should be:

Can you spot the error in the textbook, and can you explain how you would have known there was an error?
