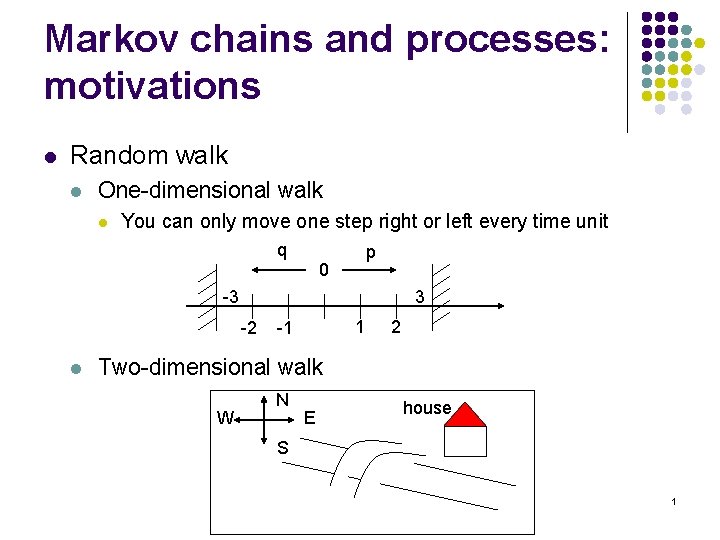

Markov chains and processes: motivations (random walk)

- Slides: 23

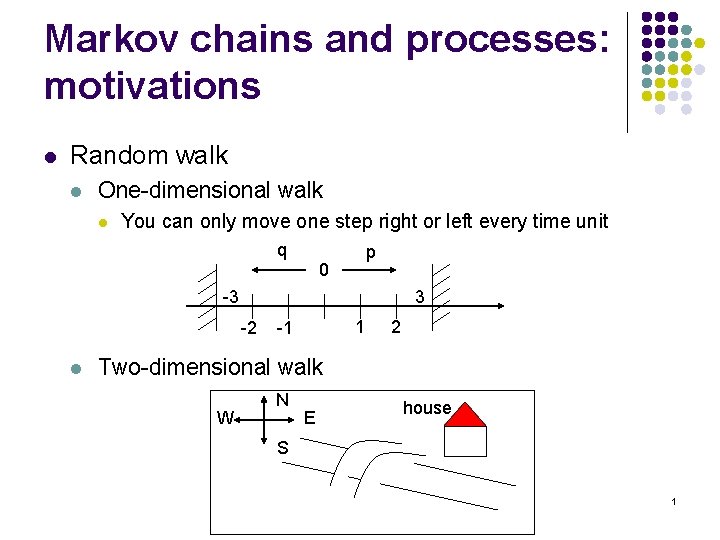

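Markov chains and processes: motivations

- Random walk
  - One-dimensional walk
    - You can only move one step right or left every time unit
    - (Figure: number line marked from -3 to 3, with probability $p$ on a step right and $q$ on a step left)
  - Two-dimensional walk
    - (Figure: a house with the four compass directions N, S, E, W)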

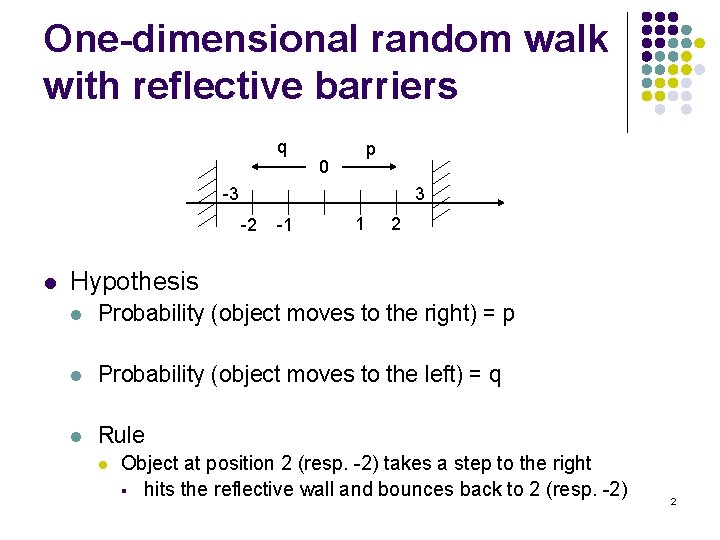

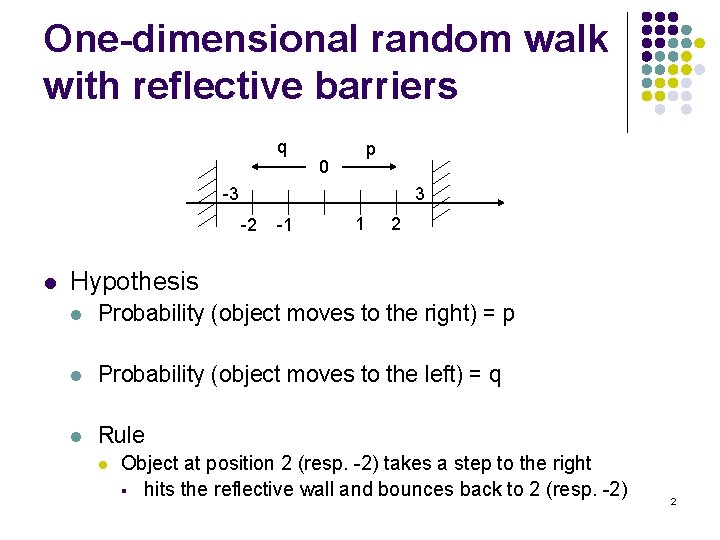

One-dimensional random walk with reflective barriers

(Figure: number line marked from -3 to 3, with probability $p$ on a step right and $q$ on a step left)

- Hypothesis
  - Probability (object moves to the right) = $p$
  - Probability (object moves to the left) = $q$
- Rule
  - An object at position 2 (resp. -2) that takes a step to the right (resp. left) hits the reflective wall and bounces back to 2 (resp. -2)
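The rule can be sketched as a short simulation. This is only an illustration under stated assumptions: the names `step` and `simulate` are hypothetical, $q$ is taken to be $1 - p$, and a blocked step is modeled by clamping the position to the range -2..2.

```python
import random

def step(position, p, rng, low=-2, high=2):
    """Advance the walk by one time unit with reflective barriers."""
    # Assumption: q = 1 - p, so every step goes either right or left.
    move = 1 if rng.random() < p else -1
    # A step past either barrier bounces the object back to where it was.
    return min(max(position + move, low), high)

def simulate(n_steps, p=0.5, start=0, seed=0):
    """Return the list of positions X_0, X_1, ..., X_n."""
    rng = random.Random(seed)
    path = [start]
    for _ in range(n_steps):
        path.append(step(path[-1], p, rng))
    return path

path = simulate(20, p=0.6)
print(path)
```

Every position in `path` stays within -2..2, and consecutive positions differ by at most one step.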

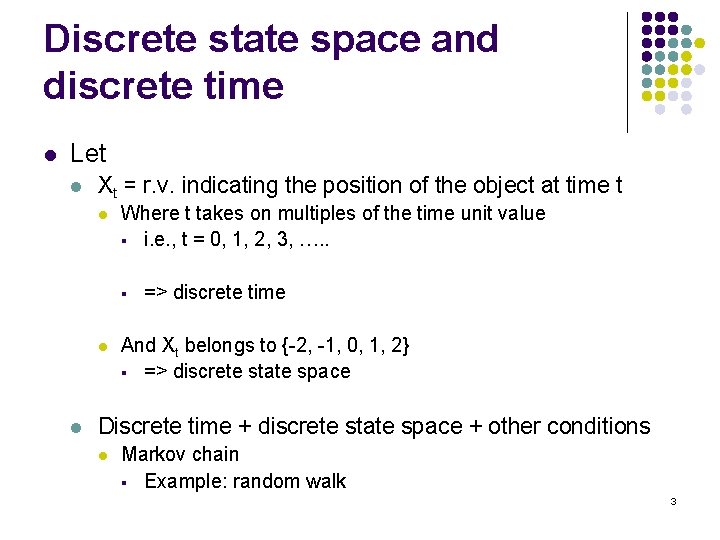

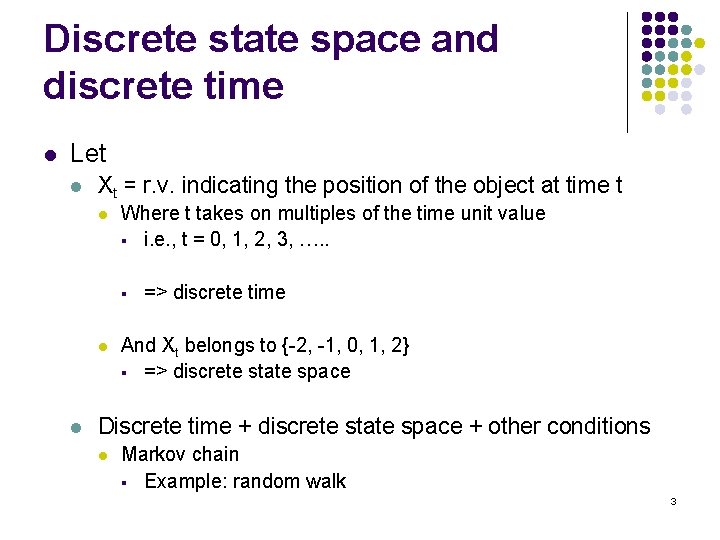

Discrete state space and discrete time

- Let $X_t$ = r.v. indicating the position of the object at time $t$
  - Where $t$ takes on multiples of the time unit, i.e., $t = 0, 1, 2, 3, \dots$ => discrete time
  - And $X_t$ belongs to $\{-2, -1, 0, 1, 2\}$ => discrete state space
- Discrete time + discrete state space + other conditions => Markov chain
  - Example: random walk

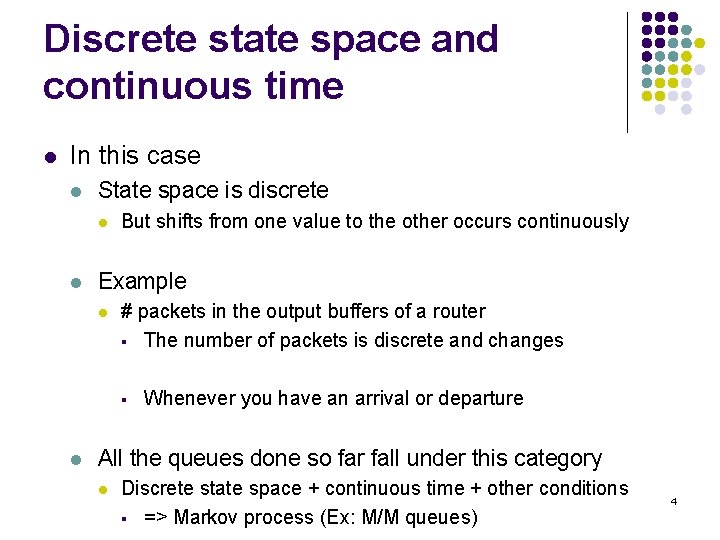

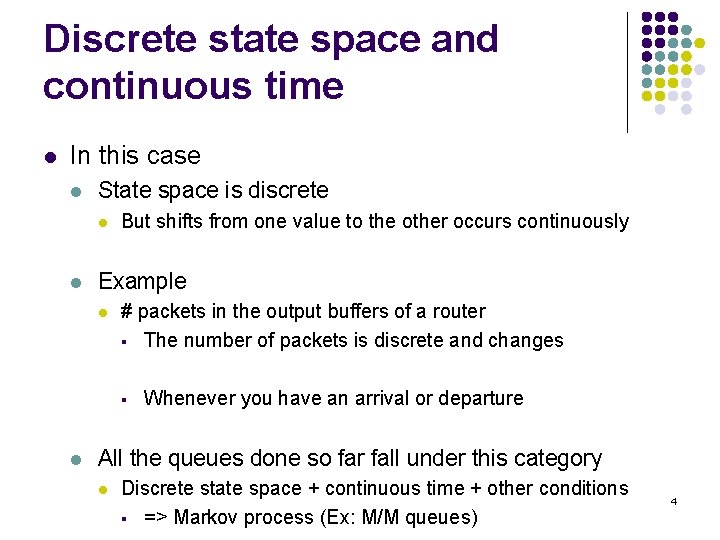

Discrete state space and continuous time

- In this case
  - The state space is discrete
  - But a shift from one value to another can occur at any instant
- Example
  - Number of packets in the output buffers of a router
    - The number of packets is discrete and changes whenever you have an arrival or a departure
- All the queues done so far fall under this category
- Discrete state space + continuous time + other conditions => Markov process (Ex: M/M queues)

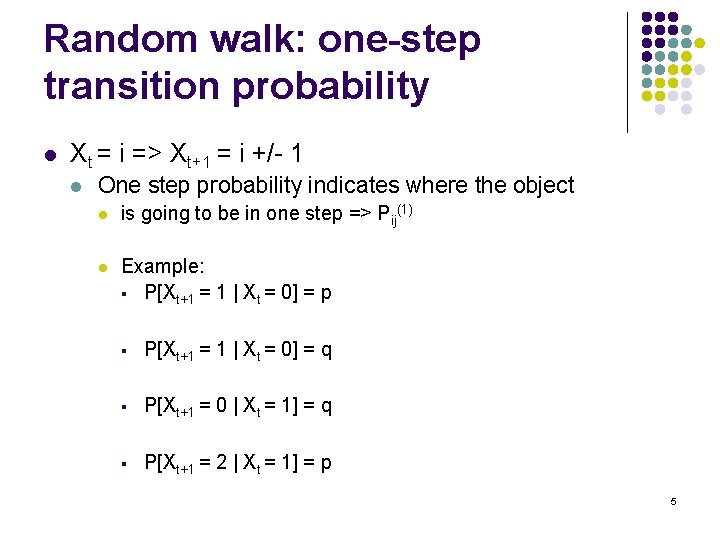

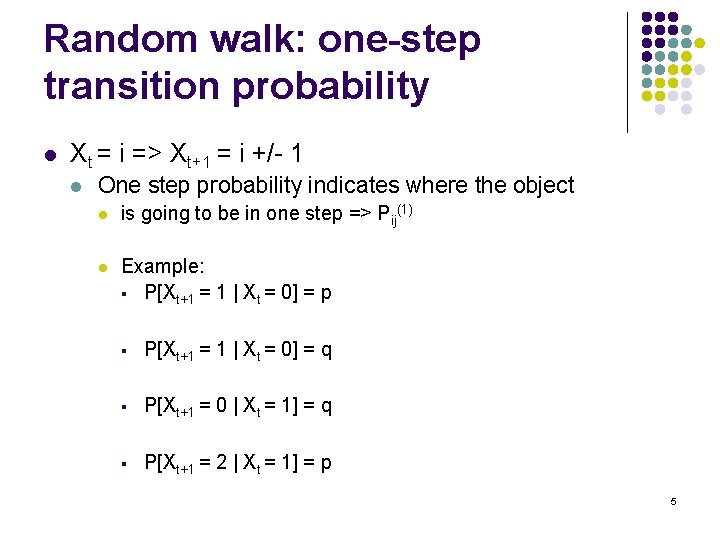

Random walk: one-step transition probability

- $X_t = i$ => $X_{t+1} = i \pm 1$ (away from the barriers)
- The one-step probability indicates where the object is going to be in one step => $P_{ij}(1)$
- Example:
  - $P[X_{t+1} = 1 \mid X_t = 0] = p$
  - $P[X_{t+1} = -1 \mid X_t = 0] = q$
  - $P[X_{t+1} = 0 \mid X_t = 1] = q$
  - $P[X_{t+1} = 2 \mid X_t = 1] = p$

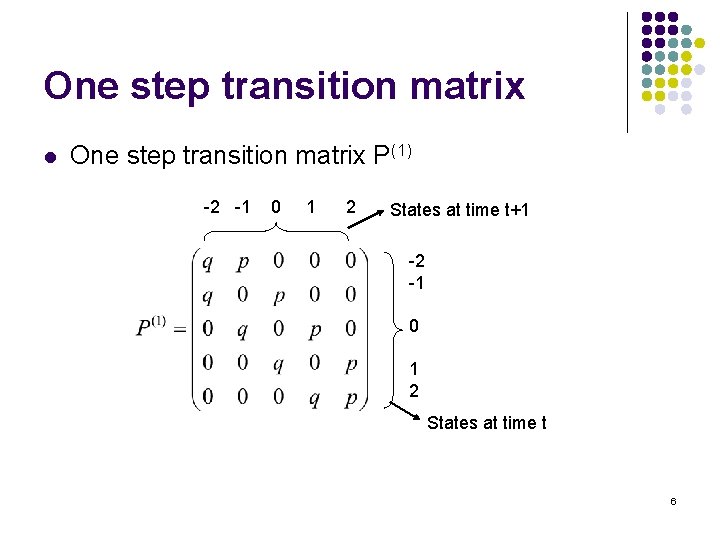

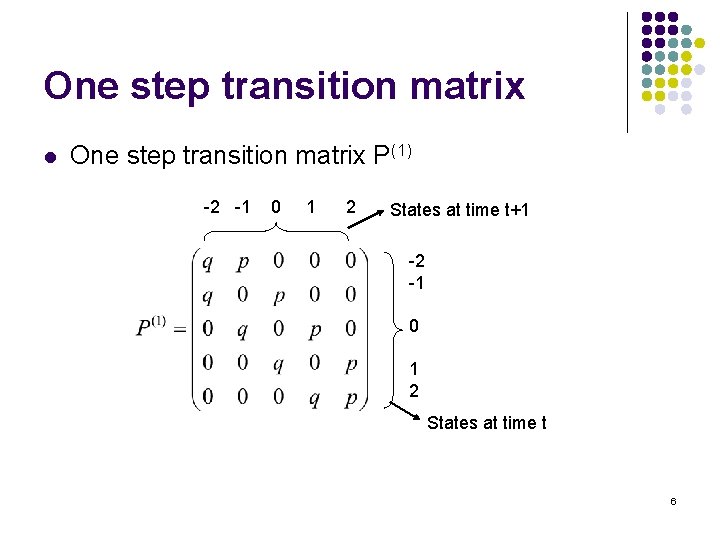

One-step transition matrix

- One-step transition matrix $P(1)$
  - Rows: states at time $t$ (-2, -1, 0, 1, 2)
  - Columns: states at time $t+1$ (-2, -1, 0, 1, 2)
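The entries themselves live in the slide's figure. Under the reflective-barrier rule stated earlier (a blocked step leaves the object where it is), the matrix would read as follows. This is a reconstruction from that rule, not a copy of the figure.

$$
P(1) =
\begin{array}{c|ccccc}
   & -2 & -1 & 0 & 1 & 2 \\ \hline
-2 & q & p & 0 & 0 & 0 \\
-1 & q & 0 & p & 0 & 0 \\
 0 & 0 & q & 0 & p & 0 \\
 1 & 0 & 0 & q & 0 & p \\
 2 & 0 & 0 & 0 & q & p
\end{array}
$$

Each row sums to $p + q = 1$. The diagonal entries in the first and last rows are the "bounce back" probabilities.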

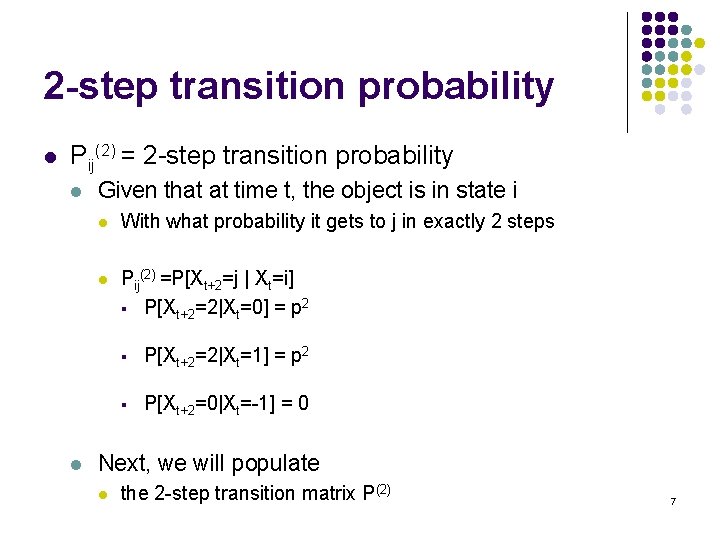

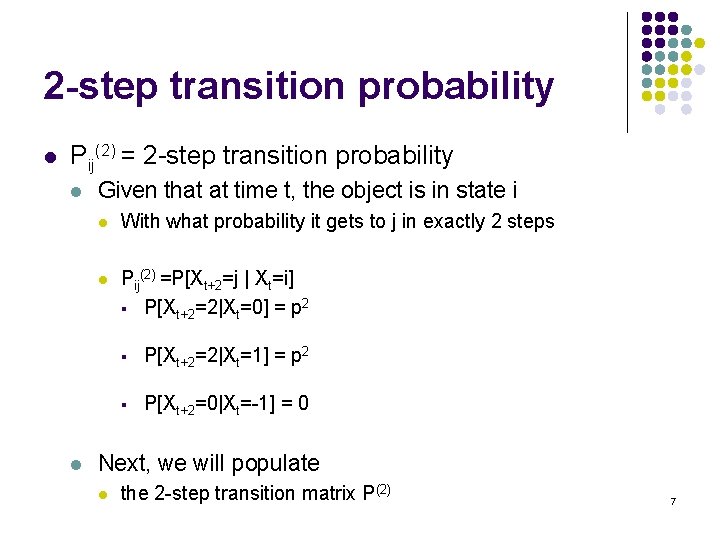

2-step transition probability

- $P_{ij}(2)$ = 2-step transition probability
  - Given that at time $t$ the object is in state $i$
  - With what probability does it get to $j$ in exactly 2 steps?
- $P_{ij}(2) = P[X_{t+2} = j \mid X_t = i]$
  - $P[X_{t+2} = 2 \mid X_t = 0] = p^2$
  - $P[X_{t+2} = 2 \mid X_t = 1] = p^2$
  - $P[X_{t+2} = 0 \mid X_t = -1] = 0$
- Next, we will populate the 2-step transition matrix $P(2)$

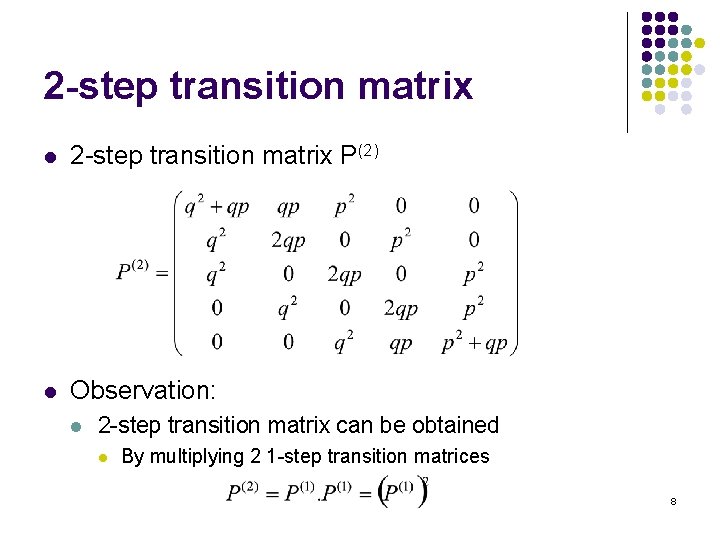

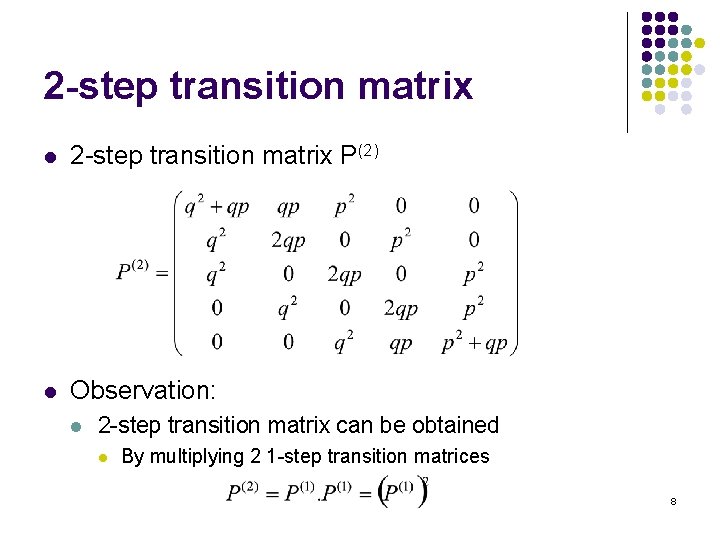

2-step transition matrix

- 2-step transition matrix $P(2)$
- Observation:
  - The 2-step transition matrix can be obtained by multiplying two 1-step transition matrices: $P(2) = P(1)\,P(1)$
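The observation can be checked numerically with a small sketch. The helpers `one_step_matrix` and `matmul` are hypothetical names, $q = 1 - p$ is assumed, and the one-step matrix is built from the reflective-barrier rule rather than copied from the slide's figure.

```python
def one_step_matrix(p, n_states=5):
    """One-step matrix for positions -2..2 (indices 0..4), reflective barriers."""
    q = 1 - p  # assumption: the object always moves right or left
    P = [[0.0] * n_states for _ in range(n_states)]
    for i in range(n_states):
        P[i][max(i - 1, 0)] += q             # step left (blocked at the left wall)
        P[i][min(i + 1, n_states - 1)] += p  # step right (blocked at the right wall)
    return P

def matmul(A, B):
    """Plain matrix product of two square lists of lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

p = 0.6
P1 = one_step_matrix(p)
P2 = matmul(P1, P1)        # 2-step matrix as a product of two 1-step matrices

idx = {state: state + 2 for state in range(-2, 3)}  # position -> matrix index
print(P2[idx[0]][idx[2]], P2[idx[1]][idx[2]], P2[idx[-1]][idx[0]])
```

The three printed entries correspond to the three 2-step probabilities listed on the previous slide ($p^2$, $p^2$, and $0$).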

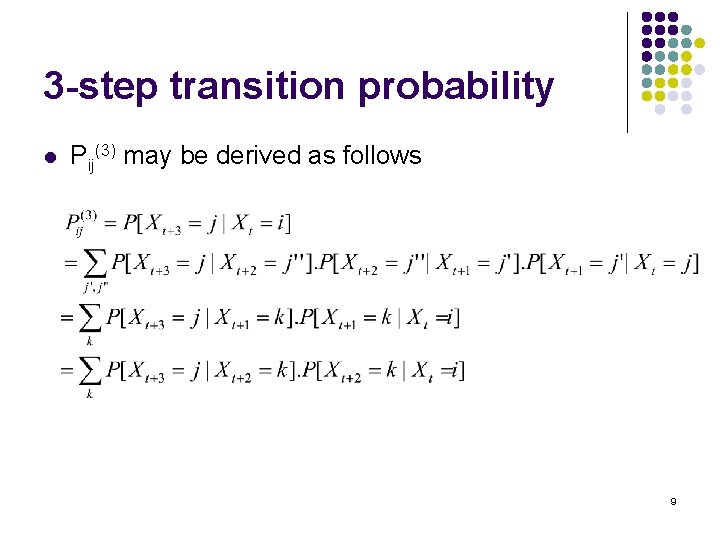

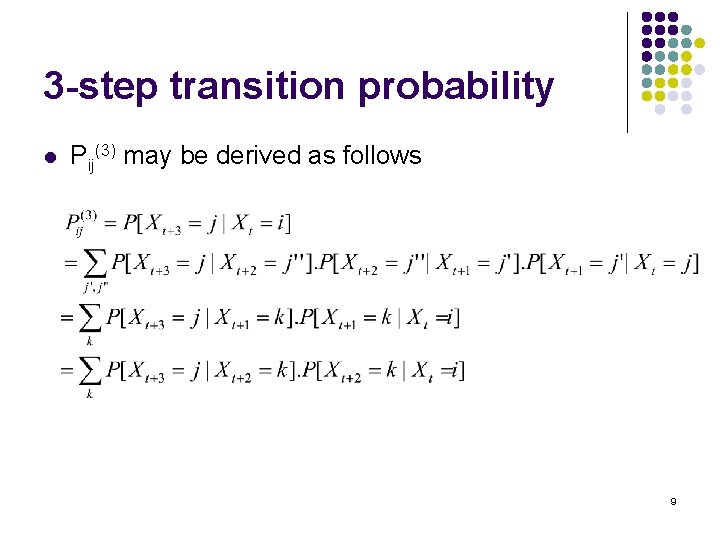

3-step transition probability

- $P_{ij}(3)$ may be derived as follows
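The derivation itself is in the slide's figure. One standard way to write it, consistent with the 2-step observation, is to condition on the state $k$ reached after two steps (the index $k$ is introduced here for illustration):

$$
P_{ij}(3) = \sum_{k} P_{ik}(2)\,P_{kj}(1),
\qquad\text{i.e.}\qquad
P(3) = P(2)\,P(1) = P(1)^3 .
$$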

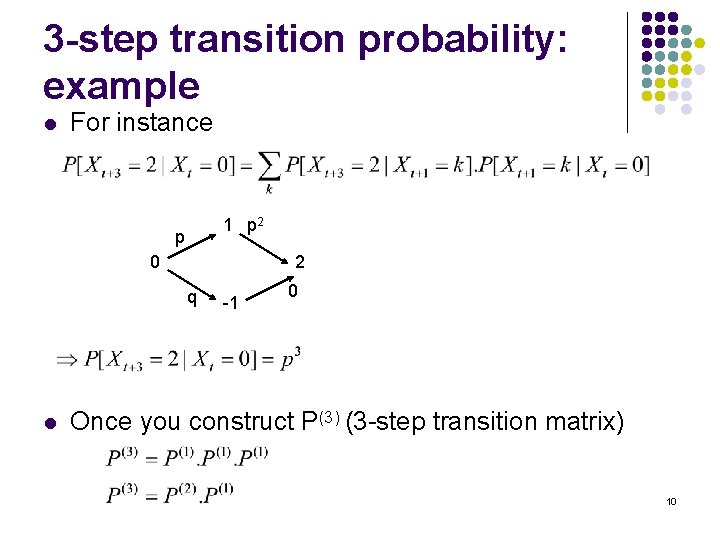

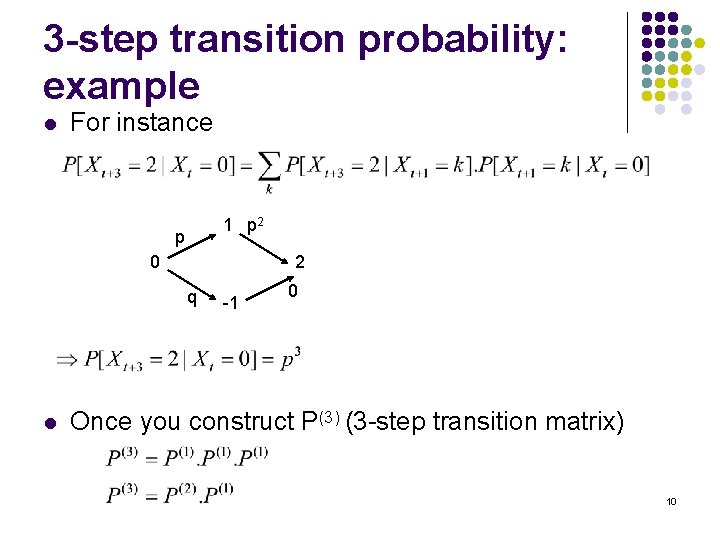

3-step transition probability: example

- For instance (figure: paths between states 0, 1, 2 and -1, with branches labeled $p$ and $q$)
- Once you construct $P(3)$ (3-step transition matrix)

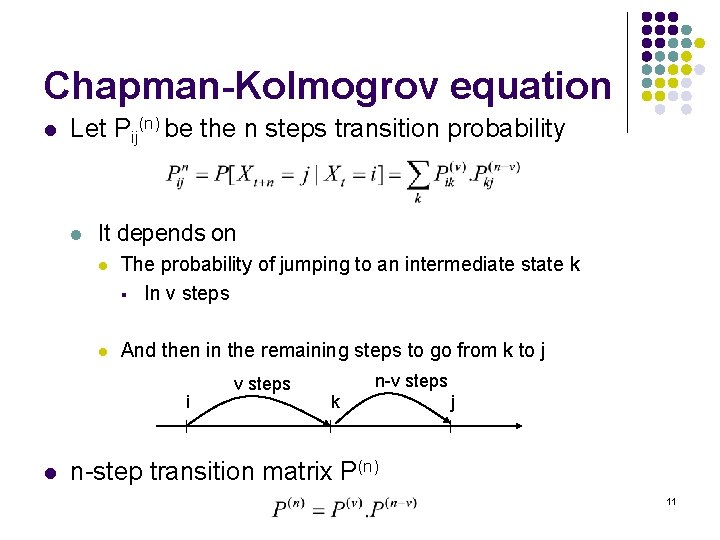

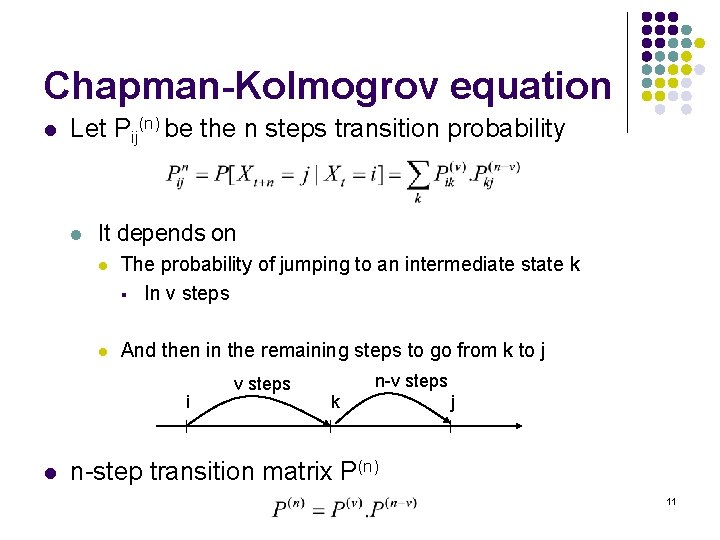

Chapman-Kolmogorov equation

- Let $P_{ij}(n)$ be the $n$-step transition probability
- It depends on
  - The probability of jumping to an intermediate state $k$ in $v$ steps
  - And then, in the remaining steps, going from $k$ to $j$
- (Figure: path from $i$ to $k$ in $v$ steps, then from $k$ to $j$ in $n-v$ steps)
- $n$-step transition matrix $P(n)$
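In symbols, the equation is usually written $P_{ij}(n) = \sum_k P_{ik}(v)\,P_{kj}(n-v)$, or in matrix form $P(n) = P(v)\,P(n-v)$. The sketch below checks this numerically for the random walk. The helper names are hypothetical, $q = 1 - p$ is assumed, and the one-step matrix is built from the reflective-barrier rule.

```python
def one_step_matrix(p, n_states=5):
    """One-step matrix for positions -2..2 (indices 0..4), reflective barriers."""
    q = 1 - p  # assumption: the object always moves right or left
    P = [[0.0] * n_states for _ in range(n_states)]
    for i in range(n_states):
        P[i][max(i - 1, 0)] += q
        P[i][min(i + 1, n_states - 1)] += p
    return P

def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def n_step_matrix(P, n):
    """P(n), computed as the n-fold product of the one-step matrix."""
    size = len(P)
    result = [[float(i == j) for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = matmul(result, P)
    return result

P = one_step_matrix(0.6)
n, v = 5, 2
lhs = n_step_matrix(P, n)                                   # P(n)
rhs = matmul(n_step_matrix(P, v), n_step_matrix(P, n - v))  # P(v) P(n - v)
max_gap = max(abs(a - b) for ra, rb in zip(lhs, rhs) for a, b in zip(ra, rb))
print(max_gap)  # difference is only floating-point rounding
```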

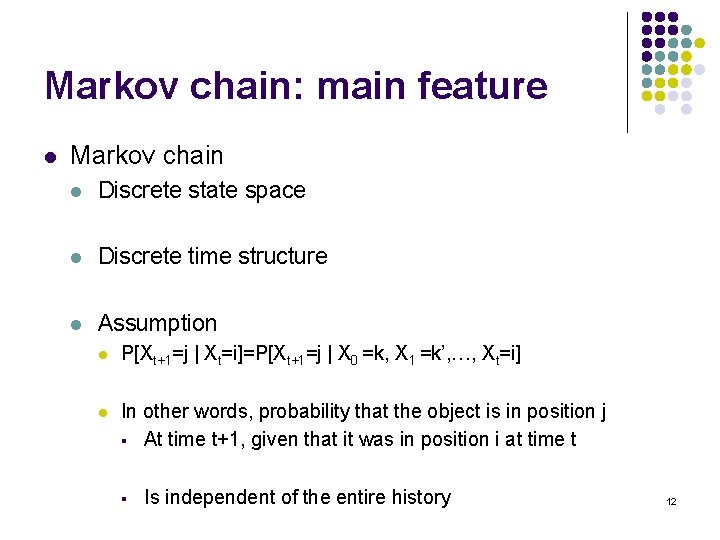

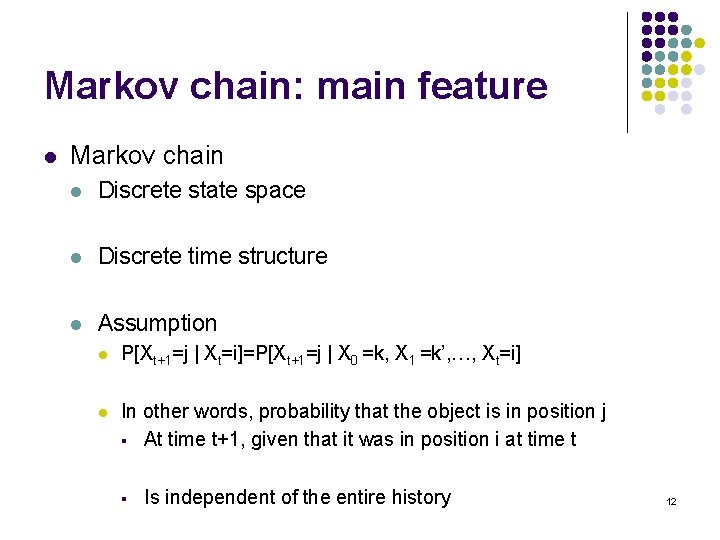

Markov chain: main feature

- Markov chain
  - Discrete state space
  - Discrete time structure
- Assumption
  - $P[X_{t+1} = j \mid X_t = i] = P[X_{t+1} = j \mid X_0 = k, X_1 = k', \dots, X_t = i]$
- In other words, the probability that the object is in position $j$ at time $t+1$, given that it was in position $i$ at time $t$, is independent of the history before time $t$

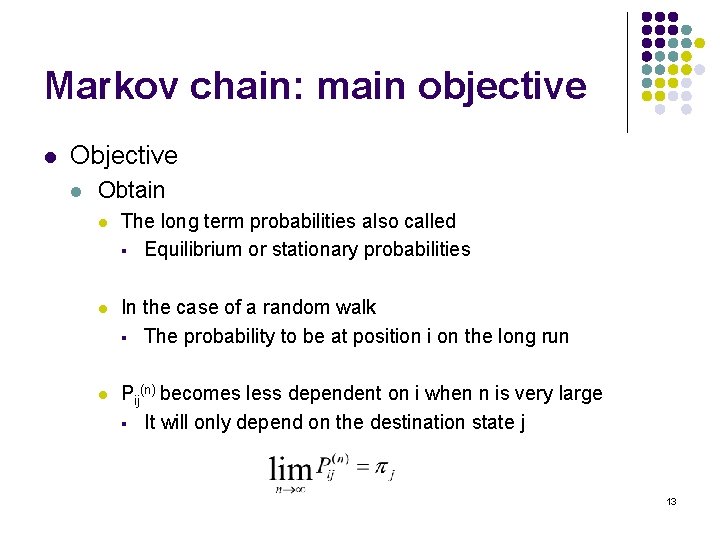

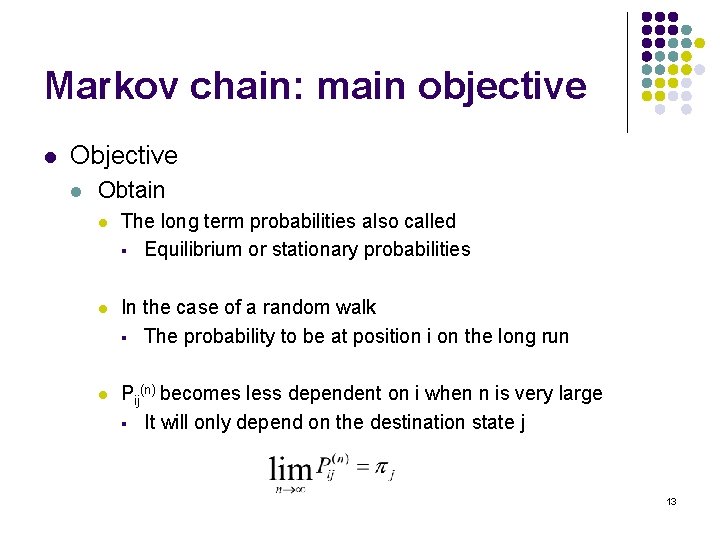

Markov chain: main objective

- Obtain the long-term probabilities, also called equilibrium or stationary probabilities
  - In the case of a random walk: the probability of being at position $i$ in the long run
- $P_{ij}(n)$ becomes less dependent on $i$ when $n$ is very large
  - It will only depend on the destination state $j$

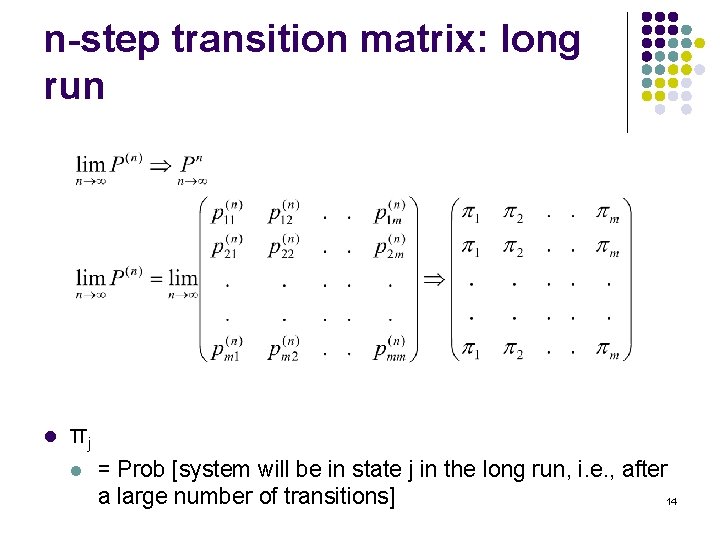

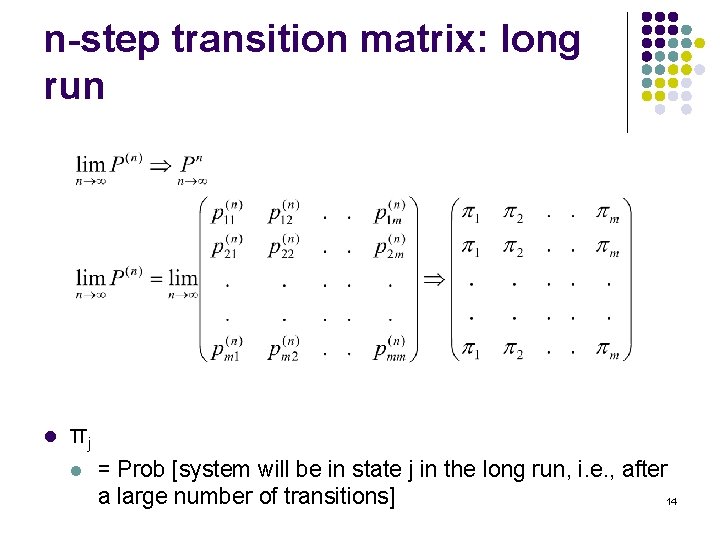

n-step transition matrix: long run

- $\pi_j$ = Prob[system will be in state $j$ in the long run, i.e., after a large number of transitions]
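A quick numerical sketch of this idea for the random walk: for large $n$, every row of $P(n)$ should approach the same vector $(\pi_{-2}, \dots, \pi_2)$. The helper names, the choice $p = 0.6$, and $n = 200$ are assumptions made for illustration, and the matrix is built from the reflective-barrier rule.

```python
def one_step_matrix(p, n_states=5):
    """One-step matrix for positions -2..2 (indices 0..4), reflective barriers."""
    q = 1 - p  # assumption: the object always moves right or left
    P = [[0.0] * n_states for _ in range(n_states)]
    for i in range(n_states):
        P[i][max(i - 1, 0)] += q
        P[i][min(i + 1, n_states - 1)] += p
    return P

def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def matrix_power(P, n):
    size = len(P)
    result = [[float(i == j) for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = matmul(result, P)
    return result

Pn = matrix_power(one_step_matrix(0.6), 200)

# For each destination state j, compare P_ij(n) across all starting states i.
row_spread = max(max(col) - min(col) for col in zip(*Pn))
print(row_spread)                      # close to zero: the start no longer matters
print([round(x, 4) for x in Pn[0]])    # any row approximates the long-run vector
```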

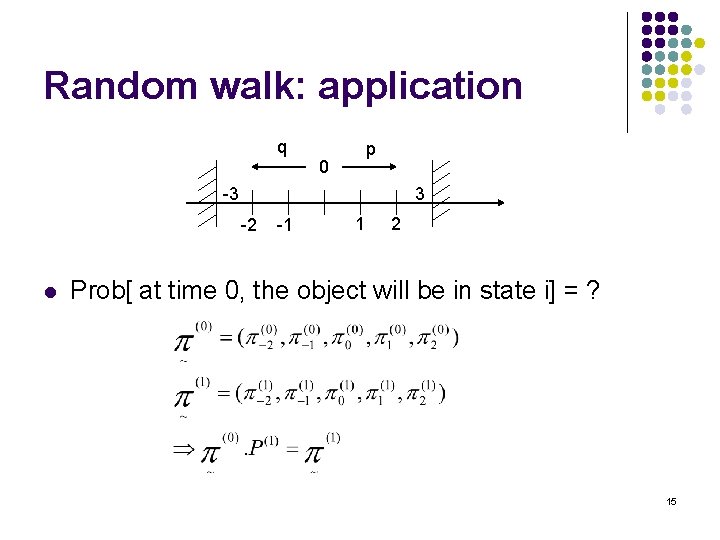

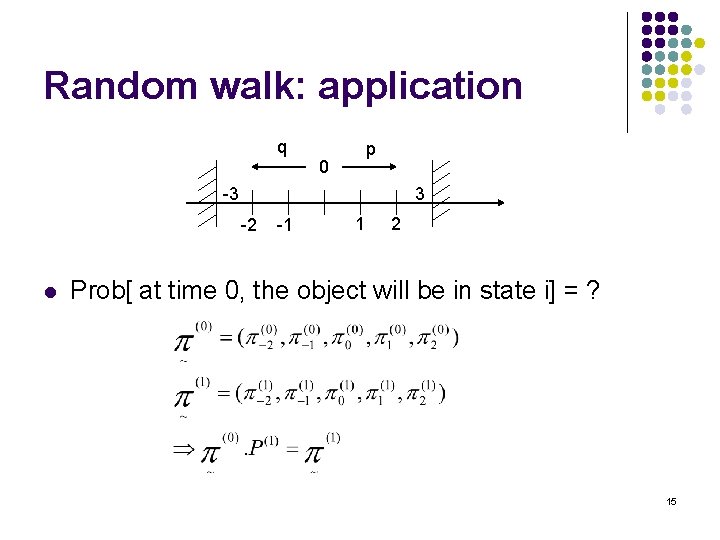

Random walk: application

(Figure: number line marked from -3 to 3, with probability $p$ on a step right and $q$ on a step left)

- Prob[at time 0, the object will be in state $i$] = ?

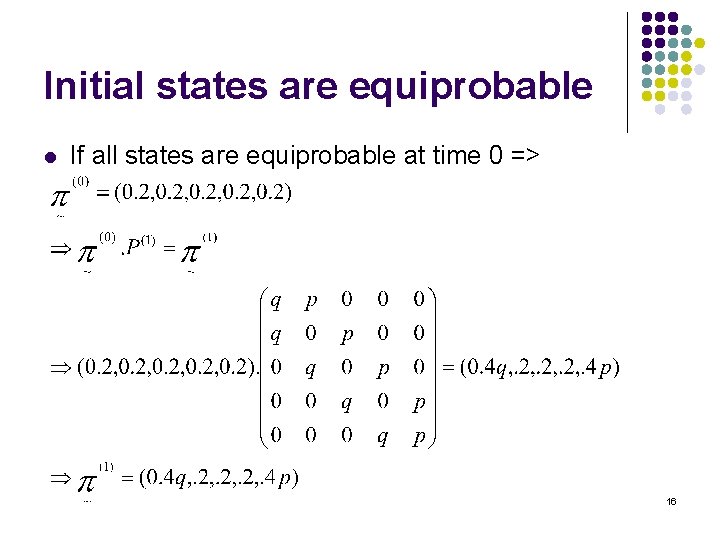

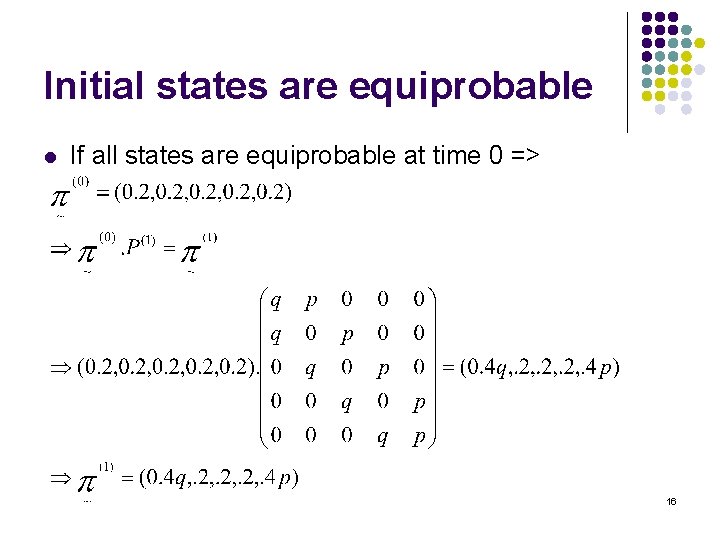

Initial states are equiprobable

- If all states are equiprobable at time 0 =>

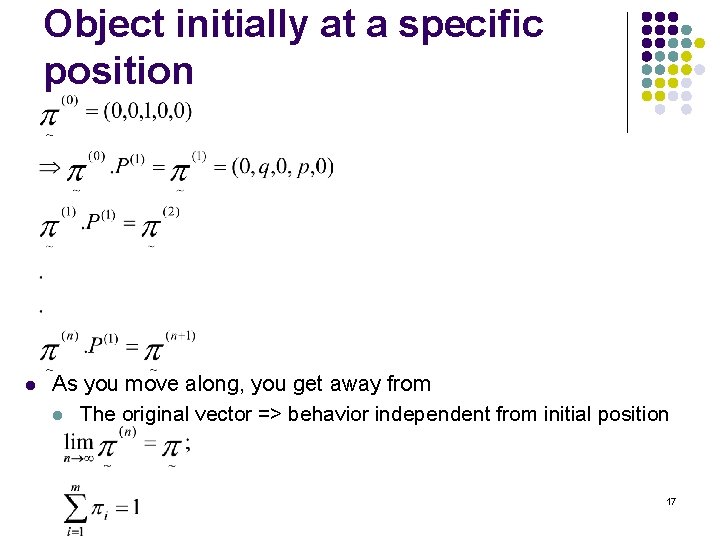

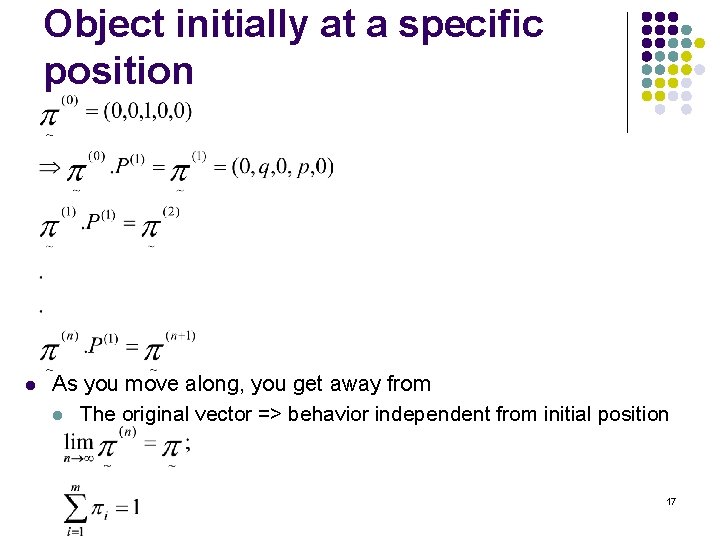

Object initially at a specific position

- As you move along, you get away from the original vector => behavior independent of the initial position
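The two starting situations (equiprobable states, and a known starting position) can be compared with a small sketch that pushes each initial vector through the chain, one step at a time. The names `advance` and `distribution_after` are hypothetical, $p = 0.6$ and $q = 1 - p$ are assumed, and the matrix is built from the reflective-barrier rule.

```python
def one_step_matrix(p, n_states=5):
    """One-step matrix for positions -2..2 (indices 0..4), reflective barriers."""
    q = 1 - p  # assumption: the object always moves right or left
    P = [[0.0] * n_states for _ in range(n_states)]
    for i in range(n_states):
        P[i][max(i - 1, 0)] += q
        P[i][min(i + 1, n_states - 1)] += p
    return P

def advance(dist, P):
    """One time unit: new_dist[j] = sum over i of dist[i] * P[i][j]."""
    size = len(P)
    return [sum(dist[i] * P[i][j] for i in range(size)) for j in range(size)]

def distribution_after(dist, P, n):
    """State probabilities after n steps, starting from the vector dist."""
    for _ in range(n):
        dist = advance(dist, P)
    return dist

P = one_step_matrix(0.6)
uniform = [0.2] * 5                  # all five states equiprobable at time 0
at_zero = [0.0, 0.0, 1.0, 0.0, 0.0]  # object known to start at position 0

for n in (0, 1, 5, 100):
    from_uniform = [round(x, 4) for x in distribution_after(uniform, P, n)]
    from_zero = [round(x, 4) for x in distribution_after(at_zero, P, n)]
    print(n, from_uniform, from_zero)
```

For small `n` the two columns differ, and for large `n` they agree to the printed precision.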

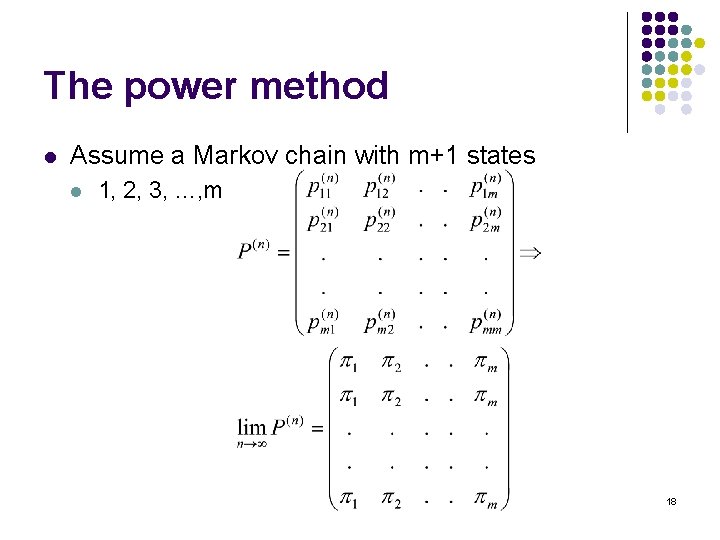

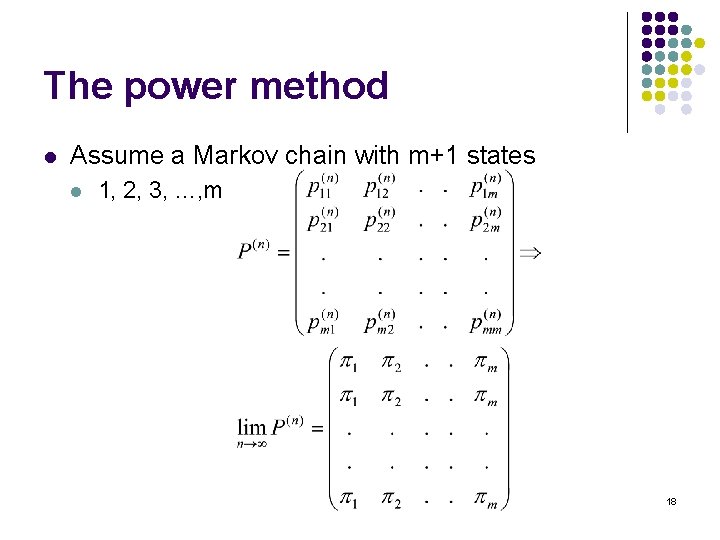

The power method

- Assume a Markov chain with $m$ states: $1, 2, 3, \dots, m$
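The slide's own formulas are in the figure. A minimal sketch of the power method as it is commonly stated: start from any probability vector and multiply by the transition matrix until the vector stops changing. The function name `power_method`, the tolerance, the iteration cap, and the random-walk test matrix are all assumptions made for illustration.

```python
def one_step_matrix(p, n_states=5):
    """Example chain: random walk on -2..2 with reflective barriers."""
    q = 1 - p  # assumption: the object always moves right or left
    P = [[0.0] * n_states for _ in range(n_states)]
    for i in range(n_states):
        P[i][max(i - 1, 0)] += q
        P[i][min(i + 1, n_states - 1)] += p
    return P

def power_method(P, tol=1e-12, max_iter=10_000):
    """Iterate pi <- pi P from a uniform start until successive vectors agree."""
    m = len(P)
    pi = [1.0 / m] * m
    for iteration in range(1, max_iter + 1):
        nxt = [sum(pi[i] * P[i][j] for i in range(m)) for j in range(m)]
        if max(abs(a - b) for a, b in zip(pi, nxt)) < tol:
            return nxt, iteration
        pi = nxt
    raise RuntimeError("power method did not converge")

P = one_step_matrix(0.6)
pi, iterations = power_method(P)
print(iterations, [round(x, 4) for x in pi])
```

The stopping rule compares successive vectors, so the iteration count depends on how quickly the chain forgets its starting distribution.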

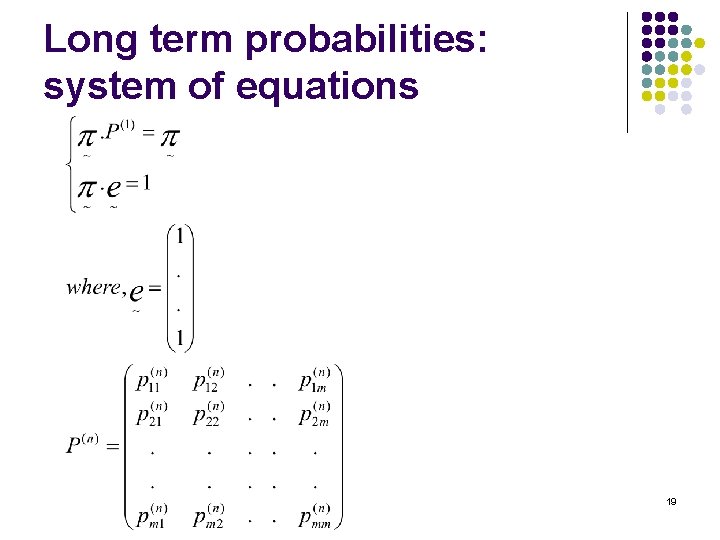

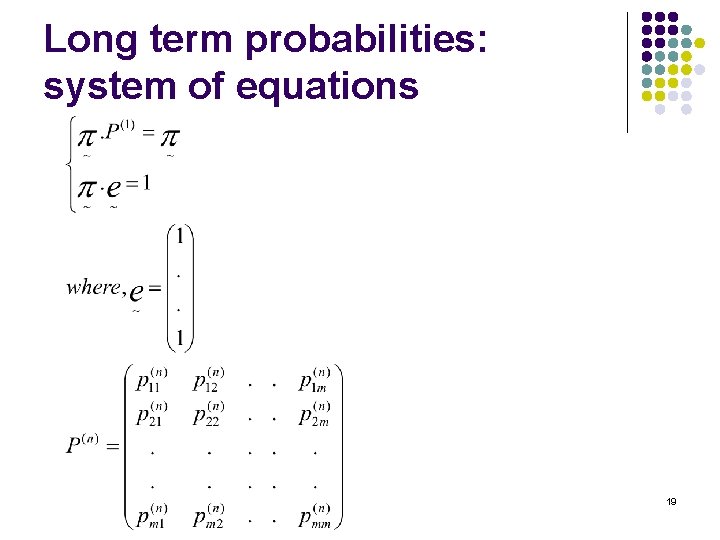

Long-term probabilities: system of equations
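The equations are in the slide's figure. In the usual formulation, with states numbered $1, \dots, m$ and $P_{ij}$ the one-step transition probabilities, the system consists of one balance equation per state plus a normalizing equation:

$$
\pi_j = \sum_{i=1}^{m} \pi_i\,P_{ij} \quad (j = 1, \dots, m),
\qquad
\sum_{j=1}^{m} \pi_j = 1 .
$$

In matrix form the balance equations read $\pi = \pi P$, where $\pi = (\pi_1, \dots, \pi_m)$ is a row vector.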

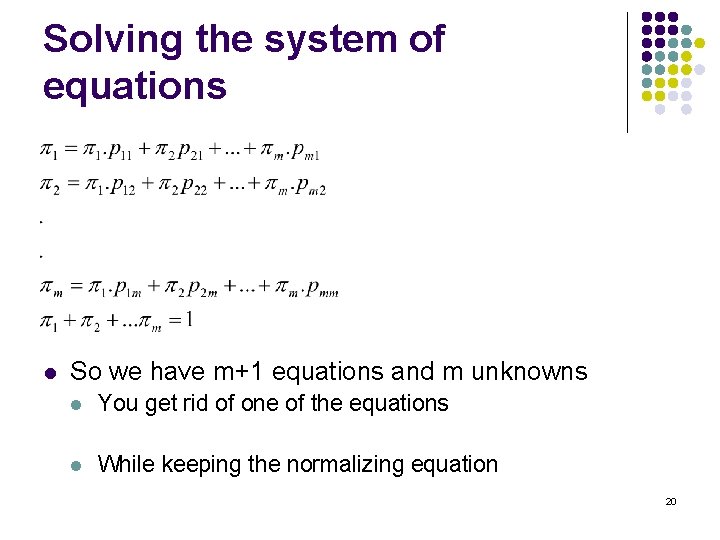

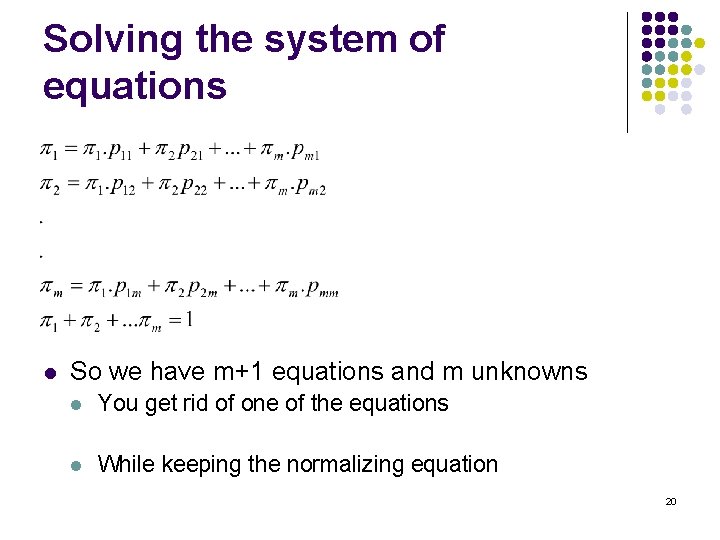

Solving the system of equations

- So we have $m+1$ equations and $m$ unknowns
- You get rid of one of the balance equations (they are linearly dependent, so one is redundant) while keeping the normalizing equation
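A minimal sketch of this procedure, assuming the balance equations are written as $(P^{T} - I)\,\pi = 0$: the last balance equation is replaced by the normalizing equation and the resulting square system is solved by Gaussian elimination. The function names and the random-walk test matrix are hypothetical choices for illustration.

```python
def one_step_matrix(p, n_states=5):
    """Example chain: random walk on -2..2 with reflective barriers."""
    q = 1 - p  # assumption: the object always moves right or left
    P = [[0.0] * n_states for _ in range(n_states)]
    for i in range(n_states):
        P[i][max(i - 1, 0)] += q
        P[i][min(i + 1, n_states - 1)] += p
    return P

def stationary_by_linear_solve(P):
    m = len(P)
    # Row j is the balance equation for state j: sum_i pi_i P[i][j] - pi_j = 0.
    A = [[P[i][j] - (1.0 if i == j else 0.0) for i in range(m)] for j in range(m)]
    b = [0.0] * m
    # Drop the last balance equation and put the normalizing equation in its place.
    A[-1] = [1.0] * m
    b[-1] = 1.0
    # Gauss-Jordan elimination with partial pivoting on the augmented matrix.
    M = [row + [rhs] for row, rhs in zip(A, b)]
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(m):
            if r != col:
                factor = M[r][col] / M[col][col]
                M[r] = [x - factor * y for x, y in zip(M[r], M[col])]
    return [M[i][m] / M[i][i] for i in range(m)]

P = one_step_matrix(0.6)
pi = stationary_by_linear_solve(P)
print([round(x, 4) for x in pi])
```

Which balance equation is dropped does not matter here, since any one of them follows from the others.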

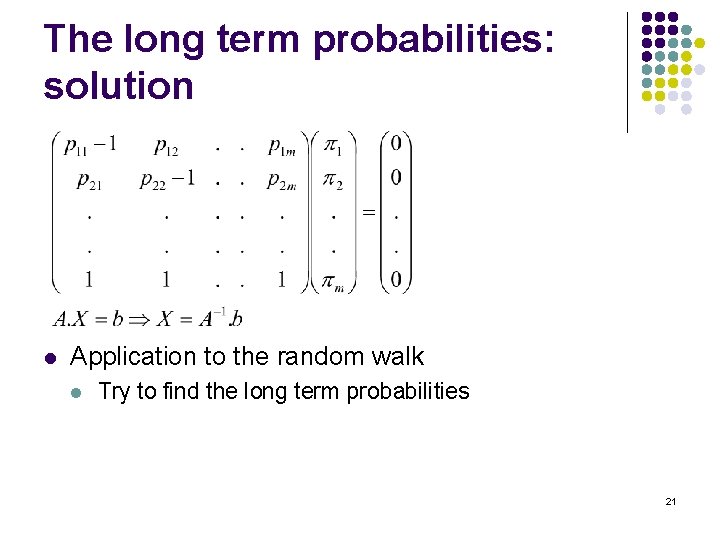

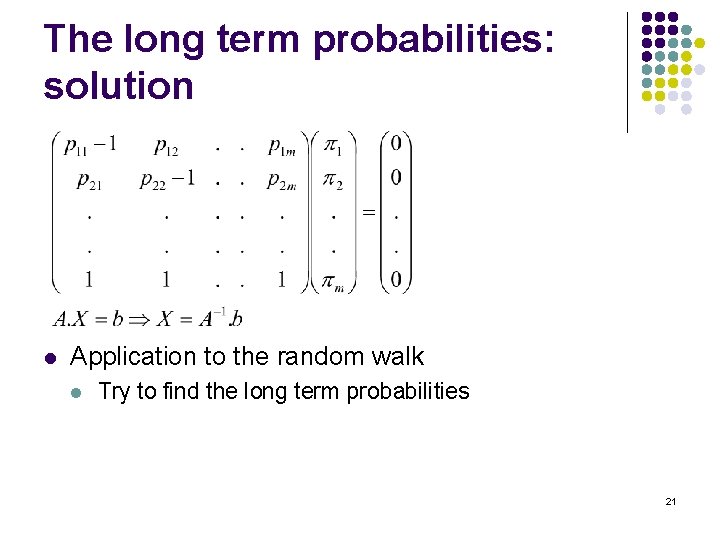

The long-term probabilities: solution

- Application to the random walk
  - Try to find the long-term probabilities
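The slide's worked solution is in the figure. As a sketch, assuming the one-step matrix implied by the reflective-barrier rule (a blocked step keeps the object in place) and writing $r = p/q$ for convenience, the balance equation for state $-2$ gives

$$
\pi_{-2} = q\,\pi_{-2} + q\,\pi_{-1}
\;\Longrightarrow\;
p\,\pi_{-2} = q\,\pi_{-1}
\;\Longrightarrow\;
\pi_{-1} = r\,\pi_{-2},
$$

and the remaining balance equations give $\pi_{j+1} = r\,\pi_j$ in the same way. Normalizing,

$$
\pi_j = \frac{r^{\,j+2}}{1 + r + r^2 + r^3 + r^4},
\qquad j = -2, -1, 0, 1, 2 .
$$

For $p = q$ this reduces to $\pi_j = 1/5$ for every position.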

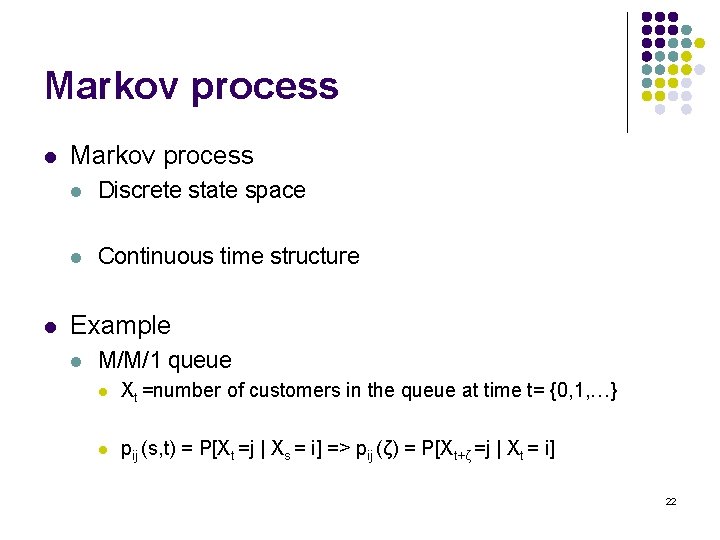

Markov process

- Markov process
  - Discrete state space
  - Continuous time structure
- Example
  - M/M/1 queue
  - $X_t$ = number of customers in the queue at time $t$, with $X_t \in \{0, 1, \dots\}$
  - $p_{ij}(s, t) = P[X_t = j \mid X_s = i]$ => $p_{ij}(\zeta) = P[X_{t+\zeta} = j \mid X_t = i]$

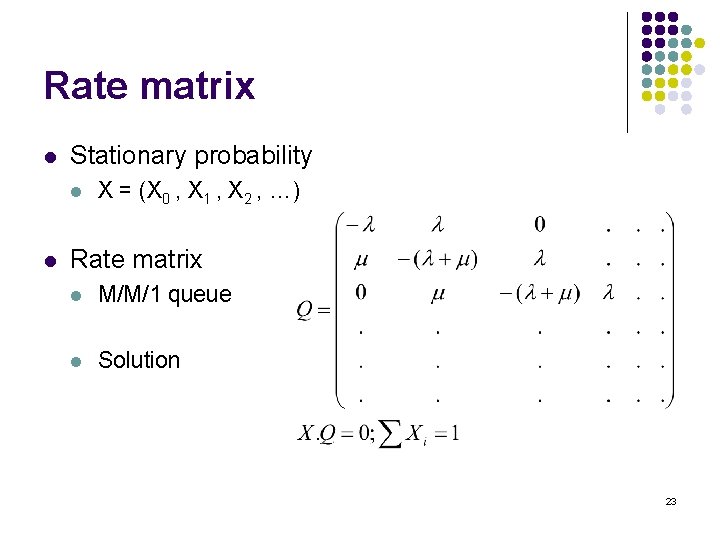

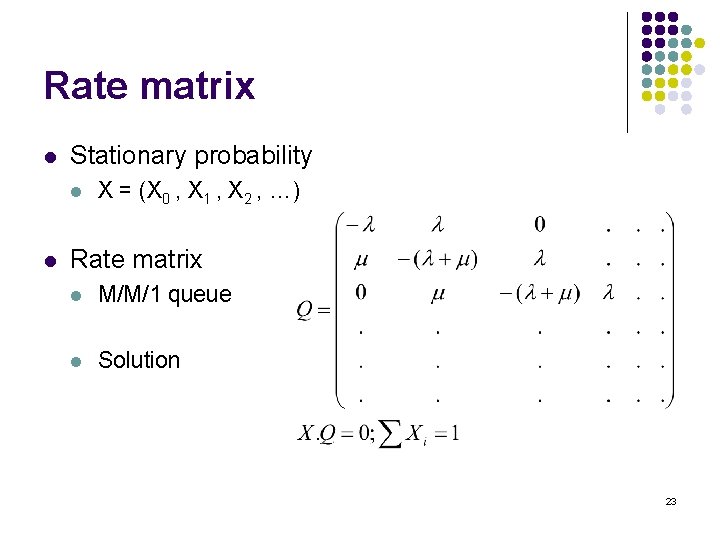

Rate matrix

- Stationary probability
  - $X = (X_0, X_1, X_2, \dots)$
- Rate matrix
  - M/M/1 queue
- Solution
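The matrix and the solution are in the slide's figure. For reference, the standard M/M/1 result is sketched here under assumed notation that does not appear in the extracted text: arrival rate $\lambda$, service rate $\mu$, rate matrix $Q$, utilization $\rho = \lambda/\mu$, and $\pi$ for the stationary vector (which the slide appears to call $X$).

$$
Q =
\begin{pmatrix}
-\lambda & \lambda & 0 & 0 & \cdots \\
\mu & -(\lambda+\mu) & \lambda & 0 & \cdots \\
0 & \mu & -(\lambda+\mu) & \lambda & \cdots \\
\vdots & & \ddots & \ddots & \ddots
\end{pmatrix},
\qquad
\pi Q = 0,
\qquad
\sum_{n \ge 0} \pi_n = 1 .
$$

Solving row by row gives $\pi_{n+1} = \rho\,\pi_n$, so for $\rho < 1$

$$
\pi_n = (1 - \rho)\,\rho^{\,n}, \qquad n = 0, 1, 2, \dots
$$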