
Managing Wire Delay in Large CMP Caches

Bradford M. Beckmann and David A. Wood
Multifacet Project, University of Wisconsin-Madison
MICRO 2004, 12/8/04

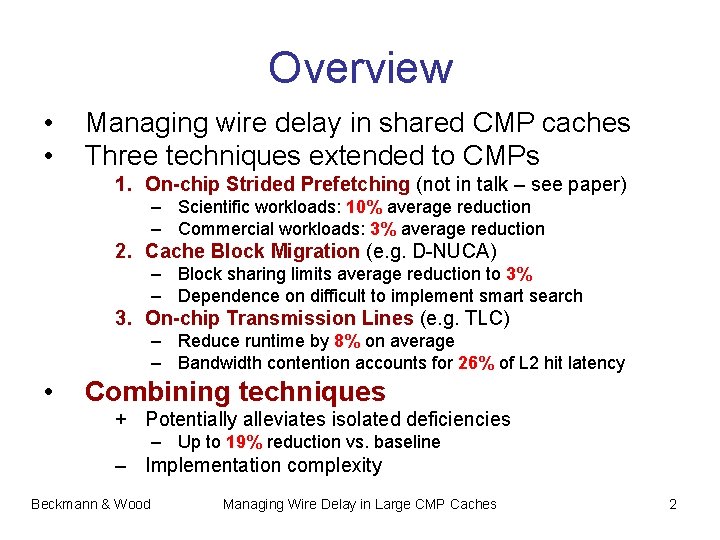

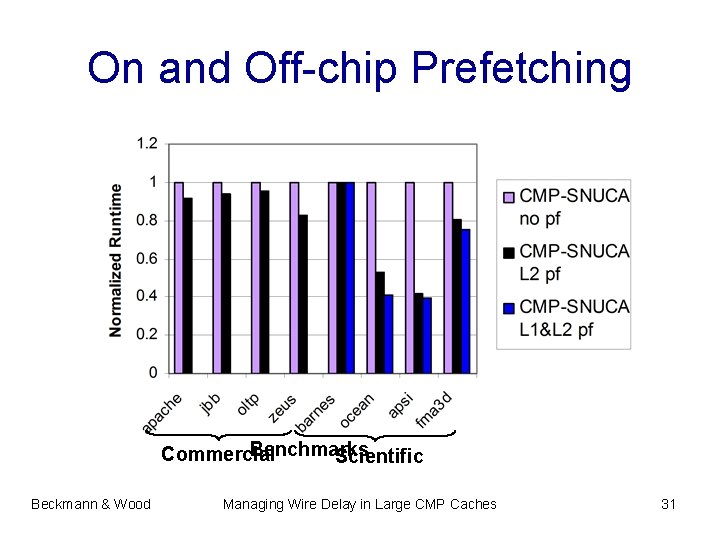

Overview

- Managing wire delay in shared CMP caches
- Three techniques extended to CMPs
  1. On-chip Strided Prefetching (not in talk; see paper)
     - Scientific workloads: 10% average reduction
     - Commercial workloads: 3% average reduction
  2. Cache Block Migration (e.g. D-NUCA)
     - Block sharing limits average reduction to 3%
     - Dependence on difficult-to-implement smart search
  3. On-chip Transmission Lines (e.g. TLC)
     - Reduce runtime by 8% on average
     - Bandwidth contention accounts for 26% of L2 hit latency
- Combining techniques
  - Advantage: potentially alleviates isolated deficiencies
  - Up to 19% reduction vs. baseline
  - Implementation complexity

Beckmann & Wood, Managing Wire Delay in Large CMP Caches

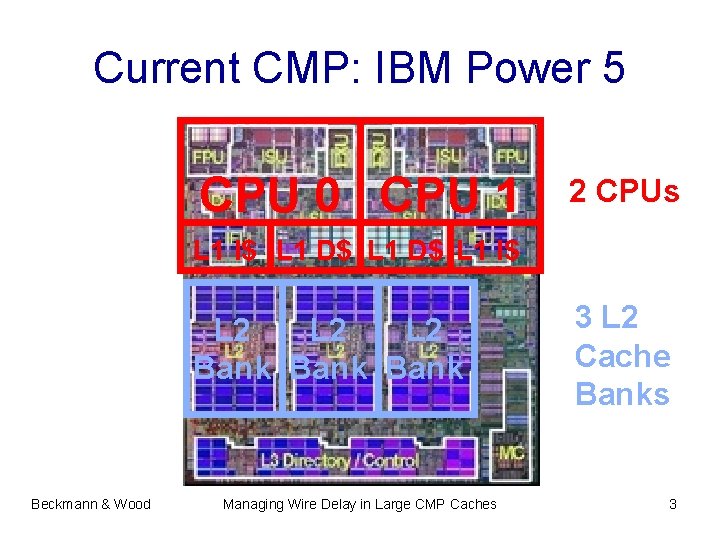

Current CMP: IBM Power5

Figure labels: 2 CPUs (CPU 0, CPU 1) with L1 I$ and L1 D$; 3 L2 cache banks.

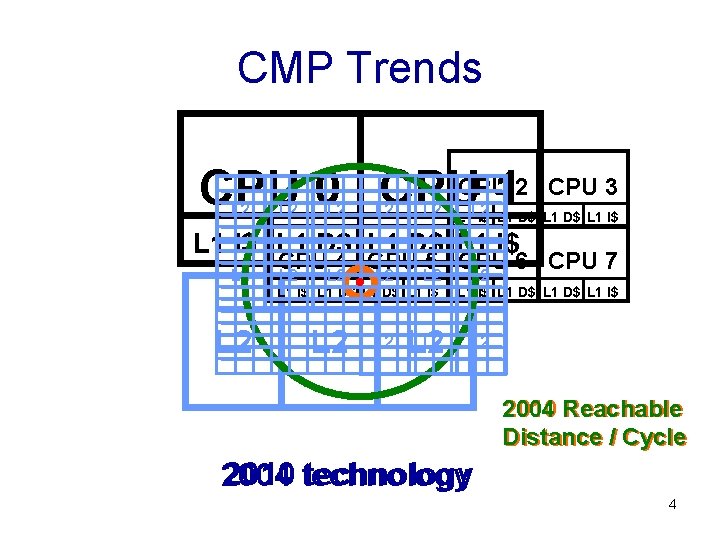

CMP Trends

Figure labels: CPU 0 to CPU 7 with L1 I$, L1 D$, and L2; years 2004 and 2010; reachable distance per cycle for 2004 and 2010 technology.

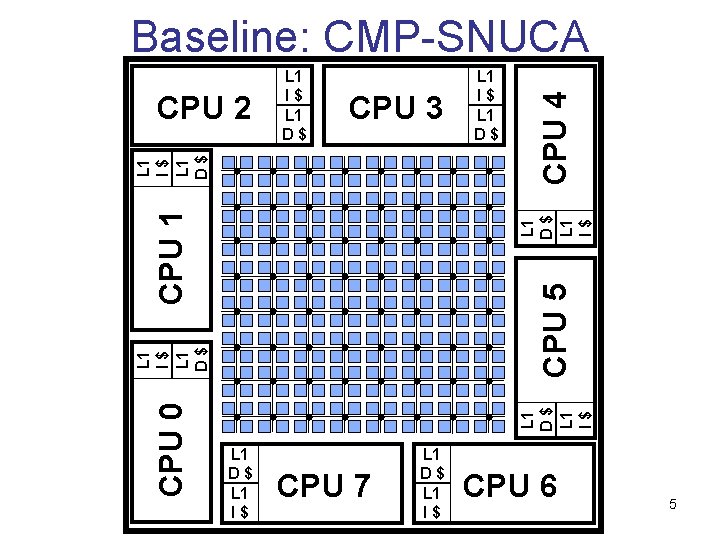

Baseline: CMP-SNUCA

Figure labels: CPU 0 to CPU 7 with their L1 I$ and L1 D$ caches.

Outline

- Global interconnect and CMP trends
- Latency Management Techniques
- Evaluation
  - Methodology
  - Block Migration: CMP-DNUCA
  - Transmission Lines: CMP-TLC
  - Combination: CMP-Hybrid

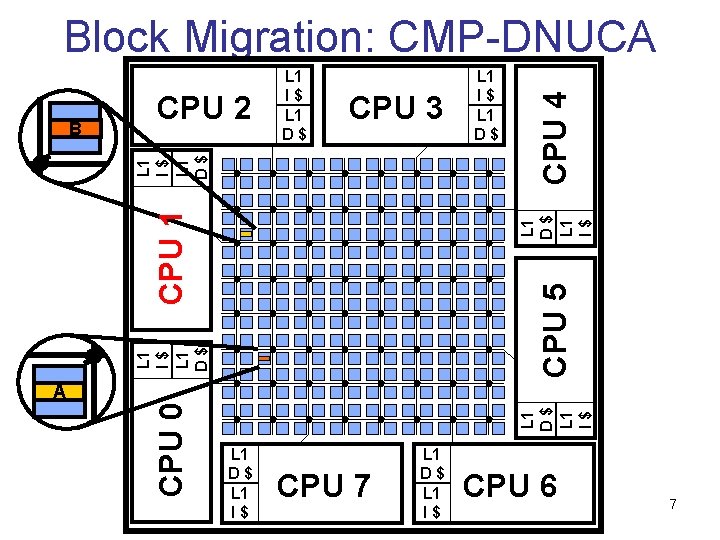

Block Migration: CMP-DNUCA

Figure labels: CPU 0 to CPU 7 with their L1 I$ and L1 D$ caches; blocks A and B, each shown at two positions.

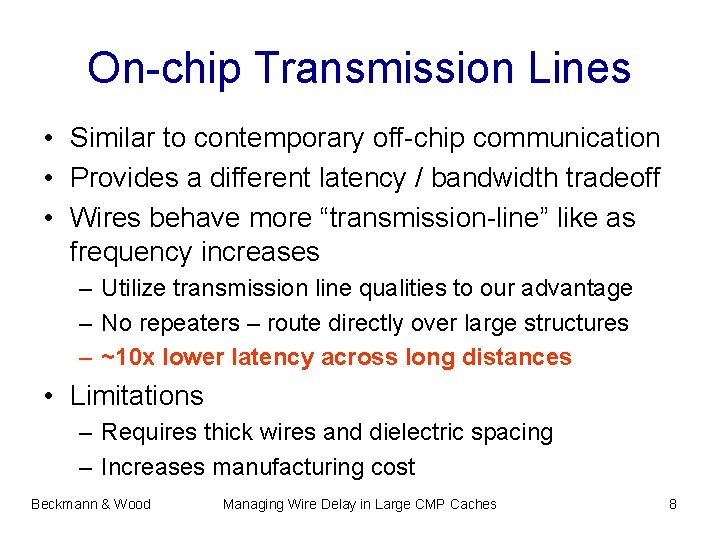

On-chip Transmission Lines

- Similar to contemporary off-chip communication
- Provides a different latency / bandwidth tradeoff
- Wires behave more “transmission-line” like as frequency increases
  - Utilize transmission line qualities to our advantage
  - No repeaters: route directly over large structures
  - ~10x lower latency across long distances
- Limitations
  - Requires thick wires and dielectric spacing
  - Increases manufacturing cost

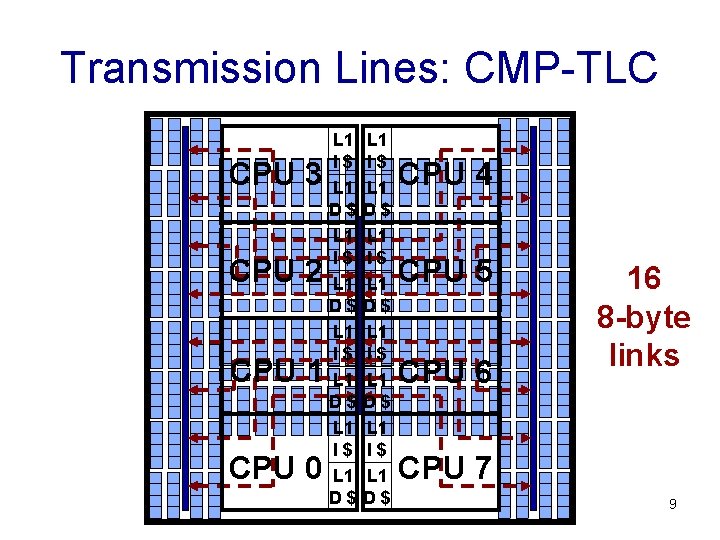

Transmission Lines: CMP-TLC

Figure labels: CPU 0 to CPU 7 with L1 I$ and L1 D$ caches; 16 8-byte links.

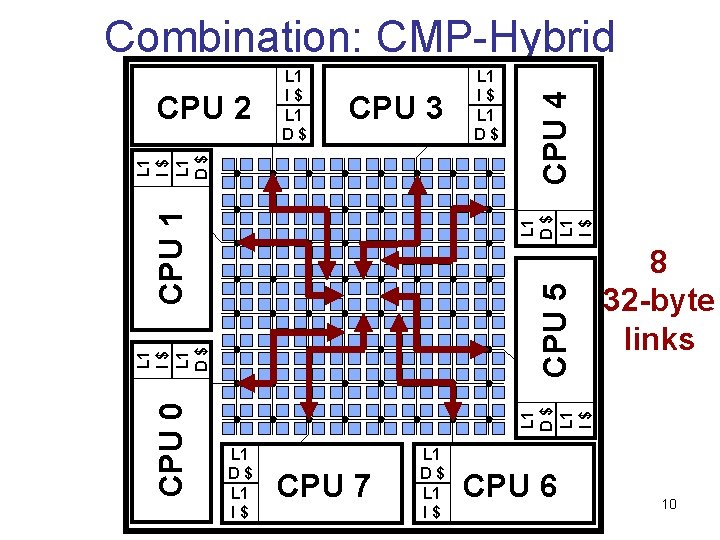

Combination: CMP-Hybrid

Figure labels: CPU 0 to CPU 7 with their L1 I$ and L1 D$ caches; 8 32-byte links.
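The two link configurations (16 8-byte links in CMP-TLC, 8 32-byte links in CMP-Hybrid) can be compared with some rough arithmetic. The sketch below assumes each link moves its full width once per cycle, which the slides do not state. The helper `link_cycles` and the `designs` dictionary are hypothetical names used only for illustration.

```python
import math

BLOCK_BYTES = 64  # L1/L2 cache block size from the System Parameters slide


def link_cycles(link_bytes: int, payload_bytes: int = BLOCK_BYTES) -> int:
    """Cycles to send one payload over a link that moves link_bytes per cycle.

    Assumption (not from the slides): one full-width transfer per cycle.
    """
    return math.ceil(payload_bytes / link_bytes)


# (number of links, bytes per link) as labeled on the two slides
designs = {"CMP-TLC": (16, 8), "CMP-Hybrid": (8, 32)}

summary = {
    name: {
        "aggregate_bytes": links * width,        # total width across all links
        "cycles_per_block": link_cycles(width),  # one 64-byte block on one link
    }
    for name, (links, width) in designs.items()
}

for name, stats in summary.items():
    print(name, stats)
```

Under that assumption, a 64-byte block occupies an 8-byte link for 8 cycles but a 32-byte link for only 2, which gives a feel for why link width matters when bandwidth contention is a concern.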

Outline

- Global interconnect and CMP trends
- Latency Management Techniques
- Evaluation
  - Methodology
  - Block Migration: CMP-DNUCA
  - Transmission Lines: CMP-TLC
  - Combination: CMP-Hybrid

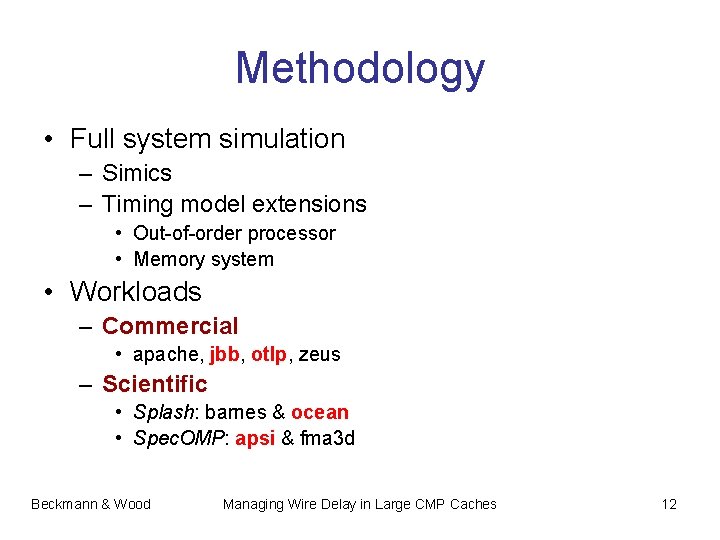

Methodology

- Full system simulation
  - Simics
  - Timing model extensions
    - Out-of-order processor
    - Memory system
- Workloads
  - Commercial
    - apache, jbb, oltp, zeus
  - Scientific
    - Splash: barnes & ocean
    - SpecOMP: apsi & fma3d

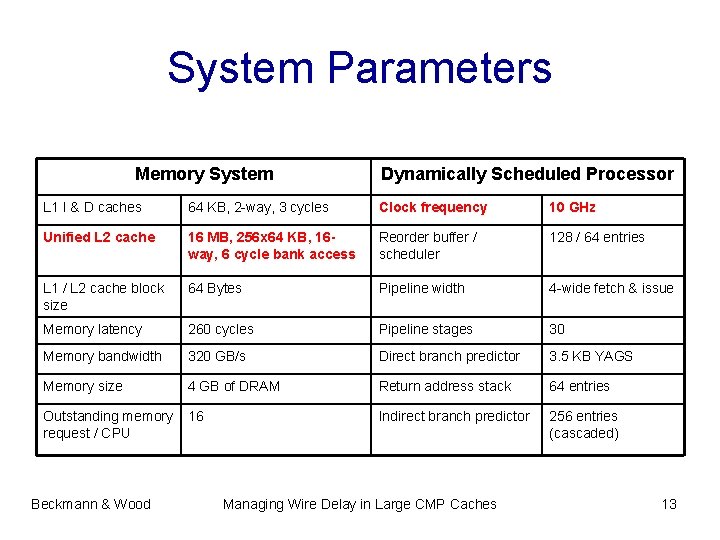

System Parameters

| Memory System | | Dynamically Scheduled Processor | |
|---|---|---|---|
| L1 I & D caches | 64 KB, 2-way, 3 cycles | Clock frequency | 10 GHz |
| Unified L2 cache | 16 MB, 256 x 64 KB, 16-way, 6 cycle bank access | Reorder buffer / scheduler | 128 / 64 entries |
| L1 / L2 cache block size | 64 Bytes | Pipeline width | 4-wide fetch & issue |
| Memory latency | 260 cycles | Pipeline stages | 30 |
| Memory bandwidth | 320 GB/s | Direct branch predictor | 3.5 KB YAGS |
| Memory size | 4 GB of DRAM | Return address stack | 64 entries |
| Outstanding memory requests / CPU | 16 | Indirect branch predictor | 256 entries (cascaded) |
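A few of these parameters can be cross-checked with simple arithmetic. The sketch below uses only the values listed on this slide; the variable names are illustrative, and the bandwidth conversion assumes decimal gigabytes.

```python
# Back-of-the-envelope checks on the listed system parameters.
KB = 1024
MB = 1024 * KB

clock_ghz = 10              # clock frequency in GHz
l2_banks = 256              # number of L2 banks
bank_bytes = 64 * KB        # capacity of each L2 bank
block_bytes = 64            # L1/L2 cache block size
mem_latency_cycles = 260    # memory latency in cycles
mem_bandwidth_gbs = 320     # memory bandwidth in GB/s (decimal GB assumed)

l2_bytes = l2_banks * bank_bytes                      # total L2 capacity
blocks_per_bank = bank_bytes // block_bytes           # 64-byte blocks per bank
mem_latency_ns = mem_latency_cycles / clock_ghz       # cycles / (cycles per ns)
mem_bytes_per_cycle = mem_bandwidth_gbs / clock_ghz   # (GB/s) / GHz = bytes/cycle

print(l2_bytes // MB)        # 16   (matches the 16 MB unified L2)
print(blocks_per_bank)       # 1024
print(mem_latency_ns)        # 26.0
print(mem_bytes_per_cycle)   # 32.0
```

At 10 GHz, the 260-cycle memory latency corresponds to 26 ns, and 320 GB/s works out to 32 bytes per cycle, or one 64-byte block every two cycles.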

Outline

- Global interconnect and CMP trends
- Latency Management Techniques
- Evaluation
  - Methodology
  - Block Migration: CMP-DNUCA
  - Transmission Lines: CMP-TLC
  - Combination: CMP-Hybrid

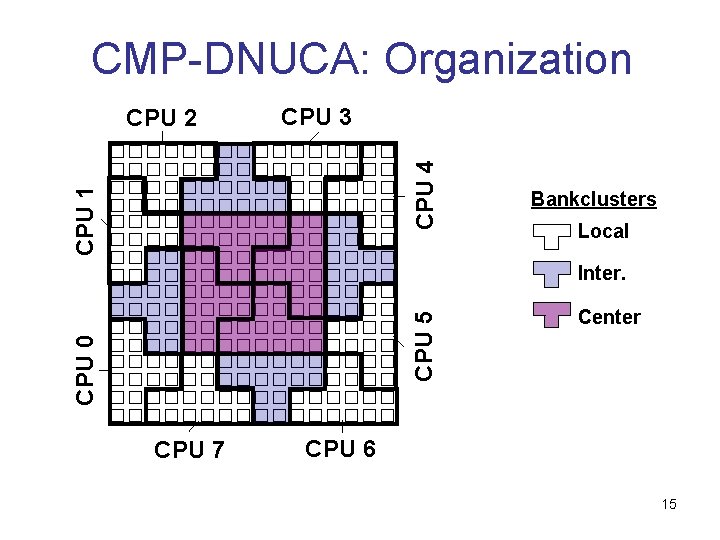

CMP-DNUCA: Organization

Figure labels: CPU 0 to CPU 7; bankclusters: Local, Inter., Center.

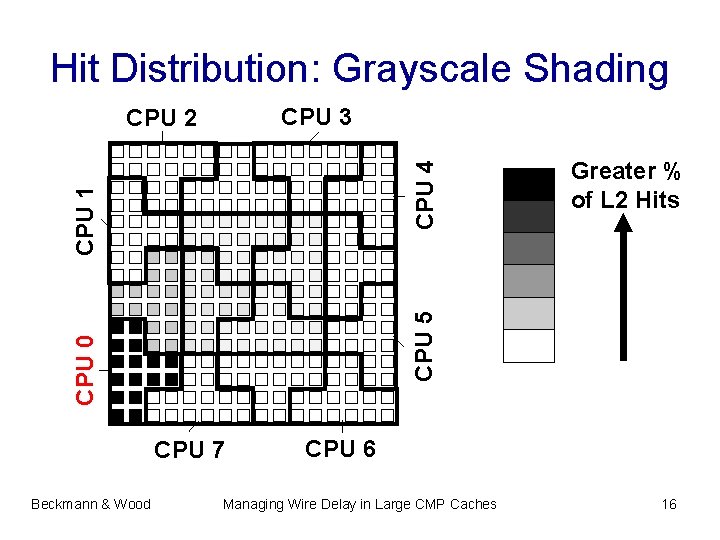

Hit Distribution: Grayscale Shading

Figure labels: CPU 0 to CPU 7; shading legend: greater % of L2 hits.

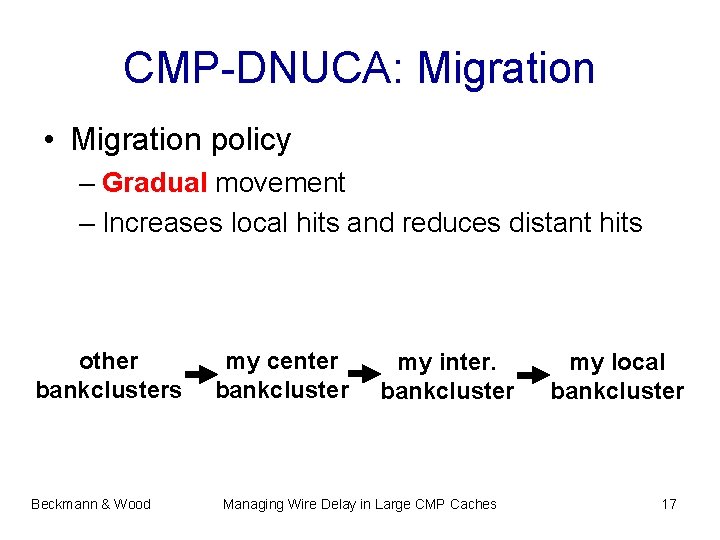

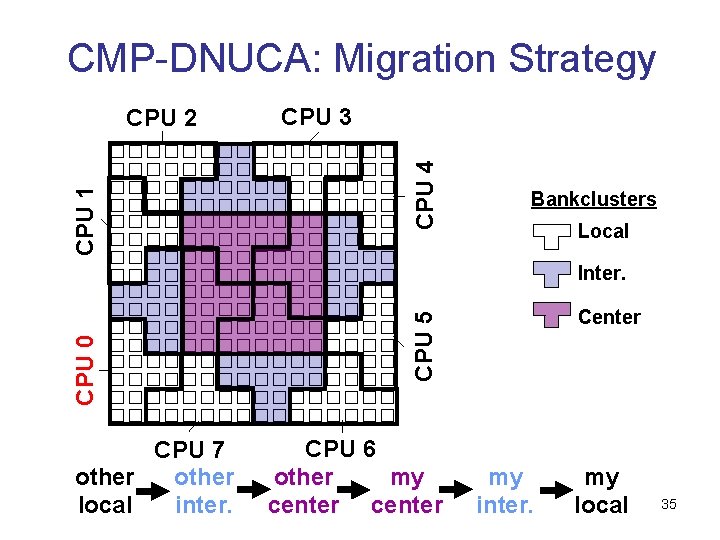

CMP-DNUCA: Migration

- Migration policy
  - Gradual movement
  - Increases local hits and reduces distant hits

Figure labels: other bankclusters, my center bankcluster, my inter. bankcluster, my local bankcluster.
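The gradual movement described on this slide can be illustrated with a small model. This is a minimal sketch, assuming a block moves one step closer to the requesting CPU on each of that CPU's hits; the slide does not state the trigger. The chain order follows the figure labels, and the names `MIGRATION_CHAIN` and `migrate_on_hit` are hypothetical.

```python
# Bankcluster classes relative to the requesting CPU, farthest first.
# Order taken from the figure labels; the names are illustrative.
MIGRATION_CHAIN = ["other", "my_center", "my_inter", "my_local"]


def migrate_on_hit(current: str) -> str:
    """Return where a block sits after one hit by the requesting CPU.

    Assumption: each hit moves the block one step along the chain,
    and a block already in the local bankcluster stays put.
    """
    i = MIGRATION_CHAIN.index(current)
    return MIGRATION_CHAIN[min(i + 1, len(MIGRATION_CHAIN) - 1)]


# Follow one block through four consecutive hits by the same CPU.
location = "other"
history = [location]
for _ in range(4):
    location = migrate_on_hit(location)
    history.append(location)

print(history)
# ['other', 'my_center', 'my_inter', 'my_local', 'my_local']
```

The model covers a single requester only. When several CPUs hit the same block, each one pulls it toward its own side, which is consistent with the slide's point that migration mainly helps when sharing is limited.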

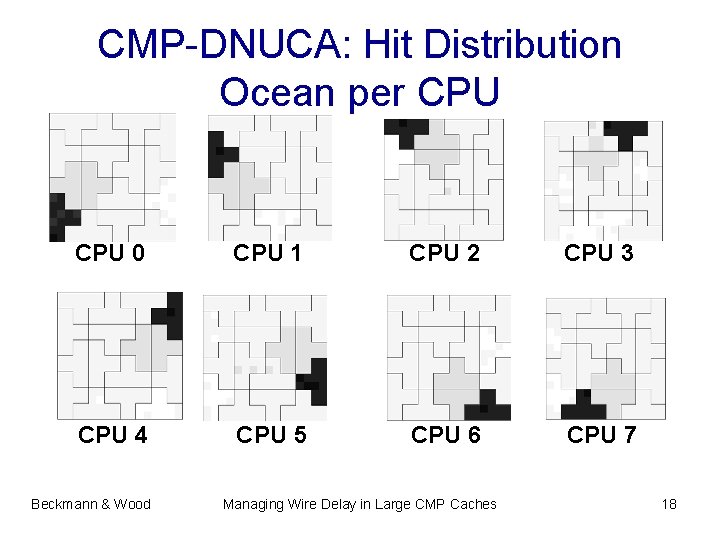

CMP-DNUCA: Hit Distribution, Ocean, per CPU

Figure labels: CPU 0 to CPU 7.

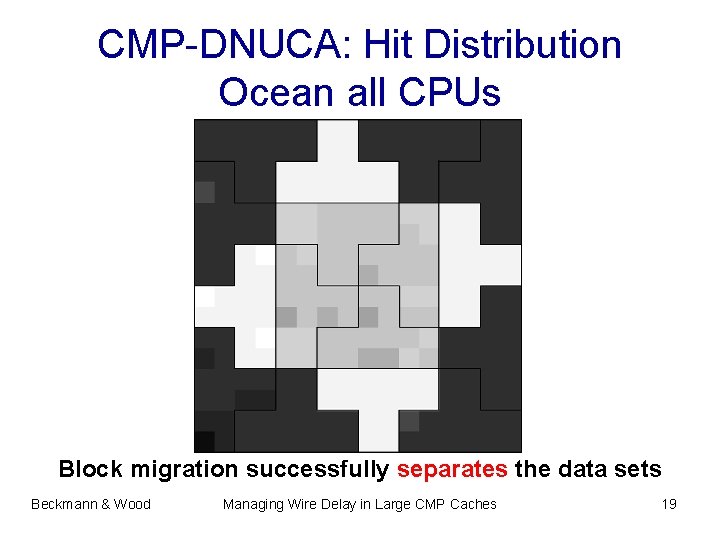

CMP-DNUCA: Hit Distribution, Ocean, all CPUs

Block migration successfully separates the data sets.

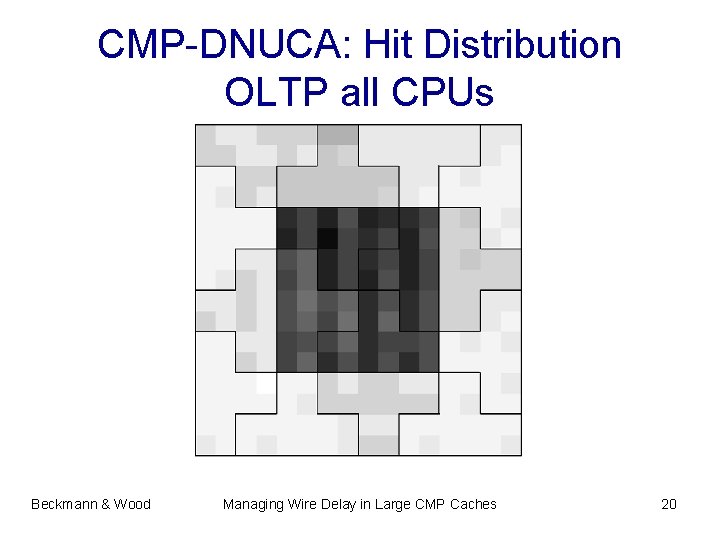

CMP-DNUCA: Hit Distribution, OLTP, all CPUs

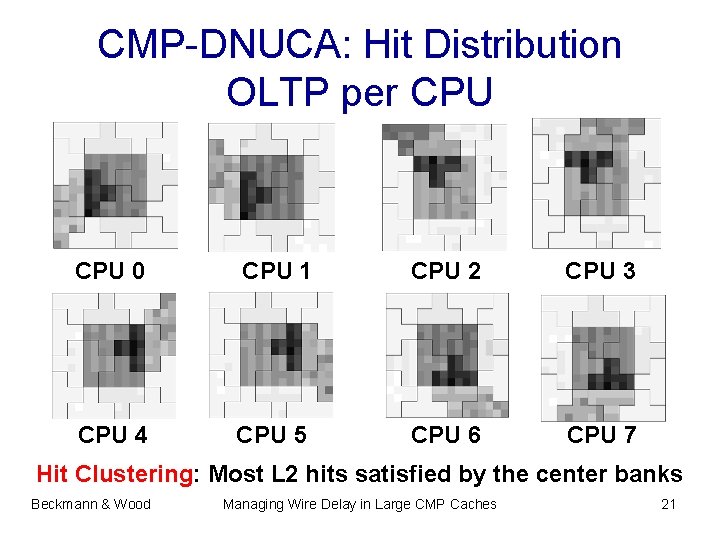

CMP-DNUCA: Hit Distribution, OLTP, per CPU

Figure labels: CPU 0 to CPU 7.

Hit clustering: most L2 hits are satisfied by the center banks.

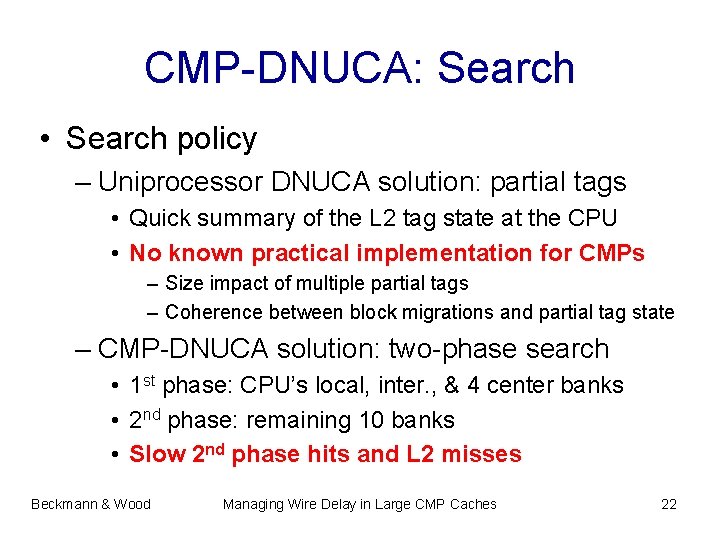

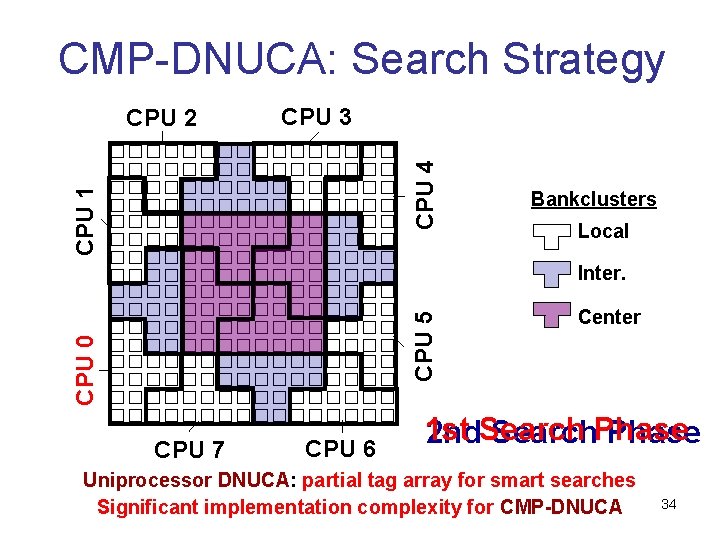

CMP-DNUCA: Search

- Search policy
  - Uniprocessor DNUCA solution: partial tags
    - Quick summary of the L2 tag state at the CPU
    - No known practical implementation for CMPs
      - Size impact of multiple partial tags
      - Coherence between block migrations and partial tag state
  - CMP-DNUCA solution: two-phase search
    - 1st phase: CPU's local, inter., & 4 center banks
    - 2nd phase: remaining 10 banks
    - Slow 2nd phase hits and L2 misses
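The two-phase search can be sketched as follows. The model treats the slide's "banks" as 16 bankclusters (8 local, 4 inter., 4 center), an interpretation consistent with the 6 + 10 split. The mapping of a CPU to its inter. bankcluster (`cpu // 2`) and all function names are assumptions made for illustration.

```python
from typing import Dict, List, Optional, Set, Tuple

NUM_CPUS = 8  # CPU 0 to CPU 7


def bankclusters() -> List[str]:
    """All 16 bankclusters: 8 local, 4 inter., 4 center (assumed split)."""
    local = [f"local{c}" for c in range(NUM_CPUS)]
    inter = [f"inter{i}" for i in range(4)]
    center = [f"center{i}" for i in range(4)]
    return local + inter + center


def search_phases(cpu: int) -> Tuple[List[str], List[str]]:
    """Split the bankclusters into 1st-phase and 2nd-phase sets for one CPU."""
    # Hypothetical mapping: two neighboring CPUs share one inter. bankcluster.
    first = [f"local{cpu}", f"inter{cpu // 2}"] + [f"center{i}" for i in range(4)]
    second = [b for b in bankclusters() if b not in first]
    return first, second


def lookup(cpu: int, block: int,
           contents: Dict[str, Set[int]]) -> Tuple[Optional[str], int]:
    """Return (bankcluster holding the block or None, phase that resolved it).

    Hardware would probe all bankclusters of a phase in parallel; the loop
    here only models which phase finds the block.
    """
    first, second = search_phases(cpu)
    for phase, clusters in enumerate((first, second), start=1):
        for cluster in clusters:
            if block in contents.get(cluster, set()):
                return cluster, phase
    return None, 2  # a miss is only known after the 2nd phase completes


first, second = search_phases(3)
print(len(first), len(second))  # 6 10
```

A block found in a center bankcluster resolves in the 1st phase, while a block sitting in another CPU's local bankcluster, or an L2 miss, has to wait for the 2nd phase. That is the cost the slide calls out.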

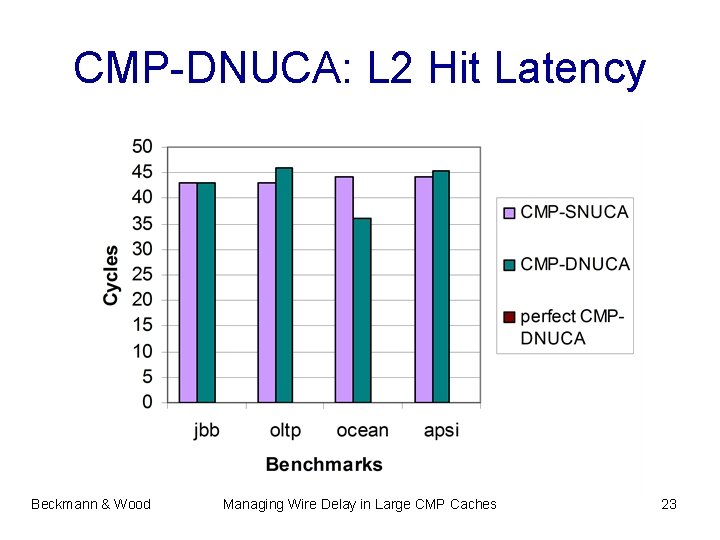

CMP-DNUCA: L2 Hit Latency

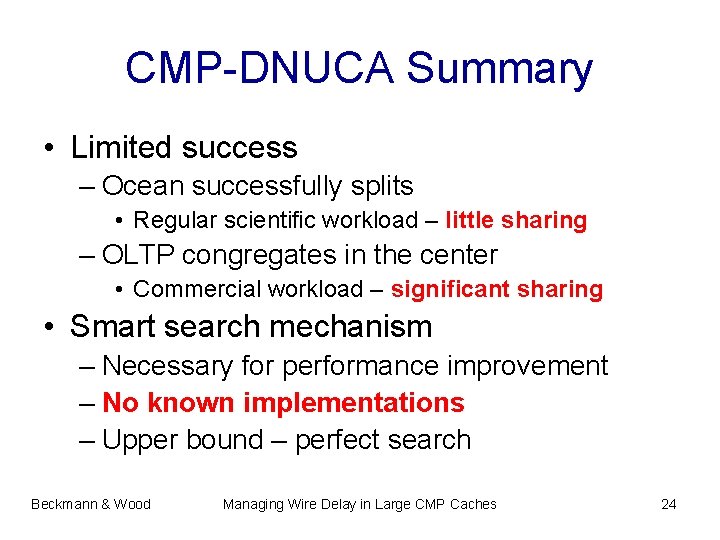

CMP-DNUCA Summary

- Limited success
  - Ocean successfully splits
    - Regular scientific workload: little sharing
  - OLTP congregates in the center
    - Commercial workload: significant sharing
- Smart search mechanism
  - Necessary for performance improvement
  - No known implementations
  - Upper bound: perfect search

Outline

- Global interconnect and CMP trends
- Latency Management Techniques
- Evaluation
  - Methodology
  - Block Migration: CMP-DNUCA
  - Transmission Lines: CMP-TLC
  - Combination: CMP-Hybrid

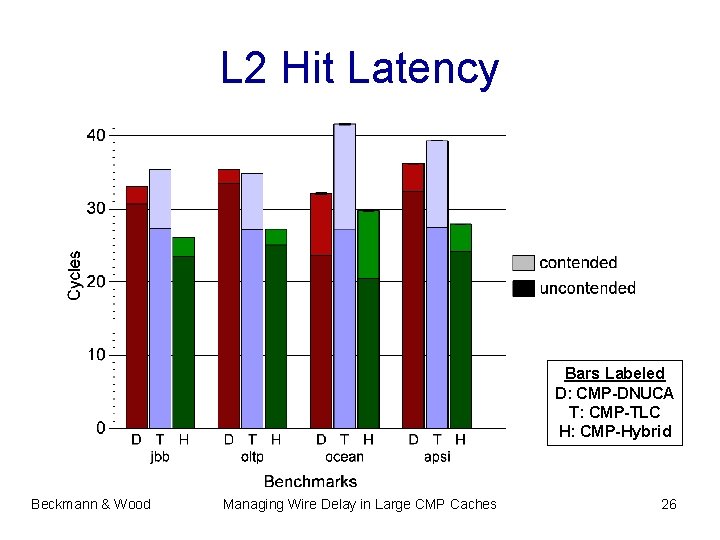

L2 Hit Latency

Bars labeled D: CMP-DNUCA, T: CMP-TLC, H: CMP-Hybrid.

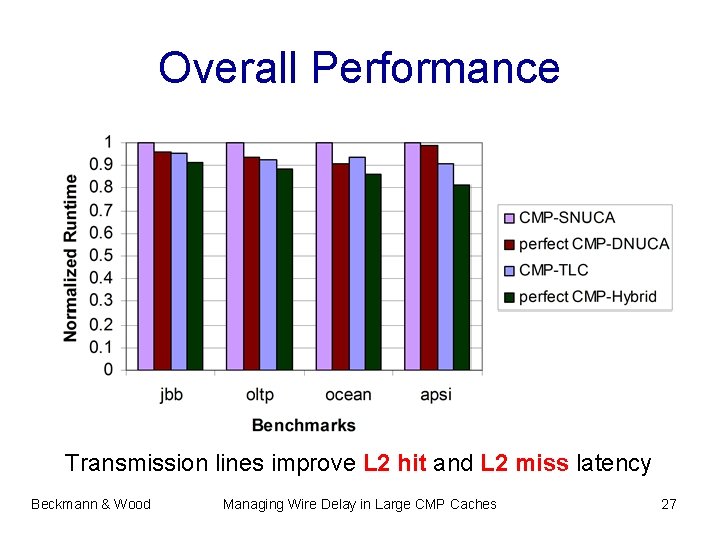

Overall Performance

Transmission lines improve L2 hit and L2 miss latency.

Conclusions

- Individual Latency Management Techniques
  - Strided Prefetching: subset of misses
  - Cache Block Migration: sharing impedes migration
  - On-chip Transmission Lines: limited bandwidth
- Combination: CMP-Hybrid
  - Potentially alleviates bottlenecks
  - Disadvantages
    - Relies on smart-search mechanism
    - Manufacturing cost of transmission lines

Backup Slides

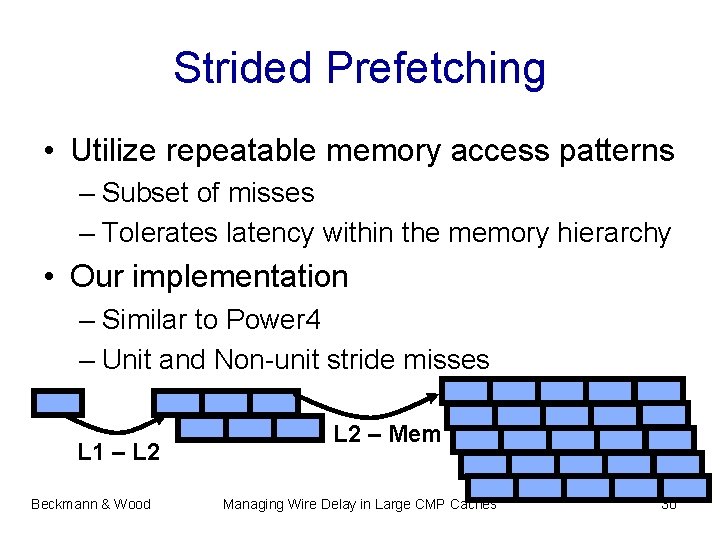

Strided Prefetching

- Utilize repeatable memory access patterns
  - Subset of misses
  - Tolerates latency within the memory hierarchy
- Our implementation
  - Similar to Power4
  - Unit and non-unit stride misses

Figure labels: L1 to L2, L2 to Mem.
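The idea of detecting unit and non-unit stride misses can be illustrated with a small model. This is a generic single-stream sketch, not the Power4 design or the authors' implementation. The class name, the confirmation rule (two equal consecutive strides), and the prefetch `degree` are all assumptions.

```python
from typing import List, Optional

BLOCK_BYTES = 64  # cache block size from the System Parameters slide


class StridePrefetcher:
    """Tracks one miss stream and prefetches once a stride repeats."""

    def __init__(self, degree: int = 2) -> None:
        self.degree = degree                    # blocks to prefetch ahead
        self.last_block: Optional[int] = None   # block number of previous miss
        self.last_stride: Optional[int] = None  # stride between last two misses

    def on_miss(self, address: int) -> List[int]:
        """Record a miss and return the byte addresses to prefetch (may be empty)."""
        block = address // BLOCK_BYTES
        prefetches: List[int] = []
        if self.last_block is not None:
            stride = block - self.last_block
            # Confirm the pattern only when the same nonzero stride repeats.
            if stride != 0 and stride == self.last_stride:
                prefetches = [(block + k * stride) * BLOCK_BYTES
                              for k in range(1, self.degree + 1)]
            self.last_stride = stride
        self.last_block = block
        return prefetches


# Non-unit stride: misses two blocks apart (byte addresses 0, 128, 256).
p = StridePrefetcher(degree=2)
print([p.on_miss(a) for a in (0, 128, 256)])
# [[], [], [384, 512]]
```

A unit-stride stream (misses at 0, 64, 128) is handled by the same logic with a stride of one block. A practical prefetcher would track several streams at once and filter out addresses that are already cached.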

On and Off-chip Prefetching

Figure labels: benchmarks grouped as Commercial and Scientific.

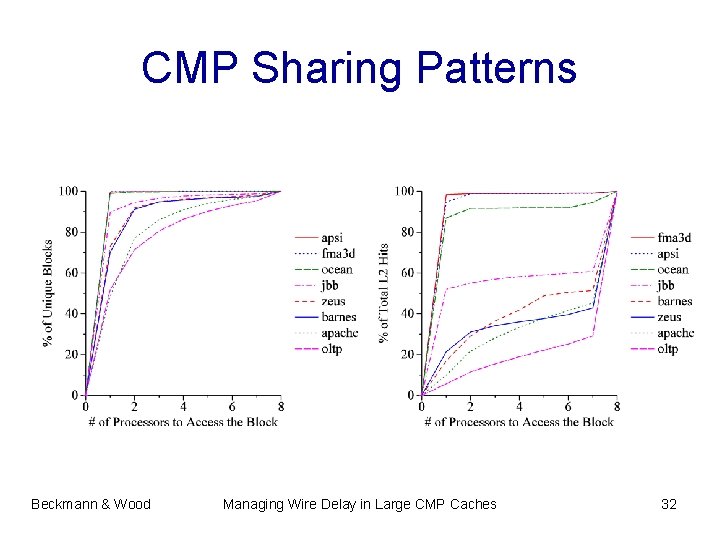

CMP Sharing Patterns

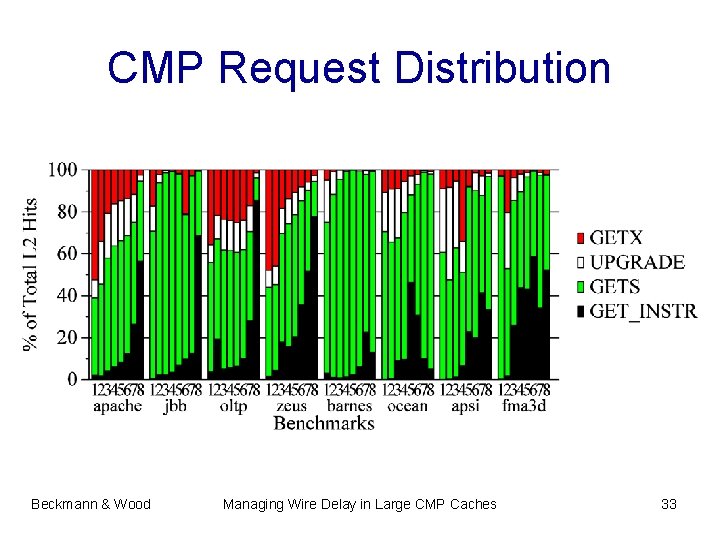

CMP Request Distribution

CMP-DNUCA: Search Strategy

Figure labels: CPU 0 to CPU 7; bankclusters: Local, Inter., Center; 1st and 2nd search phase.

- Uniprocessor DNUCA: partial tag array for smart searches
- Significant implementation complexity for CMP-DNUCA

CMP-DNUCA: Migration Strategy

Figure labels: CPU 0 to CPU 7; bankclusters: Local, Inter., Center; migration steps: other local, other inter., other center, my center, my inter., my local.

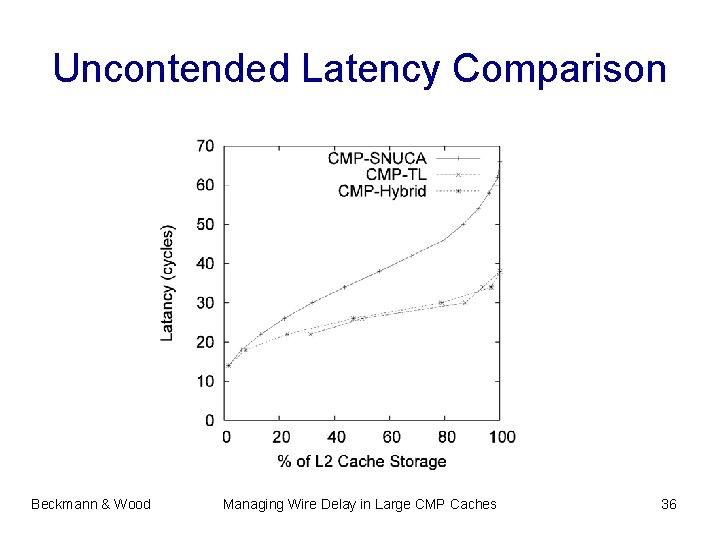

Uncontended Latency Comparison

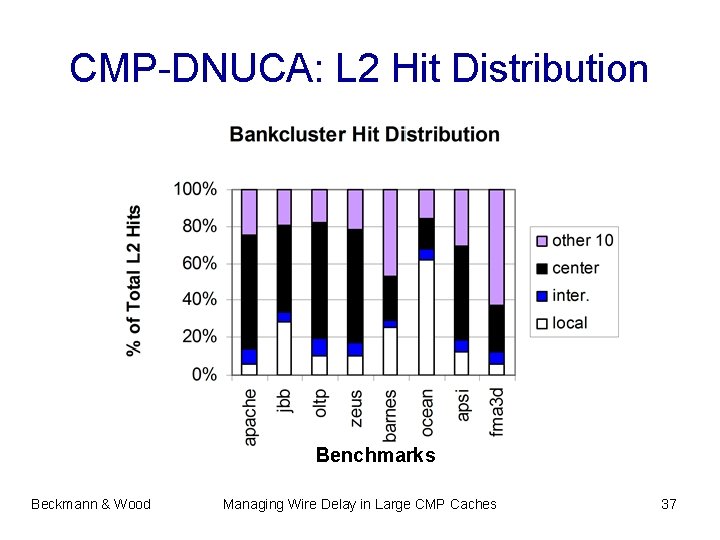

CMP-DNUCA: L2 Hit Distribution

Figure labels: Benchmarks.

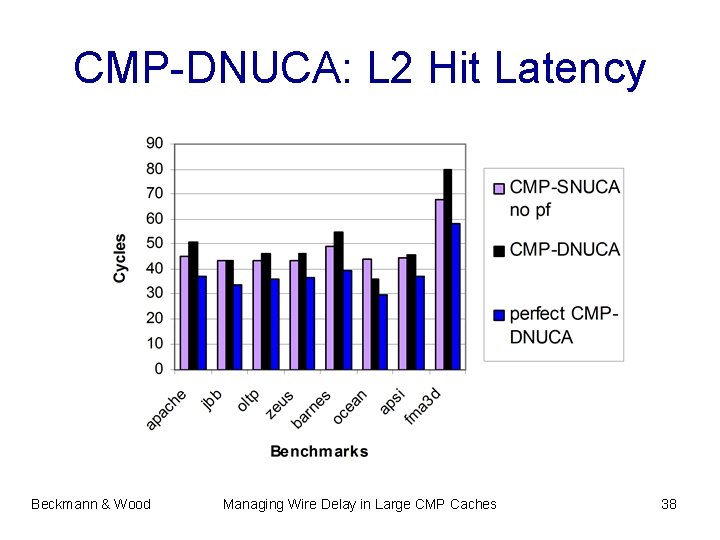

CMP-DNUCA: L2 Hit Latency

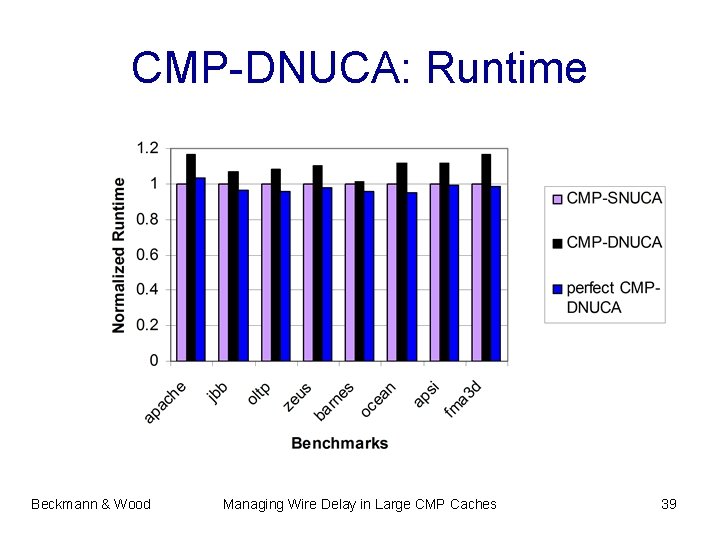

CMP-DNUCA: Runtime

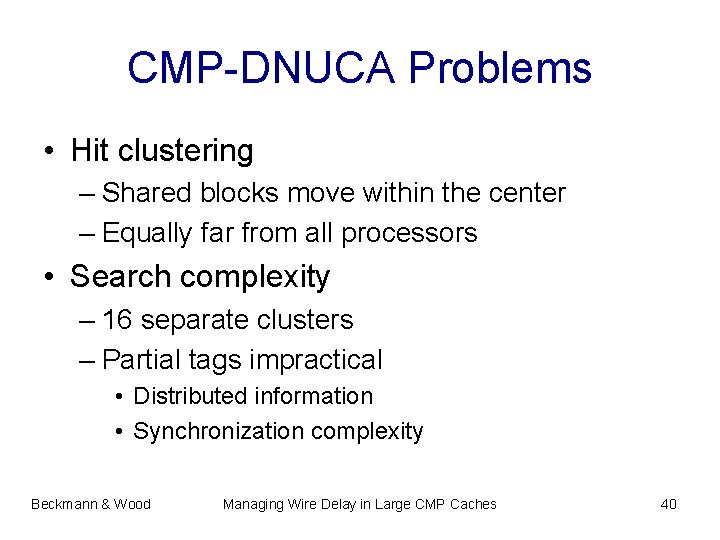

CMP-DNUCA Problems

- Hit clustering
  - Shared blocks move within the center
  - Equally far from all processors
- Search complexity
  - 16 separate clusters
  - Partial tags impractical
    - Distributed information
    - Synchronization complexity

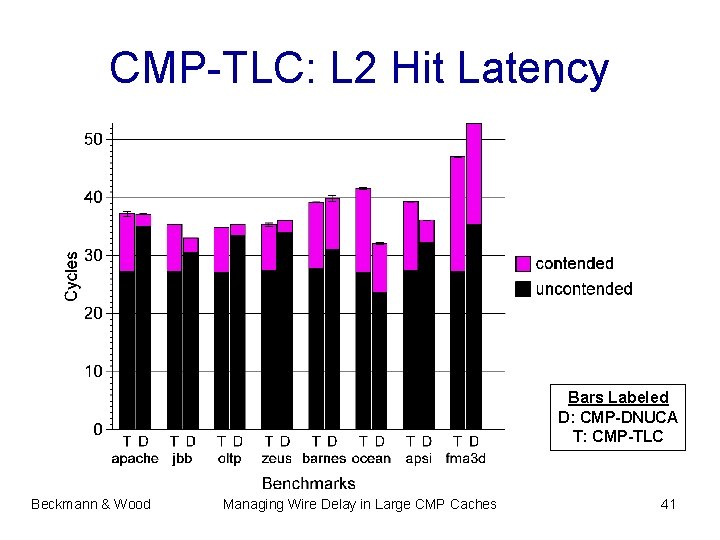

CMP-TLC: L2 Hit Latency

Bars labeled D: CMP-DNUCA, T: CMP-TLC.

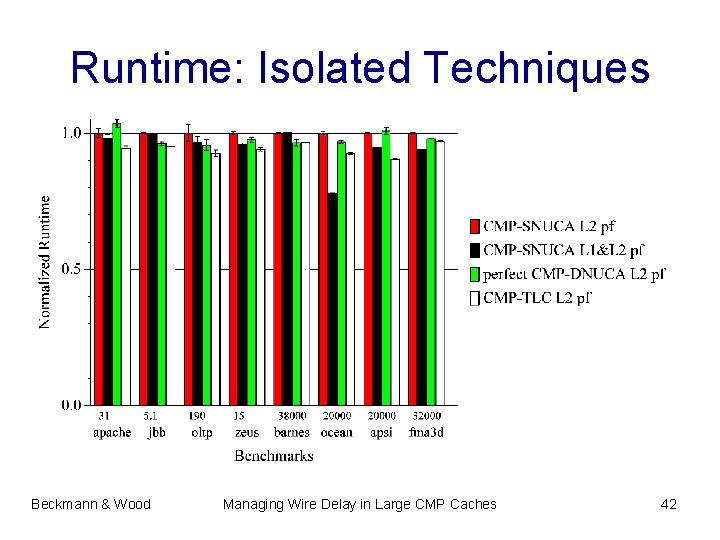

Runtime: Isolated Techniques

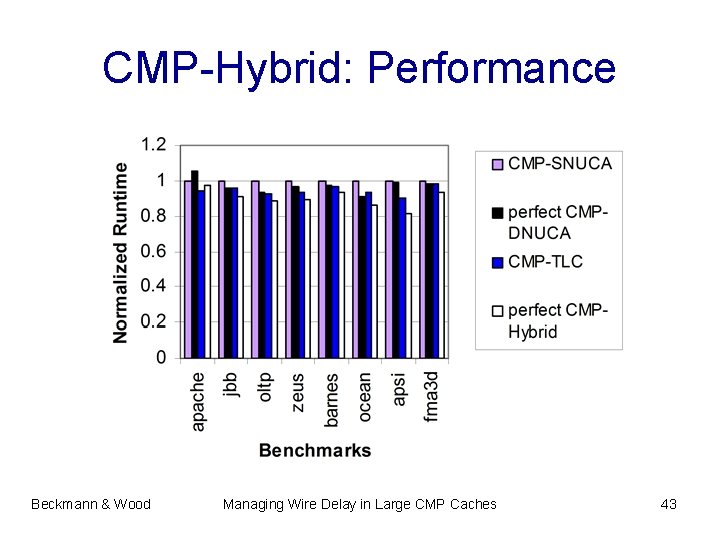

CMP-Hybrid: Performance

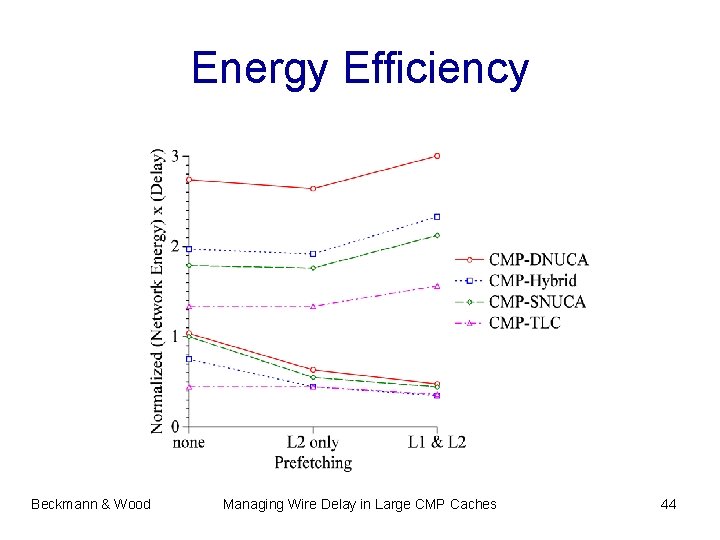

Energy Efficiency

- Slides: 44