Main Memory CS 448 Main Memory Bottom of

Main Memory CS 448

Main Memory • Bottom of the memory hierarchy • Measured in – Access Time • Time between a read is requested and data delivered – Cycle Time • Minimum time between requests to memory • Greater than access time to ensure address lines are stable • Three Important Issues – Capacity • Bell’s law - 1 MB per MIP needed for balance, avoid page faults – Latency • Time to access the data – Bandwidth • Amount of data that can be transferred

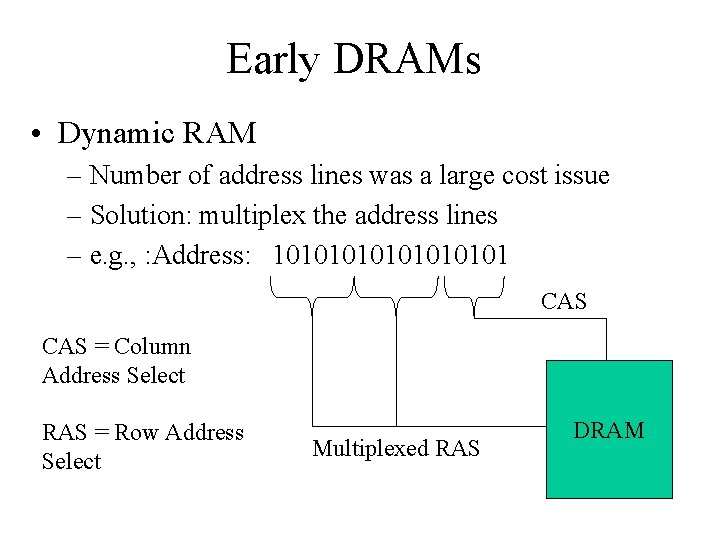

Early DRAMs • Dynamic RAM – Number of address lines was a large cost issue – Solution: multiplex the address lines – e. g. , : Address: 101010101 CAS = Column Address Select RAS = Row Address Select Multiplexed RAS DRAM

DRAMS • Dynamic RAM – Transistores each bit – Loss over time – Must periodically “refresh” the bits • All bits in a row can be refreshed by reading that row • Memory controllers periodically refresh, e. g. every 8 ms – If the CPU tries to access memory during the refresh, we must wait (hopefully won’t occur often) – Typical cycle times 60 -90 ns

SRAMs • Static RAM – Does not need a refresh – Faster than DRAM, generally not multiplexed – But more expensive • Typical memories – DRAM 4 -8 times the capacity of SRAM • Used for main memory – SRAM 8 -16 times faster than DRAM • • Typical cycle times 4 -7 ns Also 8 -16 times as expensive Used to build cache Exceptions; Cray built main memory out of SRAM

Memory Example • Consider the following scenario – 1 cycle to send the address – 4 cycles per word of access – 1 cycle to transmit the data • If main memory is organized by word – 6 cycles for every word • Given a cache line of 4 words – 24 cycles is the miss penalty Yikes! • What can we do about this penalty?

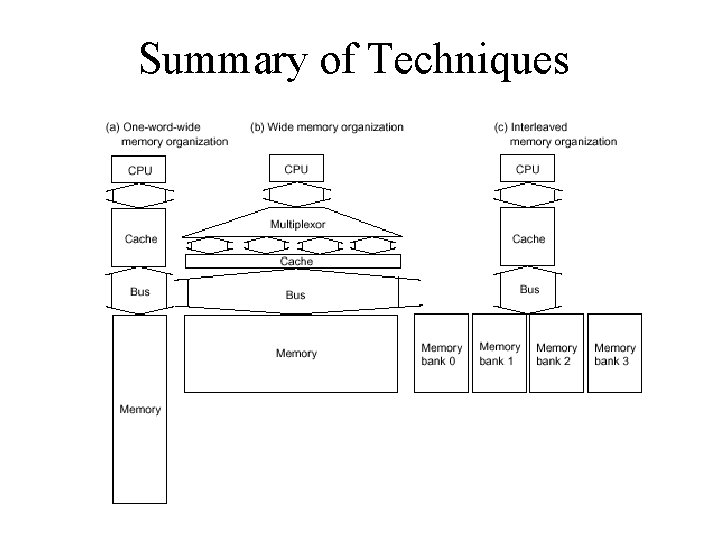

#1 : More Bandwidth to Memory • Make a word of main memory look like a cache line – Easy to do conceptually – Say we want 4 words, so send all four words back on the bus at one time instead of one after the other • In the previous scenario, we could send 1 address, access the four words (4 cycles if in parallel or 16 if sequential), and then send all data at once in 1 more cycle for a total of 6 or 18 cycles • Still better than 24 even if we don’t access each word in parallel • Problem – Need a wider bus, which is expensive – Especially true if this contributes to the pin count on the CPU • Usually the bus width to memory will match the width of the L 2 cache

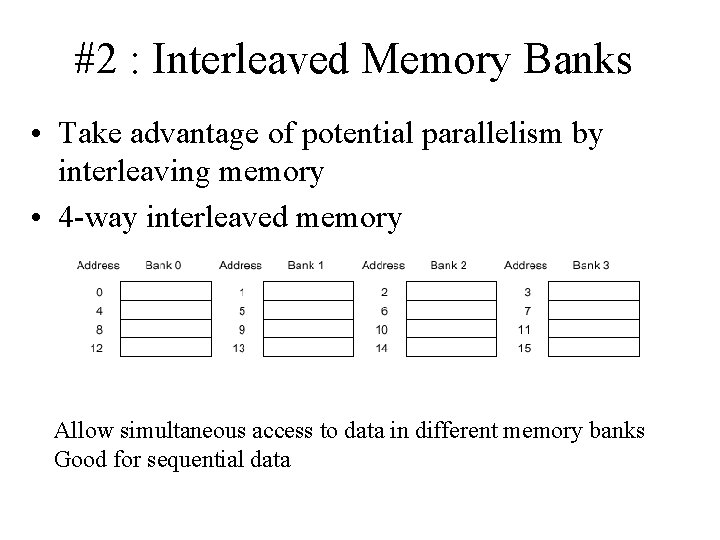

#2 : Interleaved Memory Banks • Take advantage of potential parallelism by interleaving memory • 4 -way interleaved memory Allow simultaneous access to data in different memory banks Good for sequential data

#3: Independent Memory Banks • Can take the idea of interleaved banks a bit farther… make independent banks altogether – Multiple independent accesses – Multiple memory controllers – Each bank needs separate address lines and probably a separate data bus • Will see this idea appear again with multiprocessors that share a common memory

Summary of Techniques

#4 : Avoiding Memory Bank Conflicts • Have the compiler schedule code to operate on all data in a bank first before moving on to a separate bank – Might result in cache conflicts – Similar to previous optimizations we saw using compiler-based scheduling

Other Types of RAM • SDRAM – Synchronous Dynamic RAM – Like a DRAM, but uses pipelining across two sets of memory addresses – 8 -10 ns read cycle time • RDRAM – Rambus Direct RAM – Also uses pipelining – Replace RAS/CAS with a packet-switched bus, new interface to act like a memory system not memory component – Can deliver one byte every 2 ns – Somewhat expensive, most architectures need a new bus systems to really take advantage of the increased RAM speed

- Slides: 12