- Slides: 34

Machine Learning: Neural Networks, Support Vector Machines
Georg Dorffner
Section for Artificial Intelligence and Decision Support, CeMSIIS – Medical University of Vienna

Machine Learning – possible definitions
• Computer programs that improve with experience (Mitchell 1997) (artificial intelligence)
• To find non-trivial structures in data based on examples (pattern recognition, data mining)
• To estimate a model from the data that describes them (statistical data analysis)

Some prerequisites
• Features
– Describe the cases of a problem
– Measurements, data
• Learner (version space)
– A class of models
• Learning rule
– An algorithm that finds the best model
• Generalisation
– Model is supposed to describe new data well

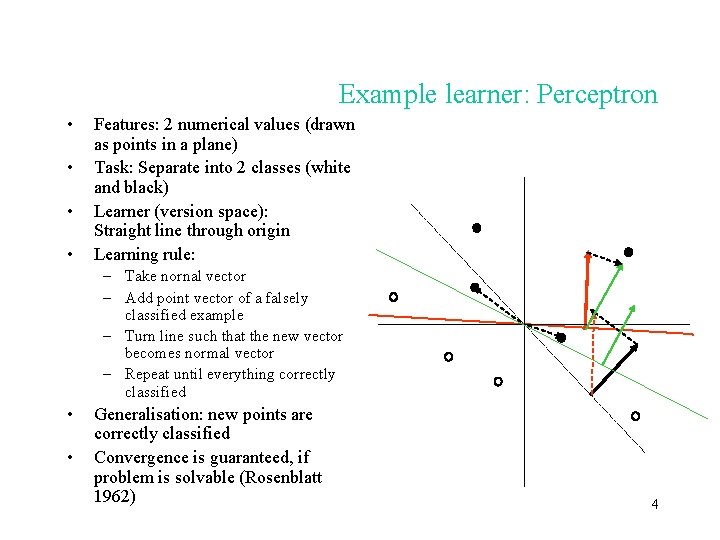

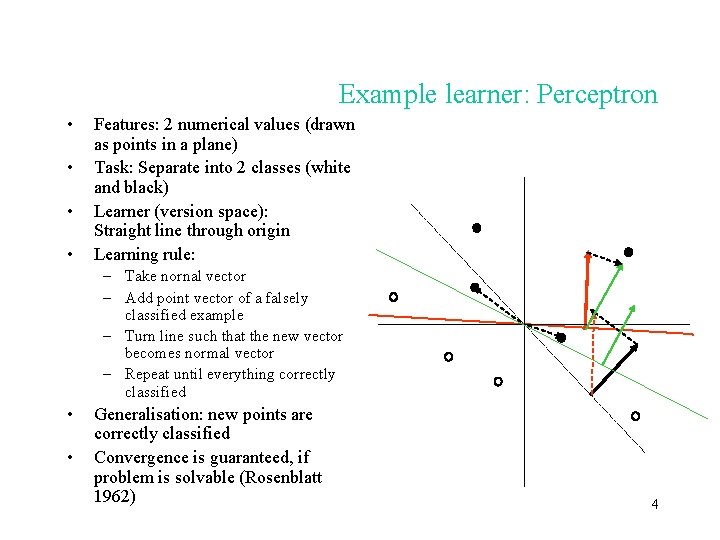

Example learner: Perceptron
• Features: 2 numerical values (drawn as points in a plane)
• Task: separate into 2 classes (white and black)
• Learner (version space): straight line through the origin
• Learning rule:
– Take the normal vector
– Add the point vector of a misclassified example
– Turn the line so that the new vector becomes the normal vector
– Repeat until everything is correctly classified
• Generalisation: new points are correctly classified
• Convergence is guaranteed if the problem is solvable (Rosenblatt 1962)

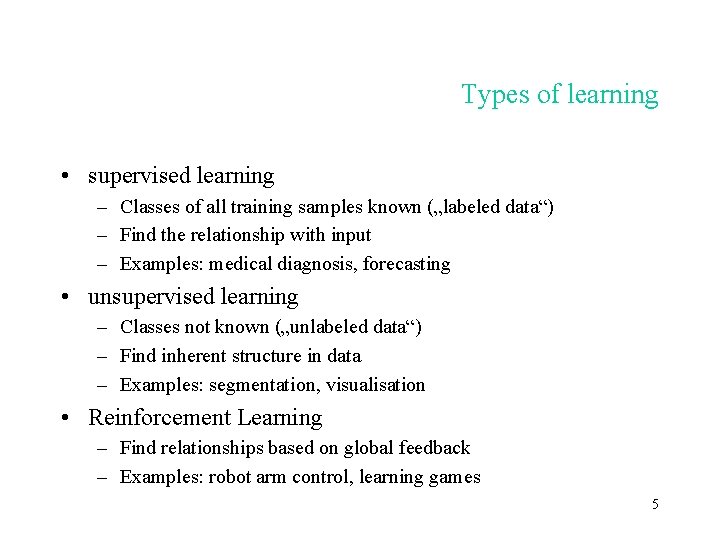
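
The perceptron learning rule can be sketched in a few lines. This is a minimal illustration, not the lecture's own code: the function name `train_perceptron`, the toy data and the label coding +1/-1 are assumptions. Coding the classes as +1/-1 lets one update cover both cases (the point vector is added for one class and subtracted for the other).

```python
import numpy as np

def train_perceptron(X, t, max_epochs=100):
    """Perceptron rule for a line through the origin.

    X: (n, 2) array of points, t: (n,) array of labels in {+1, -1}.
    Returns the normal vector w of the separating line.
    """
    w = np.zeros(X.shape[1])
    for _ in range(max_epochs):
        errors = 0
        for x_i, t_i in zip(X, t):
            # A point is misclassified if it lies on the wrong side of the line.
            if t_i * np.dot(w, x_i) <= 0:
                w = w + t_i * x_i  # add (or subtract) the point vector
                errors += 1
        if errors == 0:  # everything correctly classified: stop
            break
    return w

# Hypothetical toy data, separable by a line through the origin.
X = np.array([[2.0, 1.0], [1.0, 3.0], [-1.0, -2.0], [-3.0, -1.0]])
t = np.array([1, 1, -1, -1])
w = train_perceptron(X, t)
predictions = np.sign(X @ w)
```

The loop stops as soon as one full pass produces no errors, which mirrors "repeat until everything is correctly classified"; `max_epochs` only guards against data that is not linearly separable.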

Types of learning
• Supervised learning
– Classes of all training samples known ("labeled data")
– Find the relationship with the input
– Examples: medical diagnosis, forecasting
• Unsupervised learning
– Classes not known ("unlabeled data")
– Find inherent structure in data
– Examples: segmentation, visualisation
• Reinforcement learning
– Find relationships based on global feedback
– Examples: robot arm control, learning games

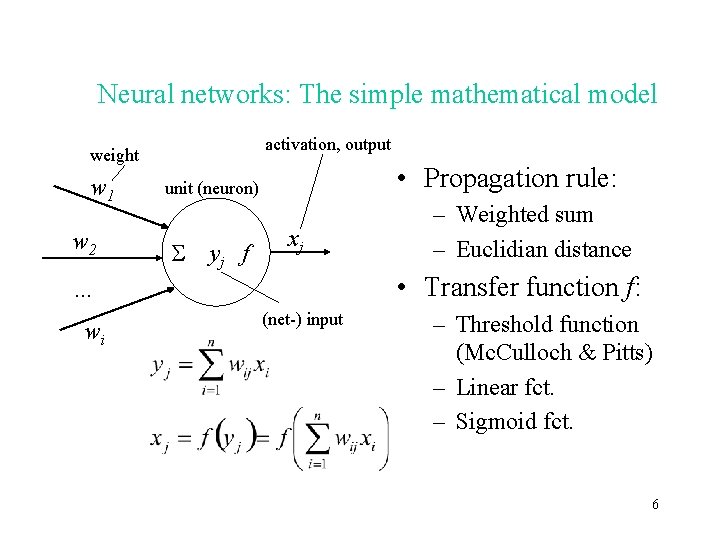

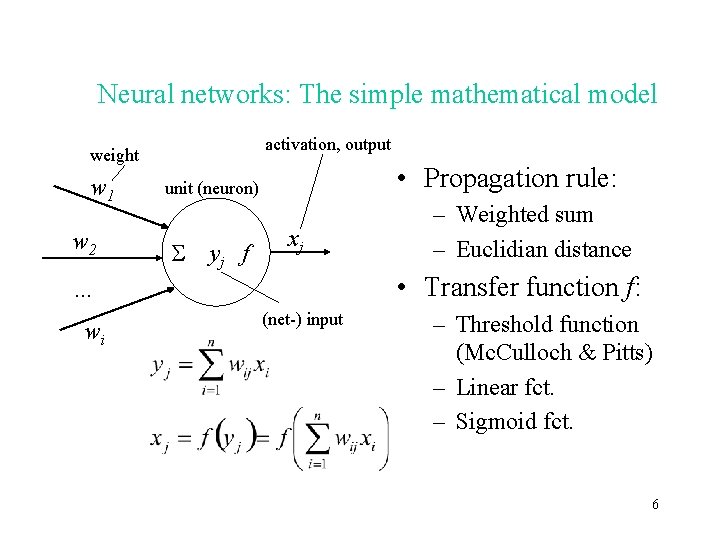

Neural networks: The simple mathematical model
• Propagation rule:
– Weighted sum
– Euclidean distance
• Transfer function f:
– Threshold function (McCulloch & Pitts)
– Linear function
– Sigmoid function
Figure labels: unit (neuron) j with weights w_1, w_2, …, w_i; (net) input x_j; transfer function f; activation (output) y_j

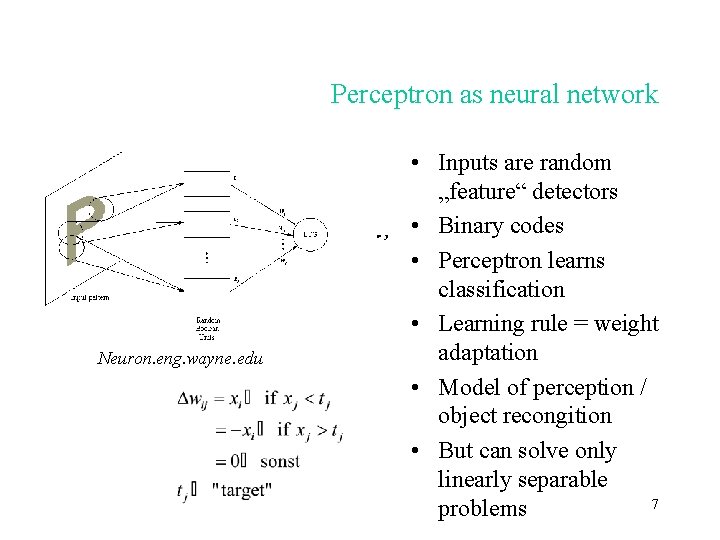

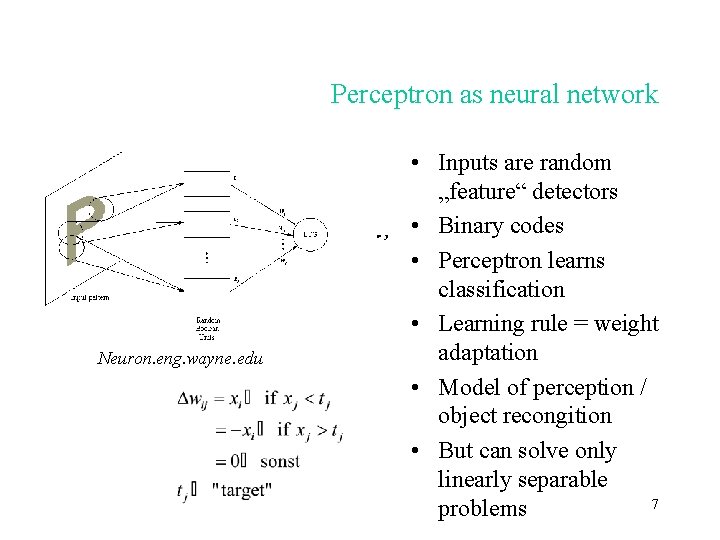
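
A single unit can be sketched as a propagation rule followed by a transfer function. The function names and the example weights below are assumptions chosen for illustration; the symbols follow the slide, with net input x_j and output y_j = f(x_j).

```python
import numpy as np

# Propagation rules: combine the weights and the incoming activations.
def weighted_sum(w, inputs):
    return float(np.dot(w, inputs))

def euclidean_distance(w, inputs):
    return float(np.linalg.norm(np.asarray(inputs) - np.asarray(w)))

# Transfer functions: map the net input x_j to the output y_j.
def threshold(x, theta=0.0):
    return 1.0 if x >= theta else 0.0

def linear(x):
    return x

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def unit_output(w, inputs, propagate=weighted_sum, transfer=sigmoid):
    x_j = propagate(w, inputs)  # (net) input
    return transfer(x_j)        # activation, output y_j

w = np.array([0.5, -0.5])       # hypothetical weights
inputs = np.array([1.0, 1.0])   # hypothetical incoming activations
y = unit_output(w, inputs)      # net input is 0 for these values
```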

Perceptron as neural network
• Inputs are random "feature" detectors
• Binary codes
• Perceptron learns classification
• Learning rule = weight adaptation
• Model of perception / object recognition
• But can solve only linearly separable problems
Image source: neuron.eng.wayne.edu

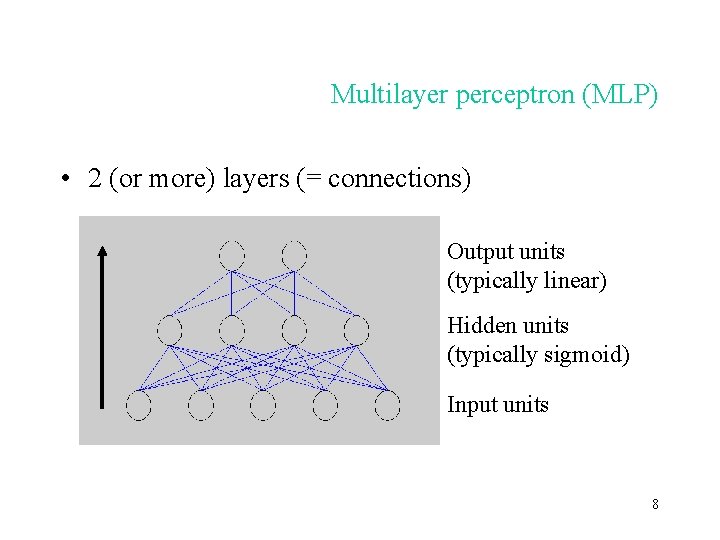

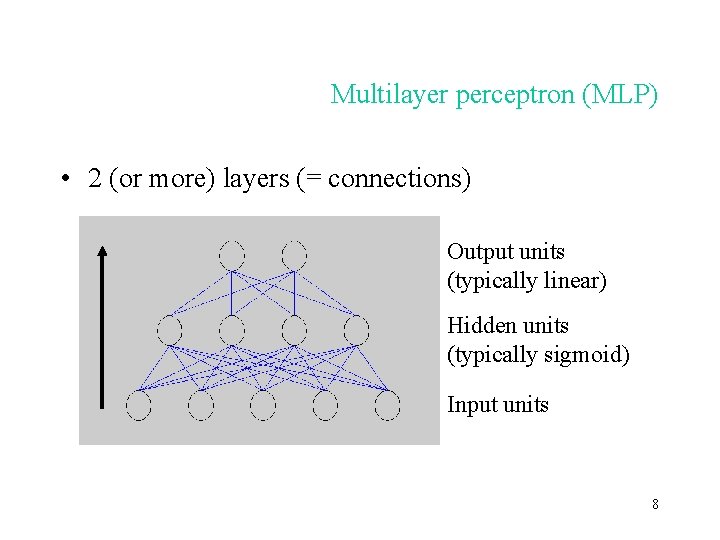

Multilayer perceptron (MLP)
• 2 (or more) layers (= connections)
Figure labels: output units (typically linear), hidden units (typically sigmoid), input units

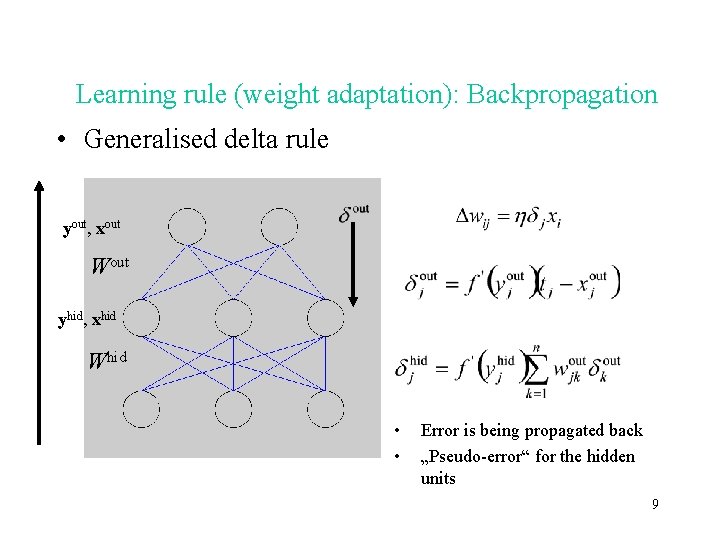

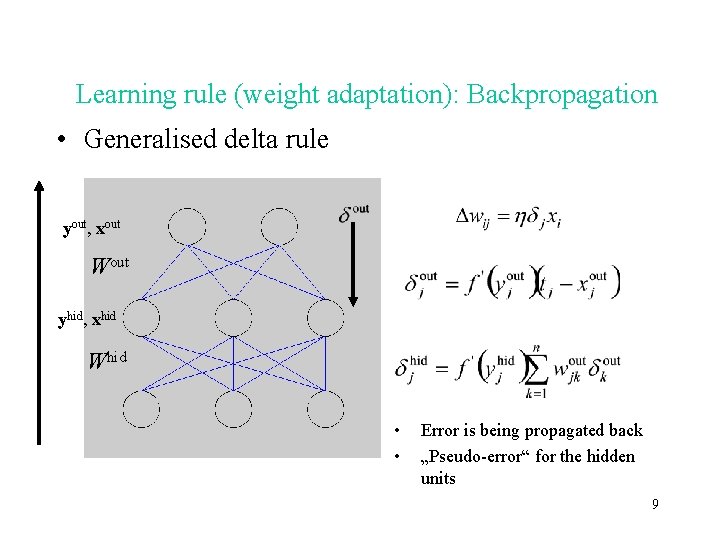

Learning rule (weight adaptation): Backpropagation
• Generalised delta rule
• Error is propagated back
• "Pseudo-error" for the hidden units
Figure labels: y_out, x_out, W_out, y_hid, x_hid, W_hid

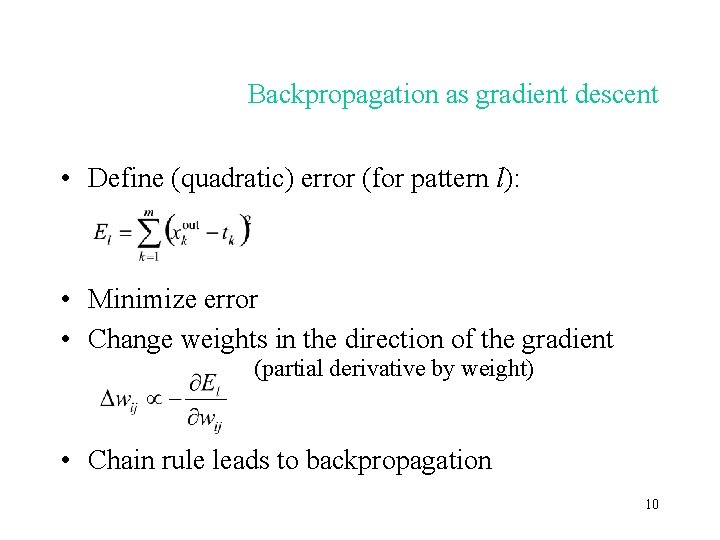

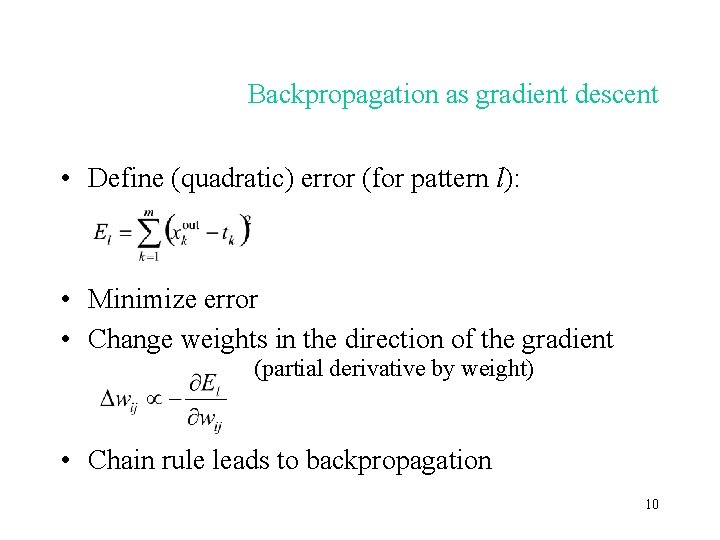

Backpropagation as gradient descent
• Define (quadratic) error (for pattern l):
• Minimize error
• Change weights in the direction of the gradient (partial derivative by weight)
• Chain rule leads to backpropagation

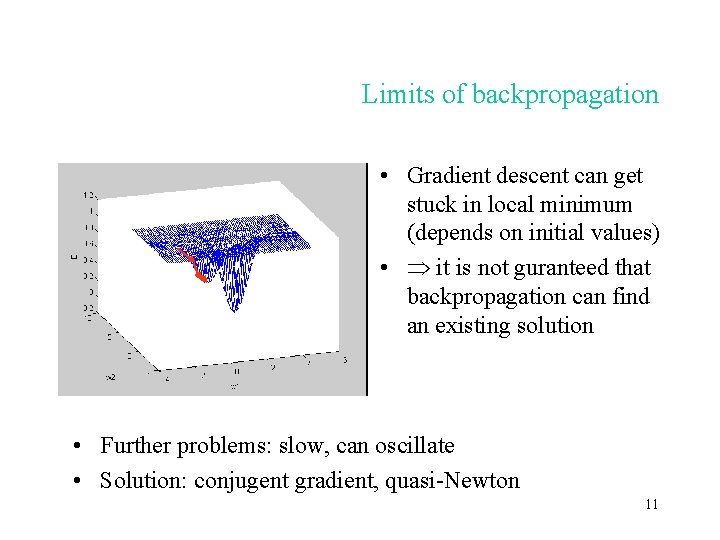

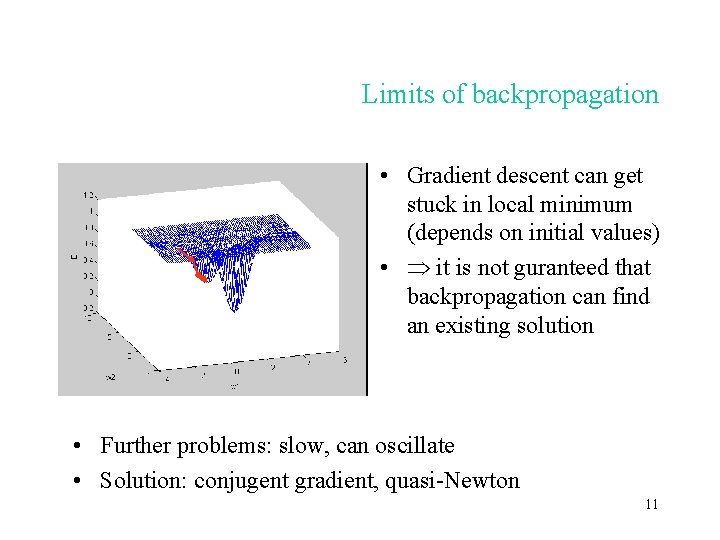
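
The chain-rule computation can be sketched for a small MLP with sigmoid hidden units, linear output units and a quadratic error. This is a minimal sketch under stated assumptions: no bias weights, a single training pattern, a hypothetical learning rate `eta`, and random initial weights; the names `W_hid`, `W_out`, `x_hid`, `y_hid` and `y_out` follow the labels used in the slides.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def forward(W_hid, W_out, inp):
    x_hid = W_hid @ inp      # net input of the hidden units
    y_hid = sigmoid(x_hid)   # hidden activations
    y_out = W_out @ y_hid    # linear output units
    return y_hid, y_out

def error(W_hid, W_out, inp, target):
    _, y_out = forward(W_hid, W_out, inp)
    return 0.5 * np.sum((y_out - target) ** 2)  # quadratic error

def backprop(W_hid, W_out, inp, target):
    y_hid, y_out = forward(W_hid, W_out, inp)
    delta_out = y_out - target  # error at the (linear) output units
    # "Pseudo-error" of the hidden units: output error propagated back
    # through W_out, times the derivative of the sigmoid.
    delta_hid = (W_out.T @ delta_out) * y_hid * (1.0 - y_hid)
    grad_out = np.outer(delta_out, y_hid)  # dE/dW_out
    grad_hid = np.outer(delta_hid, inp)    # dE/dW_hid
    return grad_hid, grad_out

rng = np.random.default_rng(0)
W_hid = rng.normal(size=(3, 2))  # 2 inputs, 3 hidden units (assumed sizes)
W_out = rng.normal(size=(1, 3))  # 1 output unit
inp, target = np.array([0.5, -1.0]), np.array([0.3])

eta = 0.1  # assumed learning rate
grad_hid, grad_out = backprop(W_hid, W_out, inp, target)
W_hid_new = W_hid - eta * grad_hid  # one gradient descent step
W_out_new = W_out - eta * grad_out
```

A quick sanity check for such a sketch is to compare one entry of `grad_hid` with a finite-difference estimate of `error`.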

Limits of backpropagation
• Gradient descent can get stuck in a local minimum (depends on initial values)
• It is not guaranteed that backpropagation can find an existing solution
• Further problems: slow, can oscillate
• Solution: conjugate gradient, quasi-Newton

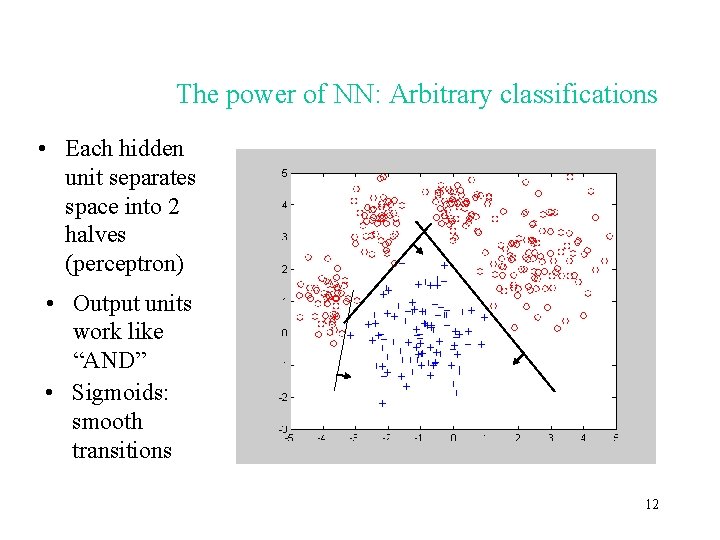

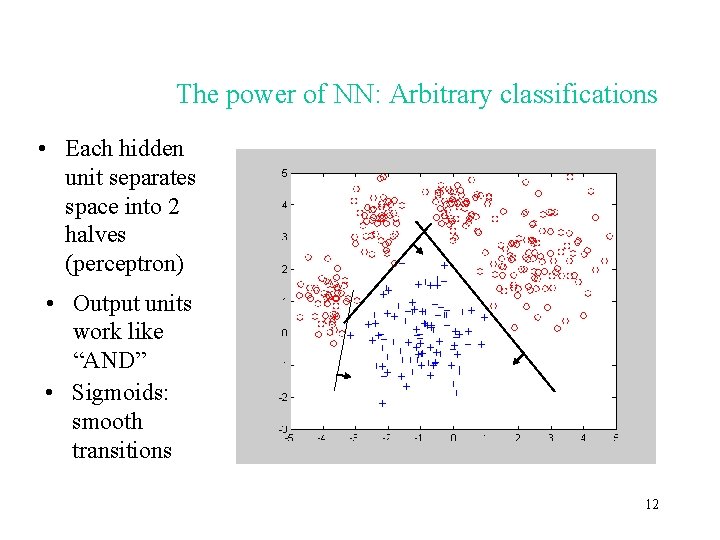

The power of NN: Arbitrary classifications
• Each hidden unit separates space into 2 halves (perceptron)
• Output units work like "AND"
• Sigmoids: smooth transitions

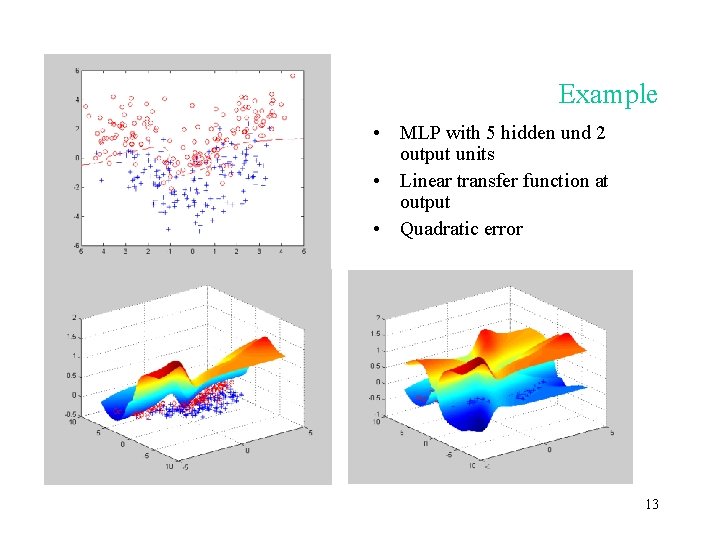

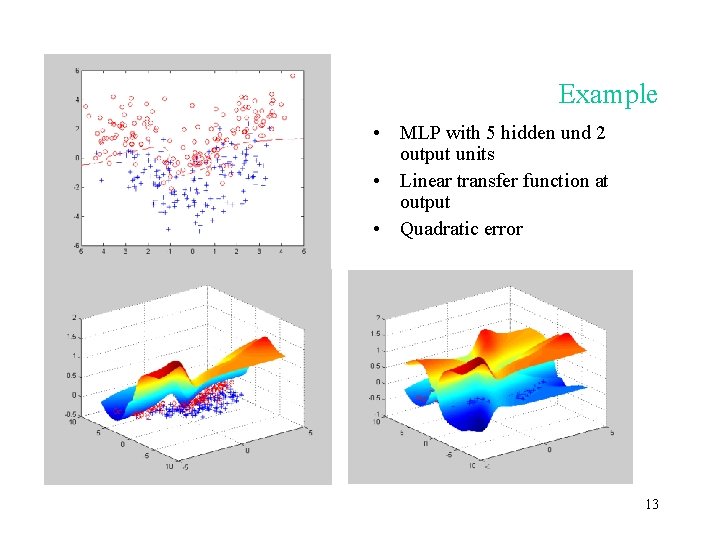

Example
• MLP with 5 hidden and 2 output units
• Linear transfer function at output
• Quadratic error

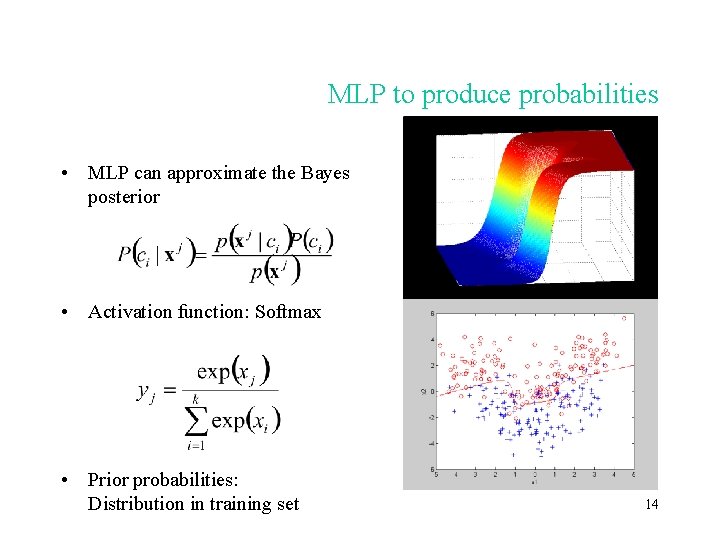

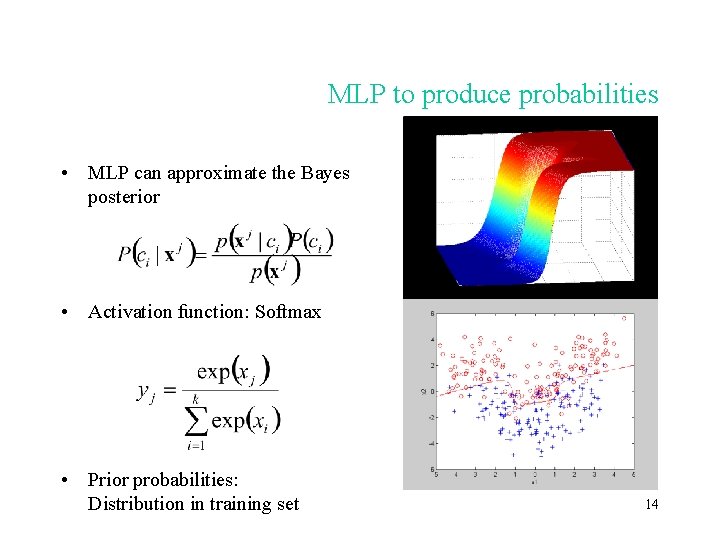

MLP to produce probabilities
• MLP can approximate the Bayes posterior
• Activation function: Softmax
• Prior probabilities: distribution in training set

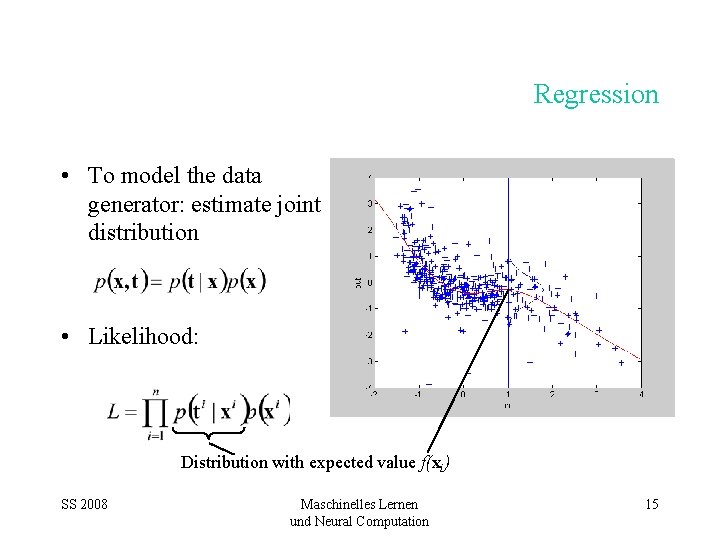

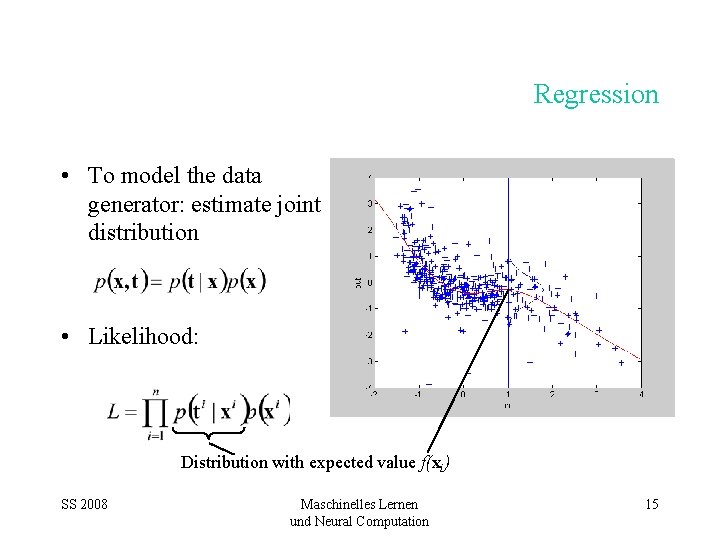
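
The softmax activation turns the net inputs of the output units into values that are positive and sum to 1, so they can be read as class probabilities. A minimal sketch (the function name and the example values are assumptions):

```python
import numpy as np

def softmax(x_out):
    # Subtracting the maximum avoids overflow and does not change the result.
    shifted = x_out - np.max(x_out)
    e = np.exp(shifted)
    return e / np.sum(e)

p = softmax(np.array([2.0, 1.0, 0.1]))  # hypothetical net inputs of 3 output units
```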

Regression
• To model the data generator: estimate the joint distribution
• Likelihood: distribution with expected value f(x_i)
SS 2008, Maschinelles Lernen und Neural Computation

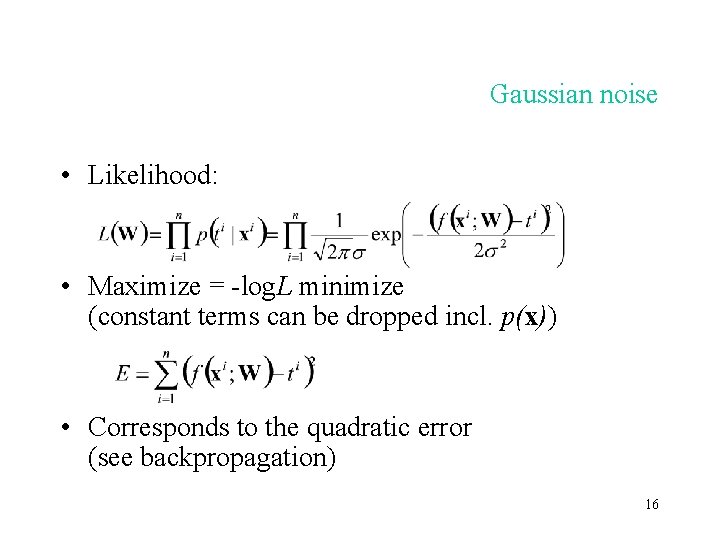

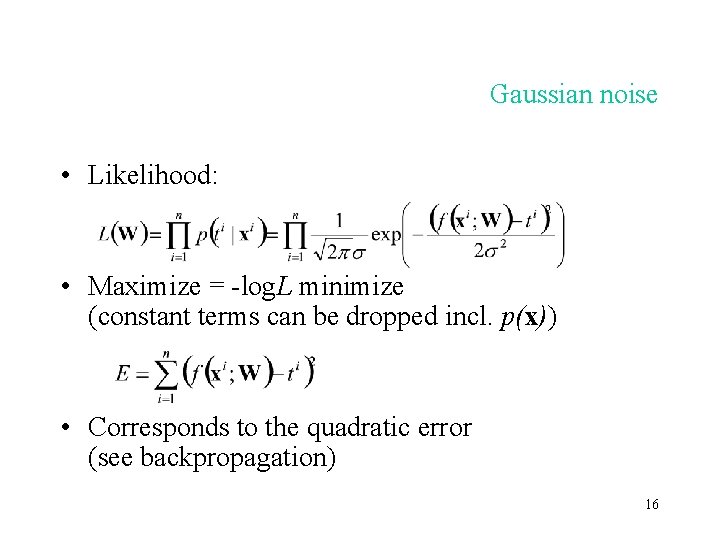

Gaussian noise
• Likelihood:
• Maximize L = minimize -log L (constant terms can be dropped, incl. p(x))
• Corresponds to the quadratic error (see backpropagation)

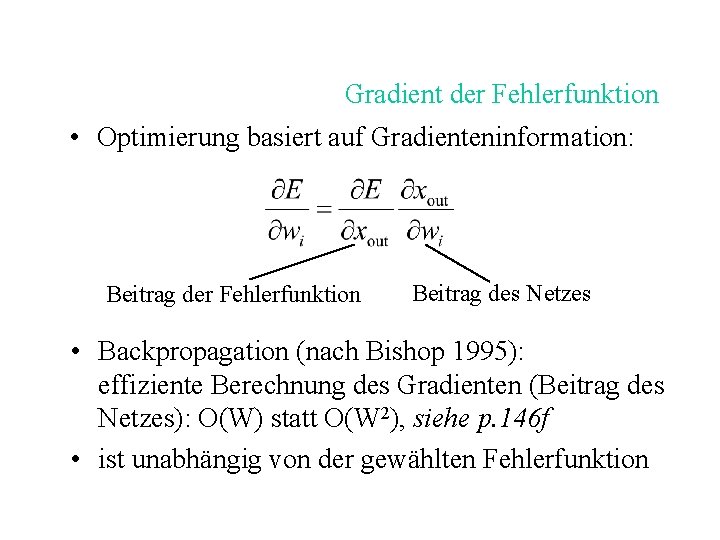

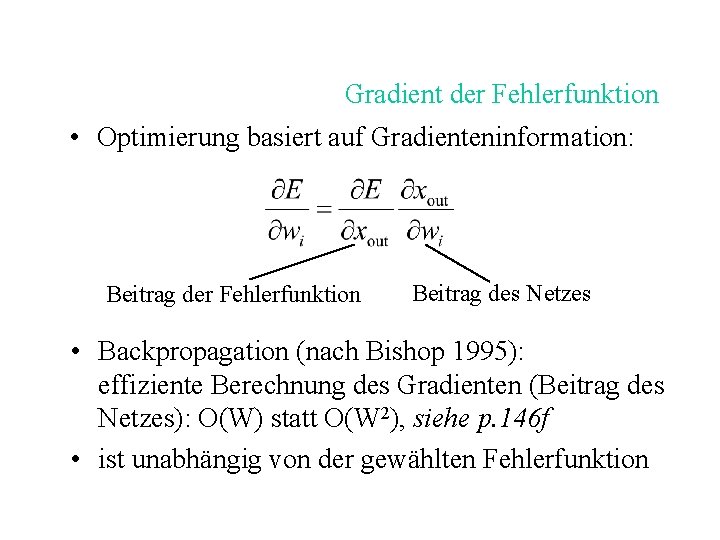
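
The step from the Gaussian likelihood to the quadratic error can be written out as follows. This is the standard textbook derivation, given as a sketch; the symbols $t_i$ (target), $f(x_i; W)$ (network output), $\sigma$ (noise standard deviation) and $n$ (number of samples) are assumed notation and may differ from the formulas in the figures.

```latex
\begin{align*}
L &= \prod_{i=1}^{n} p(t_i \mid x_i)\, p(x_i),
\qquad
p(t_i \mid x_i) = \frac{1}{\sqrt{2\pi}\,\sigma}
  \exp\!\left( -\frac{\bigl(t_i - f(x_i; W)\bigr)^2}{2\sigma^2} \right) \\
-\log L &= \frac{1}{2\sigma^2} \sum_{i=1}^{n} \bigl(t_i - f(x_i; W)\bigr)^2
  + n \log\!\bigl(\sqrt{2\pi}\,\sigma\bigr)
  - \sum_{i=1}^{n} \log p(x_i)
\end{align*}
```

Only the first term depends on the weights $W$, so minimizing $-\log L$ amounts to minimizing $E = \tfrac{1}{2} \sum_i \bigl(f(x_i; W) - t_i\bigr)^2$.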

Gradient of the error function
• Optimisation is based on gradient information:
• Backpropagation (after Bishop 1995): efficient computation of the gradient (contribution of the network): $O(W)$ instead of $O(W^2)$, see p. 146 f.
• Is independent of the chosen error function
Equation labels: contribution of the error function, contribution of the network

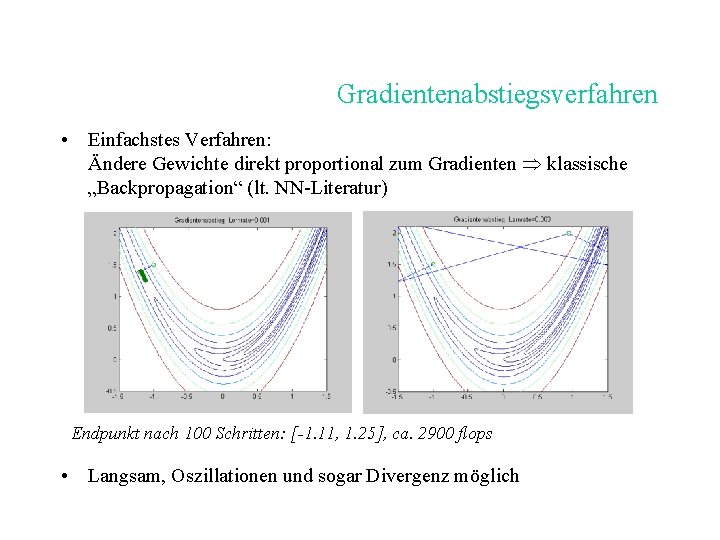

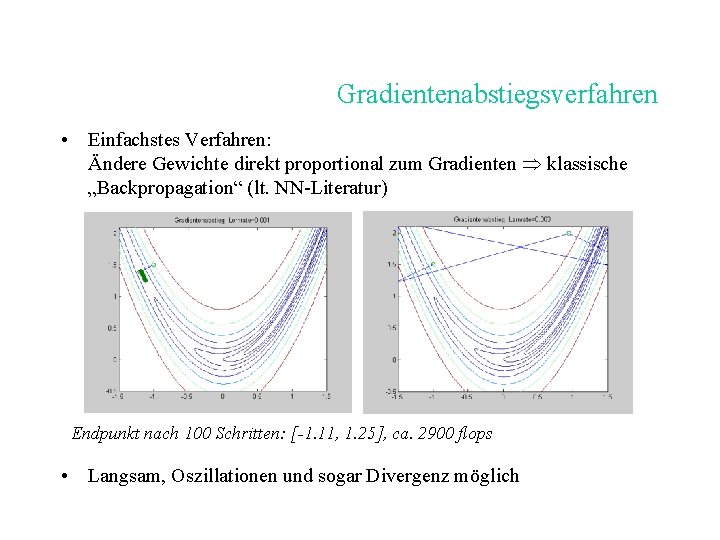

Gradient descent
• Simplest method: change the weights in direct proportion to the (negative) gradient; this is classical "backpropagation" (according to the NN literature)
• Slow; oscillations and even divergence are possible
Figure note: end point after 100 steps: [-1.11, 1.25], approx. 2900 flops

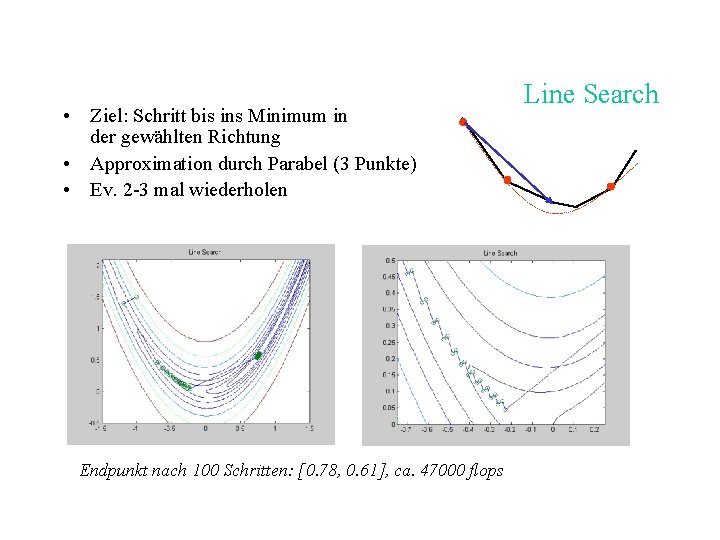

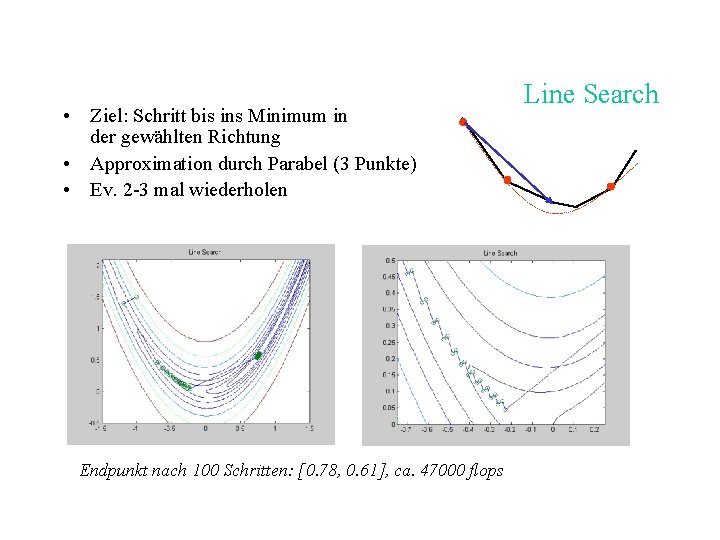
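
Plain gradient descent can be sketched on a two-dimensional test function. The slide does not name the function behind its figure, so the Rosenbrock function, the start point and the step size `eta` below are assumptions for illustration only; the sketch is not meant to reproduce the end point or flop count quoted above.

```python
import numpy as np

def rosenbrock(w):
    # Assumed test function with a curved valley and minimum at [1, 1].
    return 100.0 * (w[1] - w[0] ** 2) ** 2 + (1.0 - w[0]) ** 2

def rosenbrock_grad(w):
    return np.array([
        -400.0 * w[0] * (w[1] - w[0] ** 2) - 2.0 * (1.0 - w[0]),
        200.0 * (w[1] - w[0] ** 2),
    ])

def gradient_descent(grad, w0, eta, steps):
    w = np.array(w0, dtype=float)
    path = [w.copy()]
    for _ in range(steps):
        w = w - eta * grad(w)  # step proportional to the negative gradient
        path.append(w.copy())
    return np.array(path)

path = gradient_descent(rosenbrock_grad, w0=[-1.0, 1.0], eta=0.001, steps=100)
```

With a fixed step size the method creeps along the valley floor; a noticeably larger `eta` makes it oscillate or diverge on this function, which is the behaviour the slide warns about.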

Line search
• Goal: step all the way to the minimum along the chosen direction
• Approximation by a parabola (3 points)
• Possibly repeat 2–3 times
Figure note: end point after 100 steps: [0.78, 0.61], approx. 47000 flops

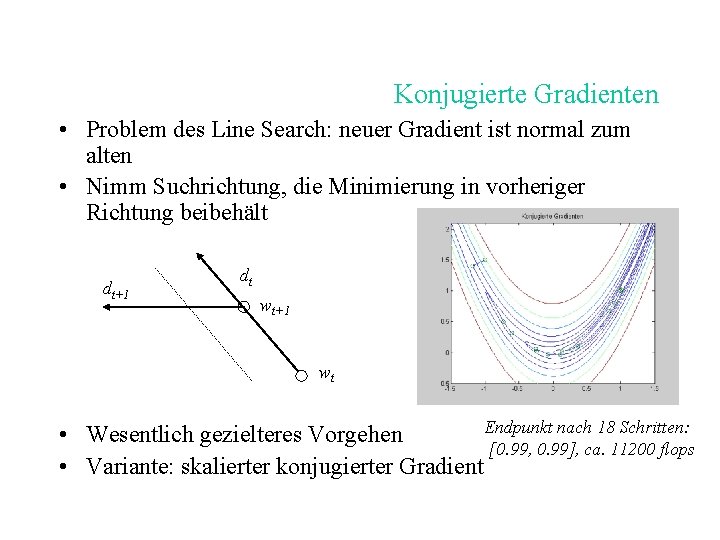

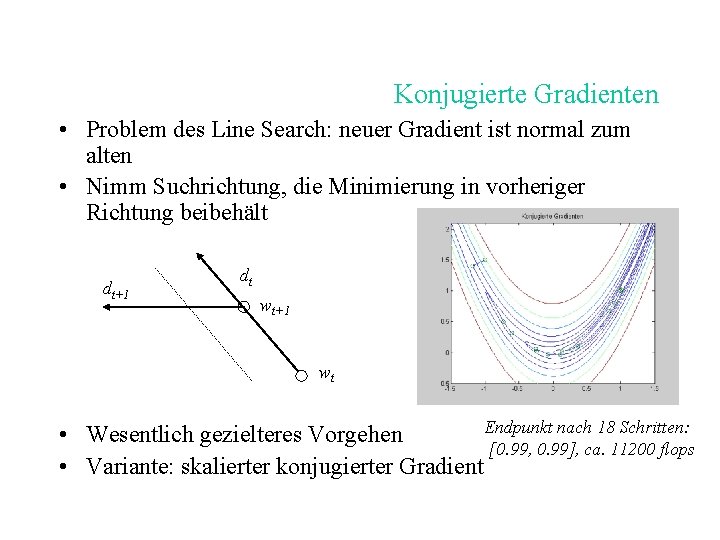
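
A line search by parabolic approximation can be sketched as follows: evaluate the error at three points along the search direction, fit a parabola, jump to its minimum, and repeat a few times. The function names, the initial three points and the way the points are re-centred are assumptions; the sketch does not check whether the fitted parabola opens upwards.

```python
import numpy as np

def parabola_minimum(a, fa, b, fb, c, fc):
    """Location of the extremum of the parabola through (a, fa), (b, fb), (c, fc)."""
    num = (b - a) ** 2 * (fb - fc) - (b - c) ** 2 * (fb - fa)
    den = (b - a) * (fb - fc) - (b - c) * (fb - fa)
    return b - 0.5 * num / den

def line_search(f, w, d, step=1.0, repeats=3):
    phi = lambda alpha: f(w + alpha * d)  # error along the direction d
    a, b, c = 0.0, 0.5 * step, step       # three initial points
    for _ in range(repeats):
        den = (b - a) * (phi(b) - phi(c)) - (b - c) * (phi(b) - phi(a))
        if abs(den) < 1e-12:              # points (nearly) collinear: no parabola
            break
        alpha = parabola_minimum(a, phi(a), b, phi(b), c, phi(c))
        h = 0.25 * (c - a)                # shrink the interval around the estimate
        a, b, c = alpha - h, alpha, alpha + h
    return w + b * d

# Hypothetical quadratic error with its minimum at [1, 2].
f = lambda w: np.sum((w - np.array([1.0, 2.0])) ** 2)
w_new = line_search(f, w=np.zeros(2), d=np.array([1.0, 2.0]))
```

For a quadratic error the first parabola is already exact, so the example lands on the minimum along the direction after one fit.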

Conjugate gradients
• Problem with line search: the new gradient is orthogonal to the old one
• Choose a search direction that preserves the minimisation along the previous direction
• Much more targeted procedure
• Variant: scaled conjugate gradient
Figure labels: d_t, d_t+1, w_t, w_t+1
Figure note: end point after 18 steps: [0.99, 0.99], approx. 11200 flops

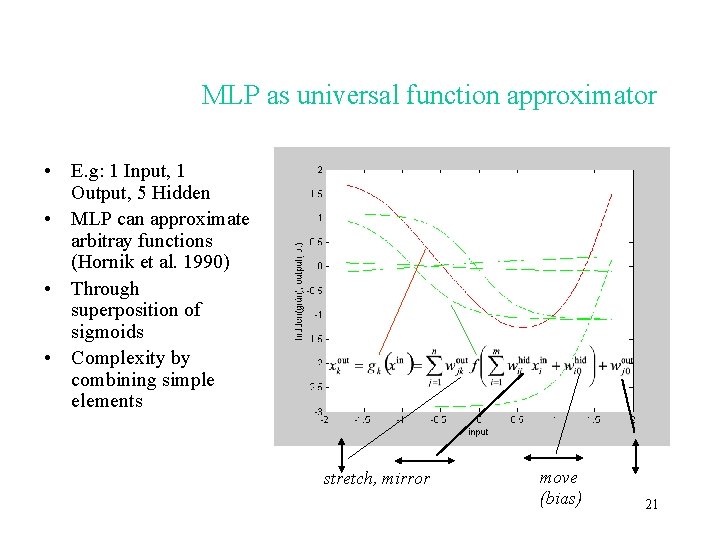

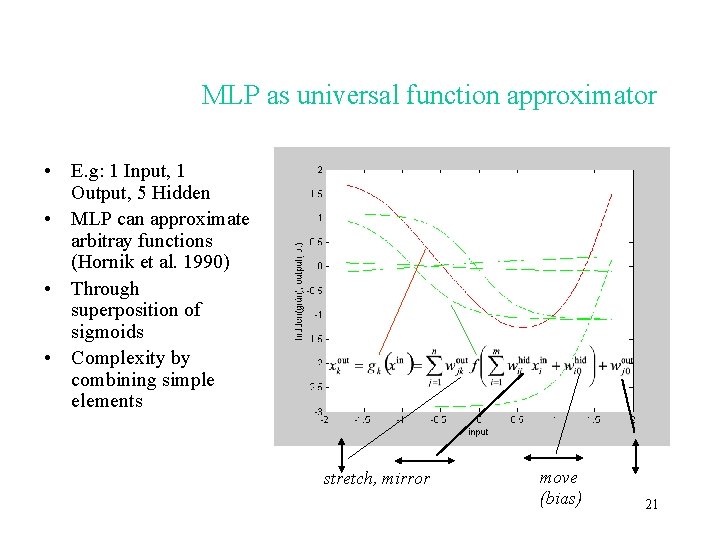
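
The idea of conjugate directions is easiest to see on a quadratic error surface $E(w) = \tfrac{1}{2} w^\top A w - b^\top w$, where the line search along each direction can be done exactly. The sketch below makes that simplifying assumption (a network error is not quadratic, so in practice the exact step is replaced by a line search) and uses the Fletcher-Reeves formula for the direction update; `A`, `b` and the function name are hypothetical.

```python
import numpy as np

def conjugate_gradient(A, b, w0, steps):
    w = np.array(w0, dtype=float)
    g = A @ w - b  # gradient of the quadratic error
    d = -g         # first direction: steepest descent
    for _ in range(steps):
        if np.linalg.norm(g) < 1e-12:
            break
        alpha = -(g @ d) / (d @ A @ d)    # exact minimum along d
        w = w + alpha * d
        g_new = A @ w - b
        beta = (g_new @ g_new) / (g @ g)  # Fletcher-Reeves
        d = -g_new + beta * d             # new direction, conjugate to the old one
        g = g_new
    return w

A = np.array([[3.0, 1.0], [1.0, 2.0]])  # symmetric, positive definite
b = np.array([1.0, 1.0])
w = conjugate_gradient(A, b, w0=[0.0, 0.0], steps=2)
```

On a two-dimensional quadratic, two steps are enough to reach the minimum, because the second direction does not undo the minimisation along the first.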

MLP as universal function approximator
• E.g.: 1 input, 1 output, 5 hidden units
• MLP can approximate arbitrary functions (Hornik et al. 1990)
• Through superposition of sigmoids
• Complexity by combining simple elements
Figure labels: stretch, mirror; move (bias)

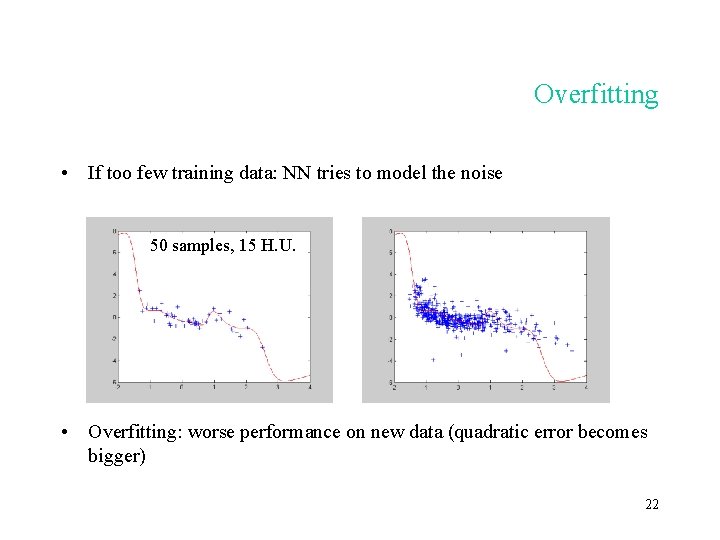

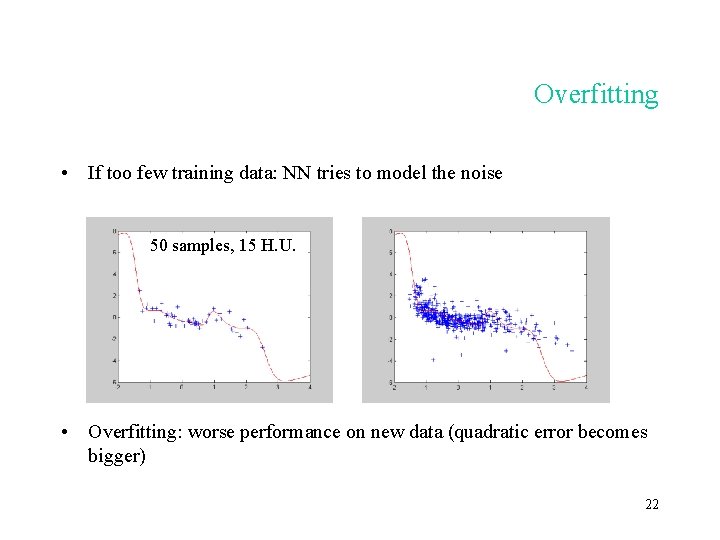

Overfitting
• If there is too little training data: NN tries to model the noise
• Overfitting: worse performance on new data (quadratic error becomes bigger)
Figure note: 50 samples, 15 hidden units

Avoiding overfitting
• As much data as possible (good coverage of distribution)
• Model (network) as small as possible
• More generally: regularisation (= limit the effective number of degrees of freedom):
– Several training runs, average
– Penalty for large networks, e.g.:
– "Pruning" (remove connections)
– Early stopping

The important steps in practice
Owing to their power and characteristics, neural networks require a sound and careful strategy:
1. Data inspection (visualisation)
2. Data preprocessing
3. Feature selection
4. Model selection (pick best network size)
5. Comparison with simpler methods
6. Testing on independent data
7. Interpretation of results

Model selection
• Strategy for the optimal choice of model complexity:
– Start small (e.g. 1 or 2 hidden units)
– n-fold cross-validation
– Add hidden units one by one
– Accept as long as there is a significant improvement (test)
• No regularization necessary → overfitting is captured by cross-validation (averaging)
• Too many hidden units → too large variance → no statistical significance
• The same method can also be used for feature selection ("wrapper")
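
The selection strategy can be sketched with n-fold cross-validation and a growing model. To keep the sketch short and self-contained, it swaps in a polynomial fit for the MLP, so the polynomial degree plays the role of the number of hidden units. The relative threshold `min_gain` is a crude stand-in for a proper significance test, and the data, the function names and all parameter values are assumptions.

```python
import numpy as np

def cv_error(x, y, degree, n_folds=5):
    """Mean squared validation error of a polynomial fit, averaged over folds."""
    folds = np.array_split(np.arange(len(x)), n_folds)
    errors = []
    for val_idx in folds:
        train = np.ones(len(x), dtype=bool)
        train[val_idx] = False  # hold out one fold for validation
        coeffs = np.polyfit(x[train], y[train], degree)
        pred = np.polyval(coeffs, x[val_idx])
        errors.append(np.mean((pred - y[val_idx]) ** 2))
    return float(np.mean(errors))

def select_complexity(x, y, max_degree=8, min_gain=0.05):
    best_degree = 1  # start small
    best_err = cv_error(x, y, best_degree)
    for degree in range(2, max_degree + 1):  # add complexity step by step
        err = cv_error(x, y, degree)
        if err < (1.0 - min_gain) * best_err:  # accept only a clear improvement
            best_degree, best_err = degree, err
        else:
            break
    return best_degree, best_err

# Hypothetical noisy data; x is drawn at random, so the folds are not ordered.
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=60)
y = np.exp(2.0 * x) + rng.normal(scale=0.1, size=60)
best_degree, best_err = select_complexity(x, y)
```

Stopping at the first step without a clear improvement is what keeps the model small; for feature selection the same loop would add features instead of complexity.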

Support Vector Machines: Returning to the perceptron
• Advantage of the (linear) perceptron:
– Global solution guaranteed (no local minima)
– Easy to solve / optimize
• Disadvantage:
– Restricted to linear separability
• Idea:
– Transformation of the data to a high-dimensional space, such that the problem becomes linearly separable

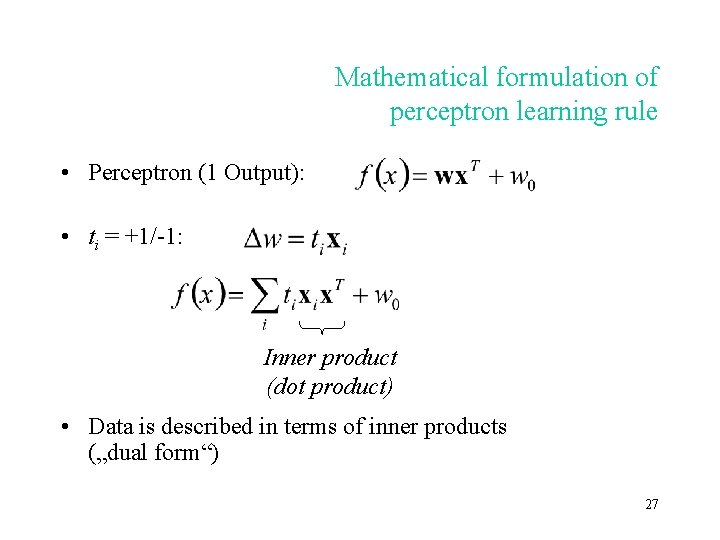

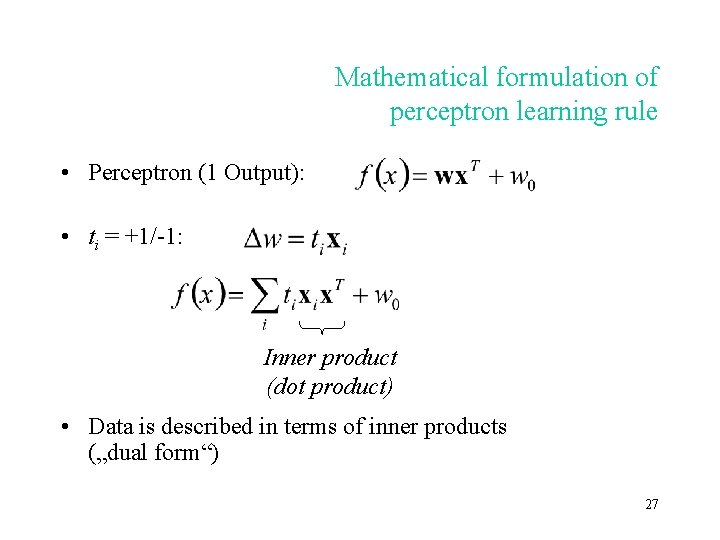

Mathematical formulation of perceptron learning rule
• Perceptron (1 output):
• t_i = +1/-1:
• Data is described in terms of inner products ("dual form")
Equation label: inner product (dot product)

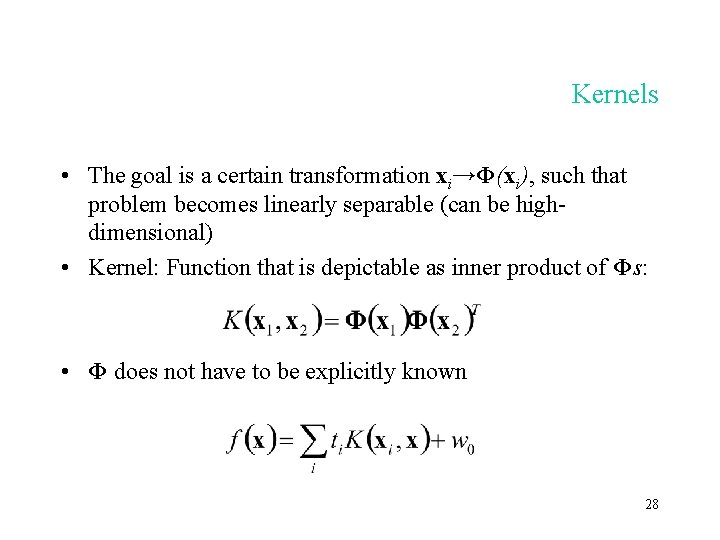

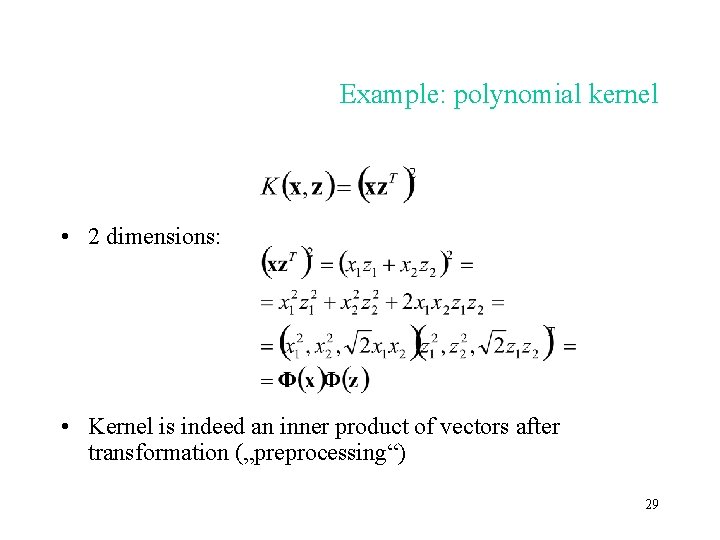

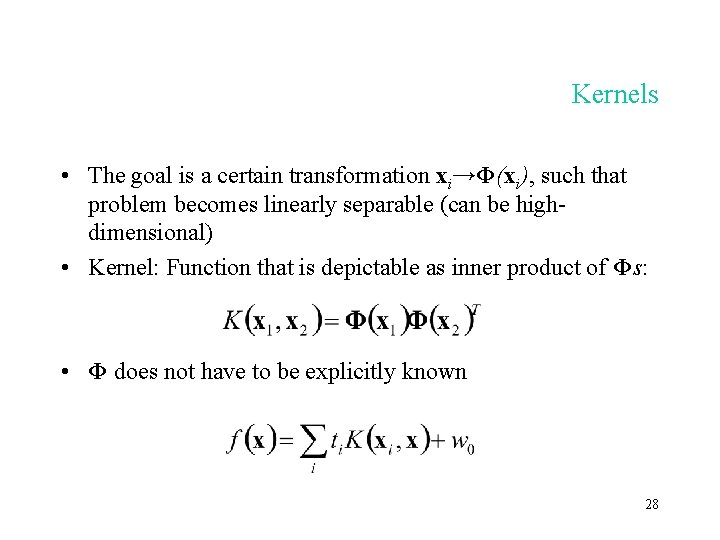
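
In the dual form the weight vector is never stored explicitly. It is represented as a sum of training points, $w = \sum_i \alpha_i t_i x_i$, so both learning and classification only need inner products between data points. A minimal sketch, assuming labels t_i in {+1, -1}; the function names, the counters `alpha` and the toy data are assumptions:

```python
import numpy as np

def train_dual_perceptron(X, t, kernel=np.dot, max_epochs=100):
    n = len(X)
    alpha = np.zeros(n)  # how often each point has been added
    # Gram matrix: the data enters only through inner products.
    G = np.array([[kernel(X[i], X[j]) for j in range(n)] for i in range(n)])
    for _ in range(max_epochs):
        errors = 0
        for i in range(n):
            if t[i] * np.sum(alpha * t * G[:, i]) <= 0:  # misclassified
                alpha[i] += 1.0
                errors += 1
        if errors == 0:
            break
    return alpha

def predict(x, X, t, alpha, kernel=np.dot):
    return np.sign(sum(a * t_i * kernel(x_i, x) for a, t_i, x_i in zip(alpha, t, X)))

# Hypothetical toy data, separable by a line through the origin.
X = np.array([[2.0, 1.0], [1.0, 3.0], [-1.0, -2.0], [-3.0, -1.0]])
t = np.array([1, 1, -1, -1])
alpha = train_dual_perceptron(X, t)
```

Because the inner product is passed in as the `kernel` argument, replacing `np.dot` with another kernel function changes the feature space without touching the learning rule.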

Kernels
• The goal is a certain transformation x_i → Φ(x_i), such that the problem becomes linearly separable (can be high-dimensional)
• Kernel: function that can be expressed as an inner product of Φs:
• Φ does not have to be explicitly known

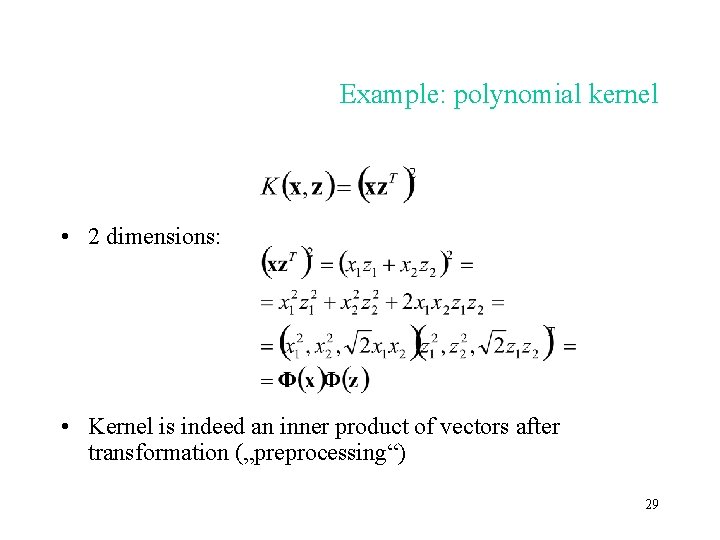

Example: polynomial kernel
• 2 dimensions:
• Kernel is indeed an inner product of vectors after transformation ("preprocessing")

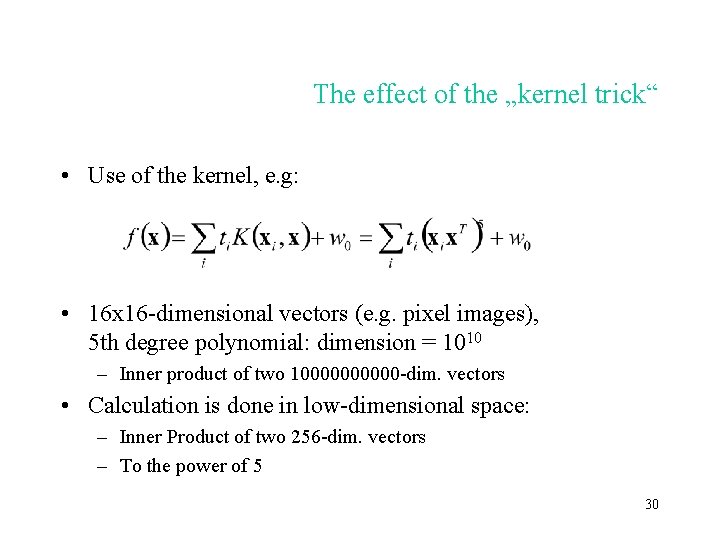

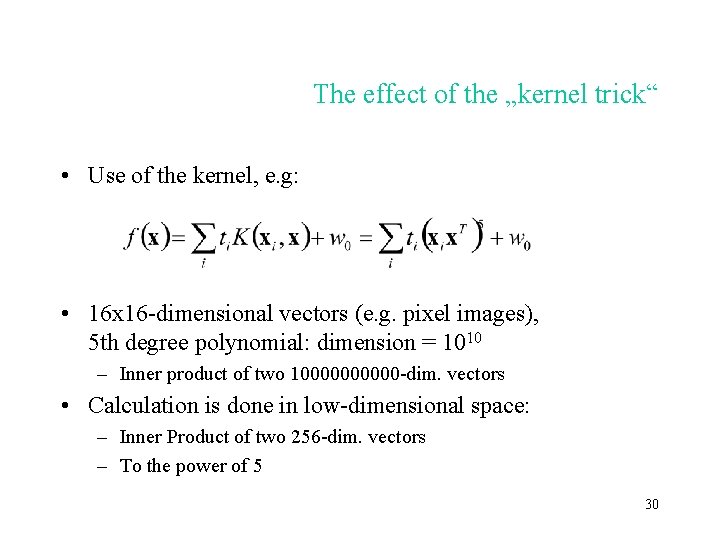
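
The claim can be checked numerically. The sketch assumes the second-degree polynomial kernel $K(x, z) = (x \cdot z)^2$ in 2 dimensions with the feature map $\Phi(x) = (x_1^2, \sqrt{2}\, x_1 x_2, x_2^2)$; the exact kernel shown in the figure may differ, and the example vectors are hypothetical.

```python
import numpy as np

def poly_kernel(x, z):
    # Kernel evaluated in the original 2-dimensional space.
    return np.dot(x, z) ** 2

def phi(x):
    # Explicit transformation into the 3-dimensional feature space.
    return np.array([x[0] ** 2, np.sqrt(2.0) * x[0] * x[1], x[1] ** 2])

x = np.array([1.0, 2.0])
z = np.array([3.0, -1.0])
k_direct = poly_kernel(x, z)          # (1*3 + 2*(-1))^2 = 1
k_explicit = np.dot(phi(x), phi(z))   # 9 - 12 + 4 = 1
```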

The effect of the "kernel trick"
• Use of the kernel, e.g.:
• 16 × 16-dimensional vectors (e.g. pixel images), 5th-degree polynomial: dimension ≈ $10^{10}$
– Inner product of two $10^{10}$-dim. vectors
• Calculation is done in the low-dimensional space:
– Inner product of two 256-dim. vectors
– To the power of 5

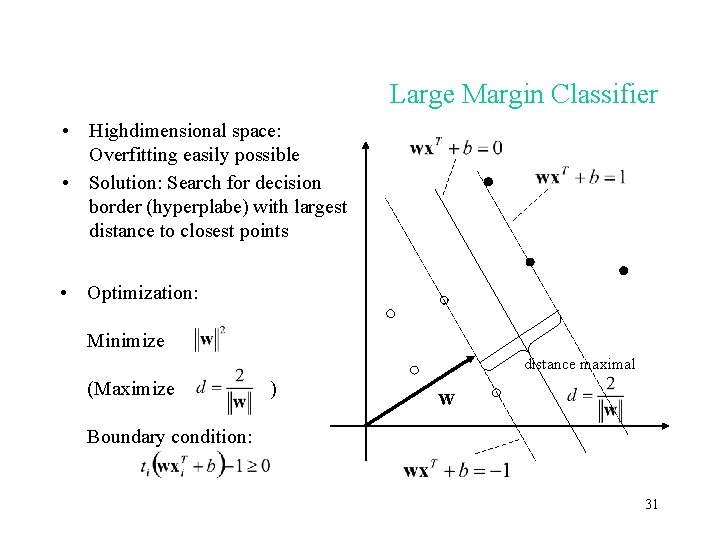

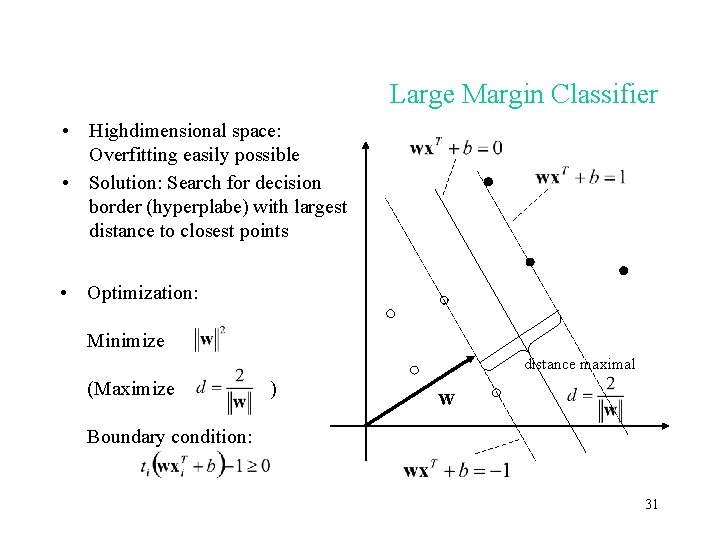
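
The size of the saving can be made concrete. The sketch counts the distinct monomials of degree 5 in 256 variables as an estimate of the feature-space dimension (the counting convention is an assumption) and then evaluates the kernel for two random stand-in "images" using only a 256-dimensional inner product.

```python
import math
import numpy as np

n_inputs = 16 * 16  # 256 pixel values
degree = 5

# Number of distinct monomials of degree 5 in 256 variables (about 9.5e9).
n_features = math.comb(n_inputs + degree - 1, degree)

rng = np.random.default_rng(0)
x = rng.random(n_inputs)  # hypothetical pixel vectors
z = rng.random(n_inputs)

# Kernel trick: one 256-dim. inner product, then the 5th power.
k = np.dot(x, z) ** degree
```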

Large Margin Classifier
• High-dimensional space: overfitting easily possible
• Solution: search for the decision border (hyperplane) with the largest distance to the closest points
• Optimization: minimize $\|w\|^2$ so that the distance becomes maximal (i.e. maximize $1/\|w\|$)
• Boundary condition:

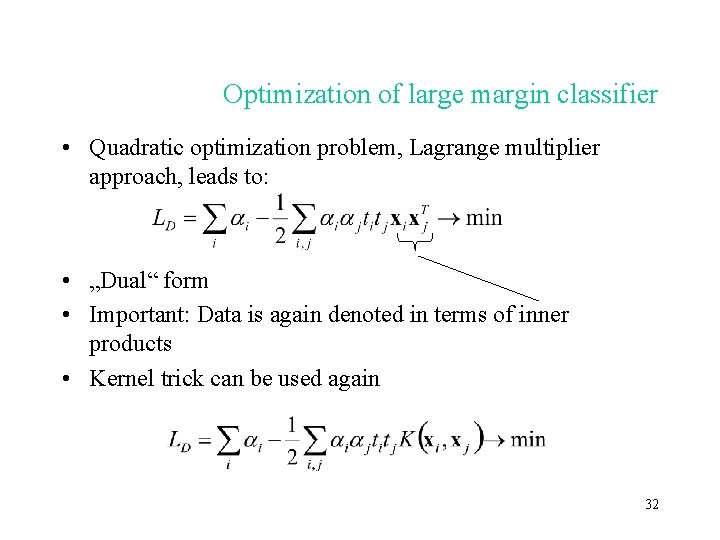

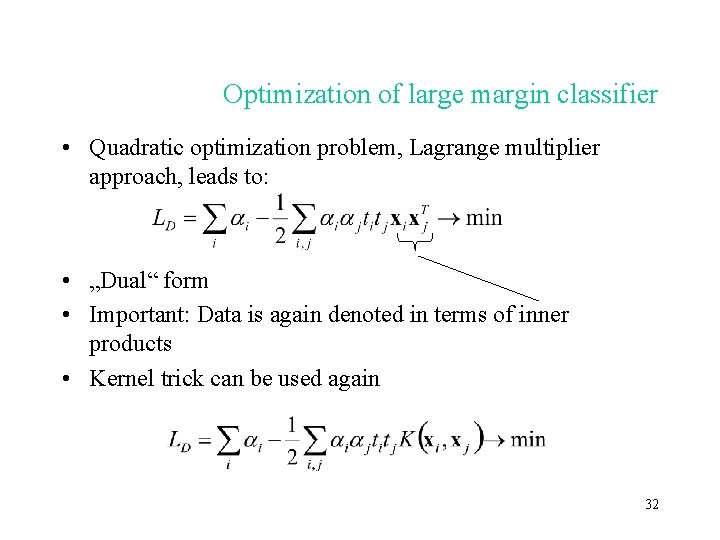

Optimization of large margin classifier
• Quadratic optimization problem, Lagrange multiplier approach, leads to:
• "Dual" form
• Important: data is again denoted in terms of inner products
• Kernel trick can be used again

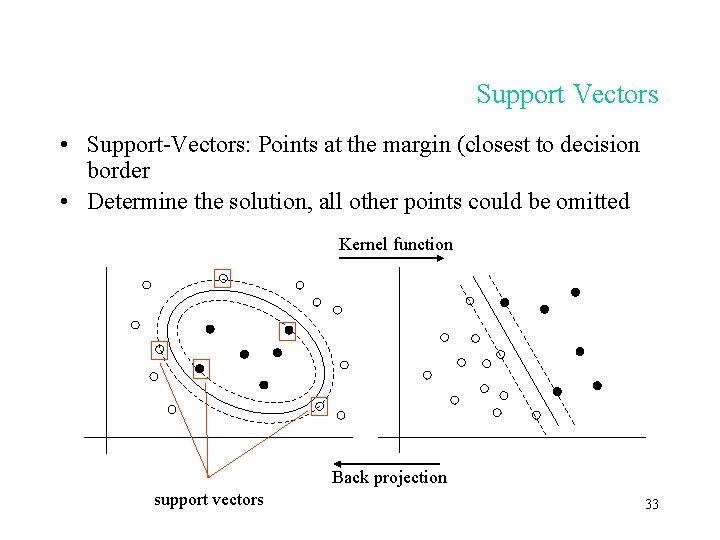

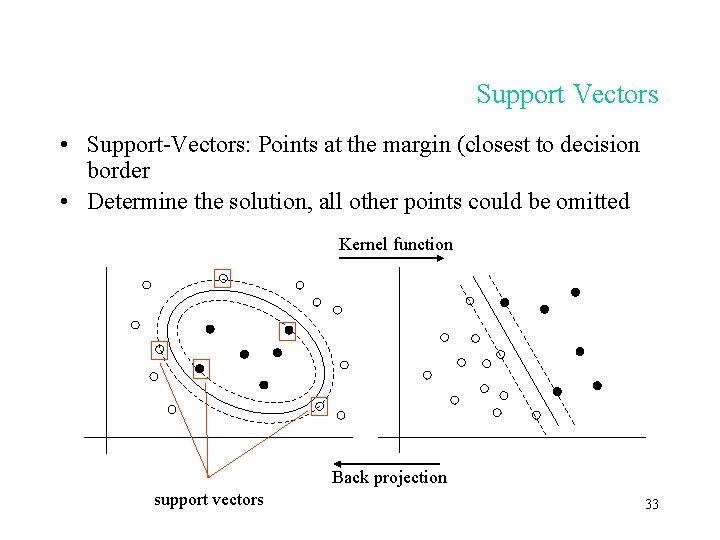
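
For reference, the dual problem in its usual textbook form is given below. It is a sketch under assumptions: the hyperplane has a bias term $b$, the targets are $t_i = \pm 1$, and the multipliers are written $\alpha_i$; the notation in the figures may differ.

```latex
\begin{align*}
\max_{\alpha} \quad & \sum_{i} \alpha_i
  - \frac{1}{2} \sum_{i} \sum_{j} \alpha_i \alpha_j \, t_i t_j \, (x_i \cdot x_j) \\
\text{subject to} \quad & \alpha_i \ge 0 \ \text{for all } i,
  \qquad \sum_{i} \alpha_i t_i = 0
\end{align*}
```

The data appears only inside the inner product $x_i \cdot x_j$, so it can be replaced by a kernel $K(x_i, x_j)$; a new point $x$ is then classified by $\operatorname{sign}\bigl(\sum_i \alpha_i t_i K(x_i, x) + b\bigr)$.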

Support Vectors
• Support vectors: points at the margin (closest to the decision border)
• They determine the solution; all other points could be omitted
Figure labels: kernel function, back projection, support vectors

Summary
• Neural networks are powerful machine learners for numerical features, initially inspired by neurophysiology
• Nonlinearity through interplay of simpler learners (perceptrons)
• Statistical/probabilistic framework most appropriate
• Learning = maximum likelihood, minimizing an error function with an efficient gradient-based method (e.g. conjugate gradient)
• Power comes with downsides (overfitting) → careful validation necessary
• Support vector machines are interesting alternatives; they simplify the learning problem through the "kernel trick"