
WEKA Tutorial

Sugato Basu and Prem Melville
Machine Learning Group
Department of Computer Sciences
University of Texas at Austin

What is WEKA?

• Collection of ML algorithms
  – open-source Java package
  – http://www.cs.waikato.ac.nz/ml/weka/
• Schemes for classification include:
  – decision trees, rule learners, naive Bayes, decision tables, locally weighted regression, SVMs, instance-based learners, logistic regression, voted perceptrons, multi-layer perceptron
• Schemes for numeric prediction include:
  – linear regression, model tree generators, locally weighted regression, instance-based learners, decision tables, multi-layer perceptron
• Meta-schemes include:
  – bagging, boosting, stacking, regression via classification, classification via regression, cost-sensitive classification
• Schemes for clustering:
  – EM and Cobweb

Getting Started

• Set environment variable WEKAHOME
  – setenv WEKAHOME /u/ml/software/weka
• Add $WEKAHOME/weka.jar to your CLASSPATH
  – setenv CLASSPATH /u/ml/software/weka.jar
• Test
  – java weka.classifiers.j48.J48 -t $WEKAHOME/data/iris.arff

ARFF File Format

• Requires declarations of @RELATION, @ATTRIBUTE and @DATA
• @RELATION declaration associates a name with the dataset
  – @RELATION <relation-name>
      @RELATION iris
• @ATTRIBUTE declaration specifies the name and type of an attribute
  – @ATTRIBUTE <attribute-name> <datatype>
  – Datatype can be numeric, nominal, string or date
      @ATTRIBUTE sepallength NUMERIC
      @ATTRIBUTE petalwidth NUMERIC
      @ATTRIBUTE class {Iris-setosa,Iris-versicolor,Iris-virginica}
• @DATA declaration is a single line denoting the start of the data segment
  – Missing values are represented by ?
      @DATA
      5.1,3.5,1.4,0.2,Iris-setosa
      4.9,?,1.4,?,Iris-versicolor
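The three declarations can be illustrated with a very small reader. This is only a sketch in plain Java, not Weka's own parser: the class name `TinyArff` and its fields are hypothetical, and it assumes unquoted, comma-separated values with no commas inside nominal labels.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

/** Minimal ARFF reader sketch (illustrative only; not Weka's parser). */
class TinyArff {
    String relation;
    final List<String> attributeNames = new ArrayList<>();
    final List<String[]> rows = new ArrayList<>();

    static TinyArff parse(String text) {
        TinyArff arff = new TinyArff();
        boolean inData = false;
        for (String raw : text.split("\n")) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("%")) {
                continue; // skip blank lines and % comments
            }
            String upper = line.toUpperCase(Locale.ROOT);
            if (inData) {
                String[] cells = line.split(",");
                for (int i = 0; i < cells.length; i++) {
                    String cell = cells[i].trim();
                    cells[i] = cell.equals("?") ? null : cell; // "?" marks a missing value
                }
                arff.rows.add(cells);
            } else if (upper.startsWith("@RELATION")) {
                arff.relation = line.substring("@RELATION".length()).trim();
            } else if (upper.startsWith("@ATTRIBUTE")) {
                // The attribute name is the first token after the keyword.
                String rest = line.substring("@ATTRIBUTE".length()).trim();
                arff.attributeNames.add(rest.split("\\s+")[0]);
            } else if (upper.startsWith("@DATA")) {
                inData = true; // everything after this line is data
            }
        }
        return arff;
    }
}
```

Missing values come back as `null` here purely for illustration; how a real loader represents them internally is a separate design choice.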

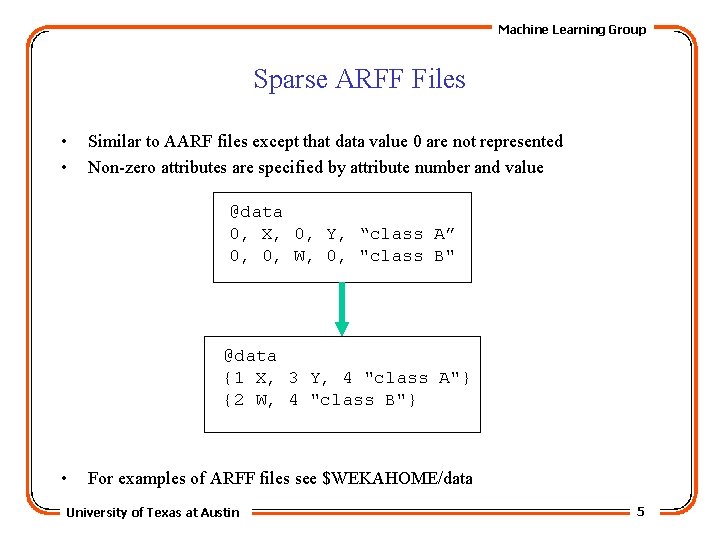

Sparse ARFF Files

• Similar to ARFF files except that data values of 0 are not represented
• Non-zero attributes are specified by attribute number and value
  – Standard ARFF:
      @data
      0, X, 0, Y, "class A"
      0, 0, W, 0, "class B"
  – Sparse ARFF:
      @data
      {1 X, 3 Y, 4 "class A"}
      {2 W, 4 "class B"}
• For examples of ARFF files see $WEKAHOME/data
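The mapping from a dense row to its sparse form can be sketched in a few lines of plain Java. The class and method names are hypothetical and this is not Weka code; it assumes, as in the slide's example, that attribute numbers start at 0 and that a cell is dropped exactly when it is the literal `0`.

```java
import java.util.StringJoiner;

/** Sketch: convert one dense ARFF data row to sparse notation. */
class SparseArffSketch {
    static String toSparse(String[] denseRow) {
        StringJoiner out = new StringJoiner(", ", "{", "}");
        for (int i = 0; i < denseRow.length; i++) {
            String cell = denseRow[i].trim();
            if (!cell.equals("0")) {
                out.add(i + " " + cell); // attribute number (0-based), then value
            }
        }
        return out.toString();
    }

    public static void main(String[] args) {
        // Prints {1 X, 3 Y, 4 "class A"}
        System.out.println(toSparse(new String[] {"0", "X", "0", "Y", "\"class A\""}));
        // Prints {2 W, 4 "class B"}
        System.out.println(toSparse(new String[] {"0", "0", "W", "0", "\"class B\""}));
    }
}
```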

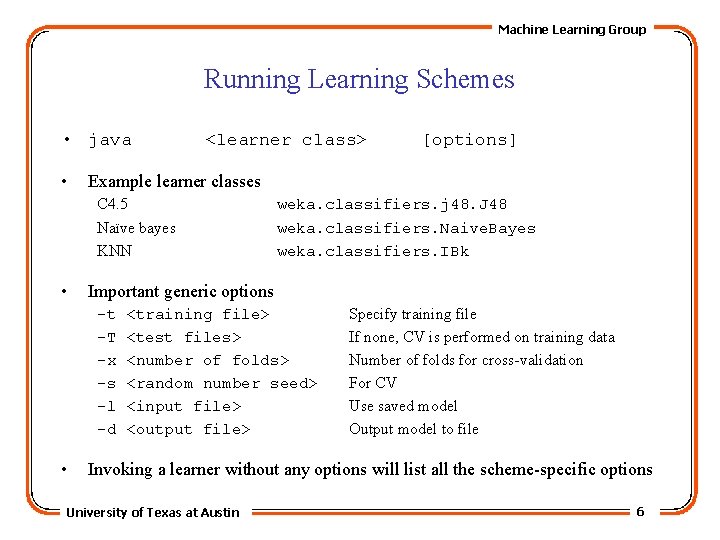

Running Learning Schemes

• java <learner class> [options]
• Example learner classes
  – C4.5: weka.classifiers.j48.J48
  – Naïve Bayes: weka.classifiers.NaiveBayes
  – KNN: weka.classifiers.IBk
• Important generic options
  – -t <training file>: Specify training file
  – -T <test files>: If none, CV is performed on training data
  – -x <number of folds>: Number of folds for cross-validation
  – -s <random number seed>: For CV
  – -l <input file>: Use saved model
  – -d <output file>: Output model to file
• Invoking a learner without any options will list all the scheme-specific options

Output

• Summary of model, if possible
• Statistics on training data
• Cross-validation statistics
• Example
• Output for numeric prediction is different
  – Correlation coefficient instead of accuracy
  – No confusion matrices


Using Meta-Learners

• java <meta-scheme class> -W <base-learner> [meta-options] -- [base-options]
  – The double minus sign (--) separates the two lists of options, e.g.
      java weka.classifiers.Bagging -I 8 -W weka.classifiers.j48.J48 -t iris.arff -- -U
• MultiClassifier allows you to use a binary classifier for multiclass data
      java weka.classifiers.MultiClassifier -W weka.classifiers.SMO -t weather.arff
• CVParameterSelection finds the best value for a specified parameter using CV
  – Use the -P option to specify the parameter and the space to search
      -P "<param name> <starting value> <last value> <# of steps>", e.g.
      java …CVParameterSelection -W …OneR -P "B 1 10 10" -t iris.arff
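To make the -P search space concrete, the sketch below expands a spec such as "B 1 10 10" into candidate values. It assumes the values are evenly spaced and include both end points; that spacing rule is an assumption made for illustration, not something the slide states, and `ParamGridSketch` is a hypothetical name rather than part of Weka.

```java
/** Sketch: expand "<name> <first> <last> <steps>" into candidate parameter values. */
class ParamGridSketch {
    static double[] expand(String spec) {
        String[] parts = spec.trim().split("\\s+");
        double first = Double.parseDouble(parts[1]);
        double last = Double.parseDouble(parts[2]);
        int steps = Integer.parseInt(parts[3]);
        double[] values = new double[steps];
        for (int i = 0; i < steps; i++) {
            // Assumed rule: evenly spaced points, both ends included.
            values[i] = (steps == 1) ? first : first + (last - first) * i / (steps - 1);
        }
        return values;
    }

    public static void main(String[] args) {
        for (double v : expand("B 1 10 10")) {
            System.out.println("would evaluate -B " + v + " by cross-validation");
        }
    }
}
```

Under that assumption, "B 1 10 10" yields the ten values 1 through 10, and each candidate would be scored by cross-validation before the best one is kept.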

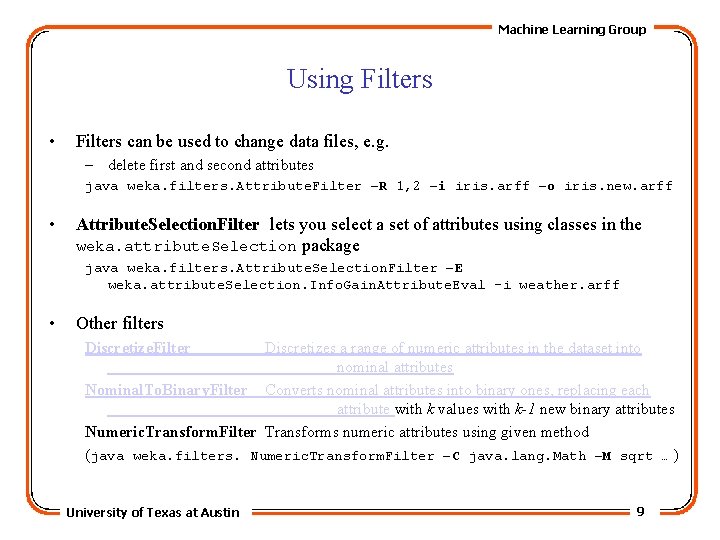

Using Filters

• Filters can be used to change data files, e.g.
  – delete first and second attributes
      java weka.filters.AttributeFilter -R 1,2 -i iris.arff -o iris.new.arff
• AttributeSelectionFilter lets you select a set of attributes using classes in the weka.attributeSelection package
      java weka.filters.AttributeSelectionFilter -E weka.attributeSelection.InfoGainAttributeEval -i weather.arff
• Other filters
  – DiscretizeFilter: Discretizes a range of numeric attributes in the dataset into nominal attributes
  – NominalToBinaryFilter: Converts nominal attributes into binary ones, replacing each attribute with k values with k-1 new binary attributes
  – NumericTransformFilter: Transforms numeric attributes using given method (java weka.filters.NumericTransformFilter -C java.lang.Math -M sqrt …)
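The -C/-M pair of NumericTransformFilter names a class and a method to apply to each numeric value. One plausible way to realize that idea is Java reflection, sketched below; this is not taken from Weka's source, the class name `NumericTransformSketch` is hypothetical, and it assumes the named method is public, static, and takes a single double.

```java
import java.lang.reflect.Method;

/** Sketch: apply a static double-to-double method, chosen by name, to every value. */
class NumericTransformSketch {
    static double[] transform(double[] values, String className, String methodName) {
        try {
            Method method = Class.forName(className).getMethod(methodName, double.class);
            double[] out = new double[values.length];
            for (int i = 0; i < values.length; i++) {
                // Static method, so the receiver argument is null.
                out[i] = (Double) method.invoke(null, values[i]);
            }
            return out;
        } catch (ReflectiveOperationException e) {
            throw new IllegalArgumentException("Cannot apply " + className + "." + methodName, e);
        }
    }

    public static void main(String[] args) {
        double[] roots = transform(new double[] {4.0, 9.0}, "java.lang.Math", "sqrt");
        System.out.println(roots[0] + " " + roots[1]);
    }
}
```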

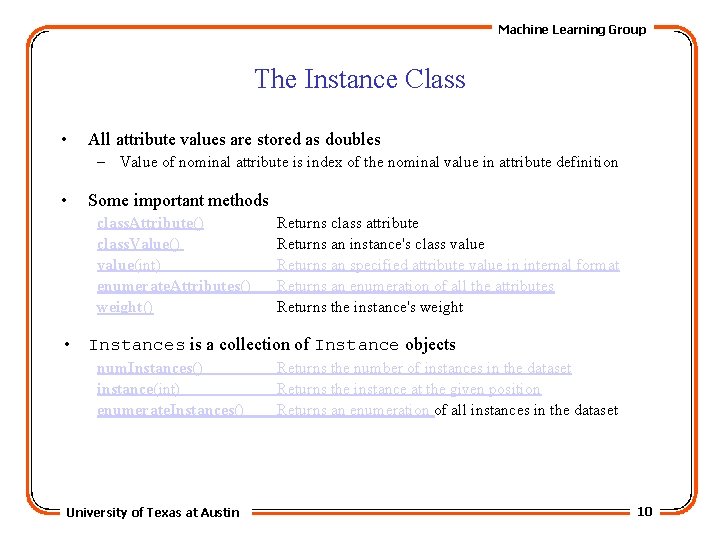

The Instance Class

• All attribute values are stored as doubles
  – Value of a nominal attribute is the index of the nominal value in the attribute definition
• Some important methods
  – classAttribute(): Returns class attribute
  – classValue(): Returns an instance's class value
  – value(int): Returns a specified attribute value in internal format
  – enumerateAttributes(): Returns an enumeration of all the attributes
  – weight(): Returns the instance's weight
• Instances is a collection of Instance objects
  – numInstances(): Returns the number of instances in the dataset
  – instance(int): Returns the instance at the given position
  – enumerateInstances(): Returns an enumeration of all instances in the dataset
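The "nominal value stored as a double index" convention can be shown without Weka at all. The sketch below uses a hypothetical `NominalEncodingSketch` class and the iris class labels from the ARFF slide; it only mirrors the idea and does not call the Instance API.

```java
import java.util.Arrays;
import java.util.List;

/** Sketch: a nominal value is stored as the index of its label in the attribute definition. */
class NominalEncodingSketch {
    // Order matters: it is the order of labels in the attribute declaration.
    static final List<String> CLASS_VALUES =
            Arrays.asList("Iris-setosa", "Iris-versicolor", "Iris-virginica");

    static double encode(String label) {
        int index = CLASS_VALUES.indexOf(label);
        if (index < 0) {
            throw new IllegalArgumentException("Unknown nominal value: " + label);
        }
        return index; // widened to double, the internal format
    }

    static String decode(double internalValue) {
        return CLASS_VALUES.get((int) internalValue);
    }
}
```

This is also why code that reads a class value typically casts it to `int` before using it as an array index.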

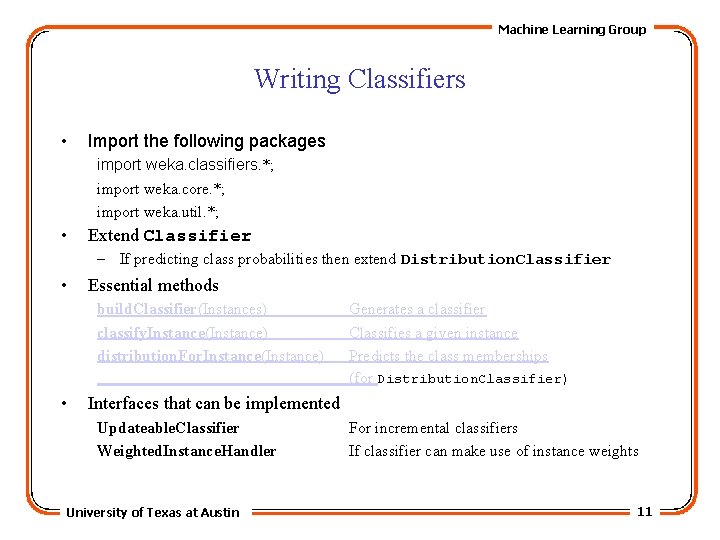

Writing Classifiers

• Import the following packages
      import weka.classifiers.*;
      import weka.core.*;
      import weka.util.*;
• Extend Classifier
  – If predicting class probabilities then extend DistributionClassifier
• Essential methods
  – buildClassifier(Instances): Generates a classifier
  – classifyInstance(Instance): Classifies a given instance
  – distributionForInstance(Instance): Predicts the class memberships (for DistributionClassifier)
• Interfaces that can be implemented
  – UpdateableClassifier: For incremental classifiers
  – WeightedInstancesHandler: If classifier can make use of instance weights

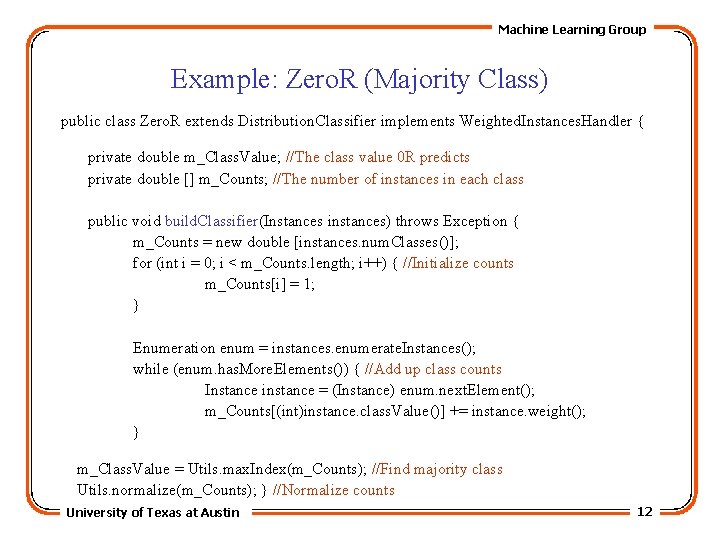

Example: ZeroR (Majority Class)

    import java.util.Enumeration;
    import weka.classifiers.*;
    import weka.core.*;

    public class ZeroR extends DistributionClassifier implements WeightedInstancesHandler {

        private double m_ClassValue;  // The class value 0R predicts
        private double[] m_Counts;    // The number of instances in each class

        public void buildClassifier(Instances instances) throws Exception {
            m_Counts = new double[instances.numClasses()];
            for (int i = 0; i < m_Counts.length; i++) {  // Initialize counts
                m_Counts[i] = 1;
            }
            // Named "enu" because "enum" is a reserved word in Java 5 and later
            Enumeration enu = instances.enumerateInstances();
            while (enu.hasMoreElements()) {  // Add up class counts
                Instance instance = (Instance) enu.nextElement();
                m_Counts[(int) instance.classValue()] += instance.weight();
            }
            m_ClassValue = Utils.maxIndex(m_Counts);  // Find majority class
            Utils.normalize(m_Counts);                // Normalize counts
        }

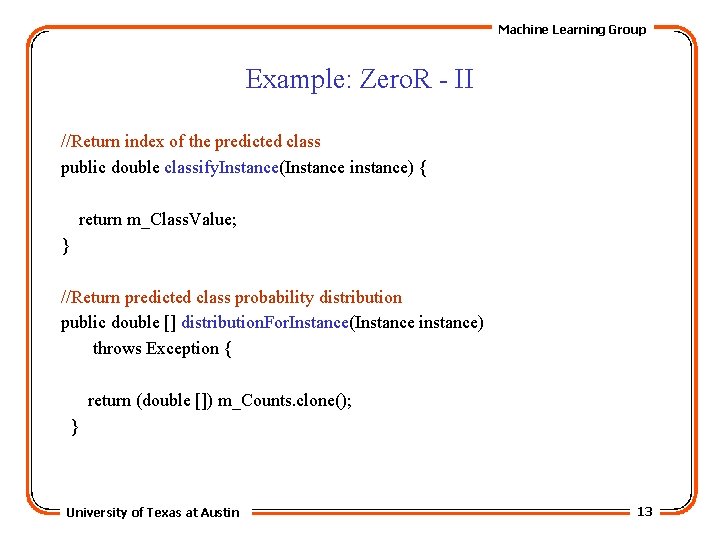

Example: ZeroR - II

        // Return index of the predicted class
        public double classifyInstance(Instance instance) {
            return m_ClassValue;
        }

        // Return predicted class probability distribution
        public double[] distributionForInstance(Instance instance) throws Exception {
            return (double[]) m_Counts.clone();
        }
    }
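The same majority-class logic can be tried without weka.jar on the classpath. The sketch below is a stand-alone approximation, not the Weka class: `MajoritySketch` is a hypothetical name, instances are reduced to a class index plus a weight, and the max-index and normalize steps are written out by hand instead of calling Utils.

```java
import java.util.Arrays;

/** Stand-alone sketch of the ZeroR idea: predict the (weighted) majority class. */
class MajoritySketch {
    private double[] counts; // per-class weight, normalized after build()
    private int majority;    // index of the predicted class

    void build(int numClasses, int[] classIndices, double[] weights) {
        counts = new double[numClasses];
        Arrays.fill(counts, 1.0); // every count starts at 1, as on the slide
        for (int i = 0; i < classIndices.length; i++) {
            counts[classIndices[i]] += weights[i]; // add up class weights
        }
        majority = 0;
        double sum = 0.0;
        for (int c = 0; c < numClasses; c++) {
            if (counts[c] > counts[majority]) {
                majority = c; // first maximum wins on ties
            }
            sum += counts[c];
        }
        for (int c = 0; c < numClasses; c++) {
            counts[c] /= sum; // normalize to a probability distribution
        }
    }

    int classify() {
        return majority;
    }

    double[] distribution() {
        return counts.clone(); // defensive copy, like m_Counts.clone()
    }
}
```

Starting every count at 1 means no class ever gets probability zero, even if it never appears in the training data.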

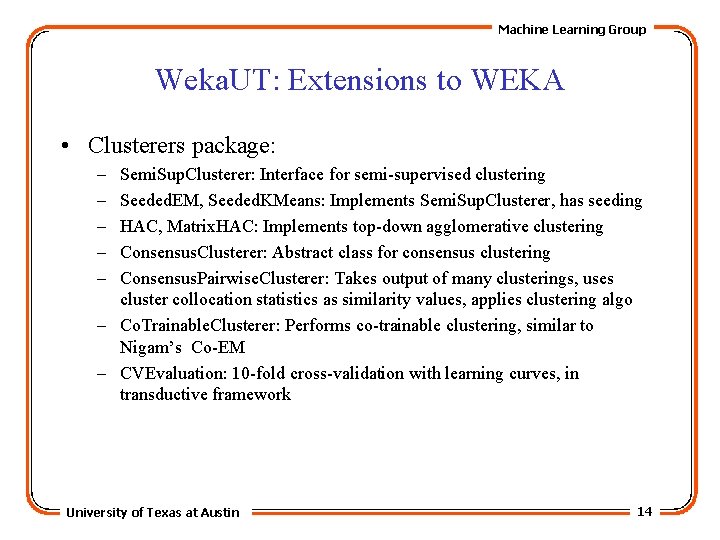

WekaUT: Extensions to WEKA

• Clusterers package:
  – SemiSupClusterer: Interface for semi-supervised clustering
  – SeededEM, SeededKMeans: Implements SemiSupClusterer, has seeding
  – HAC, MatrixHAC: Implements bottom-up agglomerative clustering
  – ConsensusClusterer: Abstract class for consensus clustering
  – ConsensusPairwiseClusterer: Takes output of many clusterings, uses cluster collocation statistics as similarity values, applies clustering algo
  – CoTrainableClusterer: Performs co-trainable clustering, similar to Nigam’s Co-EM
  – CVEvaluation: 10-fold cross-validation with learning curves, in transductive framework

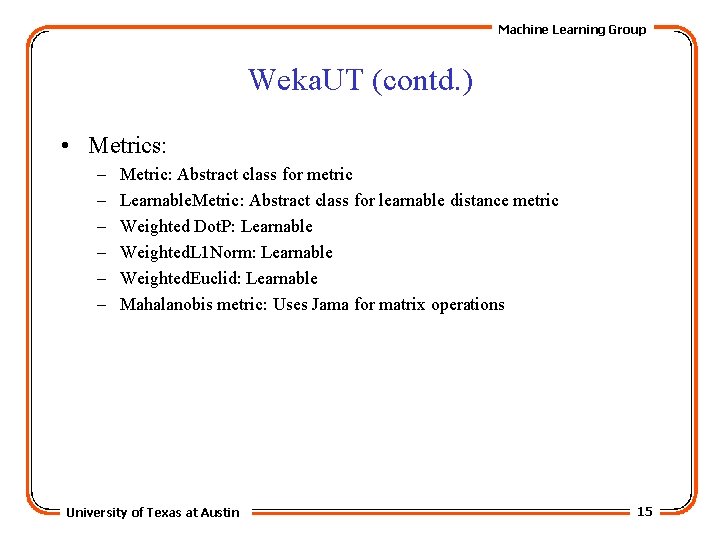

WekaUT (contd.)

• Metrics:
  – Metric: Abstract class for metric
  – LearnableMetric: Abstract class for learnable distance metric
  – WeightedDotP: Learnable
  – WeightedL1Norm: Learnable
  – WeightedEuclid: Learnable
  – Mahalanobis metric: Uses Jama for matrix operations

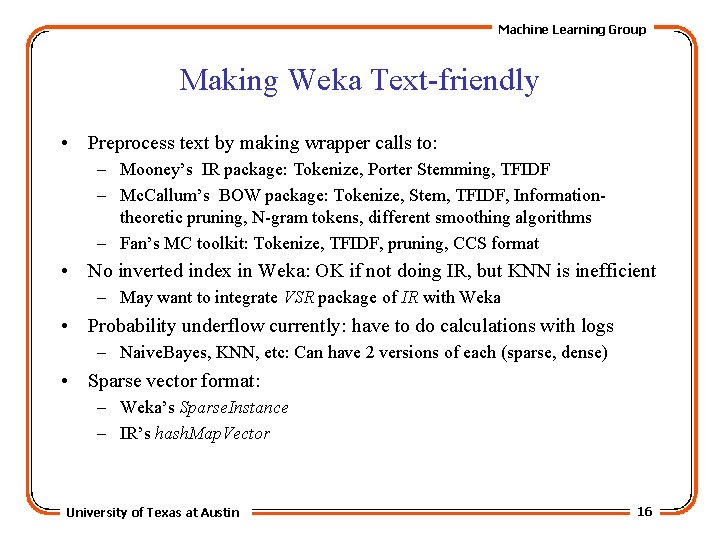

Making Weka Text-friendly

• Preprocess text by making wrapper calls to:
  – Mooney’s IR package: Tokenize, Porter Stemming, TFIDF
  – McCallum’s BOW package: Tokenize, Stem, TFIDF, Information-theoretic pruning, N-gram tokens, different smoothing algorithms
  – Fan’s MC toolkit: Tokenize, TFIDF, pruning, CCS format
• No inverted index in Weka: OK if not doing IR, but KNN is inefficient
  – May want to integrate VSR package of IR with Weka
• Probability underflow currently: have to do calculations with logs
  – NaiveBayes, KNN, etc: Can have 2 versions of each (sparse, dense)
• Sparse vector format:
  – Weka’s SparseInstance
  – IR’s hashMapVector
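The underflow point is easy to reproduce. In the sketch below (plain Java, hypothetical class name, made-up probabilities), multiplying many small per-token probabilities collapses to zero in double precision, while summing their logarithms stays finite and still allows classes to be compared.

```java
/** Sketch: why long products of probabilities are computed as sums of logs. */
class LogProbSketch {
    static double product(double[] probs) {
        double p = 1.0;
        for (double x : probs) {
            p *= x; // underflows to 0.0 once the product falls below the smallest double
        }
        return p;
    }

    static double logSum(double[] probs) {
        double s = 0.0;
        for (double x : probs) {
            s += Math.log(x); // log of the product, without forming the product
        }
        return s;
    }

    public static void main(String[] args) {
        double[] probs = new double[1000];
        java.util.Arrays.fill(probs, 1e-5); // illustrative per-token probability
        System.out.println("product = " + product(probs));
        System.out.println("log sum = " + logSum(probs));
    }
}
```

Because the logarithm is monotonic, the class with the largest log-sum is also the class with the largest product, so the argmax is unchanged.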

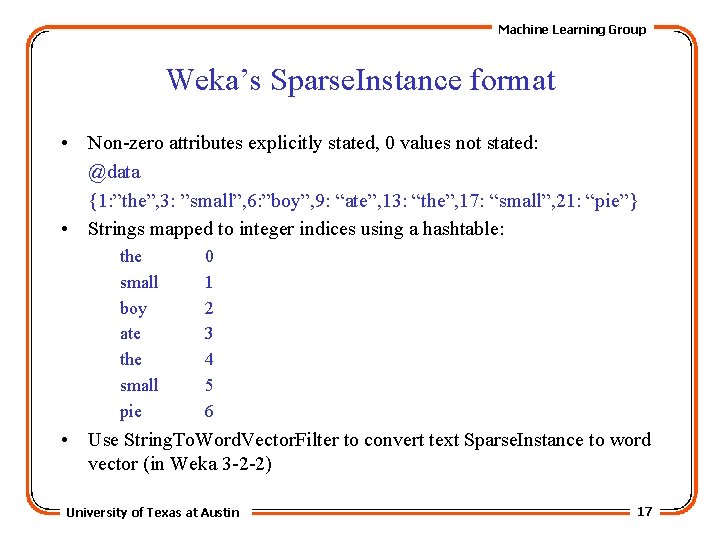

Weka’s SparseInstance format

• Non-zero attributes explicitly stated, 0 values not stated:
      @data
      {1: "the", 3: "small", 6: "boy", 9: "ate", 13: "the", 17: "small", 21: "pie"}
• Strings mapped to integer indices using a hashtable:
      the  small  boy  ate  the  small  pie
      0    1      2    3    4    5      6
• Use StringToWordVectorFilter to convert text SparseInstance to word vector (in Weka 3-2-2)

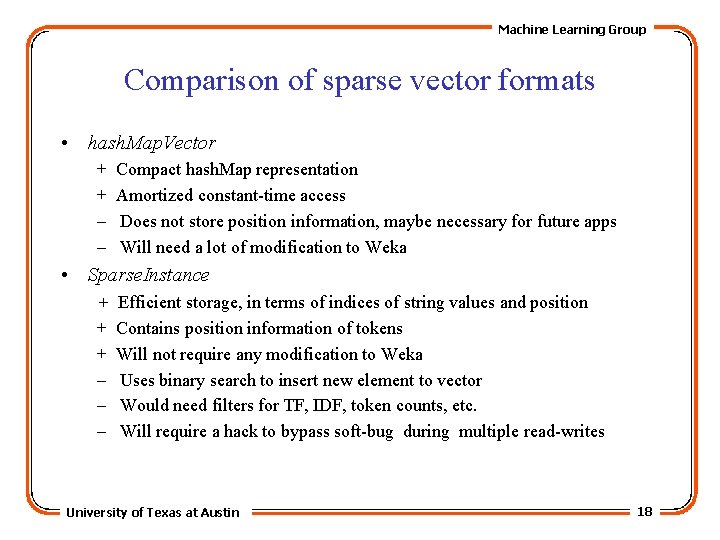

Comparison of sparse vector formats

• hashMapVector
  + Compact hashMap representation
  + Amortized constant-time access
  – Does not store position information, which may be necessary for future apps
  – Will need a lot of modification to Weka
• SparseInstance
  + Efficient storage, in terms of indices of string values and position
  + Contains position information of tokens
  + Will not require any modification to Weka
  – Uses binary search to insert new element to vector
  – Would need filters for TF, IDF, token counts, etc.
  – Will require a hack to bypass soft-bug during multiple read-writes

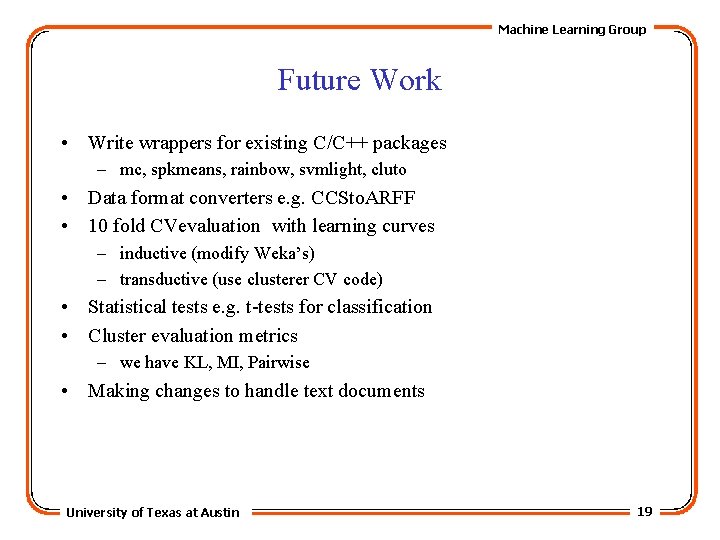

Future Work

• Write wrappers for existing C/C++ packages
  – mc, spkmeans, rainbow, svmlight, cluto
• Data format converters e.g. CCStoARFF
• 10-fold CV evaluation with learning curves
  – inductive (modify Weka’s)
  – transductive (use clusterer CV code)
• Statistical tests e.g. t-tests for classification
• Cluster evaluation metrics
  – we have KL, MI, Pairwise
• Making changes to handle text documents

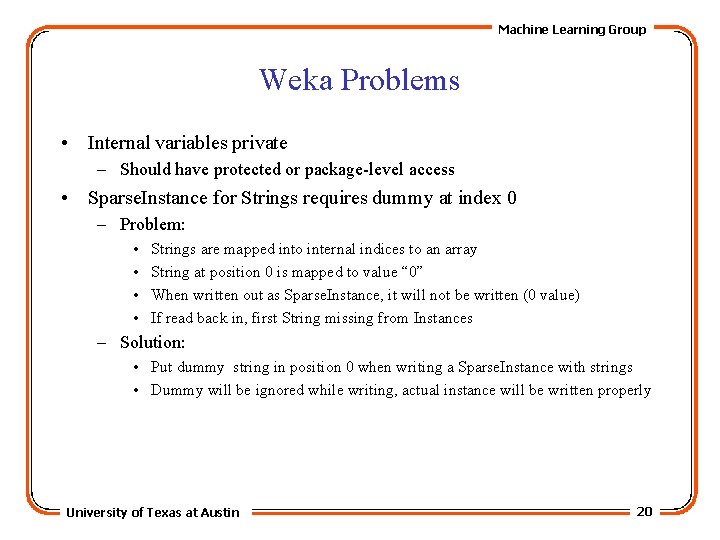

Weka Problems

• Internal variables private
  – Should have protected or package-level access
• SparseInstance for Strings requires dummy at index 0
  – Problem:
    • Strings are mapped into internal indices to an array
    • String at position 0 is mapped to value "0"
    • When written out as SparseInstance, it will not be written (0 value)
    • If read back in, first String missing from Instances
  – Solution:
    • Put dummy string in position 0 when writing a SparseInstance with strings
    • Dummy will be ignored while writing, actual instance will be written properly
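The index-0 problem and the dummy-string workaround can be modeled in a few lines. This is a simplified simulation of the behavior described above, not Weka's code: `StringTableSketch` and the `<dummy>` placeholder are hypothetical, and "writing" is reduced to listing which strings a sparse writer would emit.

```java
import java.util.ArrayList;
import java.util.List;

/** Sketch: a string table whose index-0 entry is lost by a sparse writer. */
class StringTableSketch {
    private final List<String> table = new ArrayList<>();

    StringTableSketch(boolean withDummy) {
        if (withDummy) {
            table.add("<dummy>"); // hypothetical placeholder occupying index 0
        }
    }

    /** Returns the internal index of s, adding it to the table if it is new. */
    int indexOf(String s) {
        int index = table.indexOf(s);
        if (index < 0) {
            table.add(s);
            index = table.size() - 1;
        }
        return index;
    }

    /** Lists the strings a sparse writer would emit: only those with a non-zero index. */
    List<String> writeSparse(List<String> strings) {
        List<String> written = new ArrayList<>();
        for (String s : strings) {
            if (indexOf(s) != 0) {
                written.add(s); // index 0 looks like a zero value and is skipped
            }
        }
        return written;
    }
}
```

Without the dummy, the first string added gets index 0 and is silently dropped on output; with the dummy in place, every real string has a non-zero index and survives the round trip.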
