Machine learning for metabolomics

Genome Center Bioinformatics Technology Forum
Tobias Kind – September 2006

• A quick introduction to machine learning
• Algorithms and tools, and the importance of feature selection
• Machine learning for classification and prediction of cancer data

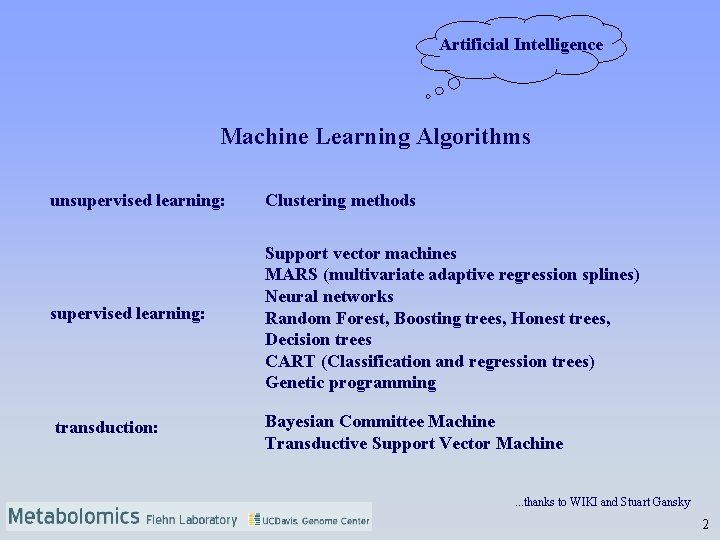

Artificial Intelligence: Machine Learning Algorithms

unsupervised learning:
• Clustering methods

supervised learning:
• Support vector machines
• MARS (multivariate adaptive regression splines)
• Neural networks
• Random Forest, Boosting trees, Honest trees, Decision trees
• CART (Classification and regression trees)
• Genetic programming

transduction:
• Bayesian Committee Machine
• Transductive Support Vector Machine
• ...

thanks to WIKI and Stuart Gansky

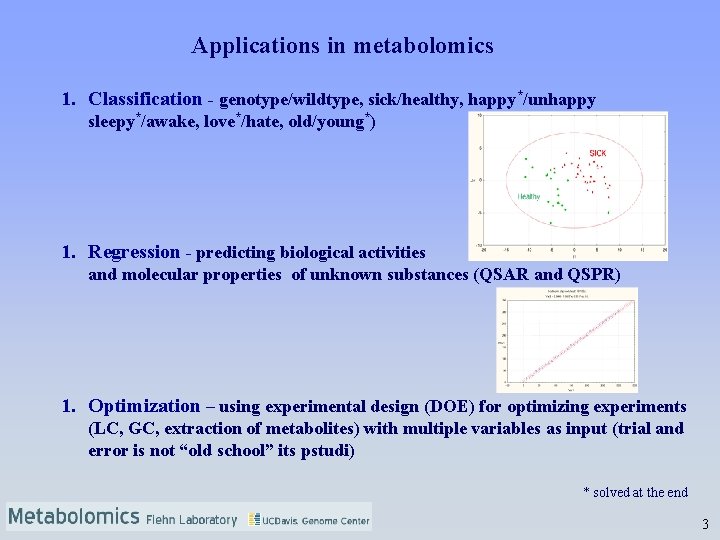

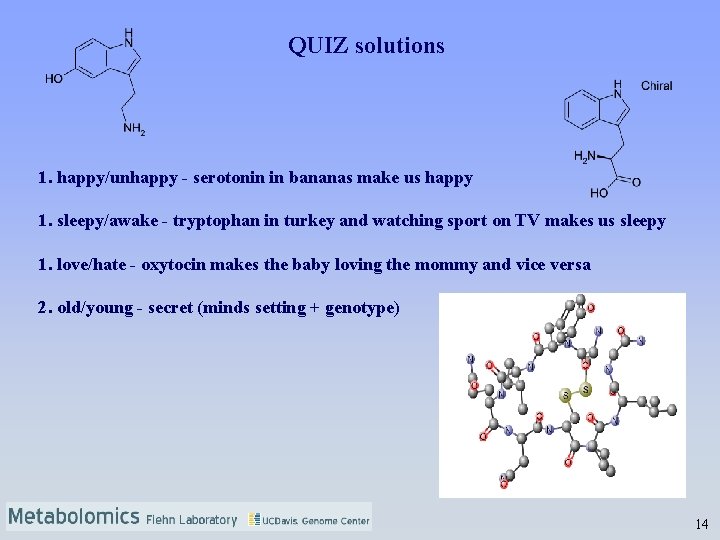

Applications in metabolomics

1. Classification - (genotype/wildtype, sick/healthy, happy*/unhappy, sleepy*/awake, love*/hate, old/young*)
2. Regression - predicting biological activities and molecular properties of unknown substances (QSAR and QSPR)
3. Optimization - using experimental design (DOE) for optimizing experiments (LC, GC, extraction of metabolites) with multiple variables as input (trial and error is not “old school”, it's stupid)

\* solved at the end

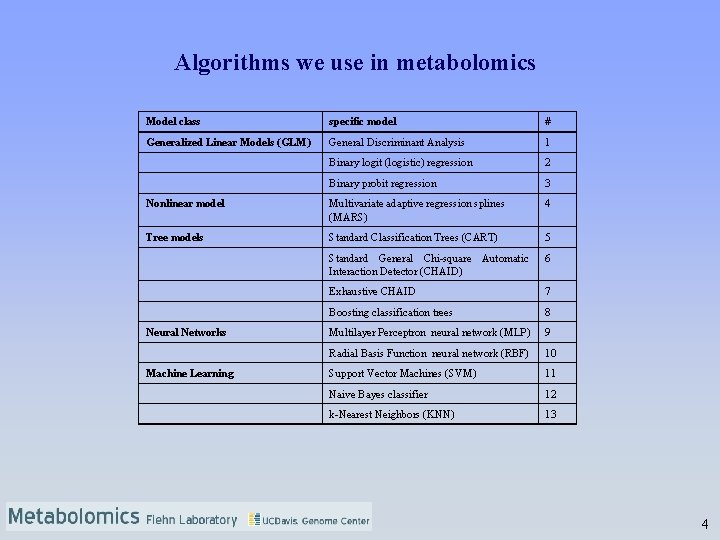

Algorithms we use in metabolomics

| Model class | Specific model | # |
|---|---|---|
| Generalized Linear Models (GLM) | General Discriminant Analysis | 1 |
| | Binary logit (logistic) regression | 2 |
| | Binary probit regression | 3 |
| Nonlinear model | Multivariate adaptive regression splines (MARS) | 4 |
| Tree models | Standard Classification Trees (CART) | 5 |
| | Standard General Chi-square Automatic Interaction Detector (CHAID) | 6 |
| | Exhaustive CHAID | 7 |
| | Boosting classification trees | 8 |
| Neural Networks | Multilayer Perceptron neural network (MLP) | 9 |
| | Radial Basis Function neural network (RBF) | 10 |
| Machine Learning | Support Vector Machines (SVM) | 11 |
| | Naive Bayes classifier | 12 |
| | k-Nearest Neighbors (KNN) | 13 |

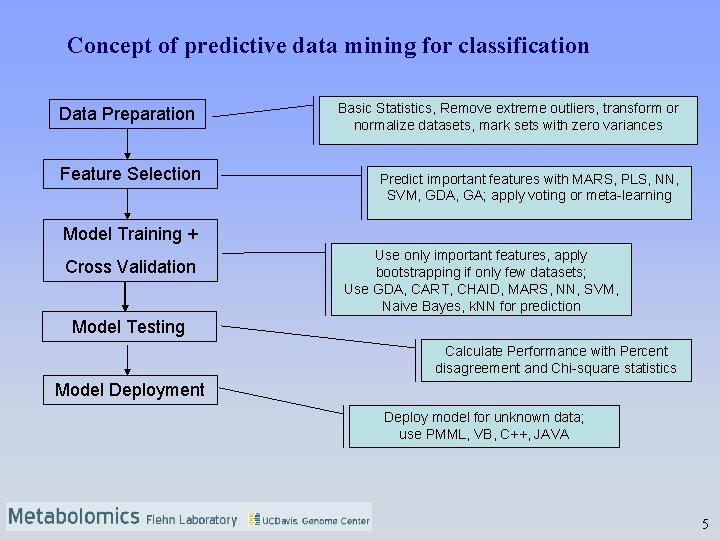

Concept of predictive data mining for classification

1. Data Preparation: basic statistics; remove extreme outliers; transform or normalize datasets; mark sets with zero variances
2. Feature Selection: predict important features with MARS, PLS, NN, SVM, GDA, GA; apply voting or meta-learning
3. Model Training + Cross Validation: use only important features; apply bootstrapping if only few datasets; use GDA, CART, CHAID, MARS, NN, SVM, Naive Bayes, k-NN for prediction
4. Model Testing: calculate performance with percent disagreement and chi-square statistics
5. Model Deployment: deploy model for unknown data; use PMML, VB, C++, JAVA
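To make the workflow concrete, here is a minimal sketch in plain Python of three of the stages: data preparation (dropping zero-variance columns, normalizing), model training with cross validation (k-NN), and model testing (percent disagreement). It is not the Statistica Dataminer workflow from the talk. The data are synthetic, and all function names, the label names, and the parameter values (5 folds, k = 3) are assumptions chosen for illustration.

```python
import random
import statistics

def drop_zero_variance(rows):
    """Return (filtered_rows, kept_column_indices), removing constant columns."""
    n_cols = len(rows[0])
    keep = [j for j in range(n_cols)
            if statistics.pvariance([r[j] for r in rows]) > 0.0]
    return [[r[j] for j in keep] for r in rows], keep

def zscore(rows):
    """Scale every column to mean 0 and standard deviation 1."""
    cols = list(zip(*rows))
    means = [statistics.fmean(c) for c in cols]
    sds = [statistics.pstdev(c) for c in cols]
    return [[(v - m) / s for v, m, s in zip(r, means, sds)] for r in rows]

def knn_predict(train_x, train_y, x, k=3):
    """Majority vote among the k nearest training samples (squared Euclidean)."""
    dist = sorted((sum((a - b) ** 2 for a, b in zip(t, x)), y)
                  for t, y in zip(train_x, train_y))
    votes = [y for _, y in dist[:k]]
    return max(set(votes), key=votes.count)

def cross_validate(x, y, folds=5, k=3, seed=0):
    """Return percent disagreement between k-NN predictions and true labels."""
    idx = list(range(len(x)))
    random.Random(seed).shuffle(idx)
    wrong = 0
    for f in range(folds):
        test = idx[f::folds]              # every folds-th sample is held out
        held_out = set(test)
        train = [i for i in idx if i not in held_out]
        tx = [x[i] for i in train]
        ty = [y[i] for i in train]
        wrong += sum(knn_predict(tx, ty, x[i], k) != y[i] for i in test)
    return 100.0 * wrong / len(x)

# Synthetic stand-in data (assumption): 2 informative columns, 1 constant column.
rng = random.Random(42)
samples, labels = [], []
for label, shift in (("healthy", 0.0), ("sick", 4.0)):
    for _ in range(20):
        samples.append([rng.gauss(shift, 1.0), rng.gauss(-shift, 1.0), 5.0])
        labels.append(label)

filtered, kept = drop_zero_variance(samples)
# Simplification: scaling uses all samples; a stricter setup scales inside each fold.
scaled = zscore(filtered)
error = cross_validate(scaled, labels)
print(f"kept columns: {kept}, percent disagreement: {error:.1f}%")
```

Percent disagreement here is simply the share of held-out samples whose predicted class differs from the true class. Any of the other classifiers listed above could be swapped in for `knn_predict` without changing the cross-validation loop.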

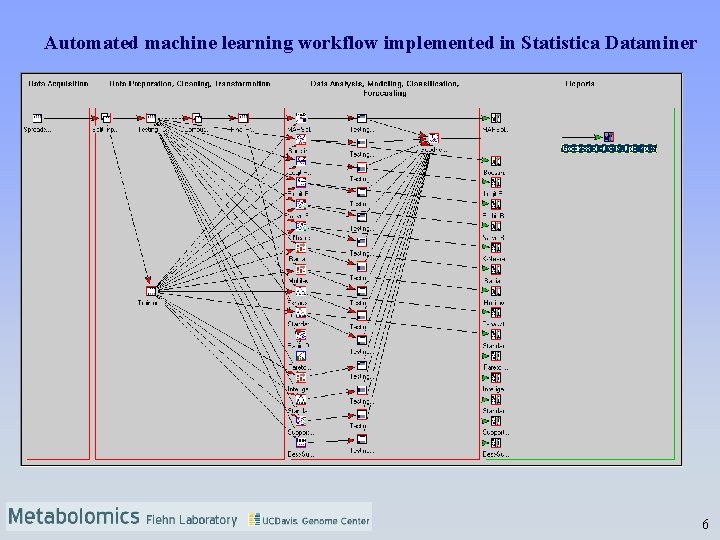

Automated machine learning workflow implemented in Statistica Dataminer

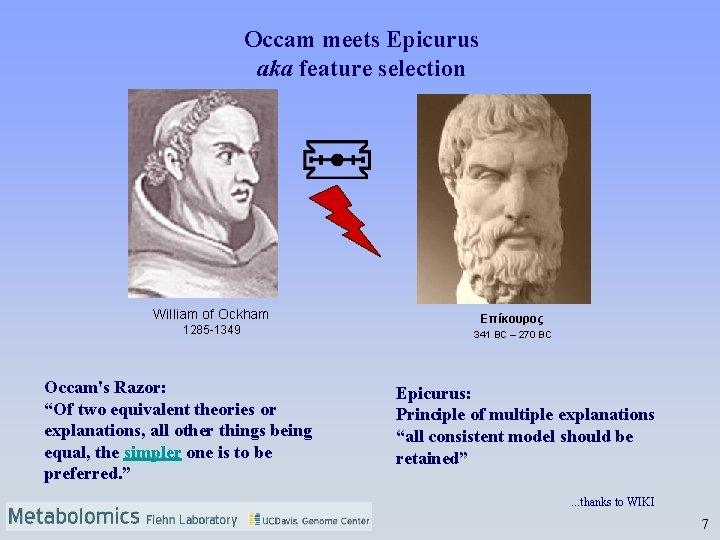

Occam meets Epicurus aka feature selection

William of Ockham (1285-1349)
Occam's Razor: “Of two equivalent theories or explanations, all other things being equal, the simpler one is to be preferred.”

Epicurus (Ἐπίκουρος, 341 BC – 270 BC)
Principle of multiple explanations: “all consistent models should be retained.”

... thanks to WIKI

What's the deal with feature selection?

• Reduces computational complexity
• Curse of dimensionality is avoided
• Improves accuracy
• The selected features can provide insights about the nature of the problem*

\* Margin Based Feature Selection Theory and Algorithms; Amir Navot
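One way to see the curse of dimensionality is to watch distances lose their meaning as variables are added. The sketch below is an illustration on uniformly random data, not an analysis from the talk, and `distance_contrast` is a hypothetical helper name. It measures how much farther the farthest point is than the nearest one from a random query point.

```python
import math
import random

def distance_contrast(n_dims, n_points=200, seed=0):
    """(farthest - nearest) / nearest Euclidean distance from one query point."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(n_dims)]
    dists = [math.dist(query, [rng.random() for _ in range(n_dims)])
             for _ in range(n_points)]
    return (max(dists) - min(dists)) / min(dists)

# Compare a low-dimensional dataset with increasingly high-dimensional ones.
contrast = {d: distance_contrast(d) for d in (2, 20, 200, 2000)}
for d, c in contrast.items():
    print(f"{d:>5} variables: relative contrast {c:.2f}")
```

With only a few variables the nearest neighbor is much closer than the farthest point. With thousands of variables all points sit at nearly the same distance, which hurts distance-based methods such as k-NN and is one reason to reduce the number of variables first.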

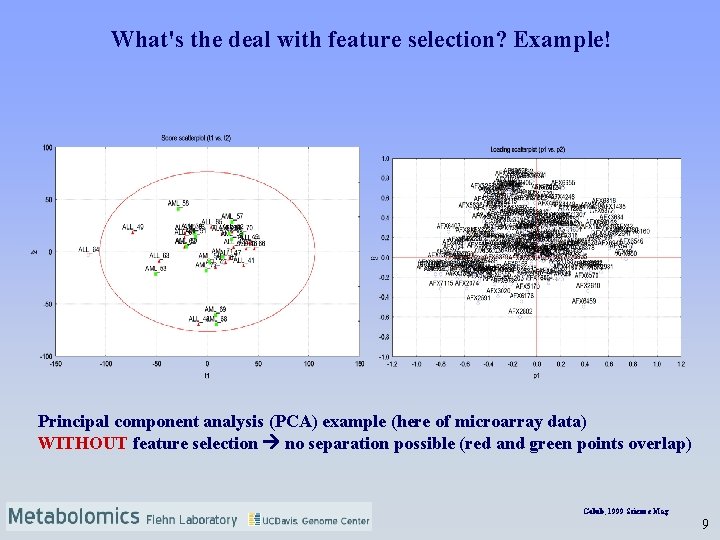

What's the deal with feature selection? Example!

Principal component analysis (PCA) example (here of microarray data) WITHOUT feature selection: no separation is possible (red and green points overlap).

Golub, 1999, Science

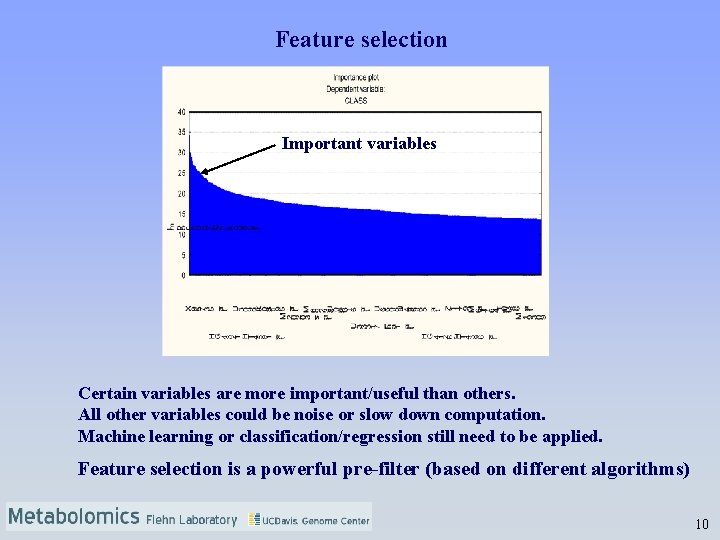

Feature selection

Important variables

• Certain variables are more important/useful than others.
• All other variables could be noise or slow down computation.
• Machine learning or classification/regression still needs to be applied.
• Feature selection is a powerful pre-filter (based on different algorithms).
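As an illustration of such a pre-filter, the sketch below ranks variables with a simple univariate signal-to-noise score (absolute difference of the class means divided by the summed class standard deviations). This is only one of many possible filters and is not necessarily what the talk used. The data are synthetic, and the column indices in `informative` are hypothetical marker variables planted for the example.

```python
import random
import statistics

def signal_to_noise(values_a, values_b):
    """|mean difference| divided by the summed sample standard deviations."""
    spread = statistics.stdev(values_a) + statistics.stdev(values_b)
    return abs(statistics.fmean(values_a) - statistics.fmean(values_b)) / spread

def rank_features(rows, labels, class_a, class_b):
    """Return column indices sorted from most to least discriminating."""
    scores = []
    for j in range(len(rows[0])):
        a = [r[j] for r, y in zip(rows, labels) if y == class_a]
        b = [r[j] for r, y in zip(rows, labels) if y == class_b]
        scores.append((signal_to_noise(a, b), j))
    return [j for _, j in sorted(scores, reverse=True)]

# Synthetic stand-in data (assumption): 50 variables, only 3 carry class signal.
rng = random.Random(7)
informative = {3, 17, 41}
rows, labels = [], []
for label, shift in (("healthy", 0.0), ("sick", 4.0)):
    for _ in range(15):
        rows.append([rng.gauss(shift if j in informative else 0.0, 1.0)
                     for j in range(50)])
        labels.append(label)

ranking = rank_features(rows, labels, "healthy", "sick")
top = ranking[:3]
print("top-ranked columns:", sorted(top))
```

The ranking only filters the variables. A classifier or regression model still has to be trained on the retained columns afterwards.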

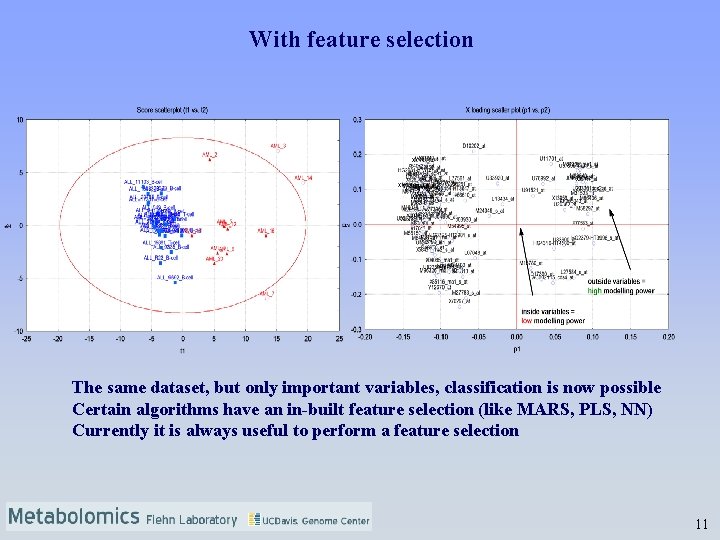

With feature selection

• The same dataset, but with only the important variables: classification is now possible.
• Certain algorithms have built-in feature selection (like MARS, PLS, NN).
• Currently it is always useful to perform a feature selection.

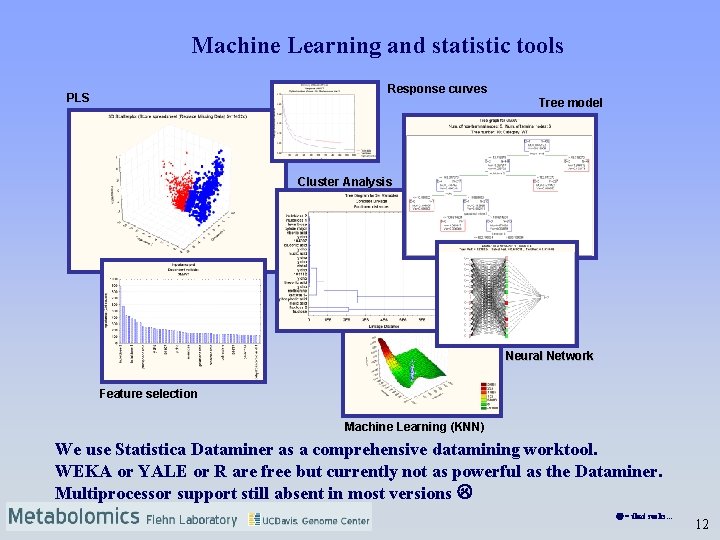

Machine learning and statistics tools

Figure panels: Response curves, PLS, Tree model, Cluster Analysis, Neural Network, Feature selection, Machine Learning (KNN)

We use Statistica Dataminer as a comprehensive data mining tool. WEKA, YALE, and R are free but currently not as powerful as the Dataminer. Multiprocessor support is still absent in most versions, and that sucks...

LIVE Demo with Statistica Dataminer (~10-15 min)

Classification of cancer data from LC-MS and GC-MS experiments

QUIZ solutions

1. happy/unhappy - serotonin in bananas makes us happy
2. sleepy/awake - tryptophan in turkey and watching sport on TV make us sleepy
3. love/hate - oxytocin makes the baby love the mommy and vice versa
4. old/young - secret (mindset + genotype)

Thank you!

Thanks to the Fiehn Lab!
