LYNX: CAD for FPGA-based Networks-on-Chip

Mohamed Abdelfattah, Vaughn Betz

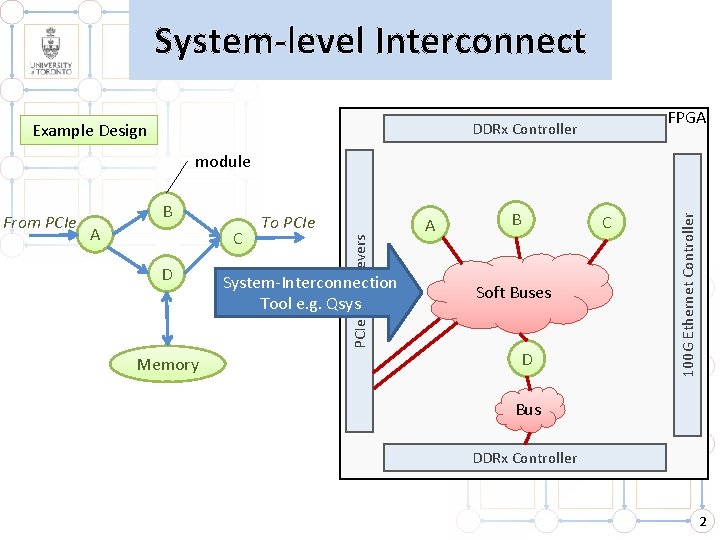

System-level Interconnect
• Example design: modules A, B, C and D, connected to memory and to/from PCIe
• FPGA I/O interfaces: DDRx controllers, PCIe transceivers, 100G Ethernet controller
• A system-interconnection tool (e.g. Qsys) connects the modules using soft buses

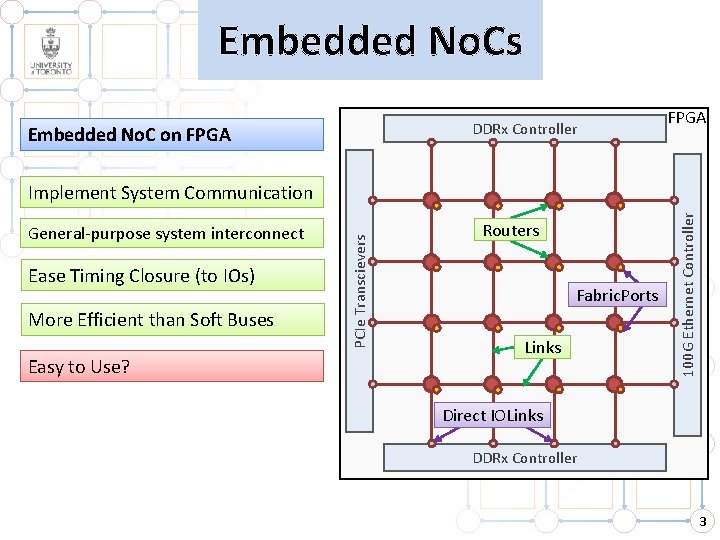

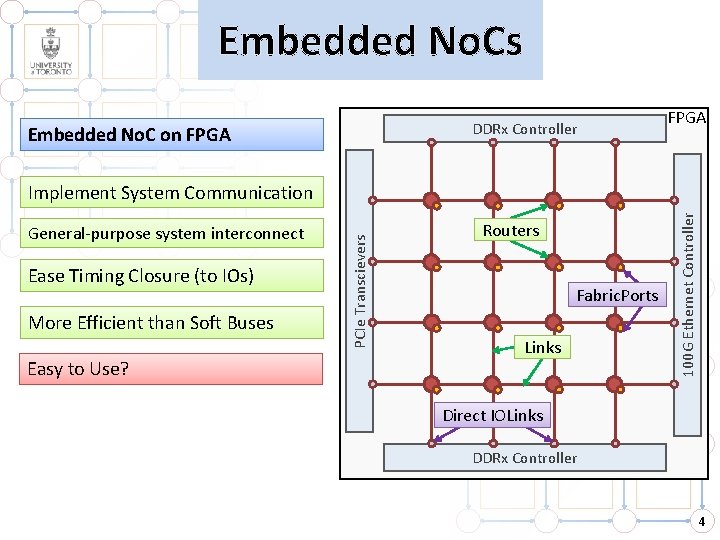

Embedded NoCs
• Embedded NoC on FPGA: routers, links and fabric ports, plus direct IO links (figure shows DDRx controllers, PCIe transceivers, 100G Ethernet controller)
• General-purpose system interconnect: implements system communication
• Eases timing closure (to IOs)
• More efficient than soft buses
• Easy to use?

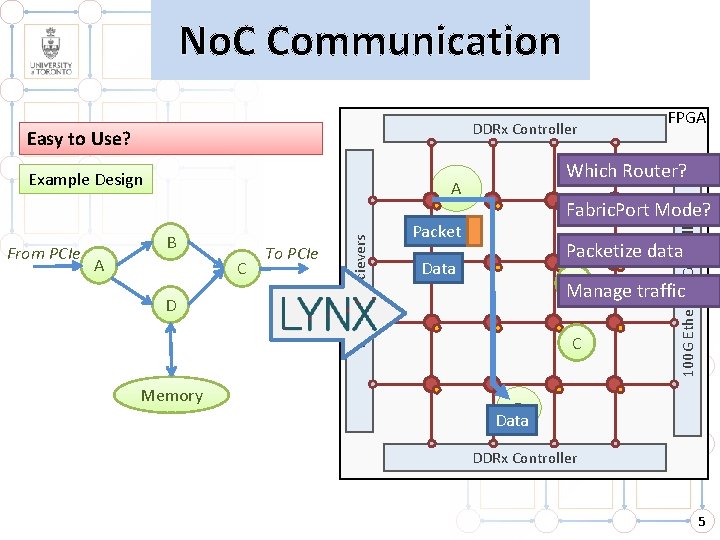

NoC Communication
• Example design: modules A, B, C and D, connected to memory and to/from PCIe
• Easy to use?
  – Which router?
  – Fabric port mode?
  – Packetize data
  – Manage traffic

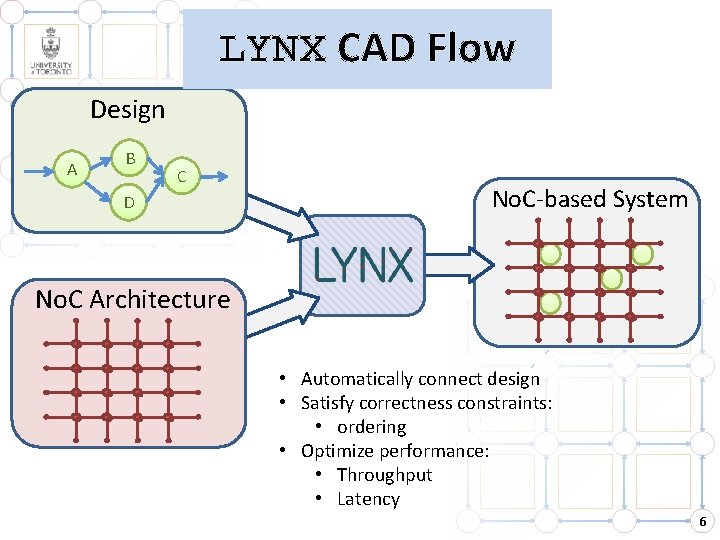

LYNX CAD Flow
• Figure: design (modules A, B, C, D) and NoC architecture in, NoC-based system out
• Automatically connect design
• Satisfy correctness constraints:
  – Ordering
• Optimize performance:
  – Throughput
  – Latency

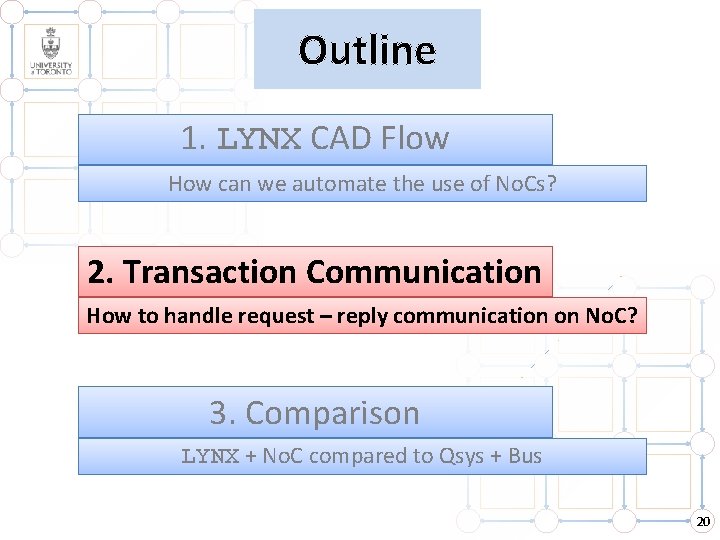

Outline
1. LYNX CAD Flow: How can we automate the use of NoCs?
2. Transaction Communication: How to handle request-reply communication on NoC?
3. Comparison: LYNX + NoC compared to Qsys + Bus

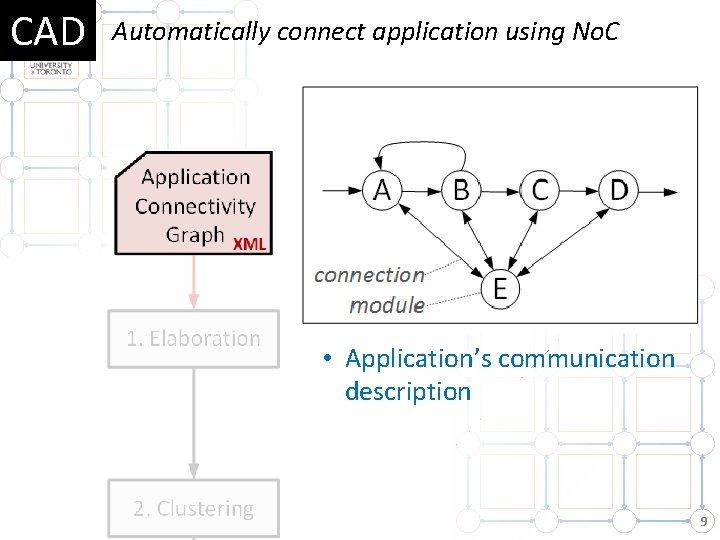

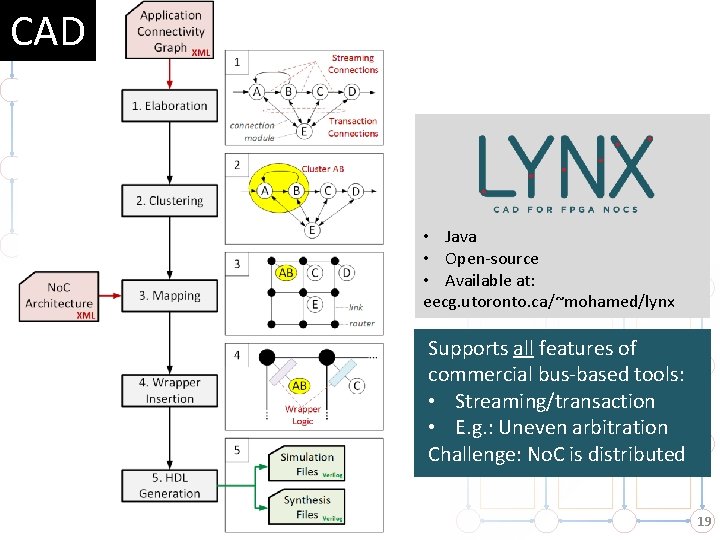

CAD: automatically connect application using NoC
• Application's communication description

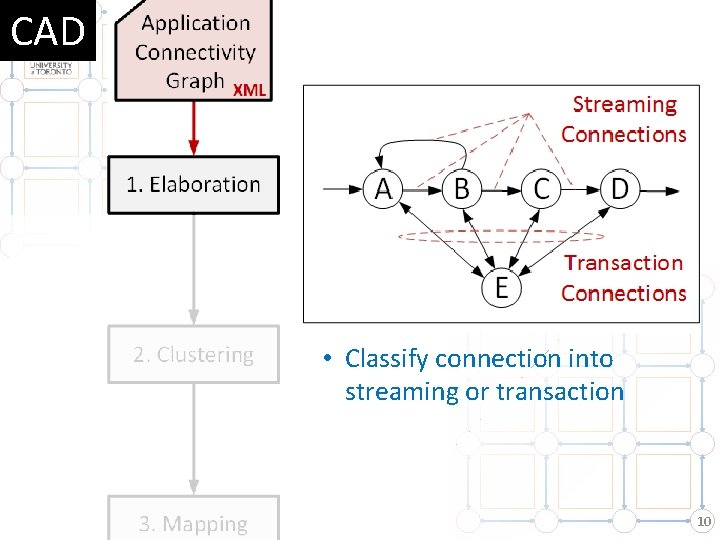

CAD
• Classify each connection as streaming or transaction

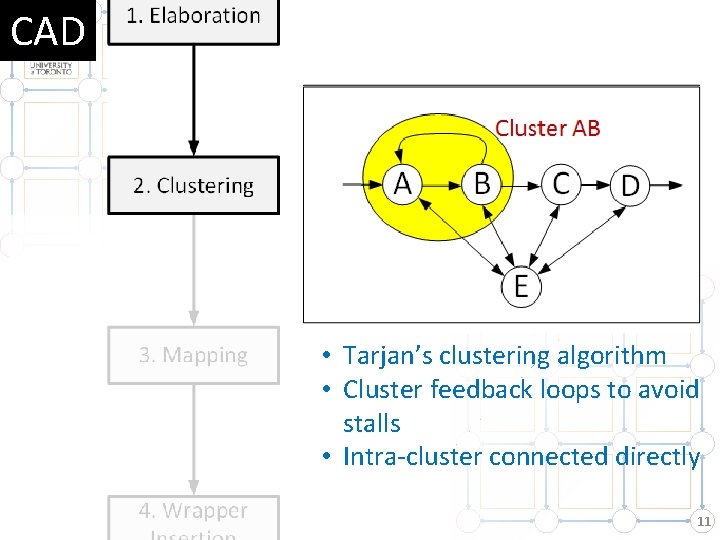

CAD
• Tarjan's clustering algorithm
• Cluster feedback loops to avoid stalls
• Modules within a cluster are connected directly
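The clustering step can be illustrated with Tarjan's strongly connected components algorithm: modules that sit on a common feedback loop end up in the same component. This is a minimal sketch, not LYNX's own code; the function names, the dictionary graph format and the example design are assumptions made for illustration.

```python
def tarjan_scc(graph):
    """Return the strongly connected components of a directed graph.

    graph: dict mapping each module name to the list of modules it sends to
    (hypothetical input format, chosen for this sketch).
    """
    index, lowlink = {}, {}
    stack, on_stack = [], set()
    components = []
    counter = [0]

    def visit(v):
        index[v] = lowlink[v] = counter[0]
        counter[0] += 1
        stack.append(v)
        on_stack.add(v)
        for w in graph.get(v, []):
            if w not in index:
                visit(w)
                lowlink[v] = min(lowlink[v], lowlink[w])
            elif w in on_stack:
                lowlink[v] = min(lowlink[v], index[w])
        if lowlink[v] == index[v]:
            # v is the root of a component: pop it off the stack.
            comp = []
            while True:
                w = stack.pop()
                on_stack.discard(w)
                comp.append(w)
                if w == v:
                    break
            components.append(sorted(comp))

    for v in list(graph):
        if v not in index:
            visit(v)
    return components


def feedback_clusters(graph):
    """Keep only components that actually contain a feedback loop."""
    return [c for c in tarjan_scc(graph)
            if len(c) > 1 or c[0] in graph.get(c[0], [])]


# Hypothetical design: B and C form a feedback loop, A and D do not.
design = {"A": ["B"], "B": ["C"], "C": ["B", "D"], "D": []}
clusters = feedback_clusters(design)
```

In this example only B and C are clustered; A and D remain separate modules that would be mapped onto the NoC individually.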

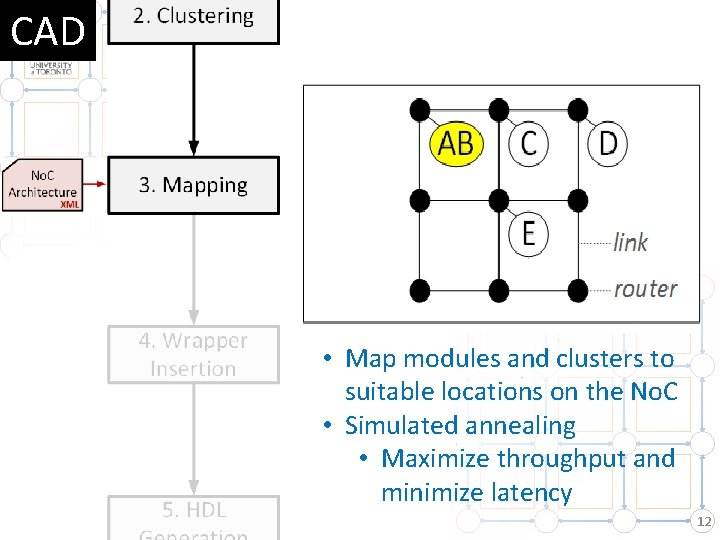

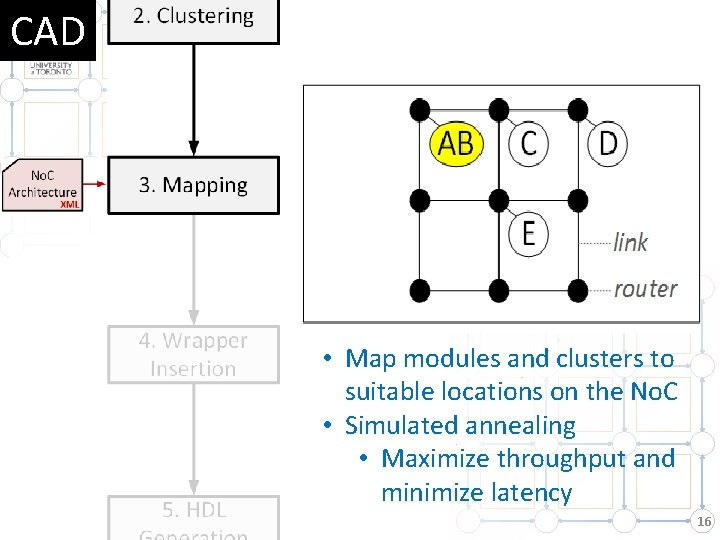

CAD
• Map modules and clusters to suitable locations on the NoC
• Simulated annealing
• Maximize throughput and minimize latency
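The mapping step can be sketched as a small simulated-annealing loop. The slides do not give LYNX's cost function, so this sketch assumes a 4 x 4 mesh of 16 routers, one module per router, and a bandwidth-weighted hop count as a stand-in for latency and link load; all names and parameters below are hypothetical.

```python
import math
import random


def hops(a, b, cols=4):
    """Manhattan distance between routers a and b on a mesh with `cols` columns."""
    return abs(a // cols - b // cols) + abs(a % cols - b % cols)


def cost(placement, connections):
    """Bandwidth-weighted hop count (assumed proxy for latency and congestion)."""
    return sum(bw * hops(placement[s], placement[d]) for s, d, bw in connections)


def anneal(modules, connections, routers=16, steps=5000, t0=10.0, seed=0):
    rng = random.Random(seed)
    # slots[r] holds the module placed at router r, or None if r is unused.
    slots = list(modules) + [None] * (routers - len(modules))
    rng.shuffle(slots)

    def placement():
        return {m: r for r, m in enumerate(slots) if m is not None}

    current = cost(placement(), connections)
    best, best_cost = placement(), current
    for step in range(steps):
        temp = t0 * (1.0 - step / steps) + 1e-9      # linear cooling schedule
        a, b = rng.randrange(routers), rng.randrange(routers)
        slots[a], slots[b] = slots[b], slots[a]      # propose: swap two locations
        new = cost(placement(), connections)
        if new <= current or rng.random() < math.exp((current - new) / temp):
            current = new                            # accept the move
            if current < best_cost:
                best, best_cost = placement(), current
        else:
            slots[a], slots[b] = slots[b], slots[a]  # reject: undo the swap
    return best, best_cost


# Hypothetical design: a chain A -> B -> C -> D with equal bandwidths.
modules = ["A", "B", "C", "D"]
connections = [("A", "B", 1.0), ("B", "C", 1.0), ("C", "D", 1.0)]
best, best_cost = anneal(modules, connections)
```

Swapping a module with an empty slot moves it to a free router, so one move type covers both relocation and exchange. A real flow would also account for module width, clusters and per-link throughput.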

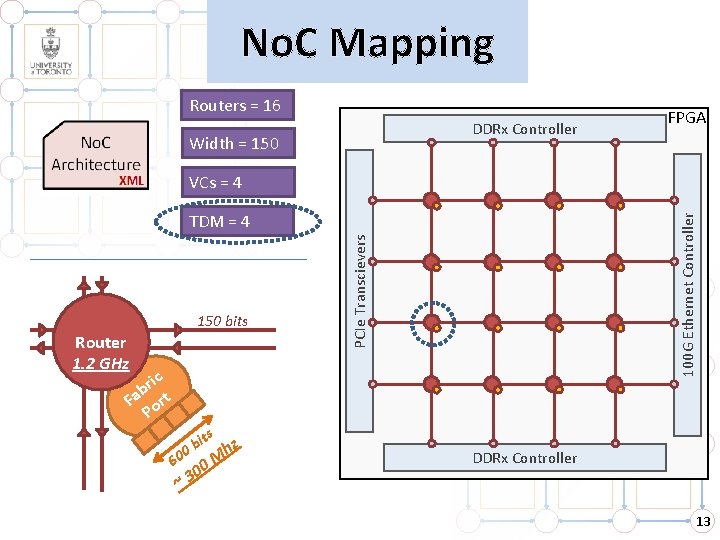

NoC Mapping
• Routers = 16, width = 150 bits, VCs = 4, TDM = 4
• Router: 1.2 GHz, 150 bits
• Fabric port: 600 bits at ~300 MHz

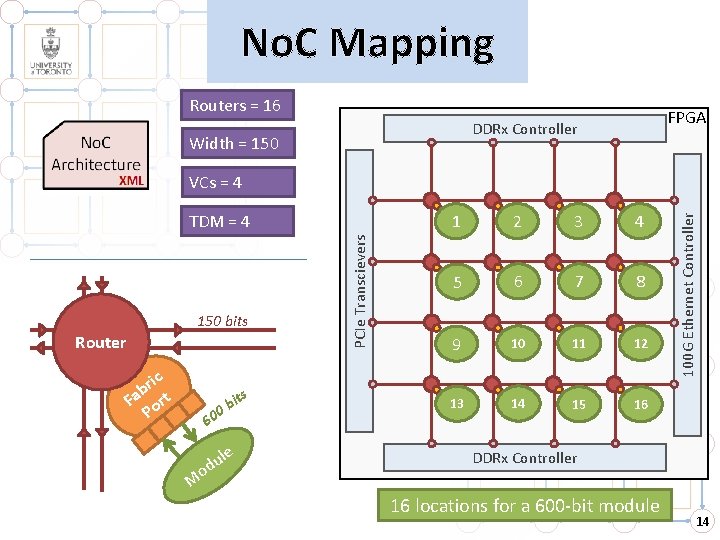

NoC Mapping
• Routers = 16, width = 150 bits, VCs = 4, TDM = 4
• Figure: a 600-bit module at a fabric port; router locations numbered 1 to 16
• 16 locations for a 600-bit module

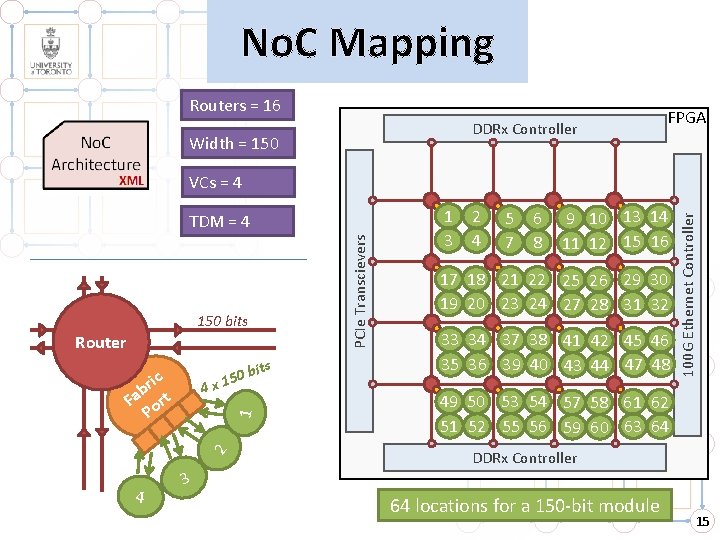

NoC Mapping
• Routers = 16, width = 150 bits, VCs = 4, TDM = 4
• Figure: 4 x 150 bits at each fabric port; locations numbered 1 to 64
• 64 locations for a 150-bit module
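The two location counts on these slides follow from the NoC parameters. A sketch of the arithmetic, assuming each fabric port offers TDM slots of one NoC width each and a module occupies as many slots as its width requires; the function name and the handling of intermediate widths are assumptions, since the slides only give the 600-bit and 150-bit cases.

```python
import math


def mapping_locations(module_width, routers=16, noc_width=150, tdm=4):
    """Count candidate locations for a module of the given width in bits."""
    port_width = noc_width * tdm                  # 4 x 150 = 600 bits per fabric port
    if module_width > port_width:
        raise ValueError("module is wider than one fabric port")
    slots_needed = math.ceil(module_width / noc_width)
    slots_per_port = tdm // slots_needed          # modules of this width per port
    return routers * slots_per_port


wide = mapping_locations(600)     # uses a whole fabric port
narrow = mapping_locations(150)   # uses one of the four slots
```

With the slide parameters, the wide module has one candidate location per router and the narrow module has four.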

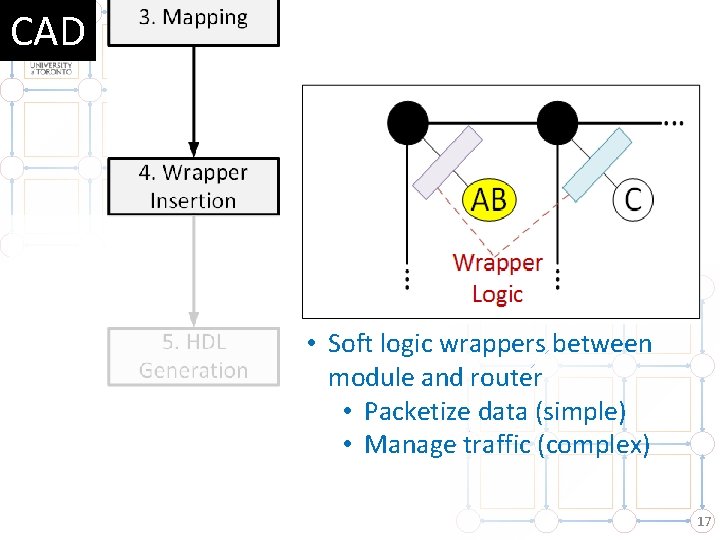

CAD
• Soft logic wrappers between module and router
  – Packetize data (simple)
  – Manage traffic (complex)

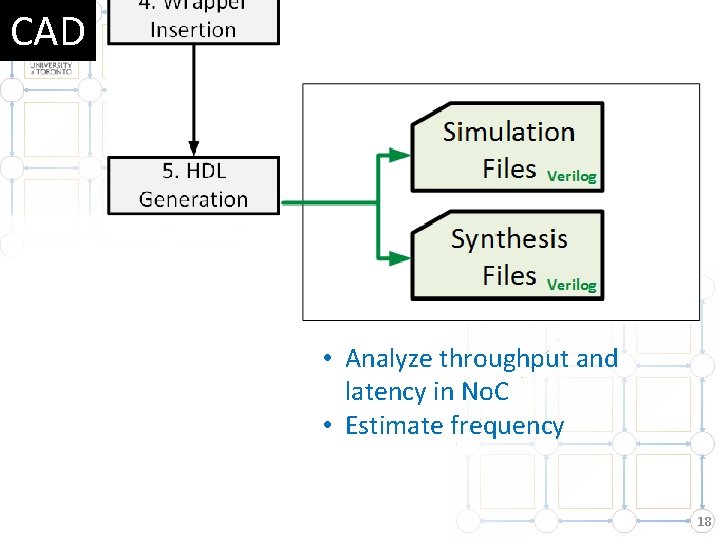

CAD
• Analyze throughput and latency in NoC
• Estimate frequency

CAD
• Java
• Open-source
• Available at: eecg.utoronto.ca/~mohamed/lynx
Supports all features of commercial bus-based tools:
• Streaming/transaction
• E.g.: uneven arbitration
Challenge: NoC is distributed

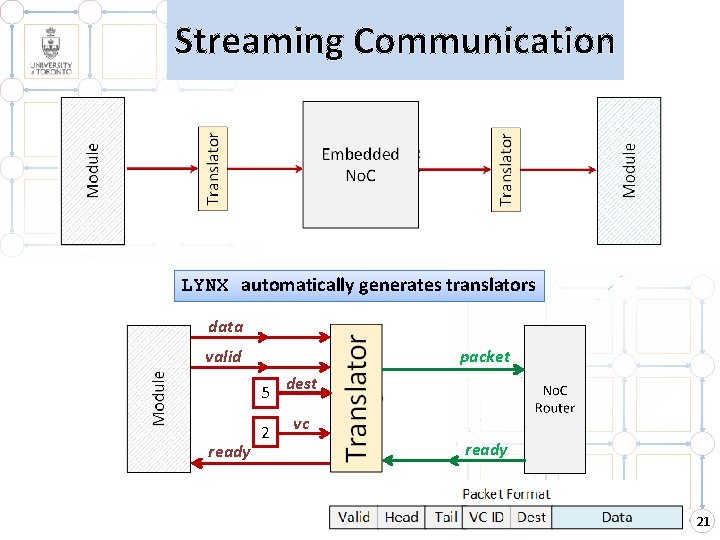

Streaming Communication
• Point-to-point
• LYNX automatically generates translators
• Figure signals: data, valid, ready, packet, dest, vc

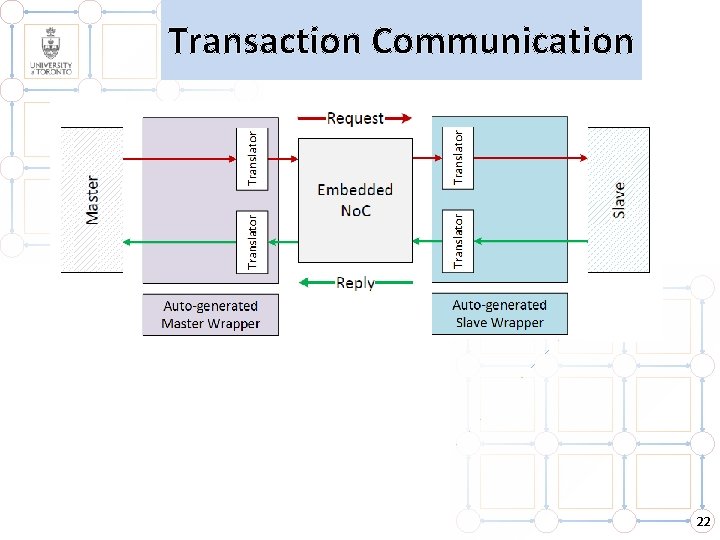

Transaction Communication

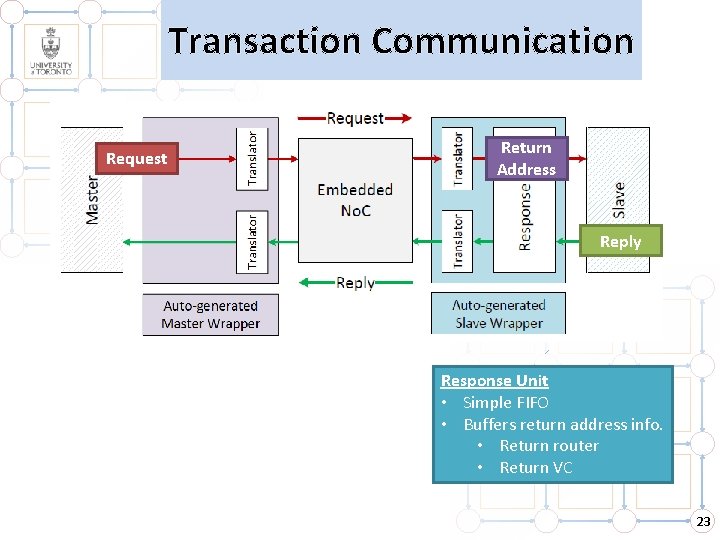

Transaction Communication
• A request carries its return address; the reply is routed back using it
Response Unit
• Simple FIFO
• Buffers return address info:
  – Return router
  – Return VC
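The response unit described above can be sketched as a FIFO of return addresses. This assumes the slave answers requests in the order it received them, so the oldest buffered address always belongs to the next reply; the class, method and field names are hypothetical.

```python
from collections import deque


class ResponseUnit:
    """FIFO of (return router, return VC) pairs kept at the slave side."""

    def __init__(self):
        self._fifo = deque()

    def on_request(self, return_router, return_vc):
        # Remember where the reply to this request must be sent.
        self._fifo.append((return_router, return_vc))

    def on_reply(self, data):
        # Assumption: the slave replies in request order.
        router, vc = self._fifo.popleft()
        return {"dest": router, "vc": vc, "data": data}


unit = ResponseUnit()
unit.on_request(return_router=5, return_vc=2)
unit.on_request(return_router=9, return_vc=0)
first = unit.on_reply("reply for router 5")
second = unit.on_reply("reply for router 9")
```

Each reply leaves the unit already addressed to the master that issued the matching request.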

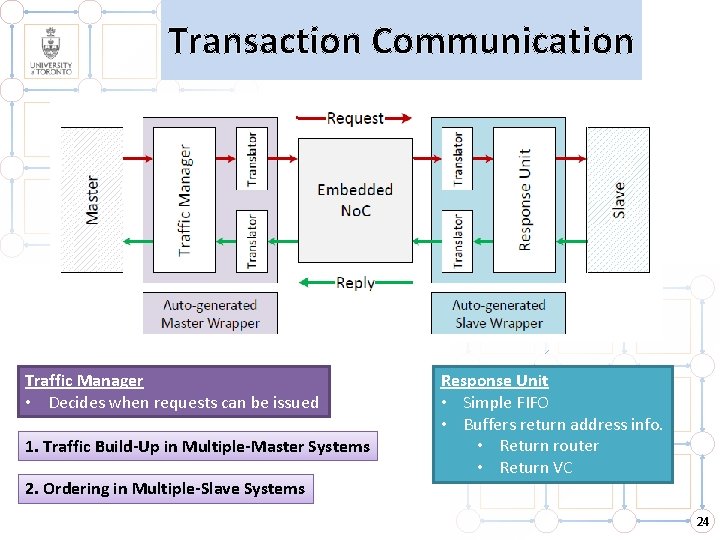

Transaction Communication
Traffic Manager
• Decides when requests can be issued
  1. Traffic build-up in multiple-master systems
  2. Ordering in multiple-slave systems
Response Unit
• Simple FIFO
• Buffers return address info:
  – Return router
  – Return VC

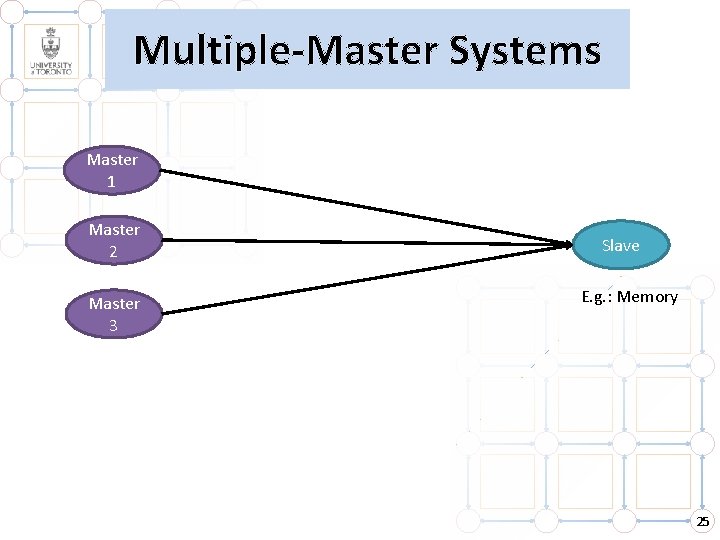

Multiple-Master Systems
• Masters 1, 2 and 3 send requests to a single slave (e.g. memory)

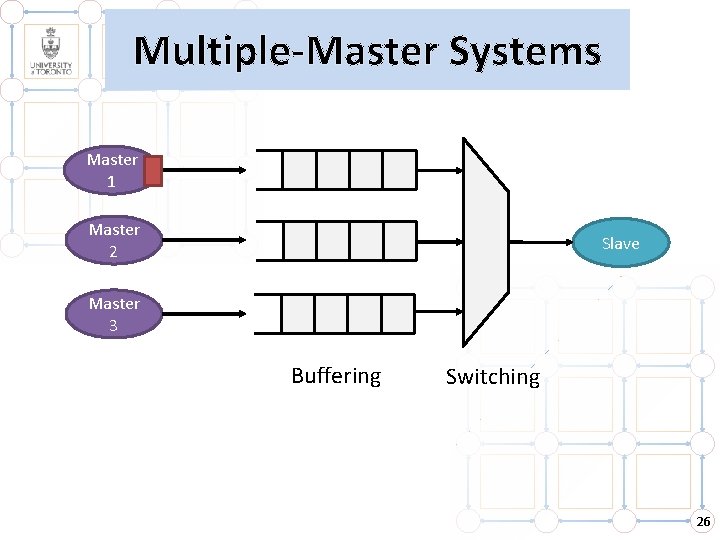

Multiple-Master Systems
• The interconnect between masters 1, 2, 3 and the slave provides buffering and switching

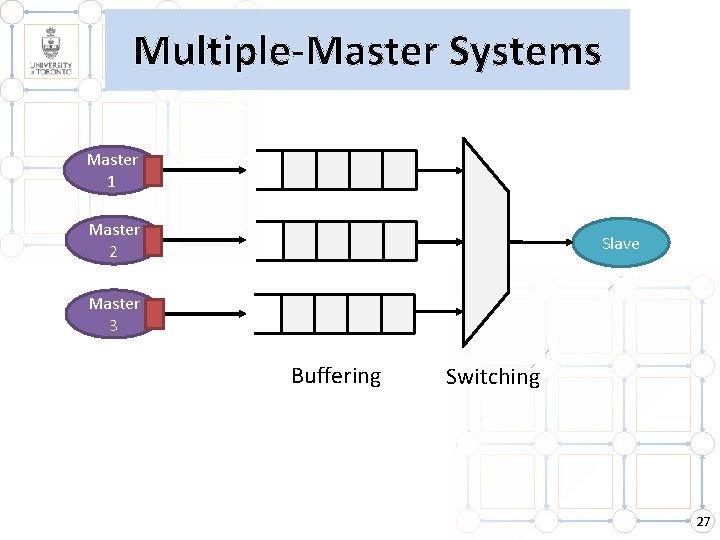

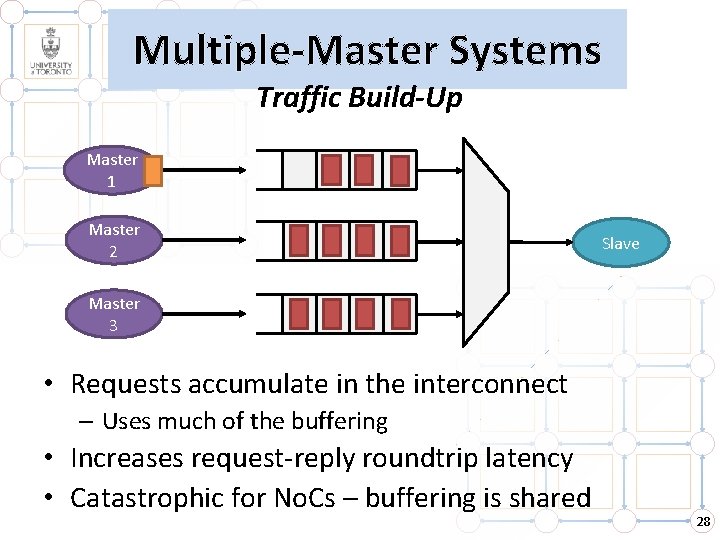

Multiple-Master Systems: Traffic Build-Up
• Requests accumulate in the interconnect
  – Uses much of the buffering
• Increases request-reply roundtrip latency
• Catastrophic for NoCs
  – Buffering is shared

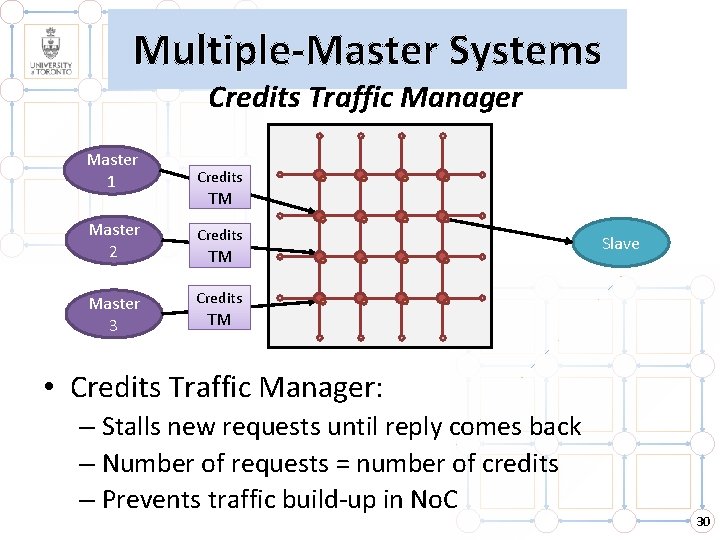

Multiple-Master Systems: Credits Traffic Manager
• Each master (1, 2, 3) has its own credits traffic manager (TM)
• Credits Traffic Manager:
  – Stalls new requests until a reply comes back
  – Number of outstanding requests = number of credits
  – Prevents traffic build-up in NoC

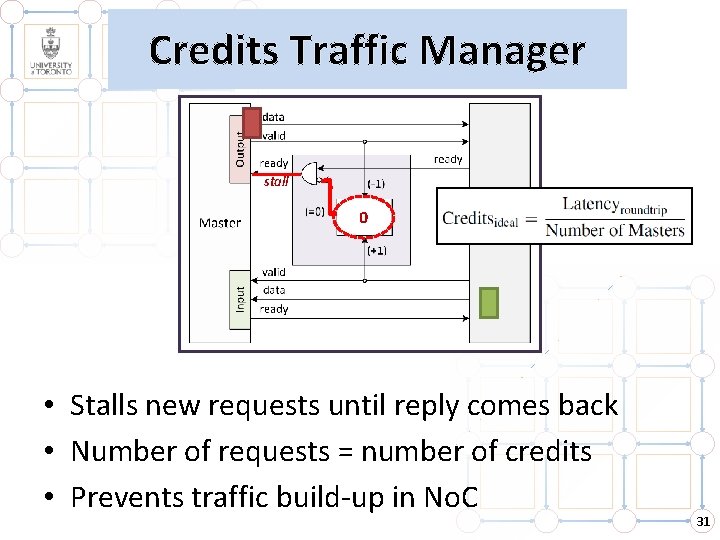

Credits Traffic Manager
• Example with 3 credits: the counter goes 3, 2, 1, 0 as requests are issued; at 0 the TM stalls
• Stalls new requests until a reply comes back
• Number of outstanding requests = number of credits
• Prevents traffic build-up in NoC
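The credit counter on this slide can be sketched in a few lines. This is an illustrative model rather than the generated hardware; the class and method names are assumptions.

```python
class CreditsTrafficManager:
    """Allows at most `credits` outstanding requests from one master."""

    def __init__(self, credits):
        self.max_credits = credits
        self.credits = credits

    def issue(self):
        """Try to issue a request; return False if the master must stall."""
        if self.credits == 0:
            return False
        self.credits -= 1
        return True

    def on_reply(self):
        """A reply came back, so one credit is returned."""
        if self.credits < self.max_credits:
            self.credits += 1


tm = CreditsTrafficManager(credits=3)
attempts = [tm.issue() for _ in range(4)]   # the fourth attempt stalls
tm.on_reply()                               # one reply returns a credit
after_reply = tm.issue()
```

Because a master can never have more requests in flight than it has credits, the number of credits bounds how much shared NoC buffering its requests can occupy.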

![Latency in clock cycles vs. number of masters, with and without credits traffic manager](http://slidetodoc.com/presentation_image_h/c7d647bd34c996955b6bce3dfb7d4bd3/image-32.jpg)

Latency
• Plot: roundtrip latency [clock cycles] vs. number of masters (1 to 16), with and without the credits traffic manager
• Credits TM improves roundtrip latency (drastically)
• … and reduces NoC contention

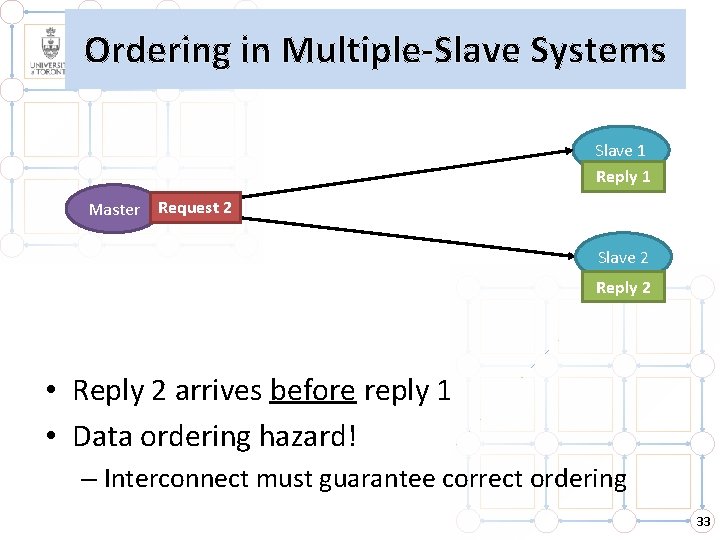

Ordering in Multiple-Slave Systems
• Master sends request 1 to slave 1 and request 2 to slave 2
• Reply 2 arrives before reply 1
• Data ordering hazard!
  – Interconnect must guarantee correct ordering

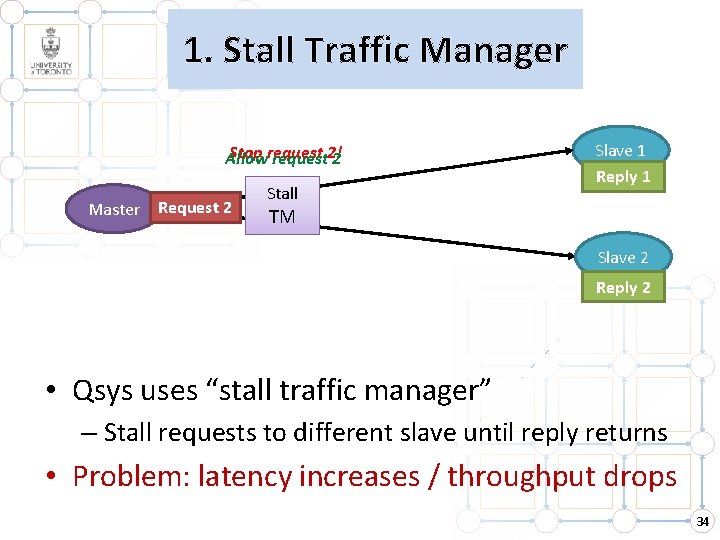

1. Stall Traffic Manager
• Figure: the TM stops request 2 (to slave 2) until reply 1 returns from slave 1, then allows request 2
• Qsys uses "stall traffic manager"
  – Stall requests to a different slave until the reply returns
• Problem: latency increases / throughput drops
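The stalling rule can be modeled as follows. The slides do not describe how Qsys implements it, so this is only a sketch of the behavior stated in the bullet, assuming replies from a single slave return in order; all names are hypothetical.

```python
class StallTrafficManager:
    """Lets a master talk to one slave at a time while replies are pending."""

    def __init__(self):
        self.active_slave = None
        self.outstanding = 0

    def can_issue(self, slave):
        # Requests to the same slave may continue; a different slave must
        # wait until every outstanding reply has returned.
        return self.outstanding == 0 or slave == self.active_slave

    def issue(self, slave):
        if not self.can_issue(slave):
            return False                 # stall
        self.active_slave = slave
        self.outstanding += 1
        return True

    def on_reply(self):
        if self.outstanding == 0:
            raise RuntimeError("reply without an outstanding request")
        self.outstanding -= 1


tm = StallTrafficManager()
req1 = tm.issue("slave 1")        # allowed
req2_early = tm.issue("slave 2")  # stalled: reply 1 has not returned
tm.on_reply()                     # reply 1 returns
req2_late = tm.issue("slave 2")   # now allowed
```

Ordering is guaranteed because replies from two slaves are never in flight together, which is also why throughput drops when a master alternates between slaves.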

2. VC Traffic Manager
• Buffer replies in NoC
• Figure: request 1 and reply 1 (slave 1) use VC 1; request 2 and reply 2 (slave 2) use VC 2; the VC TM allows the replies through in order
• Leverage VCs and reorder at master
  – Increase throughput / reduce latency
  – Use VC buffers in NoC: no added area
• Throughput limited by number of VCs

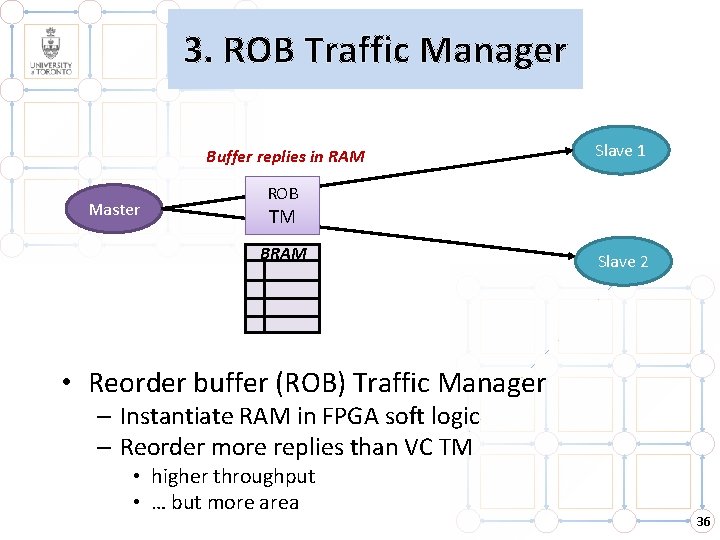

3. ROB Traffic Manager
• Buffer replies in RAM
• Reorder buffer (ROB) Traffic Manager
  – Instantiate RAM in FPGA soft logic
  – Reorder more replies than VC TM
    • Higher throughput
    • … but more area
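A reorder buffer of this kind can be sketched as a tag-indexed store that releases replies strictly in request order. The slides do not describe the tagging scheme, so sequence-number tags, the `depth` parameter and all names here are assumptions.

```python
class ReorderBuffer:
    """Holds out-of-order replies and releases them in request order."""

    def __init__(self, depth):
        self.depth = depth        # number of entries in the RAM
        self.next_tag = 0         # tag given to the next request
        self.next_release = 0     # tag of the next reply the master expects
        self.pending = {}         # tag -> reply data that arrived early

    def issue(self):
        """Return a tag for a new request, or None if the buffer is full."""
        if self.next_tag - self.next_release >= self.depth:
            return None
        tag = self.next_tag
        self.next_tag += 1
        return tag

    def on_reply(self, tag, data):
        """Store a reply and return every reply that is now in order."""
        self.pending[tag] = data
        released = []
        while self.next_release in self.pending:
            released.append(self.pending.pop(self.next_release))
            self.next_release += 1
        return released


rob = ReorderBuffer(depth=4)
tag1 = rob.issue()                          # request 1, to slave 1
tag2 = rob.issue()                          # request 2, to slave 2
early = rob.on_reply(tag2, "reply 2")       # arrives first, must wait
in_order = rob.on_reply(tag1, "reply 1")    # unblocks both replies
```

The buffer depth bounds how many requests may be outstanding, which is where the extra area relative to the VC approach comes from.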

![Three Traffic Managers for Ordering: throughput vs. number of consecutive requests to each slave](http://slidetodoc.com/presentation_image_h/c7d647bd34c996955b6bce3dfb7d4bd3/image-37.jpg)

Three Traffic Managers for Ordering: Performance
• Plot: throughput [requests/cycle] vs. number of consecutive requests to each slave (0 to 20); legend includes LYNX NoC (ROB) and LYNX NoC (Stall)
• Depending on traffic: VC or ROB TM

![Three Traffic Managers for Ordering: throughput vs. number of consecutive requests to each slave, with Qsys](http://slidetodoc.com/presentation_image_h/c7d647bd34c996955b6bce3dfb7d4bd3/image-38.jpg)

Three Traffic Managers for Ordering: Performance
• Plot: throughput [requests/cycle] vs. number of consecutive requests to each slave (0 to 20); legend includes LYNX NoC (ROB), LYNX NoC (Stall) and Qsys Bus (Stall)
• Depending on traffic: VC or ROB TM
• Performance (much) better than Qsys

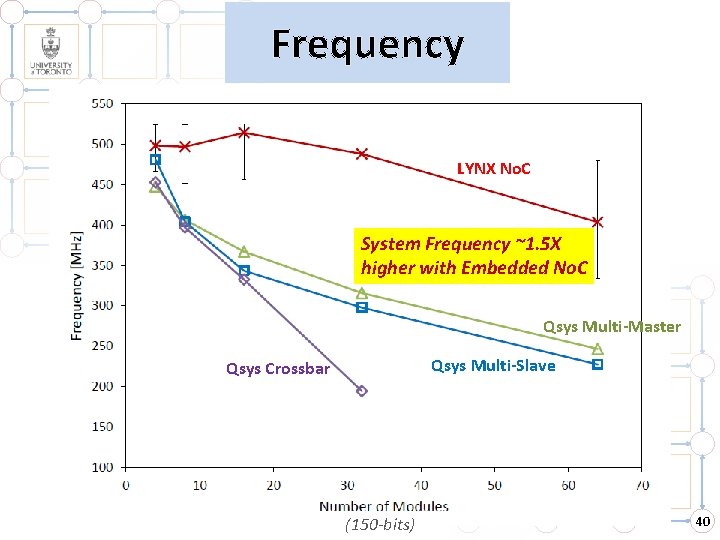

Frequency
• Plot: system frequency for LYNX NoC, Qsys multi-master, Qsys multi-slave and Qsys crossbar (150 bits)
• System frequency ~1.5x higher with embedded NoC

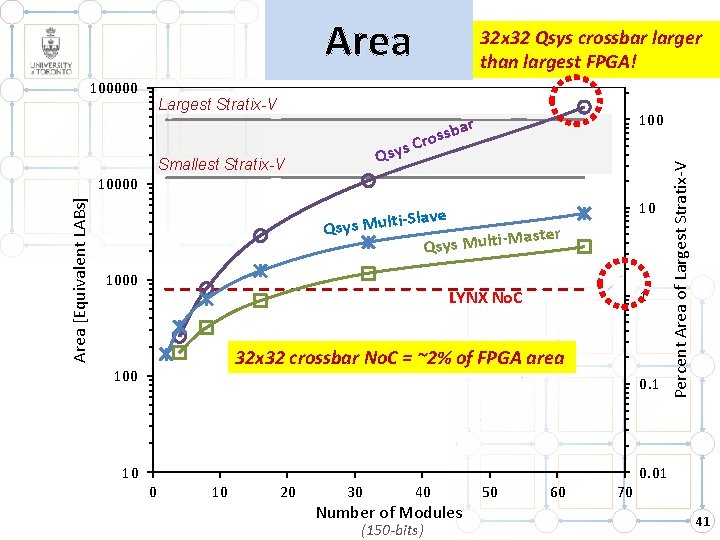

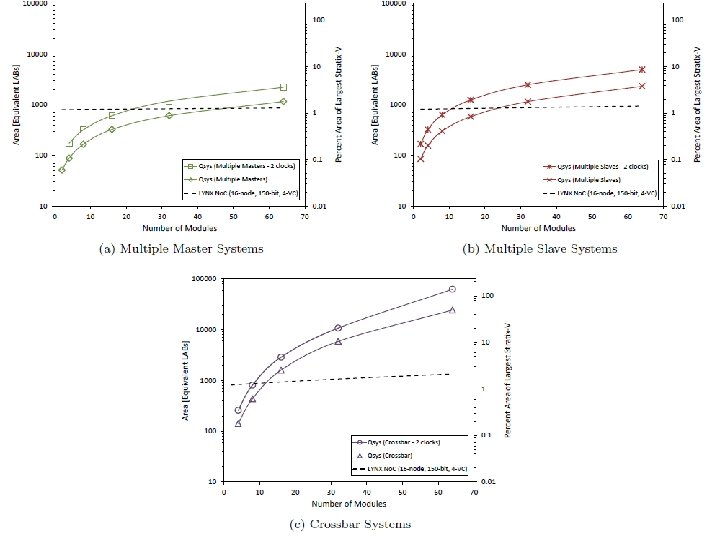

Area
• Plot: area [equivalent LABs] and percent area of the largest Stratix-V vs. number of modules (150 bits, 0 to 70), for LYNX NoC, Qsys multi-master, Qsys multi-slave and Qsys crossbar; the smallest and largest Stratix-V are marked
• NoC = ~2% of FPGA area
• 32 x 32 Qsys crossbar larger than largest FPGA!

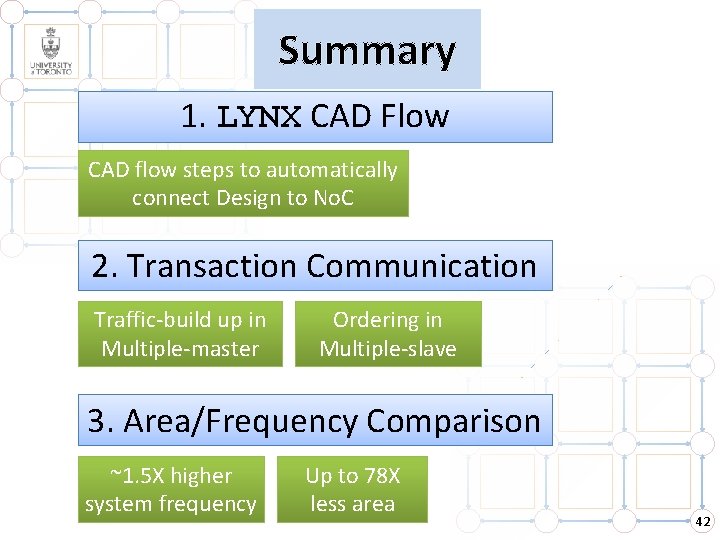

Summary
1. LYNX CAD Flow: CAD flow steps to automatically connect a design to the NoC
2. Transaction Communication: traffic build-up in multiple-master systems; ordering in multiple-slave systems
3. Area/Frequency Comparison: ~1.5x higher system frequency; up to 78x less area

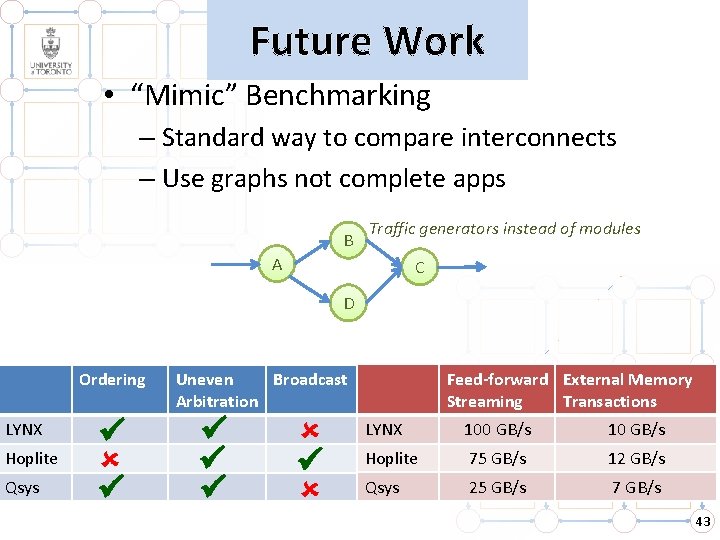

Future Work
• "Mimic" benchmarking
  – Standard way to compare interconnects
  – Use graphs, not complete apps: traffic generators instead of modules (A, B, C, D)
• Features compared across LYNX, Hoplite and Qsys: streaming, transactions, uneven arbitration, broadcast, ordering
• Benchmark table:

| Interconnect | Feed-forward | External Memory |
|---|---|---|
| LYNX | 100 GB/s | 10 GB/s |
| Hoplite | 75 GB/s | 12 GB/s |
| Qsys | 25 GB/s | 7 GB/s |

Thank You!

![Three Traffic Managers for Ordering: area vs. number of credits](http://slidetodoc.com/presentation_image_h/c7d647bd34c996955b6bce3dfb7d4bd3/image-45.jpg)

Three Traffic Managers for Ordering: Area
• Plot: area [equivalent LABs] vs. number of credits for the ROB TM, VC TM and Stall TM
• ROB TM has twice the area of the VC/Stall TMs

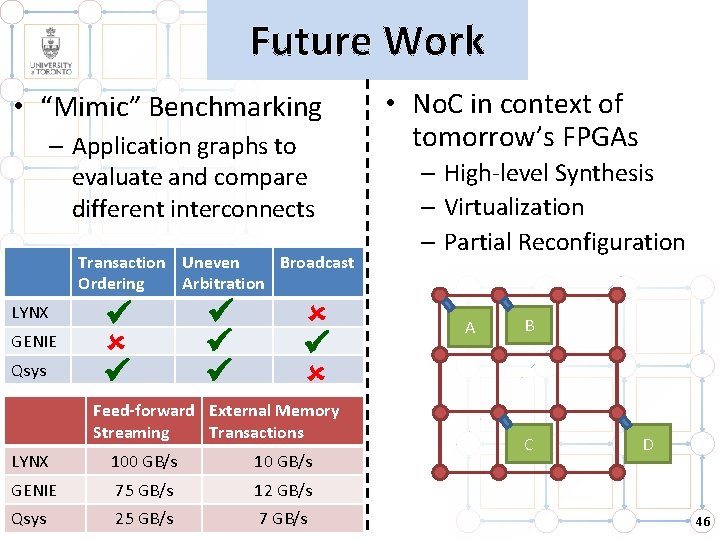

Future Work
• "Mimic" benchmarking
  – Application graphs (modules A, B, C, D) to evaluate and compare different interconnects
• Features compared across LYNX, GENIE and Qsys: streaming, transactions, uneven arbitration, broadcast, ordering
• NoC in context of tomorrow's FPGAs
  – High-level synthesis
  – Virtualization
  – Partial reconfiguration
• Benchmark table:

| Interconnect | Feed-forward | External Memory |
|---|---|---|
| LYNX | 100 GB/s | 10 GB/s |
| GENIE | 75 GB/s | 12 GB/s |
| Qsys | 25 GB/s | 7 GB/s |

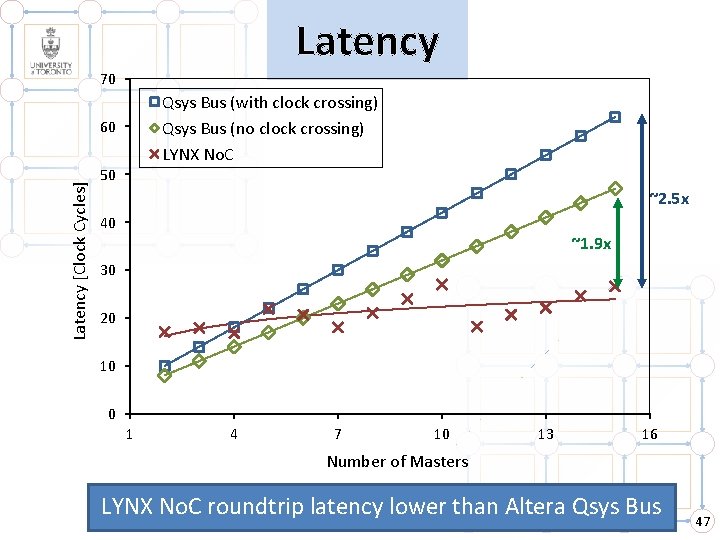

Latency
• Plot: roundtrip latency [clock cycles] vs. number of masters (1 to 16) for Qsys bus (with clock crossing), Qsys bus (no clock crossing) and LYNX NoC; annotated gaps of ~2.5x and ~1.9x
• LYNX NoC roundtrip latency lower than Altera Qsys bus
