Lowlevel Thinking in Highlevel Shading Languages Emil Persson

![Rules ● D 3 D 10+ generally follows IEEE-754 -2008 [1] ● Exceptions include Rules ● D 3 D 10+ generally follows IEEE-754 -2008 [1] ● Exceptions include](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-15.jpg)

![● ● ● mul(v, m) Built-in functions ● v. x * m[0] + v. ● ● ● mul(v, m) Built-in functions ● v. x * m[0] + v.](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-29.jpg)

![Additional recommendations ● Communicate intention with [branch], [flatten], [loop], [unroll] ● [branch] turns “divergent Additional recommendations ● Communicate intention with [branch], [flatten], [loop], [unroll] ● [branch] turns “divergent](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-43.jpg)

![References [1] IEEE-754 [2] Floating-Point Rules [3] Fabian Giesen: Finish your derivations, please References [1] IEEE-754 [2] Floating-Point Rules [3] Fabian Giesen: Finish your derivations, please](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-46.jpg)

- Slides: 47

Low-level Thinking in High-level Shading Languages Emil Persson Head of Research, Avalanche Studios

Problem formulation “Nowadays renowned industry luminaries include shader snippets in their GDC presentations where trivial transforms would have resulted in a faster shader”

Goal of this presentation “Show that low-level thinking is still relevant today”

Background ● In the good ol' days, when grandpa was young. . . ● Shaders were short ● ● SM 1: Max 8 instructions, SM 2: Max 64 instructions Shaders were written in assembly ● Already getting phased out in SM 2 days ● D 3 D opcodes mapped well to real HW ● Hand-optimizing shaders was a natural thing to do def c 0, 0. 3 f, 2. 5 f, 0, 0 texld sub mul r 0, t 0 r 0, c 0. x r 0, c 0. y ⇨ def c 0, -0. 75 f, 2. 5 f, 0, 0 texld mad r 0, t 0 r 0, c 0. y, c 0. x

Background ● Low-level shading languages are dead ● Unproductive way of writing shaders ● No assembly option in DX 10+ ● Nobody used it anyway ● Compilers and driver optimizers do a great job (sometimes. . . ) ● Hell, these days artists author shaders! ● Using visual shader editors ● With boxes and arrows ● Without counting cycles, or inspecting the asm ● Without even consulting technical documentation ● Argh, the kids these day! Back in my days we had. . . ● Consequently: ● Shader writers have lost touch with the HW

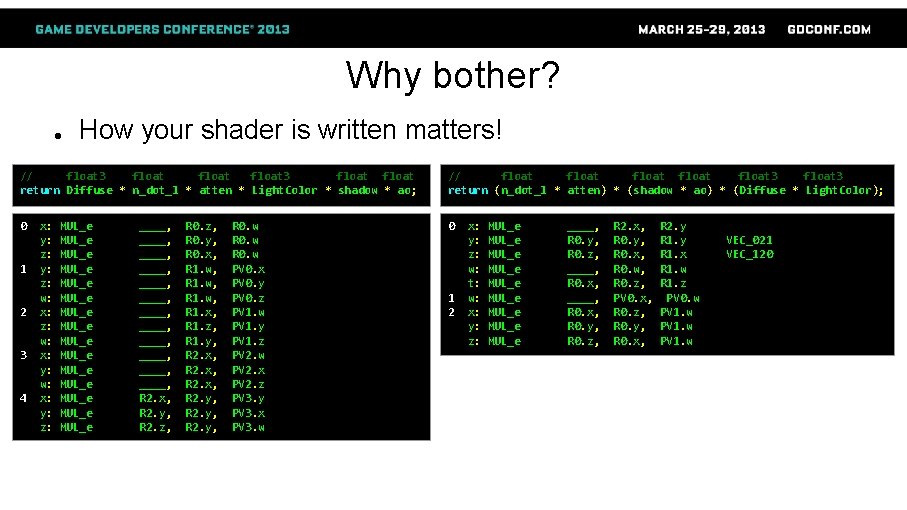

Why bother? ● How your shader is written matters! // float 3 float return Diffuse * n_dot_l * atten * Light. Color * shadow * ao; // float float 3 return (n_dot_l * atten) * (shadow * ao) * (Diffuse * Light. Color); 0 0 1 2 3 4 x: y: z: w: x: y: z: MUL_e MUL_e MUL_e MUL_e ____, ____, ____, R 2. x, R 2. y, R 2. z, R 0. z, R 0. y, R 0. x, R 1. w, R 1. x, R 1. z, R 1. y, R 2. x, R 2. y, R 0. w PV 0. x PV 0. y PV 0. z PV 1. w PV 1. y PV 1. z PV 2. w PV 2. x PV 2. z PV 3. y PV 3. x PV 3. w 1 2 x: y: z: w: t: w: x: y: z: MUL_e MUL_e MUL_e ____, R 0. y, R 0. z, ____, R 0. x, R 0. y, R 0. z, R 2. x, R 0. y, R 0. x, R 0. w, R 0. z, PV 0. x, R 0. z, R 0. y, R 0. x, R 2. y R 1. x R 1. w R 1. z PV 0. w PV 1. w VEC_021 VEC_120

Why bother? ● Better performance ● “We're not ALU bound. . . ” ● ● “We'll optimize at the end of the project …” ● ● ● Save power More punch once you optimize for TEX/BW/etc. More headroom for new features Pray that content doesn't lock you in. . . Consistency ● There is often a best way to do things ● Improve readability It's fun!

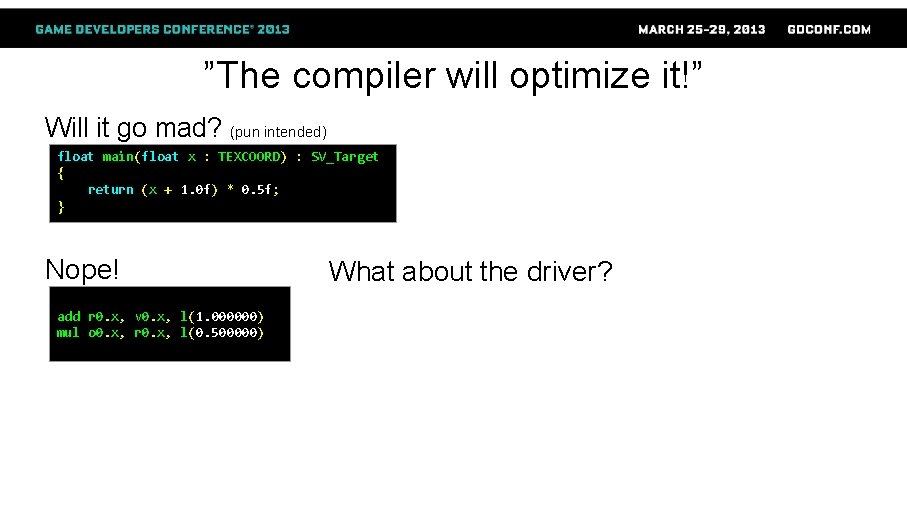

”The compiler will optimize it!”

”The compiler will optimize it!” ● Compilers are cunning! ● ● Smart enough to fool themselves! However: ● They can't read your mind ● They don't have the whole picture ● They work with limited data ● They can't break rules ● Well, mostly … (they can make up their own rules)

”The compiler will optimize it!” Will it go mad? (pun intended) float main(float x : TEXCOORD) : SV_Target { return (x + 1. 0 f) * 0. 5 f; }

”The compiler will optimize it!” Will it go mad? (pun intended) float main(float x : TEXCOORD) : SV_Target { return (x + 1. 0 f) * 0. 5 f; } Nope! add r 0. x, v 0. x, l(1. 000000) mul o 0. x, r 0. x, l(0. 500000) What about the driver?

”The compiler will optimize it!” Will it go mad? (pun intended) float main(float x : TEXCOORD) : SV_Target { return (x + 1. 0 f) * 0. 5 f; } Nope! add r 0. x, v 0. x, l(1. 000000) mul o 0. x, r 0. x, l(0. 500000) Nope! 00 ALU: ADDR(32) CNT(2) 0 y: ADD ____, 1 x: MUL_e R 0. x, 01 EXP_DONE: PIX 0, R 0. x___ R 0. x, 1. 0 f PV 0. y, 0. 5

Why not? ● The result might not be exactly the same ● May introduce INFs or Na. Ns ● Generally, the compiler is great at: ● ● Removing dead code ● Eliminating unused resources ● Folding constants ● Register assignment ● Code scheduling But generally does not: ● Change the meaning of the code ● Break dependencies ● Break rules

Therefore: Write the shader the way you want the hardware to run it! That means: Low-level thinking

![Rules D 3 D 10 generally follows IEEE754 2008 1 Exceptions include Rules ● D 3 D 10+ generally follows IEEE-754 -2008 [1] ● Exceptions include](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-15.jpg)

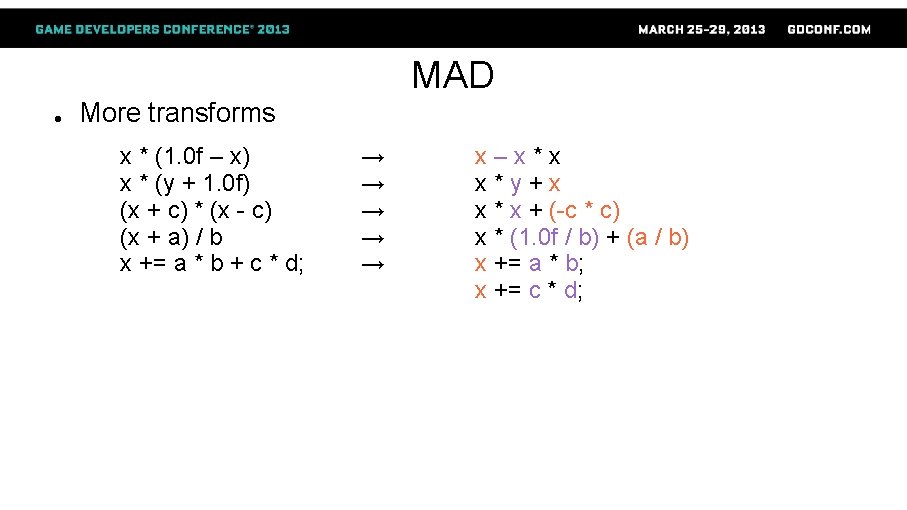

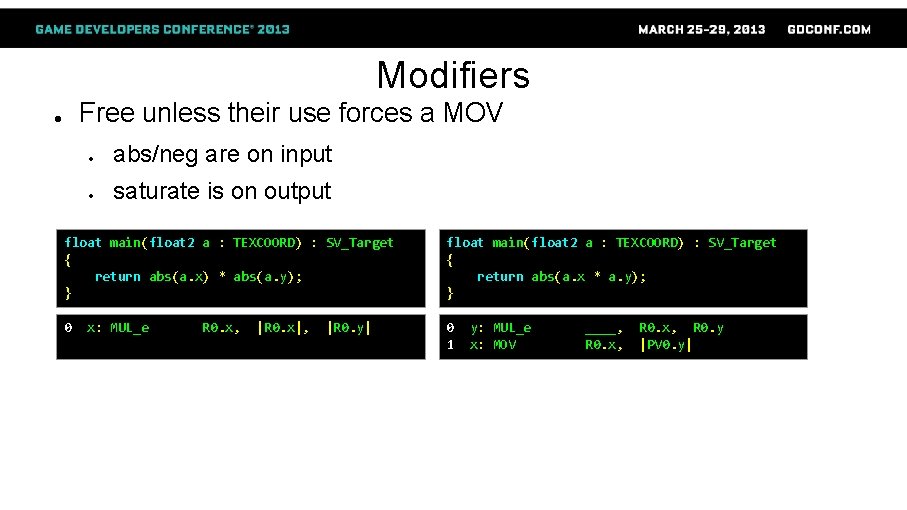

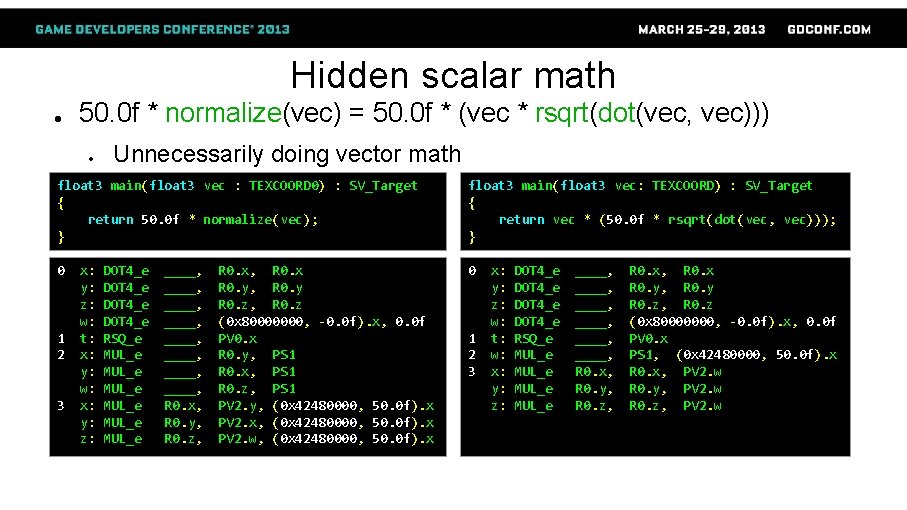

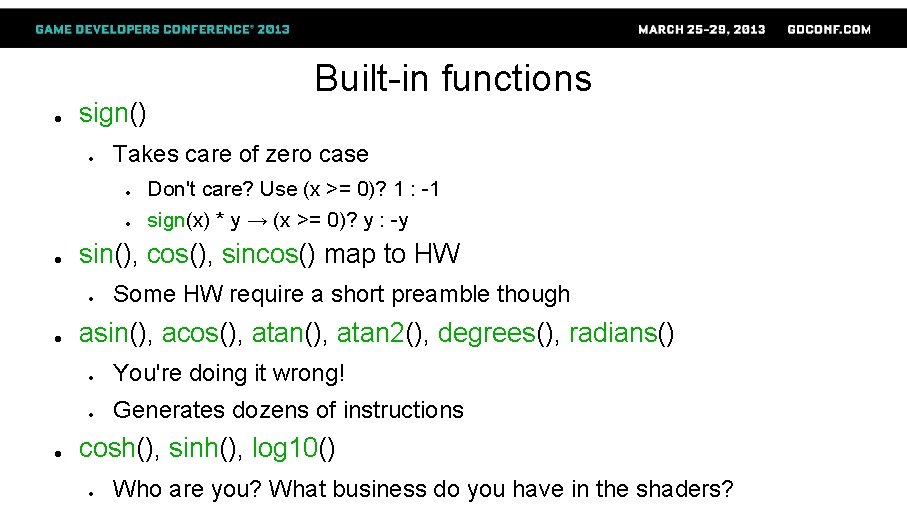

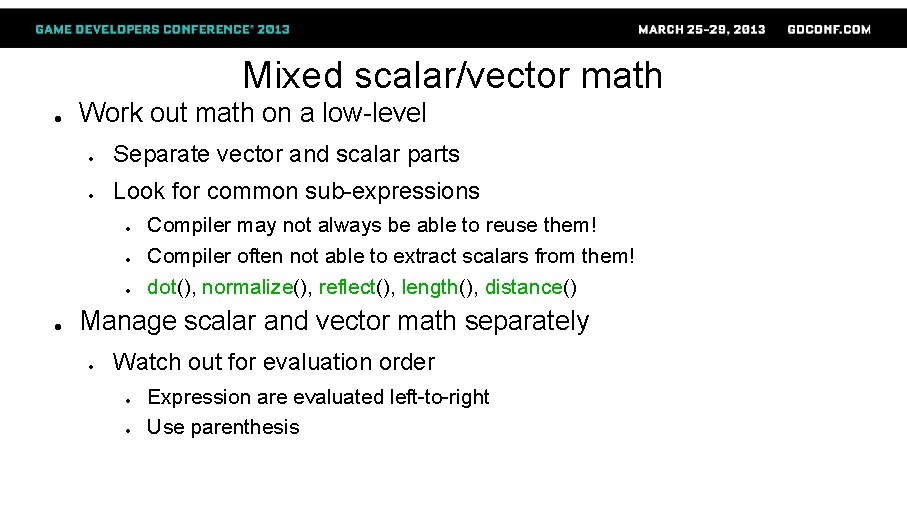

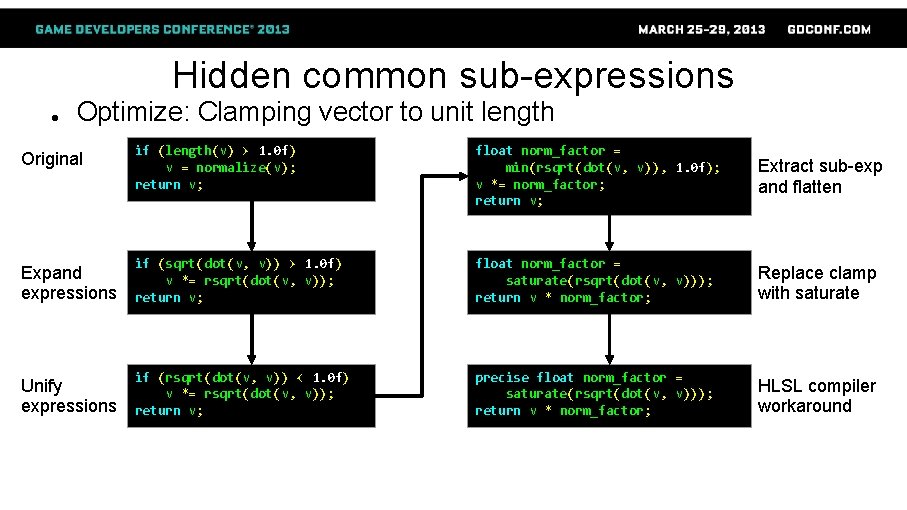

Rules ● D 3 D 10+ generally follows IEEE-754 -2008 [1] ● Exceptions include [2]: ● 1 ULP instead of 0. 5 ● Denorms flushed on math ops ● ● ● Except MOVs Min/max flush on input, but not necessarily on output HLSL compiler ignores: ● The possibility of Na. Ns or INFs ● ● ● e. g. x * 0 = 0, despite Na. N * 0 = Na. N Except with precise keyword or IEEE strictness enabled Beware: compiler may optimize away your isnan() and isfinite() calls!

Universal* facts about HW ● Multiply-add is one instruction – Add-multiply is two ● abs, negate and saturate are free ● Except when their use forces a MOV ● Scalar ops use fewer resources than vector ● Shader math involving only constants is crazy ● Not doing stuff is faster than doing stuff * For a limited set of known universes

MAD ● Any linear ramp → mad ● With a clamp → mad_sat ● ● ● If clamp is not to [0, 1] → mad_sat + mad Remapping a range == linear ramp MAD not always the most intuitive form ● MAD = x * slope + offset_at_zero ● Generate slope & offset from intuitive params (x – start) * slope (x – start) / (end – start) (x – mid_point) / range + 0. 5 f clamp(s 1 + (x-s 0)*(e 1 -s 1)/(e 0 -s 0), s 1, e 1) → → x * slope + (-start * slope) x * (1. 0 f / (end - start)) + (-start / (end - start)) x * (1. 0 f / range) + (0. 5 f - mid_point / range) saturate(x * (1. 0 f/(e 0 -s 0)) + (-s 0/(e 0 -s 0))) * (e 1 -s 1) + s 1

Division ● ● a / b typically implemented as a * rcp(b) ● D 3 D asm may use DIV instruction though ● Explicit rcp() sometimes generates better code Transforms a / (x + b) a / (x * b) → → a / (x * b + c) (x + a) / x (x * a + b) / x → → → rcp(x * (1. 0 f / a) + (b / a)) rcp(x) * (a / b) rcp(x * (b / a) + (c / a)) 1. 0 f + a * rcp(x) a + b * rcp(x) ● It's all junior high-school math! ● It's all about finishing your derivations! [3]

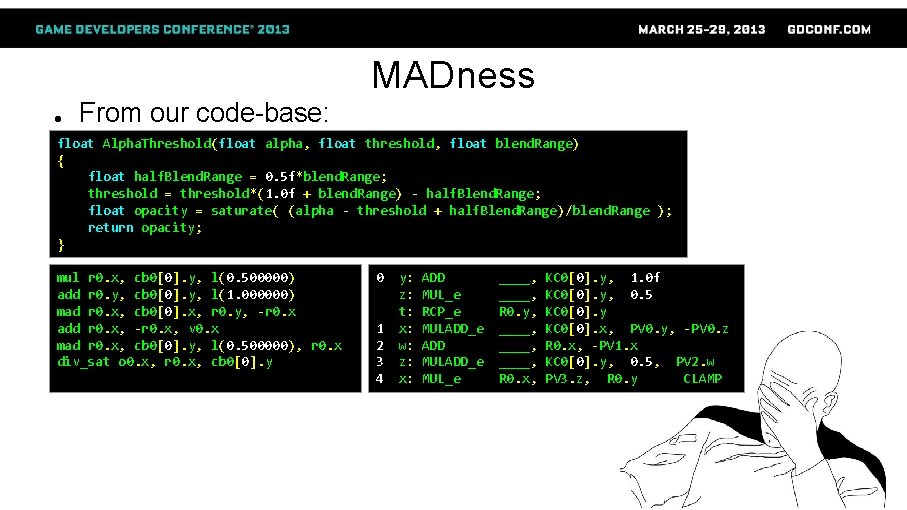

MADness ● From our code-base: float Alpha. Threshold(float alpha, float threshold, float blend. Range) { float half. Blend. Range = 0. 5 f*blend. Range; threshold = threshold*(1. 0 f + blend. Range) - half. Blend. Range; float opacity = saturate( (alpha - threshold + half. Blend. Range)/blend. Range ); return opacity; } mul r 0. x, cb 0[0]. y, l(0. 500000) add r 0. y, cb 0[0]. y, l(1. 000000) mad r 0. x, cb 0[0]. x, r 0. y, -r 0. x add r 0. x, -r 0. x, v 0. x mad r 0. x, cb 0[0]. y, l(0. 500000), r 0. x div_sat o 0. x, r 0. x, cb 0[0]. y 0 1 2 3 4 y: z: t: x: w: z: x: ADD MUL_e RCP_e MULADD_e ADD MULADD_e MUL_e ____, R 0. y, ____, R 0. x, KC 0[0]. y, 1. 0 f KC 0[0]. y, 0. 5 KC 0[0]. y KC 0[0]. x, PV 0. y, -PV 0. z R 0. x, -PV 1. x KC 0[0]. y, 0. 5, PV 2. w PV 3. z, R 0. y CLAMP

MADness ● Alpha. Threshold() reimagined! // scale = 1. 0 f / blend. Range // offset = 1. 0 f - (threshold/blend. Range + threshold) float Alpha. Threshold(float alpha, float scale, float offset) { return saturate( alpha * scale + offset ); } mad_sat o 0. x, v 0. x, cb 0[0]. y 0 x: MULADD_e R 0. x, KC 0[0]. y CLAMP

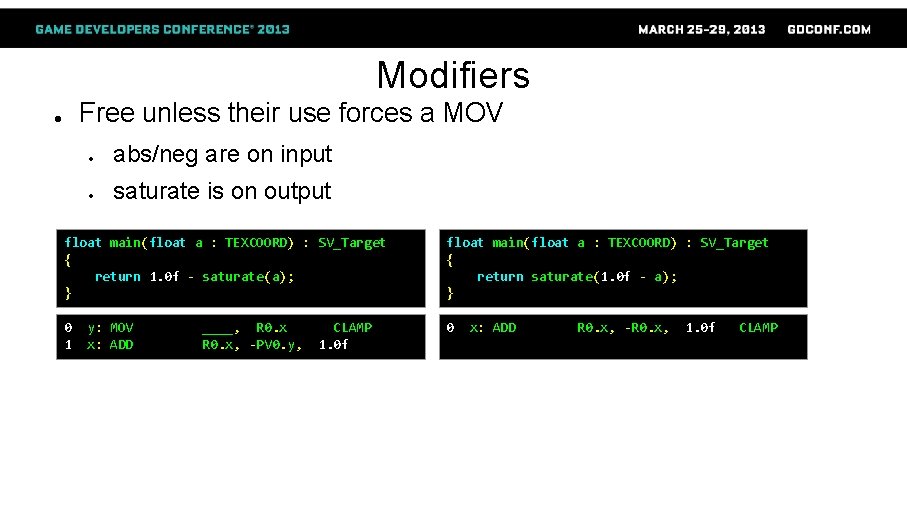

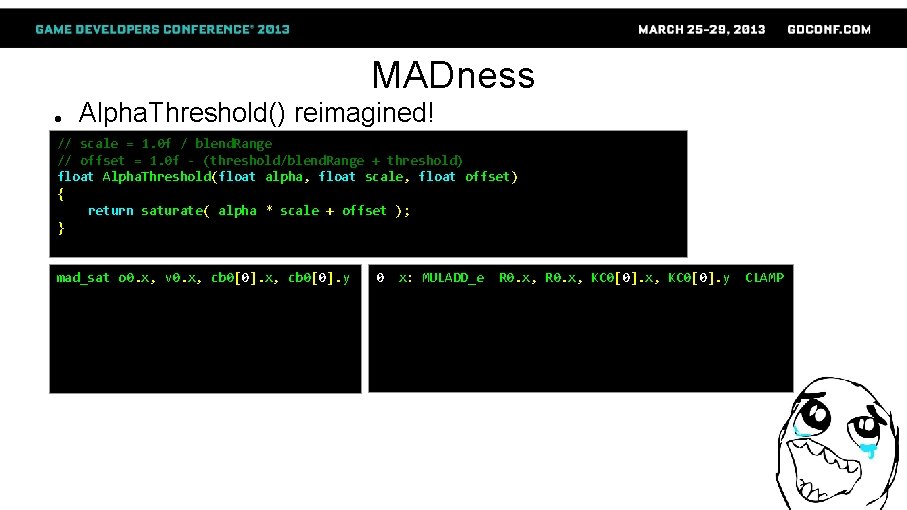

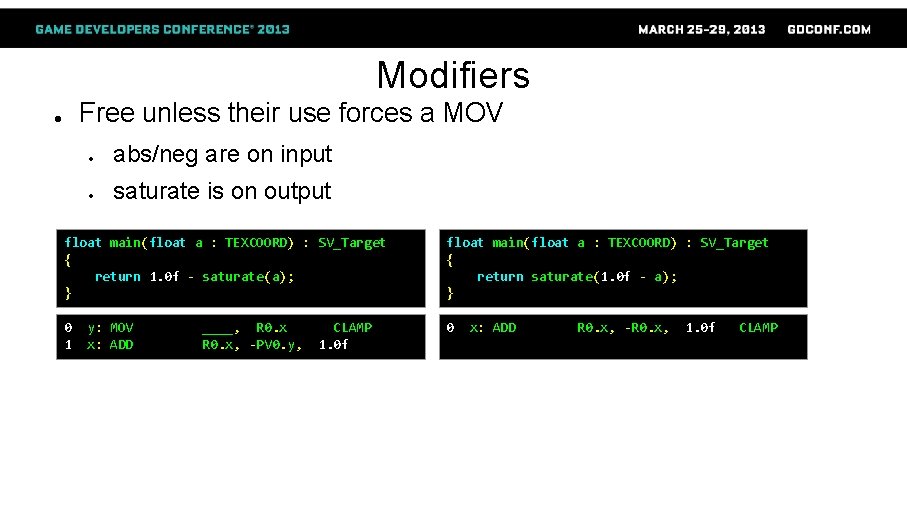

Modifiers Free unless their use forces a MOV ● ● abs/neg are on input ● saturate is on output float main(float 2 a : TEXCOORD) : SV_Target { return abs(a. x) * abs(a. y); } float main(float 2 a : TEXCOORD) : SV_Target { return abs(a. x * a. y); } 0 0 1 x: MUL_e R 0. x, |R 0. x|, |R 0. y| y: MUL_e x: MOV ____, R 0. x, R 0. y |PV 0. y|

Modifiers Free unless their use forces a MOV ● ● abs/neg are on input ● saturate is on output float main(float 2 a : TEXCOORD) : SV_Target { return -a. x * a. y; } float main(float 2 a : TEXCOORD) : SV_Target { return -(a. x * a. y); } 0 0 1 x: MUL_e R 0. x, -R 0. x, R 0. y y: MUL_e x: MOV ____, R 0. x, -PV 0. y R 0. y

Modifiers Free unless their use forces a MOV ● ● abs/neg are on input ● saturate is on output float main(float a : TEXCOORD) : SV_Target { return 1. 0 f - saturate(a); } float main(float a : TEXCOORD) : SV_Target { return saturate(1. 0 f - a); } 0 1 0 y: MOV x: ADD ____, R 0. x, -PV 0. y, CLAMP 1. 0 f x: ADD R 0. x, -R 0. x, 1. 0 f CLAMP

Modifiers ● saturate() is free, min() & max() are not ● Use saturate(x) even when max(x, 0. 0 f) or min(x, 1. 0 f) is sufficient ● ● Unless (x > 1. 0 f) or (x < 0. 0 f) respectively can happen and matters Unfortunately, HLSL compiler sometimes does the reverse … ● ● saturate(dot(a, a)) → “Yay! dot(a, a) is always positive” → min(dot(a, a), 1. 0 f) Workarounds: ● ● Obfuscate actual ranges from compiler ● e. g. move literal values to constants Use precise keyword ● Enforces IEEE strictness ● Be prepared to work around the workaround and triple-check results ● The mad(x, slope, offset) function can reinstate lost MADs

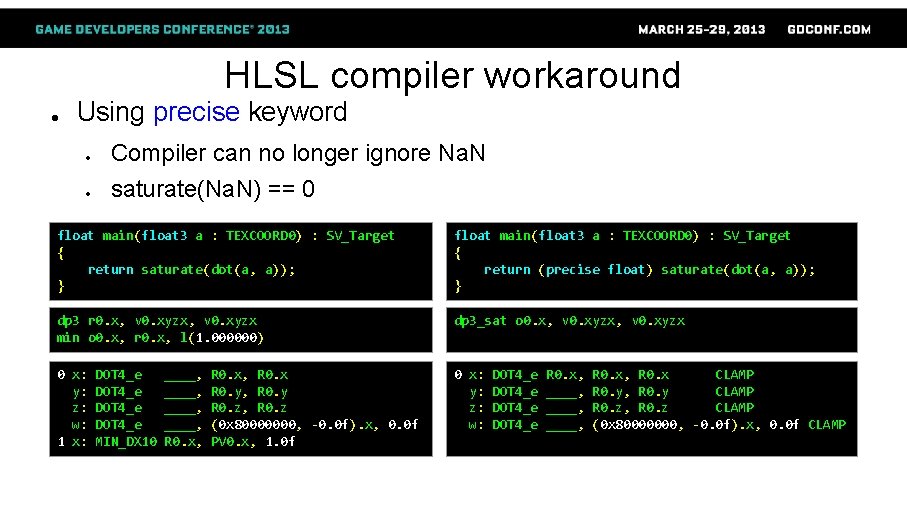

HLSL compiler workaround ● Using precise keyword ● Compiler can no longer ignore Na. N ● saturate(Na. N) == 0 float main(float 3 a : TEXCOORD 0) : SV_Target { return saturate(dot(a, a)); } float main(float 3 a : TEXCOORD 0) : SV_Target { return (precise float) saturate(dot(a, a)); } dp 3 r 0. x, v 0. xyzx min o 0. x, r 0. x, l(1. 000000) dp 3_sat o 0. x, v 0. xyzx 0 x: y: z: w: 1 x: 0 x: y: z: w: DOT 4_e MIN_DX 10 ____, R 0. x, R 0. x R 0. y, R 0. y R 0. z, R 0. z (0 x 80000000, -0. 0 f). x, 0. 0 f PV 0. x, 1. 0 f DOT 4_e R 0. x, ____, R 0. x, R 0. x CLAMP R 0. y, R 0. y CLAMP R 0. z, R 0. z CLAMP (0 x 80000000, -0. 0 f). x, 0. 0 f CLAMP

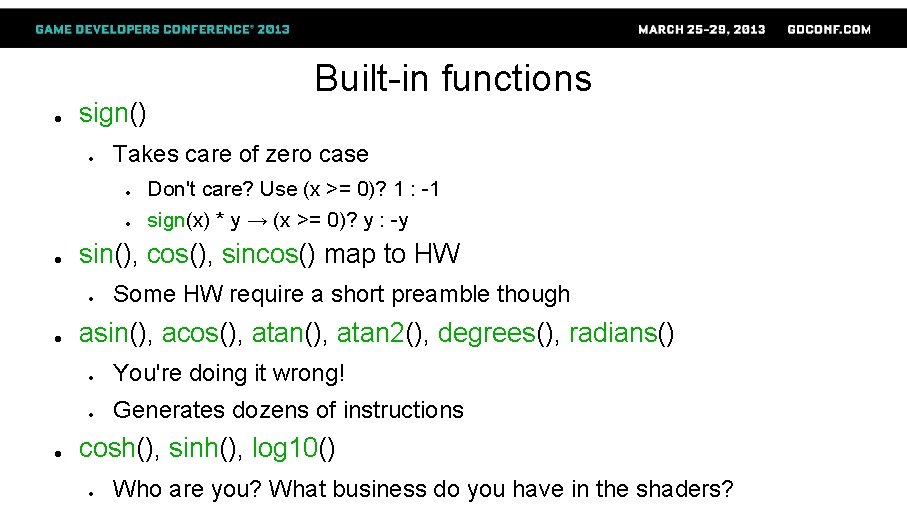

Built-in functions ● rcp(), rsqrt(), sqrt()* map directly to HW instructions ● Equivalent math may not be optimal … ● ● 1. 0 f / x tends to yield rcp(x) ● 1. 0 f / sqrt(x) yields rcp(sqrt(x)), NOT rsqrt(x)! exp 2() and log 2() maps to HW, exp() and log() do not ● ● Implemented as exp 2(x * 1. 442695 f) and log 2(x * 0. 693147 f) pow(x, y) implemented as exp 2(log 2(x) * y) ● Special cases for some literal values of y ● z * pow(x, y) = exp 2(log 2(x) * y + log 2(z)) ● ● Free multiply if log 2(z) can be precomputed e. g. specular_normalization * pow(n_dot_h, specular_power)

● sign() ● Takes care of zero case ● ● Don't care? Use (x >= 0)? 1 : -1 sign(x) * y → (x >= 0)? y : -y sin(), cos(), sincos() map to HW ● ● Built-in functions Some HW require a short preamble though asin(), acos(), atan 2(), degrees(), radians() ● You're doing it wrong! ● Generates dozens of instructions cosh(), sinh(), log 10() ● Who are you? What business do you have in the shaders?

![mulv m Builtin functions v x m0 v ● ● ● mul(v, m) Built-in functions ● v. x * m[0] + v.](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-29.jpg)

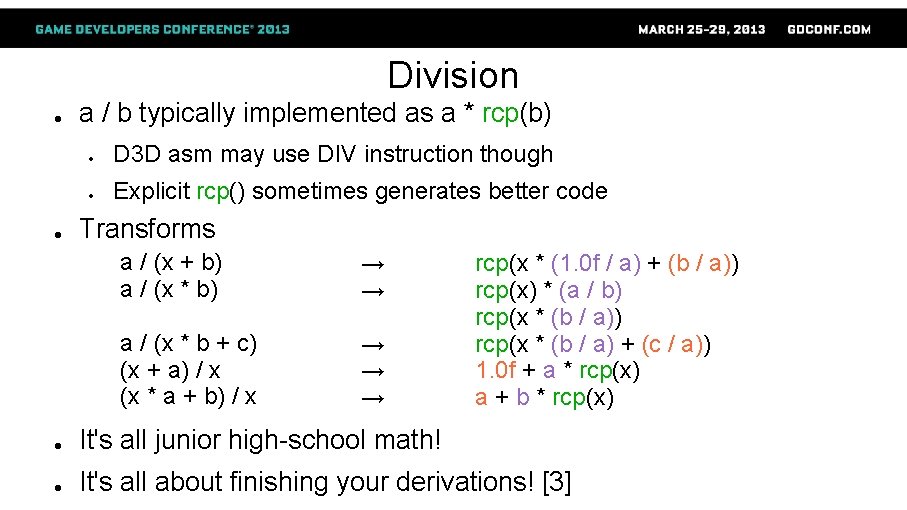

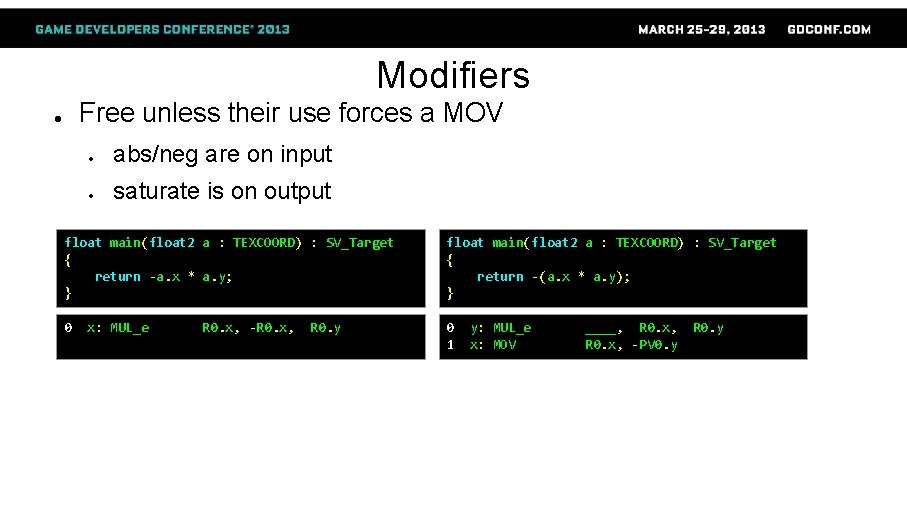

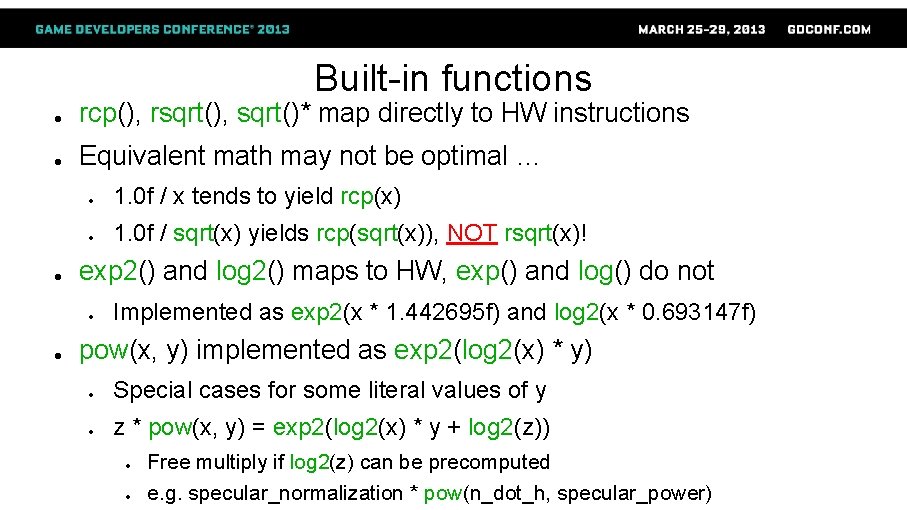

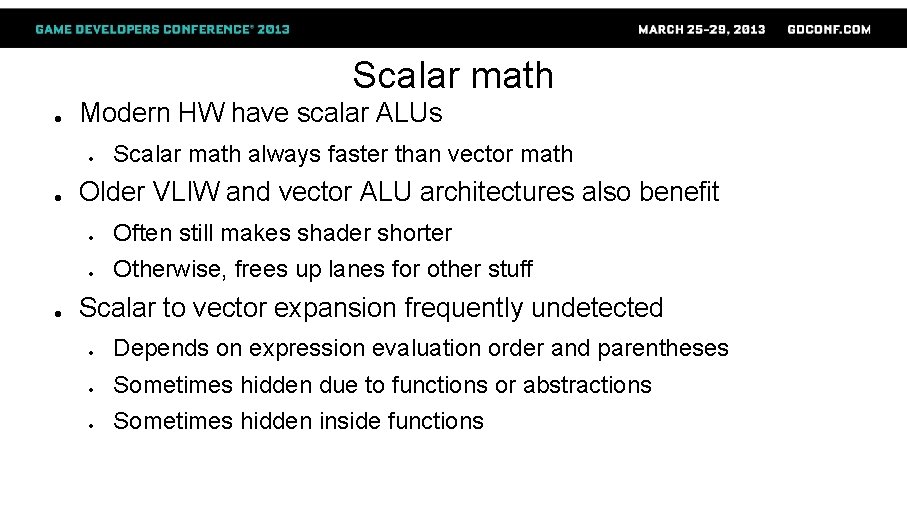

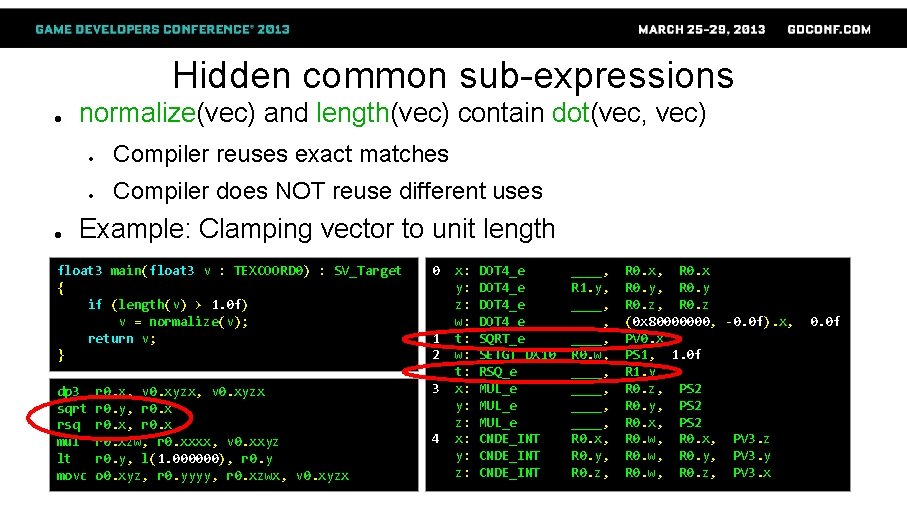

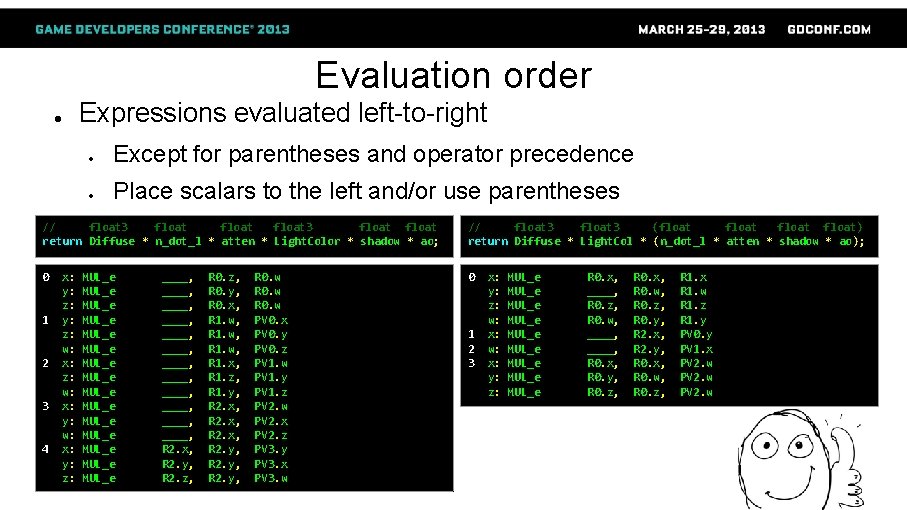

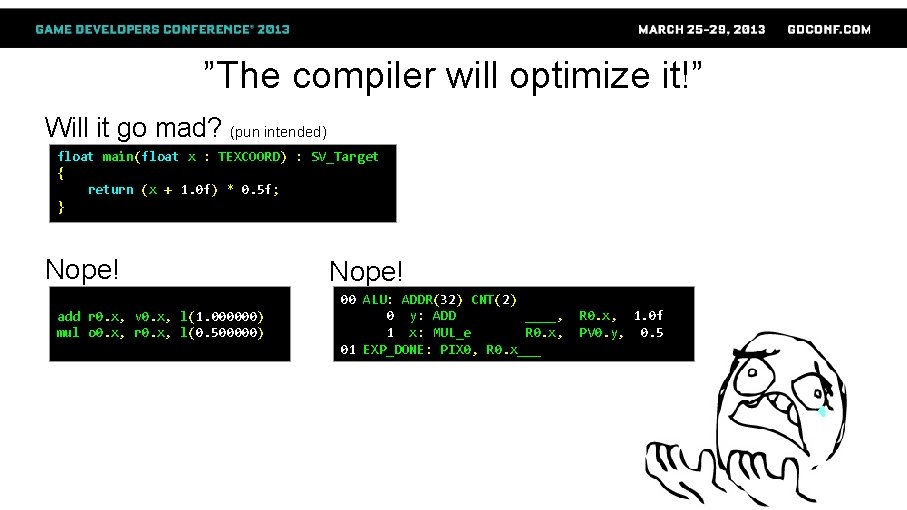

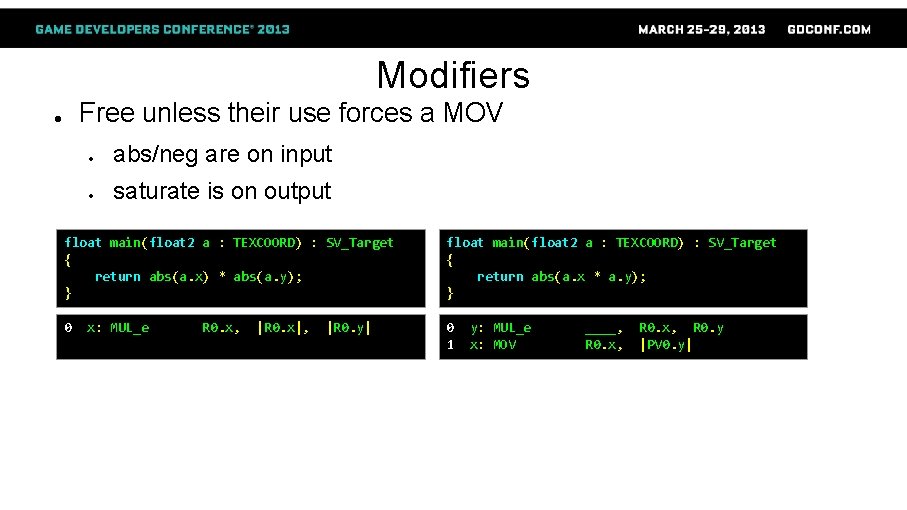

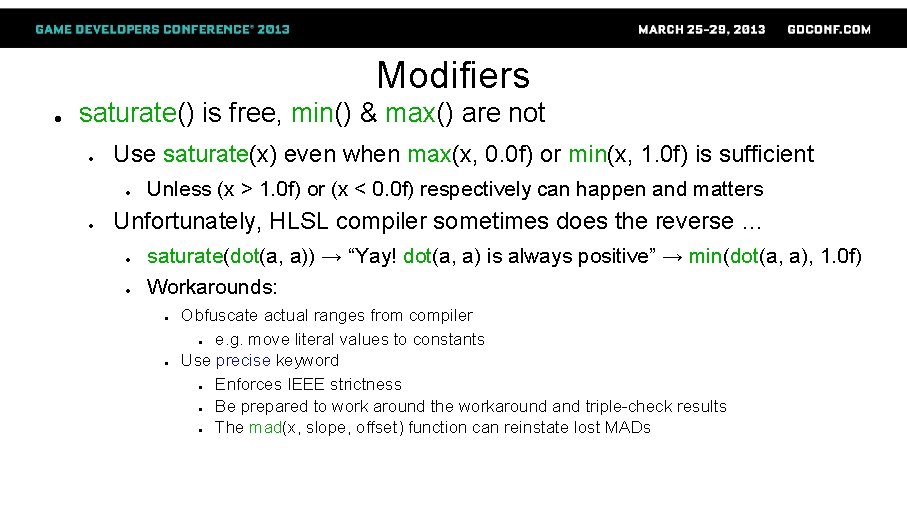

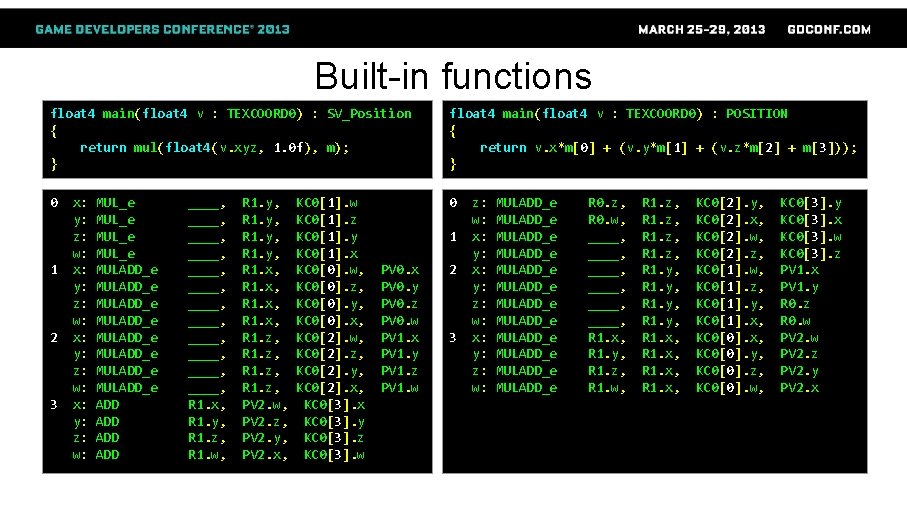

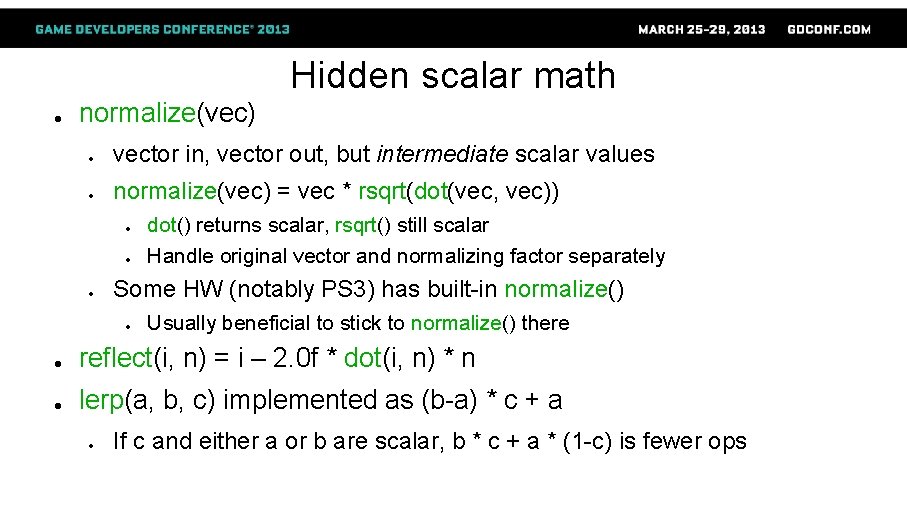

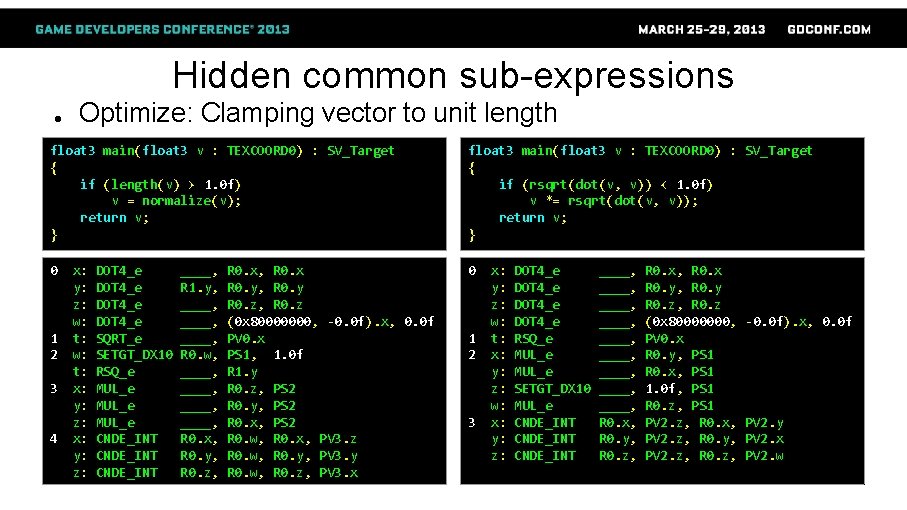

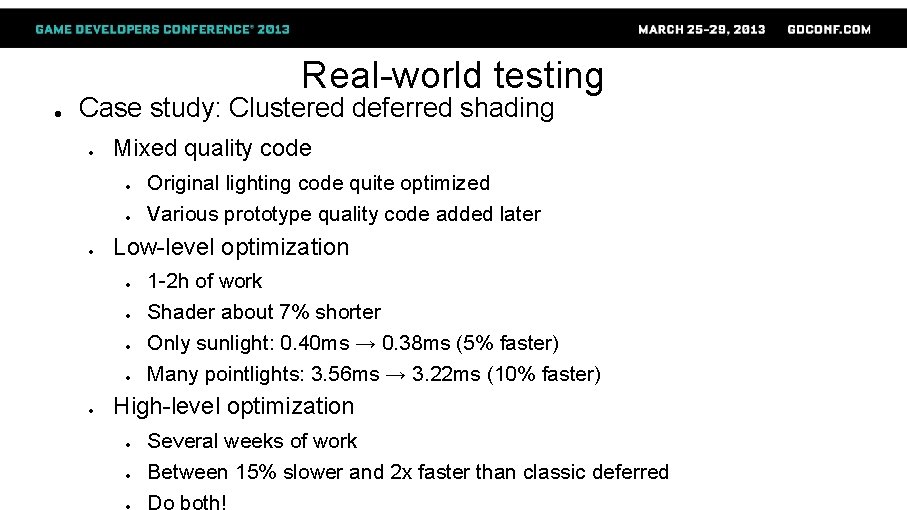

● ● ● mul(v, m) Built-in functions ● v. x * m[0] + v. y * m[1] + v. z * m[2] + v. w * m[3] ● MUL – MAD mul(float 4(v. xyz, 1), m) ● v. x * m[0] + v. y * m[1] + v. z * m[2] + m[3] ● MUL – MAD – ADD v. x * m[0] + (v. y * m[1] + (v. z * m[2] + m[3])) ● MAD – MAD

Built-in functions float 4 main(float 4 v : TEXCOORD 0) : SV_Position { return mul(float 4(v. xyz, 1. 0 f), m); } float 4 main(float 4 v : TEXCOORD 0) : POSITION { return v. x*m[0] + (v. y*m[1] + (v. z*m[2] + m[3])); } 0 0 1 2 3 x: y: z: w: MUL_e MULADD_e MULADD_e ADD ADD ____, ____, ____, R 1. x, R 1. y, R 1. z, R 1. w, R 1. y, R 1. x, R 1. z, PV 2. w, PV 2. z, PV 2. y, PV 2. x, KC 0[1]. w KC 0[1]. z KC 0[1]. y KC 0[1]. x KC 0[0]. w, KC 0[0]. z, KC 0[0]. y, KC 0[0]. x, KC 0[2]. w, KC 0[2]. z, KC 0[2]. y, KC 0[2]. x, KC 0[3]. x KC 0[3]. y KC 0[3]. z KC 0[3]. w 1 PV 0. x PV 0. y PV 0. z PV 0. w PV 1. x PV 1. y PV 1. z PV 1. w 2 3 z: w: x: y: z: w: MULADD_e MULADD_e MULADD_e R 0. z, R 0. w, ____, ____, R 1. x, R 1. y, R 1. z, R 1. w, R 1. z, R 1. y, R 1. x, KC 0[2]. y, KC 0[2]. x, KC 0[2]. w, KC 0[2]. z, KC 0[1]. w, KC 0[1]. z, KC 0[1]. y, KC 0[1]. x, KC 0[0]. y, KC 0[0]. z, KC 0[0]. w, KC 0[3]. y KC 0[3]. x KC 0[3]. w KC 0[3]. z PV 1. x PV 1. y R 0. z R 0. w PV 2. z PV 2. y PV 2. x

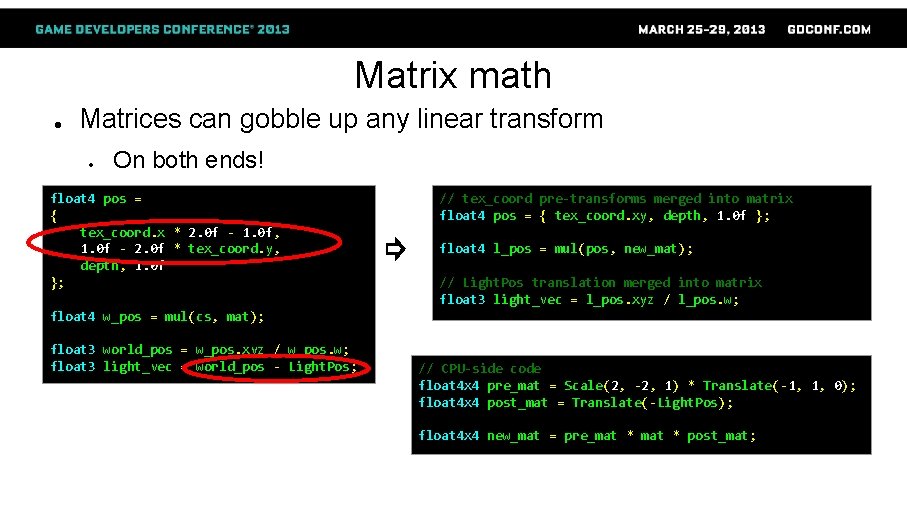

Matrix math ● Matrices can gobble up any linear transform ● On both ends! float 4 pos = { tex_coord. x * 2. 0 f - 1. 0 f, 1. 0 f - 2. 0 f * tex_coord. y, depth, 1. 0 f }; // tex_coord pre-transforms merged into matrix float 4 pos = { tex_coord. xy, depth, 1. 0 f }; ⇨ float 4 l_pos = mul(pos, new_mat); // Light. Pos translation merged into matrix float 3 light_vec = l_pos. xyz / l_pos. w; float 4 w_pos = mul(cs, mat); float 3 world_pos = w_pos. xyz / w_pos. w; float 3 light_vec = world_pos - Light. Pos; // CPU-side code float 4 x 4 pre_mat = Scale(2, -2, 1) * Translate(-1, 1, 0); float 4 x 4 post_mat = Translate(-Light. Pos); float 4 x 4 new_mat = pre_mat * post_mat;

Scalar math ● Modern HW have scalar ALUs ● ● ● Scalar math always faster than vector math Older VLIW and vector ALU architectures also benefit ● Often still makes shader shorter ● Otherwise, frees up lanes for other stuff Scalar to vector expansion frequently undetected ● Depends on expression evaluation order and parentheses ● Sometimes hidden due to functions or abstractions ● Sometimes hidden inside functions

Mixed scalar/vector math ● Work out math on a low-level ● Separate vector and scalar parts ● Look for common sub-expressions ● ● Compiler may not always be able to reuse them! Compiler often not able to extract scalars from them! dot(), normalize(), reflect(), length(), distance() Manage scalar and vector math separately ● Watch out for evaluation order ● ● Expression are evaluated left-to-right Use parenthesis

Hidden scalar math ● normalize(vec) ● vector in, vector out, but intermediate scalar values ● normalize(vec) = vec * rsqrt(dot(vec, vec)) ● ● ● dot() returns scalar, rsqrt() still scalar Handle original vector and normalizing factor separately Some HW (notably PS 3) has built-in normalize() ● Usually beneficial to stick to normalize() there ● reflect(i, n) = i – 2. 0 f * dot(i, n) * n ● lerp(a, b, c) implemented as (b-a) * c + a ● If c and either a or b are scalar, b * c + a * (1 -c) is fewer ops

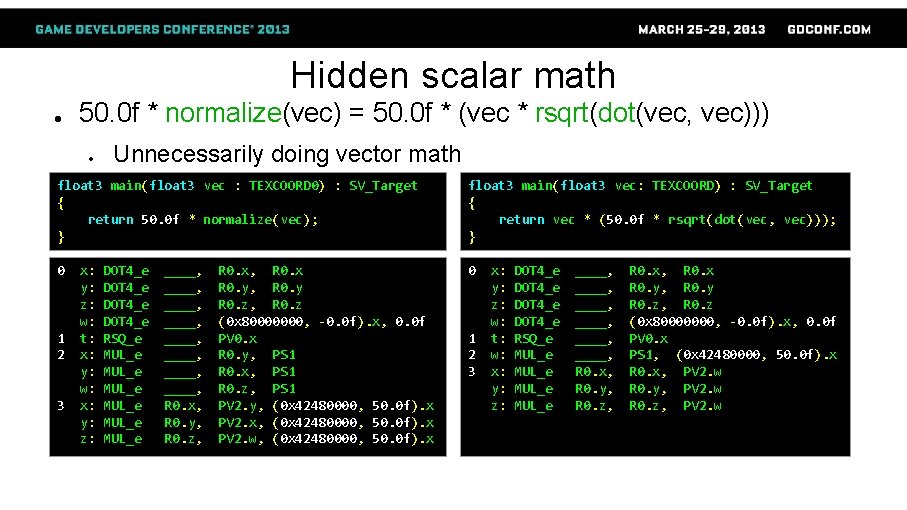

Hidden scalar math ● 50. 0 f * normalize(vec) = 50. 0 f * (vec * rsqrt(dot(vec, vec))) ● Unnecessarily doing vector math float 3 main(float 3 vec : TEXCOORD 0) : SV_Target { return 50. 0 f * normalize(vec); } float 3 main(float 3 vec: TEXCOORD) : SV_Target { return vec * (50. 0 f * rsqrt(dot(vec, vec))); } 0 0 1 2 3 x: y: z: w: t: x: y: w: x: y: z: DOT 4_e RSQ_e MUL_e MUL_e ____, ____, R 0. x, R 0. y, R 0. z, R 0. x, R 0. x R 0. y, R 0. y R 0. z, R 0. z (0 x 80000000, -0. 0 f). x, 0. 0 f PV 0. x R 0. y, PS 1 R 0. x, PS 1 R 0. z, PS 1 PV 2. y, (0 x 42480000, 50. 0 f). x PV 2. x, (0 x 42480000, 50. 0 f). x PV 2. w, (0 x 42480000, 50. 0 f). x 1 2 3 x: y: z: w: t: w: x: y: z: DOT 4_e RSQ_e MUL_e ____, ____, R 0. x, R 0. y, R 0. z, R 0. x, R 0. x R 0. y, R 0. y R 0. z, R 0. z (0 x 80000000, -0. 0 f). x, 0. 0 f PV 0. x PS 1, (0 x 42480000, 50. 0 f). x R 0. x, PV 2. w R 0. y, PV 2. w R 0. z, PV 2. w

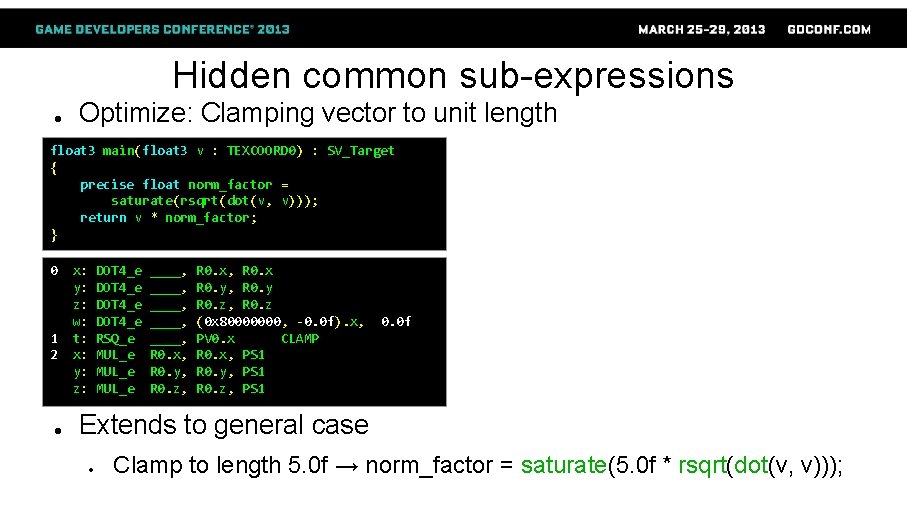

Hidden common sub-expressions ● ● normalize(vec) and length(vec) contain dot(vec, vec) ● Compiler reuses exact matches ● Compiler does NOT reuse different uses Example: Clamping vector to unit length float 3 main(float 3 v : TEXCOORD 0) : SV_Target { if (length(v) > 1. 0 f) v = normalize(v); return v; } 0 dp 3 sqrt rsq mul lt movc 3 r 0. x, v 0. xyzx r 0. y, r 0. xzw, r 0. xxxx, v 0. xxyz r 0. y, l(1. 000000), r 0. y o 0. xyz, r 0. yyyy, r 0. xzwx, v 0. xyzx 1 2 4 x: y: z: w: t: x: y: z: DOT 4_e SQRT_e SETGT_DX 10 RSQ_e MUL_e CNDE_INT ____, R 1. y, ____, R 0. w, ____, R 0. x, R 0. y, R 0. z, R 0. x, R 0. x R 0. y, R 0. y R 0. z, R 0. z (0 x 80000000, -0. 0 f). x, PV 0. x PS 1, 1. 0 f R 1. y R 0. z, PS 2 R 0. y, PS 2 R 0. x, PS 2 R 0. w, R 0. x, PV 3. z R 0. w, R 0. y, PV 3. y R 0. w, R 0. z, PV 3. x 0. 0 f

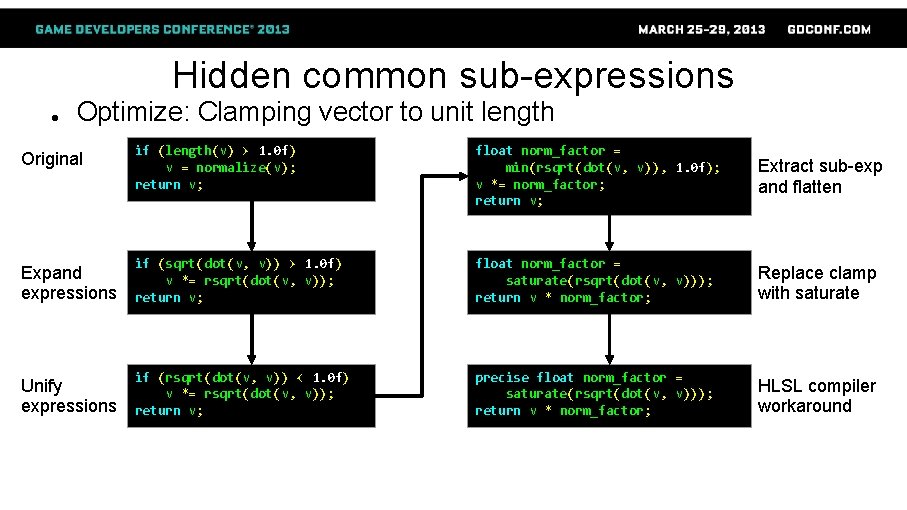

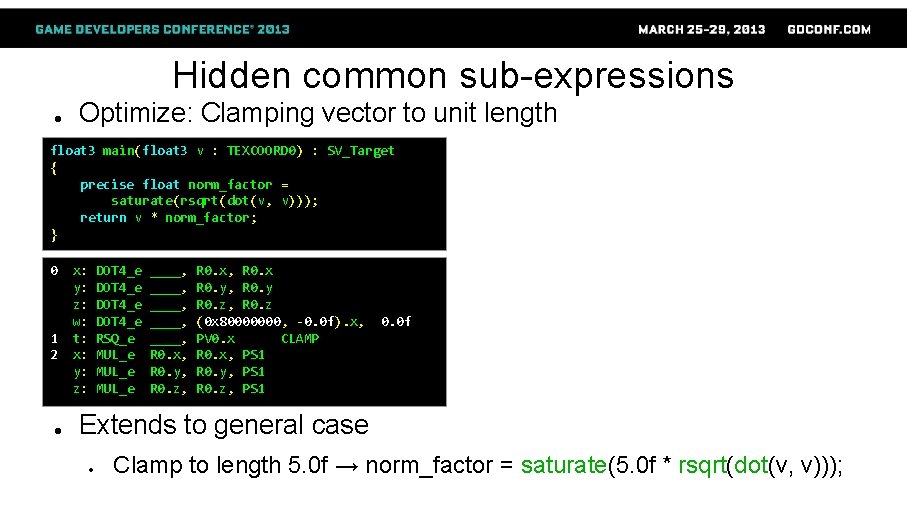

Hidden common sub-expressions ● Optimize: Clamping vector to unit length if (length(v) > 1. 0 f) v = normalize(v); return v; float norm_factor = min(rsqrt(dot(v, v)), 1. 0 f); v *= norm_factor; return v; Extract sub-exp and flatten Expand expressions if (sqrt(dot(v, v)) > 1. 0 f) v *= rsqrt(dot(v, v)); return v; float norm_factor = saturate(rsqrt(dot(v, v))); return v * norm_factor; Replace clamp with saturate Unify expressions if (rsqrt(dot(v, v)) < 1. 0 f) v *= rsqrt(dot(v, v)); return v; precise float norm_factor = saturate(rsqrt(dot(v, v))); return v * norm_factor; HLSL compiler workaround Original

Hidden common sub-expressions ● Optimize: Clamping vector to unit length float 3 main(float 3 v : TEXCOORD 0) : SV_Target { if (length(v) > 1. 0 f) v = normalize(v); return v; } float 3 main(float 3 v : TEXCOORD 0) : SV_Target { if (rsqrt(dot(v, v)) < 1. 0 f) v *= rsqrt(dot(v, v)); return v; } 0 0 1 2 3 4 x: y: z: w: t: x: y: z: DOT 4_e SQRT_e SETGT_DX 10 RSQ_e MUL_e CNDE_INT ____, R 1. y, ____, R 0. w, ____, R 0. x, R 0. y, R 0. z, R 0. x, R 0. x R 0. y, R 0. y R 0. z, R 0. z (0 x 80000000, -0. 0 f). x, 0. 0 f PV 0. x PS 1, 1. 0 f R 1. y R 0. z, PS 2 R 0. y, PS 2 R 0. x, PS 2 R 0. w, R 0. x, PV 3. z R 0. w, R 0. y, PV 3. y R 0. w, R 0. z, PV 3. x 1 2 3 x: y: z: w: t: x: y: z: w: x: y: z: DOT 4_e RSQ_e MUL_e SETGT_DX 10 MUL_e CNDE_INT ____, ____, ____, R 0. x, R 0. y, R 0. z, R 0. x, R 0. x R 0. y, R 0. y R 0. z, R 0. z (0 x 80000000, PV 0. x R 0. y, PS 1 R 0. x, PS 1 1. 0 f, PS 1 R 0. z, PS 1 PV 2. z, R 0. x, PV 2. z, R 0. y, PV 2. z, R 0. z, -0. 0 f). x, 0. 0 f PV 2. y PV 2. x PV 2. w

Hidden common sub-expressions ● Optimize: Clamping vector to unit length float 3 main(float 3 v : TEXCOORD 0) : SV_Target { precise float norm_factor = saturate(rsqrt(dot(v, v))); return v * norm_factor; } 0 1 2 ● x: y: z: w: t: x: y: z: DOT 4_e RSQ_e MUL_e ____, ____, R 0. x, R 0. y, R 0. z, R 0. x, R 0. x R 0. y, R 0. y R 0. z, R 0. z (0 x 80000000, -0. 0 f). x, PV 0. x CLAMP R 0. x, PS 1 R 0. y, PS 1 R 0. z, PS 1 0. 0 f Extends to general case ● Clamp to length 5. 0 f → norm_factor = saturate(5. 0 f * rsqrt(dot(v, v)));

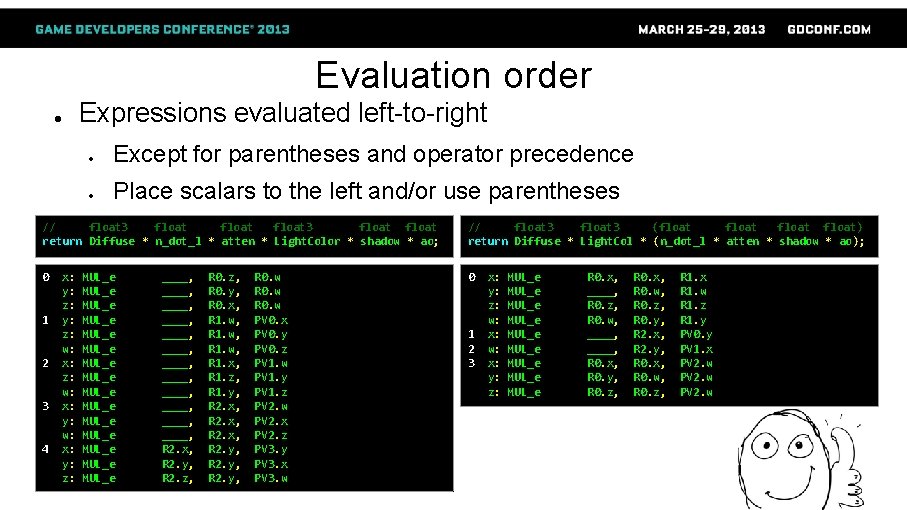

Evaluation order Expressions evaluated left-to-right ● ● Except for parentheses and operator precedence ● Place scalars to the left and/or use parentheses // float 3 float return Diffuse * n_dot_l * atten * Light. Color * shadow * ao; // float 3 (float float) return Diffuse * Light. Col * (n_dot_l * atten * shadow * ao); 0 0 1 2 3 4 x: y: z: w: x: y: z: MUL_e MUL_e MUL_e MUL_e ____, ____, ____, R 2. x, R 2. y, R 2. z, R 0. z, R 0. y, R 0. x, R 1. w, R 1. x, R 1. z, R 1. y, R 2. x, R 2. y, R 0. w PV 0. x PV 0. y PV 0. z PV 1. w PV 1. y PV 1. z PV 2. w PV 2. x PV 2. z PV 3. y PV 3. x PV 3. w 1 2 3 x: y: z: w: x: y: z: MUL_e MUL_e MUL_e R 0. x, ____, R 0. z, R 0. w, ____, R 0. x, R 0. y, R 0. z, R 0. x, R 0. w, R 0. z, R 0. y, R 2. x, R 2. y, R 0. x, R 0. w, R 0. z, R 1. x R 1. w R 1. z R 1. y PV 0. y PV 1. x PV 2. w

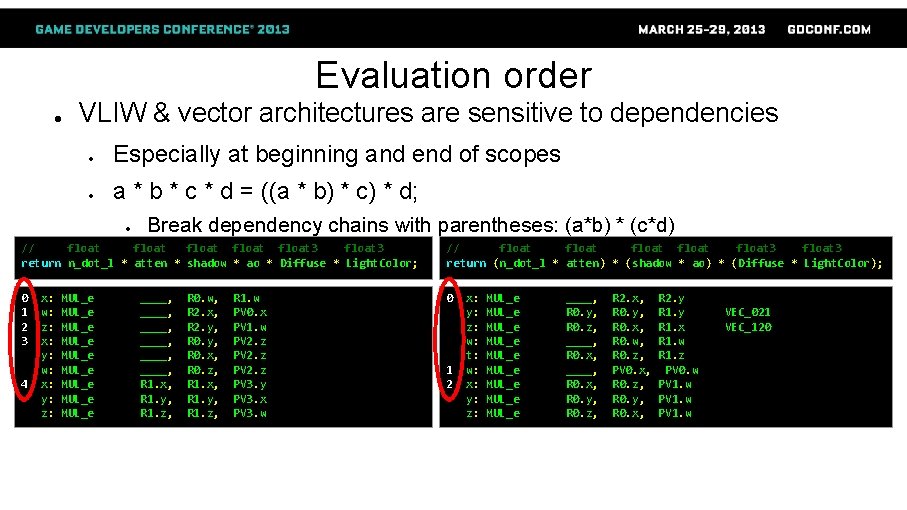

Evaluation order ● VLIW & vector architectures are sensitive to dependencies ● Especially at beginning and end of scopes ● a * b * c * d = ((a * b) * c) * d; ● Break dependency chains with parentheses: (a*b) * (c*d) // float float 3 return n_dot_l * atten * shadow * ao * Diffuse * Light. Color; // float float 3 return (n_dot_l * atten) * (shadow * ao) * (Diffuse * Light. Color); 0 1 2 3 0 4 x: w: z: x: y: w: x: y: z: MUL_e MUL_e MUL_e ____, ____, R 1. x, R 1. y, R 1. z, R 0. w, R 2. x, R 2. y, R 0. x, R 0. z, R 1. x, R 1. y, R 1. z, R 1. w PV 0. x PV 1. w PV 2. z PV 3. y PV 3. x PV 3. w 1 2 x: y: z: w: t: w: x: y: z: MUL_e MUL_e MUL_e ____, R 0. y, R 0. z, ____, R 0. x, R 0. y, R 0. z, R 2. x, R 0. y, R 0. x, R 0. w, R 0. z, PV 0. x, R 0. z, R 0. y, R 0. x, R 2. y R 1. x R 1. w R 1. z PV 0. w PV 1. w VEC_021 VEC_120

Real-world testing ● Case study: Clustered deferred shading ● Mixed quality code ● ● ● Low-level optimization ● ● ● Original lighting code quite optimized Various prototype quality code added later 1 -2 h of work Shader about 7% shorter Only sunlight: 0. 40 ms → 0. 38 ms (5% faster) Many pointlights: 3. 56 ms → 3. 22 ms (10% faster) High-level optimization ● ● ● Several weeks of work Between 15% slower and 2 x faster than classic deferred Do both!

![Additional recommendations Communicate intention with branch flatten loop unroll branch turns divergent Additional recommendations ● Communicate intention with [branch], [flatten], [loop], [unroll] ● [branch] turns “divergent](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-43.jpg)

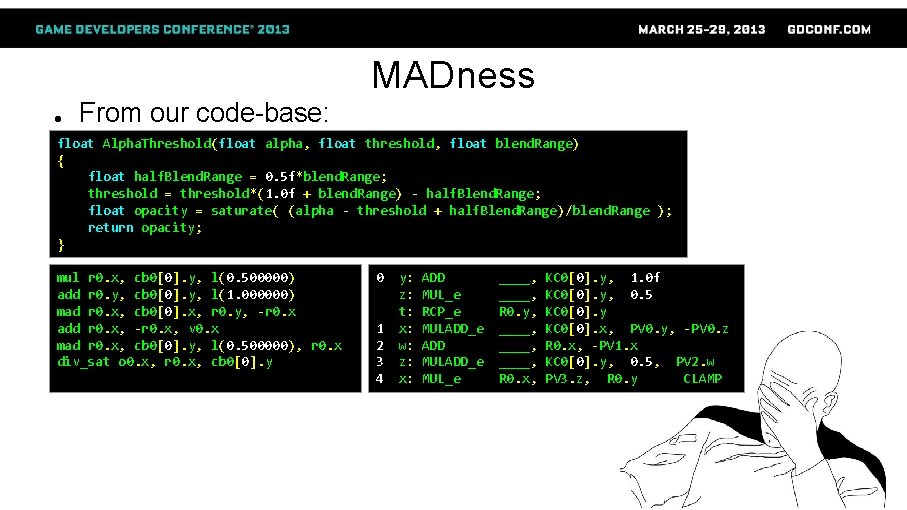

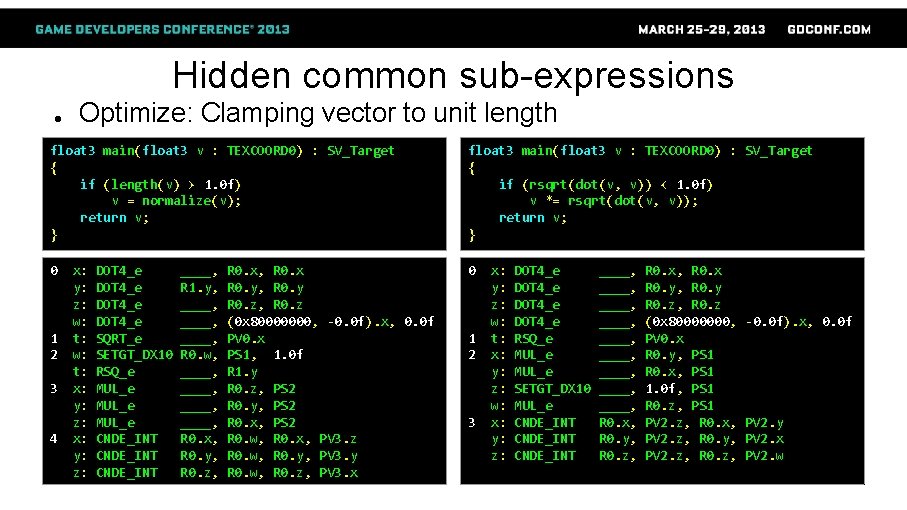

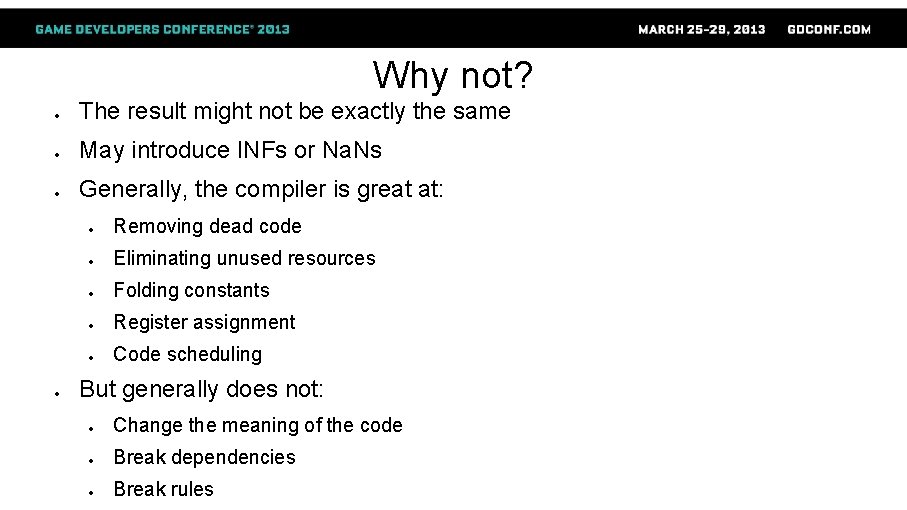

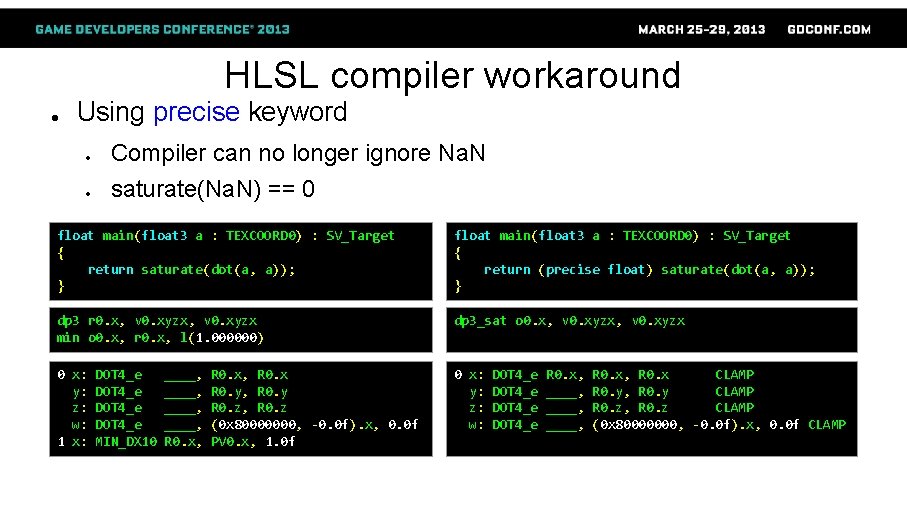

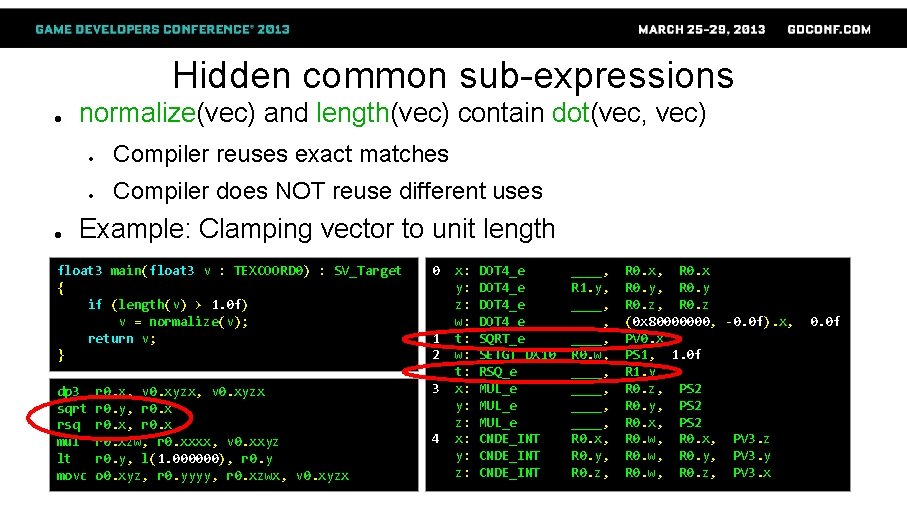

Additional recommendations ● Communicate intention with [branch], [flatten], [loop], [unroll] ● [branch] turns “divergent gradient” warning into error ● ● Which is great! Otherwise pulls chunks of code outside branch ● Don't do in shader what can be done elsewhere ● Move linear ops to vertex shader ● ● Unless vertex bound of course Don't output more than needed ● SM 4+ doesn't require float 4 SV_Target ● Don't write unused alphas! float 2 Clip. Space. To. Texcoord(float 3 Cs) { Cs. xy = Cs. xy / Cs. z; Cs. xy = Cs. xy * 0. 5 h + 0. 5 h; Cs. y = ( 1. h - Cs. y ); return Cs. xy; } float 2 tex_coord = Cs. xy / Cs. z;

How can I be a better low-level coder? ● Familiarize yourself with GPU HW instructions ● ● ● Also learn D 3 D asm on PC Familiarize yourself with HLSL ↔ HW code mapping ● GPUShader. Analyzer, NVShader. Perf, fxc. exe ● Compare result across HW and platforms Monitor shader edits' effect on shader length ● Abnormal results? → Inspect asm, figure out cause and effect ● Also do real-world benchmarking

Optimize all the shaders!

![References 1 IEEE754 2 FloatingPoint Rules 3 Fabian Giesen Finish your derivations please References [1] IEEE-754 [2] Floating-Point Rules [3] Fabian Giesen: Finish your derivations, please](https://slidetodoc.com/presentation_image_h/7c3c9094f685f1b1301244f9b72a7110/image-46.jpg)

References [1] IEEE-754 [2] Floating-Point Rules [3] Fabian Giesen: Finish your derivations, please

Questions? @_Humus_ emil. persson@avalanchestudios. se We are hiring! New York, Stockholm