
Logic Programming & LPMLN

Table of Contents
• Logic programming
• Answer set programming
• Markov logic network
• LPMLN

Logic Programming

Definitions

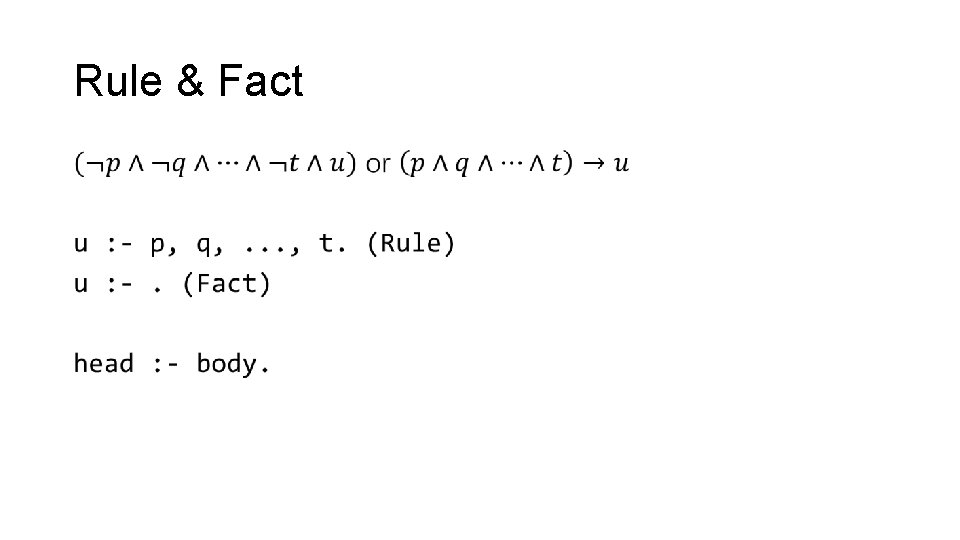

Rule & Fact

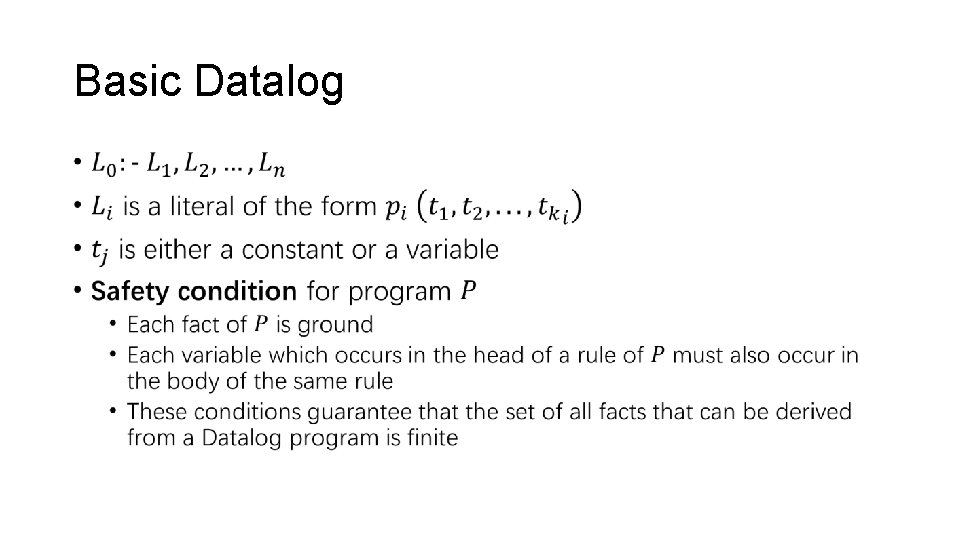

Basic Datalog

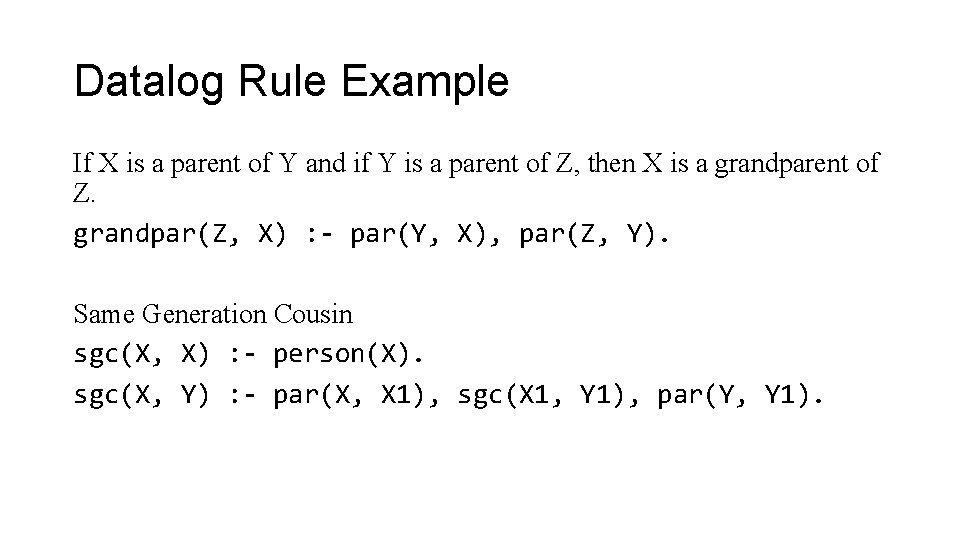

Datalog Rule Example
If X is a parent of Y and Y is a parent of Z, then X is a grandparent of Z (here par(A, B) reads "B is a parent of A"):
grandpar(Z, X) :- par(Y, X), par(Z, Y).
Same-Generation Cousin
sgc(X, X) :- person(X).
sgc(X, Y) :- par(X, X1), sgc(X1, Y1), par(Y, Y1).
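A Datalog rule body with a shared variable is a relational join. The Python sketch below evaluates the grandpar rule as a self-join of par on Y, with par stored as a set of (child, parent) tuples. The people named here are hypothetical sample data, not facts from the slides.

```python
# Hypothetical par(Child, Parent) facts.
par = {("ann", "bob"), ("bob", "carl"), ("dan", "bob")}

def grandpar(par):
    """Evaluate grandpar(Z, X) :- par(Y, X), par(Z, Y).

    The shared variable Y turns the rule body into a join of par with itself.
    """
    return {(z, x)
            for (y, x) in par        # par(Y, X): X is a parent of Y
            for (z, y2) in par       # par(Z, Y): Y is a parent of Z
            if y2 == y}              # join condition on Y

print(sorted(grandpar(par)))
```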

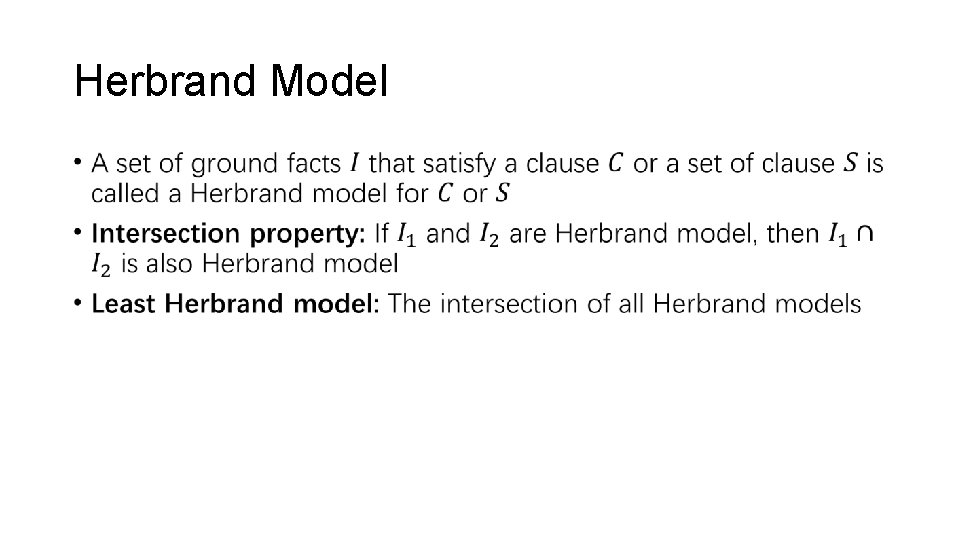

Herbrand Model

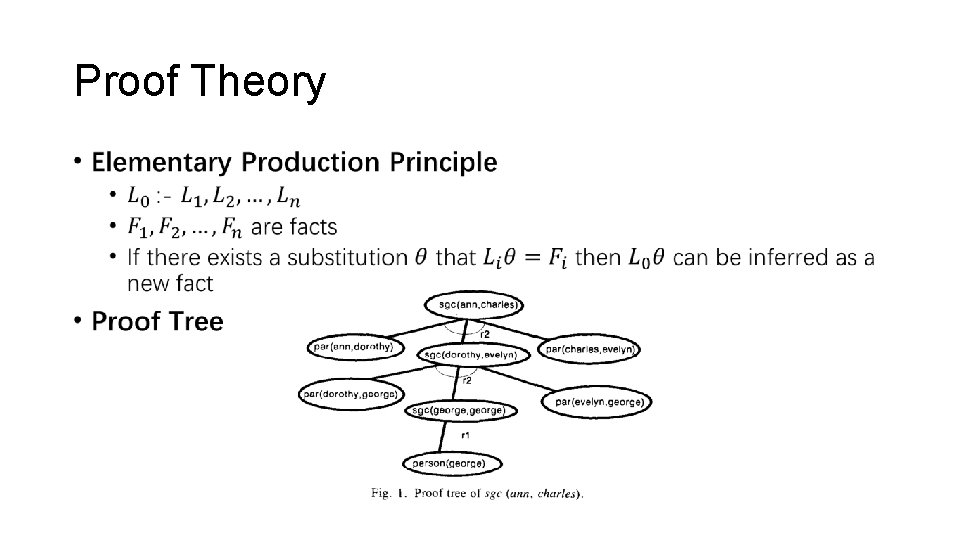

Proof Theory

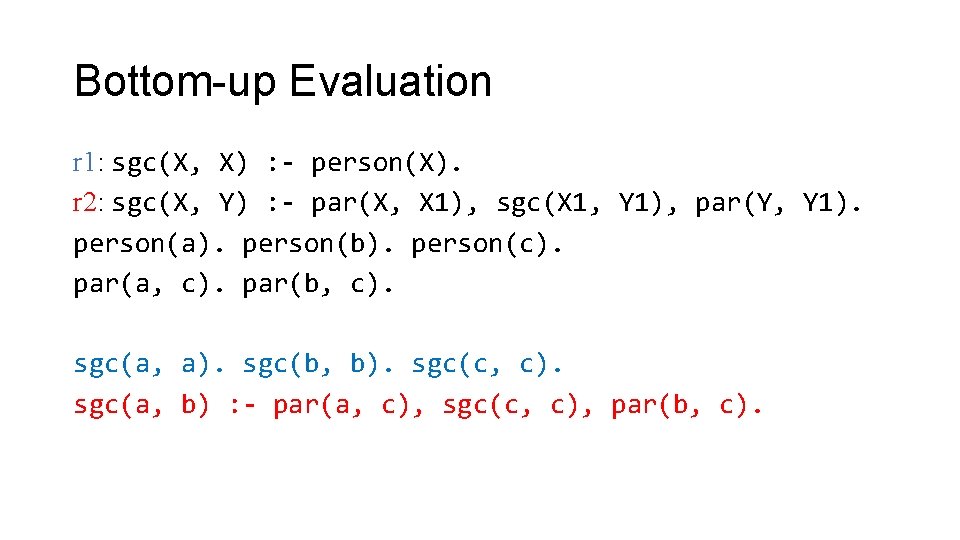

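Bottom-up Evaluation
r1: sgc(X, X) :- person(X).
r2: sgc(X, Y) :- par(X, X1), sgc(X1, Y1), par(Y, Y1).
Facts: person(a). person(b). person(c). par(a, c). par(b, c).
Iteration 1 (r1): sgc(a, a). sgc(b, b). sgc(c, c).
Iteration 2 (r2): sgc(a, b) :- par(a, c), sgc(c, c), par(b, c). Symmetrically, sgc(b, a) is derived.
Iteration 3: no new facts are derived, so the fixpoint is reached.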
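The iteration above can be sketched in Python as a naive fixpoint loop over the slide's facts. Relations are plain sets of tuples (an assumption for brevity); each round re-applies r1 and r2 to all known facts and stops once a round adds nothing new.

```python
# Facts from the slide: person(X) and par(Child, Parent).
person = {"a", "b", "c"}
par = {("a", "c"), ("b", "c")}

def naive_sgc(person, par):
    """Naive bottom-up evaluation of
    r1: sgc(X, X) :- person(X).
    r2: sgc(X, Y) :- par(X, X1), sgc(X1, Y1), par(Y, Y1).
    Every round re-applies both rules to ALL facts known so far.
    """
    sgc = set()
    rounds = 0
    while True:
        rounds += 1
        new = {(x, x) for x in person}                    # r1
        new |= {(x, y)                                    # r2
                for (x, x1) in par
                for (x1b, y1) in sgc if x1b == x1
                for (y, y1b) in par if y1b == y1}
        if new <= sgc:                                    # nothing new: fixpoint
            return sgc, rounds
        sgc |= new

result, rounds = naive_sgc(person, par)
print(sorted(result), rounds)
```

Note that round 3 recomputes every fact from round 2 only to discover nothing new; this redundancy is what the optimizations discussed later target.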

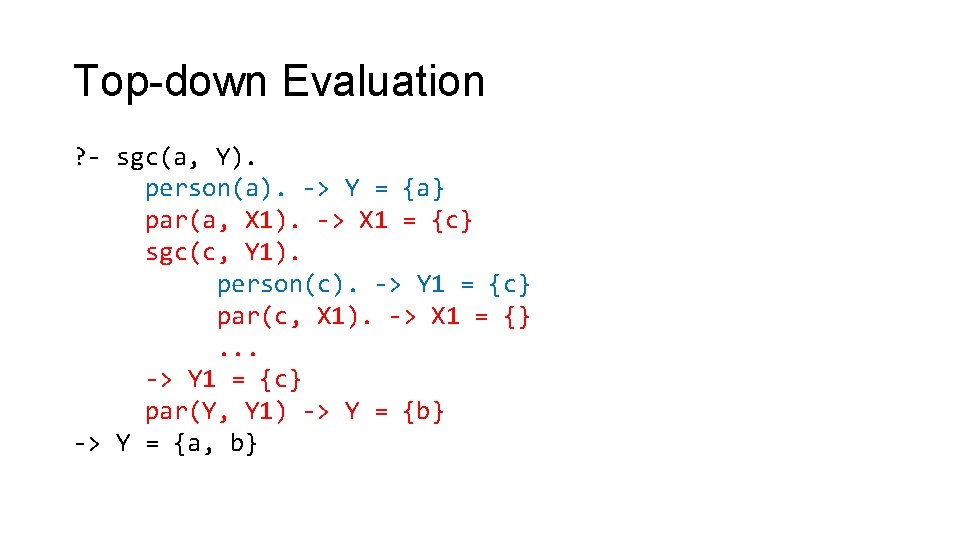

Top-down Evaluation
?- sgc(a, Y).
r1: person(a). -> Y = {a}
r2: par(a, X1). -> X1 = {c}
    subgoal sgc(c, Y1):
        r1: person(c). -> Y1 = {c}
        r2: par(c, X1). -> X1 = {} (no further answers)
    -> Y1 = {c}
    par(Y, Y1). -> Y = {a, b}
-> Y = {a} ∪ {a, b} = {a, b}
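The goal-directed trace above can be mirrored by a small recursive Python function that answers ?- sgc(x, Y) by trying r1, then r2 with sgc(X1, Y1) as a subgoal. This is a sketch assuming plain recursion suffices; it terminates here only because par is acyclic, whereas general recursive programs need tabling or loop checks.

```python
person = {"a", "b", "c"}
par = {("a", "c"), ("b", "c")}   # par(Child, Parent)

def sgc_query(x):
    """Answer ?- sgc(x, Y) top-down and return the set of bindings for Y."""
    answers = set()
    if x in person:                                   # r1: sgc(X, X) :- person(X).
        answers.add(x)
    for (child, x1) in par:                           # r2: par(X, X1)
        if child != x:
            continue
        for y1 in sgc_query(x1):                      # subgoal sgc(X1, Y1)
            answers |= {y for (y, p) in par if p == y1}   # par(Y, Y1)
    return answers

print(sorted(sgc_query("a")))   # ['a', 'b']
```

Unlike the bottom-up loop, only facts reachable from the query constant a are touched.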

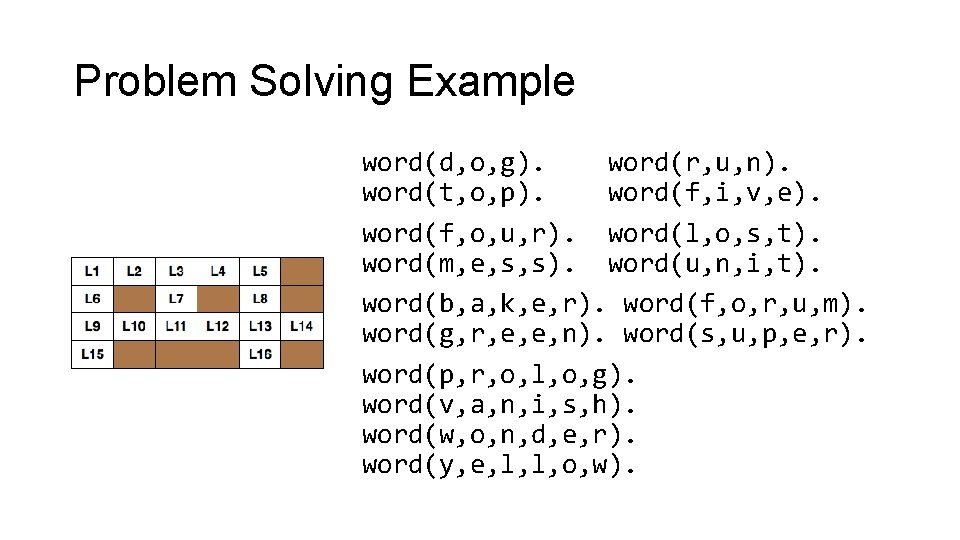

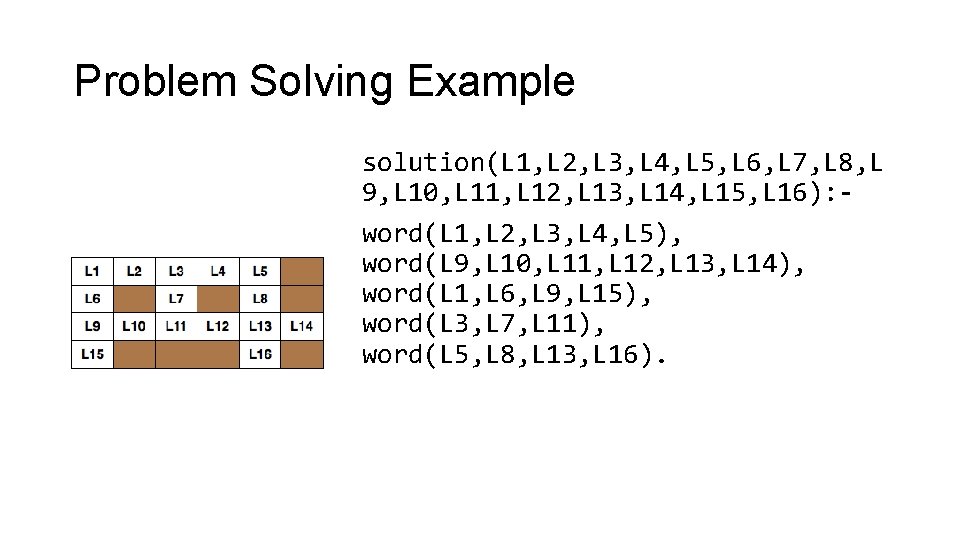

Problem Solving Example
word(d, o, g). word(r, u, n). word(t, o, p).
word(f, i, v, e). word(f, o, u, r). word(l, o, s, t). word(m, e, s, s). word(u, n, i, t).
word(b, a, k, e, r). word(f, o, r, u, m). word(g, r, e, e, n). word(s, u, p, e, r).
word(p, r, o, l, o, g). word(v, a, n, i, s, h). word(w, o, n, d, e, r). word(y, e, l, l, o, w).

Problem Solving Example
solution(L1, L2, L3, L4, L5, L6, L7, L8, L9, L10, L11, L12, L13, L14, L15, L16) :- word(L1, L2, L3, L4, L5), word(L9, L10, L11, L12, L13, L14), word(L1, L6, L9, L15), word(L3, L7, L11), word(L5, L8, L13, L16).
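A minimal Python sketch that evaluates the solution rule by generate-and-test. Words are stored as strings rather than letter-tuple facts, and the search is a plain Cartesian product over one word per body atom (assumptions for brevity, not how a Datalog engine would plan the join). A variable shared by two atoms, such as L1 or L9, is a letter the two words must agree on.

```python
from itertools import product

# The word facts from the slide, as strings.
WORDS = ["dog", "run", "top", "five", "four", "lost", "mess", "unit",
         "baker", "forum", "green", "super",
         "prolog", "vanish", "wonder", "yellow"]

def by_len(n):
    return [w for w in WORDS if len(w) == n]

def solve():
    """Pick one word per body atom; keep choices whose shared letters agree."""
    sols = []
    for w5, w6, w4a, w3, w4b in product(by_len(5), by_len(6), by_len(4),
                                        by_len(3), by_len(4)):
        # w5 = (L1..L5), w6 = (L9..L14), w4a = (L1, L6, L9, L15),
        # w3 = (L3, L7, L11), w4b = (L5, L8, L13, L16)
        if (w4a[0] == w5[0] and w4a[2] == w6[0]          # L1, L9
                and w3[0] == w5[2] and w3[2] == w6[2]    # L3, L11
                and w4b[0] == w5[4] and w4b[2] == w6[4]):  # L5, L13
            sols.append((w5, w6, w4a, w3, w4b))
    return sols

print(solve())   # [('forum', 'vanish', 'five', 'run', 'mess')]
```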

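Bottom-up Evaluation Optimization
• Naïve approach: iteratively apply the rules to all facts until no new fact can be derived
• Problems
  • The same fact is derived many times
  • Facts irrelevant to the goal are also derived
• Solutions
  • Semi-naïve approach: each rule application must use at least one fact derived in the previous iteration, which avoids most repeated derivations
  • Magic set rewriting: rewrites the program so that only facts relevant to the goal are derived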
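A semi-naïve version of the same-generation evaluation, sketched in Python under the same set-of-tuples assumption. Because r2 contains a single sgc atom, joining it with only the facts that were new in the previous round (delta) is enough to find every new fact.

```python
person = {"a", "b", "c"}
par = {("a", "c"), ("b", "c")}   # par(Child, Parent)

def semi_naive_sgc(person, par):
    """Semi-naive evaluation of the same-generation program."""
    sgc = {(x, x) for x in person}   # r1 has no sgc atom in its body: apply it once
    delta = set(sgc)
    rounds = 0
    while delta:
        rounds += 1
        # r2, with its sgc atom restricted to last round's new facts
        derived = {(x, y)
                   for (x, x1) in par
                   for (d1, y1) in delta if d1 == x1
                   for (y, p) in par if p == y1}
        delta = derived - sgc        # keep only genuinely new facts
        sgc |= delta
    return sgc, rounds

facts, rounds = semi_naive_sgc(person, par)
print(sorted(facts), rounds)
```

Compared with the naive loop, the final round joins against an empty delta instead of recomputing every known fact. Rules with several recursive body atoms need one delta-restricted variant per atom; that case is not shown.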

Answer Set Programming

Rules with Negation

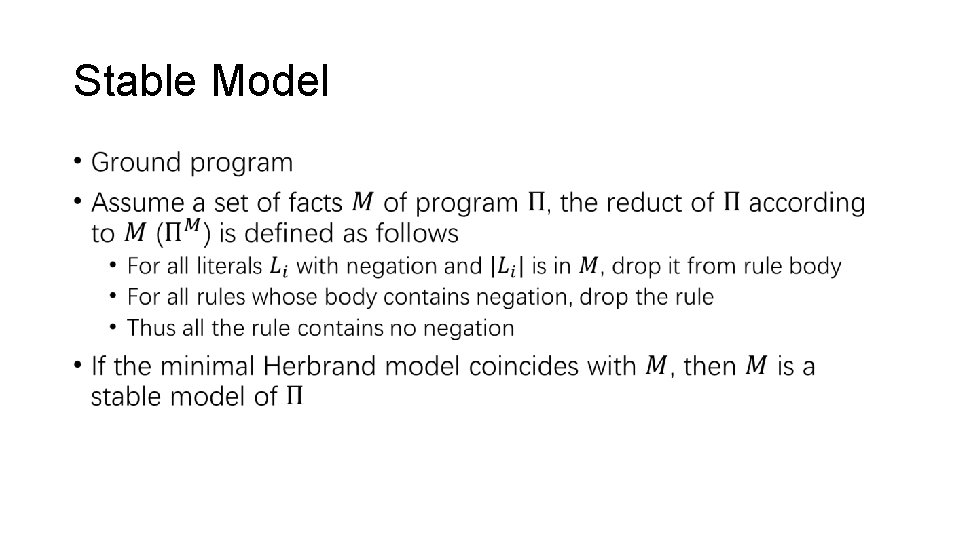

Stable Model

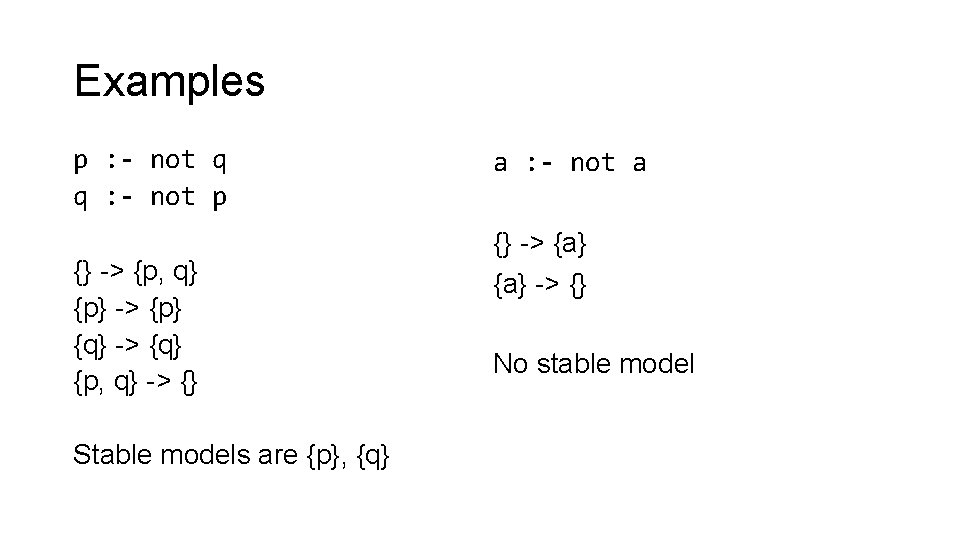

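Examples
p :- not q.  q :- not p.
Each candidate set X maps to the minimal model of the program's reduct with respect to X:
{} -> {p, q}
{p} -> {p}
{q} -> {q}
{p, q} -> {}
The stable models are the candidates that map to themselves: {p} and {q}.
a :- not a.
{} -> {a}
{a} -> {}
Neither candidate maps to itself, so there is no stable model.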
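The candidate-by-candidate check above can be written as a brute-force Python sketch: form the reduct for each subset of atoms, compute its least model, and keep the subsets that reproduce themselves. The rule encoding (head, positive body, negative body) is an assumption of this sketch, and enumeration is exponential, so it only suits toy propositional programs.

```python
from itertools import chain, combinations

def least_model(definite_rules):
    """Least model of a negation-free program by forward chaining."""
    model, changed = set(), True
    while changed:
        changed = False
        for head, pos in definite_rules:
            if head not in model and all(a in model for a in pos):
                model.add(head)
                changed = True
    return model

def reduct(program, candidate):
    """Drop rules with a 'not a' where a is in the candidate; strip remaining 'not's."""
    return [(h, pos) for h, pos, neg in program
            if not any(a in candidate for a in neg)]

def stable_models(program):
    atoms = sorted({h for h, _, _ in program}
                   | {a for _, pos, neg in program for a in chain(pos, neg)})
    subsets = chain.from_iterable(combinations(atoms, k) for k in range(len(atoms) + 1))
    return [set(s) for s in subsets
            if least_model(reduct(program, set(s))) == set(s)]

even = [("p", [], ["q"]), ("q", [], ["p"])]   # p :- not q.  q :- not p.
odd = [("a", [], ["a"])]                      # a :- not a.
print(stable_models(even))   # [{'p'}, {'q'}]
print(stable_models(odd))    # []
```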

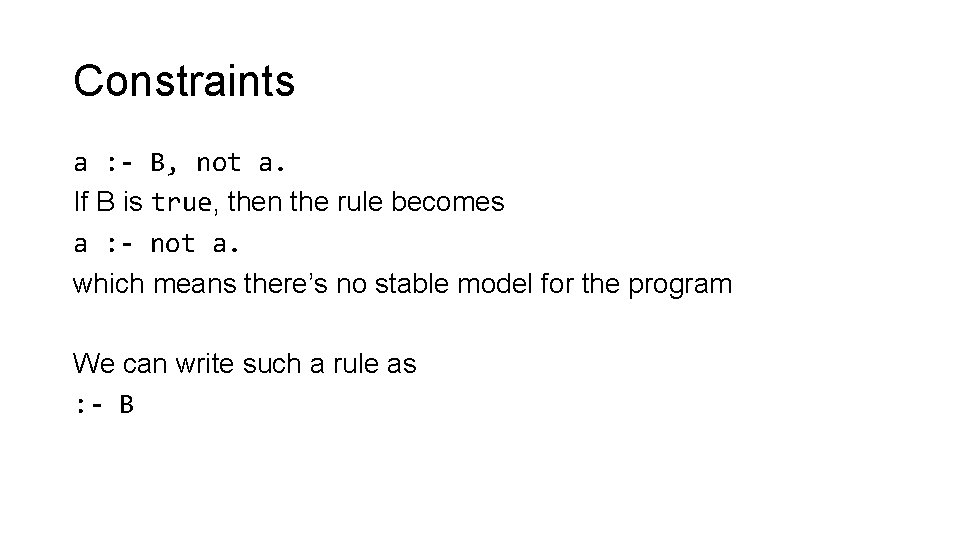

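Constraints
a :- B, not a.
If B is true in a candidate answer set, the rule reduces to a :- not a, which has no stable model. Provided a occurs nowhere else, the rule therefore eliminates every stable model in which B holds.
Such a rule can be written simply as :- B.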
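To see the effect concretely, the Python sketch below reuses a brute-force stable-model check on the program p :- not q. q :- not p. and adds the constraint :- p in its long form x :- p, not x, where x is a fresh atom introduced for this illustration. The result matches simply discarding the stable models in which p holds.

```python
from itertools import chain, combinations

def least_model(rules):
    model, changed = set(), True
    while changed:
        changed = False
        for head, pos in rules:
            if head not in model and set(pos) <= model:
                model.add(head)
                changed = True
    return model

def stable_models(program):
    """Brute-force stable models; rules are (head, pos_body, neg_body)."""
    atoms = sorted({h for h, _, _ in program}
                   | {a for _, pos, neg in program for a in chain(pos, neg)})
    result = []
    for k in range(len(atoms) + 1):
        for s in map(set, combinations(atoms, k)):
            red = [(h, pos) for h, pos, neg in program if not set(neg) & s]  # reduct
            if least_model(red) == s:
                result.append(s)
    return result

base = [("p", [], ["q"]), ("q", [], ["p"])]      # p :- not q.  q :- not p.
with_constraint = base + [("x", ["p"], ["x"])]   # x :- p, not x.  (i.e. ":- p")
print(stable_models(base))             # [{'p'}, {'q'}]
print(stable_models(with_constraint))  # [{'q'}]
print([m for m in stable_models(base) if "p" not in m])   # [{'q'}]
```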

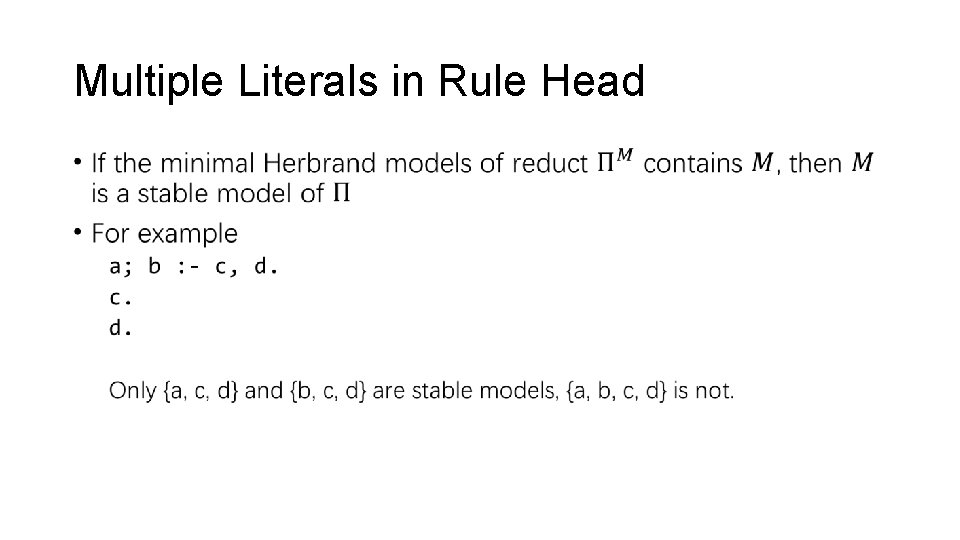

Multiple Literals in Rule Head

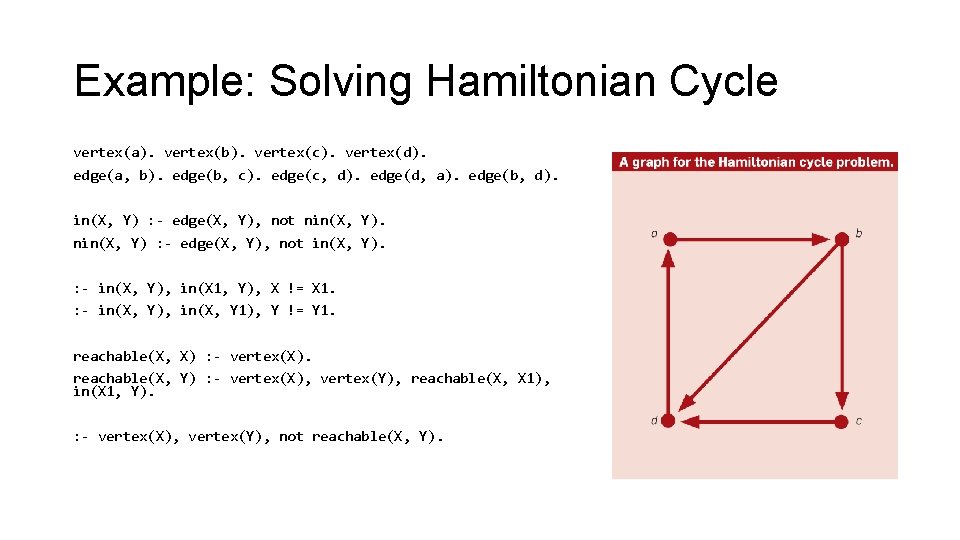

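Example: Solving Hamiltonian Cycle
vertex(a). vertex(b). vertex(c). vertex(d).
edge(a, b). edge(b, c). edge(c, d). edge(d, a). edge(b, d).
in(X, Y) :- edge(X, Y), not nin(X, Y).
nin(X, Y) :- edge(X, Y), not in(X, Y).
:- in(X, Y), in(X1, Y), X != X1.
:- in(X, Y), in(X, Y1), Y != Y1.
reachable(X, X) :- vertex(X).
reachable(X, Y) :- vertex(X), vertex(Y), reachable(X, X1), in(X1, Y).
:- vertex(X), vertex(Y), not reachable(X, Y).
The first two rules guess which edges belong to the cycle, the next two constraints allow at most one selected edge into and out of each vertex, and the last constraint requires every vertex to be reachable from every other along selected edges.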
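As a cross-check of the encoding, here is a Python generate-and-test sketch for the same graph: it enumerates every subset of edges (the in/nin guess), applies the two degree constraints, and keeps subsets in which every vertex reaches every other. It is exponential in the number of edges and is only meant to mirror the ASP program's logic, not to model how an ASP solver searches.

```python
from itertools import combinations

VERTICES = ["a", "b", "c", "d"]
EDGES = [("a", "b"), ("b", "c"), ("c", "d"), ("d", "a"), ("b", "d")]

def reachable(chosen, start):
    """Vertices reachable from start along chosen edges (start included)."""
    seen, stack = {start}, [start]
    while stack:
        u = stack.pop()
        for (x, y) in chosen:
            if x == u and y not in seen:
                seen.add(y)
                stack.append(y)
    return seen

def hamiltonian_cycles():
    found = []
    for k in range(len(EDGES) + 1):
        for chosen in combinations(EDGES, k):        # guess: in(X, Y) vs nin(X, Y)
            targets = [y for _, y in chosen]
            sources = [x for x, _ in chosen]
            if len(set(targets)) < len(targets):     # two selected edges into one vertex
                continue
            if len(set(sources)) < len(sources):     # two selected edges out of one vertex
                continue
            if all(reachable(chosen, v) == set(VERTICES) for v in VERTICES):
                found.append(set(chosen))
    return found

print(hamiltonian_cycles())
```

The only surviving selection is a -> b -> c -> d -> a; choosing edge(b, d) instead of edge(b, c) gives d two incoming edges.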

Markov Logic Network

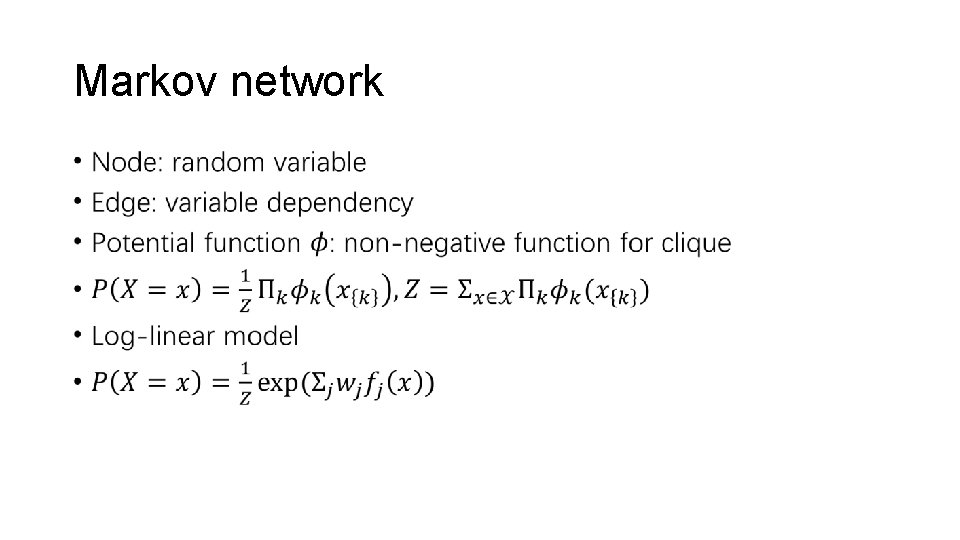

Markov network

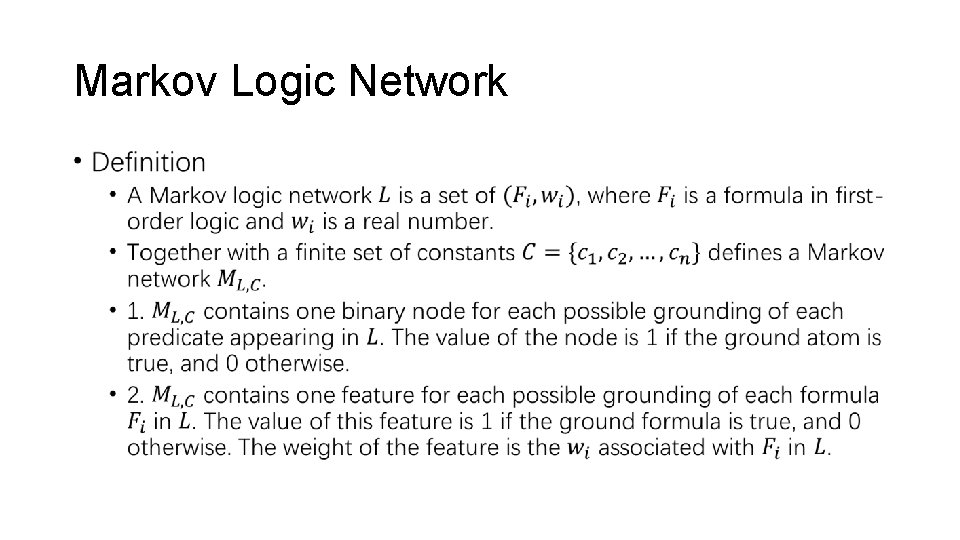

Markov Logic Network

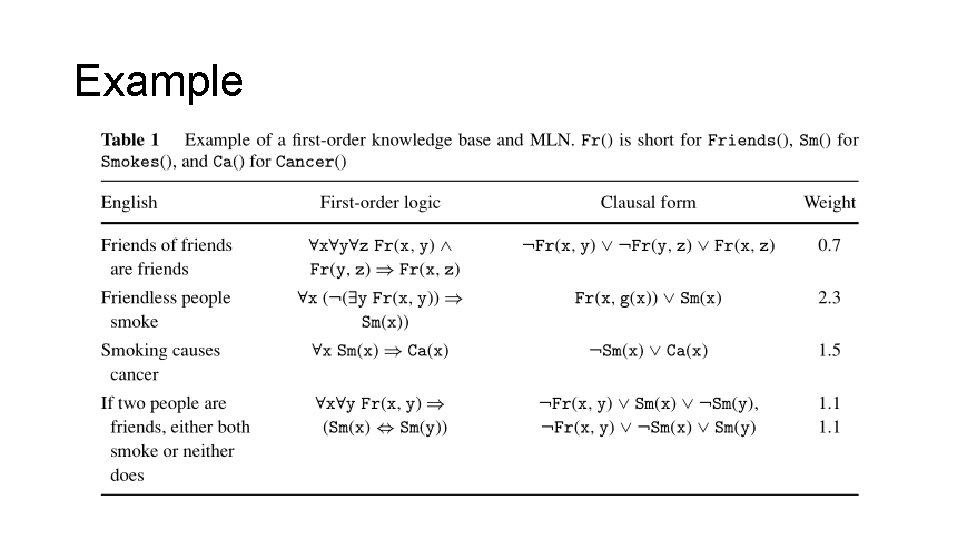

Example

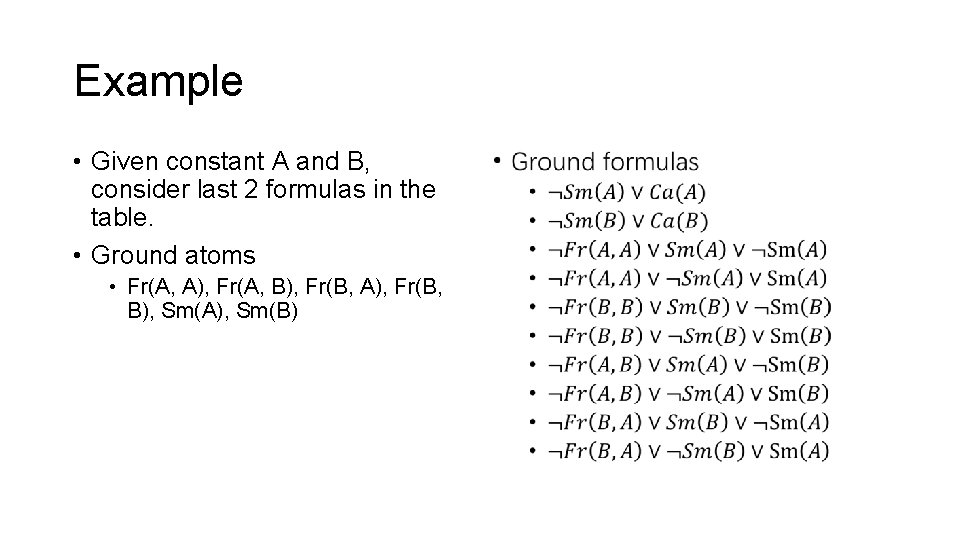

Example
• Given constants A and B, consider the last two formulas in the table.
• Ground atoms: Fr(A, A), Fr(A, B), Fr(B, A), Fr(B, B), Sm(A), Sm(B)
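A small Python sketch of how an MLN scores worlds over exactly these six ground atoms, using the standard log-linear form P(X = x) = exp(Σ w_i n_i(x)) / Z. Because the table itself is an image, this sketch assumes a single formula, Fr(x, y) ⇒ (Sm(x) ⇔ Sm(y)), with a hypothetical weight W = 1.1; n(x) counts its true groundings and Z sums over all 2^6 worlds.

```python
import math
from itertools import product

CONSTANTS = ["A", "B"]
ATOMS = ([f"Fr({x},{y})" for x in CONSTANTS for y in CONSTANTS]
         + [f"Sm({x})" for x in CONSTANTS])
W = 1.1  # hypothetical weight of Fr(x,y) => (Sm(x) <=> Sm(y))

def n_true(world):
    """Number of true groundings of Fr(x,y) => (Sm(x) <=> Sm(y)) in a world."""
    return sum((not world[f"Fr({x},{y})"])
               or (world[f"Sm({x})"] == world[f"Sm({y})"])
               for x in CONSTANTS for y in CONSTANTS)

# Every truth assignment to the six ground atoms is a possible world.
worlds = [dict(zip(ATOMS, vals)) for vals in product([False, True], repeat=len(ATOMS))]
Z = sum(math.exp(W * n_true(w)) for w in worlds)   # partition function

def prob(world):
    """P(X = x) = exp(W * n(x)) / Z for this single-formula MLN."""
    return math.exp(W * n_true(world)) / Z

all_true = {a: True for a in ATOMS}
print(prob(all_true))
```

A world that violates one grounding (e.g. Fr(A, B) and Sm(A) true, Sm(B) false) is not impossible, only e^W times less likely than one violating none, which is the "soft constraint" reading of MLN weights.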

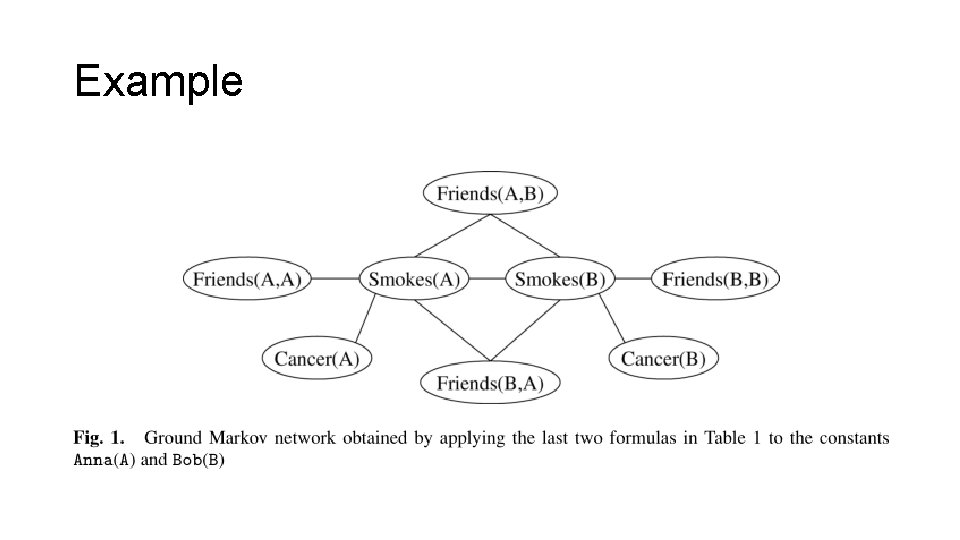

Example

LPMLN: Weighted Rules under the Stable Model Semantics

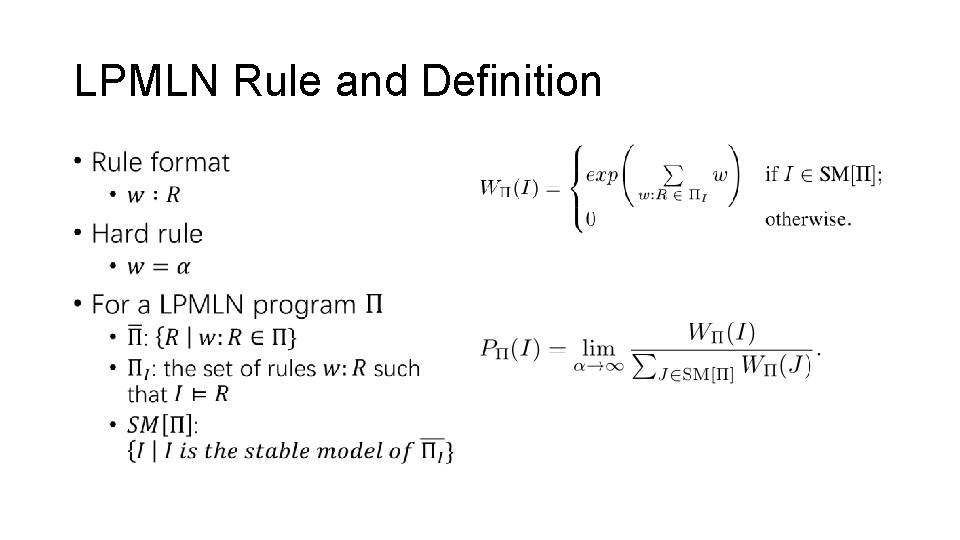

LPMLN Rule and Definition

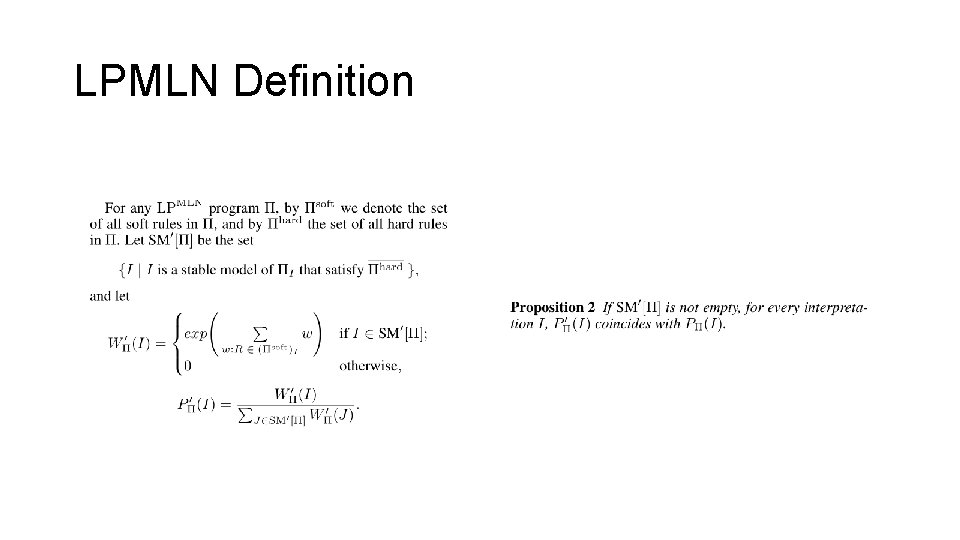

LPMLN Definition

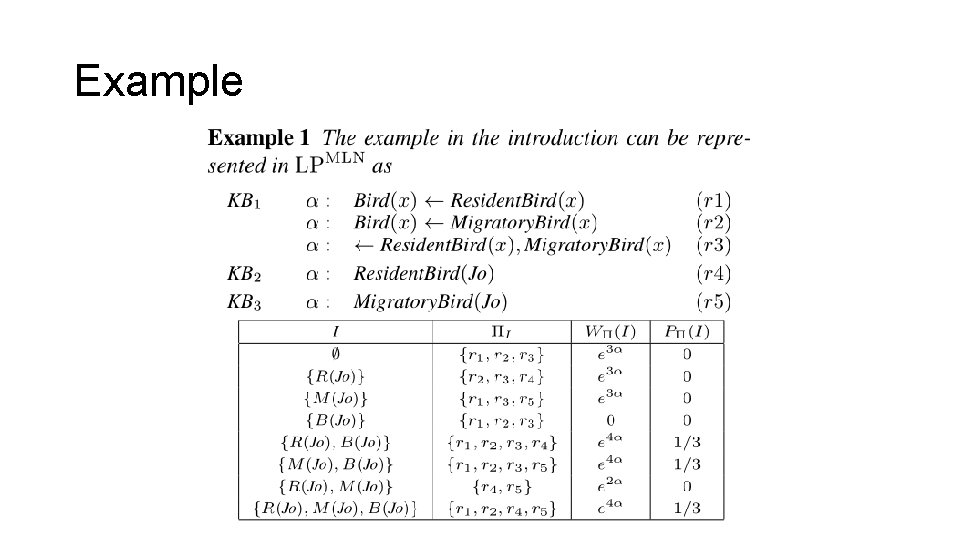
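A Python sketch of the LPMLN semantics as we understand it from Lee and Wang (2016): for an interpretation I, Π_I is the set of rules I satisfies; I counts only if it is a stable model of Π_I (weights dropped); its weight is exp of the total weight of Π_I; probabilities normalize these weights. The program 2: p. 1: q :- p. is hypothetical, and hard rules (weight α with a limit) are deliberately left out.

```python
import math
from itertools import chain, combinations

# Hypothetical soft program:  2: p.   1: q :- p.
# Each weighted rule is (weight, head, pos_body, neg_body).
PROGRAM = [(2.0, "p", [], []), (1.0, "q", ["p"], [])]

def satisfies(interp, rule):
    _, head, pos, neg = rule
    body = set(pos) <= interp and not set(neg) & interp
    return head in interp or not body

def is_stable(interp, rules):
    """interp is a stable model of the unweighted rules (reduct + least model)."""
    reduct = [(h, pos) for _, h, pos, neg in rules if not set(neg) & interp]
    model, changed = set(), True
    while changed:
        changed = False
        for h, pos in reduct:
            if h not in model and set(pos) <= model:
                model.add(h)
                changed = True
    return model == interp

def lpmln_distribution(program):
    atoms = sorted({r[1] for r in program}
                   | {a for r in program for a in chain(r[2], r[3])})
    weights = {}
    for k in range(len(atoms) + 1):
        for s in combinations(atoms, k):
            interp = frozenset(s)
            pi_i = [r for r in program if satisfies(interp, r)]   # Π_I
            if is_stable(interp, pi_i):
                weights[interp] = math.exp(sum(r[0] for r in pi_i))
    z = sum(weights.values())
    return {i: w / z for i, w in weights.items()}

for interp, p in lpmln_distribution(PROGRAM).items():
    print(sorted(interp), round(p, 4))
```

Here {q} gets no probability: it satisfies only q :- p, whose stable model is {}, not {q}. The remaining interpretations {}, {p}, {p, q} receive weights e^1, e^2, e^3.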

Example

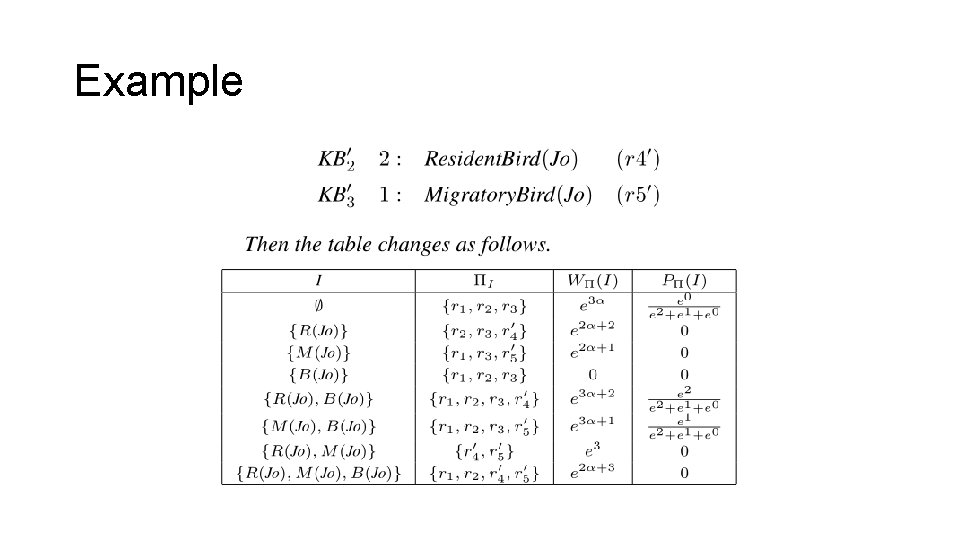

Example

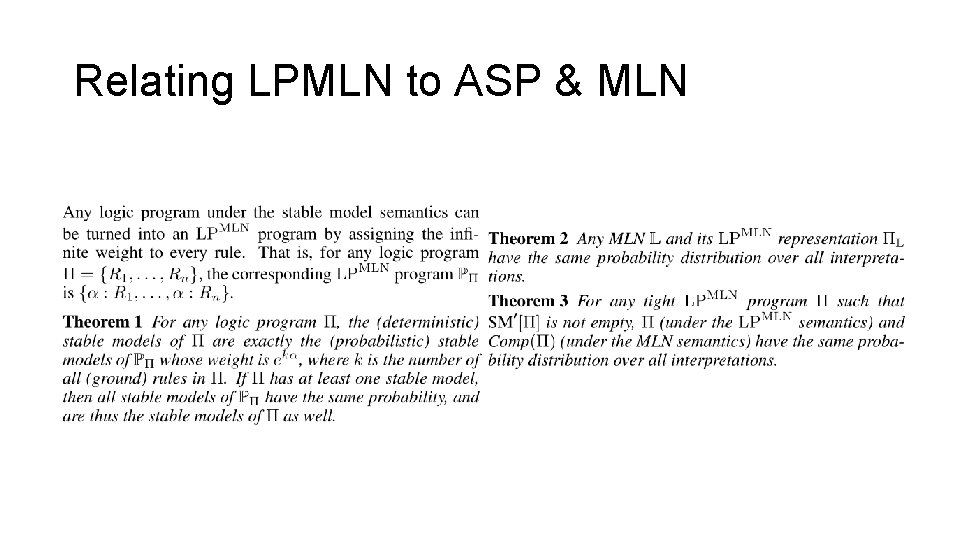

Relating LPMLN to ASP & MLN

LPMLN Implementation

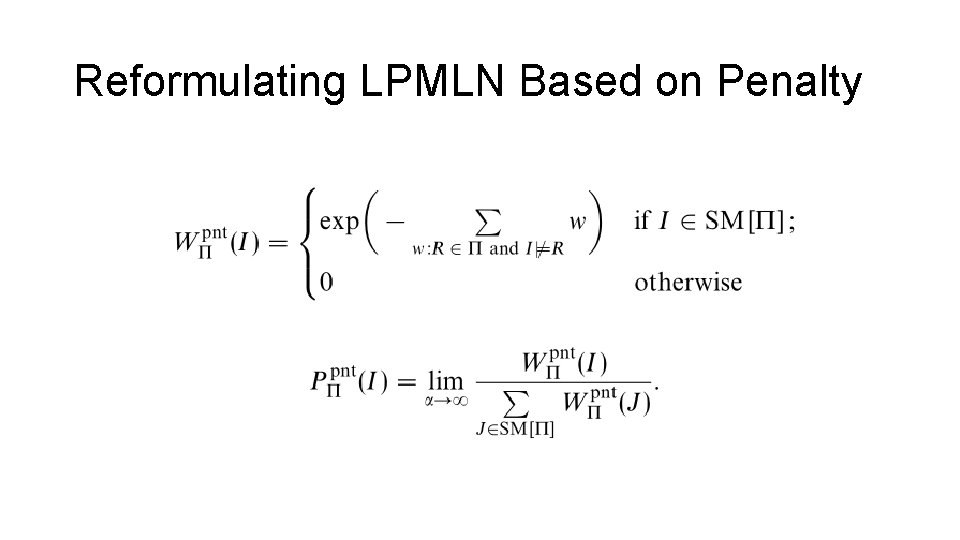

Reformulating LPMLN Based on Penalty

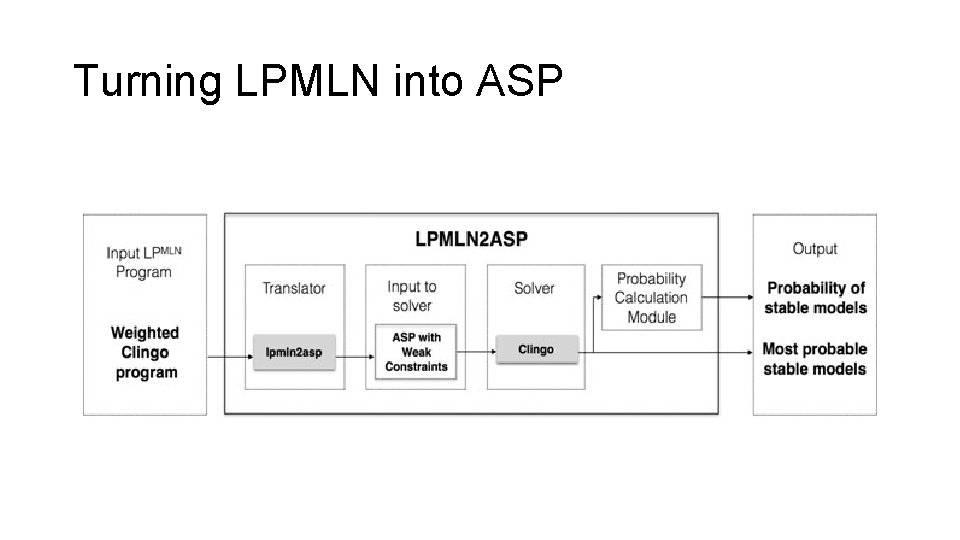
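The penalty-based view, as we understand it from Lee, Talsania and Wang (2017), weights a stable model I by exp(-Σ w) over the rules I violates instead of exp(Σ w) over the rules it satisfies. For soft rules the two agree after normalization: satisfied and violated weights always sum to the program's total weight, so the penalty weight is the reward weight times a constant. The Python sketch below checks this on the same hypothetical program 2: p. 1: q :- p. (hard rules again omitted).

```python
import math
from itertools import combinations

# Hypothetical soft program:  2: p.   1: q :- p.   (weight, head, pos_body, neg_body)
PROGRAM = [(2.0, "p", [], []), (1.0, "q", ["p"], [])]
ATOMS = ["p", "q"]

def satisfies(interp, rule):
    _, head, pos, neg = rule
    return head in interp or not (set(pos) <= interp and not set(neg) & interp)

def is_stable(interp, rules):
    reduct = [(h, pos) for _, h, pos, neg in rules if not set(neg) & interp]
    model, changed = set(), True
    while changed:
        changed = False
        for h, pos in reduct:
            if h not in model and set(pos) <= model:
                model.add(h)
                changed = True
    return model == interp

def normalize(weights):
    z = sum(weights.values())
    return {i: w / z for i, w in weights.items()}

def distributions(program, atoms):
    """Return (reward-based, penalty-based) probabilities over stable models."""
    reward, penalty = {}, {}
    for k in range(len(atoms) + 1):
        for s in combinations(atoms, k):
            interp = frozenset(s)
            kept = [r for r in program if satisfies(interp, r)]
            if not is_stable(interp, kept):
                continue
            violated = [r for r in program if not satisfies(interp, r)]
            reward[interp] = math.exp(sum(r[0] for r in kept))
            penalty[interp] = math.exp(-sum(r[0] for r in violated))
    return normalize(reward), normalize(penalty)

p_reward, p_penalty = distributions(PROGRAM, ATOMS)
for interp in p_reward:
    print(sorted(interp), round(p_reward[interp], 4), round(p_penalty[interp], 4))
```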

Turning LPMLN into ASP

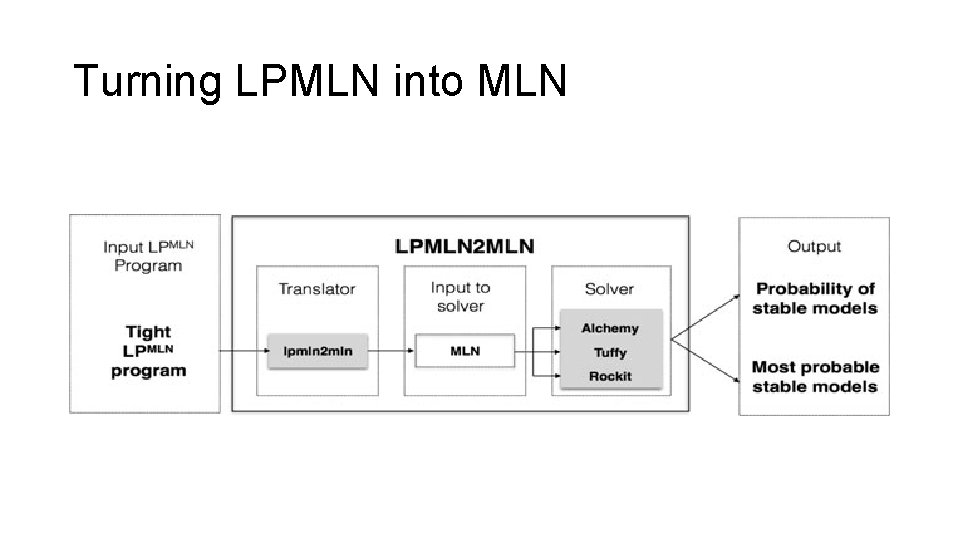

Turning LPMLN into MLN

References
• Ceri, Stefano, Georg Gottlob, and Letizia Tanca. "What you always wanted to know about Datalog (and never dared to ask)." IEEE Transactions on Knowledge and Data Engineering 1.1 (1989): 146-166.
• Brewka, Gerhard, Thomas Eiter, and Mirosław Truszczyński. "Answer set programming at a glance." Communications of the ACM 54.12 (2011): 92-103.
• Richardson, Matthew, and Pedro Domingos. "Markov logic networks." Machine Learning 62.1-2 (2006): 107-136.
• Lee, Joohyung, and Yi Wang. "Weighted Rules under the Stable Model Semantics." KR. 2016.
• Lee, Joohyung, Samidh Talsania, and Yi Wang. "Computing LPMLN using ASP and MLN solvers." Theory and Practice of Logic Programming 17.5-6 (2017): 942-960.

Thank you
