Logic and Knowledge Base Systems Outline Knowledgebased systems

Logic and Knowledge Base Systems

Outline • • • Knowledge-based systems Wumpus world Logic in general - models and entailment Propositional (Boolean) logic Equivalence, validity, satisfiability Inference rules and theorem proving – forward chaining – backward chaining – resolution

“The beginning of knowledge is the discovery of something that we do not understand. ” – Frank Herbert

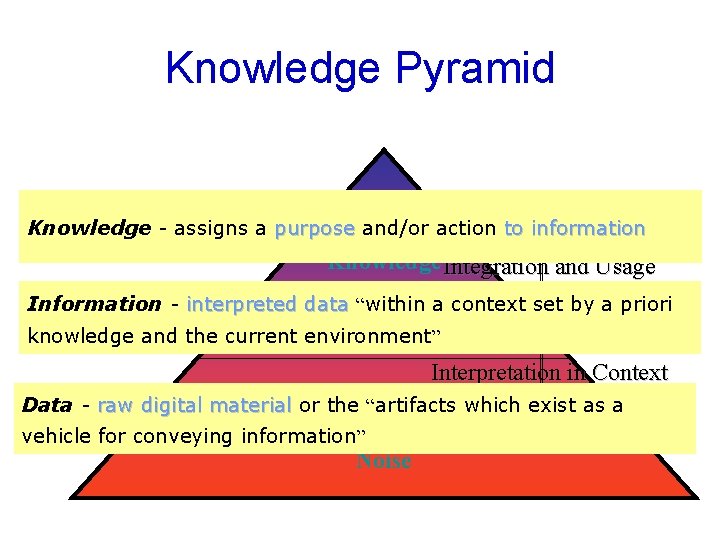

Knowledge Pyramid Knowledge – the integration of Meta. Knowledge the information into a knowledge Knowledge - assigns a purpose and/or action to information base to be effectively utilized Knowledge Integration and Usage Information the Information -– interpreted data “within a context set by a priori interpretation of artifacts in Information knowledge and the current environment” some context Data Interpretation in Context Data digitalsymbols materialand or the “artifacts which exist as a Data -–raw collected Data vehicle for conveying information” artifacts Noise

Data, information, … • Spreadsheet holds the data • RDBMS makes information from the data • Processing tables to find a product, applications of appropriate formulae to solve problems. • Wisdom requires soul.

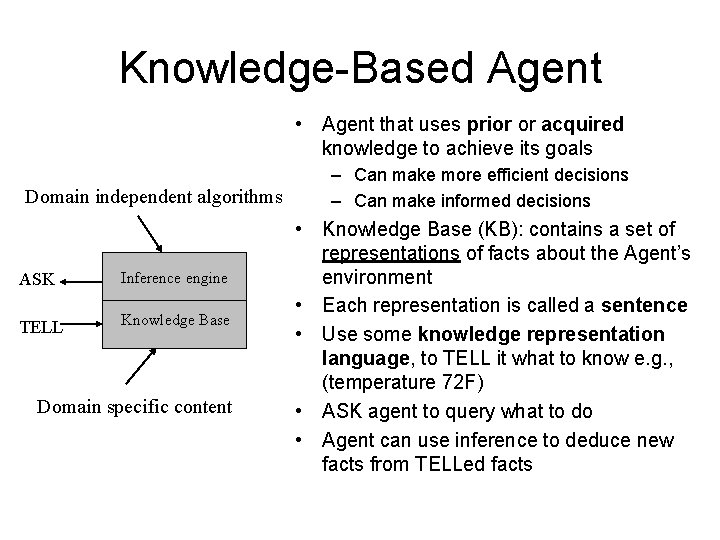

Knowledge-Based Agent • Agent that uses prior or acquired knowledge to achieve its goals Domain independent algorithms ASK Inference engine TELL Knowledge Base Domain specific content – Can make more efficient decisions – Can make informed decisions • Knowledge Base (KB): contains a set of representations of facts about the Agent’s environment • Each representation is called a sentence • Use some knowledge representation language, to TELL it what to know e. g. , (temperature 72 F) • ASK agent to query what to do • Agent can use inference to deduce new facts from TELLed facts

Knowledge and Reasoning • Knowledge + reasoning → AI based systems → partial observable environment • KB systems → general form of knowledge + current percepts → infer hidden aspects of current state prior to selecting actions – E. g. intention of speaker, when he says “ Ali saw the diamond thru window and covered it” we know that “it” refers to diamond and not window

Knowledge and Reasoning • KB systems → Flexibility – Accept new tasks – Achieve competence by learning new knowledge about the environment – Adapt to changes in environment

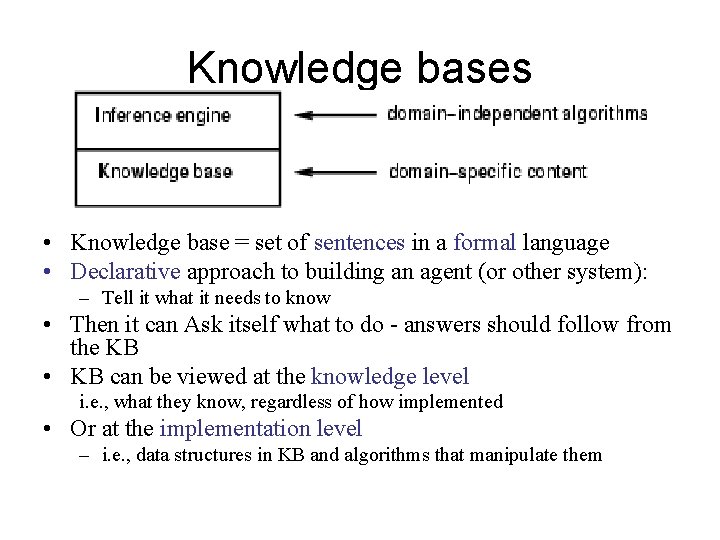

Knowledge bases • Knowledge base = set of sentences in a formal language • Declarative approach to building an agent (or other system): – Tell it what it needs to know • Then it can Ask itself what to do - answers should follow from the KB • KB can be viewed at the knowledge level i. e. , what they know, regardless of how implemented • Or at the implementation level – i. e. , data structures in KB and algorithms that manipulate them

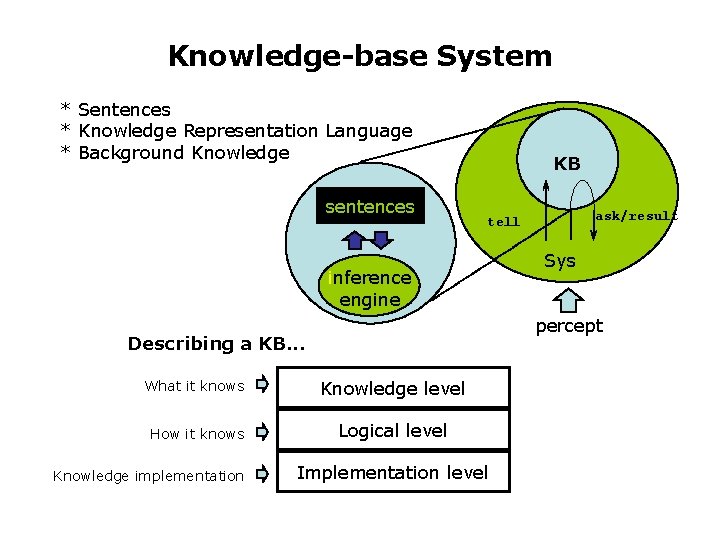

Knowledge-base System * Sentences * Knowledge Representation Language * Background Knowledge sentences KB inference engine Sys percept Describing a KB. . . What it knows Knowledge level How it knows Logical level Knowledge implementation ask/result tell Implementation level

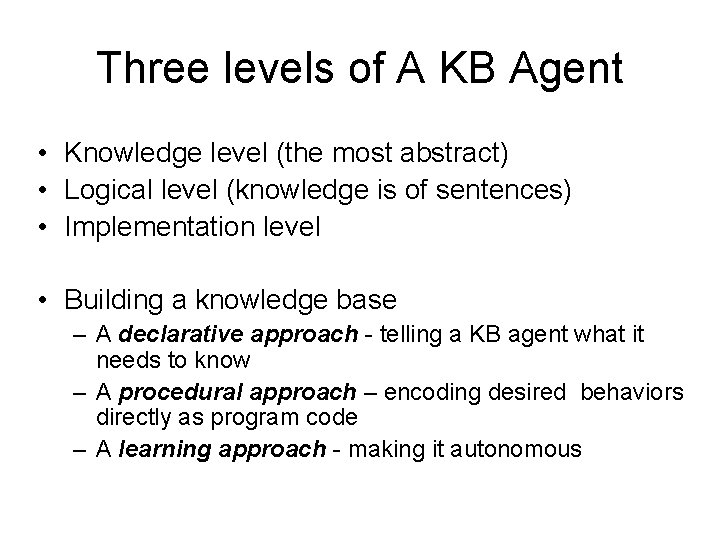

Three levels of A KB Agent • Knowledge level (the most abstract) • Logical level (knowledge is of sentences) • Implementation level • Building a knowledge base – A declarative approach - telling a KB agent what it needs to know – A procedural approach – encoding desired behaviors directly as program code – A learning approach - making it autonomous

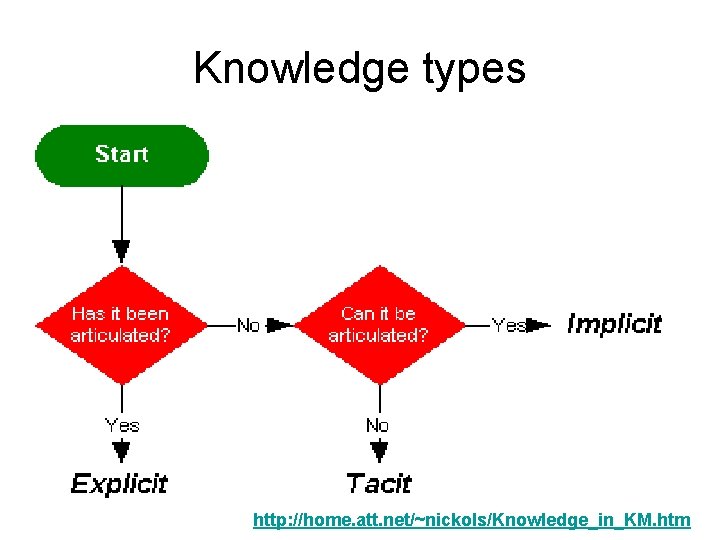

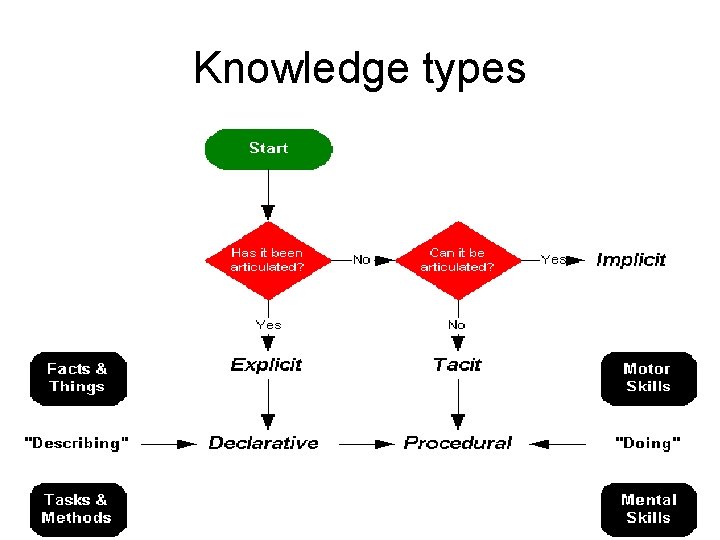

Knowledge types http: //home. att. net/~nickols/Knowledge_in_KM. htm

Explicit knowledge • Knowledge that has been articulated and, more often than not, captured in the form of text, tables, diagrams, product specifications and so on. • E. g. the formula for finding the area of a rectangle, the formalized standards by which an insurance claim is adjudicated and the official expectations for performance set forth in written work objectives.

Tacit Knowledge • Michael Polanyi (1997), the chemist-turnedphilosopher who coined the term put it, "We know more than we can tell. " • Polanyi used the example of being able to recognize a person’s face but being only vaguely able to describe how that is done. Reading the reaction on a customer’s face or entering text at a high rate of speed using a word processor offer other instances of situations in which we are able to perform well but unable to articulate exactly what we know or how we put it into practice. In such cases, the knowing is in the doing

Implicit Knowledge • Its existence is implied by or inferred from observable behavior or performance. • Can often be teased out of a competent performer (task analyst, knowledge engineer) • E. g. processing applications in an insurance company, the range of outcomes for the underwriters’ work took three basic forms: (1) they could approve the policy application, (2) they could deny it or (3) they could counter offer. Yet, not one of the underwriters articulated these as boundaries on their work at the outset of the analysis. Once these outcomes were identified, it was a comparatively simple matter to identify the criteria used to determine the response to a given application. In so doing, implicit knowledge became explicit knowledge.

Where Logic lies? • Architectures with declarative representations have knowledge in a format that may be manipulated decomposed analyzed by its reasoners. A classic example of a declarative representation is logic. Advantages of declarative knowledge are: – The ability to use knowledge in ways that the system designer did not forsee • Architectures with procedural representations encode how to achieve a particular result. Advantages of procedural knowledge are: – Possibly faster usage

Knowledge types

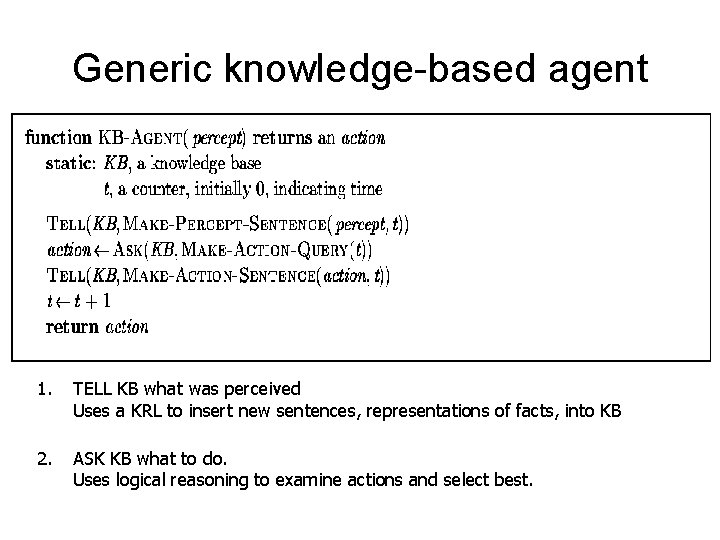

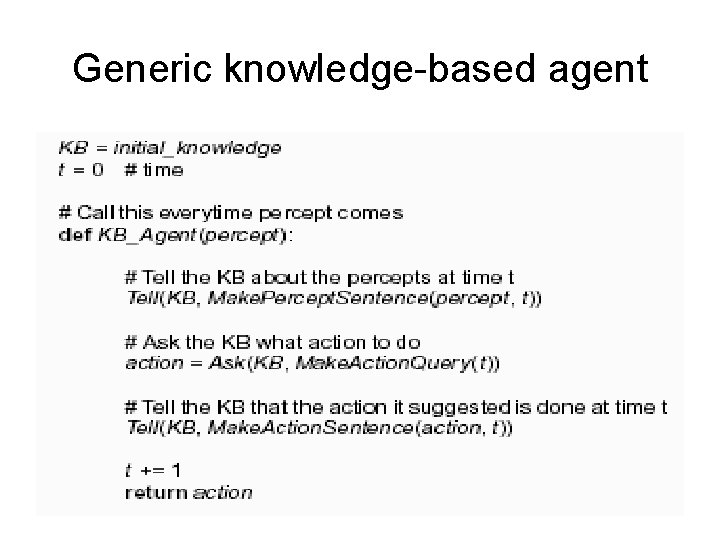

Generic knowledge-based agent 1. TELL KB what was perceived Uses a KRL to insert new sentences, representations of facts, into KB 2. ASK KB what to do. Uses logical reasoning to examine actions and select best.

Generic knowledge-based agent

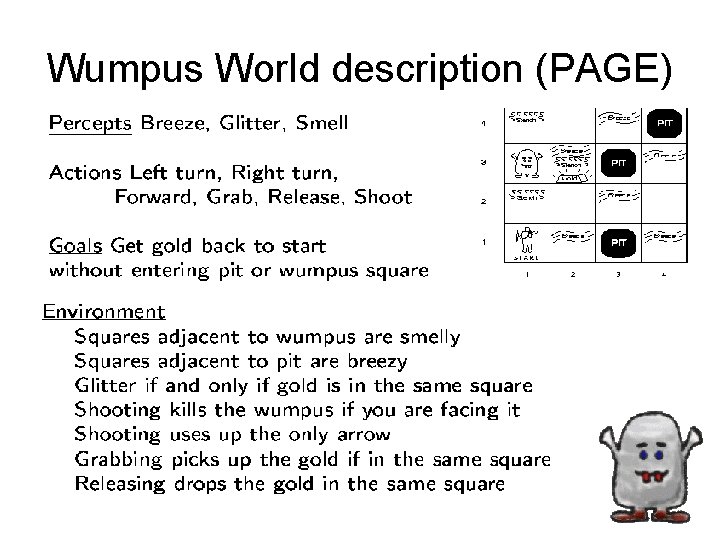

Wumpus World description (PAGE)

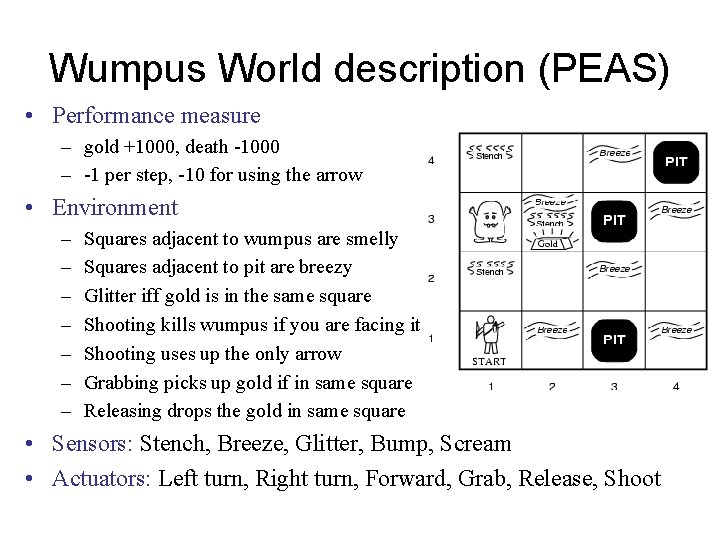

Wumpus World description (PEAS) • Performance measure – gold +1000, death -1000 – -1 per step, -10 for using the arrow • Environment – – – – Squares adjacent to wumpus are smelly Squares adjacent to pit are breezy Glitter iff gold is in the same square Shooting kills wumpus if you are facing it Shooting uses up the only arrow Grabbing picks up gold if in same square Releasing drops the gold in same square • Sensors: Stench, Breeze, Glitter, Bump, Scream • Actuators: Left turn, Right turn, Forward, Grab, Release, Shoot

Wumpus world characterization • Deterministic? • Accessible? • Static? • Discrete? • Episodic?

Wumpus world characterization • Deterministic? Yes – outcome exactly specified. • Accessible? No – only local perception. • Static? Yes – Wumpus and pits do not move. • Discrete? Yes • Episodic? (Yes) – because static.

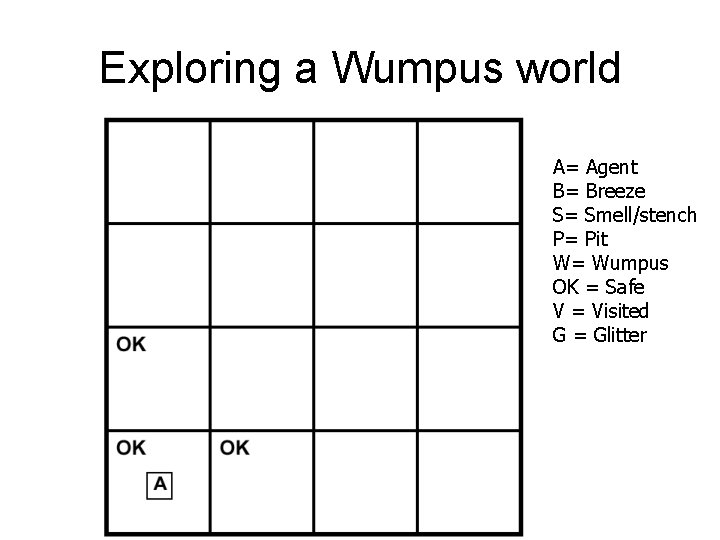

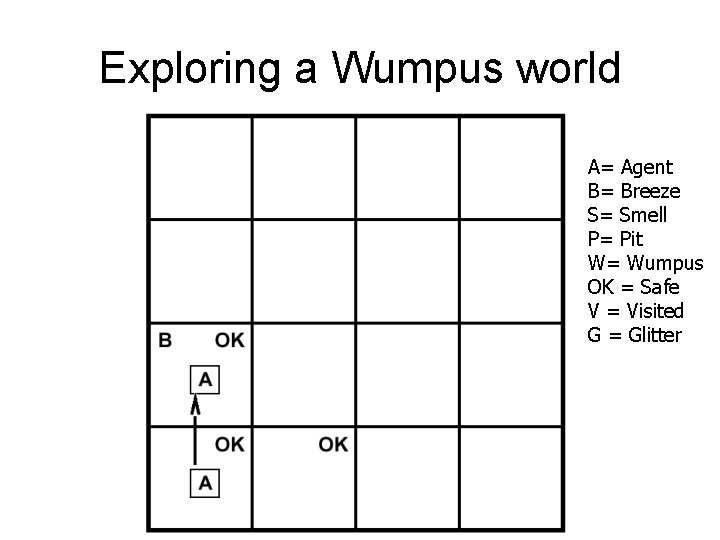

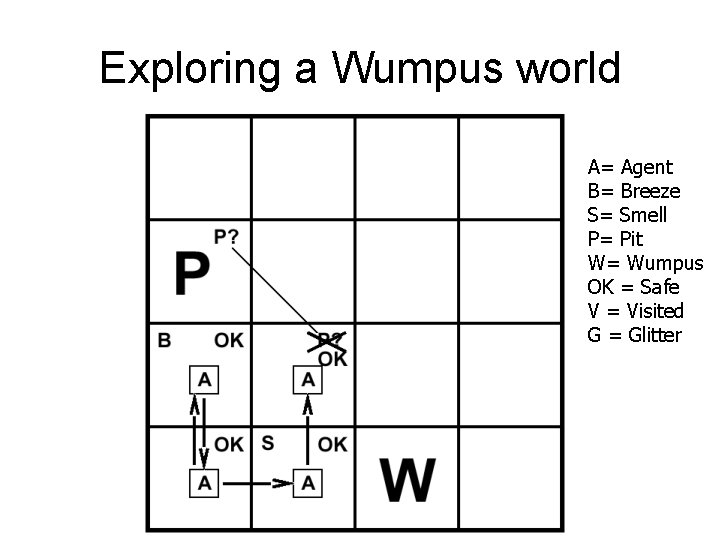

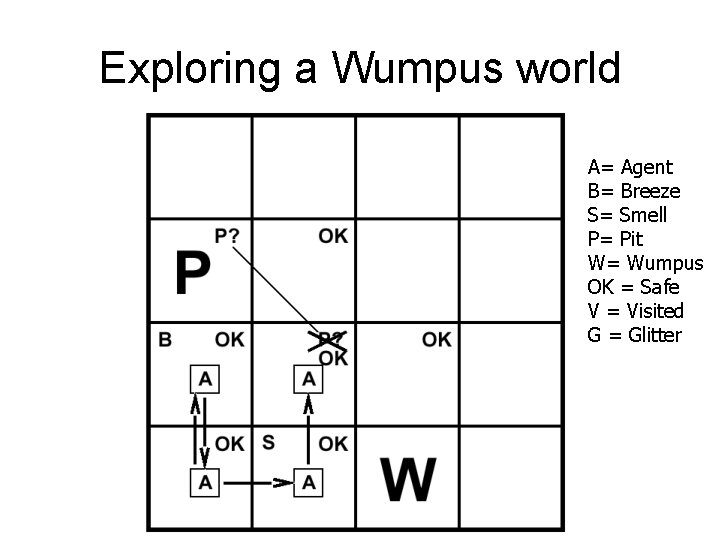

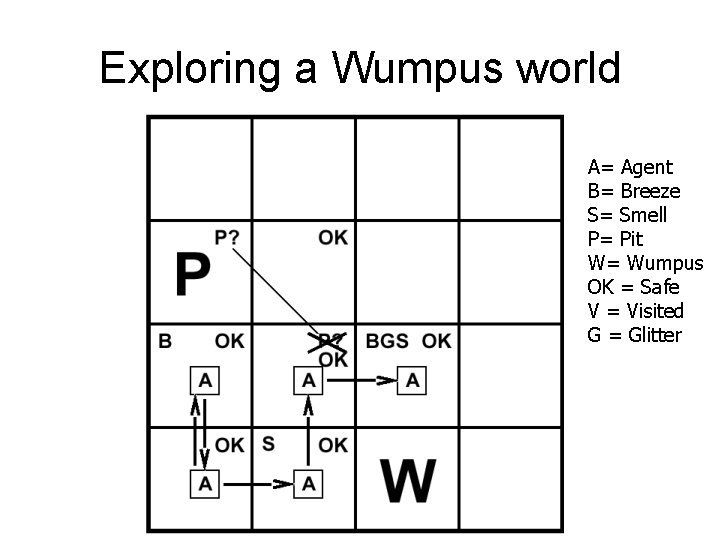

Exploring a Wumpus world A= Agent B= Breeze S= Smell/stench P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

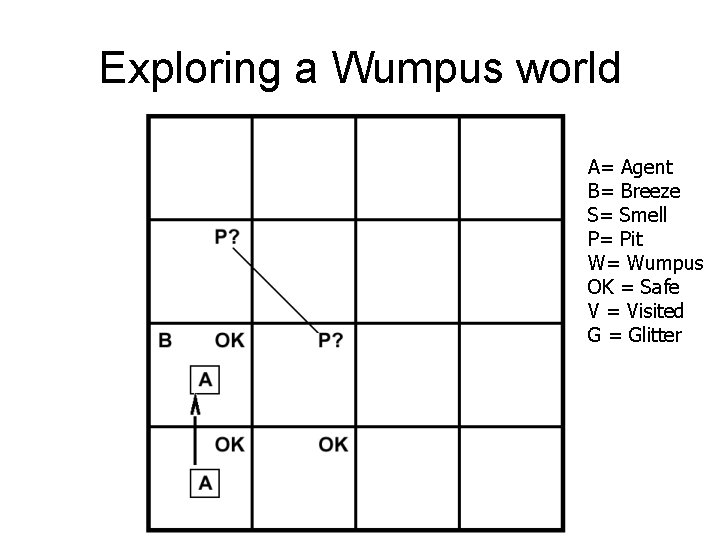

Exploring a Wumpus world A= Agent B= Breeze S= Smell P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

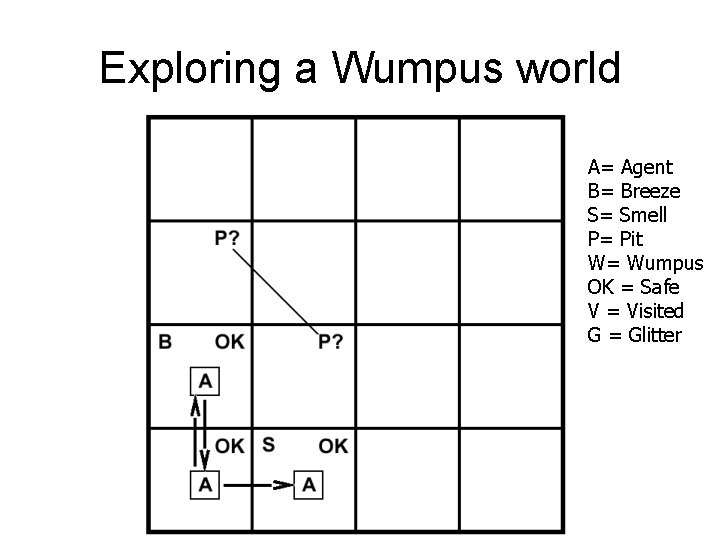

Exploring a Wumpus world A= Agent B= Breeze S= Smell P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

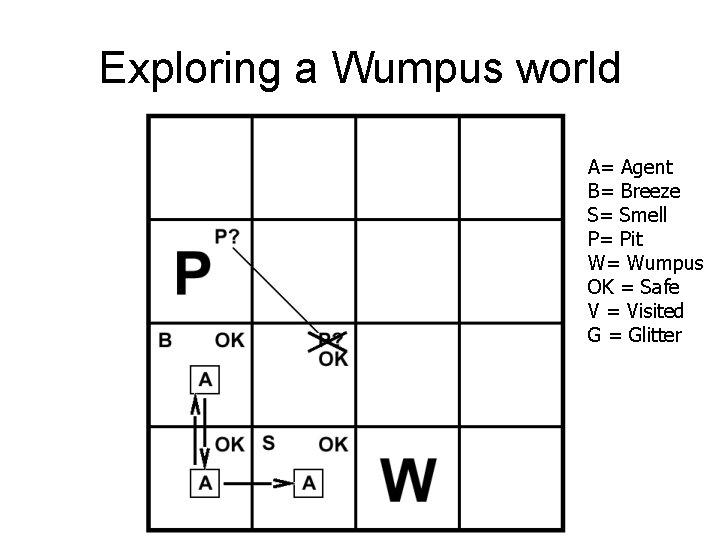

Exploring a Wumpus world A= Agent B= Breeze S= Smell P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

Exploring a Wumpus world A= Agent B= Breeze S= Smell P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

Exploring a Wumpus world A= Agent B= Breeze S= Smell P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

Exploring a Wumpus world A= Agent B= Breeze S= Smell P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

Exploring a Wumpus world A= Agent B= Breeze S= Smell P= Pit W= Wumpus OK = Safe V = Visited G = Glitter

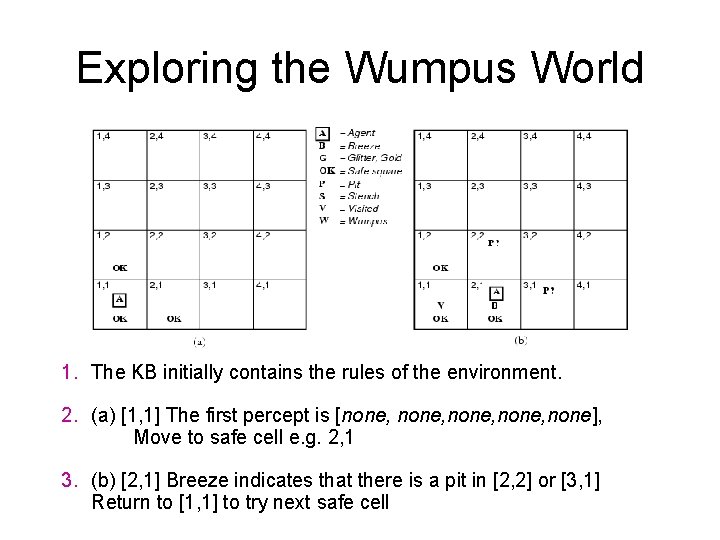

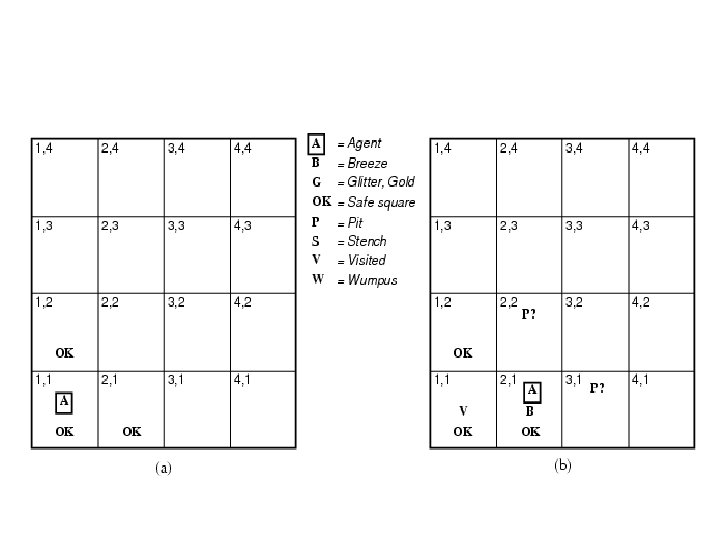

Exploring the Wumpus World 1. The KB initially contains the rules of the environment. 2. (a) [1, 1] The first percept is [none, none], Move to safe cell e. g. 2, 1 3. (b) [2, 1] Breeze indicates that there is a pit in [2, 2] or [3, 1] Return to [1, 1] to try next safe cell

![Exploring the Wumpus World 4. [1, 2] Stench in cell: wumpus is in [1, Exploring the Wumpus World 4. [1, 2] Stench in cell: wumpus is in [1,](http://slidetodoc.com/presentation_image_h/cee24b0275eb89ee56c745a60c4e293a/image-33.jpg)

Exploring the Wumpus World 4. [1, 2] Stench in cell: wumpus is in [1, 3] or [2, 2] YET … not in [1, 1] Thus … not in [2, 2] or stench would have been detected in [2, 1] Thus … wumpus is in [1, 3] Thus … [2, 2] is safe because of lack of breeze in [1, 2] Thus … pit in [3, 1] Move to next safe cell [2, 2]

![Exploring the Wumpus World 5. [2, 2] Detect nothing Move to unvisited safe cell Exploring the Wumpus World 5. [2, 2] Detect nothing Move to unvisited safe cell](http://slidetodoc.com/presentation_image_h/cee24b0275eb89ee56c745a60c4e293a/image-34.jpg)

Exploring the Wumpus World 5. [2, 2] Detect nothing Move to unvisited safe cell e. g. [2, 3] 6. [2, 3] Detect glitter , smell, breeze Thus… pick up gold Thus… pit in [3, 3] or [2, 4]

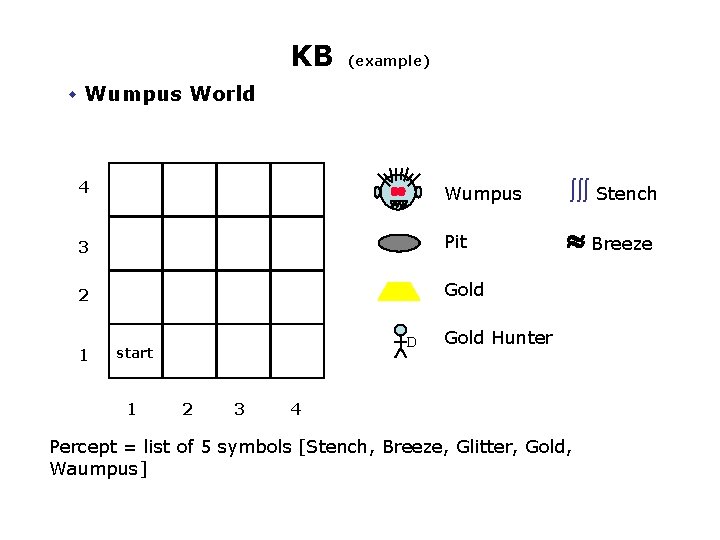

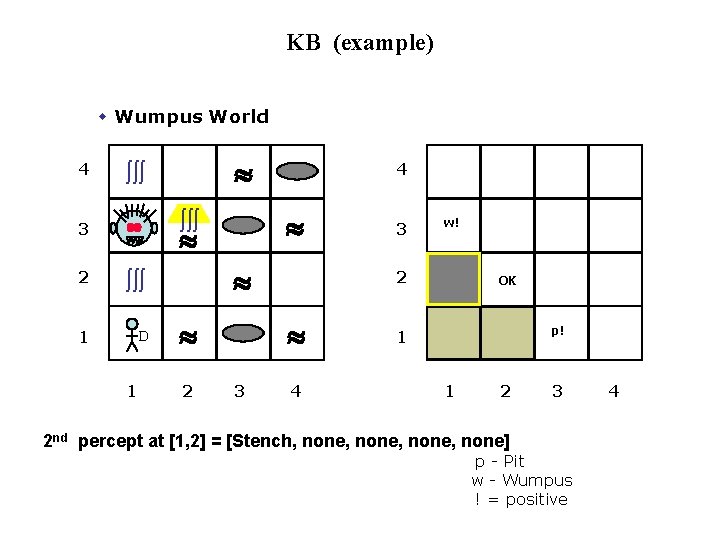

KB (example) w Wumpus World 4 Wumpus òòò Stench 3 Pit » Breeze 2 Gold 1 D start 1 2 3 Gold Hunter 4 Percept = list of 5 symbols [Stench, Breeze, Glitter, Gold, Waumpus]

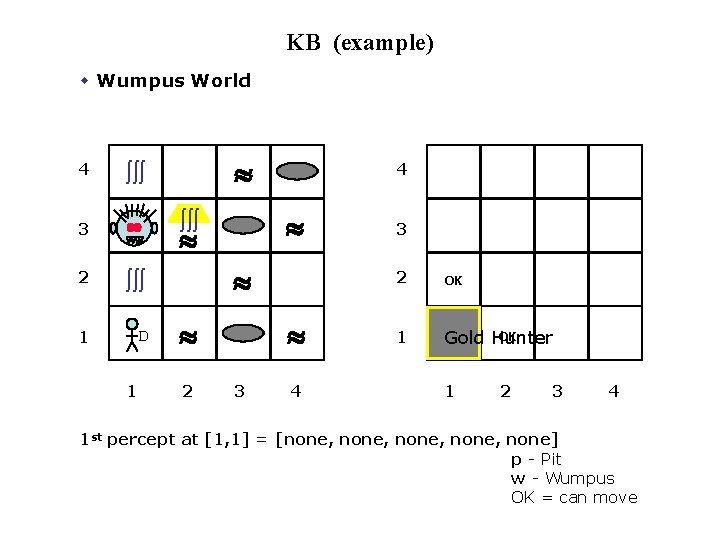

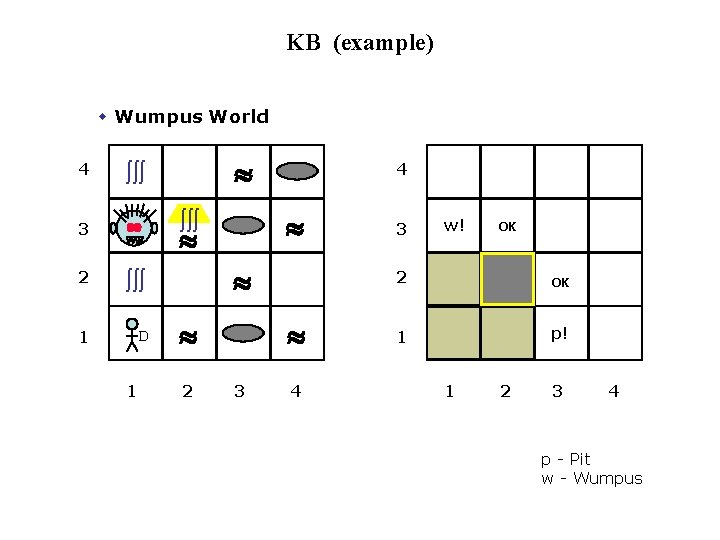

KB (example) w Wumpus World 4 » òòò » 3 2 òòò 1 D 1 4 » » 2 » 3 4 3 2 OK 1 OK Gold Hunter 1 2 3 4 1 st percept at [1, 1] = [none, none] p - Pit w - Wumpus OK = can move

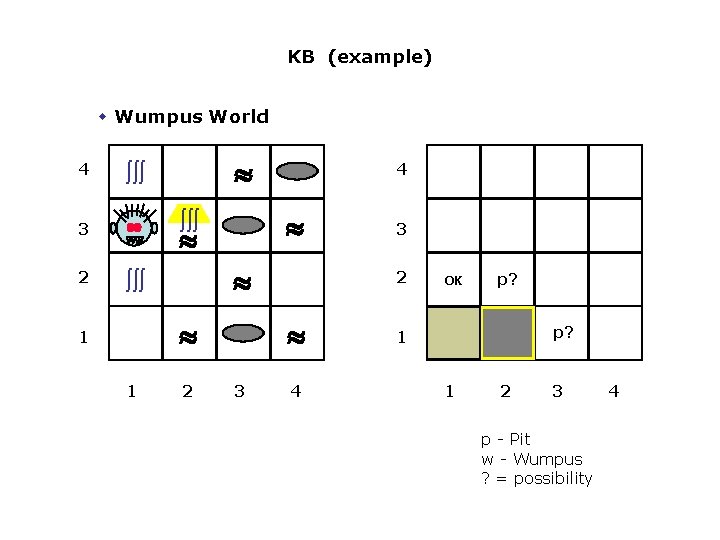

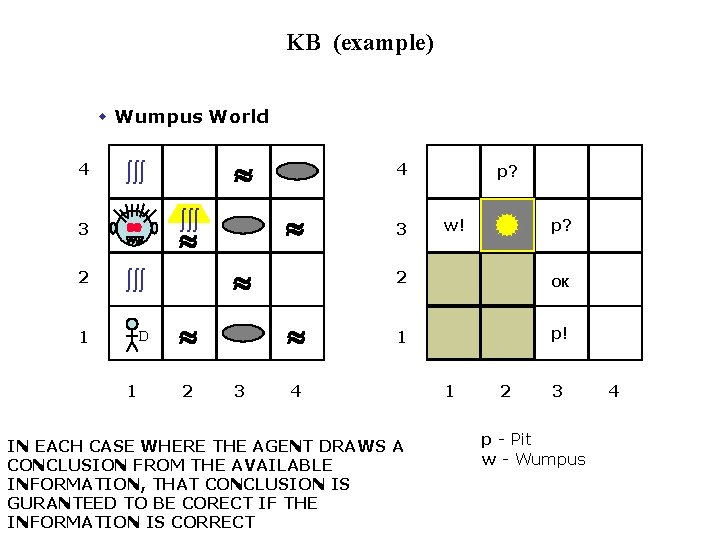

KB (example) w Wumpus World 4 » » òòò » 1 1 4 » òòò 3 2 » òòò 2 2 » 3 3 4 OK p? p? 1 1 2 3 p - Pit w - Wumpus ? = possibility 4

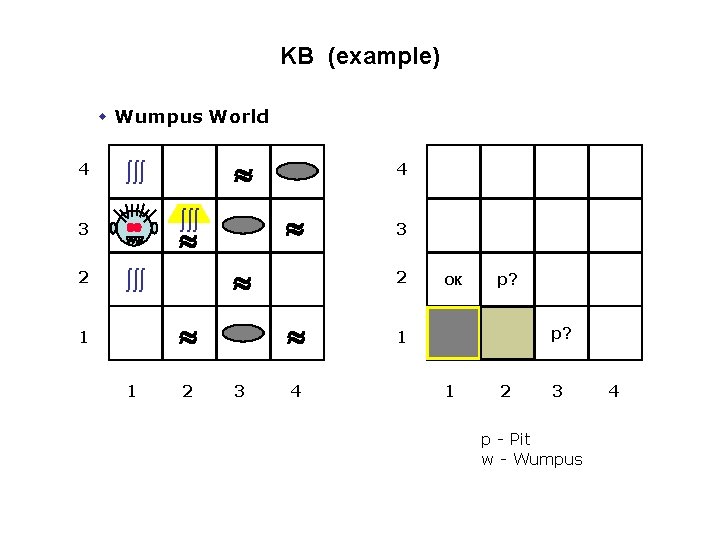

KB (example) w Wumpus World 4 » » òòò » 1 1 4 » òòò 3 2 » òòò 2 2 » 3 3 4 OK p? p? 1 1 2 3 p - Pit w - Wumpus 4

KB (example) w Wumpus World 4 » òòò » 3 2 òòò 1 D 1 4 » » 2 w! 2 » 3 3 4 OK p! 1 1 2 3 2 nd percept at [1, 2] = [Stench, none, none] p - Pit w - Wumpus ! = positive 4

KB (example) w Wumpus World 4 » òòò » 3 2 òòò 1 D 1 4 » » 2 » 3 4 3 w! OK 2 OK 1 p! 1 2 3 4 p - Pit w - Wumpus

KB (example) w Wumpus World 4 » òòò » 3 2 òòò 1 D 1 4 » » 2 » 3 3 p? w! p? 2 OK 1 p! 4 IN EACH CASE WHERE THE AGENT DRAWS A CONCLUSION FROM THE AVAILABLE INFORMATION, THAT CONCLUSION IS GURANTEED TO BE CORECT IF THE INFORMATION IS CORRECT 1 2 3 p - Pit w - Wumpus 4

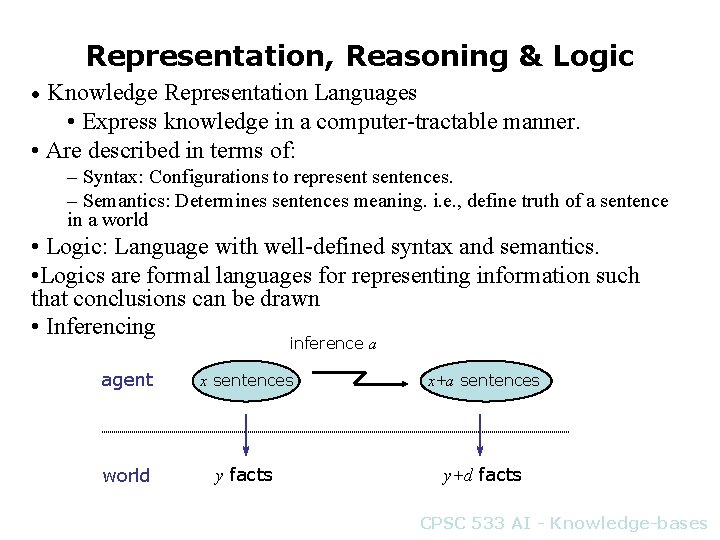

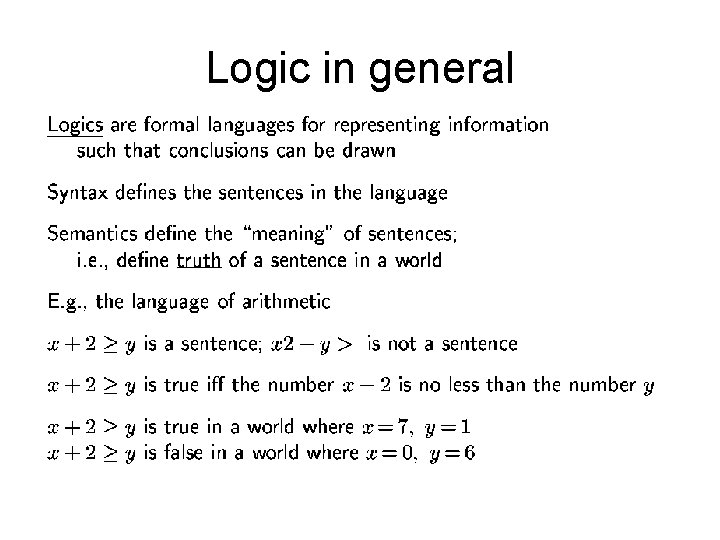

Representation, Reasoning & Logic Knowledge Representation Languages • Express knowledge in a computer-tractable manner. • Are described in terms of: • – Syntax: Configurations to representences. – Semantics: Determines sentences meaning. i. e. , define truth of a sentence in a world • Logic: Language with well-defined syntax and semantics. • Logics are formal languages for representing information such that conclusions can be drawn • Inferencing inference a agent x sentences x+a sentences world y facts y+d facts CPSC 533 AI - Knowledge-bases

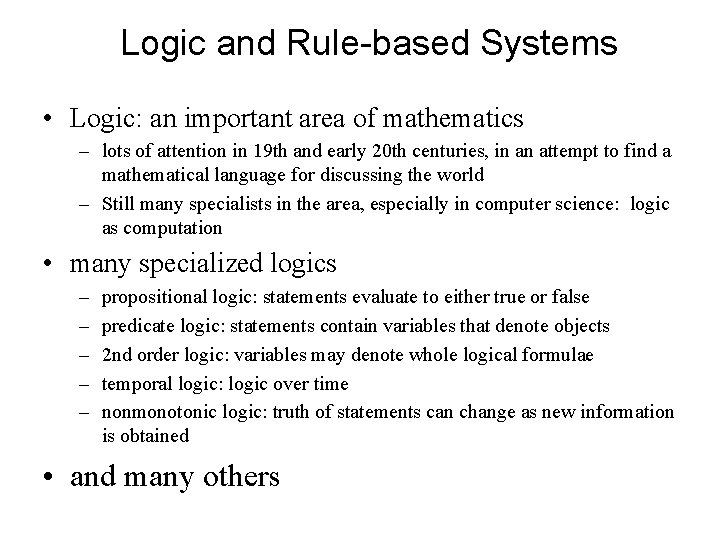

Logic and Rule-based Systems • Logic: an important area of mathematics – lots of attention in 19 th and early 20 th centuries, in an attempt to find a mathematical language for discussing the world – Still many specialists in the area, especially in computer science: logic as computation • many specialized logics – – – propositional logic: statements evaluate to either true or false predicate logic: statements contain variables that denote objects 2 nd order logic: variables may denote whole logical formulae temporal logic: logic over time nonmonotonic logic: truth of statements can change as new information is obtained • and many others

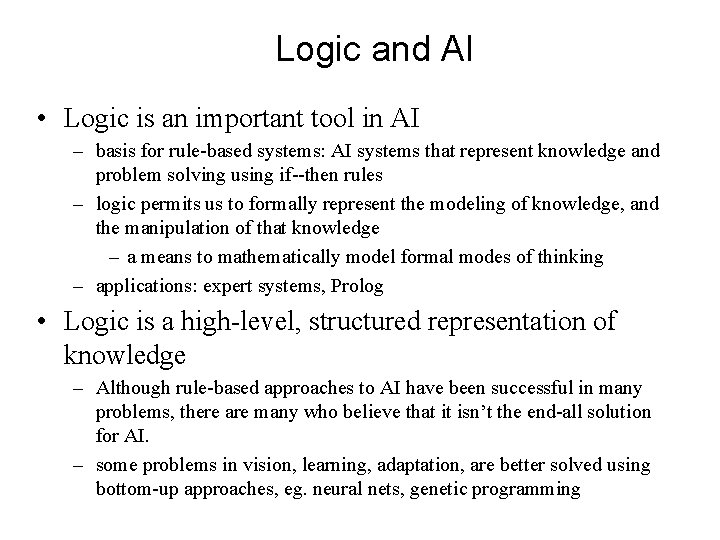

Logic and AI • Logic is an important tool in AI – basis for rule-based systems: AI systems that represent knowledge and problem solving using if--then rules – logic permits us to formally represent the modeling of knowledge, and the manipulation of that knowledge – a means to mathematically model formal modes of thinking – applications: expert systems, Prolog • Logic is a high-level, structured representation of knowledge – Although rule-based approaches to AI have been successful in many problems, there are many who believe that it isn’t the end-all solution for AI. – some problems in vision, learning, adaptation, are better solved using bottom-up approaches, eg. neural nets, genetic programming

Logic in general

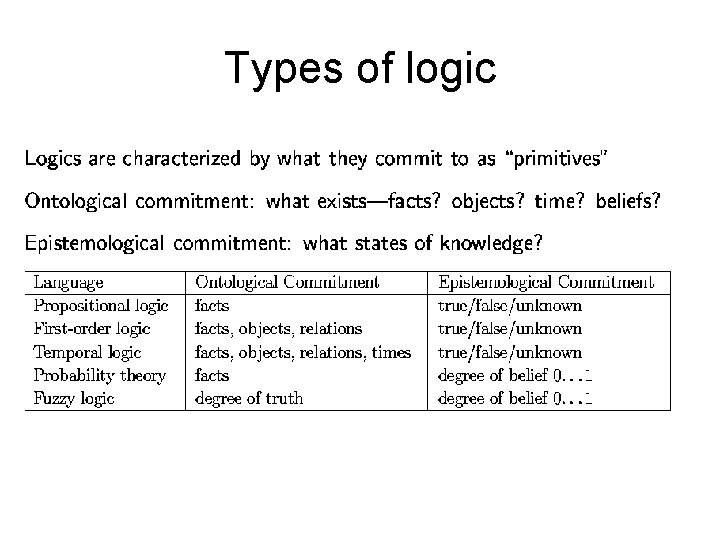

Types of logic

Semantics • Possible worlds might be thought of as (potentially) real environments that the agent might or might not be in • Models are mathematical abstractions. The semantics of a logic defines the truth of each sentence with respect to each possible world • A model of a sentence is an interpretation in which the sentence evaluates to True • E. g. , Today. Is. Tuesday -> Class. AI is true in model {Today. Is. Tuesday=True, Class. AI=True} • We say {Today. Is. Tuesday=True, Class. AI=True} is a model of the sentence

Propositional Logic • • Logic for knowledge representation. Propositional logic. Syntax of propositional logic. Semantics of propositional logic: – Truth tables. Additional: • • • Tautologies and Contradictions Entailment and Proof Modus ponens and modus tollens Soundness and Completeness Equivalence relations

What is logic? • Logic determines whether it is justified to reason from given assumptions to a conclusion – note: a logician cannot determine whether it rains – he can conclude it rains from the assumptions if I hear drips on the roof, then it rains and I hear drips on the roof

A Formal Approach • Any logic comes in three parts: • syntax: what are the well-formed formulae (wffs)? • semantics: what do formulae mean, how do we interpret them? • deduction: how to mechanically formulate formulae, giving us for instance, the valid ones? Or is concerned with manipulating formulae according to certain rules (Also called the proof theory)

Propositional Logic • The syntax of propositional logic is made up of propositions and connectives.

Propositions • A statement in some language that can be evaluated to either true or false (but it cannot be both). Example propositions: • • It is raining. Nawaz Sharif is the Indian Prime Minister. 5 + 5 = 10. Karachi is the capital of Pakistan. Not propositions: • Where are you? • Oh no! • Liverpool is not.

Propositions • Of the valid propositions, each can be evaluated to either true or false. • e. g. It is true that it is raining • e. g. It is false that Nawaz Sharif is the Indian Minister. • An easy way to determine whether or not a statement is a proposition is to see if you can prefix it with “it is true that” and if it subsequently still makes sense.

Propositions • we represent propositions using the propositional variables p, q, r etc. • The previous examples of propositions are all atomic. We can combine these atomic propositions to form compound propositions…

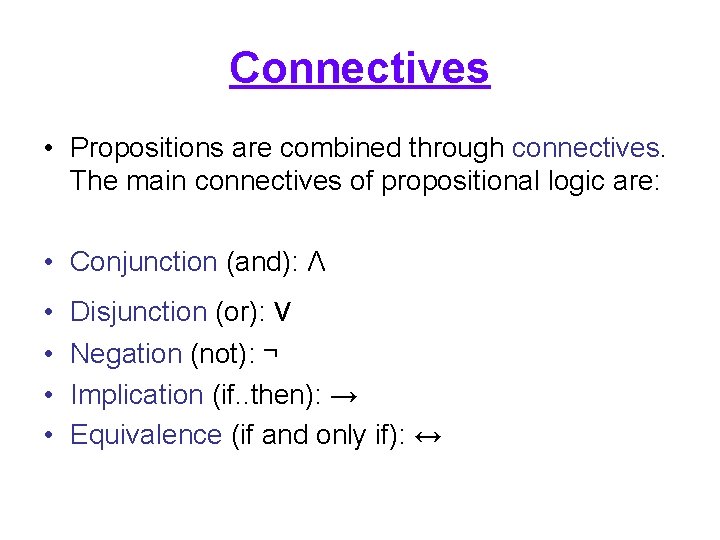

Connectives • Propositions are combined through connectives. The main connectives of propositional logic are: • Conjunction (and): Λ • • Disjunction (or): v Negation (not): ¬ Implication (if. . then): → Equivalence (if and only if): ↔

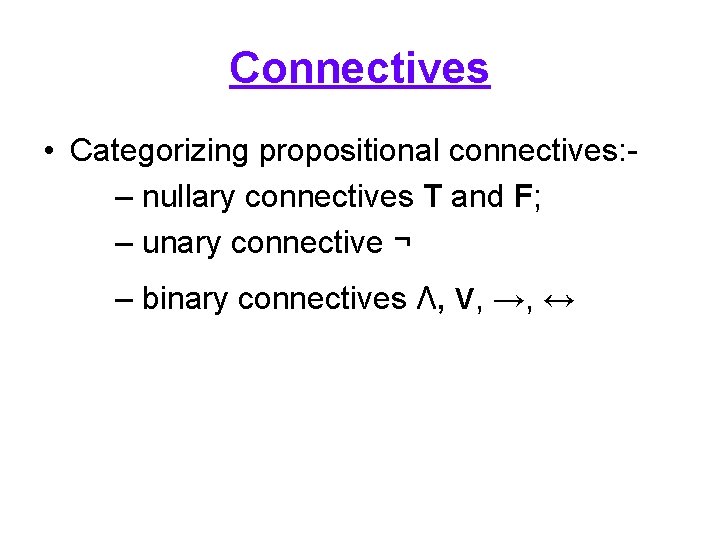

Connectives • Categorizing propositional connectives: – nullary connectives T and F; – unary connective ¬ – binary connectives Λ, v, →, ↔

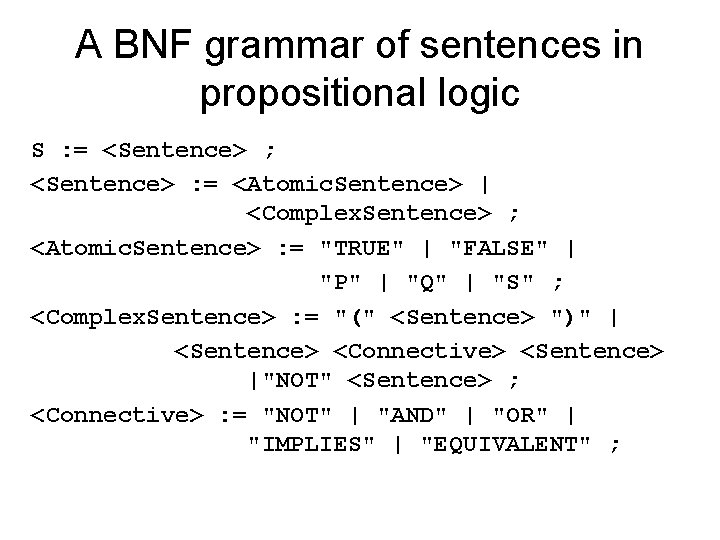

A BNF grammar of sentences in propositional logic S : = <Sentence> ; <Sentence> : = <Atomic. Sentence> | <Complex. Sentence> ; <Atomic. Sentence> : = "TRUE" | "FALSE" | "P" | "Q" | "S" ; <Complex. Sentence> : = "(" <Sentence> ")" | <Sentence> <Connective> <Sentence> |"NOT" <Sentence> ; <Connective> : = "NOT" | "AND" | "OR" | "IMPLIES" | "EQUIVALENT" ;

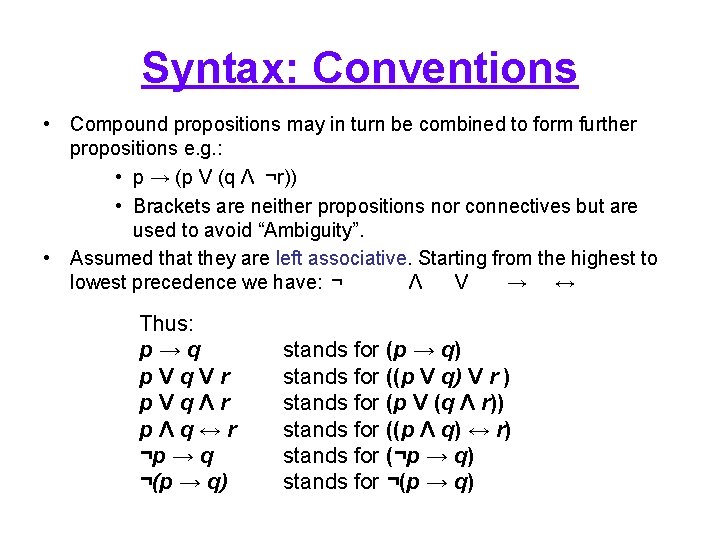

Syntax: Conventions • Compound propositions may in turn be combined to form further propositions e. g. : • p → (p V (q Λ ¬r)) • Brackets are neither propositions nor connectives but are used to avoid “Ambiguity”. • Assumed that they are left associative. Starting from the highest to lowest precedence we have: ¬ Λ V → ↔ Thus: p→q p. Vq. Vr p. VqΛr pΛq↔r ¬p → q ¬(p → q) stands for ((p V q) V r ) stands for (p V (q Λ r)) stands for ((p Λ q) ↔ r) stands for (¬p → q) stands for ¬(p → q)

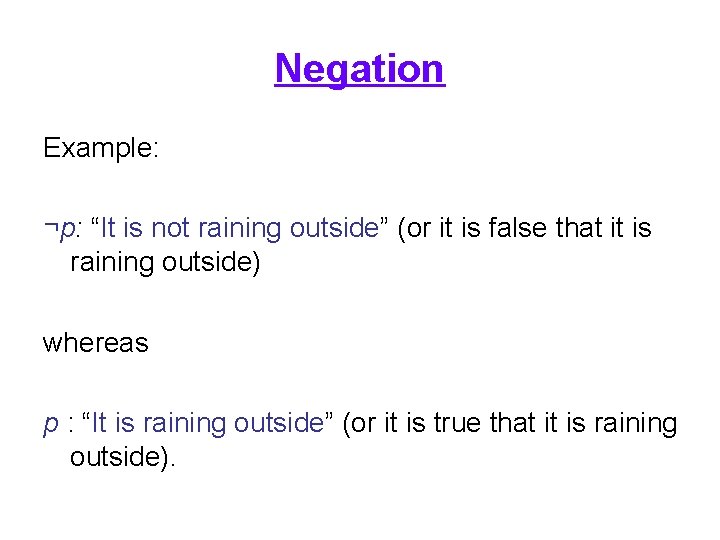

Negation Example: ¬p: “It is not raining outside” (or it is false that it is raining outside) whereas p : “It is raining outside” (or it is true that it is raining outside).

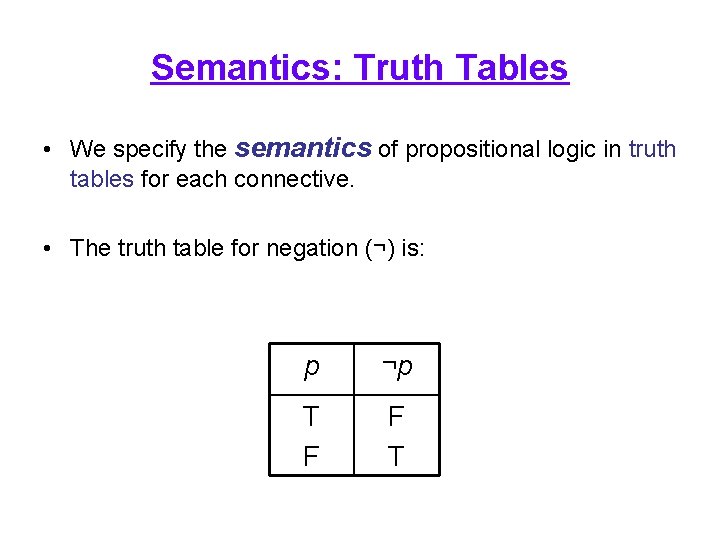

Semantics: Truth Tables • We specify the semantics of propositional logic in truth tables for each connective. • The truth table for negation (¬) is: p ¬p T F F T

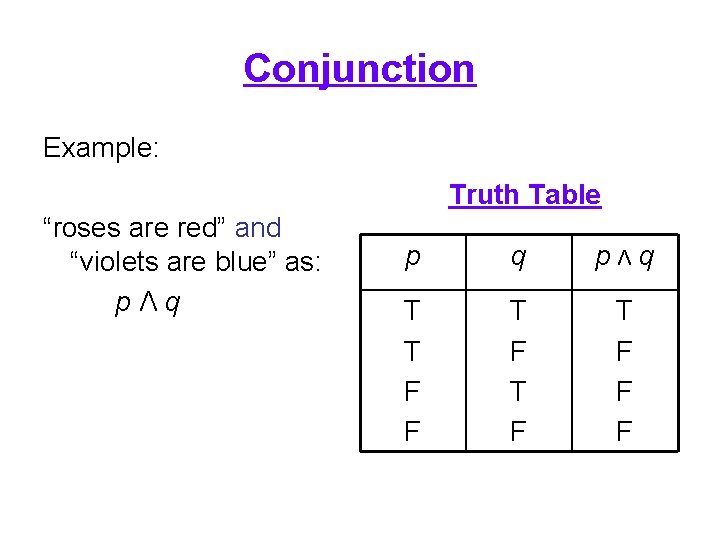

Conjunction Example: Truth Table “roses are red” and “violets are blue” as: pΛq p q pΛq T T F F T F T F F F

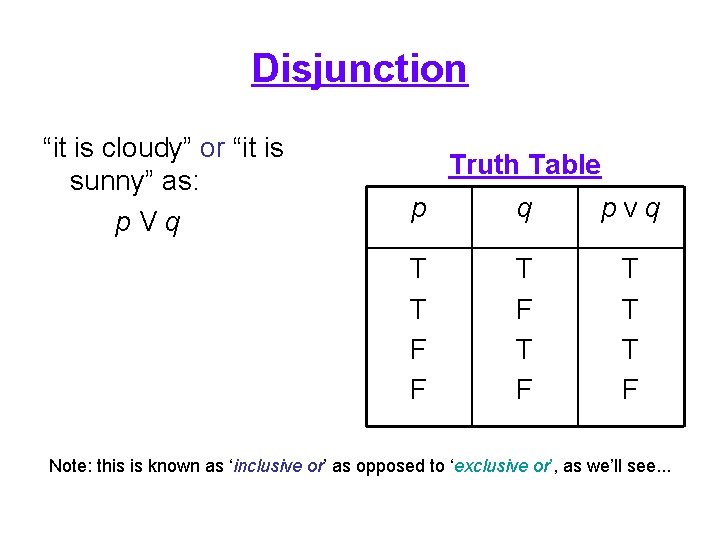

Disjunction “it is cloudy” or “it is sunny” as: p. Vq Truth Table p q p. Vq T T F F T F T T T F Note: this is known as ‘inclusive or’ as opposed to ‘exclusive or’, as we’ll see. . .

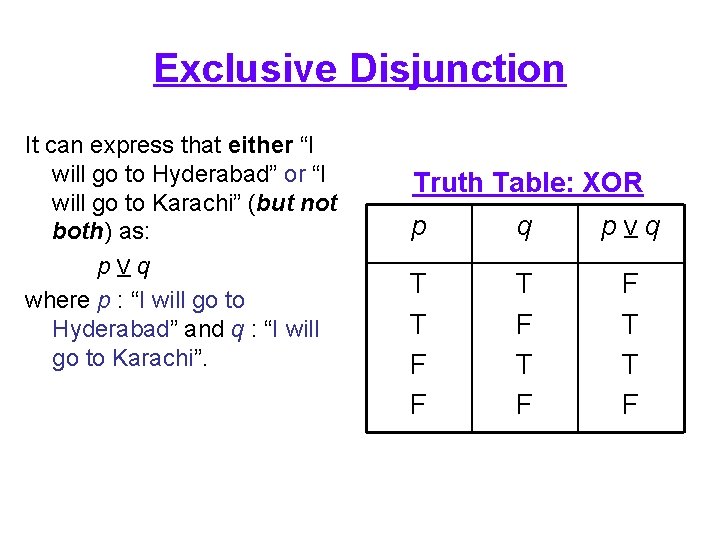

Exclusive Disjunction It can express that either “I will go to Hyderabad” or “I will go to Karachi” (but not both) as: p. Vq where p : “I will go to Hyderabad” and q : “I will go to Karachi”. Truth Table: XOR p q p. Vq T T F F T T F

Material Implication • For the implication truth table, p → q is false only when p is true and q is false. • The last two cases, where p is false, we cannot say whether q will be true, but as we cannot say it will definitely be false, then we evaluate these cases to true.

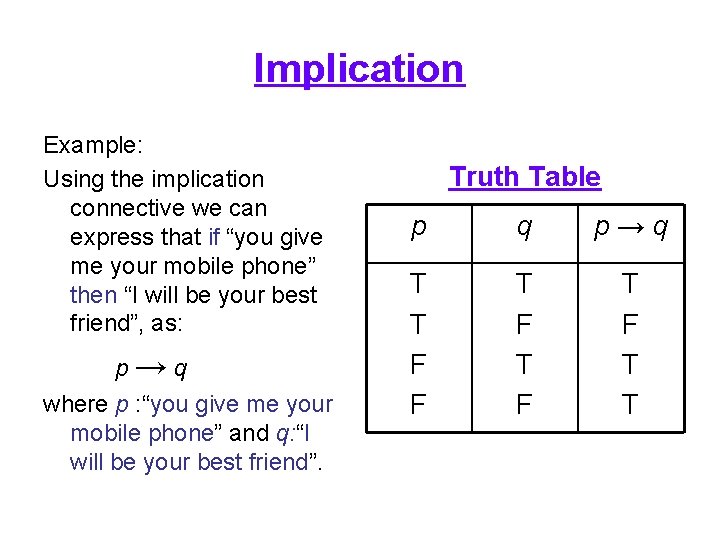

Implication Example: Using the implication connective we can express that if “you give me your mobile phone” then “I will be your best friend”, as: p→q where p : “you give me your mobile phone” and q: “I will be your best friend”. Truth Table p q p→q T T F F T F T T

Implication • We need to be careful with → as it may not quite capture our intuitions about implication. • In particular (taking the previous example), p → q is true in the following situations: – I study hard and I get rich; or – I don't study hard and I don't get rich. • Note the last two situations, where the implication is true regardless of the truth of p. • The one thing we can say is that if I've studied hard but failed to become rich then the proposition is clearly false.

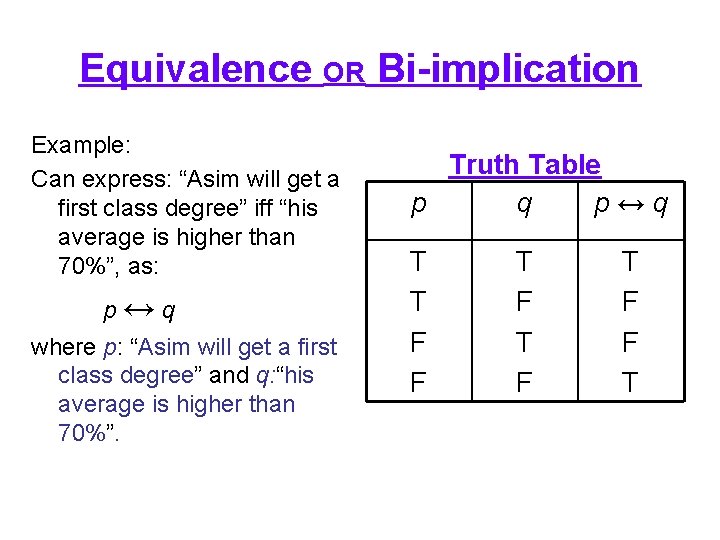

Equivalence OR Bi-implication Example: Can express: “Asim will get a first class degree” iff “his average is higher than 70%”, as: p↔q where p: “Asim will get a first class degree” and q: “his average is higher than 70%”. Truth Table p q p↔q T T F F T F T F F T

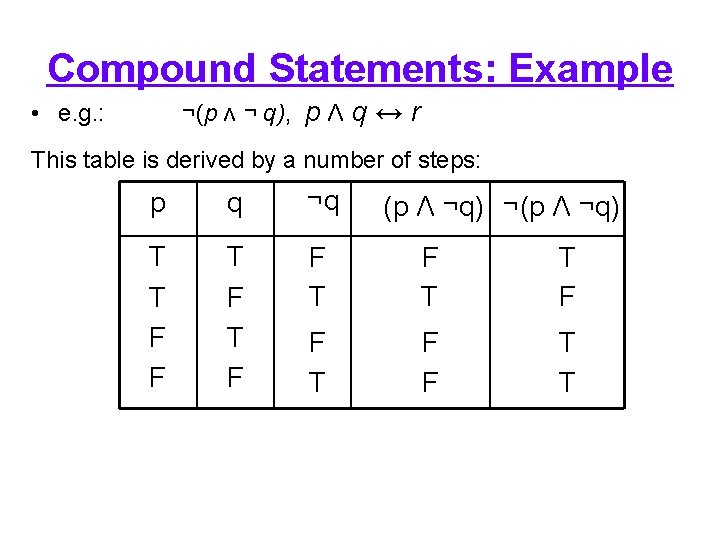

Compound Statements: Example ¬(p Λ ¬ q), p Λ q ↔ r • e. g. : This table is derived by a number of steps: p q ¬q T T F F T F T T F F T T (p Λ ¬q) ¬(p Λ ¬q)

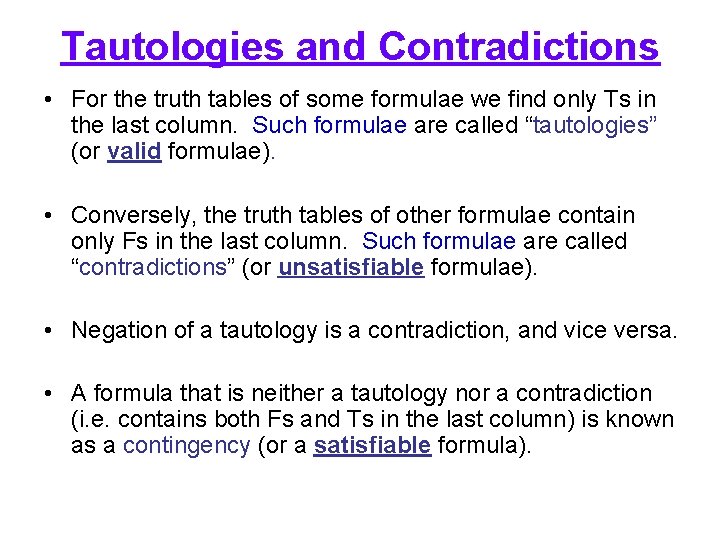

Tautologies and Contradictions • For the truth tables of some formulae we find only Ts in the last column. Such formulae are called “tautologies” (or valid formulae). • Conversely, the truth tables of other formulae contain only Fs in the last column. Such formulae are called “contradictions” (or unsatisfiable formulae). • Negation of a tautology is a contradiction, and vice versa. • A formula that is neither a tautology nor a contradiction (i. e. contains both Fs and Ts in the last column) is known as a contingency (or a satisfiable formula).

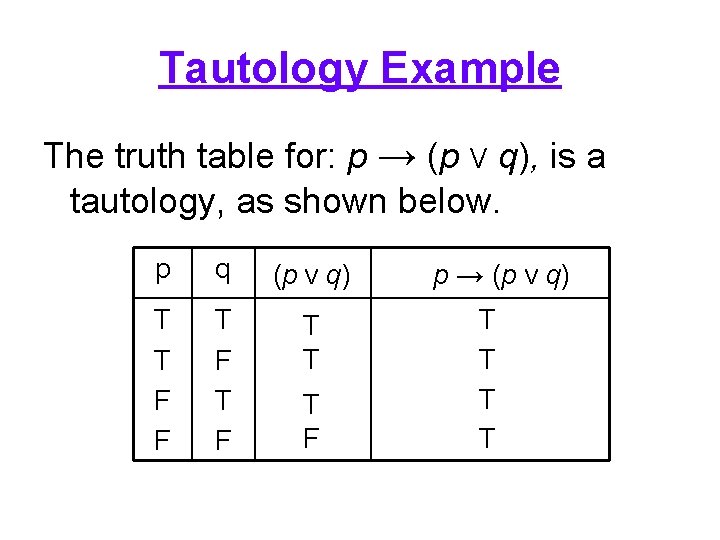

Tautology Example The truth table for: p → (p V q), is a tautology, as shown below. p q (p V q) T T F F T F T T T F p → (p V q) T T

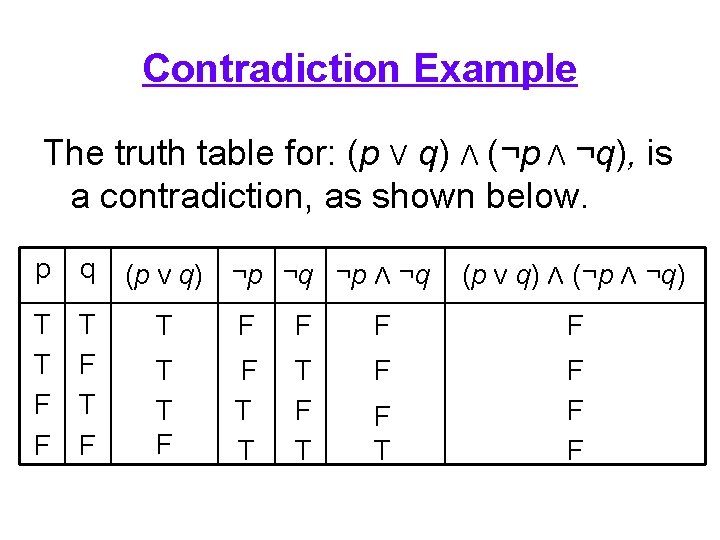

Contradiction Example The truth table for: (p V q) Λ (¬p Λ ¬q), is a contradiction, as shown below. p q T T F F T F (p V q) ¬p ¬q ¬p Λ ¬q (p V q) Λ (¬p Λ ¬q) T F F T T T F F F T

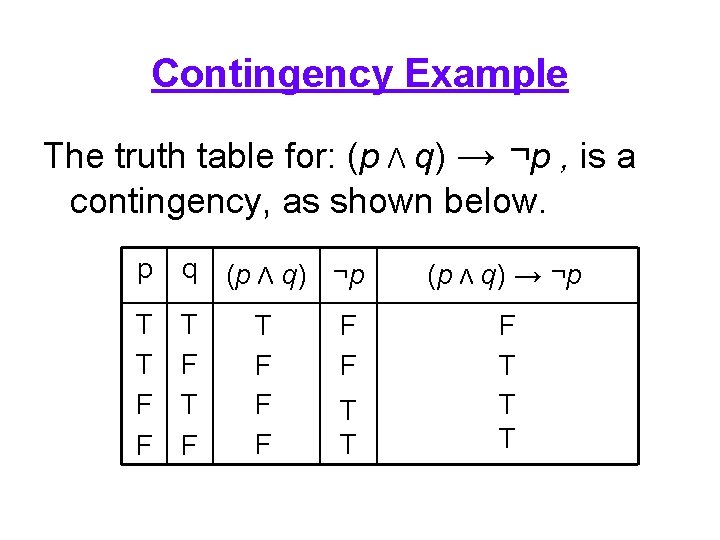

Contingency Example The truth table for: (p Λ q) → ¬p , is a contingency, as shown below. p q T T F F T F (p Λ q) ¬p T F F F T T (p Λ q) → ¬p F T T T

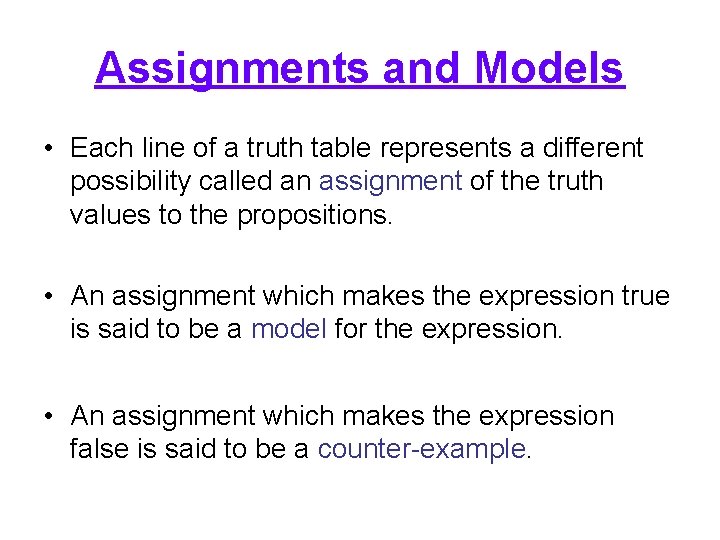

Assignments and Models • Each line of a truth table represents a different possibility called an assignment of the truth values to the propositions. • An assignment which makes the expression true is said to be a model for the expression. • An assignment which makes the expression false is said to be a counter-example.

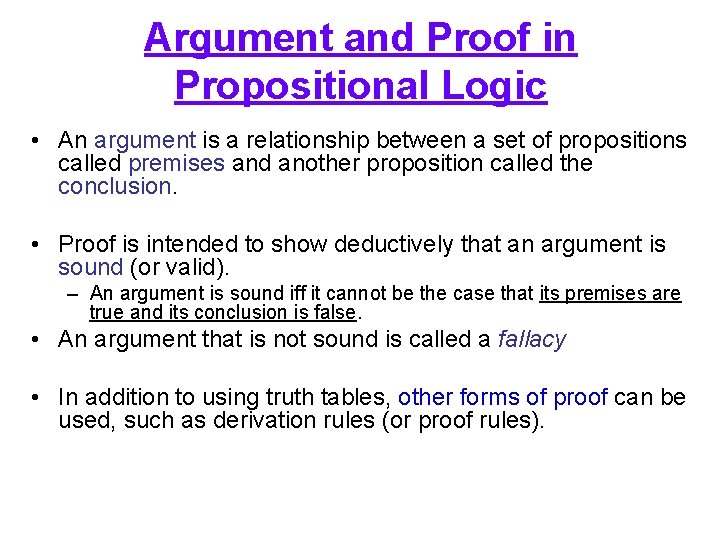

Argument and Proof in Propositional Logic • An argument is a relationship between a set of propositions called premises and another proposition called the conclusion. • Proof is intended to show deductively that an argument is sound (or valid). – An argument is sound iff it cannot be the case that its premises are true and its conclusion is false. • An argument that is not sound is called a fallacy • In addition to using truth tables, other forms of proof can be used, such as derivation rules (or proof rules).

Entailment and Proof • To clarify the difference between entailment and proof: • Entailment: if we have a set of formulae which are true, then as a logical consequence of this, some particular formula must also be true. • Proof: a formula is provable (derivable) in some logical system if there are rules of inference that allow the formula to be derived by performing some operations on the formulae. • Entailment is concerned with the semantics of formulae, proof is concerned with syntax only.

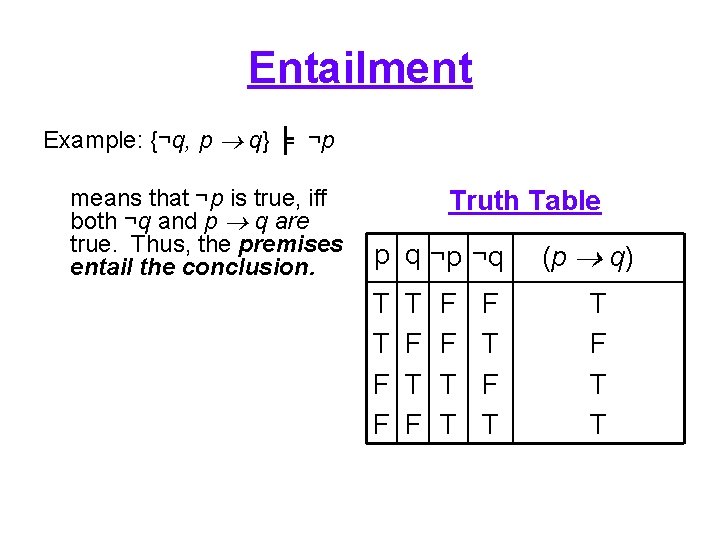

Entailment Example: {¬q, p q} ╞ ¬p means that ¬p is true, iff both ¬q and p q are true. Thus, the premises entail the conclusion. Truth Table p q ¬p ¬q T T F F F T T F T (p q) T F T T

Entailment • Lines 1, 2 and 3 all have false truth assignments so we disregard them. • This means that we are left with one assignment, where all assignments for the formula are true. – i. e. ¬q is true, p q is true and ¬p is true. • Therefore, ¬p is entailed by {¬q, p q} , or more formally: {¬q, p q} ╞ ¬p

Modus Ponens • (Latin term means) Affirming the antecedent • One particularly important derivation rule is modus ponens, as shown on the previous slide. • This takes the following form: p → q, p ╞ q • Essentially, this argument states that given the premise p → q, and the premise p then we must conclude q.

Modus Ponens Example • An example argument of the form modus ponens: Premises: - If it is raining then ground is wet (p → q), - It is raining (p), Conclusion: - Therefore, the ground is wet (q).

Modus Tollens • Denying the consequent • Another important derivation rule is modus tollens (also known as the contraposition) have the following form: p → q, ¬q ╞ ¬p • Example: Premises: - If it is raining then the ground is wet (p → q), - The ground is not wet (¬q) Conclusion: - Therefore, it is not raining (¬p).

Soundness and Completeness • Two important properties to consider in inference systems are soundness and completeness. • A “logic is sound”, with respect to its semantics, if only true formulae are derivable under the inference rules, from premises which themselves are all true. (i. e. the inference rules are correct) • A “logic is complete” if all the true formulae are provable from the rules of the logic. (i. e. no other rules are required)

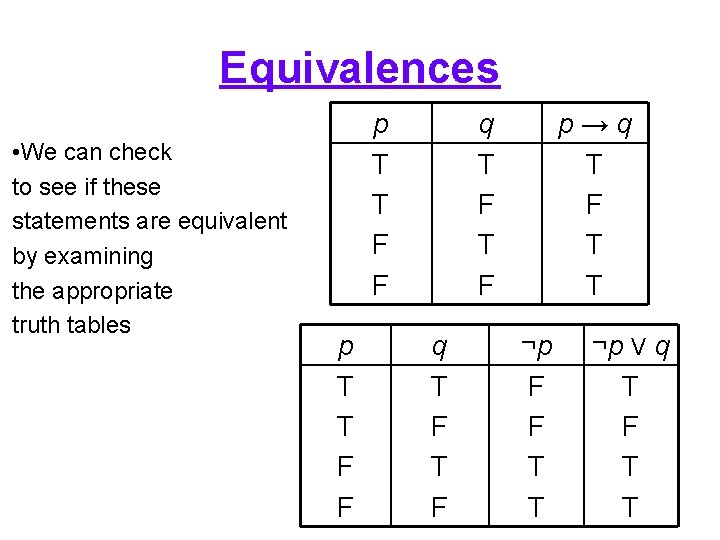

Equivalences • It is worth noting that there a number of equivalences between the logical connectives. Thus: p q is equivalent to ¬p V q p Λ q is equivalent to ¬(¬p V ¬q) p V q is equivalent to ¬p q • The symbol we use to denote equivalence is: ≡ e. g. p q ≡ ¬p V q etc.

Equivalences • We can check to see if these statements are equivalent by examining the appropriate truth tables p T T F F q T F T F p→q T F T T ¬p F F T T ¬p V q T F T T

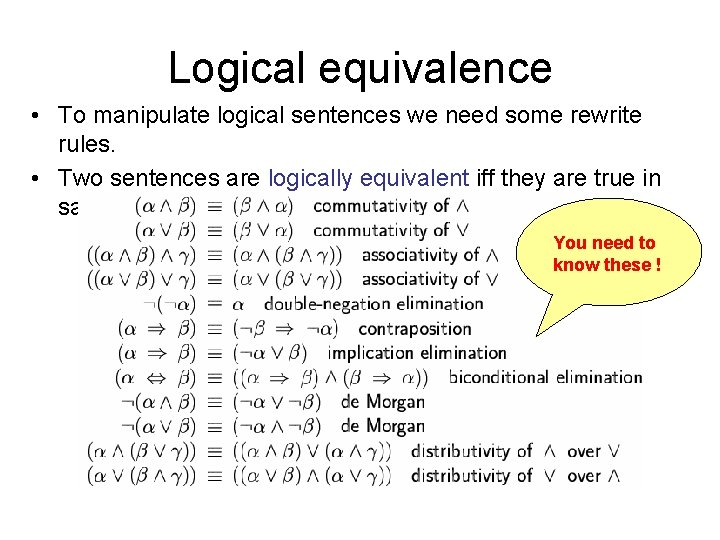

Logical equivalence • To manipulate logical sentences we need some rewrite rules. • Two sentences are logically equivalent iff they are true in same models: α ≡ ß iff α╞ β and β╞ α You need to know these !

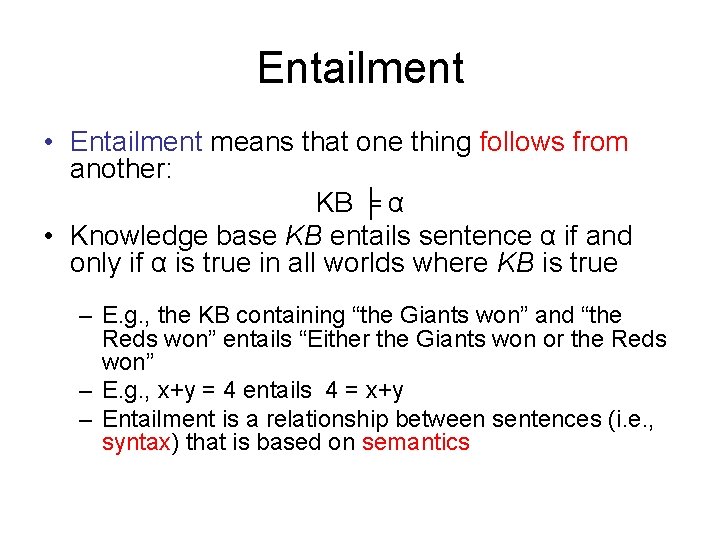

Entailment • Entailment means that one thing follows from another: KB ╞ α • Knowledge base KB entails sentence α if and only if α is true in all worlds where KB is true – E. g. , the KB containing “the Giants won” and “the Reds won” entails “Either the Giants won or the Reds won” – E. g. , x+y = 4 entails 4 = x+y – Entailment is a relationship between sentences (i. e. , syntax) that is based on semantics

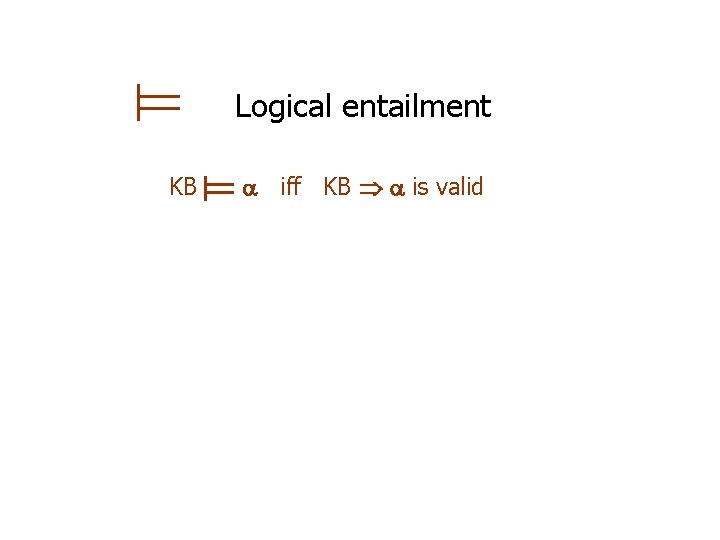

Logical entailment KB iff KB is valid

Models • Models are formally structured worlds with respect to which truth can be evaluated. • m is a model of a sentence if is true in m • M( ) is the set of all models of

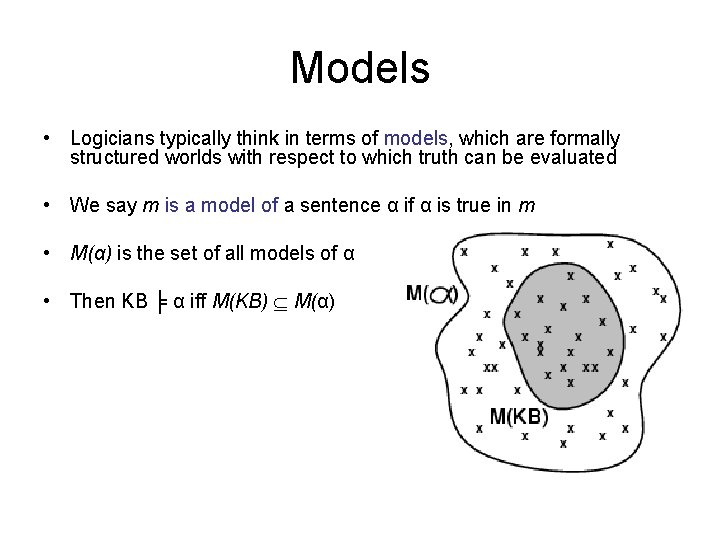

Models • Logicians typically think in terms of models, which are formally structured worlds with respect to which truth can be evaluated • We say m is a model of a sentence α if α is true in m • M(α) is the set of all models of α • Then KB ╞ α iff M(KB) M(α)

![Entailment in the wumpus world Situation after detecting nothing in [1, 1], moving right, Entailment in the wumpus world Situation after detecting nothing in [1, 1], moving right,](http://slidetodoc.com/presentation_image_h/cee24b0275eb89ee56c745a60c4e293a/image-89.jpg)

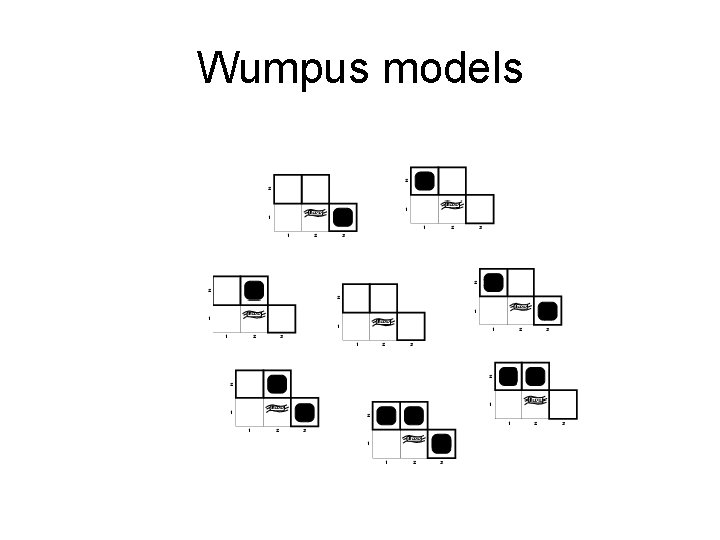

Entailment in the wumpus world Situation after detecting nothing in [1, 1], moving right, breeze in [2, 1] Consider possible models for KB assuming only pits 3 Boolean choices 8 possible models

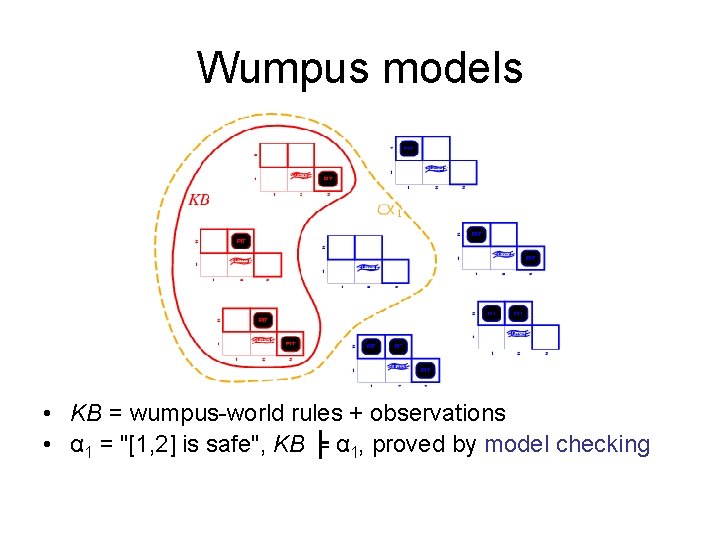

Wumpus models

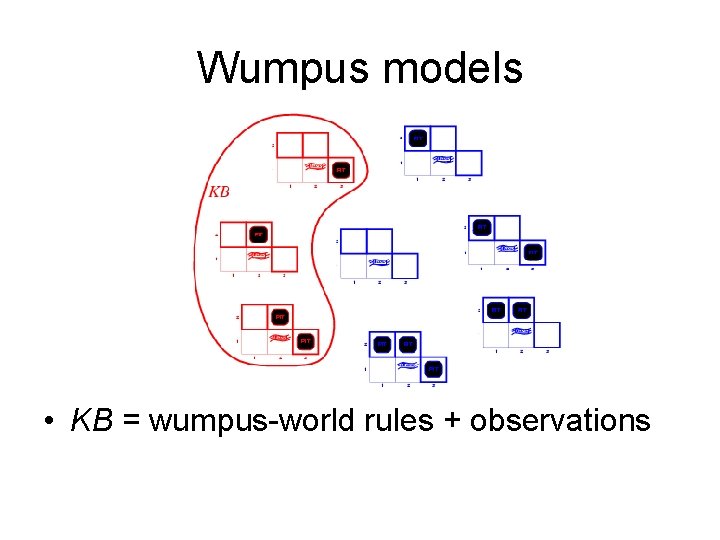

Wumpus models • KB = wumpus-world rules + observations

Wumpus models • KB = wumpus-world rules + observations • α 1 = "[1, 2] is safe", KB ╞ α 1, proved by model checking

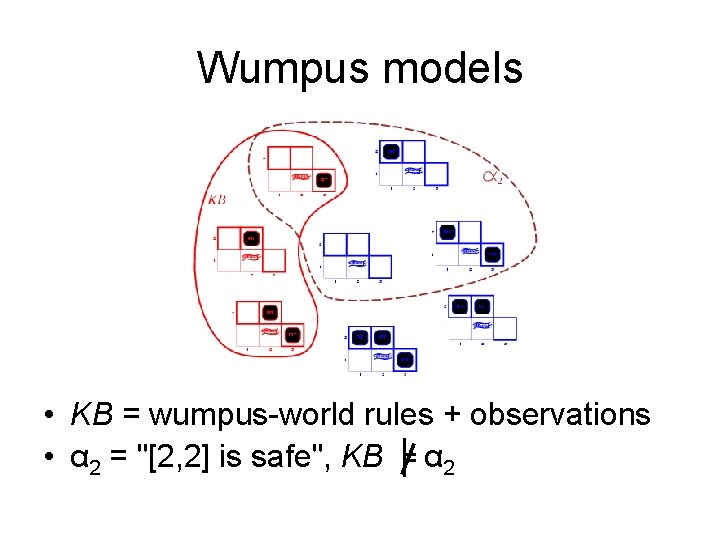

Wumpus models • KB = wumpus-world rules + observations

Wumpus models • KB = wumpus-world rules + observations • α 2 = "[2, 2] is safe", KB ╞ α 2

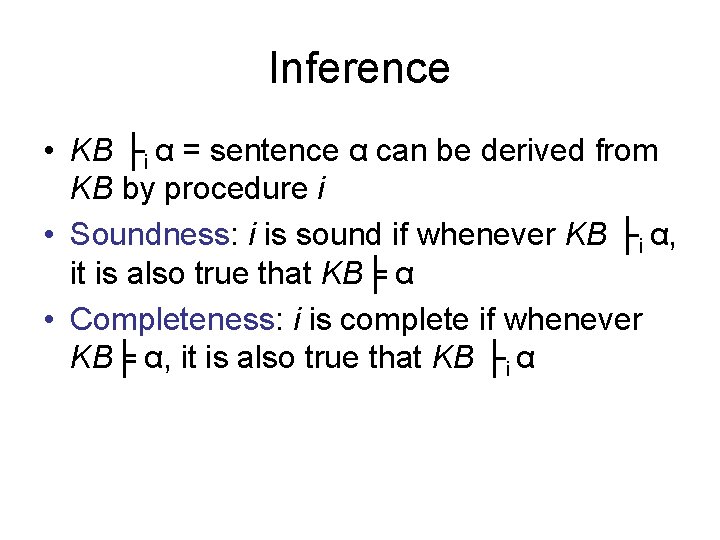

Inference • KB ├i α = sentence α can be derived from KB by procedure i • Soundness: i is sound if whenever KB ├i α, it is also true that KB╞ α • Completeness: i is complete if whenever KB╞ α, it is also true that KB ├i α

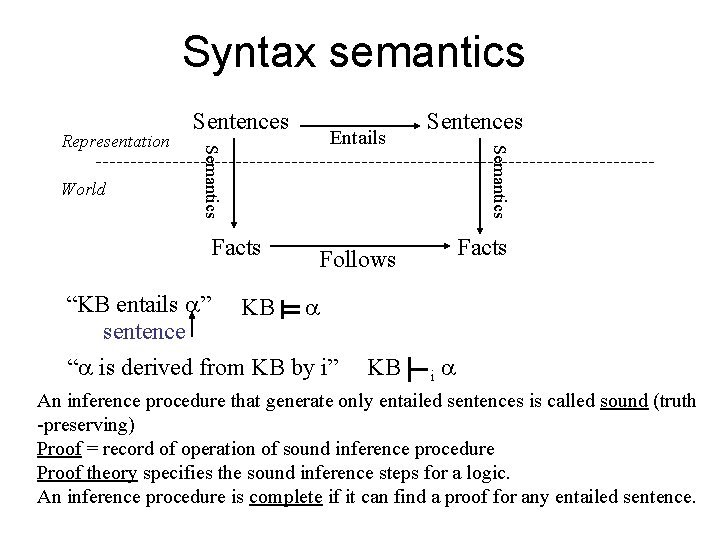

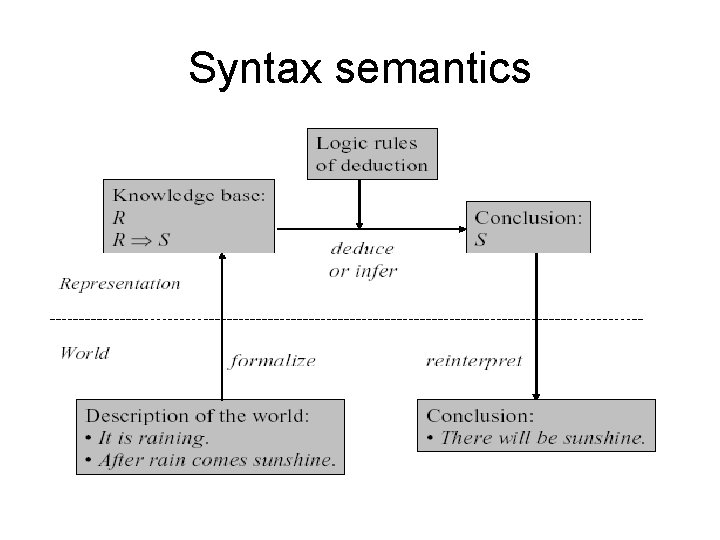

Syntax semantics Facts Entails Sentences Semantics World Semantics Representation Sentences Facts Follows “KB entails ” KB sentence “ is derived from KB by i” KB i An inference procedure that generate only entailed sentences is called sound (truth -preserving) Proof = record of operation of sound inference procedure Proof theory specifies the sound inference steps for a logic. An inference procedure is complete if it can find a proof for any entailed sentence.

Syntax semantics

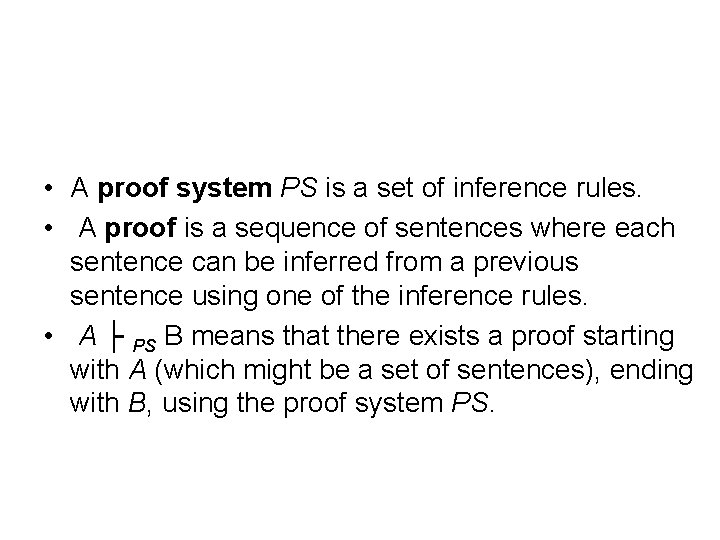

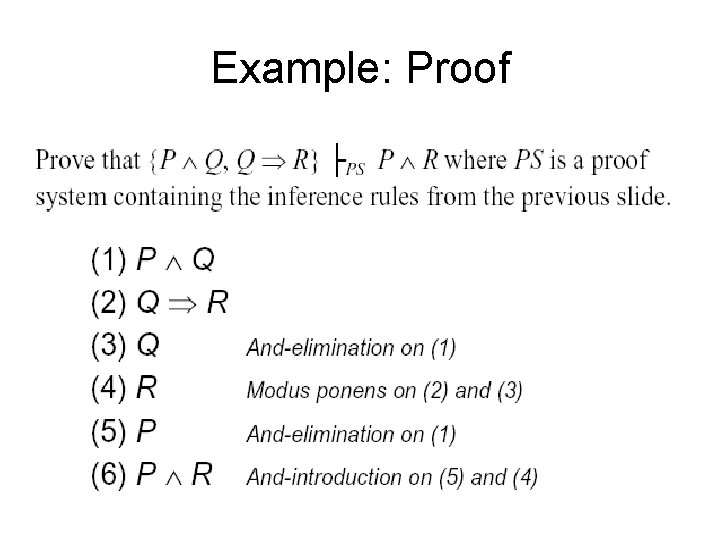

• A proof system PS is a set of inference rules. • A proof is a sequence of sentences where each sentence can be inferred from a previous sentence using one of the inference rules. • A ├ PS B means that there exists a proof starting with A (which might be a set of sentences), ending with B, using the proof system PS.

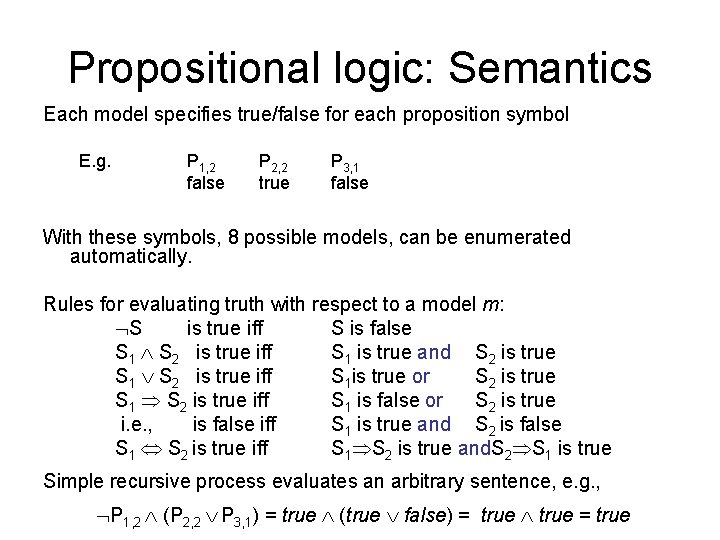

Propositional logic: Semantics Each model specifies true/false for each proposition symbol E. g. P 1, 2 false P 2, 2 true P 3, 1 false With these symbols, 8 possible models, can be enumerated automatically. Rules for evaluating truth with respect to a model m: S is true iff S is false S 1 S 2 is true iff S 1 is true and S 2 is true S 1 S 2 is true iff S 1 is true or S 2 is true S 1 S 2 is true iff S 1 is false or S 2 is true i. e. , is false iff S 1 is true and S 2 is false S 1 S 2 is true iff S 1 S 2 is true and. S 2 S 1 is true Simple recursive process evaluates an arbitrary sentence, e. g. , P 1, 2 (P 2, 2 P 3, 1) = true (true false) = true

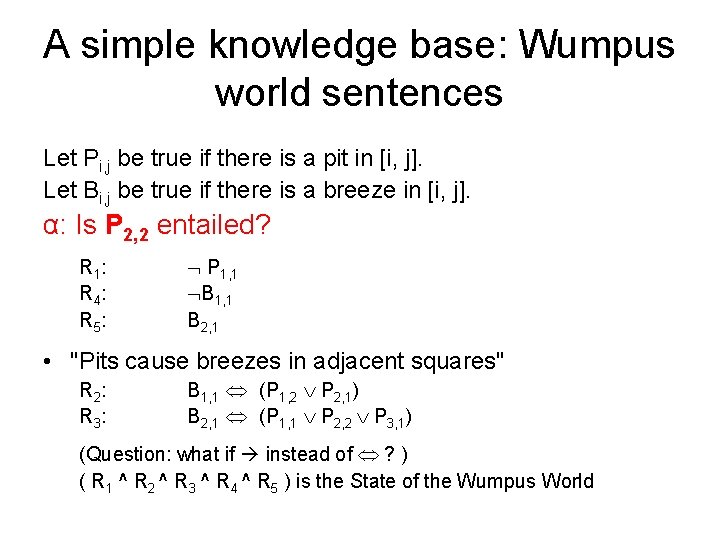

A simple knowledge base: Wumpus world sentences Let Pi, j be true if there is a pit in [i, j]. Let Bi, j be true if there is a breeze in [i, j]. α: Is P 2, 2 entailed? R 1 : R 4 : R 5 : P 1, 1 B 2, 1 • "Pits cause breezes in adjacent squares" R 2 : R 3 : B 1, 1 (P 1, 2 P 2, 1) B 2, 1 (P 1, 1 P 2, 2 P 3, 1) (Question: what if instead of ? ) ( R 1 ^ R 2 ^ R 3 ^ R 4 ^ R 5 ) is the State of the Wumpus World

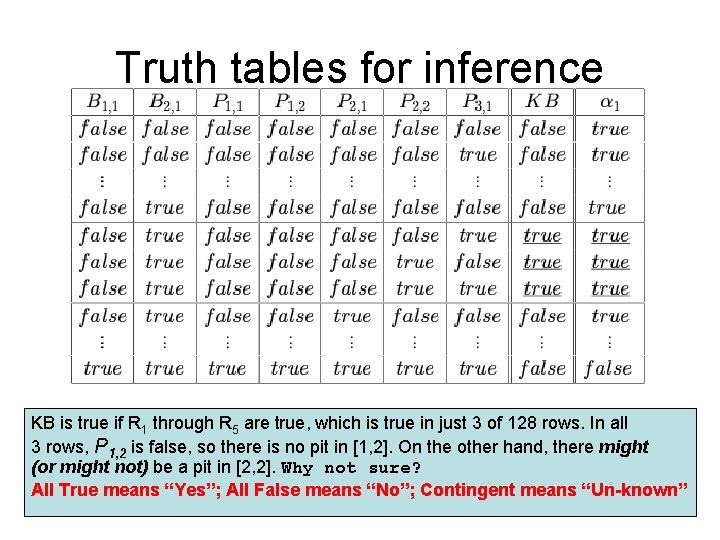

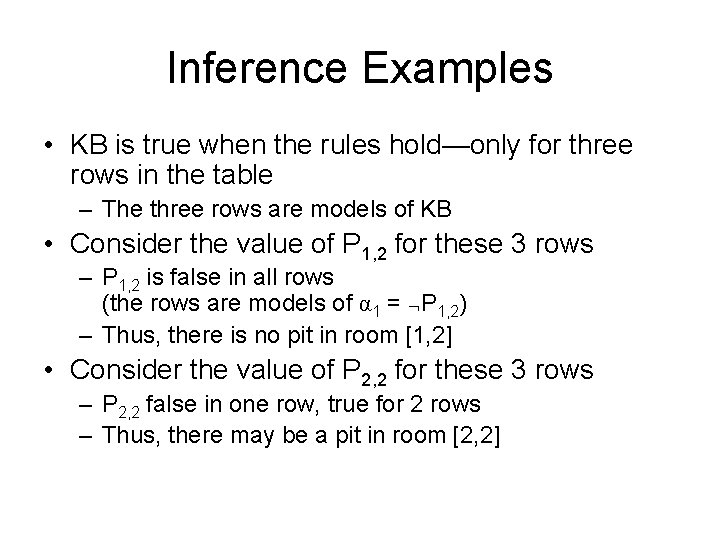

Truth tables for inference KB is true if R 1 through R 5 are true, which is true in just 3 of 128 rows. In all 3 rows, P 1, 2 is false, so there is no pit in [1, 2]. On the other hand, there might (or might not) be a pit in [2, 2]. Why not sure? All True means “Yes”; All False means “No”; Contingent means “Un-known”

Inference Examples • KB is true when the rules hold—only for three rows in the table – The three rows are models of KB • Consider the value of P 1, 2 for these 3 rows – P 1, 2 is false in all rows (the rows are models of α 1 = P 1, 2) – Thus, there is no pit in room [1, 2] • Consider the value of P 2, 2 for these 3 rows – P 2, 2 false in one row, true for 2 rows – Thus, there may be a pit in room [2, 2]

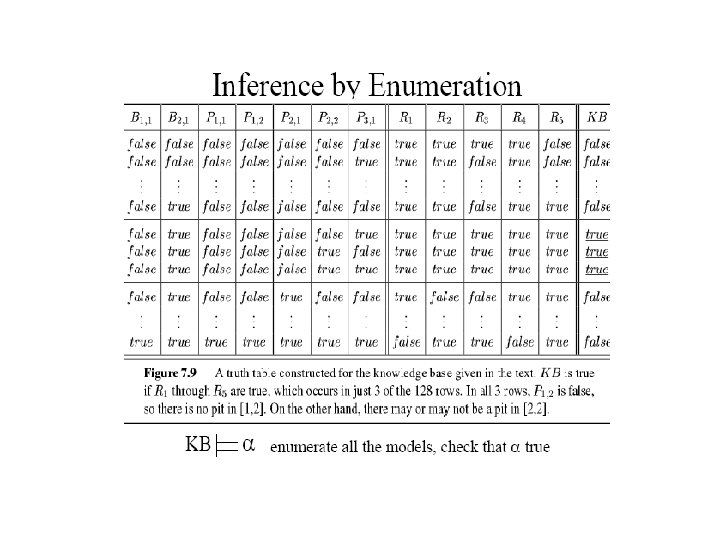

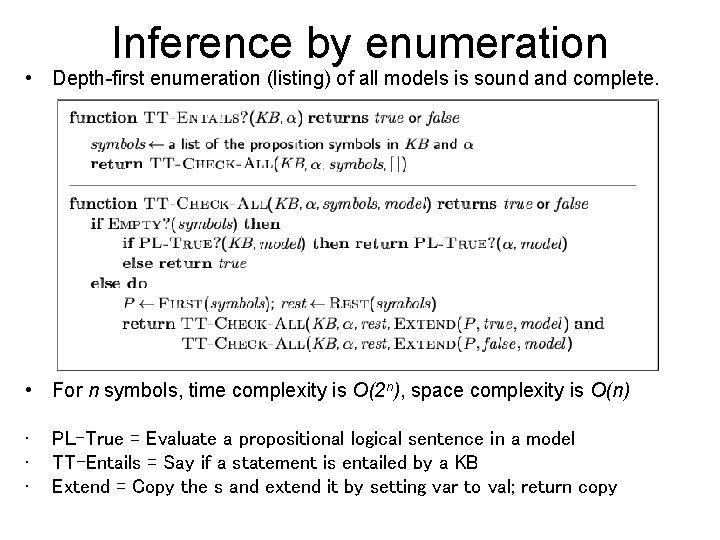

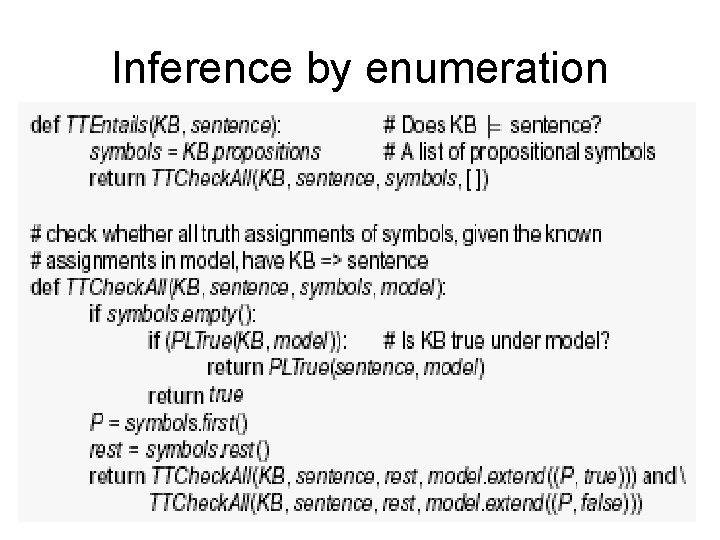

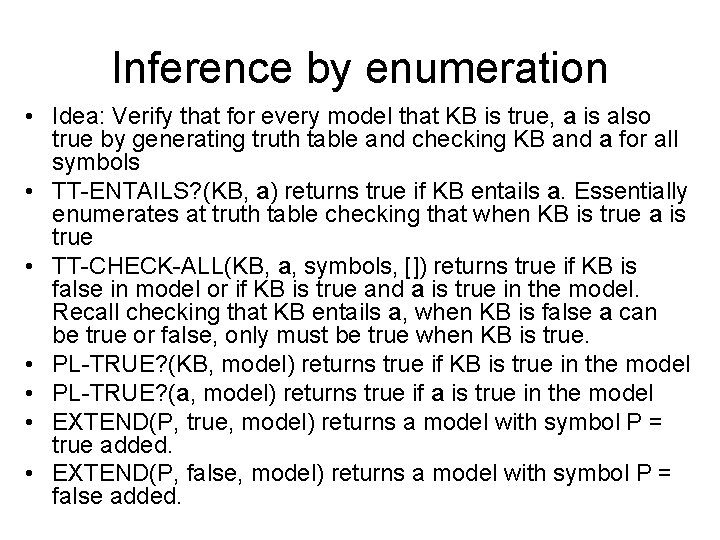

Inference by enumeration • Depth-first enumeration (listing) of all models is sound and complete. • For n symbols, time complexity is O(2 n), space complexity is O(n) • • • PL-True = Evaluate a propositional logical sentence in a model TT-Entails = Say if a statement is entailed by a KB Extend = Copy the s and extend it by setting var to val; return copy

Inference by enumeration

Inference by enumeration • Idea: Verify that for every model that KB is true, a is also true by generating truth table and checking KB and a for all symbols • TT-ENTAILS? (KB, a) returns true if KB entails a. Essentially enumerates at truth table checking that when KB is true a is true • TT-CHECK-ALL(KB, a, symbols, []) returns true if KB is false in model or if KB is true and a is true in the model. Recall checking that KB entails a, when KB is false a can be true or false, only must be true when KB is true. • PL-TRUE? (KB, model) returns true if KB is true in the model • PL-TRUE? (a, model) returns true if a is true in the model • EXTEND(P, true, model) returns a model with symbol P = true added. • EXTEND(P, false, model) returns a model with symbol P = false added.

Inference rules • Logical inference is used to create new sentences that logically follow from a given set of predicate calculus sentences (KB). • An inference rule is sound if every sentence X produced by an inference rule operating on a KB logically follows from the KB. • An inference rule is complete if it is able to produce every expression that logically follows from (is entailed by) the KB.

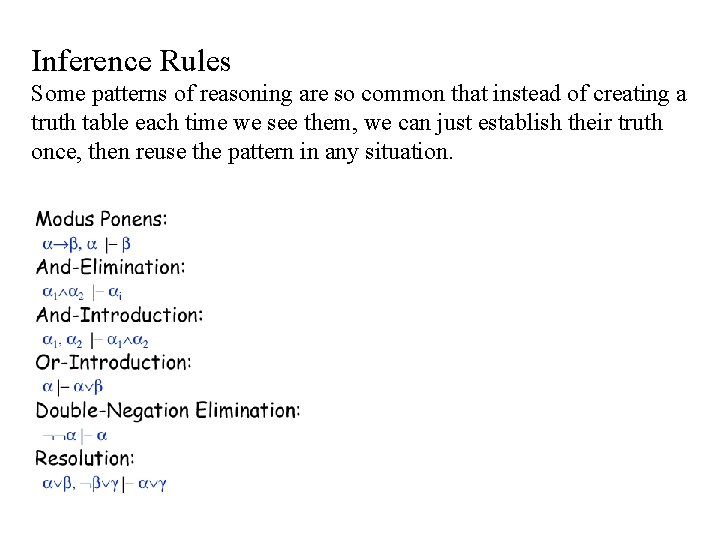

Inference Rules Some patterns of reasoning are so common that instead of creating a truth table each time we see them, we can just establish their truth once, then reuse the pattern in any situation.

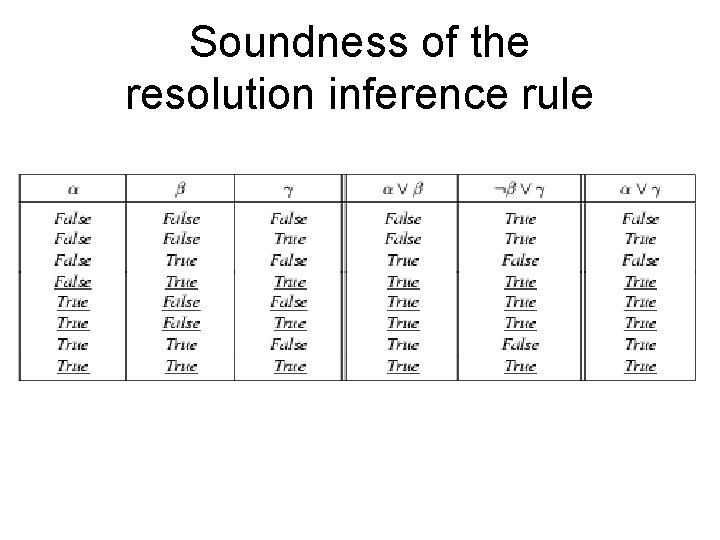

Soundness of the resolution inference rule

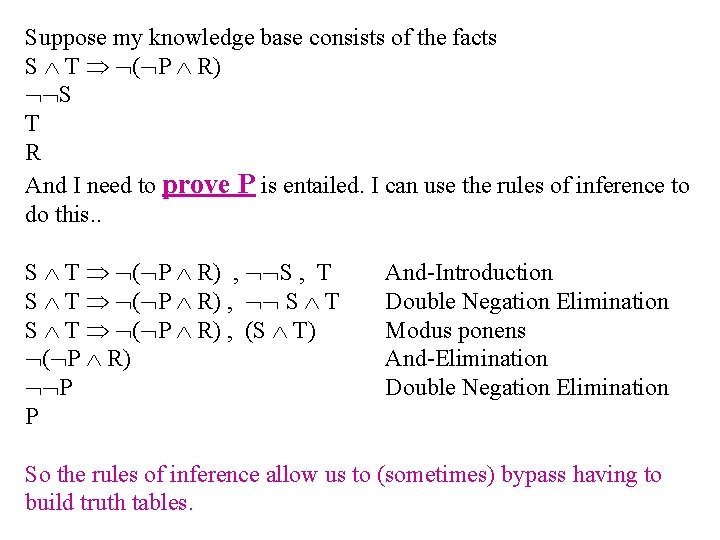

Suppose my knowledge base consists of the facts S T ( P R) S T R And I need to prove P is entailed. I can use the rules of inference to do this. . S T ( P R) , S , T S T ( P R) , (S T) ( P R) P P And-Introduction Double Negation Elimination Modus ponens And-Elimination Double Negation Elimination So the rules of inference allow us to (sometimes) bypass having to build truth tables.

Example: Proof

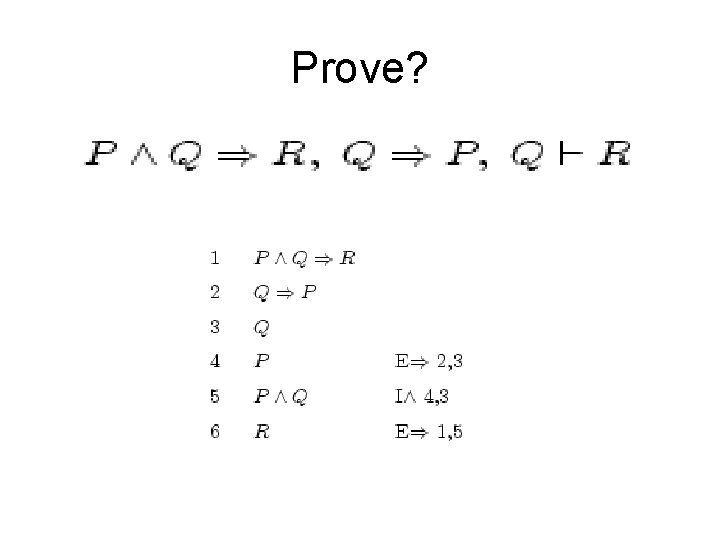

Prove?

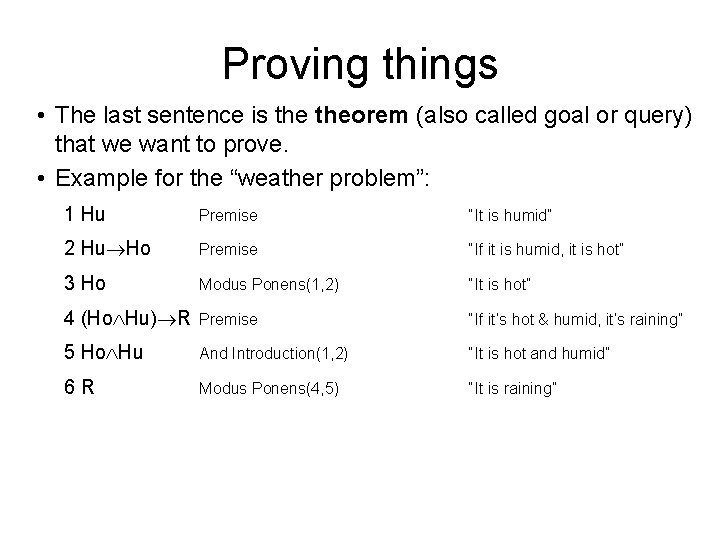

Proving things • The last sentence is theorem (also called goal or query) that we want to prove. • Example for the “weather problem”: 1 Hu Premise “It is humid” 2 Hu Ho Premise “If it is humid, it is hot” 3 Ho Modus Ponens(1, 2) “It is hot” 4 (Ho Hu) R Premise “If it’s hot & humid, it’s raining” 5 Ho Hu And Introduction(1, 2) “It is hot and humid” 6 R Modus Ponens(4, 5) “It is raining”

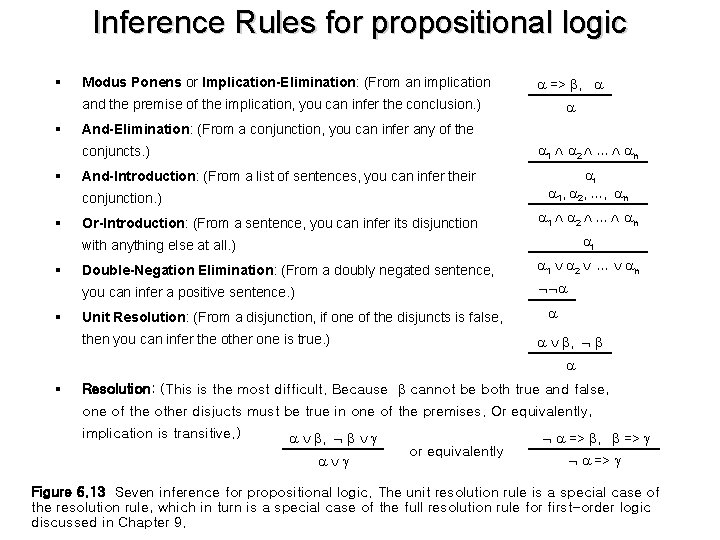

Inference Rules for propositional logic § Modus Ponens or Implication-Elimination: (From an implication => , and the premise of the implication, you can infer the conclusion. ) § And-Elimination: (From a conjunction, you can infer any of the 1 2 … n conjuncts. ) § And-Introduction: (From a list of sentences, you can infer their conjunction. ) § Or-Introduction: (From a sentence, you can infer its disjunction i 1, 2, …, n 1 2 … n i with anything else at all. ) § § Double-Negation Elimination: (From a doubly negated sentence, 1 2 … n you can infer a positive sentence. ) Unit Resolution: (From a disjunction, if one of the disjuncts is false, then you can infer the other one is true. ) , § Resolution: (This is the most difficult. Because cannot be both true and false, one of the other disjucts must be true in one of the premises. Or equivalently, implication is transitive. ) , or equivalently => , => Figure 6. 13 Seven inference for propositional logic. The unit resolution rule is a special case of the resolution rule, which in turn is a special case of the full resolution rule for first-order logic discussed in Chapter 9.

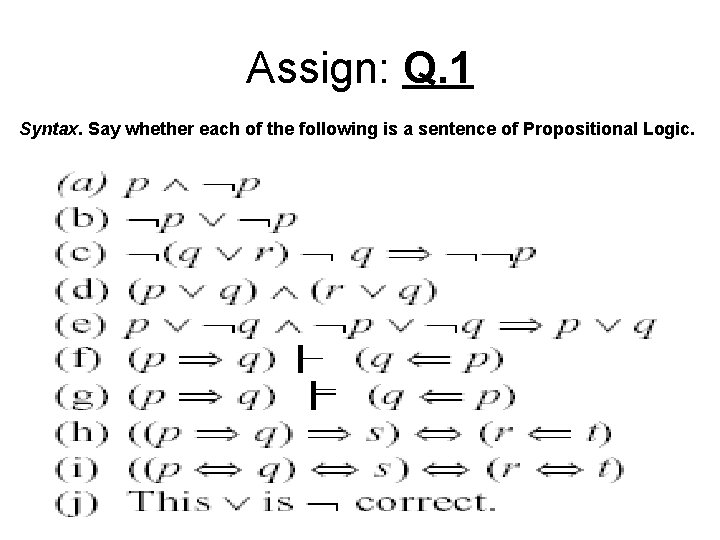

Assign: Q. 1 Syntax. Say whether each of the following is a sentence of Propositional Logic.

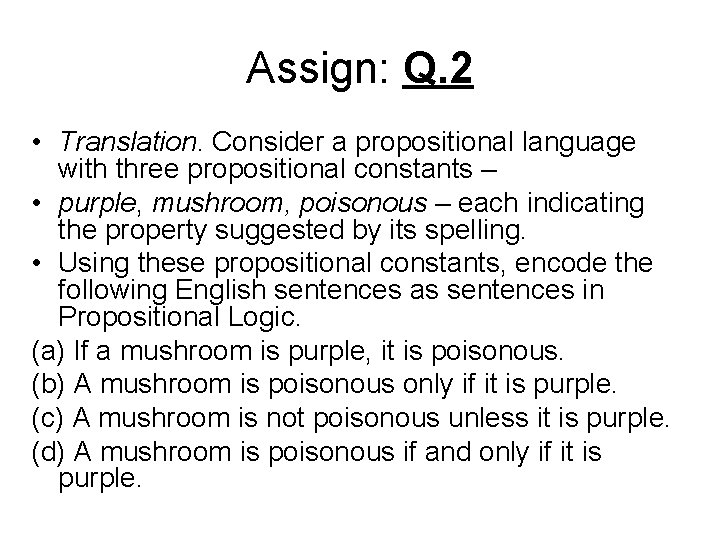

Assign: Q. 2 • Translation. Consider a propositional language with three propositional constants – • purple, mushroom, poisonous – each indicating the property suggested by its spelling. • Using these propositional constants, encode the following English sentences as sentences in Propositional Logic. (a) If a mushroom is purple, it is poisonous. (b) A mushroom is poisonous only if it is purple. (c) A mushroom is not poisonous unless it is purple. (d) A mushroom is poisonous if and only if it is purple.

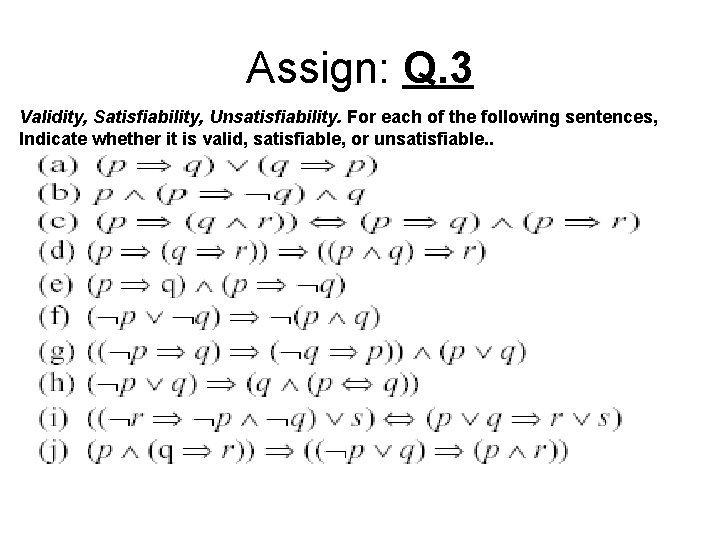

Assign: Q. 3 Validity, Satisfiability, Unsatisfiability. For each of the following sentences, Indicate whether it is valid, satisfiable, or unsatisfiable. .

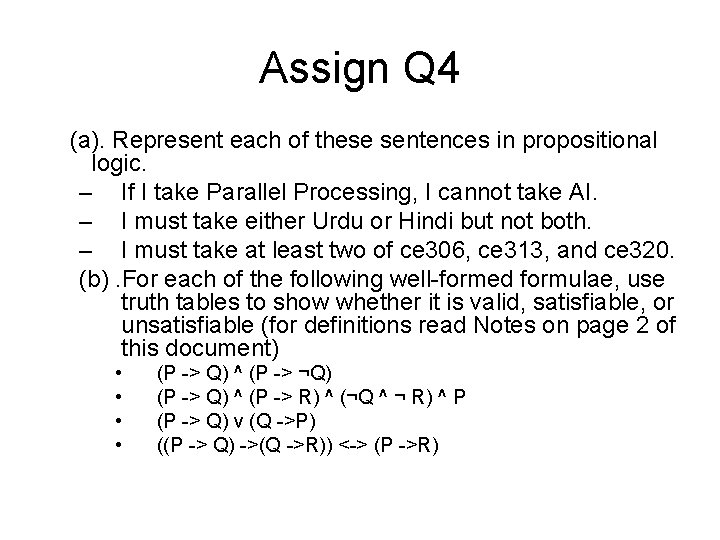

Assign Q 4 (a). Represent each of these sentences in propositional logic. – If I take Parallel Processing, I cannot take AI. – I must take either Urdu or Hindi but not both. – I must take at least two of ce 306, ce 313, and ce 320. (b). For each of the following well-formed formulae, use truth tables to show whether it is valid, satisfiable, or unsatisfiable (for definitions read Notes on page 2 of this document) • • (P -> Q) ^ (P -> ¬Q) (P -> Q) ^ (P -> R) ^ (¬Q ^ ¬ R) ^ P (P -> Q) v (Q ->P) ((P -> Q) ->(Q ->R)) <-> (P ->R)

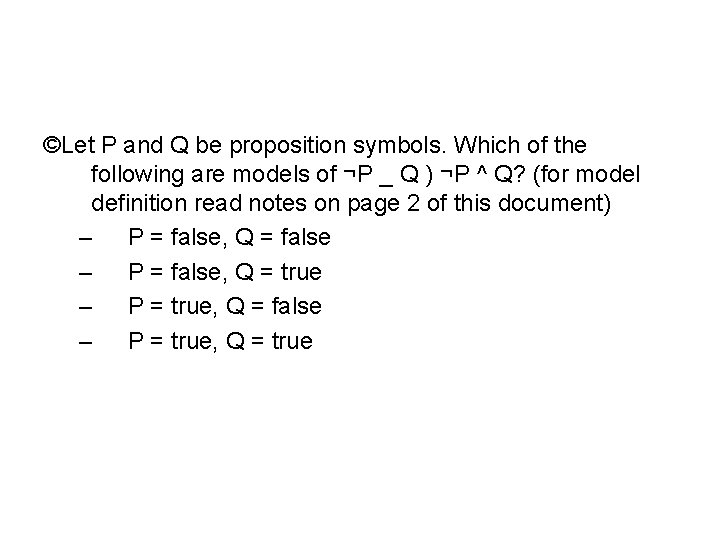

©Let P and Q be proposition symbols. Which of the following are models of ¬P _ Q ) ¬P ^ Q? (for model definition read notes on page 2 of this document) – P = false, Q = false – P = false, Q = true – P = true, Q = false – P = true, Q = true

Assign: Q. 5 • Given the following, can you prove that the unicorn is mythical? How about magical? Horned? – If the unicorn is mythical, then it is immortal, but if it is not mythical, then it is a mortal mammal. If the unicorn is either immortal or a mammal, then it is horned. The unicorn is magical if it is horned.

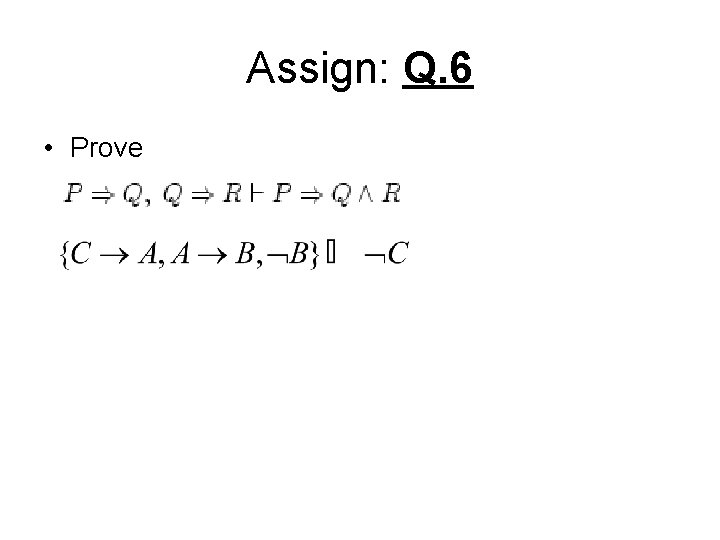

Assign: Q. 6 • Prove

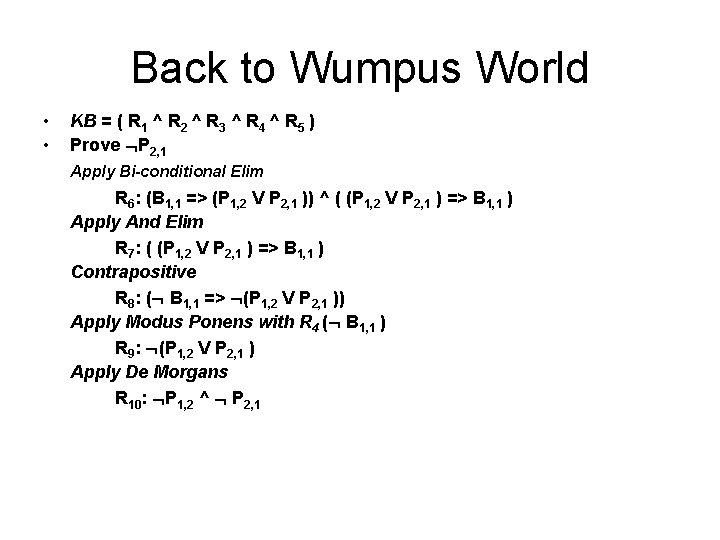

Back to Wumpus World • • KB = ( R 1 ^ R 2 ^ R 3 ^ R 4 ^ R 5 ) Prove P 2, 1 Apply Bi-conditional Elim R 6: (B 1, 1 => (P 1, 2 V P 2, 1 )) ^ ( (P 1, 2 V P 2, 1 ) => B 1, 1 ) Apply And Elim R 7: ( (P 1, 2 V P 2, 1 ) => B 1, 1 ) Contrapositive R 8: ( B 1, 1 => (P 1, 2 V P 2, 1 )) Apply Modus Ponens with R 4 ( B 1, 1 ) R 9: (P 1, 2 V P 2, 1 ) Apply De Morgans R 10: P 1, 2 ^ P 2, 1

Hard and Monotonic Note: Inference in propositional logic is NPComplete Searching for proofs in worst-case is no better than enumerating models. Monotonicity of Logic: Set of entailed/derived sentences can only increase as information is added to the KB; means Monotonicity: When we add new sentences to KB, all the sentences entailed by the original KB are still entailed.

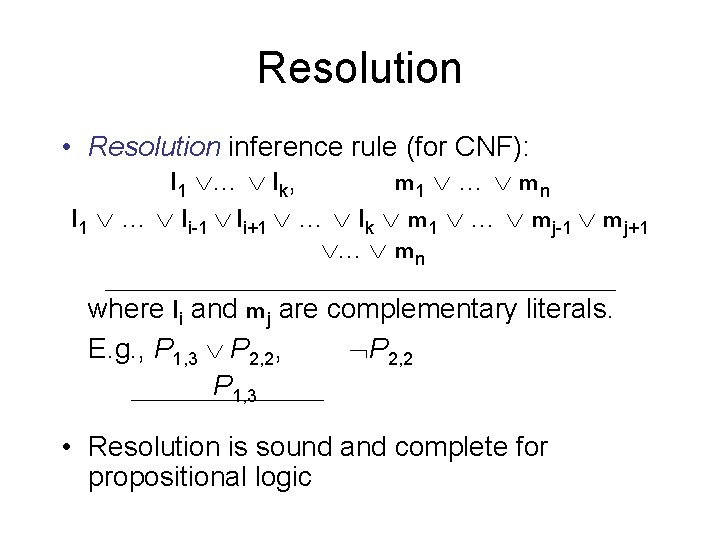

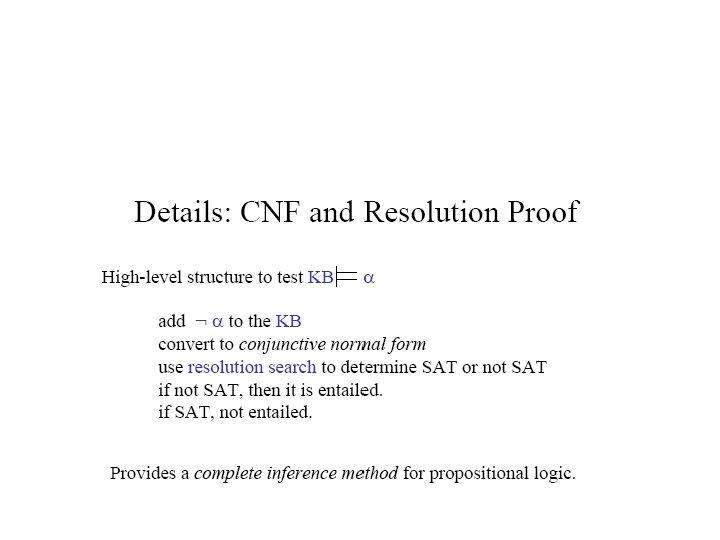

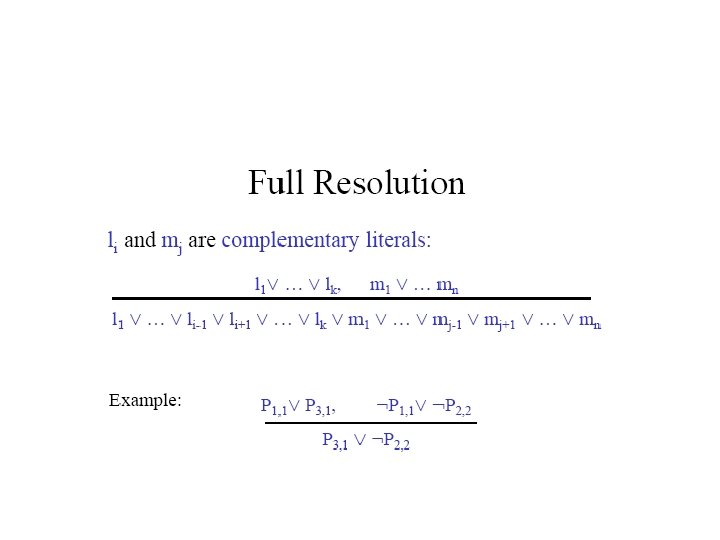

Resolution • Resolution inference rule (for CNF): l 1 … lk, m 1 … mn l 1 … li-1 li+1 … lk m 1 … mj-1 mj+1 . . . mn where li and mj are complementary literals. E. g. , P 1, 3 P 2, 2, P 2, 2 P 1, 3 • Resolution is sound and complete for propositional logic

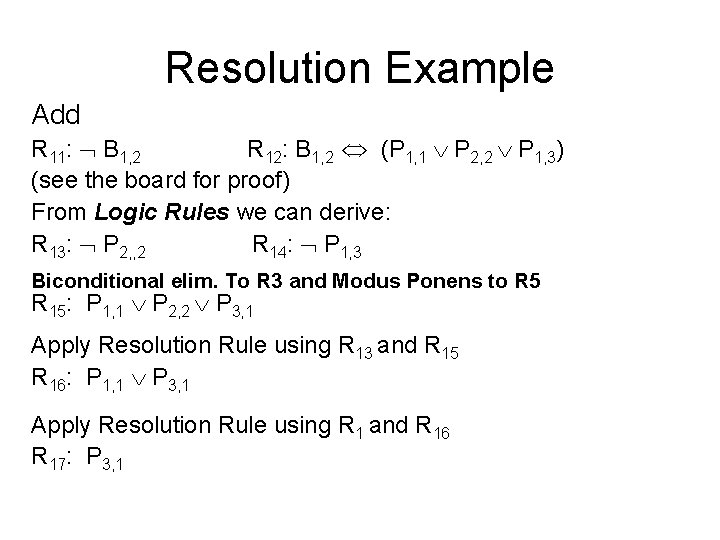

Resolution Example Add R 11: B 1, 2 R 12: B 1, 2 (P 1, 1 P 2, 2 P 1, 3) (see the board for proof) From Logic Rules we can derive: R 13: P 2, , 2 R 14: P 1, 3 Biconditional elim. To R 3 and Modus Ponens to R 5 R 15: P 1, 1 P 2, 2 P 3, 1 Apply Resolution Rule using R 13 and R 15 R 16: P 1, 1 P 3, 1 Apply Resolution Rule using R 1 and R 16 R 17: P 3, 1

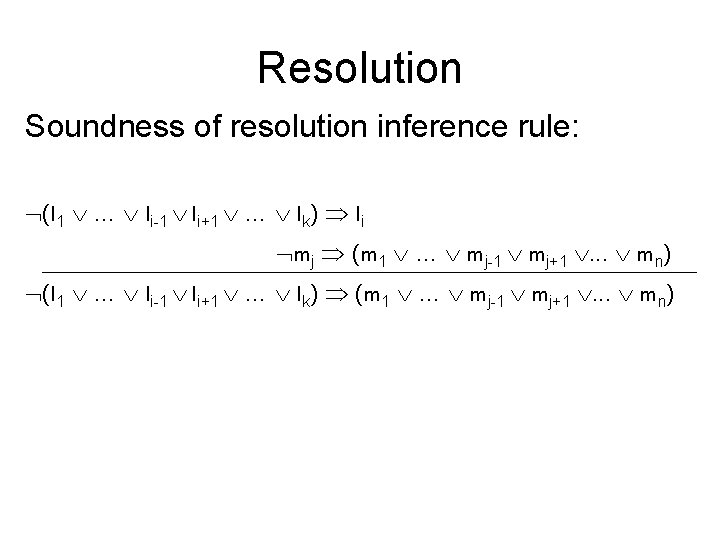

Resolution Soundness of resolution inference rule: (l 1 … li-1 li+1 … lk) li mj (m 1 … mj-1 mj+1 . . . mn) (l 1 … li-1 li+1 … lk) (m 1 … mj-1 mj+1 . . . mn)

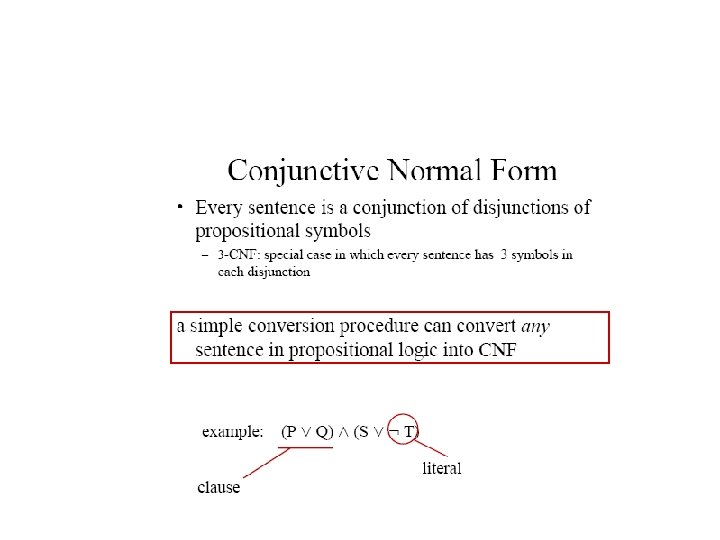

Conjunctive Normal Form (CNF) conjunction of disjunctions of literals clauses E. g. , (A B) (B C D)

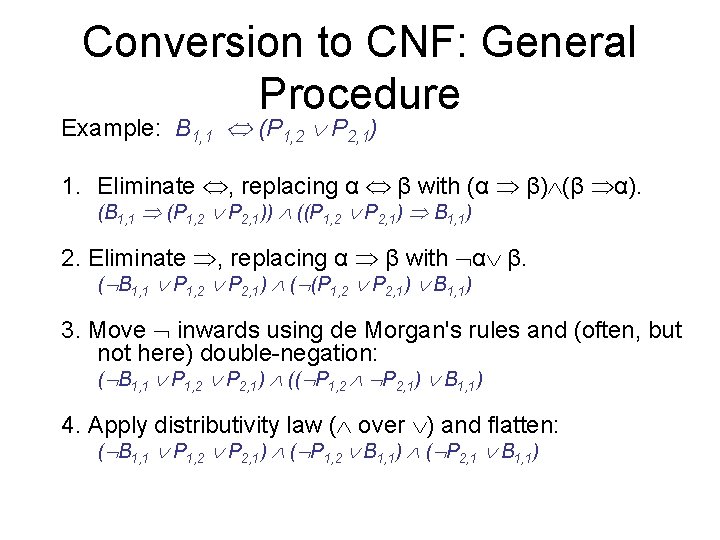

Conversion to CNF: General Procedure Example: B 1, 1 (P 1, 2 P 2, 1) 1. Eliminate , replacing α β with (α β) (β α). (B 1, 1 (P 1, 2 P 2, 1)) ((P 1, 2 P 2, 1) B 1, 1) 2. Eliminate , replacing α β with α β. ( B 1, 1 P 1, 2 P 2, 1) ( (P 1, 2 P 2, 1) B 1, 1) 3. Move inwards using de Morgan's rules and (often, but not here) double-negation: ( B 1, 1 P 1, 2 P 2, 1) (( P 1, 2 P 2, 1) B 1, 1) 4. Apply distributivity law ( over ) and flatten: ( B 1, 1 P 1, 2 P 2, 1) ( P 1, 2 B 1, 1) ( P 2, 1 B 1, 1)

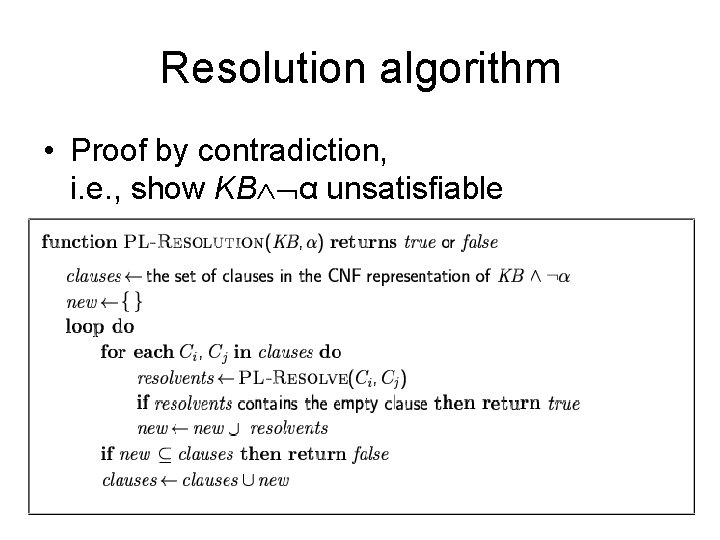

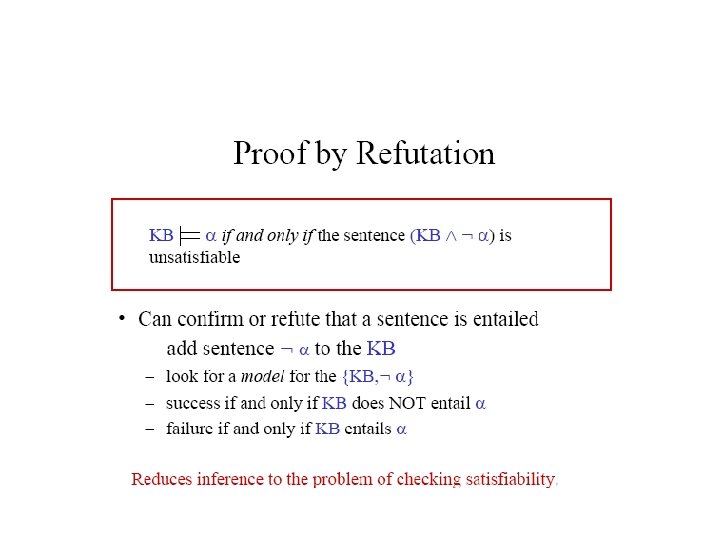

Resolution algorithm • Proof by contradiction, i. e. , show KB α unsatisfiable

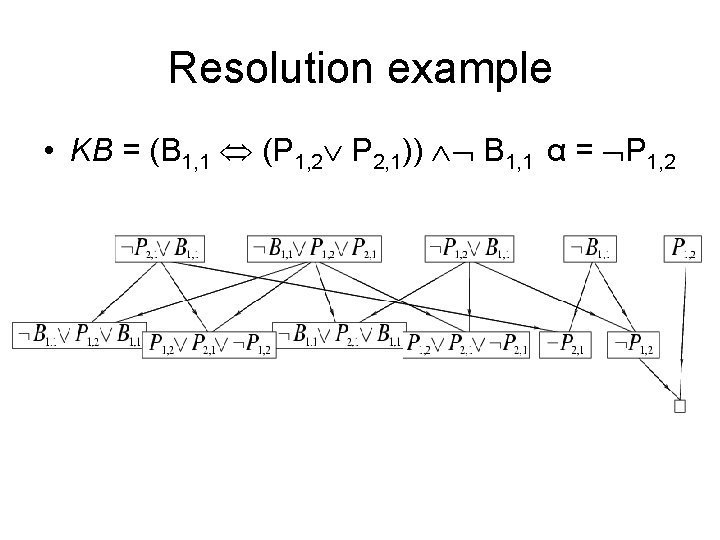

Resolution example • KB = (B 1, 1 (P 1, 2 P 2, 1)) B 1, 1 α = P 1, 2

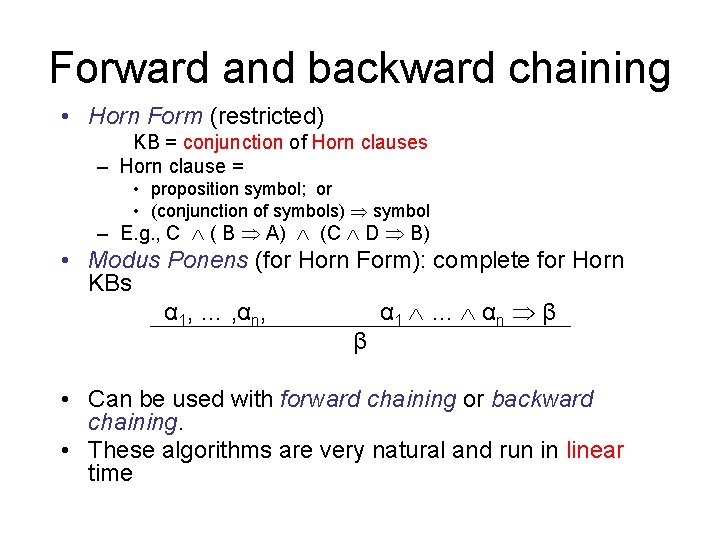

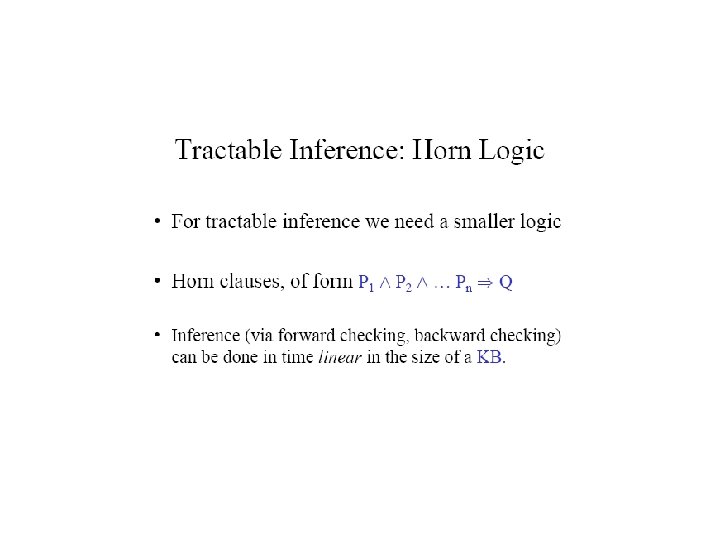

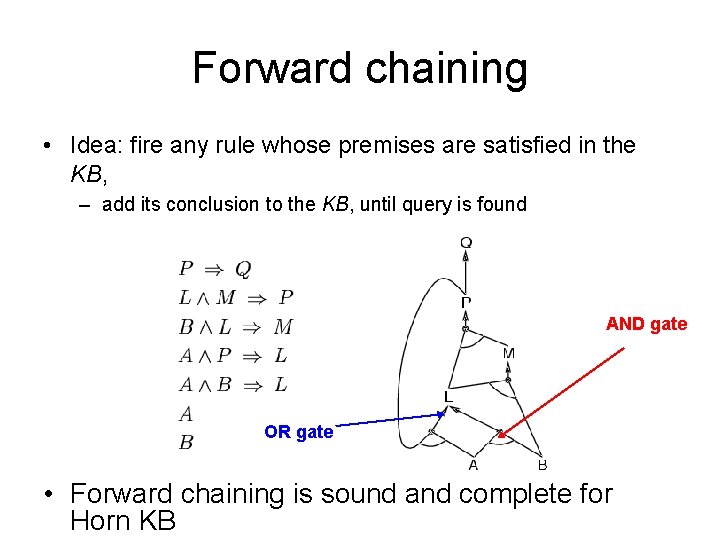

Forward and backward chaining • Horn Form (restricted) KB = conjunction of Horn clauses – Horn clause = • proposition symbol; or • (conjunction of symbols) symbol – E. g. , C ( B A) (C D B) • Modus Ponens (for Horn Form): complete for Horn KBs α 1, … , αn, α 1 … α n β β • Can be used with forward chaining or backward chaining. • These algorithms are very natural and run in linear time

The party example • • • If Alex goes, then Beki goes: A B If Chris goes, then Alex goes: C A Beki does not go: not B Chris goes: C Query: Is it possible to satisfy all these conditions? – Should I go to the party?

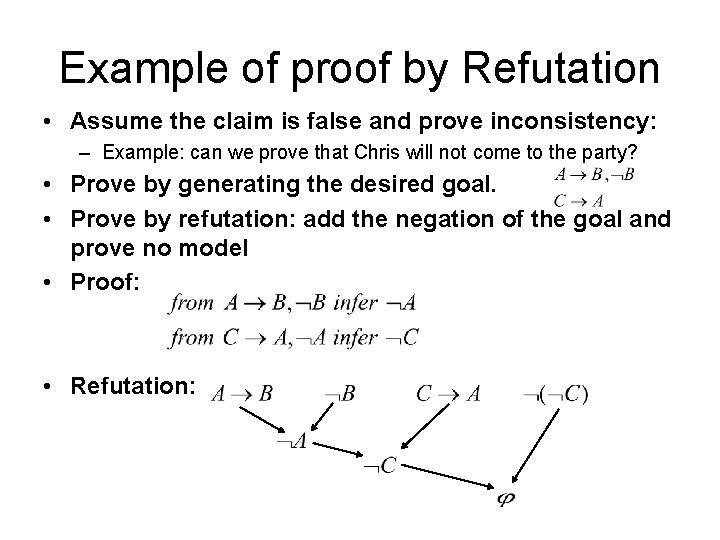

Example of proof by Refutation • Assume the claim is false and prove inconsistency: – Example: can we prove that Chris will not come to the party? • Prove by generating the desired goal. • Prove by refutation: add the negation of the goal and prove no model • Proof: • Refutation:

Horn sentences • A Horn sentence or Horn clause has the form: P 1 P 2 P 3. . . Pn Q or alternatively P 1 P 2 P 3. . . Pn Q (P Q) = ( P Q) where Ps and Q are non-negated atoms • To get a proof for Horn sentences, apply Modus Ponens repeatedly until nothing can be done • We will use the Horn clause form later

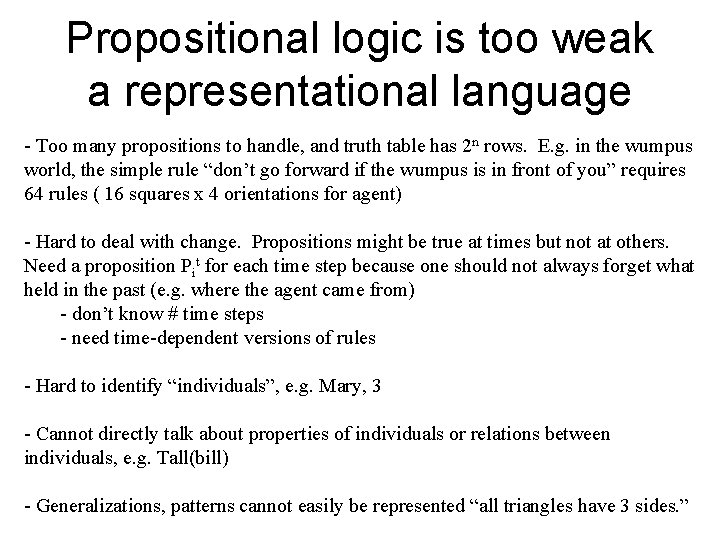

Propositional logic is a weak language • Hard to identify “individuals” (e. g. , Mary, 3) • Can’t directly talk about properties of individuals or relations between individuals (e. g. , “Bill is tall”) • Generalizations, patterns, regularities can’t easily be represented (e. g. , “all triangles have 3 sides”)

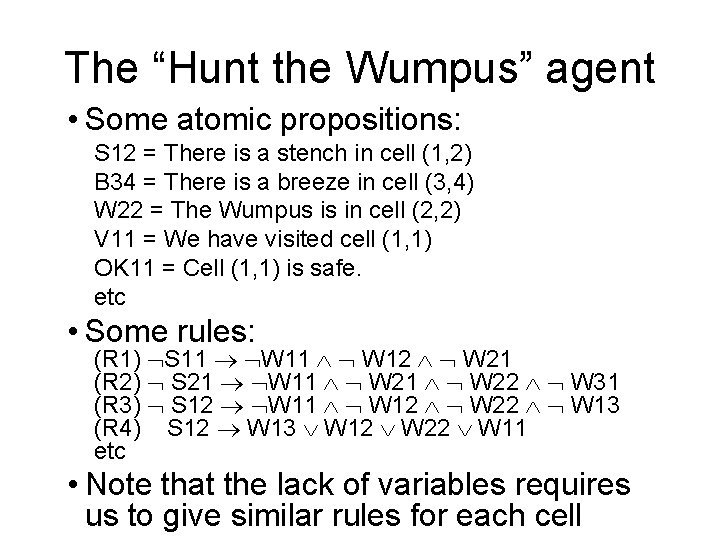

The “Hunt the Wumpus” agent • Some atomic propositions: S 12 = There is a stench in cell (1, 2) B 34 = There is a breeze in cell (3, 4) W 22 = The Wumpus is in cell (2, 2) V 11 = We have visited cell (1, 1) OK 11 = Cell (1, 1) is safe. etc • Some rules: (R 1) S 11 W 11 W 12 W 21 (R 2) S 21 W 11 W 22 W 31 (R 3) S 12 W 11 W 12 W 22 W 13 (R 4) S 12 W 13 W 12 W 22 W 11 etc • Note that the lack of variables requires us to give similar rules for each cell

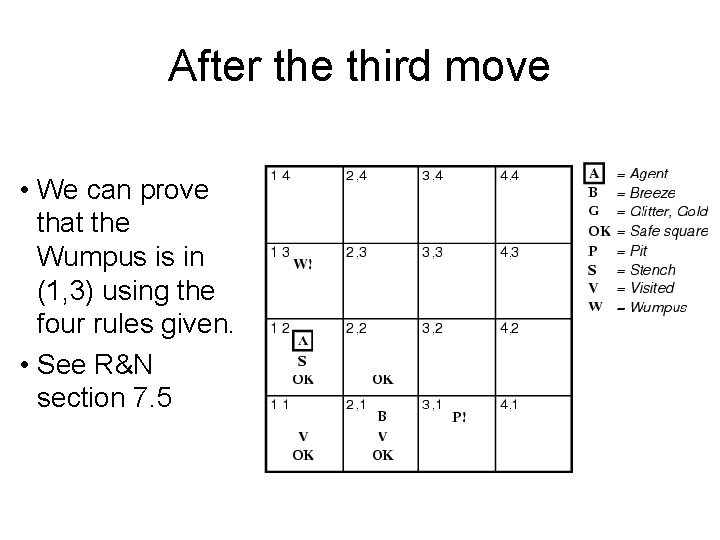

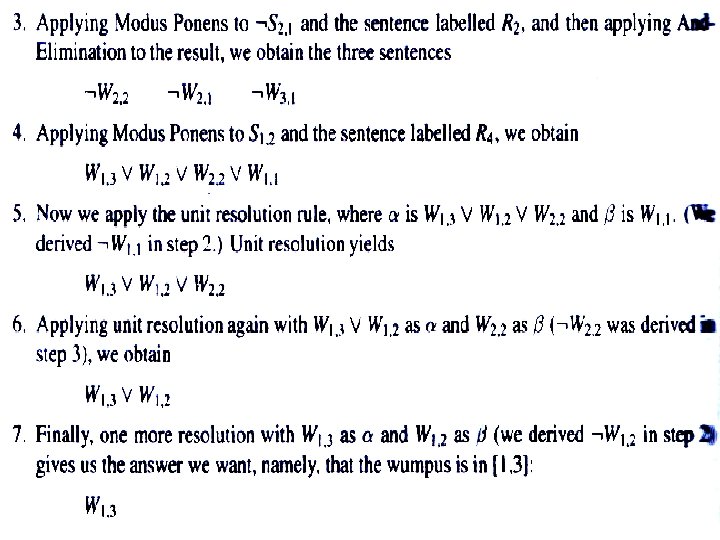

After the third move • We can prove that the Wumpus is in (1, 3) using the four rules given. • See R&N section 7. 5

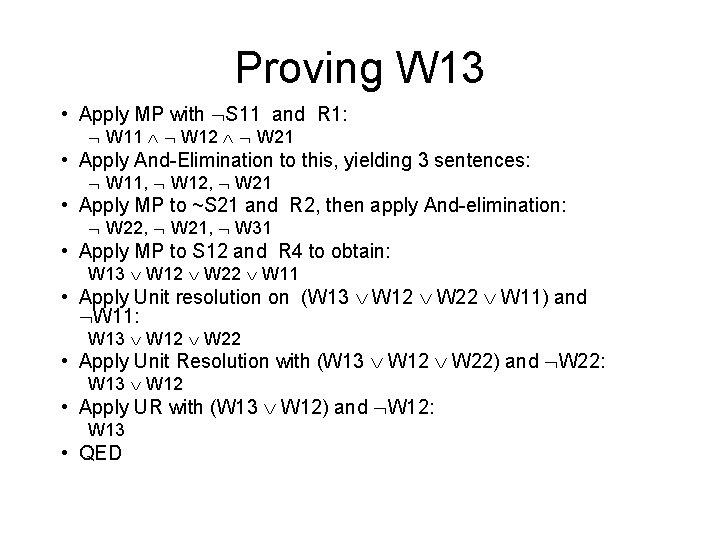

Proving W 13 • Apply MP with S 11 and R 1: W 11 W 12 W 21 • Apply And-Elimination to this, yielding 3 sentences: W 11, W 12, W 21 • Apply MP to ~S 21 and R 2, then apply And-elimination: W 22, W 21, W 31 • Apply MP to S 12 and R 4 to obtain: W 13 W 12 W 22 W 11 • Apply Unit resolution on (W 13 W 12 W 22 W 11) and W 11: W 13 W 12 W 22 • Apply Unit Resolution with (W 13 W 12 W 22) and W 22: W 13 W 12 • Apply UR with (W 13 W 12) and W 12: W 13 • QED

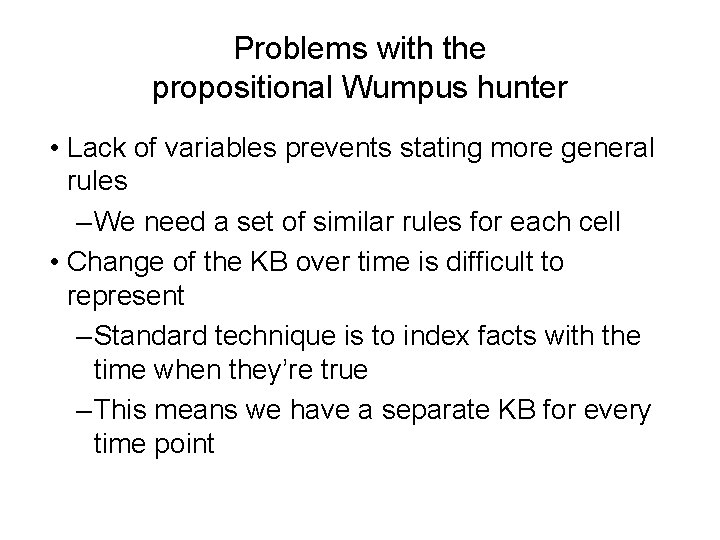

Problems with the propositional Wumpus hunter • Lack of variables prevents stating more general rules – We need a set of similar rules for each cell • Change of the KB over time is difficult to represent – Standard technique is to index facts with the time when they’re true – This means we have a separate KB for every time point

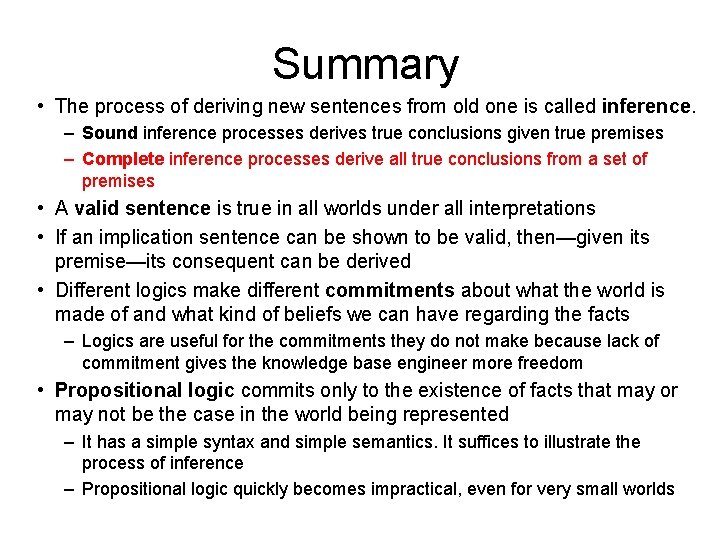

Summary • The process of deriving new sentences from old one is called inference. – Sound inference processes derives true conclusions given true premises – Complete inference processes derive all true conclusions from a set of premises • A valid sentence is true in all worlds under all interpretations • If an implication sentence can be shown to be valid, then—given its premise—its consequent can be derived • Different logics make different commitments about what the world is made of and what kind of beliefs we can have regarding the facts – Logics are useful for the commitments they do not make because lack of commitment gives the knowledge base engineer more freedom • Propositional logic commits only to the existence of facts that may or may not be the case in the world being represented – It has a simple syntax and simple semantics. It suffices to illustrate the process of inference – Propositional logic quickly becomes impractical, even for very small worlds

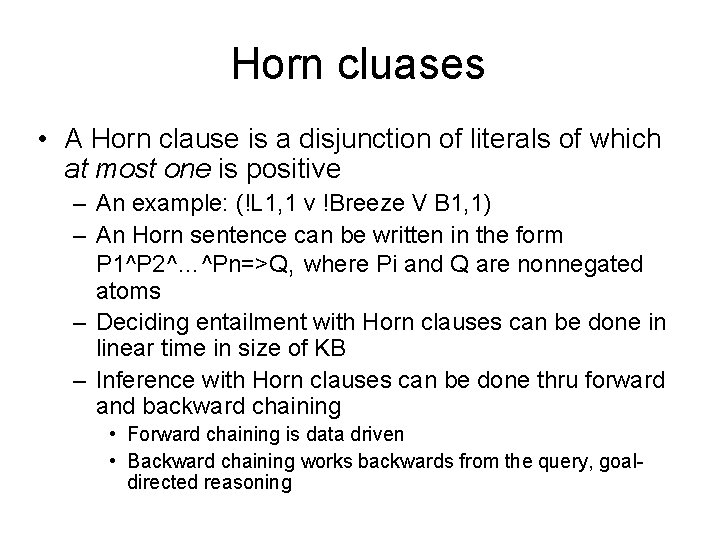

Horn cluases • A Horn clause is a disjunction of literals of which at most one is positive – An example: (!L 1, 1 v !Breeze V B 1, 1) – An Horn sentence can be written in the form P 1^P 2^…^Pn=>Q, where Pi and Q are nonnegated atoms – Deciding entailment with Horn clauses can be done in linear time in size of KB – Inference with Horn clauses can be done thru forward and backward chaining • Forward chaining is data driven • Backward chaining works backwards from the query, goaldirected reasoning

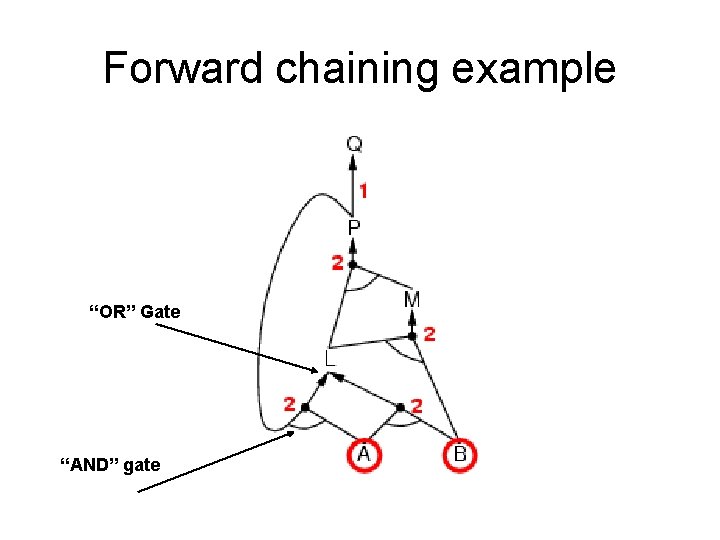

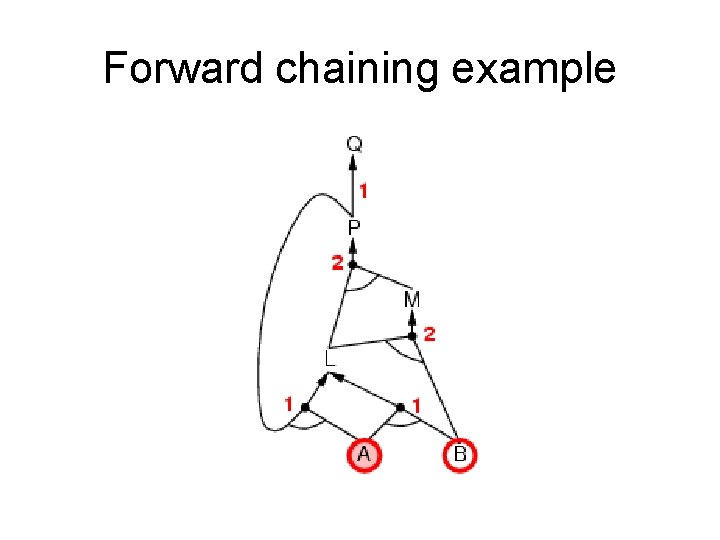

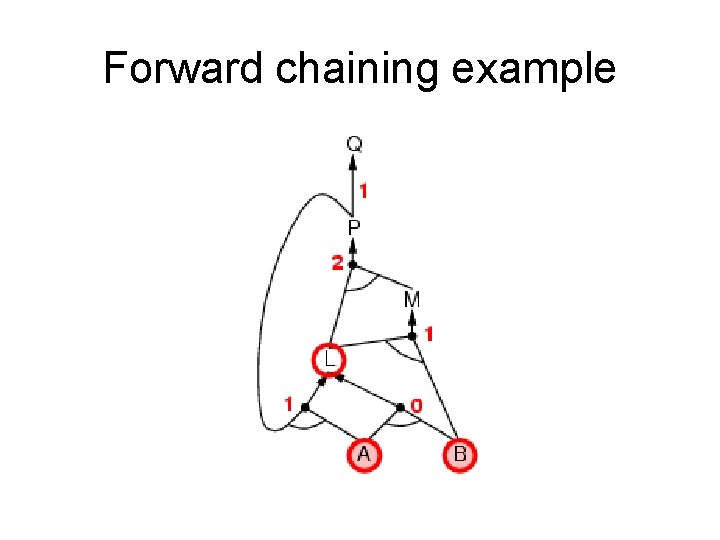

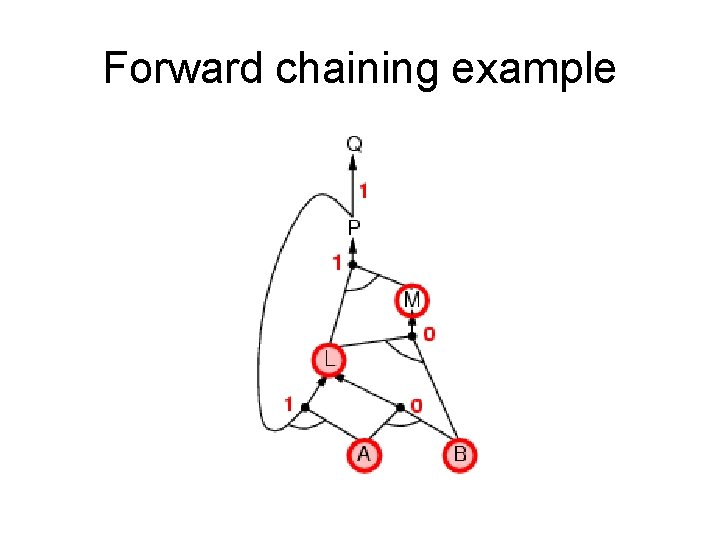

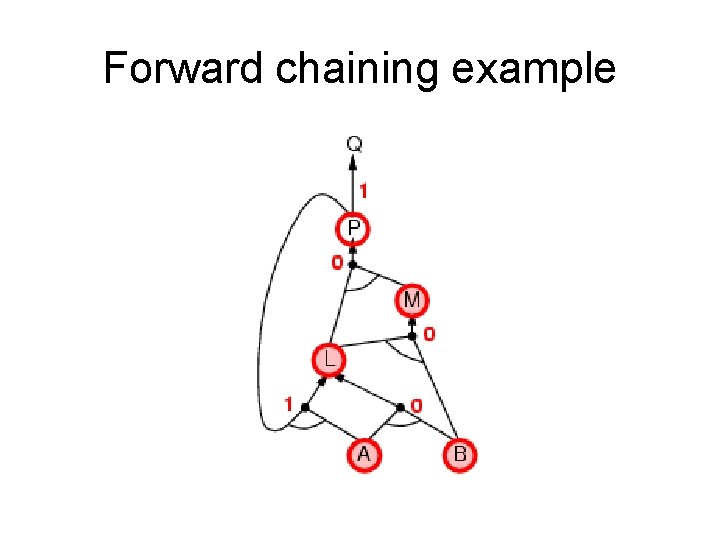

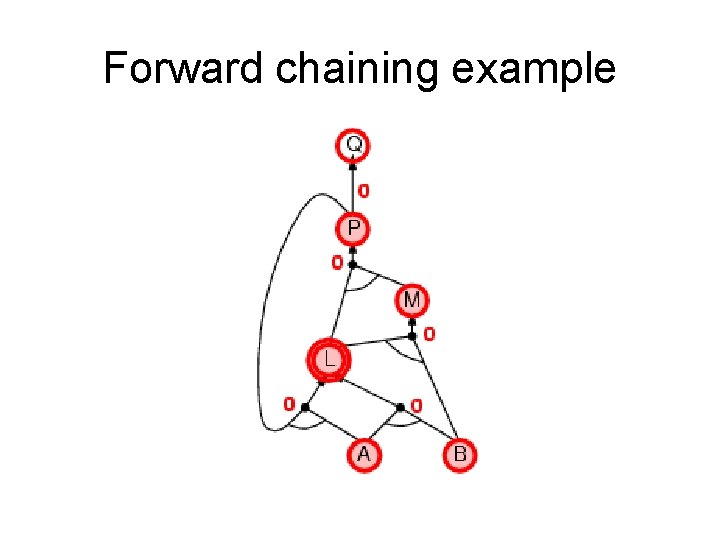

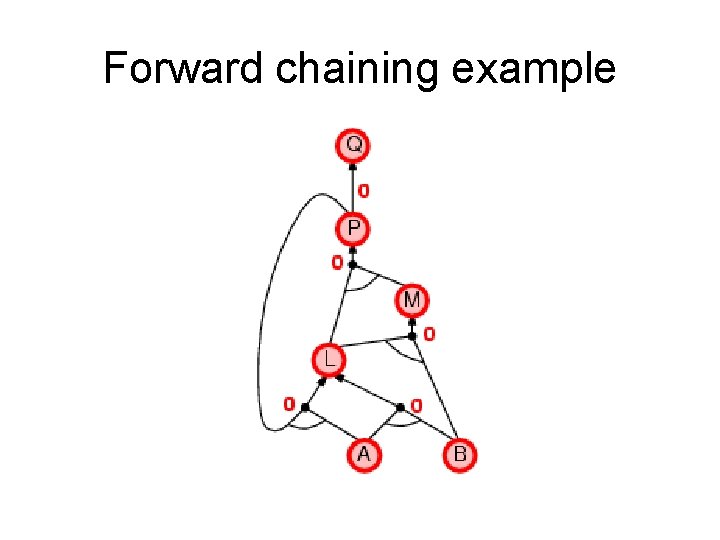

Forward chaining • Idea: fire any rule whose premises are satisfied in the KB, – add its conclusion to the KB, until query is found AND gate OR gate • Forward chaining is sound and complete for Horn KB

Forward chaining example “OR” Gate “AND” gate

Forward chaining example

Forward chaining example

Forward chaining example

Forward chaining example

Forward chaining example

Forward chaining example

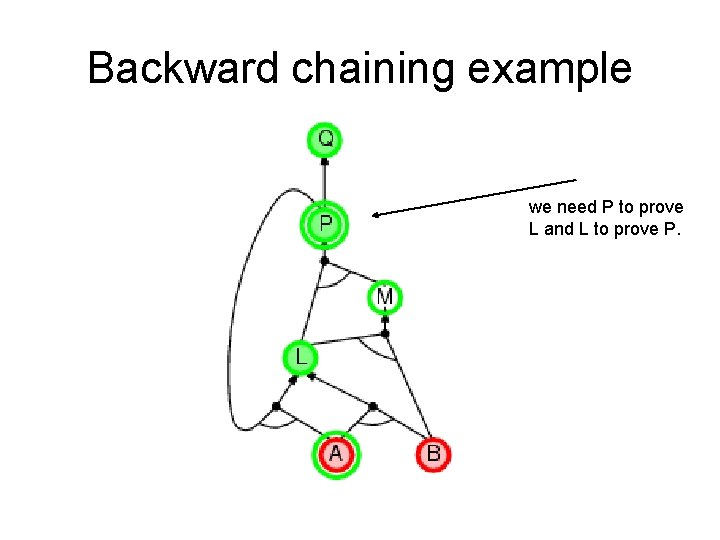

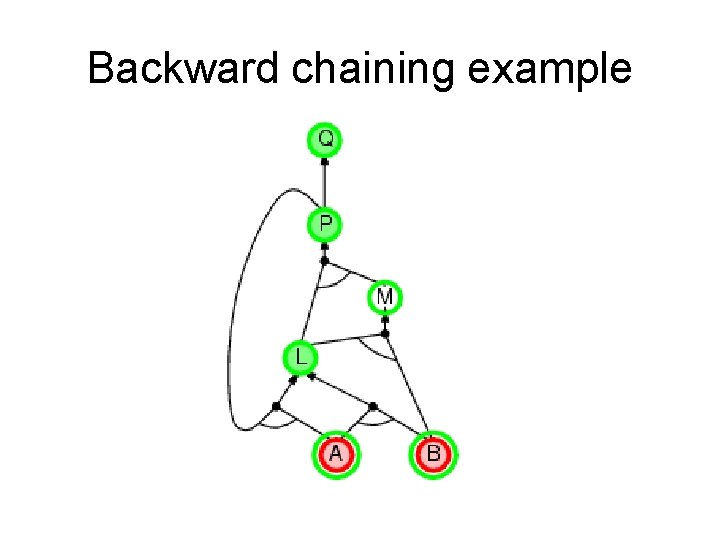

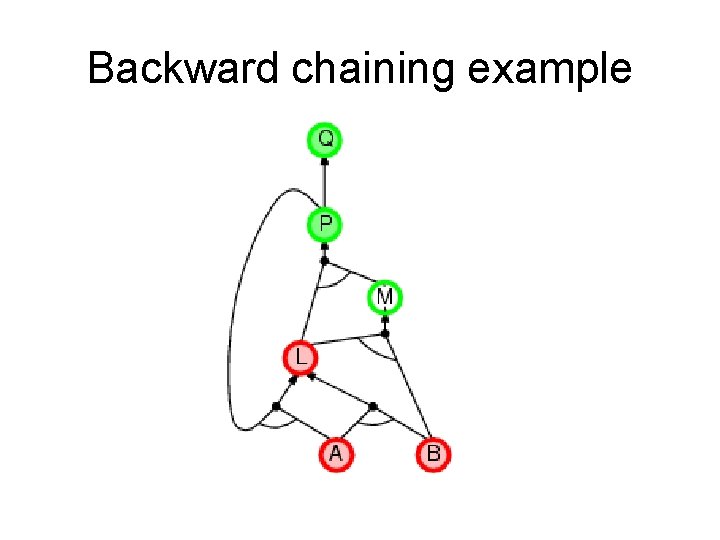

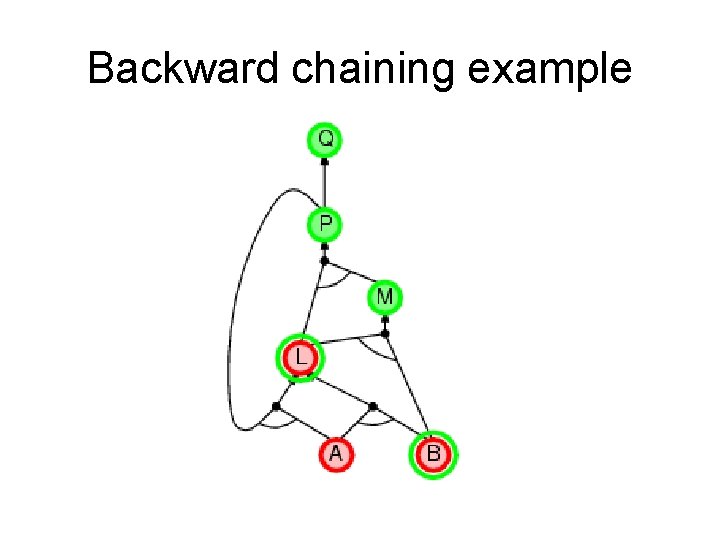

Backward chaining Idea: work backwards from the query q • • • check if q is known already, or prove by BC all premises of some rule concluding q Hence BC maintains a stack of sub-goals that need to be proved to get to q. Avoid loops: check if new sub-goal is already on the goal stack Avoid repeated work: check if new sub-goal 1. has already been proved true, or 2. has already failed

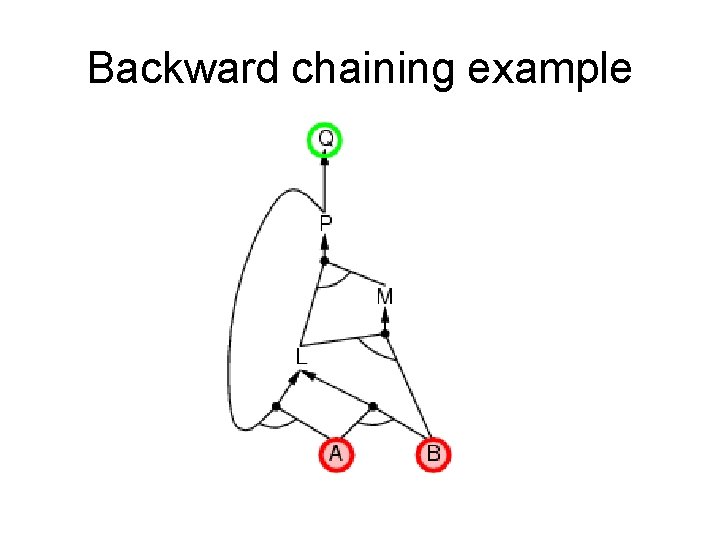

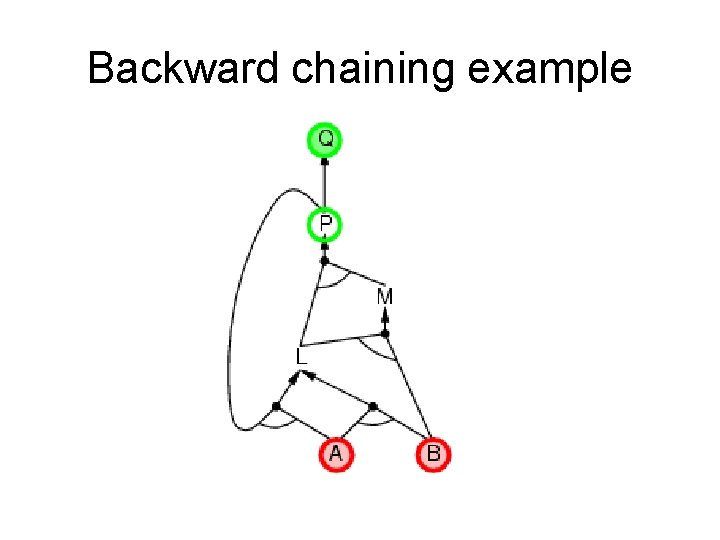

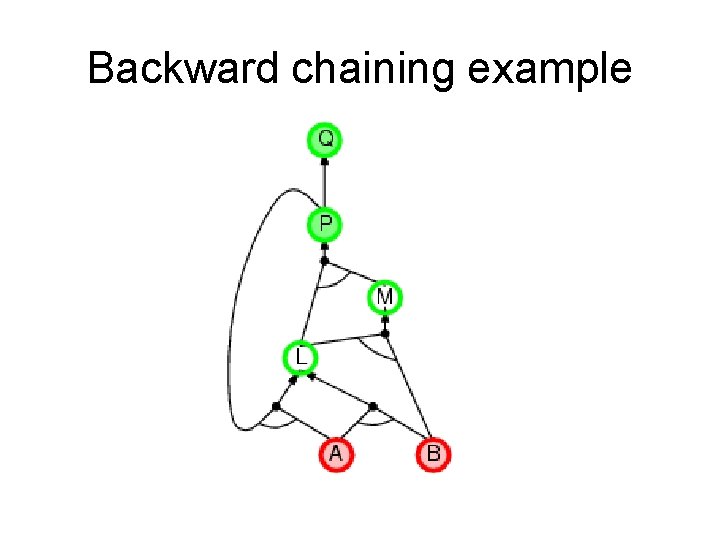

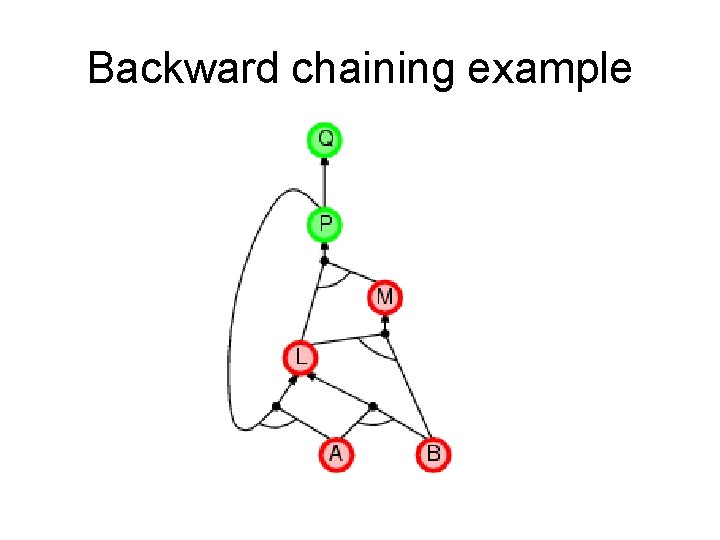

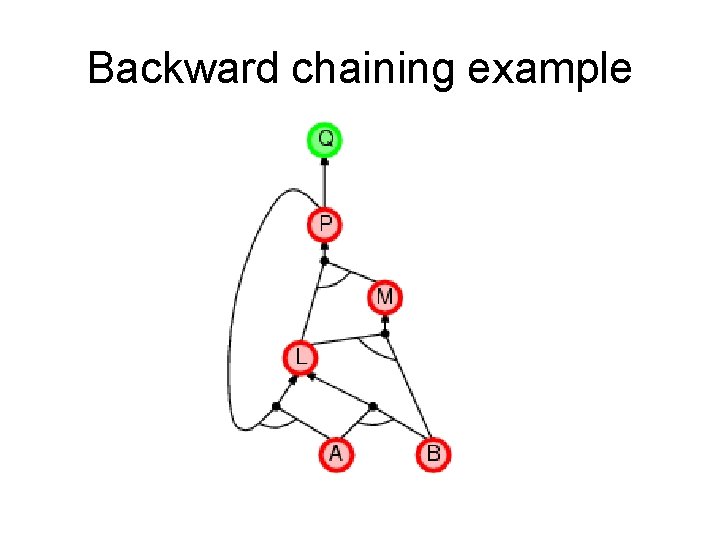

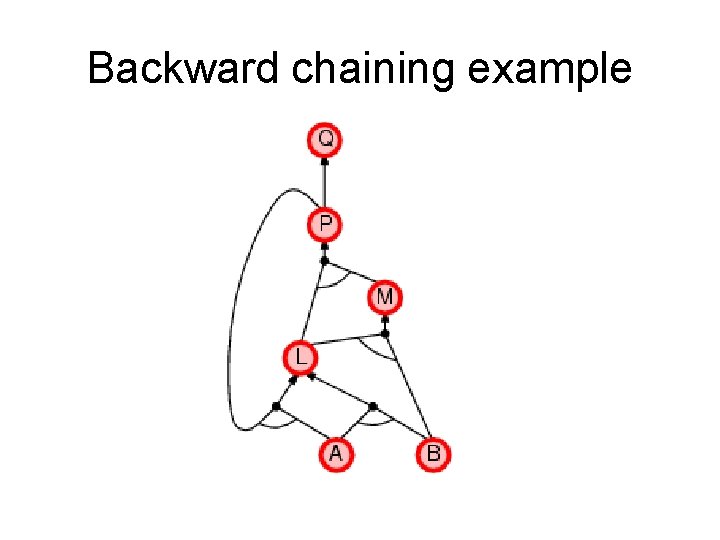

Backward chaining example

Backward chaining example

Backward chaining example

Backward chaining example we need P to prove L and L to prove P.

Backward chaining example

Backward chaining example

Backward chaining example

Backward chaining example

Backward chaining example

Backward chaining example

Forward vs. backward chaining • FC is data-driven, automatic, unconscious processing, – e. g. , object recognition, routine decisions • May do lots of work that is irrelevant to the goal • BC is goal-driven, appropriate for problem-solving, – e. g. , Where are my keys? How do I get into a Ph. D program? • Complexity of BC can be much less than linear in size of KB

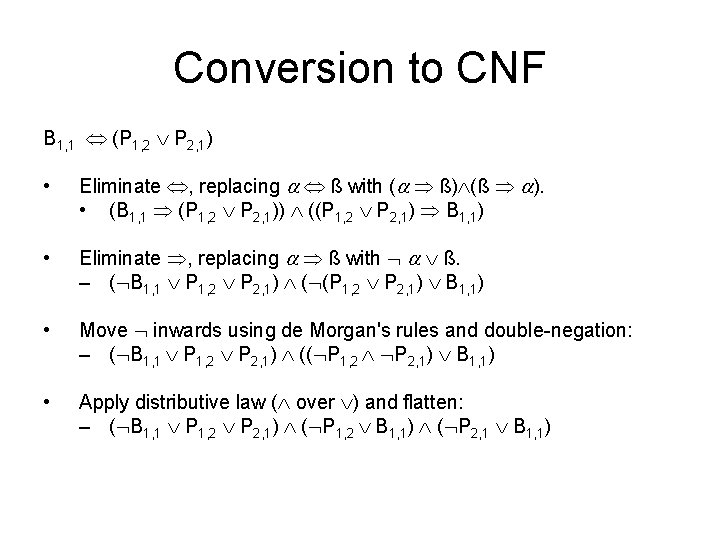

Conversion to CNF B 1, 1 (P 1, 2 P 2, 1) • Eliminate , replacing ß with ( ß) (ß ). • (B 1, 1 (P 1, 2 P 2, 1)) ((P 1, 2 P 2, 1) B 1, 1) • Eliminate , replacing ß with ß. – ( B 1, 1 P 1, 2 P 2, 1) ( (P 1, 2 P 2, 1) B 1, 1) • Move inwards using de Morgan's rules and double-negation: – ( B 1, 1 P 1, 2 P 2, 1) (( P 1, 2 P 2, 1) B 1, 1) • Apply distributive law ( over ) and flatten: – ( B 1, 1 P 1, 2 P 2, 1) ( P 1, 2 B 1, 1) ( P 2, 1 B 1, 1)

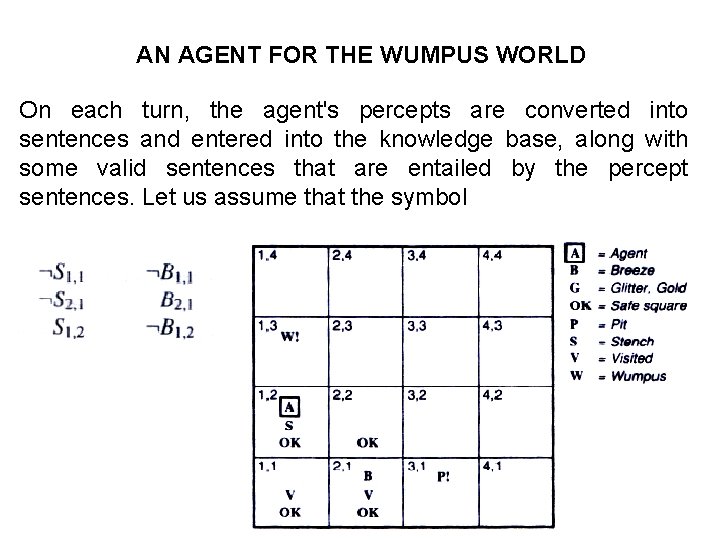

AN AGENT FOR THE WUMPUS WORLD On each turn, the agent's percepts are converted into sentences and entered into the knowledge base, along with some valid sentences that are entailed by the percept sentences. Let us assume that the symbol

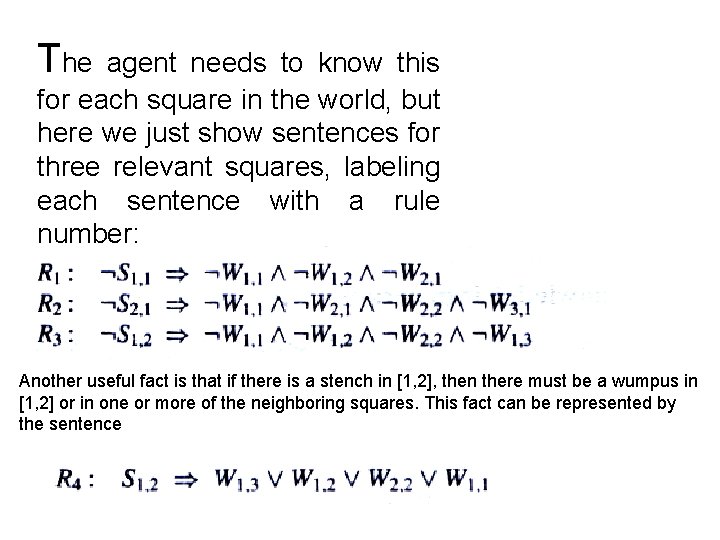

The agent needs to know this for each square in the world, but here we just show sentences for three relevant squares, labeling each sentence with a rule number: Another useful fact is that if there is a stench in [1, 2], then there must be a wumpus in [1, 2] or in one or more of the neighboring squares. This fact can be represented by the sentence

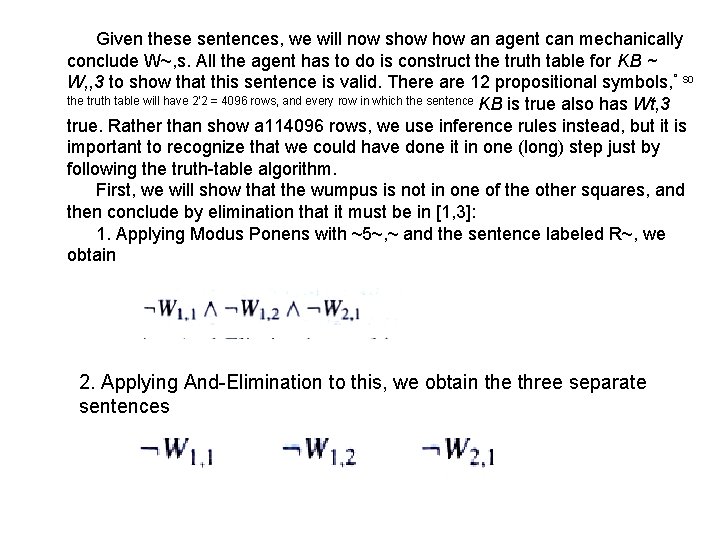

Given these sentences, we will now show an agent can mechanically conclude W~, s. All the agent has to do is construct the truth table for KB ~ W, , 3 to show that this sentence is valid. There are 12 propositional symbols, ° so the truth table will have 2'2 = 4096 rows, and every row in which the sentence KB is true also has Wt, 3 true. Rather than show a 114096 rows, we use inference rules instead, but it is important to recognize that we could have done it in one (long) step just by following the truth-table algorithm. First, we will show that the wumpus is not in one of the other squares, and then conclude by elimination that it must be in [1, 3]: 1. Applying Modus Ponens with ~5~, ~ and the sentence labeled R~, we obtain 2. Applying And-Elimination to this, we obtain the three separate sentences

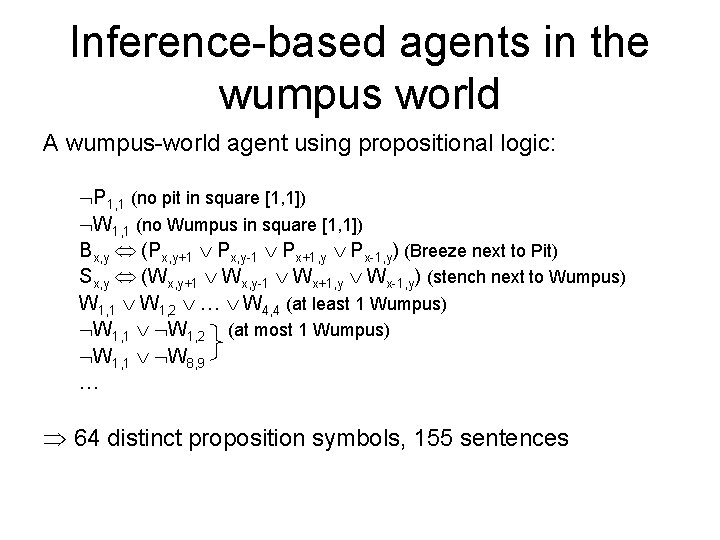

Inference-based agents in the wumpus world A wumpus-world agent using propositional logic: P 1, 1 (no pit in square [1, 1]) W 1, 1 (no Wumpus in square [1, 1]) Bx, y (Px, y+1 Px, y-1 Px+1, y Px-1, y) (Breeze next to Pit) Sx, y (Wx, y+1 Wx, y-1 Wx+1, y Wx-1, y) (stench next to Wumpus) W 1, 1 W 1, 2 … W 4, 4 (at least 1 Wumpus) W 1, 1 W 1, 2 (at most 1 Wumpus) W 1, 1 W 8, 9 … 64 distinct proposition symbols, 155 sentences

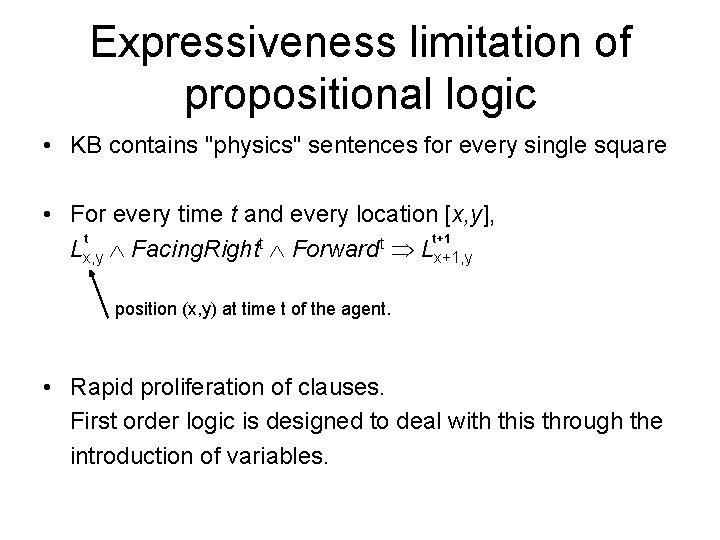

Expressiveness limitation of propositional logic • KB contains "physics" sentences for every single square • For every time t and every location [x, y], t t+1 t t Lx, y Facing. Right Forward Lx+1, y position (x, y) at time t of the agent. • Rapid proliferation of clauses. First order logic is designed to deal with this through the introduction of variables.

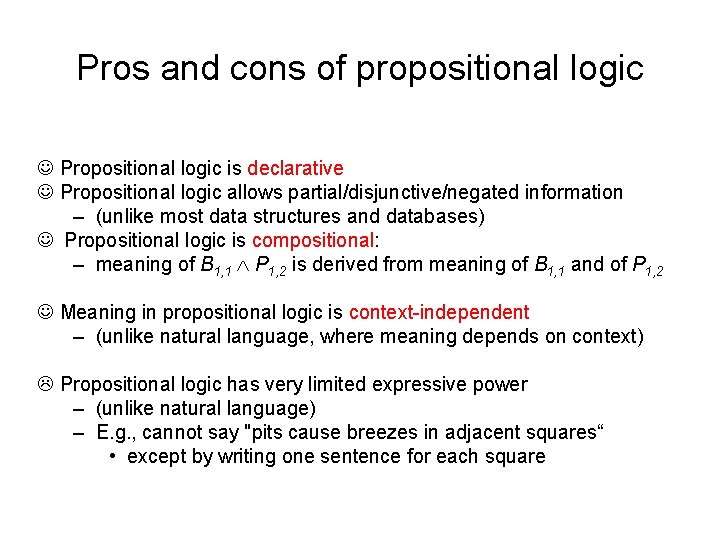

Pros and cons of propositional logic Propositional logic is declarative Propositional logic allows partial/disjunctive/negated information – (unlike most data structures and databases) Propositional logic is compositional: – meaning of B 1, 1 P 1, 2 is derived from meaning of B 1, 1 and of P 1, 2 Meaning in propositional logic is context-independent – (unlike natural language, where meaning depends on context) Propositional logic has very limited expressive power – (unlike natural language) – E. g. , cannot say "pits cause breezes in adjacent squares“ • except by writing one sentence for each square

Propositional logic is too weak a representational language - Too many propositions to handle, and truth table has 2 n rows. E. g. in the wumpus world, the simple rule “don’t go forward if the wumpus is in front of you” requires 64 rules ( 16 squares x 4 orientations for agent) - Hard to deal with change. Propositions might be true at times but not at others. Need a proposition Pit for each time step because one should not always forget what held in the past (e. g. where the agent came from) - don’t know # time steps - need time-dependent versions of rules - Hard to identify “individuals”, e. g. Mary, 3 - Cannot directly talk about properties of individuals or relations between individuals, e. g. Tall(bill) - Generalizations, patterns cannot easily be represented “all triangles have 3 sides. ”

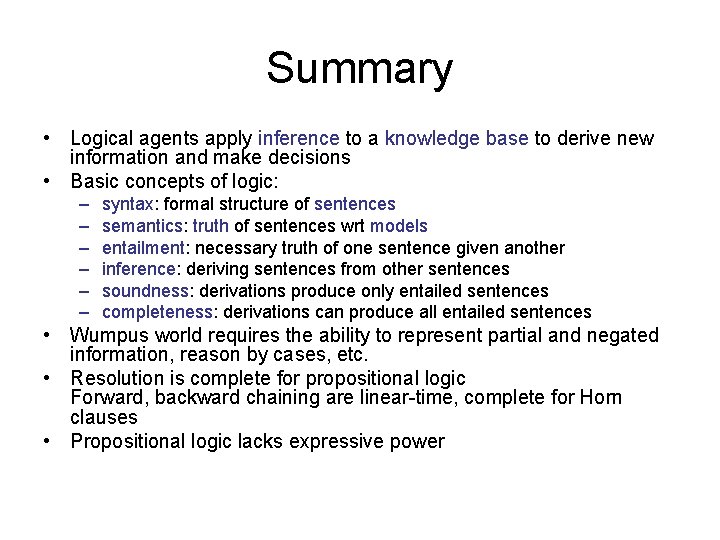

Summary • Logical agents apply inference to a knowledge base to derive new information and make decisions • Basic concepts of logic: – – – syntax: formal structure of sentences semantics: truth of sentences wrt models entailment: necessary truth of one sentence given another inference: deriving sentences from other sentences soundness: derivations produce only entailed sentences completeness: derivations can produce all entailed sentences • Wumpus world requires the ability to represent partial and negated information, reason by cases, etc. • Resolution is complete for propositional logic Forward, backward chaining are linear-time, complete for Horn clauses • Propositional logic lacks expressive power

- Slides: 177