
Localization (2)

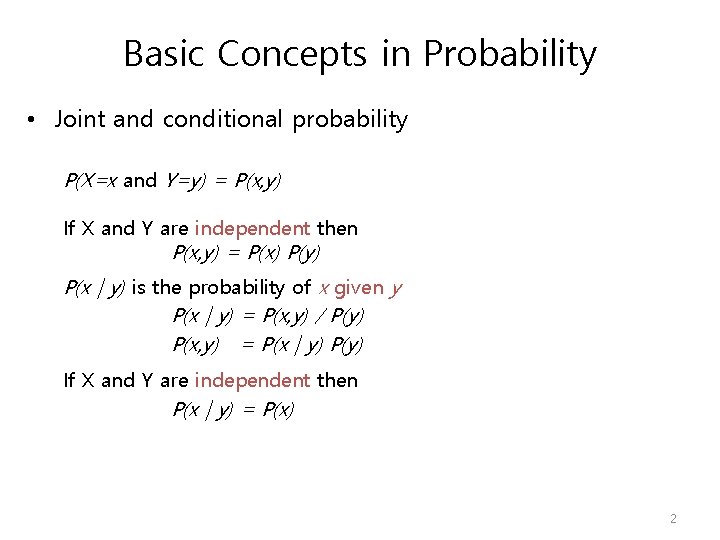

Basic Concepts in Probability

• Joint and conditional probability
  – P(X=x and Y=y) = P(x, y)
  – If X and Y are independent, then P(x, y) = P(x) P(y)
  – P(x | y) is the probability of x given y
  – P(x | y) = P(x, y) / P(y)
  – P(x, y) = P(x | y) P(y)
  – If X and Y are independent, then P(x | y) = P(x)

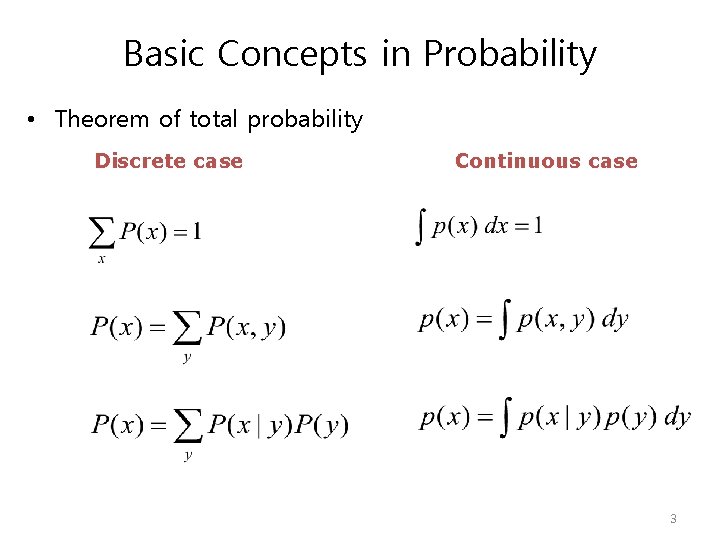

Basic Concepts in Probability

• Theorem of total probability
  – Discrete case
  – Continuous case
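The two cases named on this slide can be written out as follows. This is the standard textbook statement of the theorem, using the same P(x | y) notation as the previous slide (lowercase p denotes a density in the continuous case); it is not copied from the slide image.

$$
P(x) = \sum_{y} P(x \mid y)\, P(y) \qquad \text{(discrete case)}
$$

$$
p(x) = \int p(x \mid y)\, p(y)\, dy \qquad \text{(continuous case)}
$$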

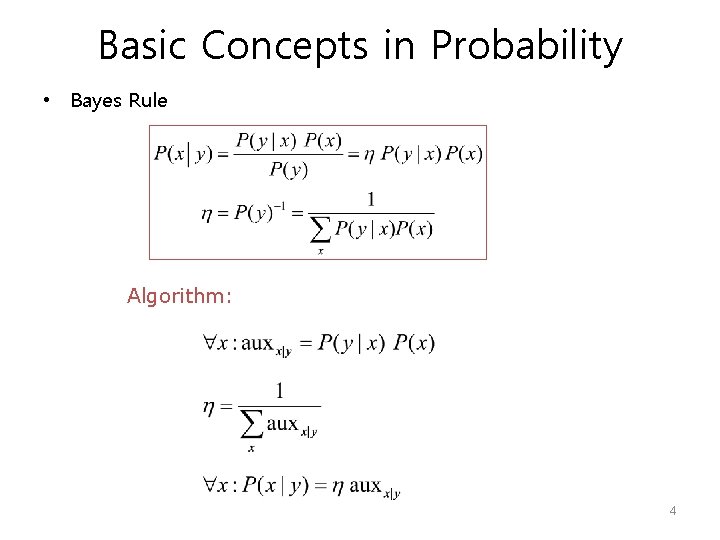

Basic Concepts in Probability

• Bayes rule
• Algorithm
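Bayes rule states P(x | y) = P(y | x) P(x) / P(y) = η P(y | x) P(x), where η = 1 / P(y) is a normalizer. A minimal sketch of the usual normalization algorithm is given below, assuming a discrete state. The function name `bayes_rule`, the state labels, and the numbers are hypothetical and chosen only for illustration.

```python
def bayes_rule(prior, likelihood):
    """Posterior P(x | y) from a prior P(x) and a likelihood P(y | x) for one observed y.

    Both arguments are dicts keyed by the discrete state x.
    """
    # Unnormalized posterior: aux(x) = P(y | x) * P(x)
    aux = {x: likelihood[x] * prior[x] for x in prior}
    # Normalizer eta = 1 / P(y), with P(y) obtained by total probability
    eta = 1.0 / sum(aux.values())
    return {x: eta * a for x, a in aux.items()}


# Hypothetical two-state example
prior = {"x1": 0.3, "x2": 0.7}
likelihood = {"x1": 0.9, "x2": 0.2}  # P(y | x) for the observed y
posterior = bayes_rule(prior, likelihood)
print(posterior)
```

Computing η from the sum of the unnormalized terms avoids having to know P(y) in advance.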

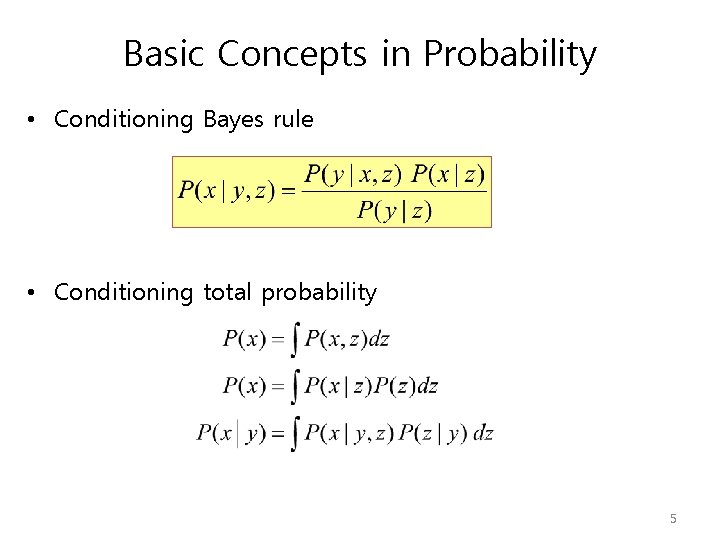

Basic Concepts in Probability

• Conditioning Bayes rule
• Conditioning total probability
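Conditioning both rules on an additional variable z gives the forms below. These are the standard identities, written here as a sketch in the notation of the earlier slides rather than transcribed from the slide image.

$$
P(x \mid y, z) = \frac{P(y \mid x, z)\, P(x \mid z)}{P(y \mid z)}
$$

$$
P(x \mid y) = \int P(x \mid y, z)\, P(z \mid y)\, dz
$$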

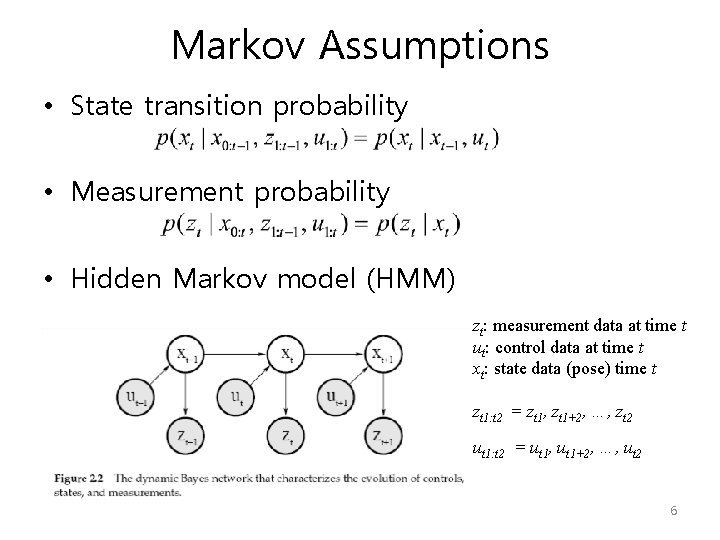

Markov Assumptions

• State transition probability
• Measurement probability
• Hidden Markov model (HMM)

z_t: measurement data at time t
u_t: control data at time t
x_t: state data (pose) at time t
z_{t1:t2} = z_{t1}, z_{t1+1}, z_{t1+2}, …, z_{t2}
u_{t1:t2} = u_{t1}, u_{t1+1}, u_{t1+2}, …, u_{t2}
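With this notation, the two Markov assumptions are commonly stated as below: the current state depends only on the previous state and the current control, and the current measurement depends only on the current state. This is the standard formulation and is assumed here, since the slide equations are not in the extracted text.

$$
p(x_t \mid x_{0:t-1}, z_{1:t-1}, u_{1:t}) = p(x_t \mid u_t, x_{t-1}) \qquad \text{(state transition probability)}
$$

$$
p(z_t \mid x_{0:t}, z_{1:t-1}, u_{1:t}) = p(z_t \mid x_t) \qquad \text{(measurement probability)}
$$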

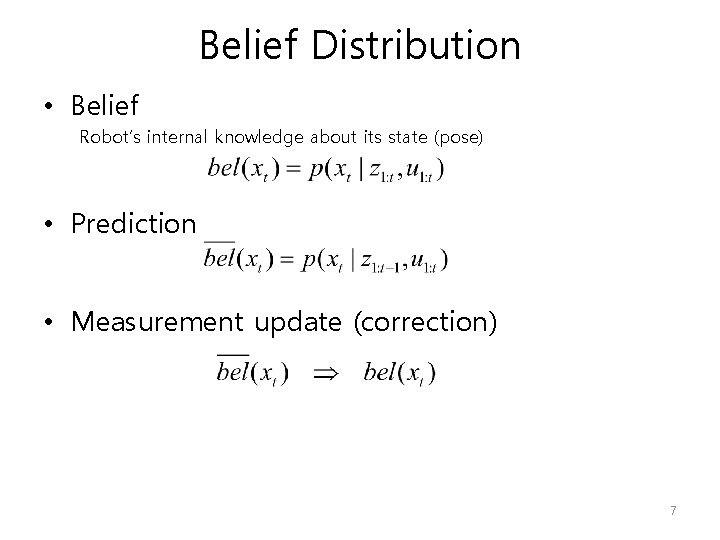

Belief Distribution

• Belief
  – The robot’s internal knowledge about its state (pose)
• Prediction
• Measurement update (correction)
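In the usual notation, the three quantities listed above are defined as follows. The overline marks the belief before the measurement z_t is incorporated, and η is a normalizer. These definitions are the standard ones and are an assumption about what the slide shows.

$$
bel(x_t) = p(x_t \mid z_{1:t}, u_{1:t})
$$

$$
\overline{bel}(x_t) = p(x_t \mid z_{1:t-1}, u_{1:t}) \qquad \text{(prediction)}
$$

$$
bel(x_t) = \eta\, p(z_t \mid x_t)\, \overline{bel}(x_t) \qquad \text{(measurement update)}
$$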

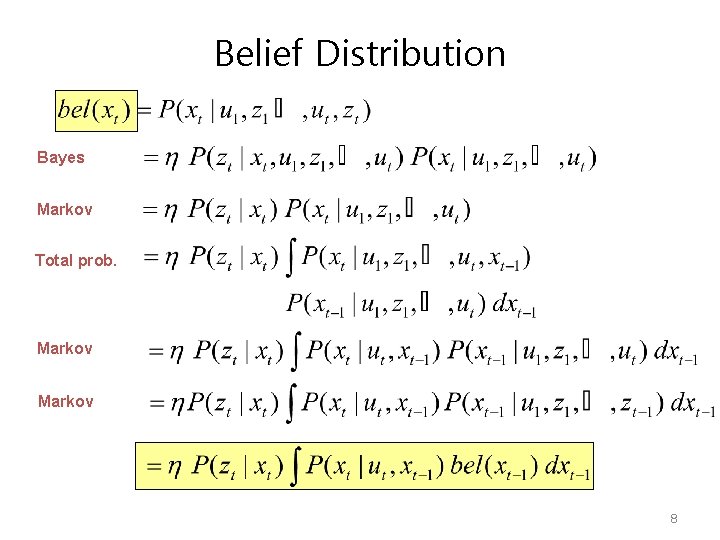

Belief Distribution

Derivation steps: Bayes → Markov → Total prob. → Markov
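The four step labels correspond to the standard derivation of the recursive belief update, sketched below. Each line is annotated with the rule used to obtain it; the derivation follows the usual textbook form and is assumed to match the slide image.

$$
\begin{aligned}
bel(x_t) &= p(x_t \mid z_{1:t}, u_{1:t}) \\
&= \eta\, p(z_t \mid x_t, z_{1:t-1}, u_{1:t})\, p(x_t \mid z_{1:t-1}, u_{1:t}) && \text{(Bayes)} \\
&= \eta\, p(z_t \mid x_t)\, p(x_t \mid z_{1:t-1}, u_{1:t}) && \text{(Markov)} \\
&= \eta\, p(z_t \mid x_t) \int p(x_t \mid x_{t-1}, z_{1:t-1}, u_{1:t})\, p(x_{t-1} \mid z_{1:t-1}, u_{1:t})\, dx_{t-1} && \text{(Total prob.)} \\
&= \eta\, p(z_t \mid x_t) \int p(x_t \mid u_t, x_{t-1})\, p(x_{t-1} \mid z_{1:t-1}, u_{1:t-1})\, dx_{t-1} && \text{(Markov)} \\
&= \eta\, p(z_t \mid x_t) \int p(x_t \mid u_t, x_{t-1})\, bel(x_{t-1})\, dx_{t-1}
\end{aligned}
$$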

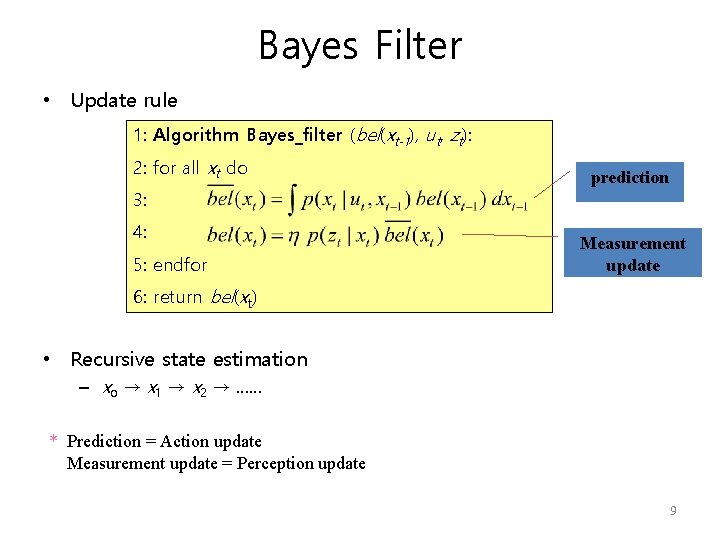

Bayes Filter

• Update rule

    Algorithm Bayes_filter(bel(x_{t-1}), u_t, z_t):
        for all x_t do
            bel_bar(x_t) = ∫ p(x_t | u_t, x_{t-1}) bel(x_{t-1}) dx_{t-1}    (prediction)
            bel(x_t) = η p(z_t | x_t) bel_bar(x_t)                          (measurement update)
        endfor
        return bel(x_t)

• Recursive state estimation
  – x_0 → x_1 → x_2 → …

* Prediction = action update; measurement update = perception update

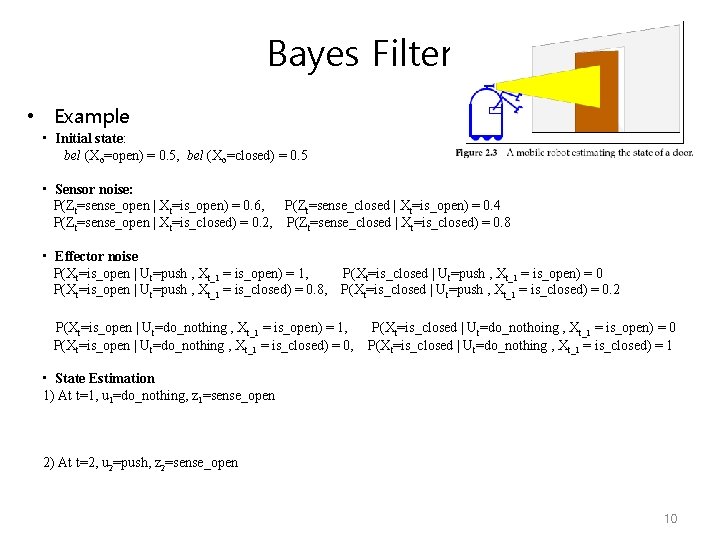

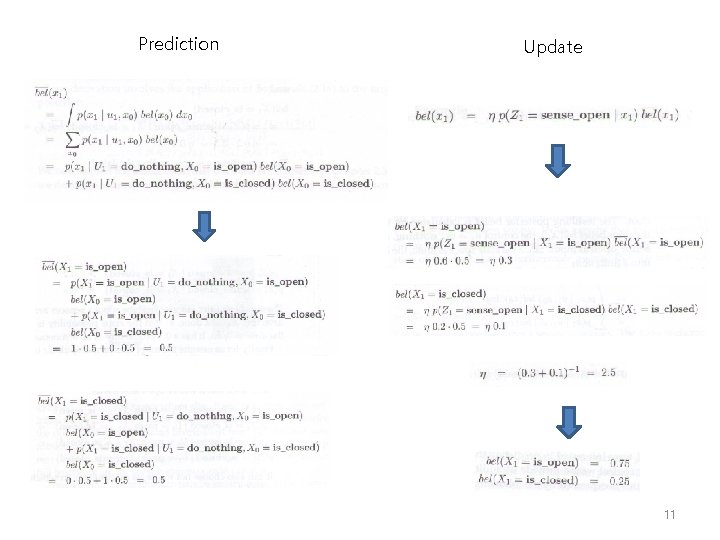

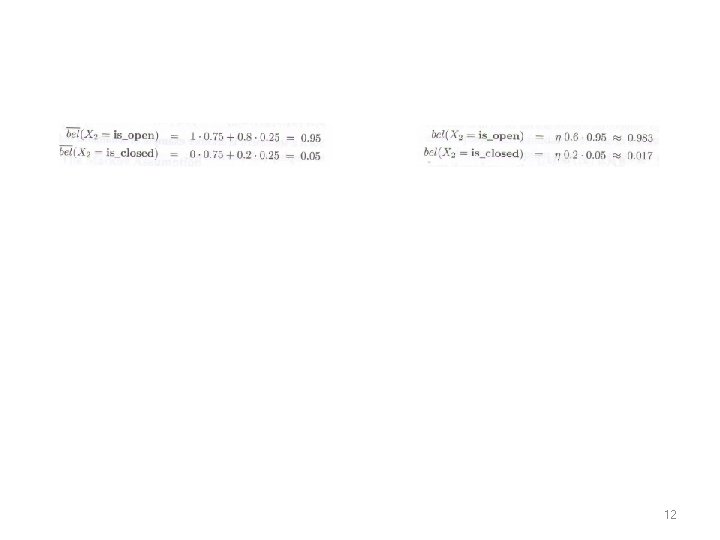

Bayes Filter

• Example
• Initial state
  – bel(X_0=open) = 0.5, bel(X_0=closed) = 0.5
• Sensor noise
  – P(Z_t=sense_open | X_t=is_open) = 0.6, P(Z_t=sense_closed | X_t=is_open) = 0.4
  – P(Z_t=sense_open | X_t=is_closed) = 0.2, P(Z_t=sense_closed | X_t=is_closed) = 0.8
• Effector noise
  – P(X_t=is_open | U_t=push, X_{t-1}=is_open) = 1, P(X_t=is_closed | U_t=push, X_{t-1}=is_open) = 0
  – P(X_t=is_open | U_t=push, X_{t-1}=is_closed) = 0.8, P(X_t=is_closed | U_t=push, X_{t-1}=is_closed) = 0.2
  – P(X_t=is_open | U_t=do_nothing, X_{t-1}=is_open) = 1, P(X_t=is_closed | U_t=do_nothing, X_{t-1}=is_open) = 0
  – P(X_t=is_open | U_t=do_nothing, X_{t-1}=is_closed) = 0, P(X_t=is_closed | U_t=do_nothing, X_{t-1}=is_closed) = 1
• State estimation
  1) At t=1, u_1=do_nothing, z_1=sense_open
  2) At t=2, u_2=push, z_2=sense_open
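The door example can be run as a small discrete Bayes filter. The sketch below takes its probabilities from the slide above; the table layout (`SENSOR`, `MOTION`) and the function name `bayes_filter` are illustrative choices, and the integral in the update rule becomes a sum because the state is discrete.

```python
STATES = ("is_open", "is_closed")

# Sensor model P(z | x), indexed as SENSOR[z][x]
SENSOR = {
    "sense_open":   {"is_open": 0.6, "is_closed": 0.2},
    "sense_closed": {"is_open": 0.4, "is_closed": 0.8},
}

# Motion model P(x | u, x_prev), indexed as MOTION[u][x_prev][x]
MOTION = {
    "push": {
        "is_open":   {"is_open": 1.0, "is_closed": 0.0},
        "is_closed": {"is_open": 0.8, "is_closed": 0.2},
    },
    "do_nothing": {
        "is_open":   {"is_open": 1.0, "is_closed": 0.0},
        "is_closed": {"is_open": 0.0, "is_closed": 1.0},
    },
}


def bayes_filter(bel, u, z):
    """One filter step: prediction with control u, then update with measurement z."""
    # Prediction: bel_bar(x) = sum over x_prev of P(x | u, x_prev) * bel(x_prev)
    bel_bar = {x: sum(MOTION[u][xp][x] * bel[xp] for xp in STATES) for x in STATES}
    # Measurement update: bel(x) = eta * P(z | x) * bel_bar(x)
    unnorm = {x: SENSOR[z][x] * bel_bar[x] for x in STATES}
    eta = 1.0 / sum(unnorm.values())
    return {x: eta * p for x, p in unnorm.items()}


bel0 = {"is_open": 0.5, "is_closed": 0.5}
bel1 = bayes_filter(bel0, "do_nothing", "sense_open")
bel2 = bayes_filter(bel1, "push", "sense_open")
print(bel1)  # is_open ≈ 0.75, is_closed ≈ 0.25
print(bel2)  # is_open ≈ 0.983, is_closed ≈ 0.017
```

Feeding `bel1` back in as the prior for the second step is the recursive part of the estimator.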

Prediction and update
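The hand calculation for the two time steps of the door example can be reconstructed as follows, using only the probabilities given in the example. The overline marks the predicted belief and η is the normalizer; the arrangement of the steps is a sketch, not a transcription of the slide.

$$
\begin{aligned}
t=1,\ u_1=\text{do\_nothing}: \quad
\overline{bel}(X_1=\text{open}) &= 1 \cdot 0.5 + 0 \cdot 0.5 = 0.5 \\
\overline{bel}(X_1=\text{closed}) &= 0 \cdot 0.5 + 1 \cdot 0.5 = 0.5 \\
z_1=\text{sense\_open}: \quad
bel(X_1=\text{open}) &= \eta \cdot 0.6 \cdot 0.5 = 0.3\,\eta \\
bel(X_1=\text{closed}) &= \eta \cdot 0.2 \cdot 0.5 = 0.1\,\eta \\
\eta = 1/(0.3 + 0.1) = 2.5 \ \Rightarrow\ bel(X_1=\text{open}) &= 0.75, \quad bel(X_1=\text{closed}) = 0.25 \\[6pt]
t=2,\ u_2=\text{push}: \quad
\overline{bel}(X_2=\text{open}) &= 1 \cdot 0.75 + 0.8 \cdot 0.25 = 0.95 \\
\overline{bel}(X_2=\text{closed}) &= 0 \cdot 0.75 + 0.2 \cdot 0.25 = 0.05 \\
z_2=\text{sense\_open}: \quad
bel(X_2=\text{open}) &= \eta \cdot 0.6 \cdot 0.95 = 0.57\,\eta \\
bel(X_2=\text{closed}) &= \eta \cdot 0.2 \cdot 0.05 = 0.01\,\eta \\
\eta = 1/(0.57 + 0.01) \ \Rightarrow\ bel(X_2=\text{open}) &\approx 0.983, \quad bel(X_2=\text{closed}) \approx 0.017
\end{aligned}
$$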

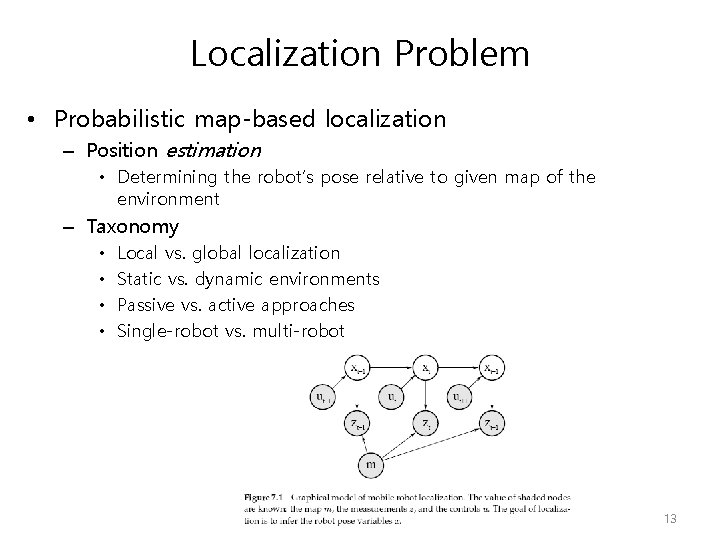

Localization Problem

• Probabilistic map-based localization
  – Position estimation
    • Determining the robot’s pose relative to a given map of the environment
  – Taxonomy
    • Local vs. global localization
    • Static vs. dynamic environments
    • Passive vs. active approaches
    • Single-robot vs. multi-robot

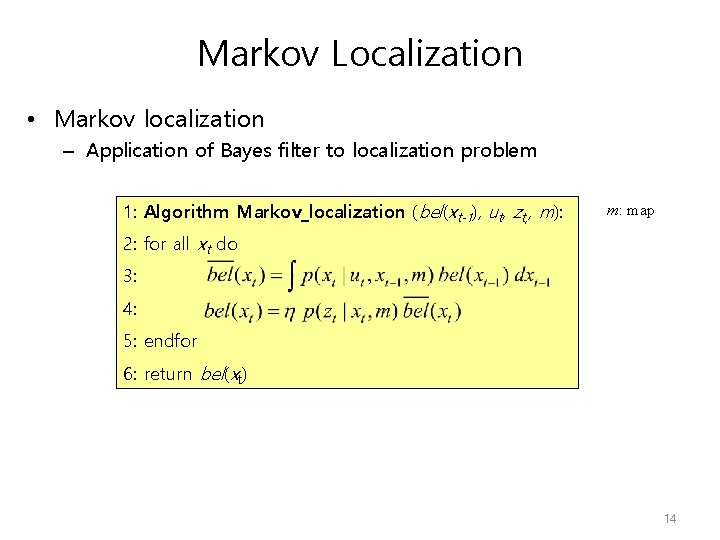

Markov Localization

• Markov localization
  – Application of the Bayes filter to the localization problem

    Algorithm Markov_localization(bel(x_{t-1}), u_t, z_t, m):    (m: map)
        for all x_t do
            bel_bar(x_t) = ∫ p(x_t | u_t, x_{t-1}, m) bel(x_{t-1}) dx_{t-1}
            bel(x_t) = η p(z_t | x_t, m) bel_bar(x_t)
        endfor
        return bel(x_t)
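A minimal sketch of Markov localization on a one-dimensional grid map may help make the algorithm concrete. Everything specific here is an assumption for illustration: the cyclic five-cell corridor `world`, the motion model (the commanded shift succeeds with probability `p_move`, otherwise the robot stays put), and the door/wall sensor that reports the correct cell type with probability `p_correct`.

```python
def markov_localization(bel, u, z, world, p_move=0.8, p_correct=0.8):
    """One Markov localization step on a cyclic 1-D grid map.

    bel   : list of probabilities, one per grid cell
    u     : commanded shift in cells (integer)
    z     : measured cell type, e.g. "door" or "wall"
    world : the map m, a list giving the true type of each cell
    """
    n = len(bel)
    # Prediction: the move succeeds with p_move, otherwise the robot stays in place
    bel_bar = [p_move * bel[(i - u) % n] + (1.0 - p_move) * bel[i] for i in range(n)]
    # Measurement update: weight each cell by P(z | x, m), then normalize
    unnorm = [
        (p_correct if world[i] == z else 1.0 - p_correct) * bel_bar[i]
        for i in range(n)
    ]
    eta = 1.0 / sum(unnorm)
    return [eta * w for w in unnorm]


world = ["door", "wall", "door", "wall", "wall"]  # hypothetical map
bel = [1.0 / len(world)] * len(world)             # global localization: uniform prior

bel = markov_localization(bel, u=0, z="door", world=world)
bel = markov_localization(bel, u=1, z="wall", world=world)
print([round(p, 3) for p in bel])
```

After these two steps the belief has two equally likely peaks, at the cells directly after each door, which is the multi-hypothesis behaviour that Markov localization is designed to represent.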

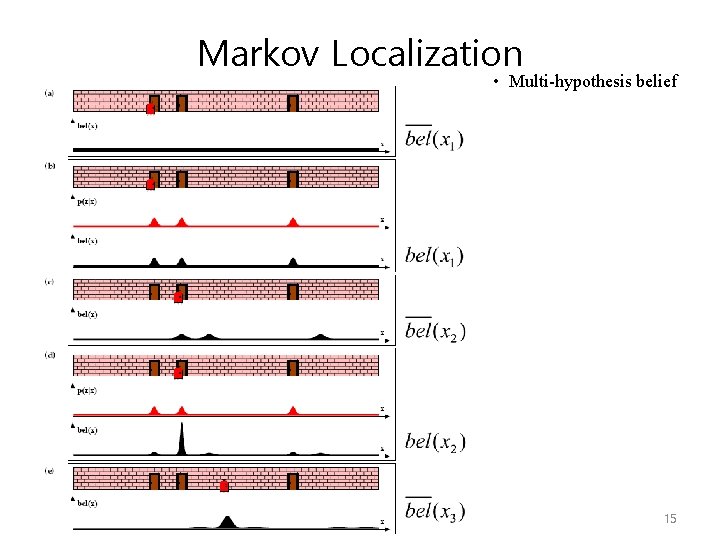

Markov Localization

• Multi-hypothesis belief

Markov Localization

• Advantages
  – Multi-hypothesis belief
    • Can recover from highly uncertain situations
    • Can start from any unknown position
• Disadvantages
  – Must update the probability of all positions within the whole state space at every time step
    • Requires a discrete representation of the space
      – Topological map
      – Grid map
    • Memory and computational power may limit the precision and map size

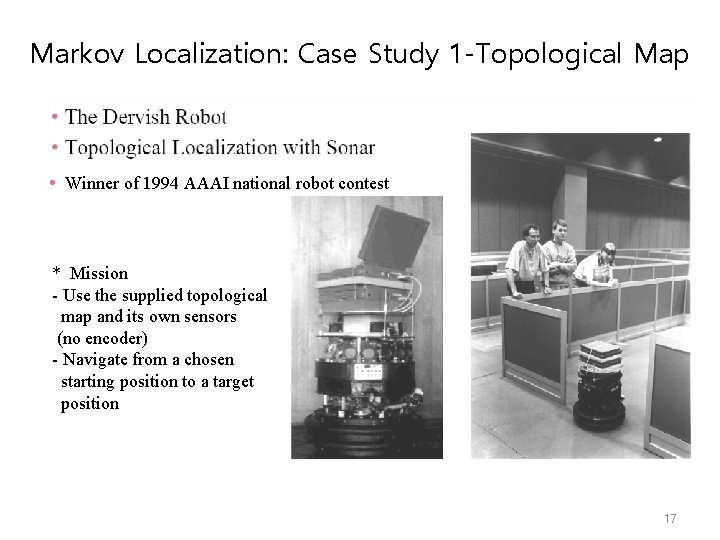

Markov Localization: Case Study 1 - Topological Map

• Winner of the 1994 AAAI national robot contest

* Mission
  - Use the supplied topological map and its own sensors (no encoder)
  - Navigate from a chosen starting position to a target position

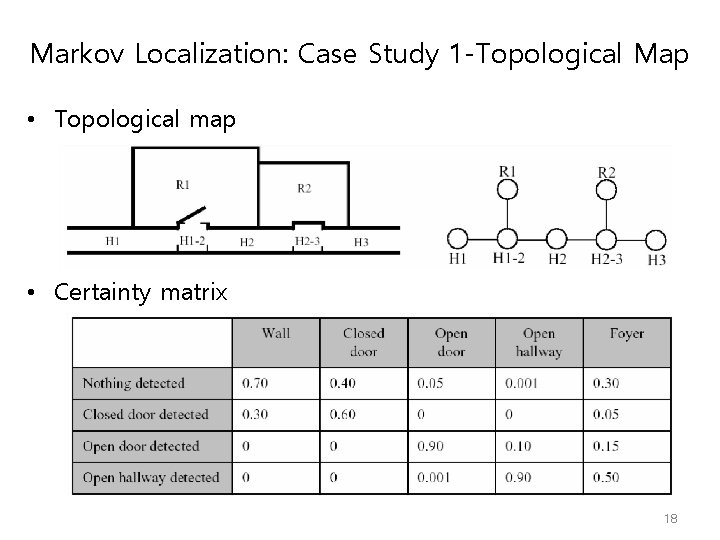

Markov Localization: Case Study 1 - Topological Map

• Topological map
• Certainty matrix

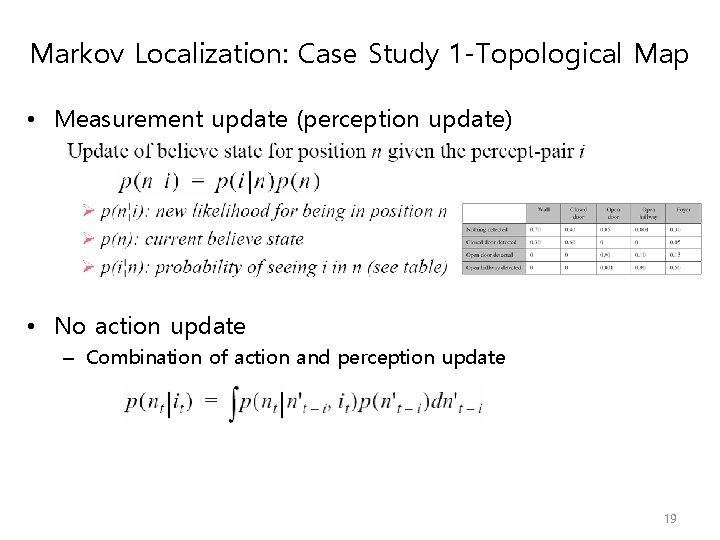

Markov Localization: Case Study 1 - Topological Map

• Measurement update (perception update)
• No action update
  – Combination of action and perception update

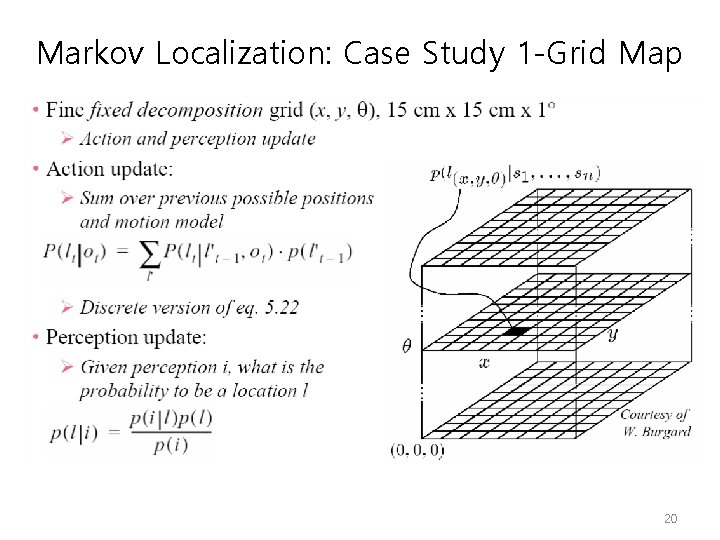

Markov Localization: Case Study 1 - Grid Map
