Locality vs. Criticality
Hima Bindu Alwal, Pranathi Boddu

Motivation
- To identify and exploit instruction-level parallelism, most of today's processors employ techniques such as dynamic scheduling, branch prediction, and speculative execution.
- These techniques are very effective for well-behaved programs with short-latency operations. However, long-latency operations, such as load instructions, can reduce their effectiveness for the following reasons:
  1. Data dependencies
  2. Finite resources
  3. Branch mispredictions

Introduction
- Caches work by exploiting locality, both temporal and spatial.
- Recent research shows that not all memory accesses are equal. Loads that must complete early to avoid performance degradation are critical, and those that can tolerate long latencies are non-critical.
- It may be possible to improve overall performance by decreasing the latency of critical loads at the expense of increased latency for non-critical loads.

Critical Load Classification
- A load is considered critical if:
  1. The load feeds into a mispredicted branch.
  2. The load feeds into another load that incurs an L1 cache miss.
  3. The number of independent instructions issued in an N-cycle window following the load is below a threshold.
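The three criteria can be sketched as a single predicate. This is only an illustration: it assumes that any one condition is enough to mark a load critical, and the names `LoadInfo`, `is_critical` and `issue_threshold` are hypothetical, not taken from the slides or from any simulator.

```python
from dataclasses import dataclass


@dataclass
class LoadInfo:
    """Facts about one dynamic load (hypothetical record, for illustration)."""
    feeds_mispredicted_branch: bool
    feeds_l1_missing_load: bool
    # Independent instructions issued in the N-cycle window after the load.
    independent_issued_in_window: int


def is_critical(load: LoadInfo, issue_threshold: int) -> bool:
    """Assumption: a load is critical if any one of the three criteria holds."""
    return (
        load.feeds_mispredicted_branch
        or load.feeds_l1_missing_load
        or load.independent_issued_in_window < issue_threshold
    )
```

For example, with a threshold of 4, a load followed by only 2 independent issued instructions would be classified as critical even if it feeds neither a mispredicted branch nor a missing load.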

Load Criticality
- To eliminate the discrepancy between latency demands and actual incurred latency, we must:
  1. Identify the critical loads.
  2. Provide a memory hierarchy that satisfies critical loads with minimal latency.

An Implementation
- Load/store queue (LSQ): holds loads and stores in program order and indicates the status of each load/store access:
  1. issued: address computation complete, memory access in progress
  2. completed: memory access has completed, stored value available
  3. squashed: memory access was squashed, ignore this entry
- Register update unit (RUU): an in-order circular queue. Instructions are inserted in fetch (program) order and results are stored in the RUU buffers. When an RUU entry becomes the oldest entry in the machine, its instruction's value is retired to the architectural register file, so retirement also happens in program order.
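The in-order insert and retire behaviour of the RUU can be illustrated with a small circular queue. This is a minimal sketch, not the SimpleScalar implementation: the class `RUU`, its methods and the dictionary-based register file are all hypothetical names chosen for the example.

```python
class RUU:
    """Circular queue with in-order insert and in-order retire (illustrative)."""

    def __init__(self, size):
        self.entries = [None] * size
        self.head = 0    # index of the oldest entry
        self.count = 0   # number of occupied entries

    def insert(self, dest_reg):
        """Allocate the next entry in fetch order; return its index, or None if full."""
        if self.count == len(self.entries):
            return None
        idx = (self.head + self.count) % len(self.entries)
        self.entries[idx] = {"dest": dest_reg, "done": False, "value": None}
        self.count += 1
        return idx

    def complete(self, idx, value):
        """Store a result in the RUU buffer; this may happen out of order."""
        self.entries[idx]["done"] = True
        self.entries[idx]["value"] = value

    def retire(self, regfile):
        """Retire finished entries, oldest first, into the architectural registers."""
        retired = []
        while self.count and self.entries[self.head]["done"]:
            entry = self.entries[self.head]
            regfile[entry["dest"]] = entry["value"]
            retired.append(entry["dest"])
            self.entries[self.head] = None
            self.head = (self.head + 1) % len(self.entries)
            self.count -= 1
        return retired
```

In this sketch a younger instruction that finishes early waits in the queue until every older entry has completed, which is what keeps retirement in program order.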

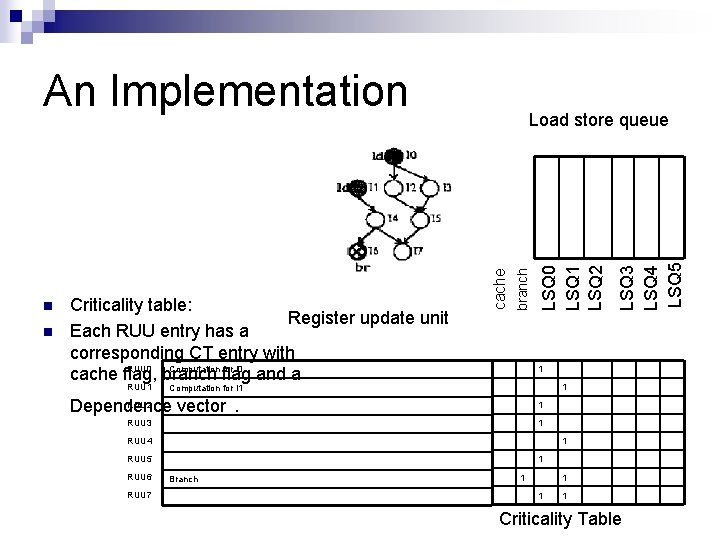

An Implementation
[Figure: register update unit (RUU 0 to RUU 7), load/store queue (LSQ 0 to LSQ 5) and criticality table, with a dependence vector and the computations for I0 and I1 and a branch marked.]
- Criticality table (CT): each RUU entry has a corresponding CT entry with a cache flag, a branch flag and a cache [...]
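One way such a table could work is sketched below. The slide only states that each RUU entry has a CT entry with a cache flag and a branch flag, and that dependence vectors are involved, so everything else here is an assumption: the class `CriticalityTable`, the representation of a dependence vector as a set of RUU indices of earlier loads, and the rule that a mispredicted branch or an L1-missing load marks the loads in its dependence vector.

```python
class CriticalityTable:
    """One CT entry per RUU entry (hypothetical layout, for illustration only)."""

    def __init__(self, num_entries):
        self.branch_flag = [False] * num_entries
        self.cache_flag = [False] * num_entries
        self.is_load = [False] * num_entries
        # Assumed dependence vector: indices of the loads an entry depends on.
        self.dep_vector = [set() for _ in range(num_entries)]

    def allocate(self, idx, producers, is_load=False):
        """Set up entry idx; it inherits the loads its producers depend on."""
        deps = set()
        for p in producers:
            deps |= self.dep_vector[p]
            if self.is_load[p]:
                deps.add(p)
        self.dep_vector[idx] = deps
        self.is_load[idx] = is_load
        self.branch_flag[idx] = False
        self.cache_flag[idx] = False

    def on_branch_mispredict(self, branch_idx):
        """Mark every load that fed the mispredicted branch."""
        for load in self.dep_vector[branch_idx]:
            self.branch_flag[load] = True

    def on_l1_miss(self, load_idx):
        """Mark every load that fed a load which missed in the L1 cache."""
        for load in self.dep_vector[load_idx]:
            self.cache_flag[load] = True

    def is_critical(self, idx):
        return self.branch_flag[idx] or self.cache_flag[idx]
```

In this sketch the flags are set on the producing loads rather than on the branch or the missing load itself, which matches the "feeds into" wording of the classification criteria.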

Cache Organization
- The central idea behind criticality-based caching is to keep critical data close to the processor at the potential expense of non-critical data.
- To achieve this:
  1. New cache replacement policy
  2. A different cache organization:
     - Criticality-based cache organization
     - Locality-based cache organization

Critical/Locality-Based Cache Organization
- Locality-based cache organization: this scheme consists of a locality cache alongside the primary cache. The capacity, block size and associativity of the locality cache are the same as those of the critical cache. It retains both critical and non-critical data.
- Criticality-based cache organization: a critical cache serves as a victim cache for critical data. On every reference, both the primary cache and the critical cache are accessed in parallel.
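The difference between the two organizations can be sketched with a pair of small caches. This is a simplified model under stated assumptions: both caches are fully associative with LRU replacement (the slides use 2-way set-associative caches), a block that hits in the assist cache stays there, and the names `LRUCache`, `access` and `only_critical` are hypothetical.

```python
from collections import OrderedDict


class LRUCache:
    """Fully associative LRU cache; a simplification for illustration."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.blocks = OrderedDict()  # block address -> critical bit

    def lookup(self, addr):
        if addr in self.blocks:
            self.blocks.move_to_end(addr)  # mark as most recently used
            return True
        return False

    def fill(self, addr, critical):
        """Insert a block; return the evicted (addr, critical) pair or None."""
        victim = None
        if addr not in self.blocks and len(self.blocks) >= self.capacity:
            victim = self.blocks.popitem(last=False)  # evict the LRU block
        self.blocks[addr] = critical
        self.blocks.move_to_end(addr)
        return victim


def access(primary, assist, addr, critical, only_critical=True):
    """Probe both caches (modelling the parallel access); return True on a hit.

    only_critical=True models the critical cache: only critical victims are kept.
    only_critical=False models the locality cache: every victim is kept.
    """
    hit_primary = primary.lookup(addr)
    hit_assist = assist.lookup(addr)
    if hit_primary or hit_assist:
        return True
    victim = primary.fill(addr, critical)
    if victim is not None and (victim[1] or not only_critical):
        assist.fill(victim[0], victim[1])
    return False
```

With a one-block primary cache, a critical block evicted by a later miss is still found in the critical cache on its next reference, while a non-critical victim is dropped; with `only_critical=False` both kinds of victim are retained.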

Locality/Critical-Based Caching
- Locality vs. criticality at the L1 cache level: the critical/locality cache configuration consists of a 4 KB 2-way set-associative L1 cache and a 4 KB 2-way set-associative critical/locality cache.
- Locality vs. criticality at the L2 cache level: the first configuration has a 128 KB 2-way set-associative critical cache alongside a 128 KB 2-way set-associative L2 cache. The second configuration has an 8 KB 2-way set-associative critical cache along with a 256 KB 2-way set-associative L2 cache. For comparison, in each configuration the size of the locality cache matches the critical cache size.

Performance Comparison
- Metrics, compared across the three cache configurations (traditional memory system, critical cache and locality cache):
  - IPC
  - Overall miss ratio
  - Critical load miss ratio
- Expected results:
  - Improvement in IPC with the critical cache
  - Lowest load miss ratio for the critical cache
  - Lowest overall miss ratio for the critical cache

Criticality-Based Prefetching
- Prefetching exploits the spatial nature of accesses and the regularity of access patterns by predicting future accesses and initiating a memory access even before a load is actually issued.
- Reference Prediction Table (RPT)
- Spatial Footprint Predictor (SFP)
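A stride predictor in the spirit of a reference prediction table can be sketched as follows. This is a deliberately simplified model, not the full RPT state machine: it predicts as soon as the same non-zero stride is seen twice in a row. Restricting prefetches to critical loads is an assumption about how criticality would be applied, and the names `ReferencePredictionTable` and `observe` are hypothetical.

```python
class ReferencePredictionTable:
    """Per-load stride predictor (simplified, illustrative)."""

    def __init__(self):
        # load PC -> (last address, last stride, stride confirmed?)
        self.table = {}

    def observe(self, pc, addr, critical=True):
        """Record one access by the load at pc; return a prefetch address or None."""
        entry = self.table.get(pc)
        if entry is None:
            self.table[pc] = (addr, 0, False)
            return None
        last_addr, last_stride, _ = entry
        stride = addr - last_addr
        confirmed = stride != 0 and stride == last_stride
        self.table[pc] = (addr, stride, confirmed)
        # Assumption: only loads flagged as critical trigger a prefetch.
        if confirmed and critical:
            return addr + stride
        return None
```

A load that touches addresses 100, 108 and 116 produces no prediction on the first two accesses and a prefetch of 124 on the third; the same pattern from a non-critical load produces no prefetch in this sketch.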

Progress
- Tools: SimpleScalar
- Benchmarks: Olden and SPEC 2000 integer
- The algorithm for the criticality table has been formulated.
- The benchmarks have been run on the unchanged cache organization.

References
- A Data Cache with Multiple Caching Strategies Tuned to Different Types of Locality. Antonio González, Carlos Aliagas and Mateo Valero
- A New Approach to Cache Management. Gary Tyson, Matthew Farrens, John Matthews and Andrew Pleszkun
- Design and Performance Evaluation of a Cache Assist to Implement Selective Caching. L. John and Subramanian
- Exploiting Spatial Locality in Data Caches Using Spatial Footprints. Sanjeev Kumar and Christopher Wilkerson

Questions? Thank you!

- Slides: 15