Local Search with Very Large-Scale Neighborhoods for Optimal Permutations in Machine Translation
Jason Eisner and Roy Tromble

Motivation
- MT is really easy! Just use a finite-state transducer! Phrases, morphology, the works!

Eisner & Tromble - HLT-NAACL Workshop on Computationally Hard Methods and Joint Inference in NLP - June 2006

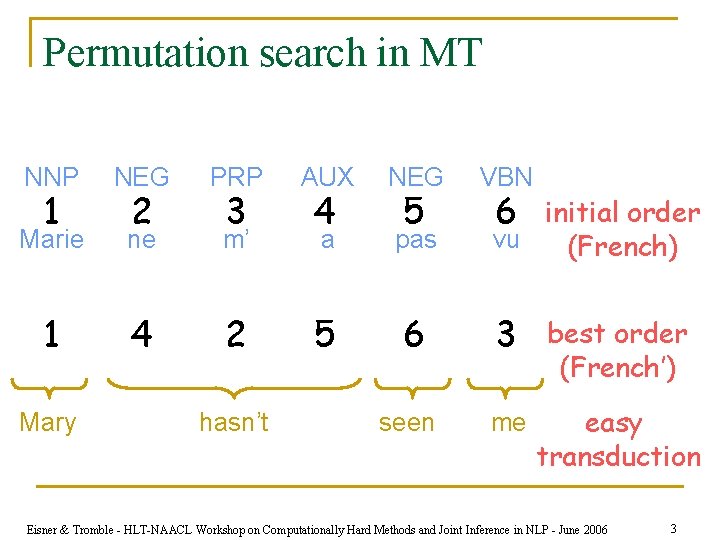

Permutation search in MT
- Initial order (French): Marie/NNP ne/NEG m’/PRP a/AUX pas/NEG vu/VBN, numbered 1 2 3 4 5 6
- Best order (French’): 1 4 2 5 6 3
- Easy transduction of French’: Mary hasn’t seen me

Motivation
- MT is really easy! Just use a finite-state transducer! Phrases, morphology, the works!
- Just have to fix that pesky word order.

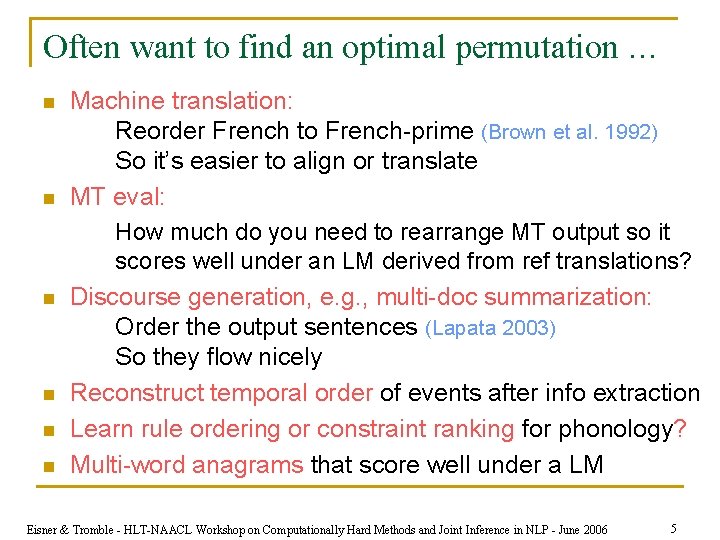

Often want to find an optimal permutation …
- Machine translation: Reorder French to French-prime (Brown et al. 1992)
  - So it’s easier to align or translate
- MT eval: How much do you need to rearrange MT output so it scores well under an LM derived from ref translations?
- Discourse generation, e.g., multi-doc summarization: Order the output sentences (Lapata 2003)
  - So they flow nicely
- Reconstruct temporal order of events after info extraction
- Learn rule ordering or constraint ranking for phonology?
- Multi-word anagrams that score well under an LM

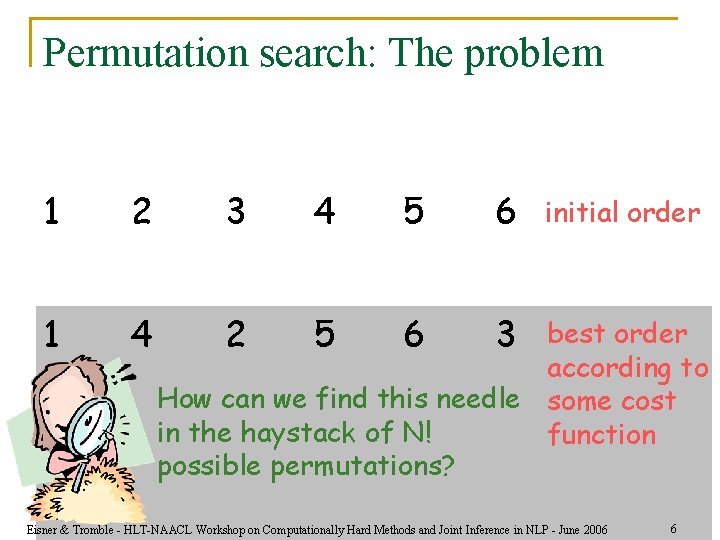

Permutation search: The problem
- Initial order: 1 2 3 4 5 6
- Best order according to some cost function: 1 4 2 5 6 3
- How can we find this needle in the haystack of N! possible permutations?

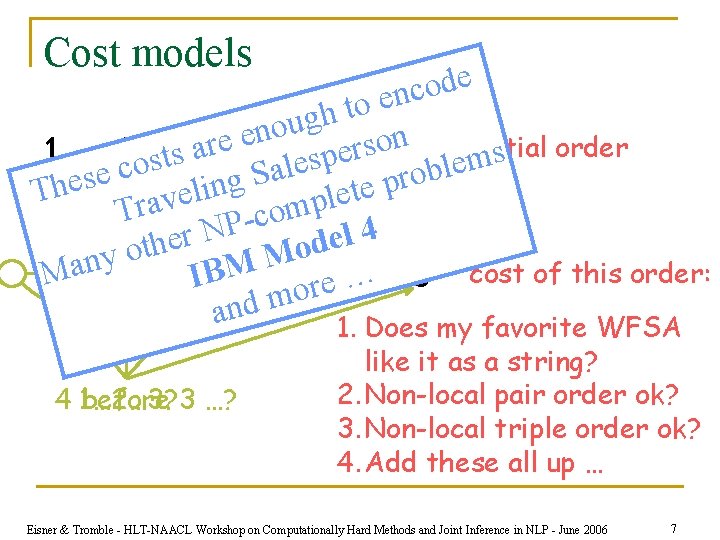

Cost models
- Initial order: 1 2 3 4 5 6
- Cost of the order 1 4 2 5 6 3:
  1. Does my favorite WFSA like it as a string?
  2. Non-local pair order ok? (4 before 3 …?)
  3. Non-local triple order ok? (1 … 2 … 3?)
  4. Add these all up …
- These costs are enough to encode the Traveling Salesperson problem, many other NP-complete problems, IBM Model 4, and more
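A minimal sketch of such a cost function, assuming a bigram WFSA stored as a dictionary `A` of arc weights and a dictionary `B` of pairwise ordering costs. The names `A`, `B`, `bigram_cost`, `pairwise_cost`, `total_cost`, the start state `"I"`, and all the toy weights are hypothetical, chosen only for illustration. Triple costs are left out.

```python
def bigram_cost(order, A, start="I"):
    """Cost under a bigram WFSA: the state is simply the last symbol read."""
    total, state = 0.0, start
    for symbol in order:
        total += A[(state, symbol)]  # weight of the arc from `state` labeled `symbol`
        state = symbol
    return total

def pairwise_cost(order, B):
    """Sum of B[(x, y)] over every pair where x precedes y, adjacent or not."""
    return sum(B[(order[a], order[b])]
               for a in range(len(order))
               for b in range(a + 1, len(order)))

def total_cost(order, A, B):
    """Add the component costs up (triple costs are omitted in this sketch)."""
    return bigram_cost(order, A) + pairwise_cost(order, B)

# Toy weights, invented purely for illustration.
items = (1, 2, 3)
A = {(s, x): 1.0 for s in ("I",) + items for x in items}
B = {(x, y): (0.0 if x < y else 2.0) for x in items for y in items if x != y}

print(total_cost((1, 2, 3), A, B))  # 3.0: three arcs, no penalized pairs
print(total_cost((3, 2, 1), A, B))  # 9.0: three arcs plus three penalized pairs
```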

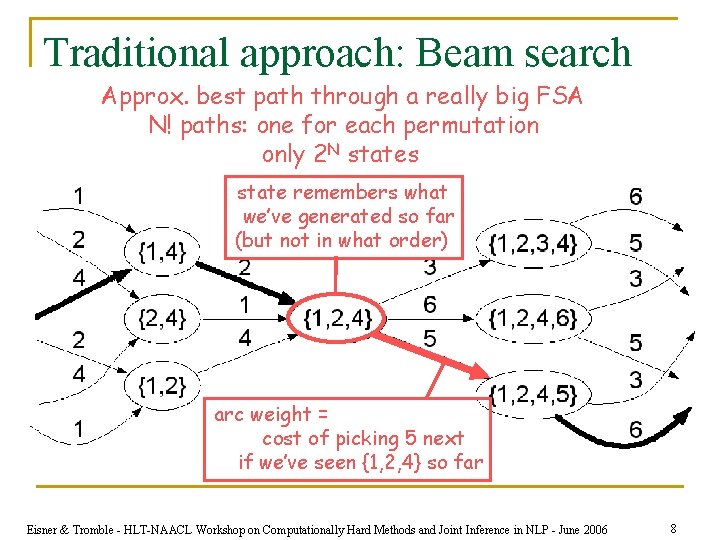

Traditional approach: Beam search
- Approx. best path through a really big FSA
- N! paths: one for each permutation
- Only 2^N states: a state remembers what we’ve generated so far (but not in what order)
- Arc weight = cost of picking 5 next if we’ve seen {1, 2, 4} so far
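The beam search over subset states can be sketched as follows. This is an illustration under stated assumptions, not the decoder of any particular system: `beam_search`, `arc_cost`, `ITEMS`, and the toy pairwise model are all hypothetical names and values. Each state is the set of items generated so far, and each layer keeps only the `beam_width` cheapest states.

```python
import heapq

def beam_search(items, arc_cost, beam_width=5):
    """Approximate best path through the subset FSA, pruning every layer."""
    beam = {frozenset(): (0.0, ())}  # state -> (cost so far, sequence reaching it)
    for _ in range(len(items)):
        layer = {}
        for seen, (cost, seq) in beam.items():
            for x in items:
                if x in seen:
                    continue
                cand = (cost + arc_cost(seen, x), seq + (x,))
                state = seen | {x}  # the state forgets the order
                if state not in layer or cand < layer[state]:
                    layer[state] = cand
        # Prune: keep only the cheapest few states in this layer.
        beam = dict(heapq.nsmallest(beam_width, layer.items(),
                                    key=lambda kv: kv[1]))
    (cost, seq), = beam.values()  # the last layer holds a single state
    return cost, seq

ITEMS = (3, 1, 4, 2)

def toy_arc_cost(seen, x):
    """Hypothetical pairwise model: pay 1 per remaining item smaller than x."""
    return sum(1.0 for y in ITEMS if y not in seen and y < x)

print(beam_search(ITEMS, toy_arc_cost, beam_width=2))
```

With a beam wide enough to hold all 2^N states this becomes an exact search. A narrow beam is faster but may prune the best path.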

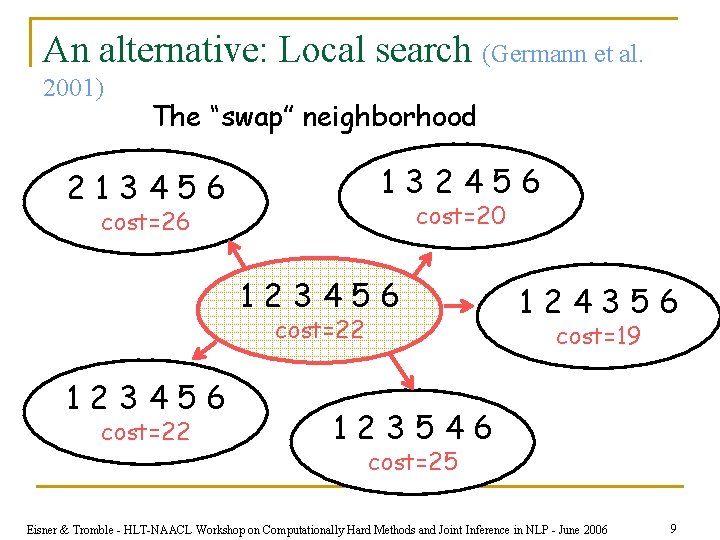

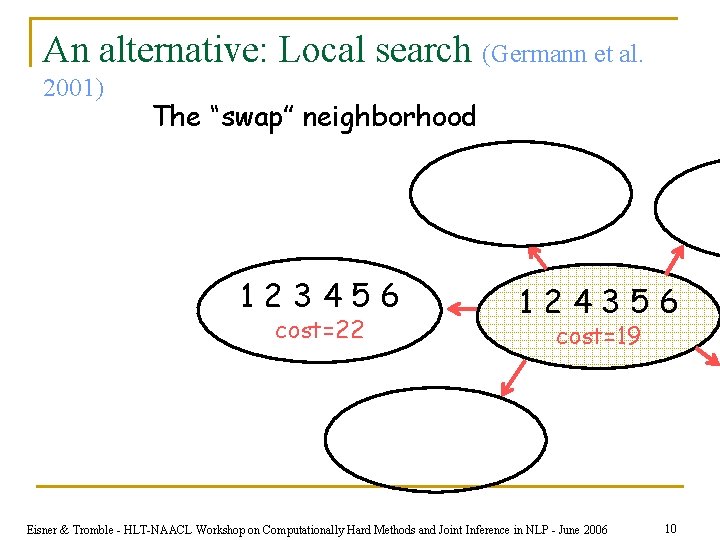

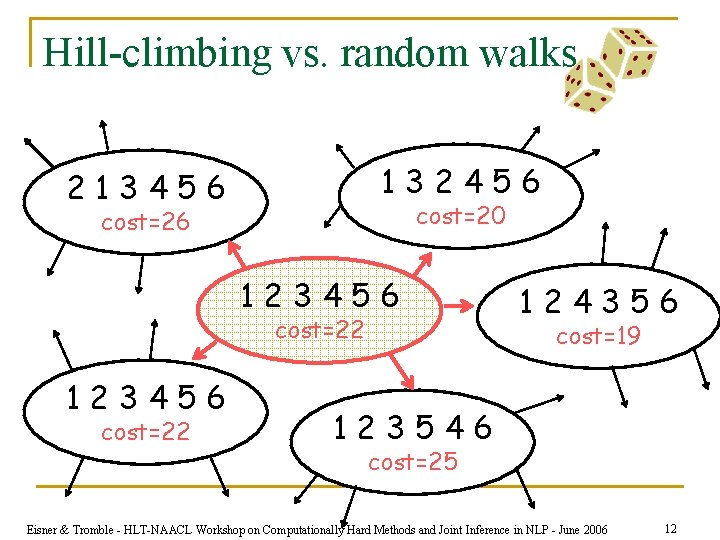

An alternative: Local search (Germann et al. 2001)
The “swap” neighborhood
- Current order: 123456 (cost=22)
- Neighbors shown: 132456 and 213456 (costs 20 and 26), 124356 (cost=19), 123546 (cost=25)

An alternative: Local search (Germann et al. 2001)
The “swap” neighborhood
- Move from 123456 (cost=22) to its best neighbor, 124356 (cost=19)

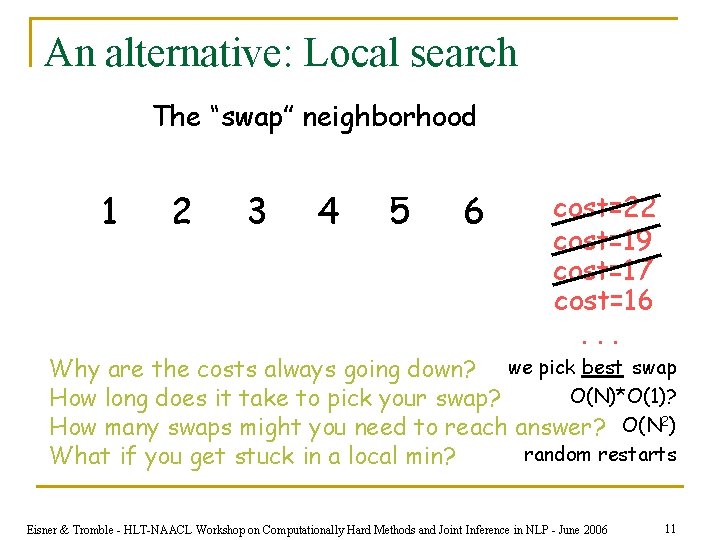

An alternative: Local search
The “swap” neighborhood
- Starting from 1 2 3 4 5 6: cost=22, cost=19, cost=17, cost=16, ...
- Why are the costs always going down? We pick the best swap.
- How long does it take to pick your swap? O(N)*O(1)?
- How many swaps might you need to reach the answer? O(N^2)
- What if you get stuck in a local min? Random restarts.
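Hill-climbing in the swap neighborhood can be sketched as below. The function names and the inversion-counting cost are assumptions made for illustration. For simplicity the sketch rescores every neighbor from scratch, whereas the O(N)*O(1) estimate on the slide presumes that each swap can be scored incrementally.

```python
def swap_neighbors(order):
    """Yield the N-1 permutations reachable by swapping two adjacent items."""
    for i in range(len(order) - 1):
        nb = list(order)
        nb[i], nb[i + 1] = nb[i + 1], nb[i]
        yield tuple(nb)

def hill_climb(order, cost):
    """Move to the best swap neighbor until no neighbor is strictly cheaper."""
    order = tuple(order)
    current = cost(order)
    while True:
        best = min(swap_neighbors(order), key=cost, default=order)
        if cost(best) >= current:
            return order, current  # local minimum
        order, current = best, cost(best)

def inversions(order):
    """Toy cost (hypothetical): number of pairs that are out of order."""
    return sum(1 for a in range(len(order))
               for b in range(a + 1, len(order)) if order[a] > order[b])

print(hill_climb((6, 2, 5, 8, 4, 3, 7, 1), inversions))
```

This toy cost has no local minimum other than the sorted order. For costs that do, the random restarts mentioned on the slide would call `hill_climb` from several shuffled starting orders and keep the cheapest result.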

Hill-climbing vs. random walks
- Current order: 123456 (cost=22)
- Neighbors shown: 132456 and 213456 (costs 20 and 26), 124356 (cost=19), 123546 (cost=25)

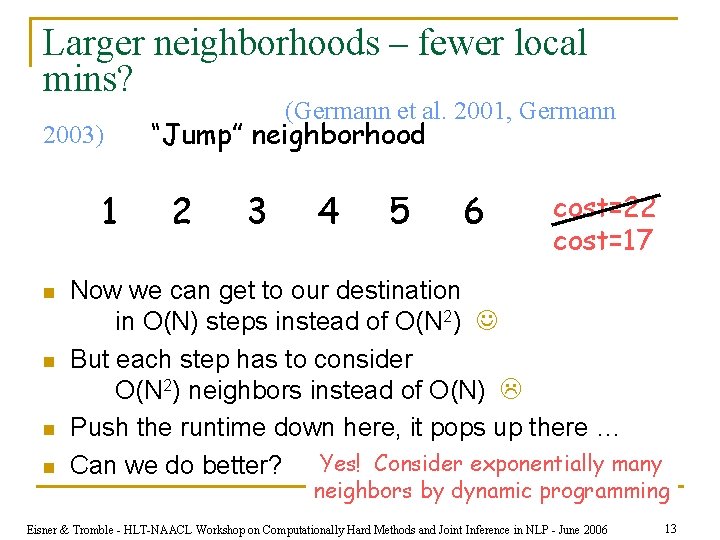

Larger neighborhoods – fewer local mins? (Germann et al. 2001, Germann 2003)
“Jump” neighborhood
- 1 2 3 4 5 6: cost=22, then cost=17 after one jump
- Now we can get to our destination in O(N) steps instead of O(N^2)
- But each step has to consider O(N^2) neighbors instead of O(N)
- Push the runtime down here, it pops up there …
- Can we do better? Yes! Consider exponentially many neighbors by dynamic programming
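To make the O(N^2) neighbor count concrete, here is a small sketch that enumerates the jump neighborhood, taking a "jump" to mean removing one item and reinserting it at another position. The function name `jump_neighbors` is hypothetical.

```python
def jump_neighbors(order):
    """Yield each distinct permutation obtained by moving one item elsewhere."""
    order = tuple(order)
    seen = set()
    for i in range(len(order)):
        rest = order[:i] + order[i + 1:]      # remove the item at position i
        for j in range(len(order)):
            if j == i:
                continue                      # reinserting at i changes nothing
            nb = rest[:j] + (order[i],) + rest[j:]
            if nb not in seen:                # an adjacent swap arises two ways
                seen.add(nb)
                yield nb

print(len(list(jump_neighbors((1, 2, 3, 4, 5, 6)))))  # 25 = (N-1)^2 for N = 6
```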

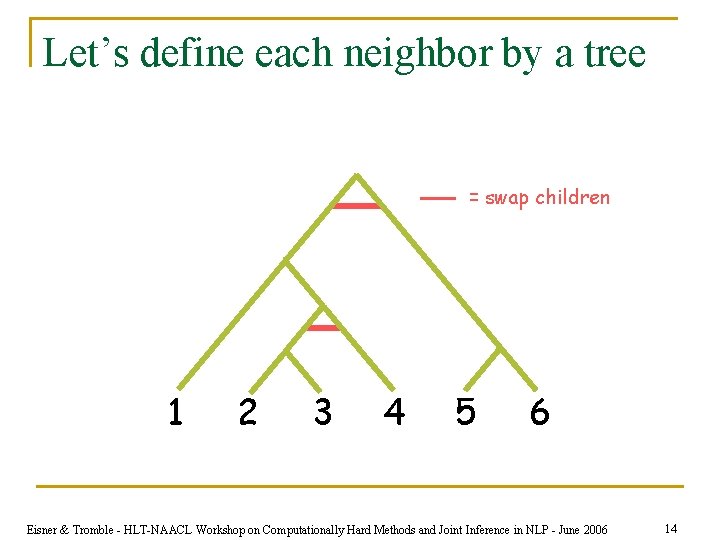

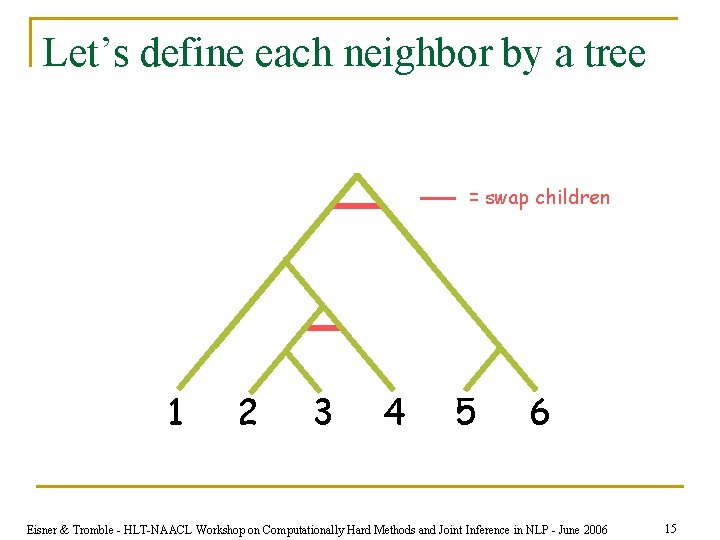

Let’s define each neighbor by a tree
- Marked node = swap children
- Leaves: 1 2 3 4 5 6

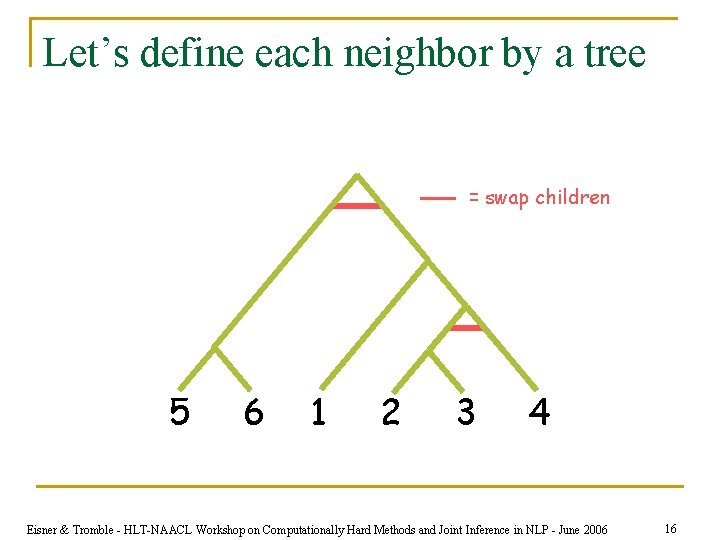

Let’s define each neighbor by a tree
- Marked node = swap children
- Leaves after swapping: 5 6 1 2 3 4

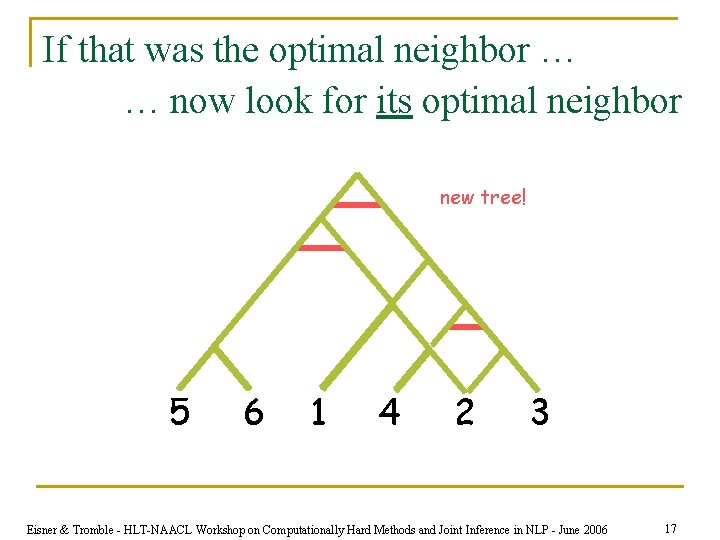

If that was the optimal neighbor …
… now look for its optimal neighbor
- New tree! 5 6 1 4 2 3

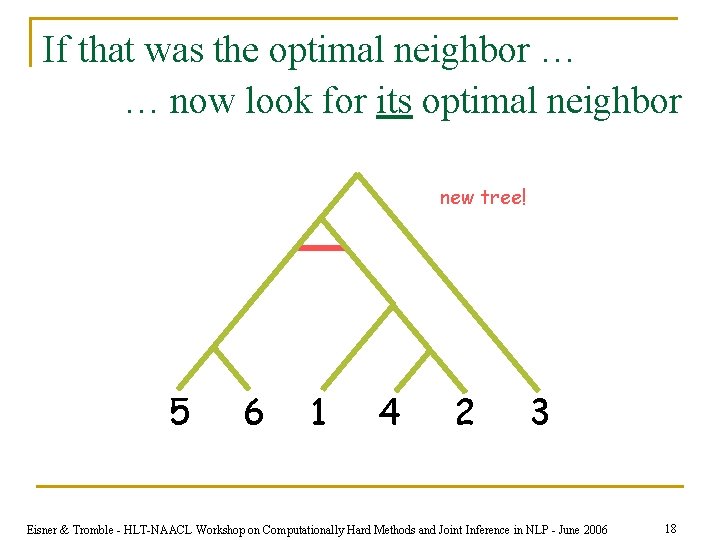

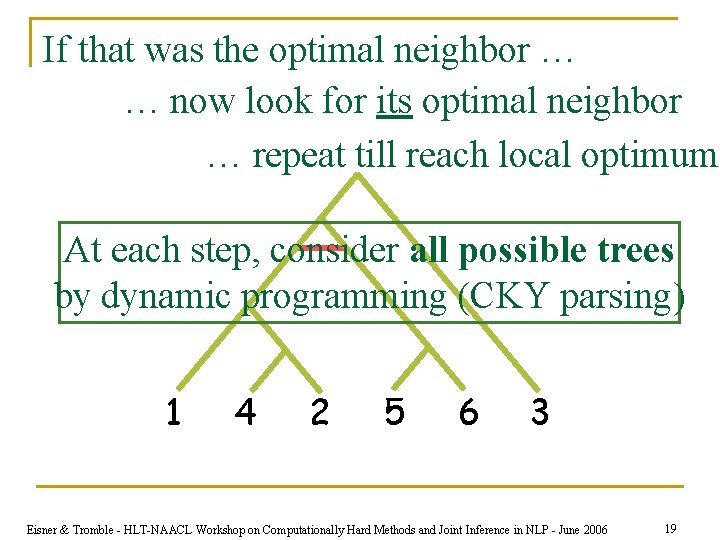

If that was the optimal neighbor …
… now look for its optimal neighbor
… repeat until we reach a local optimum
- At each step, consider all possible trees by dynamic programming (CKY parsing)
- Order shown: 1 4 2 5 6 3
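To see how large the tree-defined neighborhood is, the brute-force sketch below enumerates it for small N: pick any binary tree over the current order and optionally swap the children at each node. The function name `tree_neighborhood` is hypothetical, and this exhaustive enumeration is only for inspection. The dynamic program searches the same set without listing it.

```python
from functools import lru_cache

@lru_cache(maxsize=None)
def tree_neighborhood(order):
    """All orders reachable from the tuple `order` via one tree with optional swaps."""
    if len(order) == 1:
        return frozenset([order])
    result = set()
    for split in range(1, len(order)):
        for left in tree_neighborhood(order[:split]):
            for right in tree_neighborhood(order[split:]):
                result.add(left + right)  # keep the children in order
                result.add(right + left)  # swap the children
    return frozenset(result)

for n in range(1, 7):
    print(n, len(tree_neighborhood(tuple(range(1, n + 1)))))
```

For N = 4 the neighborhood holds 22 of the 24 permutations. The two it misses from 1 2 3 4 are 2 4 1 3 and 3 1 4 2, which is why a single step does not always reach the best order.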

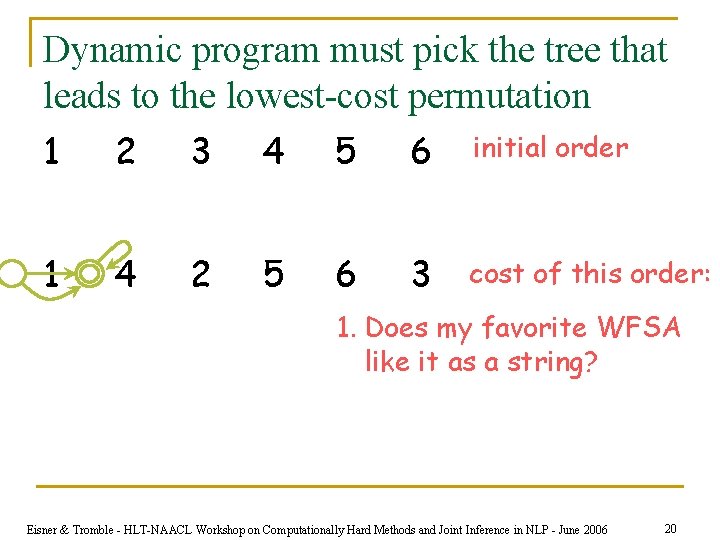

Dynamic program must pick the tree that leads to the lowest-cost permutation
- Initial order: 1 2 3 4 5 6
- Cost of the order 1 4 2 5 6 3:
  1. Does my favorite WFSA like it as a string?

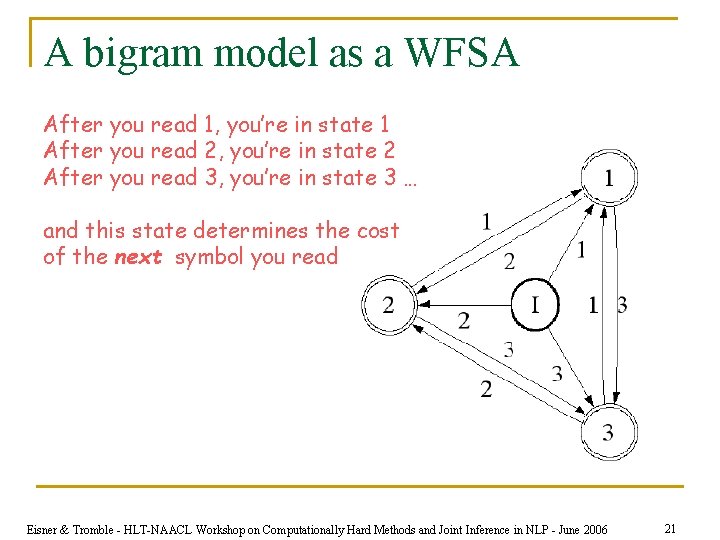

A bigram model as a WFSA
- After you read 1, you’re in state 1
- After you read 2, you’re in state 2
- After you read 3, you’re in state 3
- … and this state determines the cost of the next symbol you read

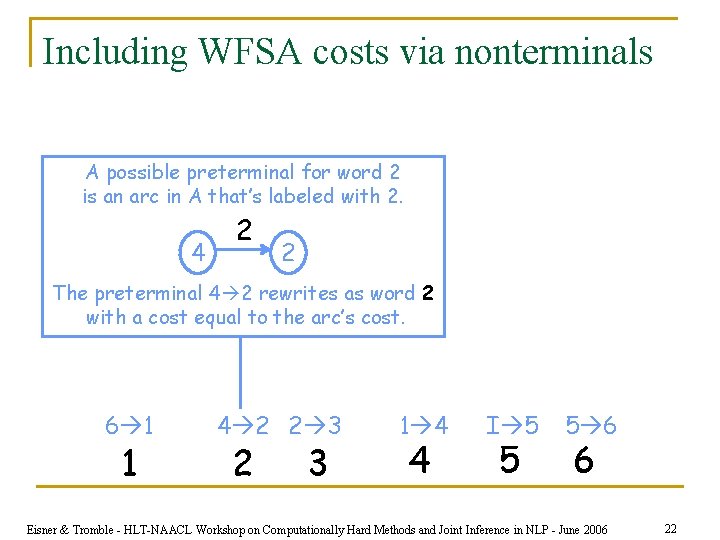

Including WFSA costs via nonterminals
- A possible preterminal for word 2 is an arc in A that’s labeled with 2, such as 4→2.
- The preterminal 4→2 rewrites as word 2 with a cost equal to the arc’s cost.
[Figure: parse tree whose preterminals are WFSA arcs]

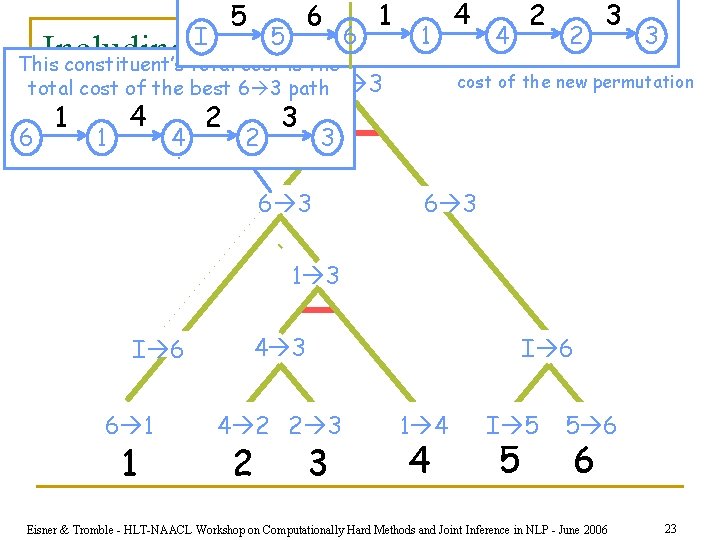

Including WFSA costs via nonterminals
- This constituent’s total cost is the total cost of the best 6→3 path
- The I→3 constituent at the root gives the cost of the new permutation
[Figure: parse tree whose nonterminals are pairs of WFSA states]

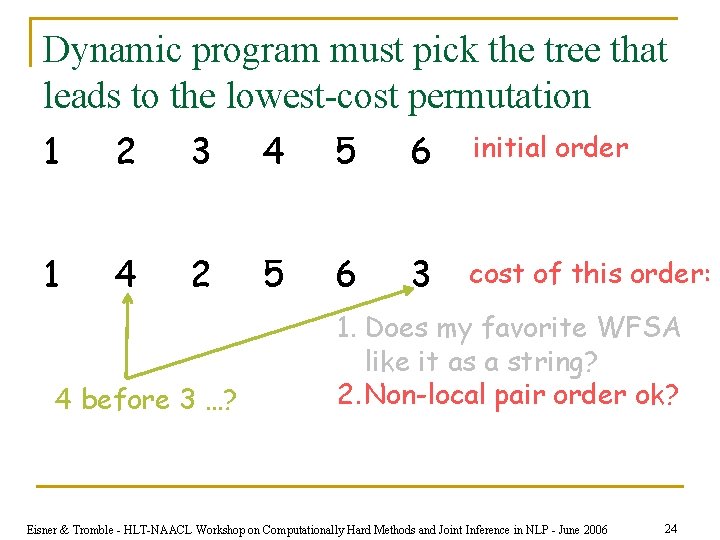

Dynamic program must pick the tree that leads to the lowest-cost permutation
- Initial order: 1 2 3 4 5 6
- Cost of the order 1 4 2 5 6 3:
  1. Does my favorite WFSA like it as a string?
  2. Non-local pair order ok? (4 before 3 …?)

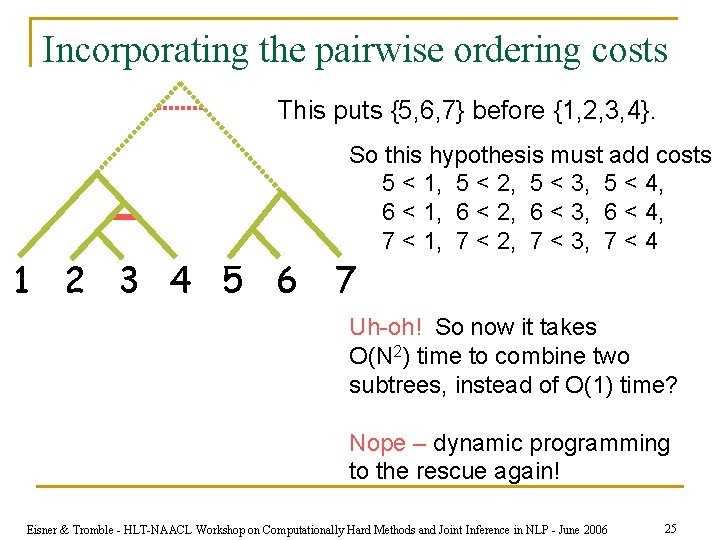

Incorporating the pairwise ordering costs
- Swapping at the top of the tree over 1 2 3 4 5 6 7 puts {5, 6, 7} before {1, 2, 3, 4}.
- So this hypothesis must add costs 5 < 1, 5 < 2, 5 < 3, 5 < 4, 6 < 1, 6 < 2, 6 < 3, 6 < 4, 7 < 1, 7 < 2, 7 < 3, 7 < 4
- Uh-oh! So now it takes O(N^2) time to combine two subtrees, instead of O(1) time?
- Nope – dynamic programming to the rescue again!

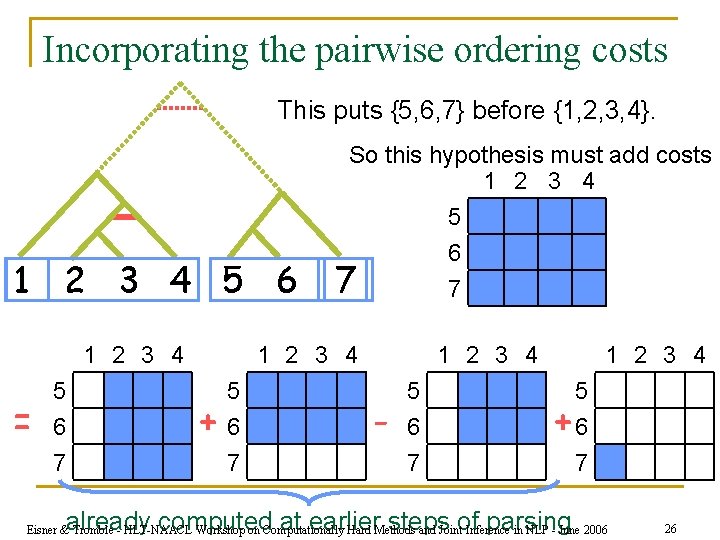

Incorporating the pairwise ordering costs
- Swapping at the top of the tree over 1 2 3 4 5 6 7 puts {5, 6, 7} before {1, 2, 3, 4}.
- So this hypothesis must add costs:
  cost({5, 6, 7} < {1, 2, 3, 4}) = cost({5, 6} < {1, 2, 3, 4}) + cost({5, 6, 7} < {2, 3, 4}) - cost({5, 6} < {2, 3, 4}) + cost(7 < 1)
- The three set-level terms on the right were already computed at earlier steps of parsing, so the combination takes O(1) time
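The sketch below puts the pieces together for pairwise costs only (no WFSA): a CKY-style dynamic program that finds the cheapest neighbor in the tree-defined neighborhood, with the cost of placing one block before another obtained in O(1) by inclusion-exclusion over smaller spans. It is an illustration under stated assumptions, not the authors' implementation. The names `best_tree_neighbor`, `cross`, `best`, and the toy matrix `B` (where `B[x][y]` is the cost of x preceding y) are hypothetical.

```python
from functools import lru_cache

def best_tree_neighbor(order, B):
    """Return (cost, permutation) for the cheapest tree-defined neighbor of `order`."""
    pi = tuple(order)
    n = len(pi)

    @lru_cache(maxsize=None)
    def cross(i, j, k):
        """(keep, swap): cost of pi[i:j] before pi[j:k], and of pi[j:k] before pi[i:j]."""
        if i == j or j == k:
            return (0.0, 0.0)  # an empty block contributes nothing
        a, b, c = cross(i, j, k - 1), cross(i + 1, j, k), cross(i + 1, j, k - 1)
        x, y = pi[i], pi[k - 1]  # the one pair not covered by a and b
        return (a[0] + b[0] - c[0] + B[x][y],
                a[1] + b[1] - c[1] + B[y][x])

    @lru_cache(maxsize=None)
    def best(i, k):
        """Cheapest arrangement of the span pi[i:k], counting pairs inside it."""
        if k - i == 1:
            return (0.0, (pi[i],))
        candidates = []
        for j in range(i + 1, k):
            (lc, lp), (rc, rp) = best(i, j), best(j, k)
            keep, swap = cross(i, j, k)
            candidates.append((lc + rc + keep, lp + rp))  # children in order
            candidates.append((lc + rc + swap, rp + lp))  # children swapped
        return min(candidates)

    return best(0, n)

# Toy pairwise costs (hypothetical): 1 whenever a larger item precedes a smaller one.
B = [[0.0 if x < y else 1.0 for y in range(5)] for x in range(5)]
print(best_tree_neighbor((3, 4, 1, 2), B))  # one step reaches (1, 2, 3, 4)
print(best_tree_neighbor((2, 4, 1, 3), B))  # here one step cannot reach cost 0
```

There are O(N^3) (span, split point) combinations and each is handled in O(1) after memoization, so one step of this sketch costs O(N^3) time even though the neighborhood is exponentially large.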

Incorporating 3-way ordering costs
- See the paper …
- A little tricky, but
  - comes “for free” if you’re willing to accept a certain restriction on these costs
  - more expensive without that restriction, but possible

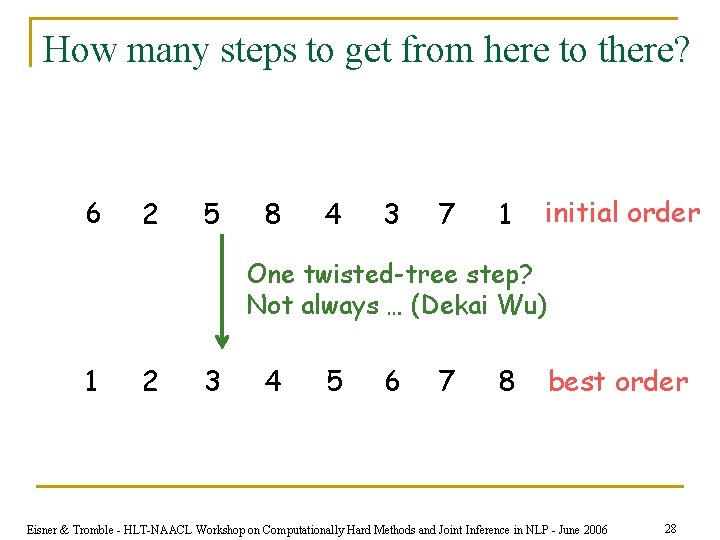

How many steps to get from here to there?
- Initial order: 6 2 5 8 4 3 7 1
- Best order: 1 2 3 4 5 6 7 8
- One twisted-tree step? Not always … (Dekai Wu)

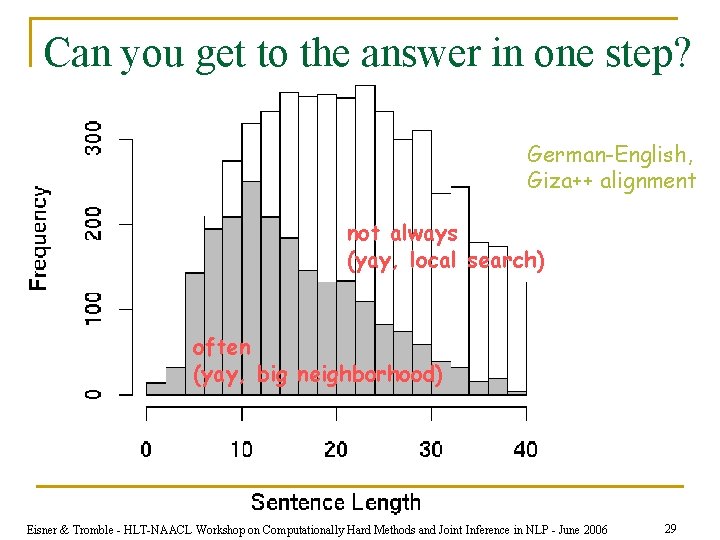

Can you get to the answer in one step?
- German-English, Giza++ alignment
- Not always (yay, local search)
- Often (yay, big neighborhood)

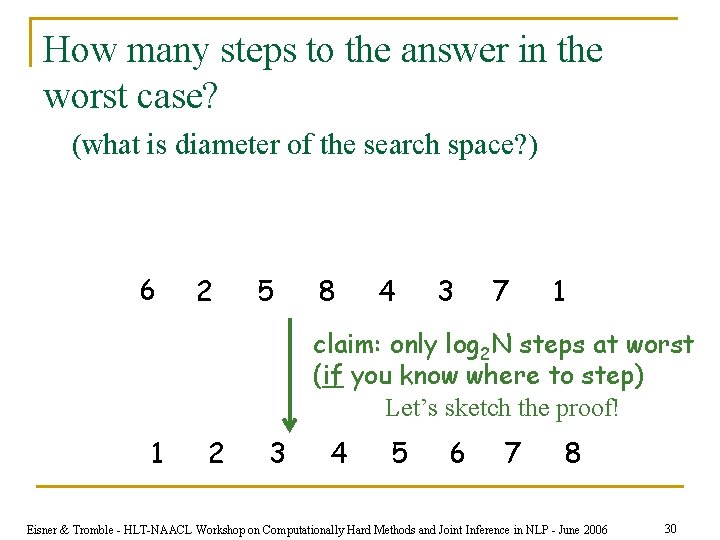

How many steps to the answer in the worst case? (What is the diameter of the search space?)
- From 6 2 5 8 4 3 7 1 to 1 2 3 4 5 6 7 8
- Claim: only log_2 N steps at worst (if you know where to step)
- Let’s sketch the proof!

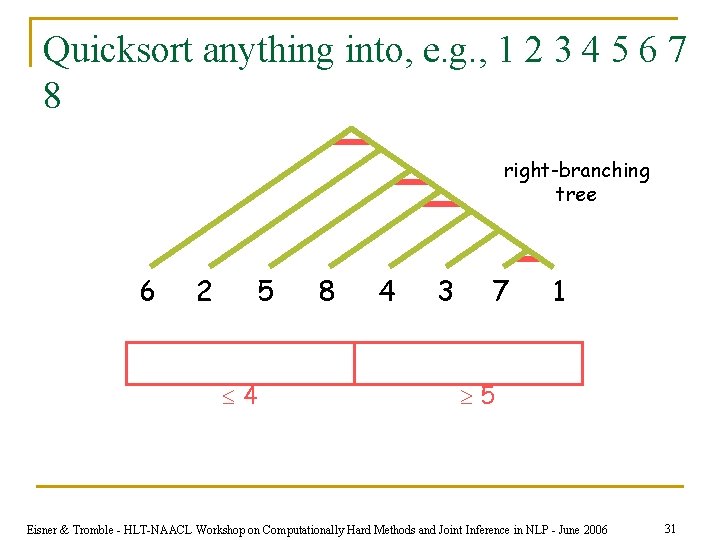

Quicksort anything into, e.g., 1 2 3 4 5 6 7 8
[Figure: right-branching tree over 6 2 5 8 4 3 7 1, with labels 4 and 5]

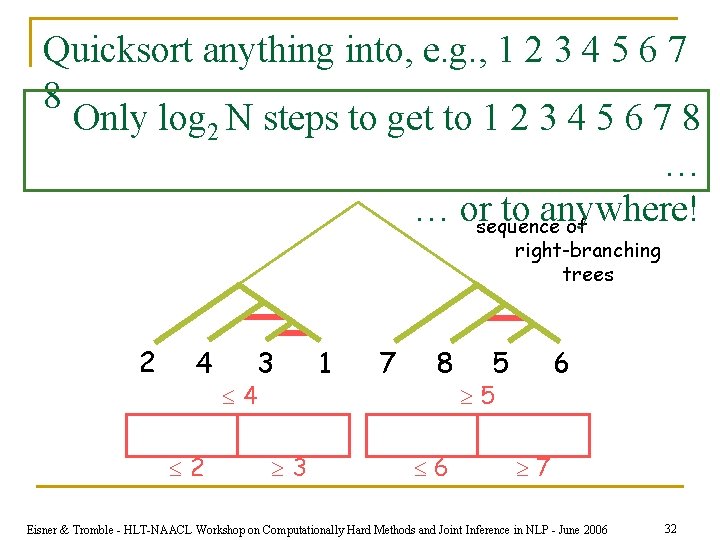

Quicksort anything into, e.g., 1 2 3 4 5 6 7 8
- Only log_2 N steps to get to 1 2 3 4 5 6 7 8 …
- … or to anywhere!
[Figure: sequence of right-branching trees]

Speedups (read the paper!)
- We’re just parsing the current permutation as a string – and we know how to speed up parsers!
  - pruning
  - A*, best-first, coarse-to-fine
- Can restrict to a subset of parse trees
  - Gives us smaller neighborhoods, quicker to search, but still exponentially large
  - Right-branching trees, asymmetric trees …
- Note: Even w/o any of this, super-fast and effective on the LOP (no WFSA, no grammar const).

More on modeling (read the paper!)
- Encoding classical NP-complete problems
- Encoding translation decoding in general
  - Encoding IBM Model 4
  - Encoding soft phrasal constraints via hidden bracket symbols
- Costs that depend on features of source sentence
  - Training the feature weights

Summary
- Local search is fun and easy
  - Popular elsewhere in AI
  - Closely related to MCMC sampling
- Probably useful for translation
- Can efficiently use huge local neighborhoods
  - Algorithms are closely related to parsing and FSMs
  - We know that stuff better than anyone!

- Slides: 35