
LKRhash: The Design of a Scalable Hashtable
George V. Reilly
http://www.georgevreilly.com

Origin Story
- LKRhash invented at Microsoft in 1997
  - Paul (Per-Åke) Larson, Microsoft Research
  - Murali R. Krishnan, (then) Internet Information Server
  - George V. Reilly, (then) IIS

LKRhash Design Techniques
- Linear Hashing: smooth resizing
- Cache-friendly data structures
- Fine-grained locking

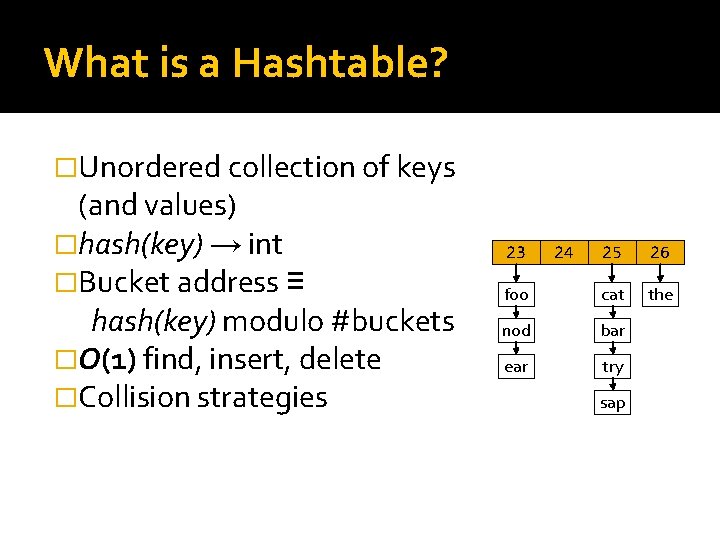

What is a Hashtable?
- Unordered collection of keys (and values)
- hash(key) → int
- Bucket address ≡ hash(key) modulo #buckets
- O(1) find, insert, delete
- Collision strategies

[Diagram: buckets 23 to 26, each holding a chain of keys: 23 = foo, bar; 24 = cat, ear; 25 = the, try; 26 = nod, sap]
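As a rough illustration of the bucket-address rule above, here is a minimal fixed-size chained hash set. It is a sketch, not LKRhash: the class name `ChainedSet`, the use of `std::hash`, and chaining with `std::list` are all assumptions made for the example, and the bucket count is assumed to be nonzero.

```cpp
#include <cstddef>
#include <functional>
#include <list>
#include <string>
#include <vector>

// Illustrative fixed-size chained hash set (hypothetical, not LKRhash).
// Assumes numBuckets > 0.
class ChainedSet {
public:
    explicit ChainedSet(std::size_t numBuckets) : buckets_(numBuckets) {}

    // Bucket address = hash(key) modulo #buckets.
    std::size_t address(const std::string& key) const {
        return std::hash<std::string>{}(key) % buckets_.size();
    }

    // Returns false if the key was already present.
    bool insert(const std::string& key) {
        if (contains(key)) return false;
        buckets_[address(key)].push_back(key);
        return true;
    }

    // Walks the chain of the one bucket the key can live in.
    bool contains(const std::string& key) const {
        for (const std::string& k : buckets_[address(key)])
            if (k == key) return true;
        return false;
    }

    // Length of the chain a lookup for `key` has to walk.
    std::size_t chainLength(const std::string& key) const {
        return buckets_[address(key)].size();
    }

private:
    std::vector<std::list<std::string>> buckets_;
};
```

With few buckets and many keys, `chainLength` grows with the number of records, which is the O(n) degeneration that a fixed-size table suffers.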

Size Does Matter
http://brechnuss.deviantart.com/art/size-does-matter-73413798

Fixed Size is Never the Right Size
- Unless you already know cardinality
- Too big: wastes memory
- Too small: long chains degenerate to O(n) accesses

Degradation in Fixed-Size Table
[Chart: Insertion Cost (y-axis, 0 to 25) for each successive insertion (x-axis)]
- 20-bucket table, 400 insertions from random shuffle

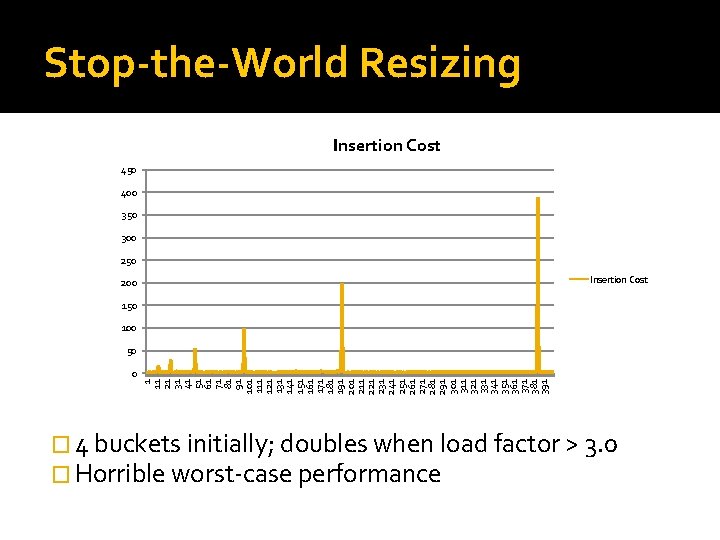

Stop-the-World Resizing
[Chart: Insertion Cost (y-axis, 0 to 450) for each successive insertion (x-axis)]
- 4 buckets initially; doubles when load factor > 3.0
- Horrible worst-case performance

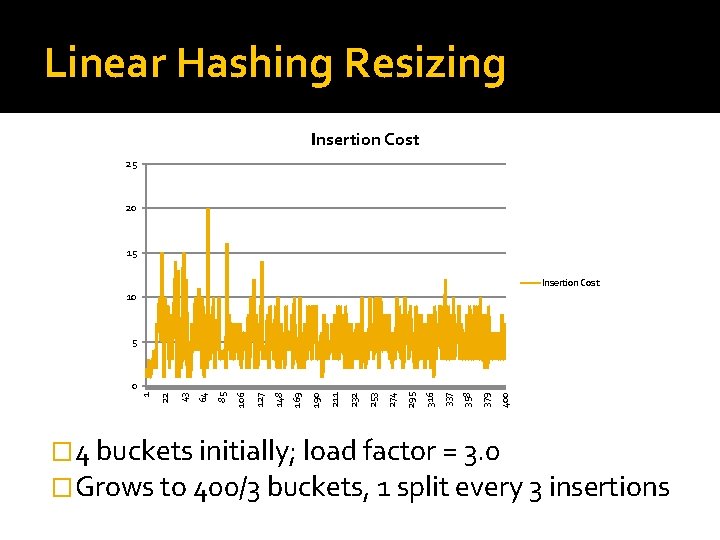

Linear Hashing Resizing
[Chart: Insertion Cost (y-axis, 0 to 25) for each successive insertion (x-axis)]
- 4 buckets initially; load factor = 3.0
- Grows to 400/3 buckets, 1 split every 3 insertions

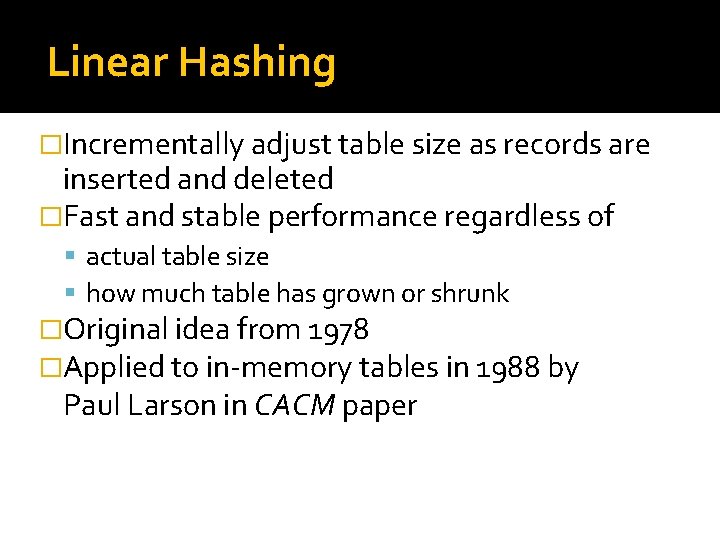

Linear Hashing
- Incrementally adjust table size as records are inserted and deleted
- Fast and stable performance regardless of
  - actual table size
  - how much the table has grown or shrunk
- Original idea from 1978
- Applied to in-memory tables in 1988 by Paul Larson in CACM paper

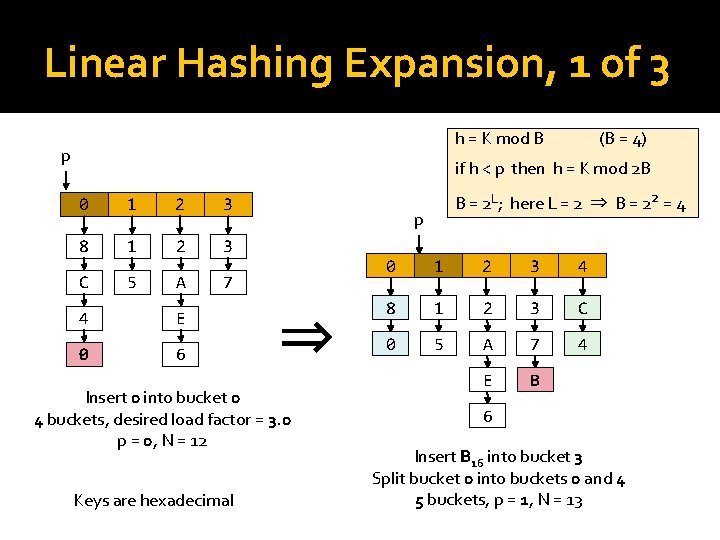

Linear Hashing Expansion, 1 of 3
h = K mod B (B = 4); if h < p then h = K mod 2B
- Keys are hexadecimal
- B = 2^L; here L = 2 ⇒ B = 2^2 = 4
- Insert 0 into bucket 0: 4 buckets, desired load factor = 3.0, p = 0, N = 12
  - Bucket 0: 8, C, 4, 0
  - Bucket 1: 1, 5
  - Bucket 2: 2, A, E, 6
  - Bucket 3: 3, 7
- Insert B (hex) into bucket 3; split bucket 0 into buckets 0 and 4: 5 buckets, p = 1, N = 13
  - Bucket 0: 8, 0
  - Bucket 1: 1, 5
  - Bucket 2: 2, A, E, 6
  - Bucket 3: 3, 7, B
  - Bucket 4: C, 4

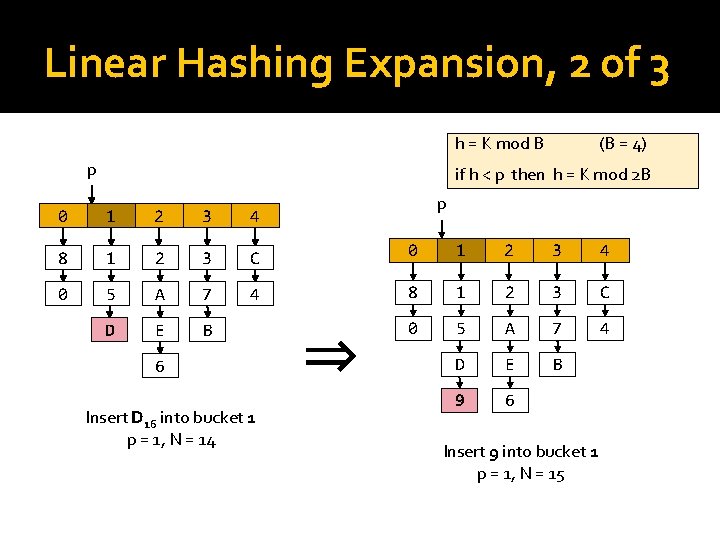

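Linear Hashing Expansion, 2 of 3
h = K mod B (B = 4); if h < p then h = K mod 2B
- Insert D (hex) into bucket 1: p = 1, N = 14
  - Bucket 0: 8, 0
  - Bucket 1: 1, 5, D
  - Bucket 2: 2, A, E, 6
  - Bucket 3: 3, 7, B
  - Bucket 4: C, 4
- Insert 9 into bucket 1: p = 1, N = 15
  - Bucket 0: 8, 0
  - Bucket 1: 1, 5, D, 9
  - Bucket 2: 2, A, E, 6
  - Bucket 3: 3, 7, B
  - Bucket 4: C, 4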

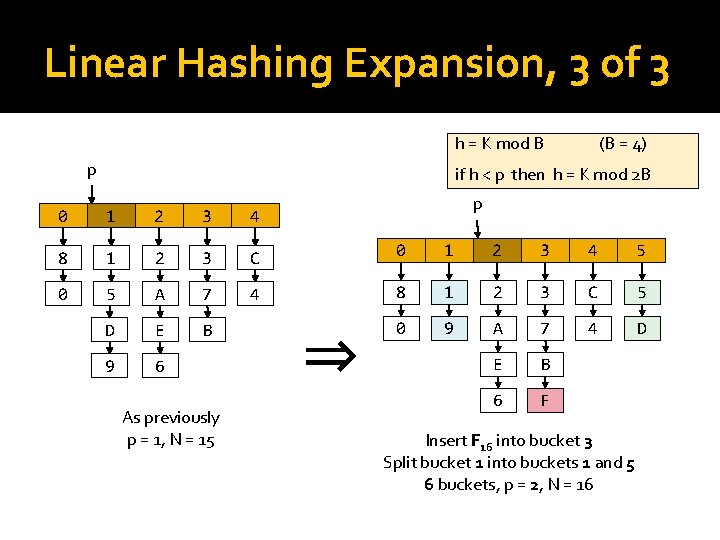

Linear Hashing Expansion, 3 of 3
h = K mod B (B = 4); if h < p then h = K mod 2B
- As previously: p = 1, N = 15
  - Bucket 0: 8, 0
  - Bucket 1: 1, 5, D, 9
  - Bucket 2: 2, A, E, 6
  - Bucket 3: 3, 7, B
  - Bucket 4: C, 4
- Insert F (hex) into bucket 3; split bucket 1 into buckets 1 and 5: 6 buckets, p = 2, N = 16
  - Bucket 0: 8, 0
  - Bucket 1: 1, 9
  - Bucket 2: 2, A, E, 6
  - Bucket 3: 3, 7, B, F
  - Bucket 4: C, 4
  - Bucket 5: 5, D
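The three expansion slides can be replayed with a small sketch of linear hashing. This is an illustrative toy, not the LKRhash implementation: the class name `LinearHashSet` and its members are invented for the example, each key serves as its own hash value (as in the slides), and buckets are plain vectors.

```cpp
#include <cstddef>
#include <vector>

// Minimal linear-hashing sketch (hypothetical names; not LKRhash itself).
// The key is used directly as its hash value, as on the slides.
class LinearHashSet {
public:
    LinearHashSet(std::size_t initialBuckets, double loadFactor)
        : base_(initialBuckets), maxLoad_(loadFactor), buckets_(initialBuckets) {}

    // h = K mod B; if h < p then h = K mod 2B
    std::size_t address(unsigned key) const {
        std::size_t h = key % base_;
        if (h < split_) h = key % (2 * base_);
        return h;
    }

    void insert(unsigned key) {
        buckets_[address(key)].push_back(key);
        ++count_;
        // Split one bucket at a time while the load factor is exceeded.
        while (count_ > maxLoad_ * buckets_.size()) splitOne();
    }

    const std::vector<unsigned>& bucket(std::size_t i) const { return buckets_[i]; }
    std::size_t bucketCount() const { return buckets_.size(); }
    std::size_t splitPointer() const { return split_; }

private:
    // Splits bucket p between itself and the new bucket B + p.
    void splitOne() {
        std::vector<unsigned> old;
        old.swap(buckets_[split_]);
        buckets_.emplace_back();                 // new bucket at index base_ + split_
        for (unsigned k : old)
            buckets_[k % (2 * base_)].push_back(k);
        if (++split_ == base_) {                 // every original bucket has been split
            base_ *= 2;
            split_ = 0;
        }
    }

    std::size_t base_;        // B
    std::size_t split_ = 0;   // p
    std::size_t count_ = 0;   // N
    double maxLoad_;
    std::vector<std::vector<unsigned>> buckets_;
};
```

Inserting the slide's keys in order (8, C, 4, 1, 5, 2, A, E, 6, 3, 7, 0, then B, D, 9, F) with 4 initial buckets and a load factor of 3.0 ends with 6 buckets and p = 2. Only one bucket is rehashed per split, which is what keeps the per-insertion cost flat.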

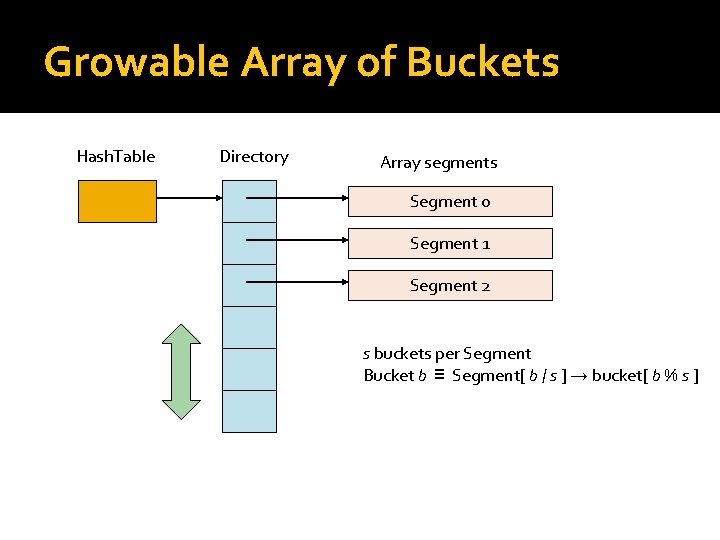

Growable Array of Buckets
[Diagram: HashTable points to a Directory, an array of segments: Segment 0, Segment 1, Segment 2]
- s buckets per Segment
- Bucket b ≡ Segment[ b / s ] → bucket[ b % s ]
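The directory-of-segments addressing can be sketched as follows. The template `SegmentedArray` and its member names are hypothetical, chosen for illustration only. The point of the layout is that growing the table appends segments without moving existing buckets.

```cpp
#include <cstddef>
#include <memory>
#include <vector>

// Illustrative growable bucket array: a directory of fixed-size segments.
// Names are hypothetical; this is not the LKRhash source.
template <typename Bucket, std::size_t BucketsPerSegment>
class SegmentedArray {
public:
    // Appends whole segments until at least `count` buckets exist.
    void growTo(std::size_t count) {
        while (size() < count)
            directory_.push_back(std::make_unique<Segment>());
    }

    std::size_t size() const { return directory_.size() * BucketsPerSegment; }

    // Bucket b lives at Segment[b / s] -> bucket[b % s]. Requires b < size().
    Bucket& at(std::size_t b) {
        return directory_[b / BucketsPerSegment]->buckets[b % BucketsPerSegment];
    }

private:
    struct Segment {
        Bucket buckets[BucketsPerSegment] = {};
    };
    // Only the directory of pointers is reallocated on growth;
    // the segments themselves never move.
    std::vector<std::unique_ptr<Segment>> directory_;
};
```

Because segments stay put, a pointer to a bucket remains valid while the table grows, which matters when other threads hold bucket locks.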

Cache-friendliness

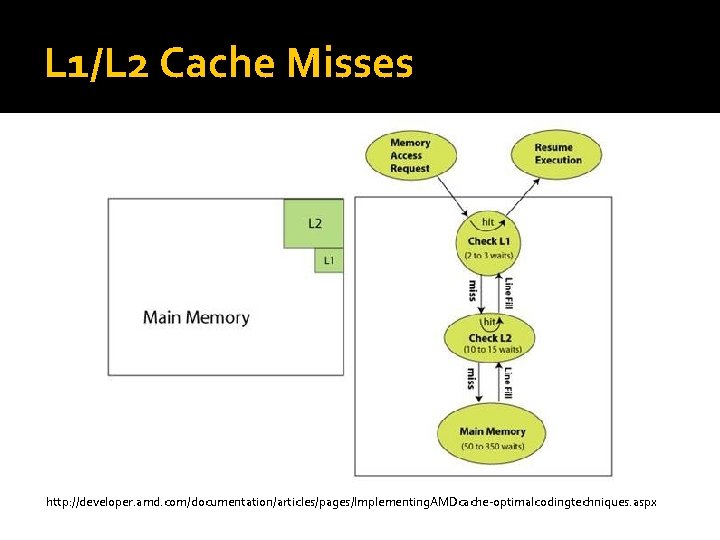

L1/L2 Cache Misses
http://developer.amd.com/documentation/articles/pages/ImplementingAMDcache-optimalcodingtechniques.aspx

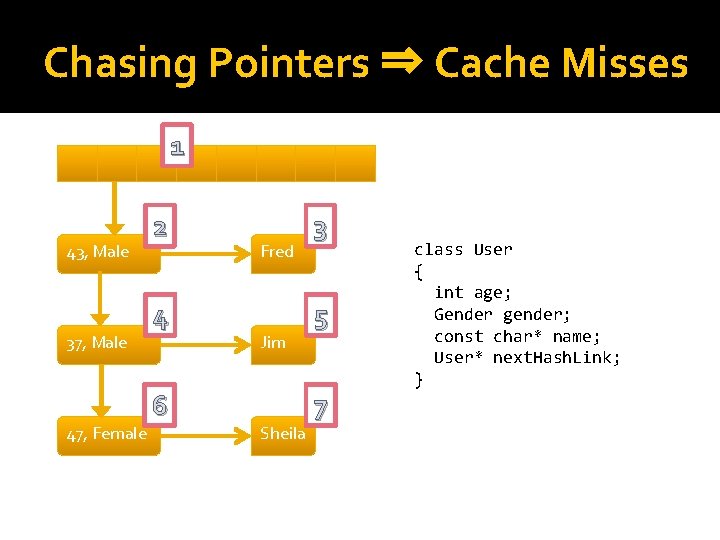

Chasing Pointers ⇒ Cache Misses
[Diagram: a hash chain of three User records: Fred (43, Male), Jim (37, Male), Sheila (47, Female); the pointers followed during a lookup are numbered 1 to 7]

    class User {
        int age;
        Gender gender;
        const char* name;
        User* nextHashLink;
    };

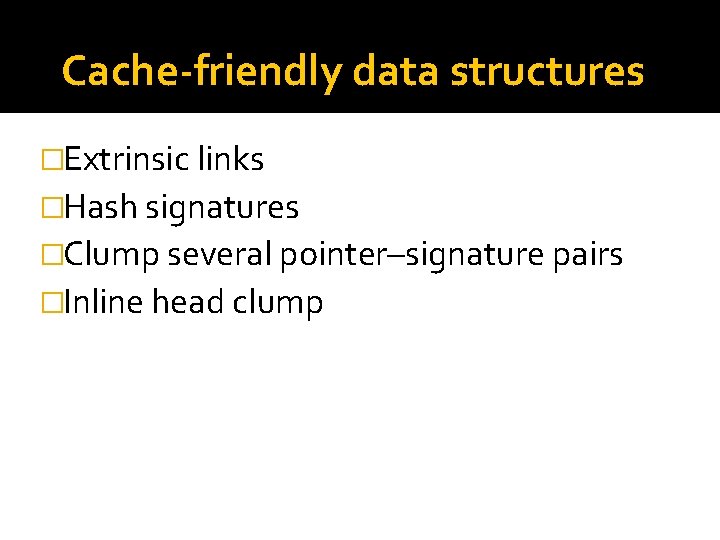

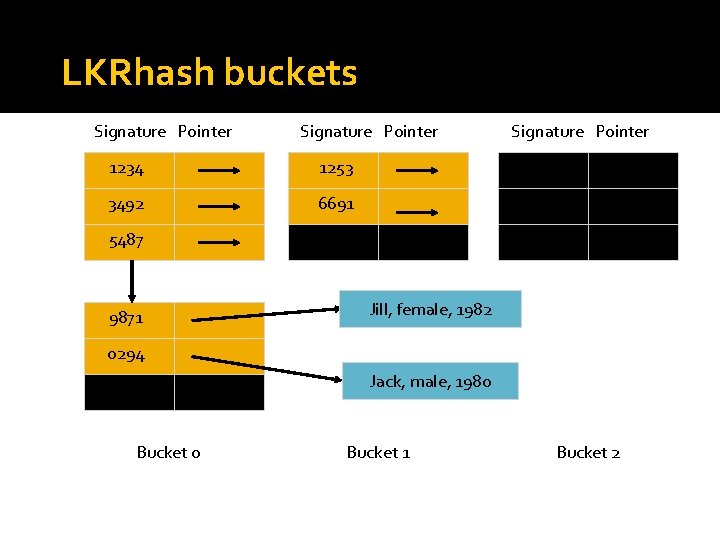

Cache-friendly data structures
- Extrinsic links
- Hash signatures
- Clump several pointer–signature pairs
- Inline head clump

LKRhash buckets
[Diagram: Bucket 1 and Bucket 2, each a table of Signature/Pointer pairs. Signatures shown: 1234, 1253, 3492, 6691, 5487, 9871, 0294. Pointers lead to records such as "Jill, female, 1982" and "Jack, male, 1980"]
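A rough sketch of how a signature check can avoid most pointer dereferences during a bucket scan. The types `Record` and `NodeClump`, the function `findInBucket`, and the clump size of 4 are assumptions for this example; the `derefs` counter exists only to make the effect observable.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>

constexpr std::size_t kNodesPerClump = 4;   // illustrative value

struct Record {
    const char* name;   // the key
    int birthYear;
};

// Extrinsic links: the clump points at records; records hold no chain pointer.
struct NodeClump {
    std::uint32_t sigs[kNodesPerClump] = {};
    const Record* nodes[kNodesPerClump] = {};
    NodeClump* next = nullptr;
};

// Scans one bucket. A record is dereferenced only when its stored signature
// matches, so most mismatches are rejected from the clump's own cache lines.
// `derefs`, if non-null, counts how many records were actually touched.
const Record* findInBucket(const NodeClump& head, std::uint32_t sig,
                           const char* name, int* derefs = nullptr)
{
    for (const NodeClump* c = &head; c != nullptr; c = c->next) {
        for (std::size_t i = 0; i < kNodesPerClump; ++i) {
            if (c->nodes[i] == nullptr || c->sigs[i] != sig) continue;
            if (derefs) ++*derefs;
            if (std::strcmp(c->nodes[i]->name, name) == 0) return c->nodes[i];
        }
    }
    return nullptr;
}
```

Passing the head clump by reference mirrors the "inline head clump" idea: the first few signature/pointer pairs sit inside the bucket itself, so a short bucket needs no extra allocation or indirection.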

Lock Contention
http://www.flickr.com/photos/hetty_kate/4308051420/

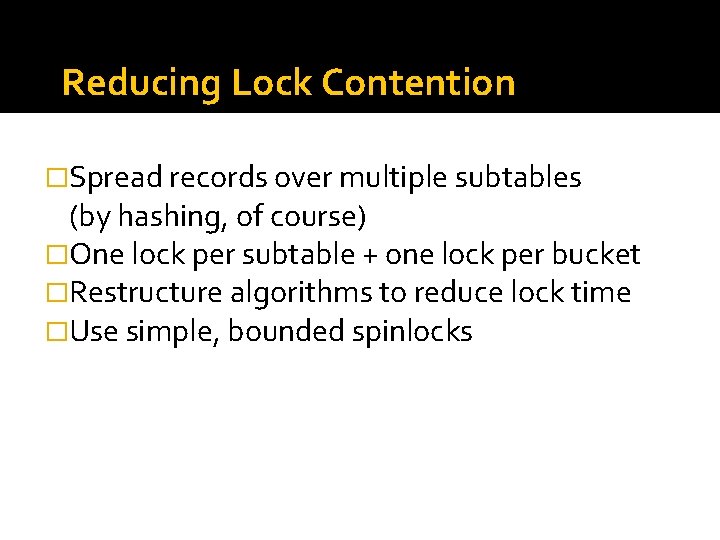

Reducing Lock Contention
- Spread records over multiple subtables (by hashing, of course)
- One lock per subtable + one lock per bucket
- Restructure algorithms to reduce lock time
- Use simple, bounded spinlocks
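The first bullet, spreading records over independently locked subtables, can be sketched like this. It is a simplified illustration under several assumptions: `StripedSet` is a made-up name, each subtable is a `std::unordered_set` guarded by a `std::mutex` (not a spinlock), and the per-bucket locks are left out.

```cpp
#include <array>
#include <cstddef>
#include <functional>
#include <mutex>
#include <string>
#include <unordered_set>

// Illustrative lock striping: one lock per subtable, subtable chosen by hash.
// Hypothetical sketch; per-bucket locks are omitted.
template <std::size_t NumSubTables>
class StripedSet {
public:
    static std::size_t subTableIndex(const std::string& key) {
        return std::hash<std::string>{}(key) % NumSubTables;
    }

    // Only the chosen subtable is locked; other subtables stay available.
    bool insert(const std::string& key) {
        SubTable& st = subTables_[subTableIndex(key)];
        std::lock_guard<std::mutex> guard(st.lock);
        return st.keys.insert(key).second;
    }

    bool contains(const std::string& key) {
        SubTable& st = subTables_[subTableIndex(key)];
        std::lock_guard<std::mutex> guard(st.lock);
        return st.keys.count(key) != 0;
    }

private:
    struct SubTable {
        std::mutex lock;
        std::unordered_set<std::string> keys;
    };
    std::array<SubTable, NumSubTables> subTables_;
};
```

Two threads contend only when their keys hash to the same subtable, so with well-distributed hashes the chance of contention falls roughly in proportion to the number of subtables.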

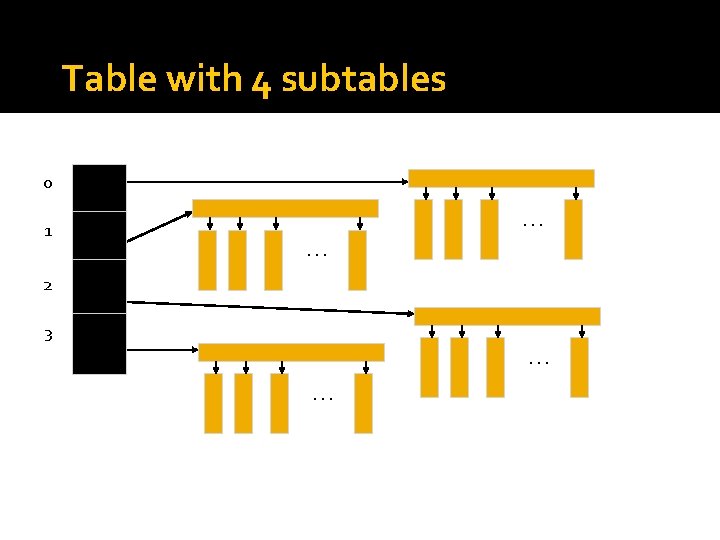

Table with 4 subtables
[Diagram: a table fanning out to subtables 0, 1, 2, and 3]

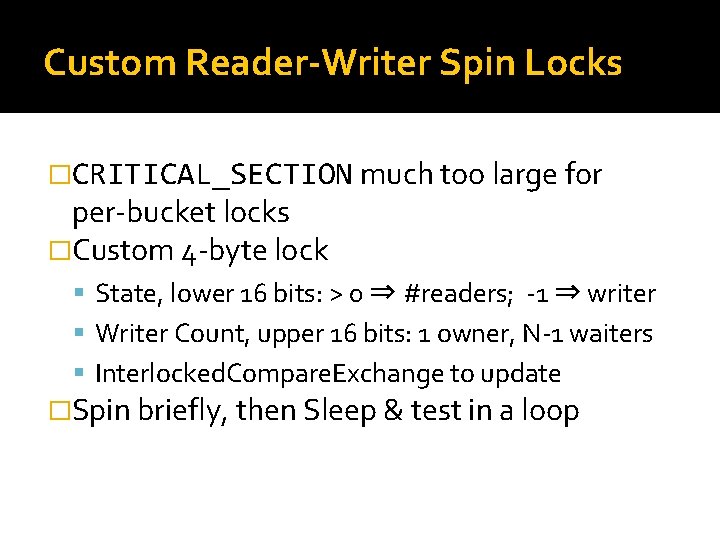

Custom Reader-Writer Spin Locks
- CRITICAL_SECTION much too large for per-bucket locks
- Custom 4-byte lock
  - State, lower 16 bits: > 0 ⇒ #readers; -1 ⇒ writer
  - Writer Count, upper 16 bits: 1 owner, N-1 waiters
  - InterlockedCompareExchange to update
- Spin briefly, then Sleep & test in a loop
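A portable sketch of a 4-byte reader-writer spin lock with the bit layout described above. It is an approximation, not the LKRhash lock: `RWSpinLock` and its members are hypothetical names, `std::atomic` compare-exchange stands in for `InterlockedCompareExchange`, `std::this_thread::yield()` stands in for `Sleep`, and the spin bound of 100 is arbitrary.

```cpp
#include <atomic>
#include <cstdint>
#include <thread>

// Illustrative 4-byte reader-writer spin lock (hypothetical sketch).
// Low 16 bits:  0 = free, 1..0xFFFE = reader count, 0xFFFF (-1) = writer.
// High 16 bits: number of writers that own or are waiting for the lock.
class RWSpinLock {
public:
    bool tryReadLock() {
        std::uint32_t s = state_.load(std::memory_order_relaxed);
        // Readers defer to any owning or waiting writer.
        if ((s >> 16) != 0 || (s & kStateMask) >= kWriter - 1) return false;
        return state_.compare_exchange_strong(s, s + 1, std::memory_order_acquire);
    }
    void readLock()   { spinUntil([this] { return tryReadLock(); }); }
    void readUnlock() { state_.fetch_sub(1, std::memory_order_release); }

    void writeLock() {
        // Announce this writer so that new readers stay out.
        state_.fetch_add(kOneWriter, std::memory_order_relaxed);
        spinUntil([this] {
            std::uint32_t s = state_.load(std::memory_order_relaxed);
            return (s & kStateMask) == 0 &&
                   state_.compare_exchange_strong(s, s | kWriter,
                                                  std::memory_order_acquire);
        });
    }
    void writeUnlock() {
        // Clear the writer marker and drop this writer from the count.
        state_.fetch_sub(kOneWriter + kWriter, std::memory_order_release);
    }

    std::uint32_t raw() const { return state_.load(); }

private:
    static constexpr std::uint32_t kStateMask = 0xFFFF;
    static constexpr std::uint32_t kWriter    = 0xFFFF;
    static constexpr std::uint32_t kOneWriter = 0x10000;
    static constexpr int kMaxSpins = 100;   // arbitrary bound for the sketch

    // Spin briefly, then yield and test again in a loop.
    template <typename Attempt>
    static void spinUntil(Attempt attempt) {
        for (int spins = 0; !attempt(); ++spins) {
            if (spins >= kMaxSpins) {
                std::this_thread::yield();
                spins = 0;
            }
        }
    }

    std::atomic<std::uint32_t> state_{0};
};
```

The whole lock is a single 32-bit word, which is what makes it cheap enough to embed one in every bucket.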

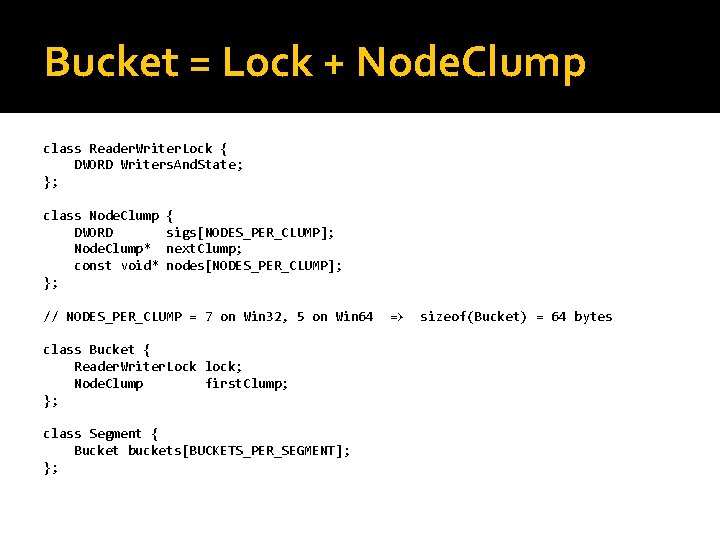

Bucket = Lock + NodeClump

    class ReaderWriterLock {
        DWORD WritersAndState;
    };

    // NODES_PER_CLUMP = 7 on Win32, 5 on Win64
    class NodeClump {
        DWORD       sigs[NODES_PER_CLUMP];
        NodeClump*  nextClump;
        const void* nodes[NODES_PER_CLUMP];
    };

    class Bucket {
        ReaderWriterLock lock;
        NodeClump        firstClump;
    };

    class Segment {
        Bucket buckets[BUCKETS_PER_SEGMENT];
    };

=> sizeof(Bucket) = 64 bytes

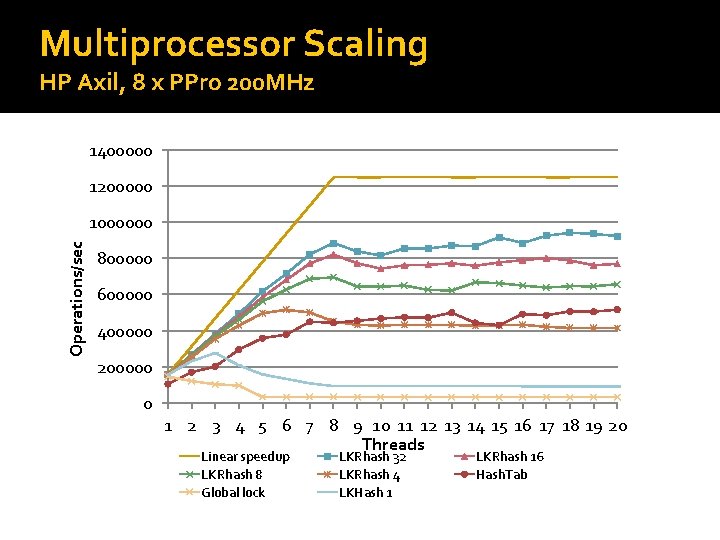

Multiprocessor Scaling
HP Axil, 8 x PPro 200 MHz
[Chart: Operations/sec (y-axis, 0 to 1,400,000) against Threads (x-axis, 1 to 20). Series: Linear speedup, LKRhash 1, LKRhash 4, LKRhash 8, LKRhash 16, LKRhash 32, Global lock, HashTab]

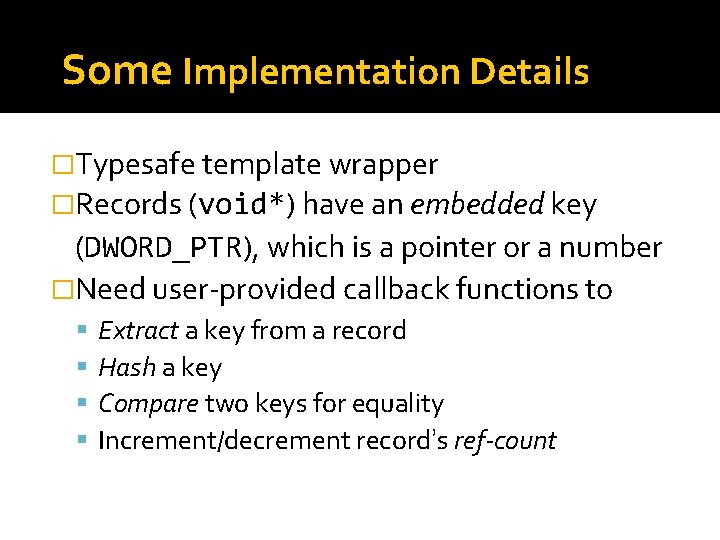

Some Implementation Details
- Typesafe template wrapper
- Records (void*) have an embedded key (DWORD_PTR), which is a pointer or a number
- Need user-provided callback functions to
  - Extract a key from a record
  - Hash a key
  - Compare two keys for equality
  - Increment/decrement record's ref-count
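One way the callback-driven core and a typesafe wrapper might fit together is sketched below. Everything here is hypothetical: `Callbacks`, `UntypedTable`, `User`, and `UserTable` are invented for the example, `std::uintptr_t` stands in for `DWORD_PTR`, a linear scan over signature/record pairs stands in for the real bucket lookup, and the wrapper is written for one record type rather than as a template.

```cpp
#include <cstdint>
#include <functional>
#include <string>
#include <vector>

using KeyWord = std::uintptr_t;   // stand-in for DWORD_PTR

// The four user-provided callbacks.
struct Callbacks {
    KeyWord       (*extractKey)(const void* record);
    std::uint32_t (*calcHash)(KeyWord key);
    bool          (*equalKeys)(KeyWord a, KeyWord b);
    void          (*addRef)(const void* record, int delta);
};

// Untyped core: sees only void* records and pointer-sized keys.
class UntypedTable {
public:
    explicit UntypedTable(Callbacks cb) : cb_(cb) {}

    void insert(const void* rec) {
        cb_.addRef(rec, +1);                       // the table holds a reference
        entries_.push_back({cb_.calcHash(cb_.extractKey(rec)), rec});
    }

    const void* find(KeyWord key) const {
        const std::uint32_t sig = cb_.calcHash(key);
        for (const Entry& e : entries_) {          // linear scan: sketch only
            if (e.sig == sig && cb_.equalKeys(key, cb_.extractKey(e.rec))) {
                cb_.addRef(e.rec, +1);             // the caller receives a reference
                return e.rec;
            }
        }
        return nullptr;
    }

private:
    struct Entry { std::uint32_t sig; const void* rec; };
    Callbacks cb_;
    std::vector<Entry> entries_;
};

// Example record with an embedded key and a reference count.
struct User {
    std::string name;   // the key
    int age;
    mutable int refs = 0;
};

// Typesafe wrapper: callers never see void* or KeyWord.
class UserTable {
public:
    UserTable() : table_(Callbacks{&extractKey, &calcHash, &equalKeys, &addRef}) {}

    void insert(const User* u) { table_.insert(u); }

    const User* find(const std::string& name) const {
        return static_cast<const User*>(
            table_.find(reinterpret_cast<KeyWord>(&name)));
    }

private:
    static const std::string& asKey(KeyWord k) {
        return *reinterpret_cast<const std::string*>(k);
    }
    static KeyWord extractKey(const void* r) {
        return reinterpret_cast<KeyWord>(&static_cast<const User*>(r)->name);
    }
    static std::uint32_t calcHash(KeyWord k) {
        return static_cast<std::uint32_t>(std::hash<std::string>{}(asKey(k)));
    }
    static bool equalKeys(KeyWord a, KeyWord b) { return asKey(a) == asKey(b); }
    static void addRef(const void* r, int delta) {
        static_cast<const User*>(r)->refs += delta;
    }

    UntypedTable table_;
};
```

In this sketch the key travels as a pointer to the record's own `name` field, which is one reading of "a pointer or a number"; a numeric key would be passed in the same pointer-sized word directly.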

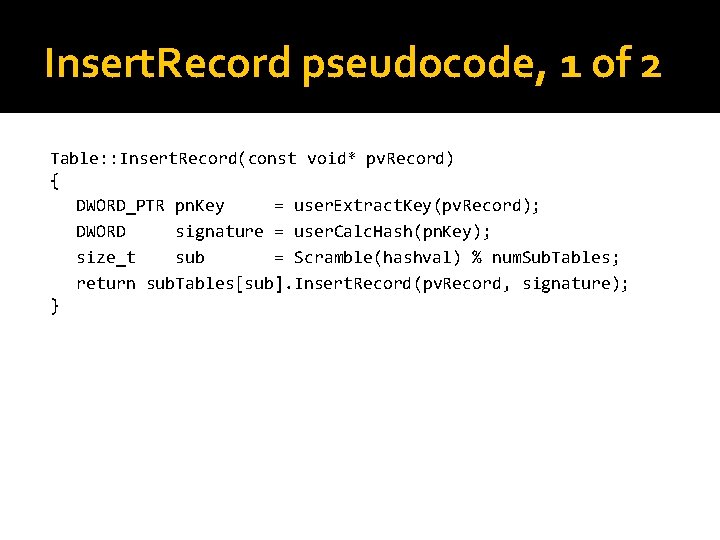

InsertRecord pseudocode, 1 of 2

    Table::InsertRecord(const void* pvRecord)
    {
        DWORD_PTR pnKey = userExtractKey(pvRecord);
        DWORD signature = userCalcHash(pnKey);
        // Scramble the signature so that subtable choice and bucket
        // address do not depend on the same bits
        size_t sub = Scramble(signature) % numSubTables;
        return subTables[sub].InsertRecord(pvRecord, signature);
    }

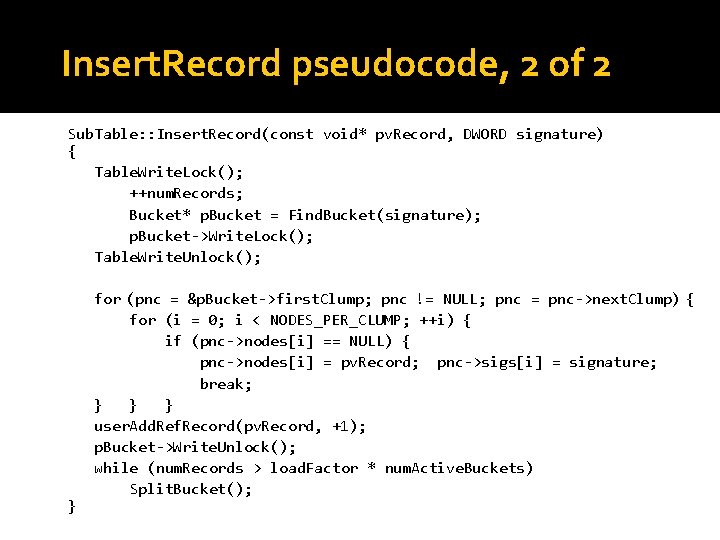

InsertRecord pseudocode, 2 of 2

    SubTable::InsertRecord(const void* pvRecord, DWORD signature)
    {
        TableWriteLock();
        ++numRecords;
        Bucket* pBucket = FindBucket(signature);
        pBucket->WriteLock();
        TableWriteUnlock();     // hold only the bucket lock from here on

        for (pnc = &pBucket->firstClump; pnc != NULL; pnc = pnc->nextClump) {
            for (i = 0; i < NODES_PER_CLUMP; ++i) {
                if (pnc->nodes[i] == NULL) {
                    pnc->nodes[i] = pvRecord;
                    pnc->sigs[i] = signature;
                    goto Inserted;  // leave both loops once a slot is filled
                }
            }
        }
        // (appending a new clump when every slot is full is omitted)

    Inserted:
        userAddRefRecord(pvRecord, +1);
        pBucket->WriteUnlock();

        while (numRecords > loadFactor * numActiveBuckets)
            SplitBucket();
    }

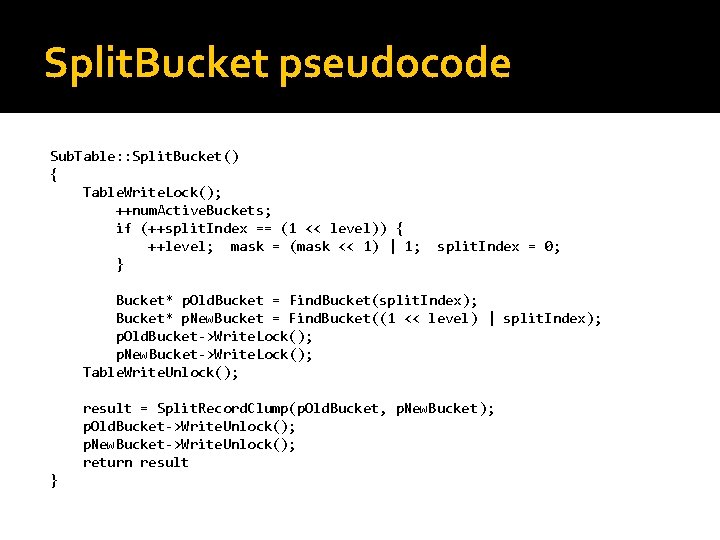

SplitBucket pseudocode

    SubTable::SplitBucket()
    {
        TableWriteLock();
        ++numActiveBuckets;

        // Locate the old and new buckets before advancing splitIndex
        Bucket* pOldBucket = FindBucket(splitIndex);
        Bucket* pNewBucket = FindBucket((1 << level) | splitIndex);

        if (++splitIndex == (1 << level)) {
            // Every bucket at this level has been split: start the next level
            ++level;
            mask = (mask << 1) | 1;
            splitIndex = 0;
        }

        pOldBucket->WriteLock();
        pNewBucket->WriteLock();
        TableWriteUnlock();

        result = SplitRecordClump(pOldBucket, pNewBucket);

        pOldBucket->WriteUnlock();
        pNewBucket->WriteUnlock();
        return result;
    }

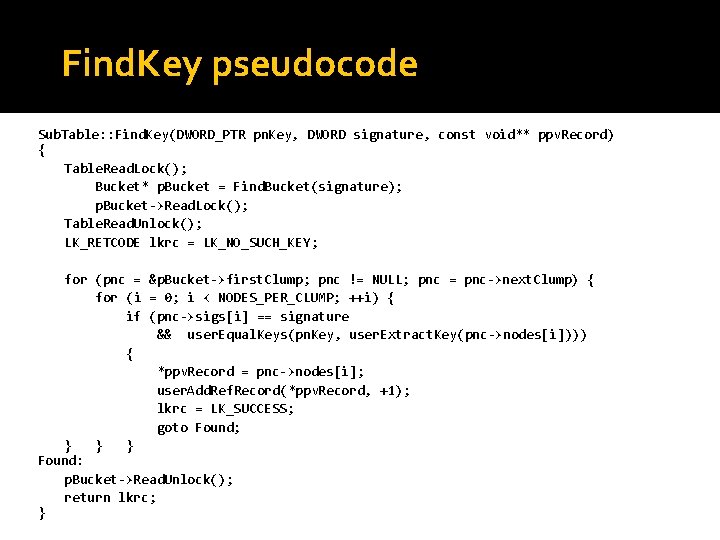

FindKey pseudocode

    SubTable::FindKey(DWORD_PTR pnKey, DWORD signature, const void** ppvRecord)
    {
        TableReadLock();
        Bucket* pBucket = FindBucket(signature);
        pBucket->ReadLock();
        TableReadUnlock();

        LK_RETCODE lkrc = LK_NO_SUCH_KEY;

        for (pnc = &pBucket->firstClump; pnc != NULL; pnc = pnc->nextClump) {
            for (i = 0; i < NODES_PER_CLUMP; ++i) {
                // Compare signatures first; touch the record only on a match
                if (pnc->sigs[i] == signature
                    && userEqualKeys(pnKey, userExtractKey(pnc->nodes[i])))
                {
                    *ppvRecord = pnc->nodes[i];
                    userAddRefRecord(*ppvRecord, +1);
                    lkrc = LK_SUCCESS;
                    goto Found;
                }
            }
        }

    Found:
        pBucket->ReadUnlock();
        return lkrc;
    }

Gotchas
- Patent 6578131
- Closed Source

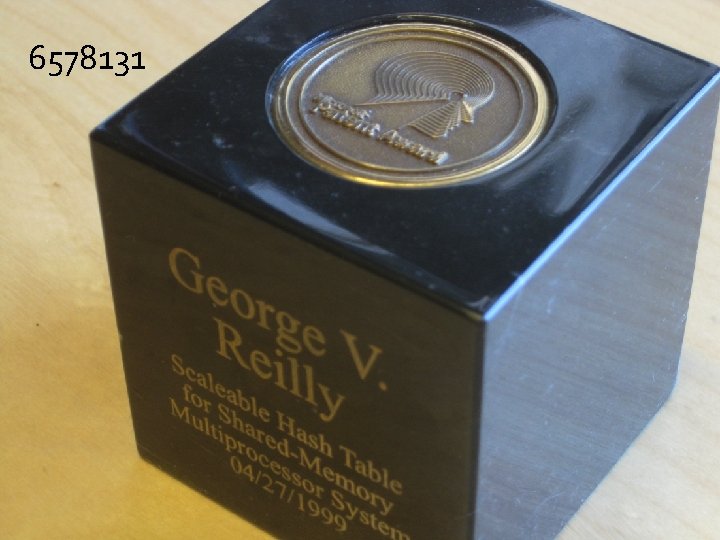

Patent 6578131
- Scaleable hash table for shared-memory multiprocessor system

Closed Source
- Hoping that Microsoft will make LKRhash available on CodePlex

References
- P.-Å. Larson, "Dynamic Hash Tables", Communications of the ACM, Vol. 31, No. 4, 1988, pp. 446–457
- http://www.google.com/patents/US6578131.pdf

Other (Multithreaded) Hashtables
- Cliff Click's Non-Blocking Hashtable
- Facebook's AtomicHashMap: video, GitHub
- Intel's tbb::concurrent_hash_map
- Hash Table Performance Tests (not MT)
