
Live Migration (LM) Benchmark Research
College of Computer Science, Zhejiang University, China

Outline
- Background and Motives
- Virt-LM Benchmark Overview
- Further Issues and Possible Solutions
- Conclusion
- Our Possible Work under the Cloud WG

Background and Motives

Significance of Live Migration
- Concept:
  - Migration: move a VM between different physical machines
  - Live: without disconnecting the client or application (invisible to them)
- Relation to cloud computing and data centers:
  - Cloud infrastructures and data centers have to use their large-scale hardware resources efficiently.
  - Virtualization technology provides two approaches:
    - Server consolidation
    - Live migration
- Roles in a data center:
  - Flexibly remap hardware among VMs
  - Balance workload
  - Save energy
  - Enhance service availability and fault tolerance

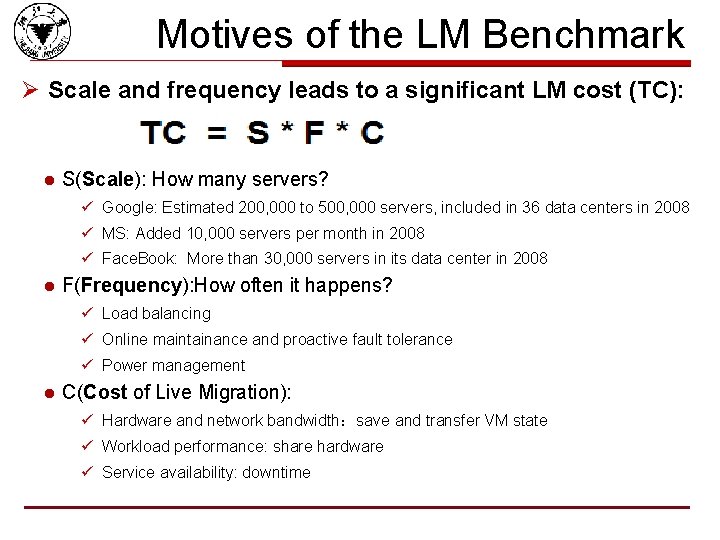

Motives of the LM Benchmark
- Scale and frequency lead to a significant LM cost (TC):
  - S (Scale): how many servers?
    - Google: an estimated 200,000 to 500,000 servers across 36 data centers in 2008
    - Microsoft: added 10,000 servers per month in 2008
    - Facebook: more than 30,000 servers in its data center in 2008
  - F (Frequency): how often does migration happen?
    - Load balancing
    - Online maintenance and proactive fault tolerance
    - Power management
  - C (Cost of live migration):
    - Hardware and network bandwidth: saving and transferring VM state
    - Workload performance: sharing hardware
    - Service availability: downtime

Motives of the LM Benchmark
- An LM benchmark is needed.
  - An LM benchmark helps make the right decisions to reduce cost
    - Design better LM strategies
    - Choose a better platform
  - Evaluation of a data center should include its LM performance
    - VMware released VMmark 2.0 for multi-server performance in December 2010
- Existing evaluation methodologies have limitations.
  - VMmark 2.x
    - Dedicated to VMware's platforms
    - A macro benchmark: no specific metrics for LM performance
  - Existing research on LM
    - ([Vee 09 Hines], [HPDC 09 Liu], [Cluster 09 Jin], [IWVT 08 Liu], [NSDI 05 Clark], …)
    - All dedicated to designing LM strategies
    - No unified metrics or workloads, so results are not comparable to each other
    - Some critical issues are not addressed
- A formal, qualified LM benchmark is still lacking

Virt-LM Benchmark Overview

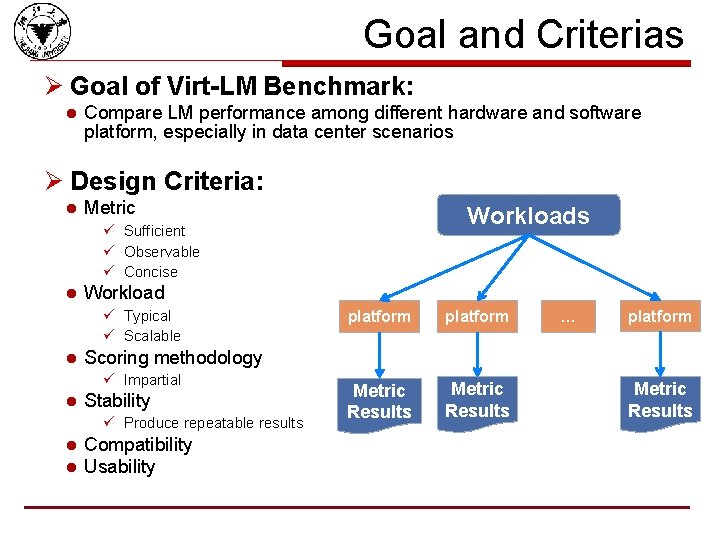

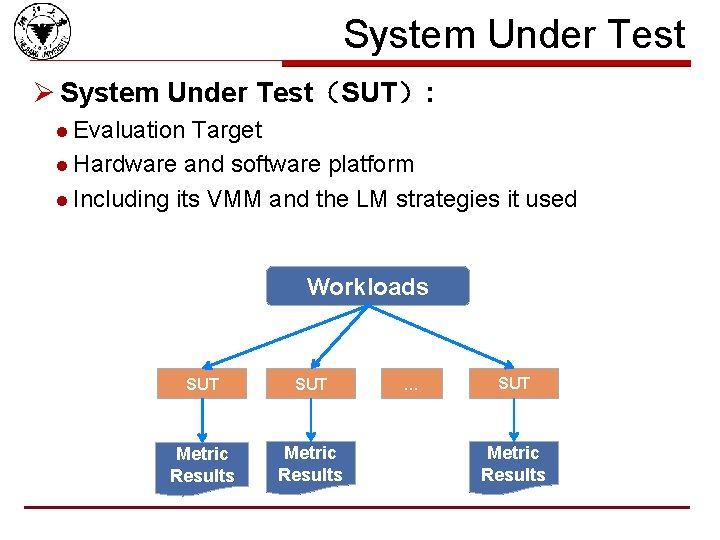

Goal and Criteria
- Goal of the Virt-LM benchmark:
  - Compare LM performance among different hardware and software platforms, especially in data center scenarios
- Design criteria:
  - Metrics
    - Sufficient
    - Observable
    - Concise
  - Workload
    - Typical
    - Scalable
  - Stability
    - Produce repeatable results
  - Scoring methodology
    - Impartial
  - Compatibility
  - Usability

System Under Test
- System Under Test (SUT):
  - The evaluation target
  - The hardware and software platform
  - Includes its VMM and the LM strategies it uses
- [Diagram: the workloads are applied to each SUT, which produces metric results]

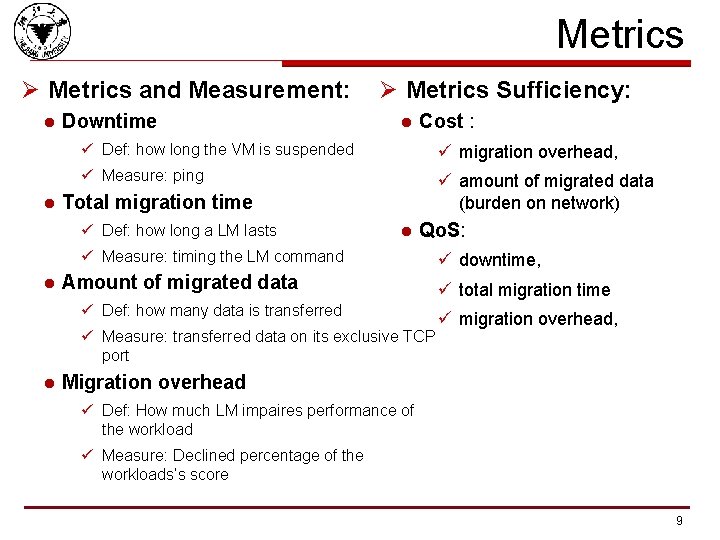

Metrics
- Metrics and measurement:
  - Downtime
    - Def: how long the VM is suspended
    - Measure: ping
  - Total migration time
    - Def: how long an LM lasts
    - Measure: timing the LM command
  - Amount of migrated data
    - Def: how much data is transferred
    - Measure: data transferred on the migration's exclusive TCP port
  - Migration overhead
    - Def: how much LM impairs the performance of the workload
    - Measure: percentage decline in the workload's score
- Metrics sufficiency:
  - Cost:
    - Migration overhead
    - Amount of migrated data (burden on the network)
  - QoS:
    - Downtime
    - Total migration time
    - Migration overhead
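
Two of these measurements reduce to simple arithmetic, sketched below. This is only an illustration, not Virt-LM's code: it assumes pings are sent at a fixed interval and recorded as answered or lost, and that a higher workload score means better performance. The function names are hypothetical.

```python
from typing import Sequence


def estimate_downtime_s(replies: Sequence[bool], interval_s: float) -> float:
    """Estimate downtime as the longest run of lost pings times the ping interval.

    Assumption: `replies` holds one entry per ping, True if it was answered.
    """
    longest = current = 0
    for answered in replies:
        current = 0 if answered else current + 1
        longest = max(longest, current)
    return longest * interval_s


def migration_overhead_pct(baseline_score: float, migrated_score: float) -> float:
    """Percentage by which the workload score declined during migration.

    Assumption: higher scores are better, so a decline is positive overhead.
    """
    return 100.0 * (baseline_score - migrated_score) / baseline_score
```

The resolution of the downtime estimate is limited by the ping interval, so a shorter interval gives a finer measurement.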

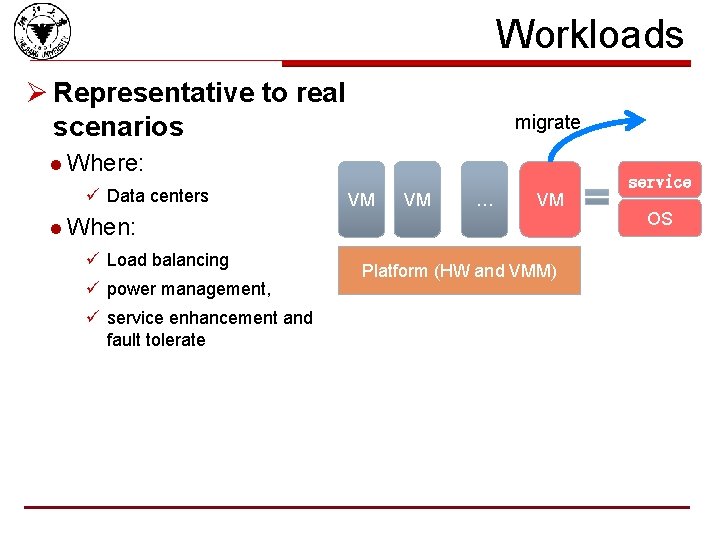

Workloads
- Representative of real scenarios
  - Where:
    - Data centers
  - When:
    - Load balancing
    - Power management
    - Service enhancement and fault tolerance

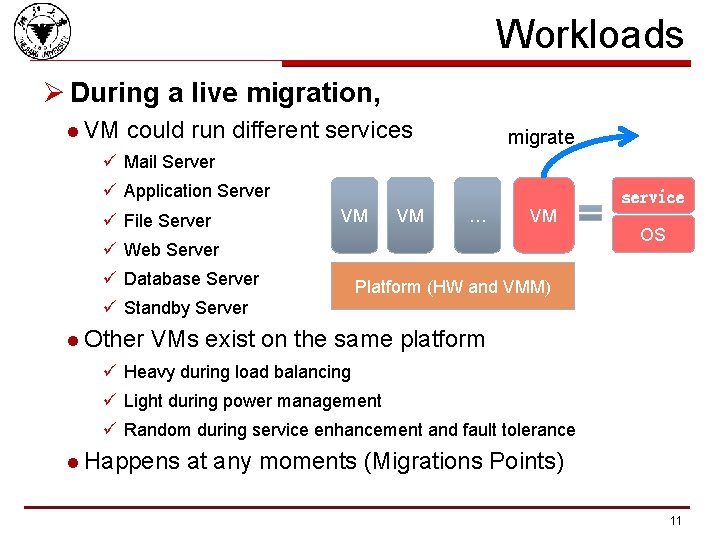

Workloads
- During a live migration:
  - The VM could be running different services
    - Mail server
    - Application server
    - File server
    - Web server
    - Database server
    - Standby server
  - Other VMs exist on the same platform
    - Heavy during load balancing
    - Light during power management
    - Random during service enhancement and fault tolerance
  - Migration can happen at any moment (migration points)

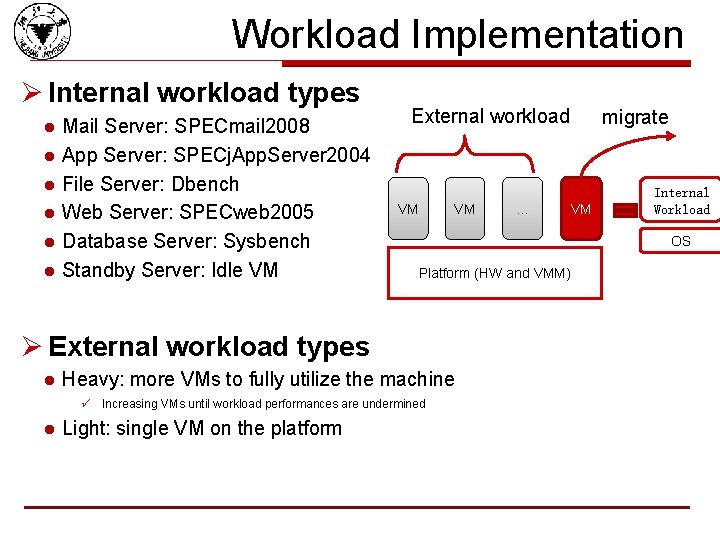

Workload Implementation
- Internal workload types
  - Mail server: SPECmail2008
  - App server: SPECjAppServer2004
  - File server: Dbench
  - Web server: SPECweb2005
  - Database server: Sysbench
  - Standby server: idle VM
- External workload types
  - Heavy: more VMs, to fully utilize the machine
    - Increase the number of VMs until workload performance is undermined
  - Light: a single VM on the platform

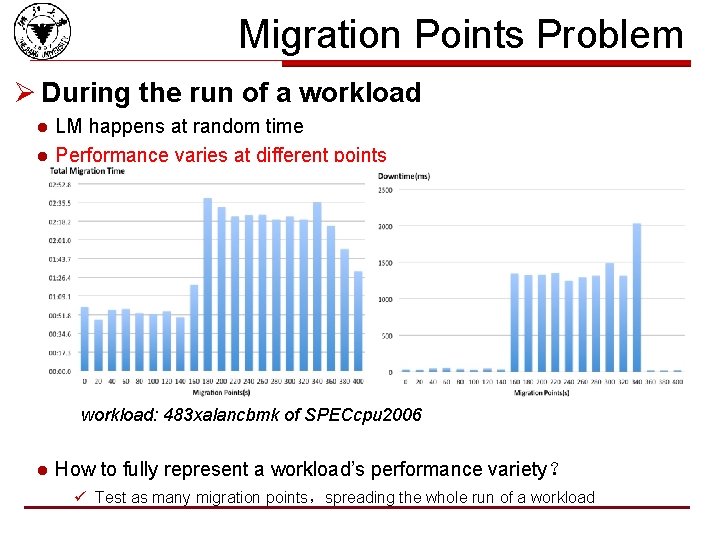

Migration Points Problem
- During the run of a workload:
  - LM happens at a random time
  - Performance varies at different points (example workload: 483.xalancbmk of SPEC CPU2006)
  - How to fully represent the variation in a workload's performance?
    - Test as many migration points as possible, spread across the whole run of the workload

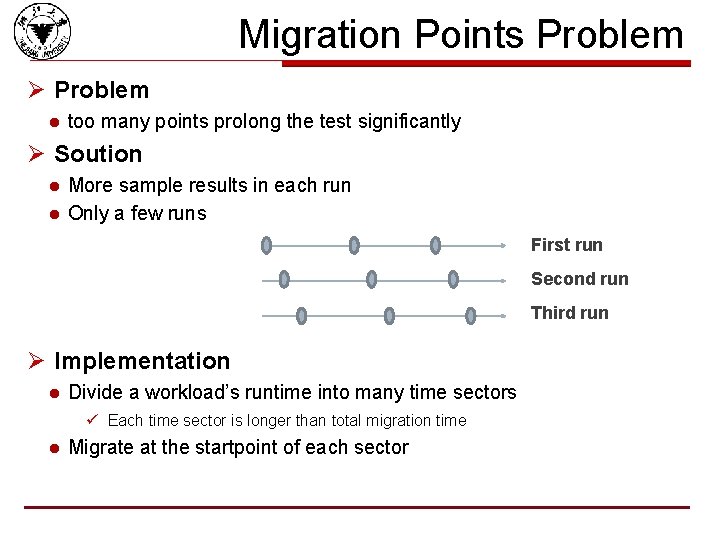

Migration Points Problem
- Problem
  - Too many points prolong the test significantly
- Solution
  - More sample results in each run
  - Only a few runs (first run, second run, third run)
- Implementation
  - Divide a workload's runtime into many time sectors
    - Each time sector is longer than the total migration time
  - Migrate at the start point of each sector
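
The sector scheme above can be sketched as a small planning function. It is a minimal sketch rather than Virt-LM's actual scheduler: the function name and its parameters are hypothetical, and all times are assumed to be in seconds.

```python
from typing import List


def plan_migration_points(runtime_s: int, sector_s: int, migration_time_s: int) -> List[int]:
    """Return the start offsets (in seconds) at which to trigger a migration.

    The runtime is cut into equal sectors and one migration starts at the
    beginning of each sector. A sector must be longer than the total
    migration time, so that one migration finishes before the next begins.
    """
    if sector_s <= migration_time_s:
        raise ValueError("each sector must be longer than the total migration time")
    sector_count = runtime_s // sector_s  # only whole sectors are used
    return [index * sector_s for index in range(sector_count)]
```

For example, a 600-second run with 120-second sectors and a 45-second migration gives five migration points in a single run, instead of five separate runs.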

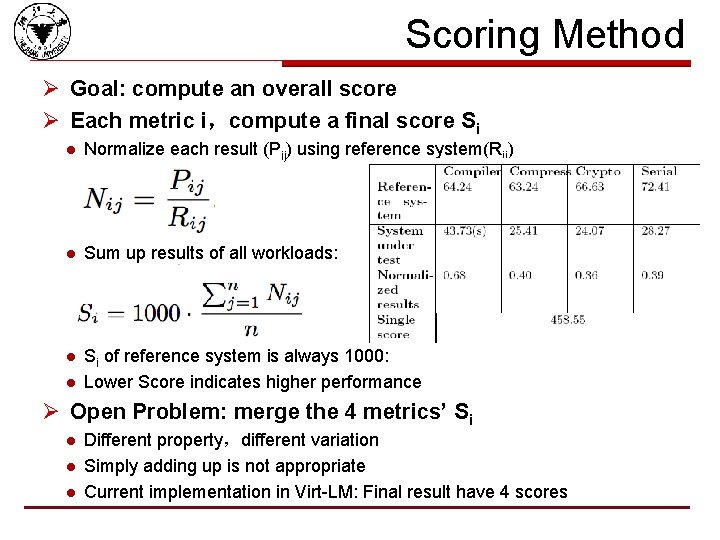

Scoring Method
- Goal: compute an overall score
- For each metric i, compute a final score Si
  - Normalize each result (Pij) against the reference system's result (Rij)
  - Sum the normalized results over all workloads
  - Si of the reference system is always 1000
  - A lower score indicates higher performance
- Open problem: merging the four metrics' Si
  - The metrics have different properties and different variation
  - Simply adding them up is not appropriate
  - Current implementation in Virt-LM: the final result consists of four scores
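
The exact formulas from this slide did not survive extraction, so the sketch below shows only one scoring rule that satisfies the stated constraints: each result is normalized by the reference result, the ratios are combined over all workloads, and the reference system scores 1000. Averaging the ratios and scaling by 1000 is an assumption, not necessarily Virt-LM's formula, and `metric_score` is a hypothetical name.

```python
from typing import Sequence

REFERENCE_SCORE = 1000.0  # the score the reference system receives by construction


def metric_score(results: Sequence[float], reference: Sequence[float]) -> float:
    """Score one metric i from per-workload results P_ij and reference results R_ij.

    Assumed rule: S_i = 1000 * mean_j(P_ij / R_ij). Lower is better, since
    every metric here (downtime, time, data, overhead) is a cost.
    """
    if not results or len(results) != len(reference):
        raise ValueError("results and reference must be non-empty and the same length")
    ratios = [p / r for p, r in zip(results, reference)]
    return REFERENCE_SCORE * sum(ratios) / len(ratios)
```

Under this rule, a system whose downtime is half the reference on every workload scores 500 for that metric.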

Other Criteria
- Usability
  - Easy to configure
    - VM images provided
    - Workloads pre-installed
  - Easy to run
    - Automatically managed after launch
- Compatibility
  - Runs successfully on Xen and KVM
- Scalable workload: fully utilize the hardware
  - A sufficiently heavy macro workload
    - The live migration lasts a long time
  - (Multiple live migration)
    - More than one VM is migrated concurrently

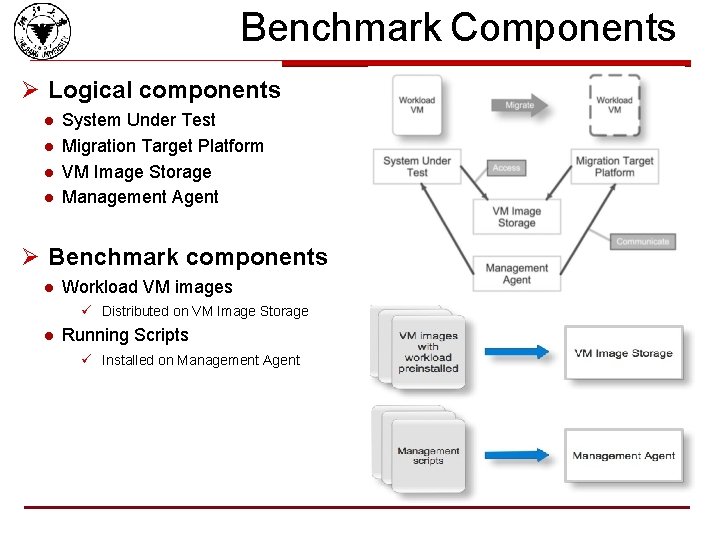

Benchmark Components
- Logical components
  - System Under Test
  - Migration Target Platform
  - VM Image Storage
  - Management Agent
- Benchmark components
  - Workload VM images
    - Distributed on the VM Image Storage
  - Running scripts
    - Installed on the Management Agent

Internal Running Process
- For every class of workload:
  - Initialize the environment
  - Run the workload
  - Migrate the VM at different migration points
  - Fetch the metric results
- Collect all results and compute an overall score
- The Management Agent automatically controls the whole process
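
The process above can be sketched as a driver loop. This is a hypothetical outline, not the Management Agent's actual scripts: every callable passed in (`initialize`, `start_workload`, `migrate`, `fetch_metrics`, `points_for`) is a placeholder for platform-specific code.

```python
from typing import Callable, Dict, Iterable, List


def run_benchmark(
    workloads: Iterable[str],
    points_for: Callable[[str], Iterable[int]],
    initialize: Callable[[str], None],
    start_workload: Callable[[str], None],
    migrate: Callable[[str, int], None],
    fetch_metrics: Callable[[str, int], dict],
) -> Dict[str, List[dict]]:
    """Run every workload class and collect one metrics sample per migration point."""
    results: Dict[str, List[dict]] = {}
    for workload in workloads:
        initialize(workload)          # prepare the environment
        start_workload(workload)      # launch the workload in the VM
        samples = []
        for point in points_for(workload):
            migrate(workload, point)  # migrate the VM at this migration point
            samples.append(fetch_metrics(workload, point))
        results[workload] = samples
    return results
```

The collected samples would then be passed to the scoring step to produce the per-metric scores.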

Experiments on Xen and KVM
- Experiment setup
  - SUT-XEN
    - VMM: Xen 3.3 on Linux 2.6.27
    - Hardware: Dell OptiPlex 755, 2.4 GHz Intel Core 2 Quad Q6600, 2 GB memory, SATA disk, 100 Mbit network
  - SUT-KVM
    - VMM: KVM-84 on Linux 2.6.27
    - Hardware: same as SUT-XEN
  - VM
    - Linux 2.6.27, 512 MB memory, one core
  - Workload
    - Internal: SPECjvm2008 (CPU- and memory-intensive workloads)
    - External: light (single VM)
    - Migration points: spread across the whole run

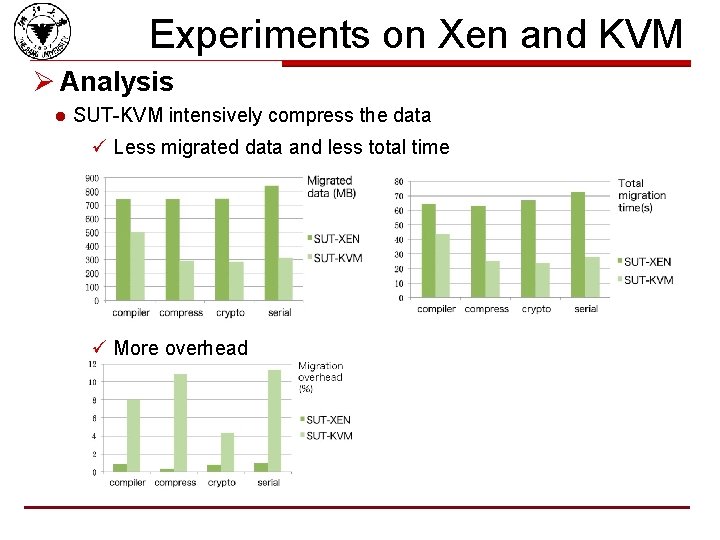

Experiments on Xen and KVM
- Analysis
  - SUT-KVM compresses the data intensively
    - Less migrated data and less total time
    - More overhead

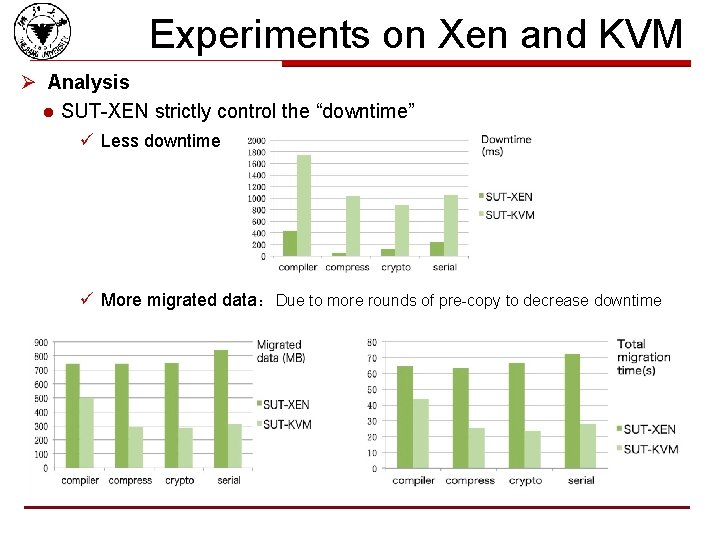

Experiments on Xen and KVM
- Analysis
  - SUT-XEN strictly controls the "downtime"
    - Less downtime
    - More migrated data, due to more rounds of pre-copy to decrease downtime

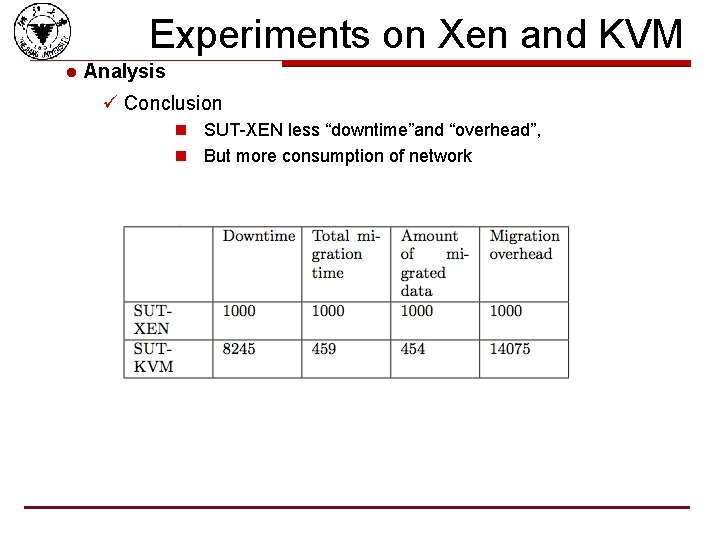

Experiments on Xen and KVM
- Analysis
  - Conclusion
    - SUT-XEN has less "downtime" and "overhead"
    - But it consumes more network bandwidth

Further Issues and Possible Solutions

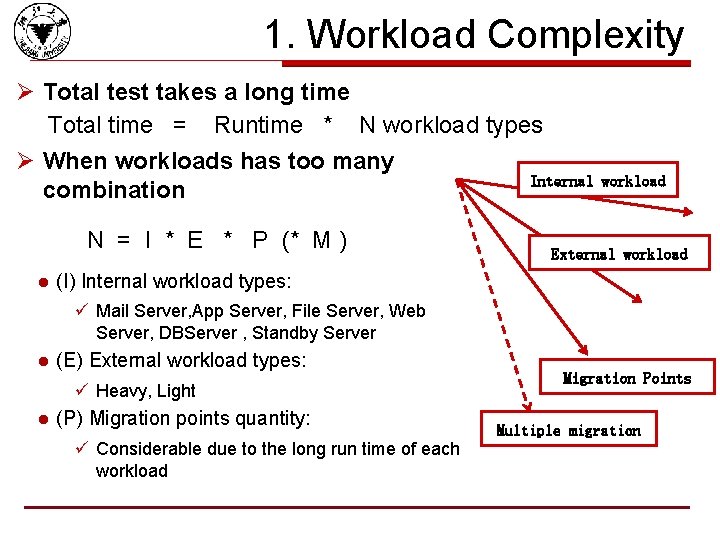

1. Workload Complexity
- The total test takes a long time
  - Total time = Runtime * N
- The workloads have too many combinations
  - N = I * E * P (* M)
  - (I) Internal workload types:
    - Mail server, app server, file server, web server, database server, standby server
  - (E) External workload types:
    - Heavy, light
  - (P) Number of migration points:
    - Considerable, due to the long run time of each workload
  - (M) Multiple migration
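
A quick calculation shows how fast the test grows under the formula above. The six internal and two external workload types come from the slides; the number of migration points and the runtime per combination used in the usage note are made-up values for illustration, and the function names are hypothetical.

```python
def combination_count(internal: int, external: int, points: int, multiple: int = 1) -> int:
    """N = I * E * P (* M): the number of workload combinations to test."""
    return internal * external * points * multiple


def total_test_time_s(runtime_s: int, combinations: int) -> int:
    """Total time = Runtime * N, with the runtime given in seconds."""
    return runtime_s * combinations
```

With 6 internal types, 2 external types and an assumed 10 migration points, N is 120. At an assumed 600 seconds per combination, the full test takes 72,000 seconds, or 20 hours.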

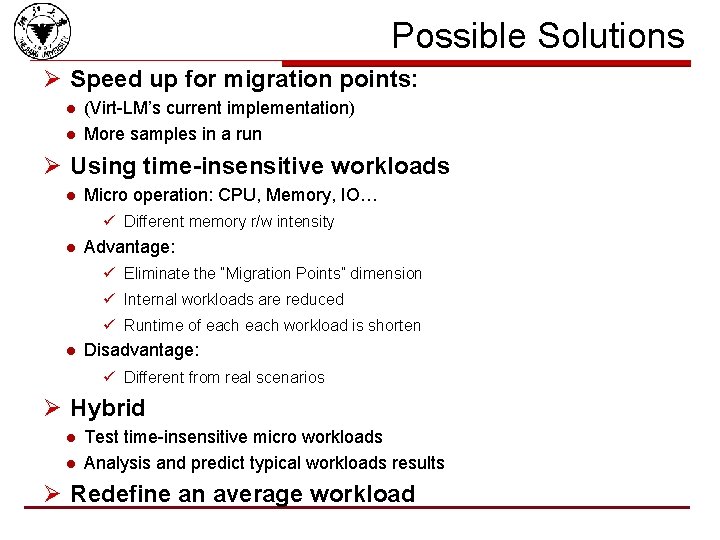

Possible Solutions
- Speed up the migration points (Virt-LM's current implementation):
  - More samples in a run
- Use time-insensitive workloads
  - Micro operations: CPU, memory, I/O, …
    - Different memory read/write intensities
  - Advantages:
    - Eliminates the "migration points" dimension
    - Reduces the number of internal workloads
    - Shortens the runtime of each workload
  - Disadvantage:
    - Differs from real scenarios
- Hybrid
  - Test time-insensitive micro workloads
  - Analyze and predict the results of typical workloads
- Redefine an average workload

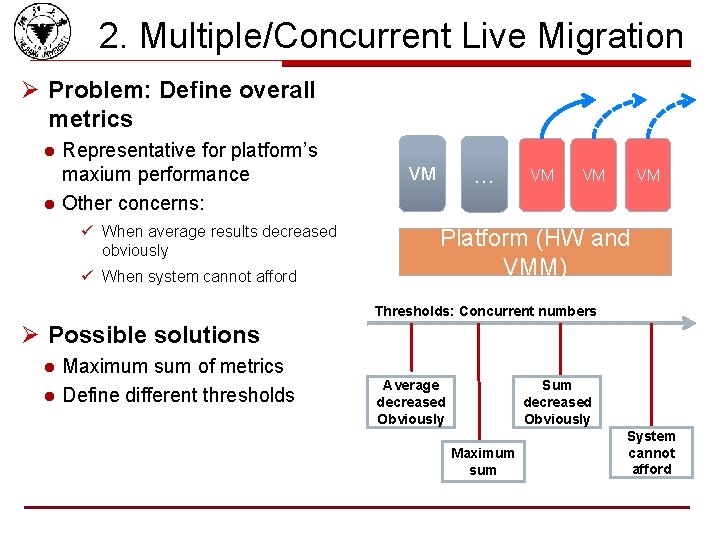

2. Multiple/Concurrent Live Migration
- Problem: define overall metrics
  - Representative of the platform's maximum performance
  - Other concerns:
    - When average results decrease noticeably
    - When the system cannot cope
- Possible solutions
  - Maximum sum of metrics
  - Define different thresholds on the number of concurrent migrations:
    - Average decreases noticeably
    - Sum decreases noticeably
    - Maximum sum
    - System cannot cope

3. Other Issues
- Overall score computation
  - Virt-LM produces four scores as the final result
- Definition of external workloads
  - The current implementation is simple
- Repeatability
  - More experiments are needed to examine it
  - Migration points are not precisely arranged
- Compatibility
  - Should be compatible with other VMMs besides Xen and KVM
- Usability
  - Should be easier to configure and run

Conclusion

Current Work
- Investigated recent work on LM
- Summarized the critical problems
  - Migration points
  - Workload complexity
  - Scoring methods
  - Multiple live migration
- Presented some possible solutions
- Implemented a benchmark prototype: Virt-LM
- More details in "Virt-LM: A Benchmark for Live Migration of Virtual Machine" (ICPE 2011)

Future Work
- Improve and complete Virt-LM
  - Implement and test other solutions
    - Workload complexity
    - Multiple live migration
    - Overall score computation
    - Others
  - Test and compare their effectiveness and choose the best one

Our Possible Work under the Cloud WG

Possible Work
- Relation to the cloud benchmark
  - Enough migration cost in the workload
    - Although the cost may not be a metric, we have to ensure the workload can cause enough cost.
  - How fast can a cloud reallocate resources?
    - If implemented with live migration technology, this depends on two factors:
      - 1. How many migrations: determined by the resource management and reallocation strategies
      - 2. How fast each migration is: determined by live migration efficiency and cost
- Possible future work under the cloud benchmark
  - We may work on how to ensure the workload produces enough live migration cost

Possible Work
- We hope to cooperate with other members, perhaps by joining a sub-project related to live migration.
- We hope to contribute to the design of the Cloud Benchmark.

Team Members
- Prof. Dr. Qinming He
  - hqm@zju.edu.cn
- Kejiang Ye
  - Representative of the SPEC Research Group
  - yekejiang@zju.edu.cn
- Assoc. Prof. Dr. Deshi Ye
  - yedeshi@zju.edu.cn
- Jianhai Chen
  - Chenjh919@zju.edu.cn
- Dawei Huang
  - tossboyhdw@zju.edu.cn
- …

Appendix: Team’s Recent Work

Virtualization Performance
- Virtualization in cloud computing systems
  - IEEE Cloud 2011, IEEE/ACM GreenCom 2010
- Performance evaluation and benchmarking of VMs
  - ACM/SPEC ICPE 2011, IWVT 2008 (ISCA Workshop), EUC 2008
- Performance optimization of VMs
  - ACM HPDC 2010, IEEE HPCC 2010, IEEE ISPA 2009
- Performance modeling of VMs
  - IEEE HPCC 2010, IFIP NPC 2010
- Performance testing toolkit for VMs
  - IEEE ChinaGrid 2010


Publications
- [1] Live Migration of Multiple Virtual Machines with Resource Reservation in Cloud Computing Environments (IEEE Cloud 2011, accepted)
- [2] Virt-LM: A Benchmark for Live Migration of Virtual Machine (ACM/SPEC ICPE 2011)
- [3] Virtual Machine Based Energy-Efficient Data Center Architecture for Cloud Computing: A Performance Perspective (IEEE/ACM GreenCom 2010)
- [4] Analyzing and Modeling the Performance in Xen-based Virtual Cluster Environment (IEEE HPCC 2010)
- [5] Two Optimization Mechanisms to Improve the Isolation Property of Server Consolidation in Virtualized Multi-core Server (IEEE HPCC 2010)
- [6] Evaluate the Performance and Scalability of Image Deployment in Virtual Data Center (IFIP NPC 2010)
- [7] vTestkit: A Performance Benchmarking Framework for Virtualization Environments (IEEE ChinaGrid 2010)
- [8] Improving Host Swapping Using Adaptive Prefetching and Paging Notifier (ACM HPDC 2010)
- [9] Load Balancing in Server Consolidation (IEEE ISPA 2009)
- [10] A Framework to Evaluate and Predict Performances in Virtual Machines Environment (IEEE EUC 2008)
- [11] Performance Measuring and Comparing of Virtual Machine Monitors (IWVT 2008, ISCA Workshop)

Thank you!
