LING 138/238 SYMBSYS 138 Intro to Computer Speech and Language Processing
Lecture 9: Speech Recognition (I)
October 26, 2004
Dan Jurafsky
1/23/2022 LING 138/238 Autumn 2004

Outline for ASR this week
• Acoustic Phonetics
• ASR Architecture
  – The Noisy Channel Model
• Five easy pieces of an ASR system
  1) Feature Extraction
  2) Acoustic Model
  3) Lexicon/Pronunciation Model
  4) Decoder
  5) Language Model
• Evaluation

Acoustic Phonetics
• Sound Waves
  – http://www.kettering.edu/~drussell/Demos/waves-intro/waves-intro.html
  – http://www.kettering.edu/~drussell/Demos/waves/Lwave.gif

![Waveform of the vowel [iy]](http://slidetodoc.com/presentation_image_h2/8cc44019f2183ac9f0db5b7f004e222a/image-4.jpg)

Waveforms for speech
• Waveform of the vowel [iy]
• Frequency: repetitions per second of a wave
  – The vowel above has 28 repetitions in .11 secs
  – So the frequency is 28/.11 = 255 Hz
  – This is the rate at which the vocal folds vibrate, hence voicing
• Amplitude (y axis): amount of air pressure at that point in time
  – Zero is normal air pressure, negative is rarefaction
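The frequency arithmetic on this slide can be checked with a few lines of Python. This is only a sketch; the helper name `frequency_hz` is made up for illustration.

```python
def frequency_hz(repetitions: int, duration_s: float) -> float:
    """Cycles per second of a periodic wave."""
    return repetitions / duration_s

# The [iy] vowel: 28 repetitions in .11 seconds.
vowel_iy_hz = frequency_hz(28, 0.11)
print(round(vowel_iy_hz))  # 255
```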

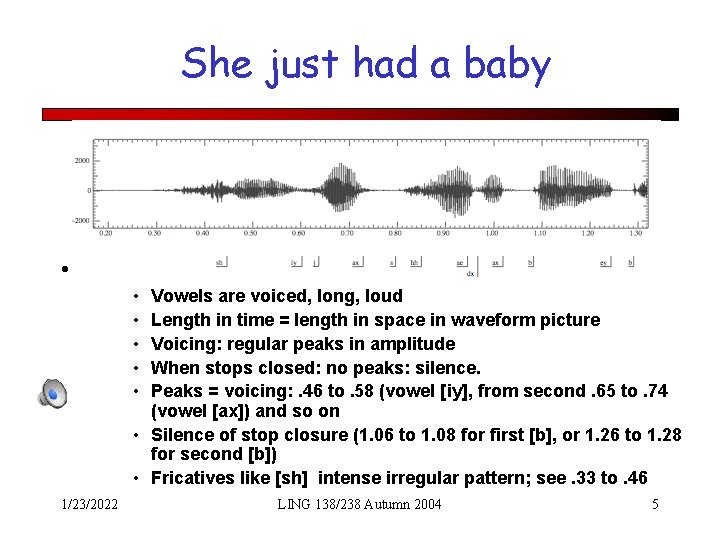

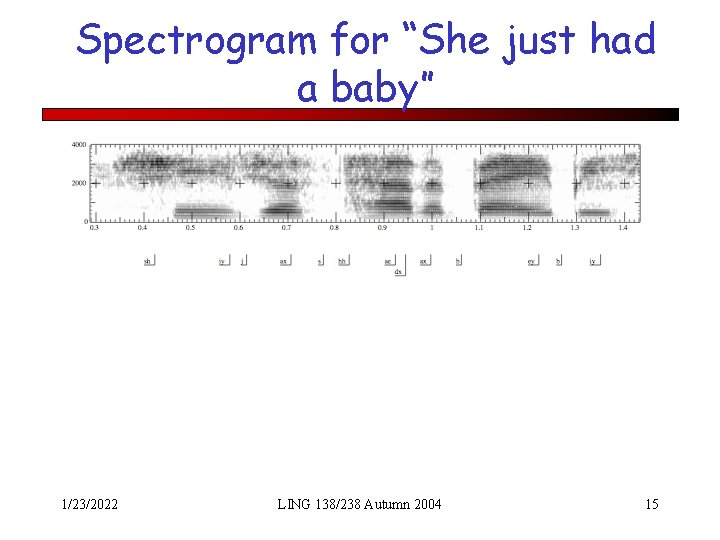

She just had a baby
• What can we learn from a wavefile?
  – Vowels are voiced, long, loud
  – Length in time = length in space in waveform picture
  – Voicing: regular peaks in amplitude
  – When stops are closed: no peaks, i.e. silence
• Peaks = voicing: .46 to .58 (vowel [iy]), .65 to .74 (vowel [ax]), and so on
• Silence of stop closure: 1.06 to 1.08 for first [b], 1.26 to 1.28 for second [b]
• Fricatives like [sh] show an intense irregular pattern; see .33 to .46

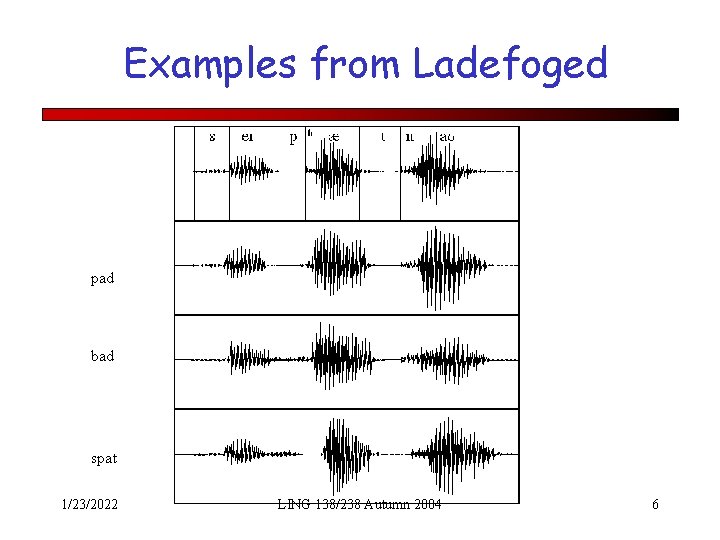

Examples from Ladefoged
• pad
• bad
• spat

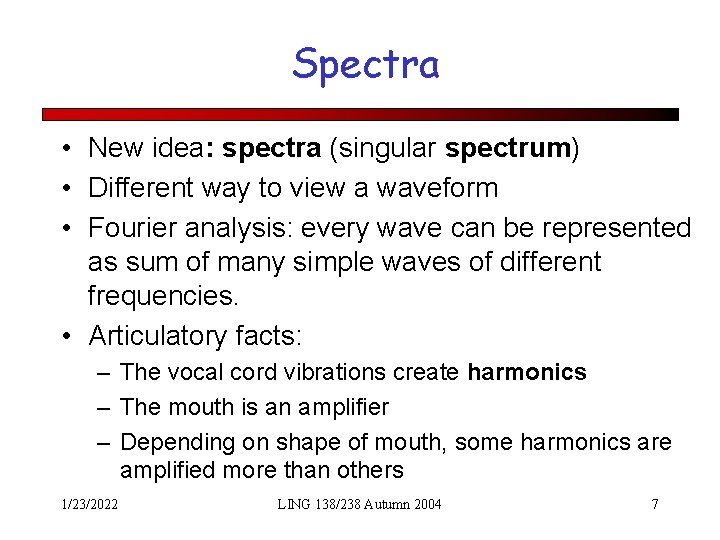

Spectra
• New idea: spectra (singular spectrum)
• Different way to view a waveform
• Fourier analysis: every wave can be represented as a sum of many simple waves of different frequencies.
• Articulatory facts:
  – The vocal cord vibrations create harmonics
  – The mouth is an amplifier
  – Depending on shape of mouth, some harmonics are amplified more than others

![Part of [ae] waveform from “had”](http://slidetodoc.com/presentation_image_h2/8cc44019f2183ac9f0db5b7f004e222a/image-8.jpg)

Part of [ae] waveform from “had”
• Note the complex wave repeating nine times in the figure
• Plus a smaller wave that repeats 4 times for every large pattern
• Large wave has frequency of 250 Hz (9 times in .036 seconds)
• Small wave roughly 4 times this, or roughly 1000 Hz
• Two little tiny waves on top of each peak of the 1000 Hz wave

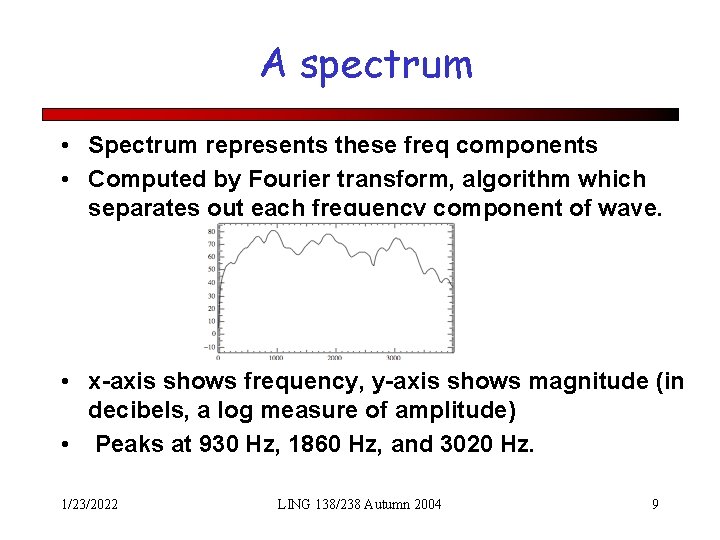

A spectrum
• Spectrum represents these frequency components
• Computed by Fourier transform, an algorithm which separates out each frequency component of a wave.
• x-axis shows frequency, y-axis shows magnitude (in decibels, a log measure of amplitude)
• Peaks at 930 Hz, 1860 Hz, and 3020 Hz.
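To make the idea of separating out frequency components concrete, here is a minimal sketch using a naive discrete Fourier transform in plain Python. The synthetic wave (a 250 Hz component plus a weaker 1000 Hz one), the 8000 Hz sampling rate, and all names are assumptions chosen for illustration; a real system would use an FFT library on recorded speech.

```python
import cmath
import math

def dft_magnitudes(samples):
    """Magnitude of DFT bins 0 .. N/2 (naive O(N^2) transform)."""
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        total = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(samples))
        mags.append(abs(total))
    return mags

SAMPLE_RATE = 8000   # assumed sampling rate in Hz
N = 320              # 40 ms of signal, so each bin is 25 Hz wide

# Synthetic wave: a strong 250 Hz component plus a weaker 1000 Hz one.
wave = [math.sin(2 * math.pi * 250 * t / SAMPLE_RATE)
        + 0.5 * math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE)
        for t in range(N)]

mags = dft_magnitudes(wave)
bin_hz = SAMPLE_RATE / N

# Indices of the two largest bins, converted to frequencies in Hz.
top_two = sorted(sorted(range(len(mags)), key=mags.__getitem__)[-2:])
peak_freqs = [k * bin_hz for k in top_two]
print(peak_freqs)  # [250.0, 1000.0]
```

The spectrum recovers the two components that were mixed into the waveform, which is what the peaks in a speech spectrum show for a real vowel.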

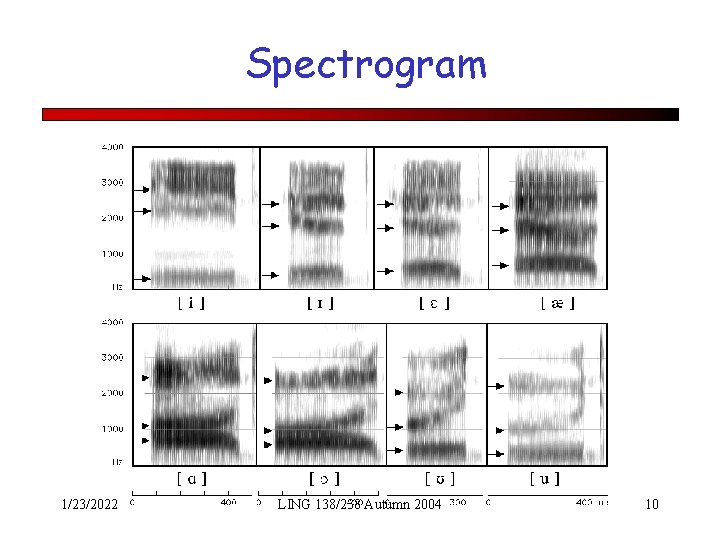

Spectrogram

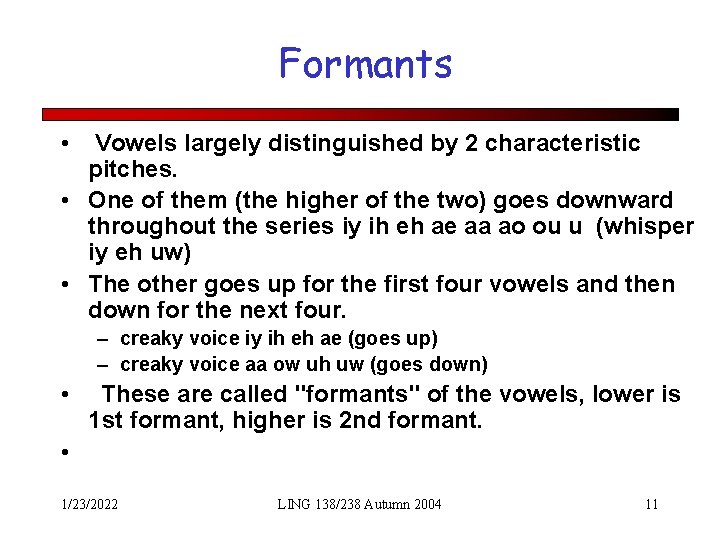

Formants
• Vowels largely distinguished by 2 characteristic pitches.
• One of them (the higher of the two) goes downward throughout the series iy ih eh ae aa ao ou u (whisper iy eh uw)
• The other goes up for the first four vowels and then down for the next four.
  – creaky voice iy ih eh ae (goes up)
  – creaky voice aa ow uh uw (goes down)
• These are called "formants" of the vowels; the lower is the 1st formant, the higher is the 2nd formant.

How formants are produced
• Q: Why do vowels have different pitches if the vocal cords vibrate at the same rate?
• A: The mouth acts as an "amplifier"; it amplifies different frequencies
• Formants are the result of different shapes of the vocal tract.
• Any body of air will vibrate in a way that depends on its size and shape. Air in the vocal tract is set in vibration by the action of the vocal cords.
• Every time the vocal cords open and close, a pulse of air from the lungs acts like a sharp tap on the air in the vocal tract, setting the resonating cavities into vibration so that they produce a number of different frequencies.

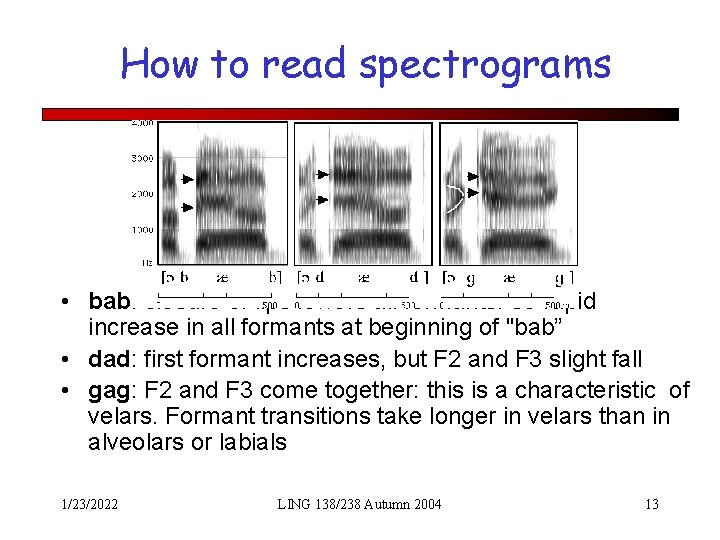

How to read spectrograms
• bab: closure of lips lowers all formants, so rapid increase in all formants at beginning of "bab"
• dad: first formant increases, but F2 and F3 fall slightly
• gag: F2 and F3 come together; this is a characteristic of velars. Formant transitions take longer in velars than in alveolars or labials

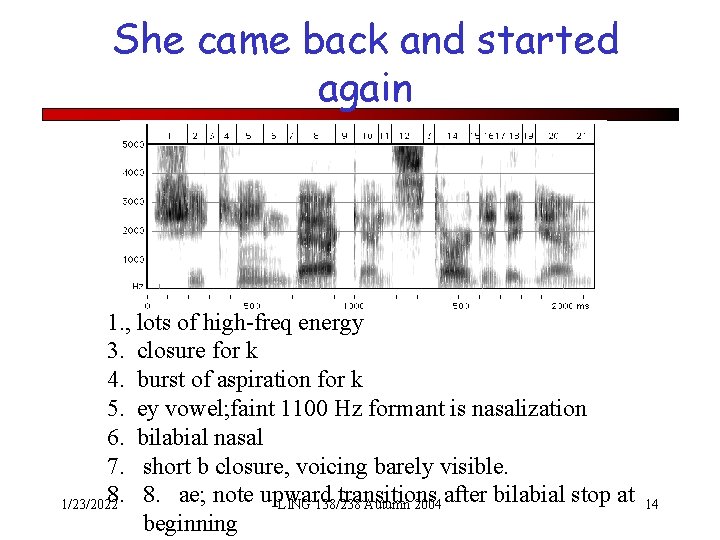

She came back and started again
1. lots of high-freq energy
3. closure for k
4. burst of aspiration for k
5. ey vowel; faint 1100 Hz formant is nasalization
6. bilabial nasal
7. short b closure, voicing barely visible
8. ae; note upward transitions after bilabial stop at beginning

Spectrogram for “She just had a baby”

Perceptual properties
• Pitch: perceptual correlate of frequency
• Loudness: perceptual correlate of power, which is related to square of amplitude
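The link between amplitude and power can be illustrated numerically. The sketch below assumes power is measured as the mean squared amplitude and compares two signals in decibels; the sample values and function names are made up for the example.

```python
import math

def mean_power(samples):
    """Average of the squared amplitudes."""
    return sum(x * x for x in samples) / len(samples)

def power_ratio_db(power, reference_power):
    """Ratio of two powers on the decibel (log) scale."""
    return 10 * math.log10(power / reference_power)

quiet = [0.1, -0.1, 0.1, -0.1]
loud = [2 * x for x in quiet]   # same wave with doubled amplitude

gain_db = power_ratio_db(mean_power(loud), mean_power(quiet))
print(round(gain_db, 2))  # 6.02
```

Doubling the amplitude quadruples the power, which is a gain of about 6 dB.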

Speech Recognition
• Applications of Speech Recognition (ASR)
  – Dictation
  – Telephone-based Information (directions, air travel, banking, etc.)
  – Hands-free (in car)
  – Speaker Identification
  – Language Identification
  – Second language ('L2') (accent reduction)
  – Audio archive searching

LVCSR
• Large Vocabulary Continuous Speech Recognition
• ~20,000-64,000 words
• Speaker independent (vs. speaker-dependent)
• Continuous speech (vs. isolated-word)

LVCSR Design Intuition
• Build a statistical model of the speech-to-words process
• Collect lots and lots of speech, and transcribe all the words.
• Train the model on the labeled speech
• Paradigm: Supervised Machine Learning + Search

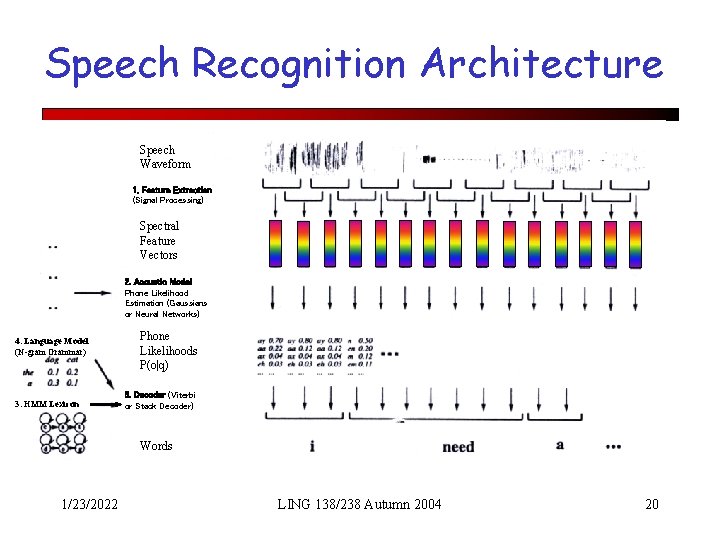

Speech Recognition Architecture
• Input: Speech Waveform
• 1. Feature Extraction (Signal Processing) produces Spectral Feature Vectors
• 2. Acoustic Model: Phone Likelihood Estimation (Gaussians or Neural Networks) produces Phone Likelihoods P(o|q)
• 3. HMM Lexicon
• 4. Language Model (N-gram Grammar)
• 5. Decoder (Viterbi or Stack Decoder) outputs Words

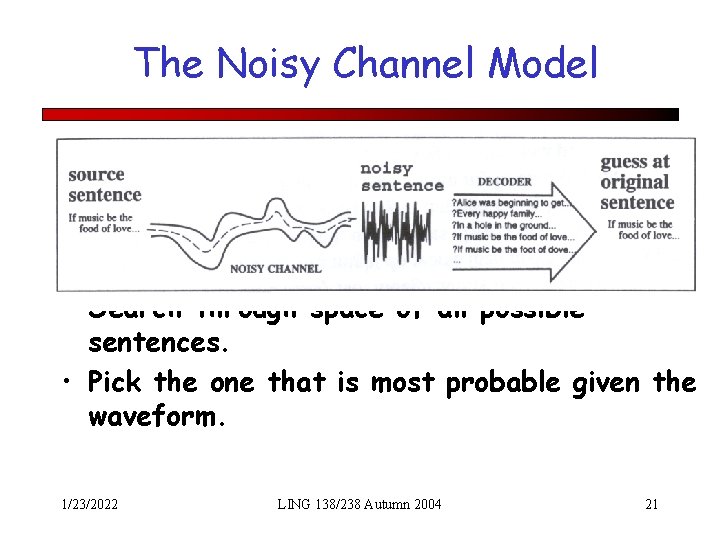

The Noisy Channel Model
• Search through space of all possible sentences.
• Pick the one that is most probable given the waveform.

The Noisy Channel Model (II)
• What is the most likely sentence out of all sentences in the language L given some acoustic input O?
• Treat acoustic input O as a sequence of individual observations
  – $O = o_1, o_2, o_3, \ldots, o_t$
• Define a sentence as a sequence of words:
  – $W = w_1, w_2, w_3, \ldots, w_n$

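Noisy Channel Model (III)
• Probabilistic implication: pick the highest-probability sentence W:
$$\hat{W} = \operatorname*{argmax}_{W \in L} P(W \mid O)$$
• We can use Bayes' rule to rewrite this:
$$\hat{W} = \operatorname*{argmax}_{W \in L} \frac{P(O \mid W)\,P(W)}{P(O)}$$
• Since the denominator is the same for each candidate sentence W, we can ignore it for the argmax:
$$\hat{W} = \operatorname*{argmax}_{W \in L} P(O \mid W)\,P(W)$$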

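A quick derivation of Bayes Rule
• Conditionals
$$P(A \mid B) = \frac{P(A, B)}{P(B)} \qquad P(B \mid A) = \frac{P(A, B)}{P(A)}$$
• Rearranging
$$P(A \mid B)\,P(B) = P(A, B)$$
• And also
$$P(B \mid A)\,P(A) = P(A, B)$$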

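Bayes (II)
• We know…
$$P(A \mid B)\,P(B) = P(A, B) \quad\text{and}\quad P(B \mid A)\,P(A) = P(A, B)$$
• So rearranging things
$$P(A \mid B)\,P(B) = P(B \mid A)\,P(A)$$
$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$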

Noisy channel model
$$\hat{W} = \operatorname*{argmax}_{W \in L} \underbrace{P(O \mid W)}_{\text{likelihood}}\;\underbrace{P(W)}_{\text{prior}}$$

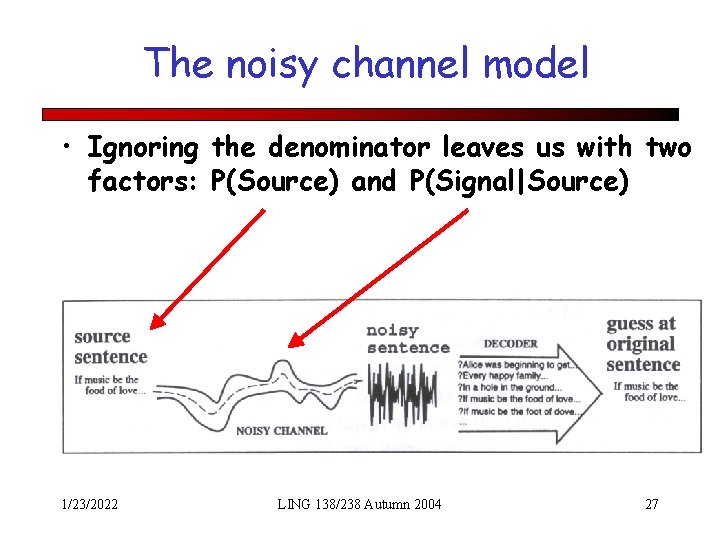

The noisy channel model
• Ignoring the denominator leaves us with two factors: P(Source) and P(Signal|Source)
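As a toy illustration of choosing a sentence from these two factors, the sketch below scores each candidate by log P(Signal|Source) + log P(Source) and keeps the best one. The candidate sentences and every probability are invented for the example; a real recognizer gets them from its acoustic and language models and searches a far larger space.

```python
import math

def best_sentence(candidates):
    """candidates maps sentence -> (P(O|W), P(W)); return the argmax of the product."""
    def log_score(sentence):
        likelihood, prior = candidates[sentence]
        # Logs turn the product into a sum and avoid underflow.
        return math.log(likelihood) + math.log(prior)
    return max(candidates, key=log_score)

# Hypothetical scores: the second candidate fits the audio slightly better,
# but the first is a far more probable word sequence.
candidates = {
    "recognize speech": (0.20, 0.010),
    "wreck a nice beach": (0.25, 0.001),
}
print(best_sentence(candidates))  # recognize speech
```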

Five easy pieces
• Feature extraction
• Acoustic Modeling
• HMMs, Lexicons, and Pronunciation
• Decoding
• Language Modeling

Feature Extraction
• Digitize Speech
• Extract Frames

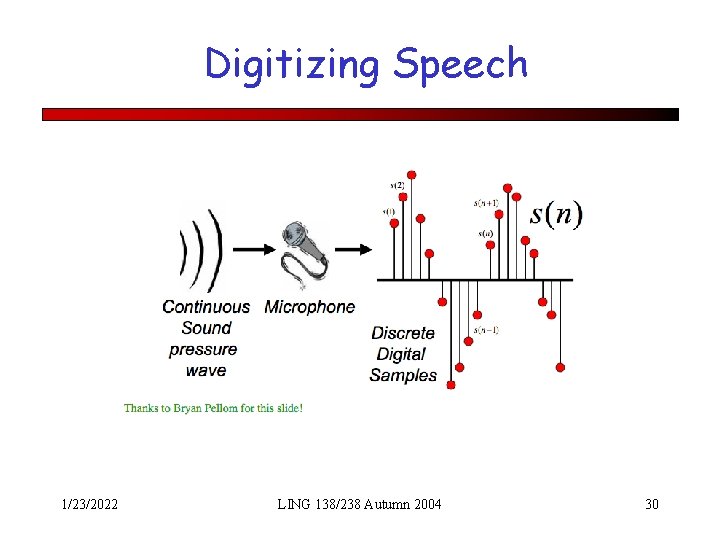

Digitizing Speech

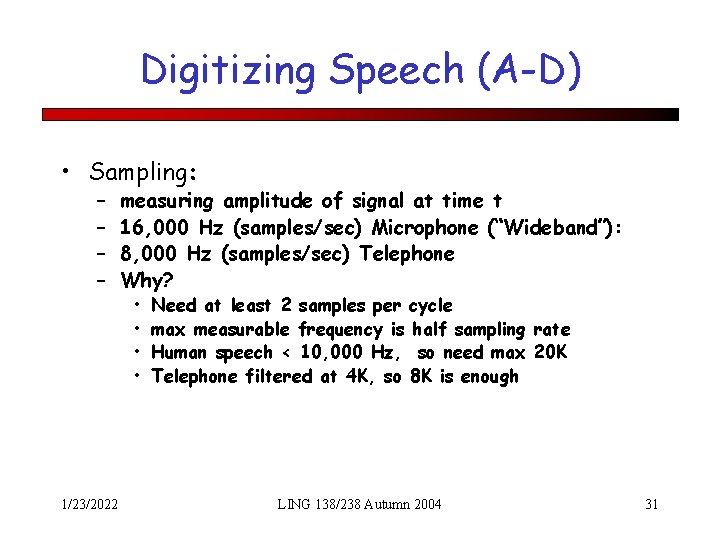

Digitizing Speech (A-D)
• Sampling: measuring amplitude of signal at time t
  – 16,000 Hz (samples/sec): Microphone (“Wideband”)
  – 8,000 Hz (samples/sec): Telephone
• Why?
  – Need at least 2 samples per cycle
  – Max measurable frequency is half the sampling rate
  – Human speech < 10,000 Hz, so need max 20K
  – Telephone filtered at 4K, so 8K is enough
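The "half the sampling rate" rule can be sketched in a few lines of Python. The function names are hypothetical, and the 5000 Hz and 3000 Hz tones are chosen only to show what happens above the limit: sampled at 8000 Hz, the 5000 Hz tone yields exactly the negated samples of a 3000 Hz tone, so the two cannot be told apart by frequency.

```python
import math

def max_measurable_hz(sample_rate_hz):
    """Highest frequency representable at this sampling rate."""
    return sample_rate_hz / 2

def min_sample_rate_hz(max_signal_hz):
    """Need at least 2 samples per cycle of the highest frequency."""
    return 2 * max_signal_hz

def sampled_tone(freq_hz, sample_rate_hz, n_samples):
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate_hz)
            for n in range(n_samples)]

print(max_measurable_hz(16000))   # 8000.0
print(min_sample_rate_hz(4000))   # 8000

# A 5000 Hz tone is above the 4000 Hz limit of 8000 Hz sampling.
high = sampled_tone(5000, 8000, 16)
low = sampled_tone(3000, 8000, 16)
aliased = all(abs(h + l) < 1e-9 for h, l in zip(high, low))
print(aliased)  # True
```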

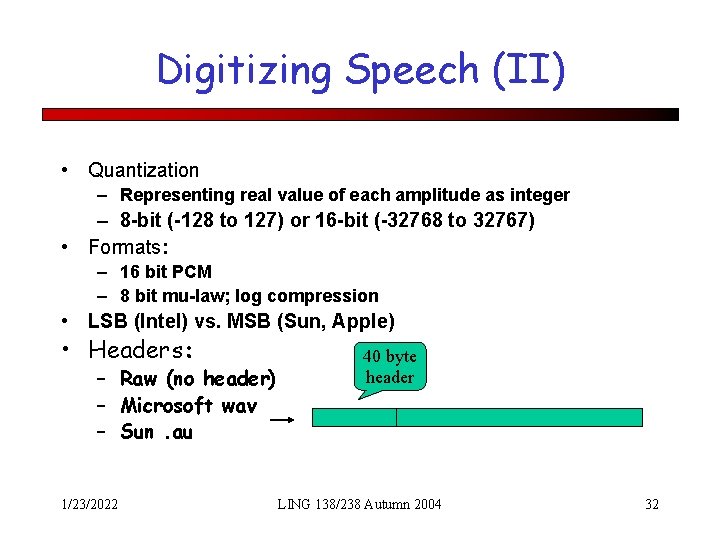

Digitizing Speech (II)
• Quantization
  – Representing real value of each amplitude as integer
  – 8-bit (-128 to 127) or 16-bit (-32768 to 32767)
• Formats:
  – 16 bit PCM
  – 8 bit mu-law; log compression
• LSB (Intel) vs. MSB (Sun, Apple)
• Headers:
  – Raw (no header)
  – Microsoft wav
  – Sun .au
[Figure label: 40 byte header]
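A minimal sketch of these ideas in Python, under stated assumptions: amplitudes are taken to lie in [-1.0, 1.0], the scaling by 32767 is one simple convention (it never produces -32768), and the mu-law curve is the standard continuous formula with mu = 255 rather than the exact 8-bit encoding used in telephony. Function names are made up for illustration.

```python
import math
import struct

def quantize_16bit(x):
    """Map an amplitude in [-1.0, 1.0] to a signed 16-bit integer."""
    x = max(-1.0, min(1.0, x))
    return round(x * 32767)

def mu_law_compress(x, mu=255):
    """Log compression: small amplitudes get more of the output range."""
    return math.copysign(math.log1p(mu * abs(x)) / math.log1p(mu), x)

print(quantize_16bit(1.0))               # 32767
print(round(mu_law_compress(0.1), 3))    # 0.591

# Byte order of the same 16-bit sample (1000 = 0x03E8).
print(struct.pack("<h", 1000))   # LSB first: b'\xe8\x03'
print(struct.pack(">h", 1000))   # MSB first: b'\x03\xe8'
```

The byte-order lines show why a raw file with no header is ambiguous: the same two bytes decode to different samples depending on which convention the reader assumes.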

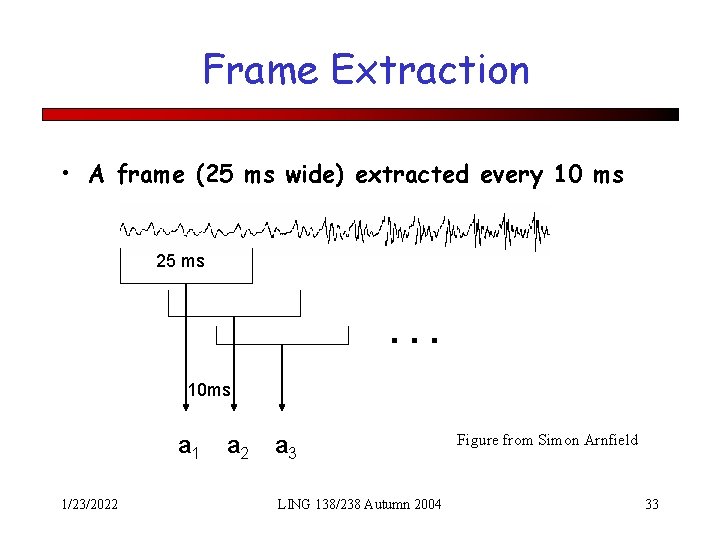

Frame Extraction
• A frame (25 ms wide) extracted every 10 ms
[Figure: overlapping 25 ms frames a1 … a3, each starting 10 ms after the previous one. Figure from Simon Arnfield]
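The framing step can be sketched as a sliding window. The example assumes 16,000 Hz audio and drops any trailing samples that do not fill a whole frame; both choices, and the function name, are assumptions for illustration.

```python
def extract_frames(samples, sample_rate_hz, width_ms=25, shift_ms=10):
    """Cut a signal into overlapping frames of width_ms, one every shift_ms."""
    width = int(sample_rate_hz * width_ms / 1000)   # samples per frame
    shift = int(sample_rate_hz * shift_ms / 1000)   # samples between frame starts
    return [samples[start:start + width]
            for start in range(0, len(samples) - width + 1, shift)]

one_second = [0.0] * 16000            # placeholder for one second of audio
frames = extract_frames(one_second, 16000)
print(len(frames), len(frames[0]))    # 98 400
```

Because the window is wider than the shift, consecutive frames share 15 ms of signal.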

MFCC (Mel Frequency Cepstral Coefficients)
• Do FFT to get spectral information
  – Like the spectrogram/spectrum we saw earlier
• Apply Mel scaling
  – Linear below 1 kHz, log above, equal samples above and below 1 kHz
  – Models human ear; more sensitivity in lower freqs
• Plus Discrete Cosine Transformation
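The slide does not give a formula for the mel scale. One widely used closed-form approximation, shown here purely as an assumption, is mel = 2595 log10(1 + f/700), which is close to linear at low frequencies and logarithmic at high ones. The function names are hypothetical.

```python
import math

def hz_to_mel(freq_hz):
    """A common approximation of the mel scale (assumed, not from the slides)."""
    return 2595 * math.log10(1 + freq_hz / 700)

def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700 * (10 ** (mel / 2595) - 1)

for f in (250, 500, 1000, 2000, 4000, 8000):
    print(f, round(hz_to_mel(f)))
```

In this approximation 1000 Hz maps to roughly 1000 mel, and each doubling of frequency above that adds progressively less than double on the mel axis, reflecting the ear's reduced resolution at high frequencies.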

Final Feature Vector
• 39 Features per 10 ms frame:
  – 12 MFCC features
  – 12 Delta MFCC features
  – 12 Delta-Delta MFCC features
  – 1 (log) frame energy
  – 1 Delta (log frame energy)
  – 1 Delta-Delta (log frame energy)
• So each frame represented by a 39D vector
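One way to arrive at 39 dimensions is to take 13 static values per frame (12 MFCCs plus log energy) and append their deltas and delta-deltas. The sketch below assumes that layout and uses a simple two-frame slope, (next - previous) / 2, with edge frames repeated; real front ends typically use a wider regression window, so treat the formula and names as illustrative.

```python
def deltas(frames):
    """Frame-to-frame slope: (next - previous) / 2, repeating edge frames."""
    n = len(frames)
    out = []
    for t in range(n):
        prev_frame = frames[max(t - 1, 0)]
        next_frame = frames[min(t + 1, n - 1)]
        out.append([(b - a) / 2 for a, b in zip(prev_frame, next_frame)])
    return out

def add_dynamic_features(static):
    """Concatenate static, delta, and delta-delta values for each frame."""
    d1 = deltas(static)
    d2 = deltas(d1)
    return [s + a + b for s, a, b in zip(static, d1, d2)]

# Five made-up frames of 13 static values that rise by 1.0 per frame.
static = [[float(t)] * 13 for t in range(5)]
features = add_dynamic_features(static)
print(len(features[0]))                                      # 39
print(features[2][0], features[2][13], features[2][26])      # 2.0 1.0 0.0
```

For the middle frame the static value is 2.0, its slope is 1.0 per frame, and the slope itself is not changing, so the delta-delta is 0.0.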

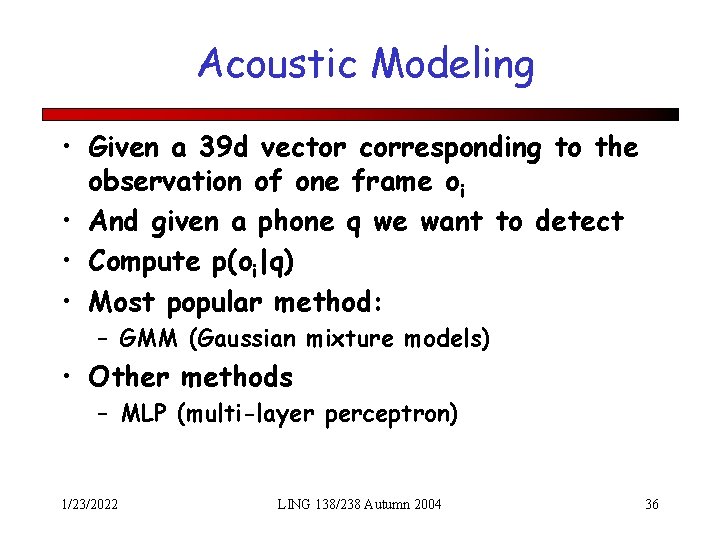

Acoustic Modeling
• Given a 39d vector corresponding to the observation of one frame $o_i$
• And given a phone q we want to detect
• Compute $p(o_i|q)$
• Most popular method:
  – GMM (Gaussian mixture models)
• Other methods
  – MLP (multi-layer perceptron)

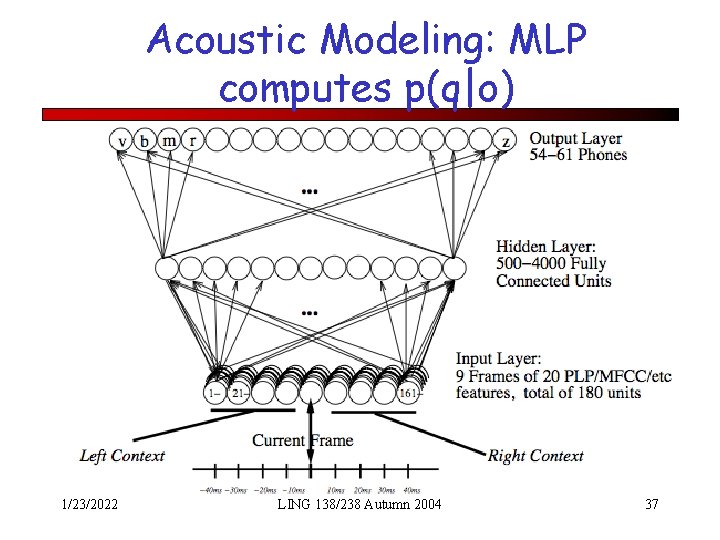

Acoustic Modeling: MLP computes p(q|o)

Gaussian Mixture Models
• Also called “fully-continuous HMMs”
• P(o|q) computed by a Gaussian:
$$P(o \mid q) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\!\left(-\frac{(o - \mu)^2}{2\sigma^2}\right)$$

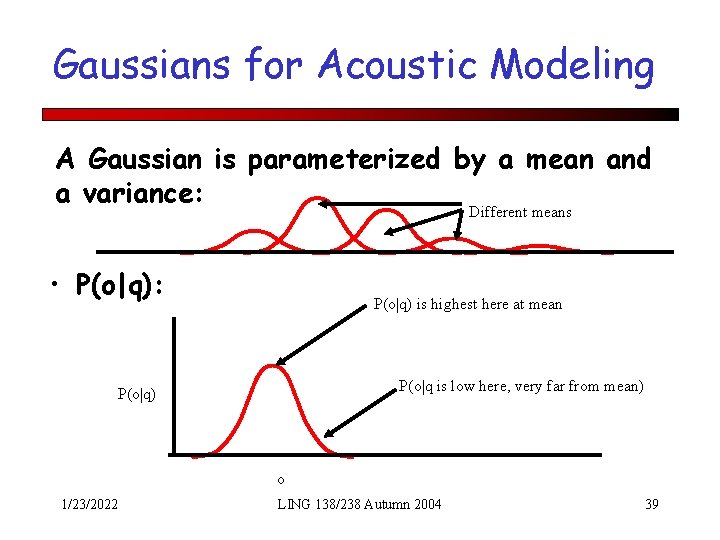

Gaussians for Acoustic Modeling
• A Gaussian is parameterized by a mean and a variance
[Figure: Gaussian curves with different means, plotting P(o|q) against o. P(o|q) is highest at the mean and low very far from the mean]

Training Gaussians
• A (single) Gaussian is characterized by a mean and a variance
• Imagine that we had some training data in which each phone was labeled
• We could just compute the mean and variance from the data:
$$\mu = \frac{1}{T}\sum_{t=1}^{T} o_t \qquad \sigma^2 = \frac{1}{T}\sum_{t=1}^{T} (o_t - \mu)^2$$
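The training step described here can be sketched for a single feature dimension. The five training values are made up, the variance uses the simple divide-by-count estimate, and the function names are hypothetical.

```python
import math

def fit_gaussian(values):
    """Estimate mean and variance from labeled observations of one phone."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    return mean, variance

def gaussian_pdf(o, mean, variance):
    """Density of observation o under a one-dimensional Gaussian."""
    return math.exp(-(o - mean) ** 2 / (2 * variance)) / math.sqrt(2 * math.pi * variance)

training = [1.0, 2.0, 3.0, 4.0, 5.0]   # made-up values of one feature
mean, variance = fit_gaussian(training)
print(mean, variance)                   # 3.0 2.0

# The density is highest at the mean and falls off far from it.
print(gaussian_pdf(3.0, mean, variance) > gaussian_pdf(10.0, mean, variance))  # True
```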

But we need 39 gaussians, not 1!
• The observation o is really a vector of length 39
• So need a vector of Gaussians:
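One way to read "a vector of Gaussians", assuming the 39 dimensions are treated as independent (that is, a diagonal covariance), is as a product of one-dimensional Gaussians, one per feature. The symbols $\mu_d$ and $\sigma_d^2$ are notation introduced here for the mean and variance of dimension $d$, and $o_d$ for the $d$-th component of the observation:

```latex
P(o \mid q) = \prod_{d=1}^{39} \frac{1}{\sqrt{2\pi\sigma_d^2}}
              \exp\!\left(-\frac{(o_d - \mu_d)^2}{2\sigma_d^2}\right)
```

In practice the logarithm of this product is usually computed, turning it into a sum over the 39 dimensions.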

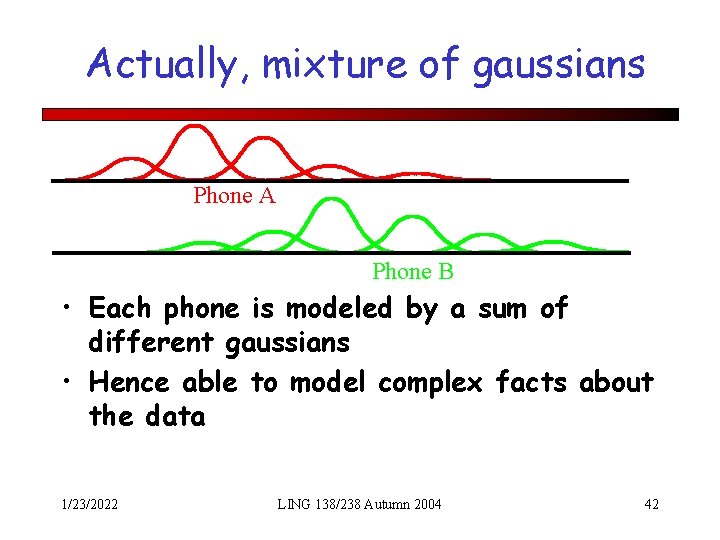

Actually, mixture of gaussians
[Figure: mixture densities for Phone A and Phone B]
• Each phone is modeled by a sum of different gaussians
• Hence able to model complex facts about the data

Gaussians acoustic modeling
• Summary: each phone is represented by a GMM parameterized by
  – M mixture weights
  – M mean vectors
  – M covariance matrices
• Usually assume covariance matrix is diagonal
• I.e. just keep a separate variance for each cepstral feature
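A small sketch of how such a GMM would score one observation, assuming diagonal covariances so that each component needs only a variance per dimension. The two-component, two-dimensional model and all its numbers are hypothetical; a real system would use 39 dimensions and trained parameters.

```python
import math

def log_diag_gaussian(o, mean, variance):
    """Log density of vector o under a Gaussian with diagonal covariance."""
    return sum(-0.5 * (math.log(2 * math.pi * v) + (x - m) ** 2 / v)
               for x, m, v in zip(o, mean, variance))

def gmm_likelihood(o, weights, means, variances):
    """P(o|q) as a weighted sum of the M component densities."""
    return sum(w * math.exp(log_diag_gaussian(o, m, v))
               for w, m, v in zip(weights, means, variances))

# Hypothetical model for one phone: M = 2 components in 2 dimensions.
weights = [0.6, 0.4]
means = [[0.0, 0.0], [3.0, 3.0]]
variances = [[1.0, 1.0], [1.0, 1.0]]

near = gmm_likelihood([0.0, 0.0], weights, means, variances)
far = gmm_likelihood([10.0, 10.0], weights, means, variances)
print(near > far)  # True
```

An observation close to one of the component means gets a much higher likelihood than one far from both.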

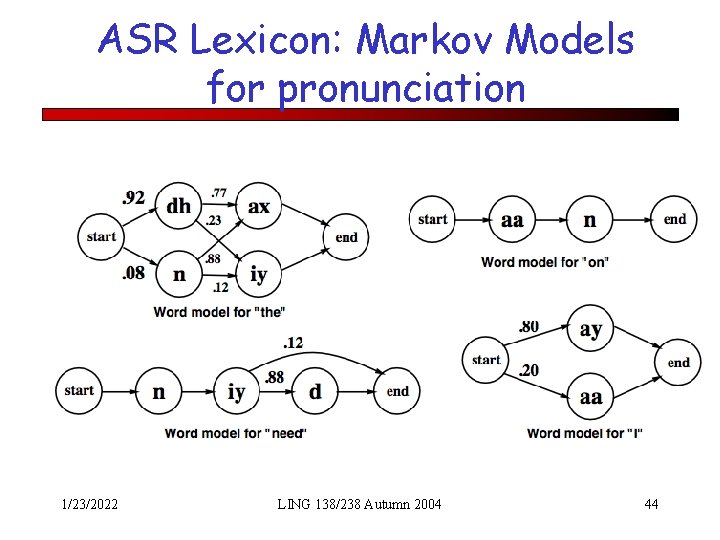

ASR Lexicon: Markov Models for pronunciation

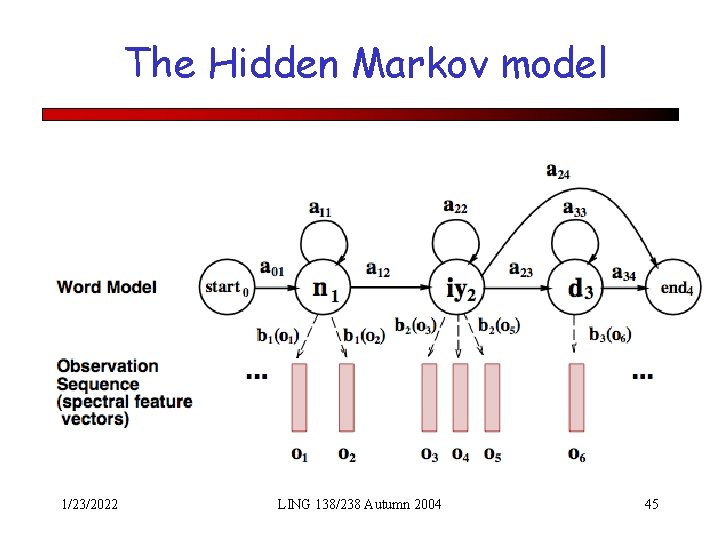

The Hidden Markov model

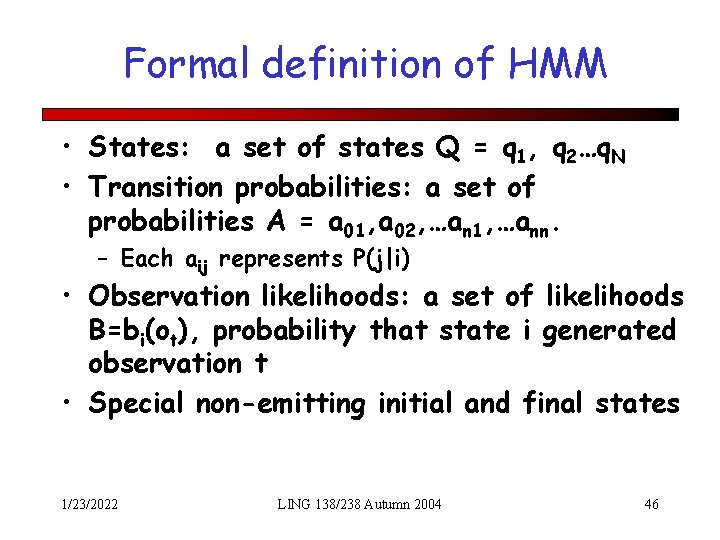

Formal definition of HMM
• States: a set of states $Q = q_1, q_2, \ldots, q_N$
• Transition probabilities: a set of probabilities $A = a_{01}, a_{02}, \ldots, a_{n1}, \ldots, a_{nn}$
  – Each $a_{ij}$ represents P(j|i)
• Observation likelihoods: a set of likelihoods $B = b_i(o_t)$, the probability that state i generated observation $o_t$
• Special non-emitting initial and final states
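The states and transition probabilities in this definition can be written down as a small data structure. The sketch below is illustrative only: the class name, the three-phone word model, and every probability are made up, and the observation likelihoods B are left out because they would come from the acoustic model.

```python
class HMM:
    def __init__(self, states, transitions):
        self.states = states            # includes non-emitting "start" and "end"
        self.transitions = transitions  # transitions[i][j] = a_ij = P(j | i)

    def rows_sum_to_one(self, tol=1e-9):
        """Each state's outgoing probabilities must form a distribution."""
        return all(abs(sum(row.values()) - 1.0) < tol
                   for row in self.transitions.values())

    def path_probability(self, path):
        """Product of a_ij along a state sequence (0.0 if a step is not allowed)."""
        prob = 1.0
        for i, j in zip(path, path[1:]):
            prob *= self.transitions.get(i, {}).get(j, 0.0)
        return prob

# Hypothetical left-to-right model with self-loops for a three-phone word.
word = HMM(
    states=["start", "ch", "iy", "z", "end"],
    transitions={
        "start": {"ch": 1.0},
        "ch": {"ch": 0.5, "iy": 0.5},
        "iy": {"iy": 0.6, "z": 0.4},
        "z": {"z": 0.5, "end": 0.5},
    },
)
print(word.rows_sum_to_one())   # True
p = word.path_probability(["start", "ch", "ch", "iy", "z", "end"])
print(round(p, 4))              # 0.05
```

The self-loops let a state account for several consecutive frames, which is how the model absorbs differences in phone duration.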

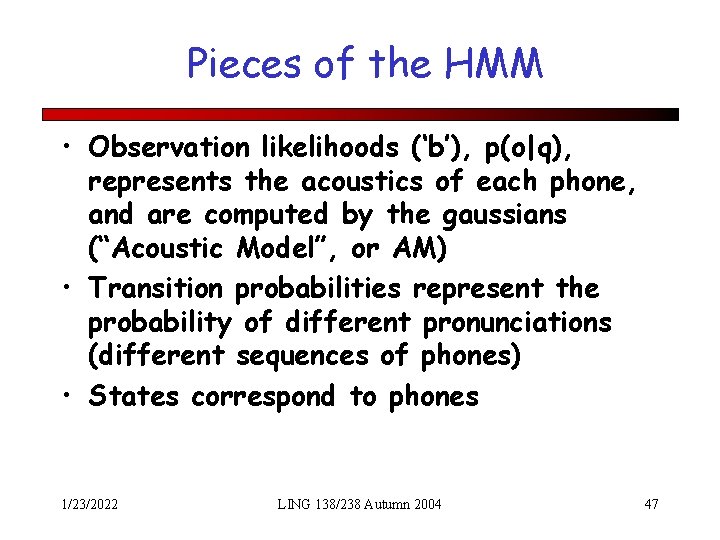

Pieces of the HMM
• Observation likelihoods (‘b’), p(o|q), represent the acoustics of each phone and are computed by the gaussians (“Acoustic Model”, or AM)
• Transition probabilities represent the probability of different pronunciations (different sequences of phones)
• States correspond to phones

Pieces of the HMM
• Actually, I lied when I said states correspond to phones
• Actually states usually correspond to triphones
• CHEESE (phones): ch iy z
• CHEESE (triphones): #-ch+iy, ch-iy+z, iy-z+#
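The phone-to-triphone expansion for CHEESE follows a simple pattern, left-phone+right, which a short function can reproduce. The function name is hypothetical; the `#` boundary symbol is the one used on the slide.

```python
def to_triphones(phones, boundary="#"):
    """Label each phone with its left and right neighbors: left-phone+right."""
    padded = [boundary] + list(phones) + [boundary]
    return [f"{padded[i - 1]}-{padded[i]}+{padded[i + 1]}"
            for i in range(1, len(padded) - 1)]

print(to_triphones(["ch", "iy", "z"]))
# ['#-ch+iy', 'ch-iy+z', 'iy-z+#']
```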

Pieces of the HMM
• Actually, I lied again when I said states correspond to triphones
• In fact, each triphone has 3 states for beginning, middle, and end of the triphone.
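As a sketch of what this means for the size of a word model, each triphone can be expanded into three named states. The bracketed `beg`/`mid`/`end` suffixes are a naming convention invented here for illustration.

```python
def triphone_states(triphones, parts=("beg", "mid", "end")):
    """Expand every triphone into its beginning, middle, and end states."""
    return [f"{tri}[{part}]" for tri in triphones for part in parts]

states = triphone_states(["#-ch+iy", "ch-iy+z", "iy-z+#"])
print(len(states))   # 9
print(states[:3])    # ['#-ch+iy[beg]', '#-ch+iy[mid]', '#-ch+iy[end]']
```

A three-phone word therefore becomes a chain of nine HMM states.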

A real HMM

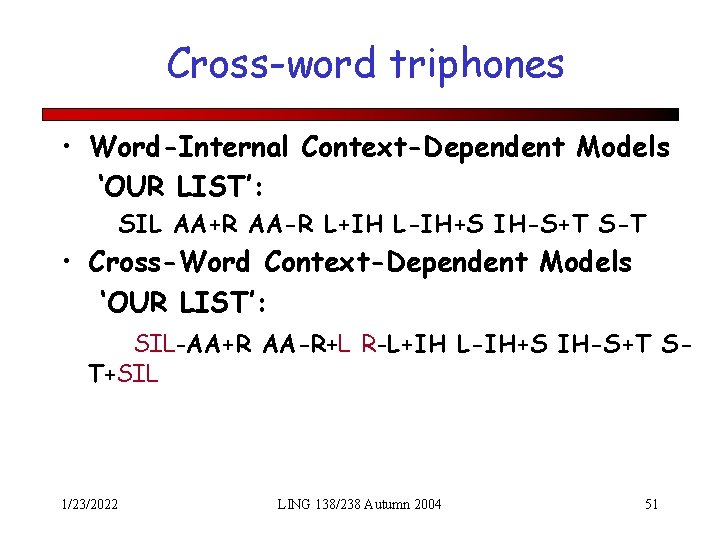

Cross-word triphones
• Word-Internal Context-Dependent Models
  ‘OUR LIST’: SIL AA+R AA-R L+IH L-IH+S IH-S+T S-T
• Cross-Word Context-Dependent Models
  ‘OUR LIST’: SIL-AA+R AA-R+L R-L+IH L-IH+S IH-S+T S-T+SIL
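The difference between the two schemes is whether context is allowed to cross a word boundary. The sketch below generates both labelings for 'OUR LIST'; the function names are hypothetical, and the word-internal version omits the leading SIL token shown on the slide.

```python
def word_internal(words):
    """Context stops at word edges, so edge phones get only one neighbor."""
    labels = []
    for phones in words:
        for i, phone in enumerate(phones):
            left = f"{phones[i - 1]}-" if i > 0 else ""
            right = f"+{phones[i + 1]}" if i < len(phones) - 1 else ""
            labels.append(f"{left}{phone}{right}")
    return labels

def cross_word(words, boundary="SIL"):
    """Context runs across word edges; silence pads the utterance."""
    flat = [boundary] + [p for phones in words for p in phones] + [boundary]
    return [f"{flat[i - 1]}-{flat[i]}+{flat[i + 1]}"
            for i in range(1, len(flat) - 1)]

our_list = [["AA", "R"], ["L", "IH", "S", "T"]]
print(word_internal(our_list))
# ['AA+R', 'AA-R', 'L+IH', 'L-IH+S', 'IH-S+T', 'S-T']
print(cross_word(our_list))
# ['SIL-AA+R', 'AA-R+L', 'R-L+IH', 'L-IH+S', 'IH-S+T', 'S-T+SIL']
```

Cross-word models capture coarticulation between the final R of OUR and the initial L of LIST, at the cost of a larger inventory of triphones.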

Summary
• ASR Architecture
  – The Noisy Channel Model
• Five easy pieces of an ASR system
  1) Feature Extraction: 39 “MFCC” features
  2) Acoustic Model: Gaussians for computing p(o|q)
  3) Lexicon/Pronunciation Model: HMM
• Next time: Decoding: how to combine these to compute words from speech!
