Linear Sorts Counting sort Bucket sort Radix sort


Linear Sorts
• We will study algorithms that do not depend only on comparing whole keys to be sorted.
• Counting sort
• Bucket sort
• Radix sort

Counting sort
• Assumptions:
  – n records
  – Each record contains a key and data
  – All keys are in the range 1 to k
• Space
  – The unsorted list is stored in A; the sorted list will be stored in an additional array B
  – Uses an additional array C of size k

Counting sort
• Main idea:
  1. For each key value i, i = 1, …, k, count the number of times the key occurs in the unsorted input array A. Store the results in an auxiliary array C.
  2. Use these counts to compute the offsets. Offset i is used to calculate the location where a record with key value i will be stored in the sorted output list B. Offset i holds the location where the last record with key i will be stored.
• When would you use counting sort?
• How much memory is needed?

![Counting Sort pseudocode slide](http://slidetodoc.com/presentation_image/12c130ccbd3acb704016370954394210/image-5.jpg)

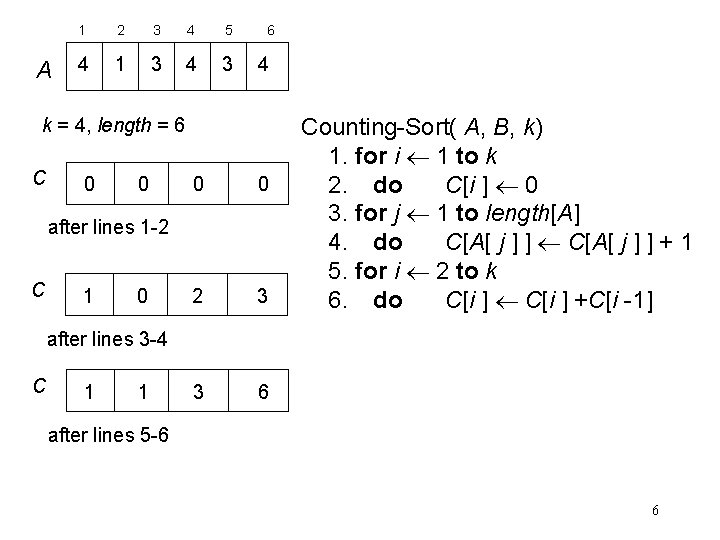

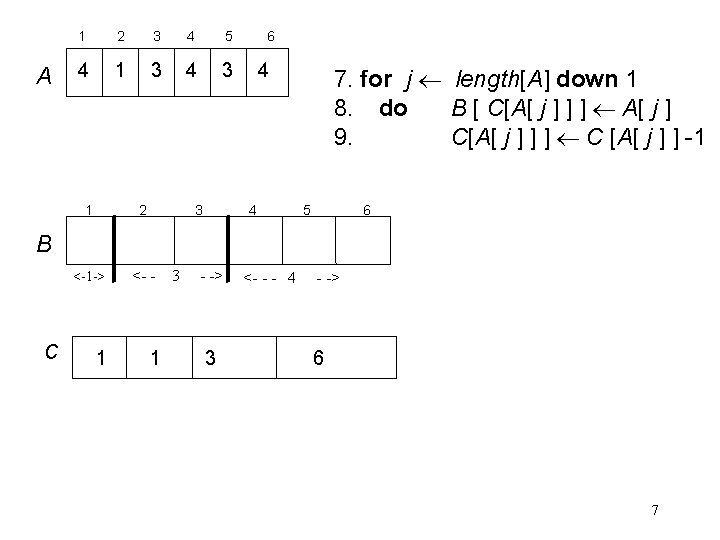

Counting Sort
Input: A[1..n], A[j] ∈ {1, 2, …, k}
Output: B[1..n], sorted
Uses C[1..k], auxiliary storage

Counting-Sort(A, B, k)
1. for i ← 1 to k
2.     do C[i] ← 0
3. for j ← 1 to length[A]
4.     do C[A[j]] ← C[A[j]] + 1
5. for i ← 2 to k
6.     do C[i] ← C[i] + C[i-1]
7. for j ← length[A] downto 1
8.     do B[C[A[j]]] ← A[j]
9.        C[A[j]] ← C[A[j]] - 1

Adapted from Cormen, Leiserson, Rivest
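The pseudocode above can be sketched in Python roughly as follows. This is a minimal illustration, not the slide author's code: the function name `counting_sort` is an assumption, and because Python lists are 0-based, the 1-based position in C is shifted by one when writing into B.

```python
def counting_sort(a, k):
    """Return a sorted copy of a, whose elements are integers in 1..k."""
    c = [0] * (k + 1)          # c[0] is unused so that keys index c directly
    for key in a:              # pseudocode lines 3-4: count each key
        c[key] += 1
    for i in range(2, k + 1):  # lines 5-6: c[i] becomes the number of keys <= i
        c[i] += c[i - 1]
    b = [None] * len(a)
    for key in reversed(a):    # lines 7-9: scan right to left to keep equal keys in order
        b[c[key] - 1] = key    # c[key] is a 1-based position; shift to 0-based
        c[key] -= 1
    return b

print(counting_sort([4, 1, 3, 4, 3, 4], 4))  # [1, 3, 3, 4, 4, 4]
```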

Counting sort trace, lines 1-6
A (indices 1 to 6): 4 1 3 4 3 4, with k = 4 and length = 6
C after lines 1-2 (initialized): 0 0 0 0
C after lines 3-4 (counts): 1 0 2 3
C after lines 5-6 (running totals): 1 1 3 6

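Counting sort trace, lines 7-9
A (indices 1 to 6): 4 1 3 4 3 4
C before line 7: 1 1 3 6
B after lines 7-9: 1 3 3 4 4 4
Key 1 occupies position 1 of B, key 3 occupies positions 2-3, and key 4 occupies positions 4-6. Each C[i] starts at the last position for key i and is decremented after every placement.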

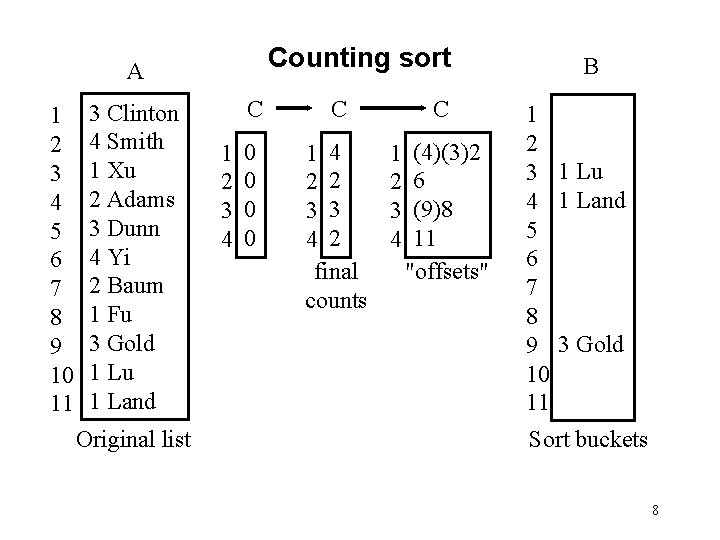

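Counting sort
Original list A (positions 1 to 11, key then data): 3 Clinton, 4 Smith, 1 Xu, 2 Adams, 3 Dunn, 4 Yi, 2 Baum, 1 Fu, 3 Gold, 1 Lu, 1 Land
C, final counts for keys 1 to 4: 4 2 3 2
C, "offsets" for keys 1 to 4: 4 6 9 11
After the last three records (Land, Lu, Gold) have been placed, C[1] has gone from 4 to 3 to 2 and C[3] from 9 to 8, so C = 2 6 8 11.
B at that point: position 3 holds 1 Lu, position 4 holds 1 Land, position 9 holds 3 Gold; the other positions are still empty.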
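The record example can be replayed with a short Python sketch. The function name `counting_sort_records` and the use of `(key, data)` tuples are assumptions made for illustration; the logic follows the Counting-Sort pseudocode.

```python
def counting_sort_records(records, k):
    """Stable sort of (key, data) pairs whose integer keys lie in 1..k."""
    count = [0] * (k + 1)
    for key, _ in records:
        count[key] += 1                 # final counts
    for i in range(2, k + 1):
        count[i] += count[i - 1]        # "offsets": last position for each key
    out = [None] * len(records)
    for rec in reversed(records):       # right-to-left scan preserves input order of ties
        key = rec[0]
        out[count[key] - 1] = rec
        count[key] -= 1
    return out

records = [(3, "Clinton"), (4, "Smith"), (1, "Xu"), (2, "Adams"),
           (3, "Dunn"), (4, "Yi"), (2, "Baum"), (1, "Fu"),
           (3, "Gold"), (1, "Lu"), (1, "Land")]
for key, name in counting_sort_records(records, 4):
    print(key, name)
```

Records with equal keys come out in their original relative order (for key 1: Xu, Fu, Lu, Land), which is the stability property radix sort relies on.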

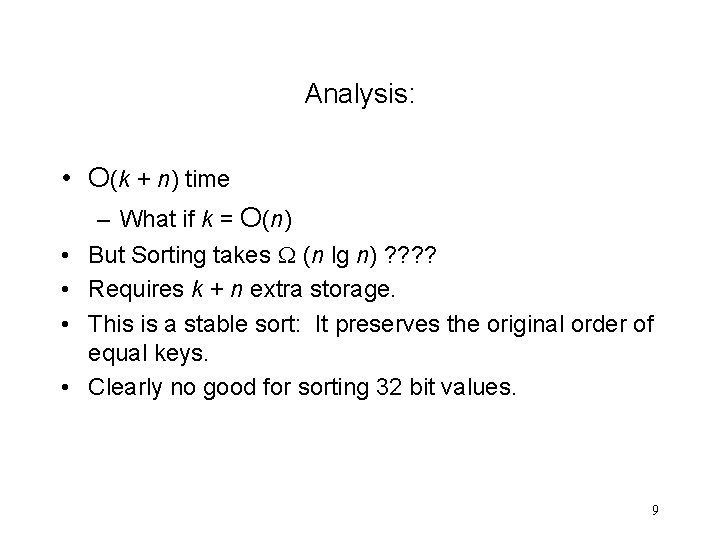

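Analysis:
• O(k + n) time
  – What if k = O(n)? Then the running time is O(n).
  – But sorting takes Ω(n lg n)? That lower bound applies only to comparison sorts, and counting sort never compares keys.
• Requires k + n extra storage.
• This is a stable sort: it preserves the original order of equal keys.
• Clearly no good for sorting 32-bit values, since C would need 2^32 entries.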

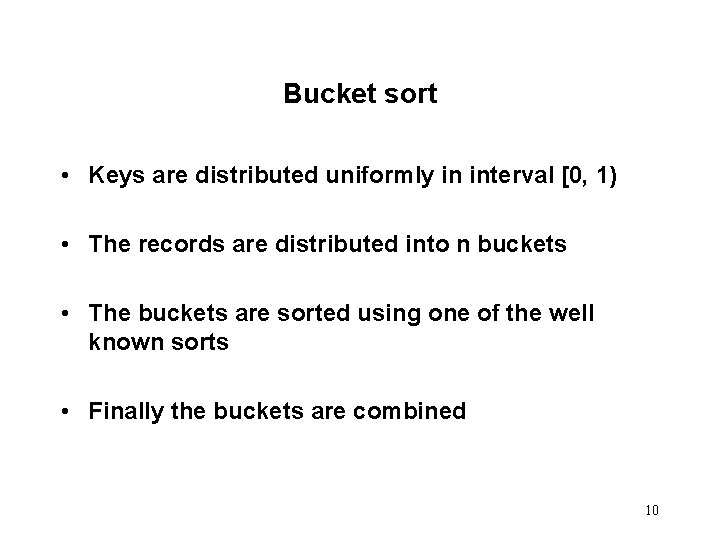

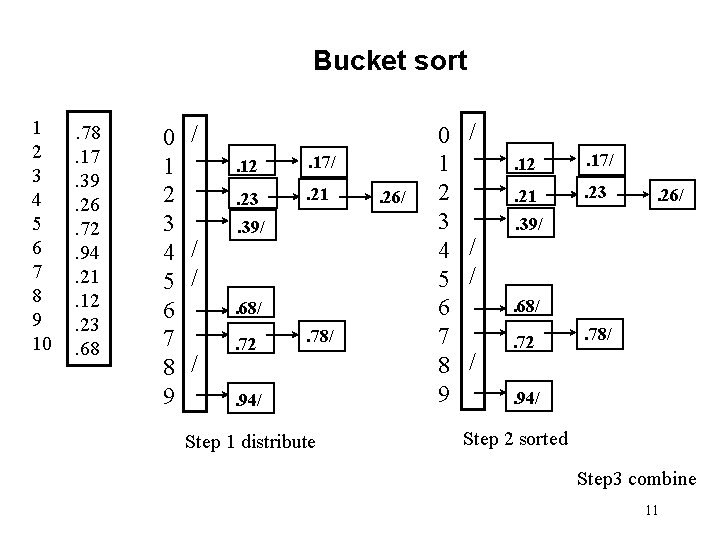

Bucket sort
• Keys are distributed uniformly in the interval [0, 1)
• The records are distributed into n buckets
• The buckets are sorted using one of the well-known sorts
• Finally the buckets are combined

Bucket sort
Input (positions 1 to 10): .78 .17 .39 .26 .72 .94 .21 .12 .23 .68

Step 1, distribute (buckets 0 to 9):
0: empty
1: .12 .17
2: .23 .21 .26
3: .39
4: empty
5: empty
6: .68
7: .72 .78
8: empty
9: .94

Step 2, sort each bucket: only bucket 2 changes, to .21 .23 .26

Step 3, combine: .12 .17 .21 .23 .26 .39 .68 .72 .78 .94
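The three steps can be sketched in Python as below. This is a minimal illustration under stated assumptions: the name `bucket_sort` is hypothetical, Python's built-in `list.sort` stands in for "one of the well-known sorts", and the `min(...)` guard against floating-point rounding is an addition not present in the slides.

```python
def bucket_sort(keys):
    """Sort floats assumed to be uniformly distributed in [0, 1)."""
    n = len(keys)
    buckets = [[] for _ in range(n)]
    for x in keys:
        # Step 1: key x goes to bucket floor(n * x); the min() guards against rounding up to n
        buckets[min(int(n * x), n - 1)].append(x)
    result = []
    for bucket in buckets:
        bucket.sort()           # Step 2: sort each (expected small) bucket
        result.extend(bucket)   # Step 3: combine buckets in order
    return result

data = [.78, .17, .39, .26, .72, .94, .21, .12, .23, .68]
print(bucket_sort(data))
```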

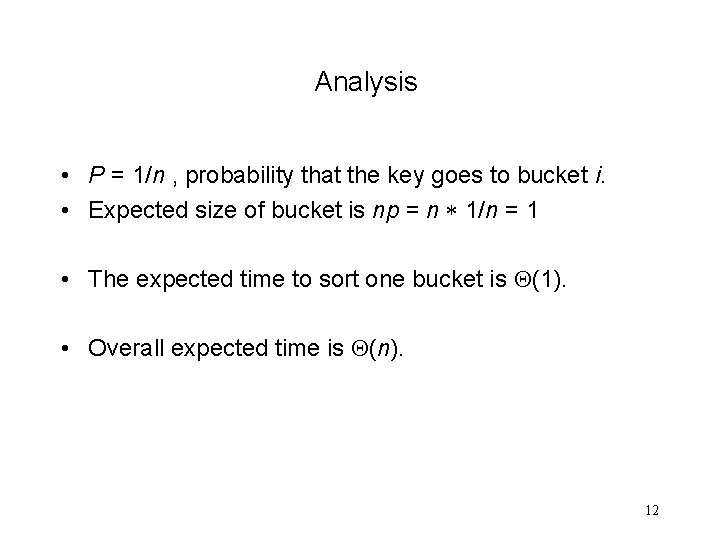

Analysis
• p = 1/n is the probability that a key goes to bucket i.
• The expected size of a bucket is np = n × 1/n = 1.
• The expected time to sort one bucket is Θ(1).
• The overall expected time is Θ(n).

How did IBM get rich originally?
• In the early 1900s IBM produced punched card readers for census tabulation.
• Cards are 80 columns with 12 places for punches per column. Only 10 places are needed for decimal digits.
  – Picture of punch card.
• Sorters had 12 bins.
• Key idea: sort the least significant digit first.

A punched card

IBM card. Card punching machine

Hollerith’s tabulating machines
• As the cards were fed through a "tabulating machine," pins passed through the positions where holes were punched, completing an electrical circuit and subsequently registering a value.
• The 1880 census in the U.S. took seven years to complete.
• With Hollerith's "tabulating machines" the 1890 census took the Census Bureau six weeks.

Card sorting machine: IBM’s card sorting machine

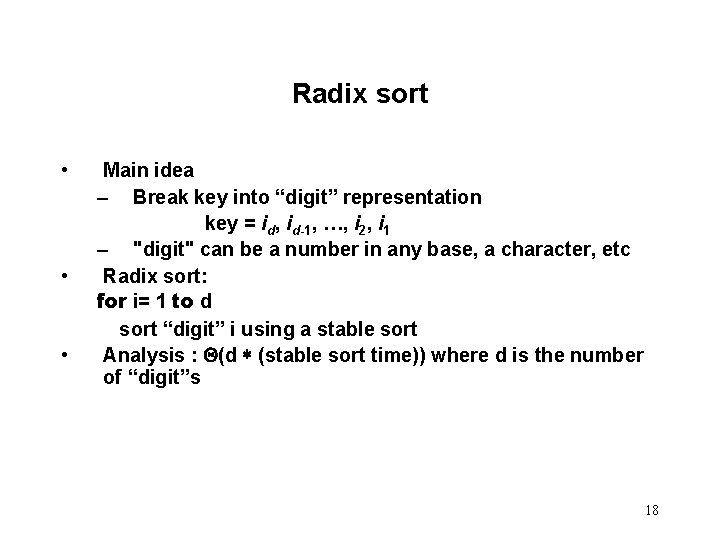

Radix sort
• Main idea
  – Break the key into a "digit" representation: key = i_d, i_(d-1), …, i_2, i_1, where i_1 is the least significant digit
  – A "digit" can be a number in any base, a character, etc.
• Radix sort:
  for i = 1 to d
      sort "digit" i using a stable sort
• Analysis: Θ(d × (stable sort time)), where d is the number of "digits"

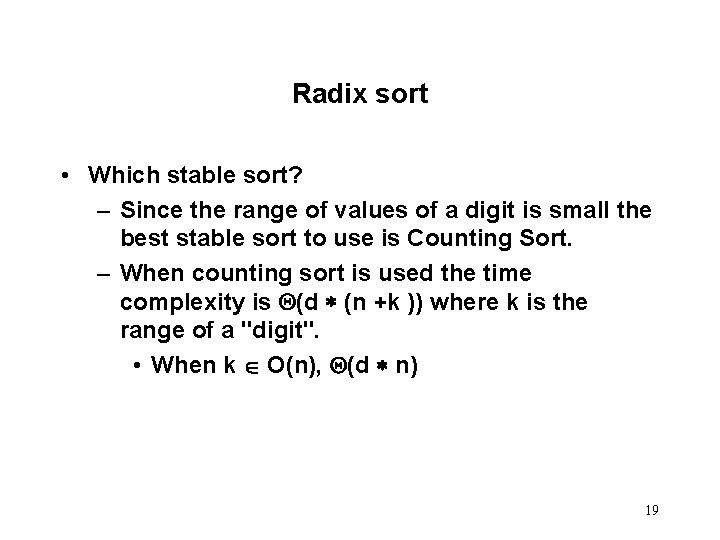

Radix sort
• Which stable sort?
  – Since the range of values of a digit is small, the best stable sort to use is counting sort.
  – When counting sort is used, the time complexity is Θ(d(n + k)), where k is the range of a "digit".
• When k = O(n), this is Θ(dn).

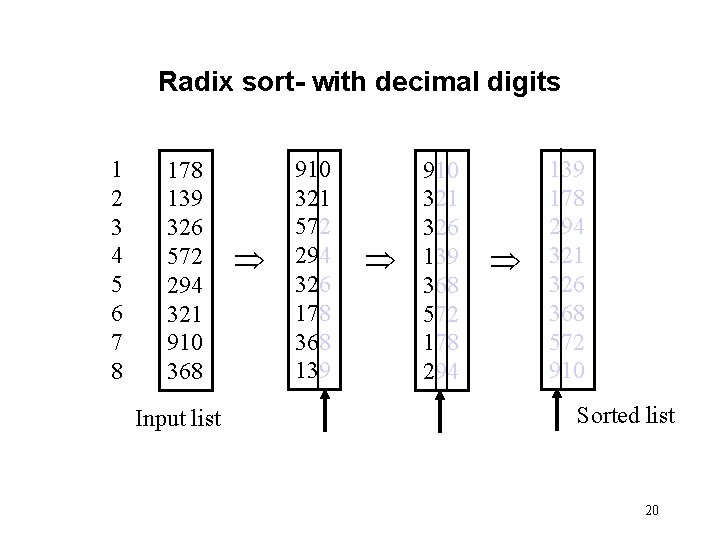

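Radix sort with decimal digits
Input list (positions 1 to 8): 178 139 326 572 294 321 910 368
After sorting on the ones digit: 910 321 572 294 326 178 368 139
After sorting on the tens digit: 910 321 326 139 368 572 178 294
After sorting on the hundreds digit (sorted list): 139 178 294 321 326 368 572 910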
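The passes above can be reproduced with a small Python sketch that uses a stable counting sort on one decimal digit at a time. The names `counting_sort_by_digit` and `radix_sort` are assumptions for illustration; digits here are numbered from 0, so the count array holds start positions shifted for 0-based lists.

```python
def counting_sort_by_digit(keys, exp, base=10):
    """Stable counting sort on the digit selected by exp (1, 10, 100, ...)."""
    count = [0] * base
    for x in keys:
        count[(x // exp) % base] += 1
    for i in range(1, base):
        count[i] += count[i - 1]       # count[i] = number of keys with digit <= i
    out = [0] * len(keys)
    for x in reversed(keys):           # right-to-left scan keeps ties in input order
        digit = (x // exp) % base
        count[digit] -= 1
        out[count[digit]] = x
    return out

def radix_sort(keys, d, base=10):
    """Sort non-negative integers with at most d digits, least significant digit first."""
    exp = 1
    for _ in range(d):
        keys = counting_sort_by_digit(keys, exp, base)
        exp *= base
    return keys

data = [178, 139, 326, 572, 294, 321, 910, 368]
print(counting_sort_by_digit(data, 1))   # ones-digit pass
print(radix_sort(data, 3))               # fully sorted
```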

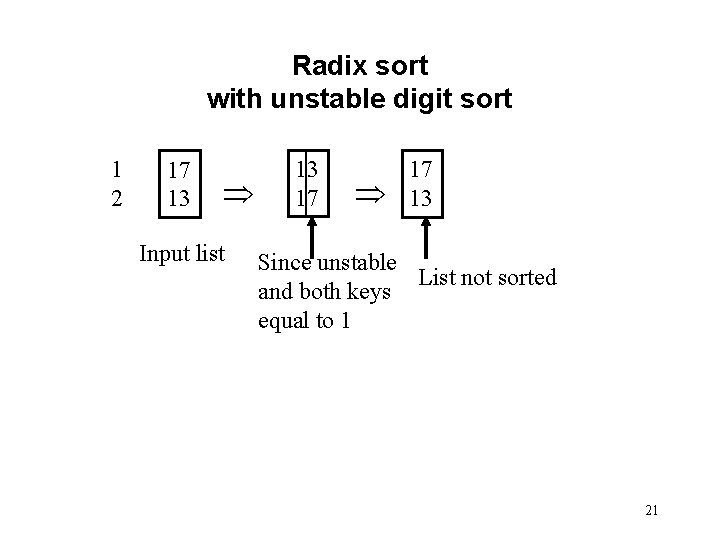

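Radix sort with unstable digit sort
Input list (positions 1 and 2): 17 13
After sorting on the ones digit: 13 17
After sorting on the tens digit with an unstable sort: 17 13
Both tens digits equal 1, so an unstable sort may reorder the two keys, and the list is not sorted.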
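The failure can be reproduced in Python. Since Python's built-in `sorted` is stable, this sketch fakes an unstable sort with a hypothetical helper, `tie_reversing_sort`, that deliberately reverses the order of equal keys; it is an illustration only, not a real sorting algorithm from the slides.

```python
def tie_reversing_sort(keys, digit):
    """Deliberately unstable: orders by one digit but reverses the order of ties."""
    return sorted(reversed(keys), key=digit)

def ones(x):
    return x % 10

def tens(x):
    return x // 10

keys = [17, 13]
after_ones = sorted(keys, key=ones)             # stable pass on the ones digit
broken = tie_reversing_sort(after_ones, tens)   # unstable pass on the tens digit
correct = sorted(after_ones, key=tens)          # stable pass on the tens digit

print(after_ones)  # [13, 17]
print(broken)      # [17, 13]
print(correct)     # [13, 17]
```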

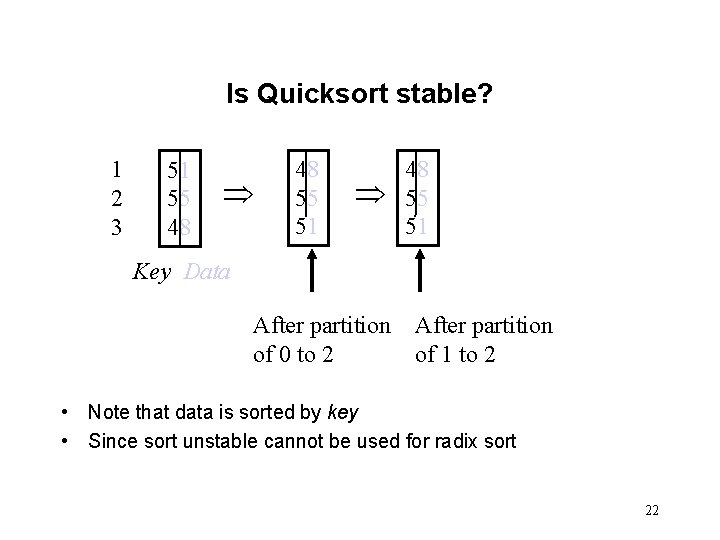

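Is Quicksort stable?
Each record is written as a key digit followed by a data digit.
Original list (positions 1 to 3): 51 55 48
After partition of 0 to 2 and after partition of 1 to 2: 48 55 51
• Note that the data is sorted by key, but the two records with key 5 have changed their relative order.
• Since the sort is unstable, it cannot be used for radix sort.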
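The quicksort example can be replayed with a short Python sketch. The slide does not say which partition scheme it uses; this sketch assumes a Lomuto partition with the last element as pivot, which happens to reproduce the slide's result. Records are modeled as `(key, data)` tuples, and only the key is compared.

```python
def partition(a, lo, hi):
    """Lomuto partition on a[lo..hi], comparing keys only; pivot is a[hi]."""
    pivot_key = a[hi][0]
    i = lo - 1
    for j in range(lo, hi):
        if a[j][0] <= pivot_key:
            i += 1
            a[i], a[j] = a[j], a[i]
    a[i + 1], a[hi] = a[hi], a[i + 1]   # this long-distance swap can reorder equal keys
    return i + 1

def quicksort(a, lo, hi):
    if lo < hi:
        p = partition(a, lo, hi)
        quicksort(a, lo, p - 1)
        quicksort(a, p + 1, hi)

records = [(5, 1), (5, 5), (4, 8)]
quicksort(records, 0, len(records) - 1)
print(records)  # [(4, 8), (5, 5), (5, 1)]
```

The keys are in order, but (5, 5) now precedes (5, 1), the reverse of the input order.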

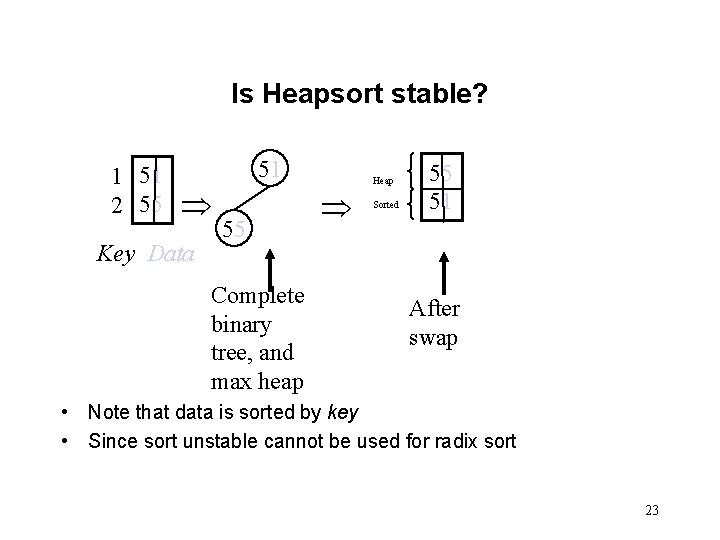

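Is Heapsort stable?
Each record is written as a key digit followed by a data digit.
Original list (positions 1 and 2): 51 55
Heap: 51 at the root with child 55, a complete binary tree and a max heap (both keys are 5)
Sorted, after the swap: 55 51
• Note that the data is sorted by key, but the two records with key 5 have changed their relative order.
• Since the sort is unstable, it cannot be used for radix sort.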

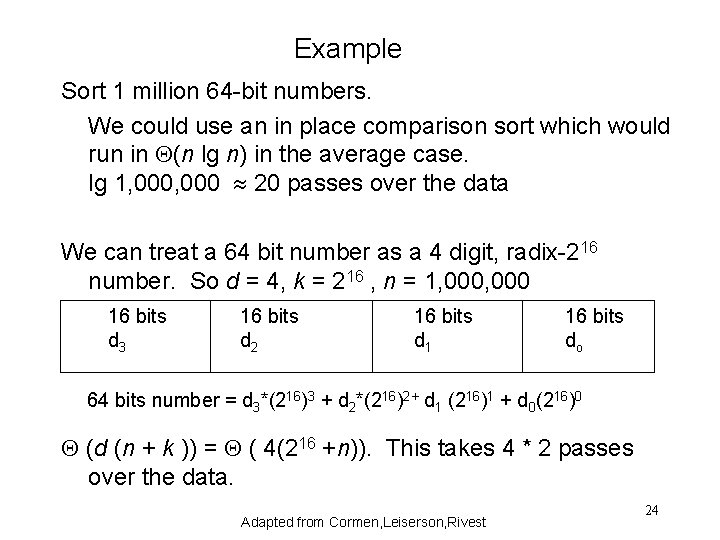

Example
Sort 1 million 64-bit numbers.
We could use an in-place comparison sort, which would run in Θ(n lg n) in the average case. Since lg 1,000,000 ≈ 20, that is about 20 passes over the data.
We can instead treat a 64-bit number as a 4-digit, radix-2^16 number, so d = 4, k = 2^16, n = 1,000,000.
The 64 bits split into four 16-bit digits d3, d2, d1, d0:
number = d3 × (2^16)^3 + d2 × (2^16)^2 + d1 × (2^16)^1 + d0 × (2^16)^0
Θ(d(n + k)) = Θ(4(2^16 + n)). This takes 4 × 2 passes over the data, because each counting sort reads the data once to count and once to place.
Adapted from Cormen, Leiserson, Rivest
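A minimal Python sketch of this scheme follows. The names `radix_sort_64`, `BITS`, `K`, and `MASK` are assumptions for illustration. Unlike the Counting-Sort pseudocode, this version computes start offsets and scans left to right when placing keys; that is also stable and gives the same result.

```python
import random

BITS = 16
K = 1 << BITS        # k = 2^16 possible values per "digit"
MASK = K - 1

def radix_sort_64(keys):
    """Sort unsigned 64-bit integers using d = 4 counting-sort passes on 16-bit digits."""
    n = len(keys)
    for pass_no in range(4):
        shift = pass_no * BITS              # digit d0 first, then d1, d2, d3
        count = [0] * K
        for x in keys:                      # first pass over the data: count digits
            count[(x >> shift) & MASK] += 1
        total = 0
        for i in range(K):                  # exclusive prefix sum: start offset per digit value
            count[i], total = total, total + count[i]
        out = [0] * n
        for x in keys:                      # second pass over the data: stable placement
            digit = (x >> shift) & MASK
            out[count[digit]] = x
            count[digit] += 1
        keys = out
    return keys

random.seed(0)
sample = [random.getrandbits(64) for _ in range(10_000)]
print(radix_sort_64(sample) == sorted(sample))
```

The sample size is kept small so the sketch runs quickly; the same function applies to 1,000,000 keys, where n dominates k = 2^16.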
