Linear regression

Petter Mostad, mostad@chalmers.se

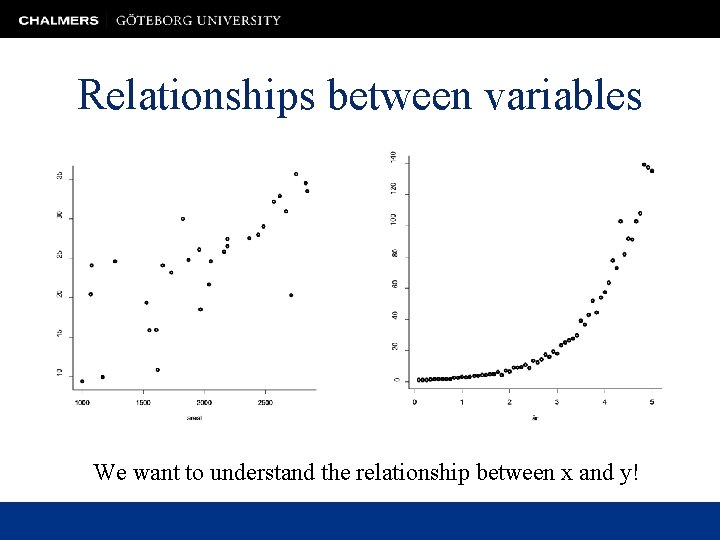

Relationships between variables

We want to understand the relationship between x and y!

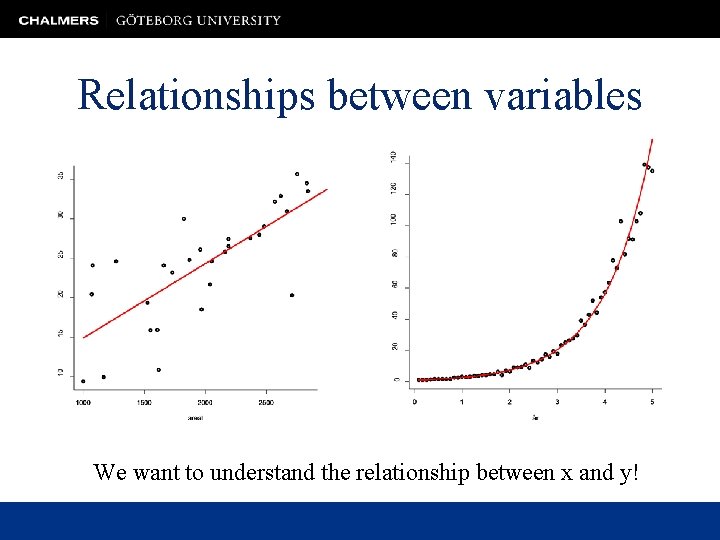

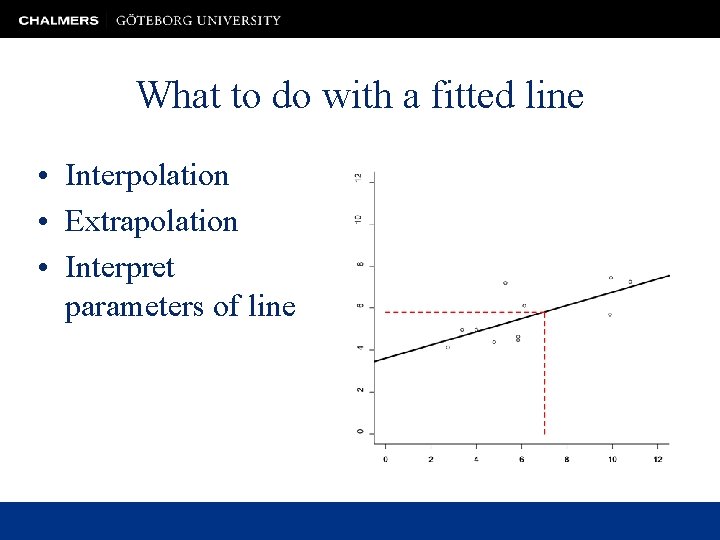

What to do with a fitted line

• Interpolation
• Extrapolation
• Interpret the parameters of the line

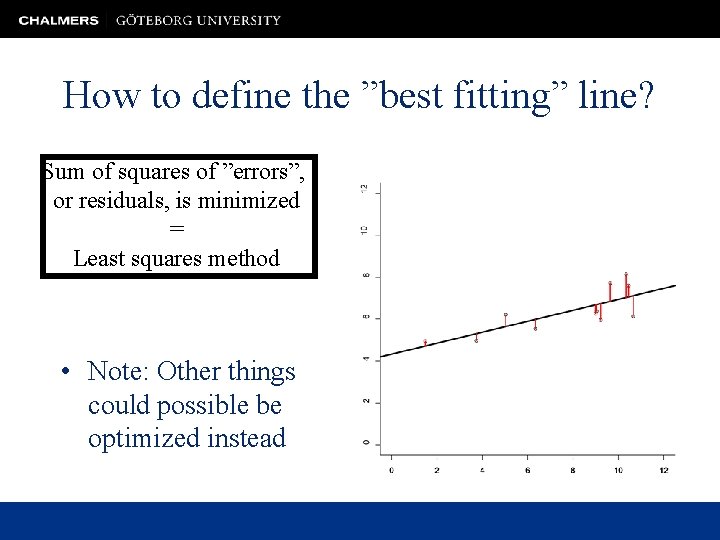

How to define the "best fitting" line?

The line is chosen so that the sum of squares of the "errors", or residuals, is minimized. This is the least squares method.

• Note: Other things could possibly be optimized instead.

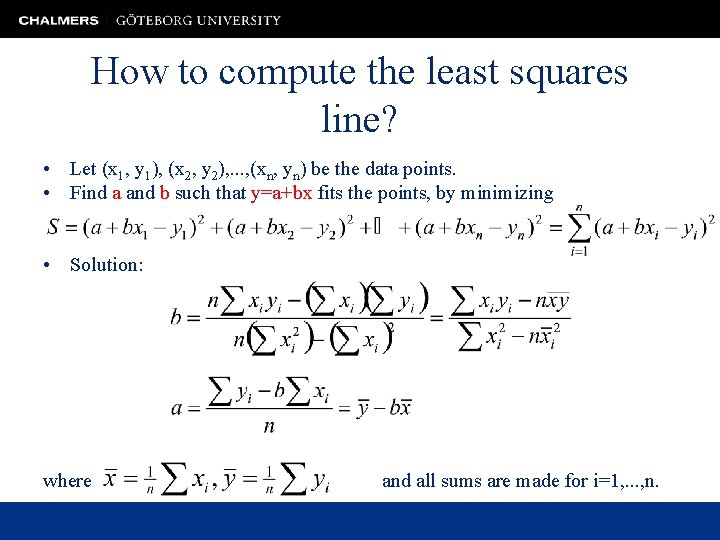

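How to compute the least squares line?

• Let $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$ be the data points.
• Find $a$ and $b$ such that $y = a + bx$ fits the points, by minimizing

$$S = \sum (y_i - a - b x_i)^2$$

• Solution:

$$b = \frac{\sum (x_i - \bar{x})(y_i - \bar{y})}{\sum (x_i - \bar{x})^2}, \qquad a = \bar{y} - b\bar{x}$$

where $\bar{x} = \frac{1}{n}\sum x_i$ and $\bar{y} = \frac{1}{n}\sum y_i$, and all sums are made for $i = 1, \ldots, n$.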

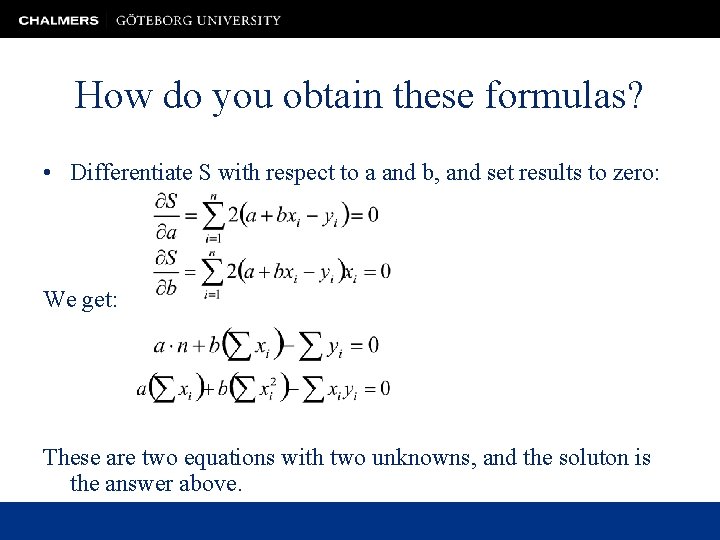
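The least squares line can be computed directly from the data: the slope is the sum of products of deviations from the averages divided by the sum of squared x-deviations, and the intercept makes the line pass through the point of averages. A minimal Python sketch follows; the function name `fit_line` and the sample points are illustrative assumptions, not taken from the slides.

```python
def fit_line(xs, ys):
    """Return (a, b) minimizing sum((y - a - b*x)**2) over the data points."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Sums of products of deviations from the averages
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b = sxy / sxx          # needs at least two distinct x-values
    a = y_bar - b * x_bar  # the line passes through (x_bar, y_bar)
    return a, b


# Hypothetical points lying exactly on y = 1 + 2x
a, b = fit_line([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
print(f"a = {a:.3f}, b = {b:.3f}")
```

Because the sample points lie exactly on a line, the fit should recover that line's intercept and slope up to rounding.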

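How do you obtain these formulas?

• Differentiate the sum of squared residuals $S = \sum (y_i - a - b x_i)^2$ with respect to $a$ and $b$, and set the results to zero:

$$\frac{\partial S}{\partial a} = -2\sum (y_i - a - b x_i) = 0, \qquad \frac{\partial S}{\partial b} = -2\sum x_i (y_i - a - b x_i) = 0$$

We get:

$$na + b\sum x_i = \sum y_i, \qquad a\sum x_i + b\sum x_i^2 = \sum x_i y_i$$

These are two equations with two unknowns, and the solution is the answer above.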

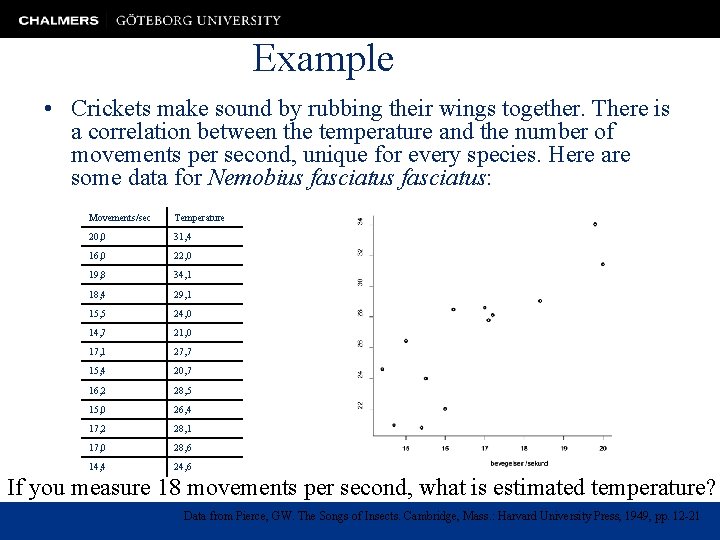

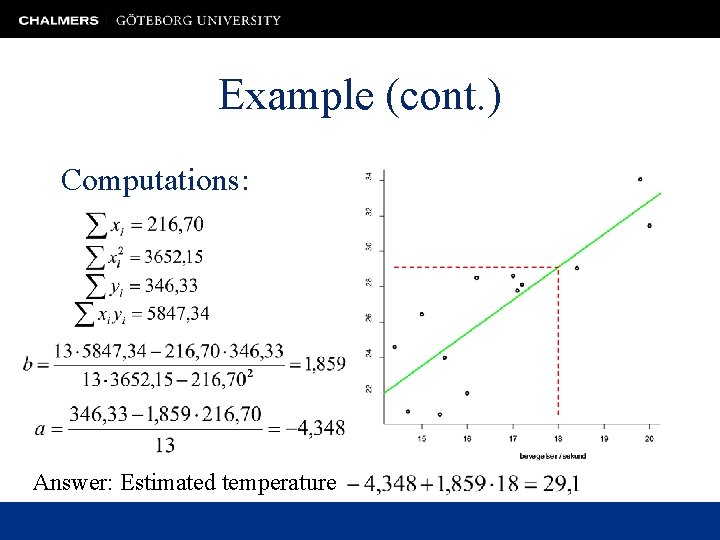

Example

• Crickets make sound by rubbing their wings together. There is a correlation between the temperature and the number of movements per second, unique for every species. Here are some data for Nemobius fasciatus:

| Movements/sec | Temperature |
|---|---|
| 20.0 | 31.4 |
| 16.0 | 22.0 |
| 19.8 | 34.1 |
| 18.4 | 29.1 |
| 15.5 | 24.0 |
| 14.7 | 21.0 |
| 17.1 | 27.7 |
| 15.4 | 20.7 |
| 16.2 | 28.5 |
| 15.0 | 26.4 |
| 17.2 | 28.1 |
| 17.0 | 28.6 |
| 14.4 | 24.6 |

If you measure 18 movements per second, what is the estimated temperature?

Data from Pierce, G. W. The Songs of Insects. Cambridge, Mass.: Harvard University Press, 1949, pp. 12-21.

Example (cont.)

Computations:

Answer: Estimated temperature
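One way to carry out this computation is sketched below in Python. It assumes that the 13 data pairs are read as (movements per second, temperature) with decimal commas converted to points, and that temperature is regressed on movements per second; the variable names are illustrative.

```python
# (movements per second, temperature) pairs from the cricket example
data = [
    (20.0, 31.4), (16.0, 22.0), (19.8, 34.1), (18.4, 29.1), (15.5, 24.0),
    (14.7, 21.0), (17.1, 27.7), (15.4, 20.7), (16.2, 28.5), (15.0, 26.4),
    (17.2, 28.1), (17.0, 28.6), (14.4, 24.6),
]
xs = [m for m, _ in data]
ys = [t for _, t in data]
n = len(data)

x_bar = sum(xs) / n
y_bar = sum(ys) / n
sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
sxx = sum((x - x_bar) ** 2 for x in xs)

b = sxy / sxx          # estimated slope
a = y_bar - b * x_bar  # estimated intercept

# Estimated temperature at 18 movements per second
predicted = a + b * 18.0
print(f"a = {a:.2f}, b = {b:.2f}, estimated temperature = {predicted:.1f}")
```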

What about the uncertainty in the prediction?

• Temperature is not perfectly predicted!
• We assume:
  – A linear model relates the number of wing movements to a mean predicted temperature
  – The actual temperature has a normal distribution around that mean prediction, with variance $\sigma^2$
• In order to make a prediction with uncertainty, we must:
  – First estimate the parameters of the line from data
  – Find the predicted temperature at the given wing movement number, with uncertainty
  – Add the "random error", with the estimated variance
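The three steps can be sketched in Python for the cricket data. The formulas used here are the standard textbook ones under the stated normal-error assumptions and are not taken from the slides: the variance estimate divides the residual sum of squares by n - 2, and the standard error of a new observation adds the random-error variance to the uncertainty of the fitted mean. Turning a standard error into an interval would additionally need a quantile of the t distribution with n - 2 degrees of freedom, which is not computed here.

```python
import math

xs = [20.0, 16.0, 19.8, 18.4, 15.5, 14.7, 17.1, 15.4, 16.2, 15.0, 17.2, 17.0, 14.4]
ys = [31.4, 22.0, 34.1, 29.1, 24.0, 21.0, 27.7, 20.7, 28.5, 26.4, 28.1, 28.6, 24.6]
n = len(xs)

# Step 1: estimate the parameters of the line
x_bar = sum(xs) / n
y_bar = sum(ys) / n
sxx = sum((x - x_bar) ** 2 for x in xs)
sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
b = sxy / sxx
a = y_bar - b * x_bar

# Estimate of the error variance sigma^2 from the residuals
sse = sum((y - a - b * x) ** 2 for x, y in zip(xs, ys))
s2 = sse / (n - 2)

# Step 2: predicted mean temperature at x0, with its standard error
x0 = 18.0
pred = a + b * x0
se_mean = math.sqrt(s2 * (1 / n + (x0 - x_bar) ** 2 / sxx))

# Step 3: add the random error to get the standard error of a new observation
se_pred = math.sqrt(s2 * (1 + 1 / n + (x0 - x_bar) ** 2 / sxx))

print(f"prediction {pred:.1f}, se(mean) {se_mean:.2f}, se(new obs) {se_pred:.2f}")
```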

More examples

• A model for prediction of y claims that every unit increase in a variable x increases the expected value of y by 1.4, and that y is normally distributed around this expectation with some fixed variance. How can we test this model?
• You have a choice between a model relating y with x where either $y = ax + b + \text{error}$ or $y = ax + \text{error}$. How can you choose?

Procedure for answering such questions:

• We set up a linear model for our observations
• We estimate its parameters, with uncertainty
• We draw conclusions from these estimates, with uncertainty, or
• We use the estimates, with uncertainties, to make predictions, with uncertainties
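As a sketch of this procedure applied to the two questions above, the Python code below fits a line to hypothetical data (invented for illustration), computes standard errors for slope and intercept with the usual textbook formulas, and forms t statistics for the claims "slope equals 1.4" and "intercept equals 0". Each statistic would then be compared with a t distribution with n - 2 degrees of freedom; that comparison is left out here.

```python
import math

# Hypothetical observations, invented for illustration
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [2.1, 3.4, 5.2, 6.1, 7.9, 9.0]
n = len(xs)

x_bar = sum(xs) / n
y_bar = sum(ys) / n
sxx = sum((x - x_bar) ** 2 for x in xs)
sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))

slope = sxy / sxx
intercept = y_bar - slope * x_bar

# Estimated error variance and standard errors of the two estimates
sse = sum((y - intercept - slope * x) ** 2 for x, y in zip(xs, ys))
s2 = sse / (n - 2)
se_slope = math.sqrt(s2 / sxx)
se_intercept = math.sqrt(s2 * (1 / n + x_bar ** 2 / sxx))

# Question 1: is the slope compatible with the claimed value 1.4?
t_slope = (slope - 1.4) / se_slope
# Question 2: is the intercept compatible with 0 (the model without intercept)?
t_intercept = intercept / se_intercept

print(f"slope {slope:.3f} (se {se_slope:.3f}), t for slope = 1.4: {t_slope:.2f}")
print(f"intercept {intercept:.3f} (se {se_intercept:.3f}), t for intercept = 0: {t_intercept:.2f}")
```

A t statistic near zero means the data do not contradict the claimed value; a large absolute value speaks against it.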

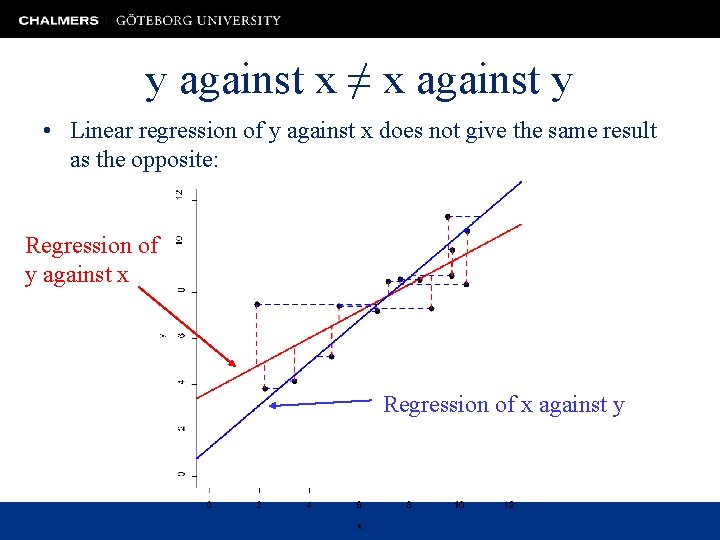

y against x ≠ x against y

• Linear regression of y against x does not give the same result as the opposite.

Figure panels: regression of y against x; regression of x against y.

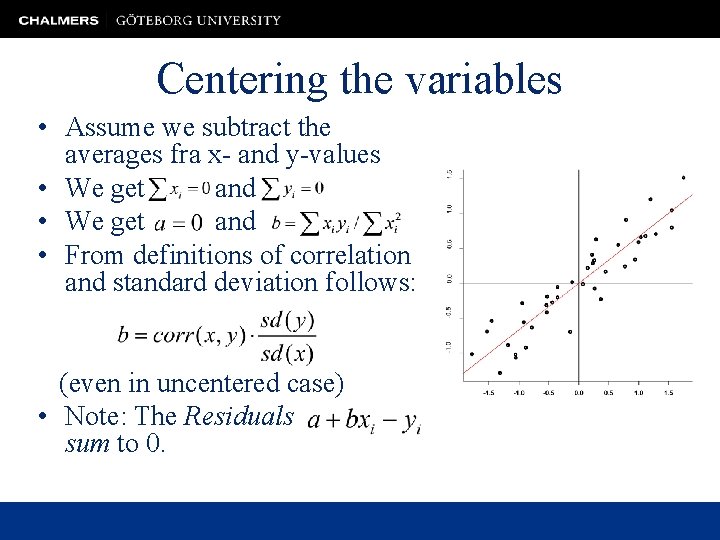
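The difference between the two regressions can be checked numerically. The Python sketch below uses hypothetical data and an illustrative helper `slope`; it regresses y on x and x on y, then rewrites the second line in the form y = ... so that the two slopes can be compared in the same coordinate system.

```python
def slope(us, vs):
    """Least squares slope when regressing vs on us."""
    n = len(us)
    u_bar = sum(us) / n
    v_bar = sum(vs) / n
    suv = sum((u - u_bar) * (v - v_bar) for u, v in zip(us, vs))
    suu = sum((u - u_bar) ** 2 for u in us)
    return suv / suu


# Hypothetical data, invented for illustration
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 1.0, 4.0, 3.0, 5.0]

b_yx = slope(xs, ys)  # y regressed on x: y = a + b_yx * x
b_xy = slope(ys, xs)  # x regressed on y: x = c + b_xy * y

# Slope of the second line when drawn with y as a function of x
slope_xy_in_xy_plane = 1 / b_xy

# The product of the two regression slopes equals the squared correlation,
# so the two lines coincide only when the correlation is +1 or -1.
r_squared = b_yx * b_xy

print(b_yx, slope_xy_in_xy_plane, r_squared)
```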

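Centering the variables

• Assume we subtract the averages from the x- and y-values, so that the centered data have $\bar{x} = 0$ and $\bar{y} = 0$
• We get $a = 0$ and $b = \dfrac{\sum x_i y_i}{\sum x_i^2}$
• From the definitions of correlation and standard deviation it follows that $b = r_{xy}\,\dfrac{s_y}{s_x}$ (even in the uncentered case)
• Note: The residuals sum to 0.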

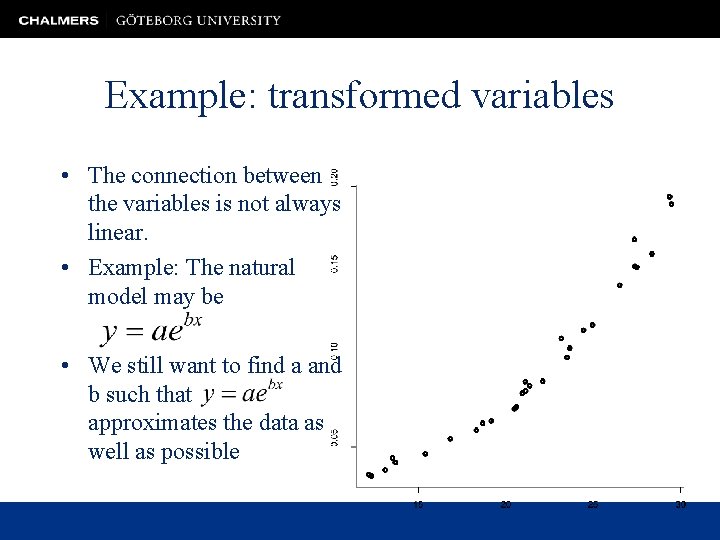
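Both statements on this slide can be verified numerically. The Python sketch below uses hypothetical data; it centers the variables, computes the slope from the centered values, compares it with the correlation times the ratio of standard deviations, and checks that the residuals of the fitted line sum to zero up to rounding.

```python
import math

# Hypothetical data, invented for illustration
xs = [1.0, 2.0, 4.0, 5.0, 8.0]
ys = [3.0, 4.5, 4.0, 7.0, 9.5]
n = len(xs)

x_bar = sum(xs) / n
y_bar = sum(ys) / n

# Centered variables: both now have average 0
xc = [x - x_bar for x in xs]
yc = [y - y_bar for y in ys]

sxx = sum(u * u for u in xc)
syy = sum(v * v for v in yc)
sxy = sum(u * v for u, v in zip(xc, yc))

b = sxy / sxx  # slope; the intercept for the centered data is 0

# Sample standard deviations and correlation
s_x = math.sqrt(sxx / (n - 1))
s_y = math.sqrt(syy / (n - 1))
r = sxy / math.sqrt(sxx * syy)
b_from_r = r * s_y / s_x

# Residuals of the line fitted to the original (uncentered) data
a = y_bar - b * x_bar
residuals = [y - (a + b * x) for x, y in zip(xs, ys)]

print(b, b_from_r, sum(residuals))
```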

Example: transformed variables

• The connection between the variables is not always linear.
• Example: The natural model may be $y = a e^{bx}$
• We still want to find $a$ and $b$ such that $y = a e^{bx}$ approximates the data as well as possible

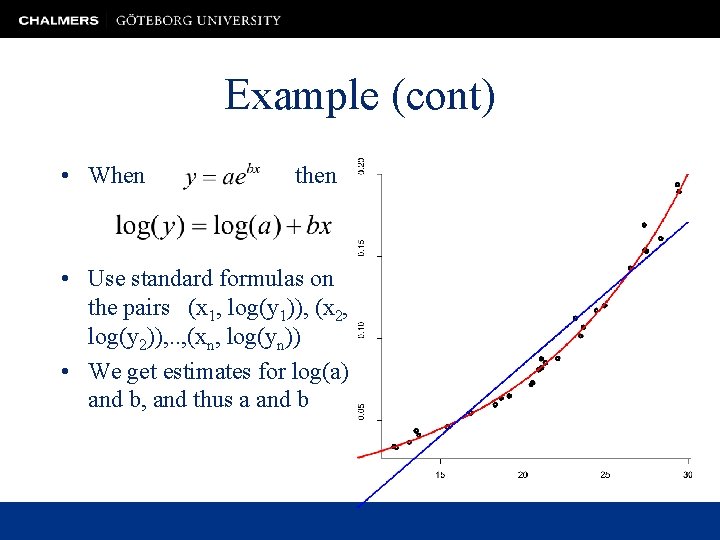

Example (cont.)

• When $y = a e^{bx}$, then $\log(y) = \log(a) + bx$
• Use the standard formulas on the pairs $(x_1, \log(y_1)), (x_2, \log(y_2)), \ldots, (x_n, \log(y_n))$
• We get estimates for $\log(a)$ and $b$, and thus for $a$ and $b$

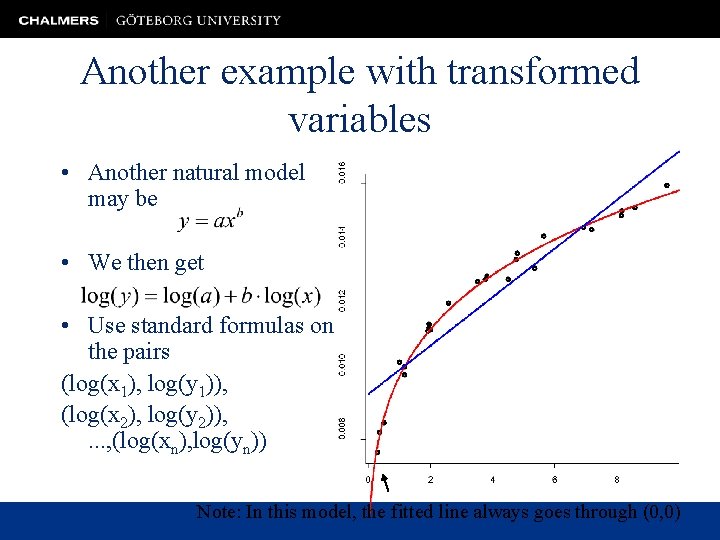
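A minimal Python sketch of this log-transform fit, assuming natural logarithms and an exponential model of the form y = a·exp(b·x). The helper `fit_line` and the sample data are illustrative assumptions; the data are generated exactly from a = 2, b = 0.5 so that the result can be checked.

```python
import math


def fit_line(xs, ys):
    """Least squares intercept and slope for y = intercept + slope * x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


# Hypothetical data generated from y = 2 * exp(0.5 * x); all y must be positive
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * math.exp(0.5 * x) for x in xs]

# Fit a straight line to the pairs (x, log(y))
log_a, b = fit_line(xs, [math.log(y) for y in ys])
a = math.exp(log_a)  # transform the intercept back

print(f"a = {a:.3f}, b = {b:.3f}")
```

Note that this minimizes the squared errors on the log scale, which is not the same as minimizing them on the original y scale.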

Another example with transformed variables

• Another natural model may be $y = a x^b$
• We then get $\log(y) = \log(a) + b\log(x)$
• Use the standard formulas on the pairs $(\log(x_1), \log(y_1)), (\log(x_2), \log(y_2)), \ldots, (\log(x_n), \log(y_n))$

Note: In this model, the fitted curve always goes through (0, 0) (for $b > 0$).
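The same idea applied to a power-law model y = a·x^b, as a short Python sketch. The data are hypothetical and generated exactly from a = 3, b = 2; both x and y must be positive for the logarithms to exist.

```python
import math

# Hypothetical data generated from y = 3 * x**2
xs = [1.0, 2.0, 4.0, 8.0]
ys = [3.0 * x ** 2 for x in xs]

# Work with the pairs (log(x), log(y))
us = [math.log(x) for x in xs]
vs = [math.log(y) for y in ys]
n = len(us)
u_bar = sum(us) / n
v_bar = sum(vs) / n

# Standard least squares formulas on the transformed pairs
b = (sum((u - u_bar) * (v - v_bar) for u, v in zip(us, vs))
     / sum((u - u_bar) ** 2 for u in us))
a = math.exp(v_bar - b * u_bar)  # intercept is log(a), so transform back

print(f"a = {a:.3f}, b = {b:.3f}")
```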

Several explanatory variables

• Assume our data is of the type $(x_{11}, x_{12}, x_{13}, y_1), (x_{21}, x_{22}, x_{23}, y_2), \ldots$
• We can try to predict or "explain" y from the x-values with a model $y = a + b x_1 + c x_2 + d x_3$
• Exactly as before we can deduce formulas for $a$, $b$, $c$, $d$ minimizing the sum of the squares of the "errors", or residuals.
• $x_1, x_2, x_3$ can be transformations of different variables, or even of the same variable.

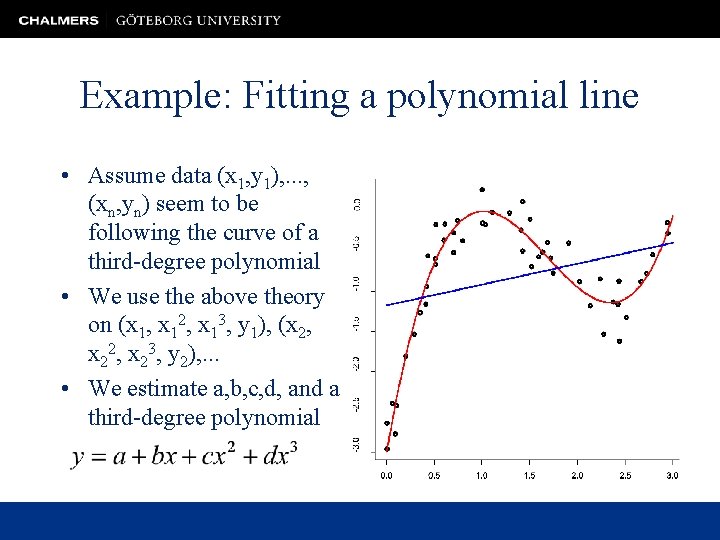

Example: Fitting a polynomial curve

• Assume data $(x_1, y_1), \ldots, (x_n, y_n)$ seem to be following the curve of a third-degree polynomial
• We use the above theory on $(x_1, x_1^2, x_1^3, y_1), (x_2, x_2^2, x_2^3, y_2), \ldots$
• We estimate $a$, $b$, $c$, $d$, and obtain the third-degree polynomial $y = a + bx + cx^2 + dx^3$
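A sketch of this polynomial fit in plain Python, treating x, x², x³ as three explanatory variables. Setting the derivatives of the sum of squared residuals to zero gives a linear system (the normal equations) in the four coefficients, solved here with a small Gaussian elimination. The function names and the sample data are illustrative assumptions; for real work a linear algebra library would be the more robust choice.

```python
def solve(A, rhs):
    """Solve the square system A z = rhs by Gaussian elimination with pivoting."""
    n = len(rhs)
    M = [row[:] + [r] for row, r in zip(A, rhs)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        tail = sum(M[i][j] * z[j] for j in range(i + 1, n))
        z[i] = (M[i][n] - tail) / M[i][i]
    return z


def fit_cubic(xs, ys):
    """Least squares coefficients (a, b, c, d) of y = a + b x + c x^2 + d x^3."""
    rows = [[1.0, x, x ** 2, x ** 3] for x in xs]  # constant term and x, x^2, x^3
    k = 4
    # Normal equations: (X^T X) coef = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(k)]
    return solve(xtx, xty)


# Hypothetical data lying exactly on y = 1 - 2x + 0.5x^2 + x^3
xs = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [1 - 2 * x + 0.5 * x ** 2 + x ** 3 for x in xs]
a, b, c, d = fit_cubic(xs, ys)
print(a, b, c, d)
```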

Regression as a linear model

• Responses are modelled as a linear function of "explanatory variables", with unknown coefficients, plus a random error.
• The random error is normally distributed with zero mean and a fixed variance for all observations.
• With these assumptions, values for the unknown coefficients can be estimated using least squares.
• We can find an estimate for the variance.
• This can be used to obtain confidence intervals, and confidence regions, for the unknown coefficients.
