Linear Regression II Political Science 30 Political Inquiry

- Slides: 20


Linear Regression II: Making Sense of Regression Results

- Interpreting SPSS regression output
  - Coefficients for independent variables
  - Fit of the regression: R Square
- Statistical significance
  - How to reject the null hypothesis
- Multivariate regressions
  - College graduation rates
  - Ethnicity and voting

Linear Regression: Review

- We want to draw the line that best represents the relationship between the IV (X) and the DV (Y): Y = a + b*X. The line allows us to predict the DV given a value of the IV.
- Regression finds the values of a and b that minimize the distance between the points and the line (specifically, the sum of squared vertical distances).
- Technically, a and b are population parameters. We only get to calculate sample statistics, a-hat and b-hat.
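As a rough illustration of how a-hat and b-hat can be computed, here is a minimal Python sketch using the standard least-squares formulas. The function name `fit_line` and the five data points are hypothetical, chosen only so the answer is easy to check by hand; they are not from the course data, and SPSS performs this calculation for you.

```python
import numpy as np

def fit_line(x, y):
    """Return (a_hat, b_hat) that minimize the sum of squared vertical distances."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Slope: co-variation of X and Y divided by the variation in X.
    b_hat = np.sum((x - x.mean()) * (y - y.mean())) / np.sum((x - x.mean()) ** 2)
    # Intercept: the fitted line passes through the point of means.
    a_hat = y.mean() - b_hat * x.mean()
    return a_hat, b_hat

# Hypothetical data lying exactly on the line Y = 1 + 2*X.
x = [1, 2, 3, 4, 5]
y = [3, 5, 7, 9, 11]
a_hat, b_hat = fit_line(x, y)
print(a_hat, b_hat)
```

Because the made-up points fall exactly on a line, the estimates recover its intercept and slope; with real data the points scatter around the fitted line.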

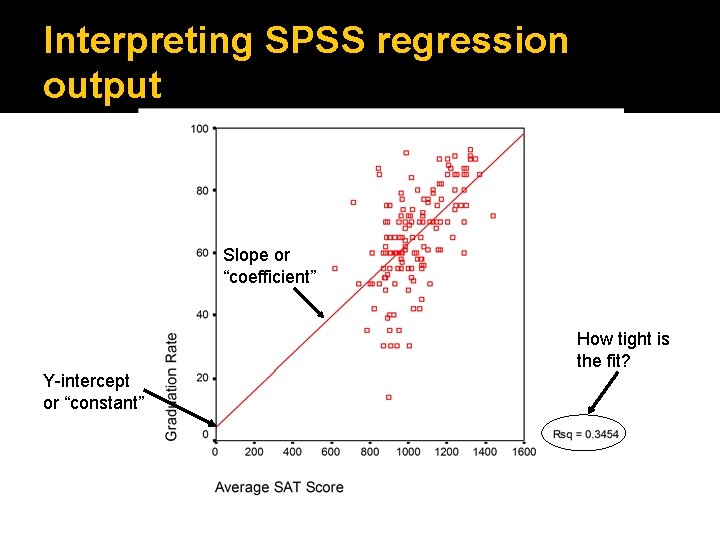

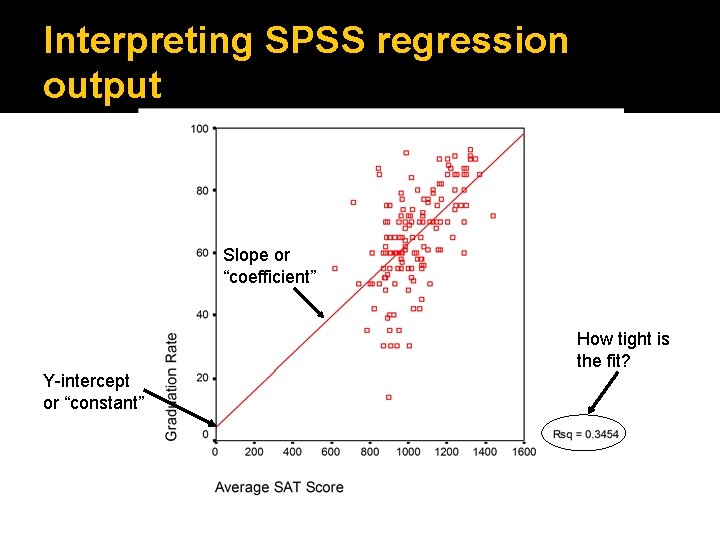

Interpreting SPSS regression output (figure labels: slope or “coefficient”; “How tight is the fit?”; y-intercept or “constant”)

Interpreting SPSS regression output

An SPSS regression output includes two key tables for interpreting your results:

- A “Coefficients” table that contains the y-intercept (or “constant”) of the regression, a coefficient for every independent variable, and the standard error of each coefficient.
- A “Model Summary” table that gives you information on the fit of your regression.

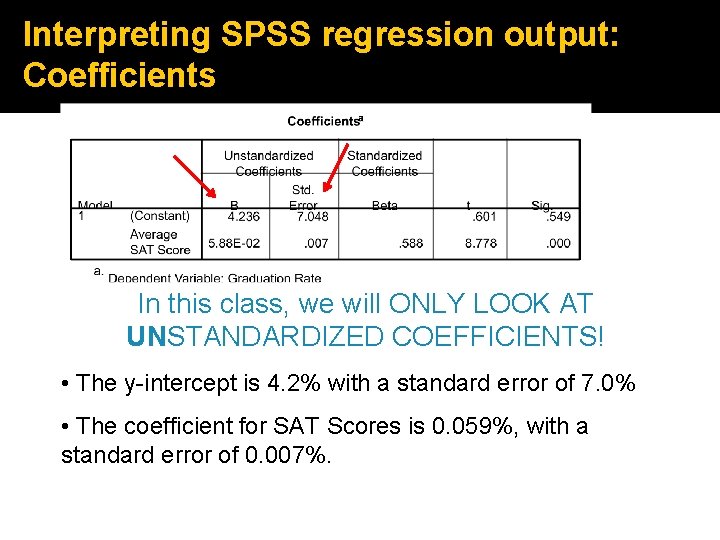

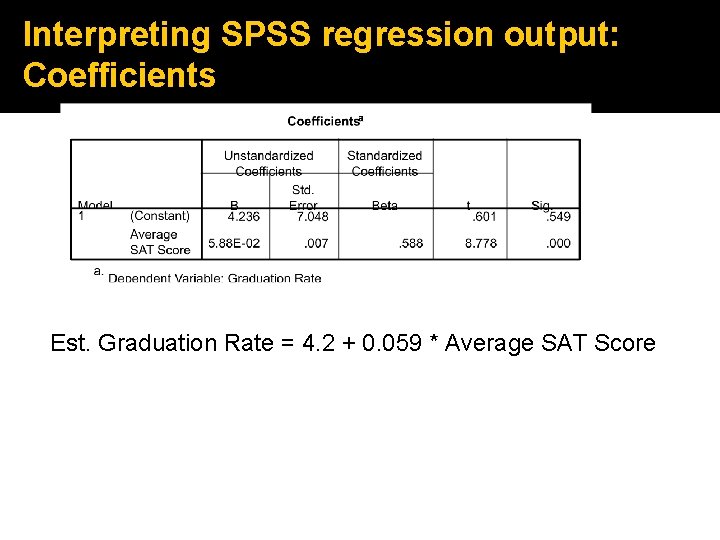

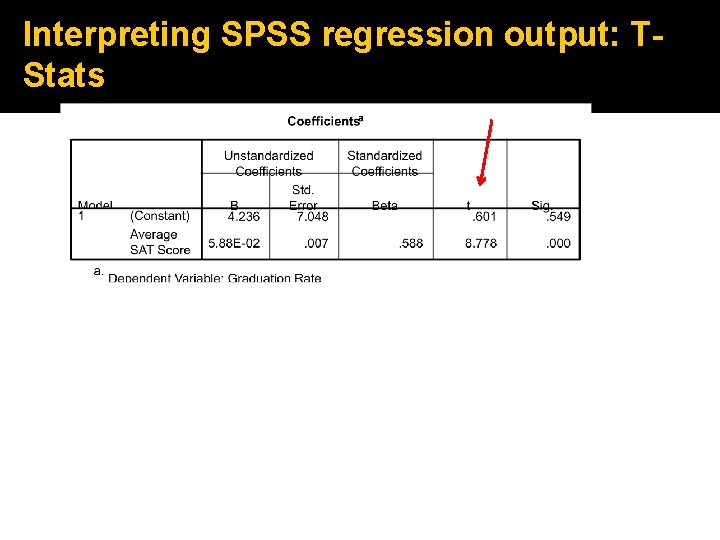

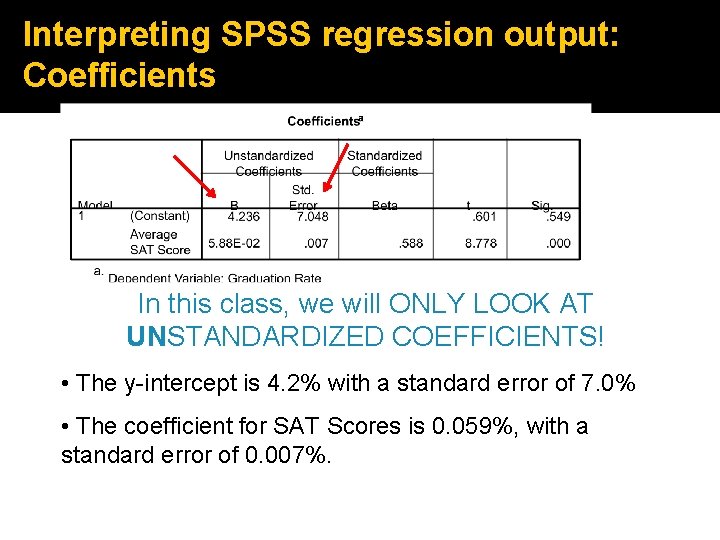

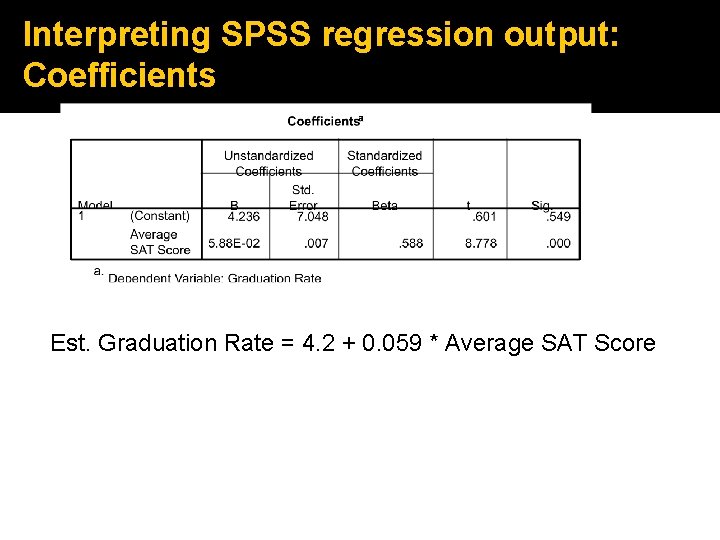

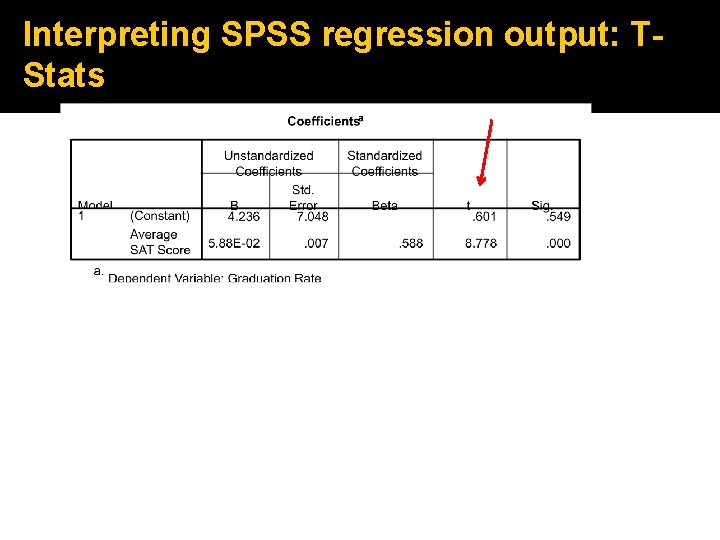

Interpreting SPSS regression output: Coefficients

In this class, we will ONLY LOOK AT UNSTANDARDIZED COEFFICIENTS!

- The y-intercept is 4.2% with a standard error of 7.0%.
- The coefficient for SAT Scores is 0.059%, with a standard error of 0.007%.

Interpreting SPSS regression output: Coefficients

Est. Graduation Rate = 4.2 + 0.059 * Average SAT Score

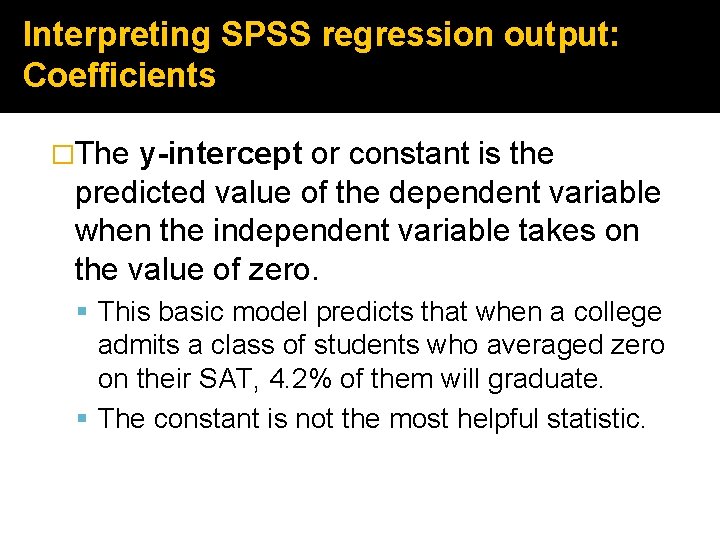

Interpreting SPSS regression output: Coefficients

- The y-intercept or constant is the predicted value of the dependent variable when the independent variable takes on the value of zero.
- This basic model predicts that when a college admits a class of students who averaged zero on their SAT, 4.2% of them will graduate.
- The constant is not the most helpful statistic.

Interpreting SPSS regression output: Coefficients

- The coefficient of an independent variable is the predicted change in the dependent variable that results from a one-unit increase in the independent variable.
- A college with students whose SAT scores are one point higher on average will have a predicted graduation rate that is 0.059 percentage points higher.
- Increasing SAT scores by 200 points leads to a predicted (200)(0.059) = 11.8 percentage point rise in graduation rates.
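The arithmetic behind these interpretations can be checked with a short Python sketch. The constant (4.2) and the SAT coefficient (0.059) come from the Coefficients table on the slides; the function name `predicted_grad_rate` and the example SAT averages of 1000 and 1200 are assumptions made for illustration.

```python
# Unstandardized estimates reported in the slides' Coefficients table.
INTERCEPT = 4.2    # predicted graduation rate (%) when the average SAT is zero
SAT_COEF = 0.059   # predicted change in graduation rate per one SAT point

def predicted_grad_rate(avg_sat):
    """Est. Graduation Rate = 4.2 + 0.059 * Average SAT Score."""
    return INTERCEPT + SAT_COEF * avg_sat

# Hypothetical colleges whose average SAT scores differ by 200 points.
low = predicted_grad_rate(1000)
high = predicted_grad_rate(1200)
change = high - low  # equals 200 * 0.059, up to floating-point rounding

print(round(low, 1), round(high, 1), round(change, 1))
```

The difference between the two predictions depends only on the coefficient and the size of the SAT gap, not on the constant, which is why the constant matters so little for interpretation.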

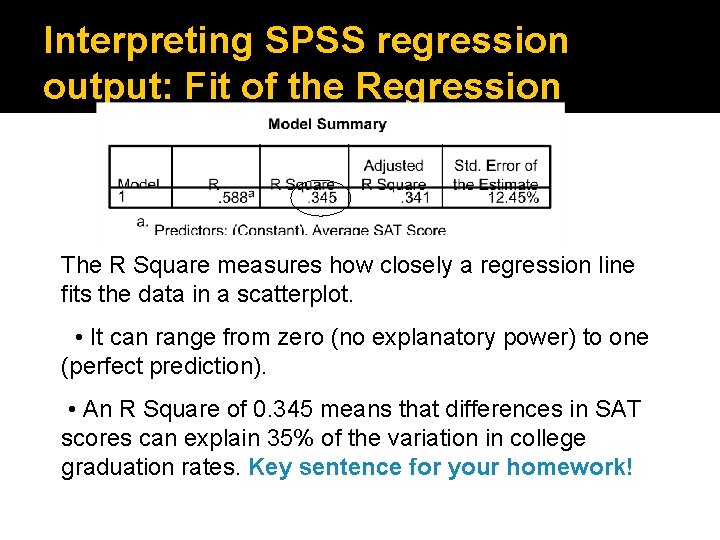

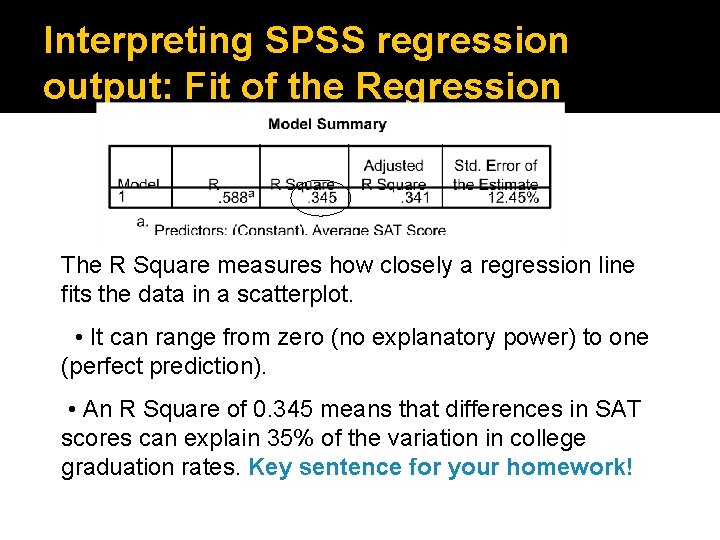

Interpreting SPSS regression output: Fit of the Regression

The R Square measures how closely a regression line fits the data in a scatterplot.

- It can range from zero (no explanatory power) to one (perfect prediction).
- An R Square of 0.345 means that differences in SAT scores can explain about 35% of the variation in college graduation rates. Key sentence for your homework!
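One common way to compute R Square is as one minus the ratio of unexplained variation to total variation. The Python sketch below follows that definition; the function name `r_square` and the small arrays are hypothetical and are used only to show the two extremes of the zero-to-one range.

```python
import numpy as np

def r_square(y, y_pred):
    """Share of the variation in y that the predictions account for."""
    y = np.asarray(y, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_residual = np.sum((y - y_pred) ** 2)   # variation left unexplained
    ss_total = np.sum((y - y.mean()) ** 2)    # total variation around the mean
    return 1.0 - ss_residual / ss_total

# Hypothetical graduation rates for four colleges.
y = [50.0, 60.0, 70.0, 80.0]

perfect = r_square(y, y)                          # predictions hit every point
flat = r_square(y, [65.0, 65.0, 65.0, 65.0])      # always predict the mean
print(perfect, flat)
```

Predicting every point exactly gives an R Square of one, while always predicting the mean (a flat line) gives zero.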

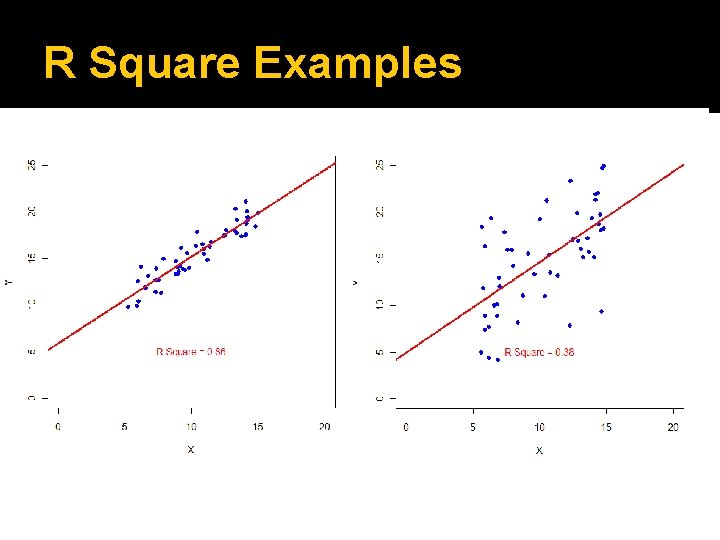

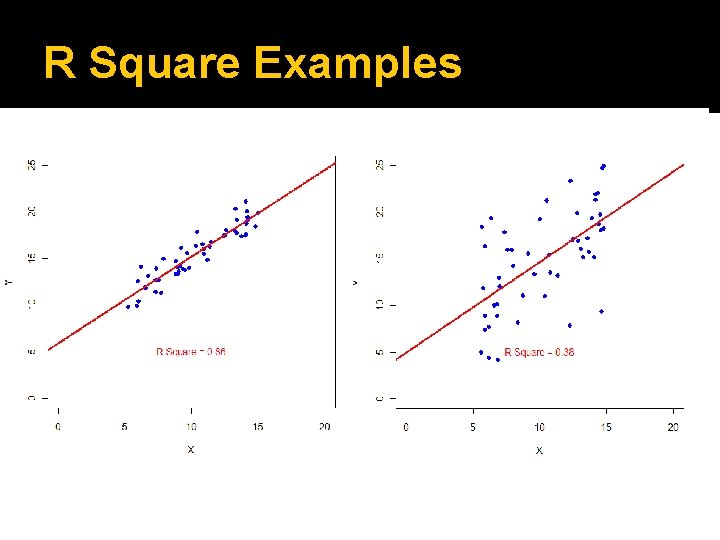

R Square Examples

Statistical Significance

What would the null hypothesis look like in a scatterplot?

- If the independent variable has no effect on the dependent variable, the scatterplot should look random, the regression line should be flat, and its slope should be zero.
- Null hypothesis: The regression coefficient (b) for an independent variable equals zero.
- Can we reject the null hypothesis b = 0 based on our estimate, b-hat?

Statistical Significance

- Our formal test of statistical significance asks whether we can be confident that a regression coefficient for the population differs from zero.
- Just like in a difference in means/proportions test, the “standard error” is the standard deviation of the sampling distribution.
- If a coefficient is more than two standard errors away from zero, we can reject the null hypothesis (that it equals zero).

Statistical Significance

- So, if a coefficient is more than twice the size of its standard error, we reject the null hypothesis with 95% confidence.
- This works whether the coefficient is negative or positive.
- The coefficient/standard error ratio is called the “test statistic” or “t-stat.”
- A t-stat bigger than 2 or less than -2 indicates a statistically significant correlation.
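The rule of thumb above can be applied to the graduation-rate regression with a few lines of Python. The coefficients and standard errors are the ones reported earlier in the slides (SAT: 0.059 with standard error 0.007; constant: 4.2 with standard error 7.0); the function names `t_stat` and `is_significant` are hypothetical, and the cutoff of 2 is the class approximation rather than an exact critical value.

```python
def t_stat(coefficient, standard_error):
    """Test statistic: how many standard errors the coefficient is from zero."""
    return coefficient / standard_error

def is_significant(coefficient, standard_error, cutoff=2.0):
    """Rule of thumb: reject the null (b = 0) if |t-stat| exceeds the cutoff."""
    return abs(t_stat(coefficient, standard_error)) > cutoff

# Values reported in the slides' Coefficients table.
sat_t = t_stat(0.059, 0.007)     # SAT coefficient
const_t = t_stat(4.2, 7.0)       # constant (y-intercept)

print(round(sat_t, 2), is_significant(0.059, 0.007))
print(round(const_t, 2), is_significant(4.2, 7.0))
```

The SAT coefficient is more than eight standard errors from zero, so the null hypothesis is rejected; the constant is less than one standard error from zero, so it is not.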

Interpreting SPSS regression output: T-Stats

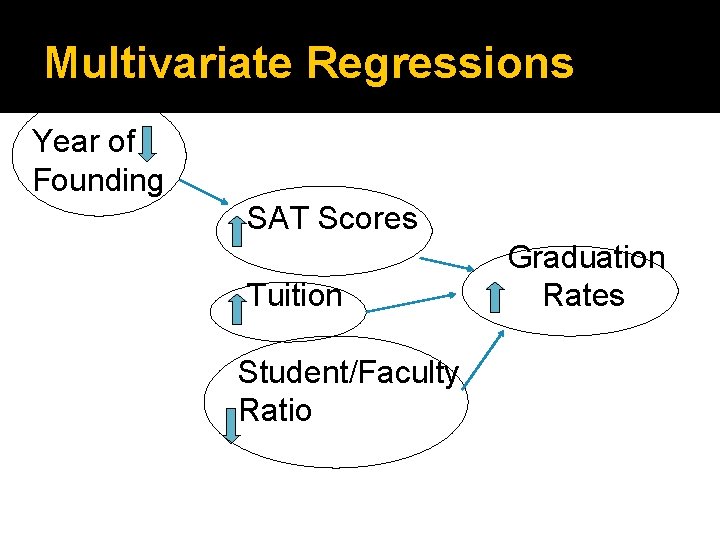

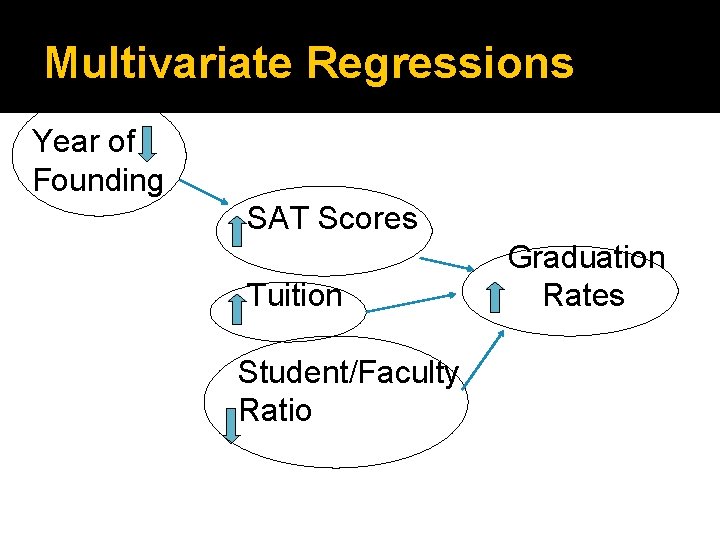

Multivariate Regressions

- A “multivariate regression” uses more than one independent variable (including potential confounds) to explain variation in a dependent variable.
- The coefficient for each independent variable reports its effect on the DV, holding constant all of the other IVs in the regression.
- Thought experiment: Comparing two colleges founded in the same year with the same student/faculty ratio, what is the effect of SATs?

Multivariate Regressions (diagram labels: Year of Founding, SAT Scores, Tuition, Student/Faculty Ratio, Graduation Rates)

Multivariate Regressions

Again, we want to estimate coefficients:

Est. Grad. Rate = a + b1*SAT Score + b2*Year Founded + b3*Tuition + b4*Faculty Ratio
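To show what estimating several coefficients at once looks like outside SPSS, here is a minimal Python sketch using NumPy's least-squares solver. Everything in it is an assumption for illustration: the data are randomly generated, the "true" coefficients are made up, and no noise is added, so the fit should recover them almost exactly. It is not the course data set.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 50  # number of hypothetical colleges

# Made-up independent variables.
sat = rng.uniform(800, 1500, n)       # average SAT score
year = rng.uniform(1700, 2000, n)     # year founded
tuition = rng.uniform(5, 50, n)       # tuition, in thousands of dollars
ratio = rng.uniform(5, 30, n)         # student/faculty ratio

# Made-up "true" values of a, b1, b2, b3, b4 (not estimates from the slides).
true_coefs = np.array([10.0, 0.04, -0.02, 0.1, -0.3])

# Design matrix: a column of ones for the constant, then one column per IV.
X = np.column_stack([np.ones(n), sat, year, tuition, ratio])
grad_rate = X @ true_coefs            # no noise, so the fit is exact

# Least-squares estimates of a, b1, b2, b3, b4.
estimates, *_ = np.linalg.lstsq(X, grad_rate, rcond=None)
print(np.round(estimates, 4))
```

Each estimated coefficient is the predicted change in the graduation rate for a one-unit increase in that variable with the other columns held fixed, which is the "holding constant" interpretation used on the slides.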

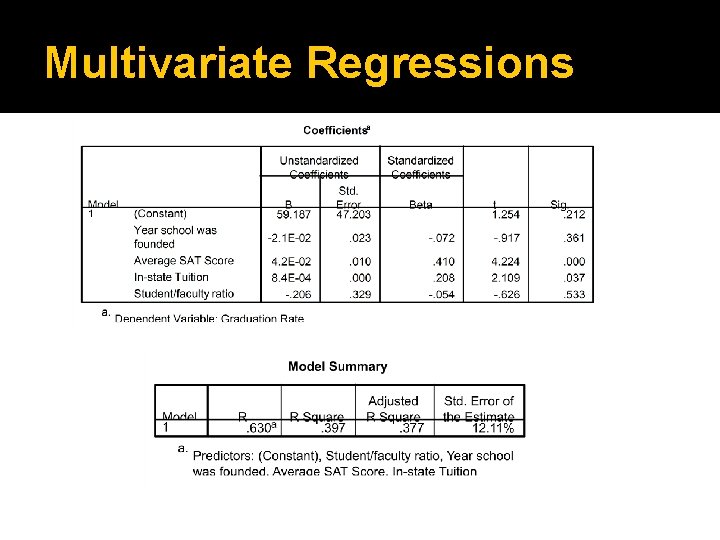

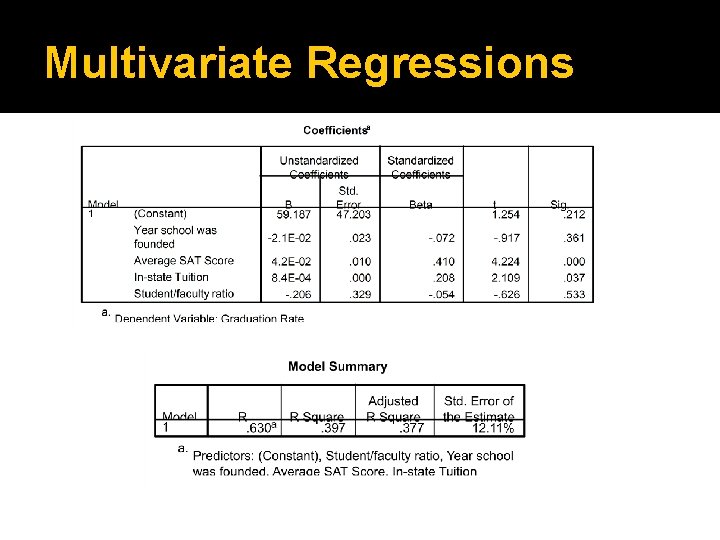

Multivariate Regressions

Multivariate Regressions

- Holding all other factors constant, a 200 point increase in SAT scores leads to a predicted (200)(0.042) = 8.4 percentage point increase in the graduation rate, and this effect is statistically significant.
- Controlling for other factors, a college that is 100 years younger should have a graduation rate that changes by (100)(-0.021) = -2.1 percentage points, that is, 2.1 points lower, but this effect is not significantly different from zero.