Linear Programming Boosting for Uneven Datasets

Jurij Leskovec, Jožef Stefan Institute, Slovenia
John Shawe-Taylor, Royal Holloway University of London, UK
ICML 2003

Motivation
- There are 800 million Europeans, and 2 million of them are Slovenians
- We want to build a classifier that distinguishes Slovenians from the rest of the Europeans
- A traditional, unaware classifier (e.g., a politician) would not even notice Slovenia as an entity
- We don't want that!

Problem setting: unbalanced dataset
- 2 classes:
  - positive (small)
  - negative (large)
- Train a binary classifier to separate highly unbalanced classes

Our solution framework
- We use boosting:
  - combine many simple and inaccurate categorization rules (weak learners) into a single highly accurate categorization rule
  - the simple rules are trained sequentially; each rule is trained on the examples that the preceding rules find most difficult to classify

Outline
- Boosting algorithms
- Weak learners
- Experimental setup
- Results
- Conclusions

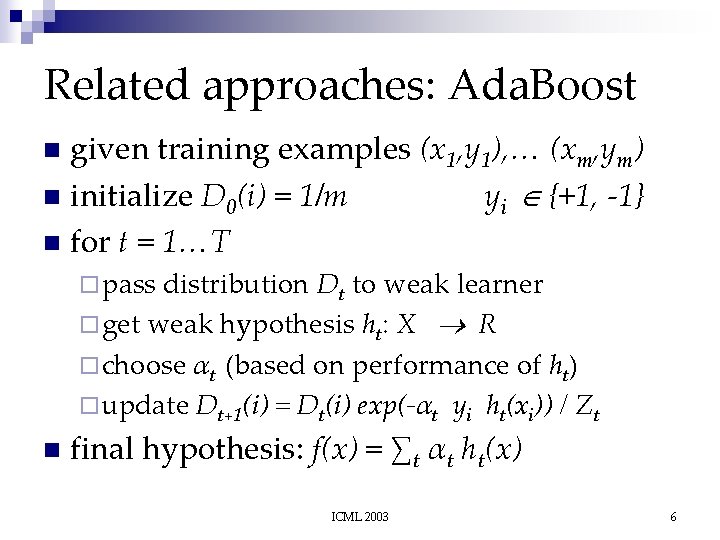

Related approaches: AdaBoost
- Given training examples $(x_1, y_1), \dots, (x_m, y_m)$ with $y_i \in \{+1, -1\}$
- Initialize $D_0(i) = 1/m$
- For $t = 1, \dots, T$:
  - pass distribution $D_t$ to the weak learner
  - get weak hypothesis $h_t: X \to \mathbb{R}$
  - choose $\alpha_t$ (based on the performance of $h_t$)
  - update $D_{t+1}(i) = D_t(i) \exp(-\alpha_t y_i h_t(x_i)) / Z_t$, where $Z_t$ normalizes $D_{t+1}$ to a distribution
- Final hypothesis: $f(x) = \sum_t \alpha_t h_t(x)$
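A minimal plain-Python sketch of the loop above. It assumes a hypothetical weak learner (a ±1-valued threshold stump on one numeric feature, not the word-based learner used later in this deck) and the common AdaBoost choice $\alpha_t = \frac{1}{2}\ln((1-\epsilon_t)/\epsilon_t)$, where $\epsilon_t$ is the weighted error; the slide itself leaves the choice of $\alpha_t$ open. Function names are illustrative.

```python
import math

def train_stump(xs, ys, weights):
    """Hypothetical weak learner: best threshold stump h(x) in {-1, +1}.

    Returns (weighted_error, h). xs are lists of floats, ys are +1/-1."""
    best = None
    for j in range(len(xs[0])):
        for thr in sorted({x[j] for x in xs}):
            for sign in (+1, -1):
                # predict `sign` when x[j] >= thr, otherwise -sign
                err = sum(w for x, y, w in zip(xs, ys, weights)
                          if (sign if x[j] >= thr else -sign) != y)
                if best is None or err < best[0]:
                    best = (err, j, thr, sign)
    err, j, thr, sign = best
    return err, (lambda x, j=j, thr=thr, sign=sign: sign if x[j] >= thr else -sign)

def adaboost(xs, ys, T):
    m = len(xs)
    D = [1.0 / m] * m                      # D_0(i) = 1/m
    ensemble = []                          # list of (alpha_t, h_t)
    for _ in range(T):
        eps, h = train_stump(xs, ys, D)
        eps = min(max(eps, 1e-10), 1 - 1e-10)   # keep the log finite
        if eps >= 0.5:
            break                          # no better than chance: stop
        alpha = 0.5 * math.log((1 - eps) / eps)
        # D_{t+1}(i) = D_t(i) * exp(-alpha_t * y_i * h_t(x_i)) / Z_t
        D = [d * math.exp(-alpha * y * h(x)) for d, x, y in zip(D, xs, ys)]
        Z = sum(D)
        D = [d / Z for d in D]
        ensemble.append((alpha, h))
    return ensemble

def predict(ensemble, x):
    f = sum(alpha * h(x) for alpha, h in ensemble)   # f(x) = sum_t alpha_t h_t(x)
    return 1 if f >= 0 else -1

xs = [[0.0], [1.0], [2.0], [3.0]]
ys = [-1, -1, +1, +1]
ensemble = adaboost(xs, ys, T=3)
print([predict(ensemble, x) for x in xs])
```

On this separable toy data every round picks the same perfect stump, so the weights stay uniform; on real data the reweighting shifts mass toward misclassified examples.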

AdaBoost: intuition
- Weak hypothesis $h(x)$:
  - the sign of $h(x)$ is the predicted binary label
  - the magnitude $|h(x)|$ is the confidence
- $\alpha_t$ controls the influence of each $h_t(x)$
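A small sketch of how real-valued hypotheses combine: each $h_t$ votes with its sign, the vote is scaled by its confidence $|h_t(x)|$ and by $\alpha_t$, and the sign of the sum is the final label. The two word-based hypotheses and their output values below are hypothetical.

```python
def combine(weighted_hypotheses, x):
    """Return (label, confidence) for f(x) = sum_t alpha_t * h_t(x).

    weighted_hypotheses: list of (alpha_t, h_t); each h_t returns a real number
    whose sign is its vote and whose magnitude is the vote's confidence."""
    f = sum(alpha * h(x) for alpha, h in weighted_hypotheses)
    label = +1 if f >= 0 else -1
    return label, abs(f)

# Hypothetical hypotheses over a document given as {word: count}
h1 = lambda doc: 0.8 if "platinum" in doc else -0.3
h2 = lambda doc: -1.2 if "gold" in doc else 0.1
label, confidence = combine([(1.0, h1), (0.5, h2)], {"platinum": 2})
print(label, confidence)
```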

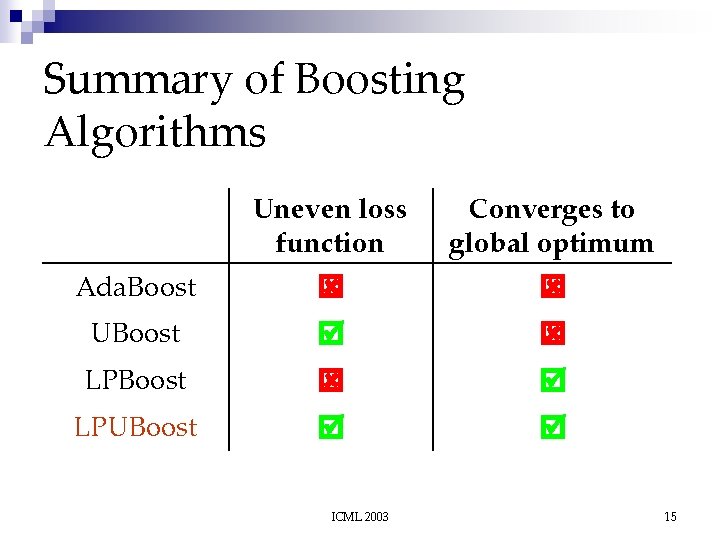

More boosting
- Algorithms differ in how they initialize the weights $D_0(i)$ (misclassification costs) and how they update them
- 4 boosting algorithms:
  - AdaBoost: greedy approach
  - UBoost: uneven loss function + greedy
  - LPBoost: linear programming (optimal solution)
  - LPUBoost: our proposed solution (LP + uneven)

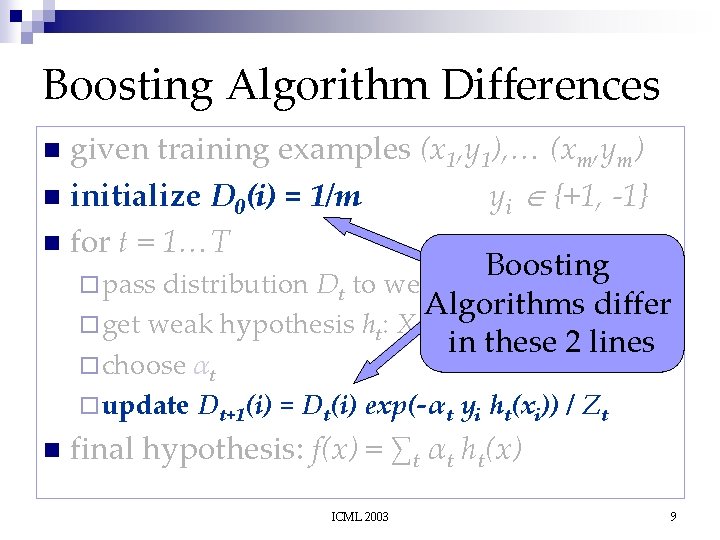

Boosting algorithm differences
- Given training examples $(x_1, y_1), \dots, (x_m, y_m)$ with $y_i \in \{+1, -1\}$, initialize $D_0(i) = 1/m$
- For $t = 1, \dots, T$:
  - pass distribution $D_t$ to the weak learner
  - get weak hypothesis $h_t: X \to \mathbb{R}$
  - choose $\alpha_t$
  - update $D_{t+1}(i) = D_t(i) \exp(-\alpha_t y_i h_t(x_i)) / Z_t$
- Final hypothesis: $f(x) = \sum_t \alpha_t h_t(x)$
- Boosting algorithms differ in 2 of these lines: how $\alpha_t$ is chosen and how $D_{t+1}$ is updated

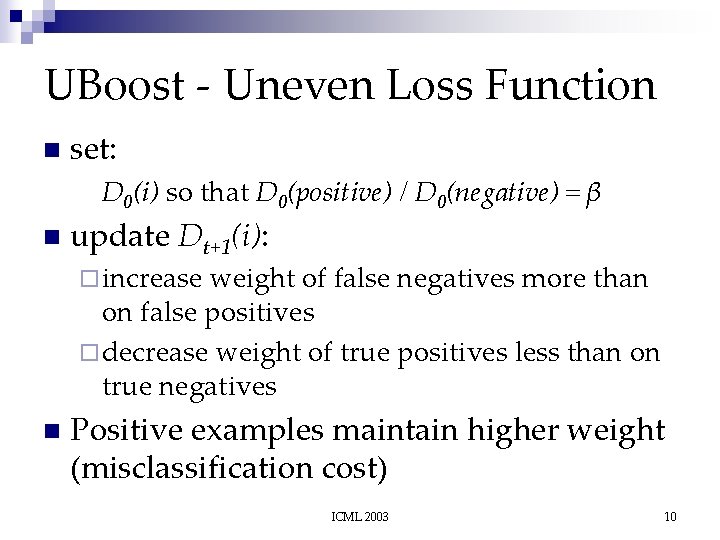

UBoost: uneven loss function
- Set $D_0(i)$ so that $D_0(\text{positive}) / D_0(\text{negative}) = \beta$
- Update $D_{t+1}(i)$:
  - increase the weight of false negatives more than that of false positives
  - decrease the weight of true positives less than that of true negatives
- Positive examples maintain a higher weight (misclassification cost)
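The slide fixes only the ratio of the initial weights. A minimal sketch of that initialization, assuming the ratio is meant per example (each positive gets $\beta$ times the weight of each negative) and that $D_0$ is normalized to sum to 1; the function name is hypothetical, and the update rule is not coded because the slide describes it only qualitatively.

```python
def uneven_initial_weights(labels, beta):
    """D_0 with D_0(pos) / D_0(neg) = beta for every positive/negative pair.

    ASSUMPTION: per-example ratio, weights normalized to sum to 1."""
    n_pos = sum(1 for y in labels if y == +1)
    n_neg = len(labels) - n_pos
    z = beta * n_pos + n_neg               # normalizer so the weights sum to 1
    return [(beta if y == +1 else 1.0) / z for y in labels]

D0 = uneven_initial_weights([+1, -1, -1, -1], beta=3.0)
print(D0)
```

With $\beta$ equal to the negative-to-positive ratio (3 here), both classes carry the same total initial weight.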

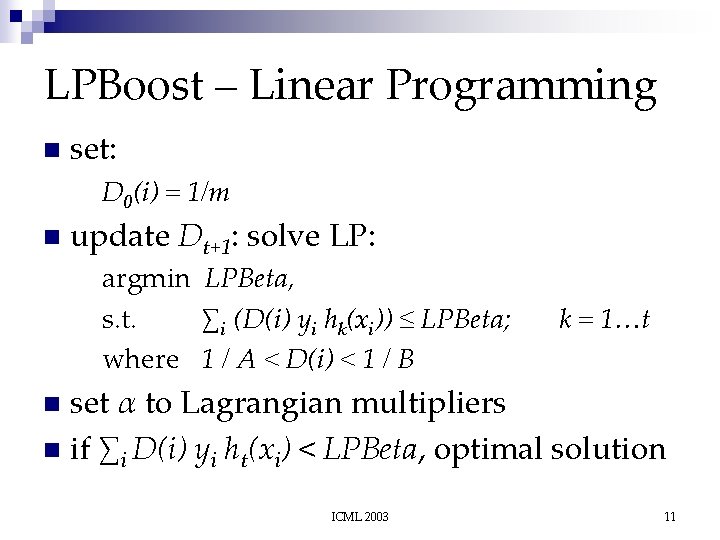

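LPBoost: linear programming
- Set $D_0(i) = 1/m$
- Update $D_{t+1}$ by solving the LP

$$\min_{D,\,\mathrm{LPBeta}} \mathrm{LPBeta} \quad \text{s.t.} \quad \sum_i D(i)\, y_i h_k(x_i) \le \mathrm{LPBeta} \ \ (k = 1, \dots, t), \qquad 1/A < D(i) < 1/B$$

- Set $\alpha$ to the Lagrange multipliers of the constraints
- If $\sum_i D(i)\, y_i h_t(x_i) < \mathrm{LPBeta}$ for the new weak hypothesis $h_t$, no constraint is violated: the current solution is optimal and boosting stops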
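A minimal sketch of the LP step with SciPy's `linprog` (HiGHS solver), under stated assumptions: the weights are normalized so that $\sum_i D(i) = 1$, the box constraints are taken as $0 \le D(i) \le$ `upper` (a hypothetical parameter standing in for the slide's $1/A$ and $1/B$), and $\alpha$ is read from the dual values SciPy reports in `res.ineqlin.marginals` for the HiGHS methods. Function names are hypothetical; this needs NumPy and SciPy.

```python
import numpy as np
from scipy.optimize import linprog

def lpboost_reweight(U, upper):
    """min LPBeta s.t. sum_i D(i) * U[k][i] <= LPBeta for every weak learner k.

    U[k][i] = y_i * h_k(x_i). ASSUMPTIONS: sum_i D(i) = 1, 0 <= D(i) <= upper."""
    U = np.asarray(U, dtype=float)
    t, m = U.shape
    c = np.zeros(m + 1)
    c[-1] = 1.0                                   # variables [D(1..m), LPBeta]
    A_ub = np.hstack([U, -np.ones((t, 1))])       # sum_i D(i) U[k, i] - LPBeta <= 0
    b_ub = np.zeros(t)
    A_eq = np.hstack([np.ones((1, m)), np.zeros((1, 1))])   # sum_i D(i) = 1
    b_eq = np.array([1.0])
    bounds = [(0.0, upper)] * m + [(None, None)]  # LPBeta is a free variable
    res = linprog(c, A_ub=A_ub, b_ub=b_ub, A_eq=A_eq, b_eq=b_eq,
                  bounds=bounds, method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    D, lp_beta = res.x[:m], res.x[-1]
    # Marginals are d(objective)/d(b_ub) <= 0 here; negating gives alpha >= 0
    alpha = -res.ineqlin.marginals
    return D, lp_beta, alpha

def lpboost_should_stop(D, lp_beta, u_new, tol=1e-9):
    """Stop if the new hypothesis (u_new[i] = y_i h_t(x_i)) violates no constraint."""
    return float(np.dot(D, u_new)) <= lp_beta + tol

U_example = [[0.3, 0.7, -0.2], [0.1, -0.4, -0.5], [0.5, -0.1, -0.3]]
D, lp_beta, alpha = lpboost_reweight(U_example, upper=1.0)
print(D, lp_beta, alpha)
```

With `upper=1.0` the optimum puts all the weight on the third example (LPBeta = -0.2) and only the first constraint carries a multiplier. A tighter `upper`, such as 0.5, forces the weight to spread across examples, which is the role of the box constraints.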

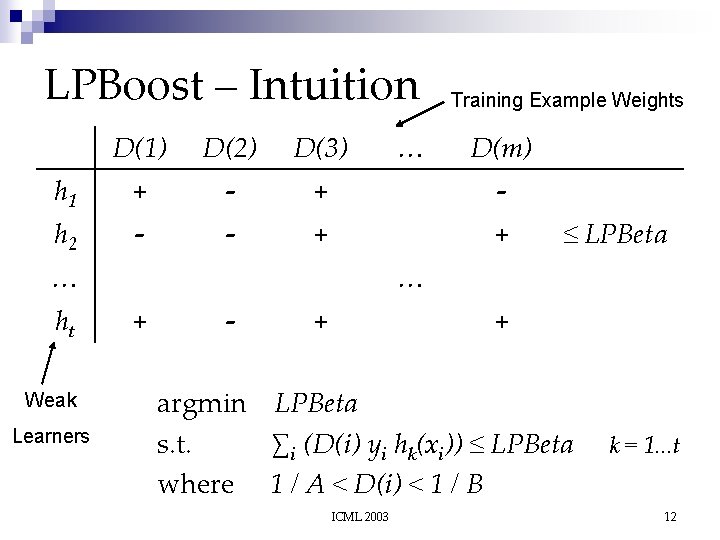

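LPBoost: intuition
[Figure: weak learners $h_1, \dots, h_t$ (rows) against training example weights $D(1), \dots, D(m)$ (columns); each cell is + or - depending on whether $h_k$ classifies example $i$ correctly, and each row's weighted sum must be at most LPBeta.]
The LP: $\arg\min \mathrm{LPBeta}$ s.t. $\sum_i D(i)\, y_i h_k(x_i) \le \mathrm{LPBeta}$ for $k = 1, \dots, t$, where $1/A < D(i) < 1/B$.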

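LPBoost: example
Three training examples with weights $D(1), D(2), D(3)$ and three weak learners give the constraints below. The sign of each coefficient $y_i h_k(x_i)$ shows whether example $i$ is correctly (+) or incorrectly (-) classified, and its magnitude is the confidence.

$$
\begin{aligned}
h_1:&\quad +0.3\,D(1) + 0.7\,D(2) - 0.2\,D(3) \le \mathrm{LPBeta}\\
h_2:&\quad +0.1\,D(1) - 0.4\,D(2) - 0.5\,D(3) \le \mathrm{LPBeta}\\
h_3:&\quad +0.5\,D(1) - 0.1\,D(2) - 0.3\,D(3) \le \mathrm{LPBeta}
\end{aligned}
$$

The LP minimizes LPBeta subject to these constraints ($k = 1, 2, 3$) and $1/A < D(i) < 1/B$.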
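For a fixed weight vector $D$, the smallest feasible LPBeta is simply the largest constraint left-hand side; the LP then searches for the $D$ that makes this maximum as small as possible. A pure-Python check on the example's numbers, using uniform weights as an illustrative starting point (the function name is hypothetical):

```python
# y_i * h_k(x_i): rows are weak learners h1..h3, columns are examples 1..3
U = [
    [0.3, 0.7, -0.2],   # h1
    [0.1, -0.4, -0.5],  # h2
    [0.5, -0.1, -0.3],  # h3
]

def constraint_lhs(U, D):
    """sum_i D(i) * y_i * h_k(x_i) for every weak learner k."""
    return [sum(d * u for d, u in zip(D, row)) for row in U]

D_uniform = [1 / 3] * 3
lhs = constraint_lhs(U, D_uniform)
lp_beta = max(lhs)            # smallest LPBeta satisfying all three constraints
binding = lhs.index(lp_beta)  # index of the weak learner whose constraint is tight
print(lhs, lp_beta, binding)
```

Under uniform weights $h_1$ sets LPBeta (about 0.267) because it is confidently correct on example 2, so lowering the objective means moving weight away from $D(2)$ toward the examples the weak learners get wrong.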

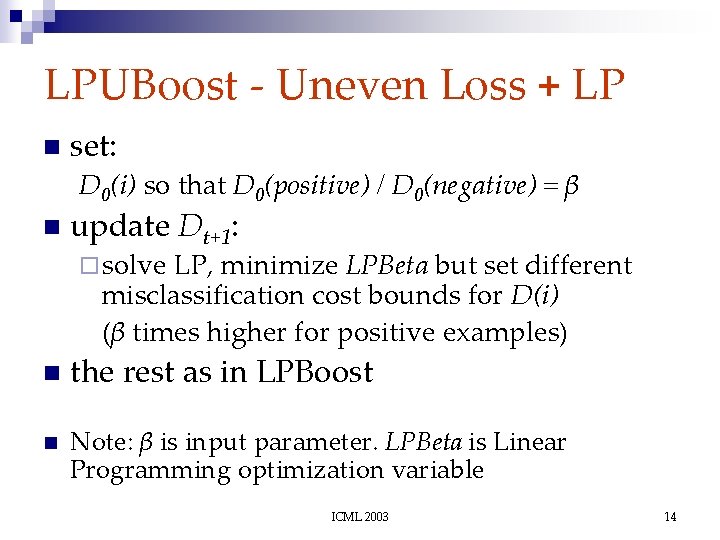

LPUBoost: uneven loss + LP
- Set $D_0(i)$ so that $D_0(\text{positive}) / D_0(\text{negative}) = \beta$
- Update $D_{t+1}$:
  - solve the LP, minimizing LPBeta, but with different misclassification cost bounds for $D(i)$ ($\beta$ times higher for positive examples)
- The rest is as in LPBoost

Note: $\beta$ is an input parameter; LPBeta is the linear programming optimization variable.
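A minimal sketch of the only change LPUBoost makes to the LP: per-example upper bounds on $D(i)$, $\beta$ times higher for positives. It assumes a lower bound of 0 and a hypothetical bound `neg_upper` for negatives; the resulting list has the `(min, max)` pair format that SciPy's `linprog` accepts in its `bounds` argument (with a free pair appended for LPBeta).

```python
def uneven_upper_bounds(labels, neg_upper, beta):
    """(lower, upper) bounds on D(i): beta times higher for positive examples.

    ASSUMPTIONS: lower bound 0; neg_upper is a hypothetical bound for negatives."""
    return [(0.0, beta * neg_upper if y == +1 else neg_upper) for y in labels]

def bounds_feasible(bounds):
    """sum_i D(i) = 1 is reachable only if the upper bounds sum to at least 1."""
    return sum(upper for _, upper in bounds) >= 1.0

b = uneven_upper_bounds([+1, -1, -1], neg_upper=0.1, beta=5.0)
print(b, bounds_feasible(b))
```

Because positives may absorb more weight, the LP can keep focusing on a few hard positive examples instead of spreading the weight across the large negative class.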

Summary of boosting algorithms

| Algorithm | Uneven loss function | Converges to global optimum |
|---|---|---|
| AdaBoost | no | no |
| UBoost | yes | no |
| LPBoost | no | yes |
| LPUBoost | yes | yes |

Weak learners
- One-level decision tree (IF-THEN rule): if word $w$ occurs in document $X$, return $P$; else return $N$
  - $P$ and $N$ are real numbers chosen based on the misclassification cost weights $D_t(i)$
- Interpret the sign of $P$ and $N$ as the predicted binary label
- Interpret the magnitudes $|P|$ and $|N|$ as the confidence
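The slide does not say exactly how $P$ and $N$ are computed. A minimal sketch assuming the confidence-rated rule of Schapire and Singer: $P$ and $N$ are $\frac{1}{2}\ln(W_+/W_-)$ of the weighted positive and negative mass in the "word present" and "word absent" blocks (smoothed by a small `eps`), and the word minimizing $Z = 2\sum_j \sqrt{W_+^j W_-^j}$ is chosen. Names and data are hypothetical.

```python
import math

def train_word_stump(docs, labels, weights, vocabulary, eps=1e-6):
    """Choose word w and real outputs P (w present) and N (w absent).

    docs: list of sets of stemmed words; labels: +1/-1; weights: D_t(i).
    ASSUMPTION: Schapire-Singer confidence-rated outputs and Z criterion."""
    best = None
    for w in vocabulary:
        # weighted class mass where w occurs ("in") and where it does not ("out")
        mass = {("in", 1): 0.0, ("in", -1): 0.0, ("out", 1): 0.0, ("out", -1): 0.0}
        for doc, y, d in zip(docs, labels, weights):
            mass["in" if w in doc else "out", y] += d
        z = 2 * (math.sqrt(mass["in", 1] * mass["in", -1])
                 + math.sqrt(mass["out", 1] * mass["out", -1]))
        if best is None or z < best[0]:
            # eps keeps log() finite when a block contains only one class
            P = 0.5 * math.log((mass["in", 1] + eps) / (mass["in", -1] + eps))
            N = 0.5 * math.log((mass["out", 1] + eps) / (mass["out", -1] + eps))
            best = (z, w, P, N)
    _, w, P, N = best
    return w, P, N

def stump_predict(w, P, N, doc):
    """IF word w occurs in doc THEN return P ELSE return N."""
    return P if w in doc else N

docs = [{"platinum", "price"}, {"platinum"}, {"gold"}, {"oil", "price"}]
labels = [+1, +1, -1, -1]
vocab = sorted({word for doc in docs for word in doc})
w, P, N = train_word_stump(docs, labels, [0.25] * 4, vocab)
print(w, P, N)
```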

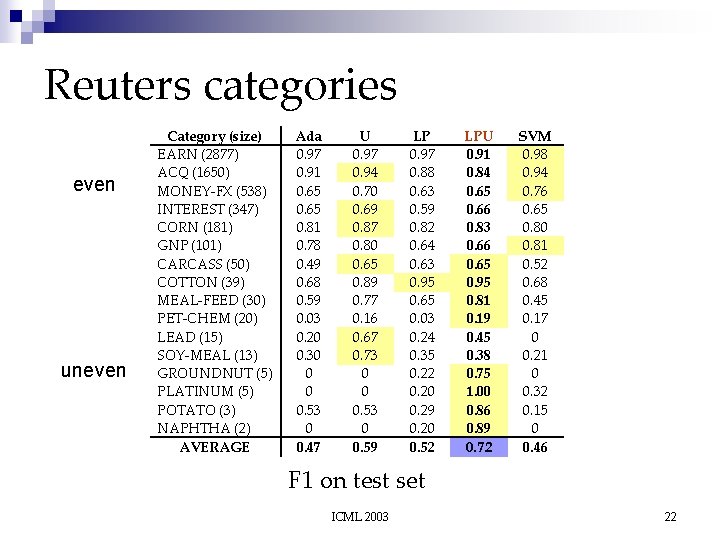

Experimental setup
- Reuters newswire articles (Reuters-21578)
- ModApte split: 9603 training and 3299 test documents
- 16 categories representing all sizes
- Train a binary classifier for each category
- 5-fold cross-validation
- Measures:
  - $\text{Precision} = TP / (TP + FP)$
  - $\text{Recall} = TP / (TP + FN)$
  - $F_1 = 2 \cdot \text{Precision} \cdot \text{Recall} / (\text{Precision} + \text{Recall})$
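The measures above in code, plus the reason accuracy is not on the list: on the motivation's numbers (counts in millions), a classifier that never answers "Slovenian" is 99.75% accurate yet has $F_1 = 0$. Returning 0.0 when a ratio is undefined (0/0) is a convention assumed here, not something the slides specify.

```python
def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1 from confusion counts (0.0 when a ratio is 0/0)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Always answering "not Slovenian": 2 of 800 (million) positives are all missed
tp, fp, fn, tn = 0, 0, 2, 798
accuracy = (tp + tn) / (tp + fp + fn + tn)
print(accuracy, precision_recall_f1(tp, fp, fn))
```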

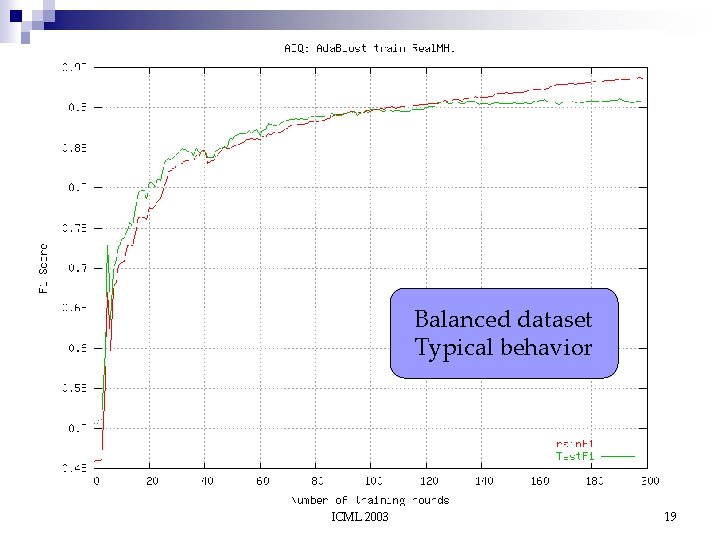

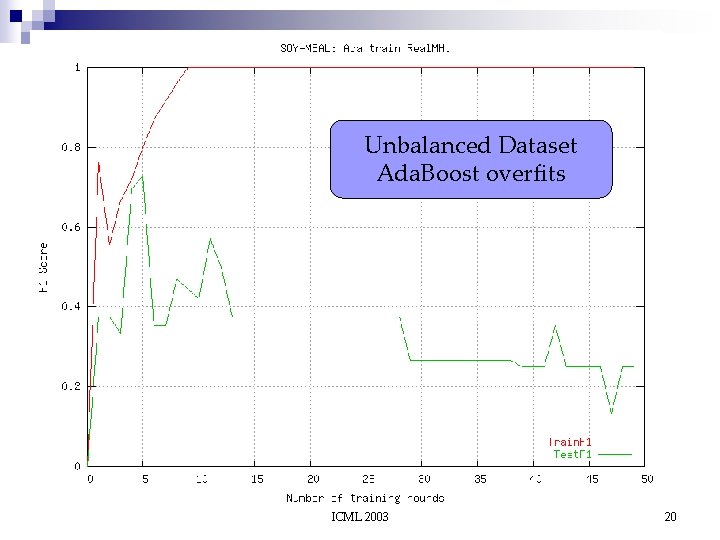

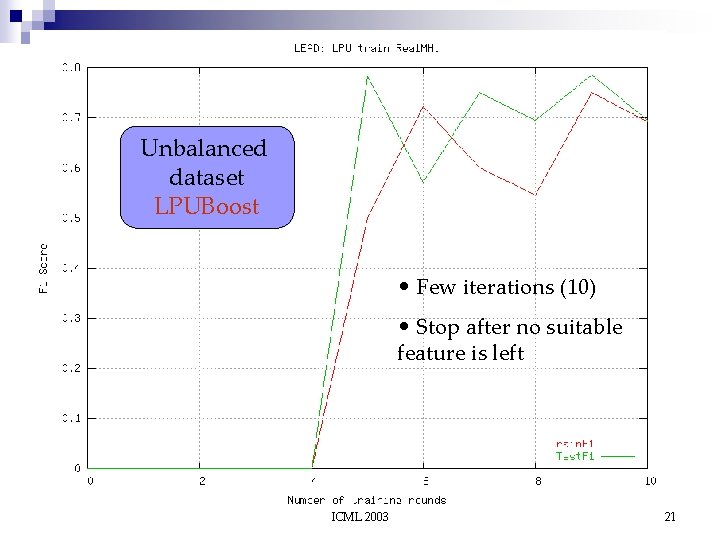

Typical situations
- Balanced training dataset:
  - all learning algorithms show similar performance
- Unbalanced training dataset:
  - AdaBoost overfits
  - LPUBoost does not overfit; it converges fast, using only a few weak learners
  - UBoost and LPBoost are somewhere in between

Balanced dataset: typical behavior

Unbalanced dataset: AdaBoost overfits

Unbalanced dataset: LPUBoost
- Few iterations (10)
- Stops once no suitable feature is left

Reuters categories, ordered from even (large) to uneven (small): F1 on the test set

| Category (size) | UBoost | LPBoost | LPUBoost | SVM |
|---|---|---|---|---|
| EARN (2877) | 0.97 | 0.97 | 0.91 | 0.98 |
| ACQ (1650) | 0.94 | 0.88 | 0.84 | 0.94 |
| MONEY-FX (538) | 0.70 | 0.63 | 0.65 | 0.76 |
| INTEREST (347) | 0.69 | 0.59 | 0.66 | 0.65 |
| CORN (181) | 0.87 | 0.82 | 0.83 | 0.80 |
| GNP (101) | 0.80 | 0.64 | 0.66 | 0.81 |
| CARCASS (50) | 0.65 | 0.63 | 0.65 | 0.52 |
| COTTON (39) | 0.89 | 0.95 | 0.95 | 0.68 |
| MEAL-FEED (30) | 0.77 | 0.65 | 0.81 | 0.45 |
| PET-CHEM (20) | 0.16 | 0.03 | 0.19 | 0.17 |
| LEAD (15) | 0.67 | 0.24 | 0.45 | 0 |
| SOY-MEAL (13) | 0.73 | 0.35 | 0.38 | 0.21 |
| GROUNDNUT (5) | 0 | 0.22 | 0.75 | 0 |
| PLATINUM (5) | 0 | 0.20 | 1.00 | 0.32 |
| POTATO (3) | 0.53 | 0.29 | 0.86 | 0.15 |
| NAPHTHA (2) | 0 | 0.20 | 0.89 | 0 |
| AVERAGE | 0.59 | 0.52 | 0.72 | 0.46 |

AdaBoost (Ada), in source order (one per-category value is missing in the source, so the column cannot be aligned with the rows): 0.97, 0.91, 0.65, 0.81, 0.78, 0.49, 0.68, 0.59, 0.03, 0.20, 0.30, 0, 0, 0.53, 0; AVERAGE 0.47.
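The AVERAGE row agrees with an unweighted mean (macro-average) over the 16 categories, so a category with 2 documents counts as much as EARN with 2877. A quick check in plain Python on the four fully listed columns:

```python
# Per-category F1 on the test set, EARN ... NAPHTHA in table order
f1_scores = {
    "UBoost":   [0.97, 0.94, 0.70, 0.69, 0.87, 0.80, 0.65, 0.89,
                 0.77, 0.16, 0.67, 0.73, 0.0, 0.0, 0.53, 0.0],
    "LPBoost":  [0.97, 0.88, 0.63, 0.59, 0.82, 0.64, 0.63, 0.95,
                 0.65, 0.03, 0.24, 0.35, 0.22, 0.20, 0.29, 0.20],
    "LPUBoost": [0.91, 0.84, 0.65, 0.66, 0.83, 0.66, 0.65, 0.95,
                 0.81, 0.19, 0.45, 0.38, 0.75, 1.00, 0.86, 0.89],
    "SVM":      [0.98, 0.94, 0.76, 0.65, 0.80, 0.81, 0.52, 0.68,
                 0.45, 0.17, 0.0, 0.21, 0.0, 0.32, 0.15, 0.0],
}

# Macro-average: every category counts equally, regardless of its size
macro = {name: sum(s) / len(s) for name, s in f1_scores.items()}
for name, value in macro.items():
    print(f"{name}: {value:.3f}")
```

A size-weighted average would instead be dominated by EARN and ACQ, where all methods score similarly.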

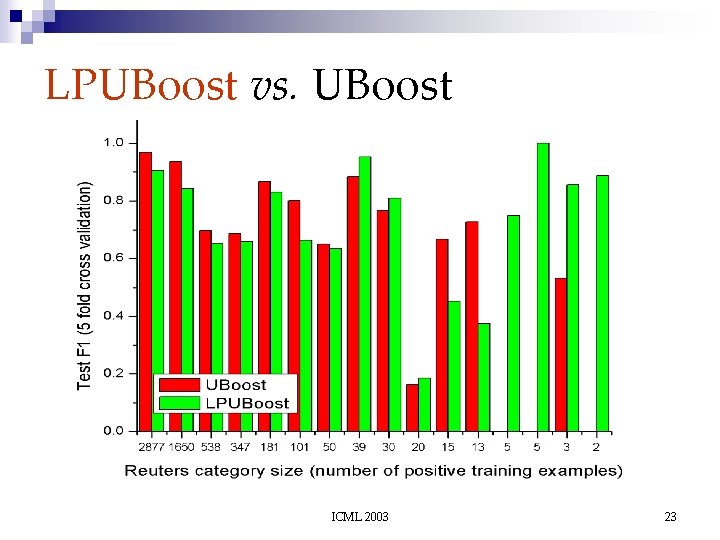

LPUBoost vs. UBoost

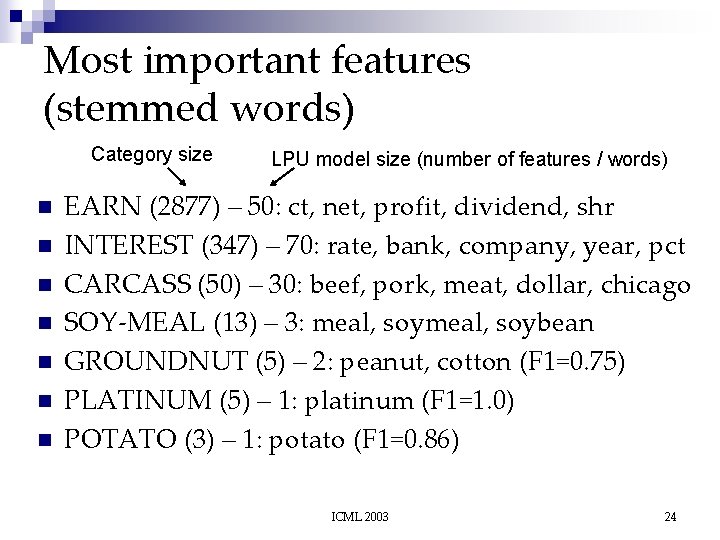

Most important features (stemmed words)

| Category (size) | LPU model size (number of features/words) | Most important features | F1 |
|---|---|---|---|
| EARN (2877) | 50 | ct, net, profit, dividend, shr | |
| INTEREST (347) | 70 | rate, bank, company, year, pct | |
| CARCASS (50) | 30 | beef, pork, meat, dollar, chicago | |
| SOY-MEAL (13) | 3 | meal, soybean | |
| GROUNDNUT (5) | 2 | peanut, cotton | 0.75 |
| PLATINUM (5) | 1 | platinum | 1.0 |
| POTATO (3) | 1 | potato | 0.86 |

Computational efficiency
- AdaBoost and UBoost are the fastest, being the simplest
- LPBoost and LPUBoost are a little slower
  - LP computation takes much of the time, but since LPUBoost chooses fewer weak hypotheses, its running times become comparable to those of AdaBoost

Conclusions
- LPUBoost is suitable for text categorization on highly unbalanced datasets
- All its benefits (a well-defined stopping criterion, an unequal loss function) show up
- No overfitting: it is able to find both simple (small) and complicated (large) hypotheses
