Lightweight Data Replicator Scott Koranda University of Wisconsin-Milwaukee

- Slides: 12

Lightweight Data Replicator
Scott Koranda, University of Wisconsin-Milwaukee & National Center for Supercomputing Applications
Brian Moe, University of Wisconsin-Milwaukee
www.griphyn.org Scott Koranda, UWM & NCSA

LIGO data replication needs
• Sites at Livingston, LA (LLO) and Hanford, WA (LHO)
• 2 interferometers at LHO, 1 at LLO
• 1000s of channels recorded at rates of 16 kHz, 16 Hz, 1 Hz, …
• Output is binary ‘frame’ files holding 16 seconds of data with GPS timestamp
  – ~100 MB from LHO
  – ~50 MB from LLO
• ~1 TB/day in total
• S1 run ~2 weeks
• S2 run ~8 weeks
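The "~1 TB/day" figure can be sanity-checked with a little arithmetic. The sketch below assumes the ~100 MB and ~50 MB figures are sizes per 16-second frame file (the slide does not say so explicitly) and uses decimal units; the function name is hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60
FRAME_SECONDS = 16    # each frame file holds 16 s of data (from the slide)
LHO_FRAME_MB = 100    # assumption: ~100 MB is the size of one LHO frame file
LLO_FRAME_MB = 50     # assumption: ~50 MB is the size of one LLO frame file


def daily_volume_gb(frame_mb, frame_seconds=FRAME_SECONDS):
    """Return the data volume per day in decimal GB for one site."""
    frames_per_day = SECONDS_PER_DAY // frame_seconds
    return frames_per_day * frame_mb / 1000


lho_gb = daily_volume_gb(LHO_FRAME_MB)
llo_gb = daily_volume_gb(LLO_FRAME_MB)
print(f"LHO: {lho_gb:.0f} GB/day, LLO: {llo_gb:.0f} GB/day, "
      f"total: {(lho_gb + llo_gb) / 1000:.2f} TB/day")
```

Under these assumptions the two sites together produce roughly 0.8 TB/day, which is consistent with the rounded "~1 TB/day" on the slide.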

Networking to IFOs Limited
GridFedEx protocol
• LIGO IFOs remote, making bandwidth expensive
• Couple of T1 lines for email/administration only
• Ship tapes to Caltech (SAM-QFS)
• Reduced data sets (RDS) generated and stored on disk
  – ~20% size of raw data
  – ~200 GB/day
Bandwidth to LHO increases dramatically for S3!
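A rough calculation shows why tapes were shipped rather than sent over the wire. This sketch assumes "a couple" means two T1 lines, uses the standard T1 line rate of 1.544 Mbit/s, and ignores protocol overhead; the function name is hypothetical.

```python
T1_MBIT_PER_S = 1.544       # standard T1 line rate
SECONDS_PER_DAY = 24 * 60 * 60


def gb_per_day(mbit_per_s, lines=1):
    """Upper bound on decimal GB moved per day over `lines` fully used links."""
    bytes_per_s = mbit_per_s * 1e6 / 8 * lines
    return bytes_per_s * SECONDS_PER_DAY / 1e9


capacity = gb_per_day(T1_MBIT_PER_S, lines=2)   # assumption: two T1 lines
raw_gb_per_day = 1000                            # ~1 TB/day of raw data
print(f"Two T1 lines: at most {capacity:.1f} GB/day "
      f"({capacity / raw_gb_per_day:.1%} of the raw data rate)")
```

Even if both lines carried nothing but frame data, they would move only about 33 GB/day, a few percent of the raw output.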

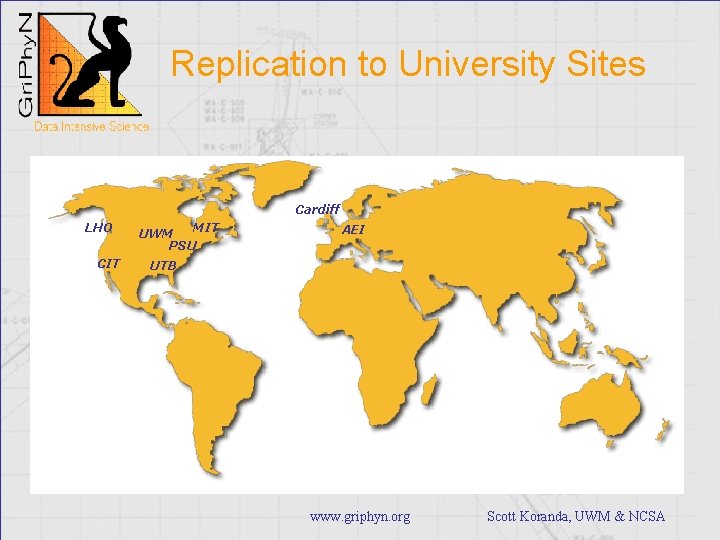

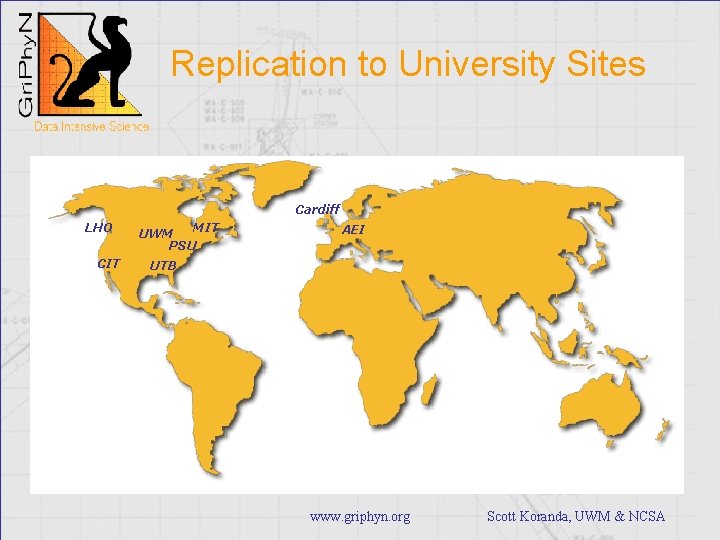

Replication to University Sites
[Diagram of sites: Cardiff, LHO, CIT, MIT, UWM, PSU, AEI, UTB]

Why Bulk Replication to University Sites?
• Each has compute resources (Linux clusters)
  – Early plan was to provide one or two analysis centers
  – Now everyone has a cluster
• Cheap storage is cheap
  – $1/GB for drives
  – TB RAID-5 < $10K
  – Throw more drives into your cluster
• Analysis applications read a lot of data
  – Different ways to slice some problems, but most want access to large sets of data for a particular instance of search parameters

LIGO Data Replication Challenge
• Replicate 200 GB/day of data to multiple sites securely, efficiently, robustly (no babysitting…)
• Support a number of storage models at sites
  – CIT → SAM-QFS (tape) and large IDE farms
  – UWM → 600 partitions on 300 cluster nodes
  – PSU → multiple 1 TB RAID-5 servers
  – AEI → 150 partitions on 150 nodes with redundancy
• Coherent mechanism for data discovery by users and their codes
• Know what data we have, where it is, and replicate it fast and easy
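To put the 200 GB/day target in terms of link speed, the following sketch converts a daily volume into the average rate a site must sustain, and into the hours needed at a given rate. It uses decimal units and ignores overhead; both function names are hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def required_rate_mb_per_s(gb_per_day):
    """Average rate (decimal MB/s) needed to keep up with a daily volume."""
    return gb_per_day * 1000 / SECONDS_PER_DAY


def hours_to_transfer(gb, rate_mb_per_s):
    """Hours needed to move `gb` decimal GB at a steady rate."""
    return gb * 1000 / rate_mb_per_s / 3600


print(f"200 GB/day needs about {required_rate_mb_per_s(200):.2f} MB/s on average")
print(f"At 10 MB/s, 200 GB takes about {hours_to_transfer(200, 10):.1f} hours")
```

Keeping up with 200 GB/day needs a little over 2 MB/s on average per receiving site, so a link sustaining 10 MB/s (the Caltech to UWM figure quoted elsewhere in this talk) leaves room to catch up after outages.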

Prototyping “Realizations”
• Need to keep “pipe” full to achieve desired transfer rates
  – Mindful of overhead of setting up connections
  – Set up GridFTP connection with multiple channels, tuned TCP windows and I/O buffers and leave it open
  – Sustained 10 MB/s between Caltech and UWM, peaks up to 21 MB/s
• Need cataloging that scales and performs
  – Globus Replica Catalog (LDAP) < 10^5 and not acceptable
  – Need solution with relational database backend that scales to 10^7 and fast updates/reads
• Not necessarily need “reliable file transfer” (RFT)
  – Problem with any single transfer? Forget it, come back later…
• Need robust mechanism for selecting collections of files
  – Users/sites demand flexibility choosing what data to replicate
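The "forget it, come back later" policy can be sketched as a simple multi-pass loop: a failed file is set aside rather than retried immediately, so one bad transfer never stalls the rest. This is an illustration only, not LDR's actual code; `replicate`, `flaky_transfer`, and the file names are hypothetical, and the simulated failure stands in for a real GridFTP error.

```python
from collections import deque


def replicate(files, transfer, max_passes=3):
    """Try every file once per pass; failures wait until the next pass.

    Returns (done, still_pending).
    """
    pending = deque(files)
    done = []
    for _ in range(max_passes):
        if not pending:
            break
        retry = deque()
        while pending:
            name = pending.popleft()
            try:
                transfer(name)
                done.append(name)
            except OSError:
                retry.append(name)  # forget it for now, come back later
        pending = retry
    return done, list(pending)


# Demo with a stand-in transfer function that fails once for one file.
attempts = {}


def flaky_transfer(name):
    attempts[name] = attempts.get(name, 0) + 1
    if name == "frame-2" and attempts[name] == 1:
        raise OSError("simulated transfer failure")


done, left = replicate(["frame-1", "frame-2", "frame-3"], flaky_transfer)
print(done, left)
```

In the demo, the failing file is skipped on the first pass and picked up on the second, after the other files have already been copied.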

LIGO, err… Lightweight Data Replicator (LDR)
• What data we have…
  – Globus Metadata Catalog Service (MCS)
• Where data is…
  – Globus Replica Location Service (RLS)
• Replicate it fast…
  – Globus GridFTP protocol
  – What client to use? Right now we use our own
• Replicate it easy…
  – Logic we added
  – Is there a better solution?

Lightweight Data Replicator
• Replicated > 20 TB to UWM thus far
• Less to MIT, PSU
• Just deployed version 0.5.5 to MIT, PSU, AEI, CIT, UWM, LHO, LLO for LIGO/GEO S3 run
• Deployment in progress at Cardiff
• LDRdataFindServer running at UWM for S2, soon at all sites for S3

Lightweight Data Replicator
• “Lightweight” because we think it is the minimal collection of code needed to get the job done
• Logic coded in Python
  – Use SWIG to wrap Globus RLS
  – Use pyGlobus from LBL elsewhere
• Each site is any combination of publisher, provider, subscriber
  – Publisher populates metadata catalog
  – Provider populates location catalog (RLS)
  – Subscriber replicates data using information provided by publishers and providers
• Small, independent daemons that each do one thing
  – LDRMaster, LDRMetadata, LDRSchedule, LDRTransfer, …
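The publisher / provider / subscriber split can be illustrated with a toy model. In this sketch, plain dictionaries stand in for the metadata catalog and the location catalog; it does not use the Globus MCS or RLS APIs, and every function name, file name, and URL is hypothetical.

```python
def publish(metadata, lfn, **attrs):
    """Publisher role: record what a logical file is."""
    metadata[lfn] = dict(attrs)


def provide(locations, lfn, url):
    """Provider role: record where a copy of a logical file lives."""
    locations.setdefault(lfn, set()).add(url)


def plan_replication(metadata, locations, wanted, already_have):
    """Subscriber role: pick files that match `wanted`, are not held
    locally, and have at least one known source."""
    plan = []
    for lfn in sorted(metadata):
        if lfn in already_have or not wanted(metadata[lfn]):
            continue
        sources = sorted(locations.get(lfn, ()))
        if sources:
            plan.append((lfn, sources[0]))
    return plan


metadata, locations = {}, {}
publish(metadata, "H-R-0001", site="LHO", run="S2")
publish(metadata, "H-R-0002", site="LHO", run="S3")
publish(metadata, "L-R-0001", site="LLO", run="S3")
provide(locations, "H-R-0002", "gsiftp://cit.example.org/data/H-R-0002")
provide(locations, "L-R-0001", "gsiftp://cit.example.org/data/L-R-0001")

plan = plan_replication(
    metadata,
    locations,
    wanted=lambda attrs: attrs["run"] == "S3",
    already_have={"L-R-0001"},
)
print(plan)
```

The subscriber only reads from the two catalogs, which is what lets a site take on any combination of the three roles independently.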

Future?
• Held LDR face-to-face at UWM last summer
• CIT, MIT, PSU, UWM, AEI, Cardiff all represented
• LDR “Needs”
  – Better/easier installation, configuration
  – “Dashboard” for admins for insights into LDR state
  – More robustness, especially with RLS server hangs
    • Fixed with version 2.0.9
  – API and templates for publishing

Future?
• LDR is a tool that works now for LIGO
• Still, we recognize a number of projects need bulk data replication
  – There has to be common ground
• What middleware can be developed and shared?
  – We are looking for “opportunities”
    • Code for “solve our problems for us…”
  – Still want to investigate Stork, DiskRouter, ?
  – Do contact me if you do bulk data replication…