Lexical and Syntax Analysis Presented by Ahmed Imad Hatem EL-Azab Prof. Dr. Mostafa Abdel Aziem Mostafa

Chapter 4 Topics • Introduction • Lexical Analysis • The Parsing Problem • Recursive Descent Parsing • Bottom Up Parsing

Why study lexical and syntax analyzers? • They show an application of the grammars discussed in chapter 3 • Lexical and syntax analysis are not used only in compiler design; they also appear in program formatters, programs that compute complexity, and programs that analyze and react to configuration files • It is good to have some background, since compiler design is not a required course

Introduction • Language implementation systems must analyze source code, regardless of the specific implementation approach (compiled, interpreted, hybrid) • Nearly all syntax analysis is based on a formal description of the syntax of the source language (context-free grammars or BNF) • The parser can be based directly on the BNF • Parsers based on BNF are easy to maintain (modular)

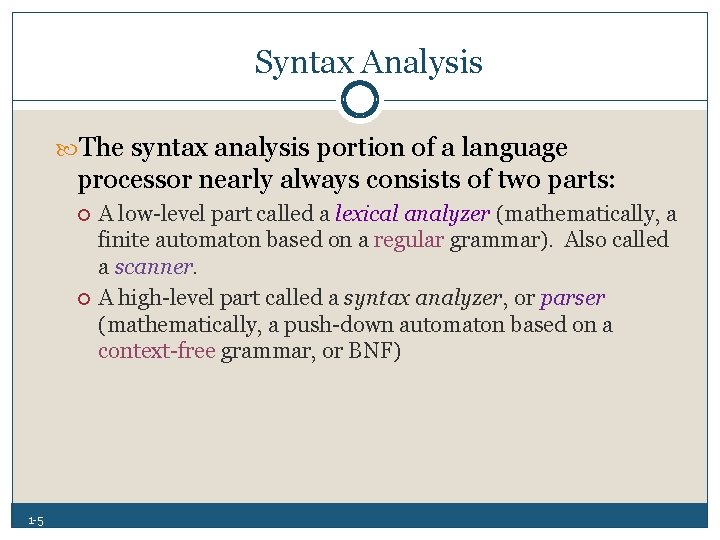

Syntax Analysis The syntax analysis portion of a language processor nearly always consists of two parts: • A low-level part called a lexical analyzer, also called a scanner (mathematically, a finite automaton based on a regular grammar) • A high-level part called a syntax analyzer, or parser (mathematically, a pushdown automaton based on a context-free grammar, or BNF)

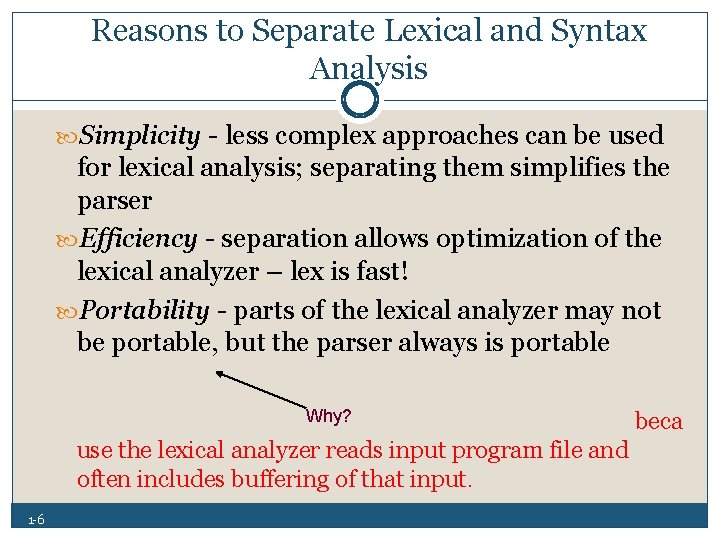

Reasons to Separate Lexical and Syntax Analysis • Simplicity: less complex approaches can be used for lexical analysis; separating them simplifies the parser • Efficiency: separation allows optimization of the lexical analyzer (lex is fast!) • Portability: parts of the lexical analyzer may not be portable, but the parser is always portable. Why? Because the lexical analyzer reads the input program file and often includes buffering of that input.

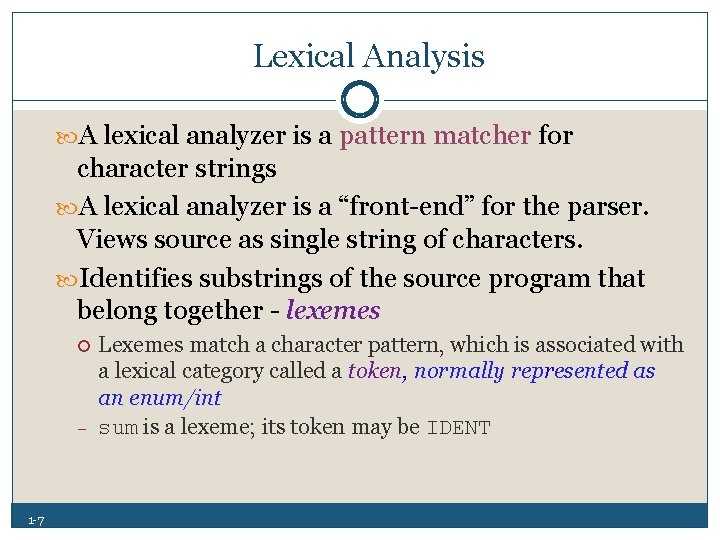

Lexical Analysis • A lexical analyzer is a pattern matcher for character strings • A lexical analyzer is a "front end" for the parser; it views the source as a single string of characters • It identifies substrings of the source program that belong together, called lexemes • Lexemes match a character pattern, which is associated with a lexical category called a token, normally represented as an enum or int • Example: sum is a lexeme; its token may be IDENT

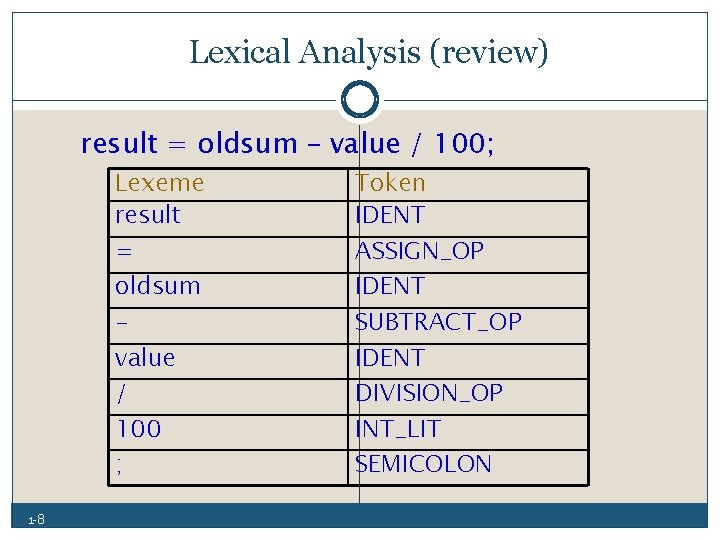

Lexical Analysis (review) result = oldsum - value / 100;

| Lexeme | Token |
| --- | --- |
| result | IDENT |
| = | ASSIGN_OP |
| oldsum | IDENT |
| - | SUBTRACT_OP |
| value | IDENT |
| / | DIVISION_OP |
| 100 | INT_LIT |
| ; | SEMICOLON |

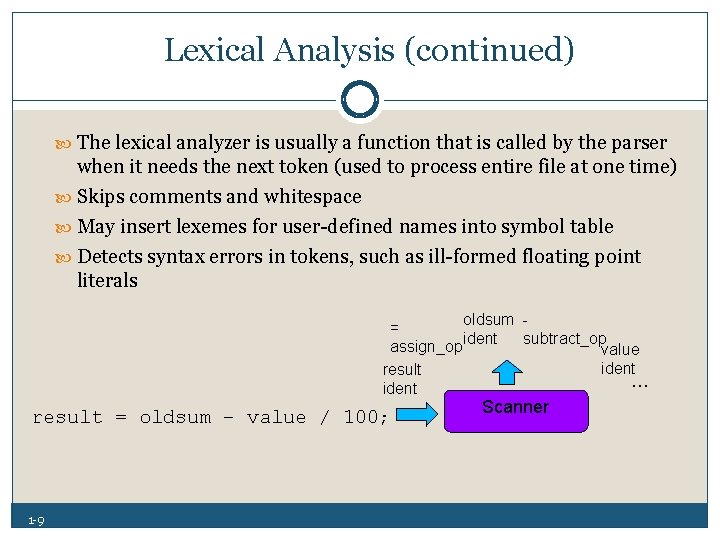

Lexical Analysis (continued) • The lexical analyzer is usually a function that is called by the parser when it needs the next token (earlier scanners used to process the entire file at one time) • Skips comments and whitespace • May insert lexemes for user-defined names into the symbol table • Detects syntax errors in tokens, such as ill-formed floating-point literals • (Diagram: the scanner reads result = oldsum - value / 100; and hands the parser one token at a time: ident result, assign_op =, ident oldsum, subtract_op -, ident value, …)

Lexical Analysis (continued) Three approaches to building a lexical analyzer: • Write a formal description of the tokens based on regular expressions and use a software tool that constructs a lexical analyzer from such a description (e.g., lex, flex) • Design a state diagram that describes the tokens and write a program that implements the state diagram • Design a state diagram that describes the tokens and hand-construct a table-driven implementation of the state diagram

Lexical Analysis (continued) Six steps in building a lexical analyzer: 1. Define tokens, lexemes, and patterns. 2. Define regular expressions for the patterns that can be described by one. 3. Define regular definitions. 4. Draw transition diagrams. 5. Draw nondeterministic finite automata (NFAs). 6. Transform the NFAs to DFAs.

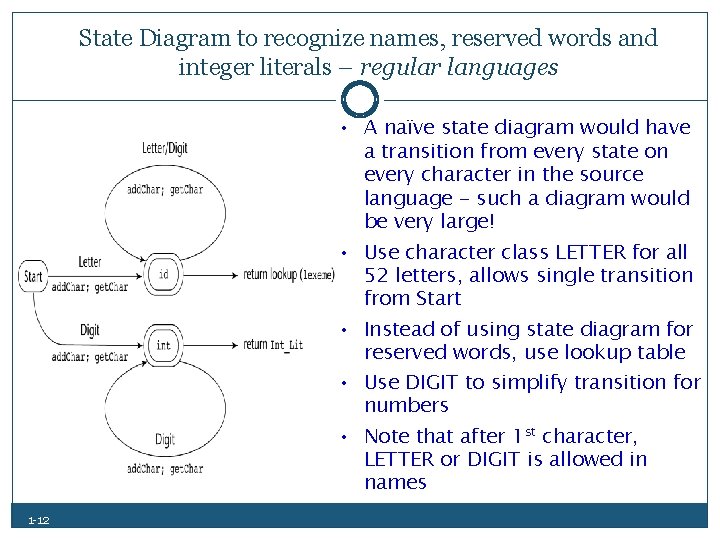

State Diagram to recognize names, reserved words and integer literals (regular languages) • A naïve state diagram would have a transition from every state on every character in the source language; such a diagram would be very large! • Use the character class LETTER for all 52 letters, which allows a single transition from Start • Instead of using the state diagram for reserved words, use a lookup table • Use the character class DIGIT to simplify the transitions for numbers • Note that after the 1st character, LETTER or DIGIT is allowed in names

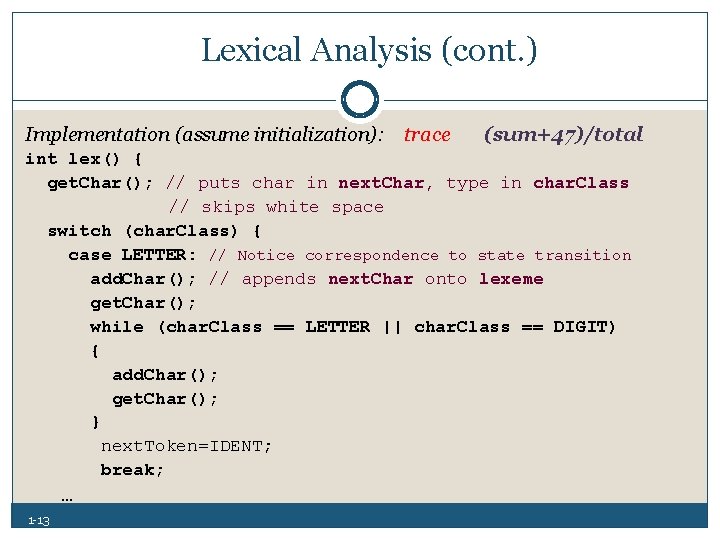

Lexical Analysis (cont.) Implementation (assume initialization); trace (sum+47)/total

    int lex() {
        getChar();  // puts char in nextChar, type in charClass; skips white space
        switch (charClass) {
            case LETTER:   // Notice correspondence to state transition
                addChar(); // appends nextChar onto lexeme
                getChar();
                while (charClass == LETTER || charClass == DIGIT) {
                    addChar();
                    getChar();
                }
                nextToken = IDENT;
                break;
            …

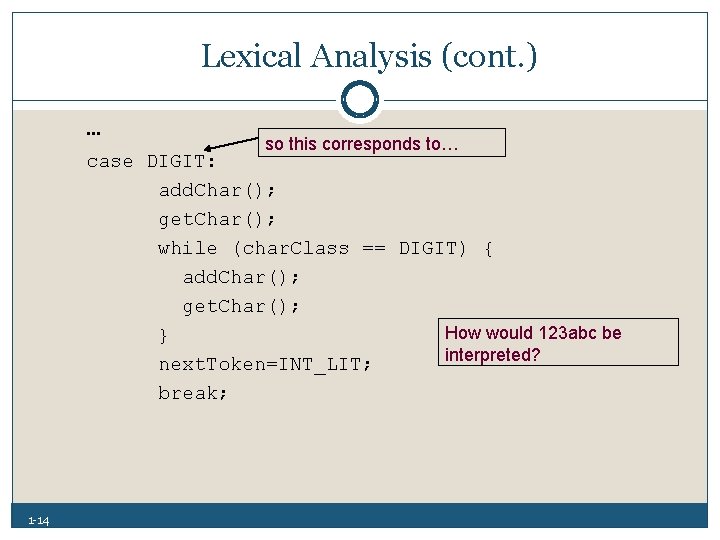

Lexical Analysis (cont.) … so this corresponds to…

            case DIGIT:
                addChar();
                getChar();
                while (charClass == DIGIT) {
                    addChar();
                    getChar();
                }
                nextToken = INT_LIT;
                break;

How would 123abc be interpreted?

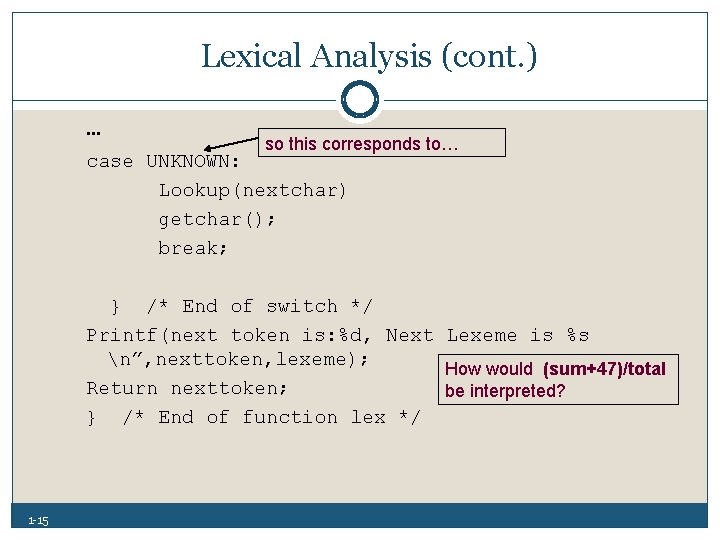

Lexical Analysis (cont.) … so this corresponds to…

            case UNKNOWN:
                lookup(nextChar);
                getChar();
                break;
        } /* End of switch */
        printf("Next token is: %d, Next lexeme is %s\n", nextToken, lexeme);
        return nextToken;
    } /* End of function lex */

How would (sum+47)/total be interpreted?
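The scanner slides above can be condensed into one small function. This is a minimal sketch, not the slides' code: the names `next_token` and `lookup` and the exact token list are assumptions made for illustration, and the global `nextChar`/`charClass` state is replaced by a cursor into the source string.

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>

typedef enum { IDENT, INT_LIT, ASSIGN_OP, ADD_OP, SUBTRACT_OP, MULT_OP,
               DIVISION_OP, LEFT_PAREN, RIGHT_PAREN, SEMICOLON,
               UNKNOWN_TOKEN, END_OF_INPUT } Token;

/* Maps a single-character lexeme to its token (hypothetical helper). */
static Token lookup(char c) {
    switch (c) {
        case '=': return ASSIGN_OP;
        case '+': return ADD_OP;
        case '-': return SUBTRACT_OP;
        case '*': return MULT_OP;
        case '/': return DIVISION_OP;
        case '(': return LEFT_PAREN;
        case ')': return RIGHT_PAREN;
        case ';': return SEMICOLON;
        default:  return UNKNOWN_TOKEN;
    }
}

/* Scans one token starting at *cursor, copies its lexeme into `lexeme`
   (at most cap - 1 characters), and advances *cursor past the token. */
Token next_token(const char **cursor, char *lexeme, size_t cap) {
    assert(cursor != NULL && *cursor != NULL && lexeme != NULL && cap > 1);
    const char *p = *cursor;
    size_t n = 0;
    Token tok;

    while (isspace((unsigned char)*p)) p++;       /* skip white space */

    if (*p == '\0') {
        tok = END_OF_INPUT;
    } else if (isalpha((unsigned char)*p)) {      /* LETTER (LETTER | DIGIT)* */
        while (isalnum((unsigned char)*p)) {
            if (n < cap - 1) lexeme[n++] = *p;
            p++;
        }
        tok = IDENT;
    } else if (isdigit((unsigned char)*p)) {      /* DIGIT DIGIT* */
        while (isdigit((unsigned char)*p)) {
            if (n < cap - 1) lexeme[n++] = *p;
            p++;
        }
        tok = INT_LIT;
    } else {                                      /* single-character token */
        lexeme[n++] = *p;
        tok = lookup(*p);
        p++;
    }
    lexeme[n] = '\0';
    *cursor = p;
    return tok;
}
```

Calling `next_token` repeatedly on a string walks through its lexemes one at a time. With this sketch, the input 123abc comes back as INT_LIT (123) followed by IDENT (abc), because the DIGIT loop stops at the first letter.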

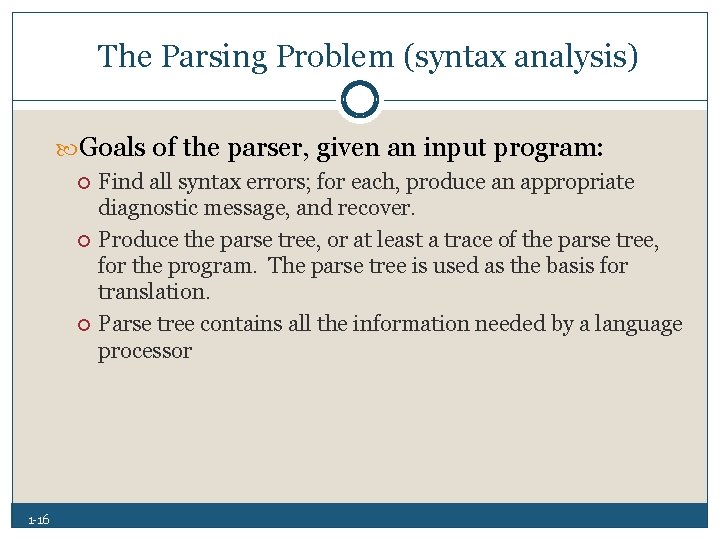

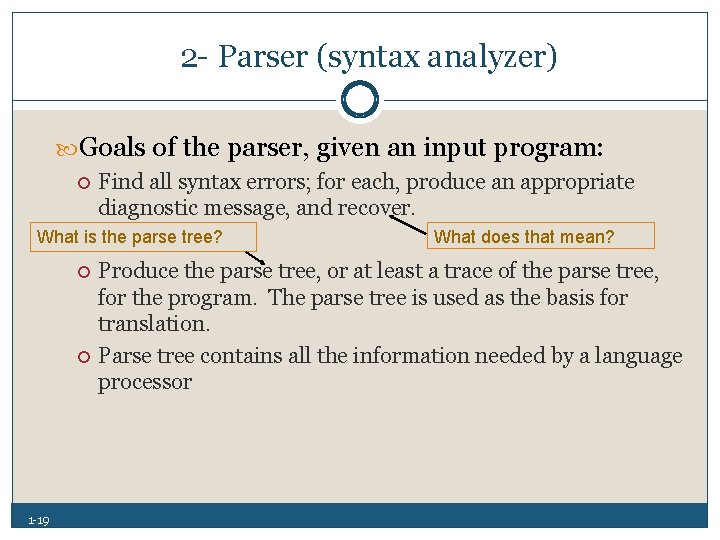

The Parsing Problem (syntax analysis) Goals of the parser, given an input program: • Find all syntax errors; for each, produce an appropriate diagnostic message, and recover • Produce the parse tree, or at least a trace of the parse tree, for the program • The parse tree is used as the basis for translation; it contains all the information needed by a language processor

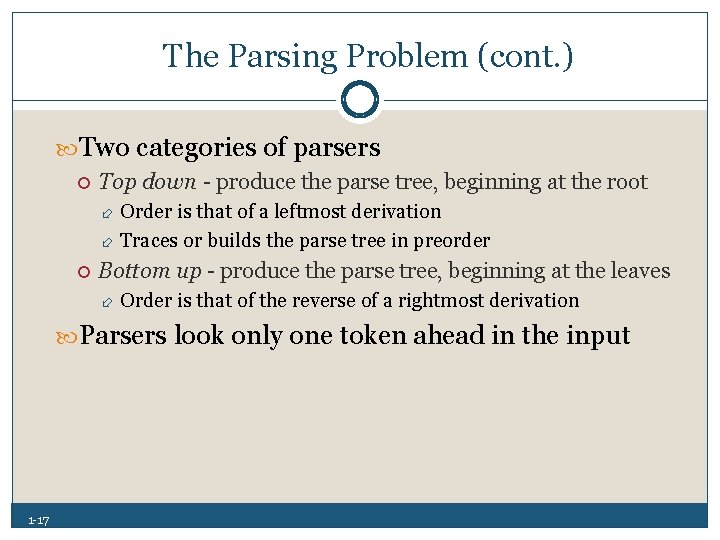

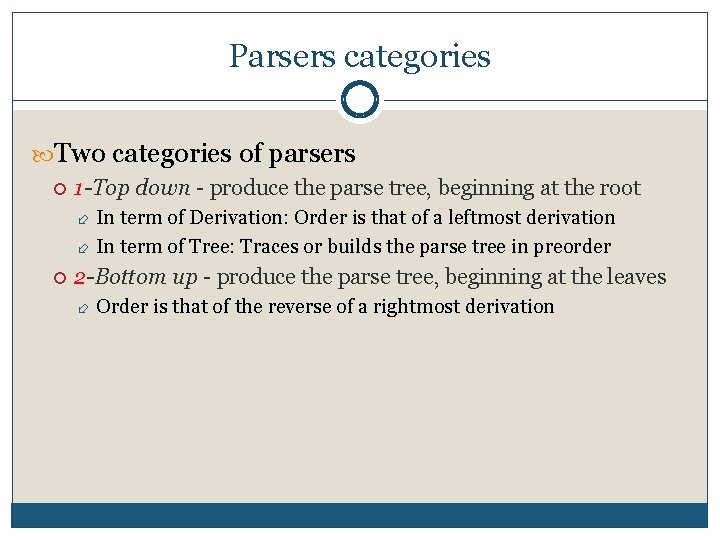

The Parsing Problem (cont.) Two categories of parsers: • Top down: produce the parse tree beginning at the root; the order is that of a leftmost derivation; traces or builds the parse tree in preorder • Bottom up: produce the parse tree beginning at the leaves; the order is that of the reverse of a rightmost derivation • Parsers look only one token ahead in the input

The Complexity of Parsing • Parsers that work for any unambiguous grammar are complex and inefficient: O(n^3), where n is the length of the input • Such algorithms must frequently back up and reparse, which requires more maintenance of the tree; they are too slow to be used in practice • Compilers use parsers that work only for a subset of all unambiguous grammars, but do so in linear time: O(n), where n is the length of the input

2 Parser (syntax analyzer) Goals of the parser, given an input program: • Find all syntax errors; for each, produce an appropriate diagnostic message, and recover • Produce the parse tree, or at least a trace of the parse tree, for the program • The parse tree is used as the basis for translation; it contains all the information needed by a language processor • Questions: What is the parse tree? What does that mean?

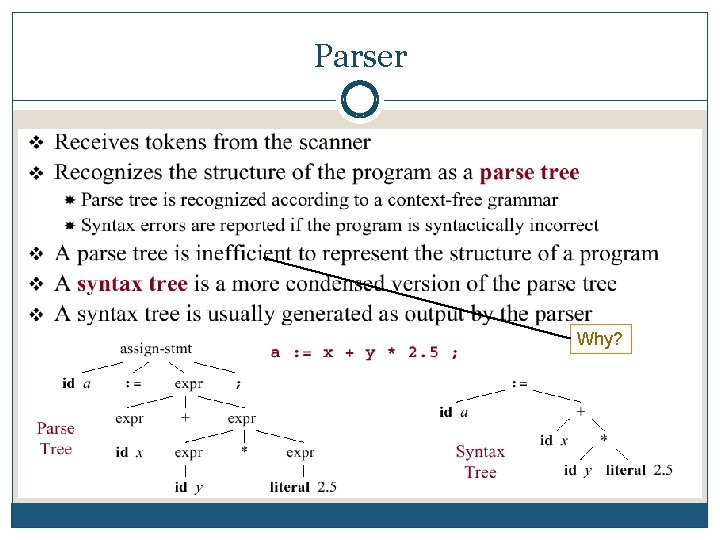

Parser Why?

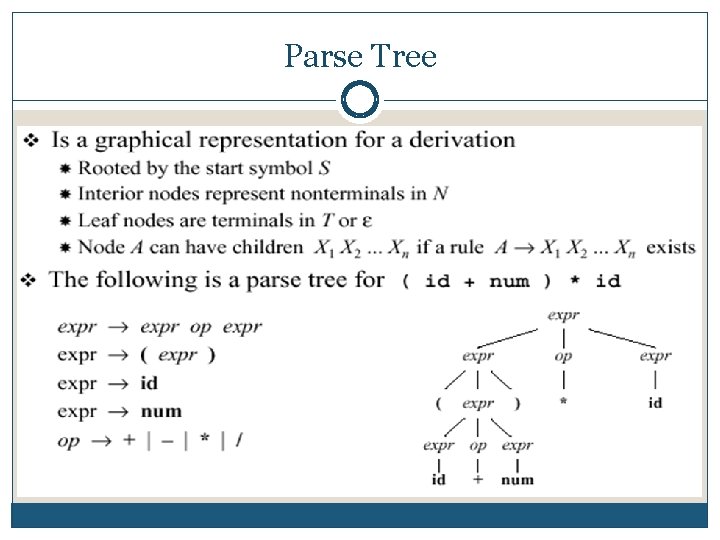

Parse Tree

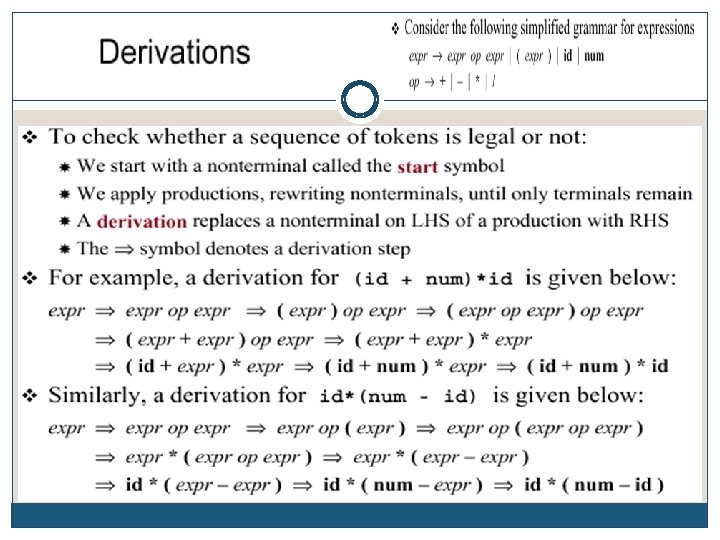

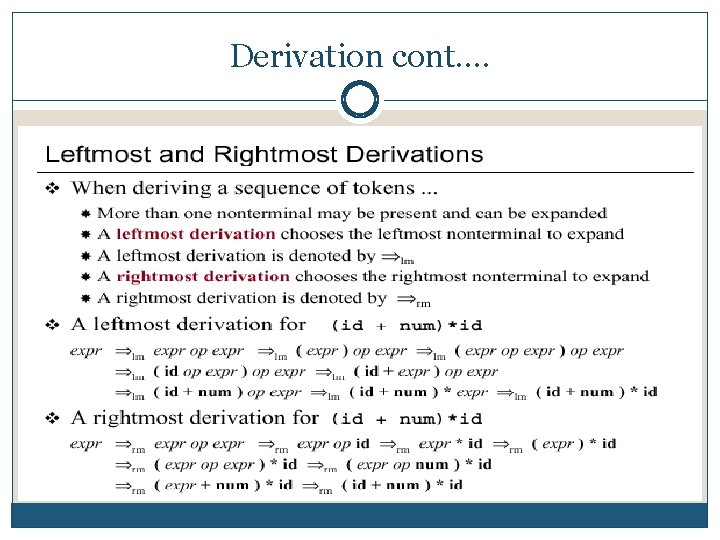

Derivation cont….

Parser categories Two categories of parsers: 1. Top down: produce the parse tree beginning at the root. In terms of the derivation, the order is that of a leftmost derivation; in terms of the tree, the parser traces or builds the parse tree in preorder. 2. Bottom up: produce the parse tree beginning at the leaves; the order is that of the reverse of a rightmost derivation.

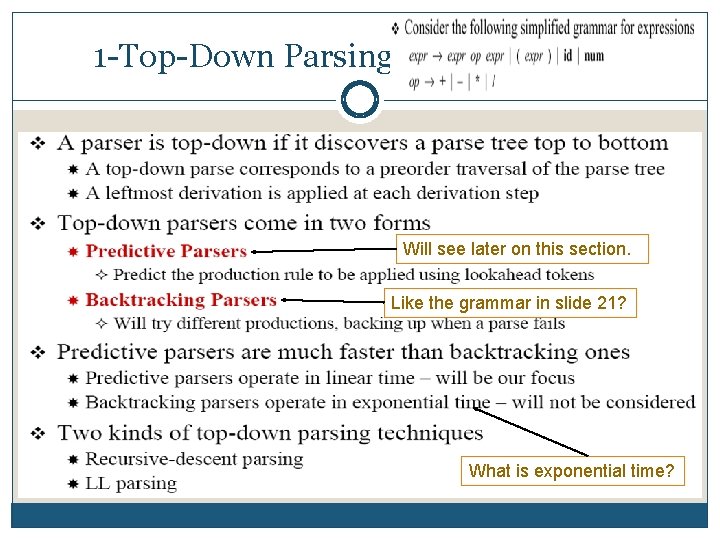

1 Top Down Parsing Covered later in this section. Like the grammar in slide 21? What is exponential time?

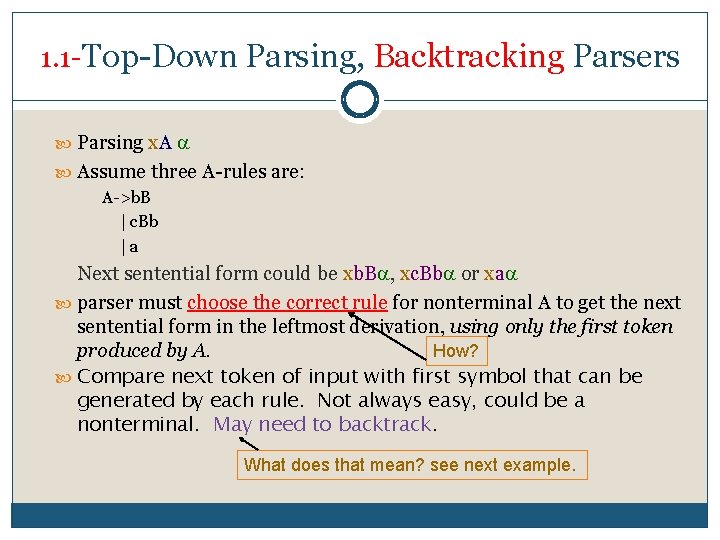

1.1 Top Down Parsing, Backtracking Parsers • Parsing the sentential form xAα; assume the three A rules are A -> bB | cBb | a • The next sentential form could be xbBα, xcBbα, or xaα • The parser must choose the correct rule for nonterminal A to get the next sentential form in the leftmost derivation, using only the first token produced by A • How? Compare the next token of input with the first symbol that can be generated by each rule • This is not always easy, because that first symbol could be a nonterminal; the parser may need to backtrack • What does that mean? See the next example.

Backtrack Example Parsing ba. Assume the rules are (start symbol is A): A -> bE | Ba; B -> b; E -> e

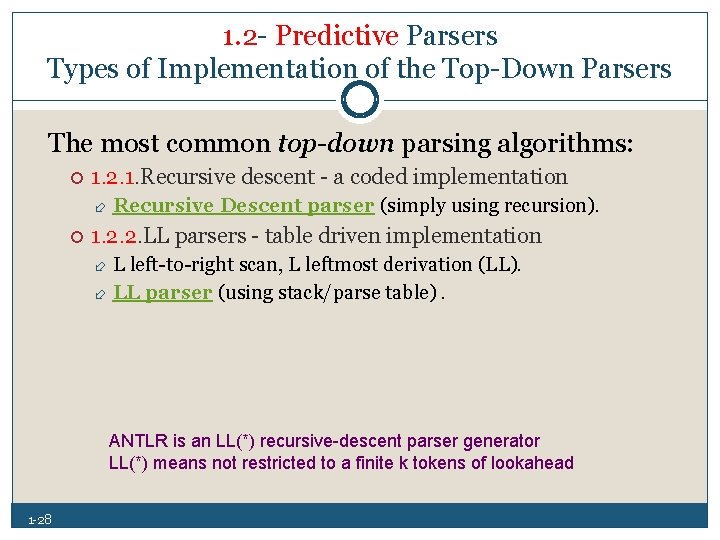

1.2 Predictive Parsers: Types of Implementation of the Top Down Parsers The most common top-down parsing algorithms: • 1.2.1 Recursive descent: a coded implementation (simply using recursion) • 1.2.2 LL parsers: a table-driven implementation (using a stack and a parse table); the first L stands for a left-to-right scan, the second L for a leftmost derivation • ANTLR is an LL(*) recursive-descent parser generator; LL(*) means it is not restricted to a finite k tokens of lookahead

1.2.1 Recursive Descent Parser Hand-coded solution, general approach: • Write a subprogram for each nonterminal (e.g., <expr>) • Subprograms may be recursive (e.g., for nested structures) • A recursive descent parser is so named because it consists of a collection of subprograms, many of which are recursive • It produces a parse tree in top-down order • EBNF is ideally suited to be the basis for a recursive descent parser, because EBNF minimizes the number of nonterminals (i.e., fewer subprograms)

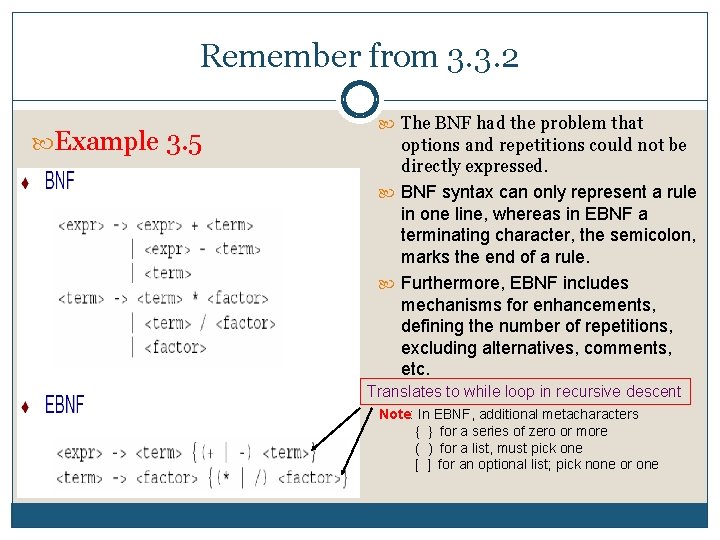

Remember from 3.3.2, Example 3.5 • BNF had the problem that options and repetitions could not be directly expressed • BNF syntax can only represent a rule in one line, whereas in EBNF a terminating character, the semicolon, marks the end of a rule • Furthermore, EBNF includes mechanisms for enhancements: defining the number of repetitions, excluding alternatives, comments, etc. • Note: EBNF adds the metacharacters { } for a series of zero or more (translates to a while loop in recursive descent), ( ) for a list from which one must be picked, and [ ] for an optional list (pick none or one)

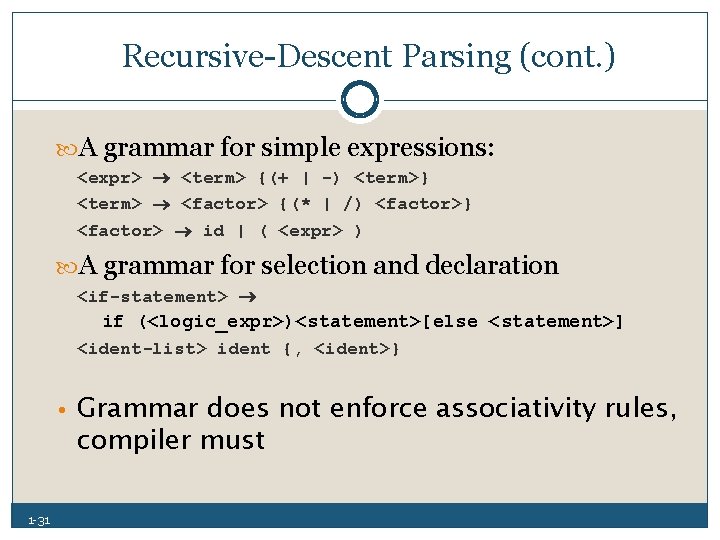

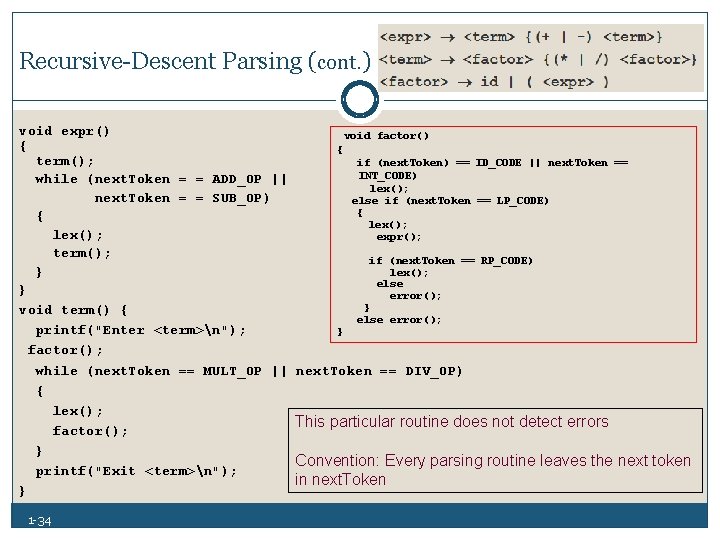

Recursive Descent Parsing (cont.) A grammar for simple expressions: <expr> -> <term> {(+ | -) <term>}; <term> -> <factor> {(* | /) <factor>}; <factor> -> id | ( <expr> ) • A grammar for selection and declaration: <if-statement> -> if (<logic_expr>) <statement> [else <statement>]; <ident-list> -> ident {, ident} • The grammar does not enforce associativity rules; the compiler must

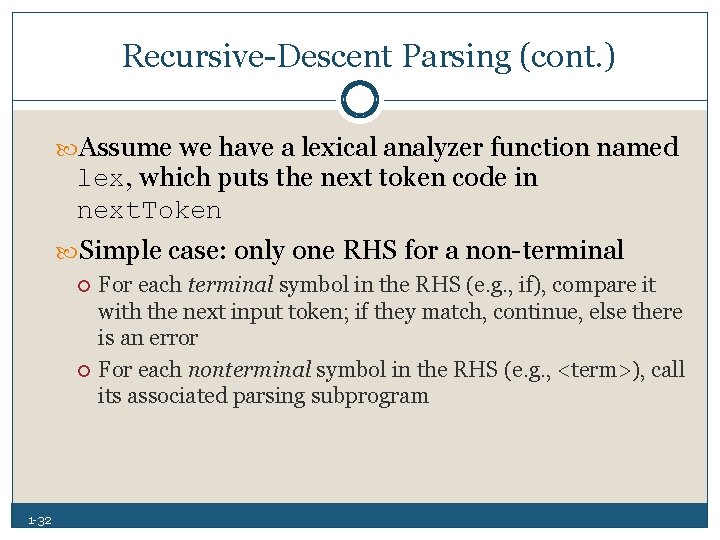

Recursive Descent Parsing (cont.) Assume we have a lexical analyzer function named lex, which puts the next token code in nextToken. Simple case, only one RHS for a nonterminal: • For each terminal symbol in the RHS (e.g., if), compare it with the next input token; if they match, continue, else there is an error • For each nonterminal symbol in the RHS (e.g., <term>), call its associated parsing subprogram

Recursive Descent Parsing (cont.) More complex case: a nonterminal has more than one RHS, which requires an initial process to determine which RHS is to be parsed. • The correct RHS is chosen on the basis of the next token of input (the lookahead) • The next token is compared with the first token that can be generated by each RHS until a match is found • If no match is found, it is a syntax error

Recursive Descent Parsing (cont.)

    void expr() {
        term();
        while (nextToken == ADD_OP || nextToken == SUB_OP) {
            lex();
            term();
        }
    }

This particular routine does not detect errors. Convention: every parsing routine leaves the next token in nextToken.

    void term() {
        printf("Enter <term>\n");
        factor();
        while (nextToken == MULT_OP || nextToken == DIV_OP) {
            lex();
            factor();
        }
        printf("Exit <term>\n");
    }

    void factor() {
        if (nextToken == ID_CODE || nextToken == INT_CODE)
            lex();
        else if (nextToken == LP_CODE) {
            lex();
            expr();
            if (nextToken == RP_CODE)
                lex();
            else
                error();
        }
        else
            error();
    }

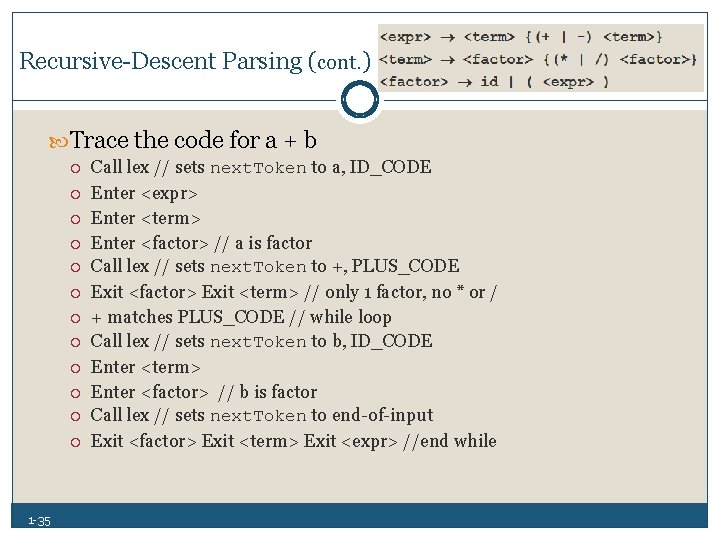

Recursive Descent Parsing (cont.) Trace the code for a + b

    Call lex                  // sets nextToken to a, ID_CODE
    Enter <expr>
        Enter <term>
            Enter <factor>    // a is a factor
            Call lex          // sets nextToken to +, ADD_OP
            Exit <factor>
        Exit <term>           // only 1 factor, no * or /
        + matches ADD_OP      // while loop
        Call lex              // sets nextToken to b, ID_CODE
        Enter <term>
            Enter <factor>    // b is a factor
            Call lex          // sets nextToken to end of input
            Exit <factor>
        Exit <term>
    Exit <expr>               // end while
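To try the routines from the previous slides, they can be combined with a tiny scanner into a recognizer. This is a sketch under assumptions: the scanner, the `errorCount` counter, `END_CODE`, `BAD_CODE`, and the wrapper `parse_expression` are illustrative additions that do not appear in the slides.

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>

enum { ID_CODE, INT_CODE, ADD_OP, SUB_OP, MULT_OP, DIV_OP,
       LP_CODE, RP_CODE, END_CODE, BAD_CODE };

static const char *input;  /* remaining source text */
static int nextToken;      /* the one-token lookahead */
static int errorCount;     /* number of syntax errors seen so far */

/* Minimal scanner: leaves the code of the next token in nextToken. */
static void lex(void) {
    while (isspace((unsigned char)*input)) input++;
    char c = *input;
    if (c == '\0') { nextToken = END_CODE; return; }
    if (isalpha((unsigned char)c)) {
        while (isalnum((unsigned char)*input)) input++;
        nextToken = ID_CODE;
        return;
    }
    if (isdigit((unsigned char)c)) {
        while (isdigit((unsigned char)*input)) input++;
        nextToken = INT_CODE;
        return;
    }
    input++;
    switch (c) {
        case '+': nextToken = ADD_OP;   break;
        case '-': nextToken = SUB_OP;   break;
        case '*': nextToken = MULT_OP;  break;
        case '/': nextToken = DIV_OP;   break;
        case '(': nextToken = LP_CODE;  break;
        case ')': nextToken = RP_CODE;  break;
        default:  nextToken = BAD_CODE; break;
    }
}

static void error(void) { errorCount++; }

static void expr(void);

/* <factor> -> id | int_literal | ( <expr> ) */
static void factor(void) {
    if (nextToken == ID_CODE || nextToken == INT_CODE) {
        lex();
    } else if (nextToken == LP_CODE) {
        lex();
        expr();
        if (nextToken == RP_CODE) lex(); else error();
    } else {
        error();
    }
}

/* <term> -> <factor> {(* | /) <factor>} */
static void term(void) {
    factor();
    while (nextToken == MULT_OP || nextToken == DIV_OP) { lex(); factor(); }
}

/* <expr> -> <term> {(+ | -) <term>} */
static void expr(void) {
    term();
    while (nextToken == ADD_OP || nextToken == SUB_OP) { lex(); term(); }
}

/* Returns 1 if the whole text is a valid <expr>, 0 otherwise. */
int parse_expression(const char *text) {
    assert(text != NULL);
    input = text;
    errorCount = 0;
    lex();       /* prime the lookahead, as in the trace */
    expr();
    return errorCount == 0 && nextToken == END_CODE;
}
```

For instance, `parse_expression("(sum + 47) / total")` accepts, while `parse_expression("a + ")` is rejected because `factor` finds the end of input where an operand is expected. Each `{ ... }` in the EBNF rule shows up as a `while` loop.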

Recursive Descent Quick Exercise Do the recursive descent exercise: trace the expression (sum+47)/total

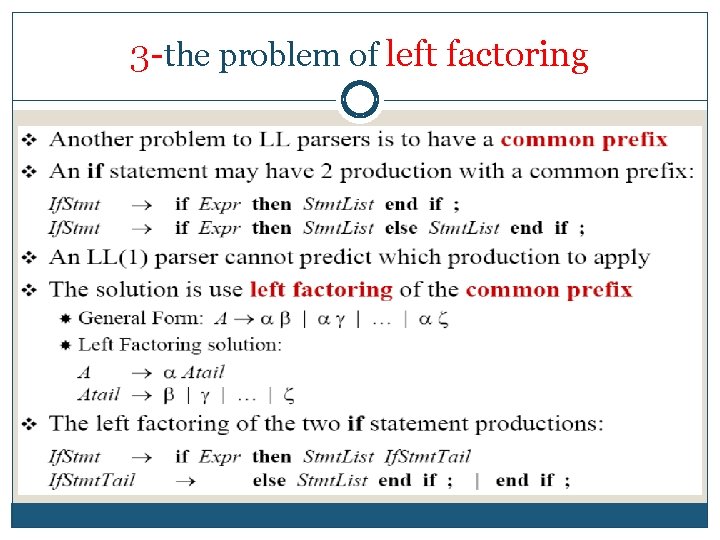

Recursive Descent Parsing / LL Grammars: grammar restrictions and approach One must consider the limitations of the approach in terms of grammar restrictions: 1. The problem of left recursion. 2. Whether the parser can always choose the correct RHS: compare the next token of input with the first symbol that can be generated by each rule; this is not always easy, since that symbol could be a nonterminal. 3. Left factoring of common prefixes.

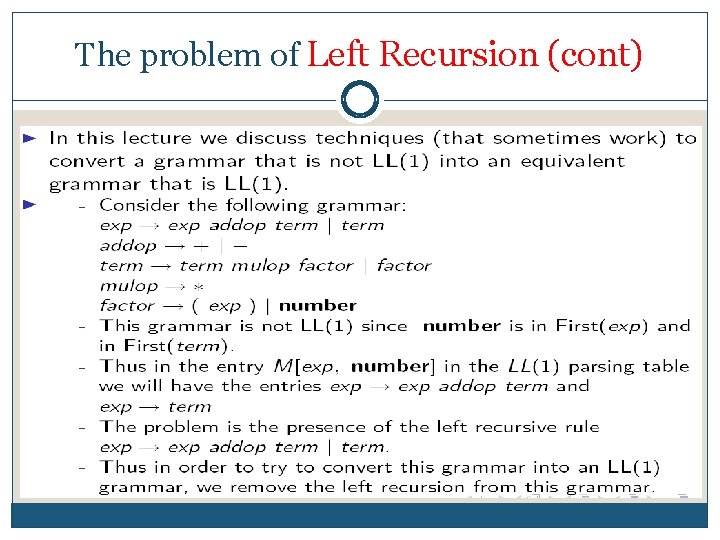

1 The problem of Left Recursion In terms of context-free grammars, a nonterminal r is left recursive if the leftmost symbol in any of r's alternatives rewrites to r again, either immediately (direct left recursion) or through some other nonterminal definitions (indirect or hidden left recursion). This is a problem for all top-down parsing algorithms, not just recursive descent.

Recursive Descent Parsing / LL Grammars and Left Recursion • It is important to consider the limitations of recursive descent in terms of grammar restrictions • The LL Grammar Class: the first L is for a left-to-right scan, the second L for a leftmost derivation • The Left Recursion Problem: if a grammar has left recursion, either direct or indirect, it cannot be the basis for a top-down parser; it causes a catastrophic problem for LL parsers • Example: A -> A + B is directly left recursive; the parser continues to try to parse A • A grammar can be modified to remove left recursion

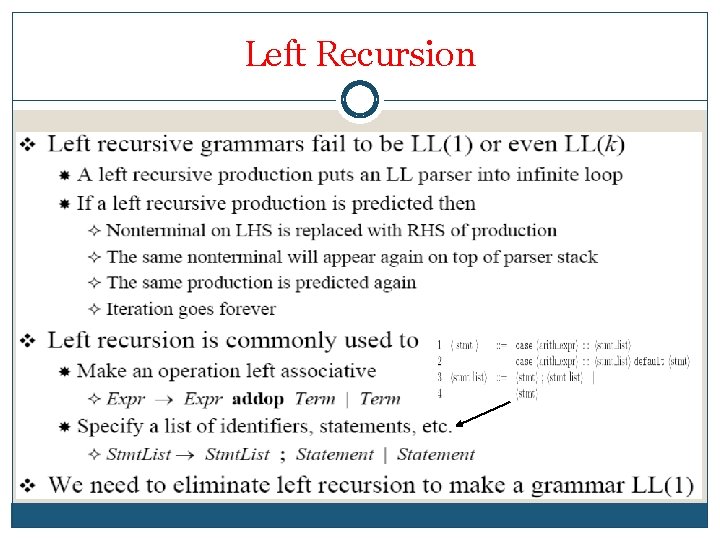

Left Recursion

The problem of Left Recursion (cont)

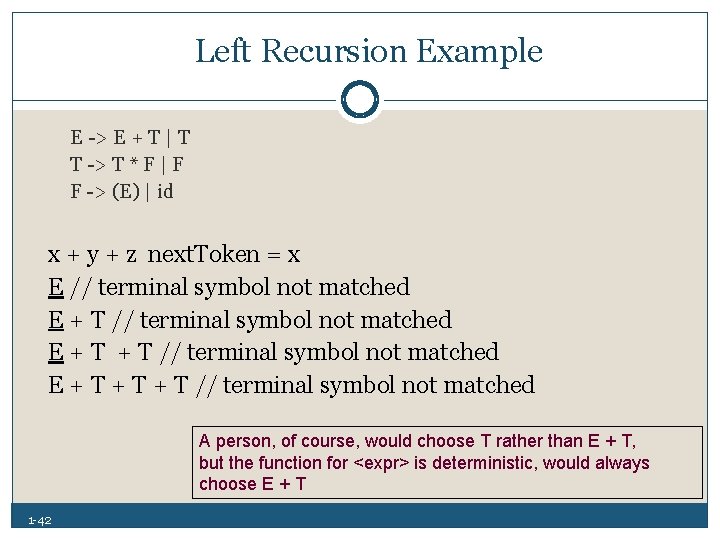

Left Recursion Example E -> E + T | T; T -> T * F | F; F -> (E) | id • Input x + y + z, nextToken = x • E => E + T (terminal symbol not matched) => E + T + T (terminal symbol not matched) => … • A person, of course, would choose T rather than E + T, but the function for <expr> is deterministic and would always choose E + T

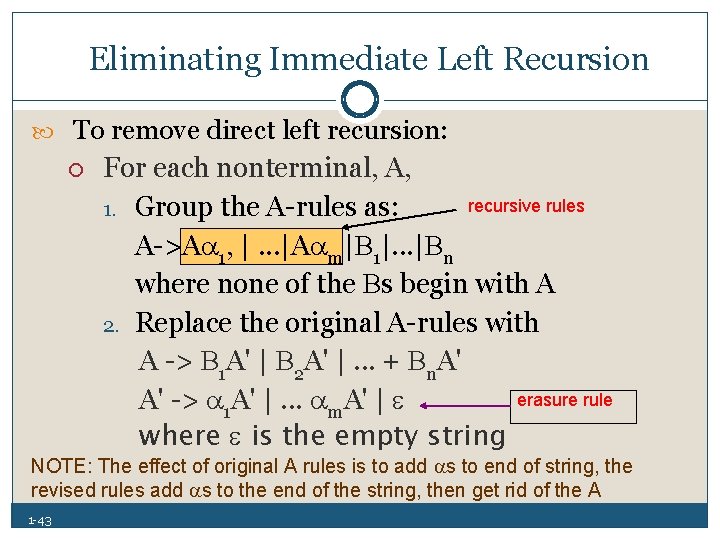

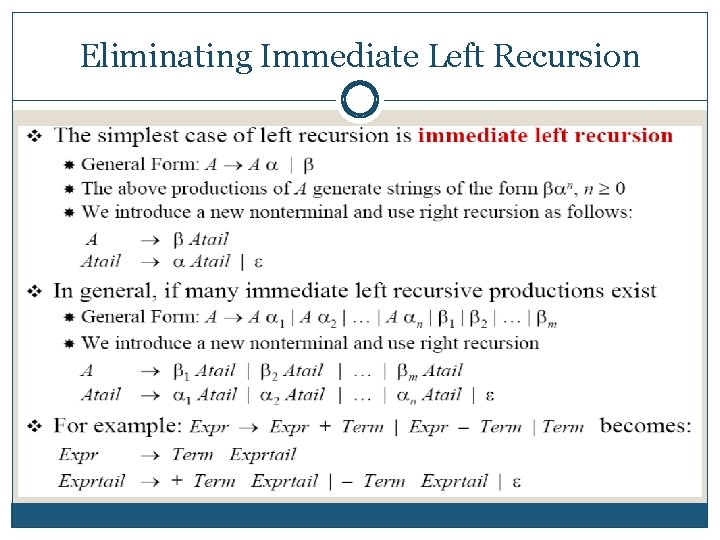

Eliminating Immediate Left Recursion To remove direct left recursion, for each nonterminal A with left-recursive rules: 1. Group the A rules as A -> Aα1 | … | Aαm | β1 | … | βn, where none of the βs begins with A. 2. Replace the original A rules with A -> β1 A' | β2 A' | … | βn A' and A' -> α1 A' | … | αm A' | ε, where ε is the empty string (the erasure rule). NOTE: The effect of the original A rules is to add αs to the end of the string; the revised rules also add αs to the end of the string, then get rid of the A' with the erasure rule.
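As a worked instance of the two steps above, write α for whatever follows A in a left-recursive alternative and β for an alternative that does not begin with A. Applying the rule to the expression grammar used in these slides (with α = + T, β = T for E, and α = * F, β = F for T) gives:

```latex
\begin{align*}
A &\to A\alpha \mid \beta && \text{becomes} & A &\to \beta A', \quad A' \to \alpha A' \mid \varepsilon \\
E &\to E + T \mid T      && \text{becomes} & E &\to T\,E', \quad E' \to +\,T\,E' \mid \varepsilon \\
T &\to T * F \mid F      && \text{becomes} & T &\to F\,T', \quad T' \to *\,F\,T' \mid \varepsilon
\end{align*}
```

The rule for F has no left recursion, so F -> (E) | id is left unchanged.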

Eliminating Immediate Left Recursion

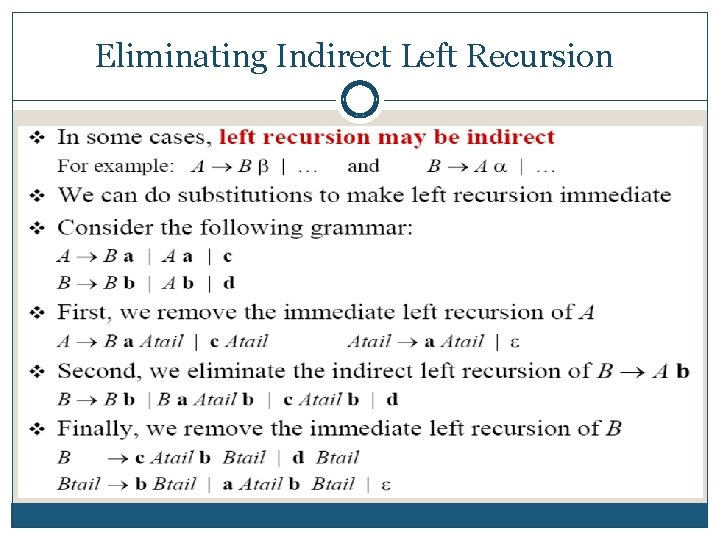

Eliminating Indirect Left Recursion

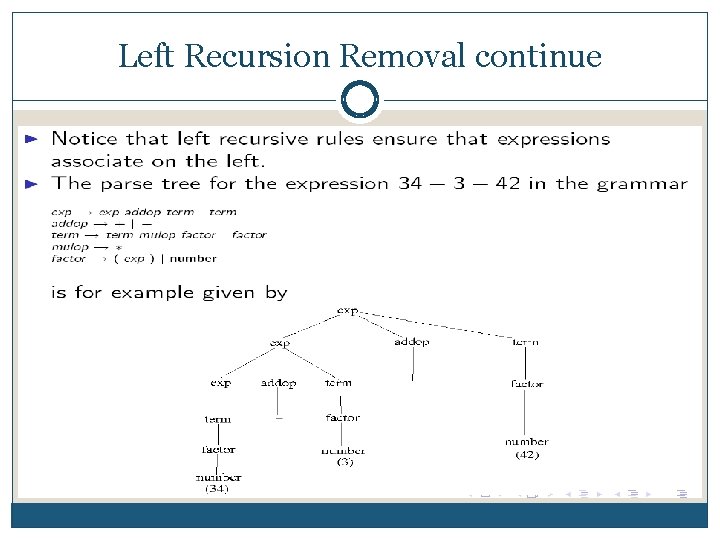

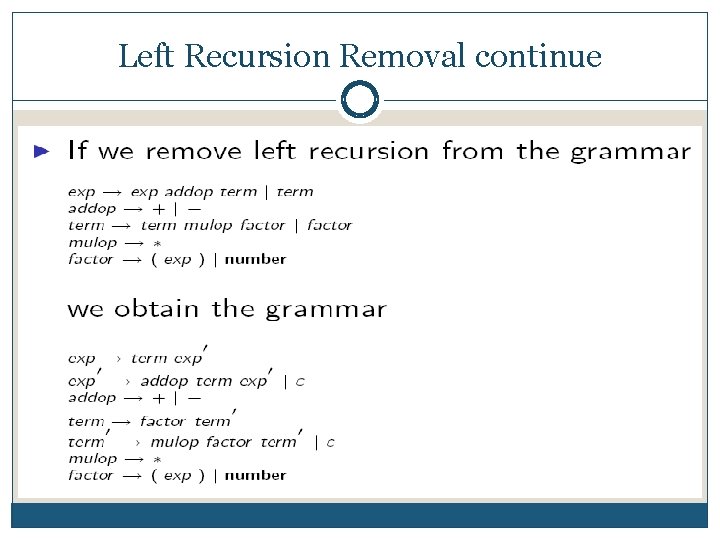

Left Recursion Removal continue

Left Recursion Removal continue

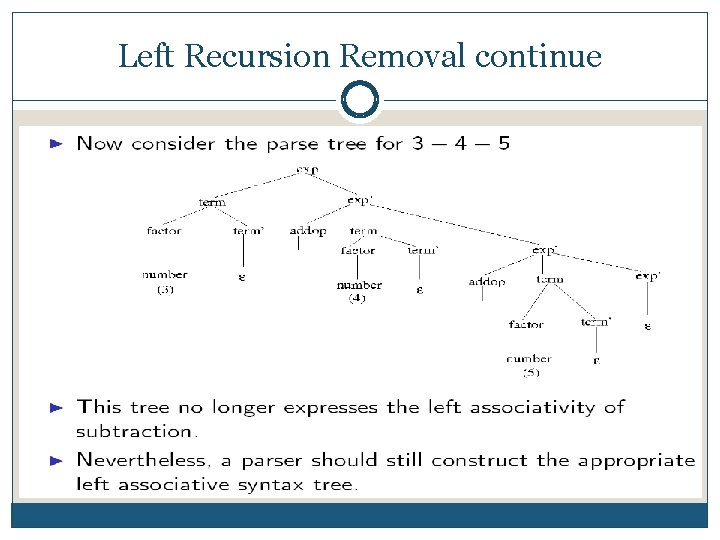

Left Recursion Removal continue

Left Recursion Removal continue

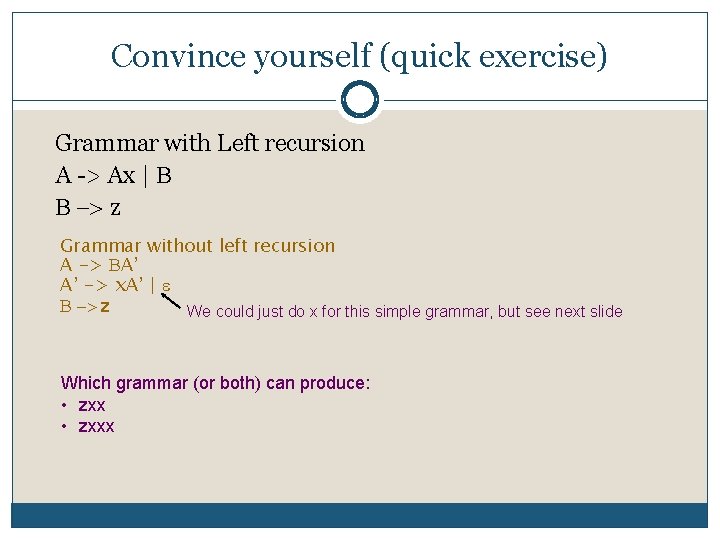

Convince yourself (quick exercise) • Grammar with left recursion: A -> Ax | β; β -> z • Grammar without left recursion: A -> βA'; A' -> xA' | ε; β -> z • We could just do x for this simple grammar, but see next slide • Which grammar (or both) can produce zxxx?

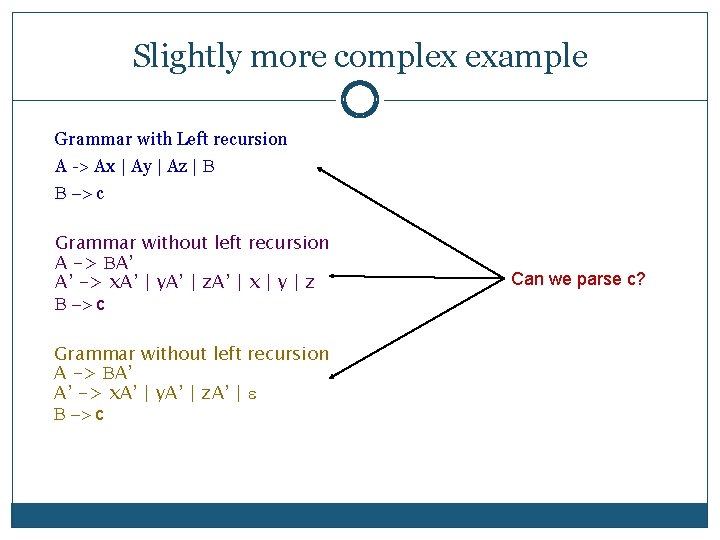

Slightly more complex example • Grammar with left recursion: A -> Ax | Ay | Az | β; β -> c • Grammar without left recursion (first attempt): A -> βA'; A' -> xA' | yA' | zA' | x | y | z; β -> c • Grammar without left recursion (with erasure rule): A -> βA'; A' -> xA' | yA' | zA' | ε; β -> c • Can we parse c?

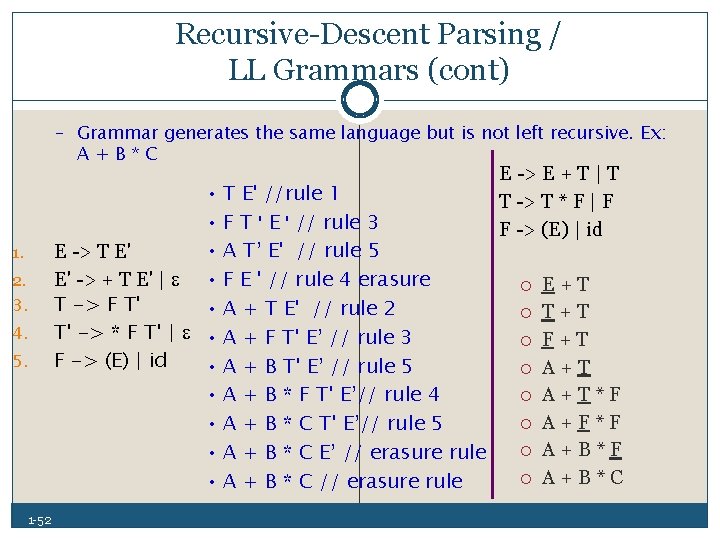

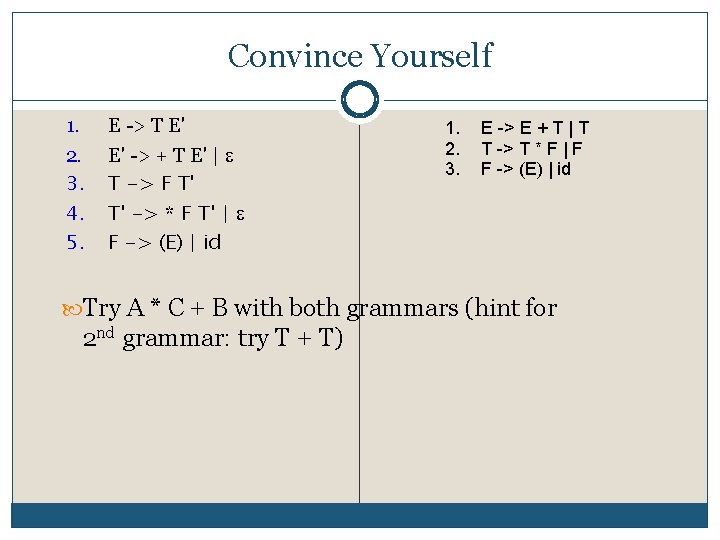

Recursive Descent Parsing / LL Grammars (cont) The grammar below generates the same language but is not left recursive. Example: A + B * C • Grammar without left recursion: 1. E -> T E'; 2. E' -> + T E' | ε (erasure); 3. T -> F T'; 4. T' -> * F T' | ε (erasure); 5. F -> (E) | id • Derivation: E => T E' (rule 1) => F T' E' (rule 3) => A T' E' (rule 5) => A E' (rule 4, erasure) => A + T E' (rule 2) => A + F T' E' (rule 3) => A + B T' E' (rule 5) => A + B * F T' E' (rule 4) => A + B * C T' E' (rule 5) => A + B * C E' (erasure rule) => A + B * C (erasure rule) • Original left-recursive grammar: E -> E + T | T; T -> T * F | F; F -> (E) | id • Derivation: E => E + T => T + T => F + T => A + T => A + T * F => A + F * F => A + B * F => A + B * C

Convince Yourself • First grammar: 1. E -> T E'; 2. E' -> + T E' | ε; 3. T -> F T'; 4. T' -> * F T' | ε; 5. F -> (E) | id • Second grammar: 1. E -> E + T | T; 2. T -> T * F | F; 3. F -> (E) | id • Try A * C + B with both grammars (hint for the 2nd grammar: try T + T)

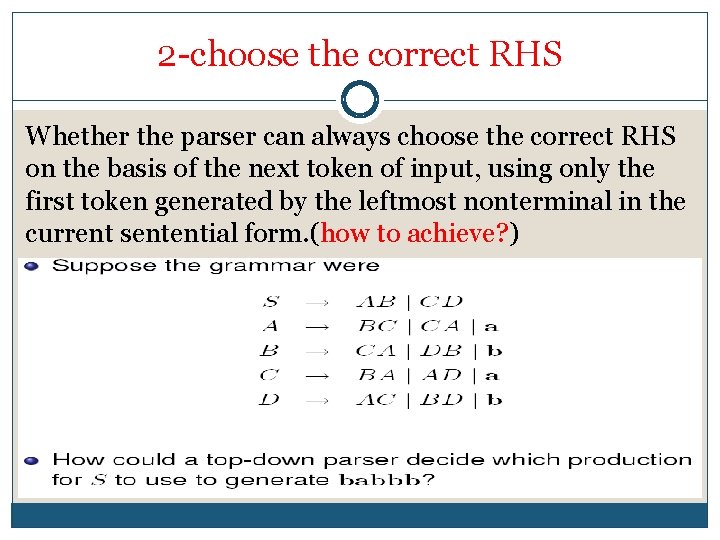

2 Choosing the correct RHS Whether the parser can always choose the correct RHS on the basis of the next token of input, using only the first token generated by the leftmost nonterminal in the current sentential form. (How can this be achieved?)

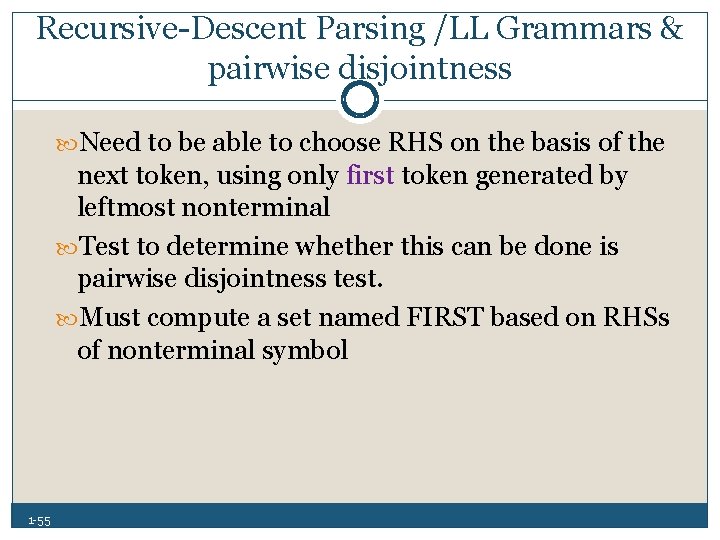

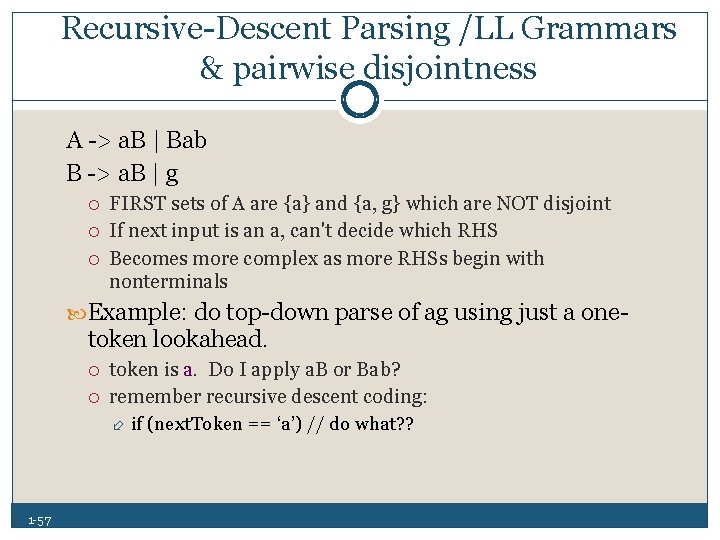

Recursive Descent Parsing / LL Grammars & pairwise disjointness • The parser needs to be able to choose the RHS on the basis of the next token, using only the first token generated by the leftmost nonterminal • The test that determines whether this can be done is the pairwise disjointness test • It requires computing a set named FIRST for each RHS of a nonterminal symbol

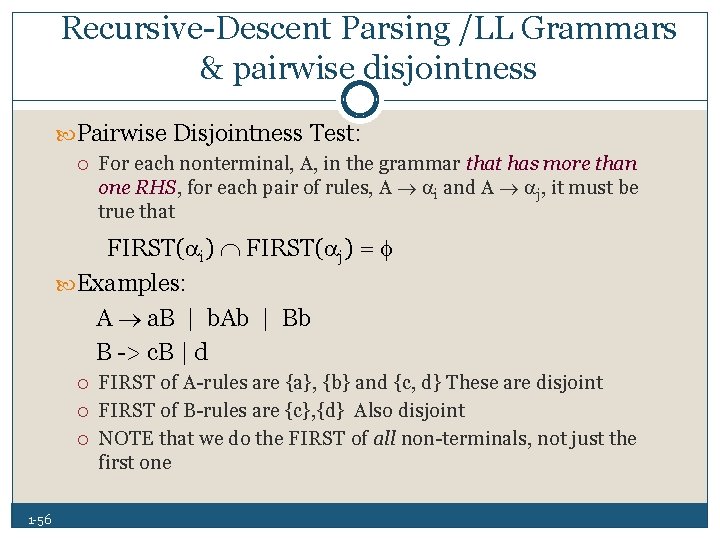

Recursive Descent Parsing / LL Grammars & pairwise disjointness Pairwise Disjointness Test: for each nonterminal A in the grammar that has more than one RHS, for each pair of rules A -> αi and A -> αj, it must be true that FIRST(αi) ∩ FIRST(αj) = ∅ • Example: A -> aB | bAb | Bb; B -> cB | d • The FIRST sets of the A rules are {a}, {b} and {c, d}; these are disjoint • The FIRST sets of the B rules are {c} and {d}; also disjoint • NOTE that we compute FIRST for the rules of all nonterminals, not just the first one

Recursive Descent Parsing / LL Grammars & pairwise disjointness A -> aB | Bab; B -> aB | g • The FIRST sets of the A rules are {a} and {a, g}, which are NOT disjoint • If the next input is an a, the parser can't decide which RHS to use • This becomes more complex as more RHSs begin with nonterminals • Example: do a top-down parse of ag using just a one-token lookahead. The token is a. Do I apply aB or Bab? Remember recursive descent coding: if (nextToken == 'a') // do what??
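The disjointness check itself is mechanical once the FIRST sets are known. Below is a minimal sketch, assuming terminals are single lowercase ASCII letters and the FIRST sets have already been computed by hand; `first_set` and `pairwise_disjoint` are hypothetical helper names, not from the slides.

```c
#include <assert.h>
#include <stddef.h>

/* Builds a bit set from a string of lowercase terminals, e.g. "cd" -> {c, d}.
   Assumes an ASCII-like character set where 'a'..'z' are contiguous. */
unsigned long first_set(const char *terminals) {
    assert(terminals != NULL);
    unsigned long set = 0;
    for (; *terminals != '\0'; terminals++) {
        assert(*terminals >= 'a' && *terminals <= 'z');
        set |= 1UL << (*terminals - 'a');
    }
    return set;
}

/* Returns 1 if no two FIRST sets share a terminal, 0 otherwise. */
int pairwise_disjoint(const unsigned long *sets, size_t count) {
    assert(sets != NULL || count == 0);
    for (size_t i = 0; i < count; i++) {
        for (size_t j = i + 1; j < count; j++) {
            if (sets[i] & sets[j]) return 0;  /* non-empty intersection */
        }
    }
    return 1;
}
```

With the sets from the slides, {a}, {b}, {c, d} pass the test, while {a} and {a, g} fail because both contain a. Computing the FIRST sets automatically from a grammar is a separate step that this sketch leaves out.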

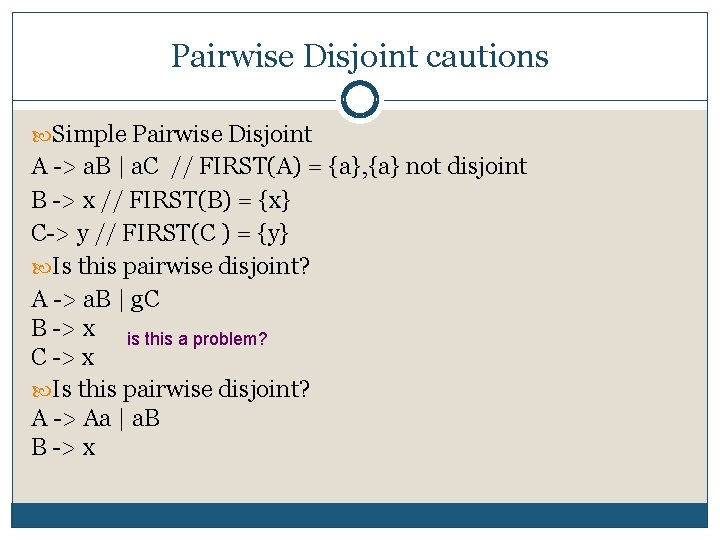

Pairwise Disjoint cautions • Simple pairwise disjointness: A -> aB | aC // FIRST sets of the A rules are {a} and {a}, not disjoint; B -> x // FIRST(B) = {x}; C -> y // FIRST(C) = {y} • Is this pairwise disjoint? A -> aB | gC; B -> x; C -> x (is this a problem?) • Is this pairwise disjoint? A -> Aa | aB; B -> x

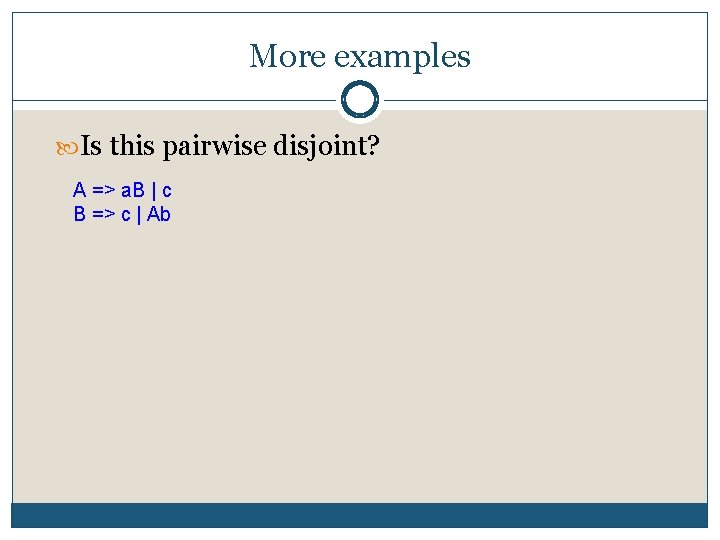

More examples Is this pairwise disjoint? A -> aB | c; B -> c | Ab

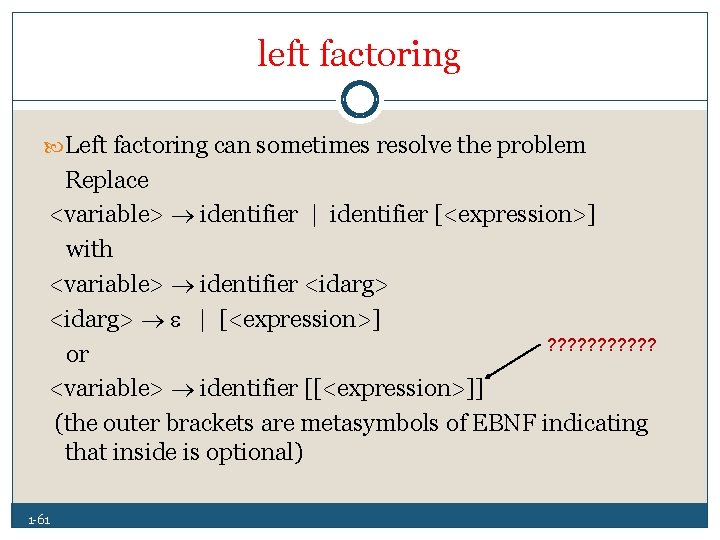

3 the problem of left factoring

Left factoring Left factoring can sometimes resolve the problem. Replace <variable> -> identifier | identifier [<expression>] with <variable> -> identifier <idarg>; <idarg> -> ε | [<expression>], or with <variable> -> identifier [[<expression>]] (the outer brackets are metasymbols of EBNF indicating that what is inside is optional)

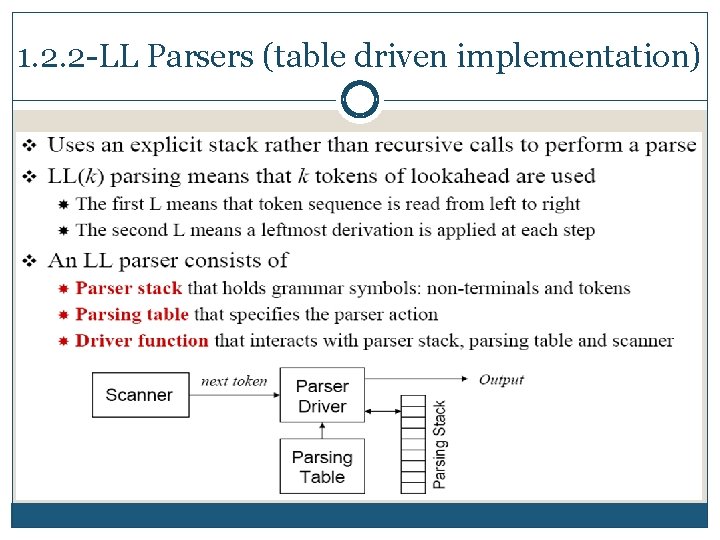

1.2.2 LL Parsers (table driven implementation)

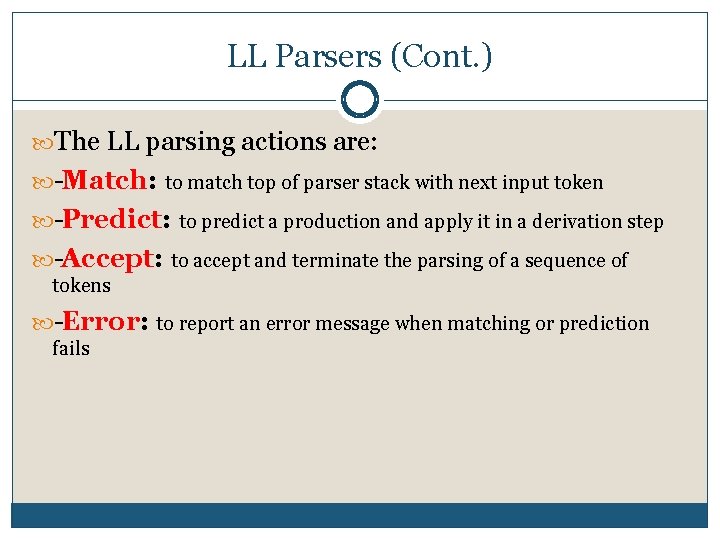

LL Parsers (Cont.) The LL parsing actions are: • Match: match the top of the parser stack with the next input token • Predict: predict a production and apply it in a derivation step • Accept: accept and terminate the parsing of a sequence of tokens • Error: report an error message when matching or prediction fails
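The four actions can be seen in a small table-driven recognizer. This is a sketch under assumptions: it uses the non-left-recursive expression grammar from the earlier slides (E -> T E', E' -> + T E' | ε, T -> F T', T' -> * F T' | ε, F -> (E) | id), encodes each token as one character with i standing for id, writes E' and T' as e and t on the stack, and the parse table in `predict` was derived by hand for this sketch rather than taken from the slides.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* True if c is a member of the (non-empty) character set `set`. */
static int in_set(const char *set, char c) {
    return c != '\0' && strchr(set, c) != NULL;
}

/* Predict: returns the RHS to expand `nonterminal` with for the given
   lookahead, "" for the erasure rule, or NULL if the table entry is empty. */
static const char *predict(char nonterminal, char lookahead) {
    switch (nonterminal) {
        case 'E': return in_set("i(", lookahead) ? "Te" : NULL;
        case 'e': if (lookahead == '+') return "+Te";
                  return in_set(")$", lookahead) ? "" : NULL;
        case 'T': return in_set("i(", lookahead) ? "Ft" : NULL;
        case 't': if (lookahead == '*') return "*Ft";
                  return in_set("+)$", lookahead) ? "" : NULL;
        case 'F': if (lookahead == 'i') return "i";
                  return lookahead == '(' ? "(E)" : NULL;
        default:  return NULL;
    }
}

/* Returns 1 if `tokens` (e.g. "i+i*i") is a sentence of the grammar. */
int ll1_parse(const char *tokens) {
    assert(tokens != NULL);
    char stack[256];
    size_t top = 0;
    stack[top++] = '$';                   /* bottom-of-stack marker */
    stack[top++] = 'E';                   /* start symbol */
    for (;;) {
        char look = *tokens ? *tokens : '$';
        char x = stack[--top];
        if (x == '$') return *tokens == '\0';         /* Accept or Error */
        if (in_set("EeTtF", x)) {
            const char *rhs = predict(x, look);
            if (rhs == NULL) return 0;                /* Error: no prediction */
            size_t len = strlen(rhs);
            if (top + len > sizeof stack) return 0;   /* input nested too deeply */
            while (len > 0) stack[top++] = rhs[--len];  /* push RHS reversed */
        } else {
            if (x != look) return 0;                  /* Error: match fails */
            tokens++;                                 /* Match */
        }
    }
}
```

The RHS is pushed in reverse so that its leftmost symbol ends up on top of the stack, which is what makes the sequence of predictions a leftmost derivation.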

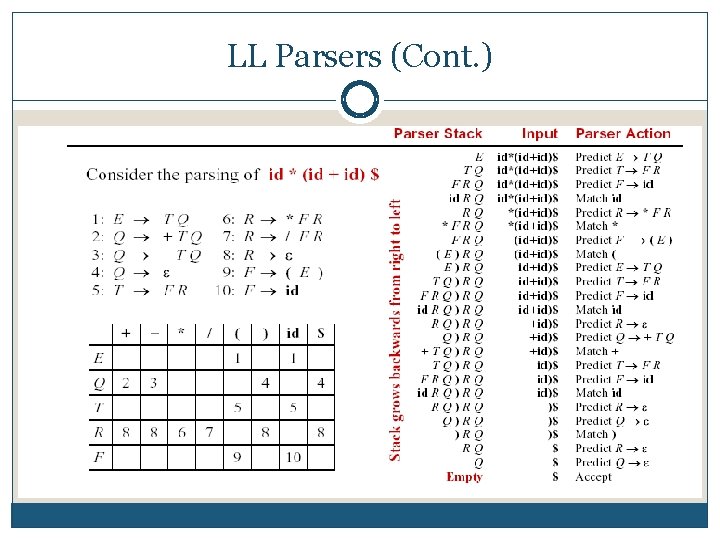

LL Parsers (Cont. )

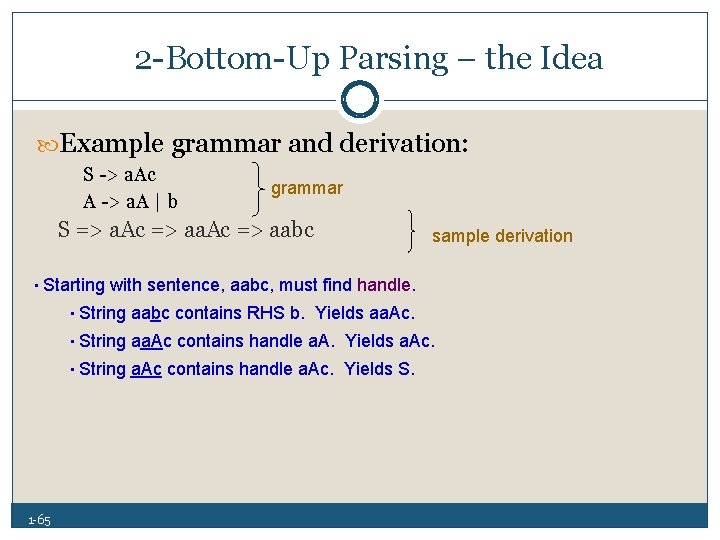

2 Bottom Up Parsing: the Idea Example grammar: S -> aAc; A -> aA | b. Sample derivation: S => aAc => aaAc => aabc • Starting with the sentence aabc, the parser must find the handle. • String aabc contains the RHS b. Reducing it yields aaAc. • String aaAc contains the handle aA. Reducing it yields aAc. • String aAc contains the handle aAc. Reducing it yields S.

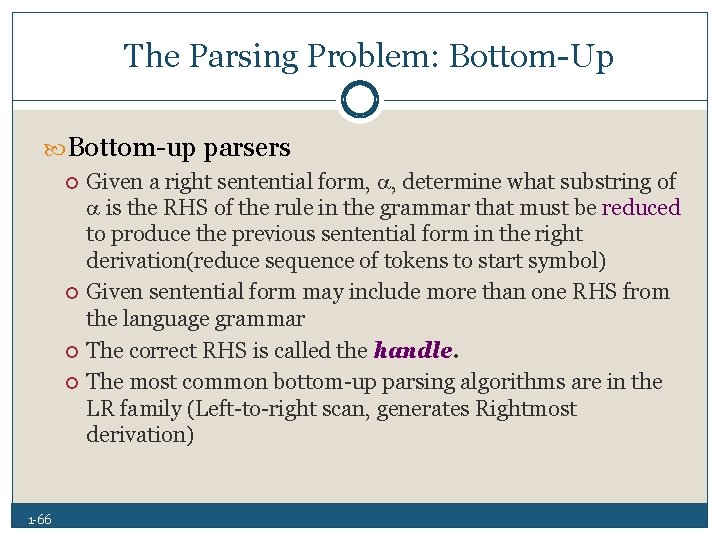

The Parsing Problem: Bottom Up Bottom-up parsers: • Given a right sentential form α, determine what substring of α is the RHS of the rule in the grammar that must be reduced to produce the previous sentential form in the rightmost derivation (reducing the sequence of tokens to the start symbol) • A given sentential form may include more than one RHS from the language grammar; the correct RHS is called the handle • The most common bottom-up parsing algorithms are in the LR family (Left-to-right scan, generates a Rightmost derivation)

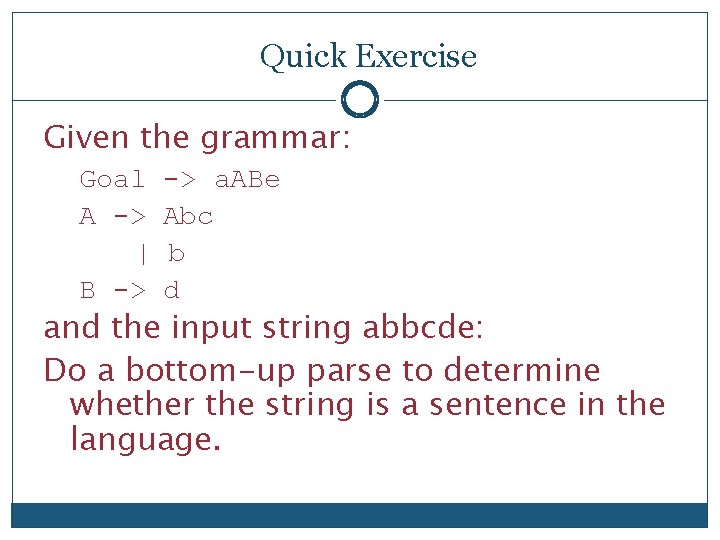

Quick Exercise Given the grammar Goal -> aABe; A -> Abc | b; B -> d and the input string abbcde: do a bottom-up parse to determine whether the string is a sentence in the language.

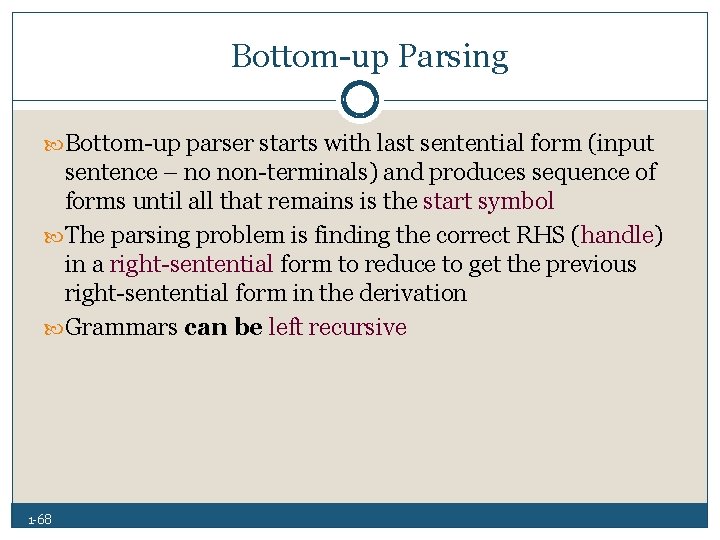

Bottom up Parsing • A bottom-up parser starts with the last sentential form (the input sentence, with no nonterminals) and produces a sequence of forms until all that remains is the start symbol • The parsing problem is finding the correct RHS (handle) in a right sentential form to reduce, to get the previous right sentential form in the derivation • Grammars can be left recursive

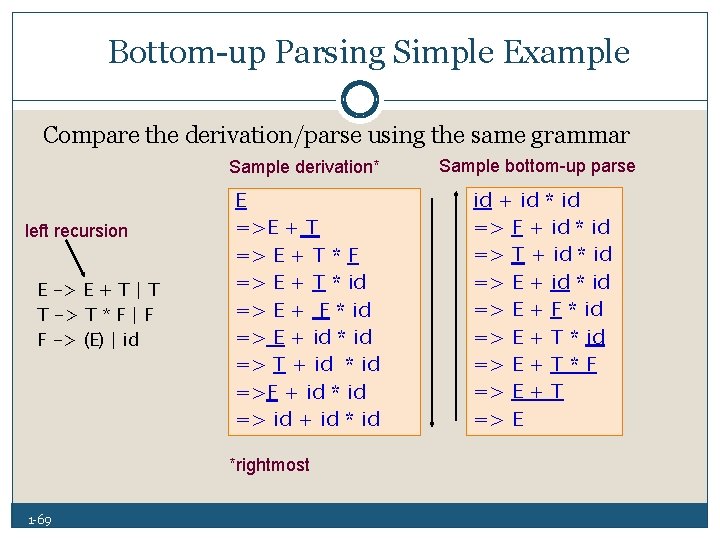

Bottom up Parsing Simple Example Compare the derivation and the parse using the same left-recursive grammar: E -> E + T | T; T -> T * F | F; F -> (E) | id • Sample rightmost derivation: E => E + T => E + T * F => E + T * id => E + F * id => E + id * id => T + id * id => F + id * id => id + id * id • Sample bottom-up parse (the same forms in reverse order): id + id * id => F + id * id => T + id * id => E + id * id => E + F * id => E + T * id => E + T * F => E + T => E

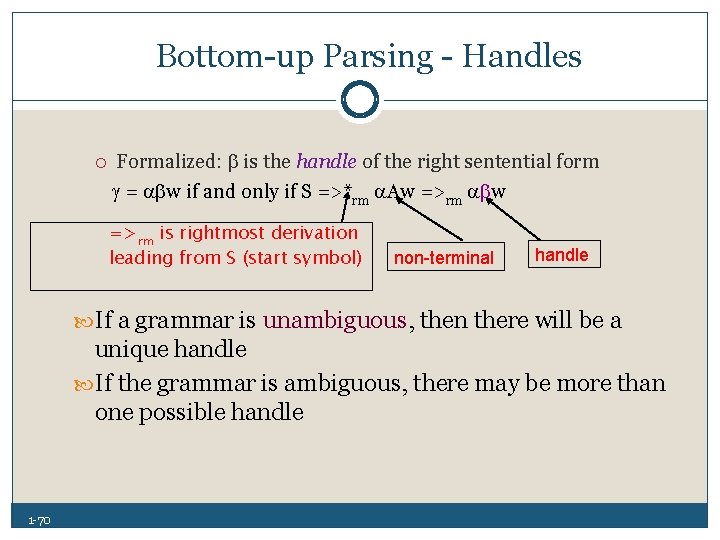

Bottom up Parsing Handles Formalized: β is the handle of the right sentential form γ = αβw if and only if S =>*rm αAw =>rm αβw, where =>rm is a rightmost derivation step leading from S (the start symbol), A is a nonterminal, and β is the handle • If a grammar is unambiguous, then there will be a unique handle • If the grammar is ambiguous, there may be more than one possible handle

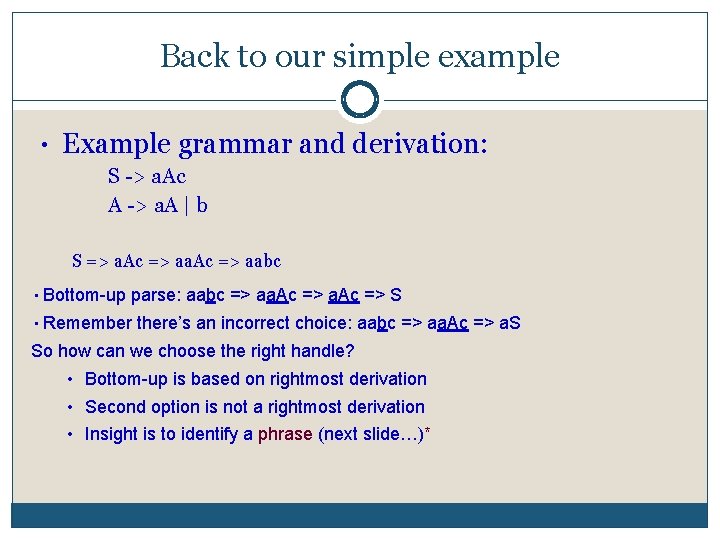

Back to our simple example • Example grammar and derivation: S -> aAc; A -> aA | b; S => aAc => aaAc => aabc • Bottom-up parse: aabc => aaAc => aAc => S • Remember there's an incorrect choice: aabc => aaAc => aS. So how can we choose the right handle? • Bottom-up parsing is based on a rightmost derivation • The second option is not a rightmost derivation • The insight is to identify a phrase (next slide…)

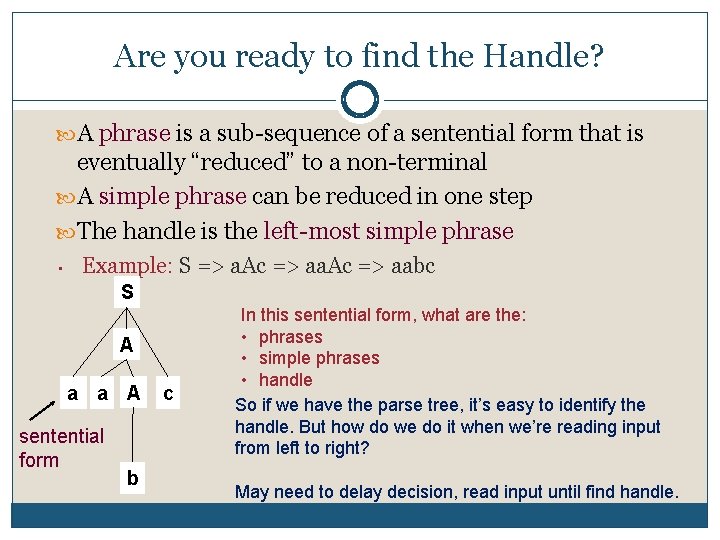

Are you ready to find the Handle? • A phrase is a subsequence of a sentential form that is eventually "reduced" to a nonterminal • A simple phrase can be reduced in one step • The handle is the leftmost simple phrase • Example: S => aAc => aaAc => aabc (figure: parse tree in which S has children a, A, c; that A has children a, A; the inner A has child b) • In this sentential form, what are the phrases, the simple phrases, and the handle? • So if we have the parse tree, it's easy to identify the handle. But how do we do it when we're reading input from left to right? We may need to delay the decision and read input until we find the handle.

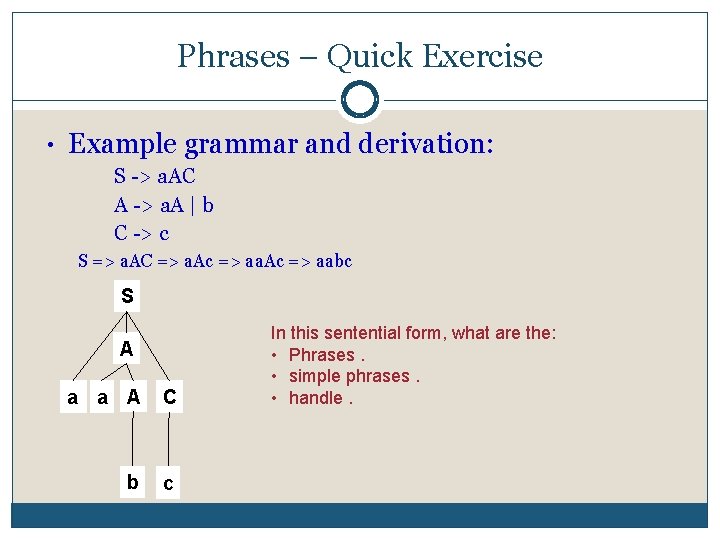

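Phrases: Quick Exercise • Example grammar and derivation: S -> aAC; A -> aA | b; C -> c; S => aAC => aAc => aaAc => aabc (figure: parse tree in which S has children a, A, C; that A has children a, A; the inner A has child b; C has child c) • In this sentential form, what are the phrases, the simple phrases, and the handle?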

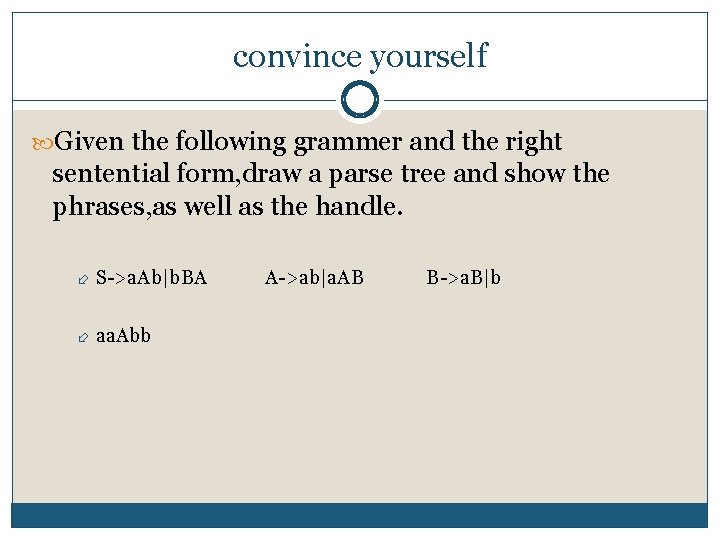

Convince yourself Given the following grammar and the right sentential form, draw a parse tree and show the phrases, as well as the handle. Grammar: S -> aAb | bBA; A -> ab | aAB; B -> aB | b. Right sentential form: aaAbb

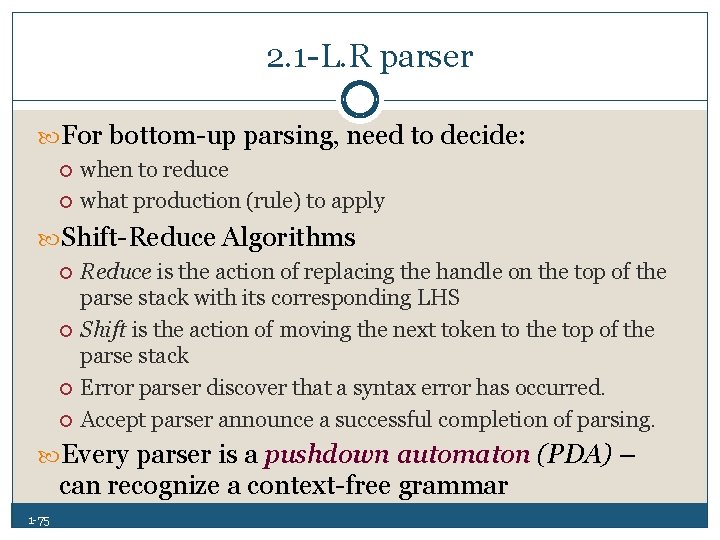

2.1 LR parser For bottom-up parsing, the parser needs to decide when to reduce and what production (rule) to apply. Shift-Reduce Algorithms: • Reduce is the action of replacing the handle on the top of the parse stack with its corresponding LHS • Shift is the action of moving the next token to the top of the parse stack • Error: the parser discovers that a syntax error has occurred • Accept: the parser announces a successful completion of parsing • Every parser is a pushdown automaton (PDA), which can recognize a context-free language

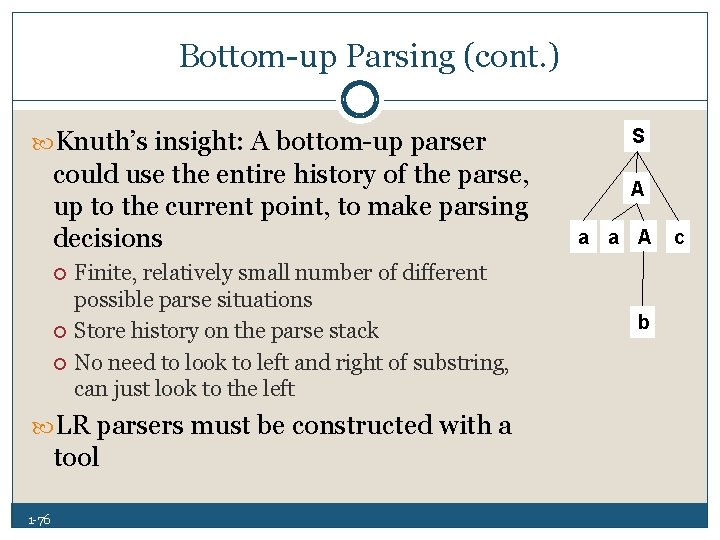

Bottom up Parsing (cont.) Knuth's insight: • A bottom-up parser could use the entire history of the parse, up to the current point, to make parsing decisions • There is a finite, relatively small number of different possible parse situations, so the history can be stored on the parse stack • There is no need to look to the left and right of the substring; the parser can just look to the left • LR parsers must be constructed with a tool • (Figure: parse tree for aabc.)

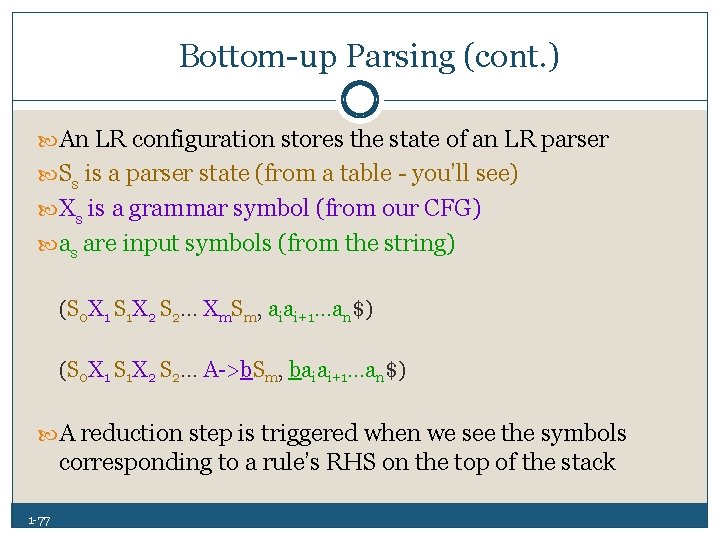

Bottom up Parsing (cont.) An LR configuration stores the state of an LR parser: (S0 X1 S1 X2 S2 … Xm Sm, ai ai+1 … an $) • The Ss are parser states (from a table you'll see) • The Xs are grammar symbols (from our CFG) • The as are input symbols (from the string) • Annotated example on the slide: (S0 X1 S1 X2 S2 … A -> b Sm, b ai ai+1 … an $) • A reduction step is triggered when we see the symbols corresponding to a rule's RHS on the top of the stack

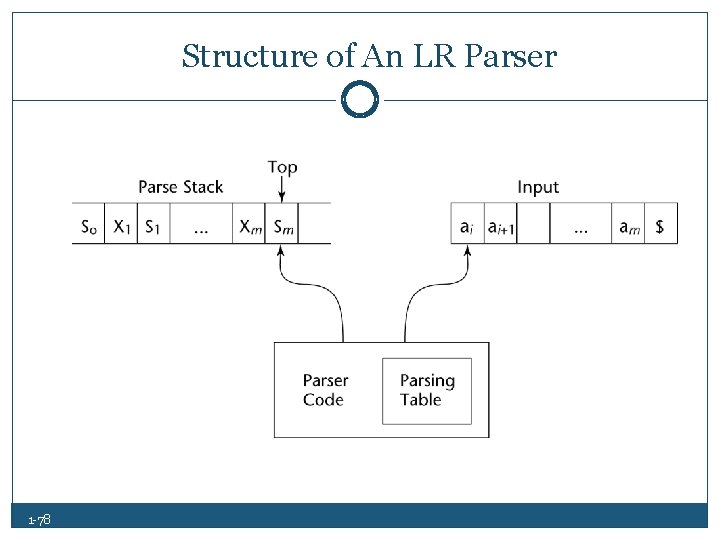

Structure of An LR Parser

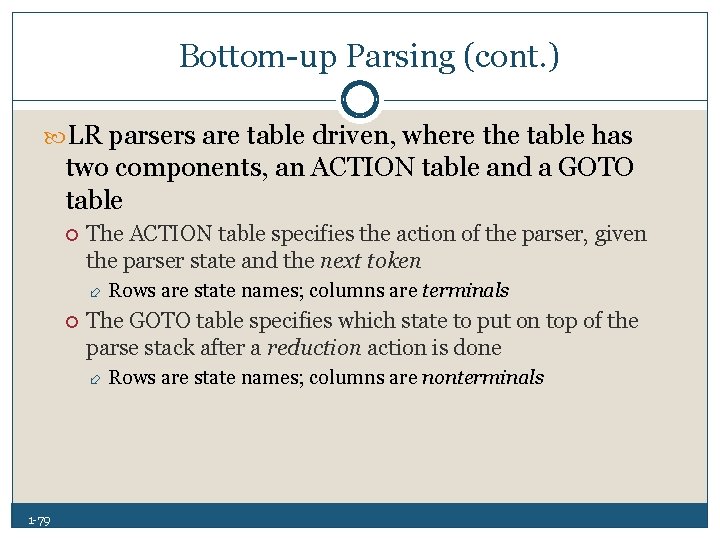

Bottom up Parsing (cont.) LR parsers are table driven, where the table has two components, an ACTION table and a GOTO table • The ACTION table specifies the action of the parser, given the parser state and the next token; rows are state names, columns are terminals • The GOTO table specifies which state to put on top of the parse stack after a reduction action is done; rows are state names, columns are nonterminals

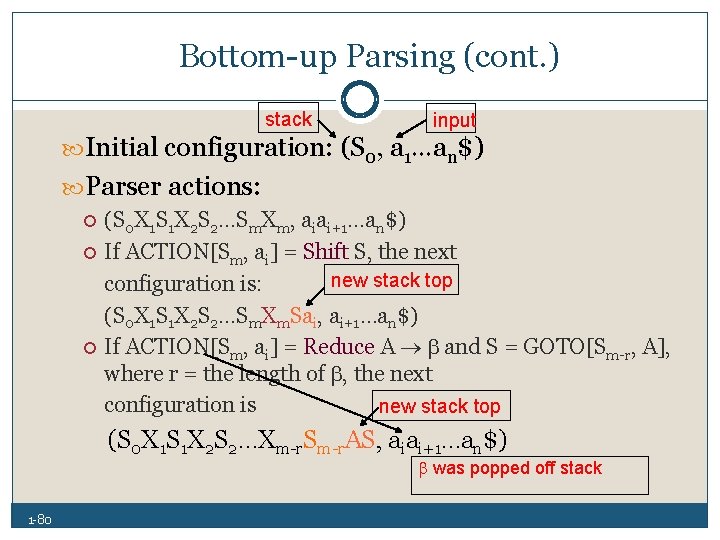

Bottom up Parsing (cont.) A configuration is a pair (stack, input). Initial configuration: (S0, a1 … an $). Parser actions from the configuration (S0 X1 S1 X2 S2 … Xm Sm, ai ai+1 … an $): • If ACTION[Sm, ai] = Shift S, the next configuration is (S0 X1 S1 X2 S2 … Xm Sm ai S, ai+1 … an $), with S as the new stack top • If ACTION[Sm, ai] = Reduce A -> β and S = GOTO[Sm-r, A], where r = the length of β, the next configuration is (S0 X1 S1 X2 S2 … Xm-r Sm-r A S, ai ai+1 … an $); β was popped off the stack and S is the new stack top

Bottom up Parsing (cont.) Parser actions (continued): • If ACTION[Sm, ai] = Accept, the parse is complete and no errors were found • If ACTION[Sm, ai] = Error, the parser calls an error-handling routine

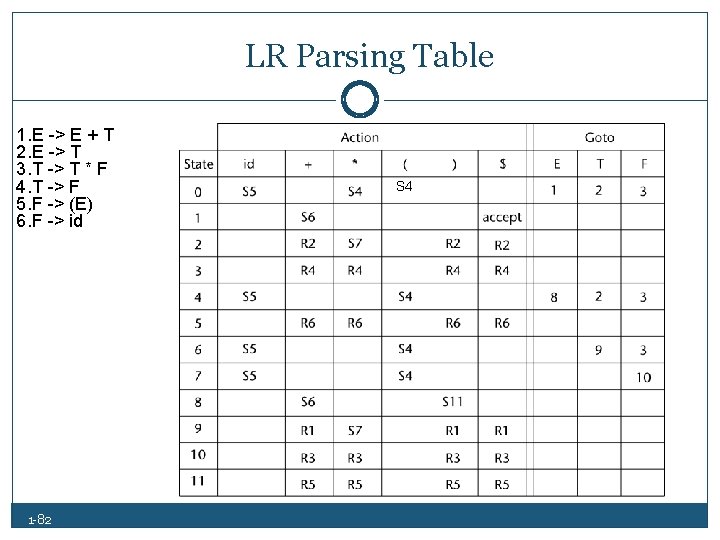

LR Parsing Table 1. E -> E + T 2. E -> T 3. T -> T * F 4. T -> F 5. F -> (E) 6. F -> id

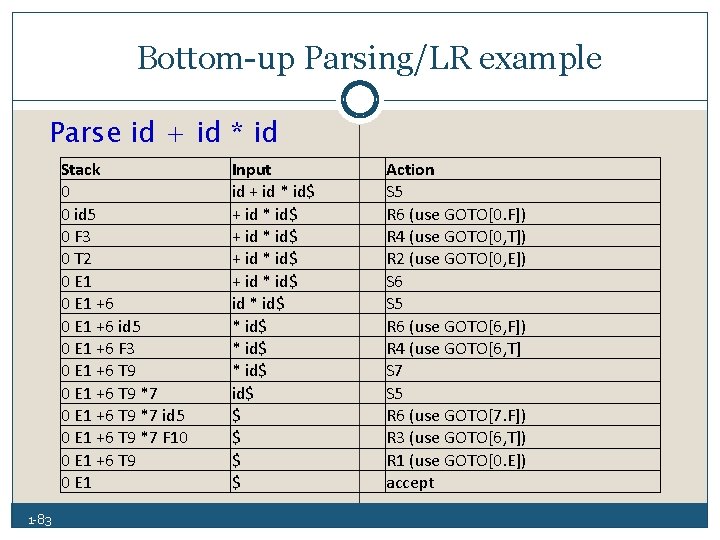

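Bottom up Parsing/LR example Parse id + id * id

| Stack | Input | Action |
| --- | --- | --- |
| 0 | id + id * id $ | S5 |
| 0 id 5 | + id * id $ | R6 (use GOTO[0, F]) |
| 0 F 3 | + id * id $ | R4 (use GOTO[0, T]) |
| 0 T 2 | + id * id $ | R2 (use GOTO[0, E]) |
| 0 E 1 | + id * id $ | S6 |
| 0 E 1 + 6 | id * id $ | S5 |
| 0 E 1 + 6 id 5 | * id $ | R6 (use GOTO[6, F]) |
| 0 E 1 + 6 F 3 | * id $ | R4 (use GOTO[6, T]) |
| 0 E 1 + 6 T 9 | * id $ | S7 |
| 0 E 1 + 6 T 9 * 7 | id $ | S5 |
| 0 E 1 + 6 T 9 * 7 id 5 | $ | R6 (use GOTO[7, F]) |
| 0 E 1 + 6 T 9 * 7 F 10 | $ | R3 (use GOTO[6, T]) |
| 0 E 1 + 6 T 9 | $ | R1 (use GOTO[0, E]) |
| 0 E 1 | $ | accept |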
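The trace above can be reproduced with a short table-driven driver. This is a sketch under assumptions: the slide's parsing table survives only as an image, so the ACTION and GOTO entries below are the commonly published SLR table for this six-rule grammar, which agrees with every state number in the trace. Tokens are encoded as single characters (i for id), the end of the string plays the role of $, and only states are kept on the stack since the grammar symbols are implied by them.

```c
#include <assert.h>
#include <stddef.h>

#define ACC 99   /* accept marker; positive n = shift to state n,
                    negative -n = reduce by rule n, 0 = error */

/* Columns: id  +  *  (  )  $ */
static const int ACTION[12][6] = {
    /*  0 */ { 5,  0,  0, 4,  0,   0 },
    /*  1 */ { 0,  6,  0, 0,  0, ACC },
    /*  2 */ { 0, -2,  7, 0, -2,  -2 },
    /*  3 */ { 0, -4, -4, 0, -4,  -4 },
    /*  4 */ { 5,  0,  0, 4,  0,   0 },
    /*  5 */ { 0, -6, -6, 0, -6,  -6 },
    /*  6 */ { 5,  0,  0, 4,  0,   0 },
    /*  7 */ { 5,  0,  0, 4,  0,   0 },
    /*  8 */ { 0,  6,  0, 0, 11,   0 },
    /*  9 */ { 0, -1,  7, 0, -1,  -1 },
    /* 10 */ { 0, -3, -3, 0, -3,  -3 },
    /* 11 */ { 0, -5, -5, 0, -5,  -5 },
};

/* Columns: E  T  F  (0 = no entry) */
static const int GOTO[12][3] = {
    { 1, 2, 3 }, { 0, 0, 0 }, { 0, 0, 0 }, { 0, 0, 0 },
    { 8, 2, 3 }, { 0, 0, 0 }, { 0, 9, 3 }, { 0, 0, 10 },
    { 0, 0, 0 }, { 0, 0, 0 }, { 0, 0, 0 }, { 0, 0, 0 },
};

/* Rules 1..6: E->E+T, E->T, T->T*F, T->F, F->(E), F->id (index 0 unused). */
static const int LHS[7]     = { 0, 0, 0, 1, 1, 2, 2 };  /* GOTO column of LHS */
static const int RHS_LEN[7] = { 0, 3, 1, 3, 1, 3, 1 };

static int column(char c) {
    switch (c) {
        case 'i':  return 0;
        case '+':  return 1;
        case '*':  return 2;
        case '(':  return 3;
        case ')':  return 4;
        case '\0': return 5;   /* end of input acts as $ */
        default:   return -1;
    }
}

/* Returns 1 if `tokens` (e.g. "i+i*i") is accepted, 0 on a syntax error. */
int lr_parse(const char *tokens) {
    assert(tokens != NULL);
    int stack[256];
    size_t top = 0;
    stack[top] = 0;                               /* initial state S0 */
    for (;;) {
        int col = column(*tokens);
        if (col < 0) return 0;                    /* unknown character */
        int act = ACTION[stack[top]][col];
        if (act == ACC) return 1;                 /* Accept */
        if (act == 0) return 0;                   /* Error */
        if (act > 0) {                            /* Shift */
            if (top + 1 >= 256) return 0;         /* input nested too deeply */
            stack[++top] = act;
            tokens++;
        } else {                                  /* Reduce by rule -act */
            int rule = -act;
            top -= (size_t)RHS_LEN[rule];         /* pop the handle */
            int next = GOTO[stack[top]][LHS[rule]];
            stack[++top] = next;                  /* push the GOTO state */
        }
    }
}
```

Running `lr_parse("i+i*i")` visits the same states as the table above: 5, 3, 2, 1 for the first id, then 6, 5, 3, 9, 7, 5, 10, back to 9 and 1, and finally accept.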

Bottom up Parsing / LR parsers LR parsers use a relatively small program and a parsing table. Advantages of LR parsers: • They will work for nearly all grammars that describe programming languages • They work on a larger class of grammars than other bottom-up algorithms, but are as efficient as any other bottom-up parser • They can detect syntax errors as soon as it is possible in a left-to-right scan • The LR class of grammars is a superset of the class parsable by LL parsers
