Lex and Yacc COP 3402 General Compiler Infrastructure



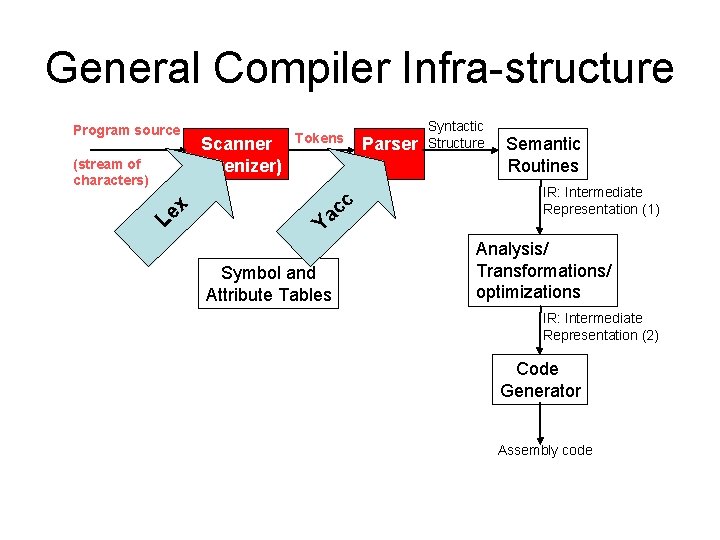

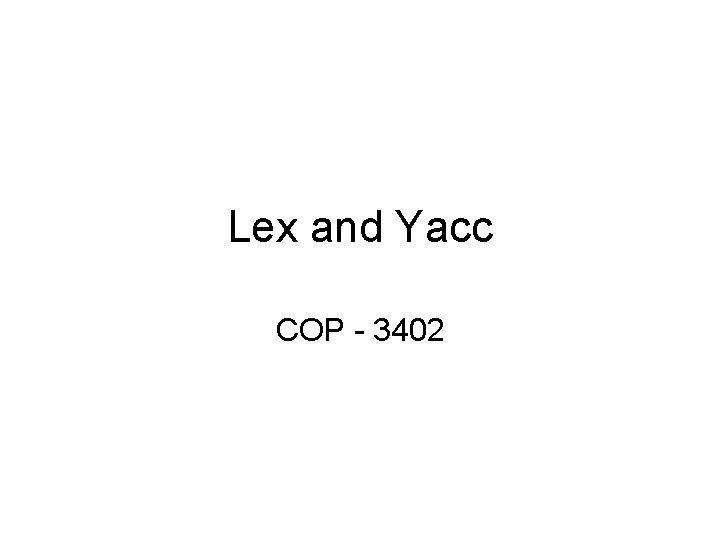

General Compiler Infrastructure

• Program source (stream of characters)
• Scanner (tokenizer), generated by Lex
• Tokens
• Parser, generated by Yacc
• Syntactic structure
• Semantic routines
• IR: Intermediate Representation (1)
• Analysis / transformations / optimizations
• IR: Intermediate Representation (2)
• Code generator
• Assembly code

Also in the diagram: Symbol and Attribute Tables

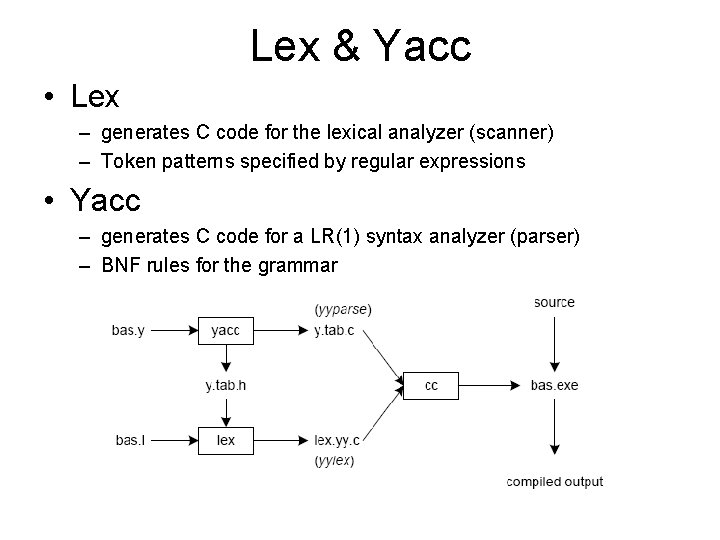

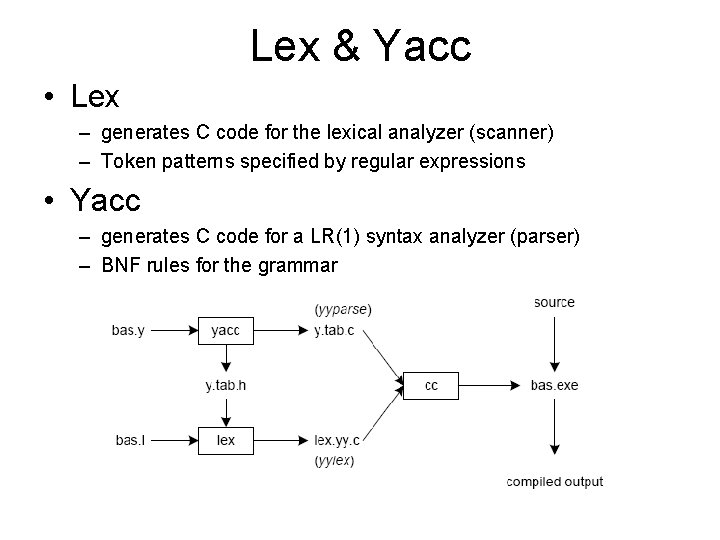

Lex & Yacc

• Lex
  – generates C code for the lexical analyzer (scanner)
  – token patterns are specified by regular expressions
• Yacc
  – generates C code for an LALR(1) syntax analyzer (parser)
  – the grammar is specified by BNF rules

Lex

lex is a program (generator) that generates lexical analyzers and is widely used on Unix. It is mostly used together with the Yacc parser generator. It was written by Eric Schmidt and Mike Lesk. It reads an input stream that specifies the lexical analyzer and outputs source code implementing that analyzer in the C programming language. In short, Lex reads patterns (regular expressions) and produces C code for a lexical analyzer that scans the input for them, for example for identifiers.
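To make "scans for identifiers" concrete, here is a minimal hand-written sketch of the kind of state machine a pattern boils down to. It is not the code Lex emits (Lex produces a table-driven automaton). The pattern `[a-z][a-z0-9]*` and the names `dfa_step` and `match_identifier` are assumptions made for illustration.

```c
#include <stddef.h>

/* States of a tiny automaton for the assumed pattern [a-z][a-z0-9]* */
enum { STATE_START, STATE_IN_IDENT, STATE_REJECT };

/* One transition of the automaton (hypothetical helper). */
int dfa_step(int state, char ch)
{
    int is_letter = (ch >= 'a' && ch <= 'z');
    int is_digit  = (ch >= '0' && ch <= '9');

    switch (state) {
    case STATE_START:
        return is_letter ? STATE_IN_IDENT : STATE_REJECT;
    case STATE_IN_IDENT:
        return (is_letter || is_digit) ? STATE_IN_IDENT : STATE_REJECT;
    default:
        return STATE_REJECT;
    }
}

/* Length of the longest identifier at the start of text, or 0 if none. */
size_t match_identifier(const char *text)
{
    int state = STATE_START;
    size_t length = 0;

    while (text[length] != '\0') {
        int next = dfa_step(state, text[length]);
        if (next == STATE_REJECT)
            break;                 /* longest match ends here */
        state = next;
        length++;
    }
    return state == STATE_IN_IDENT ? length : 0;
}
```

The loop keeps consuming characters for as long as the automaton accepts them, which mirrors the longest-match behaviour of a generated scanner.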

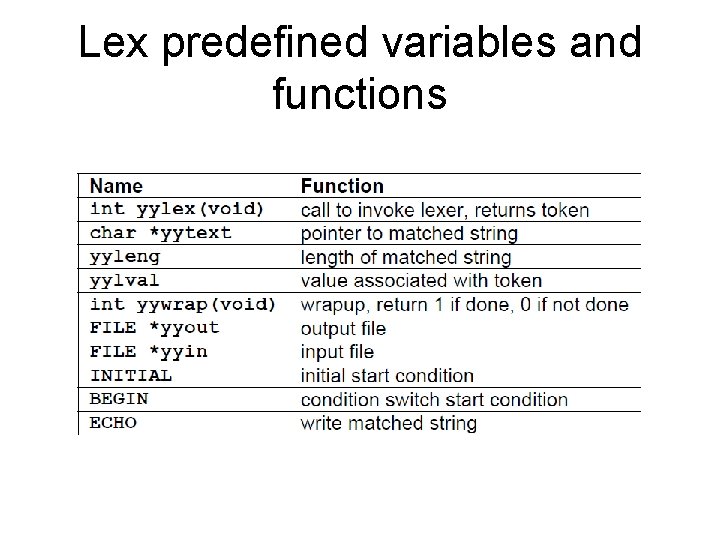

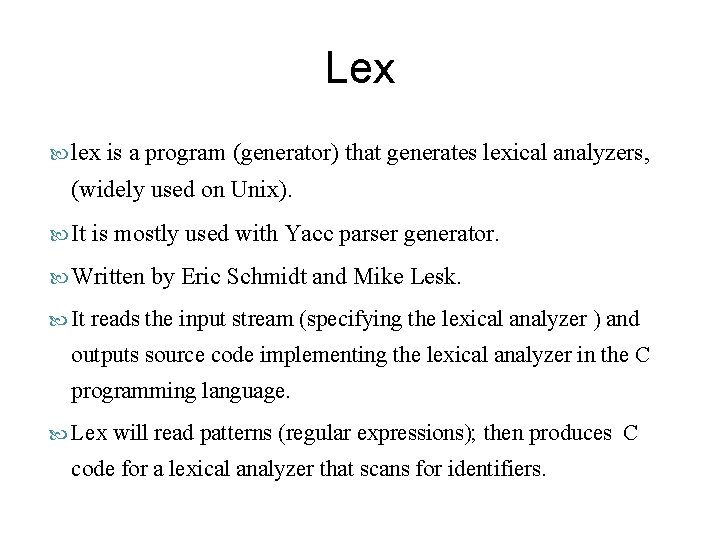

Lex predefined variables and functions

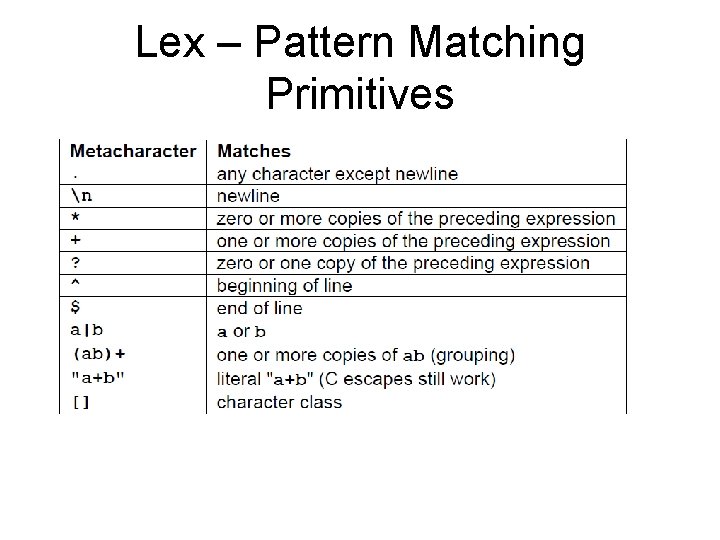

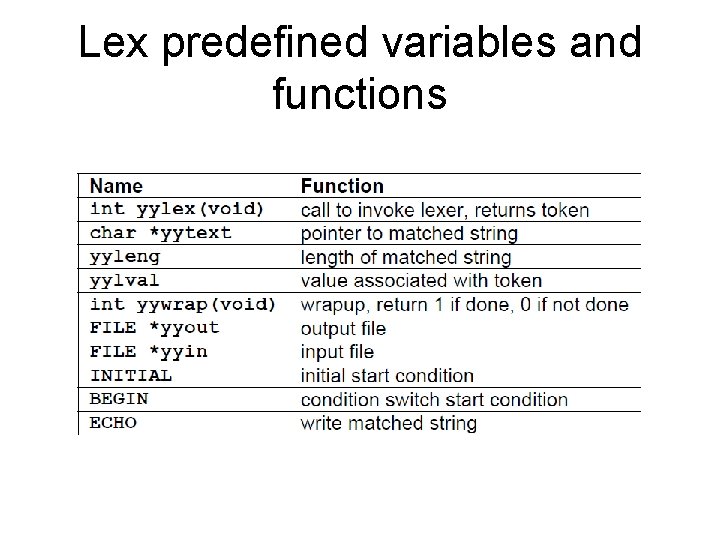

Lex – Pattern Matching Primitives

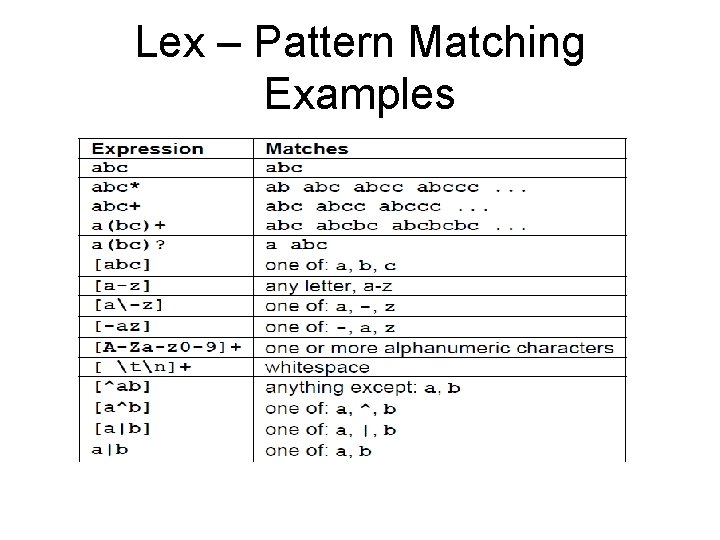

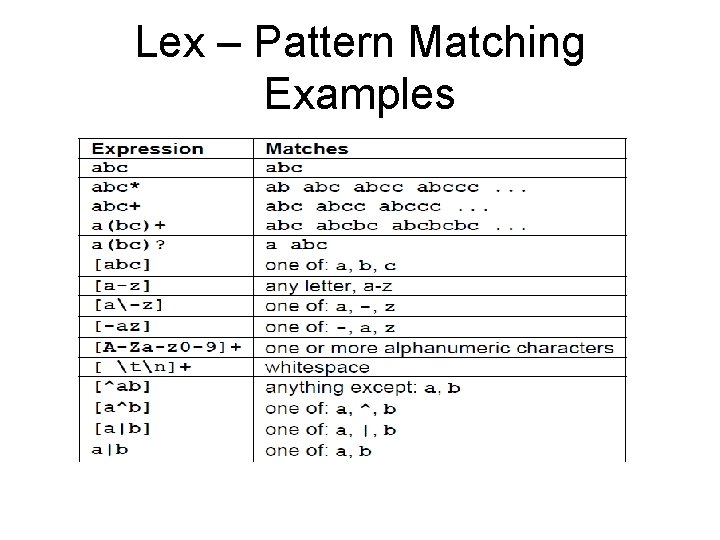

Lex – Pattern Matching Examples

Example: Simple Calculator

• Computes the basic arithmetic operations
• Allows declaration of variables
• Enough to illustrate the basic structure of Lex and Yacc programs

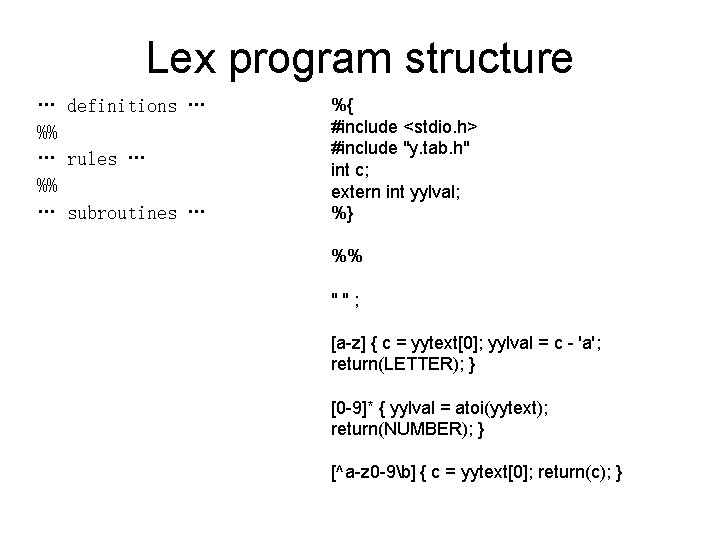

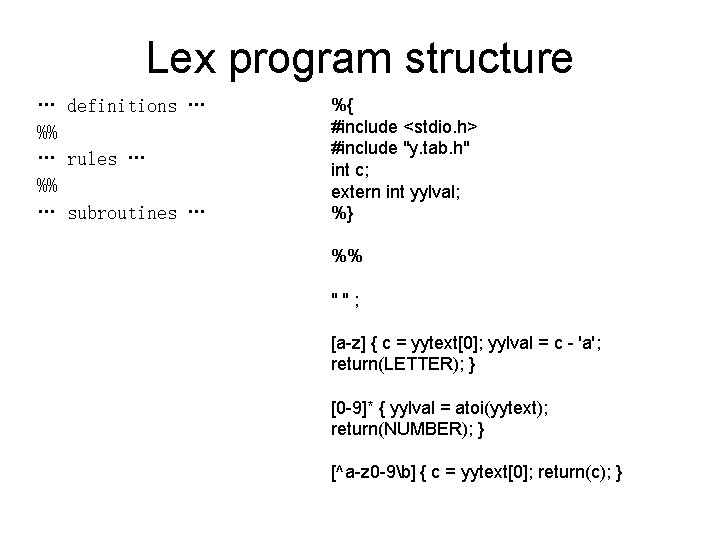

Lex program structure

    … definitions …
    %%
    … rules …
    %%
    … subroutines …

Example:

    %{
    #include <stdio.h>
    #include <stdlib.h>   /* atoi */
    #include "y.tab.h"
    int c;
    extern int yylval;
    %}
    %%
    " "          ;
    [a-z]        { c = yytext[0]; yylval = c - 'a'; return(LETTER); }
    [0-9]+       { yylval = atoi(yytext); return(NUMBER); }
    [^a-z0-9\b]  { c = yytext[0]; return(c); }

The number pattern uses + rather than * so that it cannot match the empty string.
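For comparison, the four rules above can be approximated by a hand-written scanner. This is only a sketch of the behaviour, not what Lex generates. The function name `next_token` and the token codes are hypothetical (a real build takes `LETTER` and `NUMBER` from `y.tab.h`), and the letter test assumes an ASCII-like character set.

```c
#include <ctype.h>
#include <stdlib.h>

/* Hypothetical token codes; a real build would use the ones in y.tab.h. */
enum { TOK_EOF = 0, TOK_LETTER = 258, TOK_NUMBER = 259 };

/* Scan one token at *cursor, advance *cursor past it, and store the
   token's attribute (the role played by yylval) in *value. */
int next_token(const char **cursor, int *value)
{
    const char *p = *cursor;

    while (*p == ' ')                     /* rule: " " ;  (skip blanks) */
        p++;

    if (*p == '\0') {                     /* end of input */
        *cursor = p;
        return TOK_EOF;
    }
    if (*p >= 'a' && *p <= 'z') {         /* rule: [a-z] */
        *value = *p - 'a';
        *cursor = p + 1;
        return TOK_LETTER;
    }
    if (isdigit((unsigned char)*p)) {     /* rule: a run of digits */
        char *end;
        *value = (int)strtol(p, &end, 10);
        *cursor = end;
        return TOK_NUMBER;
    }
    *value = 0;                           /* catch-all rule: the character */
    *cursor = p + 1;                      /* itself is the token code      */
    return (unsigned char)*p;
}
```

Calling `next_token` repeatedly on `"a = 42+b"` would yield a letter with attribute 0, the character `=`, the number 42, the character `+`, a letter with attribute 1, and then the end-of-input code.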


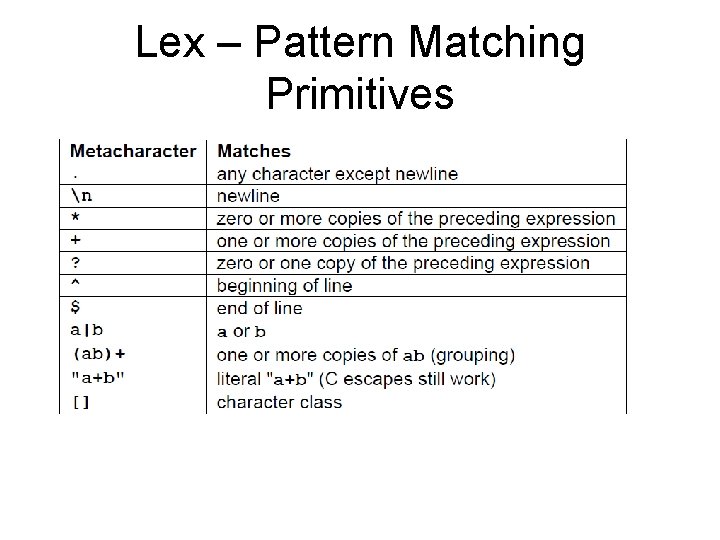

Pattern Matching and Action

Match a character in the a-z range and place the offset c - 'a' in the stack (through yylval):

    [a-z]   { c = yytext[0]; yylval = c - 'a'; return(LETTER); }

Match a non-negative integer (a sequence of one or more 0-9 digits) and place the integer value in the stack (through yylval):

    [0-9]+  { yylval = atoi(yytext); return(NUMBER); }

In both actions, yytext is the buffer that holds the matched text.
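The two actions can be read as ordinary C functions that receive the matched buffer, set a shared attribute variable, and return a token code. The sketch below mimics that contract outside of Lex. The names `token_value`, `on_letter`, and `on_number` and the token codes are hypothetical stand-ins for `yylval`, the rule actions, and the codes from `y.tab.h`.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical token codes standing in for LETTER and NUMBER. */
enum { LETTER_TOKEN = 258, NUMBER_TOKEN = 259 };

int token_value;   /* plays the role of yylval */

/* Action for [a-z]; matched_text plays the role of yytext. */
int on_letter(const char *matched_text)
{
    assert(matched_text[0] >= 'a' && matched_text[0] <= 'z');
    token_value = matched_text[0] - 'a';   /* 'a' -> 0, ..., 'z' -> 25 */
    return LETTER_TOKEN;
}

/* Action for a run of digits: convert the text to its integer value. */
int on_number(const char *matched_text)
{
    token_value = atoi(matched_text);
    return NUMBER_TOKEN;
}
```

The offset computed for a letter is later usable as an index into a 26-element table, one slot per variable name.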

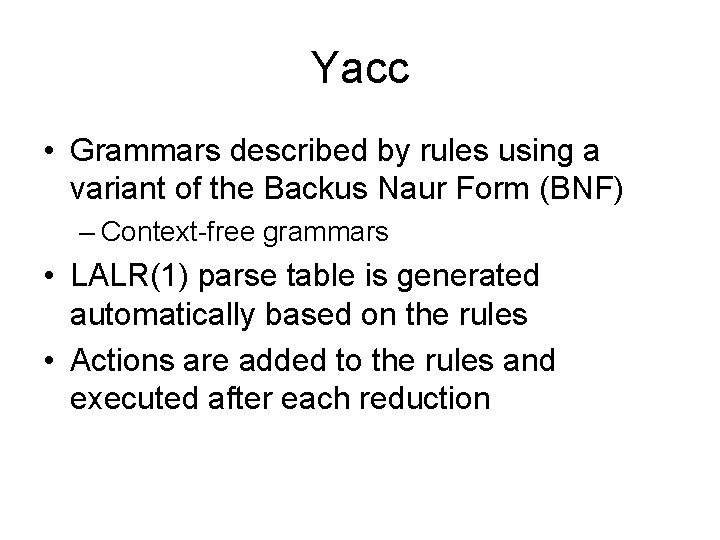

Yacc

• Grammars are described by rules using a variant of the Backus-Naur Form (BNF)
  – Context-free grammars
• An LALR(1) parse table is generated automatically based on the rules
• Actions are added to the rules and executed after each reduction

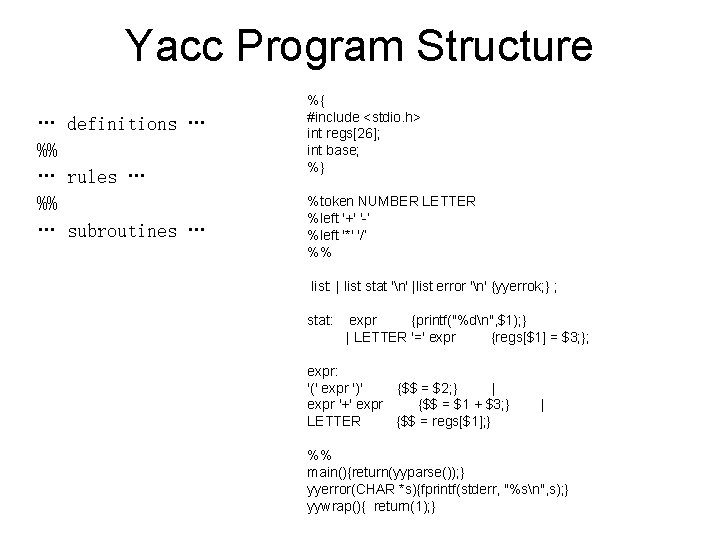

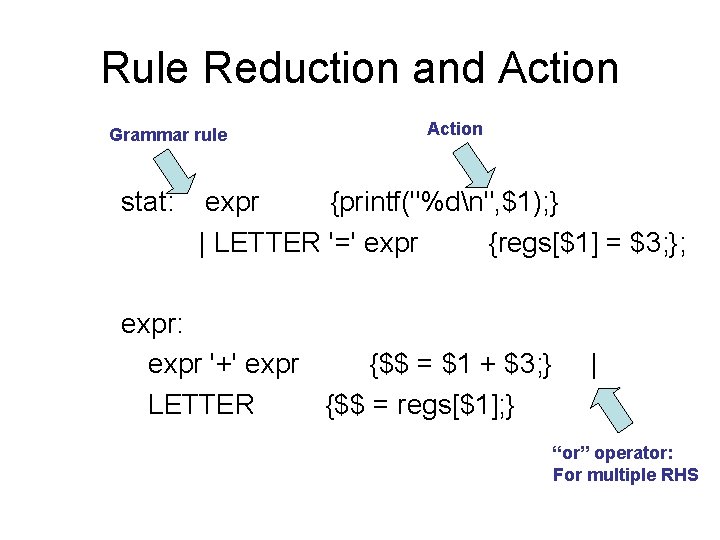

Yacc Program Structure

    … definitions …
    %%
    … rules …
    %%
    … subroutines …

Example:

    %{
    #include <stdio.h>
    int regs[26];
    int base;
    int yylex(void);
    int yyerror(char *s);
    %}
    %token NUMBER LETTER
    %left '+' '-'
    %left '*' '/'
    %%
    list:  /* empty */
         | list stat '\n'
         | list error '\n'   { yyerrok; }
         ;
    stat:  expr              { printf("%d\n", $1); }
         | LETTER '=' expr   { regs[$1] = $3; }
         ;
    expr:  '(' expr ')'      { $$ = $2; }
         | expr '+' expr     { $$ = $1 + $3; }
         | LETTER            { $$ = regs[$1]; }
         ;
    %%
    int main(void)        { return(yyparse()); }
    int yyerror(char *s)  { fprintf(stderr, "%s\n", s); return 0; }
    int yywrap(void)      { return(1); }
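To see what these rules and actions compute, the sketch below evaluates the same statements with a hand-written recursive-descent evaluator. This is a different technique from the LALR(1) parser Yacc generates and is shown only for comparison. The names `cursor`, `parse_operand`, `parse_expr`, and `eval_statement` are hypothetical, and the sketch also accepts numbers as operands, which is an assumption: the lexer returns NUMBER, but the grammar excerpt shows no rule for it.

```c
#include <ctype.h>
#include <stdio.h>
#include <stdlib.h>

int regs[26];          /* one slot per variable a..z, as in the slide */
const char *cursor;    /* hypothetical: current position in the input */

int parse_expr(void);

void skip_blanks(void)
{
    while (*cursor == ' ')
        cursor++;
}

/* operand: '(' expr ')' | LETTER | NUMBER (NUMBER is an assumption) */
int parse_operand(void)
{
    skip_blanks();
    if (*cursor == '(') {
        cursor++;                          /* consume '(' */
        int value = parse_expr();
        skip_blanks();
        if (*cursor == ')')
            cursor++;                      /* consume ')' */
        return value;                      /* like $$ = $2 */
    }
    if (*cursor >= 'a' && *cursor <= 'z')
        return regs[*cursor++ - 'a'];      /* like $$ = regs[$1] */
    if (isdigit((unsigned char)*cursor)) {
        char *end;
        long value = strtol(cursor, &end, 10);
        cursor = end;
        return (int)value;
    }
    return 0;                              /* error recovery omitted */
}

/* expr: operand ('+' operand)*, evaluated left to right like %left '+' */
int parse_expr(void)
{
    int value = parse_operand();
    for (;;) {
        skip_blanks();
        if (*cursor != '+')
            return value;
        cursor++;                          /* consume '+' */
        value += parse_operand();          /* like $$ = $1 + $3 */
    }
}

/* stat: LETTER '=' expr | expr. Returns the value of the statement. */
int eval_statement(const char *line)
{
    cursor = line;
    skip_blanks();
    if (*cursor >= 'a' && *cursor <= 'z') {
        const char *after = cursor + 1;
        while (*after == ' ')
            after++;
        if (*after == '=') {
            int index = *cursor - 'a';
            cursor = after + 1;
            regs[index] = parse_expr();    /* like regs[$1] = $3 */
            return regs[index];
        }
    }
    int value = parse_expr();
    printf("%d\n", value);                 /* stat: expr prints the result */
    return value;
}
```

Even for this tiny grammar the hand-written version needs explicit lookahead to tell an assignment from an expression, which the generated parser handles from the rules alone.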

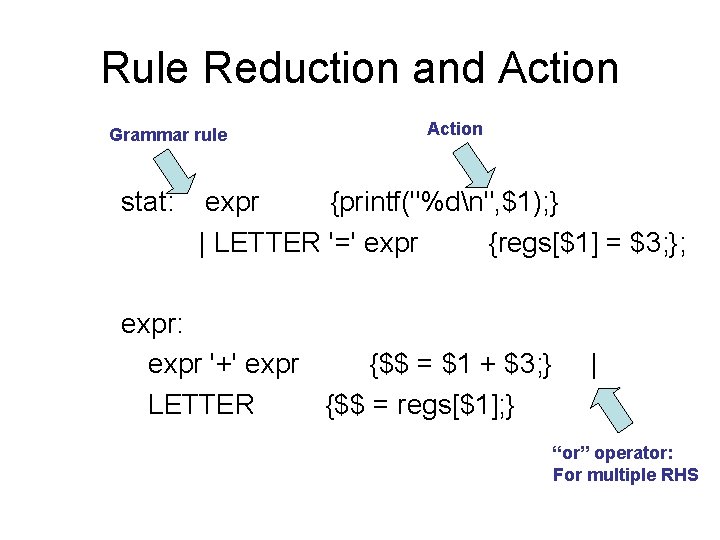

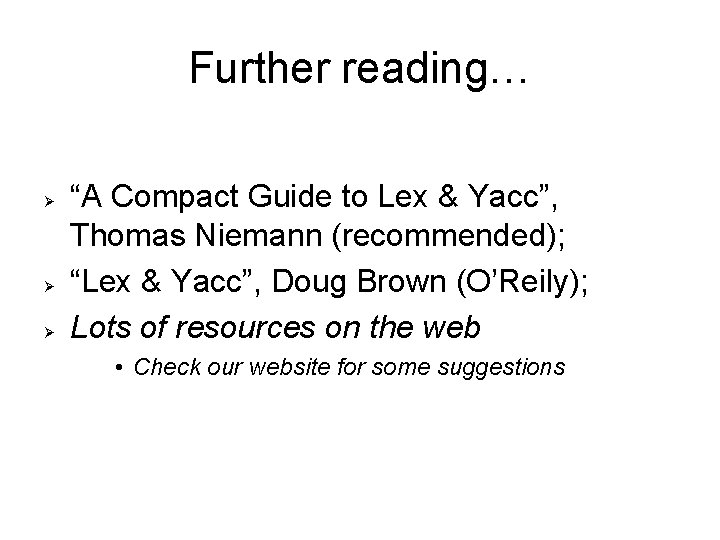

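Rule Reduction and Action

Each grammar rule (left) is paired with an action (right) that runs when the rule is reduced:

    stat:  expr              { printf("%d\n", $1); }
         | LETTER '=' expr   { regs[$1] = $3; }
         ;
    expr:  expr '+' expr     { $$ = $1 + $3; }
         | LETTER            { $$ = regs[$1]; }
         ;

In an action, $$ is the value of the left-hand side and $n is the value of the n-th symbol on the right-hand side. The | is the "or" operator, used when a nonterminal has multiple right-hand sides (RHS).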
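One way to picture a reduction is as an operation on a value stack: every symbol on the right-hand side owns one slot, and the action replaces those slots with a single slot for the left-hand side. The sketch below models only that bookkeeping for the rule `expr: expr '+' expr`. The names `value_stack`, `push_value`, and `reduce_add` are hypothetical, and a real Yacc parser manages its stacks internally.

```c
#include <assert.h>

#define VALUE_STACK_MAX 64

int value_stack[VALUE_STACK_MAX];  /* hypothetical stand-in for the parser's value stack */
int value_count = 0;               /* number of values currently on the stack */

/* Push the attribute of a symbol (for a token, what the lexer left in yylval). */
void push_value(int value)
{
    assert(value_count < VALUE_STACK_MAX);
    value_stack[value_count++] = value;
}

/* Reduce by  expr: expr '+' expr  { $$ = $1 + $3; }  */
int reduce_add(void)
{
    assert(value_count >= 3);
    int first = value_stack[value_count - 3];   /* $1 */
    int third = value_stack[value_count - 1];   /* $3 */
    value_count -= 3;                           /* pop the three RHS slots */
    push_value(first + third);                  /* push $$ */
    return value_stack[value_count - 1];
}
```

The middle slot belongs to the '+' token; its value is never read, which is why the action only mentions $1 and $3.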

Further reading…

• "A Compact Guide to Lex & Yacc", Thomas Niemann (recommended)
• "Lex & Yacc", John Levine, Tony Mason, and Doug Brown (O'Reilly)
• Lots of resources on the web
  – Check our website for some suggestions

Conclusions

• Yacc and Lex are very helpful for building the compiler front-end
• A lot of time is saved compared to hand-implementing the parser and scanner
• They both work as a mixture of "rules" and "C code"
• C code is generated and merged with the rest of the compiler code