Leveraging Dynamically Scalable Cores in CMPs Thesis Proposal

5 May 2009
Dan Gibson

Scalability (defn.)
SCALABLE. Pronunciation: 'skā-lə-bəl. Function: adjective.
1. capable of being scaled: expanded/upgraded OR reduced in size
2. capable of being easily expanded or upgraded on demand <a scalable computer network>
[Merriam-Webster 2009]

Executive Summary (1/2)
- CMPs target a wide userbase
- Future CMPs should deliver:
  - TLP, when many threads are available
  - ILP, when few threads are available
  - Reasonable power envelopes, all the time
- Future Scalable CMPs should be able to:
  - Scale UP for performance
  - Scale DOWN for energy conservation

Executive Summary (2/2)
- Scalable CMP = Cores that can scale (mechanism) + Policies for scaling
- Forwardflow: Scalable Uniprocessors
- Proposed: Dynamically Scalable Forwardflow Cores
- Proposed: Hierarchical Operand Networks for Scalable Cores
- Proposed: Control Independence for Scalable Cores

Outline
- CMP 2009 → 2019: Trends toward Scalable Chips
- Work So Far: Forwardflow
- Proposed Work
  - Methodology
  - Dynamic Scaling
  - Operand Networks
  - Control Independence
  - Miscellanea (Maybe)
- Schedule/Closing Remarks

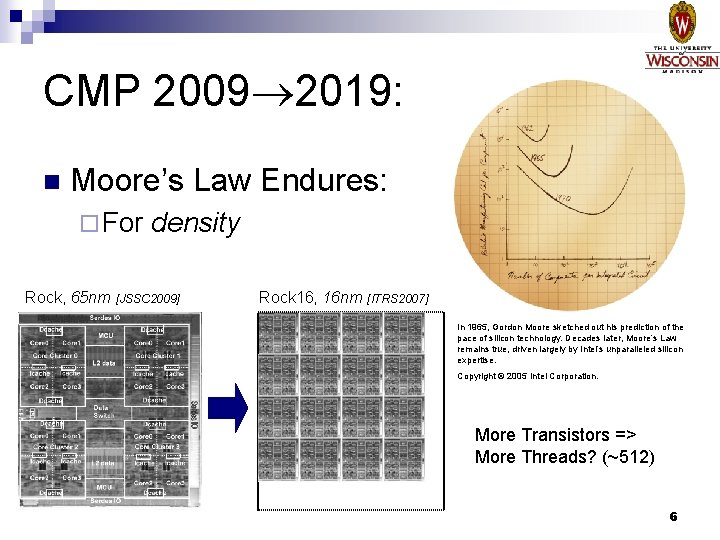

CMP 2009 → 2019: Moore's Law Endures
- Moore's Law Endures:
  - For density
- More Transistors => More Threads? (~512)
[Figure: chip images labeled Rock, 65 nm [JSSC 2009] and Rock 16, 16 nm [ITRS 2007]]
[Figure caption: "In 1965, Gordon Moore sketched out his prediction of the pace of silicon technology. Decades later, Moore's Law remains true, driven largely by Intel's unparalleled silicon expertise." Copyright © 2005 Intel Corporation.]

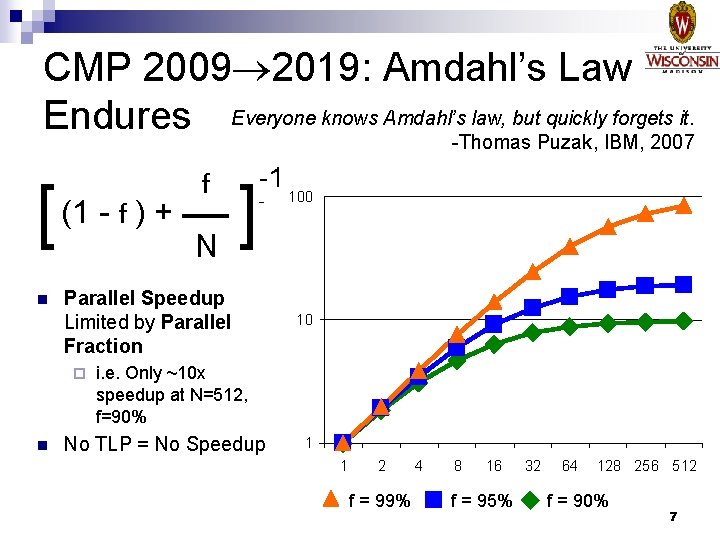

CMP 2009 → 2019: Amdahl's Law Endures
"Everyone knows Amdahl's law, but quickly forgets it." -Thomas Puzak, IBM, 2007
- Parallel Speedup Limited by Parallel Fraction: $\left[(1 - f) + \frac{f}{N}\right]^{-1}$
  - i.e. Only ~10x speedup at N=512, f=90%
- No TLP = No Speedup
[Plot: speedup (log scale, 1 to 100) vs. N (1 to 512) for f = 99%, f = 95%, f = 90%]
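As a quick numeric check of the speedup bound on this slide, the sketch below evaluates Amdahl's Law for the three parallel fractions in the plot. The function name `amdahl_speedup` is illustrative and not from the talk.

```python
def amdahl_speedup(f: float, n: int) -> float:
    """Speedup on n cores for a workload whose parallel fraction is f."""
    return 1.0 / ((1.0 - f) + f / n)


# Evaluate the three curves from the slide at N = 512.
for f in (0.99, 0.95, 0.90):
    print(f"f = {f:.2f}: speedup at N=512 is {amdahl_speedup(f, 512):.1f}x")
```

With f = 0.90 the result stays just under 10x, which is the "only ~10x at N=512" point made on the slide.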

![CMP 2009 → 2019: SAF](http://slidetodoc.com/presentation_image_h/2c57335e51055040a8920dc03c25a2a4/image-8.jpg)

CMP 2009 → 2019: SAF [Chakraborty 2008]
- Simultaneously Active Fraction (SAF): Fraction of devices that can be active at the same time, while still remaining within the chip's power budget.
- More Transistors → Lots of them have to be off [Hill 08]
[Plot: Dynamic SAF (0 to 1) for HP Devices and LP Devices at 90 nm, 65 nm, 45 nm, 32 nm]
[Link: Flavors of "Off"]

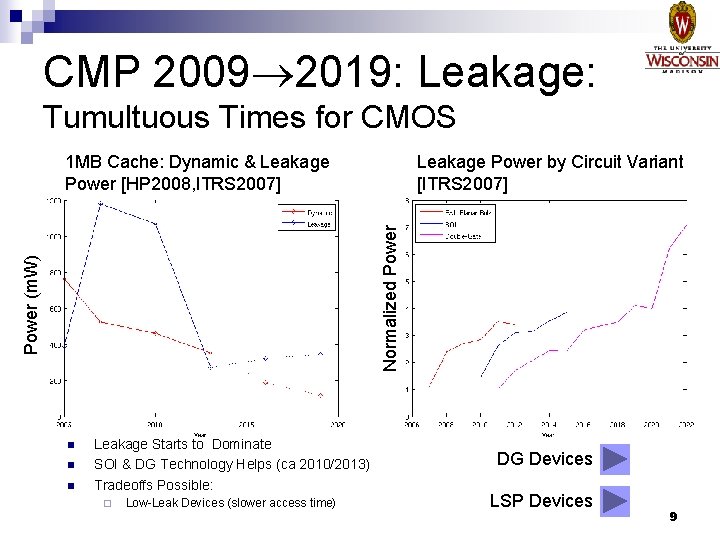

CMP 2009 → 2019: Leakage: Tumultuous Times for CMOS
- Leakage Starts to Dominate
- SOI & DG Technology Helps (ca 2010/2013)
- Tradeoffs Possible:
  - Low-Leak Devices (slower access time)
[Plot: Leakage Power by Circuit Variant [ITRS 2007]; axis: Normalized Power]
[Plot: 1 MB Cache: Dynamic & Leakage Power [HP 2008, ITRS 2007]; axis: Power (mW)]
[Links: DG Devices; LSP Devices]

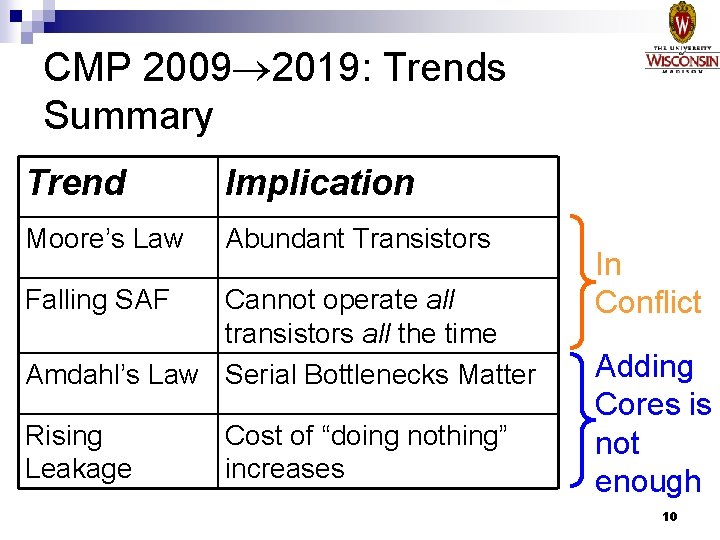

CMP 2009 → 2019: Trends Summary

| Trend | Implication |
|---|---|
| Moore's Law | Abundant Transistors |
| Falling SAF | Cannot operate all transistors all the time |
| Amdahl's Law | Serial Bottlenecks Matter |
| Rising Leakage | Cost of "doing nothing" increases |

- In Conflict
- Adding Cores is not enough

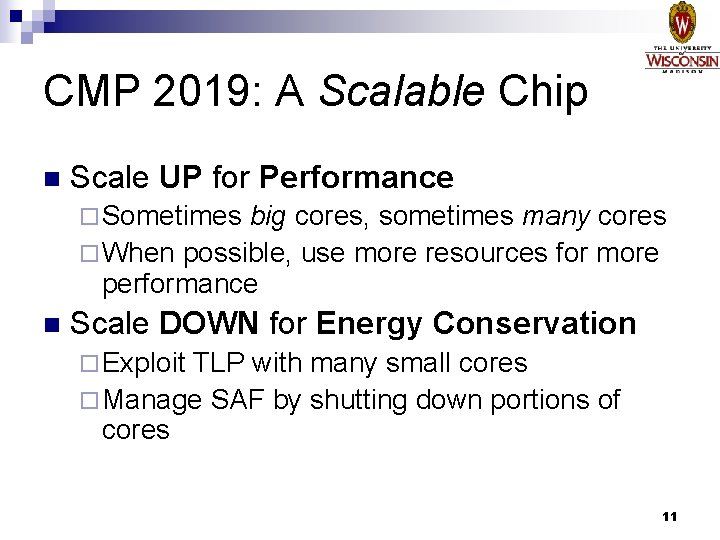

CMP 2019: A Scalable Chip
- Scale UP for Performance
  - Sometimes big cores, sometimes many cores
  - When possible, use more resources for more performance
- Scale DOWN for Energy Conservation
  - Exploit TLP with many small cores
  - Manage SAF by shutting down portions of cores

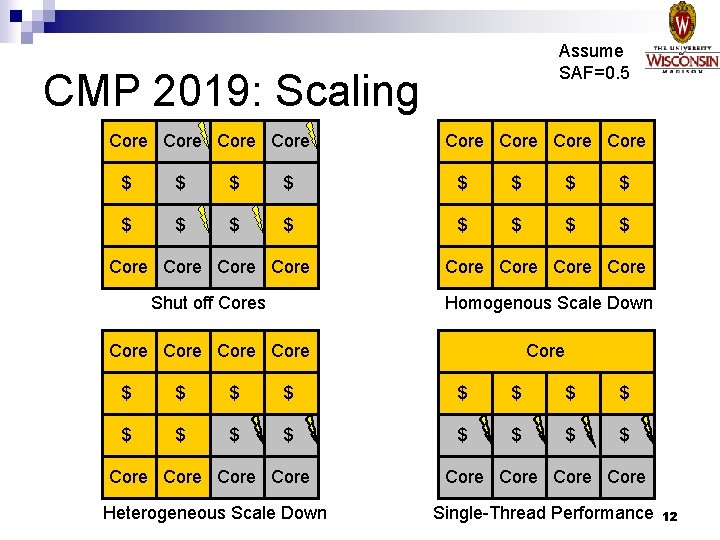

CMP 2019: Scaling (Assume SAF=0.5)
[Figure: chip of cores and caches ($) under three options: Shut off Cores; Homogeneous Scale Down; Heterogeneous Scale Down. Axis: Single-Thread Performance]

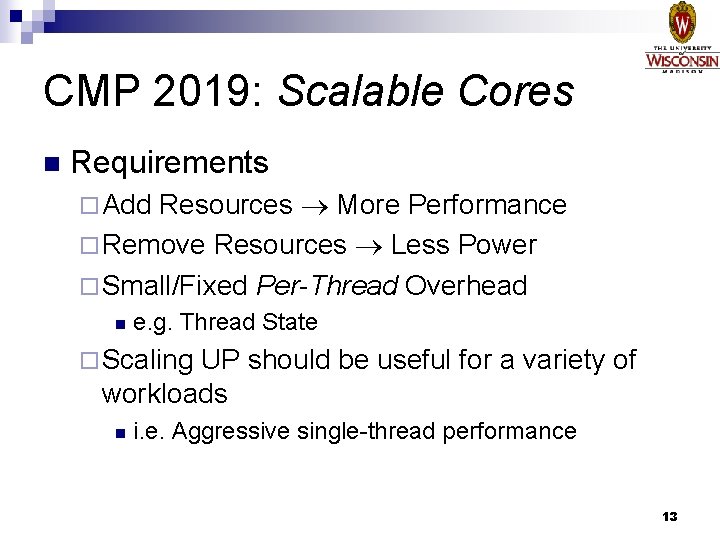

CMP 2019: Scalable Cores
- Requirements
  - Add Resources → More Performance
  - Remove Resources → Less Power
  - Small/Fixed Per-Thread Overhead
    - e.g. Thread State
  - Scaling UP should be useful for a variety of workloads
    - i.e. Aggressive single-thread performance

Scalable Cores: Open Questions
- Scaling UP
  - Where do added resources come from?
    - From statically-provisioned private pools?
    - From other cores?
- Scaling DOWN
  - What happens to unused resources?
    - Turn off? How "off" is off?
- When to Scale?
  - In HW/SW?
    - How to communicate?


Forwardflow: A Scalable Core
- Conventional OoO does not scale well:
  - Structures must be scaled together
    - ROB, PRF, RAT, LSQ, …
  - Wire delay is not friendly to scaling
- Forwardflow has ONE logical backend structure
  - Scale one structure, scale it well
  - Tolerates (some) wire delay

Forwardflow Overview
- Design Philosophy:
  - Avoid 'broadcast' accesses (e.g., no CAMs)
  - Avoid 'search' operations (via pointers)
  - Prefer short wires, tolerate long wires
  - Decouple frontend from backend details
    - Abstract backend as a pipeline

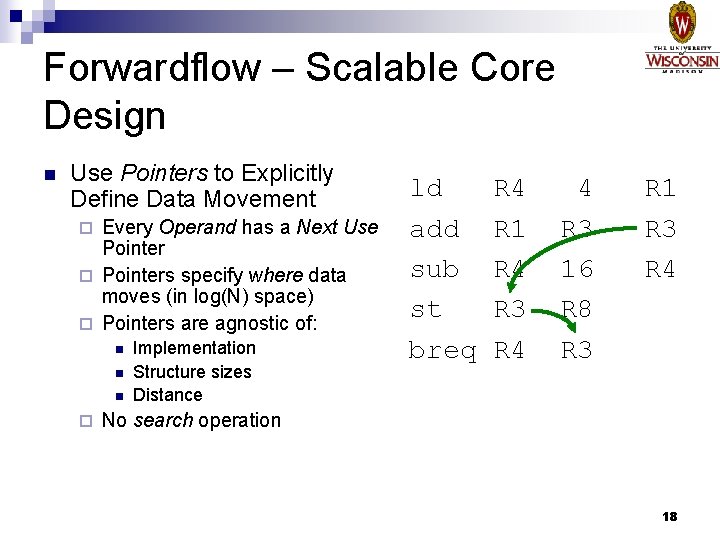

Forwardflow – Scalable Core Design
- Use Pointers to Explicitly Define Data Movement
  - Every Operand has a Next Use Pointer
  - Pointers specify where data moves (in log(N) space)
  - Pointers are agnostic of:
    - Implementation
    - Structure sizes
    - Distance
  - No search operation
[Figure: five-instruction example (ld, add, sub, st, breq) over registers R1, R3, R4, R8, with next-use pointers drawn between operands]

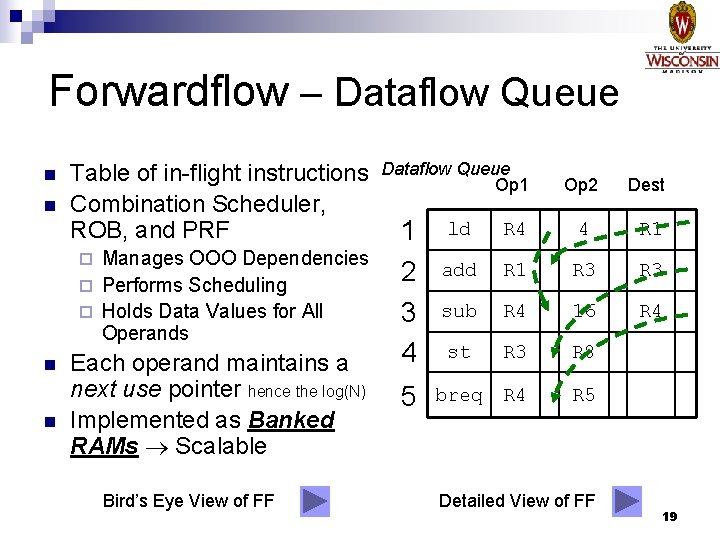

Forwardflow – Dataflow Queue
- Table of in-flight instructions
- Combination Scheduler, ROB, and PRF
  - Manages OOO Dependencies
  - Performs Scheduling
  - Holds Data Values for All Operands
- Each operand maintains a next use pointer, hence the log(N)
- Implemented as Banked RAMs → Scalable
[Figure: Bird's Eye View of FF; Detailed View of FF]
[Figure: Dataflow Queue with columns Op1, Op2, Dest. Entries: 1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; 5 breq R4 R5]

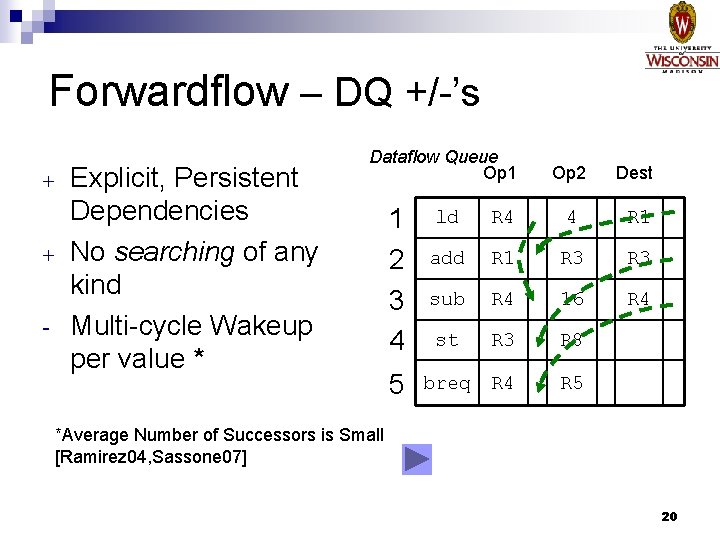

Forwardflow – DQ +/-'s
- Pro: Explicit, Persistent Dependencies
- Pro: No searching of any kind
- Con: Multi-cycle Wakeup per value*
*Average Number of Successors is Small [Ramirez 04, Sassone 07]
[Figure: Dataflow Queue with columns Op1, Op2, Dest. Entries: 1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; 5 breq R4 R5]

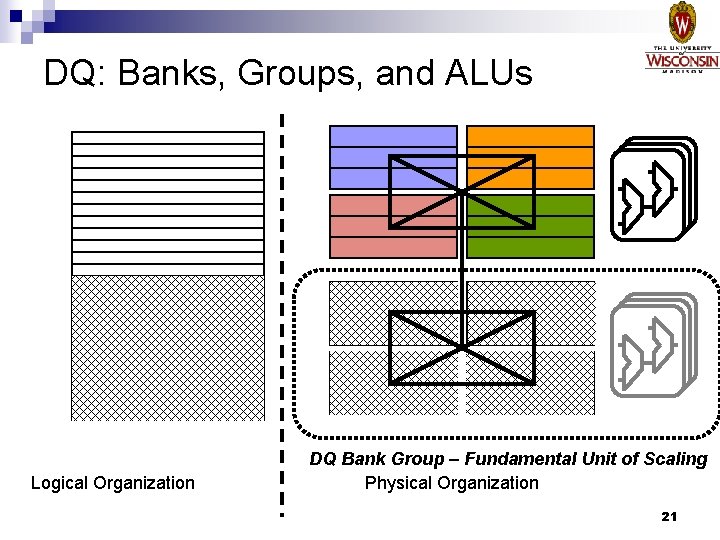

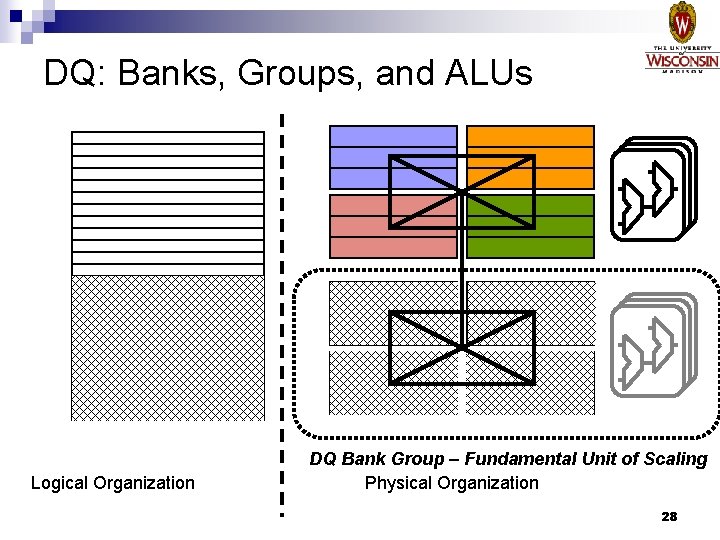

DQ: Banks, Groups, and ALUs
- DQ Bank Group: Fundamental Unit of Scaling
[Figure: Logical Organization; Physical Organization]

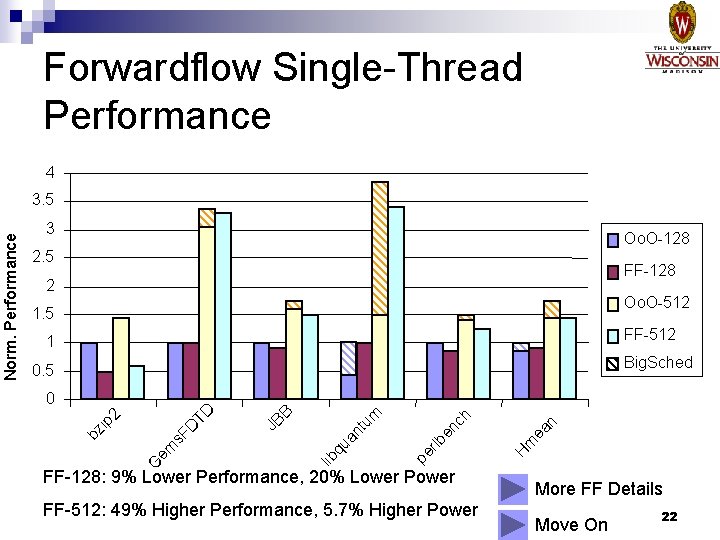

Forwardflow Single-Thread Performance
- FF-128: 9% Lower Performance, 20% Lower Power
- FF-512: 49% Higher Performance, 5.7% Higher Power
[Plot: Norm. Performance (0 to 4) per benchmark (including bzip2, GemsFDTD, libquantum, perlbench) and Hmean; series: OoO-128, FF-128, OoO-512, FF-512, BigSched]
[Links: More FF Details; Move On]

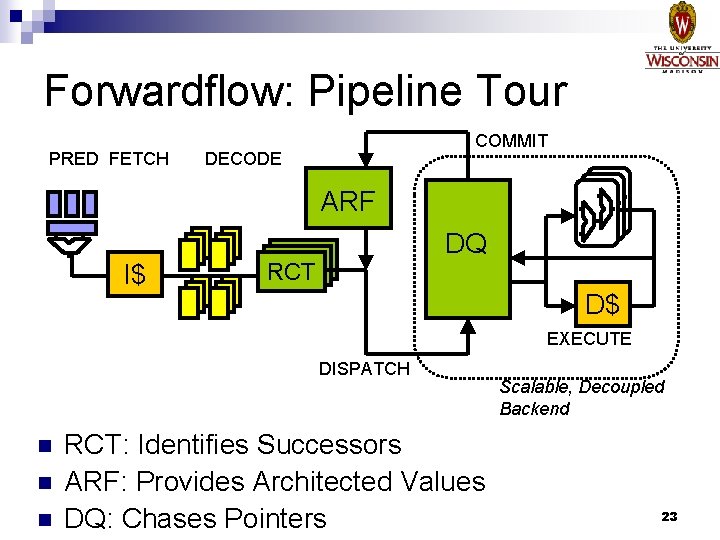

Forwardflow: Pipeline Tour
[Figure: pipeline stages PRED, FETCH, DECODE, DISPATCH, EXECUTE, COMMIT, with I$, RCT, ARF, DQ, D$]
- RCT: Identifies Successors
- ARF: Provides Architected Values
- DQ: Chases Pointers
- Scalable, Decoupled Backend

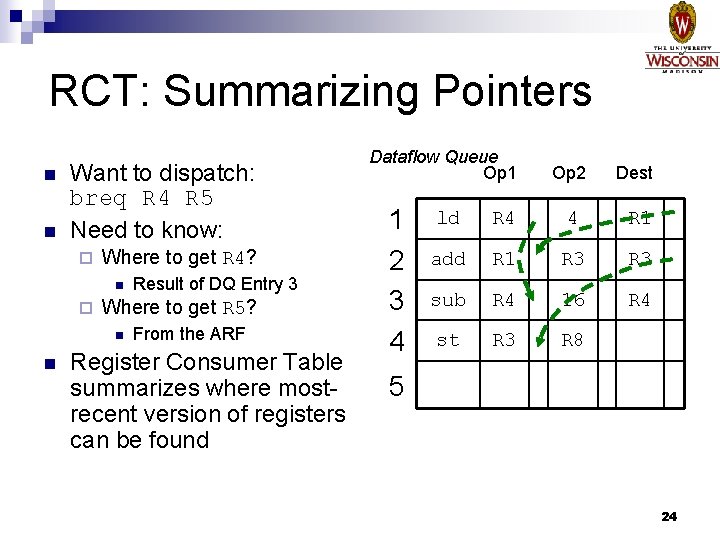

RCT: Summarizing Pointers
- Want to dispatch: breq R4 R5
- Need to know:
  - Where to get R4?
    - Result of DQ Entry 3
  - Where to get R5?
    - From the ARF
- Register Consumer Table summarizes where most-recent version of registers can be found
[Figure: Dataflow Queue with entries 1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; entry 5 empty]

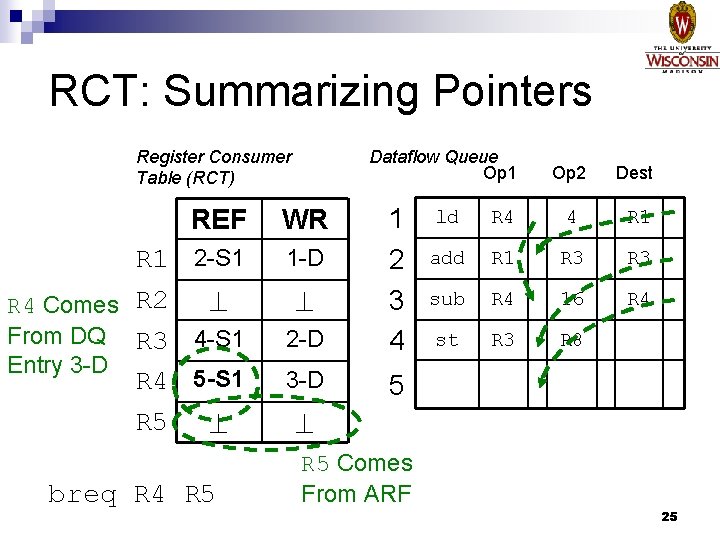

RCT: Summarizing Pointers
[Figure: Register Consumer Table (RCT), with REF and WR columns, beside the Dataflow Queue while breq R4 R5 is dispatched into entry 5. RCT entries legible in the figure: R1: 2-S1; R3: 4-S1; R4: 5-S1, 3-D. Callouts: R4 Comes From DQ Entry 3-D; R5 Comes From ARF]
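The dispatch-time bookkeeping described on the RCT slides can be sketched in a few lines of Python. This is a simplified model, not the proposal's hardware: the `Entry` class, the `dispatch` function, and the reading of the slide's `add` as `add R1 R3 → R3` are assumptions made for illustration, and the model tracks only the most recent reference per register (no REF/WR split, no values).

```python
from dataclasses import dataclass, field

FIELDS = ("S1", "S2")


@dataclass
class Entry:
    op: str
    # Next-use pointer for each field; None plays the role of a NULL pointer.
    next_use: dict = field(
        default_factory=lambda: {"S1": None, "S2": None, "D": None})


def dispatch(dq, rct, op, srcs, dest=None):
    """Append one instruction to dq and link it into the pointer chains.

    srcs holds register names (str) or immediates (int).
    Returns (entry index, registers that had to be read from the ARF).
    """
    idx = len(dq) + 1                  # the slides number DQ entries from 1
    dq[idx] = Entry(op)
    from_arf = []
    for fld, src in zip(FIELDS, srcs):
        if not isinstance(src, str):
            continue                   # immediate operand: nothing to track
        last = rct.get(src)
        if last is None:
            from_arf.append(src)       # no in-flight reference: use the ARF
        else:
            prev_idx, prev_fld = last
            dq[prev_idx].next_use[prev_fld] = (idx, fld)   # extend the chain
        rct[src] = (idx, fld)          # this operand is now the latest reference
    if dest is not None:
        rct[dest] = (idx, "D")         # a new register version starts a new chain
    return idx, from_arf


# Replay the five-instruction example from the slides.
dq, rct = {}, {}
dispatch(dq, rct, "ld",  ["R4", 4],    "R1")
dispatch(dq, rct, "add", ["R1", "R3"], "R3")
dispatch(dq, rct, "sub", ["R4", 16],   "R4")
dispatch(dq, rct, "st",  ["R3", "R8"])
idx, from_arf = dispatch(dq, rct, "breq", ["R4", "R5"])
print(idx, from_arf, dq[3].next_use["D"])
```

Under these assumptions the `breq` lands in entry 5, picks up R4 through a pointer from entry 3's destination field, and reads R5 from the ARF, matching the two callouts on the slide.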

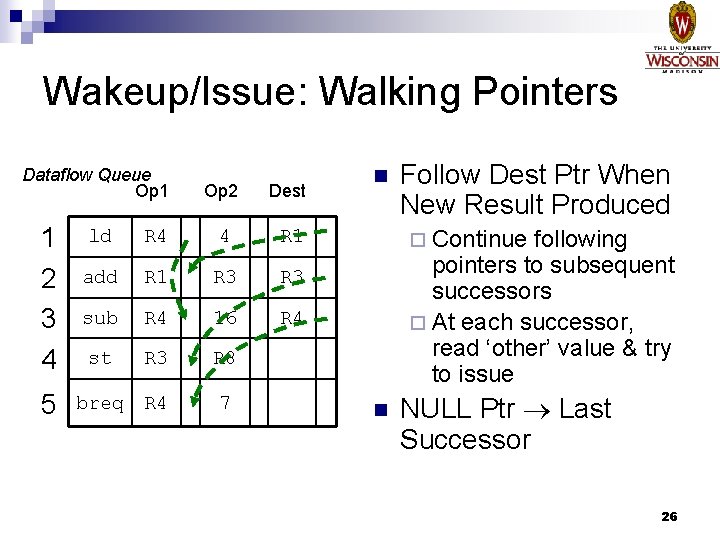

Wakeup/Issue: Walking Pointers
- Follow Dest Ptr When New Result Produced
  - Continue following pointers to subsequent successors
  - At each successor, read 'other' value & try to issue
- NULL Ptr → Last Successor
[Figure: Dataflow Queue with entries 1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; 5 breq R4]
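The pointer walk above can be illustrated with a small Python sketch. The dictionary layout, the helper names `make_entry` and `wakeup`, and the example chain (entry 3 feeding entries 5 and 6) are hypothetical; the sketch visits one successor per loop iteration, which corresponds to the multi-cycle wakeup noted earlier in the talk.

```python
def make_entry(s1=None, s2=None):
    """One DQ entry: operand values plus a next-use pointer per field."""
    return {"val": {"S1": s1, "S2": s2, "D": None},
            "next": {"S1": None, "S2": None, "D": None}}


def wakeup(dq, producer, value):
    """Walk the producer's destination chain; return entries found ready."""
    dq[producer]["val"]["D"] = value
    ready = []
    ptr = dq[producer]["next"]["D"]
    while ptr is not None:                   # NULL pointer: last successor
        idx, fld = ptr
        entry = dq[idx]
        entry["val"][fld] = value            # deliver the value to this operand
        other = "S2" if fld == "S1" else "S1"
        if entry["val"][other] is not None:  # 'other' value present: can issue
            ready.append(idx)
        ptr = entry["next"][fld]             # move on to the next successor
    return ready


# Hypothetical chain: entry 3 produces R4, consumed by entries 5 and 6.
dq = {3: make_entry(s1=10, s2=16),
      5: make_entry(s2=0),     # breq R4 R5, with R5 already read as 0
      6: make_entry(s2=4)}     # ld R4 4
dq[3]["next"]["D"] = (5, "S1")
dq[5]["next"]["S1"] = (6, "S1")
ready = wakeup(dq, 3, 42)
print(ready)
```

No search or broadcast occurs: each step reads one pointer and one 'other' operand, which is the property the DQ design relies on.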

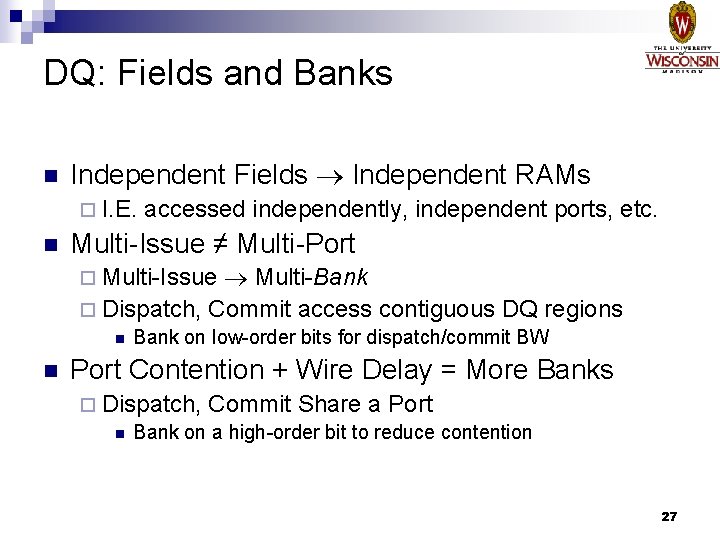

DQ: Fields and Banks
- Independent Fields → Independent RAMs
  - I.e. accessed independently, independent ports, etc.
- Multi-Issue ≠ Multi-Port
  - Multi-Issue → Multi-Bank
  - Dispatch, Commit access contiguous DQ regions
    - Bank on low-order bits for dispatch/commit BW
- Port Contention + Wire Delay = More Banks
  - Dispatch, Commit Share a Port
    - Bank on a high-order bit to reduce contention
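One way to picture the two banking rules is an index-to-bank mapping like the sketch below. The slide does not give bit positions or bank counts, so the function `bank_of`, its parameters, and the 128-entry, 8-bank configuration are assumptions chosen only to illustrate the idea.

```python
def bank_of(entry: int, low_bits: int, index_bits: int) -> int:
    """Map a DQ entry index to a bank number.

    The low_bits least-significant index bits spread contiguous entries
    across banks; the most-significant index bit splits the queue in halves.
    """
    low = entry & ((1 << low_bits) - 1)
    high = (entry >> (index_bits - 1)) & 1
    return (high << low_bits) | low


# Assumed configuration: 128-entry DQ (7 index bits), 2 low-order bank bits
# plus 1 high-order bit, for 8 banks in total.
dispatch_group = [bank_of(e, 2, 7) for e in range(8, 12)]  # 4 contiguous entries
print(dispatch_group, bank_of(8, 2, 7), bank_of(72, 2, 7))
```

Four contiguous entries land in four different banks, so a 4-wide dispatch or commit needs no multi-ported RAM, and entries in opposite halves of the queue (where dispatch and commit often operate) never share a bank.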


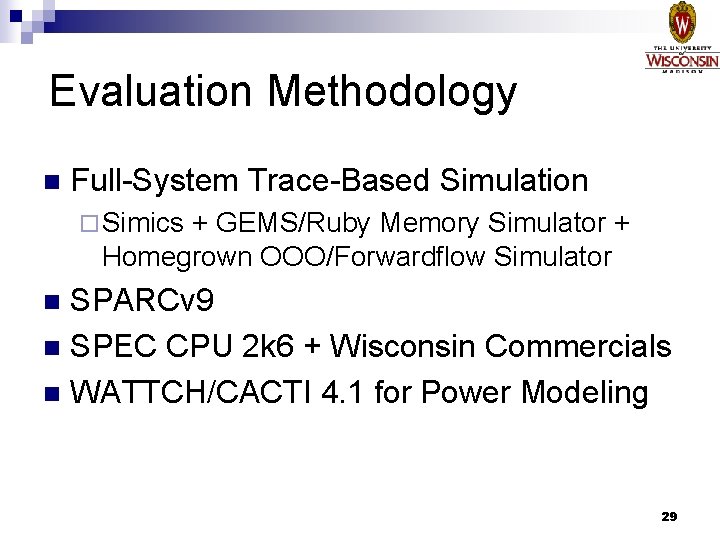

Evaluation Methodology
- Full-System Trace-Based Simulation
  - Simics + GEMS/Ruby Memory Simulator + Homegrown OOO/Forwardflow Simulator
- SPARCv9
- SPEC CPU 2k6 + Wisconsin Commercials
- WATTCH/CACTI 4.1 for Power Modeling

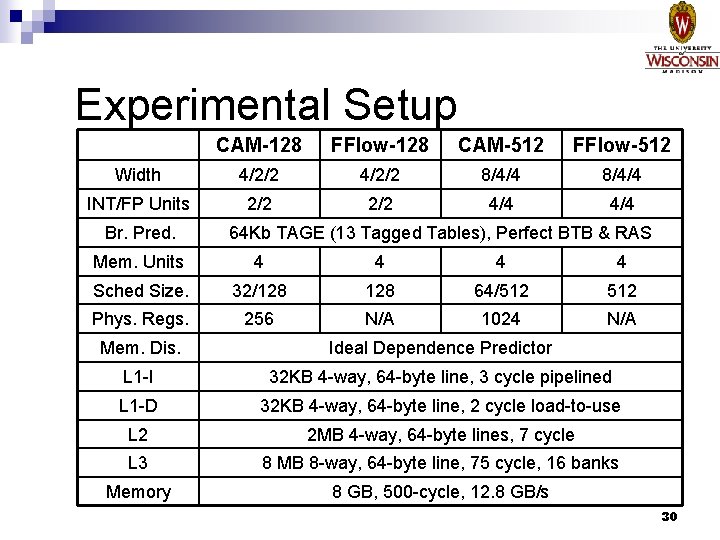

Experimental Setup

| Parameter | CAM-128 / FFlow-128 | CAM-512 / FFlow-512 |
|---|---|---|
| Width | 4/2/2 | 8/4/4 |
| INT/FP Units | 2/2 | 4/4 |
| Br. Pred. | 64 Kb TAGE (13 Tagged Tables), Perfect BTB & RAS | same |
| Mem. Units | 4 | 4 |
| Sched Size | 32/128 | 64/512 |
| Phys. Regs. | 256 (CAM), N/A (FFlow) | 1024 (CAM), N/A (FFlow) |
| Mem. Dis. | Ideal Dependence Predictor | same |
| L1-I | 32 KB 4-way, 64-byte line, 3 cycle pipelined | same |
| L1-D | 32 KB 4-way, 64-byte line, 2 cycle load-to-use | same |
| L2 | 2 MB 4-way, 64-byte lines, 7 cycle | same |
| L3 | 8 MB 8-way, 64-byte line, 75 cycle, 16 banks | same |
| Memory | 8 GB, 500-cycle, 12.8 GB/s | same |

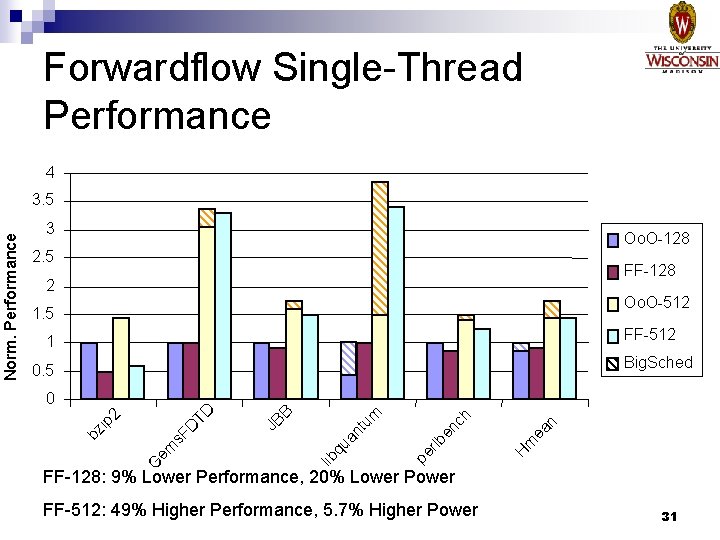


![Related Work: Scalable Schedulers](http://slidetodoc.com/presentation_image_h/2c57335e51055040a8920dc03c25a2a4/image-32.jpg)

Related Work
- Scalable Schedulers
  - Direct Instruction Wakeup [Ramirez 04]:
    - Scheduler has a pointer to the first successor
    - Secondary table for matrix of successors
  - Hybrid Wakeup [Huang 02]:
    - Scheduler has a pointer to the first successor
    - Each entry has a broadcast bit for multiple successors
  - Half Price [Kim 02]:
    - Slice the scheduler in half
    - Second operand often unneeded

![Related Work: Dataflow & Distributed Machines](http://slidetodoc.com/presentation_image_h/2c57335e51055040a8920dc03c25a2a4/image-33.jpg)

Related Work
- Dataflow & Distributed Machines
  - Tagged-Token [Arvind 90]
    - Values (tokens) flow to successors
  - TRIPS [Sankaralingam 03]:
    - Discrete Execution Tiles: X, RF, $, etc.
    - EDGE ISA
  - Clustered Designs [e.g. Palacharla 97]
    - Independent execution queues

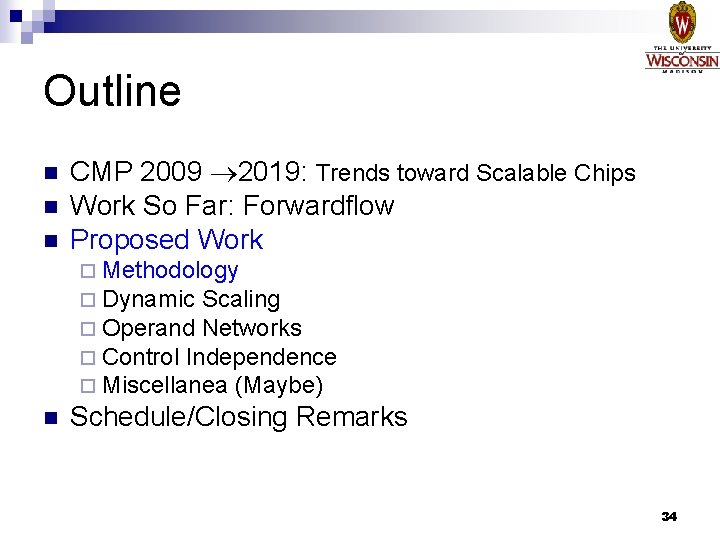


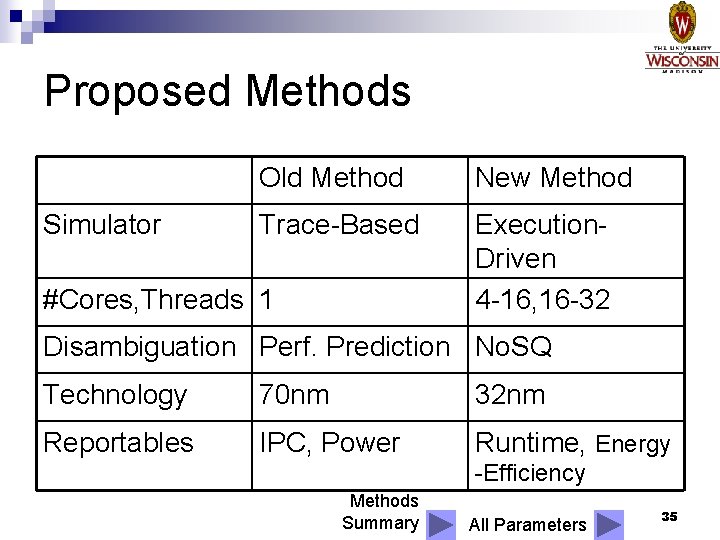

Proposed Methods

| | Old Method | New Method |
|---|---|---|
| Simulator | Trace-Based | Execution-Driven |
| #Cores, Threads | 1 | 4-16, 16-32 |
| Disambiguation | Perf. Prediction | NoSQ |
| Technology | 70 nm | 32 nm |
| Reportables | IPC, Power | Runtime, Energy-Efficiency |

[Links: Methods Summary; All Parameters]

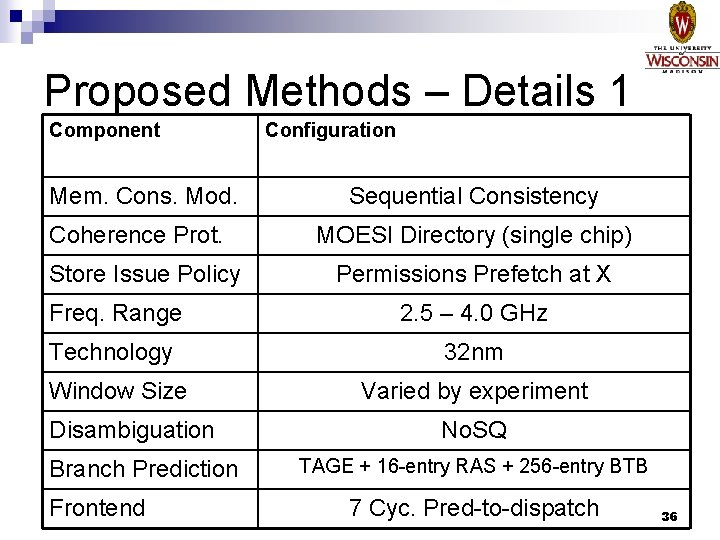

Proposed Methods – Details 1

| Component | Configuration |
|---|---|
| Mem. Cons. Mod. | Sequential Consistency |
| Coherence Prot. | MOESI Directory (single chip) |
| Store Issue Policy | Permissions Prefetch at X |
| Freq. Range | 2.5 – 4.0 GHz |
| Technology | 32 nm |
| Window Size | Varied by experiment |
| Disambiguation | NoSQ |
| Branch Prediction | TAGE + 16-entry RAS + 256-entry BTB |
| Frontend | 7 Cyc. Pred-to-dispatch |

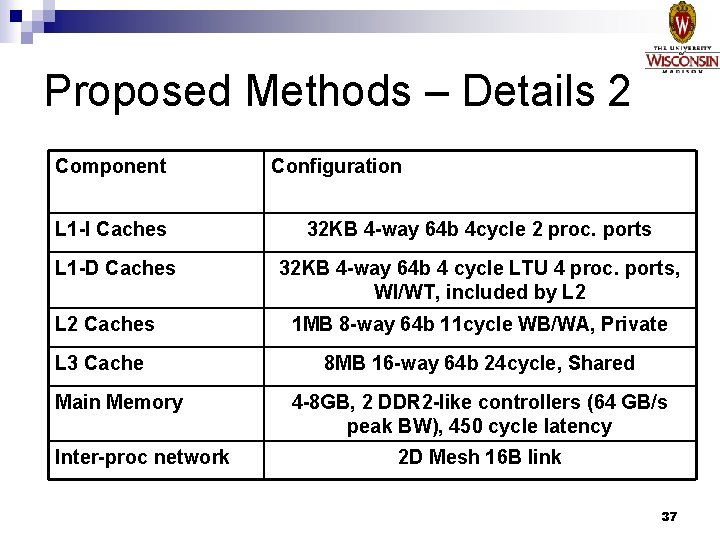

Proposed Methods – Details 2

| Component | Configuration |
|---|---|
| L1-I Caches | 32 KB 4-way 64 B 4 cycle, 2 proc. ports |
| L1-D Caches | 32 KB 4-way 64 B 4 cycle LTU, 4 proc. ports, WI/WT, included by L2 |
| L2 Caches | 1 MB 8-way 64 B 11 cycle, WB/WA, Private |
| L3 Cache | 8 MB 16-way 64 B 24 cycle, Shared |
| Main Memory | 4-8 GB, 2 DDR2-like controllers (64 GB/s peak BW), 450 cycle latency |
| Inter-proc network | 2D Mesh, 16 B link |

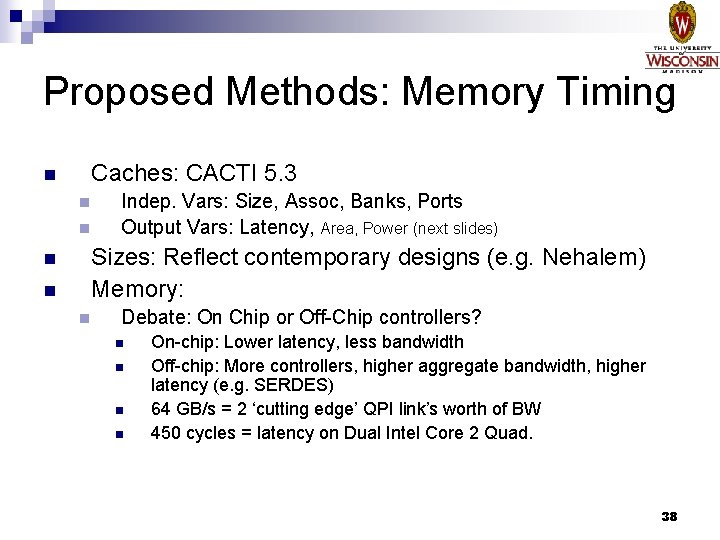

Proposed Methods: Memory Timing
- Caches: CACTI 5.3
  - Indep. Vars: Size, Assoc, Banks, Ports
  - Output Vars: Latency, Area, Power (next slides)
  - Sizes: Reflect contemporary designs (e.g. Nehalem)
- Memory:
  - Debate: On-chip or Off-chip controllers?
    - On-chip: Lower latency, less bandwidth
    - Off-chip: More controllers, higher aggregate bandwidth, higher latency (e.g. SERDES)
  - 64 GB/s = 2 'cutting edge' QPI links' worth of BW
  - 450 cycles = latency on Dual Intel Core 2 Quad

Proposed Methods: Area
1. Unit Area/AR Estimates
   - CACTI, WATTCH, or literature
2. Floorplanning
   - Manual and automated
3. Repeat 1 & 2 hierarchically for entire design
4. Latencies determined by floorplanned distance
   - Heuristic-guided optimistic repeater placement
- Area of I/O pads, clock gen, etc. not included

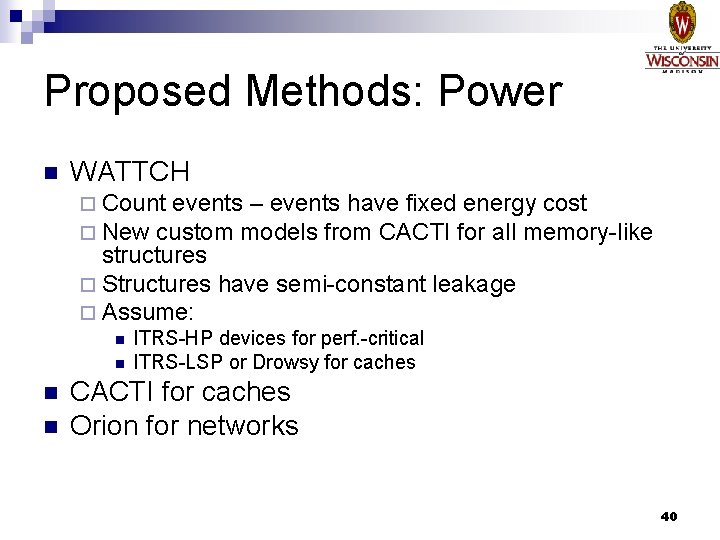

Proposed Methods: Power
- WATTCH
  - Count events: events have fixed energy cost
  - New custom models from CACTI for all memory-like structures
  - Structures have semi-constant leakage
  - Assume:
    - ITRS-HP devices for perf.-critical
    - ITRS-LSP or Drowsy for caches
- CACTI for caches
- Orion for networks

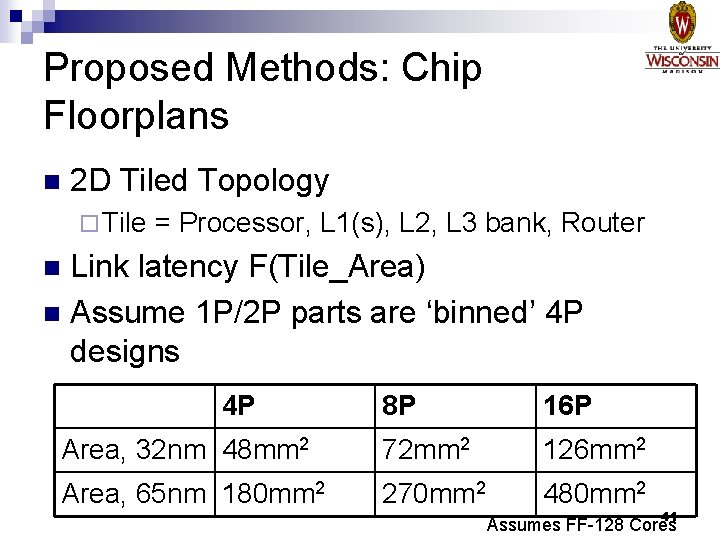

Proposed Methods: Chip Floorplans
- 2D Tiled Topology
  - Tile = Processor, L1(s), L2, L3 bank, Router
  - Link latency F(Tile_Area)
- Assume 1P/2P parts are 'binned' 4P designs

| | 4P | 8P | 16P |
|---|---|---|---|
| Area, 32 nm | 48 mm² | 72 mm² | 126 mm² |
| Area, 65 nm | 180 mm² | 270 mm² | 480 mm² |

Assumes FF-128 Cores

Outline
- CMP 2009 → 2019: Trends toward Scalable Chips
- Work So Far: Forwardflow
- Proposed Work (Executive Summary)
  - Methodology
  - Dynamic Scaling
  - Operand Networks
  - Control Independence
  - Miscellanea (Maybe)
- Schedule/Closing Remarks

Proposed Work Executive Summary
- Dynamic Scaling in CMPs
  - CMPs will have many cores, caches, etc., all burning power
  - SW Requirements will be varied
- Hierarchical Operand Networks
  - Scalable Cores need Scalable, Generalized Communication
    - Exploit Regular Communication
    - Adapt to Changing Conditions/Topologies (e.g., from Dynamic Scaling)
- Control Independence
  - Increase performance from scaling up
    - Trades off window space for window utilization
- Miscellanea

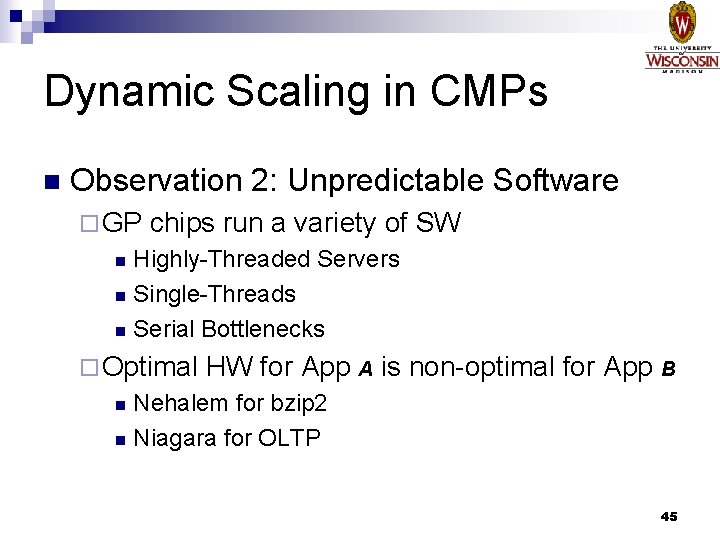

Dynamic Scaling in CMPs
- Observation 1: Power Constraints
  - Many design-time options to maintain a power envelope:
    - Many Small Cores vs. Few, Aggressive Cores
    - How much Cache per Core?
  - Fewer run-time options
    - DVS/DFS
    - Shut Cores Off, e.g. OPMS

Dynamic Scaling in CMPs
- Observation 2: Unpredictable Software
  - GP chips run a variety of SW
    - Highly-Threaded Servers
    - Single-Threads
    - Serial Bottlenecks
  - Optimal HW for App A is non-optimal for App B
    - Nehalem for bzip2
    - Niagara for OLTP

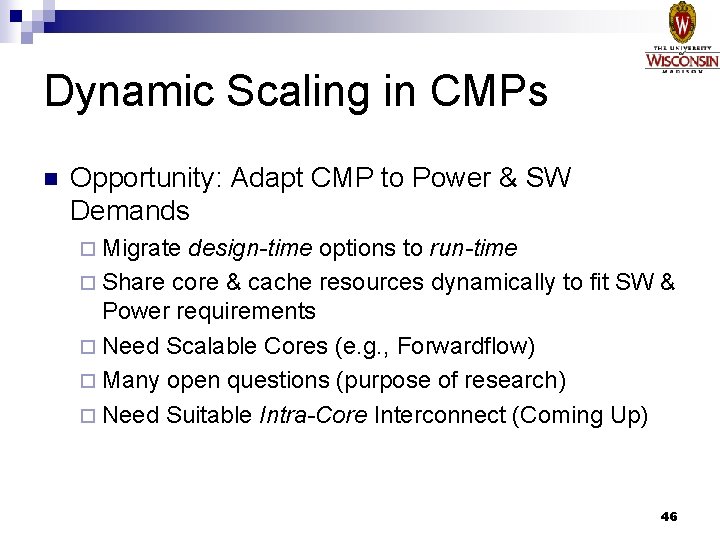

Dynamic Scaling in CMPs
- Opportunity: Adapt CMP to Power & SW Demands
  - Migrate design-time options to run-time
  - Share core & cache resources dynamically to fit SW & Power requirements
  - Need Scalable Cores (e.g., Forwardflow)
  - Many open questions (purpose of research)
  - Need Suitable Intra-Core Interconnect (Coming Up)

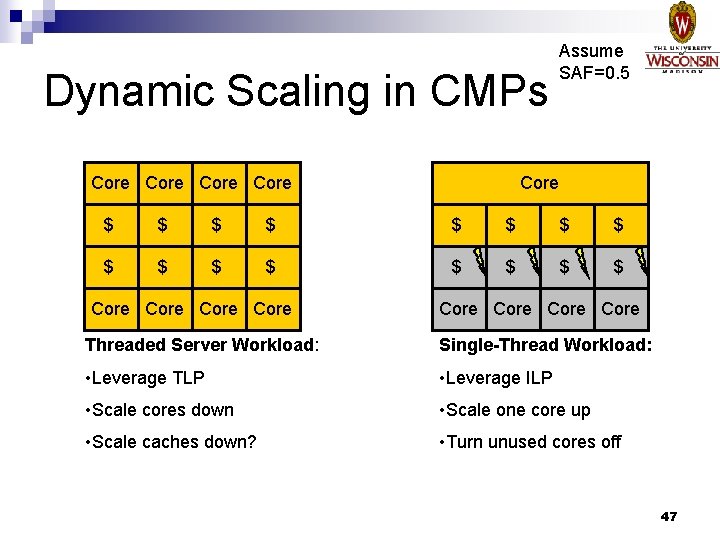

Dynamic Scaling in CMPs (Assume SAF=0.5)
[Figure: chip of cores and caches ($)]
- Threaded Server Workload:
  - Leverage TLP
  - Scale cores down
  - Scale caches down?
- Single-Thread Workload:
  - Leverage ILP
  - Scale one core up
  - Turn unused cores off

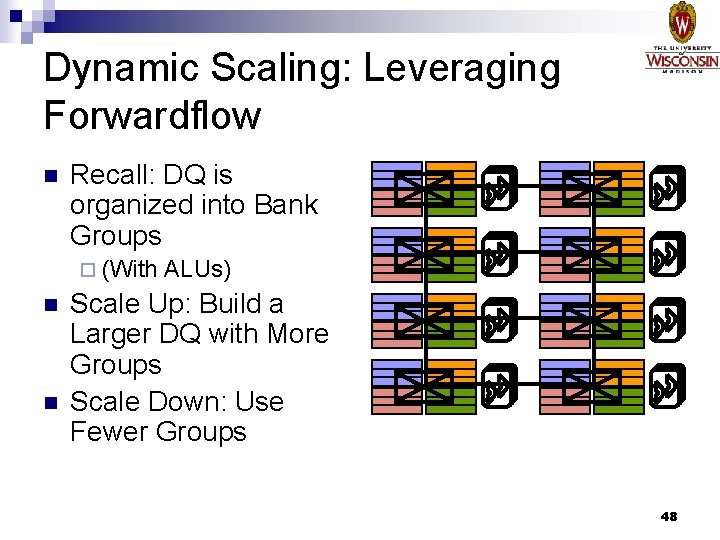

Dynamic Scaling: Leveraging Forwardflow
- Recall: DQ is organized into Bank Groups
  - (With ALUs)
- Scale Up: Build a Larger DQ with More Groups
- Scale Down: Use Fewer Groups

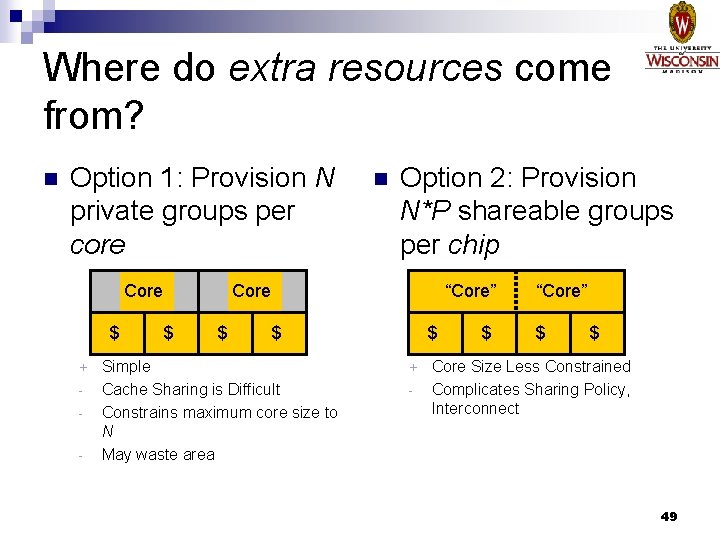

Where do extra resources come from?
- Option 1: Provision N private groups per core
  - Pro: Simple
  - Con: Cache Sharing is Difficult
  - Con: Constrains maximum core size to N
  - Con: May waste area
- Option 2: Provision N*P shareable groups per chip
  - Pro: Core Size Less Constrained
  - Con: Complicates Sharing Policy, Interconnect
[Figure: cores and caches ($) under each option]

Dynamic Scaling: More Open Questions
- Scale in HW or SW?
  - SW has scheduling visibility
  - HW can react at fine granularity
- What about Frontends?
- What about Caches?
  - Multiple caches for one thread

Dynamic Scaling: "Choose your own adventure" prelim
- Preliminary Uniprocessor Scaling Results
- Related Work
- Move on to Operand Networks

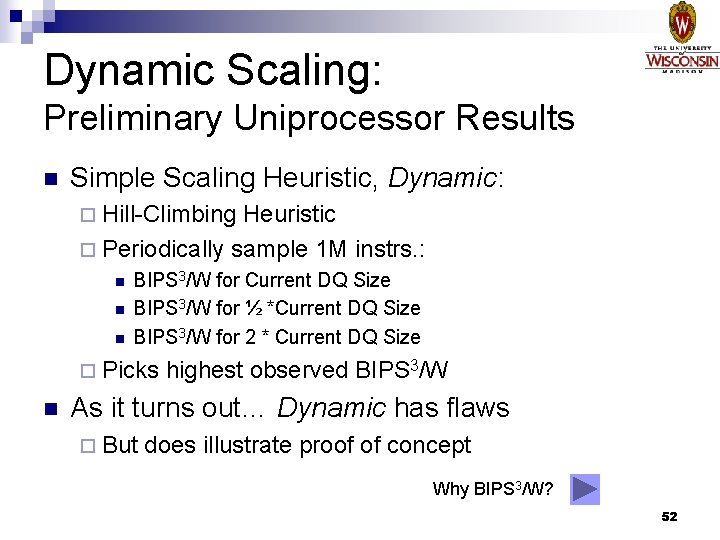

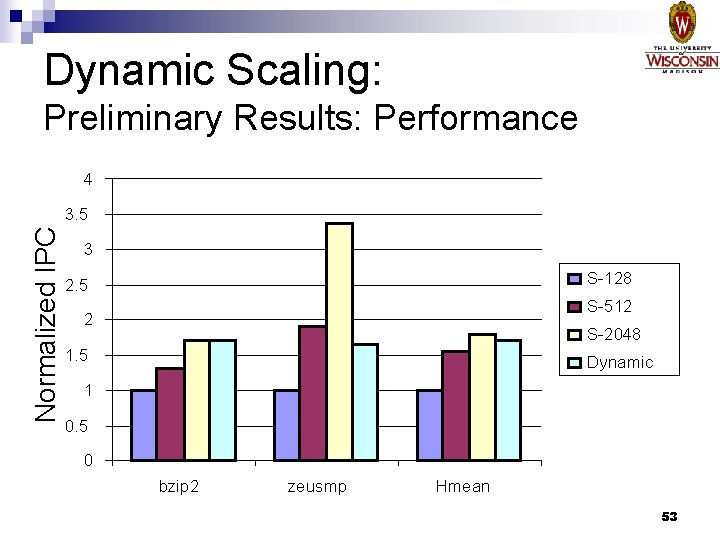

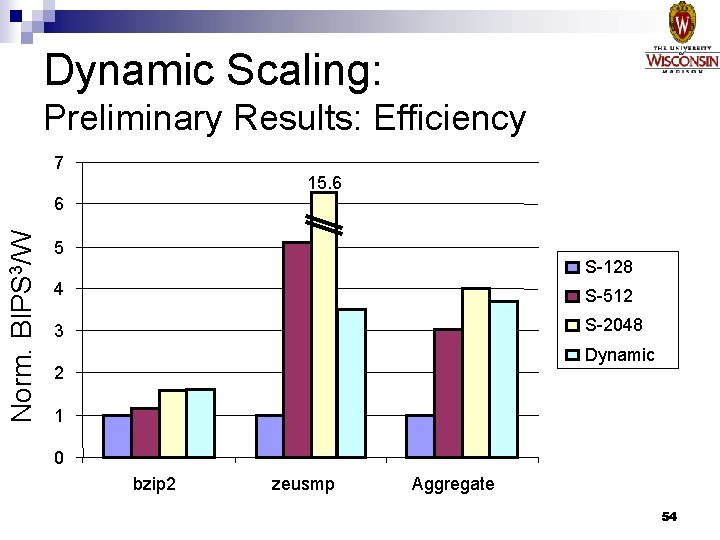

Dynamic Scaling: Preliminary Uniprocessor Results
- Simple Scaling Heuristic, Dynamic:
  - Hill-Climbing Heuristic
  - Periodically sample 1M instrs.:
    - BIPS^3/W for Current DQ Size
    - BIPS^3/W for ½ * Current DQ Size
    - BIPS^3/W for 2 * Current DQ Size
  - Picks highest observed BIPS^3/W
- As it turns out… Dynamic has flaws
  - But does illustrate proof of concept
[Link: Why BIPS^3/W?]
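The hill-climbing step can be sketched as follows. This is an illustration under stated assumptions rather than the evaluated implementation: `measure` stands in for sampling 1M instructions at a given DQ size and returning BIPS^3/W, the 128 and 2048 bounds are borrowed from the S-128 and S-2048 configurations in the results, and `toy_metric` is an invented stand-in for real measurements.

```python
def bips3_per_watt(instructions: float, seconds: float, watts: float) -> float:
    """BIPS^3/W for one sampling interval."""
    bips = instructions / seconds / 1e9
    return bips ** 3 / watts


def next_dq_size(current: int, measure, min_size: int = 128,
                 max_size: int = 2048) -> int:
    """One hill-climbing step: try half, current, and double; keep the best."""
    candidates = [s for s in (current // 2, current, current * 2)
                  if min_size <= s <= max_size]
    return max(candidates, key=measure)


def toy_metric(size: int) -> float:
    """Invented efficiency curve that peaks at a 512-entry DQ."""
    return -abs(size - 512)


size = 128
trace = [size]
for _ in range(4):
    size = next_dq_size(size, toy_metric)
    trace.append(size)
print(trace)
```

A heuristic of this shape only ever compares neighboring sizes, so it can settle on a local optimum or chase noise between samples.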

Dynamic Scaling: Preliminary Results: Performance
[Plot: Normalized IPC (0 to 4) for bzip2, zeusmp, and Hmean; series: S-128, S-512, S-2048, Dynamic]

Dynamic Scaling: Preliminary Results: Efficiency
[Plot: Norm. BIPS^3/W (0 to 7, with one clipped bar labeled 15.6) for bzip2, zeusmp, and Aggregate; series: S-128, S-512, S-2048, Dynamic]

![Dynamic Scaling: Related Work](http://slidetodoc.com/presentation_image_h/2c57335e51055040a8920dc03c25a2a4/image-55.jpg)

Dynamic Scaling: Related Work
- CoreFusion [Ipek 07]
  - Fuse individual core structures into bigger cores
- Power aware microarchitecture resource scaling [Iyer 01]
  - Varies RUU & Width
- Positional Adaptation [Huang 03]
  - Adaptively Applies Low-Power Techniques:
    - Instruction Filtering, Sequential Cache, Reduced ALUs


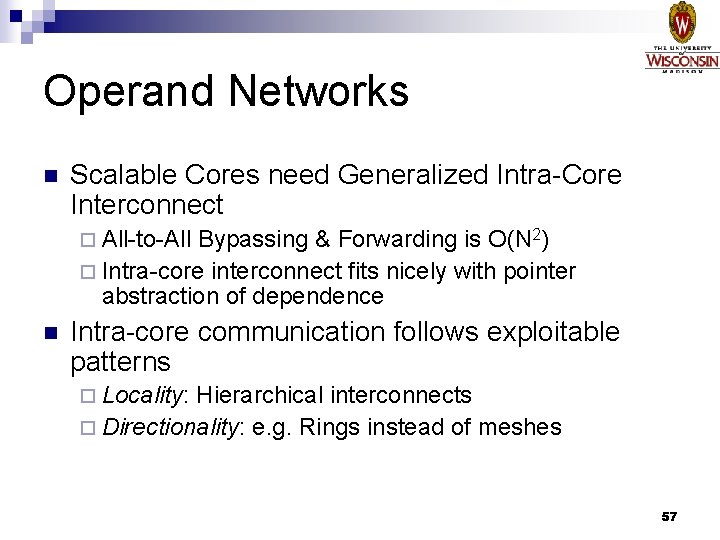

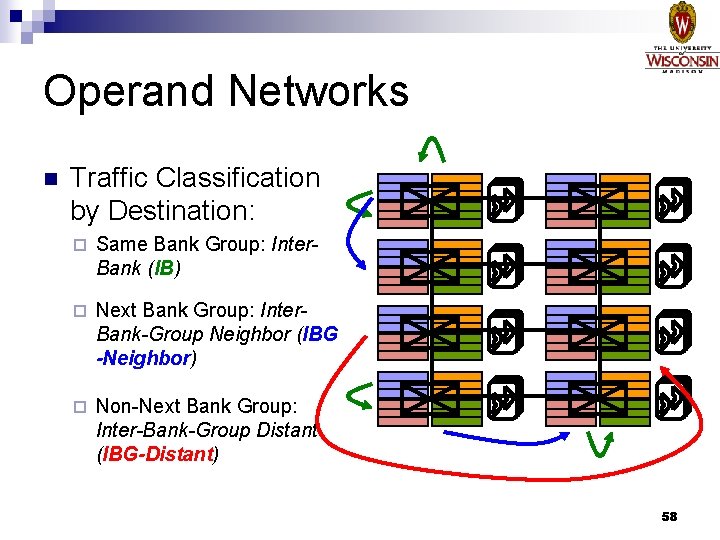

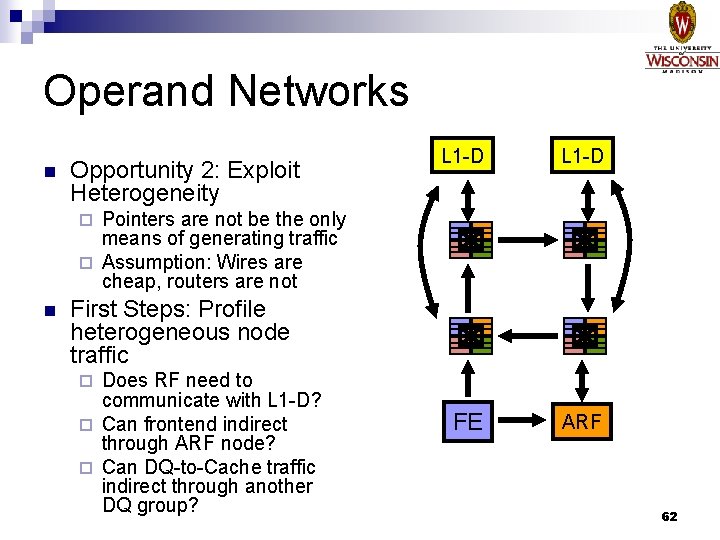

Operand Networks
- Scalable Cores need Generalized Intra-Core Interconnect
  - All-to-All Bypassing & Forwarding is O(N^2)
  - Intra-core interconnect fits nicely with pointer abstraction of dependence
- Intra-core communication follows exploitable patterns
  - Locality: Hierarchical interconnects
  - Directionality: e.g. Rings instead of meshes

Operand Networks
- Traffic Classification by Destination:
  - Same Bank Group: Inter-Bank (IB)
  - Next Bank Group: Inter-Bank-Group Neighbor (IBG-Neighbor)
  - Non-Next Bank Group: Inter-Bank-Group Distant (IBG-Distant)
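The three traffic classes can be written down as a small classifier. The sketch assumes that DQ entries map to bank groups in contiguous blocks and that the group after the last one wraps around to the first; neither detail is stated on the slide, and the function name and parameters are illustrative.

```python
def classify(src_entry: int, dst_entry: int,
             entries_per_group: int, num_groups: int) -> str:
    """Classify a pointer from src_entry to dst_entry by destination group."""
    src_group = (src_entry // entries_per_group) % num_groups
    dst_group = (dst_entry // entries_per_group) % num_groups
    if dst_group == src_group:
        return "IB"                    # same bank group
    if dst_group == (src_group + 1) % num_groups:
        return "IBG-Neighbor"          # next bank group (with wrap-around)
    return "IBG-Distant"               # any other bank group


# Assumed configuration: 4 bank groups of 32 entries each.
for src, dst in [(3, 8), (30, 35), (127, 2), (0, 70)]:
    print(src, dst, classify(src, dst, 32, 4))
```

With short pointer spans, most pointers stay inside one group or cross a single group boundary, which is why the classification matters for sizing the network.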

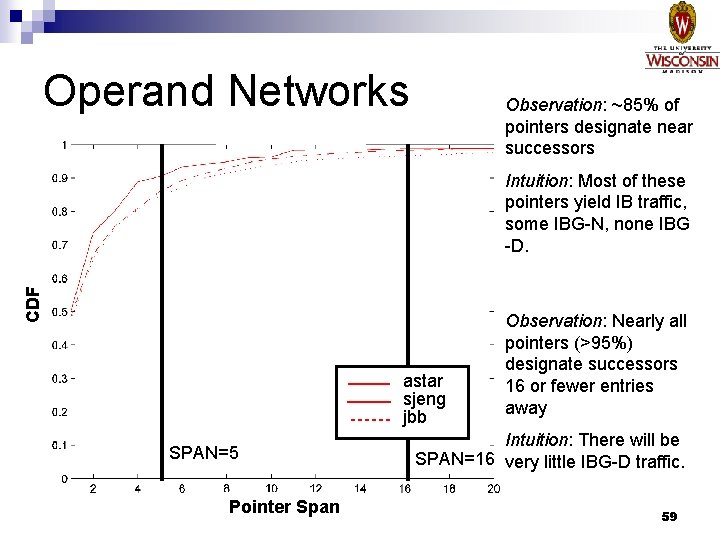

Operand Networks
[Plot: CDF of Pointer Span for astar, sjeng, jbb; markers at SPAN=5 and SPAN=16]
- Observation: ~85% of pointers designate near successors
  - Intuition: Most of these pointers yield IB traffic, some IBG-N, none IBG-D.
- Observation: Nearly all pointers (>95%) designate successors 16 or fewer entries away
  - Intuition: There will be very little IBG-D traffic.

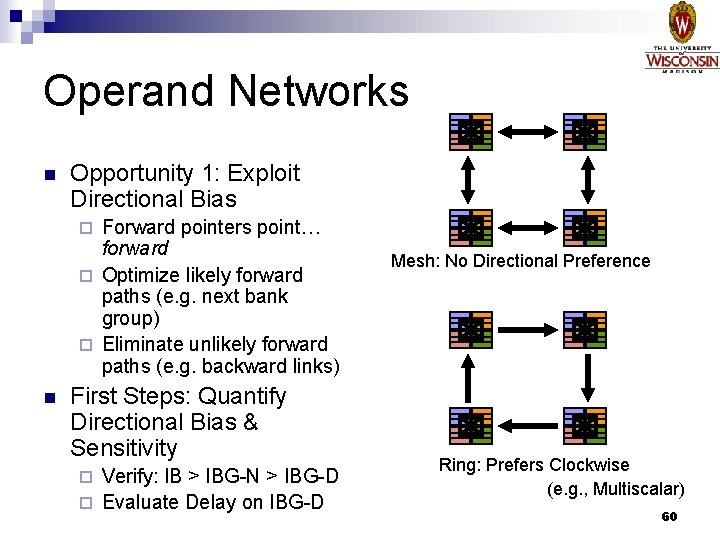

Operand Networks
- Opportunity 1: Exploit Directional Bias
  - Forward pointers point… forward
  - Optimize likely forward paths (e.g. next bank group)
  - Eliminate unlikely forward paths (e.g. backward links)
- First Steps: Quantify Directional Bias & Sensitivity
  - Verify: IB > IBG-N > IBG-D
  - Evaluate Delay on IBG-D
[Figure: Mesh: No Directional Preference; Ring: Prefers Clockwise (e.g., Multiscalar)]

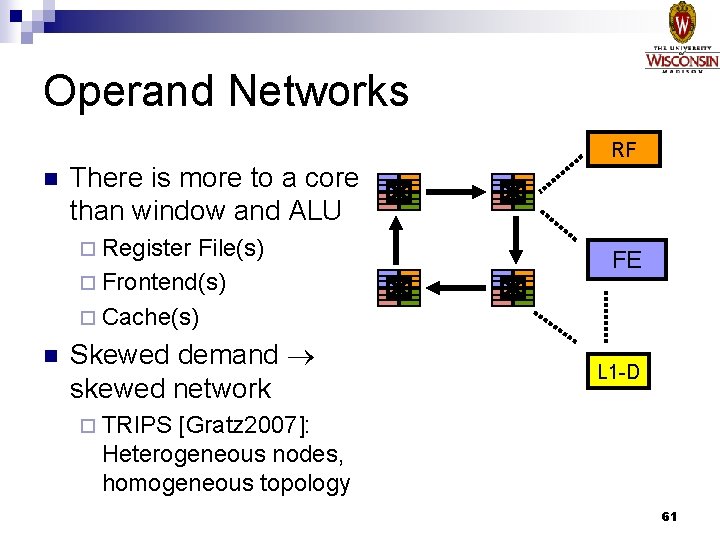

Operand Networks
- There is more to a core than window and ALU
  - Register File(s)
  - Frontend(s)
  - Cache(s)
- Skewed demand → skewed network
  - TRIPS [Gratz 2007]: Heterogeneous nodes, homogeneous topology
[Figure: network nodes labeled RF, FE, L1-D]

Operand Networks
- Opportunity 2: Exploit Heterogeneity
  - Pointers are not the only means of generating traffic
  - Assumption: Wires are cheap, routers are not
- First Steps: Profile heterogeneous node traffic
  - Does RF need to communicate with L1-D?
  - Can frontend indirect through ARF node?
  - Can DQ-to-Cache traffic indirect through another DQ group?
[Figure: network nodes labeled L1-D, FE, ARF]

![Operand Networks: Related Work](http://slidetodoc.com/presentation_image_h/2c57335e51055040a8920dc03c25a2a4/image-63.jpg)

Operand Networks: Related Work
- TRIPS [Gratz 07]
  - 2D Mesh, distributed single thread, heterogeneous nodes
- RAW [Taylor 04]
  - 2D Mesh, distributed multiple thread, mostly heterogeneous nodes
- ILDP [Kim 02]
  - Point-to-point, homogeneous endpoints
[Link: Skip: Move on to Control Independence]

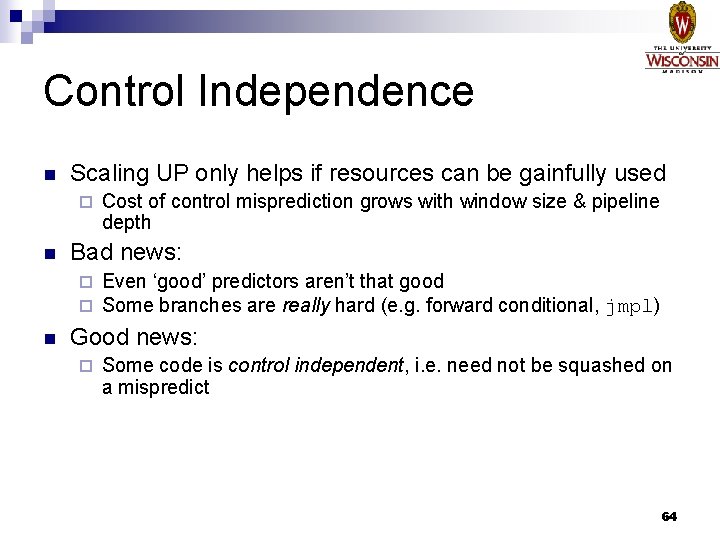

Control Independence
- Scaling UP only helps if resources can be gainfully used
  - Cost of control misprediction grows with window size & pipeline depth
- Bad news:
  - Even 'good' predictors aren't that good
  - Some branches are really hard (e.g. forward conditional, jmpl)
- Good news:
  - Some code is control independent, i.e. need not be squashed on a mispredict

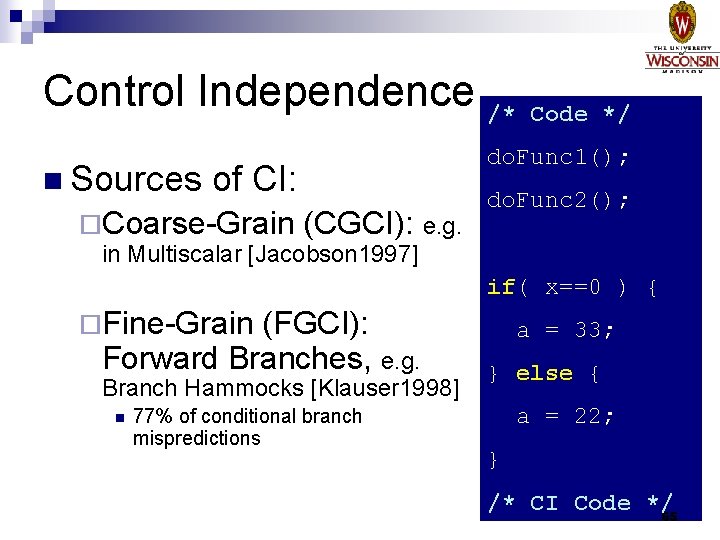

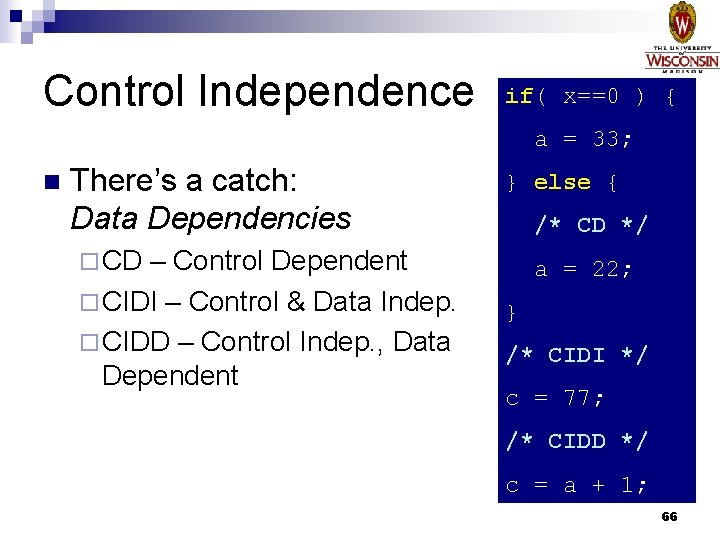

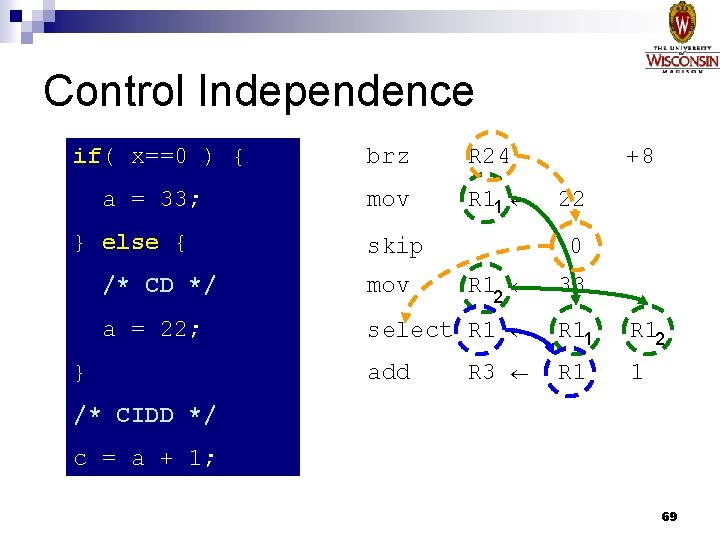

Control Independence
- Sources of CI:
  - Coarse-Grain (CGCI): e.g. in Multiscalar [Jacobson 1997]
  - Fine-Grain (FGCI): Forward Branches, e.g. Branch Hammocks [Klauser 1998]
    - 77% of conditional branch mispredictions

Example code from the slide:

    /* Code */
    doFunc1();
    doFunc2();
    if( x==0 ) {
      a = 33;
    } else {
      a = 22;
    }
    /* CI Code */

Control Independence
- There's a catch: Data Dependencies
  - CD: Control Dependent
  - CIDI: Control & Data Indep.
  - CIDD: Control Indep., Data Dependent

Example code from the slide:

    if( x==0 ) {
      a = 33;
    } else {
      /* CD */
      a = 22;
    }
    /* CIDI */
    c = 77;
    /* CIDD */
    c = a + 1;

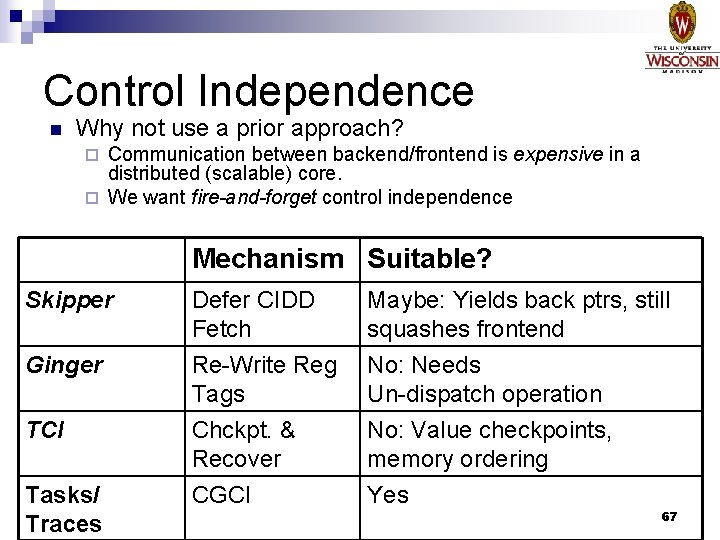

Control Independence
- Why not use a prior approach?
  - Communication between backend/frontend is expensive in a distributed (scalable) core.
  - We want fire-and-forget control independence

| Approach | Mechanism | Suitable? |
|---|---|---|
| Skipper | Defer CIDD Fetch | Maybe: Yields back ptrs, still squashes frontend |
| Ginger | Re-Write Reg Tags | No: Needs Un-dispatch operation |
| TCI | Chckpt. & Recover | No: Value checkpoints, memory ordering |
| Tasks/Traces | CGCI | Yes |

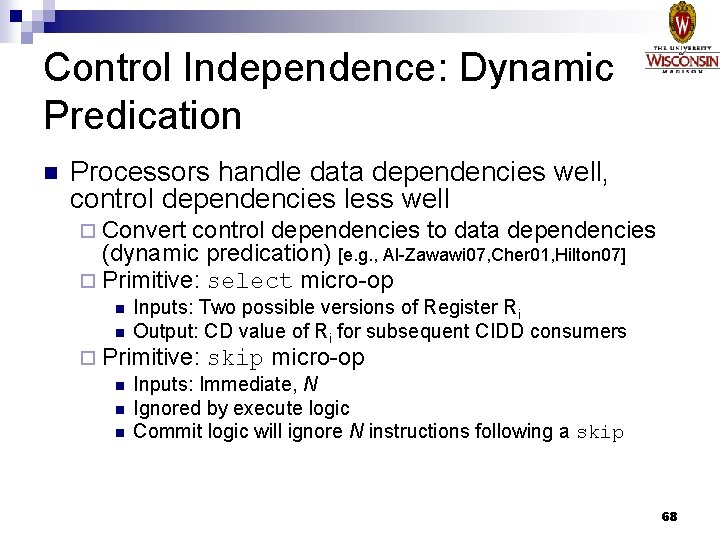

Control Independence: Dynamic Predication
- Processors handle data dependencies well, control dependencies less well
  - Convert control dependencies to data dependencies (dynamic predication) [e.g., Al-Zawawi 07, Cher 01, Hilton 07]
  - Primitive: select micro-op
    - Inputs: Two possible versions of Register Ri
    - Output: CD value of Ri for subsequent CIDD consumers
  - Primitive: skip micro-op
    - Inputs: Immediate, N
    - Ignored by execute logic
    - Commit logic will ignore N instructions following a skip

Control Independence

Source code from the slide:

    if( x==0 ) {
      a = 33;
    } else {
      /* CD */
      a = 22;
    }
    /* CIDD */
    c = a + 1;

Micro-op sequence from the slide:

    brz    R24  +8
    mov    22   → R11
    br     +4        (shown converted to skip 0)
    mov    33   → R12
    select R11 R12 → R1
    add    R1 1 → R3
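The effect of the select micro-op in this example can be modeled in a few lines of Python. This is a behavioral sketch only: both paths are evaluated unconditionally, the branch outcome becomes a data input to `select`, and the helper names and the mapping of register names to local variables are illustrative assumptions.

```python
def select(taken: bool, not_taken_version: int, taken_version: int) -> int:
    """select micro-op: pick the correct version once the branch resolves."""
    return taken_version if taken else not_taken_version


def hammock(x: int) -> int:
    """Dynamically predicated form of: if (x==0) a = 33; else a = 22; c = a + 1."""
    taken = (x == 0)               # brz: taken when x is zero
    r11 = 22                       # mov 22 -> R11 (else path, a = 22)
    r12 = 33                       # mov 33 -> R12 (then path, a = 33)
    r1 = select(taken, r11, r12)   # select R11 R12 -> R1
    r3 = r1 + 1                    # add R1 1 -> R3 (CIDD consumer, c = a + 1)
    return r3


print(hammock(0), hammock(5))
```

The CIDD consumer depends only on the output of `select`, so a wrong guess about the branch changes a data value instead of forcing a squash.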

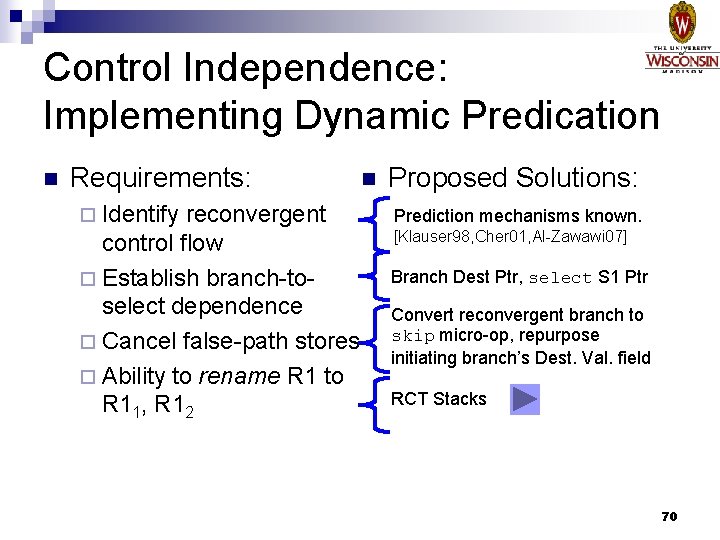

Control Independence: Implementing Dynamic Predication

| Requirement | Proposed Solution |
|---|---|
| Identify reconvergent control flow | Prediction mechanisms known. [Klauser 98, Cher 01, Al-Zawawi 07] |
| Establish branch-to-select dependence | Branch Dest Ptr, select S1 Ptr |
| Cancel false-path stores | Convert reconvergent branch to skip micro-op, repurpose initiating branch's Dest.Val. field |
| Ability to rename R1 to R11, R12 | RCT Stacks |

"Choose your own adventure" prelim
- Move on to…
  - Forward Slice Replay
  - ARF Write Elision
  - Proposed Work Summary

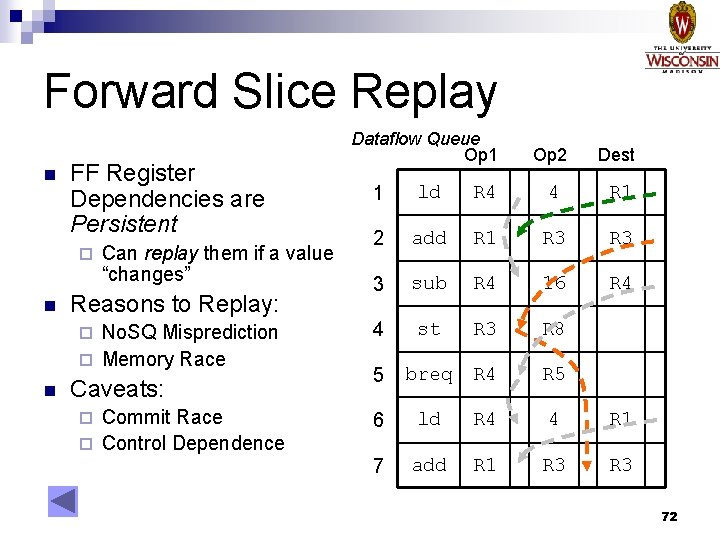

Forward Slice Replay
- FF Register Dependencies are Persistent
  - Can replay them if a value "changes"
- Reasons to Replay:
  - NoSQ Misprediction
  - Memory Race
- Caveats:
  - Commit Race
  - Control Dependence
[Figure: Dataflow Queue with columns Op1, Op2, Dest. Entries: 1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; 5 breq R4 R5; 6 ld R4 4 R1; 7 add R1 R3]

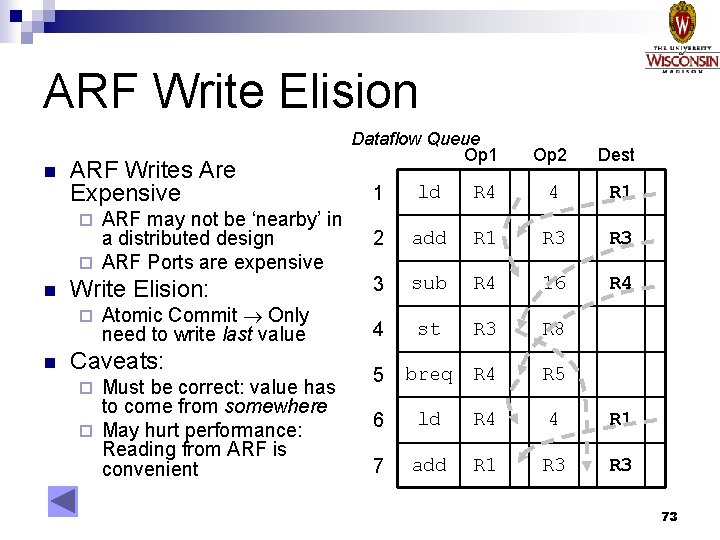

ARF Write Elision
- ARF Writes Are Expensive
  - ARF may not be 'nearby' in a distributed design
  - ARF Ports are expensive
- Write Elision:
  - Only need to write last value
- Caveats:
  - Must be correct: value has to come from somewhere
  - May hurt performance: Reading from ARF is convenient
[Figure: Dataflow Queue, entries 1-7 (1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; 5 breq R4 R5; 6 ld R4 4 R1; 7 add R1 R3), labeled Atomic Commit]

Contributions
- Forwardflow Cores
  - Scalable OoO
- Proposed: Dynamic Scaling in CMPs
  - Resource Sharing Mechanisms and Policies
- Proposed: Operand Network Hierarchies
  - Exploitable communication
- Proposed: Control Independence
  - Fire-and-forget for scalable cores

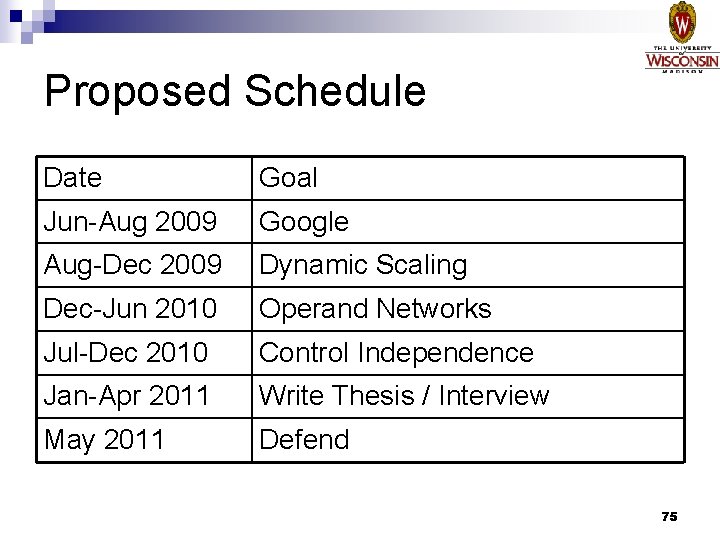

Proposed Schedule

| Date | Goal |
|---|---|
| Jun-Aug 2009 | Google |
| Aug-Dec 2009 | Dynamic Scaling |
| Dec-Jun 2010 | Operand Networks |
| Jul-Dec 2010 | Control Independence |
| Jan-Apr 2011 | Write Thesis / Interview |
| May 2011 | Defend |

Thank you, committee, slide reviewers and "practice committee" for constructive criticism.

Backup Slides

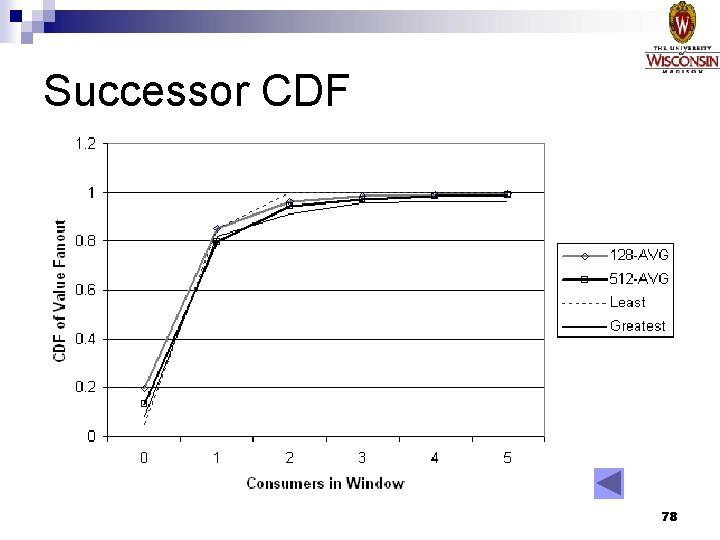

Successor CDF

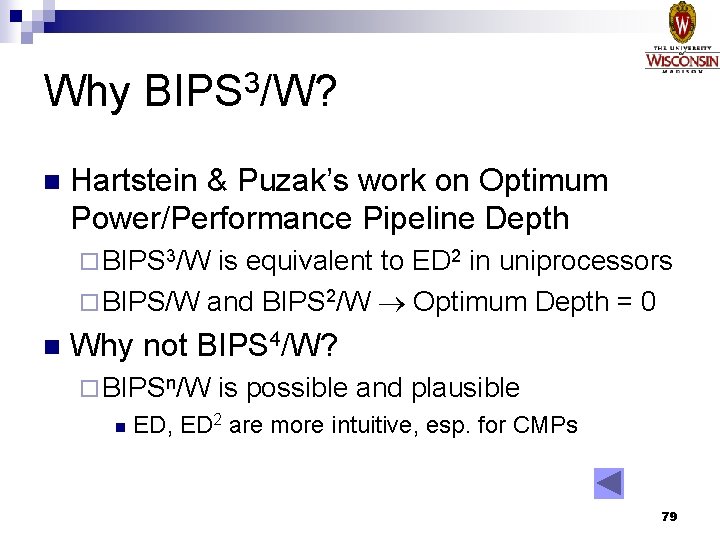

Why BIPS^3/W?
- Hartstein & Puzak's work on Optimum Power/Performance Pipeline Depth
  - BIPS^3/W is equivalent to ED^2 in uniprocessors
  - BIPS/W and BIPS^2/W → Optimum Depth = 0
- Why not BIPS^4/W?
  - BIPS^n/W is possible and plausible
  - ED, ED^2 are more intuitive, esp. for CMPs
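The equivalence between BIPS^3/W and ED^2 follows from two definitions. For a fixed instruction count, let D be execution time, E total energy, and W average power (symbols D, E, W as used on this slide; the fixed-instruction-count assumption is stated here for the derivation):

```latex
\begin{align*}
\text{BIPS} &\propto \frac{1}{D}, \qquad W = \frac{E}{D} \\[4pt]
\frac{\text{BIPS}^3}{W} &\propto \frac{1/D^{3}}{E/D} = \frac{1}{E\,D^{2}} \\[4pt]
\frac{\text{BIPS}^2}{W} &\propto \frac{1}{E\,D}, \qquad
\frac{\text{BIPS}}{W} \propto \frac{1}{E}
\end{align*}
```

Maximizing BIPS^3/W is therefore the same as minimizing ED^2, while BIPS^2/W corresponds to the energy-delay product and BIPS/W to energy alone.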

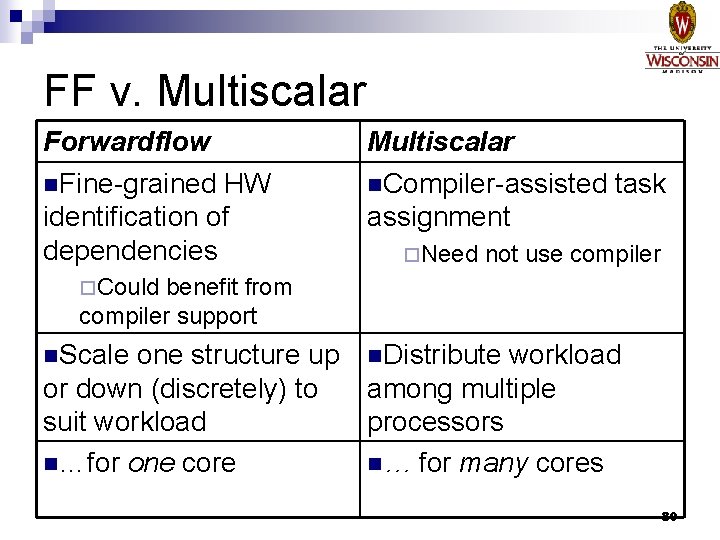

FF v. Multiscalar

| Forwardflow | Multiscalar |
|---|---|
| Fine-grained HW identification of dependencies (need not use compiler; could benefit from compiler support) | Compiler-assisted task assignment |
| Scale one structure up or down (discretely) to suit workload | Distribute workload among multiple processors |
| …for one core | …for many cores |

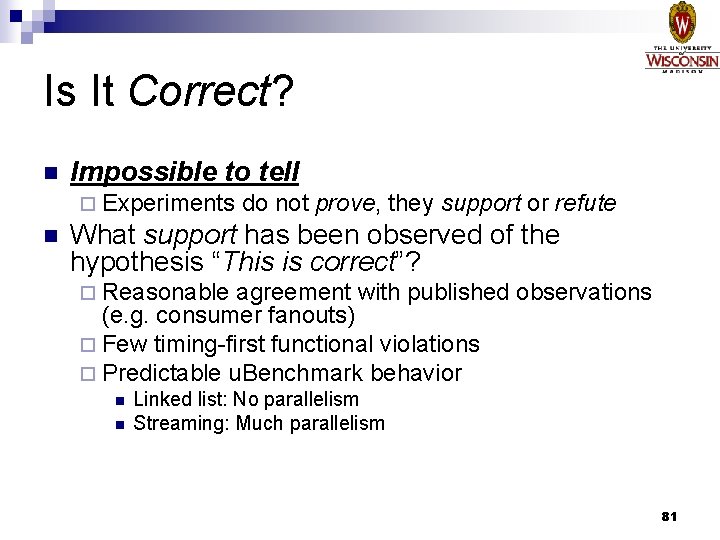

Is It Correct?
- Impossible to tell
  - Experiments do not prove, they support or refute
- What support has been observed of the hypothesis "This is correct"?
  - Reasonable agreement with published observations (e.g. consumer fanouts)
  - Few timing-first functional violations
  - Predictable uBenchmark behavior
    - Linked list: No parallelism
    - Streaming: Much parallelism

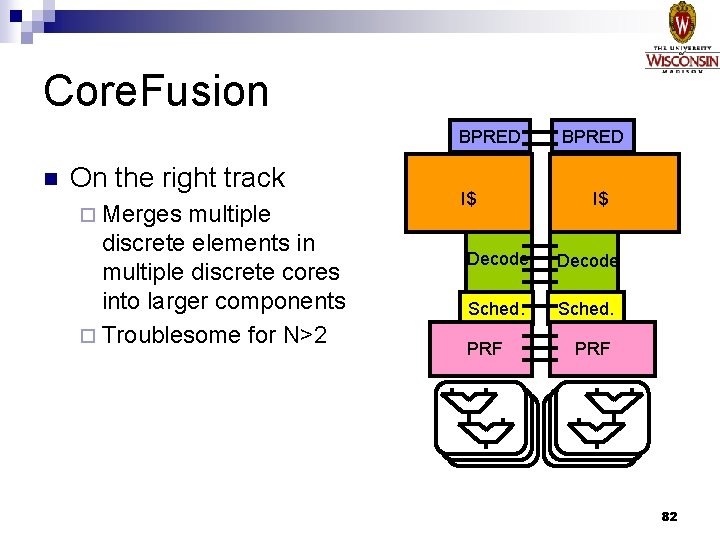

CoreFusion
- On the right track
  - Merges multiple discrete elements in multiple discrete cores into larger components
  - Troublesome for N>2
[Figure: two cores, each with BPRED and I$, sharing Decode, Sched., PRF]

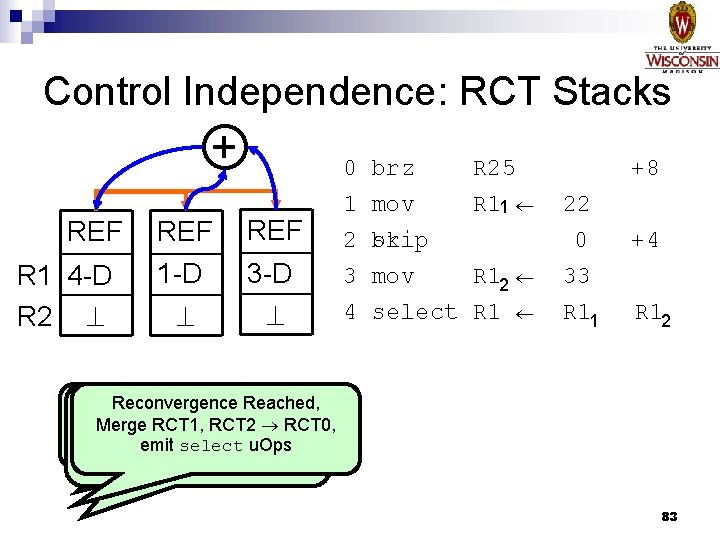

Control Independence: RCT Stacks
[Figure: RCT with REF columns beside micro-ops 0-4 (brz R25 +8; mov 22 → R11; br +4, converted to skip 0; mov 33 → R12; select R11 R12 → R1). Callouts:]
- Predict Hammock, Predict Reconvergence at PC+16: Clone RCT0 to RCT1
- PC+8: Convert Branch → skip, Clone RCT0 → RCT2
- Reconvergence Reached: Merge RCT1, RCT2 → RCT0, emit select uOps

Control Independence: RCT Stacks
- Pro: No changes to pointer structure at branch-resolution time (Unlike Skipper/Ginger/TCI)
- Con: Changes META X-port from R/O to R/W
- Con: RCT 'Diff' Operation
- Con: Fetches both paths

"Vanilla" CMOS
[Figure: device cross-section with regions labeled N+, P-, N+, N]

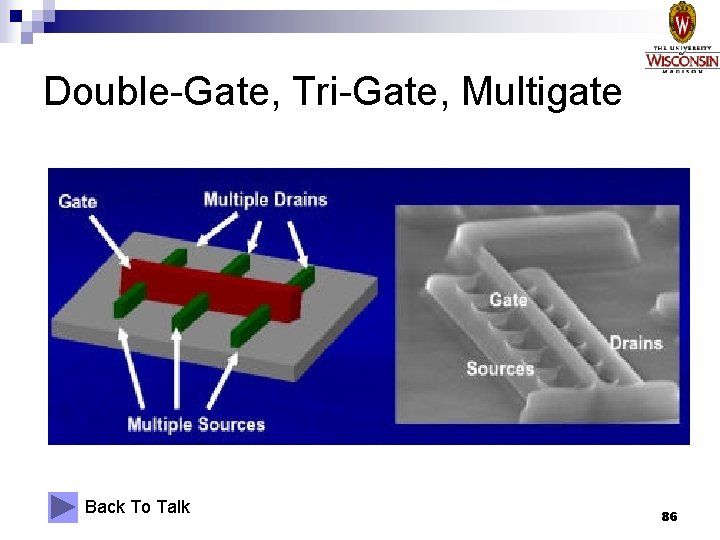

Double-Gate, Tri-Gate, Multigate
[Link: Back To Talk]

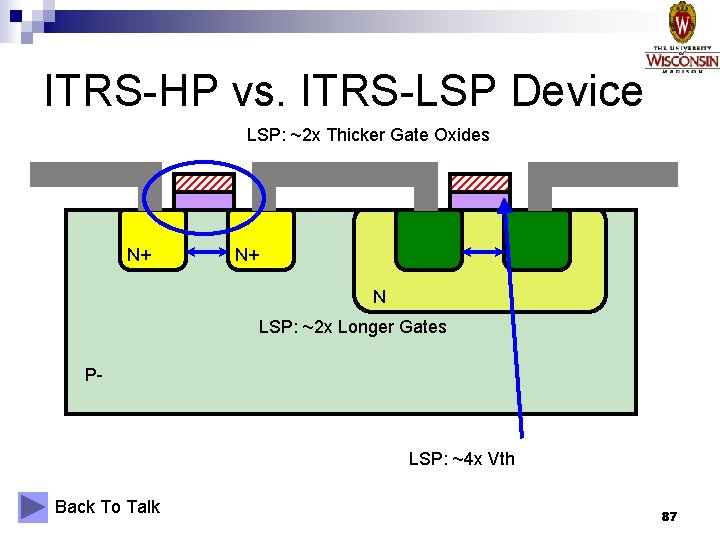

ITRS-HP vs. ITRS-LSP Device
- LSP: ~2x Thicker Gate Oxides
- LSP: ~2x Longer Gates
- LSP: ~4x Vth
[Figure: device cross-section with regions labeled N+, N+, N, P-]
[Link: Back To Talk]

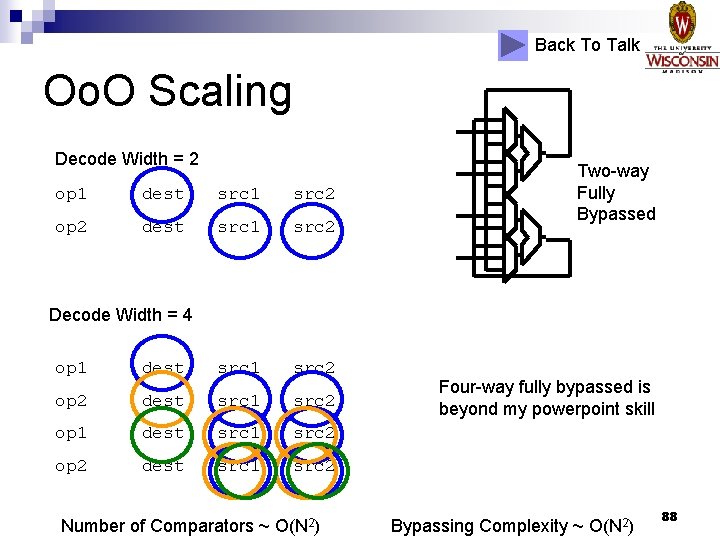

OoO Scaling
- Decode Width = 2: Two-way Fully Bypassed
  - Fields per instruction: op, dest, src1, src2
- Decode Width = 4: Four-way fully bypassed is beyond my powerpoint skill
- Number of Comparators ~ O(N^2)
- Bypassing Complexity ~ O(N^2)
[Link: Back To Talk]

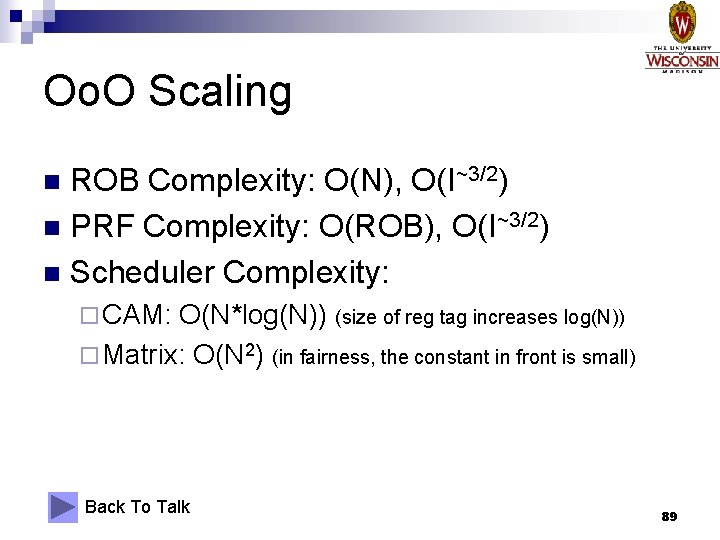

OoO Scaling
- ROB Complexity: O(N), O(I^(~3/2))
- PRF Complexity: O(ROB), O(I^(~3/2))
- Scheduler Complexity:
  - CAM: O(N*log(N)) (size of reg tag increases log(N))
  - Matrix: O(N^2) (in fairness, the constant in front is small)
[Link: Back To Talk]

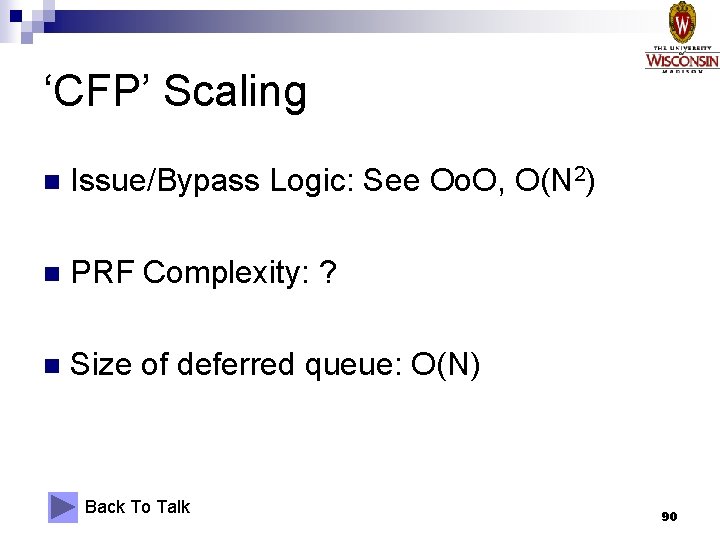

'CFP' Scaling
- Issue/Bypass Logic: See OoO, O(N^2)
- PRF Complexity: ?
- Size of deferred queue: O(N)
[Link: Back To Talk]

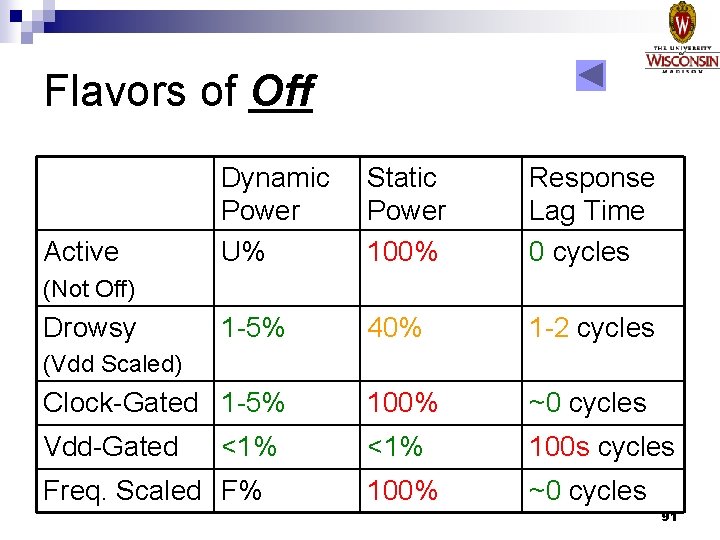

Flavors of Off

| State | Dynamic Power | Static Power | Response Lag Time |
|---|---|---|---|
| Active (Not Off) | U% | 100% | 0 cycles |
| Drowsy (Vdd Scaled) | 1-5% | 40% | 1-2 cycles |
| Clock-Gated | 1-5% | 100% | ~0 cycles |
| Vdd-Gated | <1% | <1% | 100s cycles |
| Freq. Scaled | F% | 100% | ~0 cycles |

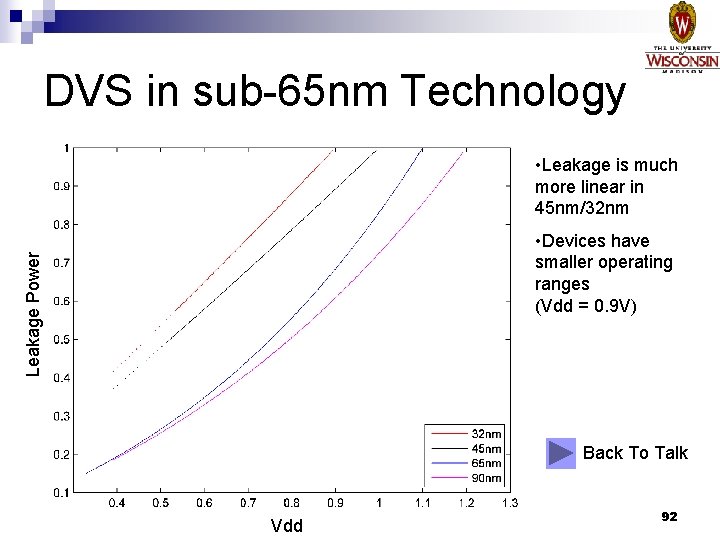

DVS in sub-65 nm Technology
- Leakage is much more linear in 45 nm/32 nm
- Devices have smaller operating ranges (Vdd = 0.9 V)
[Plot: Leakage Power vs. Vdd]
[Link: Back To Talk]

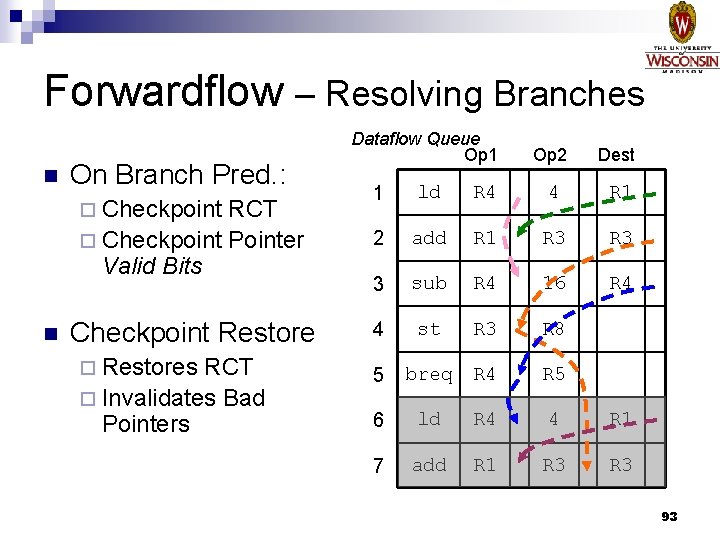

Forwardflow – Resolving Branches
- On Branch Pred.:
  - Checkpoint RCT
  - Checkpoint Pointer Valid Bits
- Checkpoint Restore
  - Restores RCT
  - Invalidates Bad Pointers
[Figure: Dataflow Queue with columns Op1, Op2, Dest. Entries: 1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; 5 breq R4 R5; 6 ld R4 4 R1; 7 add R1 R3]

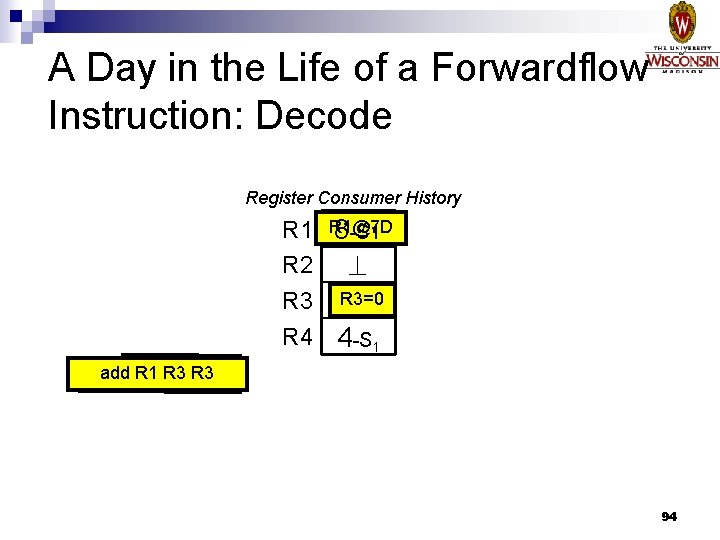

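A Day in the Life of a Forwardflow Instruction: Decode
[Figure: Register Consumer History lookup while decoding add R1 R3 → R3 as DQ entry 8. R1 is found at 7-D (R1@7D) and its entry becomes 8-S1; R3 is read as 0 (R3=0) and its entry becomes 8-D; R4 remains 4-S1]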

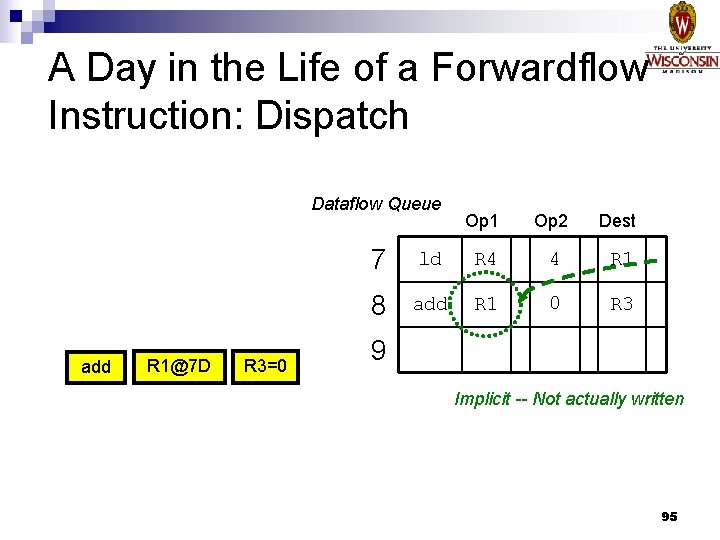

A Day in the Life of a Forwardflow Instruction: Dispatch
[Figure: add, with R1@7D and R3=0, is written into the Dataflow Queue. Entries: 7 ld R4 4 R1; 8 add R1 0 R3; 9 empty. Callout: Implicit -- Not actually written]

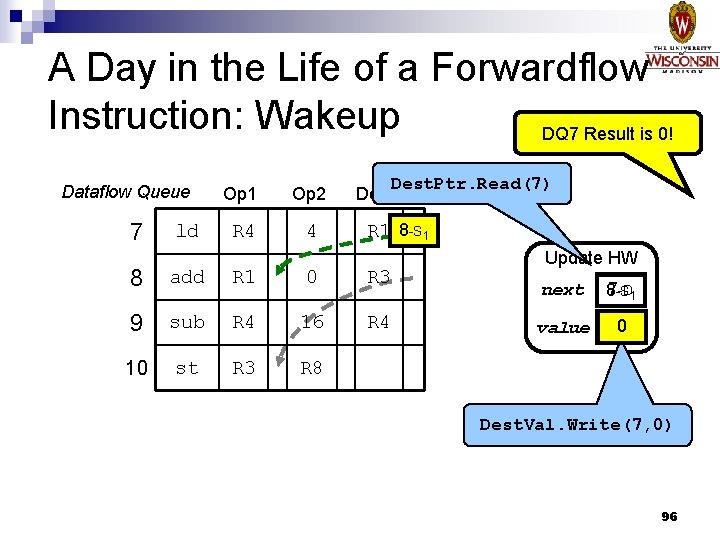

A Day in the Life of a Forwardflow Instruction: Wakeup
[Figure: DQ7 Result is 0! DestPtr.Read(7) returns 8-S1; update HW holds next = 8-S1, value = 0; DestVal.Write(7, 0). Dataflow Queue entries: 7 ld R4 4 R1; 8 add R1 0 R3; 9 sub R4 16 R4; 10 st R3 R8]

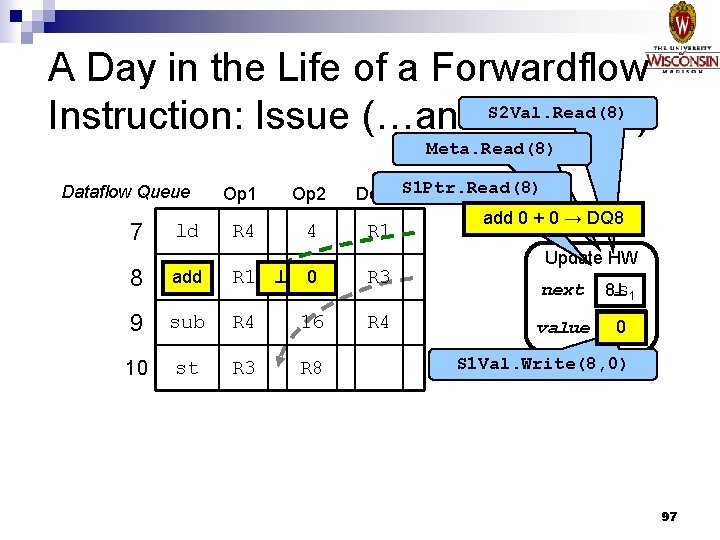

A Day in the Life of a Forwardflow Instruction: Issue (…and Execute)
[Figure: S1Ptr.Read(8), S2Val.Read(8), Meta.Read(8); add 0 + 0 → DQ8; update HW holds next = 8-S1, value = 0; S1Val.Write(8, 0). Dataflow Queue entries: 7 ld R4 4 R1; 8 add R1 0 R3; 9 sub R4 16 R4; 10 st R3 R8]

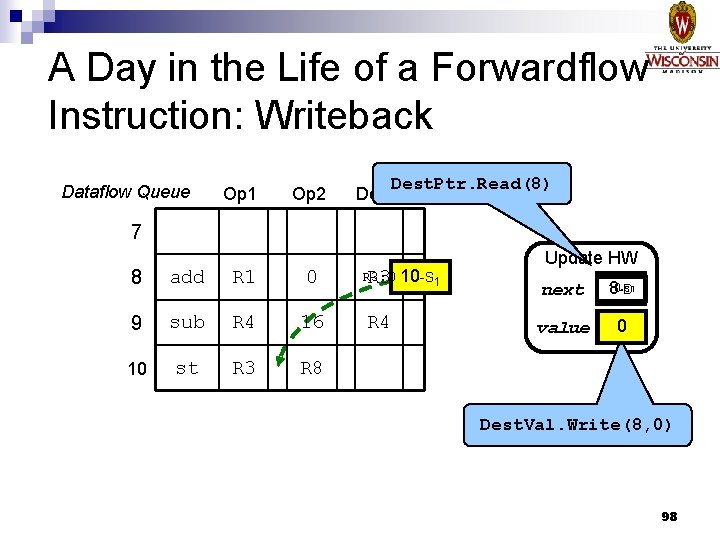

A Day in the Life of a Forwardflow Instruction: Writeback
[Figure: DestPtr.Read(8) returns 10-S1; update HW holds next = 10-S1 (from 8-D), value = 0; DestVal.Write(8, 0) sets R3: 0. Dataflow Queue entries: 8 add R1 0 R3; 9 sub R4 16 R4; 10 st R3 R8]

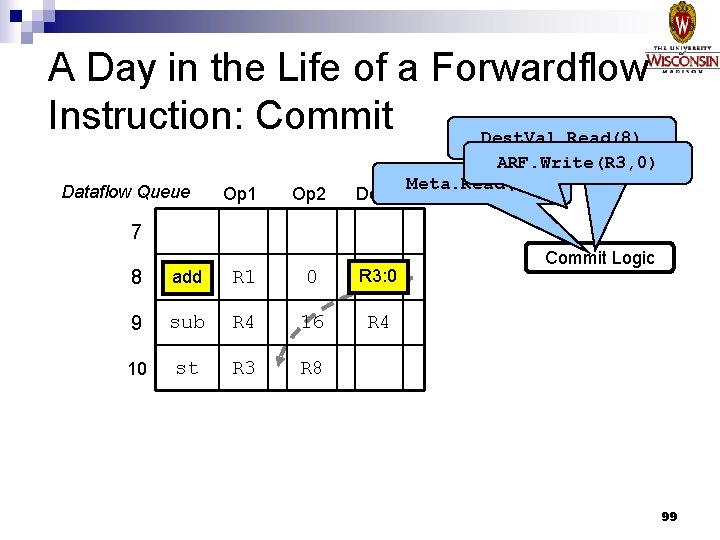

A Day in the Life of a Forwardflow Instruction: Commit
[Figure: Commit Logic performs Meta.Read(8), DestVal.Read(8), ARF.Write(R3, 0). Dataflow Queue entries: 8 add R1 0 R3: 0; 9 sub R4 16 R4; 10 st R3 R8]

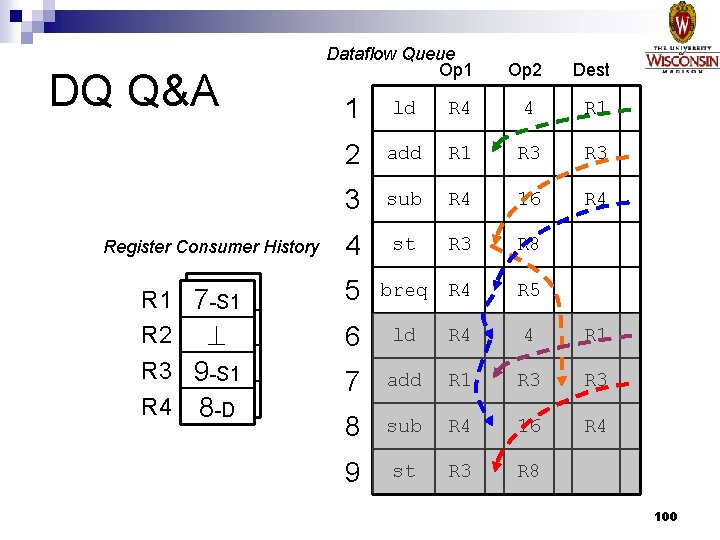

DQ Q&A
[Figure: Register Consumer History (R1 to R4) beside a Dataflow Queue with columns Op1, Op2, Dest. Entries: 1 ld R4 4 R1; 2 add R1 R3; 3 sub R4 16 R4; 4 st R3 R8; 5 breq R4 R5; 6 ld R4 4 R1; 7 add R1 R3; 8 sub R4 16 R4; 9 st R3 R8]

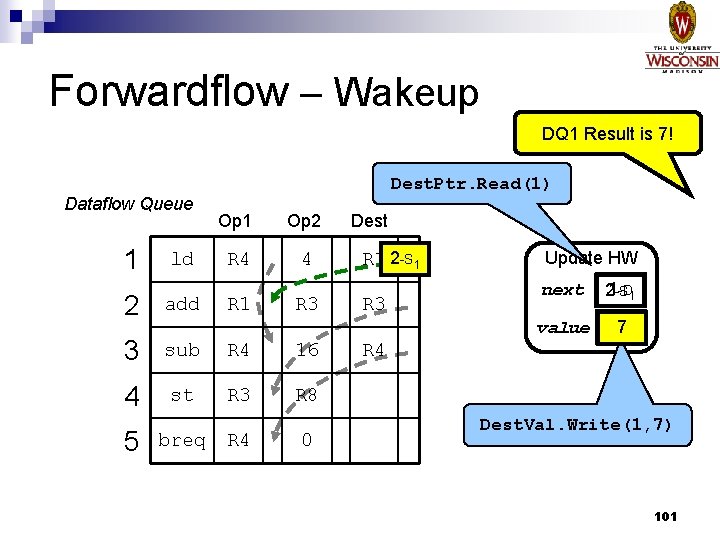

Forwardflow – Wakeup
[Figure: DQ1 Result is 7! DestPtr.Read(1) returns 2-S1; update HW holds next = 2-S1, value = 7; DestVal.Write(1, 7). Dataflow Queue entries 1-5: ld, add, sub, st, breq]

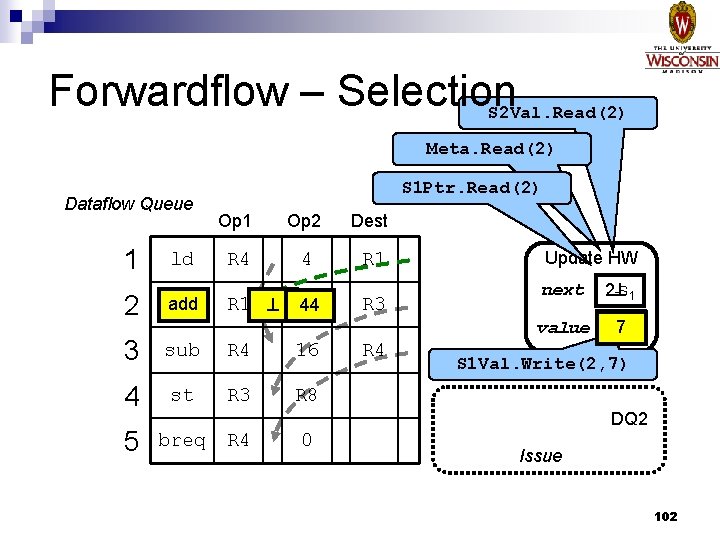

Forwardflow – Selection
[Figure: S1Ptr.Read(2), S2Val.Read(2), Meta.Read(2); update HW holds next = 2-S1, value = 7; S1Val.Write(2, 7); DQ2 issues. Dataflow Queue entries 1-5: ld, add, sub, st, breq]

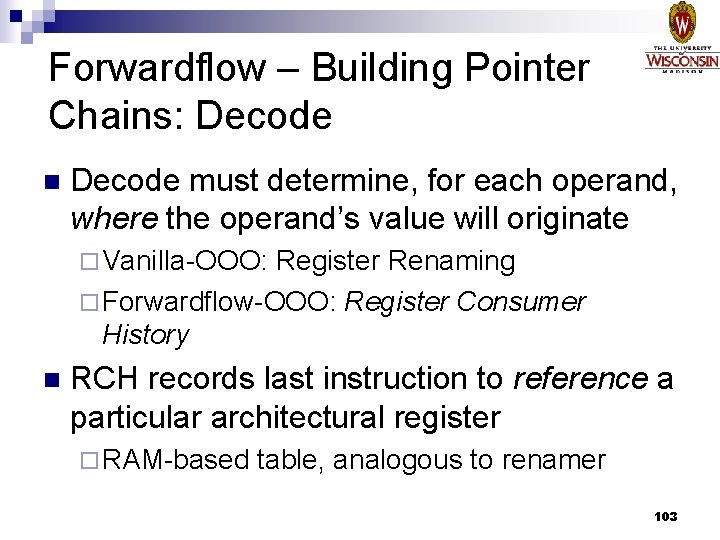

Forwardflow – Building Pointer Chains: Decode
- Decode must determine, for each operand, where the operand's value will originate
  - Vanilla-OOO: Register Renaming
  - Forwardflow-OOO: Register Consumer History
- RCH records last instruction to reference a particular architectural register
  - RAM-based table, analogous to renamer

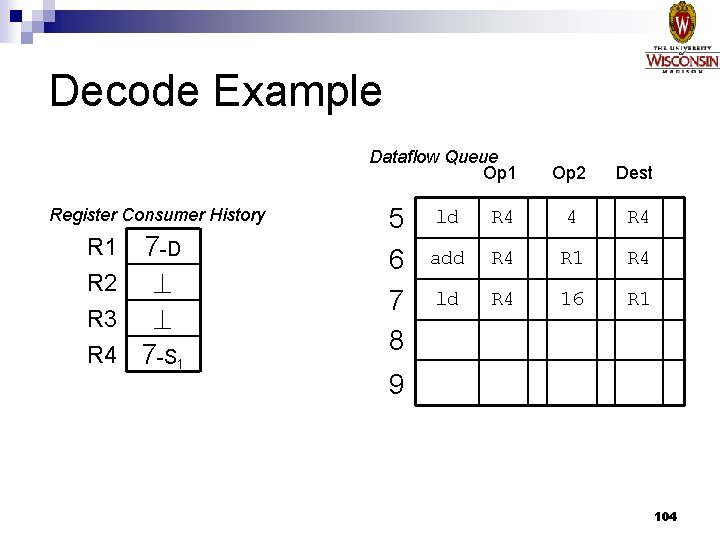

Decode Example
[Figure: Register Consumer History (R1: 7-D; R4: 7-S1) beside a Dataflow Queue with columns Op1, Op2, Dest. Entries: 5 ld R4 4 R4; 6 add R4 R1 R4; 7 ld R4 16 R1; 8 and 9 empty]

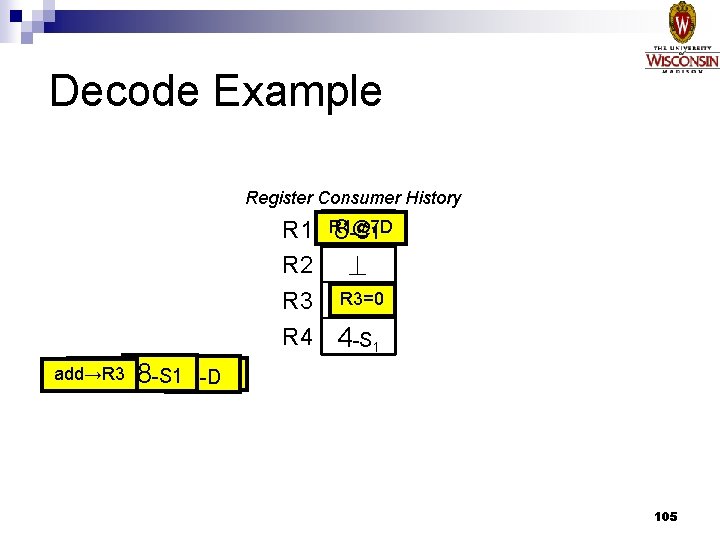

Decode Example
[Figure: decoding 8: add R1 R3 → R3 against the Register Consumer History. R1 is found at 7-D (R1@7D) and its entry becomes 8-S1; R3 is read as 0 (R3=0) and its entry becomes 8-D; R4 remains 4-S1]

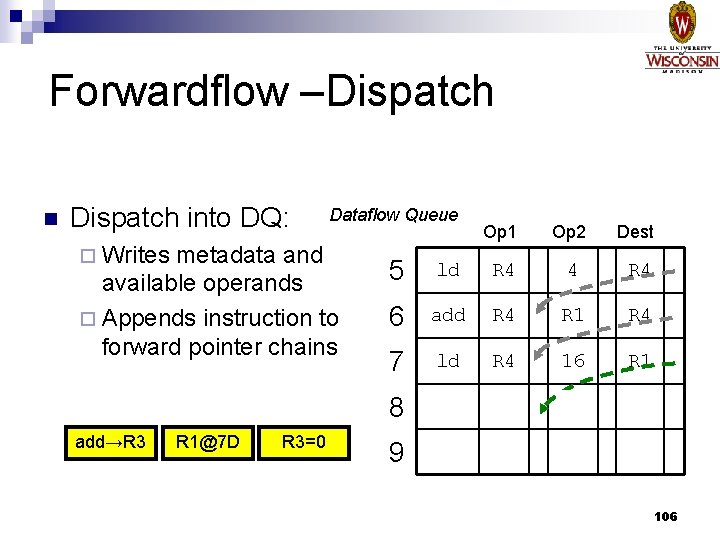

Forwardflow – Dispatch
- Dispatch into DQ:
  - Writes metadata and available operands
  - Appends instruction to forward pointer chains
[Figure: add→R3, with R1@7D and R3=0, is written into the Dataflow Queue. Entries: 5 ld R4 4 R4; 6 add R4 R1 R4; 7 ld R4 16 R1; 8 add R1 0 R3; 9 empty]
