Lessons learned from the MDIC mock submission CRT

February 20, 2017
Rajesh Nair, Ph.D.
Team Leader, Therapeutic Statistics Branch II
Division of Biostatistics
CDRH, FDA

No Disclosures

Outline
• Overview of the MDIC mock submission
• Proposed pivotal clinical trial for a mock device that leverages simulated clinical performance based on engineering models
• Novel methodology using Bayesian methods for incorporating good engineering models
• Benefits of the proposed approach
• Lessons learned from the mock submission

MDIC computational modeling and simulation working group
• Going from bench to bedside: device manufacturers increasingly use engineering models to predict safety and effectiveness outcomes during product development
• Can we leverage the simulated clinical performance of a device to improve the efficiency of clinical trials?
• The working group brought together scientists from many device companies and FDA under the umbrella of MDIC

MDIC computational modeling and simulation working group
• Framework for augmenting a clinical trial with simulations
  ◦ Use engineering models to simulate the clinical performance of a device in a virtual patient (VP) population
  ◦ A novel Bayesian method combines virtual patients with prospective clinical data from real patients
  ◦ Potentially smaller, more cost-efficient clinical trials
• Seek feedback from FDA on regulatory …

Regulatory feedback obtained via CDRH presubmission (Pre-Sub)
• Pre-Subs provide regulatory feedback to sponsors prior to an intended submission
• Submitted a detailed clinical trial protocol for a mock device, using a Bayesian framework to augment clinical trial data with virtual patients (VP)
• The mock submission was reviewed by an independent team within CDRH comprising medical officers, engineers, and statisticians
• Multiple rounds of interaction with CDRH reviewers provided timely regulatory feedback

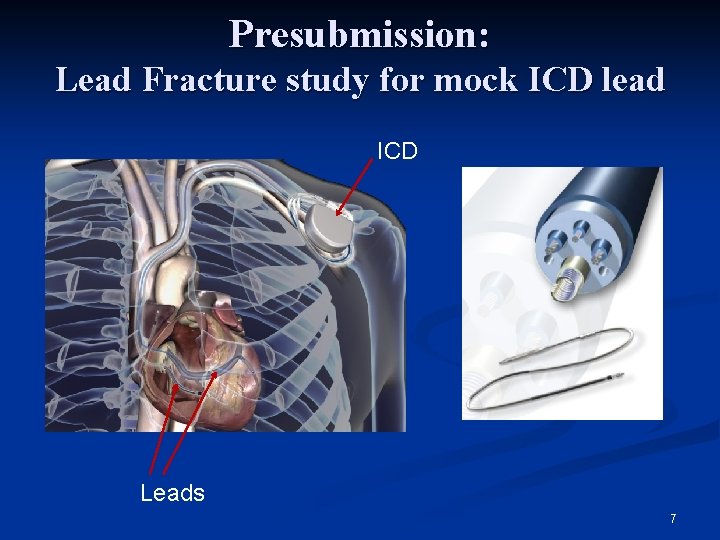

Presubmission: Lead fracture study for mock ICD lead
Figure: ICD leads

Presubmission: Lead fracture study for mock ICD lead
• Investigational model: 2014 VP
  ◦ The previous-generation 2010 model is market approved
  ◦ The design was updated to improve handling characteristics
  ◦ Design changes could affect fatigue performance and lead failure in a patient
• Bench testing can be used to measure the fatigue strength and bending stiffness of the new lead
• We have in-vivo use conditions for the predicate lead
• We have distributions of patient characteristics for the predicate lead
• Using these inputs, we build a virtual patient model that predicts lead failure for the 2014 VP lead

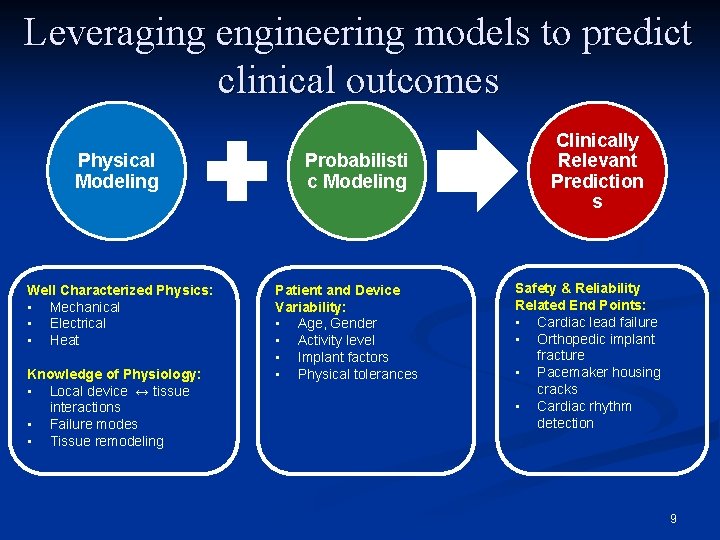

Leveraging engineering models to predict clinical outcomes
Physical modeling
• Well-characterized physics: mechanical, electrical, heat
• Knowledge of physiology: local device ↔ tissue interactions, failure modes, tissue remodeling
Probabilistic modeling
• Patient and device variability: age, gender, activity level, implant factors, physical tolerances
Clinically relevant predictions
• Safety and reliability related endpoints: cardiac lead failure, orthopedic implant fracture, pacemaker housing cracks, cardiac rhythm detection

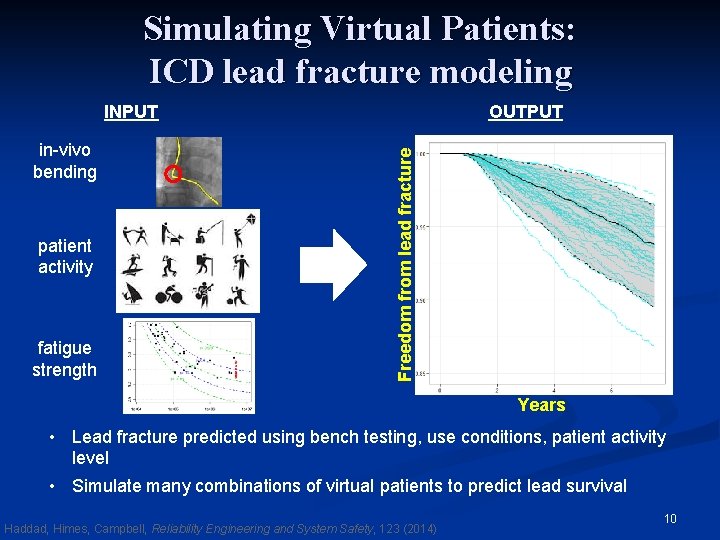

Simulating virtual patients: ICD lead fracture modeling
Figure: inputs (in-vivo bending, patient activity, fatigue strength) feed the model; the output is freedom from lead fracture plotted against years.
• Lead fracture is predicted using bench testing, use conditions, and patient activity level
• Many combinations of virtual patients are simulated to predict lead survival
Haddad, Himes, Campbell, Reliability Engineering and System Safety, 123 (2014)
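A minimal Monte Carlo sketch of the virtual-patient idea follows. It assumes a simple Basquin-type stress-life relation rather than the Bayesian network of Haddad et al.: each virtual patient draws a lead fatigue strength, an in-vivo bending amplitude, and an activity-driven bending rate, and the simulated fracture times give a freedom-from-fracture estimate. The function names, distributions, and constants are hypothetical placeholders, not values from the mock submission.

```python
import math
import random


def simulate_virtual_patients(n_vp, seed=0):
    """Simulate hypothetical times to lead fracture (years) for n_vp virtual patients.

    Every distribution and constant here is an illustrative assumption.
    """
    rng = random.Random(seed)
    times = []
    for _ in range(n_vp):
        # Lead fatigue strength, as bench testing might characterize it (arbitrary units).
        strength = rng.lognormvariate(math.log(1.0), 0.10)
        # Patient-specific in-vivo bending amplitude (use conditions), same units.
        bending = rng.lognormvariate(math.log(0.6), 0.15)
        # Activity level scales the number of bending cycles per year.
        cycles_per_year = 40e6 * rng.lognormvariate(0.0, 0.30)
        if bending >= strength:
            times.append(0.0)  # overloaded lead: treat as immediate fracture
            continue
        # Basquin-type stress-life relation: life grows as (strength / bending) ** m.
        cycles_to_failure = 1e7 * (strength / bending) ** 8
        times.append(cycles_to_failure / cycles_per_year)
    return times


def freedom_from_fracture(times, t_years):
    """Fraction of virtual patients whose lead is still intact at t_years."""
    return sum(1 for t in times if t > t_years) / len(times)


vp_times = simulate_virtual_patients(10_000)
print("Simulated 18-month fracture probability:", 1 - freedom_from_fracture(vp_times, 1.5))
```

In a real model each input distribution would come from bench data, predicate-lead use conditions, and patient characteristics; here they are only placeholders that make the simulation run.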

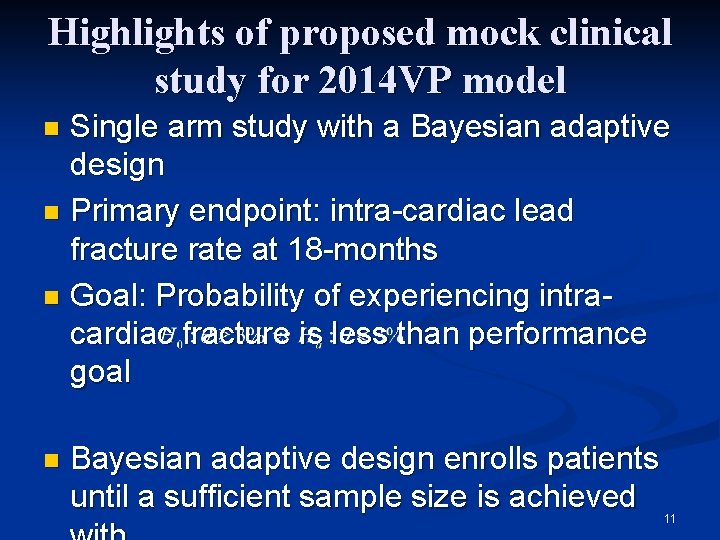

Highlights of the proposed mock clinical study for the 2014 VP model
• Single-arm study with a Bayesian adaptive design
• Primary endpoint: intracardiac lead fracture rate at 18 months
• Goal: show that the probability of experiencing an intracardiac fracture is less than a performance goal
• The Bayesian adaptive design enrolls patients until a sufficient sample size is achieved
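The primary analysis can be sketched as a beta-binomial model, assuming a Beta(1, 1) prior on the 18-month fracture probability p: success is declared when the posterior probability that p is below the performance goal exceeds a threshold, checked at a series of interim sample sizes. The performance goal, the 0.975 threshold, the look schedule, and the function names are hypothetical, and virtual patients are not yet included.

```python
import math


def beta_cdf_int(x, a, b):
    """Regularized incomplete beta I_x(a, b) for positive integers a and b.

    Uses I_x(a, b) = P(Binomial(a + b - 1, x) >= a); summing the short lower
    tail keeps the binomial coefficients small when a is small.
    """
    n = a + b - 1
    lower = sum(math.comb(n, j) * x**j * (1.0 - x) ** (n - j) for j in range(a))
    return 1.0 - lower


def posterior_prob_below_goal(fractures, n, goal, a0=1, b0=1):
    """Posterior P(p < goal) for a Beta(a0, b0) prior and `fractures` out of `n`."""
    return beta_cdf_int(goal, a0 + fractures, b0 + n - fractures)


def first_success_look(outcomes, looks, goal, threshold=0.975):
    """Return the first interim sample size at which success is declared, else None.

    outcomes: per-patient 0/1 fracture indicators in enrollment order.
    looks: increasing interim sample sizes (hypothetical schedule).
    """
    for n in looks:
        if n > len(outcomes):
            break
        fractures = sum(outcomes[:n])
        if posterior_prob_below_goal(fractures, n, goal) >= threshold:
            return n
    return None
```

For example, `first_success_look(outcomes, looks=[150, 200, 250, 300], goal=0.05)` would stop enrollment at the first look where the evidence is sufficient; the adaptive design in the mock submission may use a different rule.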

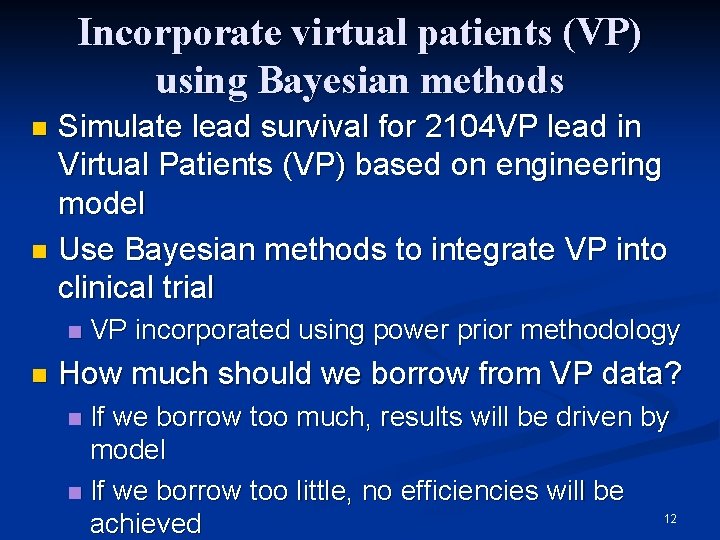

Incorporate virtual patients (VP) using Bayesian methods
• Simulate lead survival for the 2014 VP lead in virtual patients based on the engineering model
• Use Bayesian methods to integrate VP into the clinical trial
  ◦ VP are incorporated using power prior methodology
• How much should we borrow from VP data?
  ◦ If we borrow too much, results will be driven by the model
  ◦ If we borrow too little, no efficiencies will be achieved
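With a power prior, the virtual-patient likelihood is raised to a weight α between 0 and 1 before it is combined with the trial data. For a binomial fracture endpoint this stays conjugate, as the sketch below shows under an assumed Beta(a0, b0) initial prior; the class, function, and parameter names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class BetaPosterior:
    a: float
    b: float

    @property
    def mean(self) -> float:
        return self.a / (self.a + self.b)


def power_prior_posterior(x, n, x_vp, n_vp, alpha, a0=1.0, b0=1.0):
    """Beta posterior for a fracture probability p under a power prior.

    posterior is proportional to
        p^(a0-1) (1-p)^(b0-1)                      (initial prior)
        * [p^x_vp (1-p)^(n_vp - x_vp)]^alpha       (down-weighted VP likelihood)
        * p^x (1-p)^(n - x)                        (trial likelihood)
    so the VP data count as alpha * n_vp patients.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    a = a0 + alpha * x_vp + x
    b = b0 + alpha * (n_vp - x_vp) + (n - x)
    return BetaPosterior(a, b)


# Hypothetical example: 10,000 simulated VPs weighted like 200 real patients.
post = power_prior_posterior(x=3, n=200, x_vp=150, n_vp=10_000, alpha=0.02)
print(post, post.mean)
```

Because the VP data enter as α × n_vp pseudo-patients, even an unlimited number of simulated patients can be held to a modest effective sample size by the choice of α.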

Control borrowing based on consistency with experimental data
• Achieving the right amount of borrowing
  ◦ We can simulate an unlimited number of VP, so down-weighting is needed
  ◦ Achieved using power prior methodology
• Use a loss function to control borrowing
  ◦ Borrow more when the data agree
  ◦ Borrow less when the data do not agree
  ◦ Maximum borrowing is capped in consultation with regulators
• Our proposed flexible loss function achieves the desired amount of borrowing
  ◦ Ensures good frequentist operating characteristics
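One way to make α depend on agreement is sketched below. This is a hypothetical weighting rule, not the loss function proposed in the mock submission: it caps the effective number of virtual patients at a regulator-agreed maximum and shrinks α smoothly as the standardized gap between the trial and VP fracture rates grows. The `tuning` parameter and the Gaussian-shaped discount are assumptions.

```python
import math


def borrowing_weight(x, n, x_vp, n_vp, max_effective_vp, tuning=1.0):
    """Hypothetical agreement-based power-prior weight alpha in [0, alpha_max]."""
    if n <= 0 or n_vp <= 0:
        raise ValueError("n and n_vp must be positive")
    # Cap: alpha * n_vp may never exceed max_effective_vp virtual patients.
    alpha_max = min(1.0, max_effective_vp / n_vp)
    p_vp = x_vp / n_vp
    p_obs = x / n
    # Standardized gap between trial and VP rates (binomial SE under the VP rate).
    se = math.sqrt(max(p_vp * (1.0 - p_vp), 1e-12) / n)
    z = (p_obs - p_vp) / se
    # Borrow fully (up to the cap) when z is near 0, less as the rates diverge.
    return alpha_max * math.exp(-0.5 * tuning * z * z)
```

This rule is symmetric in the direction of disagreement; a one-sided discount is another possible design, and either choice would itself need agreement with regulators.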

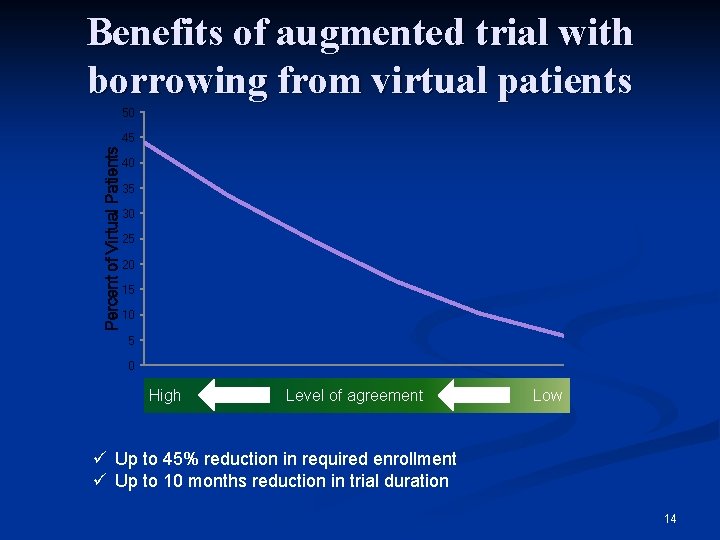

Benefits of an augmented trial with borrowing from virtual patients
Figure: percent of virtual patients (y-axis, 0 to 50%) versus level of agreement (x-axis, high to low).
• Up to 45% reduction in required enrollment
• Up to 10 months reduction in trial duration

Lessons learned
• Due to the heavy reliance on modeling, early interaction with regulators is critical
• Model credibility and context of use are critical for regulatory acceptance
  ◦ Is the model reliable?
  ◦ Does the model account for engineering uncertainties as well as patient-to-patient variation?
  ◦ Have all relevant covariates been included?
  ◦ Can virtual patients be considered exchangeable with real patients in the trial?

Lessons learned
• Be prepared to answer questions from regulators on:
  ◦ Bias and type I error control
  ◦ The weight of prior information and the choice of loss function
  ◦ Robustness of results to misspecification of the virtual patient model
• High simulation burden to address regulatory concerns
• The method is likely to be useful for mature device areas that are in their 2nd or 3rd generation
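Answering the type I error question usually means simulating many trials. The sketch below reuses a simple hypothetical agreement-based power prior (the borrowing rule, Beta(1, 1) initial prior, 0.975 threshold, and all numbers are illustrative assumptions) and estimates how often success would be declared for a given true fracture rate. With the true rate set equal to the performance goal, this estimates the type I error; repeating it over many true rates and VP scenarios is what drives the simulation burden.

```python
import math
import random


def borrowing_weight(x, n, x_vp, n_vp, max_effective_vp, tuning=1.0):
    """Hypothetical agreement-based power-prior weight, capped at max_effective_vp / n_vp."""
    alpha_max = min(1.0, max_effective_vp / n_vp)
    p_vp = x_vp / n_vp
    se = math.sqrt(max(p_vp * (1.0 - p_vp), 1e-12) / n)
    z = (x / n - p_vp) / se
    return alpha_max * math.exp(-0.5 * tuning * z * z)


def beta_cdf(x, a, b, steps=2000):
    """P(Beta(a, b) < x) by the midpoint rule; assumes a, b >= 1 and 0 < x < 1."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    h = x / steps
    total = 0.0
    for i in range(steps):
        t = (i + 0.5) * h
        total += math.exp(log_norm + (a - 1) * math.log(t) + (b - 1) * math.log1p(-t))
    return min(1.0, total * h)


def success_rate(p_true, goal, n, x_vp, n_vp, max_effective_vp,
                 threshold=0.975, reps=1000, seed=1):
    """Monte Carlo estimate of P(declare success) when the true fracture rate is p_true."""
    rng = random.Random(seed)
    successes = 0
    for _ in range(reps):
        x = sum(rng.random() < p_true for _ in range(n))  # simulated trial fractures
        alpha = borrowing_weight(x, n, x_vp, n_vp, max_effective_vp)
        a = 1.0 + alpha * x_vp + x  # power-prior posterior parameters
        b = 1.0 + alpha * (n_vp - x_vp) + (n - x)
        if beta_cdf(goal, a, b) >= threshold:
            successes += 1
    return successes / reps
```

Comparing, for instance, `success_rate(0.05, 0.05, 300, 200, 10_000, 0)` (no borrowing) with `success_rate(0.05, 0.05, 300, 200, 10_000, 200)` (an optimistic VP model) shows how borrowing from a misspecified model can inflate the type I error, which is why the cap and the agreement rule matter.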

Lessons learned
• Challenging for regulators to review
  ◦ Requires collaboration between clinicians, statisticians, and engineers at FDA
  ◦ Multiple face-to-face meetings may be required during the review process because of the added complexity
  ◦ Extensive interaction with the sponsor is needed to identify gaps in understanding
• The mock submission gave FDA reviewers an advance opportunity to understand potential regulatory issues related to the use of simulations for regulatory approval
  ◦ Enables development of regulatory science

Summary
• The mock Pre-Sub and the interaction with FDA are available online through the MDIC site: http://mdic.org/computer-modeling/virtual-patients/
• Promotes development of industry proposals leveraging these methods
• Fosters a collaborative approach to developing methods for improving the efficiency of medical device clinical trials
• Benefits the broader ecosystem through publications
• Vehicle for creating innovation in regulatory science

References
1. Visit MDIC.org for more information on the MDIC computational modeling & simulation project.
2. T. Haddad, A. Himes, and M. Campbell, "Fracture prediction of cardiac lead medical devices using Bayesian networks," Reliability Engineering and System Safety, vol. 123, pp. 145-157, 2014.
3. T. Haddad et al., "Incorporation of stochastic engineering models as prior information in Bayesian medical device trials," to appear in Journal of Biopharmaceutical Statistics.

Acknowledgements
• MDIC team: Tarek Haddad, Adam Himes, Laura Thompson, Telba Irony, Valentin Parvu
• FDA team: Sherry Yan, Xuefeng Li, Jack Zhou, Robert Kazmierski
This collaborative work was done under the Medical Device Innovation Consortium (MDIC) public-private partnership. For more information, please visit http://mdic.org
Thanks for attending!
