Lecture 9 Probabilistic Retrieval Principles of Information Retrieval

Lecture 9: Probabilistic Retrieval
Principles of Information Retrieval
Prof. Ray Larson
University of California, Berkeley, School of Information
IS 240 – Spring 2011, 2011.02.16

Mini-TREC
• Need to make groups
  – Today
  – Give me a note with group members (names and login names)
• Systems
  – SMART (not recommended…)
    • ftp://ftp.cs.cornell.edu/pub/smart
  – MG (from down under - ANZ)
    • http://www.mds.rmit.edu.au/mg/welcome.html
  – Cheshire II & 3
    • II = ftp://cheshire.berkeley.edu/pub/cheshire & http://cheshire.berkeley.edu
    • 3 = http://cheshire3.sourceforge.org
  – IRF (new Java-based IR framework from NIST)
    • http://www.itl.nist.gov/iaui/894.02/projects/irf.html
  – Terrier (from the IR research group at U. of Glasgow)
    • http://terrier.org/
  – Lemur (from J. Callan’s group at CMU)
    • http://www-2.cs.cmu.edu/~lemur
  – Lucene/SOLR (Apache Java-based text search engine)
    • http://lucene.apache.org/
  – Galago (also Java-based)
    • http://www.galagosearch.org
  – Others? (See http://searchtools.com)

Mini-TREC
• Proposed Schedule
  – February 9: Database and previous queries
  – March 2: Report on system acquisition and setup
  – March 9: New queries for testing
  – April 18: Results due
  – April 20: Results and system rankings
  – April 27: Group reports and discussion

Today
• Review
  – Clustering and Automatic Classification
• Probabilistic Models
  – Probabilistic Indexing (Model 1)
  – Probabilistic Retrieval (Model 2)
  – Unified Model (Model 3)
  – Model 0 and real-world IR
  – Regression Models
  – The “Okapi Weighting Formula”


Review: IR Models
• Set Theoretic Models
  – Boolean
  – Fuzzy
  – Extended Boolean
• Vector Models (Algebraic)
• Probabilistic Models (probabilistic)

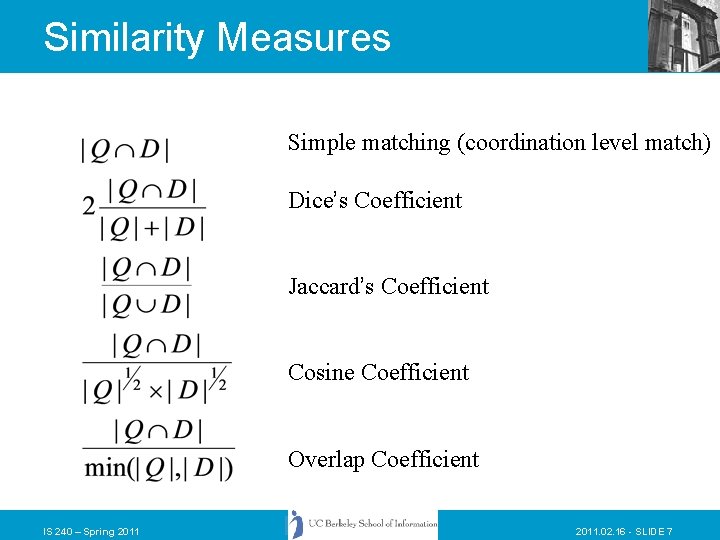

Similarity Measures
• Simple matching (coordination level match)
• Dice’s Coefficient
• Jaccard’s Coefficient
• Cosine Coefficient
• Overlap Coefficient
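The formulas for these measures were on the slide image. As a minimal sketch, assuming documents and queries are represented as plain sets of terms (the binary case), the five coefficients can be computed as below. The function name and the example term sets are illustrative, not from the lecture.

```python
import math

def similarity_measures(x: set, y: set) -> dict:
    """Set-based (binary) similarity coefficients for two non-empty term sets."""
    common = len(x & y)  # number of shared terms
    return {
        "simple_matching": common,                       # coordination level match
        "dice": 2 * common / (len(x) + len(y)),
        "jaccard": common / len(x | y),
        "cosine": common / math.sqrt(len(x) * len(y)),
        "overlap": common / min(len(x), len(y)),
    }

# Hypothetical query and document term sets
query = {"probabilistic", "retrieval", "model"}
doc = {"probabilistic", "model", "ranking", "relevance"}
scores = similarity_measures(query, doc)
for name, value in scores.items():
    print(f"{name}: {value:.3f}")
```

All five share the same numerator (the overlap count) and differ only in how they normalize for the sizes of the two sets.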

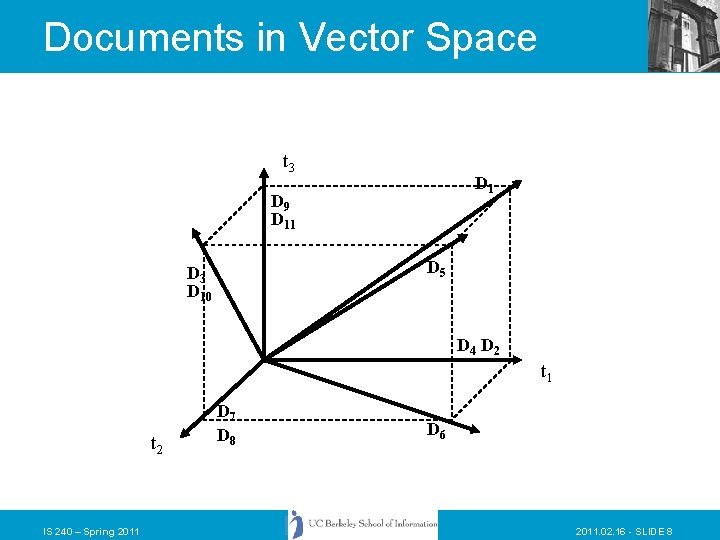

Documents in Vector Space (figure: documents D1 to D11 plotted against term axes t1, t2, t3)

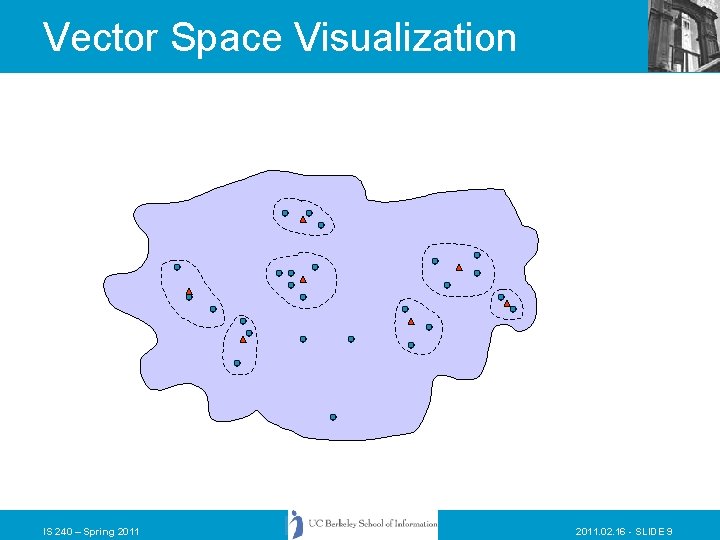

Vector Space Visualization

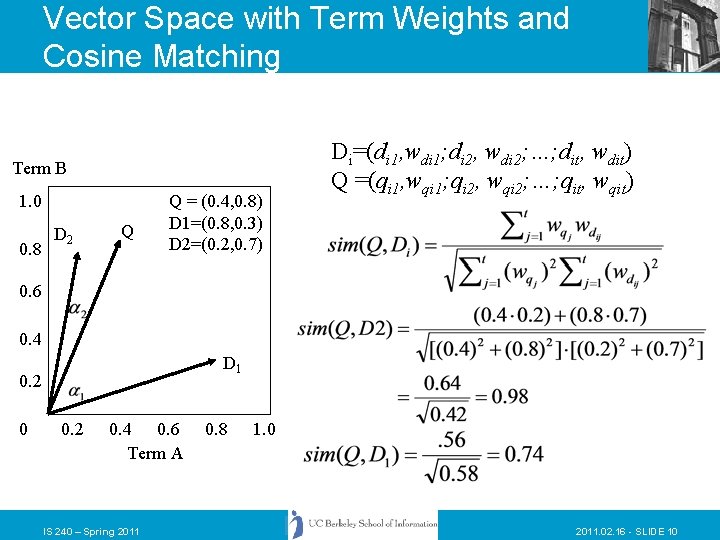

Vector Space with Term Weights and Cosine Matching
Di = (di1, wdi1; di2, wdi2; …; dit, wdit)
Q = (qi1, wqi1; qi2, wqi2; …; qit, wqit)
Q = (0.4, 0.8)
D1 = (0.8, 0.3)
D2 = (0.2, 0.7)
(figure: Q, D1 and D2 plotted as vectors, Term A on the horizontal axis and Term B on the vertical axis, both from 0 to 1.0)
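To make the cosine matching concrete, here is a small sketch that scores the slide's Q, D1 and D2 vectors. The `cosine` helper is written for this example only.

```python
import math

def cosine(u, v):
    """Cosine of the angle between two equal-length weight vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

Q = (0.4, 0.8)    # (Term A weight, Term B weight)
D1 = (0.8, 0.3)
D2 = (0.2, 0.7)

cos_d1 = cosine(Q, D1)
cos_d2 = cosine(Q, D2)
print(f"cos(Q, D1) = {cos_d1:.2f}")  # about 0.73
print(f"cos(Q, D2) = {cos_d2:.2f}")  # about 0.98
```

D2 ranks above D1 because its direction is closer to the query's, even though D1 has the larger weight on Term A.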

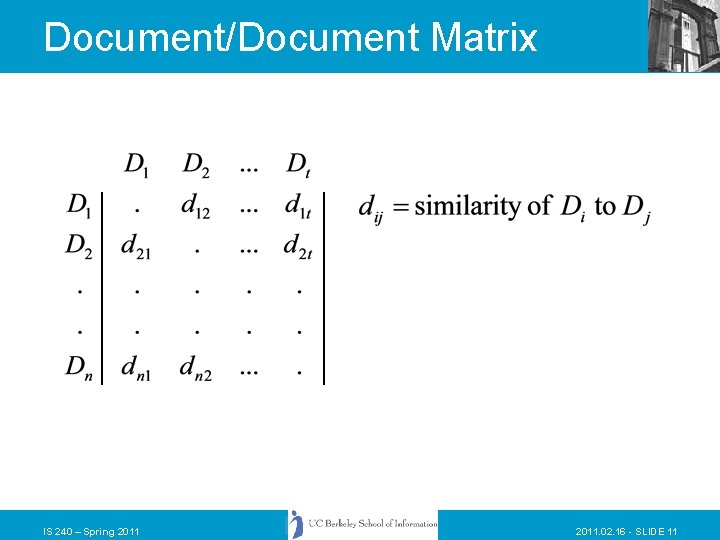

Document/Document Matrix

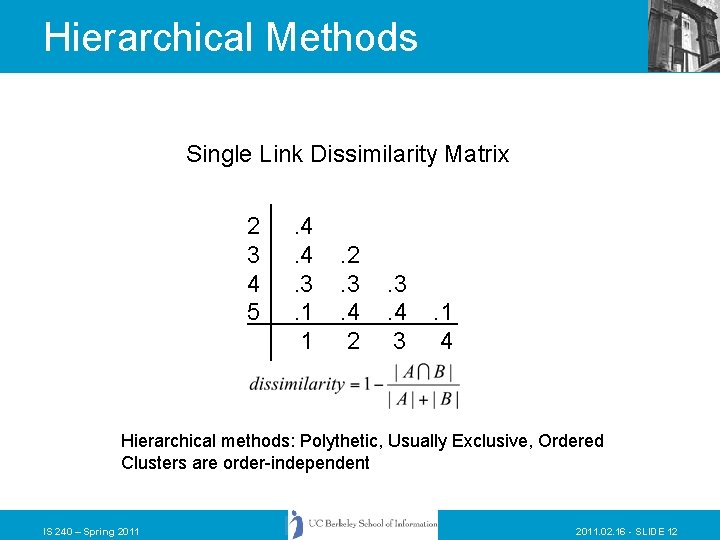

Hierarchical Methods
Single Link Dissimilarity Matrix

|   | 2  | 3  | 4  | 5  |
|---|----|----|----|----|
| 1 | .4 | .4 | .3 | .1 |
| 2 |    | .2 | .3 | .4 |
| 3 |    |    | .3 | .4 |
| 4 |    |    |    | .1 |

Hierarchical methods: polythetic, usually exclusive, ordered. Clusters are order-independent.

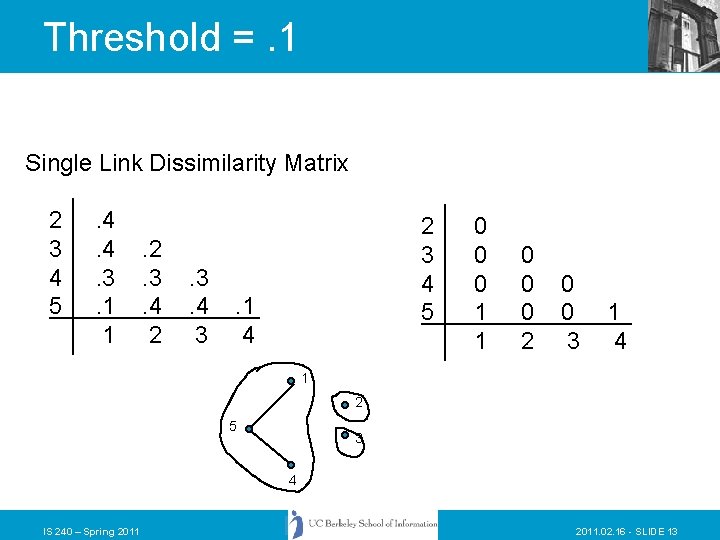

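Threshold = .1
Single Link Dissimilarity Matrix, thresholded (1 = dissimilarity at or below the threshold):

|   | 2 | 3 | 4 | 5 |
|---|---|---|---|---|
| 1 | 0 | 0 | 0 | 1 |
| 2 |   | 0 | 0 | 0 |
| 3 |   |   | 0 | 0 |
| 4 |   |   |   | 1 |

(figure: graph on nodes 1 to 5 with a link for each 1 in the matrix)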

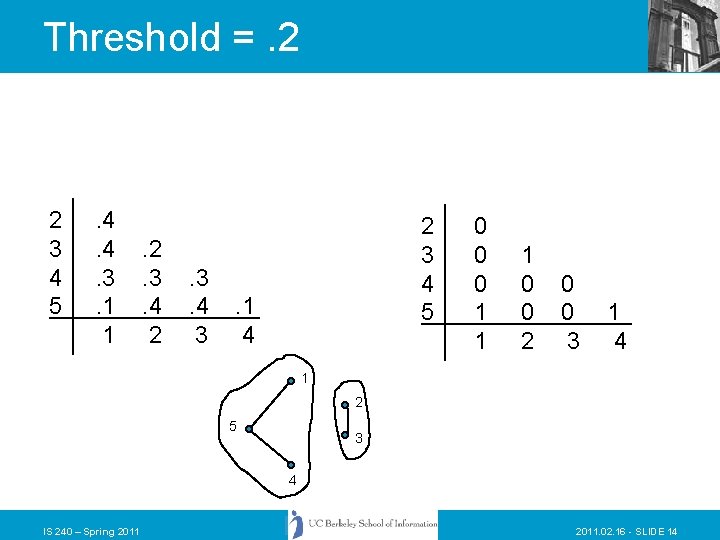

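Threshold = .2
Single Link Dissimilarity Matrix, thresholded (1 = dissimilarity at or below the threshold):

|   | 2 | 3 | 4 | 5 |
|---|---|---|---|---|
| 1 | 0 | 0 | 0 | 1 |
| 2 |   | 1 | 0 | 0 |
| 3 |   |   | 0 | 0 |
| 4 |   |   |   | 1 |

(figure: graph on nodes 1 to 5 with a link for each 1 in the matrix)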

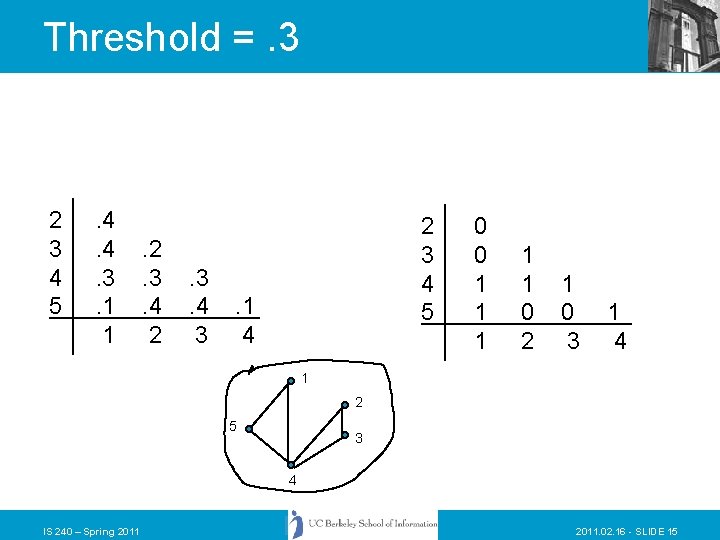

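Threshold = .3
Single Link Dissimilarity Matrix, thresholded (1 = dissimilarity at or below the threshold):

|   | 2 | 3 | 4 | 5 |
|---|---|---|---|---|
| 1 | 0 | 0 | 1 | 1 |
| 2 |   | 1 | 1 | 0 |
| 3 |   |   | 1 | 0 |
| 4 |   |   |   | 1 |

(figure: graph on nodes 1 to 5 with a link for each 1 in the matrix)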
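The threshold slides can be reproduced with a short sketch: link every pair whose dissimilarity is at or below the threshold, then read off the connected components as single-link clusters. The dissimilarity values are taken from the matrix on the single-link slides. The union-find helper and the function name are assumptions of this example, not part of the lecture.

```python
def single_link_clusters(dissim, items, threshold):
    """Connected components of the graph linking pairs with dissimilarity <= threshold."""
    parent = {i: i for i in items}

    def find(i):
        # Follow parent pointers to the component root, halving the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for (a, b), d in dissim.items():
        if d <= threshold + 1e-9:       # small tolerance for float comparison
            parent[find(a)] = find(b)   # merge the two components

    clusters = {}
    for i in items:
        clusters.setdefault(find(i), []).append(i)
    return sorted(sorted(c) for c in clusters.values())

# Pairwise dissimilarities for documents 1..5
dissim = {
    (1, 2): 0.4, (1, 3): 0.4, (1, 4): 0.3, (1, 5): 0.1,
    (2, 3): 0.2, (2, 4): 0.3, (2, 5): 0.4,
    (3, 4): 0.3, (3, 5): 0.4,
    (4, 5): 0.1,
}
items = [1, 2, 3, 4, 5]
for t in (0.1, 0.2, 0.3):
    print(t, single_link_clusters(dissim, items, t))
```

Raising the threshold only ever merges clusters, which is what gives single link its nested, hierarchical structure.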

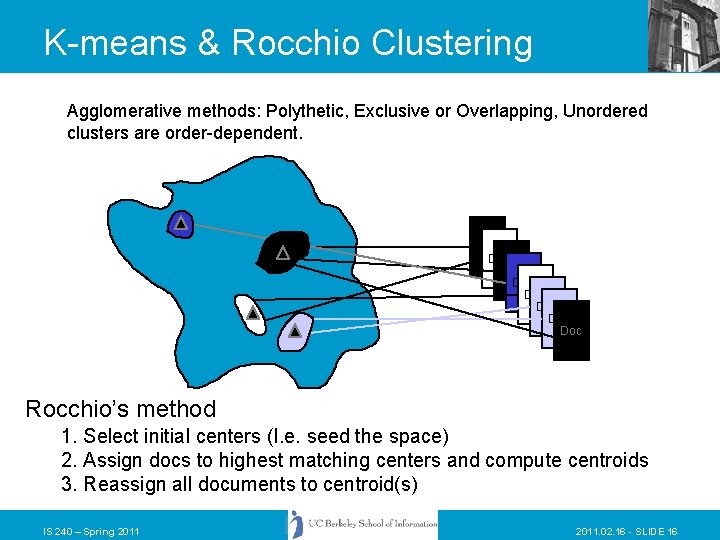

K-means & Rocchio Clustering
Agglomerative methods: polythetic, exclusive or overlapping, unordered. Clusters are order-dependent.
Rocchio’s method
1. Select initial centers (i.e., seed the space)
2. Assign docs to highest matching centers and compute centroids
3. Reassign all documents to centroid(s)
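The three steps above can be sketched as a simple seed-and-reassign loop. This is a k-means style illustration rather than Rocchio's exact procedure. It assumes documents are small numeric vectors and uses squared Euclidean distance as the matching function, where the slide's "highest matching" would more typically be a similarity such as cosine. The names `cluster`, `docs` and `seeds` are hypothetical.

```python
def sq_dist(p, q):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def centroid(points):
    """Component-wise mean of a non-empty list of vectors."""
    return tuple(sum(coords) / len(points) for coords in zip(*points))

def cluster(docs, seeds, passes=5):
    centers = list(seeds)                      # step 1: seed the space
    groups = []
    for _ in range(passes):
        groups = [[] for _ in centers]
        for doc in docs:                       # steps 2 and 3: (re)assign to the best center
            best = min(range(len(centers)), key=lambda i: sq_dist(doc, centers[i]))
            groups[best].append(doc)
        # Recompute centroids; an empty group keeps its previous center.
        centers = [centroid(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups

docs = [(0.1, 0.9), (0.2, 0.8), (0.9, 0.1), (0.8, 0.2)]
seeds = [(0.0, 1.0), (1.0, 0.0)]
centers, groups = cluster(docs, seeds)
print(centers)
print(groups)
```

Because the result depends on the seeds and on the order of assignment, different runs can give different clusters, which is the order-dependence noted on the slide.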

Clustering
• Advantages:
  – See some main themes
• Disadvantage:
  – Many ways documents could group together are hidden
• Thinking point: what is the relationship to classification systems and facets?

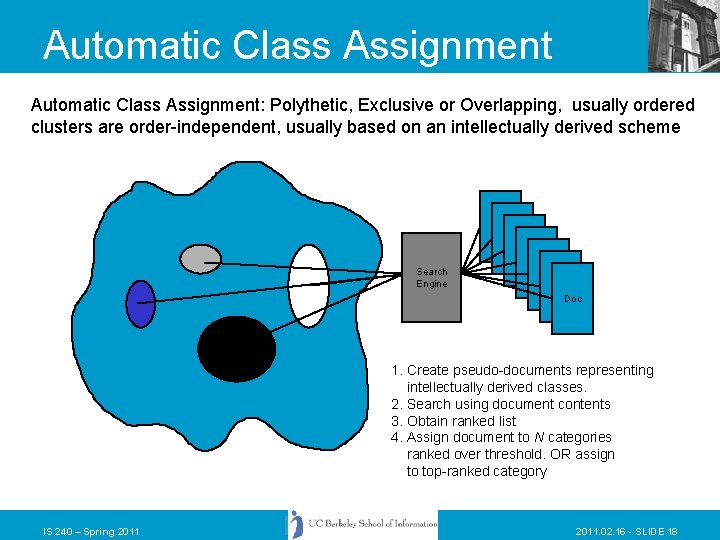

Automatic Class Assignment
Automatic class assignment: polythetic, exclusive or overlapping, usually ordered. Clusters are order-independent and usually based on an intellectually derived scheme.
1. Create pseudo-documents representing intellectually derived classes.
2. Search using document contents.
3. Obtain ranked list.
4. Assign document to the N categories ranked over threshold, OR assign to the top-ranked category.
(figure: documents passed through a search engine)

Automatic Categorization in Cheshire II
• Cheshire supports a method we call “classification clustering” that relies on having a set of records that have been indexed using some controlled vocabulary.
• Classification clustering has the following steps…

Start with a collection of documents.

Classify and index with controlled vocabulary. Ideally, use a database already indexed. (figure: Index)

Problem: Controlled vocabularies can be difficult for people to use.
(figure: index entry “pass mtr veh spark ign eng”)

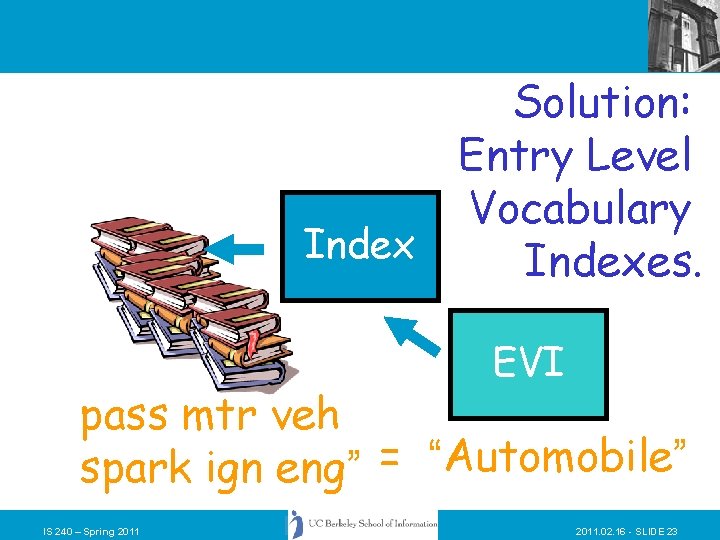

Solution: Entry Level Vocabulary Indexes (EVI).
“pass mtr veh spark ign eng” = “Automobile”

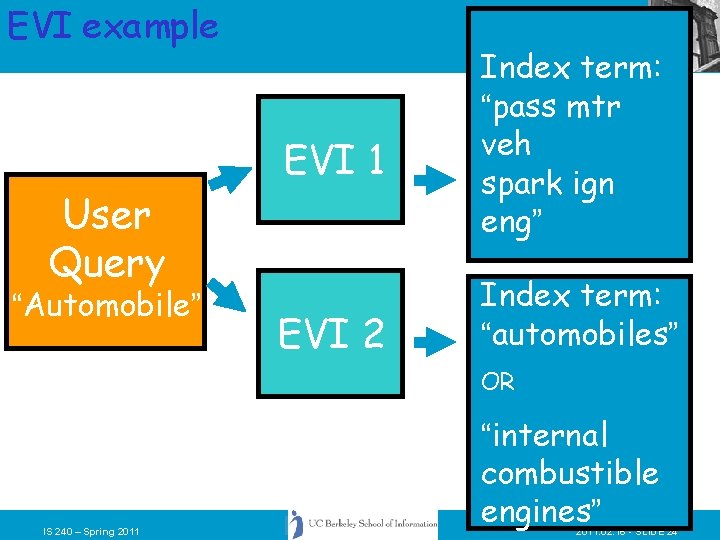

EVI example
• User query: “Automobile”
• EVI 1 gives index term: “pass mtr veh spark ign eng”
• EVI 2 gives index term: “automobiles” OR “internal combustion engines”

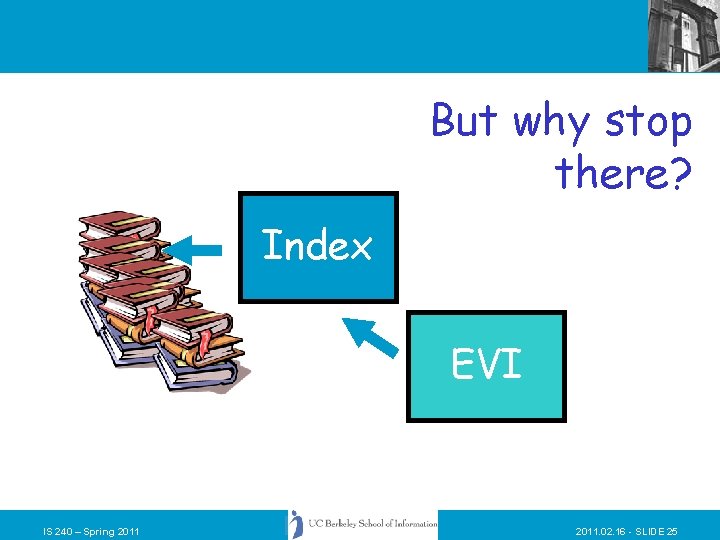

But why stop there? (figure: Index and EVI)

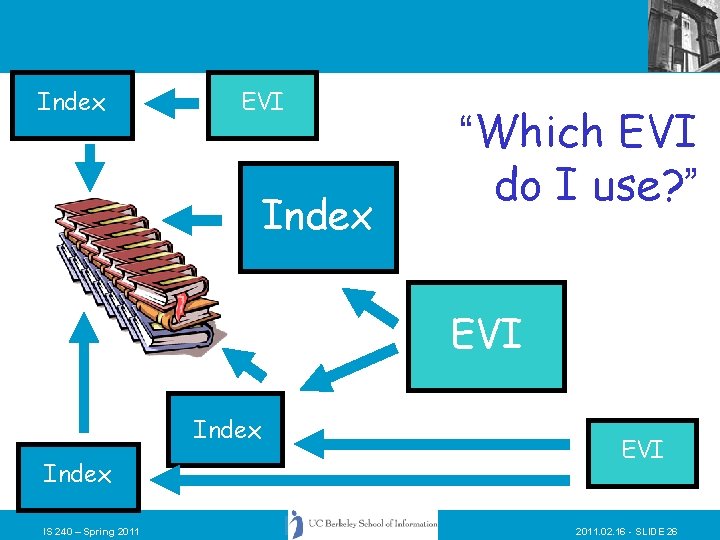

“Which EVI do I use?” (figure: several indexes, each with its own EVI)

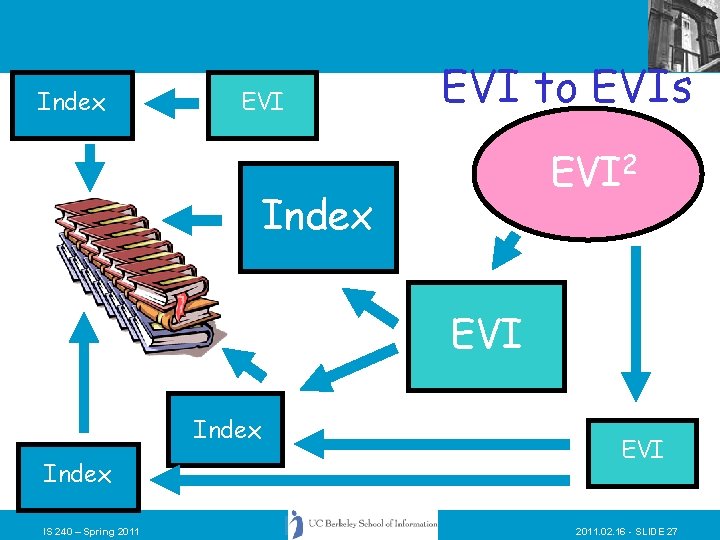

EVI to EVIs (figure: “EVI 2” placed over several indexes, each with its own EVI)

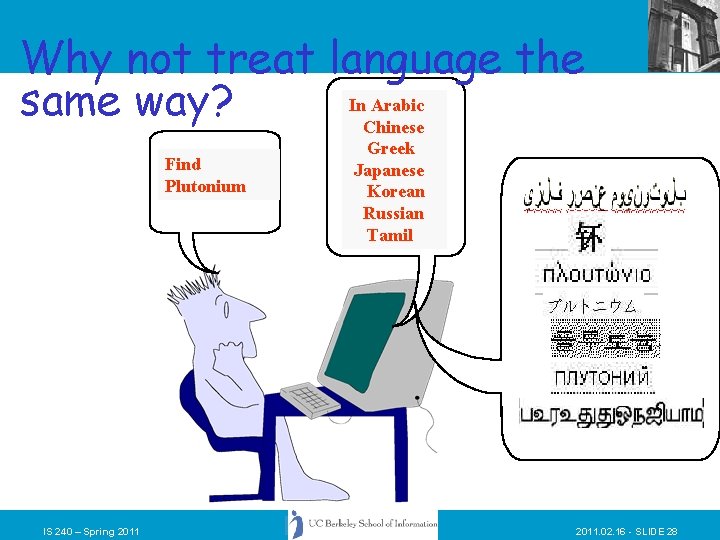

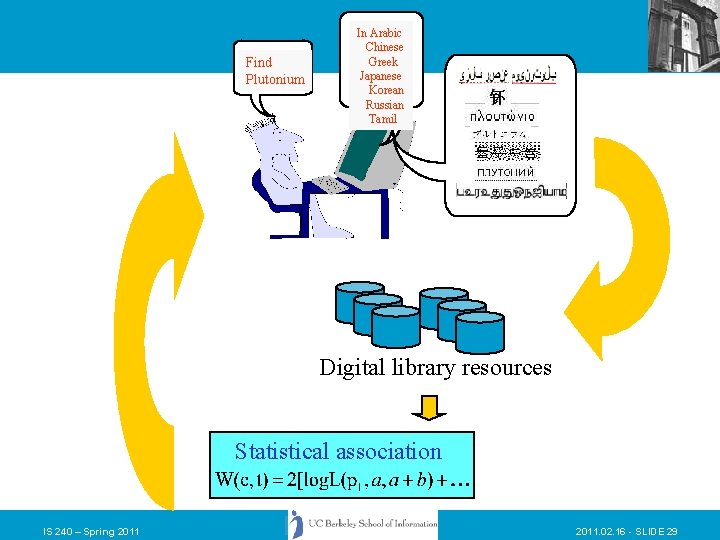

Why not treat language the same way? (figure: “Find Plutonium” in Arabic, Chinese, Greek, Japanese, Korean, Russian, Tamil)

“Find Plutonium” in Arabic, Chinese, Greek, Japanese, Korean, Russian, Tamil (figure: digital library resources linked to the query by statistical association)

Cheshire II - Two-Stage Retrieval
• Using the LC Classification System
  – Pseudo-document created for each LC class containing terms derived from “content-rich” portions of documents in that class (e.g., subject headings, titles, etc.)
  – Permits searching by any term in the class
  – Ranked probabilistic retrieval techniques attempt to present the “Best Matches” to a query first.
  – User selects classes to feed back for the “second stage” search of documents.
• Can be used with any classified/indexed collection.

Cheshire EVI Demo

Problems with Vector Space
• There is no real theoretical basis for the assumption of a term space
  – it is more for visualization than having any real basis
  – most similarity measures work about the same regardless of model
• Terms are not really orthogonal dimensions
  – Terms are not independent of all other terms

Today
• Review
  – Clustering and Automatic Classification
• Probabilistic Models
  – Probabilistic Indexing (Model 1)
  – Probabilistic Retrieval (Model 2)
  – Unified Model (Model 3)
  – Model 0 and real-world IR
  – Regression Models
  – The “Okapi Weighting Formula”

Probabilistic Models
• Rigorous formal model attempts to predict the probability that a given document will be relevant to a given query
• Ranks retrieved documents according to this probability of relevance (Probability Ranking Principle)
• Relies on accurate estimates of probabilities

Probability Ranking Principle
• If a reference retrieval system’s response to each request is a ranking of the documents in the collections in the order of decreasing probability of usefulness to the user who submitted the request, where the probabilities are estimated as accurately as possible on the basis of whatever data has been made available to the system for this purpose, then the overall effectiveness of the system to its users will be the best that is obtainable on the basis of that data.
Stephen E. Robertson, J. Documentation 1977

Model 1 – Maron and Kuhns
• Concerned with estimating probabilities of relevance at the point of indexing:
  – If a patron came with a request using term ti, what is the probability that she/he would be satisfied with document Dj?

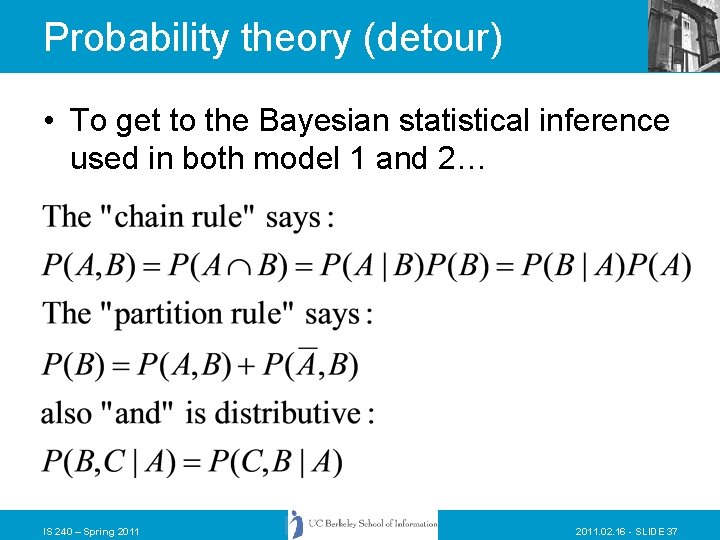

Probability theory (detour)
• To get to the Bayesian statistical inference used in both Model 1 and Model 2…

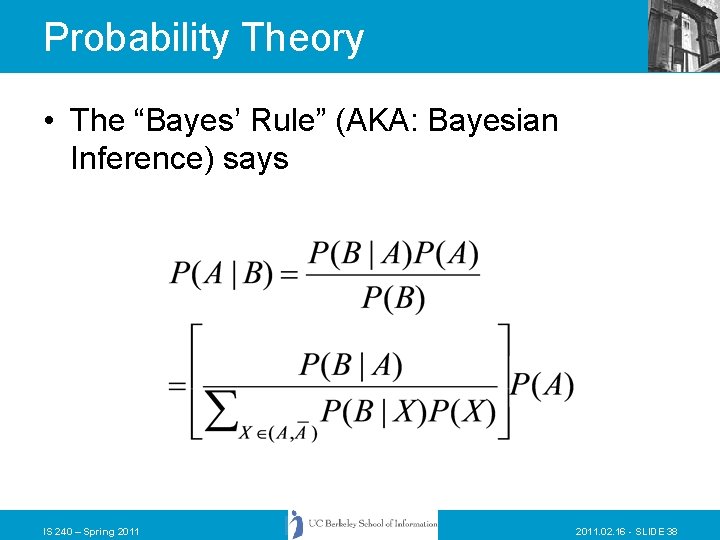

Probability Theory
• The “Bayes’ Rule” (AKA: Bayesian Inference) says

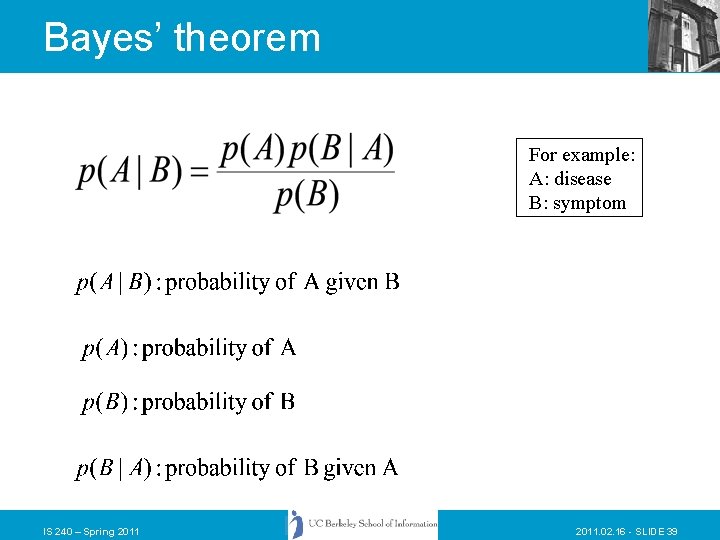

Bayes’ theorem
For example:
A: disease
B: symptom

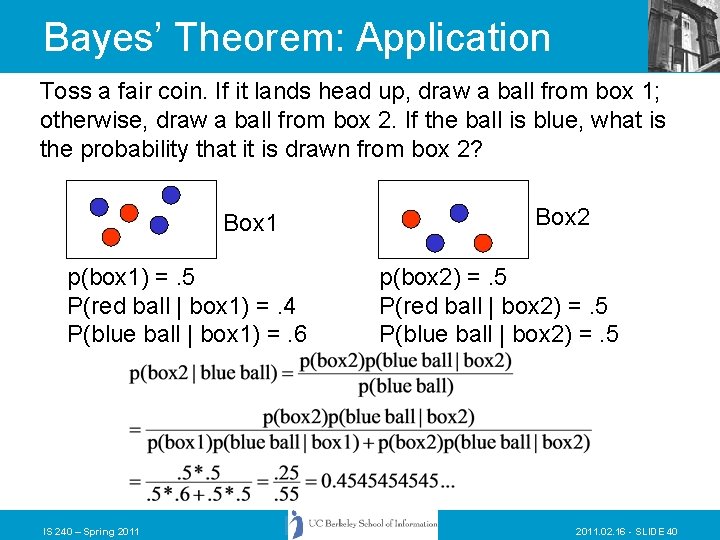

Bayes’ Theorem: Application
Toss a fair coin. If it lands head up, draw a ball from box 1; otherwise, draw a ball from box 2. If the ball is blue, what is the probability that it is drawn from box 2?
Box 1: p(box 1) = .5, P(red ball | box 1) = .4, P(blue ball | box 1) = .6
Box 2: p(box 2) = .5, P(red ball | box 2) = .5, P(blue ball | box 2) = .5
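As a worked version of the question above, Bayes' rule in its standard form, P(A|B) = P(B|A) P(A) / P(B), can be applied with the denominator expanded over the two boxes. The numbers are the ones given on the slide; the layout of the derivation is this sketch's own.

```latex
\begin{align*}
P(\text{box 2} \mid \text{blue})
  &= \frac{P(\text{blue} \mid \text{box 2})\, P(\text{box 2})}
          {P(\text{blue} \mid \text{box 1})\, P(\text{box 1})
           + P(\text{blue} \mid \text{box 2})\, P(\text{box 2})} \\
  &= \frac{0.5 \times 0.5}{0.6 \times 0.5 + 0.5 \times 0.5}
   = \frac{0.25}{0.55}
   = \frac{5}{11} \approx 0.45
\end{align*}
```

Seeing a blue ball lowers the probability of box 2 slightly below its prior of .5, because blue is more likely under box 1.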

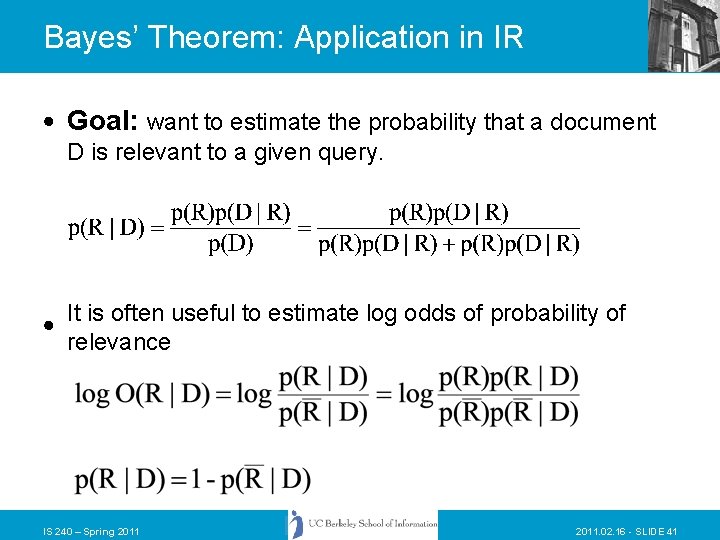

Bayes’ Theorem: Application in IR
Goal: we want to estimate the probability that a document D is relevant to a given query.
It is often useful to estimate the log odds of relevance instead.
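The slide's formulas were in an image. A standard way to write the step from Bayes' rule to log odds is sketched below. The symbol \bar{R} for non-relevance and the odds notation O(·) are assumptions of this sketch and may differ from the slide's notation.

```latex
\begin{align*}
P(R \mid D) &= \frac{P(D \mid R)\, P(R)}{P(D)}, \qquad
P(\bar{R} \mid D) = \frac{P(D \mid \bar{R})\, P(\bar{R})}{P(D)} \\[4pt]
\log O(R \mid D)
  &= \log \frac{P(R \mid D)}{P(\bar{R} \mid D)}
   = \log \frac{P(D \mid R)}{P(D \mid \bar{R})}
   + \log \frac{P(R)}{P(\bar{R})}
\end{align*}
```

Taking the ratio cancels P(D), and the prior term is the same for every document under a given query, so ranking by log odds only requires the likelihood ratio.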

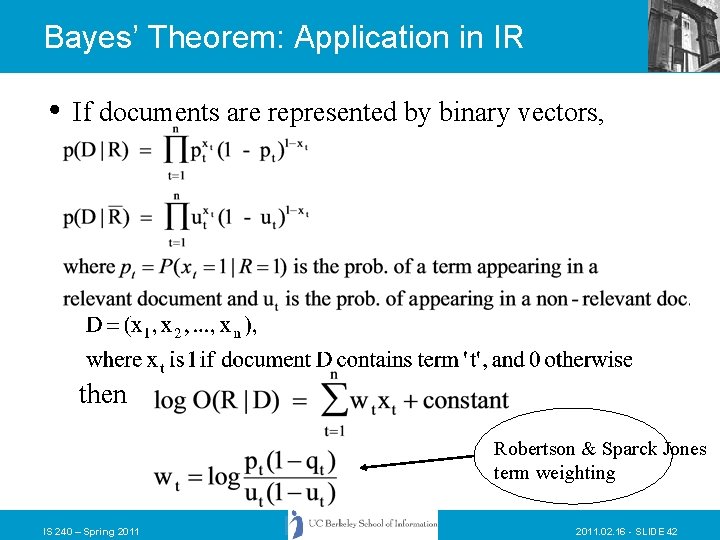

Bayes’ Theorem: Application in IR
If documents are represented by binary vectors, then:
(Robertson & Sparck Jones term weighting)

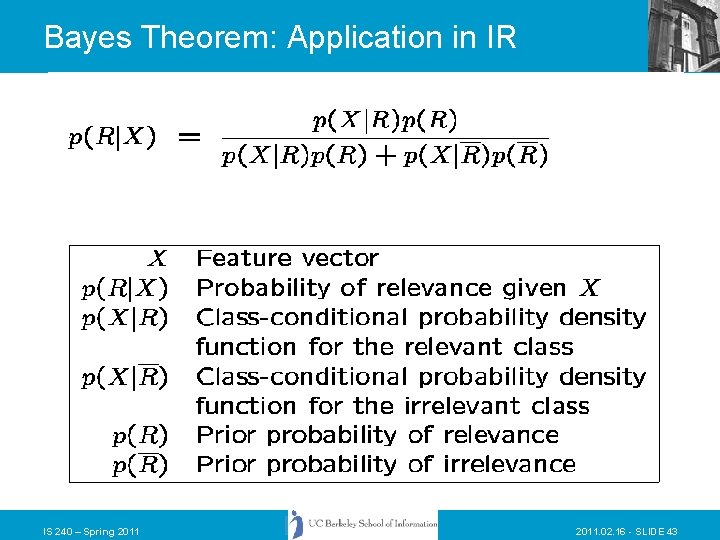

Bayes’ Theorem: Application in IR

Model 1
• A patron submits a query (call it Q) consisting of some specification of her/his information need. Different patrons submitting the same stated query may differ as to whether or not they judge a specific document to be relevant. The function of the retrieval system is to compute for each individual document the probability that it will be judged relevant by a patron who has submitted query Q.
Robertson, Maron & Cooper, 1982

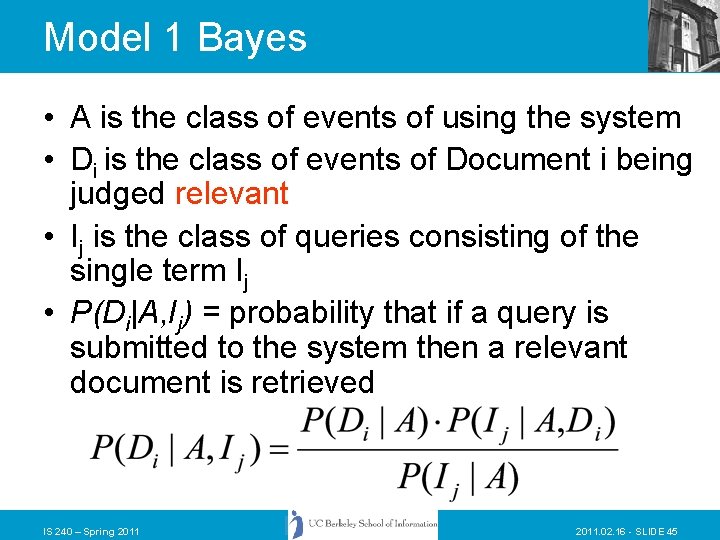

Model 1 Bayes
• A is the class of events of using the system
• Di is the class of events of Document i being judged relevant
• Ij is the class of queries consisting of the single term Ij
• P(Di|A, Ij) = probability that if a query is submitted to the system then a relevant document is retrieved

Model 2
• Documents have many different properties; some documents have all the properties that the patron asked for, and other documents have only some or none of the properties. If the inquiring patron were to examine all of the documents in the collection she/he might find that some having all the sought after properties were relevant, but others (with the same properties) were not relevant. And conversely, he/she might find that some of the documents having none (or only a few) of the sought after properties were relevant, others not. The function of a document retrieval system is to compute the probability that a document is relevant, given that it has one (or a set) of specified properties.
Robertson, Maron & Cooper, 1982

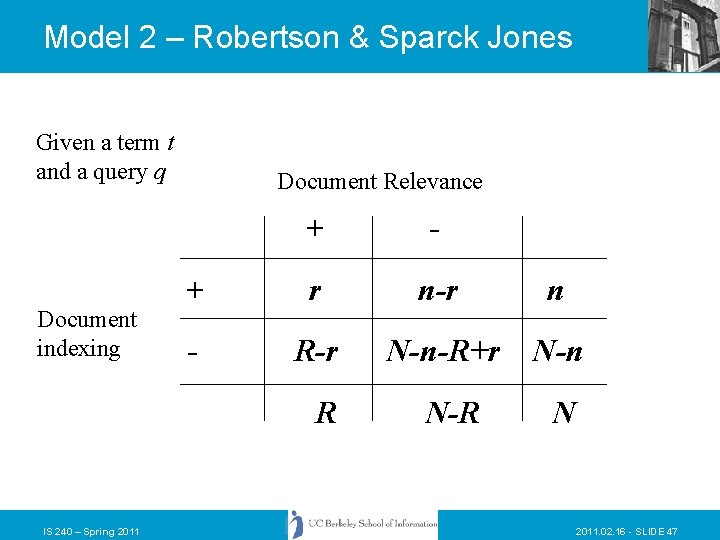

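Model 2 – Robertson & Sparck Jones
Given a term t and a query q:

| Document indexing | Relevant (+) | Not relevant (-) | Total |
|-------------------|--------------|------------------|-------|
| Indexed (+)       | r            | n-r              | n     |
| Not indexed (-)   | R-r          | N-n-R+r          | N-n   |
| Total             | R            | N-R              | N     |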

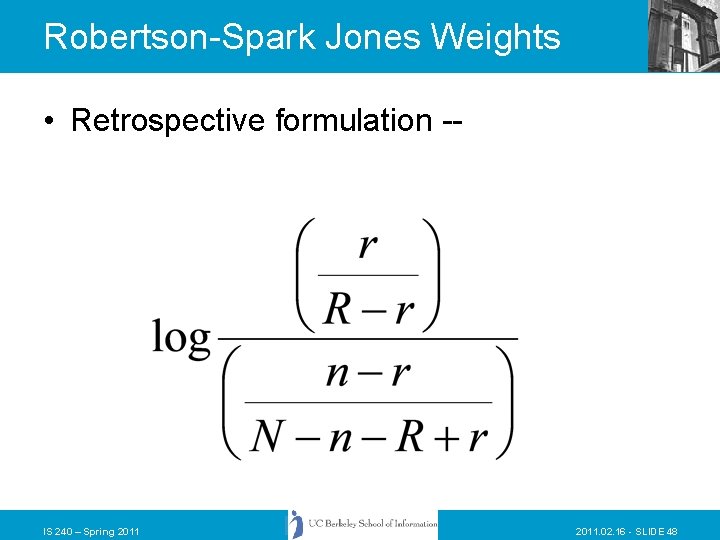

Robertson-Sparck Jones Weights
• Retrospective formulation

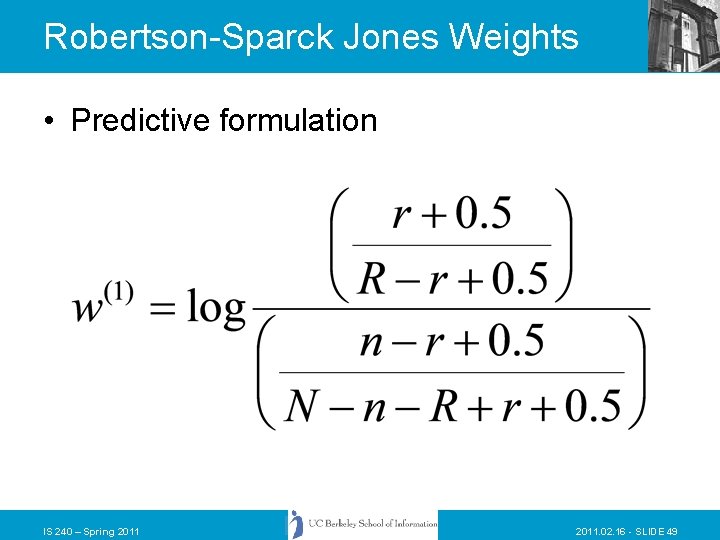

Robertson-Sparck Jones Weights
• Predictive formulation
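The weight formulas themselves were on slide images. The sketch below uses the commonly cited forms of the Robertson-Sparck Jones weight, expressed in the r, n, R, N counts from the contingency table: the retrospective form is the log of the odds ratio, and the predictive form adds 0.5 to each cell so that zero counts do not break the estimate. The function names and example counts are hypothetical.

```python
import math

def rsj_retrospective(r, n, R, N):
    """Log odds ratio from the contingency table (undefined if any cell is zero)."""
    return math.log((r / (R - r)) / ((n - r) / (N - n - R + r)))

def rsj_predictive(r, n, R, N):
    """Same ratio with 0.5 added to each cell, usable even with zero counts."""
    return math.log(
        ((r + 0.5) / (R - r + 0.5)) / ((n - r + 0.5) / (N - n - R + r + 0.5))
    )

# Hypothetical counts: 1000 documents, 10 relevant, term indexes 50 documents,
# 5 of which are relevant.
N, R, n, r = 1000, 10, 50, 5
print(f"retrospective: {rsj_retrospective(r, n, R, N):.3f}")
print(f"predictive:    {rsj_predictive(r, n, R, N):.3f}")

# With no relevance information (r = R = 0) the predictive weight reduces to an
# IDF-like quantity, log((N - n + 0.5) / (n + 0.5)).
print(f"no relevance info: {rsj_predictive(0, n, 0, N):.3f}")
```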

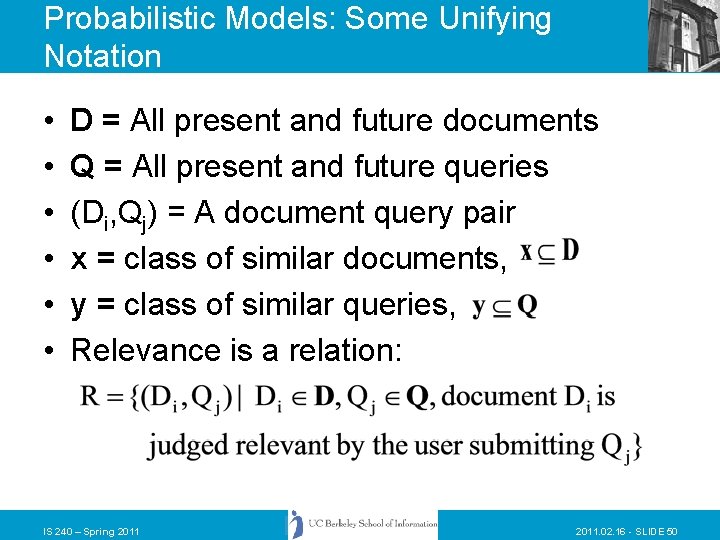

Probabilistic Models: Some Unifying Notation
• D = All present and future documents
• Q = All present and future queries
• (Di, Qj) = A document-query pair
• x = class of similar documents
• y = class of similar queries
• Relevance is a relation:

Probabilistic Models
• Model 1 -- Probabilistic Indexing, P(R|y, Di)
• Model 2 -- Probabilistic Querying, P(R|Qj, x)
• Model 3 -- Merged Model, P(R|Qj, Di)
• Model 0 -- P(R|y, x)
• Probabilities are estimated based on prior usage or relevance estimation

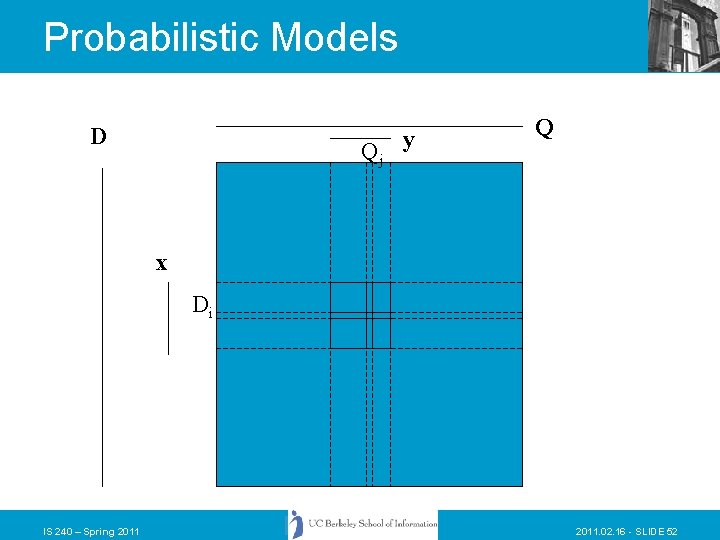

Probabilistic Models (figure: the document space D with document Di and class x, and the query space Q with query Qj and class y)

Logistic Regression
• Based on work by William Cooper, Fred Gey and Daniel Dabney
• Builds a regression model for relevance prediction based on a set of training data
• Uses less restrictive independence assumptions than Model 2
  – Linked Dependence

Dependence assumptions
• In Model 2 term independence was assumed
  – P(R|A, B) = P(R|A)P(R|B)
  – This is not very realistic as we have discussed before
• Cooper, Gey, and Dabney proposed linked dependence:
  – If two or more retrieval clues are statistically dependent in the set of all relevance-related query-document pairs then they are statistically dependent to a corresponding degree in the set of all nonrelevance-related pairs.
  – Thus dependency in the relevant and nonrelevant documents is linked

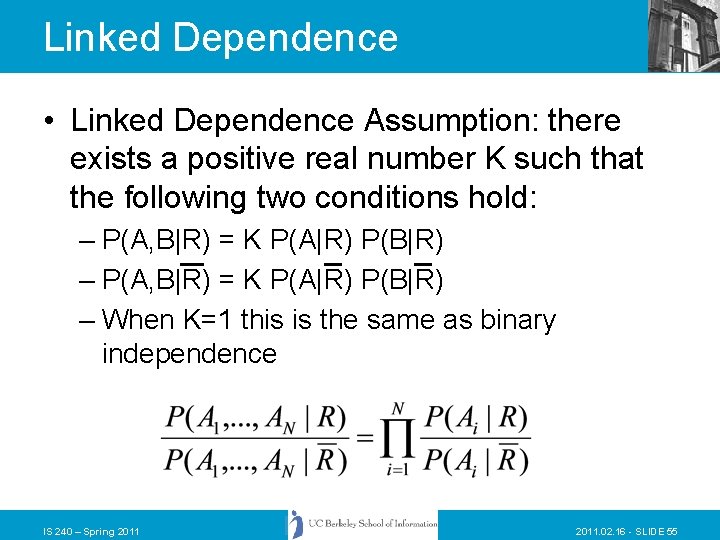

Linked Dependence
• Linked Dependence Assumption: there exists a positive real number K such that the following two conditions hold:
  – P(A, B|R) = K P(A|R) P(B|R)
  – P(A, B|NR) = K P(A|NR) P(B|NR), where NR denotes non-relevance
  – When K=1 this is the same as binary independence

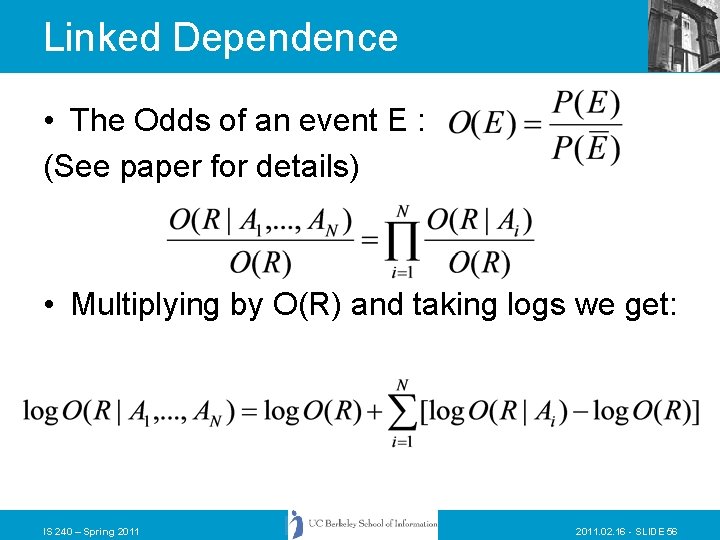

Linked Dependence
• The Odds of an event E: (See paper for details)
• Multiplying by O(R) and taking logs we get:
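The equations for this step were on slide images. The following is a sketch of how the derivation is usually written for two clues A and B. It assumes the linked dependence conditions hold with the same constant K for the relevant and non-relevant sets, writes \bar{R} for non-relevance, and uses the standard definition of odds. The slide's exact notation may differ.

```latex
\begin{align*}
O(E) &= \frac{P(E)}{1 - P(E)} \\[4pt]
\frac{P(A, B \mid R)}{P(A, B \mid \bar{R})}
  &= \frac{K\, P(A \mid R)\, P(B \mid R)}{K\, P(A \mid \bar{R})\, P(B \mid \bar{R})}
   = \frac{P(A \mid R)}{P(A \mid \bar{R})} \cdot \frac{P(B \mid R)}{P(B \mid \bar{R})} \\[4pt]
\frac{O(R \mid A, B)}{O(R)}
  &= \frac{O(R \mid A)}{O(R)} \cdot \frac{O(R \mid B)}{O(R)}
  \qquad \text{since } \frac{P(A \mid R)}{P(A \mid \bar{R})} = \frac{O(R \mid A)}{O(R)} \text{ by Bayes' rule} \\[4pt]
\log O(R \mid A, B)
  &= \log O(R) + \bigl[\log O(R \mid A) - \log O(R)\bigr]
               + \bigl[\log O(R \mid B) - \log O(R)\bigr]
\end{align*}
```

The constant K cancels in the ratio, which is why the weaker linked dependence assumption is enough to get an additive log odds form.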

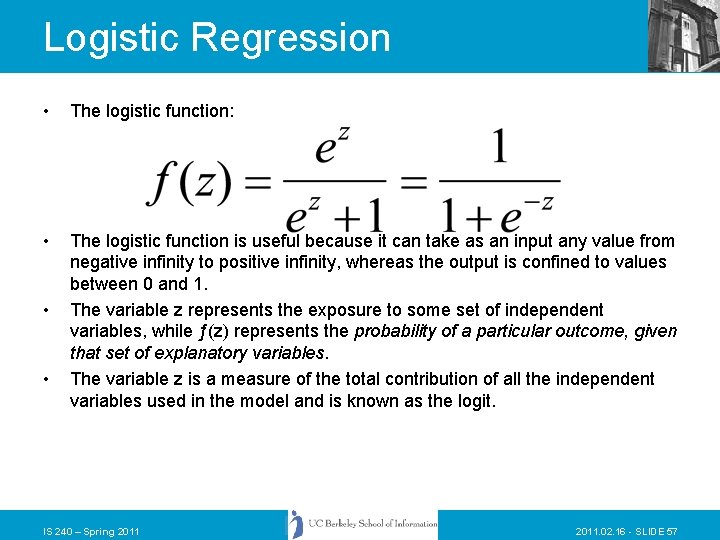

Logistic Regression
• The logistic function:
• The logistic function is useful because it can take as an input any value from negative infinity to positive infinity, whereas the output is confined to values between 0 and 1.
• The variable z represents the exposure to some set of independent variables, while ƒ(z) represents the probability of a particular outcome, given that set of explanatory variables.
• The variable z is a measure of the total contribution of all the independent variables used in the model and is known as the logit.
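The logistic function referred to above is ƒ(z) = 1 / (1 + e^(-z)). A minimal sketch follows, with z built as an intercept plus a weighted sum of attribute values. The coefficient and attribute values in the example are made up for illustration and are not the fitted values from the lecture or from any TREC run.

```python
import math

def logistic(z):
    """f(z) = 1 / (1 + e^-z), written to avoid overflow for large negative z."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def relevance_probability(intercept, coefficients, attributes):
    """Logit z = c0 + sum(c_i * X_i), mapped to a probability."""
    z = intercept + sum(c * x for c, x in zip(coefficients, attributes))
    return logistic(z)

# Hypothetical coefficients and attribute values
p = relevance_probability(-3.0, [0.8, 1.5], [2.0, 1.0])
print(f"estimated probability of relevance: {p:.3f}")
```

Since the logistic function is monotonic, ranking by z and ranking by ƒ(z) give the same order; the transform matters when an actual probability estimate is needed.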

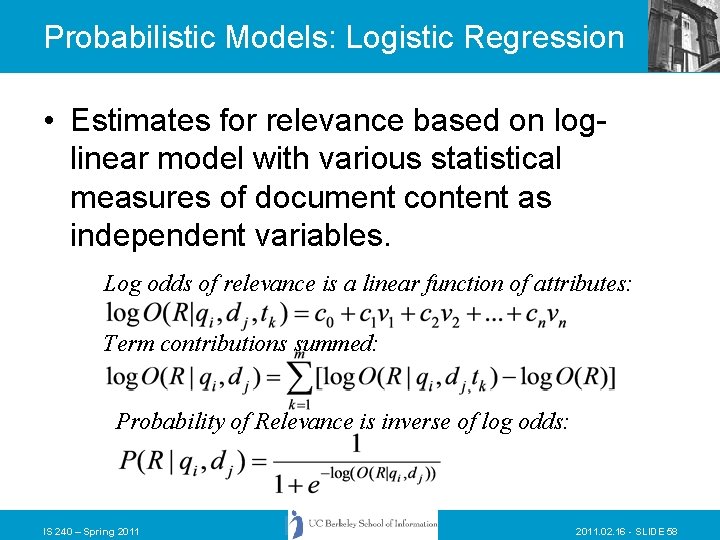

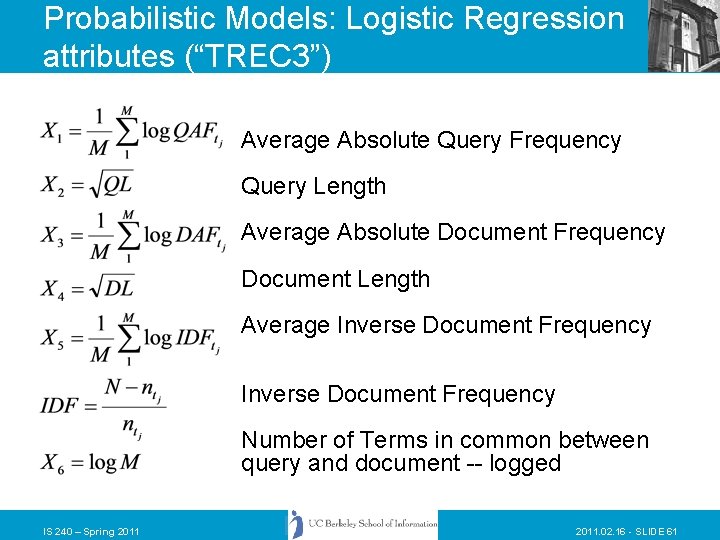

Probabilistic Models: Logistic Regression
• Estimates for relevance are based on a log-linear model with various statistical measures of document content as independent variables.
Log odds of relevance is a linear function of attributes:
Term contributions summed:
Probability of relevance is recovered by inverting the log odds (the logistic transform):

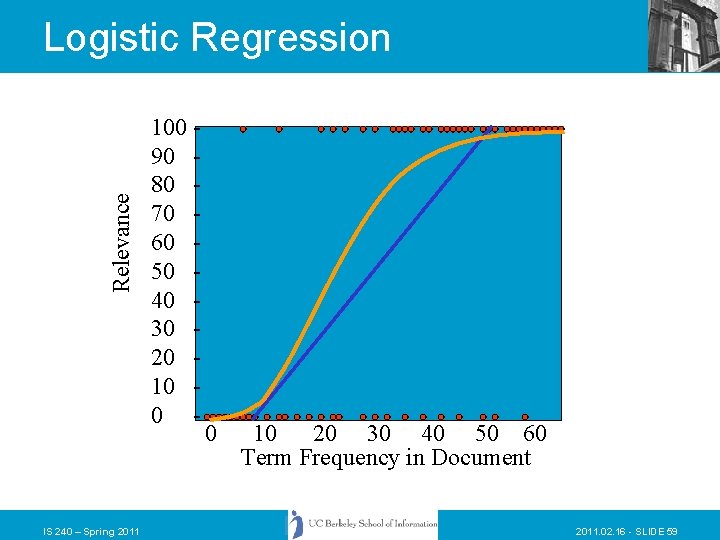

Logistic Regression (figure: relevance, on a scale of 0 to 100, plotted against term frequency in document, 0 to 60)

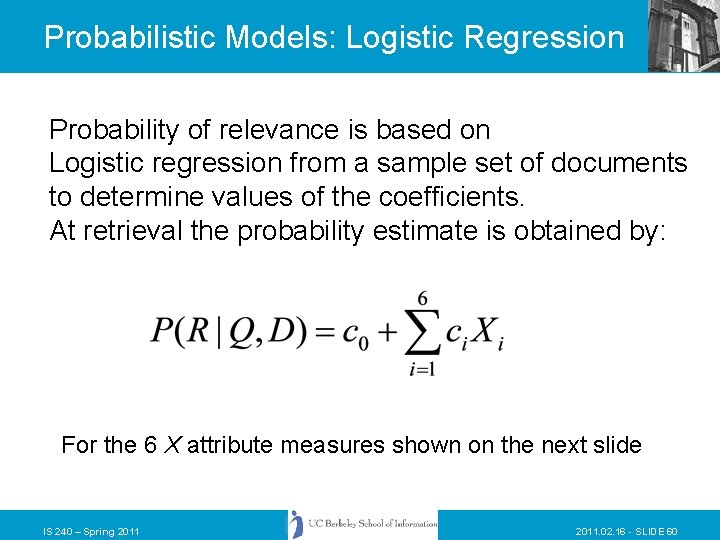

Probabilistic Models: Logistic Regression
Probability of relevance is based on logistic regression from a sample set of documents to determine values of the coefficients. At retrieval the probability estimate is obtained by:
For the 6 X attribute measures shown on the next slide

Probabilistic Models: Logistic Regression attributes (“TREC 3”)
• Average Absolute Query Frequency
• Query Length
• Average Absolute Document Frequency
• Document Length
• Average Inverse Document Frequency
• Number of Terms in common between query and document -- logged

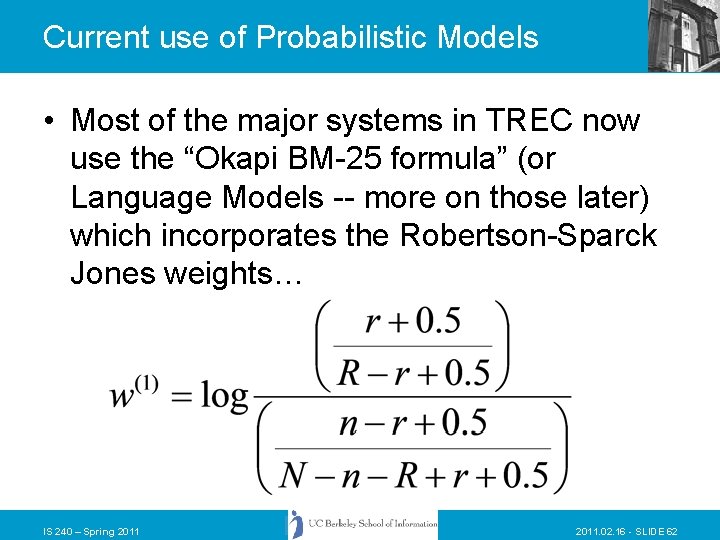

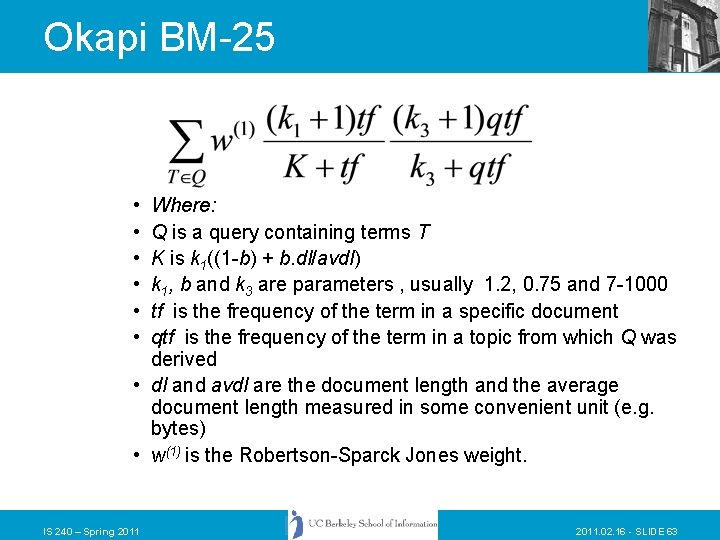

Current use of Probabilistic Models
• Most of the major systems in TREC now use the “Okapi BM-25 formula” (or Language Models -- more on those later) which incorporates the Robertson-Sparck Jones weights…

Okapi BM-25
Where:
• Q is a query containing terms T
• K is k1((1 - b) + b · dl/avdl)
• k1, b and k3 are parameters, usually 1.2, 0.75 and 7-1000
• tf is the frequency of the term in a specific document
• qtf is the frequency of the term in a topic from which Q was derived
• dl and avdl are the document length and the average document length measured in some convenient unit (e.g. bytes)
• w(1) is the Robertson-Sparck Jones weight.
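The BM-25 formula itself was on the slide image. The sketch below uses its commonly published form, a sum over query terms of w(1) × (k1+1)tf/(K+tf) × (k3+1)qtf/(k3+qtf), with the definitions listed above. It makes two assumptions that are not from the slide: document length is measured in tokens, and w(1) is the Robertson-Sparck Jones predictive weight with no relevance information, log((N - n + 0.5)/(n + 0.5)). Function and variable names are hypothetical.

```python
import math
from collections import Counter

def bm25_score(query_terms, doc_terms, doc_freq, num_docs, avdl,
               k1=1.2, b=0.75, k3=7.0):
    """Score one document against a query with the Okapi BM-25 sum."""
    tf = Counter(doc_terms)       # term frequencies in the document
    qtf = Counter(query_terms)    # term frequencies in the query
    dl = len(doc_terms)           # document length in tokens (assumed unit)
    K = k1 * ((1 - b) + b * dl / avdl)
    score = 0.0
    for term, q_count in qtf.items():
        if tf[term] == 0:
            continue              # terms absent from the document contribute nothing
        n = doc_freq.get(term, 0)
        # RSJ weight with no relevance information (r = R = 0)
        w1 = math.log((num_docs - n + 0.5) / (n + 0.5))
        doc_part = (k1 + 1) * tf[term] / (K + tf[term])
        query_part = (k3 + 1) * q_count / (k3 + q_count)
        score += w1 * doc_part * query_part
    return score

# Hypothetical collection statistics and documents
doc_freq = {"okapi": 2, "weighting": 5}
query = ["okapi", "weighting"]
doc_a = ["okapi", "weighting", "formula", "okapi"]
doc_b = ["vector", "space", "model", "cosine"]
score_a = bm25_score(query, doc_a, doc_freq, num_docs=100, avdl=4.0)
score_b = bm25_score(query, doc_b, doc_freq, num_docs=100, avdl=4.0)
print(f"doc_a: {score_a:.3f}, doc_b: {score_b:.3f}")
```

The tf factor saturates as tf grows, and K scales that saturation by document length relative to the average, so long documents are not rewarded simply for repeating a term.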

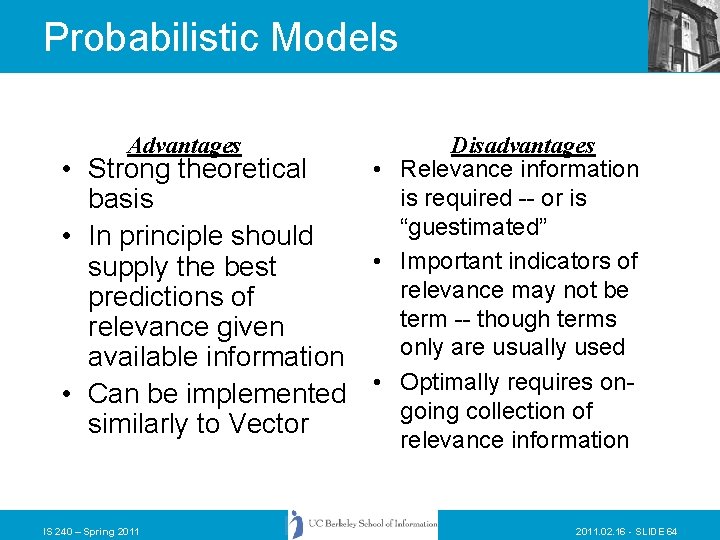

Probabilistic Models
Advantages
• Strong theoretical basis
• In principle should supply the best predictions of relevance given available information
• Can be implemented similarly to Vector
Disadvantages
• Relevance information is required -- or is “guesstimated”
• Important indicators of relevance may not be terms -- though only terms are usually used
• Optimally requires ongoing collection of relevance information

Vector and Probabilistic Models
• Support “natural language” queries
• Treat documents and queries the same
• Support relevance feedback searching
• Support ranked retrieval
• Differ primarily in theoretical basis and in how the ranking is calculated
  – Vector assumes relevance
  – Probabilistic relies on relevance judgments or estimates
