
Lecture 7: Scheduling
• preemptive vs. non-preemptive schedulers
• CPU bursts
• scheduling policy: goals and metrics
• basic scheduling algorithms:
  ◦ First Come First Served (FCFS)
  ◦ Round Robin (RR)
  ◦ Shortest Job First (SJF)
  ◦ Shortest Remaining Time (SRT)
  ◦ priority scheduling
  ◦ multilevel queue
  ◦ multilevel feedback queue
• Unix scheduling

Types of CPU Schedulers
• the CPU scheduler (also called the dispatcher or short-term scheduler) selects a process from the ready queue and lets it run on the CPU
• types:
  ◦ non-preemptive: invoked only when a process terminates or switches from running to blocked
    - simple to implement, but unsuitable for time-sharing systems
  ◦ preemptive: invoked in the cases above and also when a process is created, when a blocked process becomes ready, or when a timer interrupt occurs
    - more overhead, but keeps long processes from monopolizing the CPU
    - must not preempt the OS kernel while it is servicing a system call (e.g., reading a file); otherwise the OS may end up in an inconsistent state

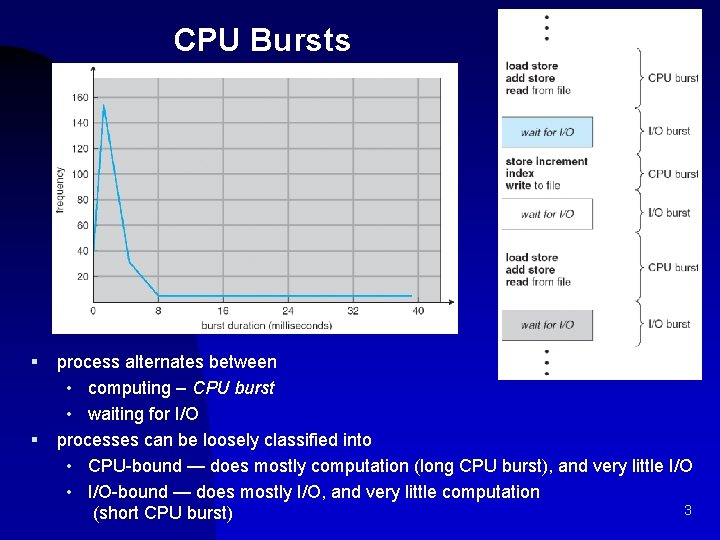

CPU Bursts
• a process alternates between
  ◦ computing: a CPU burst
  ◦ waiting for I/O
• processes can be loosely classified into
  ◦ CPU-bound: does mostly computation (long CPU bursts) and very little I/O
  ◦ I/O-bound: does mostly I/O and very little computation (short CPU bursts)

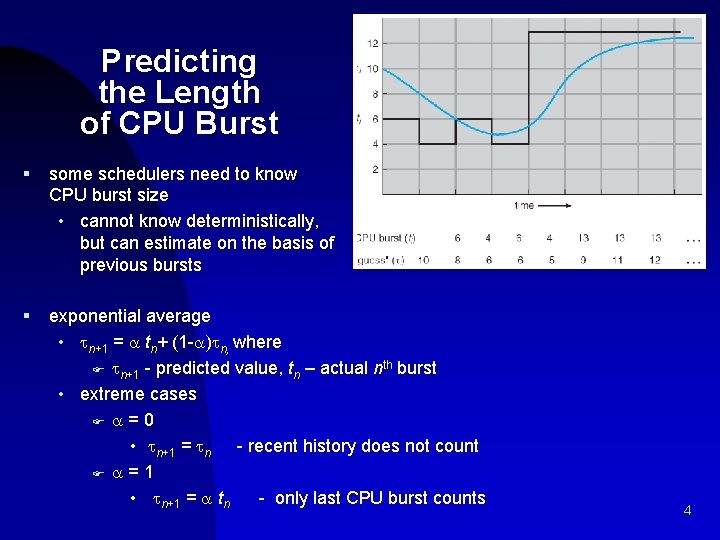

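Predicting the Length of a CPU Burst
• some schedulers need to know the length of the next CPU burst
  ◦ it cannot be known deterministically, but it can be estimated from previous bursts
• exponential average: $\tau_{n+1} = \alpha\, t_n + (1 - \alpha)\, \tau_n$, where
  ◦ $\tau_{n+1}$ is the predicted length of the next burst, $\tau_n$ is the previous prediction, and $t_n$ is the actual length of the $n$th burst
  ◦ $0 \le \alpha \le 1$ sets how much weight recent history gets relative to past history
• extreme cases
  ◦ $\alpha = 0$: $\tau_{n+1} = \tau_n$, so recent history does not count
  ◦ $\alpha = 1$: $\tau_{n+1} = t_n$, so only the last CPU burst counts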
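The recurrence is easy to run by hand, but a few lines of code make the role of $\alpha$ concrete. This is a minimal sketch; the function name `predict_bursts` and the initial guess `tau0` (needed before any burst has been observed) are hypothetical choices, not part of the slide.

```python
def predict_bursts(actual, alpha=0.5, tau0=10.0):
    """Exponential average: tau_{n+1} = alpha * t_n + (1 - alpha) * tau_n.

    actual: observed CPU burst lengths, oldest first
    alpha:  weight of the most recent burst (0 <= alpha <= 1)
    tau0:   hypothetical initial guess used before any burst is observed
    Returns [initial guess, prediction after burst 1, ..., prediction for the next burst].
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    predictions = [tau0]
    for t in actual:
        tau = predictions[-1]                          # previous prediction tau_n
        predictions.append(alpha * t + (1 - alpha) * tau)
    return predictions


# alpha = 0.5: each prediction is halfway between the last burst and the last guess
print(predict_bursts([6, 4, 6, 4, 13, 13, 13], alpha=0.5, tau0=10))
```

With `alpha=0` every prediction stays at `tau0`, and with `alpha=1` each prediction simply repeats the last observed burst, matching the two extreme cases above.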

Scheduling Policy
• system-oriented goals:
  ◦ maximize CPU utilization: keep the CPU as busy as possible; in particular, the CPU should not be idle while there are processes ready to run
  ◦ maximize throughput: the number of processes completed per unit time
  ◦ ensure fairness of CPU allocation
    - avoid starvation, where a process is never scheduled
  ◦ minimize the overhead incurred by scheduling
    - in time or CPU utilization (e.g., due to context switches or policy computation)
    - in space (e.g., data structures)
• user-oriented goals:
  ◦ minimize turnaround time: the interval from the time a process becomes ready until the time it completes
  ◦ minimize average and worst-case waiting time: the sum of the periods a process spends waiting in the ready queue
  ◦ minimize average and worst-case response time: the time from a process entering the ready queue until it is first scheduled

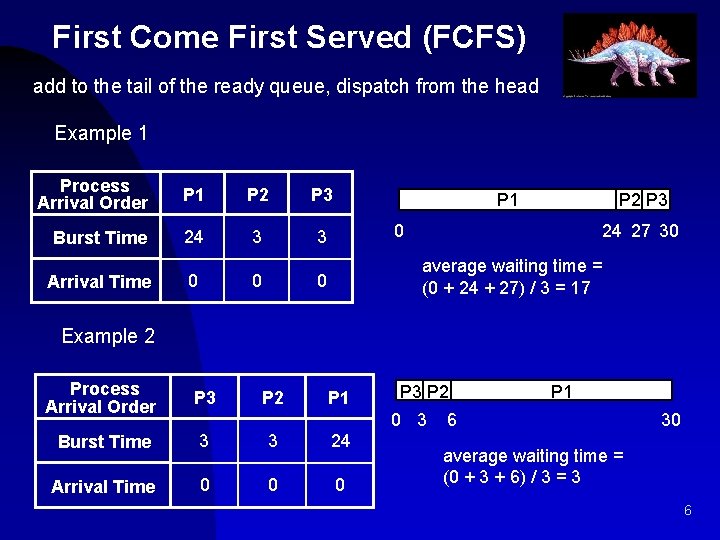
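The three user-oriented metrics can all be read off a Gantt chart. The sketch below is a hypothetical helper, `metrics`, that takes a schedule as `(name, start, end)` segments; it assumes each process has a single CPU burst and does no I/O, so every moment after arrival that the process is not running counts as time in the ready queue.

```python
def metrics(arrival, burst, segments):
    """Per-process turnaround, waiting, and response times.

    arrival, burst: dicts mapping process name -> arrival time / total CPU burst
    segments: list of (name, start, end) CPU intervals, i.e. a Gantt chart
    Assumes single-burst processes with no I/O.
    """
    first_start, completion = {}, {}
    for name, start, end in segments:
        first_start[name] = min(start, first_start.get(name, start))
        completion[name] = max(end, completion.get(name, end))
    result = {}
    for name in arrival:
        turnaround = completion[name] - arrival[name]        # ready -> done
        result[name] = {
            "turnaround": turnaround,
            "waiting": turnaround - burst[name],             # time not spent running
            "response": first_start[name] - arrival[name],   # ready -> first scheduled
        }
    return result


arrival = {"P1": 0, "P2": 1, "P3": 2, "P4": 3}
burst = {"P1": 8, "P2": 4, "P3": 9, "P4": 5}
segments = [("P1", 0, 1), ("P2", 1, 5), ("P4", 5, 10), ("P1", 10, 17), ("P3", 17, 26)]
print(metrics(arrival, burst, segments)["P1"])
```

The sample input is the SRT schedule worked out later in this lecture; it shows that waiting time and response time differ once preemption is involved (P1 waits 9 units in total but is first scheduled immediately).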

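First Come First Served (FCFS)
• add processes to the tail of the ready queue; dispatch from the head

Example 1 (arrival order P1, P2, P3)

| Process | Burst Time | Arrival Time |
|---------|-----------:|-------------:|
| P1      | 24         | 0            |
| P2      | 3          | 0            |
| P3      | 3          | 0            |

Schedule: P1 (0-24), P2 (24-27), P3 (27-30)
average waiting time = (0 + 24 + 27) / 3 = 17

Example 2 (arrival order P2, P3, P1)

| Process | Burst Time | Arrival Time |
|---------|-----------:|-------------:|
| P2      | 3          | 0            |
| P3      | 3          | 0            |
| P1      | 24         | 0            |

Schedule: P2 (0-3), P3 (3-6), P1 (6-30)
average waiting time = (0 + 3 + 6) / 3 = 3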
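FCFS needs nothing more than a single pass over the processes in arrival order. A minimal sketch, assuming processes are given as hypothetical `(name, arrival, burst)` tuples; Python's `sorted` is stable, so processes with equal arrival times keep their listed order, which stands in for the arrival order in the examples above.

```python
def fcfs(processes):
    """First Come First Served (non-preemptive).

    processes: list of (name, arrival, burst), listed in arrival order.
    Returns (schedule, waiting): schedule is a list of (name, start, end),
    waiting maps each name to the time it spent in the ready queue.
    """
    clock = 0
    schedule, waiting = [], {}
    for name, arrival, burst in sorted(processes, key=lambda p: p[1]):
        start = max(clock, arrival)      # CPU may be idle until the process arrives
        schedule.append((name, start, start + burst))
        waiting[name] = start - arrival
        clock = start + burst            # runs to completion: no preemption
    return schedule, waiting


schedule, waiting = fcfs([("P1", 0, 24), ("P2", 0, 3), ("P3", 0, 3)])
print(schedule, sum(waiting.values()) / len(waiting))
```

Reordering the input to P2, P3, P1 reproduces Example 2; the only thing that changes is where the long burst sits in the queue.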

FCFS Evaluation
• non-preemptive
• response time: may have high variance or be long
  ◦ convoy effect: when one long-burst process is followed by many short-burst processes, the short processes have to wait a long time
• throughput: not emphasized
• fairness: penalizes short-burst processes
  ◦ starvation: not possible
• overhead: minimal

Round Robin (RR)
• preemptive version of the FCFS policy
  ◦ define a fixed time slice (also called a time quantum), typically 10-100 ms
  ◦ choose the process at the head of the ready queue
  ◦ run that process for at most one time slice; if it has not completed or blocked by then, add it to the tail of the ready queue
  ◦ choose another process from the head of the ready queue, run it for at most one time slice, and so on
• implement using
  ◦ a hardware timer that interrupts at periodic intervals
  ◦ a FIFO ready queue (add to the tail, take from the head)

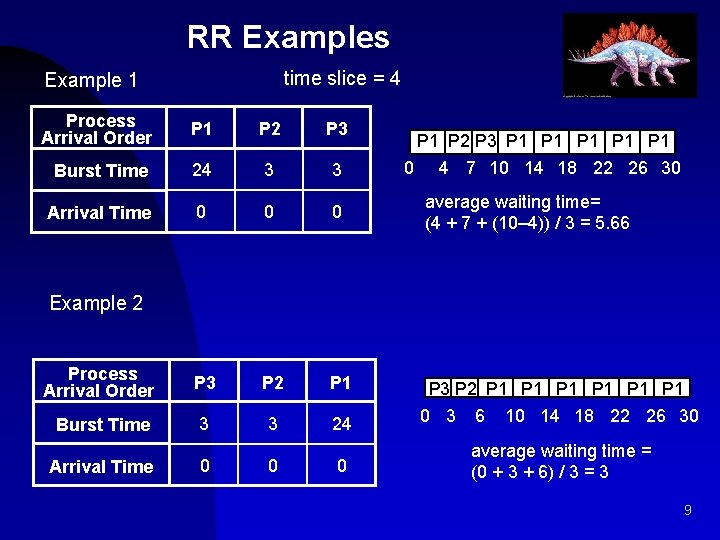

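RR Examples (time slice = 4)

Example 1 (arrival order P1, P2, P3)

| Process | Burst Time | Arrival Time |
|---------|-----------:|-------------:|
| P1      | 24         | 0            |
| P2      | 3          | 0            |
| P3      | 3          | 0            |

Schedule: P1 (0-4), P2 (4-7), P3 (7-10), P1 (10-14), P1 (14-18), P1 (18-22), P1 (22-26), P1 (26-30)
average waiting time = ((10 - 4) + 4 + 7) / 3 = 17 / 3 ≈ 5.67

Example 2 (arrival order P3, P2, P1)

| Process | Burst Time | Arrival Time |
|---------|-----------:|-------------:|
| P3      | 3          | 0            |
| P2      | 3          | 0            |
| P1      | 24         | 0            |

Schedule: P3 (0-3), P2 (3-6), P1 (6-10), P1 (10-14), P1 (14-18), P1 (18-22), P1 (22-26), P1 (26-30)
average waiting time = (0 + 3 + 6) / 3 = 3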
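The RR loop described above maps directly onto a FIFO queue. This is a minimal sketch under the same assumptions as the examples (all processes arrive at time 0, no I/O); the function name and the `(name, burst)` input format are hypothetical. Each slice is recorded separately, so a process that runs several slices in a row appears several times in the schedule.

```python
from collections import deque


def round_robin(processes, quantum):
    """Round Robin for processes that all arrive at time 0.

    processes: list of (name, burst) in initial ready-queue order.
    Returns (schedule, waiting): schedule lists each (name, start, end) slice.
    """
    queue = deque(processes)
    burst = dict(processes)
    clock, schedule, finish = 0, [], {}
    while queue:
        name, remaining = queue.popleft()            # take from the head
        run = min(quantum, remaining)                # at most one time slice
        schedule.append((name, clock, clock + run))
        clock += run
        if remaining > run:
            queue.append((name, remaining - run))    # preempted: back to the tail
        else:
            finish[name] = clock
    # With arrival time 0 and no I/O, waiting = completion time - burst.
    waiting = {name: finish[name] - burst[name] for name in burst}
    return schedule, waiting


_, waiting = round_robin([("P1", 24), ("P2", 3), ("P3", 3)], quantum=4)
print(waiting)
```

Raising `quantum` above the longest burst turns the schedule back into FCFS, which is the "too large, approximates FCFS" point in the evaluation that follows.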

RR Evaluation
• preemptive (at the end of a time slice)
• response time: good for short processes
  ◦ long processes may have to wait n × q time units for another time slice, where n is the number of other processes and q is the length of the time slice
• throughput: depends on the time slice
  ◦ too small: too many context switches
  ◦ too large: approximates FCFS
• fairness: penalizes I/O-bound processes (they may not use their full time slice)
  ◦ starvation: not possible
• overhead: somewhat low

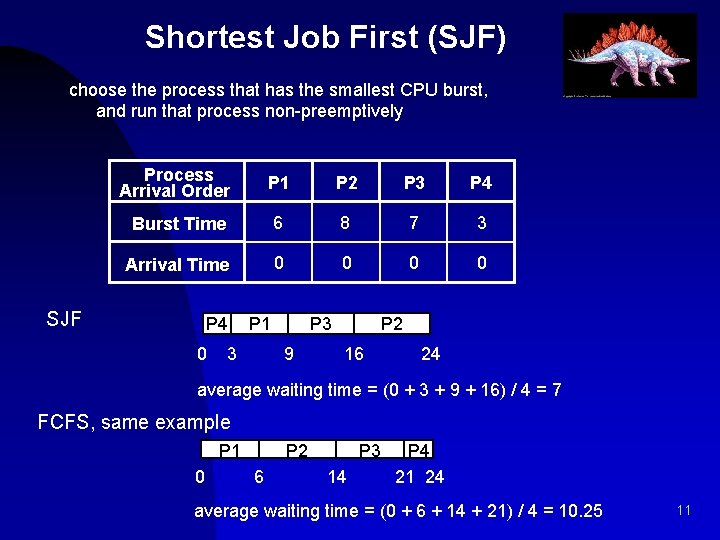

Shortest Job First (SJF)
• choose the process that has the smallest CPU burst, and run that process non-preemptively

Example

| Process | Burst Time | Arrival Time |
|---------|-----------:|-------------:|
| P1      | 6          | 0            |
| P2      | 8          | 0            |
| P3      | 7          | 0            |
| P4      | 3          | 0            |

SJF schedule: P4 (0-3), P1 (3-9), P3 (9-16), P2 (16-24)
average waiting time = (0 + 3 + 9 + 16) / 4 = 7

FCFS, same example: P1 (0-6), P2 (6-14), P3 (14-21), P4 (21-24)
average waiting time = (0 + 6 + 14 + 21) / 4 = 10.25
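A natural way to implement non-preemptive SJF is a min-heap keyed on burst length, refilled with every process that has arrived whenever the CPU becomes free. This is a sketch, not a definitive implementation: it assumes burst lengths are known exactly (a real scheduler would use an estimate such as the exponential average), and it breaks ties by arrival time and then by name, a choice the slide does not specify.

```python
import heapq


def sjf(processes):
    """Non-preemptive Shortest Job First.

    processes: list of (name, arrival, burst); bursts assumed known exactly.
    Returns (schedule, waiting).
    """
    pending = sorted(processes, key=lambda p: p[1])     # by arrival time
    ready, schedule, waiting = [], [], {}
    clock, i = 0, 0
    while i < len(pending) or ready:
        # Move every process that has arrived by now into the ready heap.
        while i < len(pending) and pending[i][1] <= clock:
            name, arrival, burst = pending[i]
            heapq.heappush(ready, (burst, arrival, name))
            i += 1
        if not ready:                    # CPU idle: jump to the next arrival
            clock = pending[i][1]
            continue
        burst, arrival, name = heapq.heappop(ready)
        schedule.append((name, clock, clock + burst))
        waiting[name] = clock - arrival
        clock += burst                   # runs to completion (no preemption)
    return schedule, waiting


schedule, waiting = sjf([("P1", 0, 6), ("P2", 0, 8), ("P3", 0, 7), ("P4", 0, 3)])
print(schedule, sum(waiting.values()) / len(waiting))
```

Because the heap is only consulted when the running process finishes, a short process that arrives mid-burst still has to wait; the SJF half of the SRT example below shows exactly this.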

SJF Evaluation
• non-preemptive
• response time: okay (worse than RR)
  ◦ long processes may have to wait until a large number of short processes finish
• provably optimal average waiting time: SJF minimizes the average waiting time for a given set of processes that are all available at the same time (if preemption is not considered)
  ◦ moving a short process ahead of a long one in the ready queue decreases the average waiting time
• throughput: better than RR
• fairness: penalizes long processes
  ◦ starvation: possible for long processes
• overhead: can be high (requires recording and estimating CPU burst times)
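The claim that swapping a short process ahead of a long one lowers the average is the usual exchange argument. As a short derivation, assume two adjacent processes in the ready queue with bursts $a > b$, and let $w$ be the time at which the first of the pair starts. The total waiting time of the pair in each order differs by

$$
\underbrace{w + (w + a)}_{\text{long first}} \;-\; \underbrace{w + (w + b)}_{\text{short first}} \;=\; a - b \;>\; 0
$$

Processes ahead of the pair are unaffected, and processes behind it still start at $w + a + b$, so the total (and therefore the average) waiting time drops by $a - b$. Repeating such swaps until no process precedes a shorter one yields the SJF order.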

Shortest Remaining Time (SRT)
• preemptive version of the SJF policy
  ◦ choose the process that has the smallest next CPU burst, and run that process preemptively
    - until it terminates or blocks, or
    - until another process enters the ready queue
  ◦ at that point, switch to another process if one has a smaller expected CPU burst than what is left of the current process's CPU burst

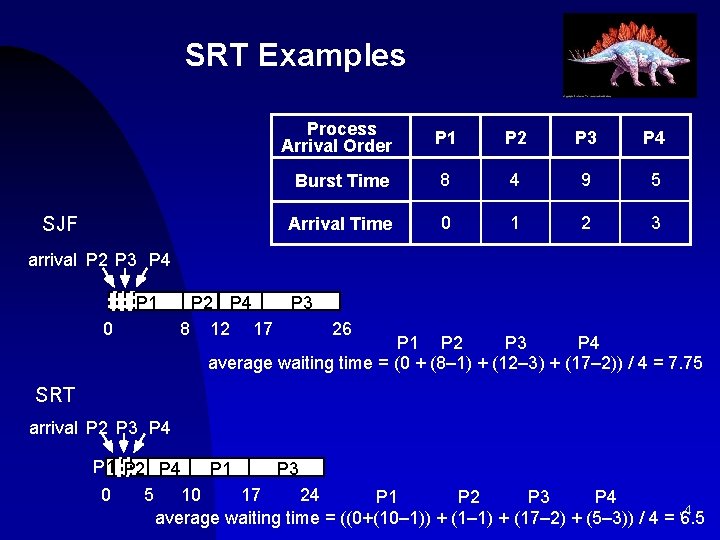

SRT Examples

| Process | Burst Time | Arrival Time |
|---------|-----------:|-------------:|
| P1      | 8          | 0            |
| P2      | 4          | 1            |
| P3      | 9          | 2            |
| P4      | 5          | 3            |

SJF (non-preemptive): P1 (0-8), P2 (8-12), P4 (12-17), P3 (17-26)
average waiting time = (0 + (8 - 1) + (12 - 3) + (17 - 2)) / 4 = 7.75

SRT: P1 (0-1), P2 (1-5), P4 (5-10), P1 (10-17), P3 (17-26)
average waiting time = ((0 + (10 - 1)) + (1 - 1) + (17 - 2) + (5 - 3)) / 4 = 6.5
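SRT can be sketched by re-evaluating the choice one time unit at a time. This yields the same schedule as deciding only at arrivals: the running process's remaining time only shrinks, so it can lose the CPU only to a newly arrived process. The sketch assumes integer times and exactly known bursts; the function name, the `(name, arrival, burst)` format, and the earlier-arrival tie-break are illustrative choices.

```python
def srt(processes):
    """Shortest Remaining Time (preemptive SJF), simulated one time unit at a time.

    processes: list of (name, arrival, burst) with integer times.
    Returns (schedule, waiting); consecutive units of the same process are
    merged into one (name, start, end) segment.
    """
    arrival = {n: a for n, a, _ in processes}
    burst = {n: b for n, _, b in processes}
    remaining = dict(burst)
    clock, schedule, finish = 0, [], {}
    while remaining:
        ready = [n for n in remaining if arrival[n] <= clock]
        if not ready:
            clock += 1                   # CPU idle until something arrives
            continue
        # Smallest remaining time wins; ties go to the earlier arrival.
        name = min(ready, key=lambda n: (remaining[n], arrival[n]))
        if schedule and schedule[-1][0] == name and schedule[-1][2] == clock:
            schedule[-1] = (name, schedule[-1][1], clock + 1)   # extend segment
        else:
            schedule.append((name, clock, clock + 1))           # context switch
        remaining[name] -= 1
        clock += 1
        if remaining[name] == 0:
            del remaining[name]
            finish[name] = clock
    waiting = {n: finish[n] - arrival[n] - burst[n] for n in burst}
    return schedule, waiting


schedule, waiting = srt([("P1", 0, 8), ("P2", 1, 4), ("P3", 2, 9), ("P4", 3, 5)])
print(schedule, sum(waiting.values()) / len(waiting))
```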

SRT Evaluation
• preemptive (when a process arrives in the ready queue)
• response time: good (though still worse than RR)
  ◦ provably optimal average waiting time
• throughput: high
• fairness: penalizes long processes
  ◦ note that long processes may eventually become short processes, as their remaining time shrinks
  ◦ starvation: possible for long processes
• overhead: can be high (requires recording and estimating CPU burst times)

Priority Scheduling
• policy:
  ◦ associate a priority with each process
    - externally defined, e.g., based on importance: an employee's processes are given higher preference than a visitor's
    - internally defined, based on memory requirements, file requirements, CPU vs. I/O requirements, etc.
  ◦ SJF is priority scheduling in which priority is inversely proportional to the length of the next CPU burst
  ◦ run the process with the highest priority
• evaluation:
  ◦ starvation: possible for low-priority processes

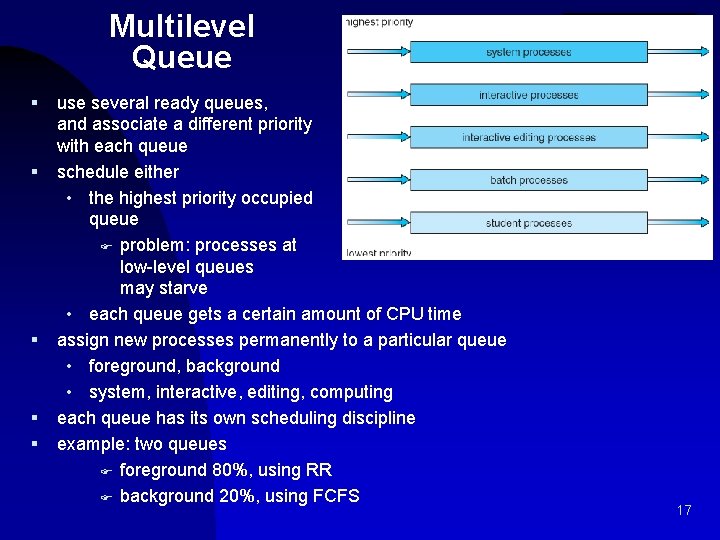
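A priority queue (heap) is the obvious data structure for "run the process with the highest priority". A minimal non-preemptive sketch, assuming all processes arrive at time 0 and that a smaller number means a higher priority (the Unix convention used later in this lecture; the slide itself does not fix a direction). Ties fall back to list order, i.e. FCFS among equals. The function name and tuple layout are hypothetical.

```python
import heapq


def priority_schedule(processes):
    """Non-preemptive priority scheduling; all processes arrive at time 0.

    processes: list of (name, priority, burst); smaller priority value = runs first.
    Returns the list of (name, start, end) in the order the CPU runs them.
    """
    heap = [(prio, idx, name, burst)
            for idx, (name, prio, burst) in enumerate(processes)]
    heapq.heapify(heap)                       # idx breaks ties in list order
    clock, schedule = 0, []
    while heap:
        _, _, name, burst = heapq.heappop(heap)
        schedule.append((name, clock, clock + burst))
        clock += burst
    return schedule


# SJF as a special case: use each process's burst length as its priority.
jobs = [("P1", 6), ("P2", 8), ("P3", 7), ("P4", 3)]
print(priority_schedule([(name, burst, burst) for name, burst in jobs]))
```

Setting the priority to the burst length reproduces the SJF schedule from the earlier example (P4, P1, P3, P2), which is the "SJF is priority scheduling" observation in code form.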

Multilevel Queue
• use several ready queues, and associate a different priority with each queue
• schedule either
  ◦ the highest-priority occupied queue
    - problem: processes in low-priority queues may starve
  ◦ or give each queue a certain amount of CPU time
• assign new processes permanently to a particular queue, e.g.
  ◦ foreground, background
  ◦ system, interactive, editing, computing
• each queue has its own scheduling discipline
  ◦ example: two queues
    - foreground: 80% of CPU time, using RR
    - background: 20% of CPU time, using FCFS
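The slide does not say how the 80/20 split is enforced. This sketch assumes one simple scheme: time is divided into frames of five ticks, the first four belonging to the foreground (RR) queue and the last to the background (FCFS) queue, and a tick whose owner queue is empty goes to the other queue. The function name, tick granularity, frame size, and RR quantum of 2 ticks are all illustrative assumptions.

```python
from collections import deque


def multilevel_queue(fg_jobs, bg_jobs, total_ticks, quantum=2, frame=5, fg_ticks=4):
    """Two-queue multilevel queue: foreground RR, background FCFS.

    Each frame of `frame` ticks gives the first `fg_ticks` to the foreground
    queue and the rest to the background queue (4 of 5 = 80%).
    fg_jobs, bg_jobs: lists of (name, burst_in_ticks).
    Returns one entry per tick: the name of the job that ran, or None if idle.
    """
    fg = deque([name, burst] for name, burst in fg_jobs)   # RR queue
    bg = deque([name, burst] for name, burst in bg_jobs)   # FCFS queue
    used = 0              # ticks the foreground head has used of its current slice
    timeline = []
    for t in range(total_ticks):
        fg_turn = t % frame < fg_ticks
        if fg and (fg_turn or not bg):
            job = fg[0]
            job[1] -= 1
            used += 1
            timeline.append(job[0])
            if job[1] == 0:           # finished: next job gets a fresh slice
                fg.popleft()
                used = 0
            elif used == quantum:     # slice used up: move to the tail (RR)
                fg.rotate(-1)
                used = 0
        elif bg:
            job = bg[0]               # FCFS: the head runs until it finishes
            job[1] -= 1
            timeline.append(job[0])
            if job[1] == 0:
                bg.popleft()
        else:
            timeline.append(None)     # both queues empty: CPU idle
    return timeline


timeline = multilevel_queue([("A", 100), ("B", 100)], [("C", 100)], 50)
print(timeline[:10], timeline.count("C") / len(timeline))
```

With both queues always busy, the background job gets exactly one tick in five; handing unused ticks to the other queue keeps the CPU from idling while any process is ready.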

Multilevel Feedback Queue
• feedback: allow the scheduler to move processes between queues to ensure fairness
  ◦ aging: move older processes to a higher-priority queue
  ◦ decay: move older processes to a lower-priority queue
• example: three queues, feedback with process decay
  ◦ Q0: RR with a time slice of 8 milliseconds
  ◦ Q1: RR with a time slice of 16 milliseconds
  ◦ Q2: FCFS
• scheduling
  ◦ a new job enters queue Q0; when it gains the CPU, the job receives 8 milliseconds. If it does not finish in 8 milliseconds, the job is moved to queue Q1
  ◦ in Q1 the job is again served RR and receives 16 additional milliseconds
  ◦ if it still does not complete, it is preempted and moved to queue Q2
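The three-queue example can be traced with a short simulation. This sketch makes simplifying assumptions that the slide does not: all jobs arrive at time 0 and are purely CPU-bound, so a job leaves the CPU only by finishing or by using up its slice. With later arrivals, a real MLFQ would also preempt a lower-queue job when a new job enters Q0, which is not modeled here. Names and the trace format are hypothetical.

```python
from collections import deque

QUANTA = [8, 16, None]   # Q0, Q1: RR slices in ms; Q2 (None): FCFS, run to completion


def mlfq(jobs):
    """Three-queue multilevel feedback queue with decay (demotion only).

    jobs: list of (name, burst_ms), all assumed to arrive at time 0, no I/O.
    Returns a trace of (name, queue_level, start, end) slices.
    """
    queues = [deque(), deque(), deque()]
    for name, burst in jobs:
        queues[0].append((name, burst))              # every new job enters Q0
    clock, trace = 0, []
    while any(queues):
        level = next(i for i, q in enumerate(queues) if q)   # highest non-empty queue
        name, remaining = queues[level].popleft()
        quantum = QUANTA[level]
        run = remaining if quantum is None else min(quantum, remaining)
        trace.append((name, level, clock, clock + run))
        clock += run
        remaining -= run
        if remaining > 0:                            # used its whole slice: demote
            queues[level + 1].append((name, remaining))
    return trace


for step in mlfq([("A", 5), ("B", 30), ("C", 20)]):
    print(step)
```

In this run the 5 ms job finishes in Q0, the 20 ms job finishes in Q1, and only the 30 ms job sinks to the FCFS queue: short jobs are favored without the scheduler knowing any burst lengths in advance.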

Traditional Unix CPU Scheduling
• avoid sorting the ready queue by priority, which is expensive; instead:
• use multiple queues (32), with priority values 0-127 (a low value means a high priority):
  ◦ kernel processes (or user processes in kernel mode) get the lower values (0-49); kernel processes are not preemptible
  ◦ user processes get the higher values (50-127)
• choose the process from the highest-priority occupied queue, and run that process preemptively, using a timer (time slice typically around 100 ms)
  ◦ RR within each queue
• move processes between queues
  ◦ keep track of clock ticks (60 per second)
  ◦ once per second, add the clock ticks to the priority value
  ◦ also change the priority based on whether or not the process has used more than its "fair share" of CPU time (compared to others)
• users can decrease the priority of their own processes
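The point of the fixed set of 32 queues is that picking the next process never requires sorting: the scheduler only has to find the highest-priority non-empty queue. The sketch below illustrates this with a 32-bit occupancy bitmap and maps priority value p to queue p // 4 (128 values over 32 queues). The bitmap, the base value of 50 for user processes, and the once-per-second rule (halve the tick count, then priority = 50 + ticks / 2 + nice) are all assumptions for illustration, loosely in the spirit of classic Unix schedulers rather than any specific kernel's code.

```python
from collections import deque

NQUEUES = 32            # run queues, as on the slide
PPQ = 128 // NQUEUES    # priority values 0-127 over 32 queues: 4 values per queue
PUSER = 50              # assumed base value for user processes (start of 50-127)


class Proc:
    def __init__(self, name, nice=0):
        self.name = name
        self.nice = nice    # hypothetical user penalty; users can only raise it
        self.cpu = 0        # clock ticks charged while running (60 per second)
        self.pri = PUSER + nice


class RunQueues:
    def __init__(self):
        self.queues = [deque() for _ in range(NQUEUES)]
        self.bitmap = 0     # bit i is set exactly when queue i is non-empty

    def enqueue(self, p):
        i = p.pri // PPQ
        self.queues[i].append(p)            # FIFO within a queue gives RR
        self.bitmap |= 1 << i

    def pick(self):
        """Dequeue from the lowest-numbered (highest-priority) occupied queue."""
        if self.bitmap == 0:
            return None
        i = (self.bitmap & -self.bitmap).bit_length() - 1   # lowest set bit
        p = self.queues[i].popleft()
        if not self.queues[i]:
            self.bitmap &= ~(1 << i)
        return p


def clock_tick(running):
    running.cpu += 1                        # charge the tick to the running process


def once_per_second(procs):
    """Hypothetical recompute rule: decay old usage, then penalize recent usage."""
    for p in procs:
        p.cpu //= 2
        p.pri = min(127, PUSER + p.cpu // 2 + p.nice)


a, b = Proc("a"), Proc("b")
for _ in range(60):
    clock_tick(a)                           # a used the CPU for the whole second
once_per_second([a, b])
rq = RunQueues()
rq.enqueue(a)
rq.enqueue(b)
print(a.pri, b.pri, rq.pick().name)         # the process that used less CPU runs first
```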
