Lecture 7 Backpropagation Gradient descent and computational graph

Computational graphs are used in, for example:
• TensorFlow
• Theano
• CNTK

Backpropagation
• Phase 1: Propagation
  ◦ Forward pass: generate the output values.
  ◦ Backward pass: calculate the gradients.
• Phase 2: Weight update
  ◦ Update each weight by a fraction of its gradient, moving against the gradient. This fraction is called the learning rate, η:
$$\Delta w_{ij} = -\eta \frac{\partial E}{\partial w_{ij}}$$
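A minimal sketch of Phase 2 for a single weight. It assumes a hypothetical error E(w) = (w − 3)², whose analytic gradient 2(w − 3) stands in for the result of the backward pass. The names `grad_E` and `gradient_descent` are illustrative only.

```python
# Repeated weight update  Δw = -η ∂E/∂w  for one weight.
# Assumption (illustration only): E(w) = (w - 3)**2, so dE/dw = 2 * (w - 3).

def grad_E(w: float) -> float:
    """Gradient of the toy error E(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)

def gradient_descent(w0: float, eta: float, steps: int) -> float:
    """Apply the weight-update rule `steps` times, starting from w0."""
    w = w0
    for _ in range(steps):
        delta_w = -eta * grad_E(w)  # Δw = -η ∂E/∂w
        w += delta_w
    return w

w_final = gradient_descent(w0=0.0, eta=0.1, steps=100)
print(w_final)  # very close to 3.0, the minimiser of E
```

A larger η takes bigger steps. In this toy problem the iteration diverges once η exceeds 1.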

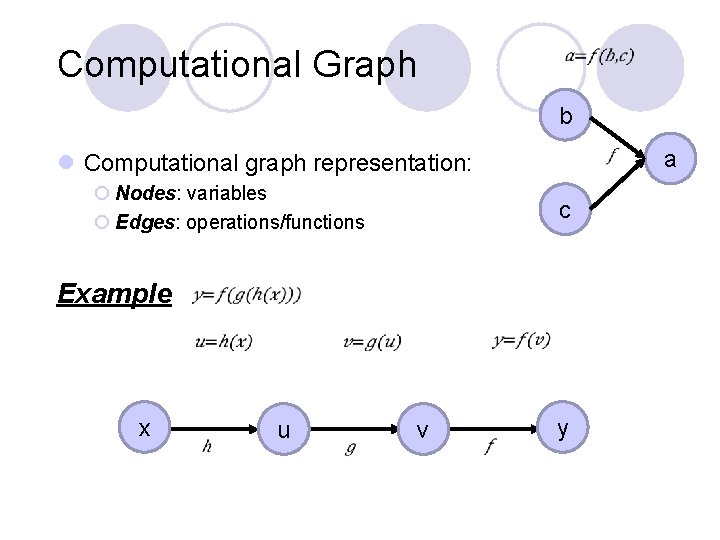

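Computational Graph
• Computational graph representation:
  ◦ Nodes: variables
  ◦ Edges: operations/functions
• Examples (figure): a node c computed from inputs a and b, and a chain x → u → v → y in which each node is computed from the previous one.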

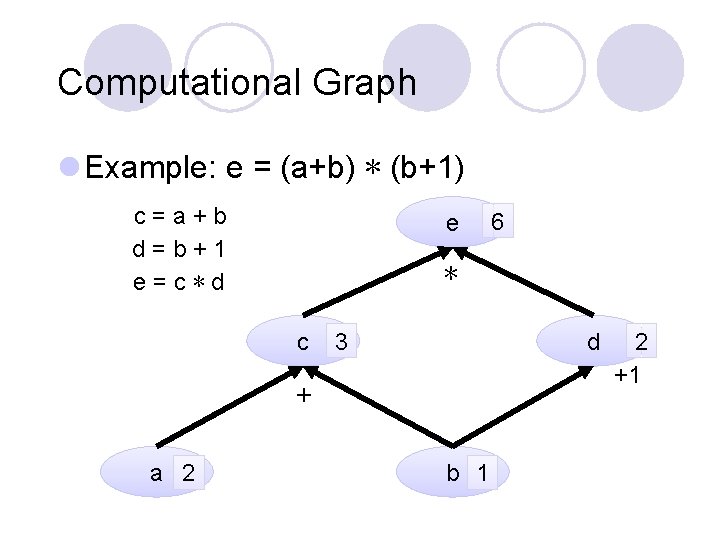

Computational Graph
• Example: e = (a+b) ∗ (b+1), decomposed into the nodes c = a + b, d = b + 1, e = c ∗ d.
• With a = 2 and b = 1: c = 3, d = 2, e = 6.
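The forward evaluation of this graph can be sketched in Python as follows. Each node is computed from its parents in dependency order. The function name `forward` is a hypothetical choice.

```python
# Forward evaluation of the graph for e = (a + b) * (b + 1).
# Every intermediate node is computed once, after its parents.

def forward(a: float, b: float) -> dict:
    c = a + b   # node c: addition
    d = b + 1   # node d: add the constant 1
    e = c * d   # node e: multiplication
    return {"a": a, "b": b, "c": c, "d": d, "e": e}

values = forward(a=2, b=1)
print(values)  # {'a': 2, 'b': 1, 'c': 3, 'd': 2, 'e': 6}
```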

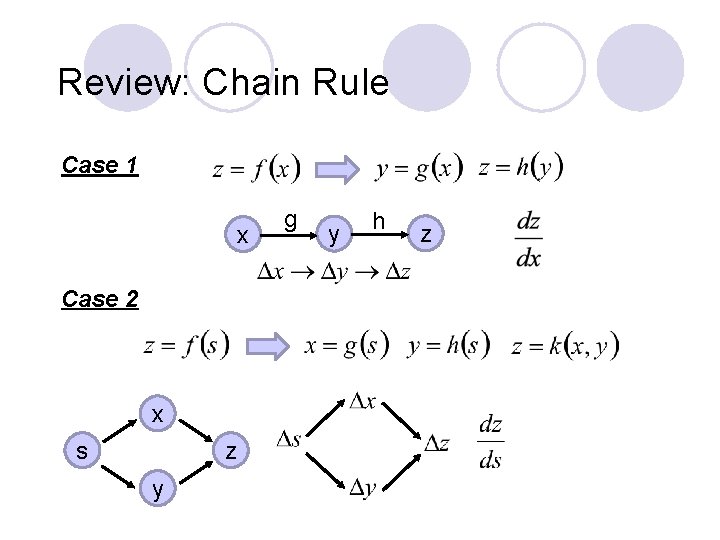

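Review: Chain Rule
• Case 1: y = g(x), z = h(y), i.e. x → y → z:
$$\frac{dz}{dx} = \frac{dz}{dy}\,\frac{dy}{dx}$$
• Case 2: x = g(s), y = h(s), z = k(x, y), i.e. s reaches z through both x and y:
$$\frac{dz}{ds} = \frac{\partial z}{\partial x}\,\frac{dx}{ds} + \frac{\partial z}{\partial y}\,\frac{dy}{ds}$$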
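To check Case 2 numerically, the sketch below uses hypothetical choices x = g(s) = s², y = h(s) = 3s and z = k(x, y) = x·y. These give z = 3s³ and therefore dz/ds = 9s². The chain-rule sum over the two paths is compared with a central finite difference.

```python
# Chain rule, Case 2: s influences z through two paths, s -> x -> z and s -> y -> z.
# Hypothetical functions: x = s**2, y = 3*s, z = x * y.

def z_of_s(s: float) -> float:
    x, y = s ** 2, 3 * s
    return x * y

def dz_ds_chain(s: float) -> float:
    x, y = s ** 2, 3 * s
    dz_dx, dz_dy = y, x         # partial derivatives of z = x * y
    dx_ds, dy_ds = 2 * s, 3.0   # derivatives of x = s**2 and y = 3*s
    return dz_dx * dx_ds + dz_dy * dy_ds  # add the two paths

def dz_ds_numeric(s: float, eps: float = 1e-6) -> float:
    """Central finite difference, for comparison only."""
    return (z_of_s(s + eps) - z_of_s(s - eps)) / (2 * eps)

s = 1.5
print(dz_ds_chain(s), dz_ds_numeric(s))  # both close to 9 * s**2 = 20.25
```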

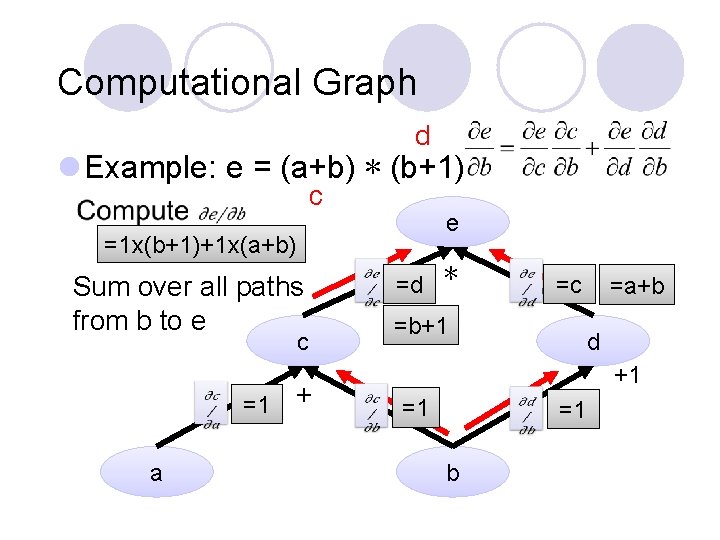

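Computational Graph
• Example: e = (a+b) ∗ (b+1)
• Partial derivatives on the edges: ∂c/∂a = 1, ∂c/∂b = 1, ∂d/∂b = 1, ∂e/∂c = d = b + 1, ∂e/∂d = c = a + b.
• Sum over all paths from b to e:
$$\frac{\partial e}{\partial b} = \frac{\partial e}{\partial c}\,\frac{\partial c}{\partial b} + \frac{\partial e}{\partial d}\,\frac{\partial d}{\partial b} = 1 \times (b+1) + 1 \times (a+b)$$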

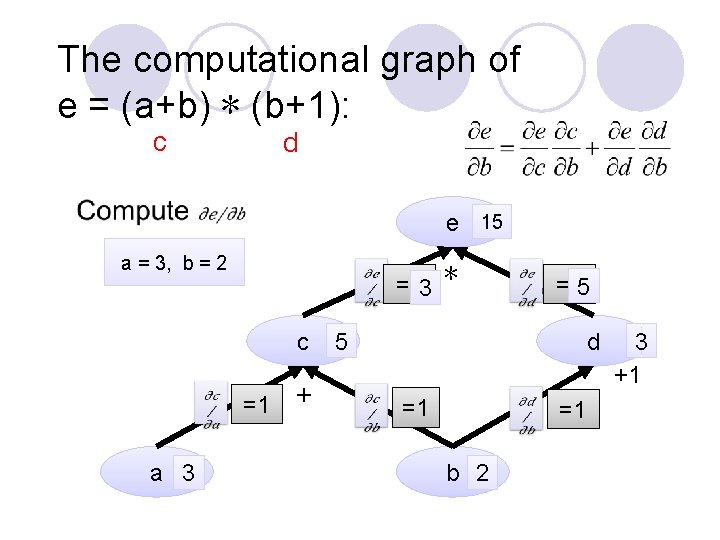

The computational graph of e = (a+b) ∗ (b+1) evaluated at a = 3, b = 2:
• Node values: c = 5, d = 3, e = 15.
• Edge derivatives: ∂c/∂a = 1, ∂c/∂b = 1, ∂d/∂b = 1, ∂e/∂c = d = 3, ∂e/∂d = c = 5.
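The "sum over all paths" rule can be sketched directly. Multiply the edge derivatives along each path from b to e, then add the paths. The function name `de_db_paths` is hypothetical.

```python
# ∂e/∂b for e = (a + b) * (b + 1): sum over the paths b -> c -> e and b -> d -> e.

def de_db_paths(a: float, b: float) -> float:
    c, d = a + b, b + 1
    dc_db, dd_db = 1.0, 1.0     # edges c = a + b and d = b + 1
    de_dc, de_dd = d, c         # edges of e = c * d
    path_via_c = dc_db * de_dc  # 1 * (b + 1)
    path_via_d = dd_db * de_dd  # 1 * (a + b)
    return path_via_c + path_via_d

print(de_db_paths(3, 2))  # 8.0
```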

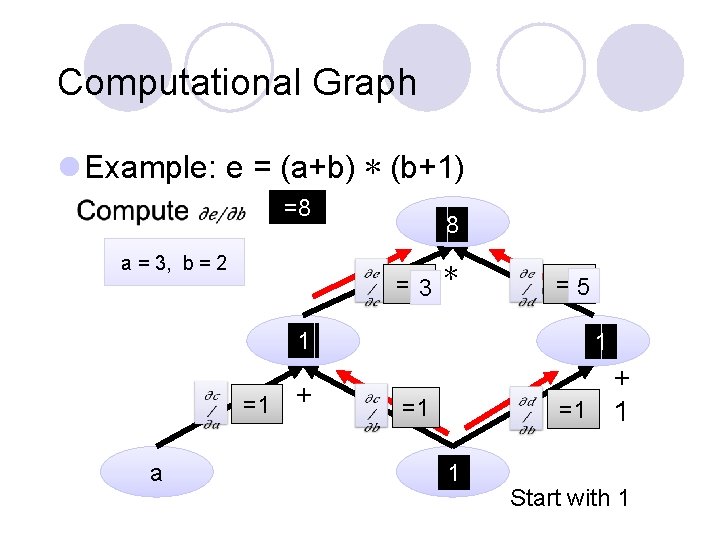

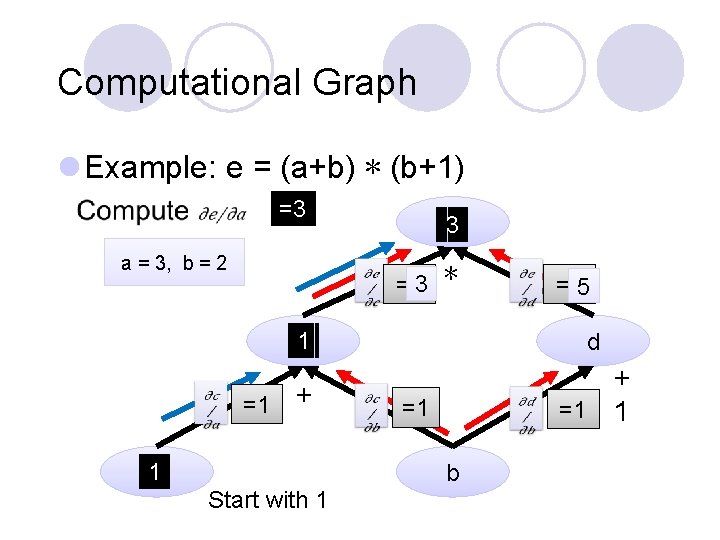

Computational Graph (forward mode)
• Example: e = (a+b) ∗ (b+1), with a = 3, b = 2, so c = 5 and d = 3.
• Start with ∂b/∂b = 1 and propagate toward e: ∂c/∂b = 1 and ∂d/∂b = 1, so ∂e/∂b = 3 × 1 + 5 × 1 = 8.

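Computational Graph (forward mode)
• Example: e = (a+b) ∗ (b+1), with a = 3, b = 2, so c = 5 and d = 3.
• Start with ∂a/∂a = 1 and propagate toward e: ∂c/∂a = 1 and ∂d/∂a = 0 (d does not depend on a), so ∂e/∂a = 3 × 1 + 5 × 0 = 3.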
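"Start with 1" is what forward-mode automatic differentiation does with dual numbers. Each value carries its derivative with respect to one seeded input. The `Dual` class below is a minimal hypothetical sketch that supports only `+` and `*`, not a library API.

```python
from dataclasses import dataclass

@dataclass
class Dual:
    val: float  # node value
    dot: float  # derivative of this node w.r.t. the seeded input

    def __add__(self, other):
        other = other if isinstance(other, Dual) else Dual(other, 0.0)
        return Dual(self.val + other.val, self.dot + other.dot)

    __radd__ = __add__  # addition is commutative

    def __mul__(self, other):
        other = other if isinstance(other, Dual) else Dual(other, 0.0)
        # Product rule: (uv)' = u'v + uv'
        return Dual(self.val * other.val,
                    self.dot * other.val + self.val * other.dot)

    __rmul__ = __mul__  # multiplication is commutative

def e_fn(a, b):
    c = a + b
    d = b + 1
    return c * d

# Forward mode needs one pass per input: seed the chosen input with 1.
de_db = e_fn(Dual(3.0, 0.0), Dual(2.0, 1.0)).dot  # start with ∂b/∂b = 1
de_da = e_fn(Dual(3.0, 1.0), Dual(2.0, 0.0)).dot  # start with ∂a/∂a = 1
print(de_da, de_db)  # 3.0 8.0
```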

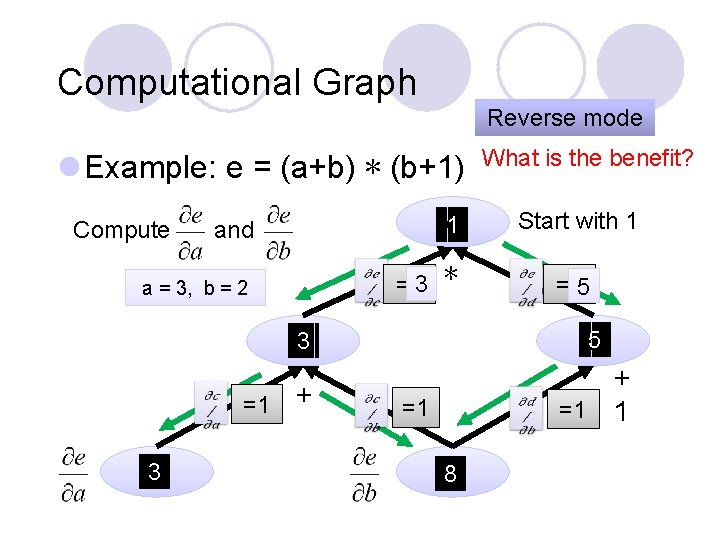

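Computational Graph: Reverse mode
• Example: e = (a+b) ∗ (b+1), with a = 3, b = 2. Compute ∂e/∂a and ∂e/∂b.
• Start with ∂e/∂e = 1 at the output and move down: ∂e/∂c = 1 × d = 3 and ∂e/∂d = 1 × c = 5, then ∂e/∂a = 3 × 1 = 3 and ∂e/∂b = 3 × 1 + 5 × 1 = 8.
• What is the benefit? A single reverse pass yields the derivatives of e with respect to both a and b, whereas forward mode needs a separate pass for each input.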
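The same example in reverse mode, as a hand-written Python sketch (the function name `reverse_mode` is hypothetical). The forward step stores the node values. The reverse step then starts from ∂e/∂e = 1 and produces the derivatives for every input in one sweep.

```python
# Reverse mode for e = (a + b) * (b + 1).

def reverse_mode(a: float, b: float):
    # Forward step: node values, from the inputs upward.
    c = a + b
    d = b + 1
    e = c * d

    # Reverse step: start with 1 at the output and move toward the inputs.
    grad = {"e": 1.0}
    grad["c"] = grad["e"] * d    # ∂e/∂c = d
    grad["d"] = grad["e"] * c    # ∂e/∂d = c
    grad["a"] = grad["c"] * 1.0  # ∂c/∂a = 1
    # b feeds both c and d, so its two contributions are added.
    grad["b"] = grad["c"] * 1.0 + grad["d"] * 1.0
    return e, grad

e, g = reverse_mode(3.0, 2.0)
print(g["a"], g["b"])  # 3.0 8.0
```

Both input derivatives come out of one reverse sweep. The dual-number version needs one pass per input.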

Summary
• Two-step computation:
  ◦ Forward step: compute the value of each node, starting from the inputs at the bottom.
  ◦ Reverse step: compute the derivatives, starting from the output at the top.

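Computational Graph
• Parameter sharing: the same parameter appears in different nodes.
• Example: $y = x e^{x^2}$, built as v = x ∗ x, $u = e^{v}$, y = x ∗ u.
• Note: treat each individual occurrence of x as a different variable, then add the three paths together:
$$\frac{dy}{dx} = e^{x^2} + x\,e^{x^2}\,x + x\,e^{x^2}\,x = e^{x^2} + 2x^2 e^{x^2}$$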
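The three-path sum can be checked numerically. The sketch below treats the three occurrences of x as separate variables x1, x2, x3 (illustrative names), with v = x2·x3, u = e^v and y = x1·u. It then compares the summed paths with a finite difference.

```python
import math

def y_fn(x: float) -> float:
    return x * math.exp(x * x)

def dy_dx_paths(x: float) -> float:
    # Every occurrence of x is set to the same value, but each gets its own path.
    v = x * x
    u = math.exp(v)
    path_x1 = u          # ∂y/∂x1 = u (outer factor)
    path_x2 = x * u * x  # ∂y/∂u · ∂u/∂v · ∂v/∂x2 = x1 · u · x3
    path_x3 = x * u * x  # ∂y/∂u · ∂u/∂v · ∂v/∂x3 = x1 · u · x2
    return path_x1 + path_x2 + path_x3

def dy_dx_numeric(x: float, eps: float = 1e-6) -> float:
    return (y_fn(x + eps) - y_fn(x - eps)) / (2 * eps)

x = 0.7
print(dy_dx_paths(x), dy_dx_numeric(x))  # the two values agree closely
```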

Computational Graphs for Feedforward Networks

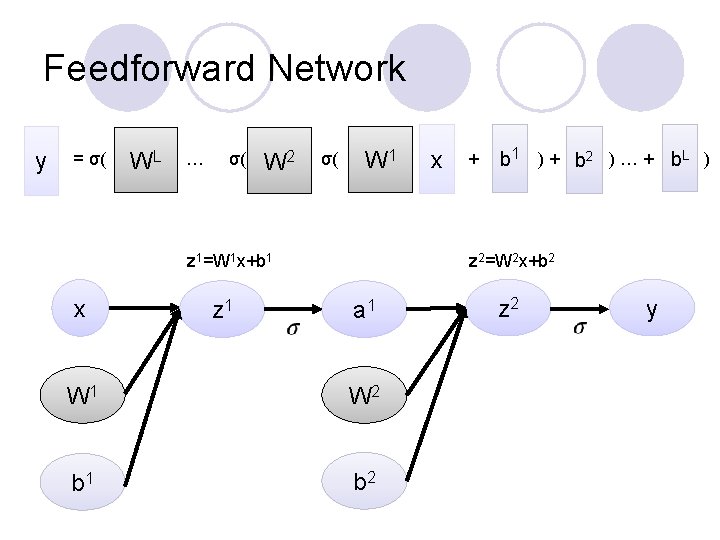

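Feedforward Network
$$y = \sigma\left(W^L \cdots \sigma\left(W^2\, \sigma\left(W^1 x + b^1\right) + b^2\right) \cdots + b^L\right)$$
For two layers, the graph contains the nodes
$$z^1 = W^1 x + b^1, \quad a^1 = \sigma(z^1), \quad z^2 = W^2 a^1 + b^2, \quad y = \sigma(z^2)$$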
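A minimal NumPy sketch of the forward pass $z^l = W^l a^{l-1} + b^l$, $a^l = \sigma(z^l)$, with $a^0 = x$. The layer sizes and random weights are hypothetical and only illustrate the shapes.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def feedforward(x, weights, biases):
    """y = σ(W^L ... σ(W^2 σ(W^1 x + b^1) + b^2) ... + b^L)."""
    a = x
    for W, b in zip(weights, biases):
        z = W @ a + b   # z^l = W^l a^{l-1} + b^l
        a = sigmoid(z)  # a^l = σ(z^l)
    return a

rng = np.random.default_rng(0)
sizes = [3, 4, 2]  # hypothetical: 3 inputs, 4 hidden units, 2 outputs
weights = [rng.standard_normal((m, n)) for n, m in zip(sizes[:-1], sizes[1:])]
biases = [rng.standard_normal(m) for m in sizes[1:]]
y = feedforward(np.array([1.0, -0.5, 2.0]), weights, biases)
print(y.shape)  # (2,)
```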

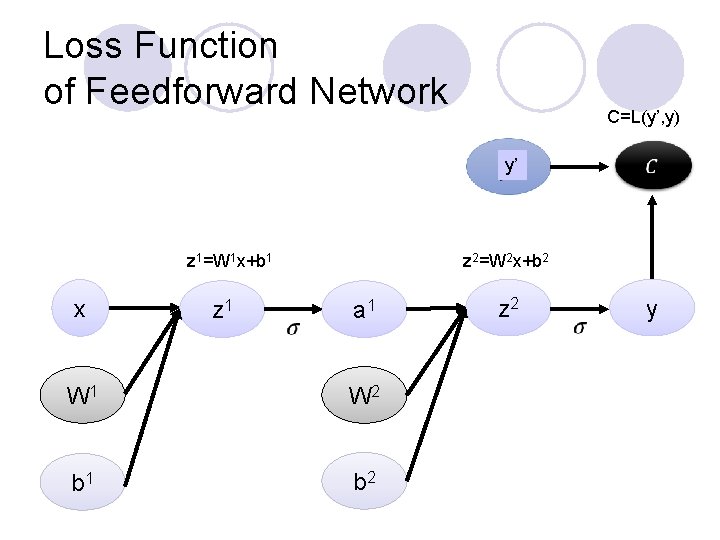

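Loss Function of Feedforward Network
The network output y is compared with the target y′ through the cost C = L(y′, y). The graph is the two-layer graph ($z^1 = W^1 x + b^1$, $a^1 = \sigma(z^1)$, $z^2 = W^2 a^1 + b^2$, $y = \sigma(z^2)$) extended with one more node, C.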

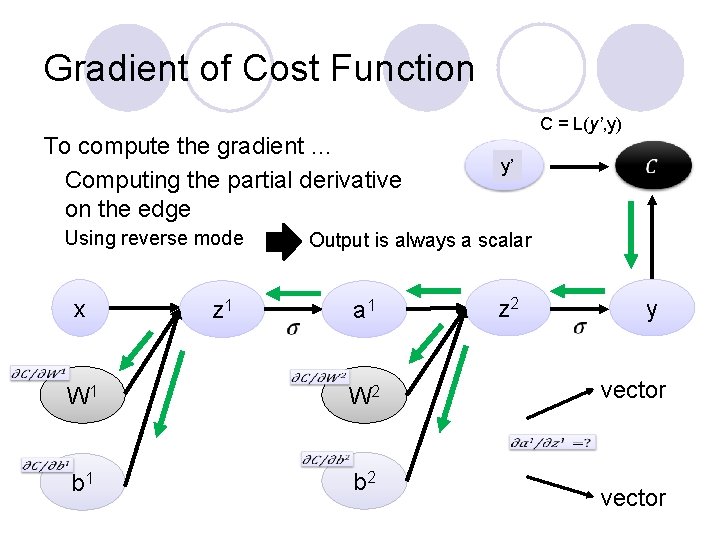

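Gradient of Cost Function
• To compute the gradient of C = L(y′, y):
  ◦ compute the partial derivative on each edge;
  ◦ use reverse mode, starting from C.
• The output C is always a scalar. The intermediate nodes ($x$, $z^1$, $a^1$, $z^2$, $y$) are vectors, so the edge derivatives are Jacobian matrices.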

![Jacobian matrix of y = f(x), with x = [x1, x2, x3] and y = [y1, y2]](http://slidetodoc.com/presentation_image_h2/7c6d3a141b027ee0d518be5842424887/image-17.jpg)

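Jacobian Matrix
For y = f(x) with $x = [x_1, x_2, x_3]$ and $y = [y_1, y_2]$, the Jacobian has size (size of y) × (size of x), here 2 × 3:
$$\frac{\partial y}{\partial x} = \begin{bmatrix} \dfrac{\partial y_1}{\partial x_1} & \dfrac{\partial y_1}{\partial x_2} & \dfrac{\partial y_1}{\partial x_3} \\[4pt] \dfrac{\partial y_2}{\partial x_1} & \dfrac{\partial y_2}{\partial x_2} & \dfrac{\partial y_2}{\partial x_3} \end{bmatrix}$$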
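A sketch that builds this 2 × 3 Jacobian column by column with central differences. The function `f` is a hypothetical example. Its exact Jacobian at x = [1, 2, 3] is [[1, 6, 4], [2, 1, 0]].

```python
import numpy as np

def f(x: np.ndarray) -> np.ndarray:
    """Hypothetical f: R^3 -> R^2, used only for illustration."""
    return np.array([x[0] + 2 * x[1] * x[2], x[0] * x[1]])

def jacobian_fd(func, x: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """Central-difference Jacobian: entry (i, j) approximates ∂y_i/∂x_j."""
    y = func(x)
    J = np.zeros((y.size, x.size))  # (size of y) x (size of x)
    for j in range(x.size):
        step = np.zeros_like(x)
        step[j] = eps
        J[:, j] = (func(x + step) - func(x - step)) / (2 * eps)
    return J

x = np.array([1.0, 2.0, 3.0])
J = jacobian_fd(f, x)
print(J.shape)  # (2, 3)
```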

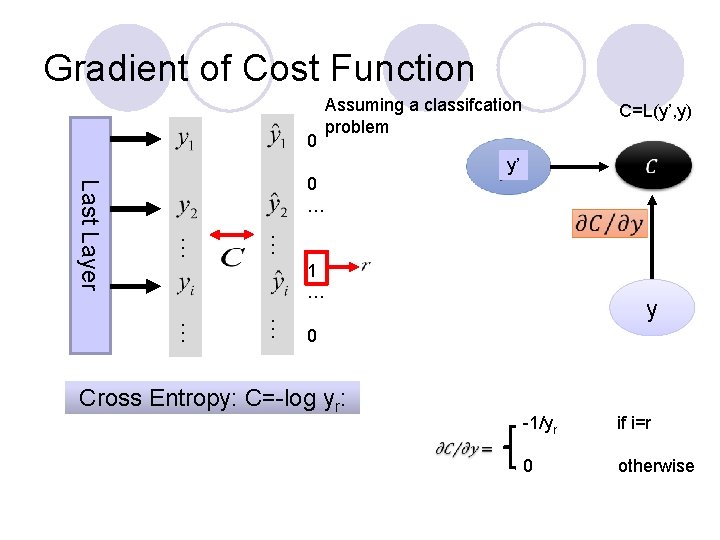

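Gradient of Cost Function
• Assume a classification problem. The target y′ is a one-hot vector with 1 at index r and 0 elsewhere, and y is the output of the last layer.
• Cross entropy: $C = -\log y_r$, so
$$\frac{\partial C}{\partial y_i} = \begin{cases} -1/y_r & \text{if } i = r \\ 0 & \text{otherwise} \end{cases}$$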
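The gradient ∂C/∂y for this cross entropy, as a small NumPy sketch. The function name `cross_entropy_grad` and the sample output vector are hypothetical.

```python
import numpy as np

def cross_entropy_grad(y: np.ndarray, r: int) -> np.ndarray:
    """∂C/∂y for C = -log y_r: -1/y_r at index r, 0 everywhere else."""
    g = np.zeros_like(y)
    g[r] = -1.0 / y[r]
    return g

y = np.array([0.2, 0.5, 0.3])  # hypothetical last-layer output
g = cross_entropy_grad(y, r=1)
print(g)  # -1/0.5 = -2 at index 1, zero elsewhere
```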

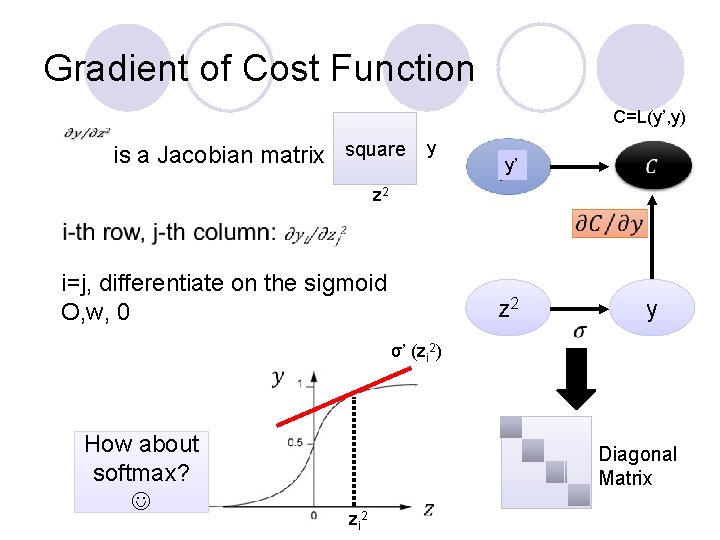

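Gradient of Cost Function
• $\partial y / \partial z^2$ is a square Jacobian matrix, since y and $z^2$ have the same size.
• With $y = \sigma(z^2)$, each $y_i$ depends only on $z^2_i$. For i = j, differentiating the sigmoid gives $\partial y_i / \partial z^2_j = \sigma'(z^2_i)$; otherwise $\partial y_i / \partial z^2_j = 0$. So $\partial y / \partial z^2$ is a diagonal matrix.
• How about softmax?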
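The sketch below contrasts the two cases. The sigmoid Jacobian is diagonal. For softmax, each output depends on every input, so the Jacobian is not diagonal: the standard result is $\partial y_i / \partial z_j = y_i(\delta_{ij} - y_j)$. The function names are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_jacobian(z):
    s = sigmoid(z)
    return np.diag(s * (1.0 - s))  # σ'(z_i) on the diagonal, 0 elsewhere

def softmax(z):
    e = np.exp(z - np.max(z))  # subtract the max for numerical stability
    return e / e.sum()

def softmax_jacobian(z):
    y = softmax(z)
    return np.diag(y) - np.outer(y, y)  # ∂y_i/∂z_j = y_i (δ_ij - y_j)

z = np.array([0.5, -1.0, 2.0])
print(sigmoid_jacobian(z))
print(softmax_jacobian(z))  # off-diagonal entries are non-zero
```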

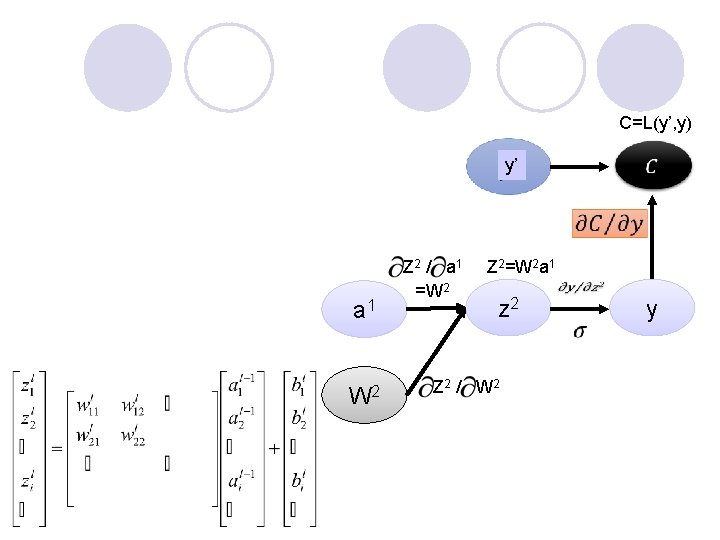

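With $z^2 = W^2 a^1$ (bias omitted), the edge derivatives into $z^2$ are
$$\frac{\partial z^2}{\partial a^1} = W^2, \qquad \frac{\partial z^2_i}{\partial W^2_{jk}} = \begin{cases} a^1_k & \text{if } i = j \\ 0 & \text{otherwise} \end{cases}$$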
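Because $W^2$ is a matrix, $\partial z^2 / \partial W^2$ is a three-index array. The sketch below builds it explicitly under hypothetical sizes. It then shows that contracting it with an upstream gradient g = ∂C/∂z gives the familiar outer product $g\,(a^1)^\top$.

```python
import numpy as np

def dz_dW(W: np.ndarray, a: np.ndarray) -> np.ndarray:
    """T[i, j, k] = ∂z_i/∂W_jk for z = W @ a (bias omitted)."""
    m, n = W.shape
    T = np.zeros((m, m, n))
    for i in range(m):
        T[i, i, :] = a  # ∂z_i/∂W_ik = a_k; zero whenever j != i
    return T

W2 = np.array([[1.0, -2.0], [0.5, 3.0], [2.0, 0.0]])  # hypothetical 3x2 weights
a1 = np.array([0.3, 0.8])
T = dz_dW(W2, a1)

# Contract with an upstream gradient g = ∂C/∂z: sum_i g_i T[i, j, k] = g_j a_k.
g = np.array([1.0, -1.0, 0.5])
dC_dW = np.einsum("i,ijk->jk", g, T)
print(np.allclose(dC_dW, np.outer(g, a1)))  # True
```

In practice the three-index array is never stored. Backpropagation forms the outer product directly.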

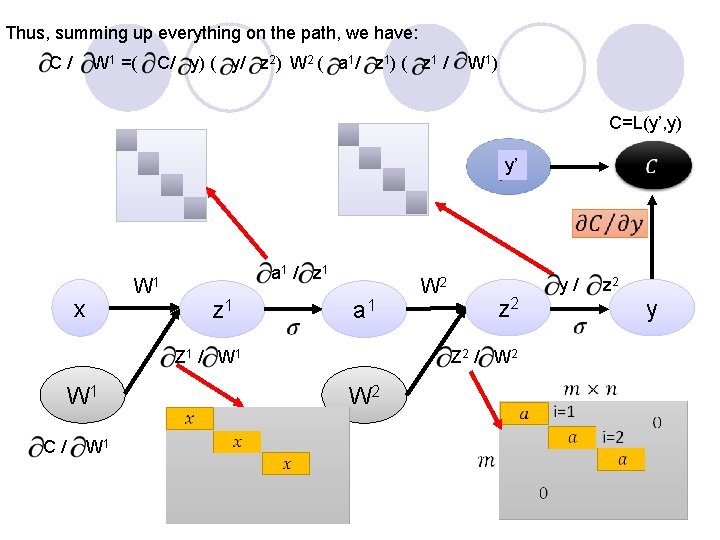

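Thus, multiplying the partial derivatives along the path from $W^1$ to C, we have:
$$\frac{\partial C}{\partial W^1} = \frac{\partial C}{\partial y}\,\frac{\partial y}{\partial z^2}\,W^2\,\frac{\partial a^1}{\partial z^1}\,\frac{\partial z^1}{\partial W^1}$$
where $W^2 = \partial z^2 / \partial a^1$.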
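A NumPy sketch of this product for a two-layer sigmoid network with the cross-entropy cost $C = -\log y_r$, checked against finite differences. Sizes, seeds and function names are hypothetical. Gradients are stored as 1-D arrays, which leads to three implementation choices. Multiplying the row vector $\partial C / \partial z^2$ by $W^2$ becomes `W2.T @ dC_dz2`. The diagonal sigmoid Jacobians become elementwise products. The last factor becomes an outer product with x.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cost(W1, b1, W2, b2, x, r):
    """C = -log y_r for y = σ(W2 σ(W1 x + b1) + b2)."""
    a1 = sigmoid(W1 @ x + b1)
    y = sigmoid(W2 @ a1 + b2)
    return -np.log(y[r])

def grad_W1(W1, b1, W2, b2, x, r):
    # Forward step: keep every intermediate node value.
    z1 = W1 @ x + b1
    a1 = sigmoid(z1)
    z2 = W2 @ a1 + b2
    y = sigmoid(z2)
    # Reverse step: multiply edge derivatives from C back toward W1.
    dC_dy = np.zeros_like(y)
    dC_dy[r] = -1.0 / y[r]             # ∂C/∂y (cross entropy)
    dC_dz2 = dC_dy * y * (1.0 - y)     # × ∂y/∂z2, diagonal σ'(z2)
    dC_da1 = W2.T @ dC_dz2             # × ∂z2/∂a1 = W2
    dC_dz1 = dC_da1 * a1 * (1.0 - a1)  # × ∂a1/∂z1, diagonal σ'(z1)
    return np.outer(dC_dz1, x)         # × ∂z1/∂W1: entry (j, k) = dC_dz1[j] * x[k]

rng = np.random.default_rng(1)
x = rng.standard_normal(3)
W1, b1 = rng.standard_normal((4, 3)), rng.standard_normal(4)  # hypothetical sizes
W2, b2 = rng.standard_normal((2, 4)), rng.standard_normal(2)
r = 0
G = grad_W1(W1, b1, W2, b2, x, r)

# Central-difference check on every entry of W1.
eps = 1e-6
G_num = np.zeros_like(W1)
for idx in np.ndindex(W1.shape):
    E = np.zeros_like(W1)
    E[idx] = eps
    G_num[idx] = (cost(W1 + E, b1, W2, b2, x, r) - cost(W1 - E, b1, W2, b2, x, r)) / (2 * eps)
print(np.max(np.abs(G - G_num)))  # should be tiny (finite-difference error only)
```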

Computational Graphs for Recurrent Networks

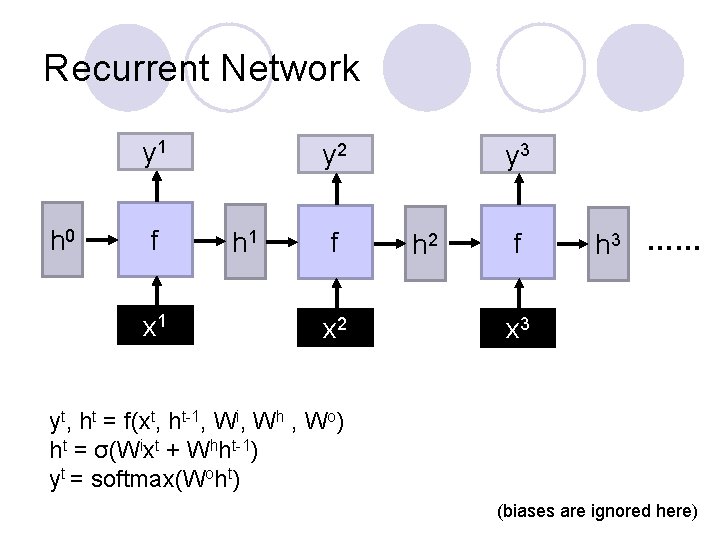

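Recurrent Network
The network is unrolled over time. The same function f maps $(x^1, h^0)$ to $(y^1, h^1)$, then $(x^2, h^1)$ to $(y^2, h^2)$, then $(x^3, h^2)$ to $(y^3, h^3)$, and so on:
$$y^t, h^t = f(x^t, h^{t-1}, W^i, W^h, W^o)$$
$$h^t = \sigma(W^i x^t + W^h h^{t-1})$$
$$y^t = \mathrm{softmax}(W^o h^t)$$
(Biases are ignored here.)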
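A minimal NumPy sketch of this unrolled forward pass, with biases ignored as on the slide. The sizes, the random weights and the name `rnn_forward` are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def softmax(o):
    e = np.exp(o - np.max(o))  # subtract the max for numerical stability
    return e / e.sum()

def rnn_forward(xs, h0, Wi, Wh, Wo):
    """Apply the same f at every step: h^t = σ(Wi x^t + Wh h^{t-1}), y^t = softmax(Wo h^t)."""
    hs, ys = [h0], []
    for x in xs:
        h = sigmoid(Wi @ x + Wh @ hs[-1])
        hs.append(h)
        ys.append(softmax(Wo @ h))
    return hs, ys

rng = np.random.default_rng(0)
Wi = rng.standard_normal((3, 2))  # hypothetical sizes: input 2, hidden 3, output 4
Wh = rng.standard_normal((3, 3))
Wo = rng.standard_normal((4, 3))
xs = [rng.standard_normal(2) for _ in range(3)]
hs, ys = rnn_forward(xs, np.zeros(3), Wi, Wh, Wo)
print(len(hs), len(ys))  # 4 3  (h^0..h^3 and y^1..y^3)
```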

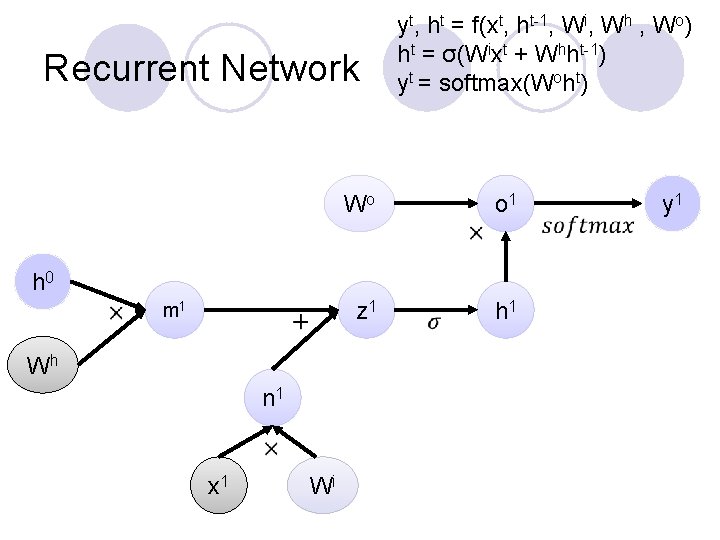

Recurrent Network
One time step expanded into a computational graph (biases ignored):
$$n^1 = W^i x^1, \quad m^1 = W^h h^0, \quad z^1 = m^1 + n^1, \quad h^1 = \sigma(z^1), \quad o^1 = W^o h^1, \quad y^1 = \mathrm{softmax}(o^1)$$

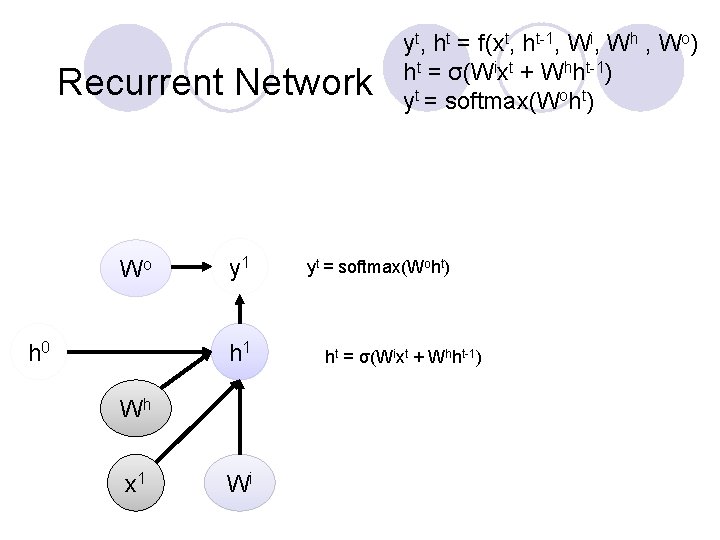

Recurrent Network
Compact view of the same step: $h^0$ (through $W^h$) and $x^1$ (through $W^i$) produce $h^1 = \sigma(W^i x^1 + W^h h^0)$, and $h^1$ (through $W^o$) produces $y^1 = \mathrm{softmax}(W^o h^1)$.

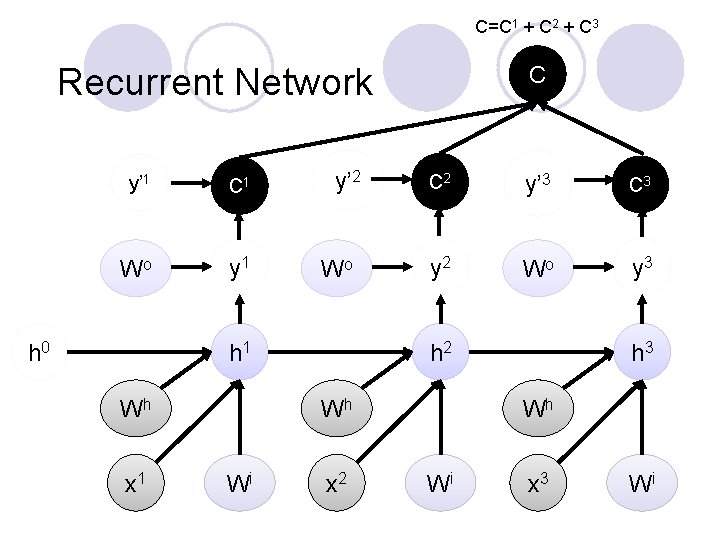

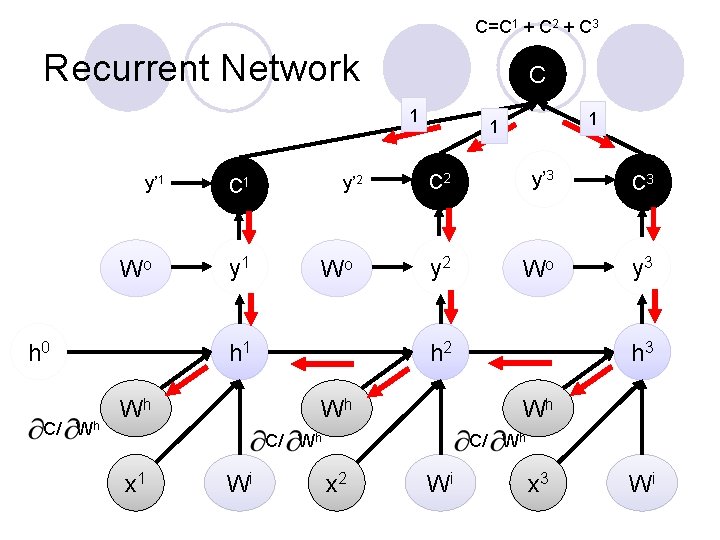

Recurrent Network
Unrolled over three time steps, each output $y^t$ is compared with its target $y'^t$ to give a cost $C^t$. The total cost is
$$C = C^1 + C^2 + C^3$$
The same $W^i$, $W^h$ and $W^o$ are used at every time step.

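Recurrent Network
Because $W^h$ is shared across time steps (parameter sharing), treat its copy at step t as a separate variable $W^h_t$. Differentiate with respect to each copy and add the results:
$$\frac{\partial C}{\partial W^h} = \frac{\partial C}{\partial W^h_1} + \frac{\partial C}{\partial W^h_2} + \frac{\partial C}{\partial W^h_3}$$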

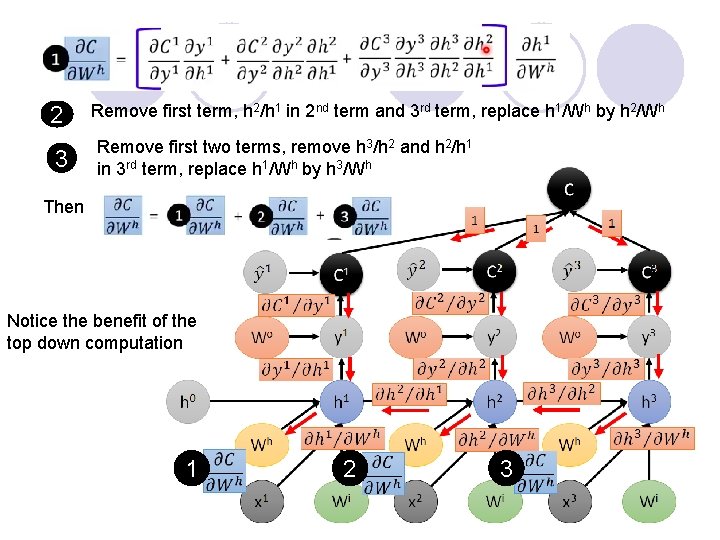

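With $W^h_t$ denoting the copy of $W^h$ used at time step t, the copy $W^h_1$ influences all three costs:
$$\frac{\partial C}{\partial W^h_1} = \frac{\partial C^1}{\partial y^1}\frac{\partial y^1}{\partial h^1}\frac{\partial h^1}{\partial W^h_1} + \frac{\partial C^2}{\partial y^2}\frac{\partial y^2}{\partial h^2}\frac{\partial h^2}{\partial h^1}\frac{\partial h^1}{\partial W^h_1} + \frac{\partial C^3}{\partial y^3}\frac{\partial y^3}{\partial h^3}\frac{\partial h^3}{\partial h^2}\frac{\partial h^2}{\partial h^1}\frac{\partial h^1}{\partial W^h_1}$$
• $\partial C / \partial W^h_2$: remove the first term, remove $\partial h^2 / \partial h^1$ in the 2nd and 3rd terms, and replace $\partial h^1 / \partial W^h_1$ by $\partial h^2 / \partial W^h_2$.
• $\partial C / \partial W^h_3$: remove the first two terms, remove $\partial h^3 / \partial h^2$ and $\partial h^2 / \partial h^1$ in the 3rd term, and replace $\partial h^1 / \partial W^h_1$ by $\partial h^3 / \partial W^h_3$.
• Then add the three results: $\partial C / \partial W^h = \partial C / \partial W^h_1 + \partial C / \partial W^h_2 + \partial C / \partial W^h_3$.
Notice the benefit of the top-down computation. Products such as $(\partial C^3 / \partial y^3)(\partial y^3 / \partial h^3)$ appear in all three derivatives. Working from the last time step downward, each shared product is computed once and then reused.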
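A NumPy sketch of this top-down computation (back-propagation through time) for $\partial C / \partial W^h$. Two assumptions are not from the slides. First, each per-step cost is the cross entropy $C^t = -\log y^t_{r_t}$, for which $\partial C^t / \partial o^t = y^t - \text{one-hot}(r_t)$. Second, the sizes, seeds and function names are hypothetical. The variable `dh_from_later` carries the shared products from later time steps downward. Each loop iteration adds the contribution of one copy $W^h_t$. A finite-difference check follows.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def softmax(o):
    e = np.exp(o - np.max(o))
    return e / e.sum()

def rnn_forward(xs, h0, Wi, Wh, Wo):
    hs, ys = [h0], []
    for x in xs:
        h = sigmoid(Wi @ x + Wh @ hs[-1])
        hs.append(h)
        ys.append(softmax(Wo @ h))
    return hs, ys

def total_cost(xs, targets, h0, Wi, Wh, Wo):
    """C = C^1 + ... + C^T, assuming C^t = -log y^t[r_t]."""
    _, ys = rnn_forward(xs, h0, Wi, Wh, Wo)
    return -sum(np.log(y[r]) for y, r in zip(ys, targets))

def grad_Wh(xs, targets, h0, Wi, Wh, Wo):
    """∂C/∂Wh computed top-down, from the last time step to the first."""
    hs, ys = rnn_forward(xs, h0, Wi, Wh, Wo)
    dWh = np.zeros_like(Wh)
    dh_from_later = np.zeros_like(h0)  # derivative of later costs w.r.t. h^t, reused
    for t in range(len(xs), 0, -1):
        do = ys[t - 1].copy()
        do[targets[t - 1]] -= 1.0              # ∂C^t/∂o^t (softmax + cross entropy)
        dh = Wo.T @ do + dh_from_later         # all paths into h^t
        dz = dh * hs[t] * (1.0 - hs[t])        # through the sigmoid
        dWh += np.outer(dz, hs[t - 1])         # contribution of the copy W^h_t
        dh_from_later = Wh.T @ dz              # hand on to h^{t-1}
    return dWh

rng = np.random.default_rng(0)
Wi, Wh, Wo = rng.standard_normal((3, 2)), rng.standard_normal((3, 3)), rng.standard_normal((4, 3))
xs = [rng.standard_normal(2) for _ in range(3)]
targets = [0, 2, 1]   # hypothetical class index r_t for each step
h0 = np.full(3, 0.5)  # non-zero so that the copy W^h_1 also contributes
G = grad_Wh(xs, targets, h0, Wi, Wh, Wo)

eps = 1e-6
G_num = np.zeros_like(Wh)
for idx in np.ndindex(Wh.shape):
    E = np.zeros_like(Wh)
    E[idx] = eps
    G_num[idx] = (total_cost(xs, targets, h0, Wi, Wh + E, Wo)
                  - total_cost(xs, targets, h0, Wi, Wh - E, Wo)) / (2 * eps)
print(np.max(np.abs(G - G_num)))  # the two gradients should agree closely
```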
