Lecture 7: An Introduction to Pipelining

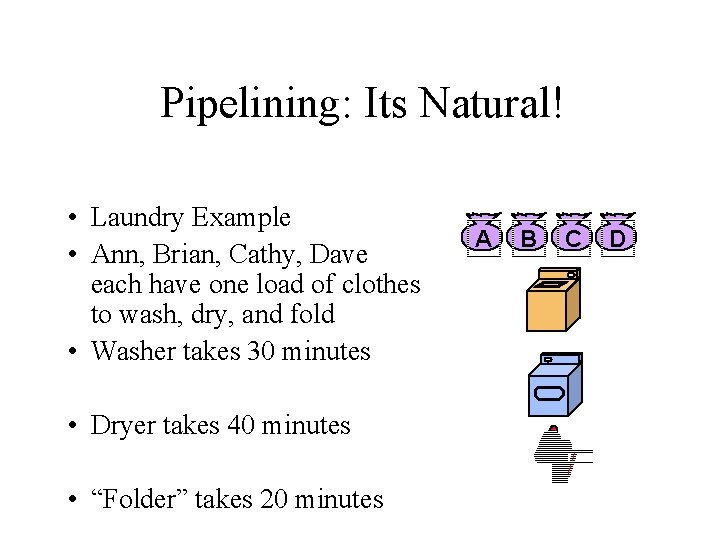

Pipelining: It's Natural!

- Laundry example
- Ann, Brian, Cathy, Dave each have one load of clothes to wash, dry, and fold
- Washer takes 30 minutes
- Dryer takes 40 minutes
- "Folder" takes 20 minutes

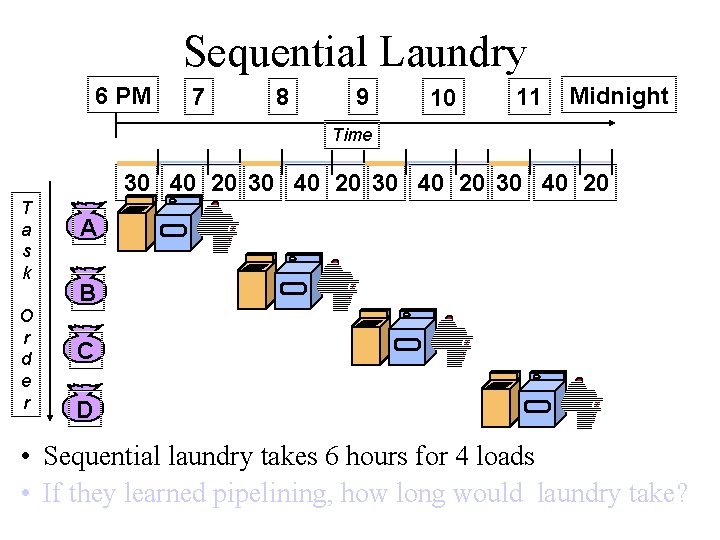

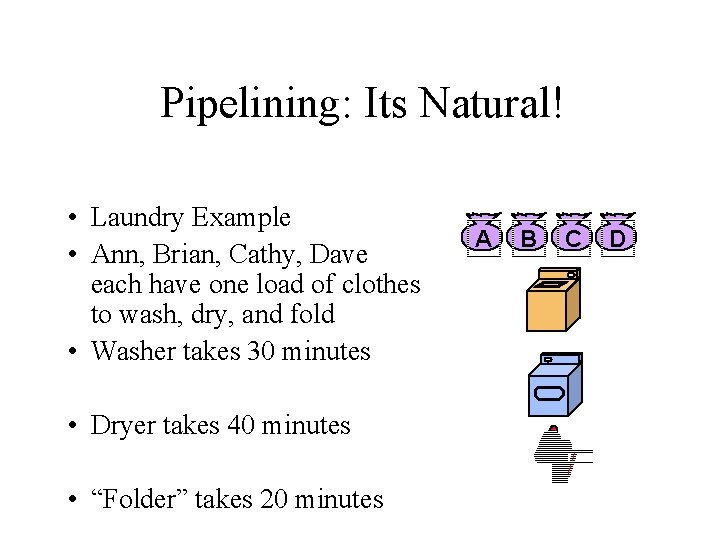

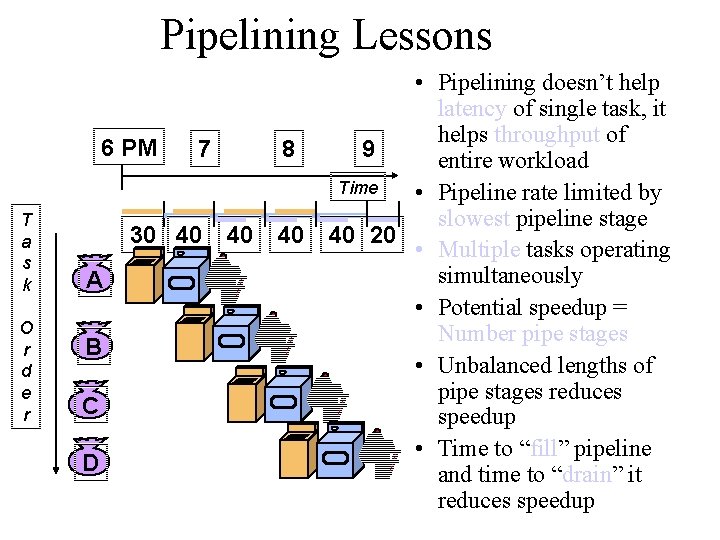

Sequential Laundry

[Figure: timeline from 6 PM to midnight; loads A, B, C, D in task order, each taking 30 + 40 + 20 minutes, one after another]

- Sequential laundry takes 6 hours for 4 loads
- If they learned pipelining, how long would laundry take?

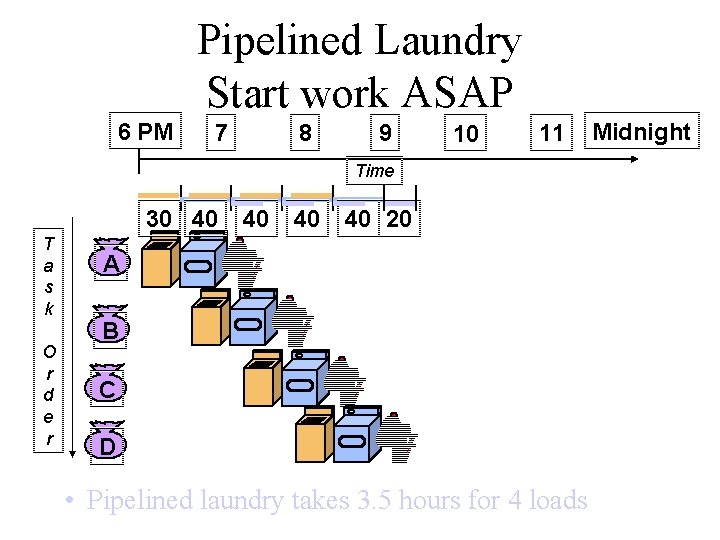

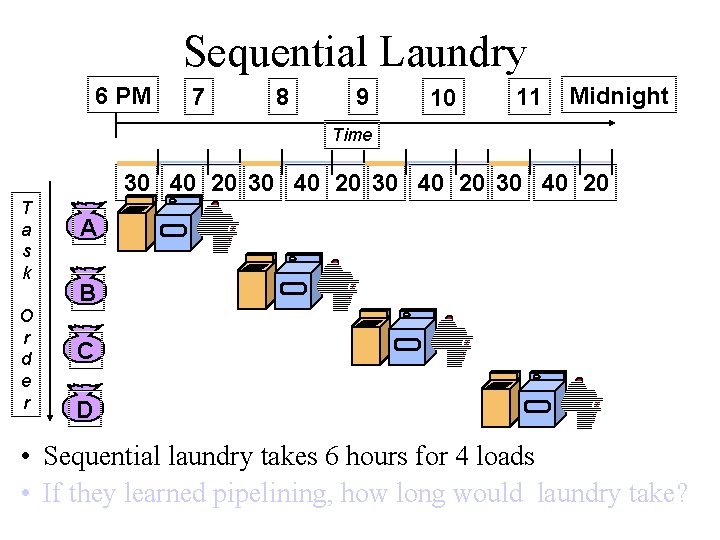

Pipelined Laundry: Start work ASAP

[Figure: timeline from 6 PM to midnight; loads A, B, C, D in task order, overlapped as 30, 40, 40, 40, 40, 20 minutes]

- Pipelined laundry takes 3.5 hours for 4 loads
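The two totals can be checked with a small simulation. This is a minimal sketch, assuming each machine handles one load at a time and a finished load simply waits until the next machine is free; the function names are illustrative and not from the lecture.

```python
STAGE_MINUTES = [30, 40, 20]  # washer, dryer, "folder" (values from the slide)

def sequential_time(stage_minutes, loads):
    """Each load finishes every stage before the next load starts."""
    return loads * sum(stage_minutes)

def pipelined_time(stage_minutes, loads):
    """Each load enters a stage as soon as it is ready and the stage is free."""
    stage_free_at = [0] * len(stage_minutes)
    finish = 0
    for _ in range(loads):
        ready = 0  # all loads are available at time 0
        for s, minutes in enumerate(stage_minutes):
            start = max(ready, stage_free_at[s])
            ready = start + minutes
            stage_free_at[s] = ready
        finish = ready
    return finish

print(sequential_time(STAGE_MINUTES, 4) / 60)  # hours for 4 loads, one at a time
print(pipelined_time(STAGE_MINUTES, 4) / 60)   # hours for 4 loads, overlapped
```

With the slide's numbers this gives 360 minutes sequentially and 210 minutes pipelined, matching the 6-hour and 3.5-hour figures.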

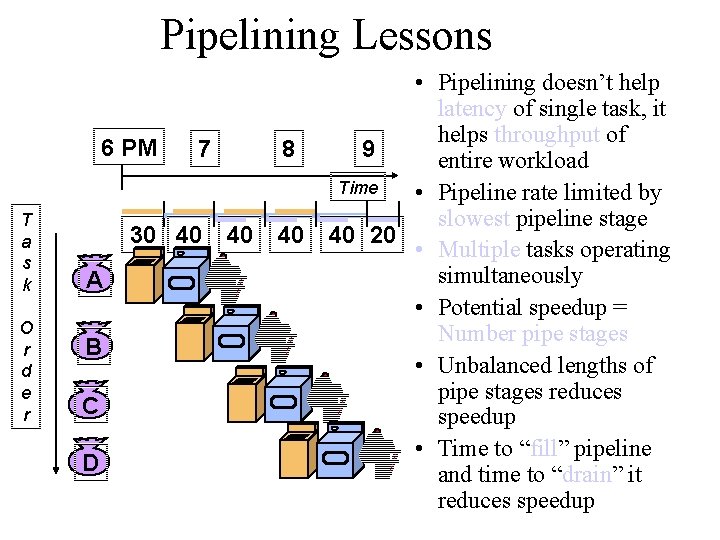

Pipelining Lessons

[Figure: pipelined laundry timeline, 6 PM to 9 PM; loads A, B, C, D in task order with stage times 30, 40, 40, 20]

- Pipelining doesn't help latency of single task, it helps throughput of entire workload
- Pipeline rate limited by slowest pipeline stage
- Multiple tasks operating simultaneously
- Potential speedup = Number pipe stages
- Unbalanced lengths of pipe stages reduces speedup
- Time to "fill" pipeline and time to "drain" it reduces speedup
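These lessons can be made concrete with the laundry numbers. The sketch below assumes identical loads and unlimited waiting room between machines, in which case the pipelined total is the time for one load plus one slowest-stage interval per additional load. The helper names are hypothetical.

```python
def pipelined_minutes(stage_minutes, loads):
    # First load takes the full latency; each later load finishes
    # one slowest-stage interval after the previous one.
    return sum(stage_minutes) + (loads - 1) * max(stage_minutes)

def speedup(stage_minutes, loads):
    sequential = loads * sum(stage_minutes)
    return sequential / pipelined_minutes(stage_minutes, loads)

laundry = [30, 40, 20]    # unbalanced stages from the slide
balanced = [30, 30, 30]   # hypothetical balanced stages, same total time

for loads in (1, 4, 100, 10_000):
    print(loads, round(speedup(laundry, loads), 3), round(speedup(balanced, loads), 3))
```

A single load sees no speedup (latency is unchanged). With four loads the speedup is 360/210, about 1.71, because fill and drain time still matter. As the number of loads grows, the unbalanced pipeline approaches 90/40 = 2.25, while the balanced one approaches the number of stages, 3.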

Definitions

- Pipe stage or pipe segment
- Pipeline depth
- Machine cycle
- Latency
- Throughput

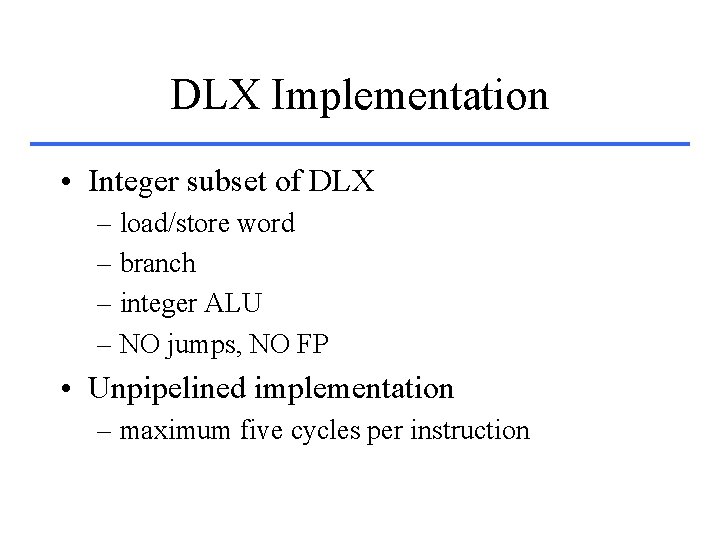

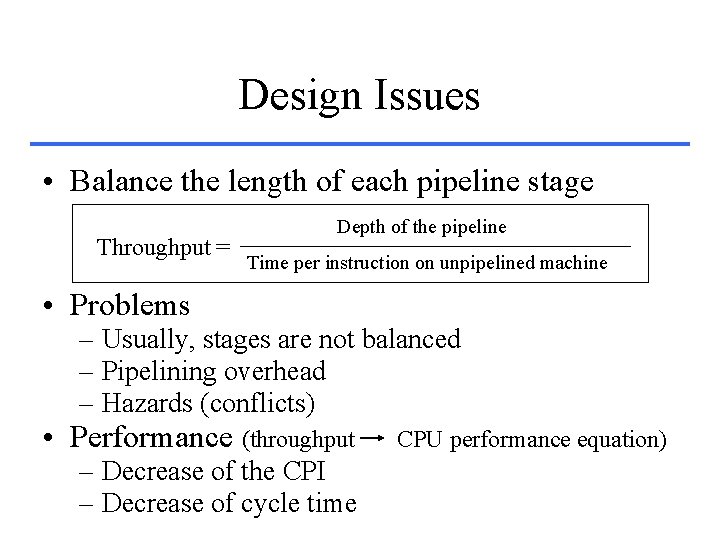

Design Issues

- Balance the length of each pipeline stage

$$\text{Throughput} = \frac{\text{Depth of the pipeline}}{\text{Time per instruction on unpipelined machine}}$$

- Problems
  - Usually, stages are not balanced
  - Pipelining overhead
  - Hazards (conflicts)
- Performance (throughput), in terms of the CPU performance equation
  - Decrease of the CPI
  - Decrease of cycle time
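The CPU performance equation is CPU time = instruction count × CPI × clock cycle time. The sketch below shows how the same ideal pipeline can be read as either a CPI decrease or a cycle-time decrease, depending on the unpipelined baseline. All numbers are hypothetical, and overhead and hazards are ignored.

```python
def cpu_time_ns(instruction_count, cpi, cycle_time_ns):
    """CPU performance equation: instructions x cycles/instruction x time/cycle."""
    return instruction_count * cpi * cycle_time_ns

N = 1_000_000   # hypothetical instruction count
DEPTH = 5       # pipeline depth

# View 1: baseline is a multi-cycle machine (5 short cycles per instruction).
# Pipelining keeps the 10 ns cycle and lowers the CPI from 5 to 1.
multi_cycle = cpu_time_ns(N, cpi=DEPTH, cycle_time_ns=10)

# View 2: baseline is a single-cycle machine (1 long cycle per instruction).
# Pipelining keeps CPI at 1 and shortens the cycle from 50 ns to 10 ns.
single_cycle = cpu_time_ns(N, cpi=1, cycle_time_ns=10 * DEPTH)

pipelined = cpu_time_ns(N, cpi=1, cycle_time_ns=10)

print(multi_cycle / pipelined, single_cycle / pipelined)
```

Under these idealized assumptions both ratios equal the pipeline depth.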

DLX Implementation

- Integer subset of DLX
  - load/store word
  - branch
  - integer ALU
  - NO jumps, NO FP
- Unpipelined implementation
  - maximum five cycles per instruction

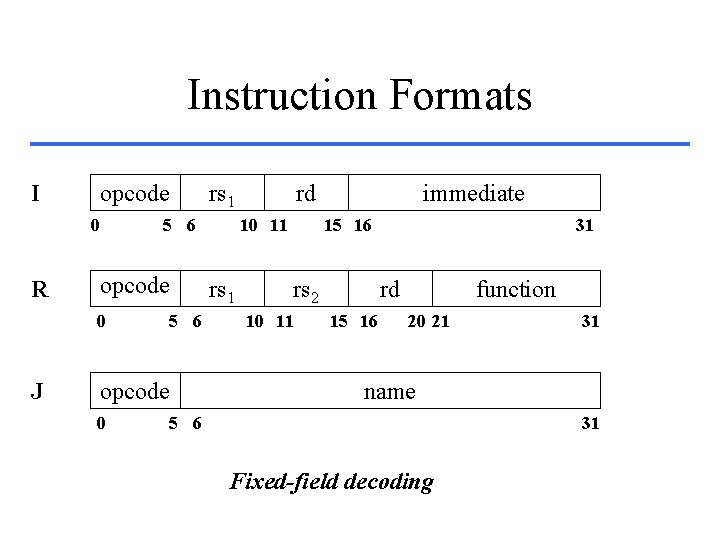

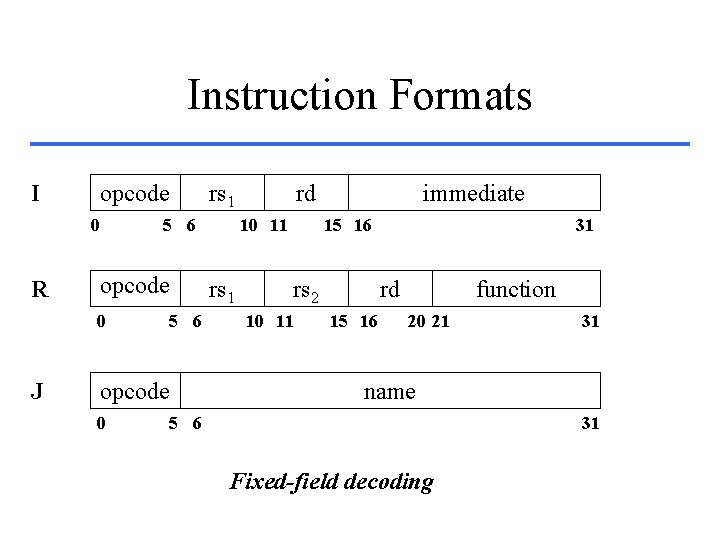

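Instruction Formats

- I-type: opcode (bits 0-5), rs1 (bits 6-10), rd (bits 11-15), immediate (bits 16-31)
- R-type: opcode (bits 0-5), rs1 (bits 6-10), rs2 (bits 11-15), rd (bits 16-20), function (bits 21-31)
- J-type: opcode (bits 0-5), name (bits 6-31)
- Fixed-field decoding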
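Fixed-field decoding means every field sits at the same bit positions regardless of the instruction, so extraction is a shift and a mask. The sketch below assumes bit 0 is the most significant bit of the 32-bit word (consistent with bit 16 serving as the sign bit of the immediate). The field values packed into `word` are made up for the demonstration, not real DLX opcodes.

```python
def field(word, first_bit, last_bit):
    """Extract bits first_bit..last_bit of a 32-bit word (bit 0 = most significant)."""
    width = last_bit - first_bit + 1
    return (word >> (31 - last_bit)) & ((1 << width) - 1)

def decode_i_type(word):
    return {"opcode": field(word, 0, 5), "rs1": field(word, 6, 10),
            "rd": field(word, 11, 15), "immediate": field(word, 16, 31)}

def decode_r_type(word):
    return {"opcode": field(word, 0, 5), "rs1": field(word, 6, 10),
            "rs2": field(word, 11, 15), "rd": field(word, 16, 20),
            "function": field(word, 21, 31)}

def decode_j_type(word):
    return {"opcode": field(word, 0, 5), "name": field(word, 6, 31)}

# Hypothetical I-type word: opcode 0x23, rs1 = 1, rd = 2, immediate = 100.
word = (0x23 << 26) | (1 << 21) | (2 << 16) | 100
print(decode_i_type(word))
```

Because rs1 occupies bits 6-10 in both the I and R formats, the register file can be read before the opcode has been fully decoded.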

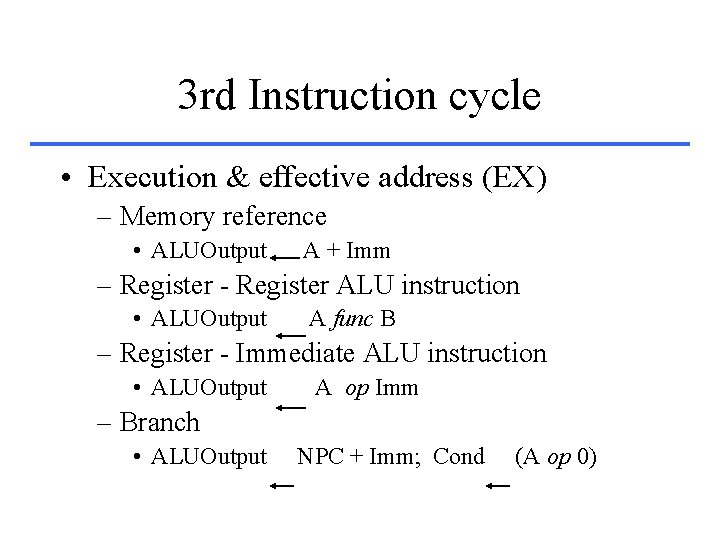

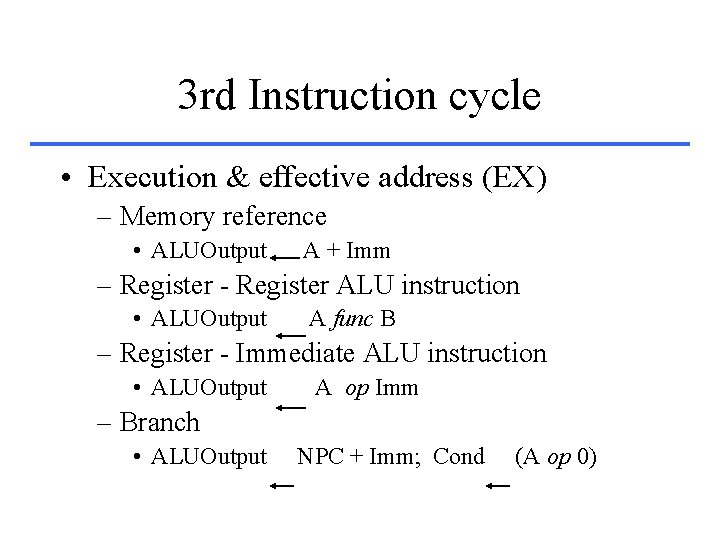

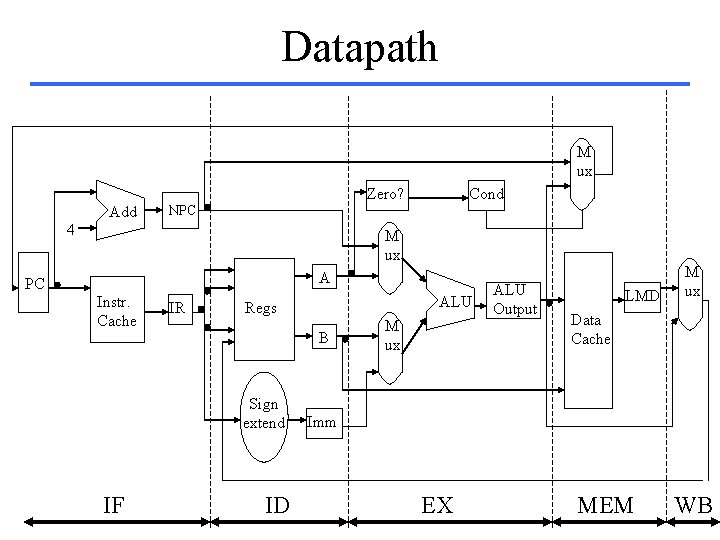

1st and 2nd Instruction cycles

- Instruction fetch (IF)
  - IR ← Mem[PC]
  - NPC ← PC + 4
- Instruction decode & register fetch (ID)
  - A ← Regs[IR6..10]
  - B ← Regs[IR11..15]
  - Imm ← ((IR16)^16 ## IR16..31), that is, bit 16 of IR replicated 16 times and concatenated with bits 16..31 (sign extension)
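The Imm computation replicates the top bit of the 16-bit immediate field into the upper half of a 32-bit value. A minimal sketch, assuming bit 0 is the most significant bit of IR so that IR16..31 is the low half of the word; the function names are illustrative.

```python
def sign_extend_imm(ir):
    """Build Imm = (IR16)^16 ## IR16..31 from a 32-bit instruction word."""
    low16 = ir & 0xFFFF                # IR16..31
    sign_bit = (low16 >> 15) & 1       # IR16
    upper = 0xFFFF if sign_bit else 0  # IR16 replicated 16 times
    return (upper << 16) | low16       # concatenation (##)

def as_signed_32(value):
    """Interpret a 32-bit pattern as a two's-complement integer."""
    return value - (1 << 32) if value & 0x8000_0000 else value

print(hex(sign_extend_imm(0x1234_FFFC)), as_signed_32(sign_extend_imm(0x1234_FFFC)))
print(hex(sign_extend_imm(0x1234_0004)), as_signed_32(sign_extend_imm(0x1234_0004)))
```

The upper 16 bits of the instruction (opcode and register fields) do not affect the result; only the immediate field does.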

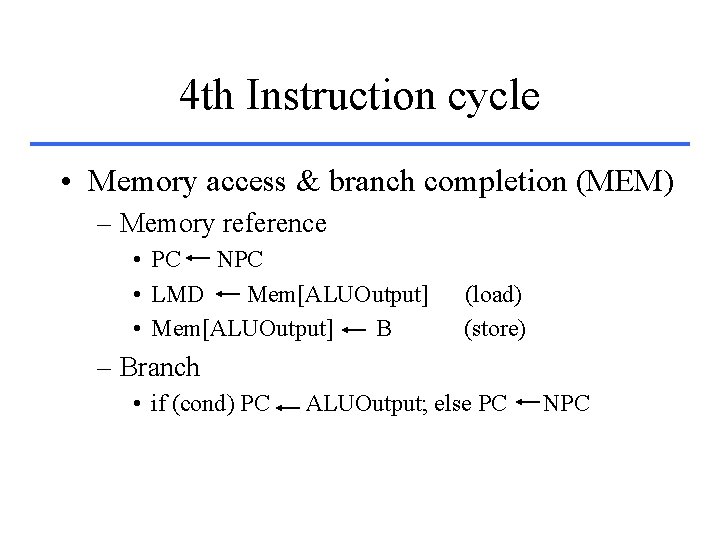

3rd Instruction cycle

- Execution & effective address (EX)
  - Memory reference
    - ALUOutput ← A + Imm
  - Register-register ALU instruction
    - ALUOutput ← A func B
  - Register-immediate ALU instruction
    - ALUOutput ← A op Imm
  - Branch
    - ALUOutput ← NPC + Imm; Cond ← (A op 0)

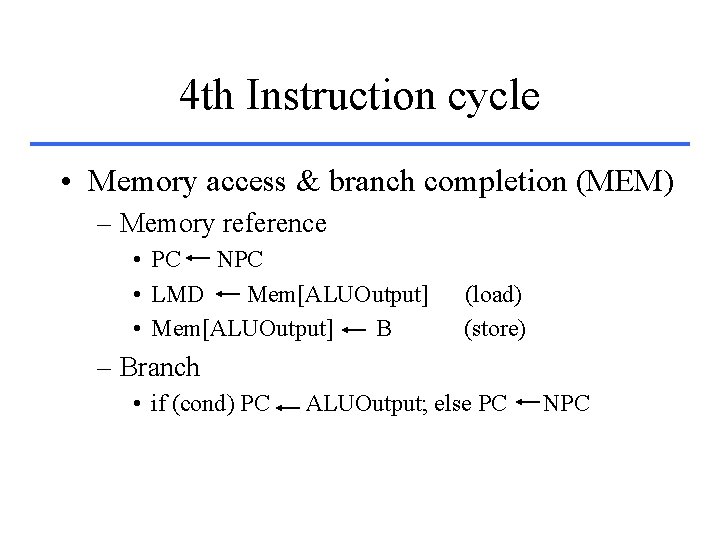

4th Instruction cycle

- Memory access & branch completion (MEM)
  - Memory reference
    - PC ← NPC
    - LMD ← Mem[ALUOutput] (load)
    - Mem[ALUOutput] ← B (store)
  - Branch
    - if (cond) PC ← ALUOutput; else PC ← NPC

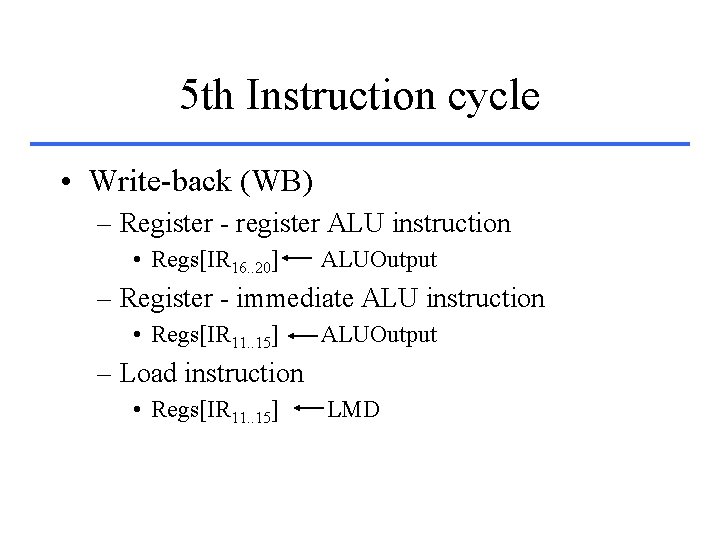

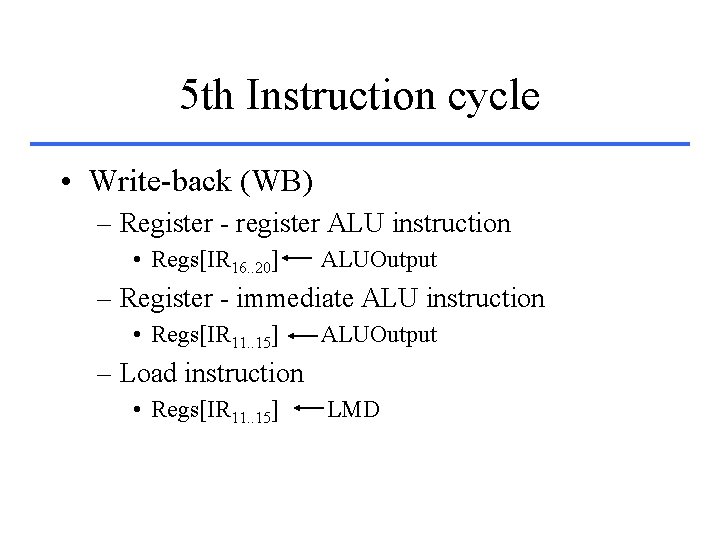

5th Instruction cycle

- Write-back (WB)
  - Register-register ALU instruction
    - Regs[IR16..20] ← ALUOutput
  - Register-immediate ALU instruction
    - Regs[IR11..15] ← ALUOutput
  - Load instruction
    - Regs[IR11..15] ← LMD
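The five steps can be traced in software. This is a minimal sketch, not the lecture's implementation, and it makes several simplifying assumptions: instructions are pre-decoded records rather than 32-bit words, the ALU function is fixed to addition, the only branch is "branch if A equals zero", and missing memory locations read as 0. The `Instr` type and the instruction kind names are hypothetical.

```python
from typing import NamedTuple

class Instr(NamedTuple):
    kind: str     # "load", "store", "alu_rr", "alu_ri", or "beqz" (hypothetical names)
    rs1: int = 0  # register read into A
    rs2: int = 0  # register read into B (the value written by a store)
    rd: int = 0   # destination register
    imm: int = 0  # already sign-extended immediate

def step(pc, regs, imem, dmem):
    """Execute one instruction through IF, ID, EX, MEM, WB; return the next PC."""
    # IF: IR <- Mem[PC]; NPC <- PC + 4
    ir, npc = imem[pc], pc + 4
    # ID: A <- Regs[rs1]; B <- Regs[rs2]; Imm <- immediate
    a, b, imm = regs[ir.rs1], regs[ir.rs2], ir.imm
    # EX
    cond = False
    if ir.kind in ("load", "store", "alu_ri"):
        alu_output = a + imm
    elif ir.kind == "alu_rr":
        alu_output = a + b
    elif ir.kind == "beqz":
        alu_output, cond = npc + imm, (a == 0)
    else:
        raise ValueError(f"unknown instruction kind: {ir.kind}")
    # MEM: memory access and branch completion
    lmd, next_pc = None, npc
    if ir.kind == "load":
        lmd = dmem.get(alu_output, 0)
    elif ir.kind == "store":
        dmem[alu_output] = b
    elif ir.kind == "beqz" and cond:
        next_pc = alu_output
    # WB
    if ir.kind in ("alu_rr", "alu_ri"):
        regs[ir.rd] = alu_output
    elif ir.kind == "load":
        regs[ir.rd] = lmd
    regs[0] = 0  # R0 always reads as zero in DLX
    return next_pc

regs, dmem = [0] * 32, {100: 7}
imem = {
    0: Instr("load", rs1=0, rd=1, imm=100),    # R1 <- Mem[100]
    4: Instr("alu_ri", rs1=1, rd=2, imm=5),    # R2 <- R1 + 5
    8: Instr("alu_rr", rs1=1, rs2=2, rd=3),    # R3 <- R1 + R2
    12: Instr("store", rs1=0, rs2=3, imm=104), # Mem[104] <- R3
    16: Instr("beqz", rs1=0, imm=8),           # taken: PC <- 20 + 8
}
pc = 0
for _ in range(5):
    pc = step(pc, regs, imem, dmem)
print(regs[1:4], dmem[104], pc)
```

Each instruction passes through all five steps in order before the next one starts, which is exactly the serialization that pipelining removes.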

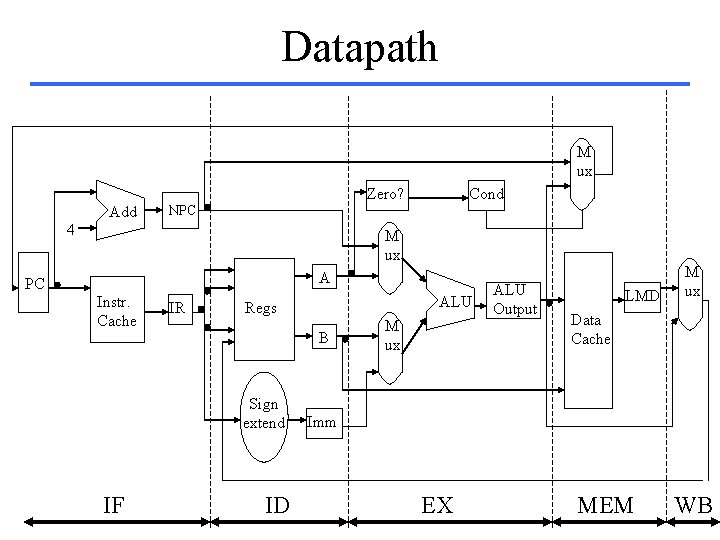

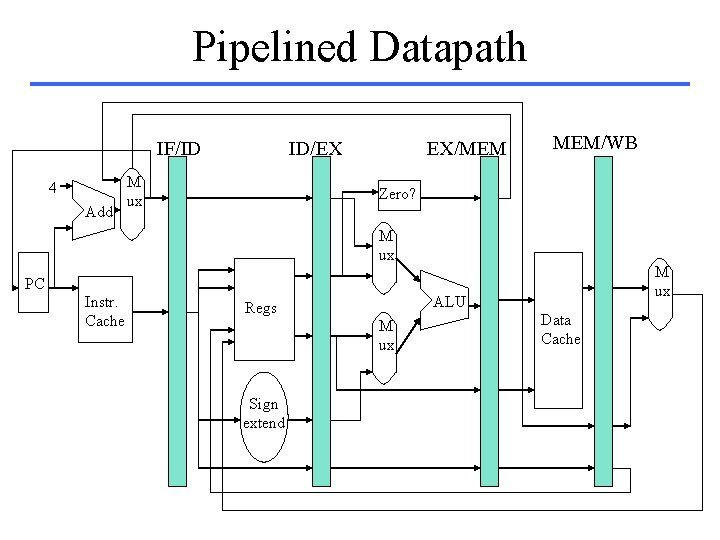

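Datapath

[Figure: unpipelined DLX datapath divided into the IF, ID, EX, MEM, and WB steps. Labeled components: PC, adder (+4), NPC, instruction cache, IR, register file (outputs A and B), sign extend (Imm), multiplexers, Zero? test (Cond), ALU (ALUOutput), data cache (LMD)]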

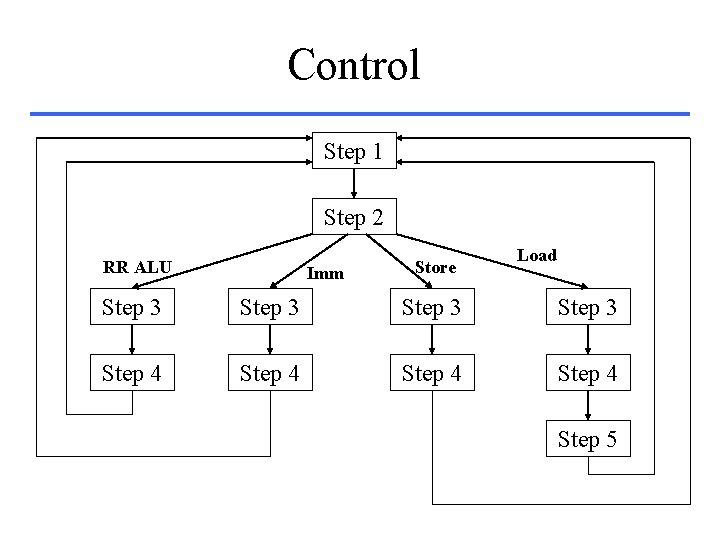

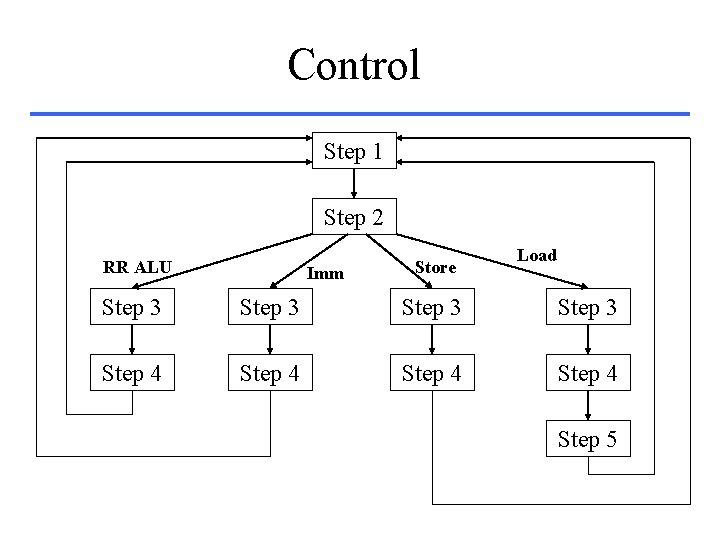

Control

[Figure: control sequence from Step 1 to Step 5, with separate paths labeled RR ALU, Imm, Store, and Load]

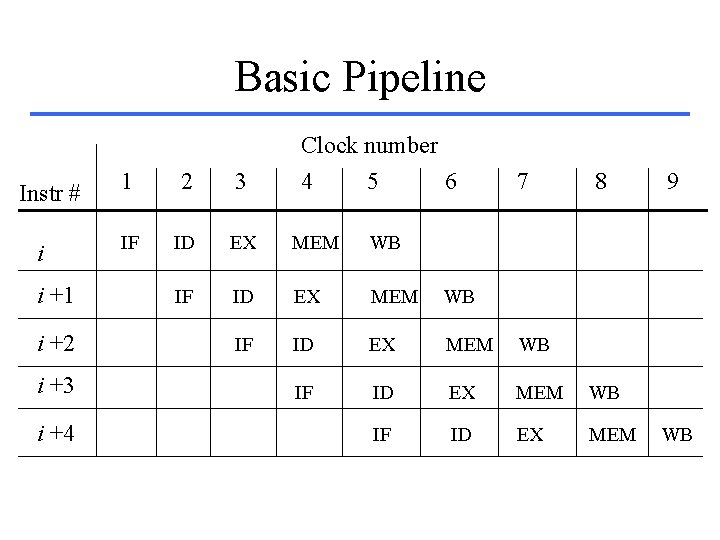

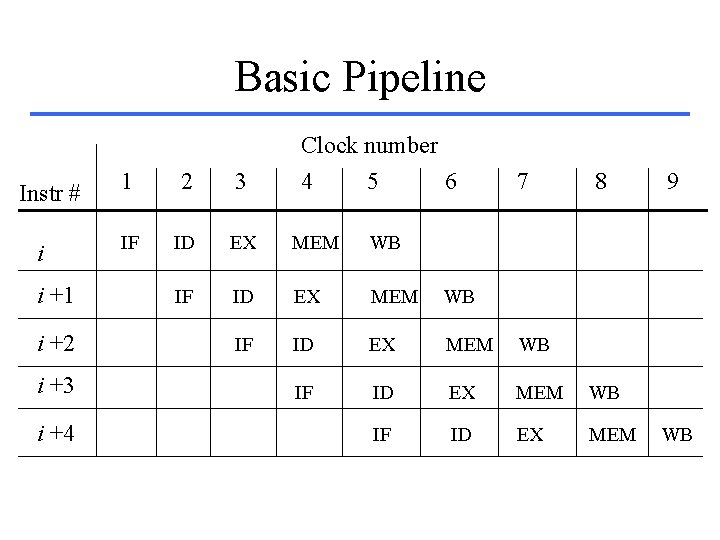

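Basic Pipeline

| Instr # / Clock number | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|
| i | IF | ID | EX | MEM | WB | | | | |
| i+1 | | IF | ID | EX | MEM | WB | | | |
| i+2 | | | IF | ID | EX | MEM | WB | | |
| i+3 | | | | IF | ID | EX | MEM | WB | |
| i+4 | | | | | IF | ID | EX | MEM | WB |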
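The staggered pattern follows a simple rule: instruction i+k occupies stage s (counting from 0) in clock k + s + 1. A short sketch that generates the chart, assuming an ideal pipeline with no stalls; the function names are illustrative.

```python
STAGES = ["IF", "ID", "EX", "MEM", "WB"]

def pipeline_table(num_instructions):
    """Map instruction offset k -> {clock number: stage} for an ideal, stall-free pipeline."""
    return {
        k: {k + s + 1: stage for s, stage in enumerate(STAGES)}
        for k in range(num_instructions)
    }

def total_clocks(num_instructions):
    """Clocks needed to finish all instructions: fill the pipe, then one per instruction."""
    return len(STAGES) + num_instructions - 1

table = pipeline_table(5)
for k, row in table.items():
    label = "i" if k == 0 else f"i+{k}"
    cells = [row.get(clock, "").ljust(4) for clock in range(1, total_clocks(5) + 1)]
    print(label.ljust(5) + "".join(cells))
```

Five instructions finish in 9 clocks rather than the 25 that five back-to-back five-cycle executions would take.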

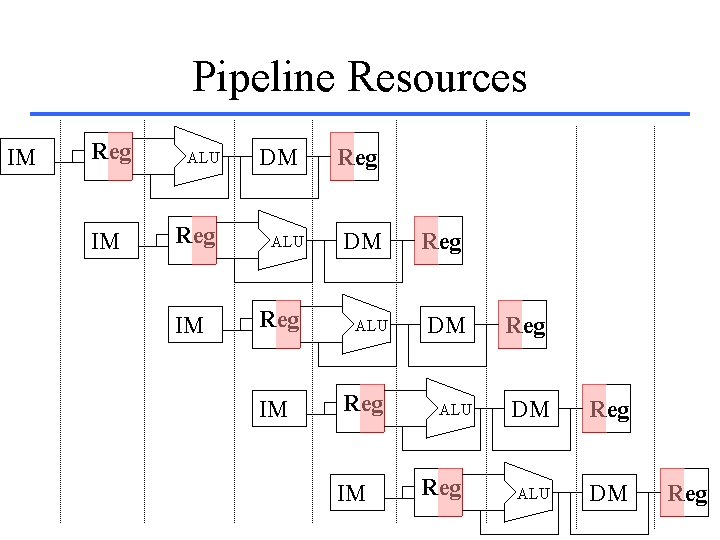

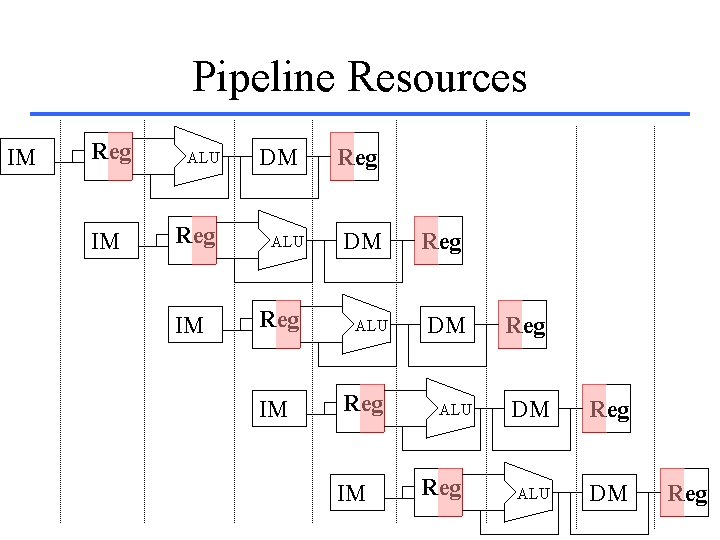

Pipeline Resources

[Figure: each instruction uses IM, Reg, ALU, DM, Reg in successive clock cycles; successive instructions are offset by one cycle]

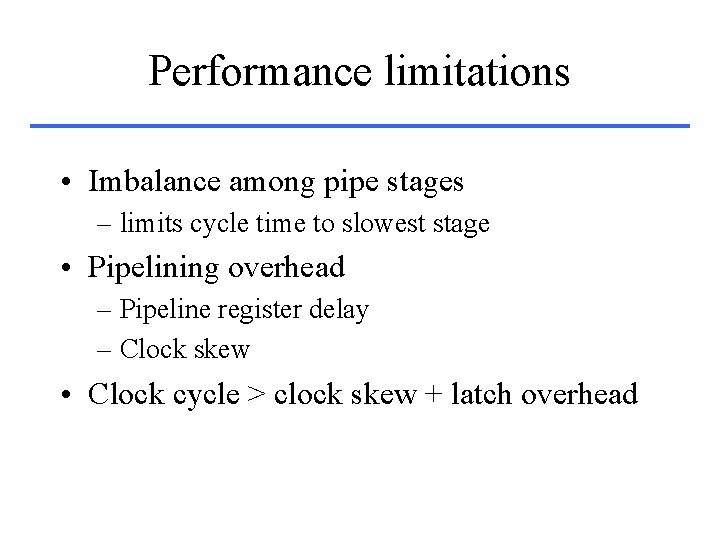

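Pipelined Datapath

[Figure: pipelined DLX datapath with pipeline registers IF/ID, ID/EX, EX/MEM, and MEM/WB between stages. Labeled components: PC, adder (+4), instruction cache, register file, sign extend, multiplexers, Zero? test, ALU, data cache]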

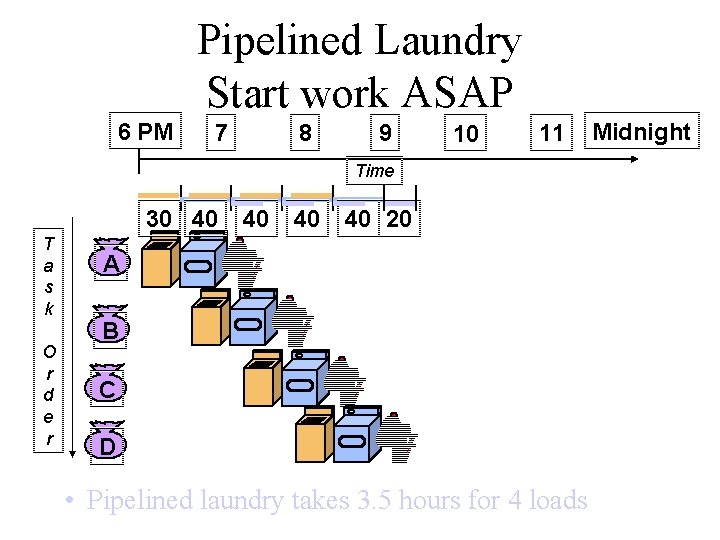

Performance limitations

- Imbalance among pipe stages
  - limits cycle time to slowest stage
- Pipelining overhead
  - Pipeline register delay
  - Clock skew
- Clock cycle > clock skew + latch overhead
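Both limits can be combined in a short calculation. The sketch below assumes the pipelined clock cycle equals the slowest stage plus a fixed overhead (register delay and skew), that the unpipelined machine takes the sum of the stage delays per instruction, and that there are no hazards. The stage delays and overhead values are hypothetical.

```python
def pipelined_cycle_ns(stage_ns, overhead_ns):
    """Clock cycle is set by the slowest stage plus latch and skew overhead."""
    return max(stage_ns) + overhead_ns

def steady_state_speedup(stage_ns, overhead_ns):
    """Unpipelined time per instruction divided by the pipelined clock cycle."""
    return sum(stage_ns) / pipelined_cycle_ns(stage_ns, overhead_ns)

unbalanced = [10, 8, 10, 10, 7]  # hypothetical stage delays in ns
balanced = [9, 9, 9, 9, 9]       # same total work, evenly split

print(steady_state_speedup(balanced, 0))    # ideal: equals the pipeline depth
print(steady_state_speedup(unbalanced, 0))  # imbalance alone
print(steady_state_speedup(unbalanced, 1))  # imbalance plus 1 ns overhead
```

With these numbers the speedup drops from 5 to 4.5 because of imbalance, and to 45/11 (about 4.09) once 1 ns of overhead is added. Since the overhead is paid every cycle, it also sets a floor on how short the clock cycle can become, however finely the stages are divided.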