Lecture 7-2: Distributed Algorithms for Sorting

Courtesy: Michael J. Quinn, *Parallel Programming in C with MPI and OpenMP* (Chapter 14)

Definitions of "Sorted"

- Definition 1
  - The sorted list is held in the memory of a single machine
- Definition 2 (distributed-memory algorithm)
  - The portion of the list in every processor's memory is sorted
  - The value of the last element on $P_i$'s list is less than or equal to the value of the first element on $P_{i+1}$'s list
- We adopt Definition 2
  - It allows the problem size to scale with the number of processors
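Definition 2 can be checked mechanically. The Python sketch below is only an illustration: `is_distributed_sorted` is a hypothetical name, `segments[i]` stands for the list held by process $P_i$, and empty segments are simply skipped (an assumption, since the definition does not cover that case).

```python
from typing import Optional, Sequence


def is_distributed_sorted(segments: Sequence[Sequence[int]]) -> bool:
    """Return True if the segments satisfy Definition 2.

    segments[i] is the list held by process P_i. Each segment must be sorted,
    and the last element of P_i must be <= the first element of the next
    process that holds any data (empty segments are skipped, an assumption).
    """
    prev_last: Optional[int] = None
    for seg in segments:
        # Local condition: the segment itself is sorted.
        if any(seg[k] > seg[k + 1] for k in range(len(seg) - 1)):
            return False
        if seg:
            # Boundary condition between neighboring non-empty processes.
            if prev_last is not None and prev_last > seg[0]:
                return False
            prev_last = seg[-1]
    return True


print(is_distributed_sorted([[1, 3], [3, 8], [9]]))  # True
print(is_distributed_sorted([[1, 5], [4, 8]]))       # False: 5 > 4
```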

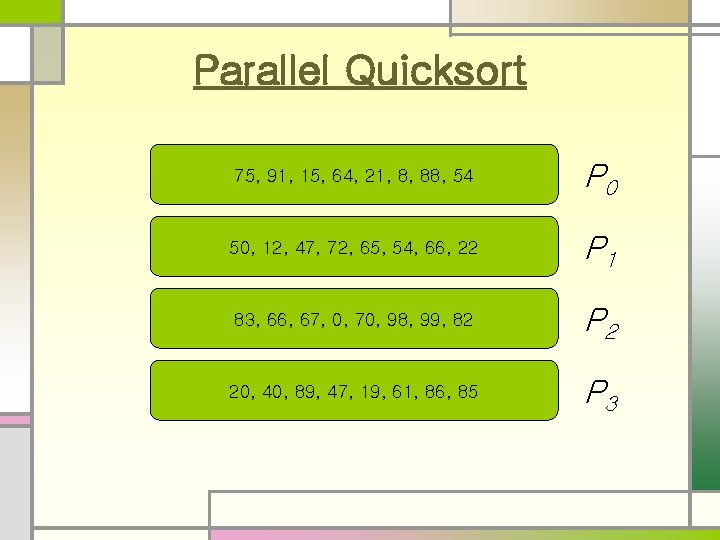

Parallel Quicksort

- We consider the case of distributed memory
- Each process holds a segment of the unsorted list
  - The unsorted list is evenly distributed among the processes
- Desired result of a parallel quicksort algorithm:
  - The list segment stored on each process is sorted
  - The last element on process i's list is smaller than the first element on process i + 1's list

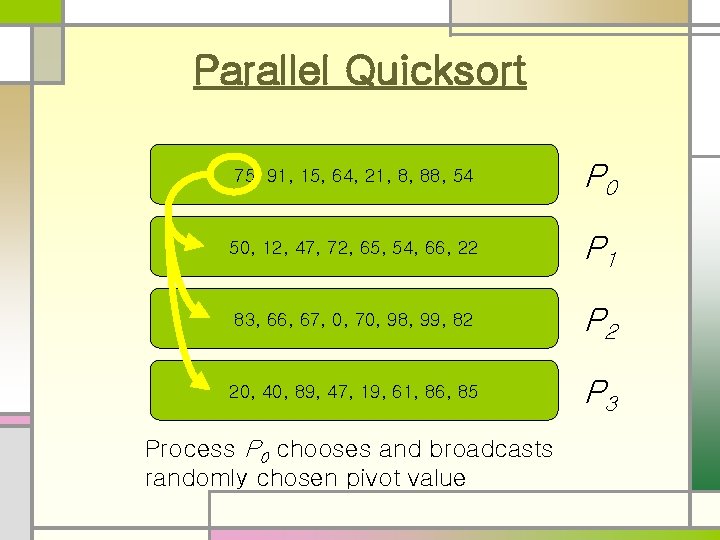

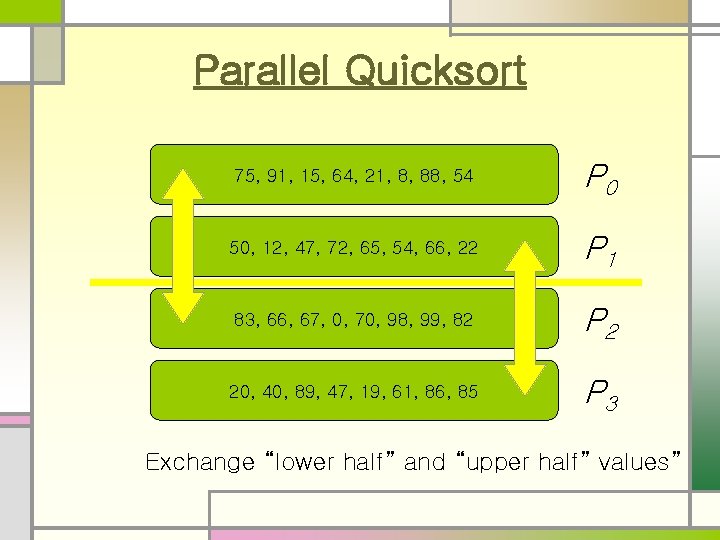

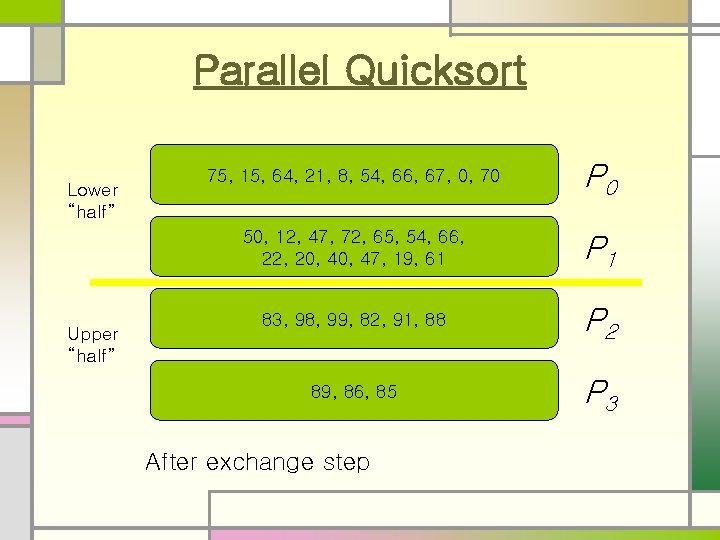

Parallel Quicksort

- We randomly choose a pivot from one of the processes and broadcast it to every process
- Each process divides its unsorted list into two lists: a "low list" of values less than or equal to the pivot and a "high list" of values greater than the pivot
- Each process in the upper half of the process list sends its "low list" to a partner process in the lower half of the process list and receives a "high list" in return
- Now the upper-half processes hold only values greater than the pivot, and the lower-half processes hold only values less than or equal to the pivot
- Thereafter, the processes divide themselves into two groups and the algorithm recurses
- After log p recursions, every process has an unsorted list of values completely disjoint from the values held by the other processes
  - The largest value on process i will be smaller than the smallest value held by process i + 1
  - Each process can sort its list using sequential quicksort
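The steps above can be traced with a sequential Python simulation. This is a sketch under stated assumptions, not an MPI implementation: each "process" is a Python list, the broadcast and the exchange are simulated by list reassignment, p must be a power of 2, values less than or equal to the pivot go to the lower half, and the default pivot rule (a random value from the first non-empty process in the group) is an assumption. The names `parallel_quicksort`, `random_pivot` and `Pivoter` are hypothetical. The fixed pivots 75, 21 and 91 used in the usage lines are not stated on the slides; they are the values implied by the exchanges in the worked example that follows.

```python
import random
from typing import Callable, List, Optional

Pivoter = Callable[[List[List[int]]], int]


def random_pivot(group: List[List[int]]) -> int:
    """Hypothetical default: a random value from the first non-empty process."""
    for xs in group:
        if xs:
            return random.choice(xs)
    return 0  # the whole group is empty, so any pivot works


def parallel_quicksort(lists: List[List[int]],
                       choose_pivot: Optional[Pivoter] = None) -> List[List[int]]:
    """Simulate parallel quicksort on p = len(lists) processes (p a power of 2)."""
    p = len(lists)
    assert p > 0 and p & (p - 1) == 0, "p must be a power of 2"
    pick = choose_pivot or random_pivot
    procs = [list(xs) for xs in lists]

    def split(lo: int, hi: int) -> None:
        # Processes lo..hi-1 form one group; stop when the group is one process.
        if hi - lo == 1:
            return
        pivot = pick(procs[lo:hi])  # chosen once, then "broadcast" to the group
        half = (hi - lo) // 2
        for i in range(lo, lo + half):
            j = i + half  # partner of i in the upper half of the group
            low_i = [x for x in procs[i] if x <= pivot]
            high_i = [x for x in procs[i] if x > pivot]
            low_j = [x for x in procs[j] if x <= pivot]
            high_j = [x for x in procs[j] if x > pivot]
            procs[i] = low_i + low_j    # lower process receives partner's low list
            procs[j] = high_j + high_i  # upper process receives partner's high list
        split(lo, lo + half)
        split(lo + half, hi)

    split(0, p)
    # After log p levels, each process sorts its (still unsorted) list locally.
    return [sorted(xs) for xs in procs]


data = [[75, 91, 15, 64, 21, 8, 88, 54],
        [50, 12, 47, 72, 65, 54, 66, 22],
        [83, 66, 67, 0, 70, 98, 99, 82],
        [20, 40, 89, 47, 19, 61, 86, 85]]
pivots = iter([75, 21, 91])  # pivots implied by the example's exchanges
for rank, xs in enumerate(parallel_quicksort(data, lambda group: next(pivots))):
    print(f"P{rank}: {xs}")
```

With those three pivots the simulation performs the same exchanges as the example and ends with P0, P1, P2 and P3 holding 7, 16, 7 and 2 values respectively, which already hints at the load-balancing problem discussed below.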

Parallel Quicksort

- P0: 75, 91, 15, 64, 21, 8, 88, 54
- P1: 50, 12, 47, 72, 65, 54, 66, 22
- P2: 83, 66, 67, 0, 70, 98, 99, 82
- P3: 20, 40, 89, 47, 19, 61, 86, 85

Parallel Quicksort: process P0 randomly chooses a pivot value and broadcasts it to every process.

Parallel Quicksort: partner processes exchange their "lower half" and "upper half" values.

Parallel Quicksort: after the exchange step

Lower "half":

- P0: 75, 15, 64, 21, 8, 54, 66, 67, 0, 70
- P1: 50, 12, 47, 72, 65, 54, 66, 22, 20, 40, 47, 19, 61

Upper "half":

- P2: 83, 98, 99, 82, 91, 88
- P3: 89, 86, 85

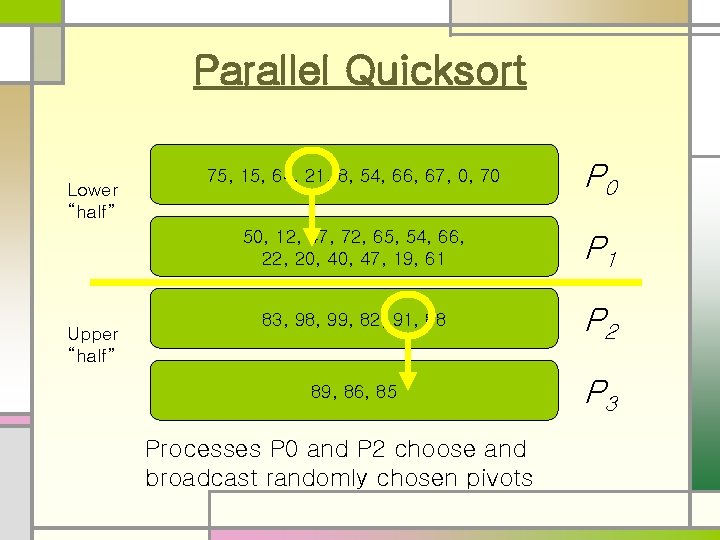

Parallel Quicksort: processes P0 and P2 each randomly choose a pivot value and broadcast it within their half.

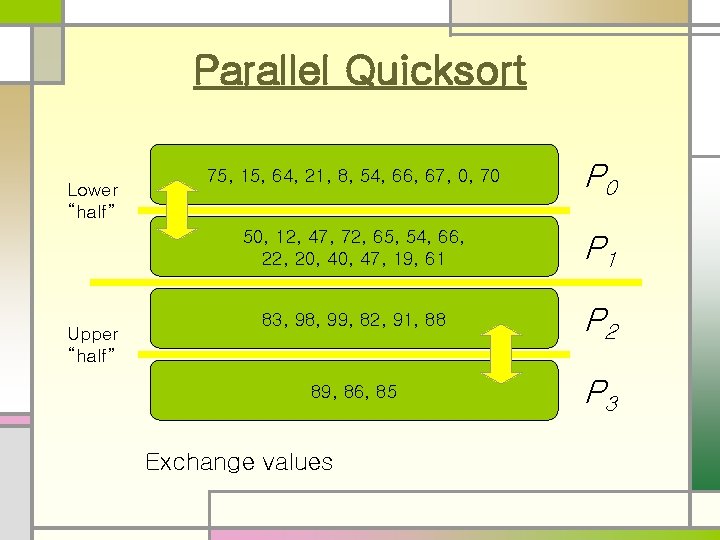

Parallel Quicksort: partner processes exchange values within each half.

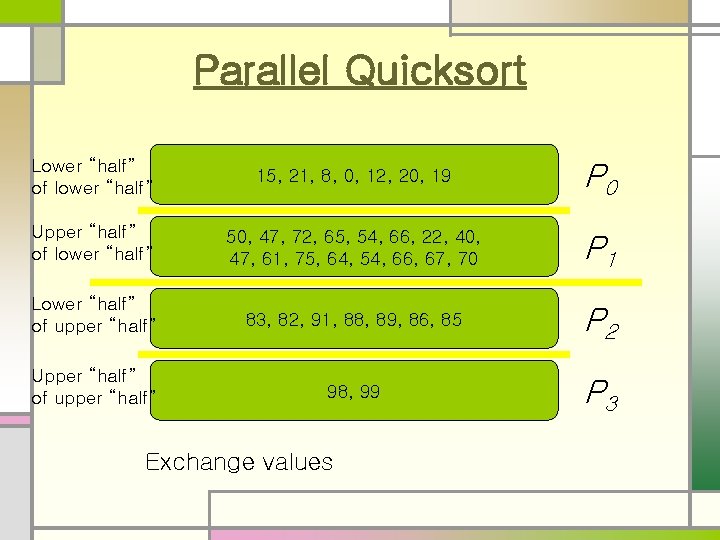

Parallel Quicksort: after the second exchange step

- P0 (lower "half" of lower "half"): 15, 21, 8, 0, 12, 20, 19
- P1 (upper "half" of lower "half"): 50, 47, 72, 65, 54, 66, 22, 40, 47, 61, 75, 64, 54, 66, 67, 70
- P2 (lower "half" of upper "half"): 83, 82, 91, 88, 89, 86, 85
- P3 (upper "half" of upper "half"): 98, 99

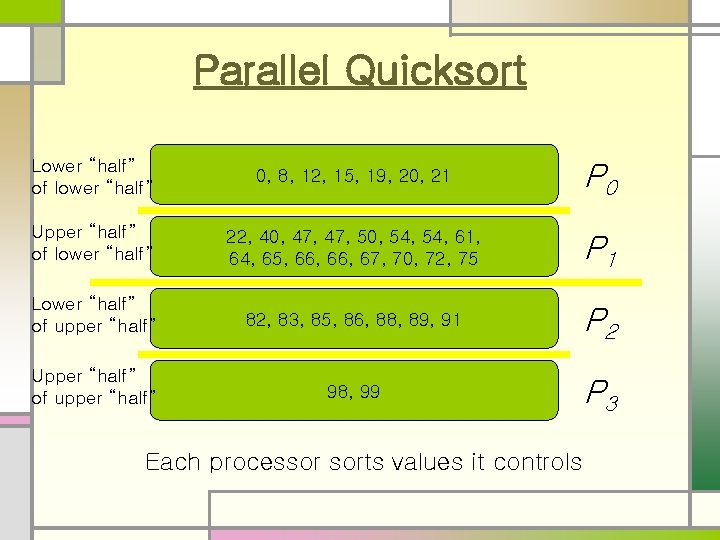

Parallel Quicksort: each process sorts the values it controls

- P0 (lower "half" of lower "half"): 0, 8, 12, 15, 19, 20, 21
- P1 (upper "half" of lower "half"): 22, 40, 47, 47, 50, 54, 54, 61, 64, 65, 66, 66, 67, 70, 72, 75
- P2 (lower "half" of upper "half"): 82, 83, 85, 86, 88, 89, 91
- P3 (upper "half" of upper "half"): 98, 99

Analysis of Parallel Quicksort

- Execution time is dictated by when the last process completes
- The algorithm is likely to do a poor job of balancing the number of elements sorted by each process
- We cannot expect the pivot value to be the true median
- We can choose a better pivot value
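One simple way to put a number on the imbalance (a common metric, not one the slides define) is the ratio of the largest per-process list to the average list size. After the second exchange in the worked example, the four processes hold 7, 16, 7 and 2 values, so P1 must sort twice the average share. `imbalance` is a hypothetical helper name.

```python
from typing import Sequence


def imbalance(sizes: Sequence[int]) -> float:
    """Largest per-process workload divided by the average workload.

    1.0 means perfect balance; since the run ends when the last process
    finishes, larger values mean more idle time on the other processes.
    """
    average = sum(sizes) / len(sizes)
    return max(sizes) / average


# Per-process list sizes after the second exchange of the example (P0..P3).
print(imbalance([7, 16, 7, 2]))  # 2.0
```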

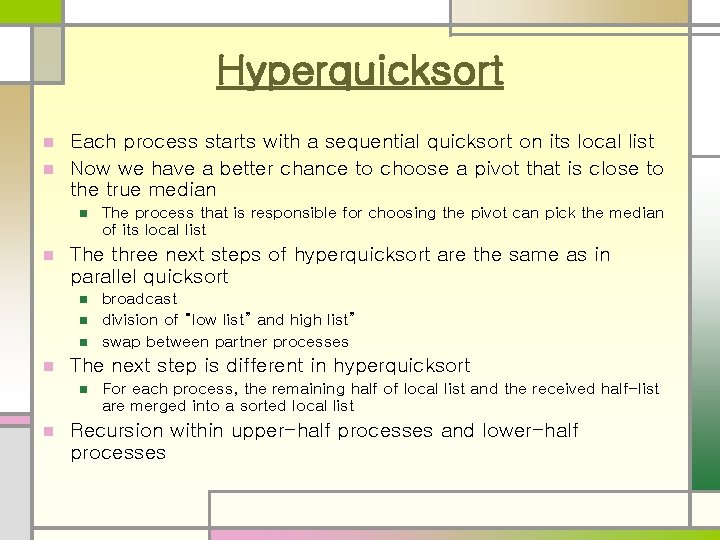

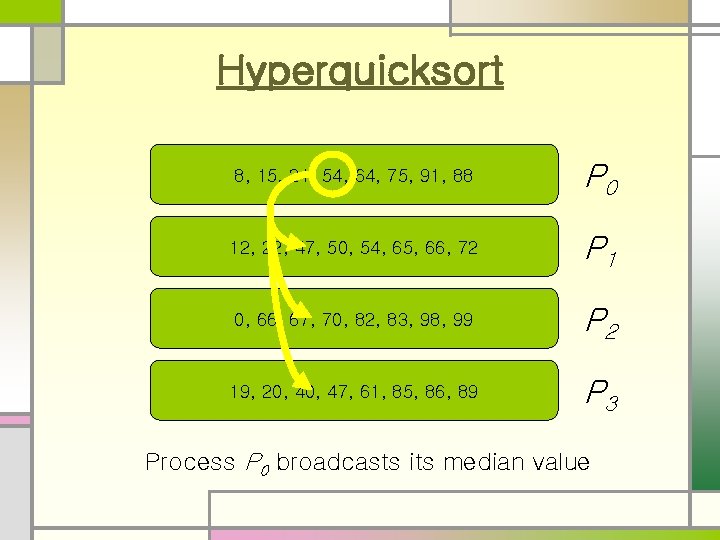

Hyperquicksort

- Each process starts with a sequential quicksort on its local list
  - Now we have a better chance of choosing a pivot that is close to the true median
  - The process that is responsible for choosing the pivot can pick the median of its local list
- The next three steps of hyperquicksort are the same as in parallel quicksort:
  - broadcast of the pivot
  - division into a "low list" and a "high list"
  - swap between partner processes
- The next step is different in hyperquicksort:
  - For each process, the remaining half of the local list and the received half-list are merged into a sorted local list
- Recursion within the upper-half processes and the lower-half processes
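A matching sequential sketch of hyperquicksort, under the same simulation assumptions as before (Python lists stand in for processes, p is a power of 2, values equal to the pivot go to the lower half). Two further assumptions: the pivot is the lower median of the group root's sorted list, with the root taken to be the first non-empty process in the group, and the standard-library functions `bisect.bisect_right` and `heapq.merge` perform the split and the merge. `hyperquicksort` is a hypothetical name.

```python
import heapq
from bisect import bisect_right
from typing import List


def hyperquicksort(lists: List[List[int]]) -> List[List[int]]:
    """Simulate hyperquicksort on p = len(lists) processes (p a power of 2)."""
    p = len(lists)
    assert p > 0 and p & (p - 1) == 0, "p must be a power of 2"
    procs = [sorted(xs) for xs in lists]  # initial local sequential sort

    def split(lo: int, hi: int) -> None:
        if hi - lo == 1:
            return
        # The group's root (assumed: first non-empty process) broadcasts the
        # lower median of its sorted local list.
        root = next((xs for xs in procs[lo:hi] if xs), [])
        pivot = root[(len(root) - 1) // 2] if root else 0
        half = (hi - lo) // 2
        for i in range(lo, lo + half):
            j = i + half  # partner in the upper half of the group
            # Local lists are sorted, so a binary search splits them.
            ci = bisect_right(procs[i], pivot)
            cj = bisect_right(procs[j], pivot)
            low_i, high_i = procs[i][:ci], procs[i][ci:]
            low_j, high_j = procs[j][:cj], procs[j][cj:]
            # Kept and received halves are both sorted: merge them.
            procs[i] = list(heapq.merge(low_i, low_j))
            procs[j] = list(heapq.merge(high_j, high_i))
        split(lo, lo + half)
        split(lo + half, hi)

    split(0, p)
    return procs  # already sorted; no final local sort is needed


data = [[75, 91, 15, 64, 21, 8, 88, 54],
        [50, 12, 47, 72, 65, 54, 66, 22],
        [83, 66, 67, 0, 70, 98, 99, 82],
        [20, 40, 89, 47, 19, 61, 86, 85]]
for rank, xs in enumerate(hyperquicksort(data)):
    print(f"P{rank}: {xs}")
```

On the example data this picks 54 in the first round and 15 and 82 in the second. Duplicated values (for instance 47, 54 and 66) are kept, so each of the 32 input values ends up on exactly one process.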

Hyperquicksort

- P0: 75, 91, 15, 64, 21, 8, 88, 54
- P1: 50, 12, 47, 72, 65, 54, 66, 22
- P2: 83, 66, 67, 0, 70, 98, 99, 82
- P3: 20, 40, 89, 47, 19, 61, 86, 85

The number of processors is a power of 2.

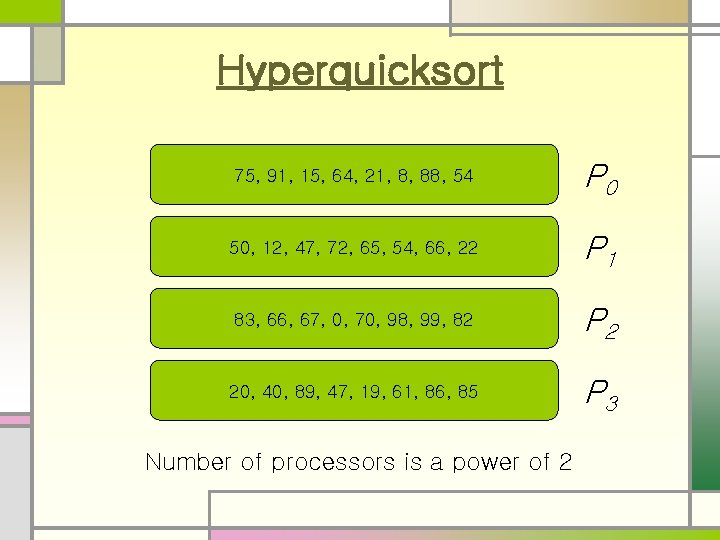

Hyperquicksort: each process sorts the values it controls

- P0: 8, 15, 21, 54, 64, 75, 88, 91
- P1: 12, 22, 47, 50, 54, 65, 66, 72
- P2: 0, 66, 67, 70, 82, 83, 98, 99
- P3: 19, 20, 40, 47, 61, 85, 86, 89

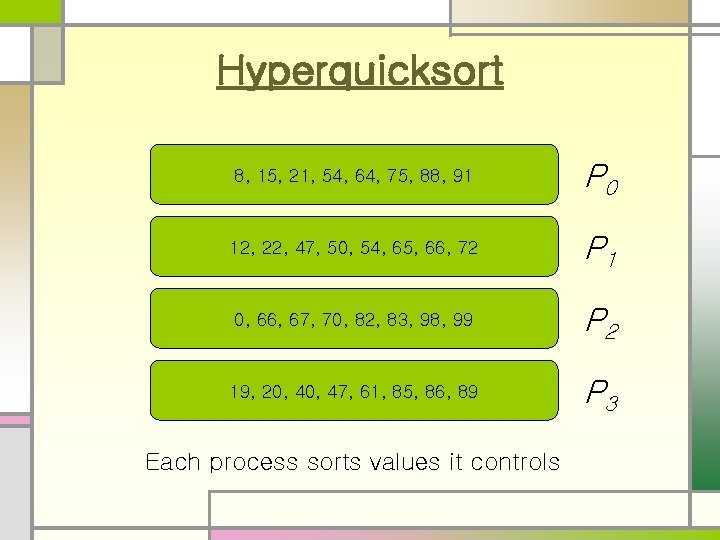

Hyperquicksort: process P0 broadcasts its median value (54).

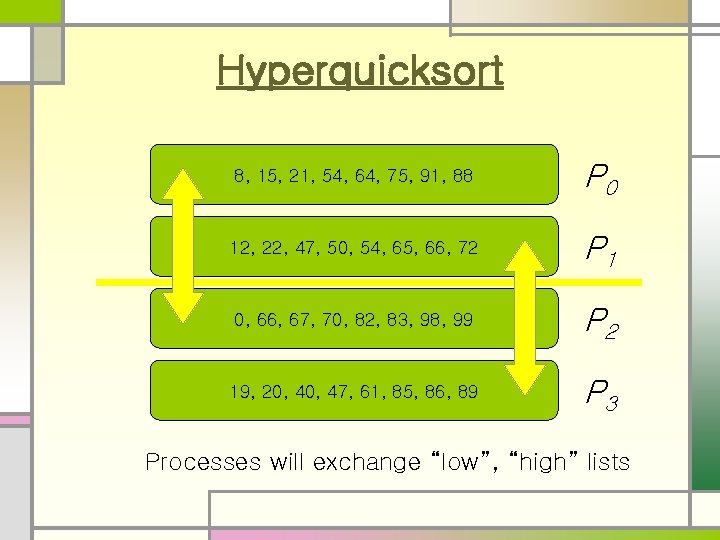

Hyperquicksort: partner processes exchange their "low" and "high" lists.

Hyperquicksort: processes merge the kept and received values

- P0: 0, 8, 15, 21, 54
- P1: 12, 19, 20, 22, 40, 47, 47, 50, 54
- P2: 64, 66, 67, 70, 75, 82, 83, 88, 91, 98, 99
- P3: 61, 65, 66, 72, 85, 86, 89

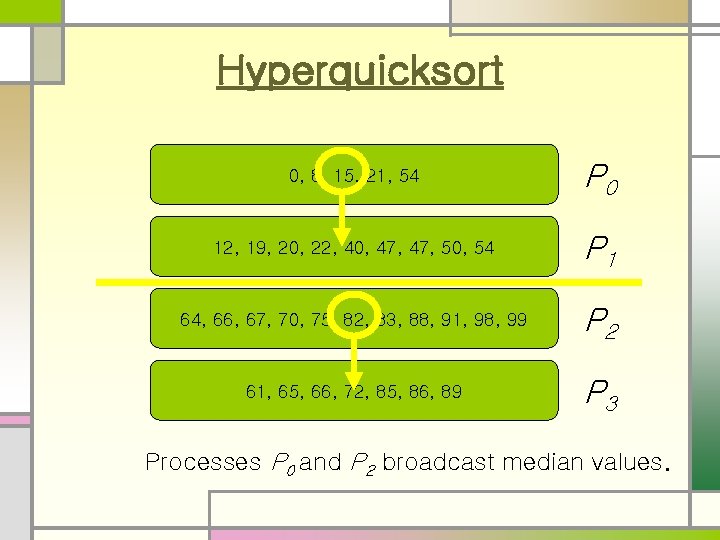

Hyperquicksort: processes P0 and P2 broadcast their median values (15 and 82).

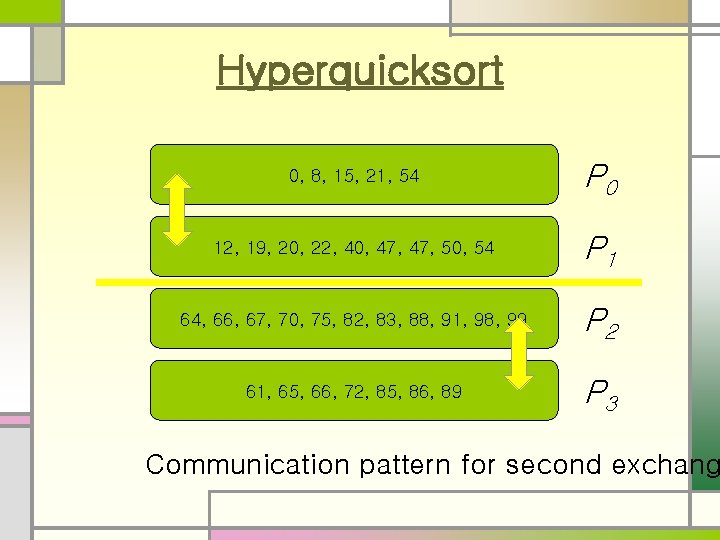

Hyperquicksort: communication pattern for the second exchange.

Hyperquicksort: after the exchange-and-merge step

- P0: 0, 8, 12, 15
- P1: 19, 20, 21, 22, 40, 47, 47, 50, 54, 54
- P2: 61, 64, 65, 66, 66, 67, 70, 72, 75, 82
- P3: 83, 85, 86, 88, 89, 91, 98, 99

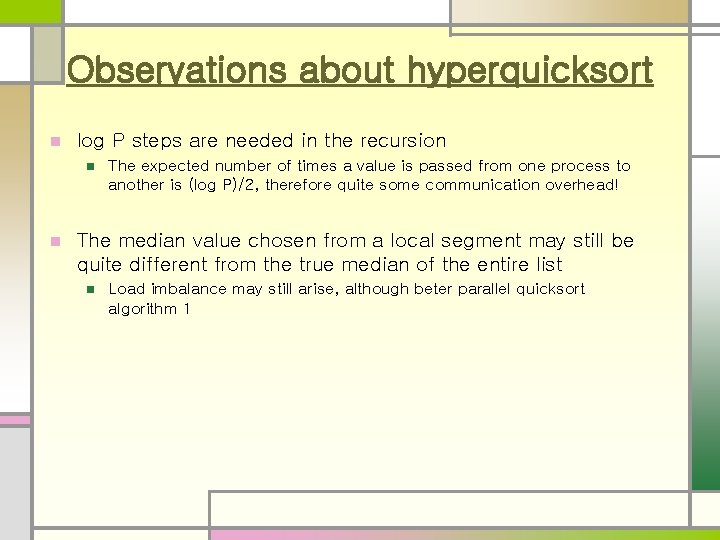

Observations about Hyperquicksort

- log p steps are needed in the recursion
  - The expected number of times a value is passed from one process to another is (log p)/2, which adds considerable communication overhead
- The median value chosen from a local segment may still be quite different from the true median of the entire list
  - Load imbalance may still arise, although the balance is better than with the first parallel quicksort algorithm
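The (log p)/2 figure can be motivated with a short expected-value argument. Assume (an idealization the slides do not state) that at each of the $\log_2 p$ splitting steps a given value is on the "wrong" side, meaning it sits on a lower-half process but exceeds the pivot, or on an upper-half process but does not exceed it, with probability 1/2; only then is it sent to the partner. Let $X_k$ be 1 if the value moves at step $k$ and 0 otherwise, and let $M$ be its total number of moves. Linearity of expectation (no independence needed) gives:

$$
M = \sum_{k=1}^{\log_2 p} X_k,
\qquad
\Pr[X_k = 1] = \tfrac{1}{2}
\quad\Longrightarrow\quad
\mathbb{E}[M] = \sum_{k=1}^{\log_2 p} \Pr[X_k = 1] = \frac{\log_2 p}{2}.
$$

For example, with p = 8 there are three splitting steps, so a value is expected to move 1.5 times.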

Another Scalability Concern

- Our analysis assumes lists remain balanced
- As p increases, each processor's share of the list decreases
- Hence, as p increases, the likelihood of lists becoming unbalanced increases
- Unbalanced lists lower efficiency
- It would be better to get sample values from all processes before choosing the median

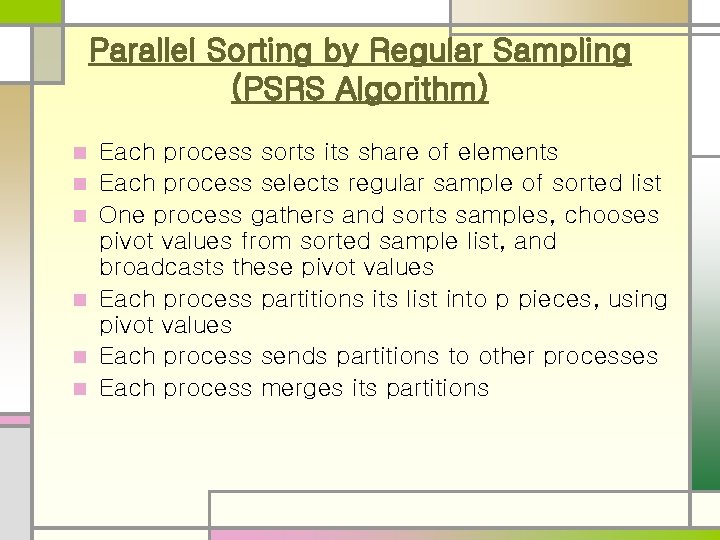

Parallel Sorting by Regular Sampling (PSRS Algorithm)

- Each process sorts its share of elements
- Each process selects a regular sample of its sorted list
- One process gathers and sorts the samples, chooses pivot values from the sorted sample list, and broadcasts these pivot values
- Each process partitions its list into p pieces, using the pivot values
- Each process sends partitions to the other processes
- Each process merges its partitions
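The six PSRS steps can likewise be simulated sequentially in Python. The slides do not give exact index rules for sampling and pivot selection, so the sketch assumes two: each process takes an element from the middle of each of p equal strides of its sorted list, and the pivots are the last sample in each group of p sorted samples. These rules were chosen because they reproduce the samples (15, 54, 75, 22, 50, 65, 66, 70, 83) and the partitions of the worked example that follows; Quinn's text may use different formulas. The sketch also assumes every process starts with at least p values, and `psrs` is a hypothetical name.

```python
import heapq
from bisect import bisect_right
from typing import List


def psrs(lists: List[List[int]]) -> List[List[int]]:
    """Simulate PSRS on p = len(lists) processes; p need not be a power of 2.
    Assumes every process starts with at least p values."""
    p = len(lists)
    assert p > 0 and all(len(xs) >= p for xs in lists)
    # Step 1: each process sorts its share.
    local = [sorted(xs) for xs in lists]
    # Step 2: p regular samples per process (assumed rule: an element from
    # the middle of each of p equal strides of the sorted list).
    samples: List[int] = []
    for xs in local:
        stride = len(xs) // p
        samples.extend(xs[k * stride + stride // 2] for k in range(p))
    # Step 3: one process sorts the p*p samples and picks p-1 pivots
    # (assumed rule: the last sample in each group of p).
    samples.sort()
    pivots = [samples[k * p - 1] for k in range(1, p)]
    # Step 4: each process cuts its sorted list into p pieces; piece d holds
    # the values in (pivots[d-1], pivots[d]].
    pieces = []
    for xs in local:
        cuts = [0] + [bisect_right(xs, v) for v in pivots] + [len(xs)]
        pieces.append([xs[cuts[d]:cuts[d + 1]] for d in range(p)])
    # Steps 5-6: piece d of every process goes to process d, which merges them.
    return [list(heapq.merge(*(pieces[src][d] for src in range(p))))
            for d in range(p)]


data = [[75, 91, 15, 64, 21, 8, 88, 54],
        [50, 12, 47, 72, 65, 54, 66, 22],
        [83, 66, 67, 0, 70, 98, 99, 82]]
for rank, xs in enumerate(psrs(data)):
    print(f"P{rank}: {xs}")
```

On the three-process example the assumed rules give the pivots 50 and 66, and the processes finish with 8, 6 and 10 values (the duplicates 54 and 66 each land twice on P1), against an ideal n/p = 8.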

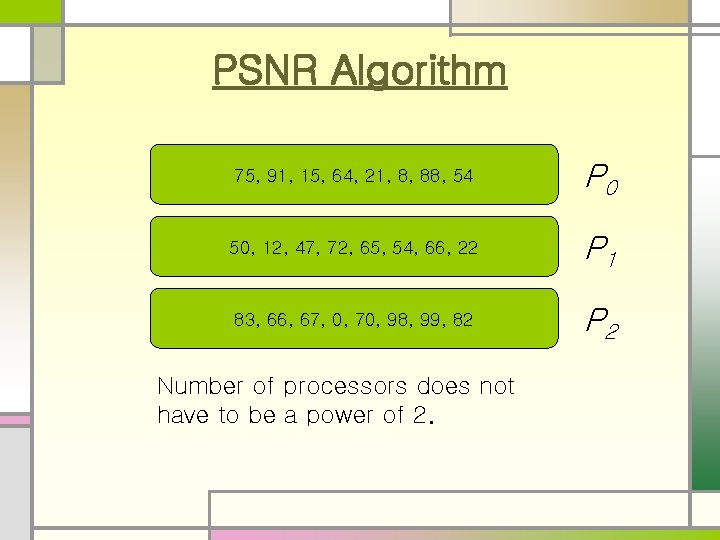

PSRS Algorithm

- P0: 75, 91, 15, 64, 21, 8, 88, 54
- P1: 50, 12, 47, 72, 65, 54, 66, 22
- P2: 83, 66, 67, 0, 70, 98, 99, 82

The number of processors does not have to be a power of 2.

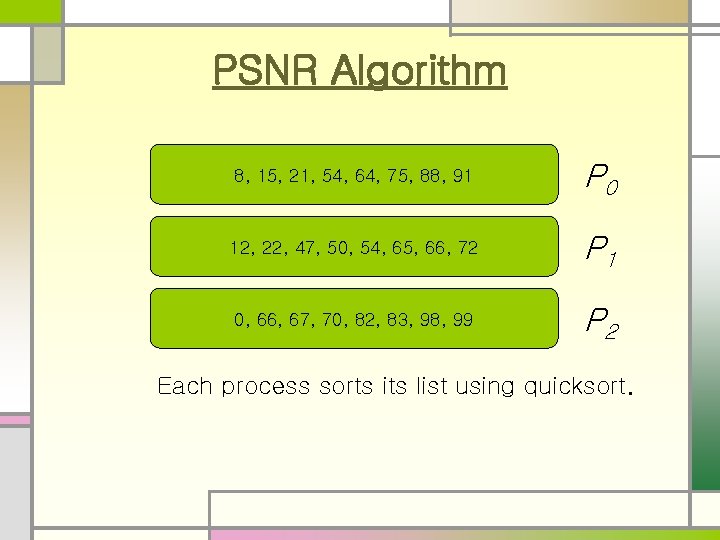

PSRS Algorithm: each process sorts its list using quicksort

- P0: 8, 15, 21, 54, 64, 75, 88, 91
- P1: 12, 22, 47, 50, 54, 65, 66, 72
- P2: 0, 66, 67, 70, 82, 83, 98, 99

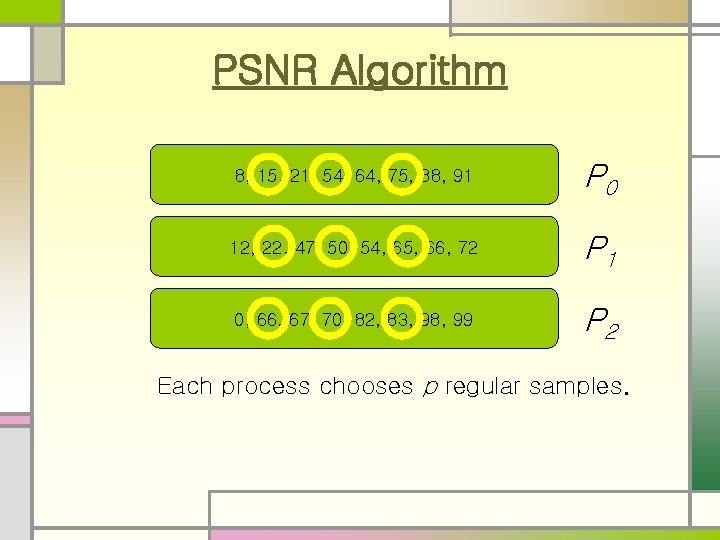

PSRS Algorithm: each process chooses p regular samples from its sorted list.

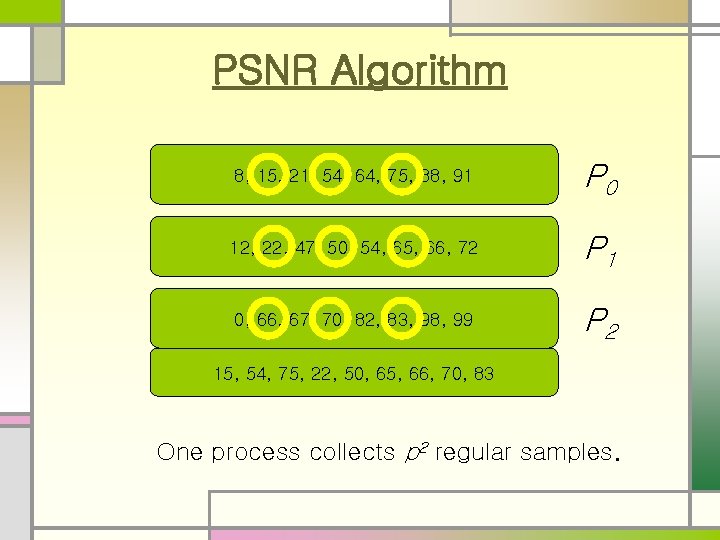

PSRS Algorithm: one process collects the p² regular samples: 15, 54, 75, 22, 50, 65, 66, 70, 83.

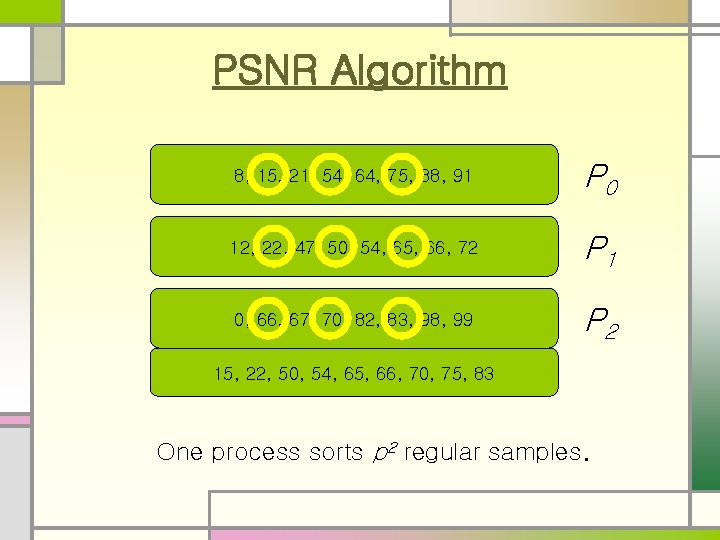

PSRS Algorithm: one process sorts the p² regular samples: 15, 22, 50, 54, 65, 66, 70, 75, 83.

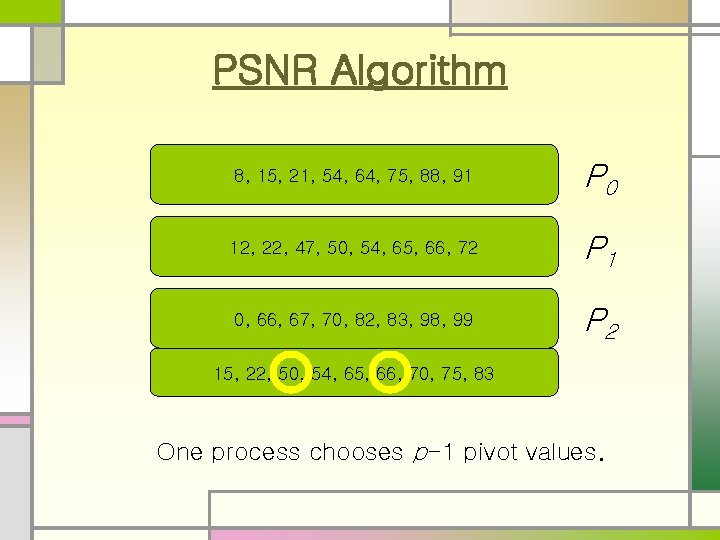

PSRS Algorithm: one process chooses p-1 pivot values from the sorted samples (15, 22, 50, 54, 65, 66, 70, 75, 83); in this example the pivots are 50 and 66.

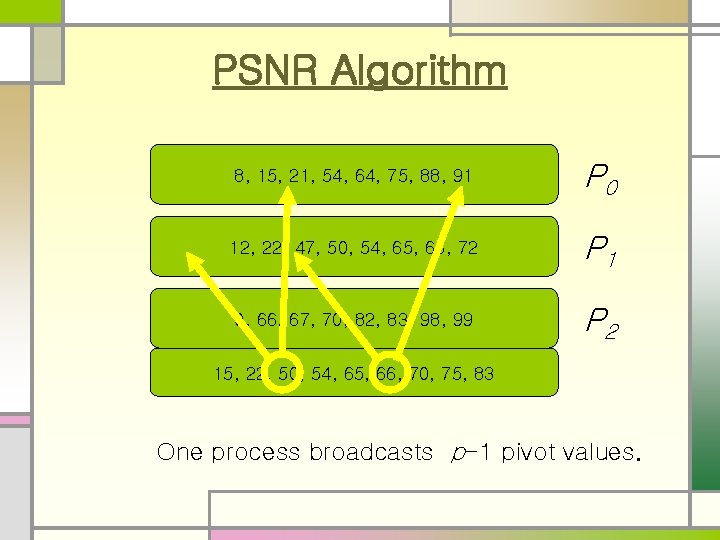

PSRS Algorithm: one process broadcasts the p-1 pivot values.

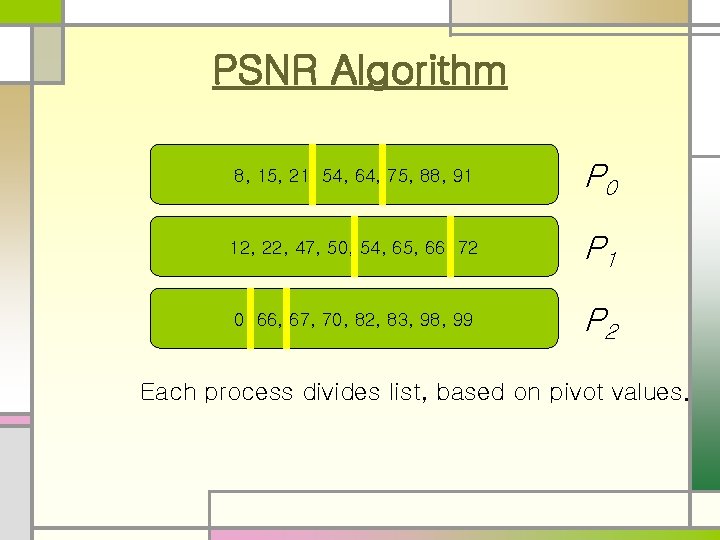

PSRS Algorithm: each process divides its list into p partitions based on the pivot values.

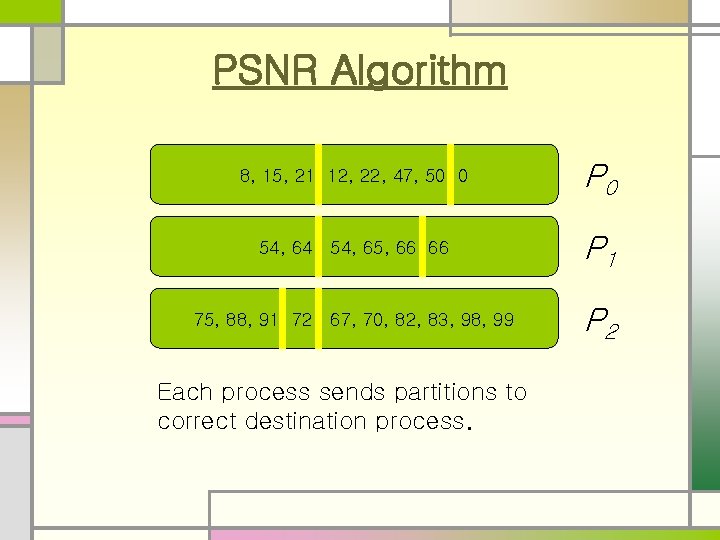

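PSRS Algorithm: each process sends its partitions to the correct destination process

- To P0: 8, 15, 21 (from P0); 12, 22, 47, 50 (from P1); 0 (from P2)
- To P1: 54, 64 (from P0); 54, 65, 66 (from P1); 66 (from P2)
- To P2: 75, 88, 91 (from P0); 72 (from P1); 67, 70, 82, 83, 98, 99 (from P2)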

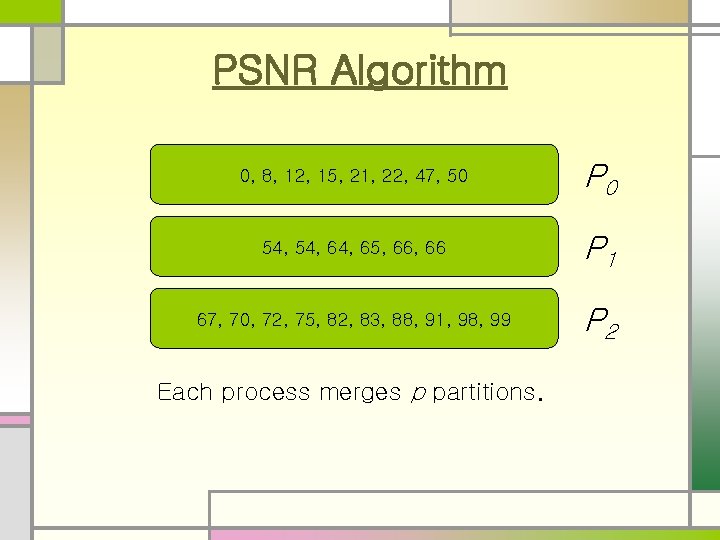

PSRS Algorithm: each process merges its p partitions

- P0: 0, 8, 12, 15, 21, 22, 47, 50
- P1: 54, 54, 64, 65, 66, 66
- P2: 67, 70, 72, 75, 82, 83, 88, 91, 98, 99

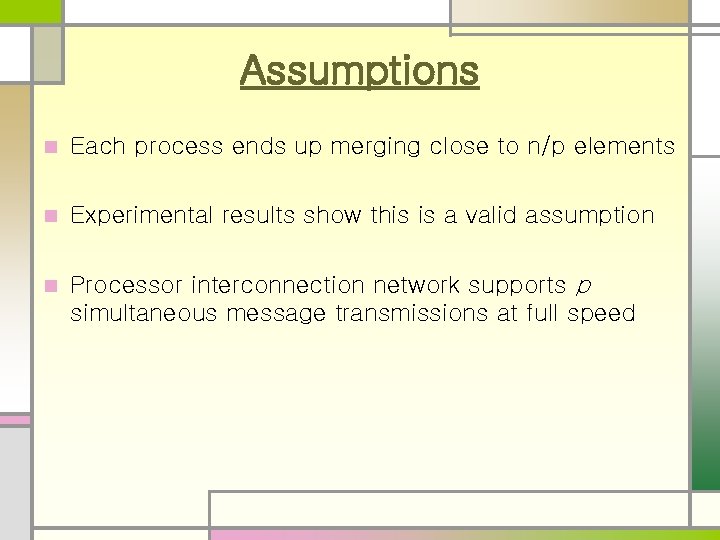

Assumptions

- Each process ends up merging close to n/p elements
  - Experimental results show this is a valid assumption
- The processor interconnection network supports p simultaneous message transmissions at full speed

Advantages of PSRS

- Better load balance (although perfect load balance cannot be guaranteed)
- Repeated communication of the same value is avoided
- The number of processes does not have to be a power of 2, as parallel quicksort and hyperquicksort require

Summary

- Three distributed algorithms based on quicksort
- Keeping list sizes balanced:
  - Parallel quicksort: poor
  - Hyperquicksort: better
  - PSRS algorithm: excellent

- Slides: 38