Lecture 4.4 Memory Hierarchy: Virtual Memory

- Slides: 23


Learning Objectives

- Translate virtual addresses to physical addresses with a page table
- Use the TLB to facilitate address translation
- Identify various events:
  - TLB hit/miss
  - Page table hit/miss
  - Page table miss -> page fault

Coverage

- Textbook: Chapter 5.7

§5.7 Virtual Memory

- A memory management technique developed for multitasking computer architectures
  - Virtualizes various forms of data storage
  - Allows a program to be designed as if there were only one type of memory, i.e., "virtual" memory
    - Each program runs in its own virtual address space
- Uses main memory as a "cache" for secondary (disk) storage
  - Allows efficient and safe sharing of memory among multiple programs
  - Makes it easy to run programs larger than physical memory
  - Simplifies loading a program for execution through code relocation

Chapter 5 — Large and Fast: Exploiting Memory Hierarchy

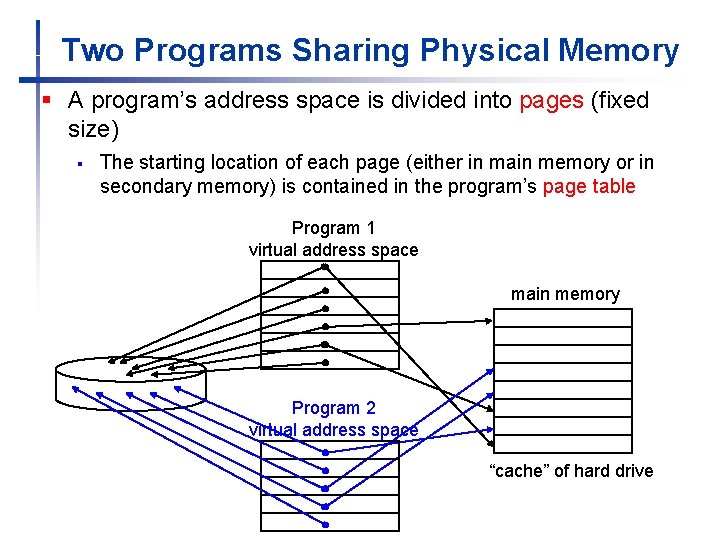

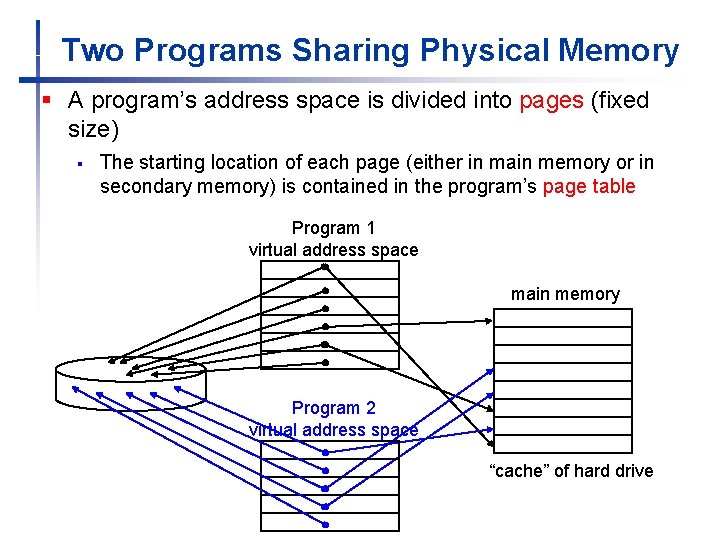

Two Programs Sharing Physical Memory

- A program's address space is divided into fixed-size pages
- The starting location of each page (either in main memory or in secondary memory) is contained in the program's page table

[Figure: the virtual address spaces of Program 1 and Program 2 both map onto main memory, which acts as a "cache" of the hard drive]

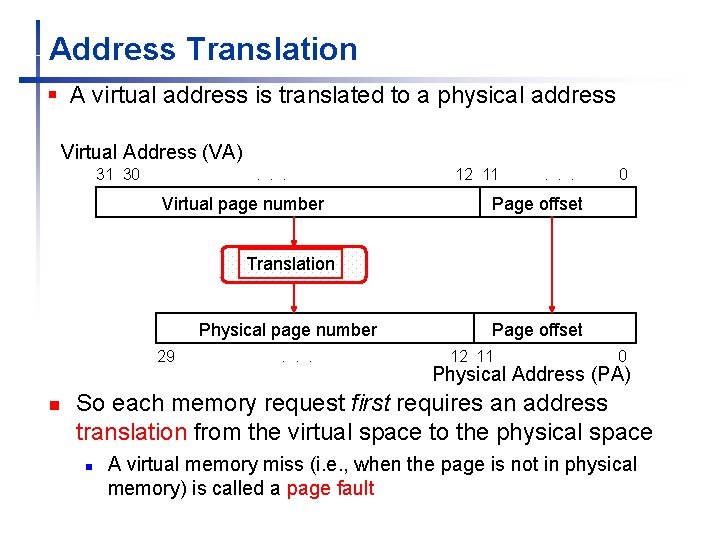

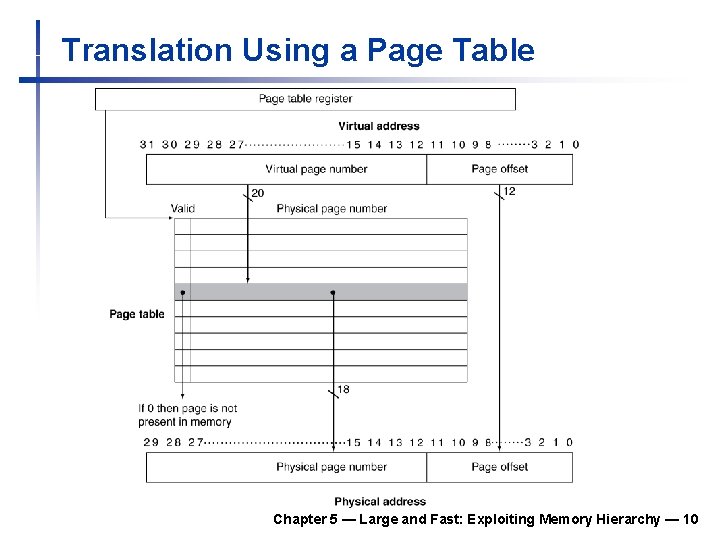

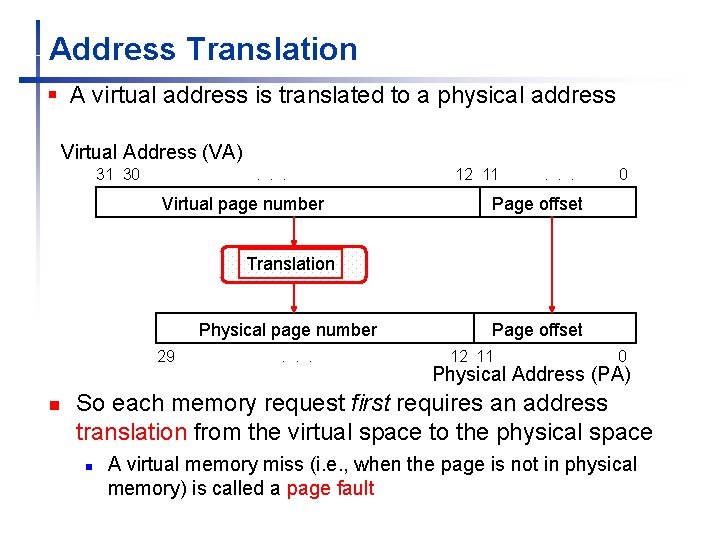

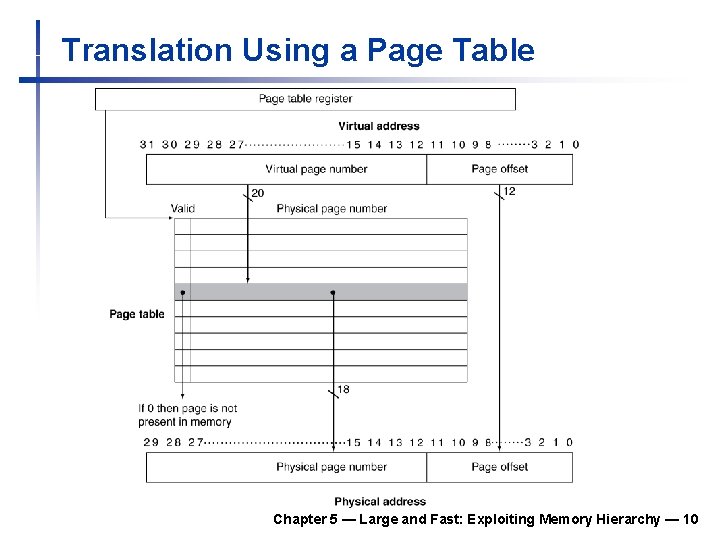

Address Translation

- A virtual address is translated to a physical address:
  - Virtual address (VA): bits 31–12 hold the virtual page number; bits 11–0 hold the page offset
  - Translation replaces the virtual page number with a physical page number; the page offset is copied unchanged
  - Physical address (PA): bits 29–12 hold the physical page number; bits 11–0 hold the page offset
- So each memory request first requires an address translation from the virtual space to the physical space
- A virtual memory miss (i.e., when the page is not in physical memory) is called a page fault
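The bit layout above can be sketched in a few lines. This is a minimal illustration, assuming the slide's 12-bit page offset (4 KiB pages); the function names and the dictionary used as a page table are hypothetical stand-ins, not a hardware interface.

```python
# Bit layout taken from the slide: 32-bit VA, 30-bit PA, 12-bit page offset (4 KiB pages).
PAGE_OFFSET_BITS = 12
OFFSET_MASK = (1 << PAGE_OFFSET_BITS) - 1   # 0xFFF


def split_va(va: int) -> tuple[int, int]:
    """Split a virtual address into (virtual page number, page offset)."""
    vpn = va >> PAGE_OFFSET_BITS      # bits 31..12
    offset = va & OFFSET_MASK         # bits 11..0
    return vpn, offset


def translate(va: int, page_table: dict[int, int]) -> int:
    """Translate a VA to a PA; page_table maps VPN -> physical page number (PPN).

    A VPN missing from the table plays the role of a page fault in this sketch.
    """
    vpn, offset = split_va(va)
    if vpn not in page_table:
        raise LookupError(f"page fault: VPN {vpn:#x} is not in physical memory")
    ppn = page_table[vpn]
    return (ppn << PAGE_OFFSET_BITS) | offset   # the offset is copied unchanged


# Hypothetical mapping: virtual page 0x12345 is resident in physical page 0xABC.
print(hex(translate(0x12345678, {0x12345: 0xABC})))   # 0xabc678
```

Only the page number is looked up; the low 12 bits never need translation, which is why page size fixes the split point.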

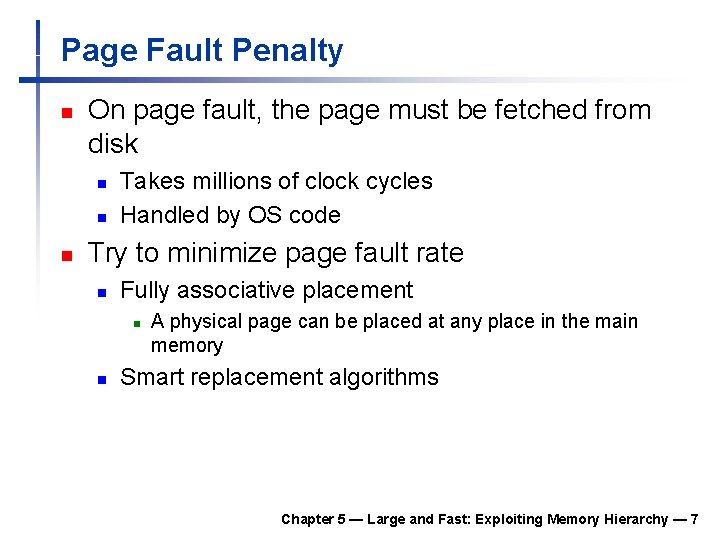

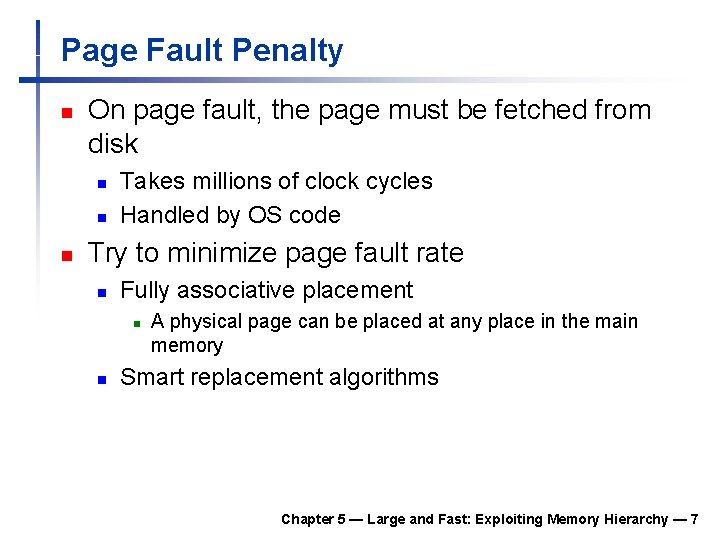

Page Fault Penalty

- On a page fault, the page must be fetched from disk
  - Takes millions of clock cycles
  - Handled by OS code
- Try to minimize the page fault rate
  - Fully associative placement: a virtual page can be placed in any physical page of main memory
  - Smart replacement algorithms
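Why the fault rate matters so much can be seen with a small average-access-time calculation. The timing values below (100 ns per DRAM access, 10 ms per page fault) are assumptions chosen only to match the "millions of clock cycles" scale on the slide.

```python
def effective_access_time(t_mem_ns: float, fault_rate: float, fault_penalty_ns: float) -> float:
    """Average time per memory access if a fraction `fault_rate` of accesses page-fault.

    Every access pays the memory time; faulting accesses additionally pay the disk penalty.
    """
    return t_mem_ns + fault_rate * fault_penalty_ns


# Hypothetical numbers: 100 ns per DRAM access, 10 ms (10,000,000 ns) to bring a page in from disk.
for rate in (1e-6, 1e-5, 1e-4):
    print(f"fault rate {rate:.0e}: {effective_access_time(100.0, rate, 10_000_000.0):.0f} ns")
```

With these assumed numbers, a fault rate of one in 10,000 already makes the average access about 11 times slower than DRAM alone, which is why placement and replacement policy receive so much attention.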

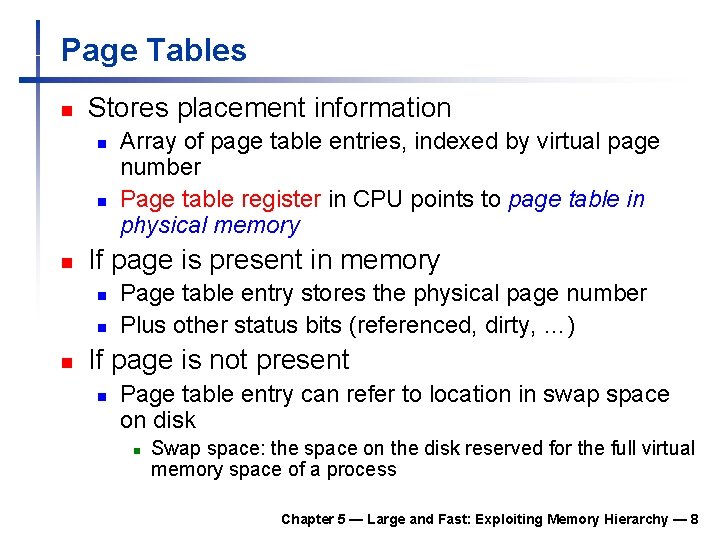

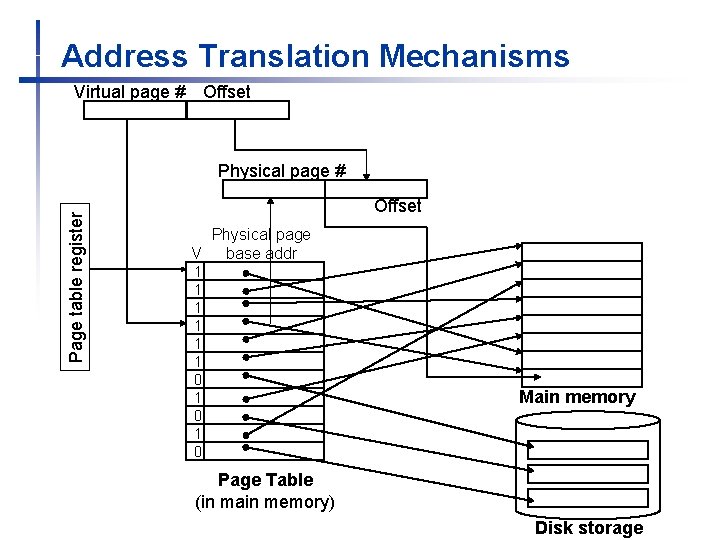

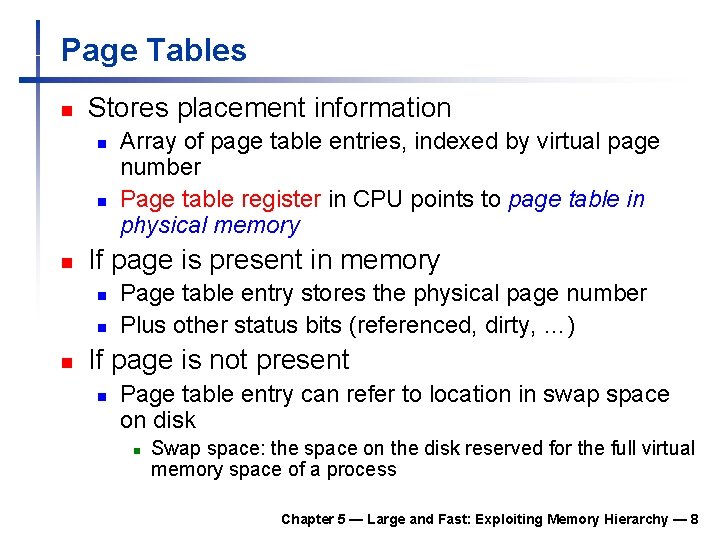

Page Tables

- Store placement information
  - Array of page table entries (PTEs), indexed by virtual page number
  - Page table register in CPU points to page table in physical memory
- If page is present in memory
  - PTE stores the physical page number
  - Plus other status bits (referenced, dirty, …)
- If page is not present
  - PTE can refer to location in swap space on disk
  - Swap space: the space on disk reserved for the full virtual memory space of a process
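The page table structure described above can be modeled as an array of records indexed by virtual page number. This is a sketch under assumptions: the field names (including `swap_slot`) are hypothetical, and a real PTE packs these fields into a single machine word.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PTE:
    """One page table entry (field names are illustrative, not a real hardware layout)."""
    valid: bool = False                # 1 = page is present in main memory
    ppn: Optional[int] = None          # physical page number, used only when valid
    swap_slot: Optional[int] = None    # location in swap space on disk, used when not valid
    referenced: bool = False           # set on access, periodically cleared by the OS
    dirty: bool = False                # set when the page is written


class PageTable:
    """A flat page table: an array of PTEs indexed by virtual page number."""

    def __init__(self, num_virtual_pages: int):
        self.entries: List[PTE] = [PTE() for _ in range(num_virtual_pages)]

    def lookup(self, vpn: int) -> PTE:
        return self.entries[vpn]


# A toy 16-page address space: page 3 is resident in frame 7, page 5 is swapped out to slot 42.
pt = PageTable(num_virtual_pages=16)
pt.entries[3] = PTE(valid=True, ppn=7)
pt.entries[5] = PTE(valid=False, swap_slot=42)
```

In hardware, the page table register holds the physical base address of this array, so the PTE for a virtual page number sits at base + VPN × (PTE size). With a 32-bit virtual address and 4 KiB pages, a flat table needs 2^20 entries per process.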

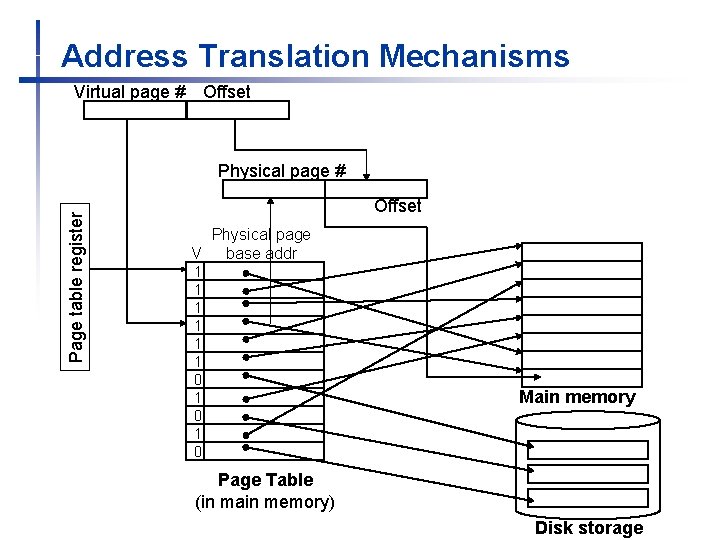

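Address Translation Mechanisms

[Figure: the page table register locates the page table (in main memory); the virtual page # indexes it, and each entry holds a valid bit (V) and a physical page base address that points into main memory, or to disk storage when V = 0; the offset is copied into the physical address]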

Translation Using a Page Table

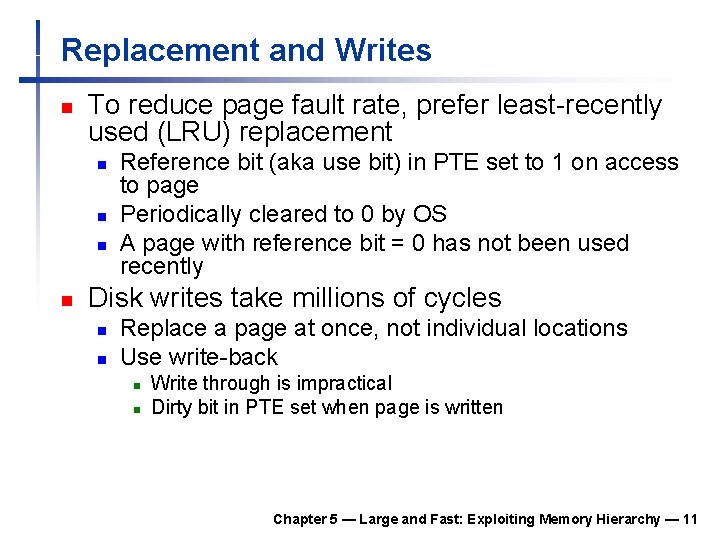

Replacement and Writes

- To reduce the page fault rate, prefer least-recently used (LRU) replacement
  - Reference bit (aka use bit) in PTE set to 1 on access to page
  - Periodically cleared to 0 by OS
  - A page with reference bit = 0 has not been used recently
- Disk writes take millions of cycles
  - Write a whole page at once, not individual locations
  - Use write-back
    - Write-through is impractical
  - Dirty bit in PTE set when page is written
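The reference-bit approximation of LRU and the write-back rule can be sketched together. This is a simplified scheme, assumed for illustration (it scans frames in a fixed order rather than implementing any particular OS's policy); `Frame`, `choose_victim`, and `evict` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Frame:
    """A resident page together with the PTE status bits the OS inspects."""
    vpn: int
    referenced: bool = False
    dirty: bool = False


def clear_reference_bits(frames: List[Frame]) -> None:
    """Periodic OS action: reset every reference bit to 0."""
    for f in frames:
        f.referenced = False


def choose_victim(frames: List[Frame]) -> Frame:
    """Approximate LRU: prefer a page whose reference bit is 0 (not used recently)."""
    for f in frames:
        if not f.referenced:
            return f
    return frames[0]  # every page was used since the last clear; fall back to the first


def evict(frame: Frame, disk_writes: List[int]) -> None:
    """Write-back: the whole page goes to disk only if its dirty bit is set."""
    if frame.dirty:
        disk_writes.append(frame.vpn)  # stand-in for one page-sized disk write


frames = [Frame(1, referenced=True), Frame(2, dirty=True), Frame(3, referenced=True)]
writes: List[int] = []
victim = choose_victim(frames)   # page 2: its reference bit is 0
evict(victim, writes)            # page 2 is dirty, so writes becomes [2]
```

A clean victim costs no disk write at all, which is the payoff of tracking the dirty bit.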

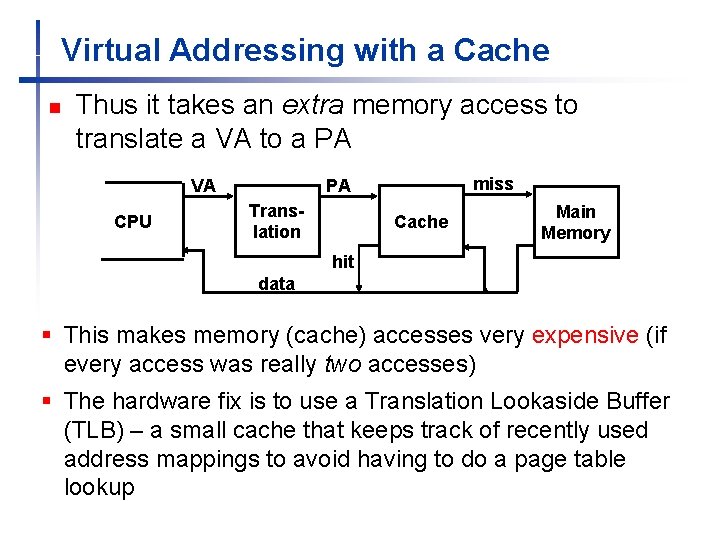

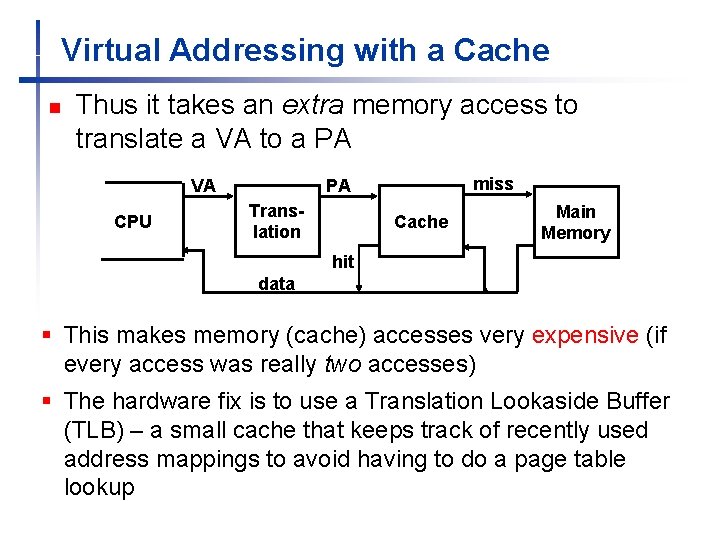

Virtual Addressing with a Cache

- Translating a VA to a PA takes an extra memory access (to read the page table)

[Figure: the CPU issues a VA, which goes through Translation to a PA before the Cache is accessed; on a hit, data returns to the CPU; on a miss, the access goes to Main Memory]

- This would make memory (cache) accesses very expensive, since every access would really be two accesses
- The hardware fix is to use a Translation Lookaside Buffer (TLB): a small cache that keeps track of recently used address mappings to avoid having to do a page table lookup

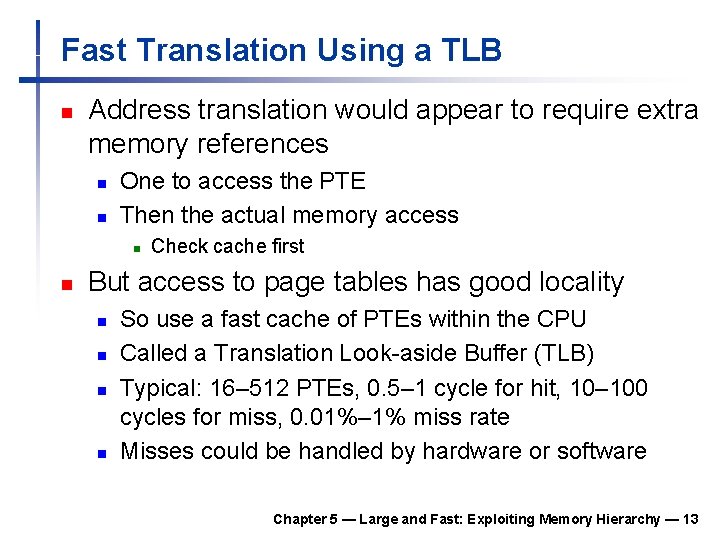

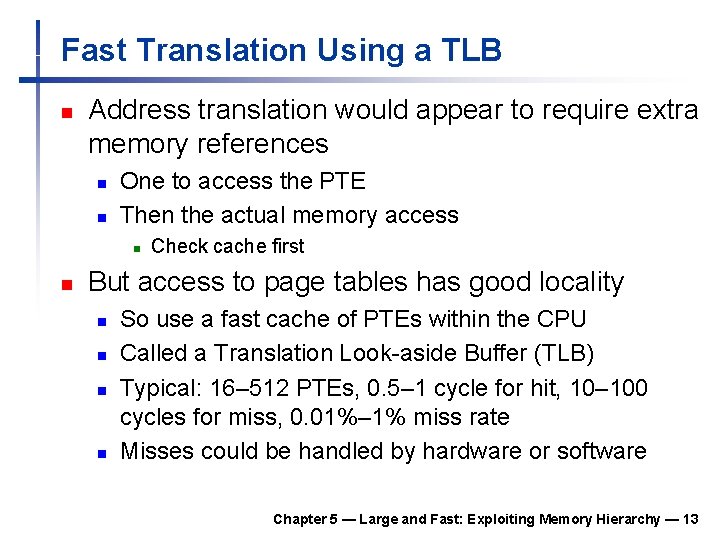

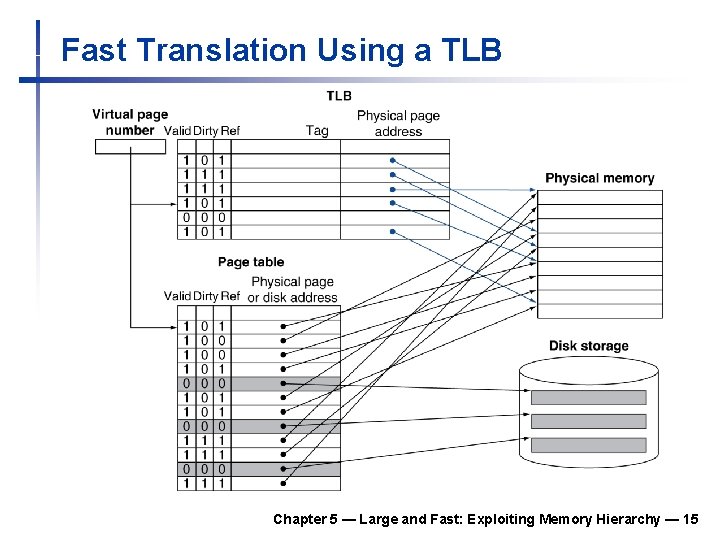

Fast Translation Using a TLB

- Address translation would appear to require extra memory references
  - One to access the PTE
  - Then the actual memory access
    - Check cache first
- But access to page tables has good locality
  - So use a fast cache of PTEs within the CPU
  - Called a Translation Look-aside Buffer (TLB)
  - Typical: 16–512 PTEs, 0.5–1 cycle for hit, 10–100 cycles for miss, 0.01%–1% miss rate
  - Misses could be handled by hardware or software
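A TLB is simply a small cache of PTEs, so it can be sketched as a bounded map from virtual page number to physical page number. This sketch assumes a fully associative organization with LRU replacement; real TLBs may use other organizations and replacement policies, and the class and method names are hypothetical.

```python
from collections import OrderedDict
from typing import Dict


class TLB:
    """A tiny fully associative TLB caching VPN -> PPN, with LRU replacement (assumed policy)."""

    def __init__(self, num_entries: int = 16):
        self.num_entries = num_entries
        self.entries = OrderedDict()   # VPN -> PPN, least recently used first
        self.hits = 0
        self.misses = 0

    def translate(self, vpn: int, page_table: Dict[int, int]) -> int:
        if vpn in self.entries:                # TLB hit: no page table access needed
            self.hits += 1
            self.entries.move_to_end(vpn)      # mark as most recently used
            return self.entries[vpn]
        self.misses += 1                       # TLB miss: read the PTE from memory
        ppn = page_table[vpn]                  # KeyError here would correspond to a page fault
        if len(self.entries) >= self.num_entries:
            self.entries.popitem(last=False)   # evict the least recently used entry
        self.entries[vpn] = ppn
        return ppn


page_table = {vpn: vpn + 100 for vpn in range(64)}  # hypothetical VPN -> PPN mapping
tlb = TLB(num_entries=4)
for vpn in [0, 0, 1, 0, 1, 2, 0]:                   # a reference string with good locality
    tlb.translate(vpn, page_table)
print(tlb.hits, tlb.misses)                          # 4 3
```

Because consecutive accesses tend to fall in the same few pages, even a handful of entries turns most translations into hits, which is the locality argument on the slide.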

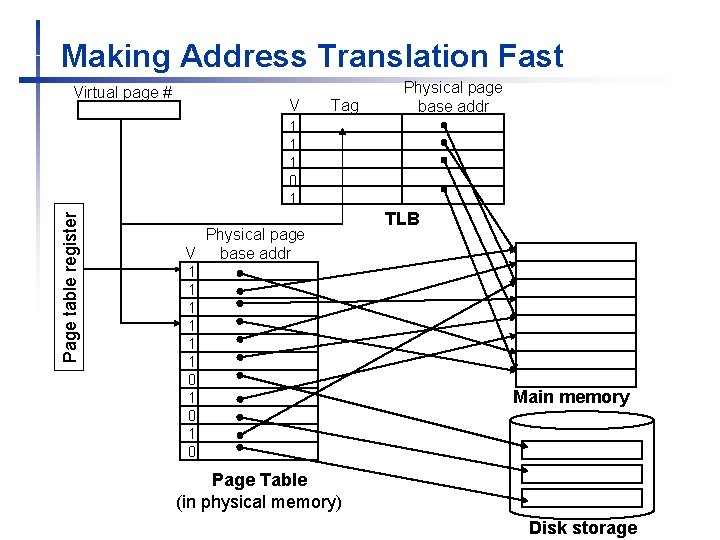

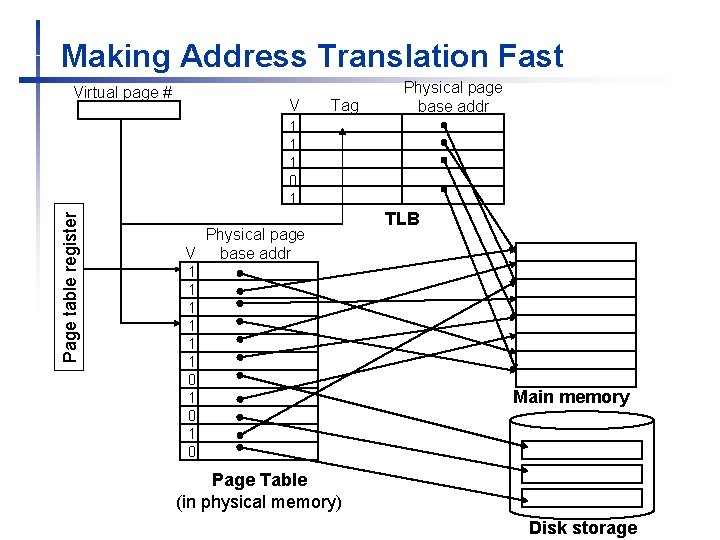

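Making Address Translation Fast

[Figure: the virtual page # is looked up in the TLB, whose entries hold a valid bit (V), a tag, and a physical page base address; the page table (in physical memory), located by the page table register, holds the full set of mappings, with valid entries pointing to main memory and invalid ones to disk storage]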

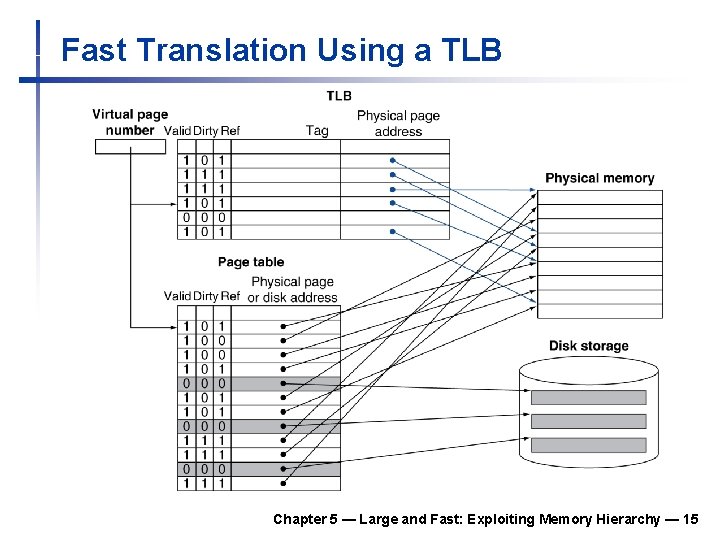

Fast Translation Using a TLB

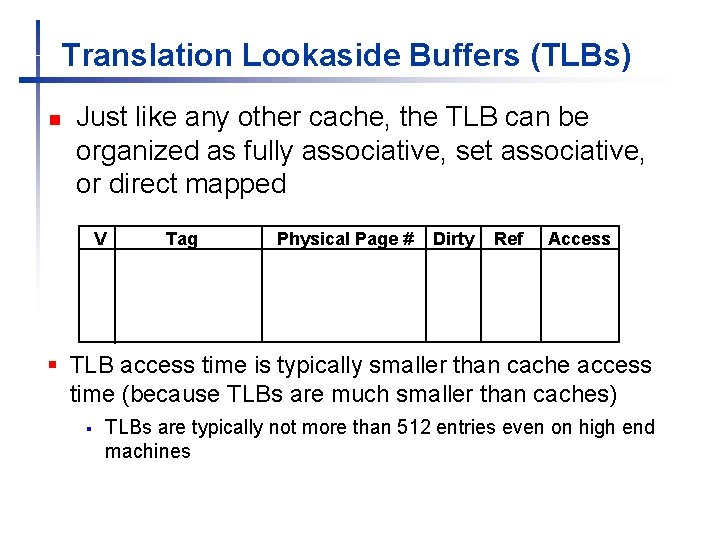

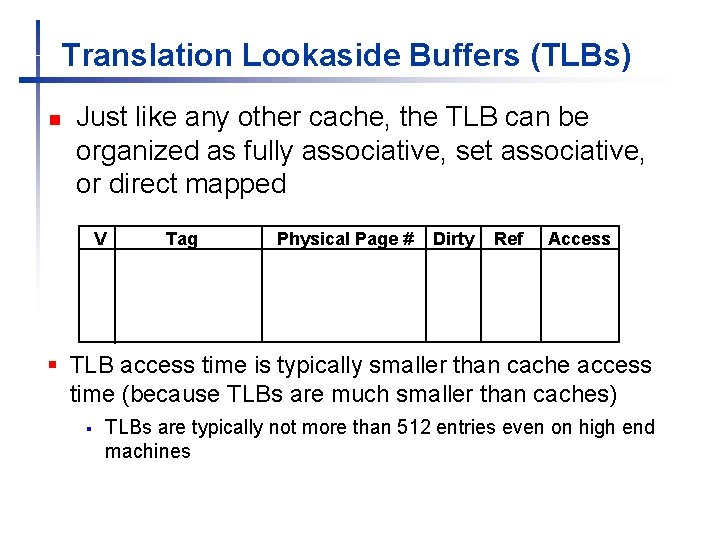

Translation Lookaside Buffers (TLBs)

- Just like any other cache, the TLB can be organized as fully associative, set associative, or direct mapped
  - TLB entry fields: V | Tag | Physical Page # | Dirty | Ref | Access
- TLB access time is typically smaller than cache access time (because TLBs are much smaller than caches)
- TLBs typically have no more than 512 entries, even on high-end machines
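As with any cache, the organization determines how the lookup key (here the virtual page number) splits into a set index and a tag. The sketch below assumes a power-of-two number of sets; the function name and the 64-entry, 4-way example are hypothetical.

```python
def tlb_set_and_tag(vpn: int, num_sets: int) -> tuple[int, int]:
    """Split a virtual page number into (set index, tag) for a set-associative TLB.

    num_sets == 1 models a fully associative TLB (the whole VPN is the tag);
    num_sets == number of entries models a direct-mapped TLB.
    """
    if num_sets <= 0 or num_sets & (num_sets - 1):
        raise ValueError("num_sets must be a power of two")
    index_bits = num_sets.bit_length() - 1
    set_index = vpn & (num_sets - 1)   # low-order VPN bits pick the set
    tag = vpn >> index_bits            # remaining high-order bits are compared against the tag
    return set_index, tag


# Hypothetical 64-entry, 4-way set-associative TLB -> 16 sets.
print(tlb_set_and_tag(0x12345, num_sets=16))   # (5, 4660)
```

The page offset plays no part here: the TLB is indexed and tagged by the virtual page number alone.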

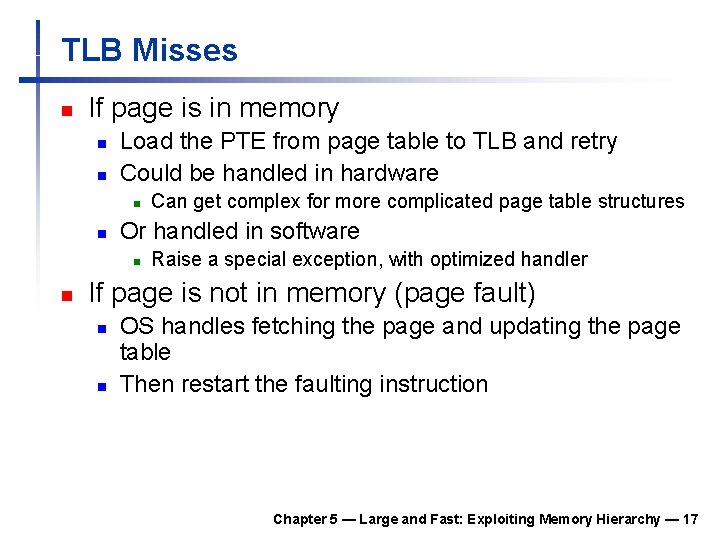

TLB Misses

- If page is in memory
  - Load the PTE from the page table into the TLB and retry
  - Could be handled in hardware
    - Can get complex for more complicated page table structures
  - Or handled in software
    - Raise a special exception, with an optimized handler
- If page is not in memory (page fault)
  - OS handles fetching the page and updating the page table
  - Then restart the faulting instruction

TLB Miss Handler

- A TLB miss indicates one of two possibilities:
  - Page present, but PTE not in TLB
    - Handler copies PTE from memory to TLB
    - Then restarts instruction
  - Page not present in main memory
    - Page fault will occur
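The two cases a TLB miss handler distinguishes can be sketched as follows. The data layout (a dictionary mapping VPN to a `(valid, PPN)` pair, and a dictionary standing in for the TLB) and the `PageFault` exception are hypothetical simplifications.

```python
from typing import Dict, Optional, Tuple


class PageFault(Exception):
    """Signals that the OS page fault handler must run (valid bit was 0)."""


def handle_tlb_miss(vpn: int,
                    page_table: Dict[int, Tuple[bool, Optional[int]]],
                    tlb: Dict[int, int]) -> int:
    """Sketch of a TLB miss handler; page_table maps VPN -> (valid, PPN)."""
    valid, ppn = page_table.get(vpn, (False, None))
    if not valid:
        raise PageFault(vpn)      # page not present in main memory
    tlb[vpn] = ppn                # page present: copy the PTE from memory into the TLB
    return ppn                    # the faulting instruction is then restarted and hits in the TLB


page_table = {1: (True, 9), 2: (False, None)}   # hypothetical contents
tlb: Dict[int, int] = {}
print(handle_tlb_miss(1, page_table, tlb), tlb)  # 9 {1: 9}
try:
    handle_tlb_miss(2, page_table, tlb)
except PageFault as fault:
    print("page fault on VPN", fault.args[0])    # page fault on VPN 2
```

The common case (page present) is just a memory read and a TLB write, which is why software handlers for it can be kept short.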

Page Fault Handler

1. Use the virtual address to find the PTE
2. Locate the page on disk
3. Choose a page to replace, if necessary
   - If the chosen page is dirty, write it to disk first
4. Read the page into memory and update the page table
5. Make the process runnable again
   - Restart from the faulting instruction
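The five steps above can be traced in a toy model. Everything here is an assumption for illustration: PTEs are dictionaries with hypothetical keys, disk I/O is recorded in lists rather than performed, and the victim-selection policy is passed in as a function.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Memory:
    free_frames: List[int] = field(default_factory=list)
    frame_owner: Dict[int, int] = field(default_factory=dict)   # PPN -> resident VPN
    disk_reads: List[int] = field(default_factory=list)         # swap slots read (stand-in for I/O)
    disk_writes: List[int] = field(default_factory=list)        # swap slots written back


def handle_page_fault(vpn: int, page_table: Dict[int, dict], mem: Memory,
                      pick_victim: Callable[[Dict[int, int]], int]) -> int:
    """Follows the five steps on the slide; PTEs are dicts with hypothetical keys
    'valid', 'ppn', 'dirty' and 'disk' (the page's swap slot)."""
    pte = page_table[vpn]                       # 1. use the virtual address to find the PTE
    slot = pte["disk"]                          # 2. locate the page on disk
    if mem.free_frames:                         # 3. choose a page to replace, if necessary
        ppn = mem.free_frames.pop()
    else:
        ppn = pick_victim(mem.frame_owner)
        old = page_table[mem.frame_owner[ppn]]
        if old["dirty"]:                        #    dirty victim: write it to disk first
            mem.disk_writes.append(old["disk"])
        old.update(valid=False, ppn=None, dirty=False)
    mem.disk_reads.append(slot)                 # 4. read the page into memory...
    pte.update(valid=True, ppn=ppn, dirty=False)   # ...and update the page table
    mem.frame_owner[ppn] = vpn
    # 5. the OS then makes the process runnable and restarts the faulting instruction
    return ppn


page_table = {
    0: {"valid": True, "ppn": 0, "dirty": True, "disk": 100},
    1: {"valid": False, "ppn": None, "dirty": False, "disk": 101},
}
mem = Memory(frame_owner={0: 0})                # one frame, already holding dirty page 0
handle_page_fault(1, page_table, mem, pick_victim=lambda owners: next(iter(owners)))
print(mem.disk_writes, mem.disk_reads, mem.frame_owner)   # [100] [101] {0: 1}
```

Note the ordering in step 3: the dirty victim must reach disk before its frame is overwritten, otherwise its modifications would be lost.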

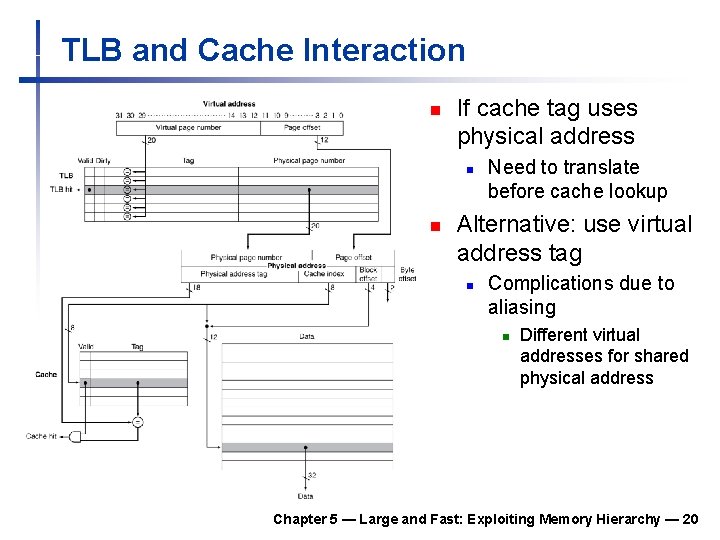

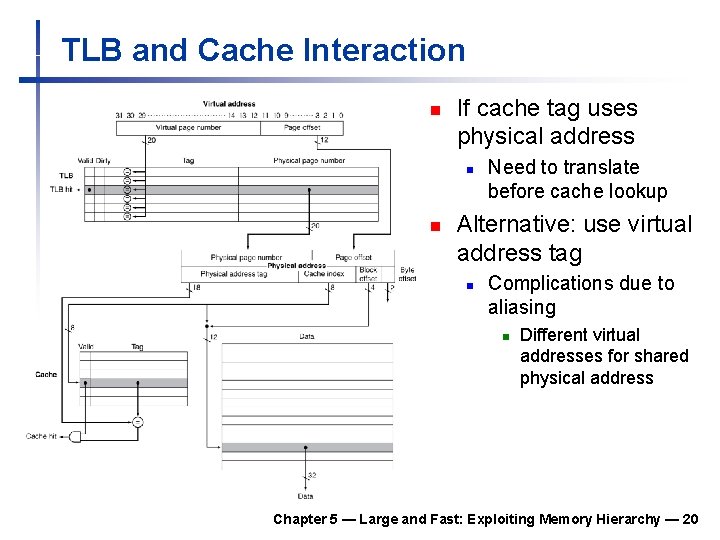

TLB and Cache Interaction

- If the cache tag uses the physical address
  - Need to translate before cache lookup
- Alternative: use a virtual address tag
  - Complications due to aliasing
    - Different virtual addresses for a shared physical address
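The aliasing problem can be demonstrated with two dictionaries standing in for caches. This is a deliberately simplified model: the page numbers are hypothetical, and each "cache" stores one value per address rather than real cache lines.

```python
PAGE_OFFSET_BITS = 12


def translate(va: int, page_table: dict) -> int:
    """VA -> PA with 4 KiB pages (same split as on the Address Translation slide)."""
    vpn, offset = va >> PAGE_OFFSET_BITS, va & ((1 << PAGE_OFFSET_BITS) - 1)
    return (page_table[vpn] << PAGE_OFFSET_BITS) | offset


# Hypothetical: virtual pages 0x10 and 0x20 are aliases for the same physical page 0x5.
page_table = {0x10: 0x5, 0x20: 0x5}
va_a, va_b = 0x10_040, 0x20_040

virtually_tagged = {}    # cache keyed by virtual address
physically_tagged = {}   # cache keyed by physical address (translate first)

# Write the value 1 through alias A ...
virtually_tagged[va_a] = 1
physically_tagged[translate(va_a, page_table)] = 1

# ... then read through alias B.
print(virtually_tagged.get(va_b))                          # None: alias B does not see the write
print(physically_tagged.get(translate(va_b, page_table)))  # 1: both aliases reach the same entry
```

The physically tagged version is always consistent but needs translation before lookup; the virtually tagged version skips translation but can hold two inconsistent copies of one physical location.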

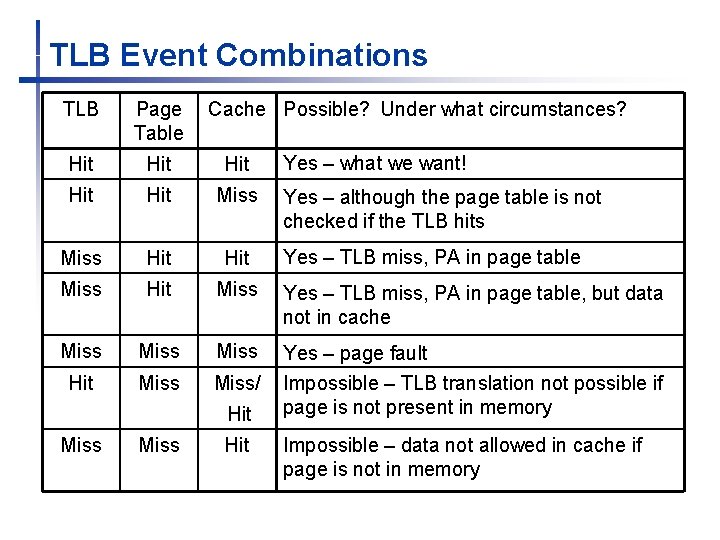

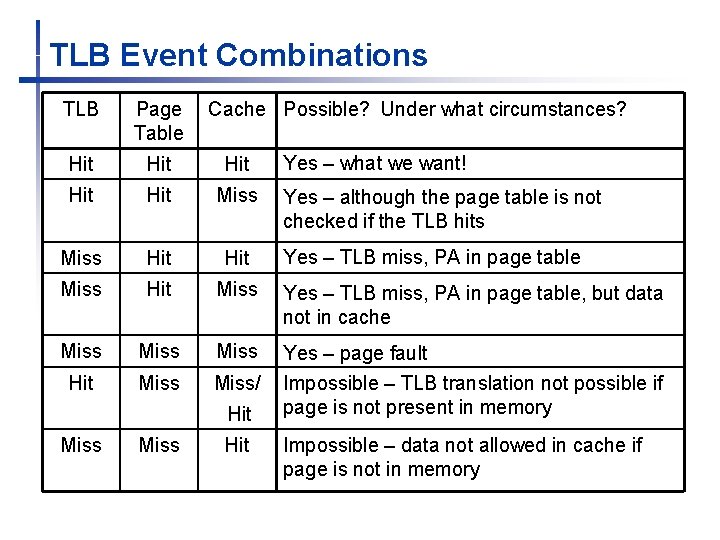

TLB Event Combinations

| TLB | Page table | Cache | Possible? Under what circumstances? |
|---|---|---|---|
| Hit | Hit | Hit | Yes – what we want! |
| Hit | Hit | Miss | Yes – although the page table is not checked if the TLB hits |
| Miss | Hit | Hit | Yes – TLB miss, PA in page table |
| Miss | Hit | Miss | Yes – TLB miss, PA in page table, but data not in cache |
| Miss | Miss | Miss | Yes – page fault |
| Hit | Miss | Miss/Hit | Impossible – TLB translation not possible if page is not present in memory |
| Miss | Miss | Hit | Impossible – data not allowed in cache if page is not in memory |
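The two "impossible" rules in the event table can be checked by enumerating all eight combinations. In this sketch, the page table column is treated as "page is in memory", even in rows where a TLB hit means the page table is never actually consulted; the function names are hypothetical.

```python
from itertools import product


def possible(tlb_hit: bool, page_in_memory: bool, cache_hit: bool) -> bool:
    """Can this (TLB, page table, cache) outcome occur?"""
    if tlb_hit and not page_in_memory:
        return False  # the TLB only holds translations for pages present in memory
    if cache_hit and not page_in_memory:
        return False  # cached data must belong to a page that is in memory
    return True


def label(hit: bool) -> str:
    return "Hit" if hit else "Miss"


for tlb, pt, cache in product((True, False), repeat=3):
    verdict = "possible" if possible(tlb, pt, cache) else "impossible"
    print(f"{label(tlb):4} {label(pt):4} {label(cache):4} {verdict}")
```

Five combinations come out possible and three impossible, matching the rows of the table.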

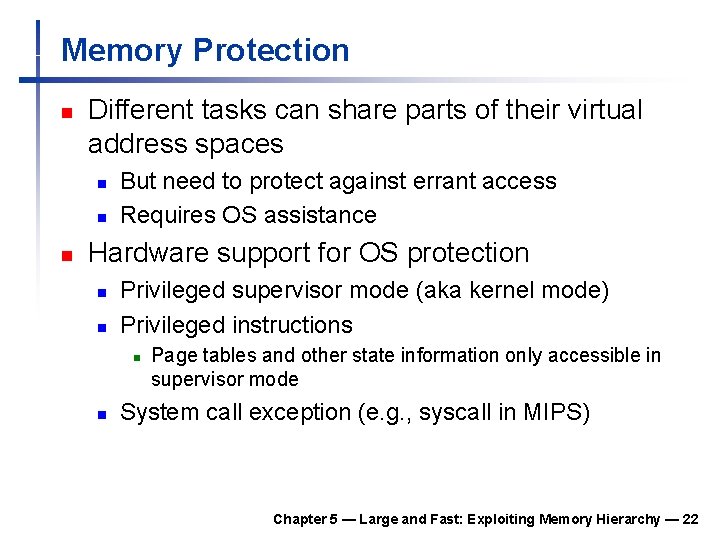

Memory Protection

- Different tasks can share parts of their virtual address spaces
  - But need to protect against errant access
  - Requires OS assistance
- Hardware support for OS protection
  - Privileged supervisor mode (aka kernel mode)
  - Privileged instructions
  - Page tables and other state information only accessible in supervisor mode
  - System call exception (e.g., syscall in MIPS)
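The division of labor between supervisor mode and privileged instructions can be illustrated with a toy CPU model. All names here are hypothetical; the point is only that changing translation state (such as the page table register) is rejected in user mode and allowed once a system call exception has switched the CPU into supervisor mode.

```python
from typing import Callable


class ProtectionFault(Exception):
    """Raised when user-mode code attempts a privileged operation."""


class CPU:
    """Toy CPU with a supervisor-mode bit (names are hypothetical)."""

    def __init__(self) -> None:
        self.supervisor = False          # start in user mode
        self.page_table_register = 0

    def set_page_table_register(self, base: int) -> None:
        """A privileged instruction: only legal in supervisor (kernel) mode."""
        if not self.supervisor:
            raise ProtectionFault("privileged instruction executed in user mode")
        self.page_table_register = base

    def syscall(self, os_handler: Callable[["CPU"], None]) -> None:
        """System call exception: enter supervisor mode, run OS code, return to user mode."""
        self.supervisor = True
        try:
            os_handler(self)
        finally:
            self.supervisor = False


cpu = CPU()
try:
    cpu.set_page_table_register(0x1000)          # user program tries to change its page table
except ProtectionFault as err:
    print("blocked:", err)
cpu.syscall(lambda c: c.set_page_table_register(0x1000))  # the same change made by OS code
print(hex(cpu.page_table_register), cpu.supervisor)        # 0x1000 False
```

In a real system the OS handler would also decide whether the request is legitimate; here it simply performs it.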

§5.16 Concluding Remarks

- Fast memories are small, large memories are slow
  - We really want fast, large memories
  - Caching gives this illusion
- Principle of locality
  - Programs use a small part of their memory space frequently
- Memory hierarchy
  - L1 cache ↔ L2 cache ↔ … ↔ DRAM memory ↔ disk
- Memory system design is critical for multiprocessors