Lecture 3 Chiong and Shum Random Projection Estimation

- Slides: 23

Lecture 3: Chiong and Shum, “Random Projection Estimation of Discrete-Choice Models with Large Choice Sets”

Stephen P. Ryan, Olin Business School, Washington University in St. Louis

Overview

- Discrete choice models are a ubiquitous workhorse in many settings
- The most important decisions of your life are discrete:
  - Job
  - Housing
  - Car
  - Education
  - Spouse (perhaps dynamic!)
- Enormous amount of research on these models, starting with McFadden (1974) and moving forward
- Chiong and Shum (2017): What do you do when you have a huge number of choices?

Review of discrete choice models

Choice Probabilities

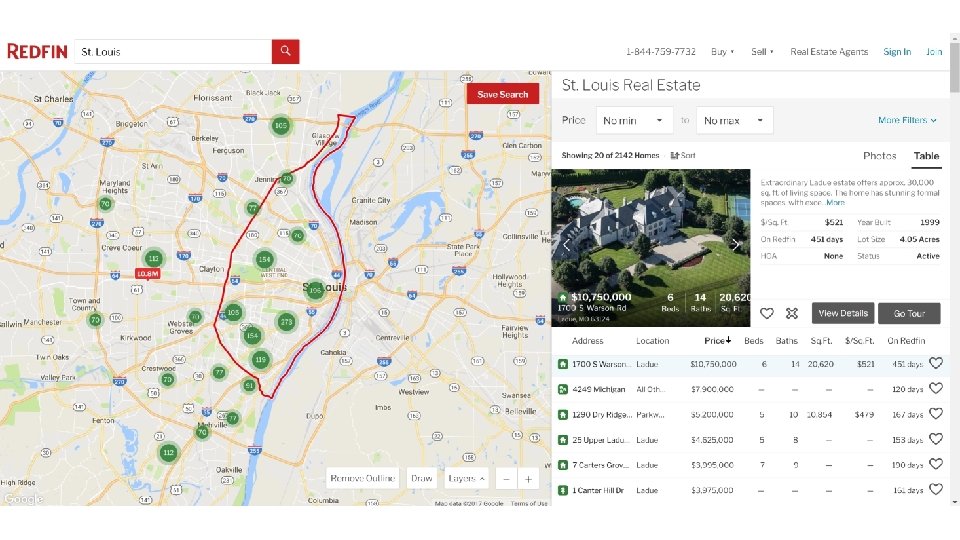

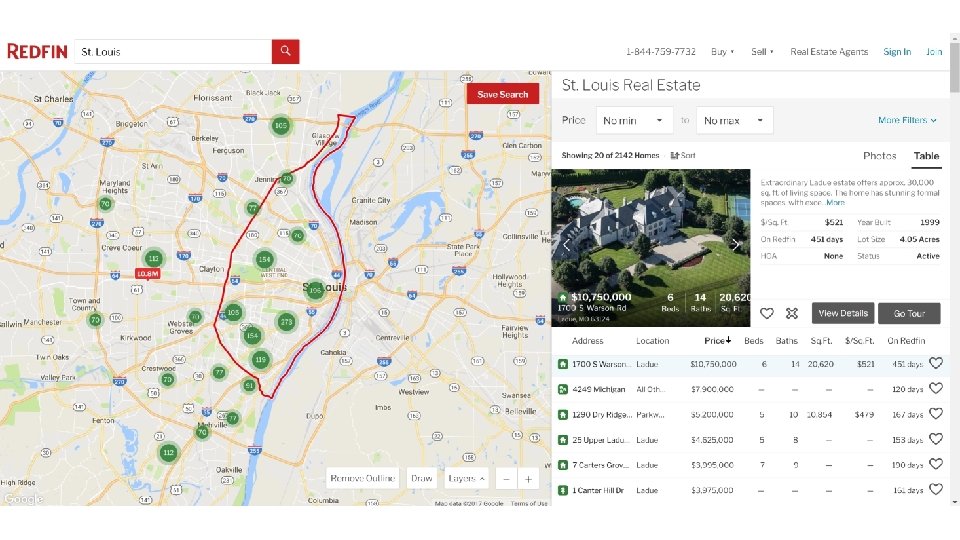

So What’s the Problem?

- Consider the problem of buying a place in STL
- Let’s go to Redfin and see what it says about our choice set…

Hmm…

- That didn’t seem so bad?
- I think we could plausibly estimate a model with 2,142 choices
- But what if, instead of thinking about your decision at the margin, we modeled the utility functions of everyone who is currently located somewhere?
- Apparently there are 141,312 occupied housing units in STL
- What about some other important situations?
- What about your choice of a mate?

Maybe this starts to get a little harder

- The set of available mates in a given period of time is fairly large
- Population of Germany is about 83 million
- Assuming a uniform distribution of ages and that people live to 83, that is about one million people your age
- How many possible combinations of one million people?
- Answer: A LOT
- Many, many settings look like this, either with lots of choices outright or in combination with timing, location, bundling, etc.
- So what is the problem? Two-fold:
  1. Computational time of running a model with millions of choices
  2. The probabilities get really, really small, which can cause numerical problems
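To make the second problem concrete, here is a minimal sketch of choice probabilities with a very large choice set. It assumes a multinomial logit purely for illustration (Chiong and Shum's approach does not require logit), and the choice-set size and utilities are made up. Working in logs with the maximum utility subtracted keeps the computation stable even when individual probabilities are tiny.

```python
import numpy as np

def logit_log_probs(utilities):
    """Log choice probabilities of a multinomial logit, computed stably."""
    u = np.asarray(utilities, dtype=float)
    shifted = u - u.max()                       # largest term becomes exp(0) = 1
    return shifted - np.log(np.exp(shifted).sum())

rng = np.random.default_rng(0)
n_choices = 1_000_000                           # hypothetical choice-set size
u = rng.normal(scale=5.0, size=n_choices)       # made-up mean utilities
log_p = logit_log_probs(u)
probs = np.exp(log_p)

# Probabilities sum to one, but the smallest ones are extremely close to zero.
print(probs.sum(), probs.min(), log_p.min())
```

A likelihood built from `log_p` stays finite, whereas a naive `np.exp(u) / np.exp(u).sum()` overflows as soon as some utility is large.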

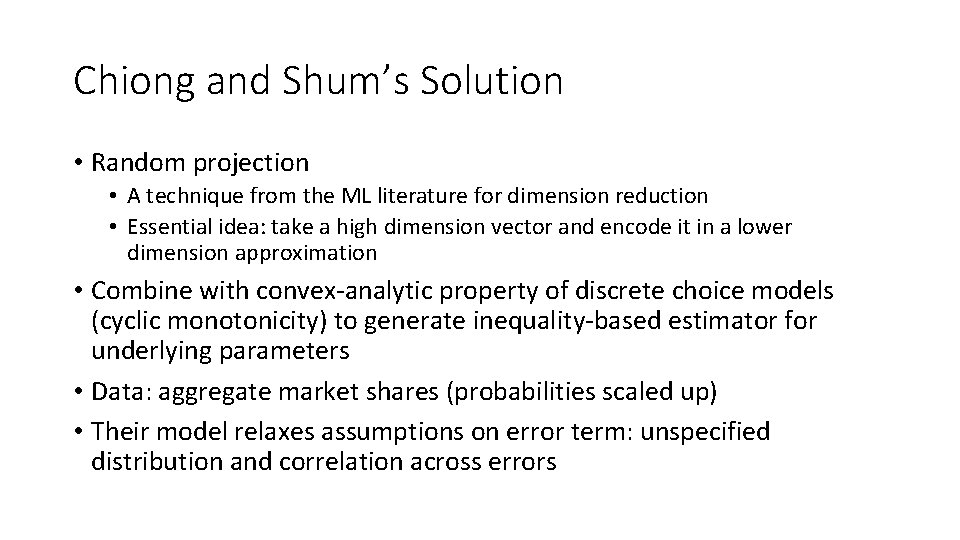

Chiong and Shum’s Solution

- Random projection
  - A technique from the ML literature for dimension reduction
  - Essential idea: take a high-dimensional vector and encode it in a lower-dimensional approximation
- Combine with a convex-analytic property of discrete choice models (cyclic monotonicity) to generate an inequality-based estimator for the underlying parameters
- Data: aggregate market shares (probabilities scaled up)
- Their model relaxes assumptions on the error term: the distribution is left unspecified and errors may be correlated

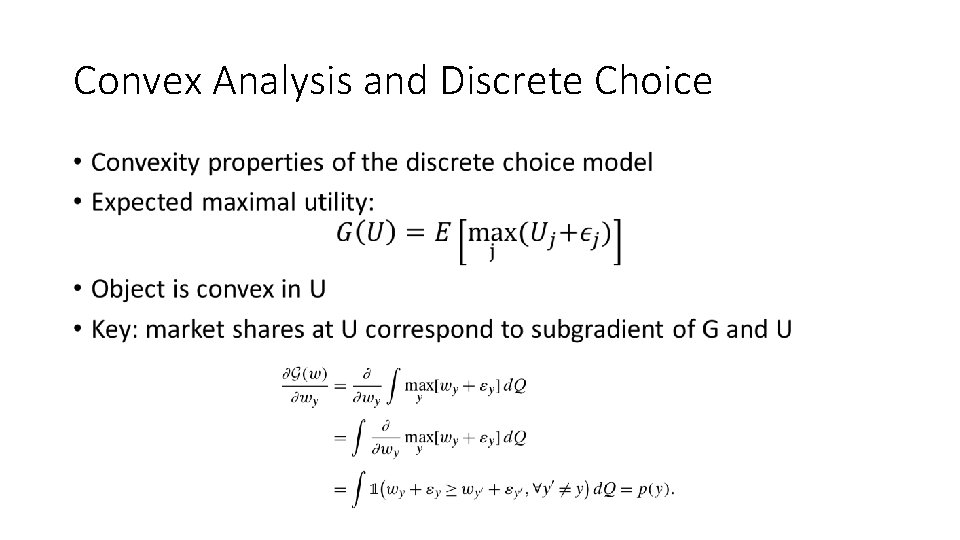

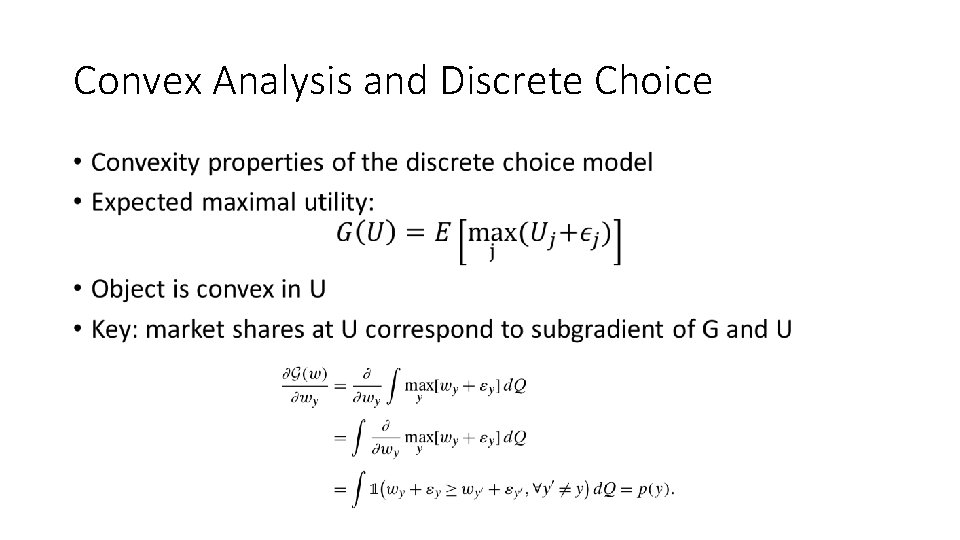

Convex Analysis and Discrete Choice

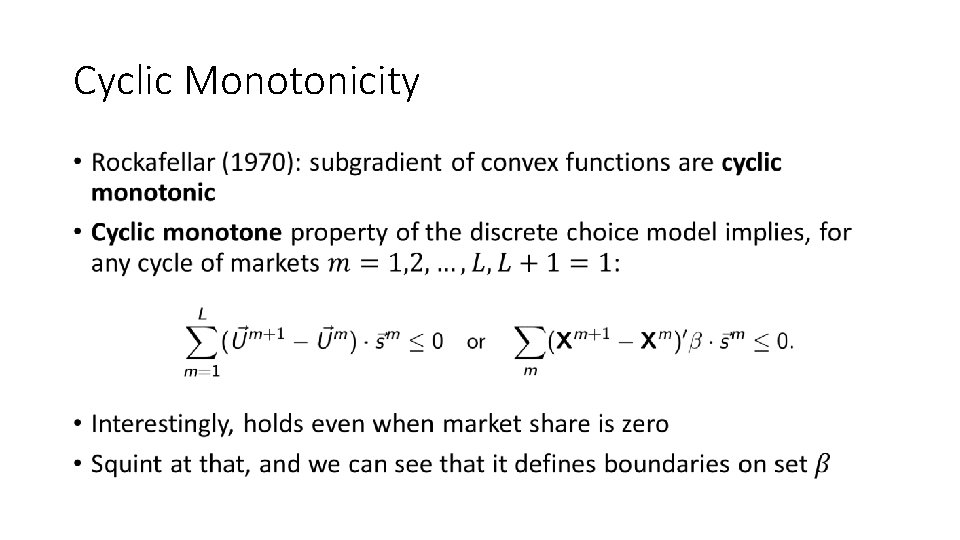

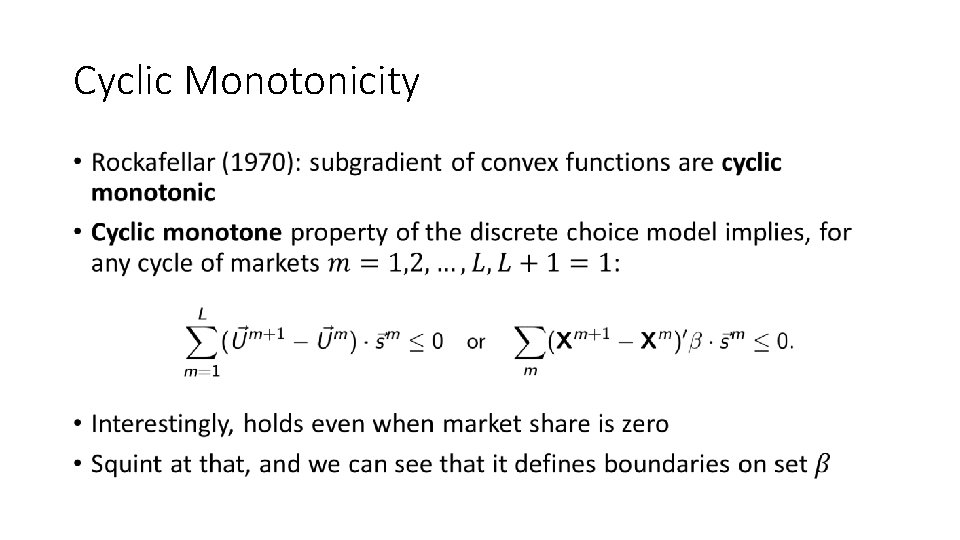

Cyclic Monotonicity
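For reference, a sketch of the standard cyclic monotonicity inequality as it is usually written for discrete choice. The notation is an assumption and may differ from the slides: $u^{l}$ is the vector of mean utilities in market $l$, $p^{l}$ the vector of choice probabilities, and $G(u) = E[\max_j (u_j + \varepsilon_j)]$ the convex social surplus function with $p^{l} = \nabla G(u^{l})$.

```latex
\sum_{l=1}^{L} \left( u^{l+1} - u^{l} \right) \cdot p^{l} \;\le\; 0,
\qquad u^{L+1} \equiv u^{1},
\qquad \text{for every cycle of markets } 1, \dots, L.
```

The inequality follows by summing the convexity bound $G(u^{l+1}) \ge G(u^{l}) + p^{l} \cdot (u^{l+1} - u^{l})$ around the cycle, so that the $G$ terms cancel. With $u^{l} = X^{l}\beta$, each inequality is linear in $\beta$.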

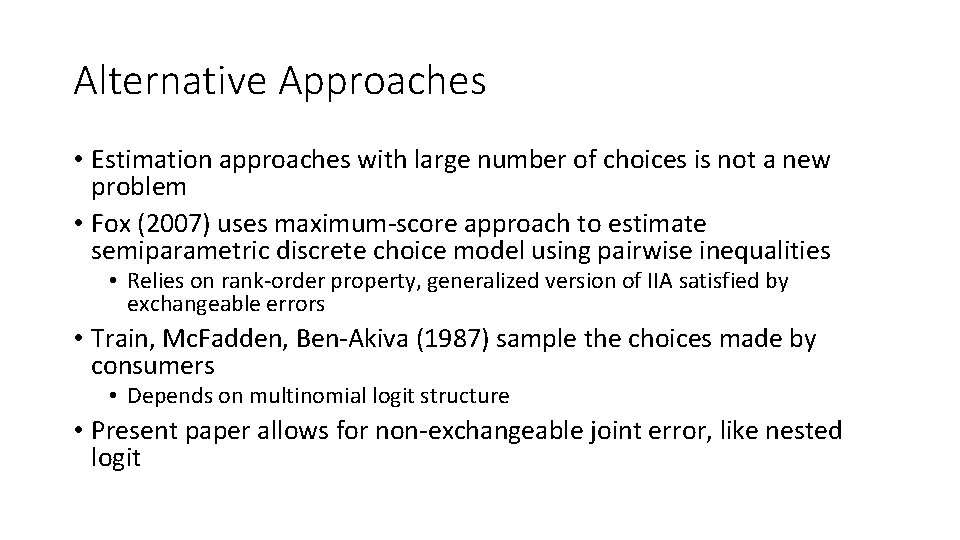

Alternative Approaches

- Estimation with a large number of choices is not a new problem
- Fox (2007) uses a maximum-score approach to estimate a semiparametric discrete choice model using pairwise inequalities
  - Relies on the rank-order property, a generalized version of IIA satisfied by exchangeable errors
- Train, McFadden, and Ben-Akiva (1987) sample the choices made by consumers
  - Depends on the multinomial logit structure
- The present paper allows for non-exchangeable joint errors, as in nested logit

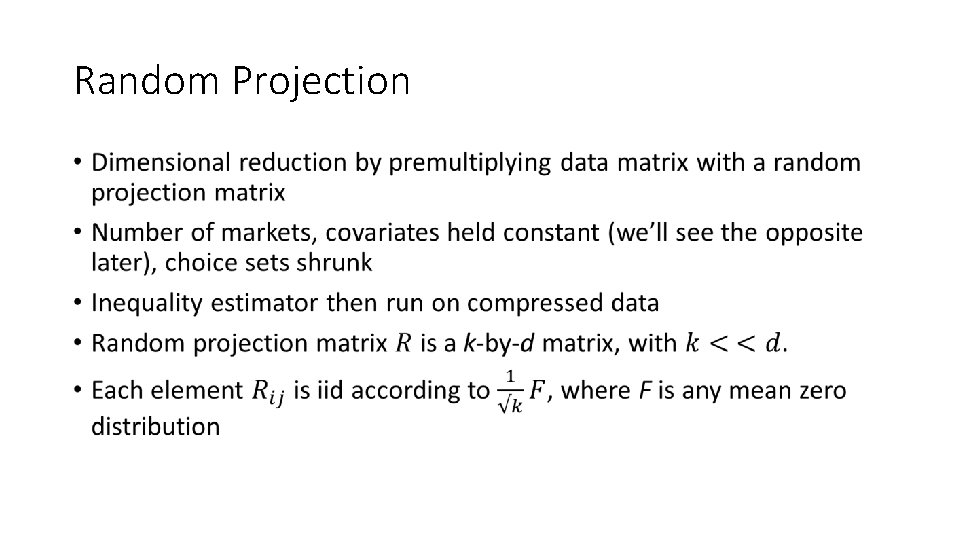

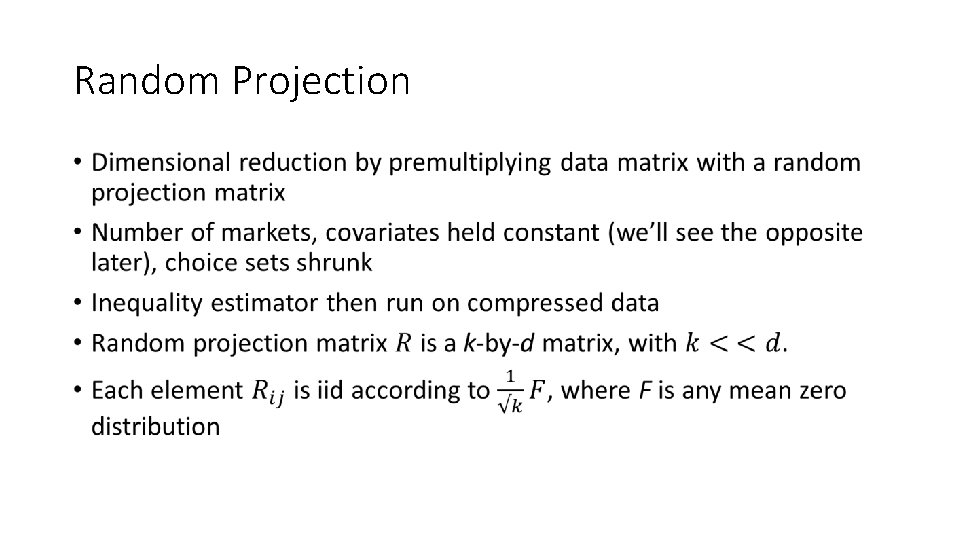

Random Projection

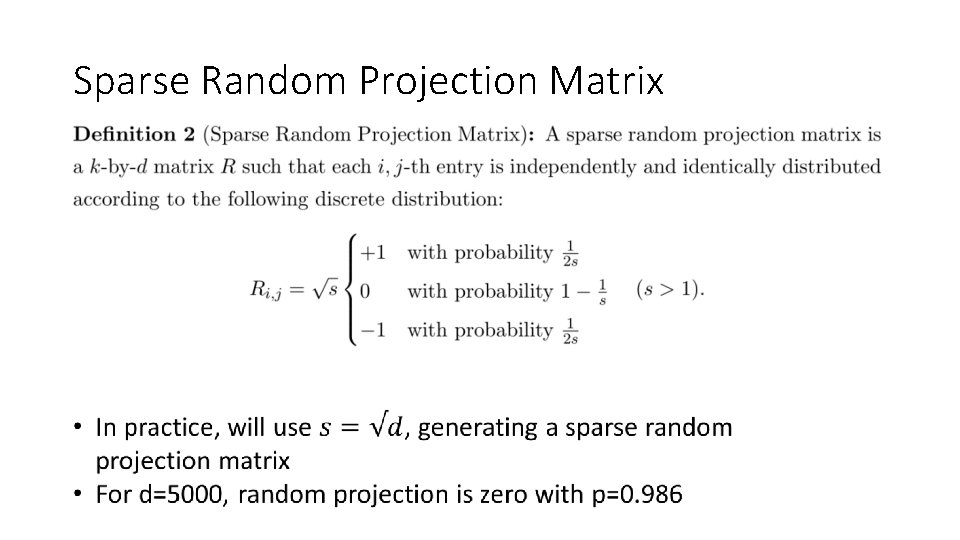

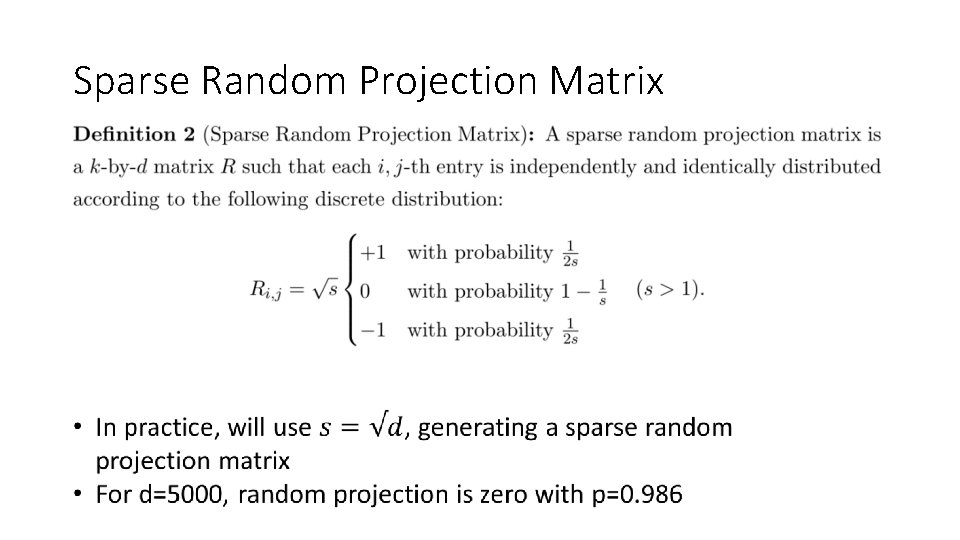

Sparse Random Projection Matrix
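A minimal sketch of one common sparse construction, in which each entry is $\sqrt{s}$ times $+1$, $0$, or $-1$ with probabilities $1/(2s)$, $1 - 1/s$, $1/(2s)$. The choice $s = \sqrt{d}$, the $1/\sqrt{k}$ scaling, and the sizes below are assumptions for illustration, not necessarily the exact definition on the slide.

```python
import numpy as np

def sparse_projection(k, d, s, rng):
    """k x d sparse random projection; entries have variance 1/k."""
    probs = [1 / (2 * s), 1 - 1 / s, 1 / (2 * s)]
    signs = rng.choice([1.0, 0.0, -1.0], size=(k, d), p=probs)
    return np.sqrt(s / k) * signs

rng = np.random.default_rng(0)
d, k = 5_000, 400                  # made-up original and reduced dimensions
s = np.sqrt(d)                     # assumed sparsity parameter
R = sparse_projection(k, d, s, rng)

x = rng.normal(size=d)             # arbitrary test vector
ratio = np.sum((R @ x) ** 2) / np.sum(x ** 2)   # should be near 1
nonzero_share = np.mean(R != 0)                 # should be near 1/s
print(ratio, nonzero_share)
```

Because only about a fraction $1/s$ of the entries are nonzero, the projection is cheap to store and apply while still preserving squared norms in expectation.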

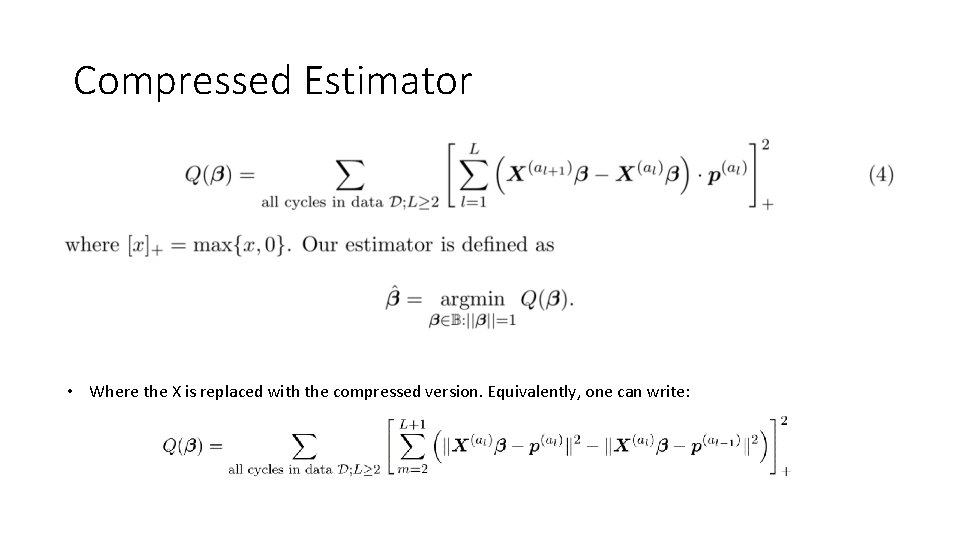

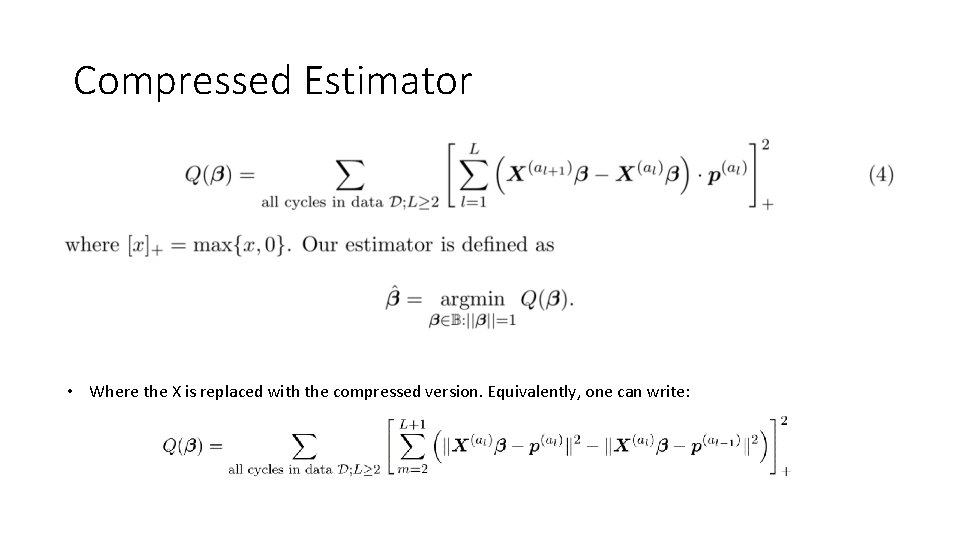

Compressed Estimator

- Where the X is replaced with the compressed version. Equivalently, one can write:

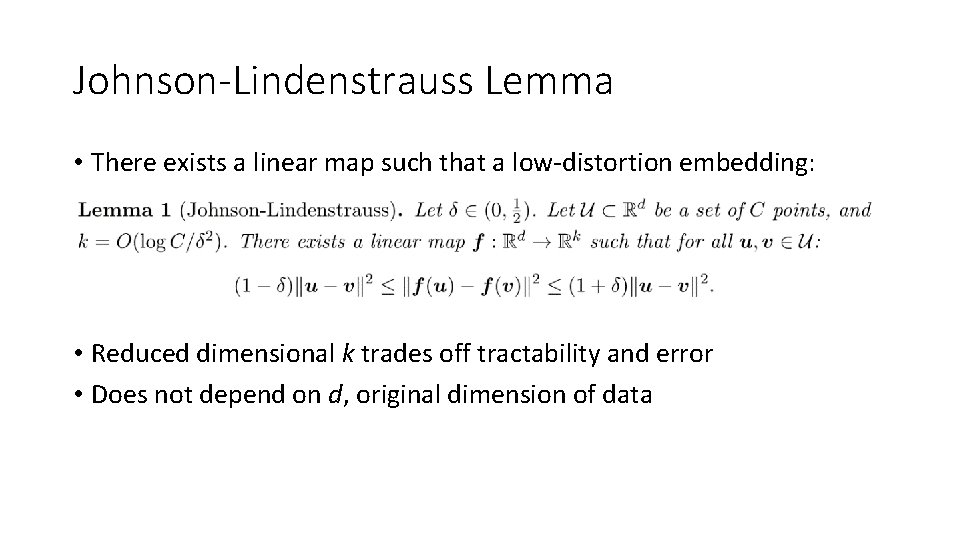

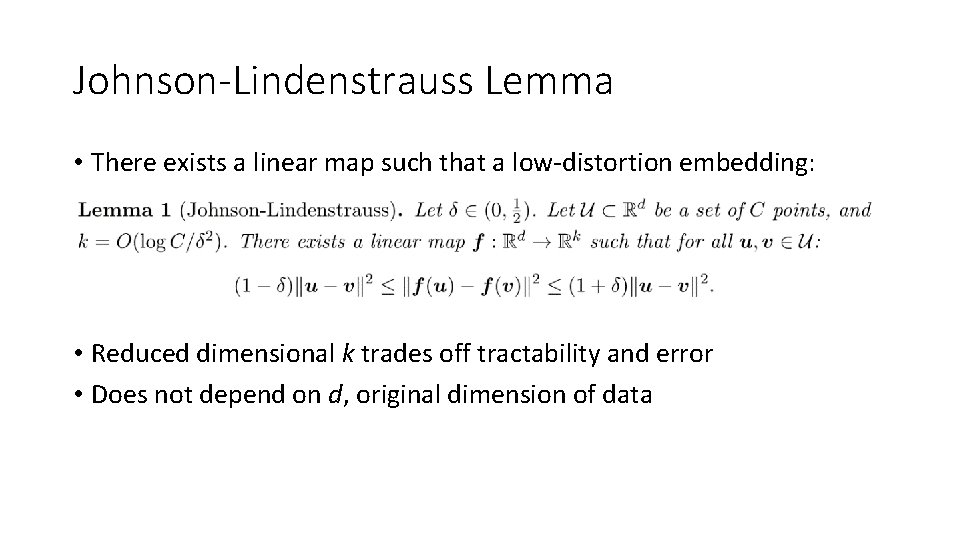
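The idea of swapping compressed data into the criterion can be sketched as follows. This is an illustration under stated assumptions, not the authors' code: the criterion sums squared violations of cyclic monotonicity over cycles of length 2 only, the same matrix `R` is assumed to be applied to both covariates and shares, `R` is a dense Gaussian stand-in rather than a sparse matrix, and the data are simulated from a logit with made-up parameters.

```python
import numpy as np

def cm_criterion(beta, X, shares):
    """Sum of squared cyclic-monotonicity violations over all 2-cycles.

    X: list of (rows x p) covariate matrices, one per market.
    shares: list of length-rows share vectors, one per market.
    """
    utilities = [x @ beta for x in X]
    total = 0.0
    for a in range(len(X)):
        for b in range(a + 1, len(X)):
            # Cycle a -> b -> a: (u_b - u_a).s_a + (u_a - u_b).s_b <= 0
            violation = (utilities[b] - utilities[a]) @ (shares[a] - shares[b])
            total += max(violation, 0.0) ** 2
    return total

rng = np.random.default_rng(0)
n_markets, d, p, k = 6, 2_000, 2, 200           # made-up sizes
beta_true = np.array([1.0, -0.5])               # made-up parameter
X = [rng.normal(size=(d, p)) for _ in range(n_markets)]
shares = []
for x in X:
    u = x @ beta_true
    e = np.exp(u - u.max())
    shares.append(e / e.sum())                  # logit shares, illustration only

R = rng.normal(size=(k, d)) / np.sqrt(k)        # dense Gaussian projection (assumption)
X_c = [R @ x for x in X]                        # k x p instead of d x p
shares_c = [R @ s for s in shares]              # length k instead of d

print(cm_criterion(beta_true, X, shares))       # full data
print(cm_criterion(beta_true, X_c, shares_c))   # compressed data
```

The same function is evaluated on both data sets; only the row dimension of the inputs changes from `d` to `k`, which is where the computational saving comes from.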

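Johnson-Lindenstrauss Lemma

- There exists a linear map into k dimensions that is a low-distortion embedding, approximately preserving pairwise distances:
- The reduced dimension k trades off tractability against approximation error
- The required k does not depend on d, the original dimension of the data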

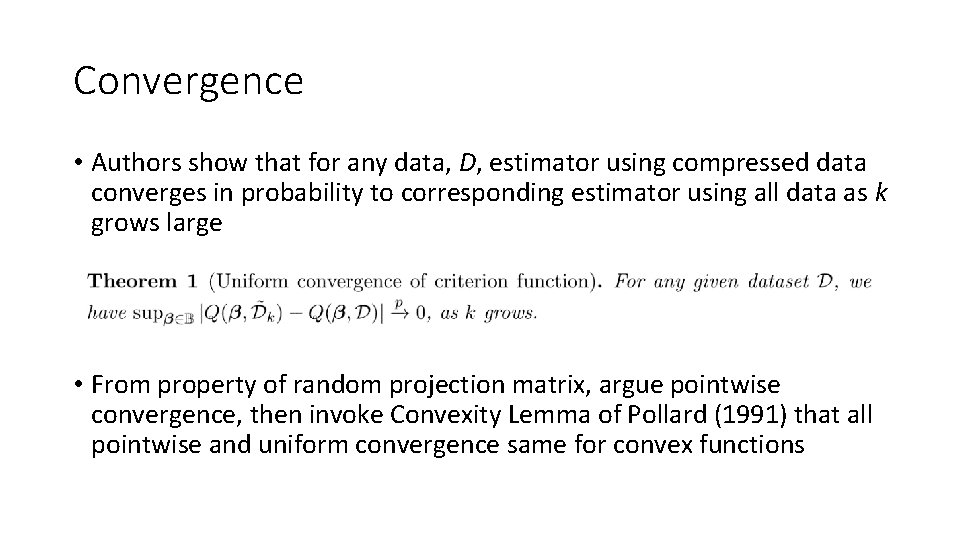

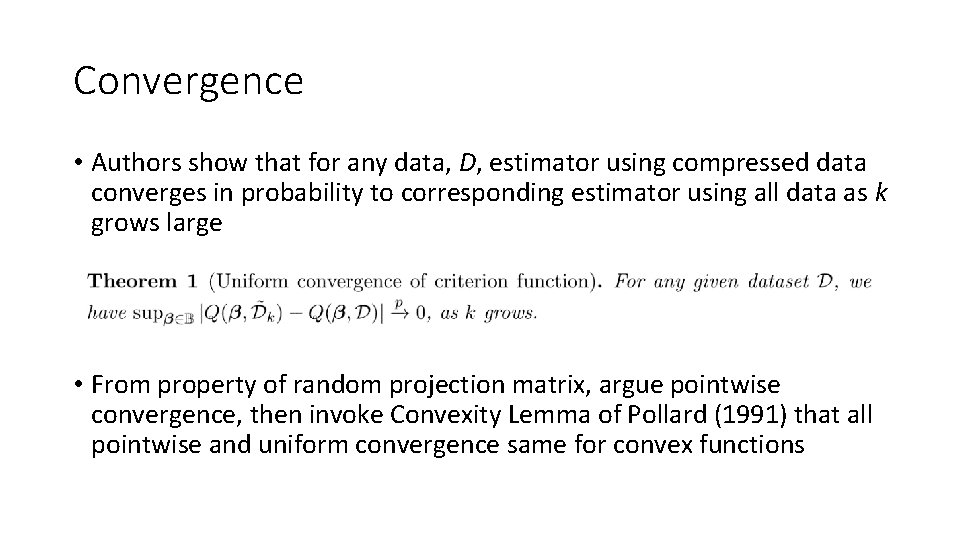

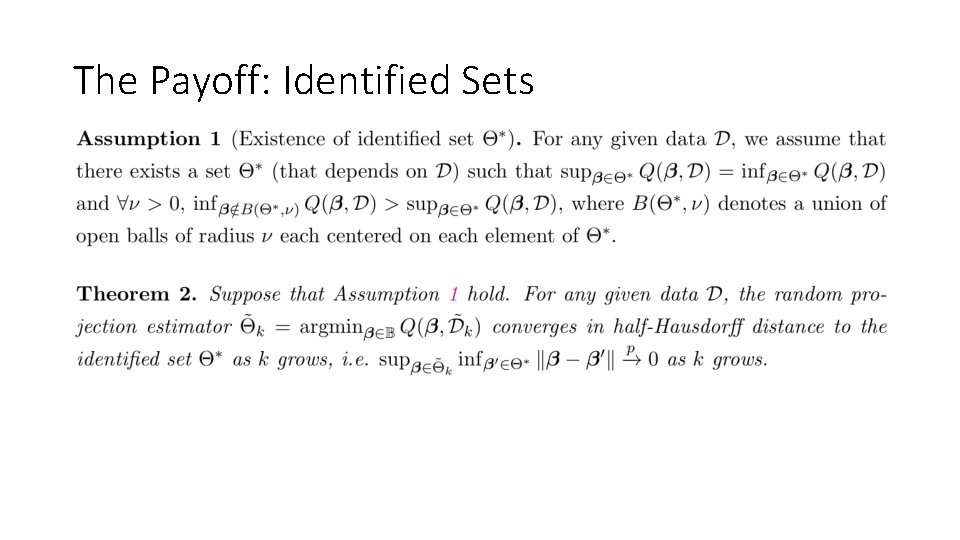
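A small numerical check of the claim that distortion is governed by k rather than d. This is a sketch with made-up sizes, using a dense Gaussian projection as a stand-in (an assumption, chosen for simplicity): it projects a handful of points from two different original dimensions down to the same k and reports the worst relative error in squared pairwise distances.

```python
import numpy as np

def max_distortion(n_points, d, k, rng):
    """Largest relative error in squared pairwise distances after projecting d -> k."""
    pts = rng.normal(size=(n_points, d))
    R = rng.normal(size=(k, d)) / np.sqrt(k)    # dense Gaussian projection (assumption)
    proj = pts @ R.T
    worst = 0.0
    for i in range(n_points):
        for j in range(i + 1, n_points):
            orig = np.sum((pts[i] - pts[j]) ** 2)
            comp = np.sum((proj[i] - proj[j]) ** 2)
            worst = max(worst, abs(comp / orig - 1.0))
    return worst

rng = np.random.default_rng(0)
distortions = {d: max_distortion(20, d, 500, rng) for d in (1_000, 10_000)}
for d, worst in distortions.items():
    print(d, round(worst, 3))
```

With k held fixed, the worst-case distortion should be of similar magnitude for both values of d; shrinking k makes it larger.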

Convergence

- Authors show that, for any data D, the estimator using compressed data converges in probability to the corresponding estimator using all the data as k grows large
- From the properties of the random projection matrix, they argue pointwise convergence, then invoke the Convexity Lemma of Pollard (1991), under which pointwise convergence of convex functions implies uniform convergence on compact sets

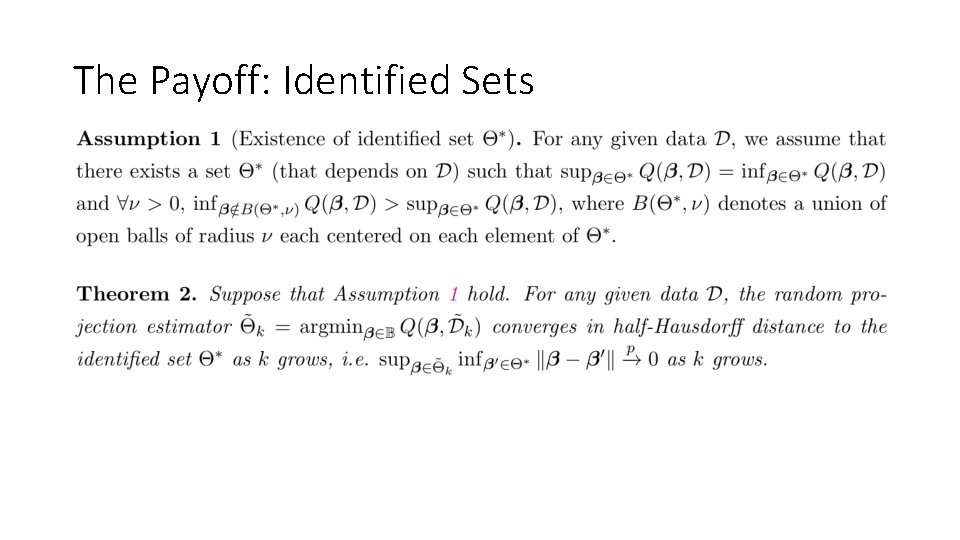

The Payoff: Identified Sets

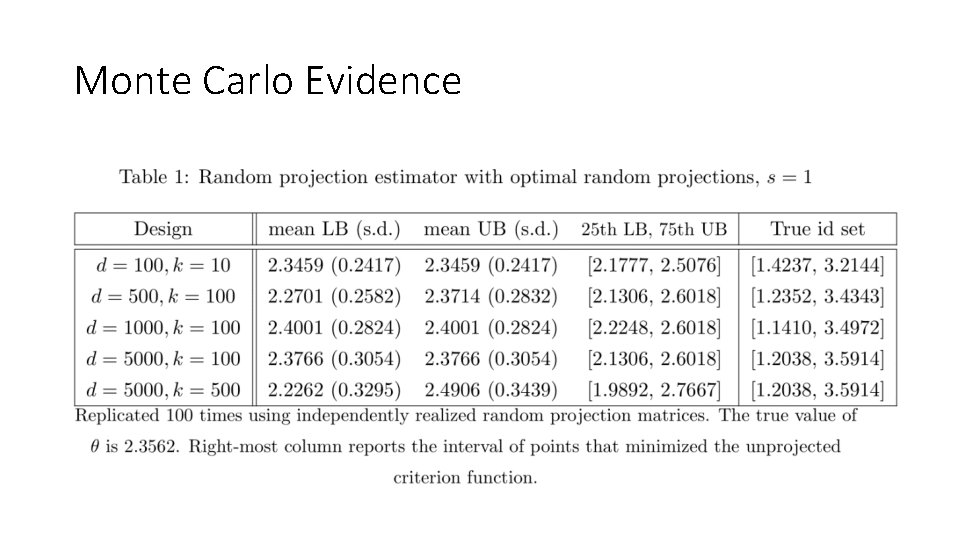

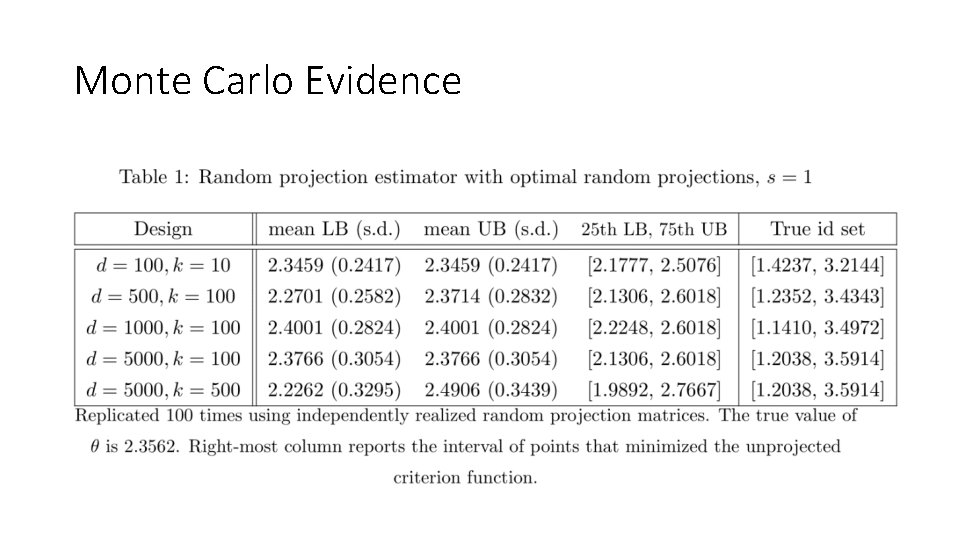

Monte Carlo Evidence

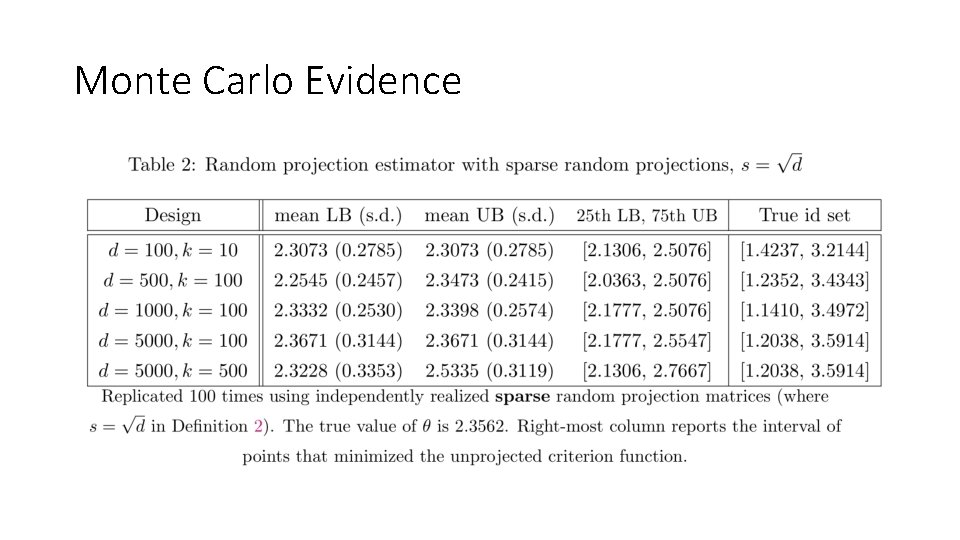

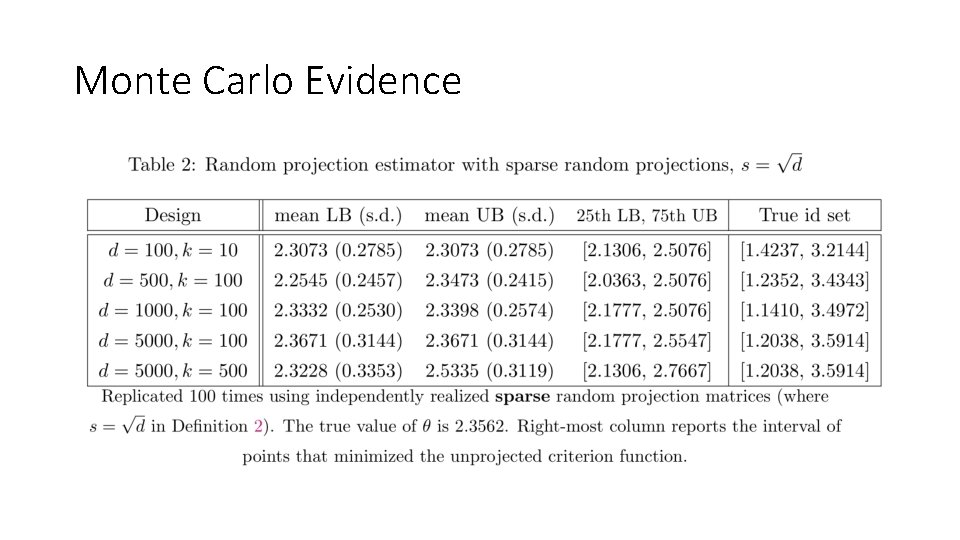

Monte Carlo Evidence

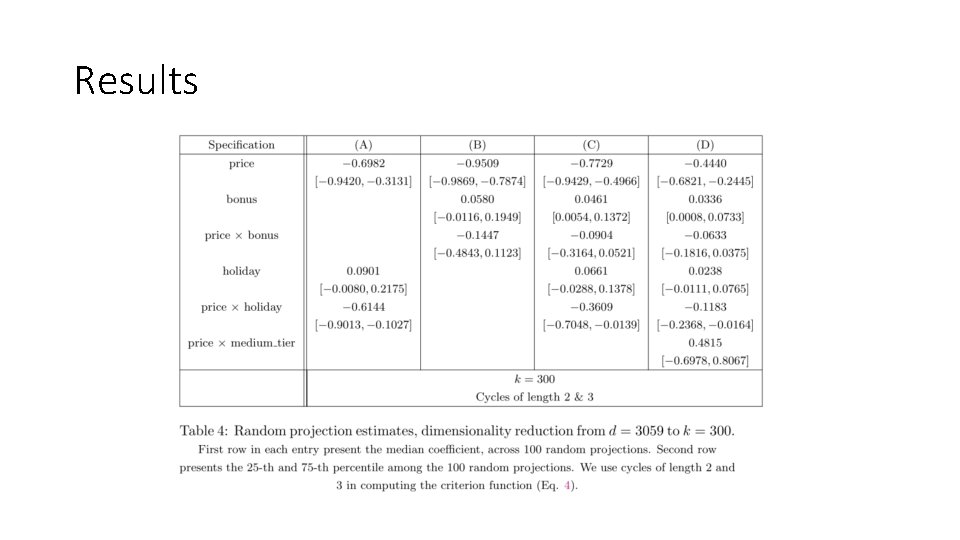

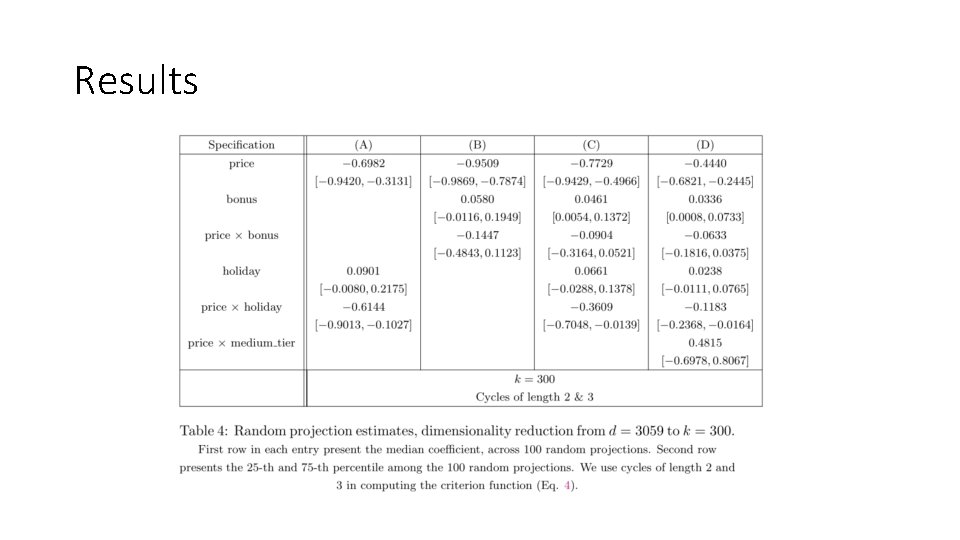

Application: Supermarket Scanner Data

Results

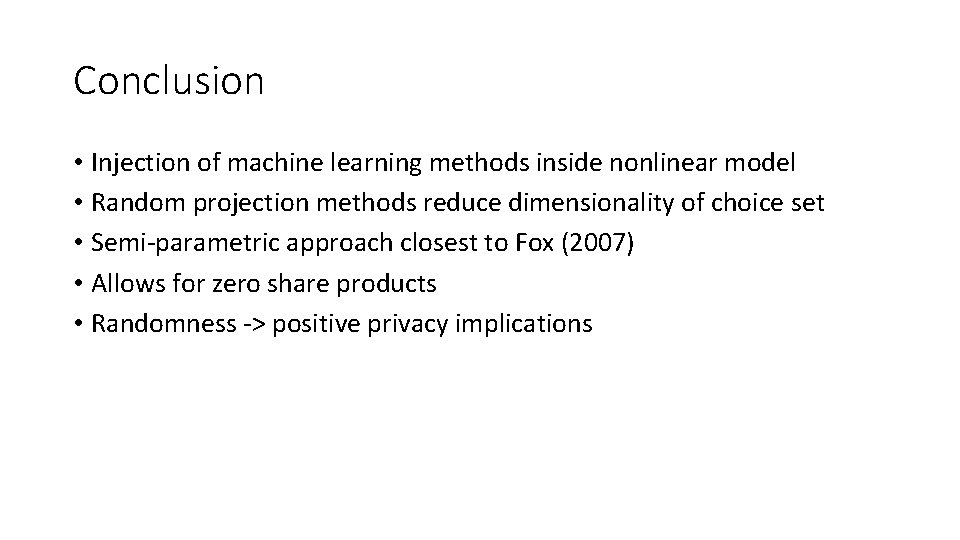

Conclusion

- Injection of machine learning methods into a nonlinear model
- Random projection methods reduce the dimensionality of the choice set
- Semi-parametric approach, closest to Fox (2007)
- Allows for zero-share products
- Randomness of the projection has positive privacy implications