Lecture 3 Analysis of Algorithms, Part II

Plan for today
- Finish Big Oh: more motivation and examples, and some limit calculations.
- Little Oh and Theta notation.
- Start recurrences:
  - Ex. 1, Ch. 1 in the textbook
  - Tower of Hanoi
  - Binary search recurrence

An example of proof by induction for a recursive program
- Ex. 1, Ch. 1 in the textbook
- Function: `int g(int n)`: if `n <= 1` return `n`; else return `5*g(n-1) - 6*g(n-2)`
- Prove that $g(n) = 3^n - 2^n$ for all $n \ge 0$.
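The claim only holds if the recurrence subtracts the second term, i.e. `g(n) = 5*g(n-1) - 6*g(n-2)`: with `+ 6*g(n-2)` we get $g(3) = 31$, but $3^3 - 2^3 = 19$. The inductive step rests on $5 \cdot 3^{n-1} - 6 \cdot 3^{n-2} = 3^{n-2}(15 - 6) = 3^n$ and, likewise, $5 \cdot 2^{n-1} - 6 \cdot 2^{n-2} = 2^{n-2}(10 - 6) = 2^n$. Below is a minimal Python transcription for a numeric sanity check (not a proof); the names `g` and `closed_form` are illustrative.

```python
def g(n: int) -> int:
    # Direct transcription of the recursive function, with the minus sign
    # the closed form requires. Plain recursion: exponentially many calls.
    if n <= 1:
        return n
    return 5 * g(n - 1) - 6 * g(n - 2)


def closed_form(n: int) -> int:
    # The claimed closed form g(n) = 3^n - 2^n.
    return 3**n - 2**n


# Spot-check the claim for small n (a check, not a proof).
for n in range(16):
    assert g(n) == closed_form(n), n

print([g(n) for n in range(6)])  # [0, 1, 5, 19, 65, 211]
```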

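More about Big Oh
- For positive functions: if $\lim_{n\to\infty} a(n)/b(n) = c$ for some finite constant $c$, then $a(n) \le (c+1)\,b(n)$ for all sufficiently large $n$, so $a(n) = O(b(n))$. The converse does not hold in general: $a(n) = O(b(n))$ only requires some constant $c$ with $a(n) \le c\,b(n)$ for all large $n$, and the limit need not exist. For example, $a(n) = n(2 + (-1)^n) \le 3n$, yet $a(n)/n$ alternates between 1 and 3.
- The inequality form lets us prove Big Oh bounds even when we do not know how to compute the limit.
- Example: the harmonic numbers satisfy $H(n) = 1 + 1/2 + 1/3 + 1/4 + \dots + 1/n \le 1 + 1 + \dots + 1 = n$, hence $H(n) = O(n)$.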
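A small numeric illustration of the harmonic-number bound, assuming exact rational arithmetic via Python's standard `fractions.Fraction`. It confirms $H(n) \le n$ for a few values and shows that the ratio $H(n)/n$ shrinks, i.e. the $O(n)$ bound is valid but loose. The helper name `harmonic` is illustrative.

```python
from fractions import Fraction


def harmonic(n: int) -> Fraction:
    """Exact H(n) = 1 + 1/2 + ... + 1/n as a rational number."""
    return sum((Fraction(1, k) for k in range(1, n + 1)), Fraction(0))


for n in (1, 2, 10, 100):
    h = harmonic(n)
    assert h <= n  # the crude bound H(n) <= n
    # H(n)/n decreases toward 0, so the bound H(n) = O(n) is far from tight.
    print(n, float(h), float(h) / n)
```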

Complexity classes
- Big Oh lets us group algorithms whose running times are asymptotically comparable.
- Most important in practice (tractable problems):
  - Logarithmic and poly-logarithmic: $\log n$, $\log^k n$
  - Polynomial: $n, n^2, n^3, \dots, n^k, \dots$
- Intractable problems:
  - Exponential: $2^n, 3^n, 2^{n^2}, \dots$
  - Higher (super-exponential): $2^{2^n}, 2^{n^n}, \dots$

Most efficient: logarithmic and poly-logarithmic
- Functions that are $O(\log n)$ or bounded by a polynomial in $\log n$, i.e. $O(\log^k n)$.
- Examples:
  - The base of the logarithm is irrelevant inside Big Oh: $\log_b n = \log n / \log b = O(\log n)$ for any constant base $b > 1$.
  - Harmonic series: $1 + 1/2 + 1/3 + \dots + 1/n = O(\log n)$.
- The most efficient search data structures have search time $O(\log n)$ or $O(\log^2 n)$.

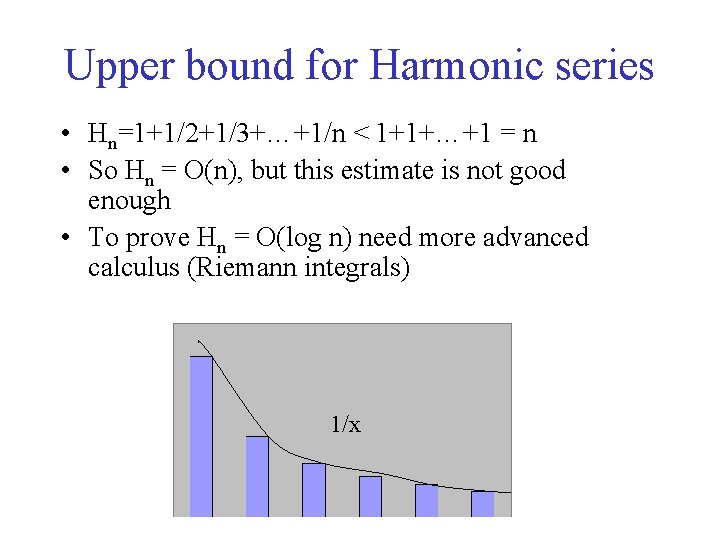

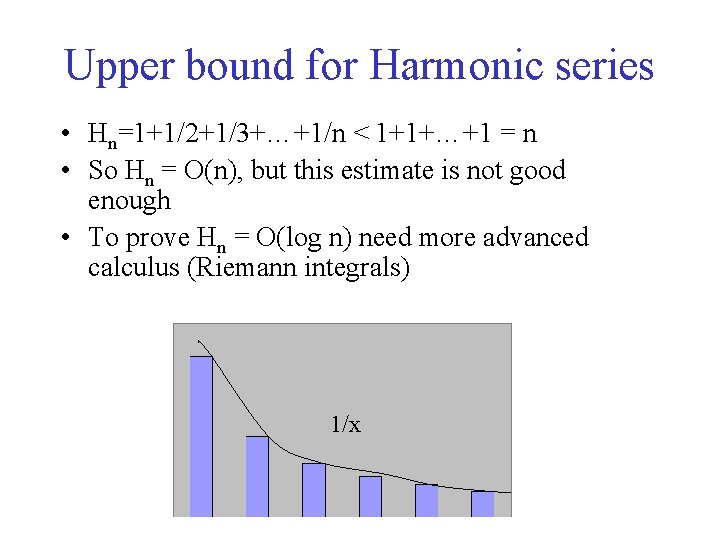

Upper bound for the harmonic series
- $H_n = 1 + 1/2 + 1/3 + \dots + 1/n \le 1 + 1 + \dots + 1 = n$
- So $H_n = O(n)$, but this estimate is not tight enough.
- Proving $H_n = O(\log n)$ needs more advanced calculus: compare the sum with the area under the curve $1/x$ (Riemann integrals).
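The integral comparison can be written out as follows. It uses only that $1/x$ is decreasing on $[1, \infty)$: on $[k-1, k]$ we have $1/x \ge 1/k$, and on $[k, k+1]$ we have $1/x \le 1/k$.

```latex
H_n = 1 + \sum_{k=2}^{n} \frac{1}{k}
    \le 1 + \sum_{k=2}^{n} \int_{k-1}^{k} \frac{dx}{x}
    = 1 + \int_{1}^{n} \frac{dx}{x}
    = 1 + \ln n

H_n = \sum_{k=1}^{n} \frac{1}{k}
    \ge \sum_{k=1}^{n} \int_{k}^{k+1} \frac{dx}{x}
    = \int_{1}^{n+1} \frac{dx}{x}
    = \ln(n+1)
```

Together, $\ln(n+1) \le H_n \le 1 + \ln n$, so $H_n = \Theta(\log n)$.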

Examples
- Binary search is the main paradigm: search in $O(\log n)$ time per operation.
  - Binary search trees: worst-case search time is linear, but on average (over random insertion orders) search time is $O(\log n)$
  - B-trees (used in databases) and B+ trees
  - AVL trees
  - Red-black trees
  - Splay trees, finger trees
- Dynamic convex hull algorithms: $O(\log^2 n)$ time per update operation
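A minimal binary search over a sorted Python list, written to count probes. Each probe halves the remaining range, which gives the recurrence $T(n) = T(n/2) + O(1)$ and at most $\lfloor \log_2 n \rfloor + 1$ probes (equal to `n.bit_length()` for $n \ge 1$). The function name and the probe counter are illustrative additions.

```python
from typing import List, Tuple


def binary_search(a: List[int], target: int) -> Tuple[int, int]:
    """Search sorted list a; return (index of target or -1, number of probes)."""
    lo, hi = 0, len(a) - 1
    probes = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1
        if a[mid] == target:
            return mid, probes
        if a[mid] < target:
            lo = mid + 1   # discard the left half, including mid
        else:
            hi = mid - 1   # discard the right half, including mid
    return -1, probes


evens = list(range(0, 20, 2))        # [0, 2, 4, ..., 18]
print(binary_search(evens, 8))       # found at index 4
print(binary_search(evens, 7))       # not present: index -1
```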

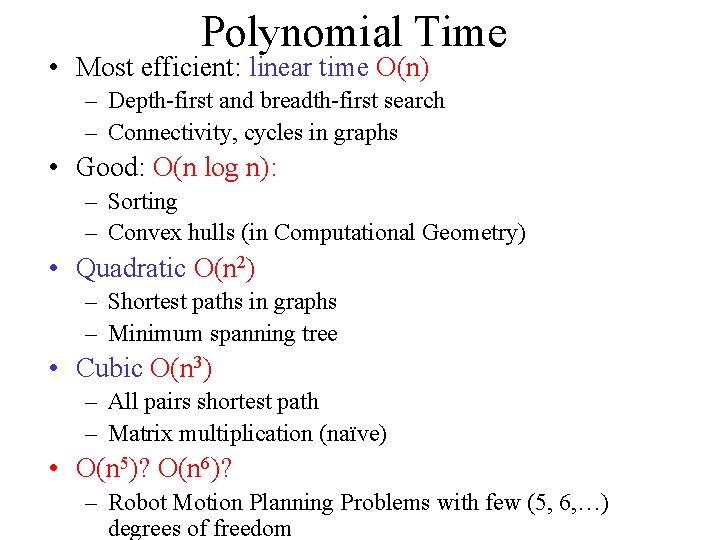

Polynomial time
- Most efficient: linear time $O(n)$; for a graph with $n$ vertices and $m$ edges, $O(n + m)$
  - Depth-first and breadth-first search
  - Connectivity, cycles in graphs
- Good: $O(n \log n)$
  - Sorting
  - Convex hulls (in computational geometry)
- Quadratic: $O(n^2)$
  - Shortest paths in graphs
  - Minimum spanning tree
- Cubic: $O(n^3)$
  - All-pairs shortest paths
  - Matrix multiplication (naïve)
- $O(n^5)$? $O(n^6)$?
  - Robot motion planning problems with few (5, 6, …) degrees of freedom
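A minimal breadth-first search sketch, assuming the graph is given as an adjacency-list dictionary (a representation chosen for illustration). Each vertex is enqueued at most once and each adjacency list is scanned at most once, which is where the $O(n + m)$ bound comes from. The same routine answers connectivity: the graph is connected iff BFS from any vertex reaches all $n$ vertices.

```python
from collections import deque
from typing import Dict, List


def bfs_distances(adj: Dict[int, List[int]], source: int) -> Dict[int, int]:
    """Return the number of edges on a shortest path from source to each reachable vertex."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj.get(u, []):      # each adjacency list scanned once
            if v not in dist:         # each vertex enqueued at most once
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


adj = {0: [1, 2], 1: [0, 3], 2: [0], 3: [1], 4: []}
reached = bfs_distances(adj, 0)
print(reached)                  # vertex 4 is unreachable from 0
print(len(reached) == len(adj)) # connected? False
```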

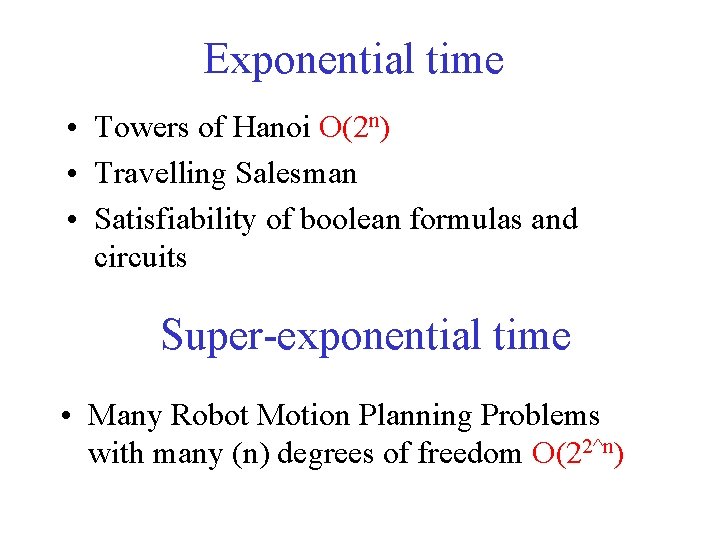

Exponential time
- Towers of Hanoi: $O(2^n)$
- Travelling salesman (no polynomial-time algorithm is known)
- Satisfiability of Boolean formulas and circuits (no polynomial-time algorithm is known)

Super-exponential time
- Many robot motion planning problems with many ($n$) degrees of freedom: $O(2^{2^n})$
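A minimal recursive Towers of Hanoi sketch. To move $n$ disks we move $n-1$ disks out of the way, move the largest disk, then move the $n-1$ disks back on top, so the number of moves satisfies $M(n) = 2M(n-1) + 1$ with $M(0) = 0$, giving $M(n) = 2^n - 1$. The peg labels `"A"`, `"B"`, `"C"` and the function name are illustrative.

```python
from typing import List, Tuple


def hanoi(n: int, src: str = "A", dst: str = "C", via: str = "B") -> List[Tuple[str, str]]:
    """Return the list of (from_peg, to_peg) moves that transfer n disks from src to dst."""
    if n == 0:
        return []
    return (
        hanoi(n - 1, src, via, dst)    # park the top n-1 disks on the spare peg
        + [(src, dst)]                 # move the largest disk
        + hanoi(n - 1, via, dst, src)  # put the n-1 disks back on top of it
    )


print(hanoi(2))                              # 3 moves
print([len(hanoi(n)) for n in range(1, 6)])  # 2^n - 1 moves each
```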

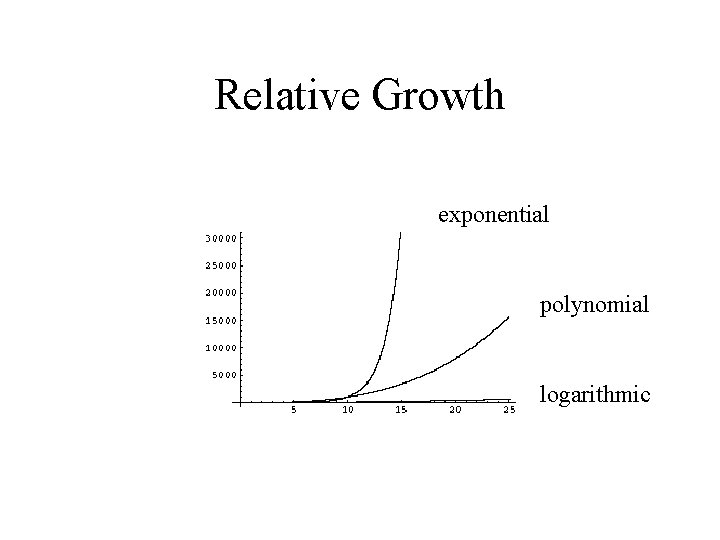

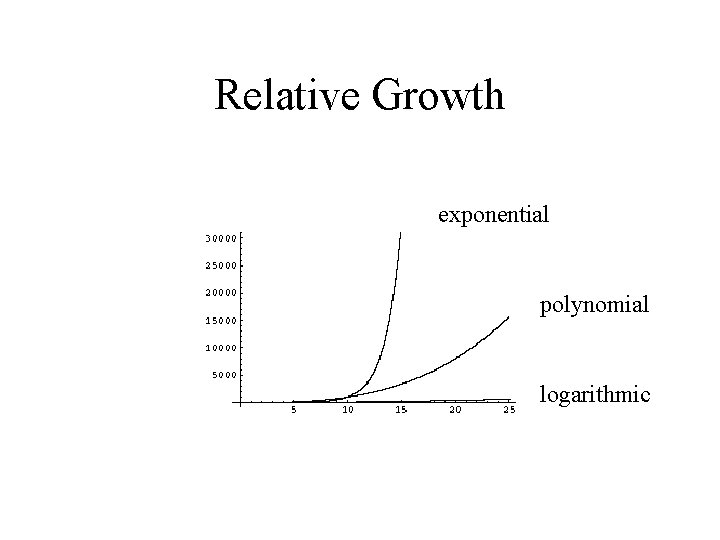

Figure: relative growth of exponential, polynomial, and logarithmic functions.

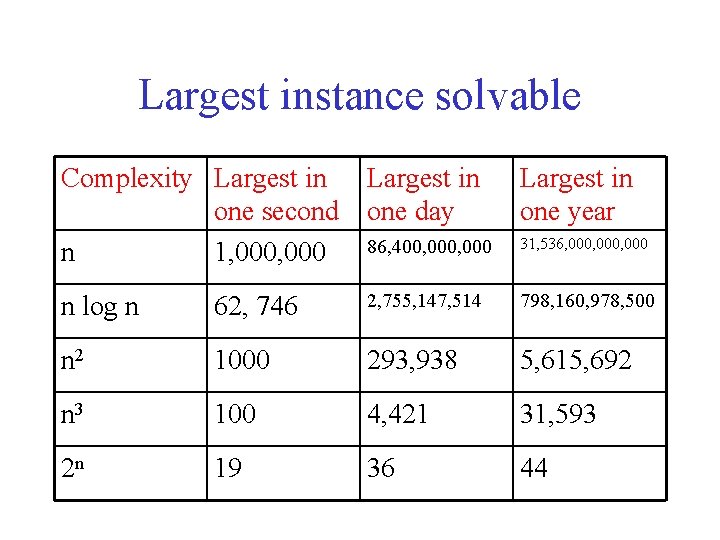

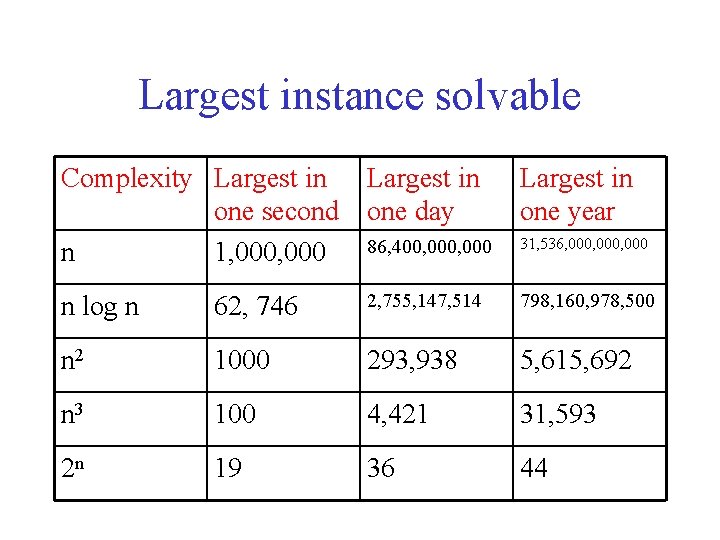

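Largest instance solvable, assuming $10^6$ basic operations per second and a 365-day year:

| Complexity | Largest in one second | Largest in one day | Largest in one year |
|---|---|---|---|
| $n$ | 1,000,000 | 86,400,000,000 | 31,536,000,000,000 |
| $n \log n$ | 62,746 | 2,755,147,514 | 798,160,978,500 |
| $n^2$ | 1,000 | 293,938 | 5,615,692 |
| $n^3$ | 100 | 4,420 | 31,593 |
| $2^n$ | 19 | 36 | 44 |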
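Table values like these can be recomputed with a short search, assuming a machine that executes $10^6$ basic operations per second (the rate the $n^2$, $n^3$ and $2^n$ rows imply) and $\log$ meaning $\log_2$. The sketch finds the largest $n$ with $f(n) \le$ budget by doubling and then binary search. It takes the floor, so for $n^3$ in one day it returns 4,420, since $4421^3 > 8.64 \times 10^{10}$. The names `largest_n`, `OPS_PER_SECOND` and `GROWTH` are illustrative.

```python
import math
from typing import Callable

OPS_PER_SECOND = 10**6                  # assumed machine speed
SECOND, DAY, YEAR = 1, 86_400, 365 * 86_400


def largest_n(f: Callable[[int], float], budget: float) -> int:
    """Largest n >= 1 with f(n) <= budget, assuming f is nondecreasing (0 if f(1) > budget)."""
    hi = 1
    while f(hi) <= budget:              # double until we overshoot the budget
        hi *= 2
    lo = hi // 2                        # invariant: f(lo) <= budget < f(hi)
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if f(mid) <= budget:
            lo = mid
        else:
            hi = mid
    return lo


GROWTH = {
    "n": lambda n: n,
    "n log n": lambda n: n * math.log2(n),
    "n^2": lambda n: n**2,
    "n^3": lambda n: n**3,
    "2^n": lambda n: 2**n,
}

for name, f in GROWTH.items():
    row = [largest_n(f, OPS_PER_SECOND * t) for t in (SECOND, DAY, YEAR)]
    print(f"{name:>8}: " + ", ".join(f"{x:,}" for x in row))
```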

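Big Omega and Theta
- Big Oh and Little Oh give upper bounds on functions.
- Omega reverses the roles in Big Oh: $f(n) = \Omega(g(n))$ iff $g(n) = O(f(n))$. In limit form: if $\lim_{n\to\infty} g(n)/f(n)$ exists and is finite, then $f(n) = \Omega(g(n))$.
- Big Oh gives upper bounds; Omega gives lower bounds.
- Theta: both Big Oh and Omega, i.e. $f(n) = \Theta(g(n))$ iff $f(n) = O(g(n))$ and $f(n) = \Omega(g(n))$.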

Examples
- $f(n) = n^2 + 2n - 10$ and $g(n) = 3n^2 - 23$ are both $\Theta(n^2)$ and $\Theta$ of each other. Why?
- $f(n) = \log_2 n$ and $g(n) = \log_3 n$ are $\Theta$ of each other (the base of the logarithm doesn't count).
- $f(n) = 2^n$ and $g(n) = 3^n$ are NOT $\Theta$ of each other: $f(n) = o(g(n))$, i.e. $f$ grows much more slowly, because $\lim_{n\to\infty} 2^n/3^n = 0$.
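The three examples reduce to limit calculations. A finite nonzero limit of the ratio gives $\Theta$, while a zero limit gives little oh:

```latex
\lim_{n\to\infty} \frac{n^2 + 2n - 10}{3n^2 - 23}
  = \lim_{n\to\infty} \frac{1 + 2/n - 10/n^2}{3 - 23/n^2}
  = \frac{1}{3}

\frac{\log_2 n}{\log_3 n}
  = \frac{\ln n / \ln 2}{\ln n / \ln 3}
  = \frac{\ln 3}{\ln 2}
  = \log_2 3 \approx 1.585

\lim_{n\to\infty} \frac{2^n}{3^n}
  = \lim_{n\to\infty} \left(\frac{2}{3}\right)^{n}
  = 0
```

Dividing each polynomial by $n^2$ instead (limits 1 and 3) shows that both are $\Theta(n^2)$.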