
Lecture 15: Distributed Multimedia Systems

Haibin Zhu, Ph.D., Assistant Professor, Department of Computer Science, Nipissing University. © 2002

Contents

- 15.1 Introduction
- 15.2 Characteristics of multimedia data
- 15.3 Quality of service management
- 15.4 Resource management
- 15.5 Stream adaptation
- 15.6 Case study: Tiger video file server
- 15.7 Summary

Learning objectives

- To understand the nature of multimedia data and the scheduling and resource issues associated with it.
- To become familiar with the components and design of distributed multimedia applications.
- To understand the nature of quality of service and the system support that it requires.
- To explore the design of a state-of-the-art, scalable video file service, illustrating a radically novel design approach for quality of service.

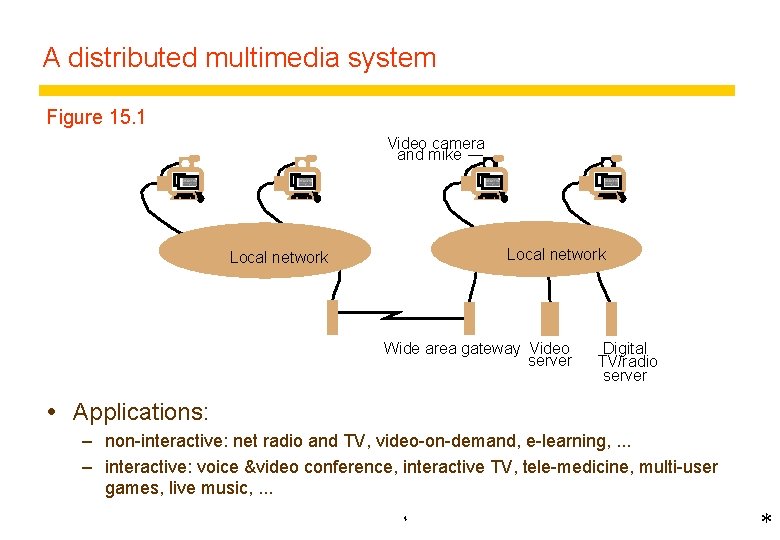

A distributed multimedia system (Figure 15.1)

Figure components: video camera and mike, local network, wide area gateway, video server, digital TV/radio server.

Applications:

- non-interactive: net radio and TV, video-on-demand, e-learning, ...
- interactive: voice and video conference, interactive TV, tele-medicine, multi-user games, live music, ...

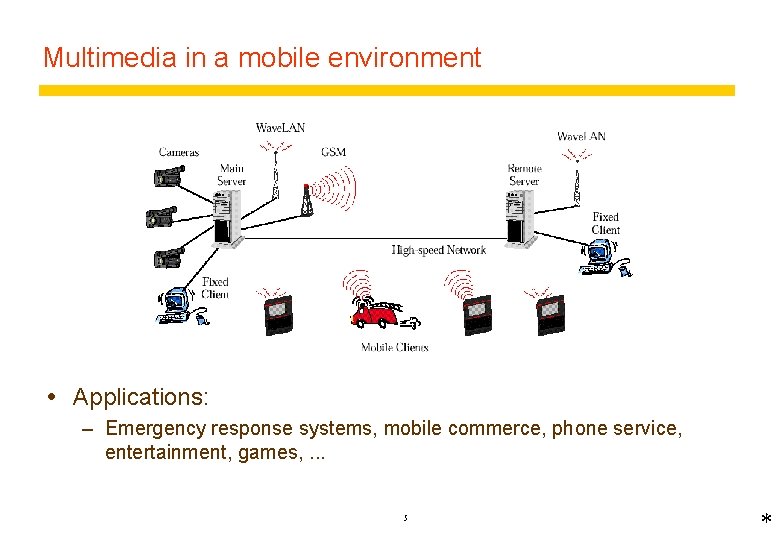

Multimedia in a mobile environment

Applications: emergency response systems, mobile commerce, phone service, entertainment, games, ...

Characteristics of multimedia applications

- Large quantities of continuous data
- Timely and smooth delivery is critical
  - deadlines
  - throughput and response time guarantees
- Interactive MM applications require low round-trip delays
- Need to co-exist with other applications
  - must not hog resources
- Reconfiguration is a common occurrence
  - varying resource requirements

Resources required, at the right time and in the right quantities:

- Processor cycles in workstations and servers
- Network bandwidth (+ latency)
- Dedicated memory
- Disk bandwidth (for stored media)

Application requirements

- Network phone and audio conferencing
  - relatively low bandwidth (~64 Kbits/s), but delay times must be short (< 250 ms round-trip)
- Video on demand services
  - high bandwidth (~10 Mbits/s), critical deadlines, latency not critical
- Simple video conference
  - many high-bandwidth streams to each node (~1.5 Mbits/s each), low latency (< 100 ms round-trip), synchronised states
- Music rehearsal and performance facility
  - high bandwidth (~1.4 Mbits/s), very low latency (< 100 ms round-trip), highly synchronised media (sound and video < 50 ms)

System support issues and requirements

- Scheduling and resource allocation in most current operating systems divide the resources equally among all comers (processes)
  - no limit on load
  - so throughput and response time cannot be guaranteed
- MM and other time-critical applications require resource allocation and scheduling to meet deadlines
  - Quality of Service (QoS) management
    - Admission control: controls demand
    - QoS negotiation: enables applications to negotiate admission and reconfigurations
    - Resource management: guarantees availability of resources for admitted applications
  - real-time processor and other resource scheduling

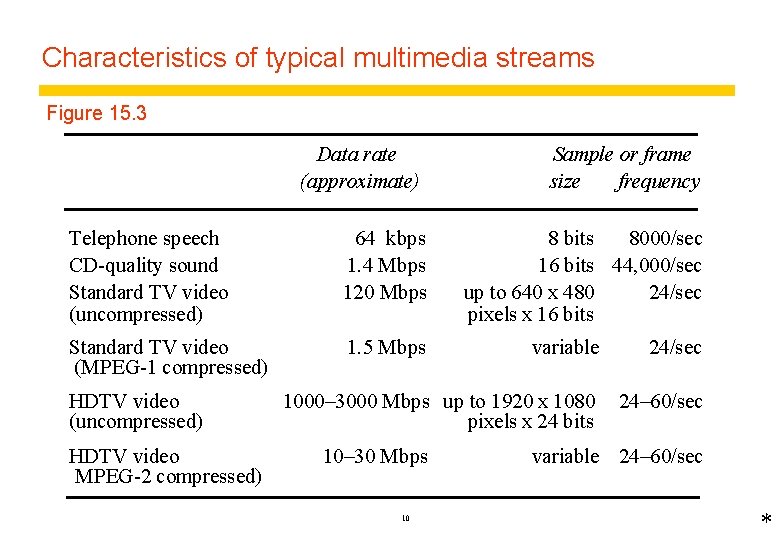

Characteristics of typical multimedia streams (Figure 15.3)

| Stream | Data rate (approximate) | Sample or frame size | Sample or frame frequency |
|---|---|---|---|
| Telephone speech | 64 kbps | 8 bits | 8000/sec |
| CD-quality sound | 1.4 Mbps | 16 bits | 44,000/sec |
| Standard TV video (uncompressed) | 120 Mbps | up to 640 x 480 pixels x 16 bits | 24/sec |
| Standard TV video (MPEG-1 compressed) | 1.5 Mbps | variable | 24/sec |
| HDTV video (uncompressed) | 1000–3000 Mbps | up to 1920 x 1080 pixels x 24 bits | 24–60/sec |
| HDTV video (MPEG-2 compressed) | 10–30 Mbps | variable | 24–60/sec |
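The uncompressed rates in Figure 15.3 can be checked by multiplying the sample or frame size by its frequency. The sketch below does this arithmetic; the helper name `data_rate_bps` is ours, and the CD figure assumes two (stereo) channels, which the slide does not state.

```python
def data_rate_bps(bits_per_unit, units_per_second, channels=1):
    """Raw data rate in bits per second for an uncompressed stream."""
    return bits_per_unit * units_per_second * channels

# Sample/frame sizes and frequencies taken from Figure 15.3.
telephone = data_rate_bps(8, 8000)                  # 8-bit samples
cd_sound = data_rate_bps(16, 44_000, channels=2)    # assumption: stereo
standard_tv = data_rate_bps(640 * 480 * 16, 24)     # bits per frame x frames/sec
hdtv_low = data_rate_bps(1920 * 1080 * 24, 24)
hdtv_high = data_rate_bps(1920 * 1080 * 24, 60)

for name, rate in [("telephone", telephone), ("CD", cd_sound),
                   ("standard TV", standard_tv),
                   ("HDTV 24/sec", hdtv_low), ("HDTV 60/sec", hdtv_high)]:
    print(f"{name}: {rate / 1e6:.3f} Mbps")
```

The results land close to the rounded figures in the table (64 kbps, about 1.4 Mbps, about 120 Mbps, and roughly 1000 to 3000 Mbps).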

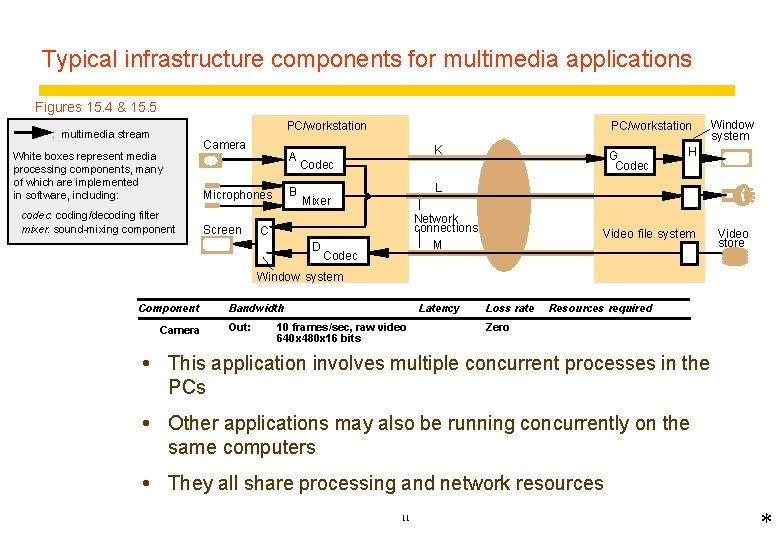

Typical infrastructure components for multimedia applications (Figures 15.4 & 15.5)

Figure 15.4: two PCs/workstations joined by network connections, with multimedia streams flowing between components labelled A to M: camera, microphones, codecs, mixer, window system, screen, video file system and video store. White boxes represent media processing components, many of which are implemented in software, including:

- codec: coding/decoding filter
- mixer: sound-mixing component

Figure 15.5: QoS specifications for the components

| Component | Bandwidth | Latency | Loss rate | Resources required |
|---|---|---|---|---|
| Camera | Out: 10 frames/sec, raw video 640 x 480 x 16 bits | - | Zero | - |
| A Codec | In: 10 frames/sec, raw video; Out: MPEG-1 stream | Interactive | Low | 10 ms CPU each 100 ms; 10 Mbytes RAM |
| B Mixer | In: 2 x 44 kbps audio; Out: 1 x 44 kbps audio | Interactive | Very low | 1 ms CPU each 100 ms; 1 Mbytes RAM |
| H Window system | In: various; Out: 50 frame/sec framebuffer | Interactive | Low | 5 ms CPU each 100 ms; 5 Mbytes RAM |
| K Network connection | In/Out: MPEG-1 stream, approx. 1.5 Mbps | Interactive | Low | 1.5 Mbps, low-loss stream protocol |
| L Network connection | In/Out: Audio 44 kbps | Interactive | Very low | 44 kbps, very low-loss stream protocol |

- This application involves multiple concurrent processes in the PCs.
- Other applications may also be running concurrently on the same computers.
- They all share processing and network resources.

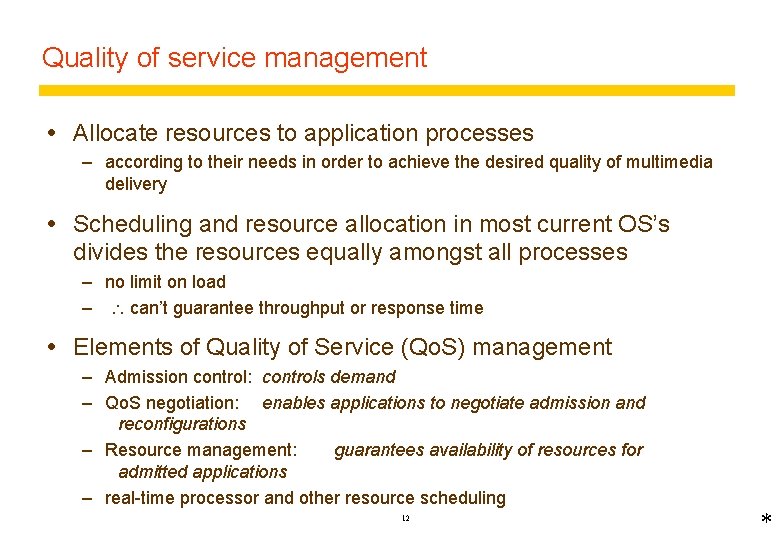

Quality of service management

- Allocate resources to application processes
  - according to their needs, in order to achieve the desired quality of multimedia delivery
- Scheduling and resource allocation in most current operating systems divide the resources equally among all processes
  - no limit on load
  - so throughput and response time cannot be guaranteed
- Elements of Quality of Service (QoS) management
  - Admission control: controls demand
  - QoS negotiation: enables applications to negotiate admission and reconfigurations
  - Resource management: guarantees availability of resources for admitted applications
  - real-time processor and other resource scheduling

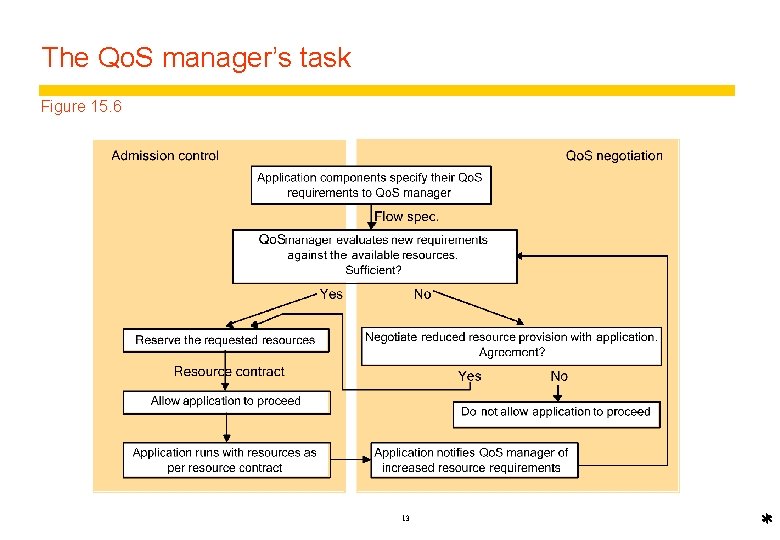

The QoS manager's task (Figure 15.6)

QoS parameters

- Bandwidth
  - rate of flow of multimedia data
- Latency
  - time required for the end-to-end transmission of a single data element
- Jitter
  - variation in latency: dL/dt
- Loss rate
  - the proportion of data elements that can be dropped or delivered late

Managing the flow of multimedia data

- Flows are variable
  - video compression methods such as MPEG (1-4) are based on similarities between consecutive frames
  - can produce large variations in data rate
- Burstiness
  - Linear bounded arrival process (LBAP) model: maximum flow per interval t = Rt + B (R = average rate, B = maximum burst)
  - buffer requirements are determined by burstiness
  - latency and jitter are affected (buffers introduce additional delays)
- Traffic shaping
  - method for scheduling the way a buffer is emptied
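As an illustration of the LBAP bound, the sketch below checks whether a recorded arrival trace ever delivers more than Rt + B in any interval of length t. The function name and the trace format (a time-sorted list of `(time, size)` pairs) are assumptions made for this example, not part of the lecture.

```python
def conforms_to_lbap(arrivals, rate, burst):
    """Return True if no interval of the trace exceeds rate * t + burst.

    arrivals: list of (time, size) pairs sorted by time (hypothetical format).
    rate:     R, the average rate in size units per time unit.
    burst:    B, the maximum burst in size units.
    """
    for i in range(len(arrivals)):
        total = 0
        for j in range(i, len(arrivals)):
            total += arrivals[j][1]
            interval = arrivals[j][0] - arrivals[i][0]
            if total > rate * interval + burst:
                return False
    return True

# A burst of 500 units followed by a steady 100 units per second.
trace = [(0, 500), (1, 100), (2, 100)]
print(conforms_to_lbap(trace, rate=100, burst=500))  # fits R=100, B=500
print(conforms_to_lbap(trace, rate=100, burst=400))  # initial burst too large
```

The pairwise check is quadratic in the trace length, which is fine for a small illustration but would need a smarter approach for long traces.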

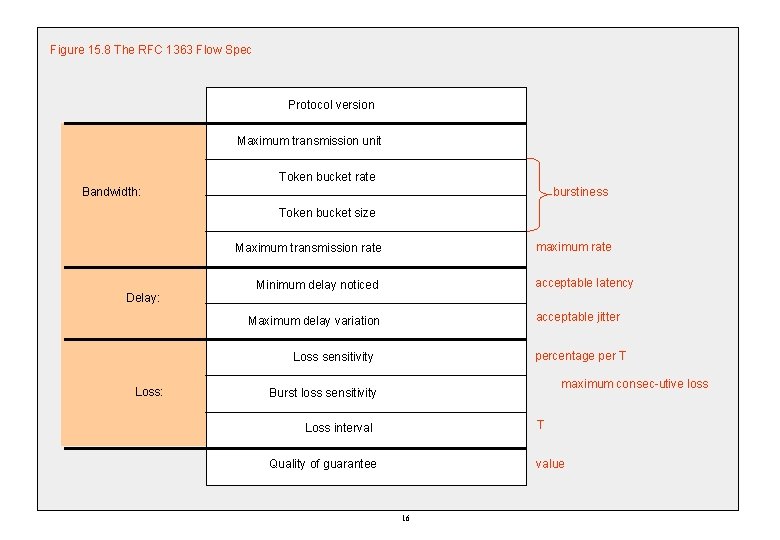

The RFC 1363 Flow Spec (Figure 15.8)

- Protocol version
- Maximum transmission unit
- Bandwidth:
  - Token bucket rate
  - Token bucket size (burstiness)
  - Maximum transmission rate (maximum rate)
- Delay:
  - Minimum delay noticed (acceptable latency)
  - Maximum delay variation (acceptable jitter)
- Loss:
  - Loss sensitivity (percentage per T)
  - Burst loss sensitivity (maximum consecutive loss)
  - Loss interval (T value)
- Quality of guarantee

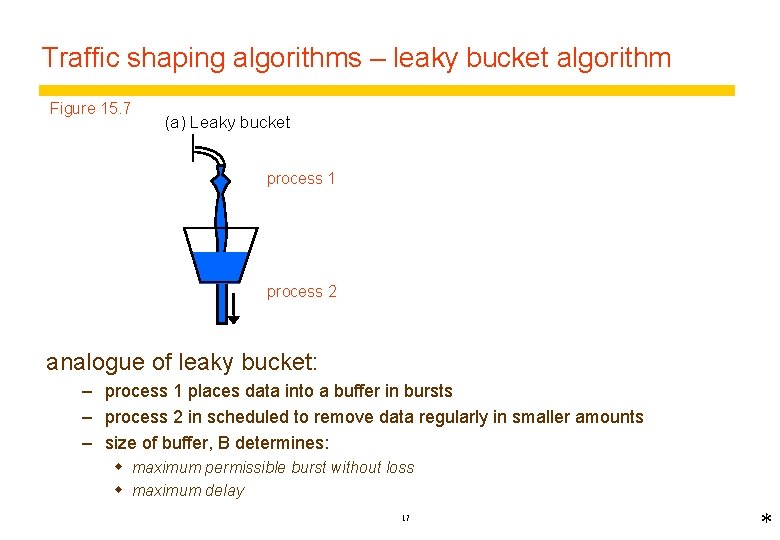

Traffic shaping algorithms: leaky bucket algorithm (Figure 15.7 (a))

Analogue of a leaky bucket:

- process 1 places data into a buffer in bursts
- process 2 is scheduled to remove data regularly in smaller amounts
- the size of the buffer, B, determines:
  - the maximum permissible burst without loss
  - the maximum delay
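A minimal tick-based simulation of the leaky bucket follows. It is only a sketch: time is divided into equal ticks, data is counted in abstract units, and the function and parameter names are ours.

```python
def leaky_bucket(arrivals, buffer_size, drain_per_tick):
    """Simulate a leaky bucket for one tick per entry in `arrivals`.

    Returns (sent, dropped): the amount output on each tick and the total
    amount lost because the buffer was full.
    """
    level = 0          # data currently held in the buffer
    dropped = 0
    sent = []
    for amount in arrivals:
        # Process 1: a burst arrives; anything beyond the free space is lost.
        accepted = min(amount, buffer_size - level)
        dropped += amount - accepted
        level += accepted
        # Process 2: remove at most a fixed amount per tick.
        out = min(level, drain_per_tick)
        level -= out
        sent.append(out)
    return sent, dropped

print(leaky_bucket([5, 0, 0, 0], buffer_size=4, drain_per_tick=2))
```

With a buffer of 4 units, the burst of 5 loses one unit and the rest drains at a steady 2 units per tick; data still buffered when the trace ends is not reported.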

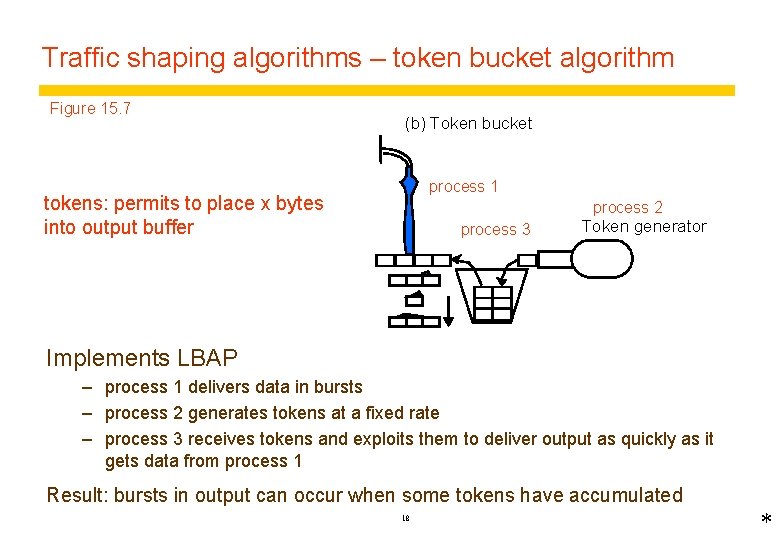

Traffic shaping algorithms: token bucket algorithm (Figure 15.7 (b))

Figure: process 1 feeds process 3, which also receives tokens from process 2 (the token generator). Tokens are permits to place x bytes into the output buffer.

Implements LBAP:

- process 1 delivers data in bursts
- process 2 generates tokens at a fixed rate
- process 3 receives tokens and exploits them to deliver output as quickly as it gets data from process 1

Result: bursts in output can occur when some tokens have accumulated.
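The token bucket can be sketched in the same tick-based style. Here one token permits one unit of output, the bucket holds at most `bucket_size` tokens, and all names are illustrative assumptions rather than anything defined in the lecture.

```python
def token_bucket(arrivals, tokens_per_tick, bucket_size):
    """Simulate a token bucket; returns the amount output on each tick."""
    tokens = 0     # permits accumulated so far (capped at bucket_size)
    queued = 0     # data waiting for tokens
    sent = []
    for amount in arrivals:
        # Process 2: generate tokens at a fixed rate, up to the bucket size.
        tokens = min(bucket_size, tokens + tokens_per_tick)
        # Process 1: data arrives in bursts.
        queued += amount
        # Process 3: spend tokens to output data as fast as it is available.
        out = min(queued, tokens)
        tokens -= out
        queued -= out
        sent.append(out)
    return sent

print(token_bucket([0, 0, 0, 5, 0, 0], tokens_per_tick=1, bucket_size=3))
```

After three idle ticks the bucket is full, so the burst of 5 is output as 3 units at once followed by 1 unit per tick. Over any interval of t ticks the output cannot exceed `tokens_per_tick * t + bucket_size`, which is the LBAP bound with R as the token rate and B as the bucket size.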

Admission control

Admission control delivers a contract to the application guaranteeing:

- For each computer:
  - CPU time, available at specific intervals
  - memory
- For each network connection:
  - bandwidth
  - latency
- For disks, etc.:
  - bandwidth
  - latency

Before admission, it must assess resource requirements and reserve them for the application:

- Flow specs provide some information for admission control, but not all; assessment procedures are needed
- There is an optimisation problem:
  - clients don't use all of the resources that they requested
  - flow specs may permit a range of qualities
- The admission controller must negotiate with applications to produce an acceptable result

Resource management

Scheduling of resources to meet the existing guarantees, e.g. for each computer:

- CPU time, available at specific intervals
- memory

Fair scheduling allows all processes some portion of the resources based on fairness:

- e.g. round-robin scheduling (equal turns), fair queuing (keep queue lengths equal)
- not appropriate for real-time MM because there are deadlines for the delivery of data

Real-time scheduling is traditionally used in special operating systems for system control applications, e.g. avionics. RT schedulers must ensure that tasks are completed by a scheduled time.

Real-time MM requires real-time scheduling with very frequent deadlines. Suitable types of scheduling are:

- Earliest deadline first (EDF)
- Rate-monotonic

EDF scheduling

Each task specifies a deadline T and CPU seconds S to the scheduler for each work item (e.g. video frame).

- The EDF scheduler schedules the task to run at least S seconds before T (and pre-empts it after S if it hasn't yielded).
- It has been shown that EDF will find a schedule that meets the deadlines, if one exists. (But for MM, S is likely to be a millisecond or so, and there is a danger that the scheduler may have to run so frequently that it hogs the CPU.)

Rate-monotonic scheduling assigns priorities to tasks according to their rate of data throughput (or workload).

- Uses less CPU for scheduling decisions.
- Has been shown to work well where total workload is < 69% of CPU.
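The two policies can be illustrated with a short sketch. The first function is a simplified EDF: it assumes every work item is ready at time 0 and runs to completion, so it omits release times and pre-emption. The second evaluates the Liu and Layland utilisation bound n(2^(1/n) - 1), which tends to ln 2 (about 0.69) and is the usual source of the 69% figure. All names here are ours.

```python
import math

def edf_order(work_items):
    """Run items in earliest-deadline-first order, all ready at time 0.

    work_items: list of (name, deadline, cpu_seconds).
    Returns a list of (name, finish_time, deadline_met).
    """
    clock = 0.0
    schedule = []
    for name, deadline, cpu_seconds in sorted(work_items, key=lambda w: w[1]):
        clock += cpu_seconds
        schedule.append((name, clock, clock <= deadline))
    return schedule

def rm_utilisation_bound(n):
    """Liu and Layland bound on total CPU utilisation for n periodic tasks."""
    return n * (2 ** (1 / n) - 1)

items = [("audio", 3, 1), ("video", 2, 1), ("ui", 10, 2)]
for name, finish, met in edf_order(items):
    print(name, finish, "met" if met else "missed")

print(round(rm_utilisation_bound(2), 3), round(math.log(2), 3))
```

A task set whose total utilisation stays under the bound is guaranteed schedulable by rate-monotonic priorities; above it, schedulability depends on the particular periods.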

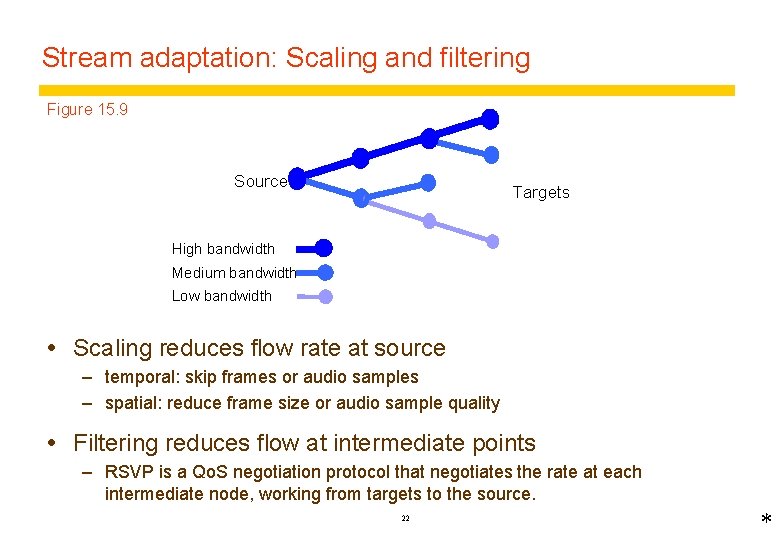

Stream adaptation: scaling and filtering (Figure 15.9)

Figure: a source delivering to targets over high-, medium- and low-bandwidth paths.

- Scaling reduces flow rate at source
  - temporal: skip frames or audio samples
  - spatial: reduce frame size or audio sample quality
- Filtering reduces flow at intermediate points
  - RSVP is a QoS negotiation protocol that negotiates the rate at each intermediate node, working from targets to the source.

QoS and the Internet

- Very little QoS in the Internet at present
  - New protocols to support QoS have been developed, but their implementation raises some difficult issues about the management of resources in the Internet.
- RSVP
  - Network resource reservation
  - Doesn't ensure enforcement of reservations
- RTP
  - Real-time data transmission over IP
- Need to avoid adding undesirable complexity to the Internet
- IPv6 has some hooks for it

IPv6 header layout

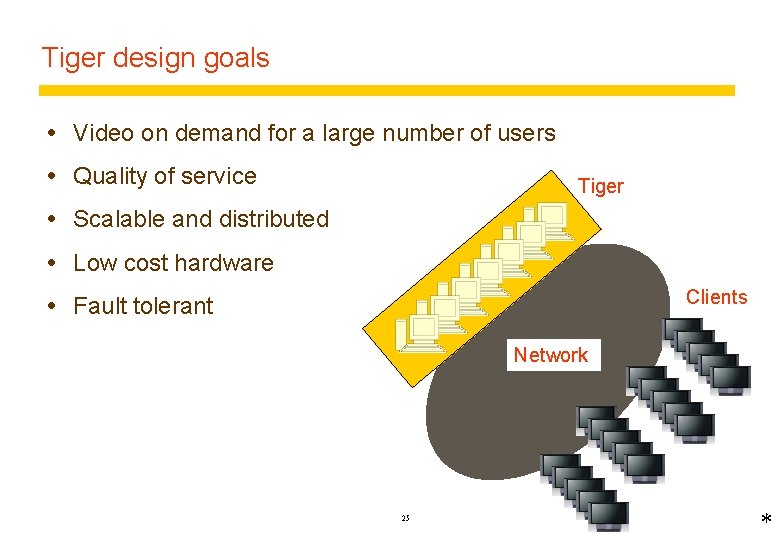

Tiger design goals

- Video on demand for a large number of users
- Quality of service
- Scalable and distributed
- Low cost hardware
- Fault tolerant

Figure: Tiger delivering video to clients over a network.

Tiger architecture

- Storage organization
  - Striping
  - Mirroring
- Distributed schedule
- Tolerate failure of any single computer or disk
- Network support
- Other functions
  - pause, stop, start

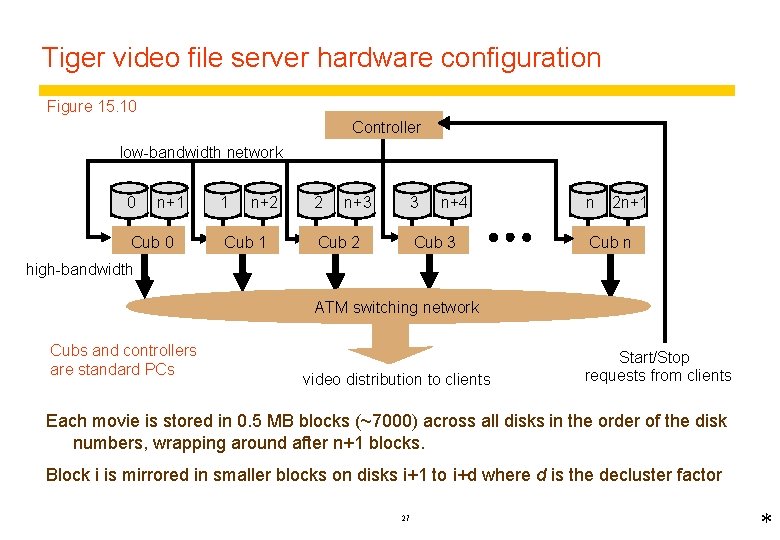

Tiger video file server hardware configuration (Figure 15.10)

Figure: a controller connected by a low-bandwidth network to Cub 0 ... Cub n. Cub 0 holds disks 0 and n+1, Cub 1 holds disks 1 and n+2, and so on up to Cub n, which holds disks n and 2n+1. The cubs deliver video to clients through a high-bandwidth ATM switching network; Start/Stop requests from clients go to the controller.

- Cubs and controllers are standard PCs.
- Each movie is stored in 0.5 MB blocks (~7000) across all disks in the order of the disk numbers, wrapping around after n+1 blocks.
- Block i is mirrored in smaller blocks on disks i+1 to i+d, where d is the decluster factor.
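The placement rule can be sketched as simple modular arithmetic. This is an illustration under assumptions: the function names are hypothetical, disk numbering wraps around modulo the total number of disks, and a movie's first block is taken to start on disk 0.

```python
def primary_disk(block, num_disks):
    """Disk holding the primary copy of a block under round-robin striping."""
    return block % num_disks

def mirror_disks(block, num_disks, decluster):
    """Disks holding the smaller mirror pieces of a block.

    The pieces go on the `decluster` disks that follow the primary disk,
    wrapping around at the last disk (assumed behaviour).
    """
    primary = primary_disk(block, num_disks)
    return [(primary + k) % num_disks for k in range(1, decluster + 1)]

# Six disks, decluster factor 2.
for block in (0, 5, 7):
    print(block, primary_disk(block, 6), mirror_disks(block, 6, 2))
```

Spreading each block's mirror over d disks means that when one disk fails, the extra read load is shared among d other disks rather than falling on a single mirror.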

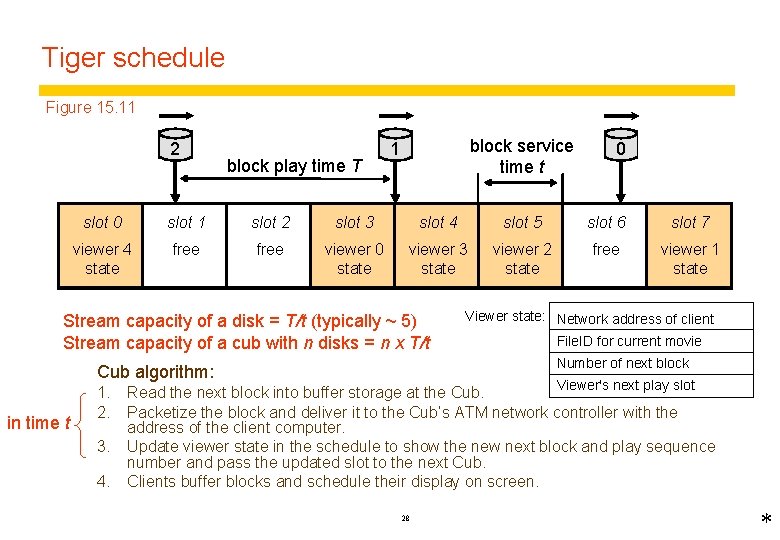

Tiger schedule (Figure 15.11)

Figure: the schedule is a sequence of slots (slot 0 to slot 7 shown), each either free or holding the state of one viewer. The figure marks the block play time T and the block service time t.

- Stream capacity of a disk = T/t (typically ~5)
- Stream capacity of a cub with n disks = n x T/t

Viewer state:

- Network address of client
- FileID for current movie
- Number of next block
- Viewer's next play slot

Cub algorithm (in time t):

1. Read the next block into buffer storage at the Cub.
2. Packetize the block and deliver it to the Cub's ATM network controller with the address of the client computer.
3. Update viewer state in the schedule to show the new next block and play sequence number, and pass the updated slot to the next Cub.
4. Clients buffer blocks and schedule their display on screen.
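The capacity formulas can be tried out with a little arithmetic. In this sketch the block play time T is derived from a block size and a stream bit rate, taking 1 MB as 10^6 bytes; the 400 ms block service time is a made-up value chosen only to give a round answer, and the function names are ours.

```python
def block_play_time_ms(block_bytes, bitrate_bps):
    """Time for a client to play one block, in milliseconds."""
    return block_bytes * 8 * 1000 // bitrate_bps

def disk_stream_capacity(play_time_ms, service_time_ms):
    """Whole number of streams one disk can serve: T / t, rounded down."""
    return play_time_ms // service_time_ms

def cub_stream_capacity(num_disks, play_time_ms, service_time_ms):
    """Streams a cub with `num_disks` disks can serve: n x T / t."""
    return num_disks * disk_stream_capacity(play_time_ms, service_time_ms)

T = block_play_time_ms(500_000, 2_000_000)   # 0.5 MB block at 2 Mbps
t = 400                                      # hypothetical service time (ms)
print(T, disk_stream_capacity(T, t), cub_stream_capacity(3, T, t))
```

Integer milliseconds are used instead of floating-point seconds so that the floor division is exact.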

Tiger performance and scalability

1994 measurements:

- 5 cubs: 133 MHz Pentium, Windows NT, 3 x 2 GB disks each, ATM network
- supported streaming movies to 68 clients simultaneously without lost frames
- with one cub down, frame loss rate 0.02%

1997 measurements:

- 14 cubs: 4 disks each, ATM network
- supported streaming 2 Mbps movies to 602 clients simultaneously with a loss rate of < 0.01%
- with one cub failed, loss rate < 0.04%

The designers suggested that Tiger could be scaled to 1000 cubs supporting 30,000 clients.

Summary

- MM applications and systems require new system mechanisms to handle large volumes of time-dependent data in real time (media streams).
- The most important mechanism is QoS management, which includes resource negotiation, admission control, resource reservation and resource management.
- Negotiation and admission control ensure that resources are not over-allocated; resource management ensures that admitted tasks receive the resources they were allocated.
- Tiger file server: a case study in the scalable design of a stream-oriented service with QoS.
